Learning Rate Scheduling

Meaning ⎊ Dynamic adjustment of the step size during model training to balance convergence speed and solution stability.
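
As an illustration of this definition, here is a minimal sketch of one common schedule, cosine annealing, which shrinks the step size smoothly from a maximum to a minimum over training. The function name `cosine_schedule` and the default values for `lr_max` and `lr_min` are assumptions chosen for the example, not values taken from this glossary.

```python
import math

def cosine_schedule(step, total_steps, lr_max=0.1, lr_min=0.001):
    """Return the learning rate for `step` under cosine annealing.

    Hypothetical helper: lr_max and lr_min are illustrative defaults.
    """
    # Clamp progress to [0, 1] so out-of-range steps stay well defined.
    progress = min(max(step, 0), total_steps) / total_steps
    # The cosine factor goes from 1 (start) to 0 (end) as progress goes 0 -> 1.
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return lr_min + (lr_max - lr_min) * cosine

if __name__ == "__main__":
    for step in (0, 25, 50, 75, 100):
        print(step, round(cosine_schedule(step, 100), 5))
```

Large early steps favor convergence speed, while the small late steps favor stability near the solution, which is the trade-off the definition describes.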

Reinforcement Learning Strategies

Meaning ⎊ Reinforcement learning strategies enable autonomous, adaptive decision-making to optimize liquidity and risk management within decentralized markets.

Decentralized Machine Learning

Meaning ⎊ Decentralized machine learning redefines financial intelligence by replacing opaque centralized systems with transparent, cryptographically secured logic.

Machine Learning in Finance

Meaning ⎊ Applying advanced statistical models to financial data for predictive analysis, automation, and decision-making optimization.

Convergence Rate Optimization

Meaning ⎊ Methods that make simulation estimates converge faster, reducing the number of samples needed for a given level of precision.
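
One such method is antithetic variates, sketched below under stated assumptions: the target is the toy expectation E[exp(U)] for U uniform on [0, 1] (true value e - 1), and the function names are hypothetical. Pairing each draw `u` with its mirror `1 - u` produces negatively correlated samples, so the averaged estimate typically has lower variance for the same number of function evaluations.

```python
import math
import random

def plain_estimate(n, rng):
    """Ordinary Monte Carlo estimate of E[exp(U)] using n draws."""
    return sum(math.exp(rng.random()) for _ in range(n)) / n

def antithetic_estimate(n, rng):
    """Antithetic-variates estimate using n function evaluations (n // 2 pairs)."""
    pairs = n // 2
    total = 0.0
    for _ in range(pairs):
        u = rng.random()
        # Average the draw with its mirrored counterpart 1 - u.
        total += 0.5 * (math.exp(u) + math.exp(1.0 - u))
    return total / pairs

if __name__ == "__main__":
    true_value = math.e - 1.0
    rng = random.Random(42)  # fixed seed, for reproducibility only
    print("plain error:     ", abs(plain_estimate(10_000, rng) - true_value))
    print("antithetic error:", abs(antithetic_estimate(10_000, rng) - true_value))
```

The toy integrand is monotone, which is the case where antithetic pairing helps most; for other payoffs the variance reduction can be smaller.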

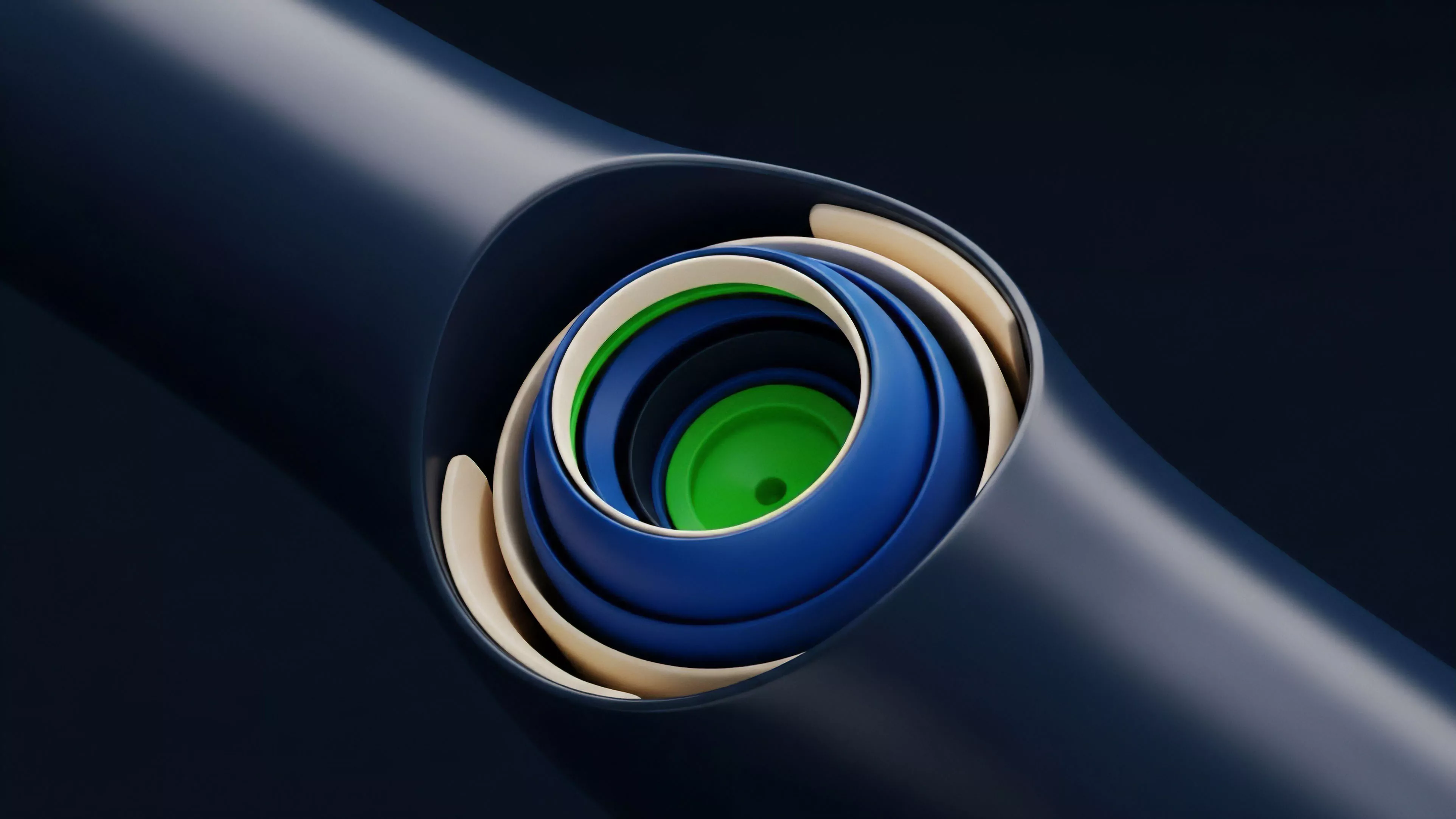

Deep Learning Architecture

Meaning ⎊ The design of neural network layers used in AI models to generate or identify complex patterns in digital data.

Fill Rate Optimization

Meaning ⎊ The systematic adjustment of trading parameters to increase the success rate of order executions.

Machine Learning Integrity Proofs

Meaning ⎊ Machine Learning Integrity Proofs provide the cryptographic verification necessary to secure autonomous algorithmic activity in decentralized markets.

Machine Learning Security

Meaning ⎊ Machine Learning Security protects decentralized financial protocols by ensuring the integrity of algorithmic inputs against adversarial manipulation.

Win Rate Optimization

Meaning ⎊ Process of refining a strategy to increase the frequency of winning trades while maintaining a favorable risk-reward ratio.
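
The link between win rate and risk-reward can be made concrete with the standard per-trade expectancy formula. The sketch below is illustrative only; the function names and sample numbers are assumptions, and fees and slippage are ignored.

```python
def expectancy(win_rate, avg_win, avg_loss):
    """Expected profit per trade: p * avg_win - (1 - p) * avg_loss.

    avg_loss is given as a positive number.
    """
    return win_rate * avg_win - (1.0 - win_rate) * avg_loss

def breakeven_win_rate(reward_to_risk):
    """Win rate at which expectancy is zero for a given reward-to-risk ratio."""
    return 1.0 / (1.0 + reward_to_risk)

if __name__ == "__main__":
    # Hypothetical strategy: wins 2 units, loses 1 unit, wins half the time.
    print(expectancy(0.5, 2.0, 1.0))
    print(breakeven_win_rate(2.0))
```

A higher win rate only improves results if the average win does not shrink relative to the average loss enough to push the strategy below its breakeven rate.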

Machine Learning Finance

Meaning ⎊ Machine Learning Finance enables autonomous, adaptive risk management and optimized pricing within decentralized derivatives markets.

Funding Rate Optimization

Meaning ⎊ Funding Rate Optimization is the strategic management of derivative position costs to transform interest exchange into predictable portfolio yield.

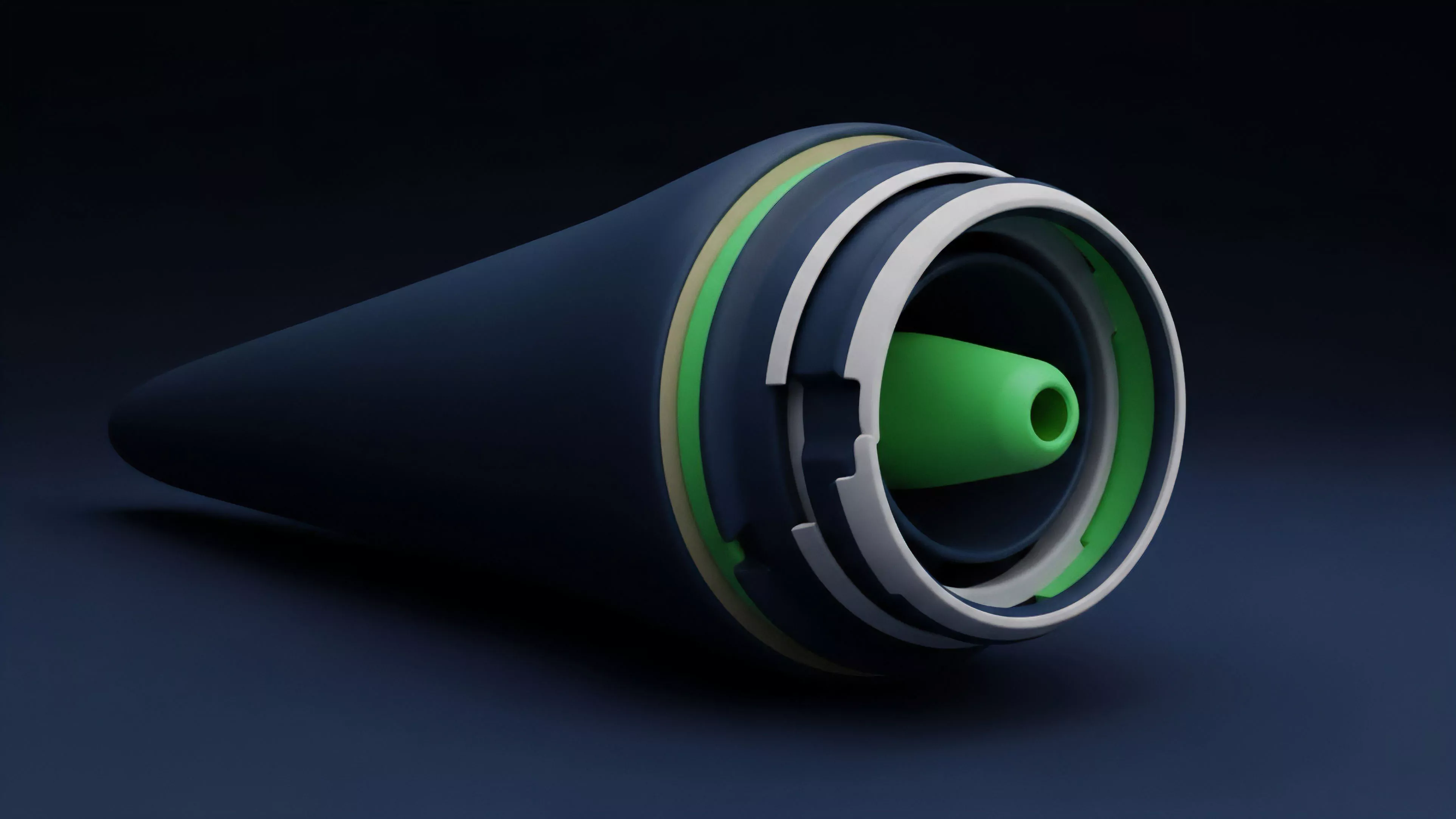

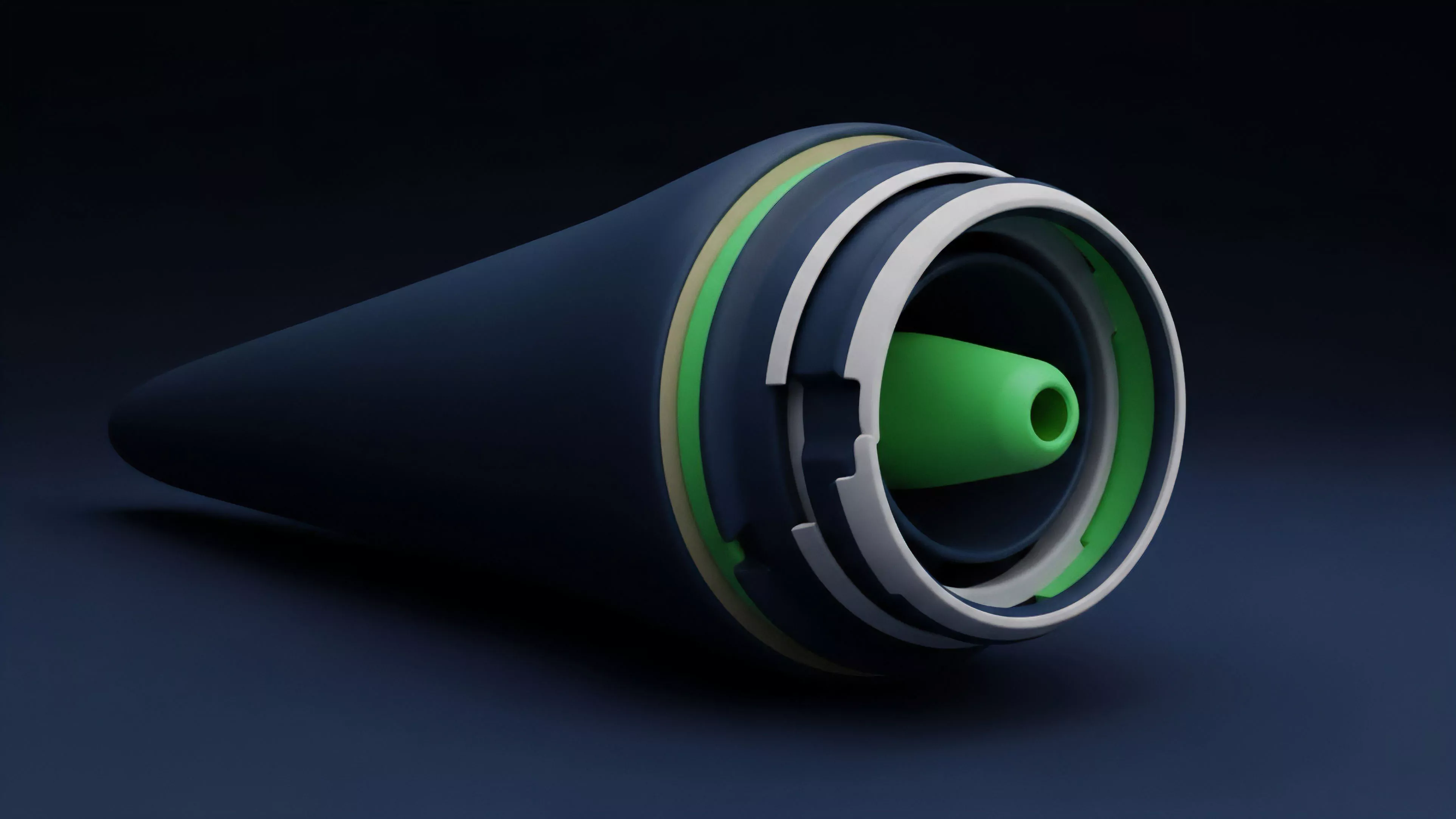

Off-Chain Machine Learning

Meaning ⎊ Off-Chain Machine Learning optimizes decentralized derivative markets by delegating complex computations to scalable layers while ensuring cryptographic trust.

Deep Learning Models

Meaning ⎊ Deep Learning Models provide dynamic, non-linear frameworks for pricing crypto options and managing risk within decentralized market structures.

Deep Learning Option Pricing

Meaning ⎊ Deep Learning Option Pricing replaces static formulas with adaptive neural models to improve derivative valuation in high-volatility decentralized markets.

Machine Learning Applications

Meaning ⎊ Machine learning applications automate complex derivative pricing and risk management by identifying predictive patterns in decentralized market data.

Time-Based Optimization

Meaning ⎊ Time-Based Optimization is the systematic extraction of premium through the automated management of temporal decay within derivative portfolios.

Network Performance Optimization Reports

Meaning ⎊ Network Performance Optimization Reports quantify the technical latency and throughput constraints that determine the solvency of on-chain derivative vaults.

Cryptographic Proof Optimization Algorithms

Meaning ⎊ Cryptographic Proof Optimization Algorithms reduce computational overhead to enable scalable, private, and mathematically certain financial settlement.

Cryptographic Proof Optimization Strategies

Meaning ⎊ Cryptographic Proof Optimization Strategies reduce computational overhead and latency to enable scalable, privacy-preserving decentralized finance.

Cryptographic Proof Complexity Tradeoffs and Optimization

Meaning ⎊ Cryptographic Proof Complexity Tradeoffs and Optimization balance prover resources and verifier speed to secure high-throughput decentralized finance.

Cryptographic Proof Complexity Optimization and Efficiency

Meaning ⎊ Cryptographic Proof Complexity Optimization and Efficiency enables the compression of vast financial computations into succinct, trustless certificates.

Cryptographic Proof Optimization Techniques and Algorithms

Meaning ⎊ Cryptographic Proof Optimization Techniques and Algorithms enable trustless, private, and high-speed settlement of complex derivatives by compressing computation into verifiable mathematical proofs.

Order Book Optimization Algorithms

Meaning ⎊ Order Book Optimization Algorithms manage the mathematical mediation of liquidity to minimize execution costs and systemic risk in digital markets.

Order Book Order Flow Optimization

Meaning ⎊ DOFS is the computational method of inferring directional conviction and systemic risk by synthesizing fragmented, time-decaying order flow across decentralized options protocols.

Order Book Order Flow Optimization Techniques

Meaning ⎊ Adaptive Latency-Weighted Order Flow is a quantitative technique that minimizes options execution cost by dynamically adjusting order slice size based on real-time market microstructure and protocol-level latency.

Proof Latency Optimization

Meaning ⎊ Proof Latency Optimization reduces the temporal gap between order submission and settlement to mitigate front-running and improve capital efficiency.

Cryptographic Proof Optimization

Meaning ⎊ Cryptographic Proof Optimization drives decentralized derivatives scalability by minimizing the on-chain verification cost of complex financial state transitions through succinct zero-knowledge proofs.

Cryptographic Proof Optimization Techniques

Meaning ⎊ Cryptographic Proof Optimization Techniques enable the succinct, private, and high-speed verification of complex financial state transitions in decentralized markets.