Essence

The structural equilibrium of Cryptographic Proof Complexity Tradeoffs and Optimization dictates the economic feasibility of trustless settlement. This mechanism governs the distribution of computational labor between the entity generating a proof and the entity validating it. In decentralized systems, the objective remains the minimization of verification costs to enable execution on resource-constrained environments like Ethereum.

High-performance proving requires substantial memory and processing power, often necessitating a departure from standard consumer hardware.

Proof systems represent the ultimate compression of trust into mathematical certainty.

Efficiency in this domain centers on the concept of succinctness. A proof must remain significantly smaller than the witness data it validates, ensuring that the cost of checking the proof does not scale linearly with the complexity of the underlying computation. Cryptographic Proof Complexity Tradeoffs and Optimization involves selecting specific mathematical primitives ⎊ such as elliptic curves or hash functions ⎊ that align with the target execution environment.

This selection determines the boundary between privacy, speed, and security.

Computational Equilibrium

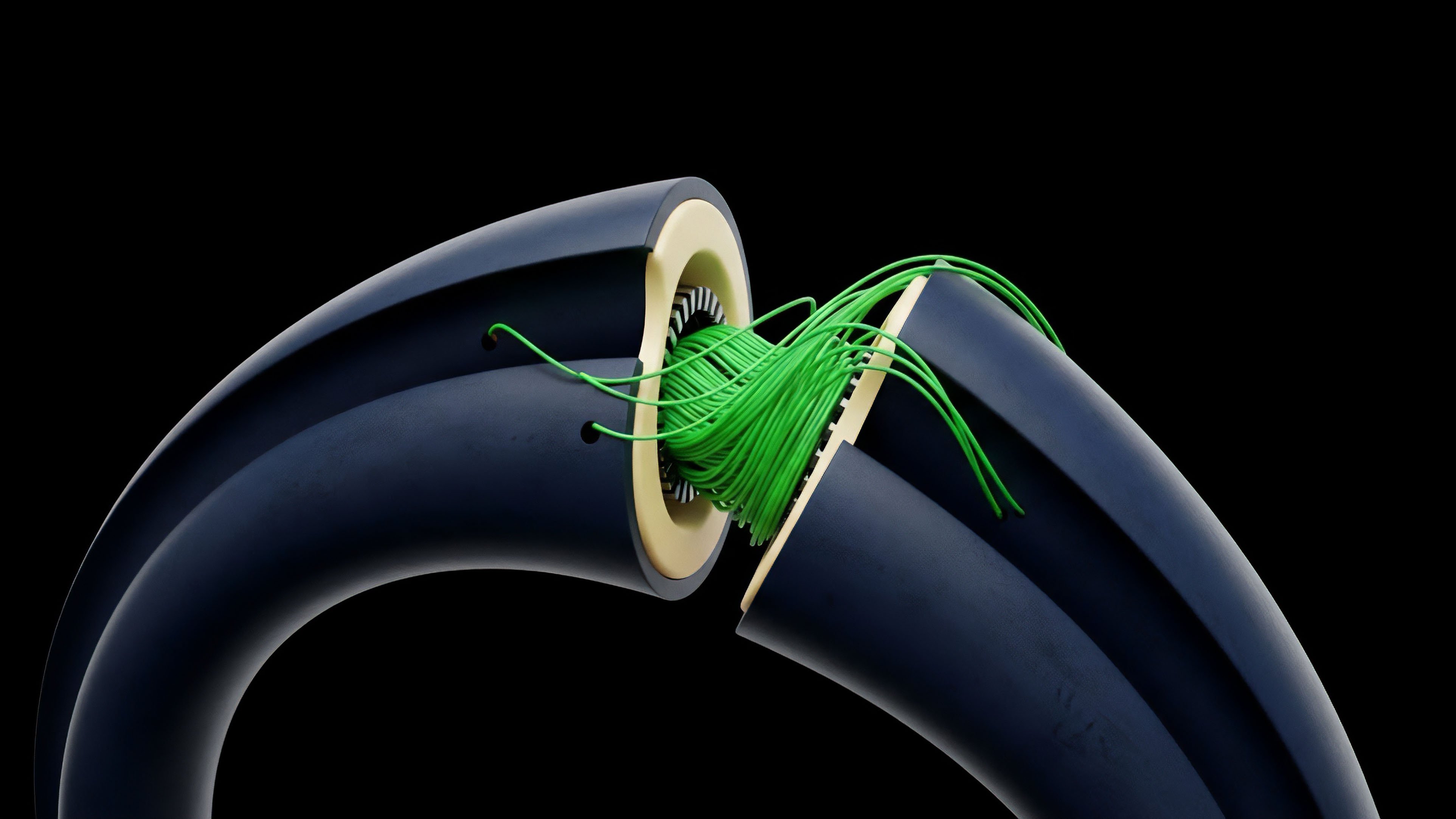

The relationship between prover time and verifier time is often inverse. Systems that offer instantaneous verification frequently demand intensive, long-duration proving cycles. This asymmetry is a deliberate architectural choice to protect the network from denial-of-service attacks during validation.

By shifting the burden to the prover, the protocol ensures that the global state can be updated with minimal overhead for the majority of participants.

Verification Efficiency

Verification cost is the primary driver of layer-two scaling profitability. If a proof requires excessive gas for on-chain validation, the margin for decentralized derivatives and high-frequency trading narrows. Optimization strategies focus on reducing the number of constraints in an arithmetic circuit, which directly impacts the final proof size and the complexity of the verification algorithm.

Origin

The genesis of these trade-offs lies in the 1985 introduction of zero-knowledge proofs by Goldwasser, Micali, and Rackoff.

Initial theoretical models focused on the possibility of proving knowledge without revealing the underlying data, but these early constructions were too computationally expensive for practical application. The shift toward Cryptographic Proof Complexity Tradeoffs and Optimization became a necessity with the rise of blockchain technology, where every byte of data carries a financial cost.

The tension between prover overhead and verifier speed defines the economic boundary of decentralized scaling.

Early implementations like Zcash utilized zk-SNARKs, which offered small proof sizes but required a trusted setup. This reliance on initial parameters highlighted a critical trade-off: accepting a centralized security assumption in exchange for extreme verification efficiency. As the industry matured, the demand for transparent, setup-free systems led to the development of STARKs and other transparent polynomial commitment schemes.

Transition to Blockchain

The move from academic theory to financial infrastructure forced a re-evaluation of complexity classes. Researchers realized that for a proof system to secure billions in assets, it needed to be both sound and efficient. This led to the creation of more sophisticated arithmetization techniques, such as R1CS and Plonkish arithmetization, which allow for more flexible and dense circuit designs.

Evolution of Commitment Schemes

The choice of a commitment scheme is a defining moment in the history of proof optimization. Early systems relied heavily on pairing-friendly elliptic curves. The discovery of inner product arguments and FRI (Fast Reed-Solomon Interactive Oracle Proofs) provided alternative pathways that traded larger proof sizes for faster proving times and quantum resistance.

Theory

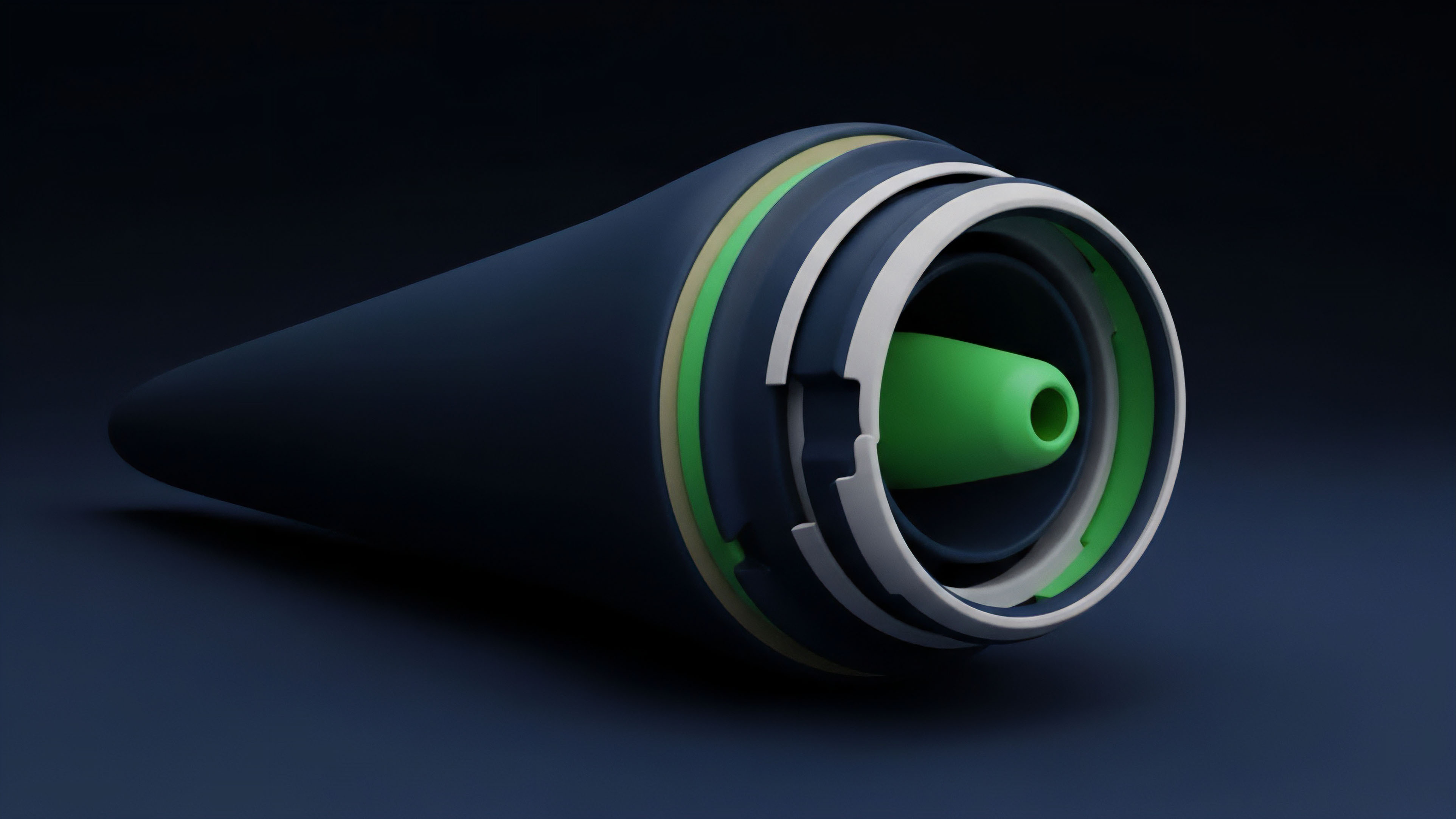

The mathematical framework of Cryptographic Proof Complexity Tradeoffs and Optimization is built upon the interaction between arithmetic circuits and polynomial commitments.

A computation is transformed into a set of polynomial equations, and the prover demonstrates knowledge of a solution without revealing the solution itself. The complexity of this process is measured in terms of the number of gates in the circuit and the degree of the polynomials involved.

- Prover Complexity: The time required to generate a proof, typically scaling at O(n log n) or O(n) relative to the number of constraints.

- Verifier Complexity: The time required to validate a proof, ideally remaining constant or scaling logarithmically with the computation size.

- Proof Size: The total data transmitted to the verifier, which determines the bandwidth and storage requirements for the network.

- Soundness Error: The probability that a malicious prover can convince a verifier of a false statement.

Complexity Metrics Comparison

The following table illustrates the theoretical differences between the most prominent proof systems used in modern financial protocols.

| System Type | Prover Time | Verifier Time | Proof Size | Setup Type |

|---|---|---|---|---|

| zk-SNARK (Groth16) | O(n log n) | O(1) | ~200 Bytes | Trusted |

| zk-STARK | O(n log^2 n) | O(log^2 n) | ~100 KB | Transparent |

| Bulletproofs | O(n) | O(n) | ~1-2 KB | Transparent |

| Halo2 (IPA) | O(n log n) | O(log n) | ~4-6 KB | Transparent |

Arithmetic Circuit Optimization

Optimizing a circuit involves reducing the number of non-linear constraints. In many proof systems, additions are virtually free, while multiplications consume significant resources. Cryptographic Proof Complexity Tradeoffs and Optimization focuses on “custom gates” and “lookups” to handle complex operations like range checks or hash functions more efficiently than traditional R1CS structures.

Approach

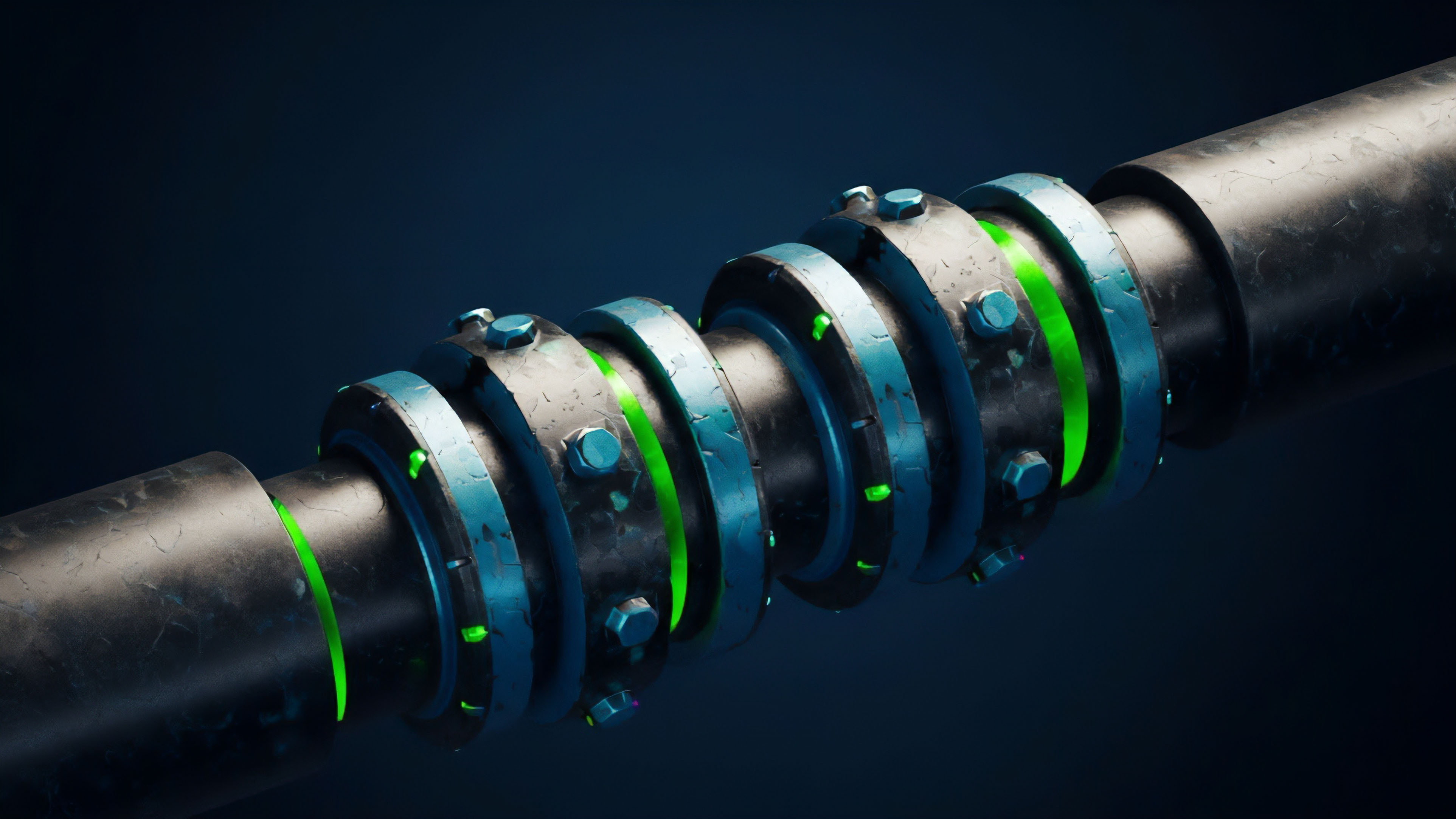

Current methodologies for Cryptographic Proof Complexity Tradeoffs and Optimization involve a multi-layered strategy that combines software-level circuit design with hardware-level acceleration.

Engineers prioritize the reduction of the “proving bottleneck” by utilizing field-programmable gate arrays (FPGAs) and application-specific integrated circuits (ASICs). These hardware solutions are designed to handle the massive multi-scalar multiplications (MSM) and fast Fourier transforms (FFT) that dominate prover execution time.

Recursive composition allows for the infinite nesting of validity, transforming linear history into logarithmic verification.

Another dominant strategy is the use of recursive proof composition. This involves a proof system that can verify its own proofs. By aggregating multiple proofs into a single statement, the marginal cost of verification is distributed across thousands of transactions.

This approach is vital for the operation of zk-Rollups, where the goal is to compress an entire block of transactions into a single validity proof.

Hardware Acceleration Benefits

The shift toward specialized hardware is a pragmatic response to the limits of general-purpose CPUs. The following list details the advantages of hardware-centric optimization.

- Parallelization: Distributing MSM and FFT operations across thousands of small, efficient cores.

- Memory Bandwidth: Designing custom memory architectures to handle the large datasets required for high-degree polynomial operations.

- Energy Efficiency: Reducing the power consumption per proof, which is a vital factor for large-scale prover markets.

- Latency Reduction: Enabling real-time proof generation for interactive applications like decentralized gaming or high-speed trading.

Polynomial Commitment Selection

The choice between KZG, FRI, or IPA commitment schemes depends on the specific needs of the protocol. KZG offers the smallest proofs but requires a trusted setup and is not quantum-resistant. FRI is transparent and fast but results in much larger proofs.

Cryptographic Proof Complexity Tradeoffs and Optimization requires a deep analysis of these parameters to match the protocol’s security and cost profile.

Evolution

The field has moved from monolithic proof systems to modular architectures where different components can be swapped based on performance requirements. Cryptographic Proof Complexity Tradeoffs and Optimization now includes the use of “small fields” like the Goldilocks field or the Mersenne31 field. These fields are designed to be extremely fast on modern 64-bit processors, significantly reducing the overhead of field arithmetic.

Shift to Plonkish Arithmetization

The transition from R1CS to Plonkish arithmetization represents a major shift in how circuits are constructed. Plonkish systems allow for columns and custom gates, giving developers more granular control over the layout of the computation. This flexibility enables the creation of highly optimized “pre-compiles” for common cryptographic operations, reducing the total constraint count by orders of magnitude.

Prover Markets and Incentives

The emergence of decentralized prover markets is a recent development in the evolution of these systems. Instead of a single entity generating proofs, a competitive market of provers vies for the right to secure the network. This competition drives further Cryptographic Proof Complexity Tradeoffs and Optimization, as provers must constantly improve their efficiency to remain profitable.

| Evolutionary Phase | Primary Innovation | Impact on Complexity |

|---|---|---|

| Early SNARKs | QAP / R1CS | Small proofs, high prover cost |

| STARK Era | FRI / Hash-based | No setup, larger proofs |

| Recursive Era | Halo / Plonky2 | Proof aggregation, hyper-scaling |

| Small Field Era | Circle STARKs | Ultra-fast field arithmetic |

Horizon

The future of Cryptographic Proof Complexity Tradeoffs and Optimization lies in the total commoditization of proving power. We are moving toward a world where proof generation is as ubiquitous as hashing in the Bitcoin network. This will be driven by the integration of zero-knowledge primitives directly into hardware, perhaps even at the mobile device level, allowing for private, verifiable interactions in every aspect of digital life.

Post-Quantum Security

As quantum computing capabilities advance, the industry will shift toward hash-based systems like STARKs or lattice-based cryptography. These systems avoid the vulnerabilities of elliptic curve pairings. The trade-off will be a temporary increase in proof size, which will then be mitigated through more advanced recursive techniques and data availability sampling.

Fully Homomorphic Encryption Integration

The ultimate frontier is the combination of zero-knowledge proofs with fully homomorphic encryption (FHE). While ZKPs prove that a computation was done correctly, FHE allows the computation to be performed on encrypted data. Combining these two technologies will enable a new class of decentralized applications where the state is always private, yet its validity is always publicly verifiable. This represents the final step in the quest for a truly sovereign and secure financial operating system.

Glossary

Halo2

Cryptographic Primitives

Pairing-Friendly Curves

Zk-Rollups

Zero Knowledge Property

Proof Succinctness

Fri Protocol

Hyper-Scaling

Non-Interactive Proofs