Conceptual Identity

Cryptographic Proof Optimization Techniques and Algorithms function as the mathematical compression layer for decentralized settlement. They transform the burden of repeated computation into a singular act of verification. Within the context of digital asset derivatives, these methods enable a verifier to confirm the validity of complex trade executions, margin calculations, or solvency states without re-executing the underlying logic.

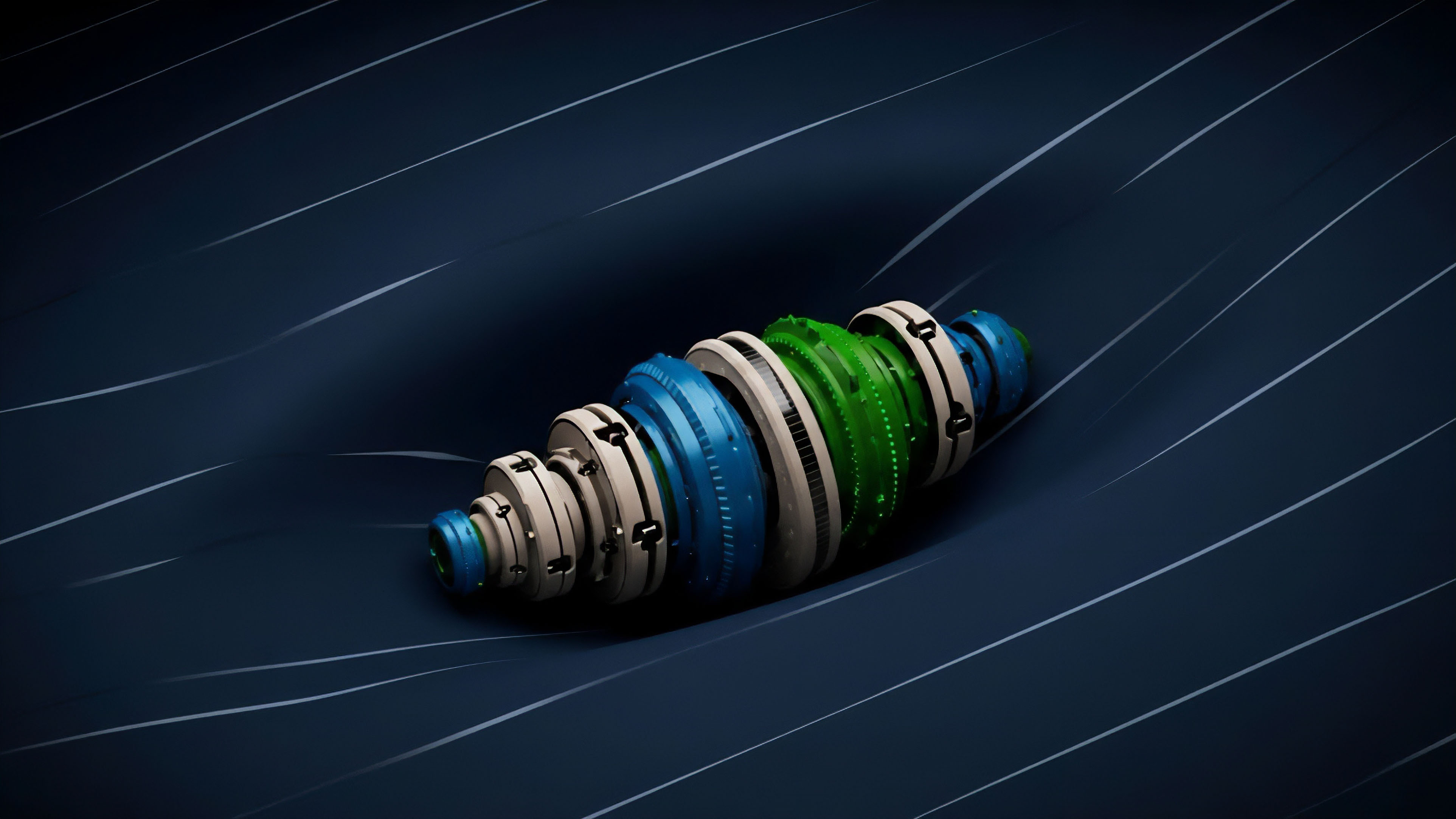

This transition from execution-heavy models to verification-centric models addresses the inherent constraints of distributed ledgers where bandwidth and compute are finite resources. The objective of Cryptographic Proof Optimization Techniques and Algorithms is the reduction of prover time, proof size, and verification gas costs. In high-frequency options markets, the latency of proof generation determines the viability of on-chain risk management.

If the prover cannot generate a validity proof within the same block as the price update, the system remains exposed to toxic flow and stale liquidations. By refining the arithmetization of financial logic and the commitment schemes used to bind data, these algorithms aim to bring the throughput of trustless systems closer to that of centralized counterparts.

Cryptographic Proof Optimization Techniques and Algorithms represent the shift from trust-by-repetition to trust-by-mathematical-succinctness, enabling capital-efficient settlement without centralized intermediaries.

The systemic implication of these optimizations is the decoupling of verification cost from computational complexity. Once the proof is generated, a margin engine calculating the Greeks for a portfolio of ten thousand exotic options requires roughly the same verification effort as a simple transfer: verifier cost is near-constant for pairing-based SNARKs and grows only polylogarithmically for STARKs. This sublinear scaling property allows for the creation of sophisticated financial instruments that were previously too expensive to secure on a public ledger.

Historical Genesis

The lineage of Cryptographic Proof Optimization Techniques and Algorithms traces back to the 1985 introduction of zero-knowledge proofs by Goldwasser, Micali, and Rackoff.

While the initial focus was purely theoretical, the rise of programmable blockchains necessitated a practical application for these concepts. Early iterations, such as the Groth16 construction, provided the succinctness required for blockchain integration but suffered from the requirement of a trusted setup. This dependency created a systemic risk where the compromise of the initial parameters could lead to the undetected minting of assets.

As the demand for more flexible and secure systems grew, the focus shifted toward universal and transparent systems. Bulletproofs removed the trusted setup entirely, at the cost of verification time that grows linearly with circuit size. The later PLONK construction replaced per-circuit ceremonies with a single universal, updatable setup that can be reused across circuits, at the cost of somewhat larger proofs than Groth16. The drive for optimization intensified as decentralized finance began to hit the limits of Ethereum’s execution environment.

Developers realized that general-purpose circuits were inefficient for specialized financial tasks like order matching or volatility surface updates.

The historical trajectory of proof systems is defined by the continuous removal of trust assumptions and the reduction of the computational overhead required for cryptographic certainty.

The maturation of Cryptographic Proof Optimization Techniques and Algorithms was further accelerated by the introduction of STARKs, which rely only on hash functions. This makes them plausibly post-quantum secure and eliminates trusted setups entirely. This era marked the transition from academic curiosity to industrial-grade financial infrastructure. The current state of the art focuses on recursion and folding, where proofs can verify other proofs, allowing an arbitrarily long transactional history to be compressed into a constant-sized proof.

Mathematical Architecture

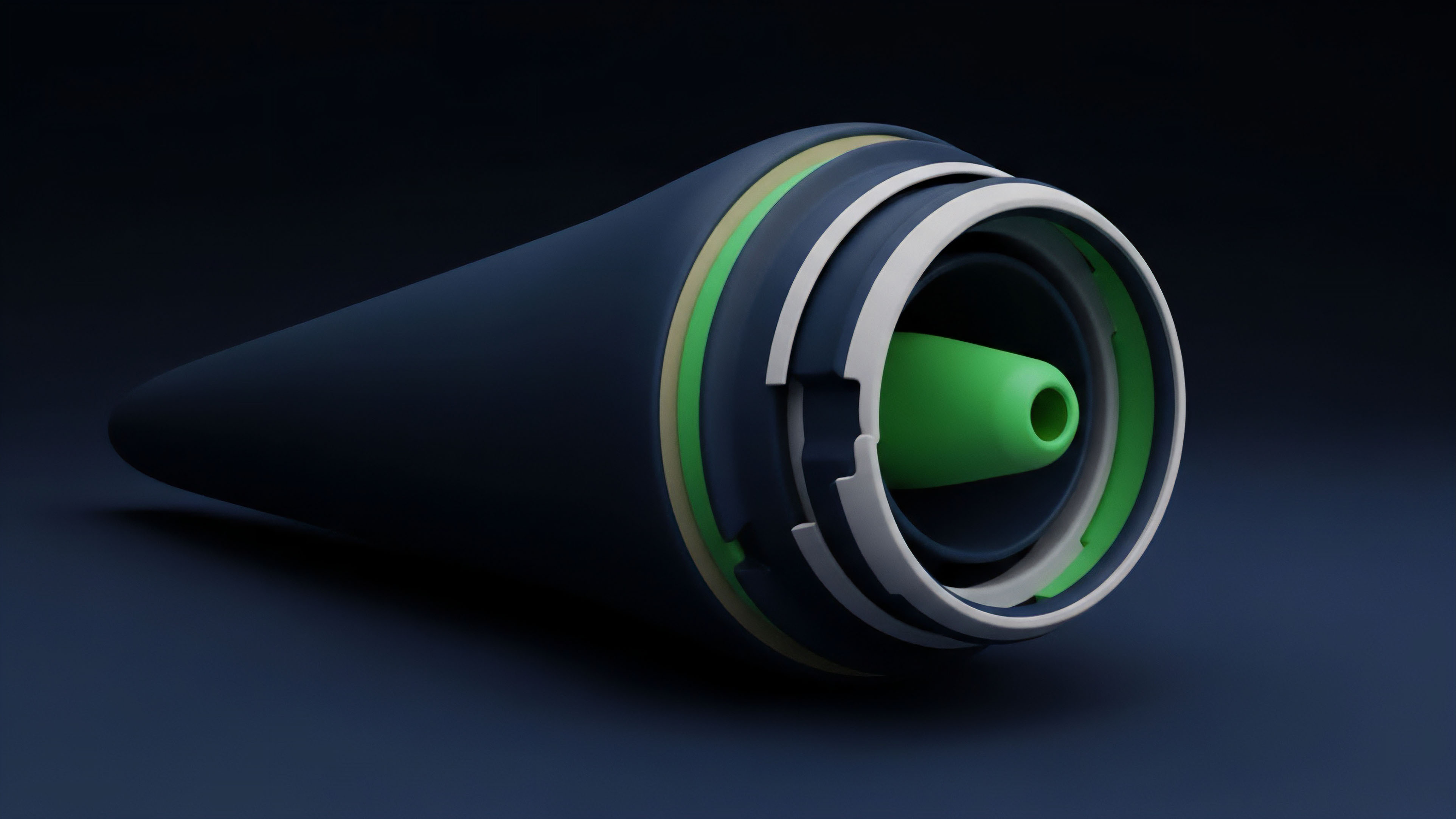

The structural logic of Cryptographic Proof Optimization Techniques and Algorithms rests on two primary pillars: arithmetization and polynomial commitment schemes.

Arithmetization is the process of translating high-level financial logic, such as a Black-Scholes pricing formula, into a system of polynomial equations over a finite field. The efficiency of this translation determines the number of constraints the prover must satisfy. Modern techniques like Plonkish arithmetization allow for custom gates and lookups, which significantly reduce the complexity of non-linear operations common in derivatives pricing.
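As a small illustration of arithmetization, the sketch below flattens the toy statement `y = x**3 + x + 5` into a rank-1 constraint system (R1CS), where every constraint has the form `(A·z) * (B·z) = (C·z)`. The field modulus, the witness layout, and the statement itself are assumptions chosen for readability. A real pricing circuit would additionally need fixed-point encodings and far more constraints.

```python
# Toy R1CS arithmetization of y = x**3 + x + 5 over a prime field.
P = 2**31 - 1  # illustrative prime modulus (assumption)

# Witness layout (assumption): z = [1, x, y, v1, v2]
#   constraint 0: x  * x = v1
#   constraint 1: v1 * x = v2
#   constraint 2: (5 + x + v2) * 1 = y
A = [
    [0, 1, 0, 0, 0],
    [0, 0, 0, 1, 0],
    [5, 1, 0, 0, 1],
]
B = [
    [0, 1, 0, 0, 0],
    [0, 1, 0, 0, 0],
    [1, 0, 0, 0, 0],
]
C = [
    [0, 0, 0, 1, 0],
    [0, 0, 0, 0, 1],
    [0, 0, 1, 0, 0],
]


def dot(row, z):
    """Inner product of one matrix row with the witness vector, mod P."""
    return sum(r * v for r, v in zip(row, z)) % P


def make_witness(x):
    """Compute every intermediate value the constraints refer to."""
    v1 = x * x % P
    v2 = v1 * x % P
    y = (v2 + x + 5) % P
    return [1, x % P, y, v1, v2]


def satisfied(z):
    """Check (A.z) * (B.z) == (C.z) for every constraint row."""
    return all(
        dot(a, z) * dot(b, z) % P == dot(c, z)
        for a, b, c in zip(A, B, C)
    )


z = make_witness(3)
print(z[2], satisfied(z))
```

Three multiplication constraints suffice here. The constraint count is the quantity that custom gates and lookups try to shrink for less friendly operations.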

Polynomial commitment schemes (PCS) serve as the mechanism to prove that the prover knows a polynomial that satisfies the arithmetized constraints without revealing the polynomial itself. The choice of PCS involves a trade-off among proof size, verification speed, and trust assumptions. For instance, KZG commitments offer constant-sized proofs but require a trusted setup, whereas FRI-based commitments used in STARKs are transparent but result in larger proofs.
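The algebraic fact behind a KZG-style opening can be sketched without any elliptic-curve machinery: `p(z) = y` holds exactly when `p(X) - y` is divisible by `(X - z)`. The code below computes the quotient and tests the identity at a random point. This is only the polynomial identity, not a commitment scheme. In KZG the same check is performed on committed values using a pairing and a secret evaluation point from the setup. The modulus and function names are illustrative assumptions.

```python
import secrets

P = 2**61 - 1  # illustrative prime modulus (assumption)


def evaluate(coeffs, x):
    """Horner evaluation; coeffs are ordered from low to high degree."""
    acc = 0
    for c in reversed(coeffs):
        acc = (acc * x + c) % P
    return acc


def open_at(coeffs, z):
    """Return (y, q) such that p(X) - y == q(X) * (X - z)."""
    y = evaluate(coeffs, z)
    q = [0] * (len(coeffs) - 1)
    carry = 0
    # Synthetic division by (X - z), from the highest coefficient down.
    for i in range(len(coeffs) - 1, 0, -1):
        carry = (coeffs[i] + carry * z) % P
        q[i - 1] = carry
    return y, q


def check_opening(coeffs, z, y, q, r):
    """Test the quotient identity at the point r."""
    lhs = (evaluate(coeffs, r) - y) % P
    rhs = evaluate(q, r) * (r - z) % P
    return lhs == rhs


p = [1, 0, 1]            # p(X) = X^2 + 1
y, q = open_at(p, 2)     # claims p(2) = 5 with quotient X + 2
r = secrets.randbelow(P)
print(y, q, check_opening(p, 2, y, q, r))
```

A false claim such as `p(2) = 6` fails the check, because no polynomial quotient exists for it.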

| Component | Primary Function | Optimization Target |
|---|---|---|
| Arithmetization | Logic to Polynomial Translation | Constraint Count Reduction |
| Commitment Scheme | Data Binding and Hiding | Proof Size and Succinctness |
| Lookup Tables | Precomputed Value Retrieval | Non-linear Op Efficiency |
| Recursion/Folding | Proof Aggregation | Verification Cost Amortization |

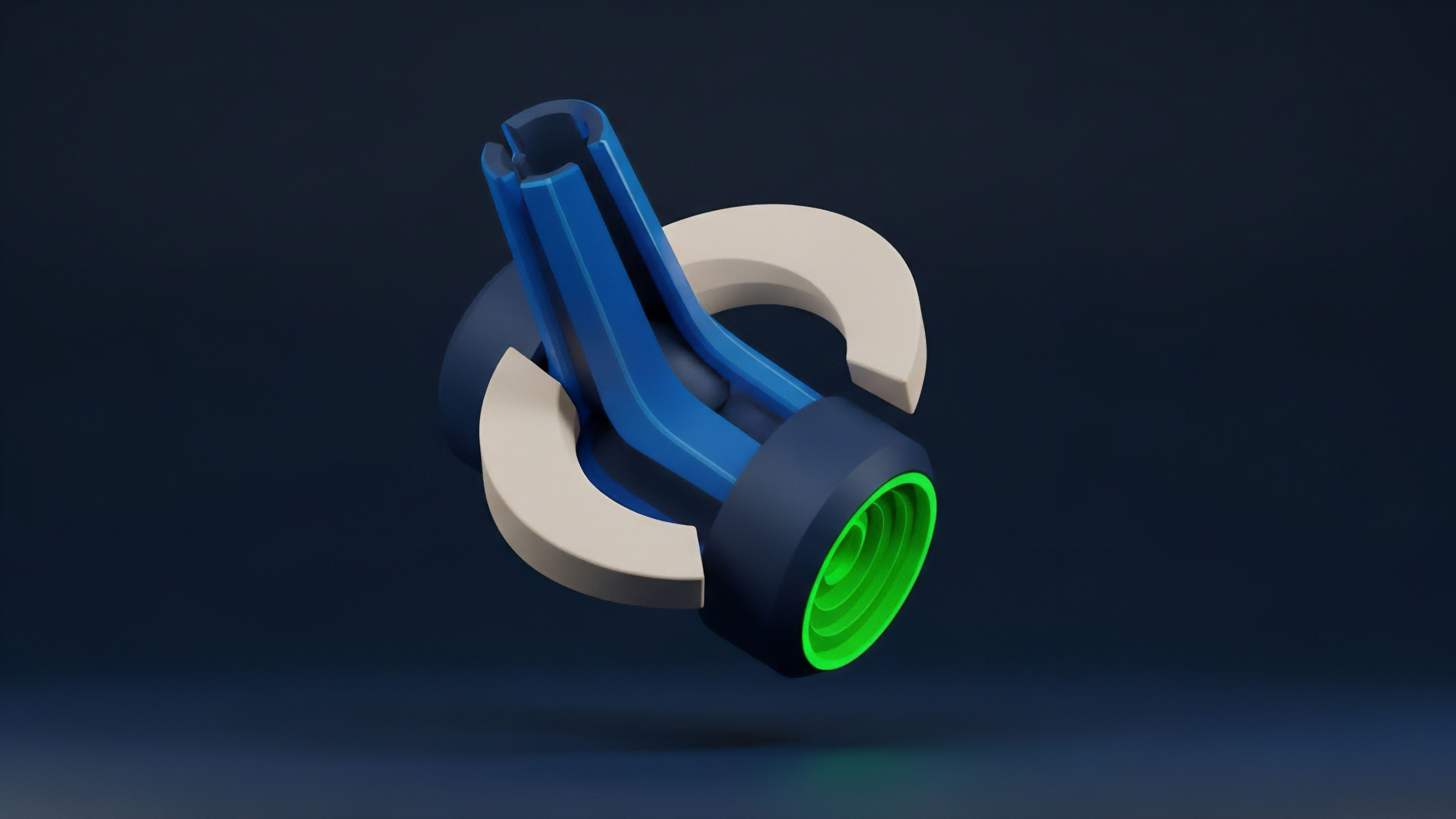

Recursive proof composition allows a prover to generate a proof of the validity of a previous proof. This is achieved by including the verifier logic of the first proof as a circuit within the second. This mathematical nesting is vital for rollups and decentralized options vaults, where thousands of individual trades must be compressed into a single state update.

Folding schemes like Nova take this further by combining the internal states of multiple instances of the same circuit, avoiding the heavy cryptographic machinery of recursion for every step.
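The folding idea can be sketched on a relaxed R1CS relation of the form `(A·z) * (B·z) = u * (C·z) + E`, where `u` is a scalar and `E` an error vector. Two satisfying instances are combined with a random challenge `r` into one instance of the same shape. The sketch omits what makes Nova a secure protocol: the commitments to the witness and error vectors, and the derivation of `r` from a transcript. The one-constraint circuit `x * x = y` and all names are assumptions for illustration.

```python
import secrets

P = 2**61 - 1  # illustrative prime modulus (assumption)

# Single constraint x * x = y over the witness layout z = [1, x, y].
A = [[0, 1, 0]]
B = [[0, 1, 0]]
C = [[0, 0, 1]]


def matvec(M, z):
    return [sum(m * v for m, v in zip(row, z)) % P for row in M]


def relaxed_ok(z, u, E):
    """Check (A.z) * (B.z) == u * (C.z) + E, row by row."""
    Az, Bz, Cz = matvec(A, z), matvec(B, z), matvec(C, z)
    return all(
        (a * b - u * c - e) % P == 0
        for a, b, c, e in zip(Az, Bz, Cz, E)
    )


def fresh(x):
    """A plain R1CS instance viewed as relaxed: u = 1 and E = 0."""
    return [1, x % P, x * x % P], 1, [0]


def fold(inst1, inst2, r):
    """Combine two relaxed instances into one using challenge r."""
    (z1, u1, E1), (z2, u2, E2) = inst1, inst2
    Az1, Bz1, Cz1 = matvec(A, z1), matvec(B, z1), matvec(C, z1)
    Az2, Bz2, Cz2 = matvec(A, z2), matvec(B, z2), matvec(C, z2)
    # Cross term that absorbs the mixed products created by the combination.
    T = [
        (a1 * b2 + a2 * b1 - u1 * c2 - u2 * c1) % P
        for a1, b1, c1, a2, b2, c2 in zip(Az1, Bz1, Cz1, Az2, Bz2, Cz2)
    ]
    z = [(v1 + r * v2) % P for v1, v2 in zip(z1, z2)]
    u = (u1 + r * u2) % P
    E = [(e1 + r * t + r * r * e2) % P for e1, t, e2 in zip(E1, T, E2)]
    return z, u, E


r = 1 + secrets.randbelow(P - 1)  # nonzero challenge
folded = fold(fresh(3), fresh(7), r)
print(relaxed_ok(*folded))
```

The folded instance can be folded again with the next step, so only the final accumulated instance needs a full proof. That is the source of the savings over verifying a complete proof inside a circuit at every step.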

The architecture of modern proof systems relies on the strategic selection of arithmetization methods and commitment schemes to balance prover latency against verifier cost.

Implementation Mechanics

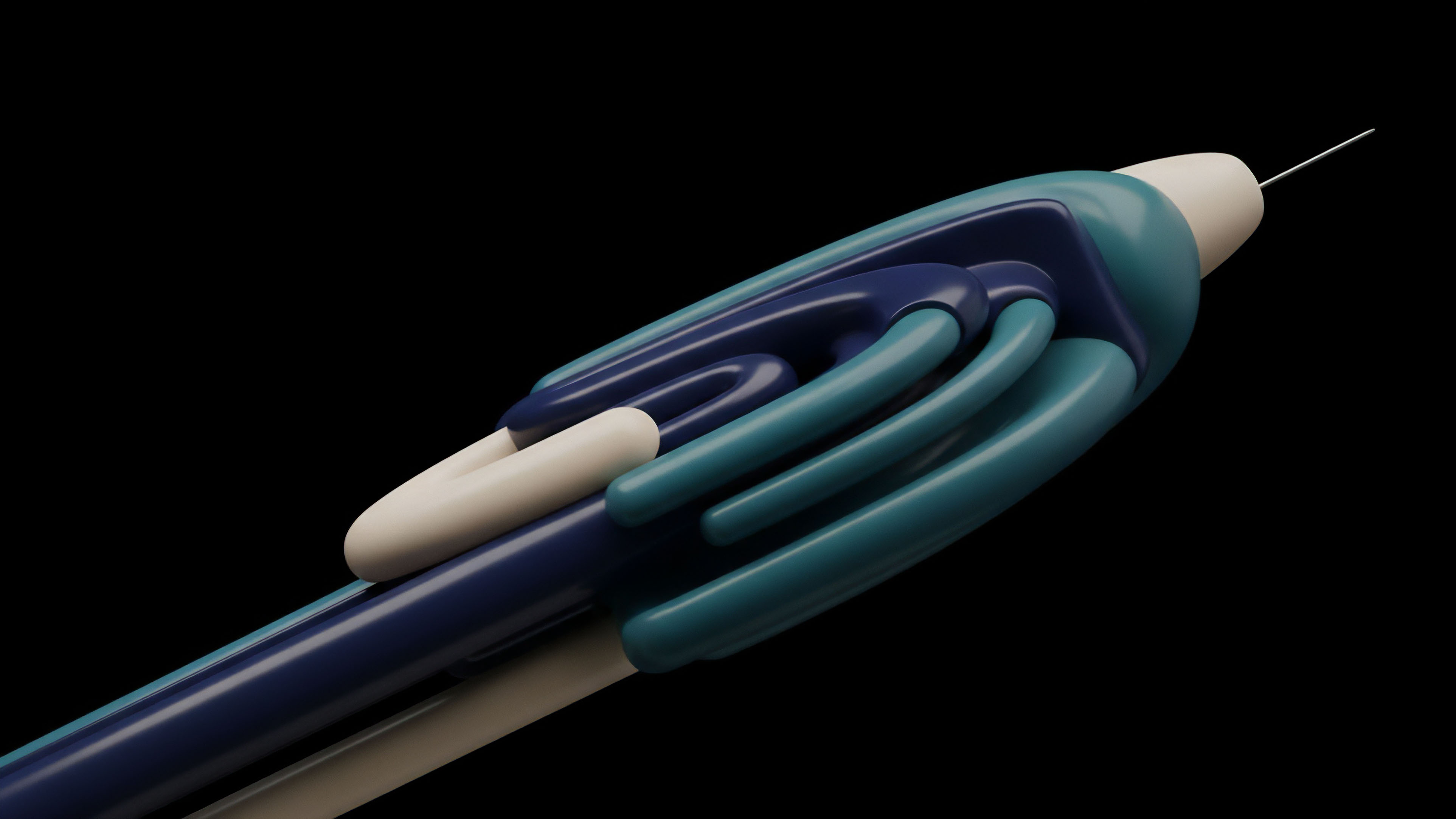

Current deployment of Cryptographic Proof Optimization Techniques and Algorithms involves a multi-layered stack of software and hardware. Software libraries like Halo2, Plonky2, and Gnark provide the primitives for developers to build specialized circuits for financial applications. These libraries focus on optimizing the “Prover” side of the equation, which is the most resource-intensive part of the process.

In a decentralized options exchange, the prover must handle the ingestion of oracle data, the calculation of margin requirements, and the execution of liquidations in a single proof. Lookup arguments, from Plookup to more recent constructions such as Lasso (on which the Jolt zkVM is built), have become a standard tool for optimizing complex operations. Instead of proving a calculation from scratch, the prover simply demonstrates that the input and output exist in a precomputed table of valid results.

This is particularly effective for range checks and bitwise operations, which are notoriously expensive in standard arithmetization.
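One way to see why lookups help with range checks is the logarithmic-derivative identity used by LogUp-style arguments. All witness values `w_i` lie in a table `t_j` exactly when `sum 1/(X - w_i) = sum m_j/(X - t_j)` for some multiplicities `m_j`. The sketch below tests that identity at a random point for an 8-bit range table. It is named here as a swapped-in technique for illustration and is not the Lasso construction mentioned above. It also omits the commitments a real protocol needs. The modulus, table size, and function names are assumptions.

```python
import secrets
from collections import Counter

P = 2**61 - 1             # illustrative prime modulus (assumption)
TABLE = list(range(256))  # all valid 8-bit values


def inv(x):
    """Modular inverse (Python 3.8+)."""
    return pow(x, -1, P)


def multiplicities(witness):
    """How often each table entry occurs in the witness."""
    counts = Counter(witness)
    return [counts.get(t, 0) for t in TABLE]


def logup_check(witness, mult, alpha):
    """Compare both sides of the identity at the point alpha."""
    lhs = sum(inv((alpha - w) % P) for w in witness) % P
    rhs = sum(m * inv((alpha - t) % P) for m, t in zip(mult, TABLE)) % P
    return lhs == rhs


# Sample alpha above 2**32 so it never collides with a small witness or
# table value (assumes every witness value is below 2**32).
alpha = 2**32 + secrets.randbelow(P - 2**32)

good = [5, 5, 17, 255]
bad = [5, 5, 17, 300]   # 300 is outside the 8-bit range
print(logup_check(good, multiplicities(good), alpha))
print(logup_check(bad, multiplicities(bad), alpha))
```

For comparison, a bit-decomposition range check spends one booleanity constraint per bit plus a recomposition constraint for every value, which is the cost lookups avoid.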

- Circuit Compilation: High-level financial logic is compiled into a constraint system, such as R1CS or Plonkish gates.

- Witness Generation: The prover calculates the private inputs (witness) that satisfy the circuit constraints for a specific set of trades.

- Commitment Phase: The prover commits to the witness polynomials using a scheme like KZG or FRI.

- Proof Generation: The prover answers a series of verifier challenges, which the Fiat-Shamir heuristic derives by hashing the transcript so that no interaction is needed, and outputs the final succinct proof.

- On-chain Verification: The verifier contract on the base layer checks the proof against the public inputs and state roots.
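The challenge derivation in the proof-generation step above can be sketched as a hash-based transcript. Prover and verifier absorb the same public inputs and commitments in the same order, so both obtain the same field challenge without exchanging messages. The class name, labels, and modulus are hypothetical. Production libraries use carefully specified transcripts, often with algebraic hash functions, rather than this minimal SHA-256 chain.

```python
import hashlib

P = 2**61 - 1  # illustrative prime modulus (assumption)


class Transcript:
    """Minimal Fiat-Shamir transcript: absorb bytes, squeeze challenges."""

    def __init__(self, label: bytes):
        self._state = hashlib.sha256(label).digest()

    def absorb(self, data: bytes) -> None:
        # Length-prefix the data so distinct message sequences hash differently.
        prefix = len(data).to_bytes(8, "big")
        self._state = hashlib.sha256(self._state + prefix + data).digest()

    def challenge(self) -> int:
        # Update the state so that successive challenges differ.
        self._state = hashlib.sha256(self._state + b"challenge").digest()
        return int.from_bytes(self._state, "big") % P


def derive_challenge(public_inputs: bytes, commitment: bytes) -> int:
    t = Transcript(b"toy-margin-proof")  # hypothetical protocol label
    t.absorb(public_inputs)
    t.absorb(commitment)
    return t.challenge()


prover_side = derive_challenge(b"state-root", b"commitment-bytes")
verifier_side = derive_challenge(b"state-root", b"commitment-bytes")
print(prover_side == verifier_side)
```

Because the challenge depends on every absorbed message, a prover who alters a commitment after the fact receives a different challenge and cannot reuse the earlier response.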

Hardware acceleration is the next frontier for Cryptographic Proof Optimization Techniques and Algorithms. General-purpose CPUs are inefficient at the large-scale modular arithmetic, Fast Fourier Transforms (FFTs), and multi-scalar multiplications (MSMs) required for proof generation. The industry is shifting toward GPUs and specialized ASICs (Application-Specific Integrated Circuits) to reduce prover latency.

This hardware-software co-design is critical for maintaining the competitive edge of decentralized derivatives against centralized platforms that operate with microsecond latency.

| Hardware Type | Strength | Role in Proof Generation |
|---|---|---|
| CPU | General Logic | Witness Generation and Orchestration |
| GPU | Parallelism | FFT and Multi-Scalar Multiplication |
| FPGA | Flexibility | Custom Pipeline Prototyping |
| ASIC | Maximum Efficiency | Production-grade High-speed Proving |
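The FFT workload assigned to GPUs above is, in proof systems, a number-theoretic transform (NTT): an FFT over a finite field that evaluates a polynomial at all powers of a root of unity. The recursive sketch below uses the toy field of integers mod 17, where 2 is a primitive 8th root of unity. These parameters are assumptions for illustration. Production provers use much larger fields and iterative, parallel implementations.

```python
P = 17      # toy prime modulus (assumption)
OMEGA = 2   # primitive 8th root of unity mod 17: 2**8 % 17 == 1, 2**4 % 17 == 16


def ntt(coeffs, omega):
    """Evaluate the polynomial at omega**0 .. omega**(n-1); n is a power of two."""
    n = len(coeffs)
    if n == 1:
        return coeffs[:]
    # Split into even and odd coefficients and recurse with omega squared.
    even = ntt(coeffs[0::2], omega * omega % P)
    odd = ntt(coeffs[1::2], omega * omega % P)
    out = [0] * n
    w = 1
    for k in range(n // 2):
        t = w * odd[k] % P
        out[k] = (even[k] + t) % P
        out[k + n // 2] = (even[k] - t) % P  # omega**(n/2) == -1
        w = w * omega % P
    return out


def naive_eval(coeffs, omega):
    """Quadratic-time reference: evaluate at each power of omega directly."""
    n = len(coeffs)
    return [
        sum(c * pow(omega, i * k, P) for i, c in enumerate(coeffs)) % P
        for k in range(n)
    ]


coeffs = [1, 2, 3, 4, 5, 6, 7, 8]
print(ntt(coeffs, OMEGA) == naive_eval(coeffs, OMEGA))
```

Each butterfly in the inner loop is independent of the others at the same recursion level, which is why the transform maps well onto the parallel hardware listed in the table.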

Systemic Maturation

The transition from general-purpose ZK-VMs to specialized financial circuits marks the maturation of Cryptographic Proof Optimization Techniques and Algorithms. Early DeFi protocols attempted to run entire execution environments inside a proof, which introduced significant overhead. Modern strategies involve “App-specific” circuits where the constraints are tailored to the specific math of options and futures. This specialization can reduce proof generation time from minutes to seconds, making real-time risk management feasible.

Market microstructure in a ZK-enabled world is fundamentally different. Privacy-preserving dark pools utilize Cryptographic Proof Optimization Techniques and Algorithms to allow traders to prove they have the collateral for a large order without revealing their position or strategy to the market. This mitigates front-running and MEV (Maximal Extractable Value) while maintaining the security of a cleared exchange. The evolution of these techniques has moved the focus from simple transaction privacy to complex state privacy.

The risk of prover centralization is a significant concern in the current maturation phase. Because proof generation is computationally expensive, there is a tendency for it to be outsourced to specialized “Prover Markets.” While this increases efficiency, it introduces a new dependency on a small number of high-performance operators. Protocols are now integrating incentive structures to decentralize the prover role, so that no single entity can censor the verification of financial states.

- Prover Market Dynamics: The emergence of a secondary market where users pay provers to generate proofs for their transactions.

- Proof-as-a-Service: Specialized firms offering high-speed cryptographic verification for institutional DeFi.

- Circuit Auditing: The development of formal verification tools to ensure that optimized circuits do not contain logic errors or “soundness” bugs.

Terminal Trajectory

The future of Cryptographic Proof Optimization Techniques and Algorithms lies in the total commoditization of proof generation. As specialized ASICs become ubiquitous, the cost of proving will drop toward the cost of electricity. This will enable “Instant Finality” for options markets, where every trade is accompanied by a proof of solvency and a proof of correct execution that is verified by the network in milliseconds. The clearinghouse of the future is not a legal entity but a cryptographic verifier.

The synthesis of folding schemes and hardware acceleration will likely lead to a state where the entire history of a global financial system can be verified on a mobile device. This level of accessibility will force a radical shift in regulatory frameworks. If the math provides a guarantee of solvency and compliance, the need for traditional reporting and oversight diminishes. The regulator’s role will shift from monitoring entities to auditing the circuits and the Cryptographic Proof Optimization Techniques and Algorithms themselves.

A novel conjecture arises: the decoupling of execution from verification will create a “Proof-Native” asset class. These are assets whose value and utility are derived from their ability to be instantly and privately verified across any chain or jurisdiction. This would lead to the creation of a Zero-Knowledge Contingent Margin Protocol (ZKCMP), a system where margin calls are executed automatically via proofs without the need for a central liquidator. The protocol would monitor the volatility of the underlying assets and generate proofs of margin sufficiency in real-time, only triggering a liquidation when the math dictates it.

The ultimate question remains: if verification becomes nearly free and instantaneous, does the concept of a “trusted” intermediary retain any economic utility beyond providing a legal backstop for physical assets?

Glossary

Modular Arithmetic

Greeks Sensitivity

FRI Protocol

Automated Liquidations

Halo2

Completeness Property

Order Flow

Layer 2 Scalability

On-Chain Margin Engines