Essence

Cryptographic Proof Optimization Strategies represent the mathematical and architectural refinements aimed at reducing the computational overhead, latency, and data footprint of zero-knowledge proofs and succinct non-interactive arguments of knowledge. These methodologies compress complex computational histories into verifiable digests, allowing a single node to validate the integrity of an entire network’s state transition without re-executing every transaction. The primary objective involves achieving a state where the cost of verification remains constant or grows logarithmically relative to the complexity of the computation being proven.

The implementation of these strategies dictates the feasibility of privacy-preserving decentralized finance. By minimizing the proof size and the time required for generation, these techniques enable mobile devices and low-power hardware to participate in secure state validation. This efficiency shift moves the bottleneck of blockchain scaling from bandwidth and storage to the raw throughput of cryptographic provers.

Succinctness in cryptographic proofs enables the validation of massive datasets through minimal computational resources.

The systemic implication of these optimizations extends to the very nature of trust in digital markets. When proofs become cheap and fast, the need for centralized intermediaries to attest to the validity of transactions largely disappears. Financial protocols can then operate under cryptographic soundness guarantees, ensuring that every margin call, liquidation, and option settlement adheres to the predefined logic of the smart contract without exposing sensitive trade data to the public ledger.

Origin

The genesis of these strategies lies in the tension between blockchain transparency and the prohibitive costs of redundant computation.

Early cryptographic constructions required extensive interaction or massive proof sizes that hindered the throughput of distributed ledgers. The “verifier’s dilemma,” where nodes must choose between trustless validation and rapid synchronization, necessitated a move toward non-interactive proofs that could be verified in milliseconds. The timeline of development highlights a shift from theoretical curiosity to practical financial infrastructure:

- Pinocchio Protocol established the early framework for verifiable computation using Quadratic Arithmetic Programs, though it required significant per-circuit setup overhead.

- Groth16 introduced some of the most compact proofs of any pairing-based system, becoming the standard for early privacy-centric assets, despite the risk associated with its circuit-specific trusted setup.

- PlonK utilized a universal and updateable setup, allowing a single ceremony to support a wide range of circuit designs, which greatly increased the flexibility for developers building complex derivatives.

- Halo2 removed the need for a trusted setup by building on an inner-product-argument commitment scheme, and its accumulation technique made recursive proof composition practical, paving the way for transparent proof systems that scale through recursion.

These advancements were driven by the realization that for decentralized options and futures to compete with centralized exchanges, they must offer the same execution speed while maintaining the security guarantees of a blockchain. The evolution of these proofs mirrors the history of compression algorithms, where the goal is always to transmit the maximum amount of information with the minimum number of bits.

Theory

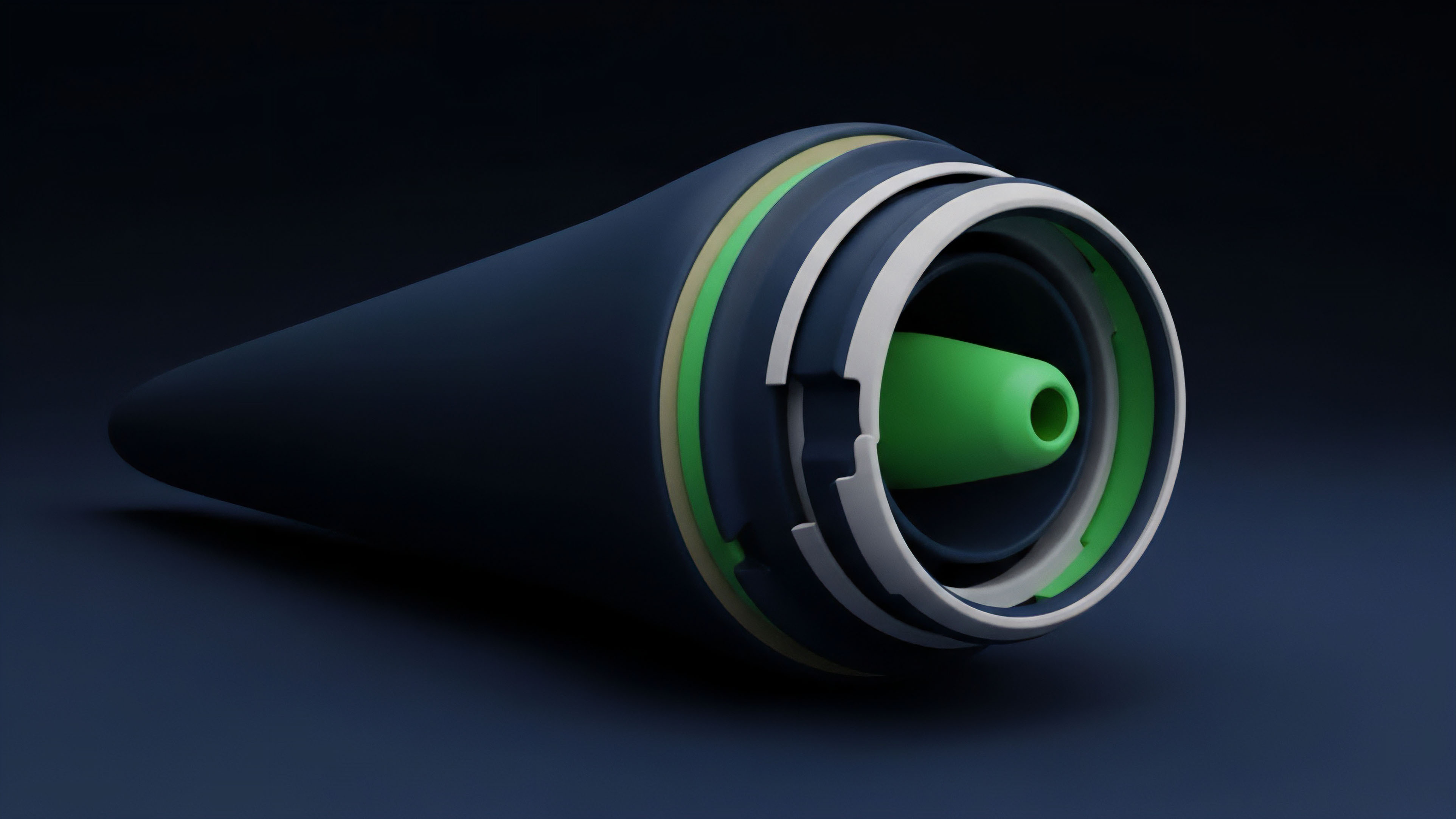

The structural integrity of modern proof systems relies on polynomial commitment schemes that facilitate succinctness. By representing computations as polynomials of bounded degree, provers demonstrate execution correctness through evaluation at random points. Soundness rests on the Schwartz-Zippel Lemma, which bounds how often two distinct polynomials can agree at a randomly chosen point.
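The random-evaluation idea can be illustrated with a minimal sketch. It assumes an illustrative prime modulus and hypothetical helper names (`evaluate`, `probably_equal`) that are not taken from any particular proof system. Two distinct polynomials of degree at most d agree on at most d points, so a single random evaluation over a field of size p wrongly accepts with probability at most d/p.

```python
import random

# Illustrative prime modulus (the Mersenne prime 2**61 - 1). This is an
# assumption for the sketch; real systems use the field of their proof system.
P = 2**61 - 1


def evaluate(coeffs, x, p=P):
    """Evaluate a polynomial (lowest-degree coefficient first) at x mod p
    using Horner's rule."""
    acc = 0
    for c in reversed(coeffs):
        acc = (acc * x + c) % p
    return acc


def probably_equal(f, g, trials=1, p=P, rng=random):
    """Random-point identity test: returns True if f and g agree at every
    sampled point, False as soon as one evaluation differs."""
    for _ in range(trials):
        r = rng.randrange(p)
        if evaluate(f, r, p) != evaluate(g, r, p):
            return False
    return True


f = [1, 2, 1]  # 1 + 2x + x^2, i.e. (x + 1)^2
g = [1, 2, 1]  # the same polynomial
h = [1, 3, 1]  # 1 + 3x + x^2, differs from f in one coefficient

rng = random.Random(0)  # seeded so the sketch is reproducible
same = probably_equal(f, g, rng=rng)
different = probably_equal(f, h, trials=8, rng=rng)
```

Here `f` and `h` differ by the polynomial x, which vanishes only at zero, so the test fails to separate them only if every sampled point is zero. In a real protocol the verifier never sees the coefficients; a polynomial commitment scheme lets the prover open the committed polynomials at the random point instead.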

This mathematical abstraction allows the verifier to check the validity of a statement without ever seeing the full execution trace. The efficiency of these systems is often measured by the trade-offs between proof size, prover time, and verification complexity. Different commitment schemes offer varying performance profiles:

| Commitment Scheme | Proof Size | Prover Time | Transparency |
|---|---|---|---|
| KZG | Constant | Linear | Trusted Setup |
| FRI | Logarithmic | Quasi-linear | Transparent |
| Inner Product Argument | Logarithmic | Linear | Transparent |

Arithmetization, the process of converting computer programs into mathematical equations, serves as the bridge between code and proof. Modern systems use PlonKish arithmetization, which allows for custom gates and lookup tables. These features enable the prover to handle complex operations like range checks and hash functions with significantly fewer constraints than traditional R1CS frameworks.

This reduction in constraint count directly translates to faster proof generation and lower memory requirements for the prover.
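To make the R1CS baseline concrete, the sketch below arithmetizes the toy statement "I know x such that x^3 + x + 5 = out" as four rank-1 constraints of the form (A·w) * (B·w) = (C·w). The witness layout, the helper names, and the prime modulus are assumptions chosen for illustration, not the format of any specific library.

```python
P = 2**61 - 1  # illustrative prime field, an assumption for this sketch


def dot(row, w, p=P):
    """Inner product of a constraint row with the witness vector, mod p."""
    return sum(a * b for a, b in zip(row, w)) % p


def r1cs_satisfied(A, B, C, w, p=P):
    """Check (A_i . w) * (B_i . w) == (C_i . w) mod p for every constraint i."""
    return all(
        (dot(a, w, p) * dot(b, w, p) - dot(c, w, p)) % p == 0
        for a, b, c in zip(A, B, C)
    )


# Witness layout (hypothetical): [1, x, out, v1, v2, v3]
A = [
    [0, 1, 0, 0, 0, 0],  # x
    [0, 0, 0, 1, 0, 0],  # v1
    [0, 1, 0, 0, 1, 0],  # x + v2
    [5, 0, 0, 0, 0, 1],  # 5 + v3
]
B = [
    [0, 1, 0, 0, 0, 0],  # x
    [0, 1, 0, 0, 0, 0],  # x
    [1, 0, 0, 0, 0, 0],  # 1
    [1, 0, 0, 0, 0, 0],  # 1
]
C = [
    [0, 0, 0, 1, 0, 0],  # v1  = x * x
    [0, 0, 0, 0, 1, 0],  # v2  = v1 * x
    [0, 0, 0, 0, 0, 1],  # v3  = x + v2
    [0, 0, 1, 0, 0, 0],  # out = v3 + 5
]


def witness(x, p=P):
    """Build the full witness vector for a given private input x."""
    v1 = x * x % p
    v2 = v1 * x % p
    v3 = (x + v2) % p
    out = (v3 + 5) % p
    return [1, x, out, v1, v2, v3]


w = witness(3)                  # 3^3 + 3 + 5 = 35
honest = r1cs_satisfied(A, B, C, w)

tampered = list(w)
tampered[2] = 36                # claim a wrong output
cheating = r1cs_satisfied(A, B, C, tampered)
```

Each R1CS row permits a single multiplication, so even this small cubic needs four constraints and three intermediate values. A PlonKish custom gate could, in principle, express the same relation in fewer rows, which is the saving the paragraph above describes.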

Polynomial commitments function as the mathematical anchor for verifying state transitions without revealing underlying data.

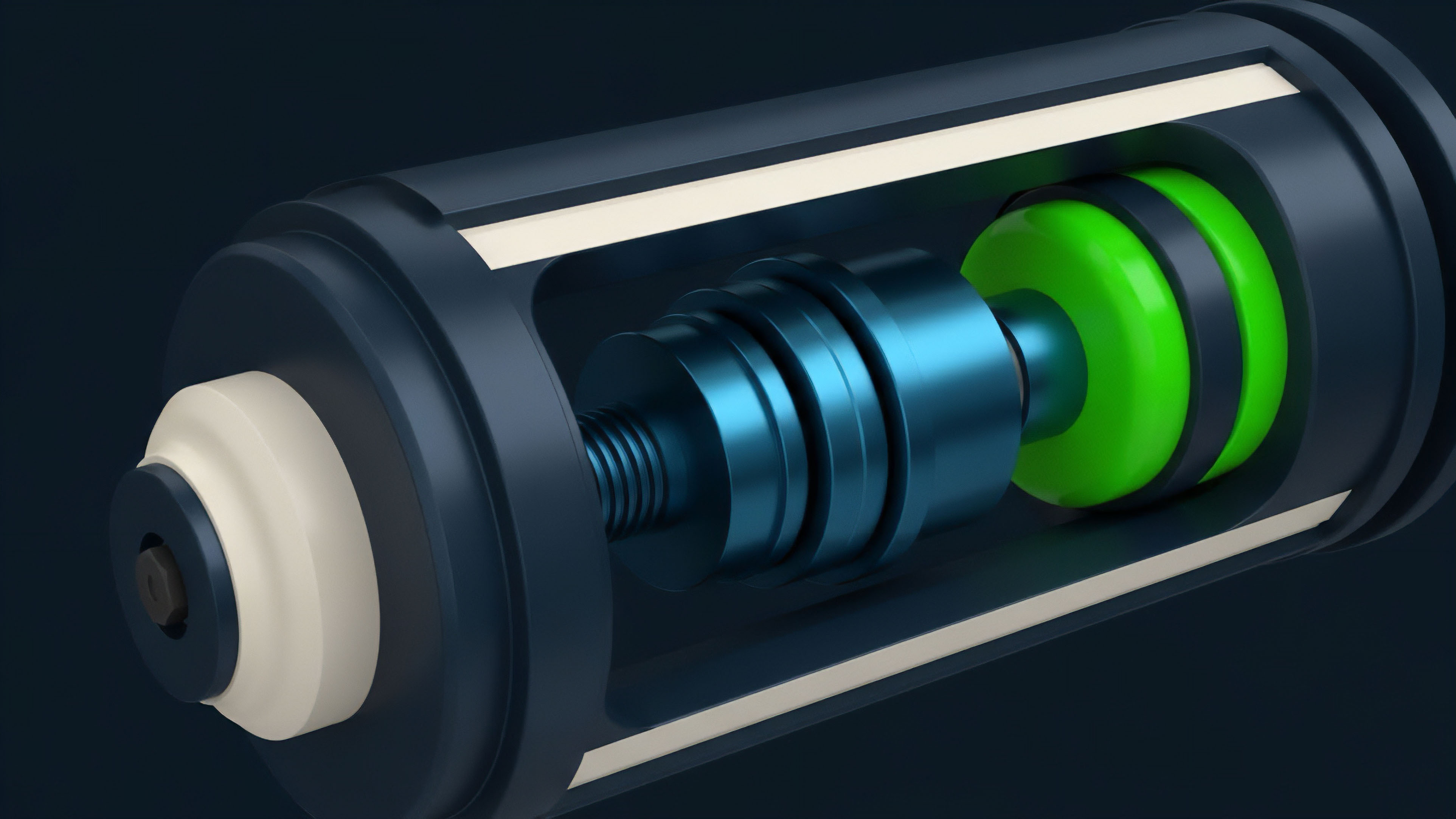

Recursion stands as the most advanced theoretical pillar in this domain. By allowing a proof to verify another proof, systems can aggregate thousands of transactions into a single validity certificate. This hierarchical structure creates a massive reduction in the data that must be posted on-chain, effectively decoupling the cost of security from the volume of transactions.
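The effect of this hierarchy can be approximated with a simple cost model. It is only an arithmetic sketch: the function names, the fan-in, and the gas figure used in the example are hypothetical, and it ignores the prover cost of each recursive layer.

```python
def aggregation_levels(num_proofs, fan_in):
    """Number of recursion layers needed to fold num_proofs into one proof
    when each recursive proof verifies up to fan_in child proofs."""
    if num_proofs < 1 or fan_in < 2:
        raise ValueError("need num_proofs >= 1 and fan_in >= 2")
    levels = 0
    remaining = num_proofs
    while remaining > 1:
        remaining = -(-remaining // fan_in)  # ceiling division
        levels += 1
    return levels


def amortized_onchain_cost(verify_cost, num_transactions):
    """On-chain verification cost per transaction when a single final proof
    covers the whole batch."""
    return verify_cost / num_transactions


levels_binary = aggregation_levels(1024, 2)    # 10 layers
levels_wide = aggregation_levels(1000, 10)     # 3 layers
per_tx = amortized_onchain_cost(300_000, 1000)  # hypothetical gas figure
```

Under these assumptions the number of layers grows logarithmically with the batch size, while the on-chain cost per transaction falls in proportion to it, because only the final proof is posted and verified.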

Approach

Current methodologies focus on the integration of lookup tables to bypass the high cost of bitwise operations within arithmetic circuits.

These tables allow the prover to reference pre-computed values, significantly reducing the number of constraints required for complex operations. This technique is particularly effective for implementing standardized financial formulas, such as the Black-Scholes model, within a zero-knowledge environment. The physical layer of proof generation has moved toward specialized hardware to handle the intense computational demands of Multi-Scalar Multiplication and Number Theoretic Transforms.
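The saving from lookup tables described above can be sketched for a range check. The constraint-counting conventions here (one booleanity constraint per bit, one lookup per chunk, one recomposition constraint) are simplifying assumptions, and the function names are hypothetical rather than drawn from a real proving library.

```python
def bit_decomposition_constraints(n_bits):
    """Constraints for a bitwise range check: one booleanity constraint
    b * (b - 1) = 0 per bit, plus one recomposition constraint."""
    return n_bits + 1


def lookup_constraints(n_bits, table_bits):
    """Constraints for a lookup-based range check: one lookup per
    table_bits-wide chunk, plus one recomposition constraint."""
    chunks = -(-n_bits // table_bits)  # ceiling division
    return chunks + 1


def split_into_chunks(value, table_bits, num_chunks):
    """Prover side: split value into little-endian chunks of table_bits bits."""
    mask = (1 << table_bits) - 1
    return [(value >> (i * table_bits)) & mask for i in range(num_chunks)]


def lookup_range_check(value, chunks, table_bits):
    """Verifier-side model: every chunk must appear in the precomputed table
    and the chunks must recompose to the claimed value."""
    table = frozenset(range(1 << table_bits))
    in_table = all(c in table for c in chunks)
    recomposed = sum(c << (i * table_bits) for i, c in enumerate(chunks))
    return in_table and recomposed == value


bitwise_cost = bit_decomposition_constraints(64)  # 65
lookup_cost = lookup_constraints(64, 16)          # 5

chunks = split_into_chunks(0xDEADBEEF, 16, 2)
in_range = lookup_range_check(0xDEADBEEF, chunks, 16)
```

With a 16-bit table, a 64-bit range check drops from 65 constraints to 5 under this counting. The model leaves out the cost of the lookup argument itself, which real systems pay once per table rather than once per check.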

The distribution of labor in modern prover networks follows several distinct paths:

- Field Programmable Gate Arrays provide a balance of flexibility and speed, allowing for the optimization of specific cryptographic primitives without the high cost of custom silicon.

- Application Specific Integrated Circuits represent the peak of efficiency, offering the highest throughput for proof generation at the cost of being locked into specific elliptic curves and finite fields.

- Distributed Prover Markets utilize a decentralized network of nodes to compete for the right to generate proofs, ensuring that the system remains censorship-resistant and resilient to single points of failure.

- GPU Acceleration leverages the parallel processing power of modern graphics cards to handle the massive polynomial evaluations required by STARK-based systems.
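One of the kernels these hardware targets accelerate is the Number Theoretic Transform, the finite-field analogue of the FFT. The following is a minimal recursive radix-2 sketch, assuming the commonly used NTT-friendly prime 998244353 with primitive root 3; production provers use fields dictated by their proof system and heavily optimized iterative implementations.

```python
MOD = 998244353  # 119 * 2**23 + 1, an NTT-friendly prime (assumed for this sketch)
GENERATOR = 3    # a primitive root modulo MOD


def _ntt(a, root):
    """Recursive radix-2 transform of a (length a power of two) using the
    given primitive len(a)-th root of unity."""
    n = len(a)
    if n == 1:
        return a
    root_sq = root * root % MOD
    even = _ntt(a[0::2], root_sq)
    odd = _ntt(a[1::2], root_sq)
    out = [0] * n
    w = 1
    for k in range(n // 2):
        t = w * odd[k] % MOD
        out[k] = (even[k] + t) % MOD
        out[k + n // 2] = (even[k] - t) % MOD
        w = w * root % MOD
    return out


def ntt(values, invert=False):
    """Forward or inverse NTT of a list whose length is a power of two."""
    n = len(values)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a nonzero power of two")
    root = pow(GENERATOR, (MOD - 1) // n, MOD)
    if invert:
        root = pow(root, MOD - 2, MOD)  # modular inverse via Fermat
    out = _ntt(list(values), root)
    if invert:
        n_inv = pow(n, MOD - 2, MOD)
        out = [v * n_inv % MOD for v in out]
    return out


def poly_mul(f, g):
    """Multiply two polynomials (lowest-degree coefficient first) mod MOD
    by pointwise multiplication in the transformed domain."""
    result_len = len(f) + len(g) - 1
    size = 1
    while size < result_len:
        size *= 2
    fa = ntt(f + [0] * (size - len(f)))
    ga = ntt(g + [0] * (size - len(g)))
    prod = [x * y % MOD for x, y in zip(fa, ga)]
    return ntt(prod, invert=True)[:result_len]


product = poly_mul([1, 2, 1], [1, 1])  # (1 + x)^2 * (1 + x) = (1 + x)^3
```

The butterfly loop in `_ntt` is the part that parallelizes well: each layer performs independent multiply-add pairs, which is why GPUs, FPGAs, and ASICs can all speed it up substantially.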

Software-level optimizations involve the use of specialized elliptic curves, such as the Pasta curves (Pallas and Vesta), which are designed specifically for efficient recursive proof composition. These curves form a cycle in which the base field of each is the scalar field of the other, so a proof produced over one curve can be verified inside a circuit over the other without the massive overhead of non-native arithmetic.

Evolution

The transition from circuits requiring trusted setups to transparent, universal schemes reflects a strategic shift toward systemic resilience. Groth16 offered unmatched verification speed but suffered from per-circuit ceremony risks, leading to the adoption of universal systems.

This shift allowed developers to iterate on financial products, such as new option expiries or strike prices, without needing a new security ceremony for every change. The following table outlines the generational shifts in proof architecture:

| Generation | Primary Focus | Key Innovation | Financial Impact |
|---|---|---|---|
| First | Privacy | Groth16 SNARKs | Anonymous Transfers |
| Second | Universal Use | PlonK Arithmetization | Flexible DeFi Logic |
| Third | Scalability | Recursive STARKs | High-Throughput L2s |

The emergence of zkEVM technology represents the latest stage of this evolution. By creating a zero-knowledge version of the Ethereum Virtual Machine, these strategies allow existing smart contracts to benefit from cryptographic proofs with little or no modification to their underlying code, depending on how closely a given zkEVM matches EVM semantics. This preserves the network effects of existing liquidity while providing the scaling benefits of validity proofs.

Hardware specialization transforms cryptographic proof generation from a software bottleneck into a high-throughput commodity.

The market has also seen a move toward “proof aggregation,” where multiple proofs from different applications are combined into one. This shared security model reduces the marginal cost of verification for every participant, creating a more efficient ecosystem for high-frequency trading and complex derivative settlement.

Horizon

The future of proof optimization centers on the commoditization of prover infrastructure. As specialized hardware becomes ubiquitous, the time required to generate a proof for a complex financial transaction will drop to sub-second levels. This near-instant proof generation will enable real-time, privacy-preserving order books that rival the performance of centralized exchanges while maintaining full self-custody of assets.

The integration of these strategies into cross-chain communication will redefine liquidity. State proofs will allow assets to move between disparate networks with the same security as a local transaction, eliminating the risks associated with traditional multisig bridges. This creates a unified global liquidity pool where capital can flow to the most efficient yield opportunities without friction.

Advanced research into lattice-based cryptography suggests a path toward quantum-resistant proofs. While current elliptic curve-based systems are vulnerable to future quantum computers, new optimization strategies are being developed to make post-quantum proofs small enough for practical use. This forward-looking approach ensures that the financial infrastructure being built today will remain secure for decades to come.

The ultimate destination is a “proof-of-everything” architecture. In this world, every piece of financial data, from credit scores to portfolio risk, is represented by a succinct cryptographic proof. This allows for a hyper-efficient market where information is shared only on a need-to-know basis, and every participant can verify the solvency and integrity of their counterparties with a single mathematical check.
