Essence

Cryptographic Proof Complexity Optimization and Efficiency is concerned with reducing the computational overhead required to validate state transitions within decentralized ledgers. It represents a mathematical shift from exhaustive re-execution of logic to the verification of succinct certificates. By minimizing the size of these proofs and the time required to generate them, protocols can scale throughput while preserving the security guarantees of the underlying base layer.

This mechanism ensures that complex financial instruments, such as multi-leg options or cross-margined derivatives, can execute with the speed of centralized venues while maintaining the censorship resistance of a distributed network.

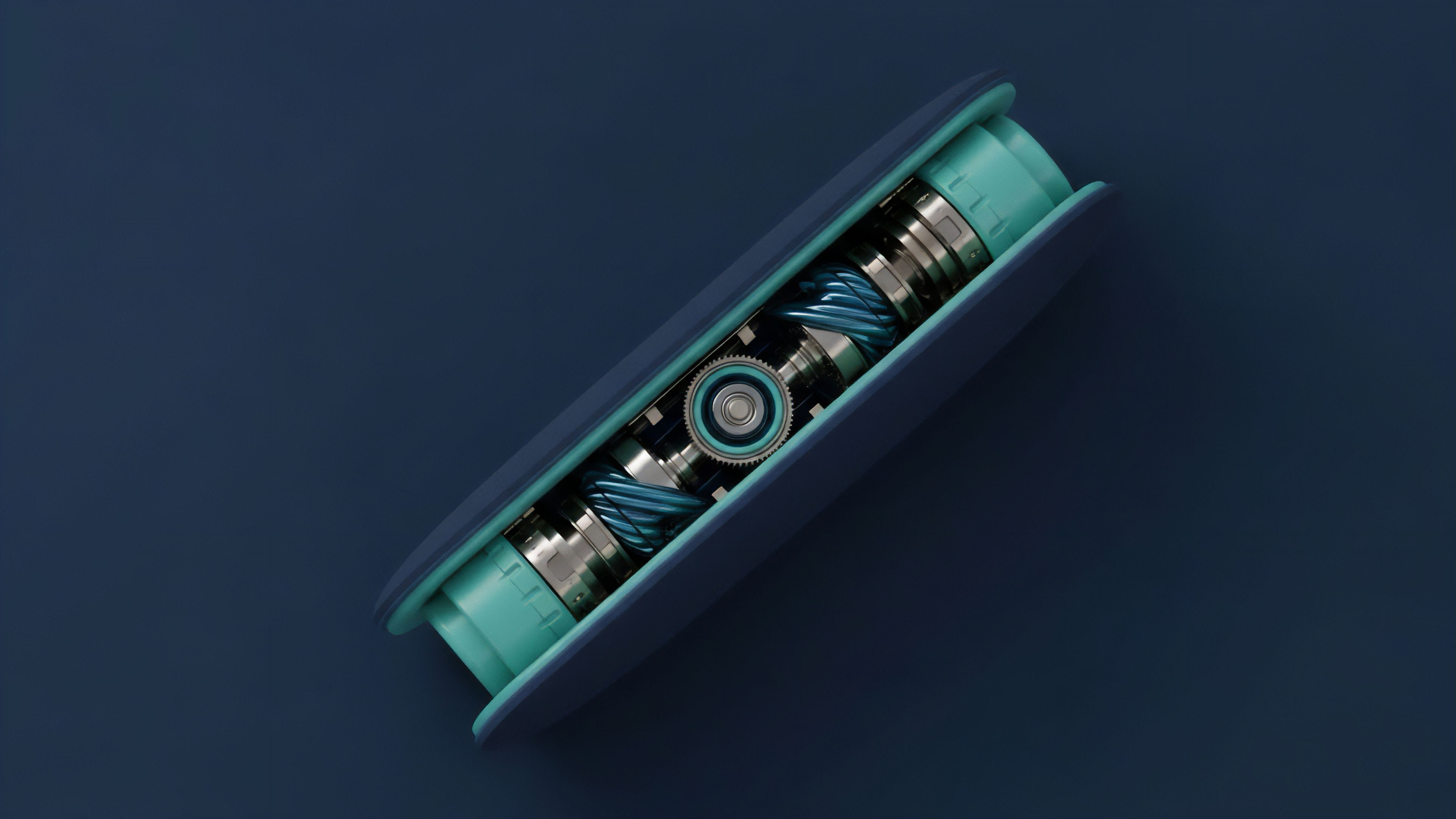

Cryptographic Proof Complexity Optimization and Efficiency functions as the mathematical engine for trustless scaling by converting vast computational workloads into small, verifiable data packets.

Within the domain of digital asset derivatives, this optimization dictates the upper bounds of capital efficiency. High proof complexity results in delayed settlement and increased gas costs, which translate directly into wider bid-ask spreads and reduced liquidity depth. Conversely, efficient proof systems enable the compression of thousands of transactions into a single validity proof, allowing for real-time risk management and instant margin updates.

This technical refinement is the prerequisite for a financial operating system that operates without intermediaries, where the validity of a trade is a mathematical certainty rather than a probabilistic outcome.

Computational Succinctness

The pursuit of succinctness involves a trade-off between prover time, proof size, and verifier complexity. In a market environment, the verifier is typically a smart contract with limited gas resources. Therefore, the optimization of proof complexity is a direct attempt to lower the barrier for on-chain verification.

Systems that utilize SNARKs or STARKs rely on arithmetization to translate code into polynomial equations. The efficiency of this translation determines the throughput of the entire derivative platform.
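To make the arithmetization step concrete, the sketch below encodes the toy computation y = x^3 + x + 5 as a Rank-1 Constraint System and checks a witness against it. The field modulus `P`, the witness layout, and the helper names are illustrative assumptions and are not taken from any particular proof system.

```python
# Toy R1CS: every constraint has the form (A_i . z) * (B_i . z) == (C_i . z) mod P.
P = 2**31 - 1  # small prime field, chosen for illustration only


def dot(row, z):
    """Inner product of one constraint row with the witness vector, mod P."""
    return sum(a * b for a, b in zip(row, z)) % P


def r1cs_satisfied(A, B, C, z):
    """Return True if the witness z satisfies every rank-1 constraint."""
    return all(dot(a, z) * dot(b, z) % P == dot(c, z) for a, b, c in zip(A, B, C))


# Assumed witness layout: z = [1, x, y, v1, v2]
#   constraint 1: x  * x = v1
#   constraint 2: v1 * x = v2
#   constraint 3: (5 + x + v2) * 1 = y
A = [[0, 1, 0, 0, 0], [0, 0, 0, 1, 0], [5, 1, 0, 0, 1]]
B = [[0, 1, 0, 0, 0], [0, 1, 0, 0, 0], [1, 0, 0, 0, 0]]
C = [[0, 0, 0, 1, 0], [0, 0, 0, 0, 1], [0, 0, 1, 0, 0]]


def witness(x):
    """Build the full witness for input x by running the computation."""
    v1 = x * x % P
    v2 = v1 * x % P
    y = (v2 + x + 5) % P
    return [1, x, y, v1, v2]


good = witness(3)   # y = 27 + 3 + 5 = 35
bad = list(good)
bad[2] = 36         # tamper with the claimed output
```

Here `r1cs_satisfied(A, B, C, good)` holds while the tampered witness is rejected. Three constraints are needed for a single cubic expression, which is why constraint count becomes the main cost metric as the logic grows.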

Resource Allocation and Throughput

Optimization strategies focus on reducing the number of constraints in the arithmetic circuit. Fewer constraints mean the prover requires less memory and processing power, which lowers the latency between trade execution and finality. For high-frequency trading applications, this latency reduction is vital for maintaining price discovery and preventing toxic order flow from exploiting outdated state updates.

Origin

The foundational principles of Cryptographic Proof Complexity Optimization and Efficiency trace back to the introduction of interactive proof systems in the mid-1980s.

Early theoretical work by Goldwasser, Micali, and Rackoff established that a prover could convince a verifier of a statement’s truth without revealing the underlying data. This initial breakthrough was purely academic until the rise of blockchain technology necessitated a method for scaling transaction volume without requiring every node to process every transaction. The shift from interactive to non-interactive proofs, facilitated by the Fiat-Shamir heuristic, provided the initial architecture for what would become modern validity proofs.

The historical trajectory of proof optimization reflects a transition from theoretical curiosity to a vital requirement for decentralized financial infrastructure.

As Ethereum encountered significant congestion, the focus shifted from simple payment verification to the execution of complex smart contracts. The birth of zk-Rollups demanded a new level of efficiency. Early proof systems like Pinocchio and Groth16 required a circuit-specific trusted setup, which introduced a point of failure.

The subsequent drive for optimization led to the creation of universal and transparent proof systems. These advancements were not motivated by aesthetic preference but by the hard reality of gas limits and the competitive pressure to offer a user experience that rivals traditional finance.

The Shift to Transparency

The removal of the trusted setup marked a significant milestone in the evolution of proof systems. Protocols began adopting FRI (Fast Reed-Solomon Interactive Oracle Proof of Proximity) and other transparent mechanisms to ensure that the security of the system did not rely on the integrity of a specific group of participants. This transition came at the cost of larger proofs but significantly enhanced the long-term resilience and decentralization of the network.

Arithmetization Advancements

Early systems used Rank-1 Constraint Systems (R1CS), which were rigid and difficult to optimize for complex logic. The introduction of PLONK and its variants allowed for custom gates and lookup tables, providing developers with the tools to build more efficient circuits. This architectural shift enabled the creation of specialized circuits for derivative pricing models, such as Black-Scholes or Greeks calculation, which were previously too expensive to prove.

Theory

The theoretical basis of Cryptographic Proof Complexity Optimization and Efficiency is rooted in the arithmetization of computation and the application of polynomial identity testing.

A computation is transformed into a set of polynomials over a finite field. The prover demonstrates that these polynomials satisfy certain relations at a random point chosen by the verifier. The Schwartz-Zippel Lemma provides the mathematical guarantee: two distinct polynomials of degree at most d over a field F agree at a uniformly random point with probability at most d/|F|, which is negligible when the field is large.

This allows the verifier to check a single point instead of the entire computation.
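The single-point check can be sketched in a few lines. The example below tests a claimed product relation f(x) * g(x) = h(x) by evaluating all three polynomials at one random field element instead of expanding the product. The modulus `P`, the coefficient-list representation, and the function names are assumptions made for illustration.

```python
import secrets

P = 2**61 - 1  # Mersenne prime used as a toy field modulus


def evaluate(coeffs, x):
    """Evaluate a polynomial given as [c0, c1, c2, ...] at x using Horner's rule."""
    acc = 0
    for c in reversed(coeffs):
        acc = (acc * x + c) % P
    return acc


def check_product(f, g, h, r=None):
    """Probabilistically test f * g == h by comparing values at one point r.

    If the identity is false, f*g - h is a nonzero polynomial of degree d,
    so a uniformly random r exposes the error except with probability <= d / P.
    """
    if r is None:
        r = secrets.randbelow(P)  # verifier's random challenge
    return evaluate(f, r) * evaluate(g, r) % P == evaluate(h, r)


f = [1, 1]            # x + 1
g = [2, 1]            # x + 2
h_true = [2, 3, 1]    # x^2 + 3x + 2
h_false = [2, 3, 2]   # 2x^2 + 3x + 2, an incorrect claim
```

Calling `check_product(f, g, h_true)` always succeeds. With `h_false`, the difference polynomial is -x^2, whose only root is 0, so the check fails for every challenge except r = 0.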

Mathematical optimization in proof systems relies on the probabilistic certainty that a single point evaluation can validate the integrity of an entire computational trace.

Modern proof systems utilize Polynomial Commitment Schemes (PCS) to further reduce complexity. These schemes allow a prover to commit to a polynomial and later open it at any point, providing a succinct proof of the evaluation. The choice of PCS, whether KZG, IPA, or FRI, dictates the performance characteristics of the protocol.

For instance, KZG commitments result in very small proofs but require a trusted setup, while FRI-based systems are transparent but produce larger proofs.

Comparative Architecture Analysis

The following table outlines the trade-offs between different polynomial commitment schemes used in modern proof optimization.

| Scheme Type | Proof Size | Verification Speed | Setup Requirement | Quantum Resistance |
|---|---|---|---|---|
| KZG (Kate) | Constant (Small) | Fast | Trusted Setup | No |
| FRI (STARKs) | Logarithmic (Large) | Very Fast | Transparent | Yes |
| IPA (Bulletproofs) | Logarithmic (Medium) | Linear (Slow) | Transparent | No |

Constraint System Refinement

Optimizing the constraint system involves minimizing the degree of the polynomials and the number of variables. Lookups have emerged as a primary method for efficiency. Instead of proving a complex operation through raw arithmetic gates, the prover can simply show that the input and output exist in a precomputed table.

This is particularly useful for bitwise operations and hash functions, which are notoriously expensive in standard arithmetization.
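A lookup can be illustrated with a 4-bit XOR table. The sketch below uses a logUp-style fractional-sum identity rather than the original Plookup construction, because it is shorter to state: if every witness row appears in the table with multiplicity m_j, then the sum of 1/(alpha + w_i) over witness rows equals the sum of m_j/(alpha + t_j) over table rows. The field modulus, the row packing with `beta`, and the fixed challenge values are assumptions for illustration. A real protocol derives the challenges from verifier randomness and proves the sums inside the circuit.

```python
from collections import Counter

P = 2**61 - 1  # toy prime field

# Precomputed table of every valid 4-bit XOR triple (a, b, a XOR b).
TABLE = [(a, b, a ^ b) for a in range(16) for b in range(16)]


def inv(x):
    """Field inverse via Fermat's little theorem (x must be nonzero mod P)."""
    return pow(x, P - 2, P)


def pack(row, beta):
    """Compress a triple into one field element with a random-looking challenge."""
    a, b, c = row
    return (a + beta * b + beta * beta * c) % P


def multiplicities(rows):
    """How many times each table entry is used by the witness rows."""
    counts = Counter(rows)
    return [counts.get(t, 0) for t in TABLE]


def logup_check(rows, mults, alpha, beta):
    """Compare the two fractional sums at the challenge point (alpha, beta)."""
    lhs = sum(inv((alpha + pack(r, beta)) % P) for r in rows) % P
    rhs = sum(m * inv((alpha + pack(t, beta)) % P)
              for t, m in zip(TABLE, mults) if m) % P
    return lhs == rhs


# Fixed challenges for reproducibility only.
ALPHA, BETA = 123456789, 987654321

good_rows = [(3, 5, 6), (3, 5, 6), (15, 15, 0)]
bad_rows = [(3, 5, 6), (3, 5, 7)]  # 3 XOR 5 is 6, so the second row is invalid
```

The valid rows pass with `logup_check(good_rows, multiplicities(good_rows), ALPHA, BETA)`, while `bad_rows` leaves an unmatched term on the left-hand side and fails. No bit decomposition of the operands is constrained at any point, which is where the savings come from.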

Approach

Current implementation strategies for Cryptographic Proof Complexity Optimization and Efficiency focus on the deployment of recursive proofs and folding schemes. Recursion allows a proof to verify the validity of another proof, enabling the aggregation of multiple transactions into a single certificate. This creates a tree-like structure where the root proof represents the validity of thousands of sub-proofs.

This approach is the primary driver behind the massive throughput observed in modern Layer 2 solutions.
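The shape of the aggregation tree described above can be sketched structurally. In the example below, `aggregate` is only a placeholder that hashes its two children; in a real system it would be a recursive proof attesting that both child proofs verify. All names are hypothetical, and the sketch shows the depth and step count of the tree, not any cryptographic guarantee.

```python
import hashlib


def aggregate(left: bytes, right: bytes) -> bytes:
    """Placeholder for 'prove that both child proofs verify' (a hash stand-in)."""
    return hashlib.sha256(left + right).digest()


def aggregate_tree(leaves):
    """Pairwise-combine leaves layer by layer until one root remains.

    Returns (root, depth, steps), where steps counts calls to aggregate().
    """
    if not leaves:
        raise ValueError("need at least one leaf proof")
    layer = list(leaves)
    depth = 0
    steps = 0
    while len(layer) > 1:
        nxt = []
        for i in range(0, len(layer) - 1, 2):
            nxt.append(aggregate(layer[i], layer[i + 1]))
            steps += 1
        if len(layer) % 2:
            nxt.append(layer[-1])  # an unpaired node is carried up unchanged
        layer = nxt
        depth += 1
    return layer[0], depth, steps


# 1000 stand-in "transaction proofs"
leaf_proofs = [i.to_bytes(4, "big") for i in range(1000)]
root, depth, steps = aggregate_tree(leaf_proofs)
```

For 1000 leaves this yields 999 aggregation steps arranged in 10 layers. The steps within one layer are independent of each other, so they can be distributed across parallel provers, and only the root needs on-chain verification.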

Performance Benchmarking

To understand the practical application of these optimizations, we must examine the performance metrics of leading proof systems.

| System Name | Arithmetization | Commitment Scheme | Relative Prover Speed | Main Application |
|---|---|---|---|---|
| Halo 2 | PLONKish | IPA / KZG | Moderate | Privacy / Zcash |
| Boojum | PLONKish | FRI | Fast | zkSync Era |
| Plonky2 | PLONKish | FRI | Ultra-Fast | Polygon Zero |

Folding Schemes and Nova

A significant departure from traditional recursion is the introduction of folding schemes like Nova and Sangria. Instead of verifying a proof within another proof, which is computationally expensive, folding schemes combine two instances of a problem into a single instance of the same size. This process is repeated until a single accumulated instance remains, and only that instance needs a final proof.

This substantially reduces the prover’s per-step overhead compared with full recursive verification, which can make it feasible to generate proofs on consumer-grade hardware.
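The algebra behind folding can be shown without any commitments. The sketch below folds two relaxed R1CS instances in the style of Nova: an instance (z, u, E) satisfies Az * Bz = u * Cz + E row by row, and two instances are combined with a challenge r using a cross term T. The toy circuit, the modulus, and the fixed challenge are assumptions. A real scheme commits to the witness and error vectors and derives r via Fiat-Shamir.

```python
P = 2**31 - 1  # small prime, illustration only

# R1CS for y = x^3 + x + 5 with witness layout z = [one, x, y, v1, v2]
A = [[0, 1, 0, 0, 0], [0, 0, 0, 1, 0], [5, 1, 0, 0, 1]]
B = [[0, 1, 0, 0, 0], [0, 1, 0, 0, 0], [1, 0, 0, 0, 0]]
C = [[0, 0, 0, 1, 0], [0, 0, 0, 0, 1], [0, 0, 1, 0, 0]]


def matvec(M, z):
    return [sum(m * v for m, v in zip(row, z)) % P for row in M]


def witness(x):
    v1 = x * x % P
    v2 = v1 * x % P
    return [1, x, (v2 + x + 5) % P, v1, v2]


def relaxed_satisfied(z, u, E):
    """Check Az * Bz == u * Cz + E for every row (relaxed R1CS)."""
    Az, Bz, Cz = matvec(A, z), matvec(B, z), matvec(C, z)
    return all((a * b - u * c - e) % P == 0
               for a, b, c, e in zip(Az, Bz, Cz, E))


def fold(inst1, inst2, r):
    """Combine two relaxed instances into one of the same size."""
    (z1, u1, E1), (z2, u2, E2) = inst1, inst2
    Az1, Bz1, Cz1 = matvec(A, z1), matvec(B, z1), matvec(C, z1)
    Az2, Bz2, Cz2 = matvec(A, z2), matvec(B, z2), matvec(C, z2)
    # Cross term that absorbs the mixed products created by the combination.
    T = [(a1 * b2 + a2 * b1 - u1 * c2 - u2 * c1) % P
         for a1, b1, c1, a2, b2, c2 in zip(Az1, Bz1, Cz1, Az2, Bz2, Cz2)]
    z = [(x + r * y) % P for x, y in zip(z1, z2)]
    u = (u1 + r * u2) % P
    E = [(e1 + r * t + r * r * e2) % P for e1, t, e2 in zip(E1, T, E2)]
    return z, u, E


ZERO = [0, 0, 0]
inst_a = (witness(3), 1, ZERO)   # fresh instances have u = 1 and E = 0
inst_b = (witness(4), 1, ZERO)
folded = fold(inst_a, inst_b, 7)  # r = 7 is a fixed stand-in challenge

tampered = witness(4)
tampered[2] += 1                  # wrong output in the second instance
folded_bad = fold(inst_a, (tampered, 1, ZERO), 7)
```

The folded instance satisfies the relaxed relation exactly when both inputs do (up to the choice of r), so `relaxed_satisfied(*folded)` holds and `relaxed_satisfied(*folded_bad)` does not. Each fold costs a few matrix-vector products rather than a full proof verification inside a circuit.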

Arithmetization Strategies

Developers are increasingly moving toward PLONKish arithmetization, which provides the flexibility to define custom gates. This allows for the creation of “derivative-specific” circuits. For example, a circuit can be optimized specifically for calculating the Delta or Gamma of an options portfolio.

By tailoring the arithmetic gates to the specific financial logic, the protocol reduces the total number of constraints, leading to faster execution and lower verification costs.
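The effect of a custom gate on row count can be sketched with the standard PLONK gate equation q_L*a + q_R*b + q_O*c + q_M*a*b + q_C = 0. The example compares three standard rows against one hypothetical custom gate for y = x^3 + x. The custom gate, the modulus, and all names are illustrative assumptions and do not correspond to a gate from any deployed derivative circuit.

```python
P = 2**31 - 1  # toy prime field


def standard_gate(q, a, b, c):
    """Standard PLONK gate: qL*a + qR*b + qO*c + qM*a*b + qC == 0 (mod P)."""
    qL, qR, qO, qM, qC = q
    return (qL * a + qR * b + qO * c + qM * a * b + qC) % P == 0


ADD = (1, 1, -1, 0, 0)   # enforces a + b = c
MUL = (0, 0, -1, 1, 0)   # enforces a * b = c


def cube_plus_x_standard(x):
    """y = x^3 + x using only standard gates: three rows."""
    v1 = x * x % P
    v2 = v1 * x % P
    y = (v2 + x) % P
    rows = [(MUL, x, x, v1), (MUL, v1, x, v2), (ADD, v2, x, y)]
    return y, rows


def cube_plus_x_custom(a, c):
    """Hypothetical custom gate enforcing a^3 + a - c == 0 in a single row."""
    return (a * a * a + a - c) % P == 0


y, rows = cube_plus_x_standard(3)   # 27 + 3 = 30
```

All three standard rows pass `standard_gate`, and the single custom row `cube_plus_x_custom(3, y)` enforces the same relation. The trade-off is that a custom gate raises the degree of the constraint polynomial, so fewer rows do not always mean a cheaper proof.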

Evolution

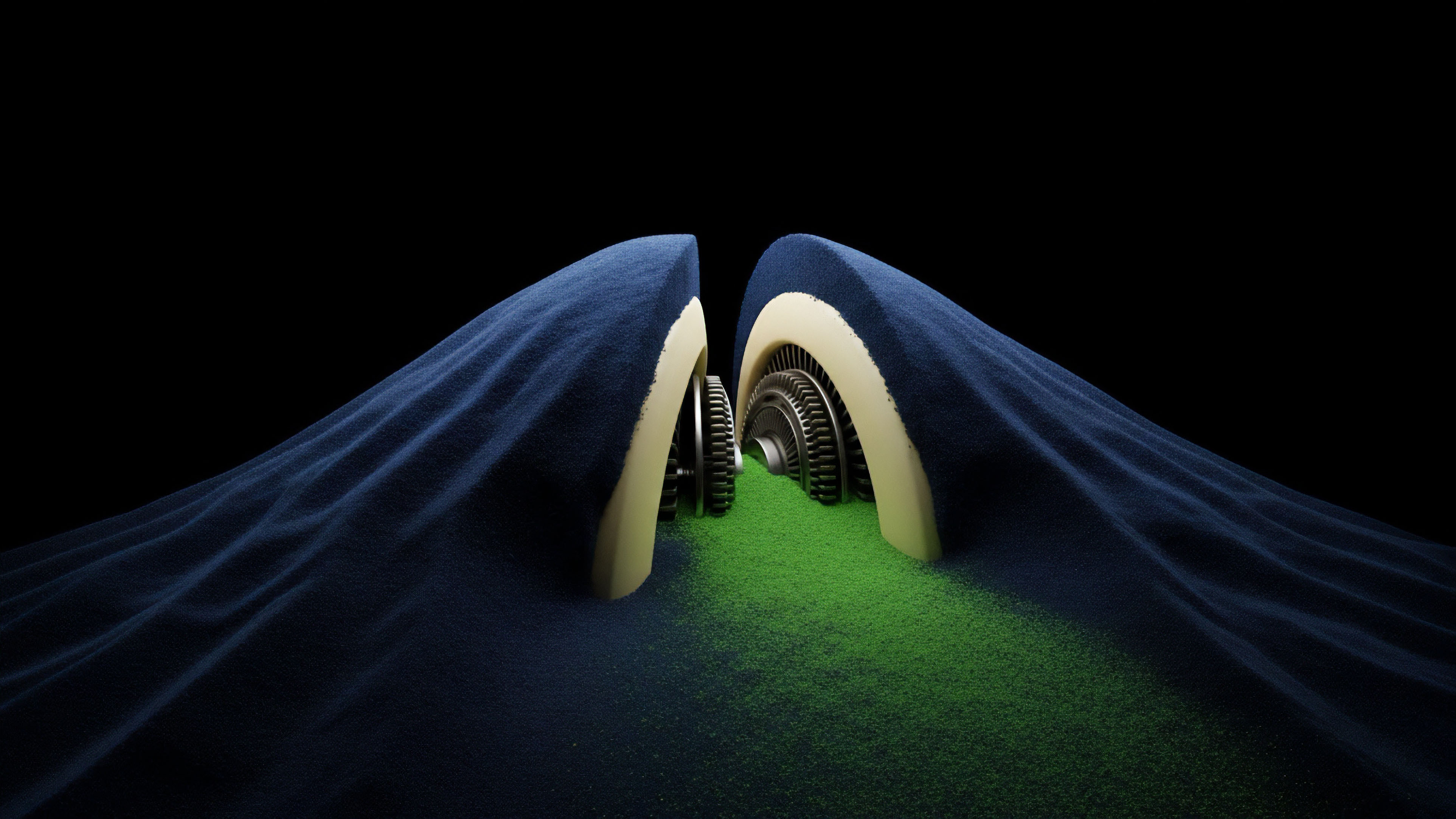

Cryptographic Proof Complexity Optimization and Efficiency has moved from monolithic architectures to modular, highly specialized systems. In the early stages, proof systems were general-purpose and lacked the efficiency required for high-throughput financial applications. As the limitations of these systems became apparent, the industry shifted toward hardware acceleration and specialized arithmetization.

The use of GPUs and FPGAs for generating proofs, with ASICs under active development, has become common among large-scale sequencers, further reducing the latency of the prover.

The evolution of proof systems is characterized by a move away from general-purpose computation toward specialized, hardware-accelerated arithmetic circuits.

The introduction of Recursive SNARKs allowed for the creation of “proofs of proofs,” which addressed the cost of verifying an ever-growing chain history. By verifying only the most recent proof, a node can be convinced of the validity of the entire history of the chain without downloading the full ledger. This advancement has been instrumental in the development of light clients and mobile-friendly decentralized applications.

Furthermore, the move toward Post-Quantum Cryptography has influenced the selection of proof systems, with many protocols opting for STARKs due to their reliance on hash functions rather than elliptic curves.

Phases of Proof Advancement

- Monolithic Proofs: Single proofs for single transactions, leading to high on-chain costs and limited scalability.

- Batching and Aggregation: Combining multiple transactions into a single proof to distribute the verification cost across many users.

- Recursive Verification: Enabling proofs to verify other proofs, allowing aggregation to arbitrary depth and a succinct state representation.

- Folding and Accumulation: Moving beyond recursion to combine computational instances, drastically reducing prover overhead.
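The economics of the batching phase reduce to simple amortization: one fixed verification cost is shared by every transaction in the batch, while each transaction still pays for its own data. The sketch below expresses that relation. The gas figures in the usage lines are hypothetical placeholders, not measurements of any network or proof system.

```python
def per_tx_cost(verify_cost: float, per_tx_data_cost: float, batch_size: int) -> float:
    """Amortized on-chain cost per transaction for a batch covered by one proof."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return verify_cost / batch_size + per_tx_data_cost


# Hypothetical numbers for illustration only.
VERIFY_GAS = 300_000   # assumed fixed cost to verify one proof on-chain
DATA_GAS = 500         # assumed per-transaction data cost

single = per_tx_cost(VERIFY_GAS, DATA_GAS, 1)
batched = per_tx_cost(VERIFY_GAS, DATA_GAS, 1000)
```

Under these assumed figures the per-transaction cost falls from 300,500 to 800 gas. As the batch grows, the cost approaches the per-transaction data cost, which is why later phases focus on prover overhead rather than verification cost.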

The Death of the Trusted Setup

Much of the industry has moved away from protocols that require a circuit-specific trusted setup. While Groth16 remains among the most efficient systems in terms of proof size, the operational risk and lack of flexibility associated with the setup ceremony have pushed many teams toward systems such as Halo 2 and Plonky2. These newer systems allow the circuit to be upgraded without requiring a new ceremony, which is vital for the fast-paced development of derivative markets.

Horizon

The future of Cryptographic Proof Complexity Optimization and Efficiency lies in the realization of Zero-Knowledge Clearinghouses.

These entities will act as trustless intermediaries that manage collateral, execute liquidations, and settle trades off-chain, while providing a continuous stream of validity proofs to the base layer. This will allow for the creation of global liquidity pools that are not fragmented across different chains. The optimization of proof systems will reach a point where the cost of verification is so low that even micro-options and small-scale derivatives can be proven and settled on-chain.

Systemic Market Shifts

- Hyper-Liquid On-Chain Venues: Proof efficiency will enable order books with sub-millisecond latency, rivaling the performance of centralized exchanges.

- Trustless Cross-Chain Margin: Recursive proofs will allow a user to prove their collateral on one chain to take a position on another, without moving the underlying assets.

- Privacy-Preserving Compliance: Advanced proof systems will enable traders to prove they are compliant with regulations without revealing their trading strategies or identity.

- Automated Risk Engines: On-chain margin engines will use optimized proofs to verify complex risk calculations, ensuring the solvency of the protocol in real-time.

The Integration of AI and ZK

The intersection of artificial intelligence and zero-knowledge proofs, often referred to as zkML, will allow for the verification of machine learning models used in trading. A protocol could use an AI model to determine funding rates or liquidation thresholds, and then provide a proof that the model was executed correctly. This prevents the manipulation of the model by the protocol operators and ensures that all participants are treated fairly.

The Final Efficiency Frontier

Ultimately, the goal is to reach the Theoretical Minimum of proof complexity. This involves finding the most efficient way to represent any given computation as a polynomial identity. As we approach this limit, the distinction between centralized and decentralized finance will blur. The security of the system will be derived from the laws of mathematics, and the efficiency will be limited only by the speed of light and the availability of specialized hardware. This is the endgame for derivative systems: a world where trust is obsolete because verification is instant and universal.

Glossary

Sum-Check Protocol

Plookup

Elliptic Curve Cryptography

Validity Proofs

FPGA Proving

Discrete Logarithm Problem

Number Theoretic Transform

zk-Rollups

PLONKish Arithmetization