Essence

Data source integrity defines the reliability and accuracy of external information consumed by smart contracts, a fundamental requirement for decentralized derivatives. In the context of crypto options, the integrity of price feeds (often provided by oracles) determines the solvency of the protocol, the accuracy of collateral valuation, and the fairness of settlement at expiration. The core challenge lies in bridging the gap between a trustless, deterministic on-chain environment and the inherently messy, off-chain reality of market data.

A derivative contract’s value is a function of its underlying asset price; if that input is corrupted, the contract’s entire financial logic collapses. This vulnerability represents a systemic risk to any decentralized financial system that depends on external price data.

The integrity of data feeds is the single most critical factor determining the functional solvency and security of decentralized options protocols.

Without robust data integrity, the system cannot reliably calculate key parameters such as liquidation thresholds, margin requirements, or the final settlement price of an option contract. The data source integrity problem is essentially a game theory challenge: how to economically incentivize data providers to deliver truthful information, even when a large financial incentive exists to provide false data for personal gain. This issue is magnified in options markets where small price movements near expiration can have disproportionate impacts on profit and loss, making data manipulation particularly attractive to malicious actors.
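To make the expiration effect concrete, here is a minimal worked sketch in Python. It assumes a hypothetical book of cash-settled contracts struck at 2,000 USD, split between vanilla calls and binary (cash-or-nothing) calls; the strike, position sizes, payout, and function names are illustrative and not taken from any specific protocol. It compares the total payout when the reported settlement price lands 0.1% below versus 0.1% above the strike.

```python
def call_payoff(settle: float, strike: float) -> float:
    """Cash-settled European call payoff per unit of the underlying."""
    return max(settle - strike, 0.0)


def binary_call_payoff(settle: float, strike: float, payout: float = 1.0) -> float:
    """Cash-or-nothing call: pays a fixed amount only if it finishes in the money."""
    return payout if settle > strike else 0.0


# Hypothetical book: 10,000 vanilla calls and 10,000 binary calls, all struck at 2,000.
STRIKE = 2_000.0
CALL_UNITS = 10_000
BINARY_UNITS = 10_000
BINARY_PAYOUT = 100.0  # fixed payout per binary contract (illustrative)


def book_payout(settle: float) -> float:
    """Total amount the protocol owes holders for a given settlement price."""
    calls = CALL_UNITS * call_payoff(settle, STRIKE)
    binaries = BINARY_UNITS * binary_call_payoff(settle, STRIKE, BINARY_PAYOUT)
    return calls + binaries


for price in (1_998.0, 2_002.0):  # 0.1% below and 0.1% above the strike
    print(f"settle={price:>8.1f}  total payout={book_payout(price):>12,.0f}")
```

A 4 USD difference in the reported price (0.2% of spot) swings the book's liability from 0 to 1,020,000 USD, almost all of it from the binary leg. That asymmetry is what makes a corrupted settlement print worth paying for.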

Core Risk Vectors

- Manipulation Risk: An attacker exploits a vulnerability in the data feed mechanism to provide a false price, triggering liquidations or profitable option settlements in their favor.

- Staleness Risk: The data feed fails to update in a timely manner, causing contracts to settle or liquidate based on outdated prices during periods of high volatility.

- Censorship Risk: A centralized oracle provider refuses to update data or provides biased data due to external pressure or internal policy, compromising the neutrality of the derivative protocol.

- Flash Loan Attacks: A rapid, low-cost attack in which an attacker borrows assets, manipulates a single-source price feed, executes a profitable trade or liquidation, and repays the loan, all within a single transaction.
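Staleness risk is usually handled defensively on the consuming side: the protocol refuses to act on a price that is too old. A minimal Python sketch, assuming each report carries the timestamp of its last update and that the protocol chooses its own maximum age; `PriceReport`, `checked_price`, and the 600-second limit are hypothetical.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PriceReport:
    price: float
    updated_at: float  # Unix timestamp (seconds) of the last oracle update


class StalePriceError(Exception):
    """Raised when a feed has not updated within the allowed window."""


def checked_price(report: PriceReport, max_age_s: float, now: Optional[float] = None) -> float:
    """Return the reported price only if it is fresh and positive.

    max_age_s is a protocol-chosen tolerance (an assumption in this sketch);
    settlement or liquidation logic should halt rather than act on a stale value.
    """
    now = time.time() if now is None else now
    age = now - report.updated_at
    if age > max_age_s:
        raise StalePriceError(f"price is {age:.0f}s old (limit {max_age_s:.0f}s)")
    if report.price <= 0:
        raise ValueError("non-positive price reported")
    return report.price


# Example: one feed updated a minute ago, one updated 30 minutes ago, 10-minute limit.
now = 1_700_000_000.0
fresh = PriceReport(price=2_000.0, updated_at=now - 60)
stale = PriceReport(price=2_000.0, updated_at=now - 1_800)
print(checked_price(fresh, max_age_s=600, now=now))
try:
    checked_price(stale, max_age_s=600, now=now)
except StalePriceError as err:
    print("rejected:", err)
```

Raising instead of returning the last known value forces the caller to decide explicitly what happens during an outage, which matters most in the volatile periods when feeds are most likely to lag.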

Origin

The problem of data integrity is not new; traditional finance has long struggled with issues of data latency, front-running, and information asymmetry. However, in traditional markets, data sources like Bloomberg Terminals or Reuters feeds are typically highly centralized and regulated, relying on legal frameworks and high-cost access barriers to enforce integrity. The advent of decentralized finance introduced a new requirement: trustless data.

Early DeFi protocols, particularly those supporting lending and options, often relied on simple, single-source price feeds from exchanges. This architecture proved brittle. The March 2020 “Black Thursday” market crash served as a brutal, real-world stress test for these early systems.

As network congestion increased, data feeds became slow or stalled, leading to cascading liquidations on platforms that relied on those feeds. A series of flash loan attacks during 2020, which exploited single-source price feeds to manipulate asset values, demonstrated that a centralized data source was a single point of failure that could be exploited for profit. This led to a significant shift in protocol design.

The community recognized that data integrity required the same level of decentralization as the settlement layer itself. The solution required a transition from relying on trusted, centralized entities to building economically secure, decentralized networks for data aggregation and delivery.

Historical Vulnerabilities

The evolution of data integrity solutions was driven by specific, high-profile failures. The first generation of oracle solutions often used a “pull” model, in which protocols requested data on demand, leaving them susceptible to manipulation during periods of low liquidity. The alternative “push” model, in which data providers proactively publish price updates on-chain, introduced its own latency and cost issues.

The challenge for options protocols was particularly acute, as a derivative’s value is highly sensitive to price changes. A delay of even a few seconds in a volatile market could mean the difference between a solvent and an insolvent position.

This realization forced a fundamental re-evaluation of how price discovery happens in a decentralized environment. The goal became to create a data feed that was not only accurate but also resistant to a coordinated attack. This required moving beyond simple data aggregation to a more sophisticated model involving economic incentives, reputational staking, and decentralized network architecture.

Theory

The theoretical foundation of data integrity in decentralized options relies on a blend of economic game theory and cryptographic security.

The core principle is to make the cost of providing false data significantly higher than the potential profit from doing so. This is achieved through a combination of data aggregation, economic incentives, and dispute resolution mechanisms.
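The principle can be stated as an inequality: manipulation is rational only when the value an attacker can extract exceeds the cost of corrupting enough data providers. A simplified Python model, assuming a median-based feed (so the attacker needs a strict majority of providers) and that every corrupted provider is slashed by a fixed fraction of its stake; the function name and all figures are hypothetical, and real networks add factors such as detection probability and bribery costs that this ignores.

```python
def attack_is_profitable(
    n_providers: int,
    stake_per_provider: float,
    extractable_value: float,
    slash_fraction: float = 1.0,
) -> bool:
    """Return True if corrupting a median-based feed pays more than it costs.

    Hypothetical model: moving the median requires a strict majority of providers,
    and each corrupted provider loses `slash_fraction` of its stake. `extractable_value`
    is the profit the false price unlocks (forced liquidations, mispriced settlements).
    """
    providers_needed = n_providers // 2 + 1
    cost_of_corruption = providers_needed * stake_per_provider * slash_fraction
    return extractable_value > cost_of_corruption


# 31 providers staking 250,000 each: a majority is 16, so corruption costs 4,000,000.
print(attack_is_profitable(31, 250_000, extractable_value=3_000_000))
print(attack_is_profitable(31, 250_000, extractable_value=6_000_000))
```

The first call returns False and the second True: once the value riding on a feed outgrows the stake securing it, manipulation becomes the rational strategy, so collateral requirements have to scale with the value a feed secures.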

Data Aggregation and Price Calculation

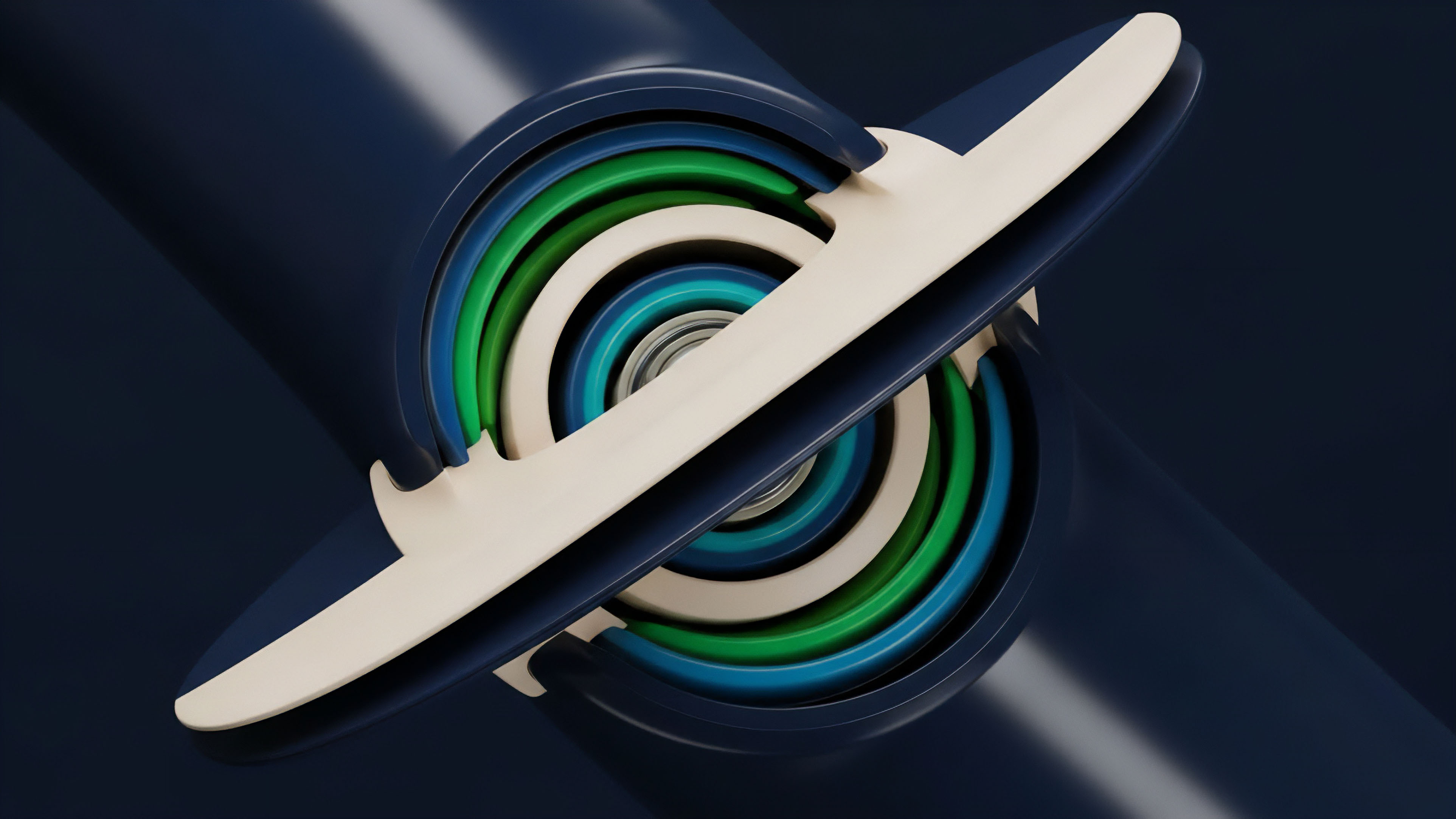

To mitigate the risk of a single malicious data provider, oracles employ aggregation techniques. Instead of taking a single price from one source, a protocol gathers data from multiple independent data providers. The resulting price is typically a median or volume-weighted average price (VWAP) of these inputs.

This design choice makes it prohibitively expensive for an attacker to manipulate the final aggregated price, as they would need to compromise a majority of the data providers simultaneously.
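The outlier resistance of a median is easy to check numerically. A minimal Python sketch with made-up ETH/USD reports from seven hypothetical providers, one of which submits a wildly wrong price; it uses only the standard-library `statistics` module.

```python
from statistics import mean, median

# Hypothetical ETH/USD reports: six honest providers plus one corrupted submission.
honest = [2001.2, 1999.8, 2000.5, 2000.1, 1999.9, 2000.7]
reports = honest + [50.0]

print(f"mean:   {mean(reports):.2f}")    # dragged far below the market by one bad report
print(f"median: {median(reports):.2f}")  # still anchored to the honest reports

# Replace honest reports with bad ones (seven reports total): the median only
# collapses once the attacker controls a majority, i.e. 4 of 7.
for n_bad in range(1, 5):
    sample = honest[: 7 - n_bad] + [50.0] * n_bad
    print(f"{n_bad} bad of 7 -> median {median(sample):.2f}")
```

One bad report drags the mean to roughly 1,722 while the median stays at 2,000.10; the median only follows the attacker once four of the seven reports are corrupted.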

| Aggregation Mechanism | Description | Risk Mitigation |
|---|---|---|
| Median Price | Takes the middle value from a set of price inputs, effectively filtering out extreme outliers. | Resistant to single-point manipulation and “tail-end” attacks where one or two providers submit drastically incorrect data. |
| Volume-Weighted Average Price (VWAP) | Calculates the average price based on the trading volume at different exchanges. | Reduces the impact of low-liquidity exchanges and manipulation on thin markets. Reflects the actual cost of acquiring a large volume of the asset. |
| Time-Weighted Average Price (TWAP) | Calculates the average price over a specific time window. | Mitigates flash loan attacks by preventing immediate price manipulation within a single block. Ensures prices reflect sustained market conditions rather than momentary spikes. |
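A VWAP weights each venue by how much actually traded there, so a manipulated thin market contributes little. A minimal Python sketch with hypothetical venue names, prices, and volumes; a real feed must also choose the volume window and handle venues that stop reporting.

```python
from typing import List, NamedTuple


class VenueQuote(NamedTuple):
    venue: str
    price: float   # reference price on this venue
    volume: float  # volume traded over the reference window, in base units


def vwap(quotes: List[VenueQuote]) -> float:
    """Volume-weighted average price across venues."""
    total_volume = sum(q.volume for q in quotes)
    if total_volume <= 0:
        raise ValueError("no volume across venues")
    return sum(q.price * q.volume for q in quotes) / total_volume


quotes = [
    VenueQuote("deep_cex_a", 2000.0, 50_000.0),
    VenueQuote("deep_cex_b", 2001.0, 40_000.0),
    VenueQuote("thin_dex_c", 2400.0, 100.0),  # manipulated thin market
]
simple_mean = sum(q.price for q in quotes) / len(quotes)
print(f"VWAP: {vwap(quotes):.2f}  unweighted mean: {simple_mean:.2f}")
```

The thin venue's 2,400 print shifts the VWAP by under 0.5 USD relative to the two deep venues alone, whereas an unweighted mean of the three prices would land near 2,134.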

Economic Security and Disincentives

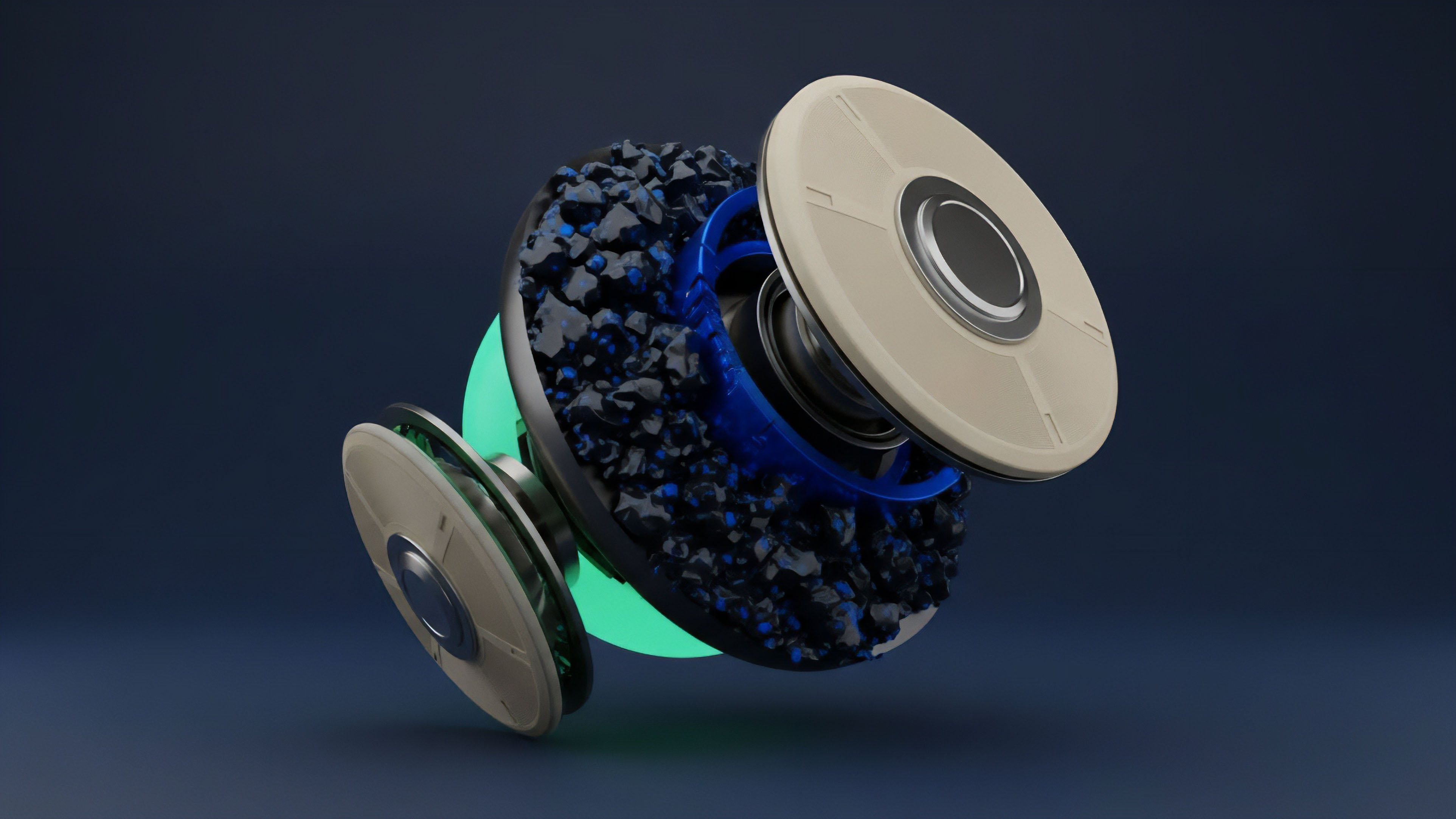

The security of decentralized data feeds is not cryptographic alone; it is fundamentally economic. Data providers are required to stake collateral (tokens) in the oracle network. If a provider submits incorrect data that is successfully disputed by other participants, their staked collateral is slashed (taken away).

This mechanism aligns incentives: data providers profit from providing truthful data and lose money from providing false data. The effectiveness of this model relies on a few key assumptions: a sufficient number of honest participants, a robust dispute resolution system, and a high enough collateral requirement to make manipulation unprofitable. The design of options protocols must also consider the “last mile problem” of data delivery.

While a robust oracle network provides a secure price feed, the protocol itself must integrate this data correctly. A common design pattern for options protocols is to use a TWAP to determine the settlement price at expiration. This blunts manipulation attempts concentrated in the final seconds before settlement, because a brief price spike moves a time-weighted average only in proportion to how long it lasts, producing a more stable and fair settlement price.
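A settlement TWAP can be computed from timestamped price samples. A minimal Python sketch, assuming each price holds until the next sample and a hypothetical 30-minute averaging window ending at expiry; protocols differ on window length and sampling frequency, and the names here are illustrative.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (timestamp in seconds, price)


def twap(samples: List[Sample], window_start: float, window_end: float) -> float:
    """Time-weighted average price over [window_start, window_end].

    Samples must be sorted by timestamp. Each price is treated as holding until
    the next sample (a step function), and the last one holds until window_end.
    """
    if window_end <= window_start:
        raise ValueError("window must have positive length")
    if not samples or samples[0][0] > window_start:
        raise ValueError("need a sample at or before the window start")
    weighted_sum = 0.0
    for i, (t, price) in enumerate(samples):
        t_next = samples[i + 1][0] if i + 1 < len(samples) else window_end
        # Overlap of this sample's holding interval with the averaging window.
        start, end = max(t, window_start), min(t_next, window_end)
        if end > start:
            weighted_sum += price * (end - start)
    return weighted_sum / (window_end - window_start)


EXPIRY = 3_600.0  # expiry time, in seconds from an arbitrary origin
WINDOW = 1_800.0  # hypothetical 30-minute averaging window
samples = [
    (0.0, 2_000.0),      # price stable for most of the hour...
    (3_590.0, 2_400.0),  # ...then a 20% spike in the final 10 seconds
]

spot_at_expiry = samples[-1][1]
settlement = twap(samples, EXPIRY - WINDOW, EXPIRY)
print(f"spot at expiry: {spot_at_expiry:.2f}, TWAP settlement: {settlement:.2f}")
```

A 20% spike held for the last 10 seconds moves the 30-minute TWAP by only about 0.1%, so an attacker would have to sustain the distorted price, and pay for it, across much of the window.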

Approach

Current solutions for data integrity in crypto options focus on a hybrid approach that combines decentralized data sourcing with economic security models.

The most widely adopted model involves a network of independent node operators that source data from multiple exchanges, aggregate it, and then deliver it on-chain.

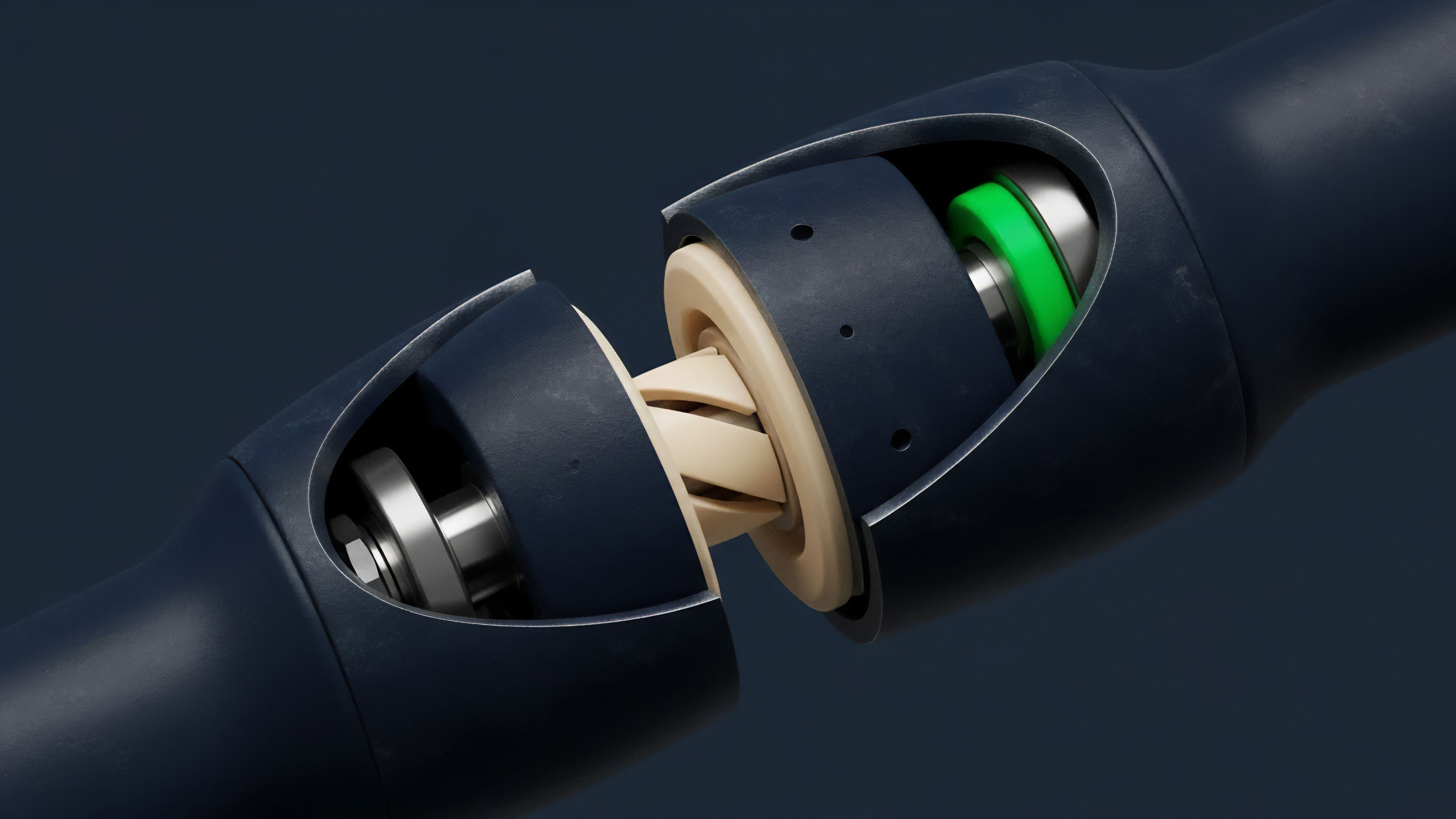

Oracle Network Architecture

The leading approach for securing data feeds for options protocols involves decentralized oracle networks. These networks typically function as follows:

- Data Request: A smart contract on an options protocol sends a request for a specific price feed (e.g. ETH/USD).

- Data Collection: Multiple independent node operators in the network gather price data from a variety of centralized and decentralized exchanges.

- Aggregation and Validation: The collected data is aggregated using a median or VWAP calculation to limit the influence of outliers. The network then validates the result through a consensus mechanism among the nodes.

- On-Chain Delivery: The validated price data is delivered to the options protocol’s smart contract, where it is used to calculate collateral value, liquidations, and settlements.
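The steps above can be sketched as a single aggregation round. This minimal Python simulation is an assumption-laden simplification: each node is modelled as a function that returns a price or `None`, and "consensus" is reduced to a minimum-report quorum followed by a median. Real networks also sign and aggregate reports off-chain, which this omits; `run_round`, `RoundResult`, and the node values are hypothetical.

```python
import statistics
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence


@dataclass(frozen=True)
class RoundResult:
    pair: str
    price: float
    n_reports: int


# A node is modelled as a function returning a price, or None if it failed to respond.
Node = Callable[[str], Optional[float]]


def run_round(pair: str, nodes: Sequence[Node], min_reports: int) -> RoundResult:
    """Collect reports from independent nodes, enforce a quorum, and aggregate by median."""
    reports = [p for p in (node(pair) for node in nodes) if p is not None and p > 0]
    if len(reports) < min_reports:
        raise RuntimeError(f"quorum not met: {len(reports)}/{min_reports} reports")
    return RoundResult(pair=pair, price=statistics.median(reports), n_reports=len(reports))


# Hypothetical nodes: four honest, one offline, one reporting a manipulated price.
nodes: List[Node] = [
    lambda pair: 2000.4,
    lambda pair: 1999.6,
    lambda pair: 2000.1,
    lambda pair: 2000.9,
    lambda pair: None,    # offline
    lambda pair: 2600.0,  # malicious
]
result = run_round("ETH/USD", nodes, min_reports=4)
print(result)  # this value is what on-chain delivery would hand to the options contract
```

The offline node reduces the report count but not the result, and the malicious report is absorbed by the median; if too few nodes respond, the round fails loudly instead of publishing a thinly supported price.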

Data Source Selection and Auditing

A critical aspect of data integrity for options protocols is the selection of the underlying data sources. A high-quality data feed must be derived from exchanges with deep liquidity and high trading volume to prevent price manipulation. A common pitfall for new protocols is relying on data from exchanges with thin order books, where a relatively small trade can disproportionately affect the reported price.
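How thin a book has to be before a small trade distorts the reported price can be checked by walking the order book. A minimal Python sketch, assuming the venue reports its last traded price and using two hypothetical ask books; hidden liquidity, fees, and market-maker reactions are ignored.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size in base units), best ask first


def last_price_after_buy(asks: List[Level], quantity: float) -> float:
    """Price of the last level touched when a market buy of `quantity` sweeps the asks."""
    remaining = quantity
    for price, size in asks:
        remaining -= size
        if remaining <= 0:
            return price
    raise ValueError("order is larger than the visible book")


thin_asks = [(2000.0, 2.0), (2020.0, 2.0), (2100.0, 3.0), (2300.0, 5.0)]
deep_asks = [(2000.0, 200.0), (2000.5, 300.0), (2001.0, 500.0)]

for name, asks in (("thin venue", thin_asks), ("deep venue", deep_asks)):
    print(name, last_price_after_buy(asks, quantity=10.0))
```

Buying 10 units (about 21,000 USD at these levels) pushes the thin venue's last trade from 2,000 to 2,300, a 15% jump, while the deep venue's price does not move. A feed that samples the thin venue would inherit that distortion.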

Furthermore, protocols often implement auditing mechanisms in which a separate set of validators or governance participants monitors the data feeds for suspicious activity. If a discrepancy is found, a dispute resolution process is initiated. This adds a layer of oversight on top of the economic incentives alone.
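A monitor of this kind can be as simple as comparing the production feed against an independently sourced reference and escalating when the two diverge. A minimal Python sketch; `find_discrepancies`, the 1% tolerance, and the sample data are hypothetical, and what happens after an alert (a dispute, a pause) is protocol-specific.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Alert:
    index: int
    feed_price: float
    reference_price: float
    deviation: float  # relative deviation, e.g. 0.05 = 5%


def find_discrepancies(feed: List[float], reference: List[float], max_deviation: float) -> List[Alert]:
    """Flag samples where the oracle feed strays from an independent reference price."""
    if len(feed) != len(reference):
        raise ValueError("feed and reference must be aligned sample by sample")
    alerts: List[Alert] = []
    for i, (f, r) in enumerate(zip(feed, reference)):
        deviation = abs(f - r) / r
        if deviation > max_deviation:
            alerts.append(Alert(i, f, r, deviation))
    return alerts


feed = [2000.0, 2001.0, 2150.0, 2002.0]       # prices published by the oracle
reference = [2000.5, 2000.8, 2001.5, 2001.9]  # independently sourced prices
for a in find_discrepancies(feed, reference, max_deviation=0.01):
    print(f"sample {a.index}: feed {a.feed_price} vs reference {a.reference_price} ({a.deviation:.1%})")
```

Only the third sample, about 7.4% above the reference, is flagged; the tolerance trades false alarms against how large a distortion can pass unnoticed.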

Comparative Data Source Models

Different protocols adopt varied approaches to data integrity, each with trade-offs in speed, security, and cost.

| Model | Example Protocol | Mechanism | Pros | Cons |
|---|---|---|---|---|
| Decentralized Oracle Network | Chainlink | Aggregates data from multiple nodes; economic incentives via staking/slashing. | High resistance to manipulation; broad market coverage; robust security model. | Higher latency and cost; potential for network congestion issues. |
| Internal TWAP/VWAP Oracle | Uniswap v3 (built-in pool TWAP oracle) | Calculates price based on internal exchange data; relies on internal liquidity. | Low latency; tight integration with protocol; reduced external dependencies. | Susceptible to manipulation if internal liquidity is low; “chicken and egg” problem with bootstrapping liquidity. |
| Decentralized Data Market | Tellor | Data providers compete to submit data; a decentralized community validates and disputes. | Censorship resistance; lower cost for data requests; flexible data types. | Slower data updates; potential for higher variance in data quality; relies heavily on community participation. |

Evolution

The evolution of data integrity for crypto options has progressed from simple price feeds to highly complex data services required for sophisticated financial products. Early solutions focused primarily on spot prices for collateral valuation. As the options market matured, the requirements expanded to include more complex data inputs necessary for accurate pricing models.

From Spot Price to Volatility Surfaces

Options pricing models, such as Black-Scholes, require inputs beyond the underlying asset price, most importantly a volatility input. Pricing options consistently across many strikes and expiries therefore requires the implied volatility (IV) surface, which represents the market’s expectation of future volatility across different strike prices and expiration dates.

To price exotic options or even standard options accurately, protocols need a reliable, decentralized source for this volatility data. This presents a significant challenge: while spot prices are readily available from exchanges, a consensus on IV requires complex calculations and aggregation from multiple sources, including options-specific decentralized exchanges (DEXs) and order books.
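To see why a corrupted volatility input matters as much as a corrupted spot price, here is a minimal Python sketch of the standard Black-Scholes closed form for a European call on a non-dividend-paying asset. It takes a single volatility number; looking that number up on an IV surface for a given strike and expiry is left out, and the spot, strike, rate, and IV values are illustrative.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, t_years: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


# The same 30-day at-the-money call, priced with an honest vs. a corrupted IV input.
for iv in (0.60, 0.90):
    price = bs_call(spot=2000.0, strike=2000.0, t_years=30 / 365, rate=0.0, vol=iv)
    print(f"IV {iv:.0%}: call = {price:.2f}")
```

Raising the volatility input from 60% to 90% lifts this call's price by roughly half, so a feed that misreports IV misprices every option that depends on it even when the spot price is correct.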

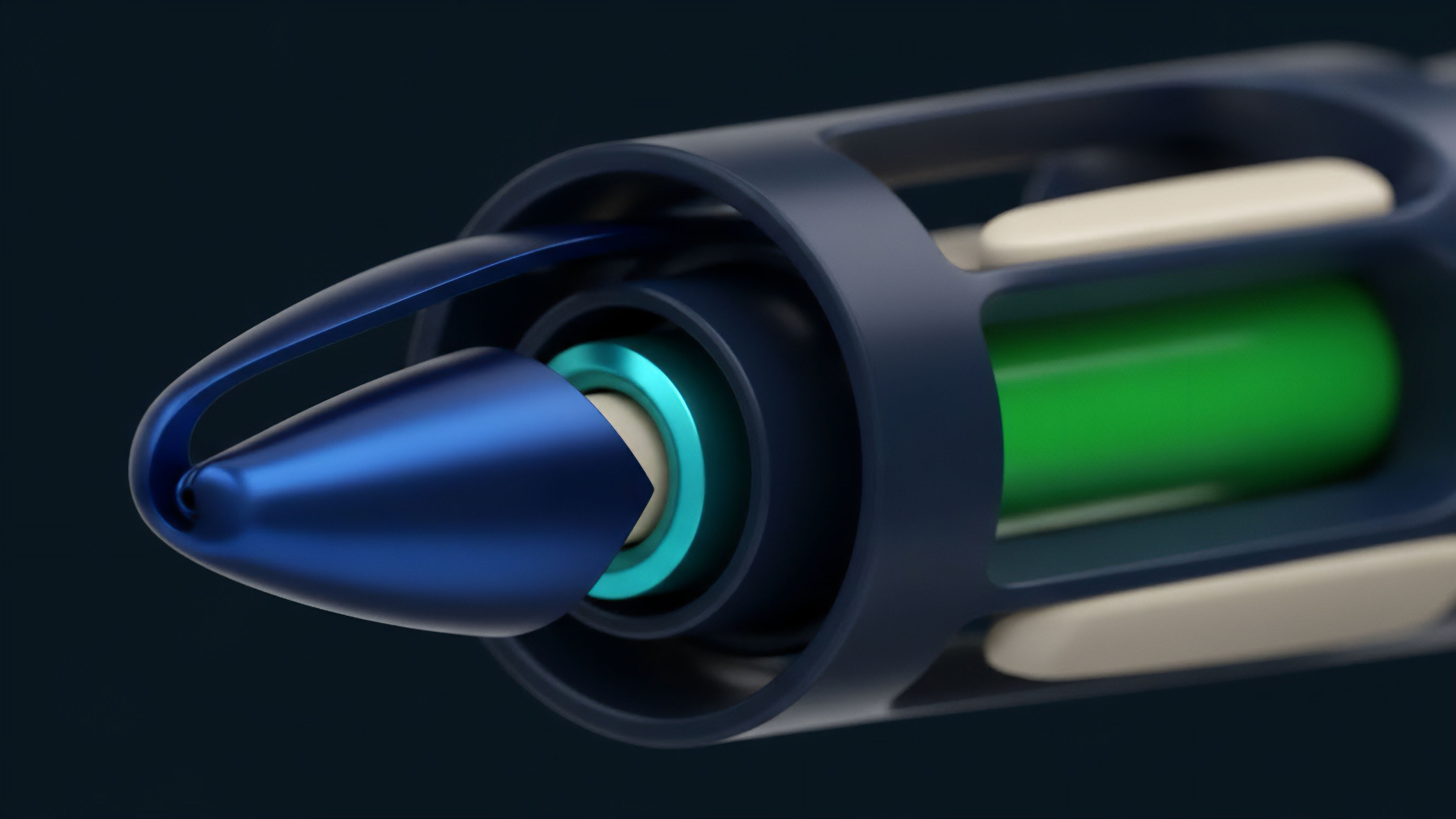

Internal Oracles and Protocol Integration

A significant trend in data integrity evolution is the shift toward internal or “oracle-less” systems. Instead of relying on external data feeds, some options protocols derive pricing internally from their own liquidity pools or automated market makers (AMMs). This approach tightens the integration between the data source and the protocol, eliminating the “last mile” risk.

The protocol essentially becomes its own source of truth. However, this design introduces a new set of risks. If the protocol’s liquidity pool is thin, or if its pricing mechanism is flawed, it can be exploited by an attacker who can manipulate the internal price through large trades.

This is a trade-off between external dependency risk and internal manipulation risk. The success of this approach depends entirely on the protocol’s ability to attract and maintain deep liquidity.
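The thin-pool risk can be quantified for the common constant-product AMM design (x * y = k), used here as an assumed example of an internal pricing mechanism. A minimal Python sketch with hypothetical pool sizes, reading the pool's instantaneous spot price before and after a single large buy; swap fees are ignored.

```python
from typing import Tuple


def spot_price(reserve_base: float, reserve_quote: float) -> float:
    """Marginal price of the base asset (in quote units) in an x * y = k pool."""
    return reserve_quote / reserve_base


def buy_base(reserve_base: float, reserve_quote: float, quote_in: float) -> Tuple[float, float]:
    """Swap `quote_in` of the quote asset into the pool for base; return the new reserves.

    Fees are ignored for clarity; real pools charge a per-swap fee.
    """
    k = reserve_base * reserve_quote
    new_quote = reserve_quote + quote_in
    new_base = k / new_quote
    return new_base, new_quote


TRADE_USD = 100_000.0
pools = {
    "thin pool": (100.0, 200_000.0),        # 100 ETH / 200k USD, price 2,000
    "deep pool": (10_000.0, 20_000_000.0),  # 10k ETH / 20M USD, price 2,000
}
for name, (base, quote) in pools.items():
    before = spot_price(base, quote)
    after = spot_price(*buy_base(base, quote, TRADE_USD))
    print(f"{name}: {before:,.2f} -> {after:,.2f} ({after / before - 1:+.1%})")
```

The same 100,000 USD buy more than doubles the thin pool's price (2,000 to 4,500) but moves the deep pool by about 1%. Absent fees, reversing the swap in the same transaction restores the reserves and returns the outlay, so a spot reading from a thin pool can be distorted for little more than the swap fees.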

The Role of ZKPs and Off-Chain Computation

The next phase in data integrity involves leveraging advanced cryptographic techniques to verify off-chain computations. Zero-Knowledge Proofs (ZKPs) allow a protocol to prove that a complex calculation (like an IV surface calculation or a settlement price based on a large dataset) was performed correctly off-chain without revealing the raw data inputs themselves. This enhances both privacy and efficiency, allowing protocols to use data from sources that may not be willing to share their raw data publicly. A proof attests that the computation was carried out correctly over its inputs, not that those inputs were truthful, so the integrity of the underlying sources remains a separate requirement.

This moves data integrity from simply providing a price to providing verifiable proof of a complex calculation.

Horizon

Looking ahead, the future of data integrity for crypto options involves a deeper integration of data verification with protocol design, moving beyond external oracles toward self-contained, trustless data environments. The focus shifts from simply securing the price feed to ensuring the integrity of complex, multi-dimensional data required for sophisticated financial engineering.

Real-World Asset Data Feeds

The next generation of options protocols will require data feeds for real-world assets (RWAs) as traditional assets become tokenized. This introduces new challenges. The integrity of RWA data requires not only market price verification but also verification of off-chain events, legal status, and physical asset conditions.

The data sources for these assets are inherently centralized and non-transparent, creating a significant friction point for decentralized protocols. The horizon for data integrity will require new methods for verifying data from legacy financial systems and physical supply chains.

Regulatory Arbitrage and Compliance

As decentralized options mature, regulators are likely to focus on data integrity. The integrity of a price feed determines the fairness of a financial instrument. Regulators may require specific data sources or methodologies for calculating settlement prices to ensure market fairness.

This creates a potential conflict: decentralized protocols value censorship resistance and permissionless access, while regulators prioritize verifiable compliance and specific data standards. The future of data integrity will likely involve new hybrid oracle designs that can simultaneously satisfy both decentralized security requirements and centralized regulatory mandates.

Systemic Risk and Interconnectedness

The greatest risk on the horizon for data integrity is not a single protocol failure but systemic contagion across multiple protocols. If several options protocols rely on the same oracle network, a single point of failure in that network could trigger cascading liquidations across the entire ecosystem. The next phase of data integrity requires not only securing individual data feeds but also building a diverse and redundant ecosystem of data providers to prevent systemic risk propagation.
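At the protocol level, source redundancy might look like the following minimal Python sketch: read several independent oracle networks, discard readings that are missing or stale, and take the median of what remains. The network names, thresholds, and `redundant_price` function are hypothetical; a production design would also have to decide how to treat sources that are live but disagree.

```python
import statistics
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class OracleReading:
    source: str
    price: Optional[float]  # None if the network failed to respond
    updated_at: float       # Unix timestamp (seconds) of the reading


def redundant_price(
    readings: Sequence[OracleReading], now: float, max_age_s: float, min_sources: int
) -> float:
    """Median across independent oracle networks, skipping failed or stale readings.

    Raises if fewer than `min_sources` usable readings remain, so the protocol
    pauses instead of acting on too few sources.
    """
    usable = [
        r.price
        for r in readings
        if r.price is not None and r.price > 0 and now - r.updated_at <= max_age_s
    ]
    if len(usable) < min_sources:
        raise RuntimeError(f"only {len(usable)} usable sources; need {min_sources}")
    return statistics.median(usable)


now = 1_700_000_000.0
readings = [
    OracleReading("network_a", 2000.2, now - 30),
    OracleReading("network_b", None, now - 30),       # outage
    OracleReading("network_c", 2001.0, now - 45),
    OracleReading("network_d", 2000.6, now - 4_000),  # stale, ignored
]
print(redundant_price(readings, now, max_age_s=600, min_sources=2))
```

With one network down and another stale, the protocol still prices from two independent sources; a failure in any single network degrades redundancy rather than propagating directly into every protocol that depends on it.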

| Future Challenge | Systemic Implication | Potential Solution |
|---|---|---|
| RWA Data Verification | Risk of non-transparent data from legacy systems; potential for legal disputes. | Decentralized identity verification; tokenized legal agreements; ZKPs for off-chain event verification. |
| Systemic Contagion Risk | A single oracle failure cascades across multiple options protocols, causing widespread insolvency. | Diversification of oracle networks; protocol-level data source redundancy; cross-protocol risk modeling. |
| Regulatory Compliance | Data feeds may be required to meet specific legal standards for market fairness and transparency. | Hybrid oracle designs that allow for both decentralized and auditable data streams. |
