Essence of Data Integrity

Data feed integrity is the foundational layer of trust for automated financial contracts, particularly decentralized options. The data feed is the mechanism that bridges off-chain market reality and on-chain contract execution. A derivatives protocol cannot function without a reliable source of price data to calculate collateral requirements, assess margin health, and execute final settlement logic.

The challenge for decentralized finance is to secure this data without relying on a centralized authority, the very element that smart contracts seek to replace. This necessity introduces a systemic risk: if the data feed is corrupted, the entire financial structure built upon it fails, regardless of how secure the underlying smart contract code is. The system's robustness is therefore bounded by the integrity of its data inputs.

Data feed integrity is the non-negotiable prerequisite for secure and functional decentralized derivatives markets.

Origin of the Oracle Problem

The challenge of data integrity in decentralized systems stems from what is known as the “oracle problem.” In traditional finance, price discovery is handled by trusted, centralized data providers or exchanges. These entities act as the authoritative source of truth. When smart contracts were introduced, they presented a paradox: they are deterministic systems designed to execute logic based on verifiable, on-chain data, yet most financial value is derived from off-chain events, such as asset prices.

The initial attempts to solve this relied on simple, single-source price feeds, which proved catastrophic during periods of high volatility or market stress. The earliest flash loan attacks, for instance, manipulated the price on a single low-liquidity venue that a protocol used as its oracle, causing the protocol to act on incorrect data, for example by liquidating healthy positions or issuing undercollateralized loans. This exposed the inherent fragility of single-point-of-failure oracle designs.

The solution required a paradigm shift from simple data retrieval to a complex, economically secure aggregation mechanism.

Theoretical Frameworks for Data Integrity

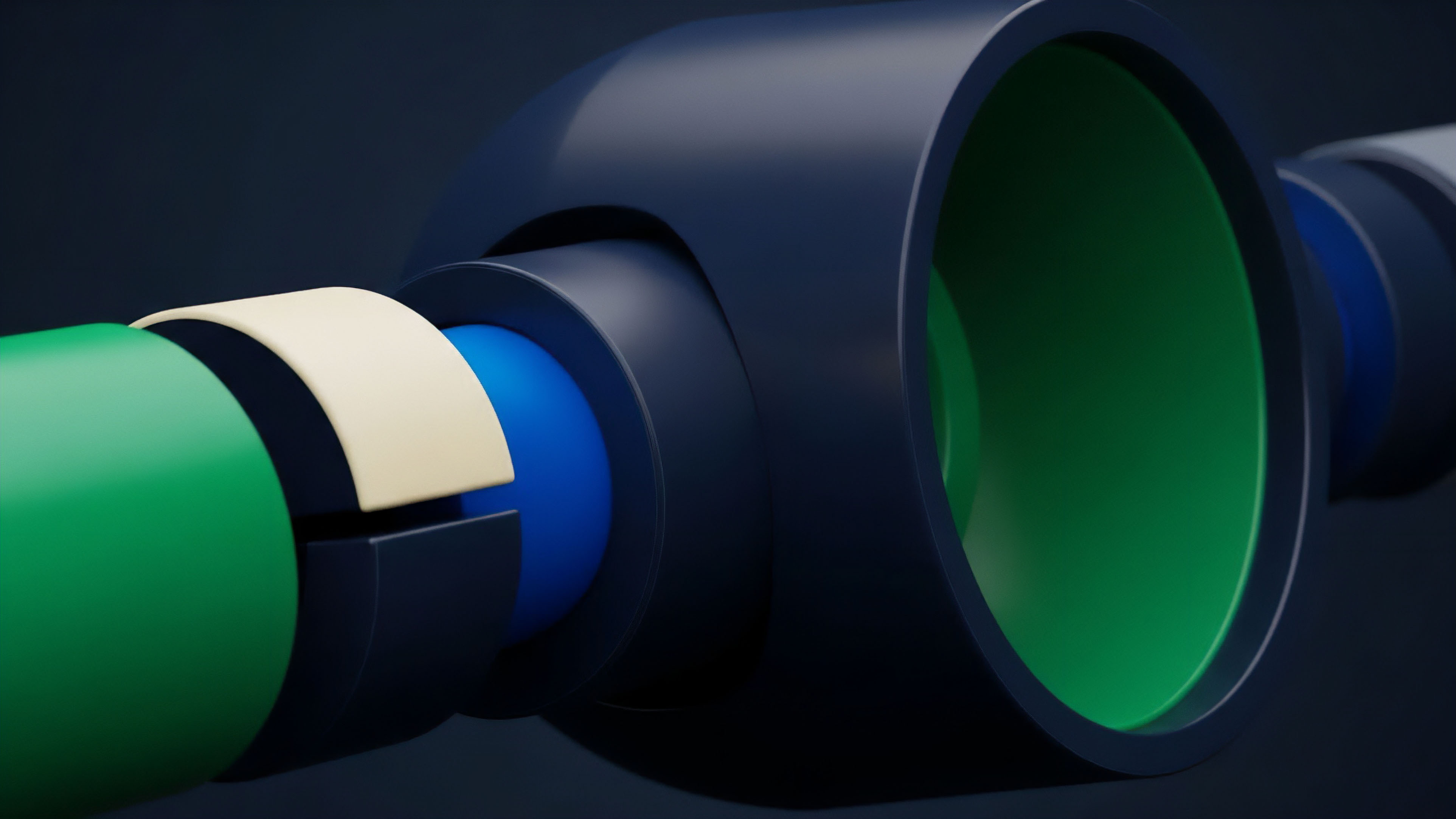

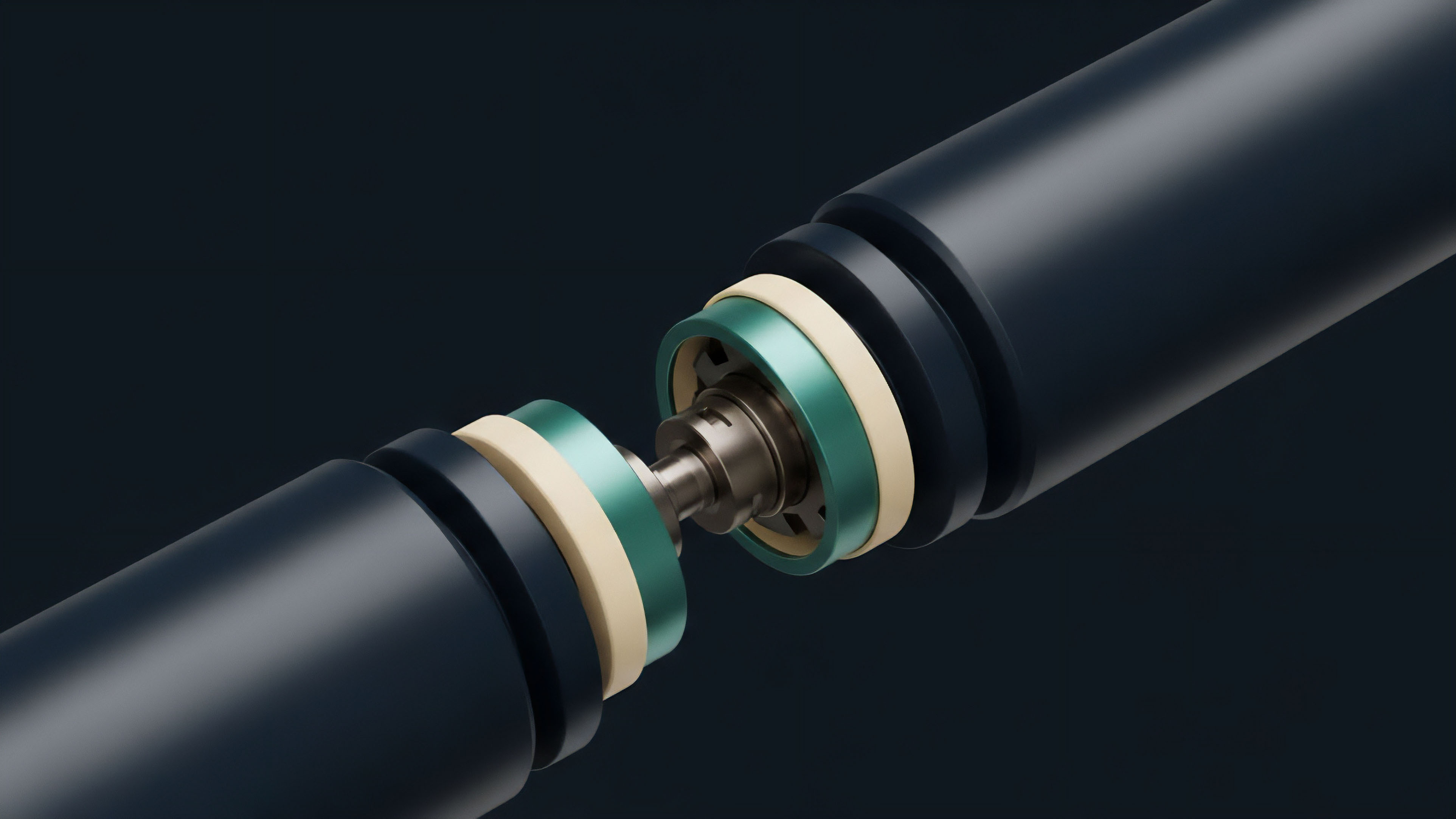

From a systems engineering perspective, data integrity for derivatives protocols requires a robust framework that accounts for both technical and economic security. The theoretical foundation rests on a shift from single-point data snapshots to time-weighted average prices (TWAP) and decentralized data aggregation.

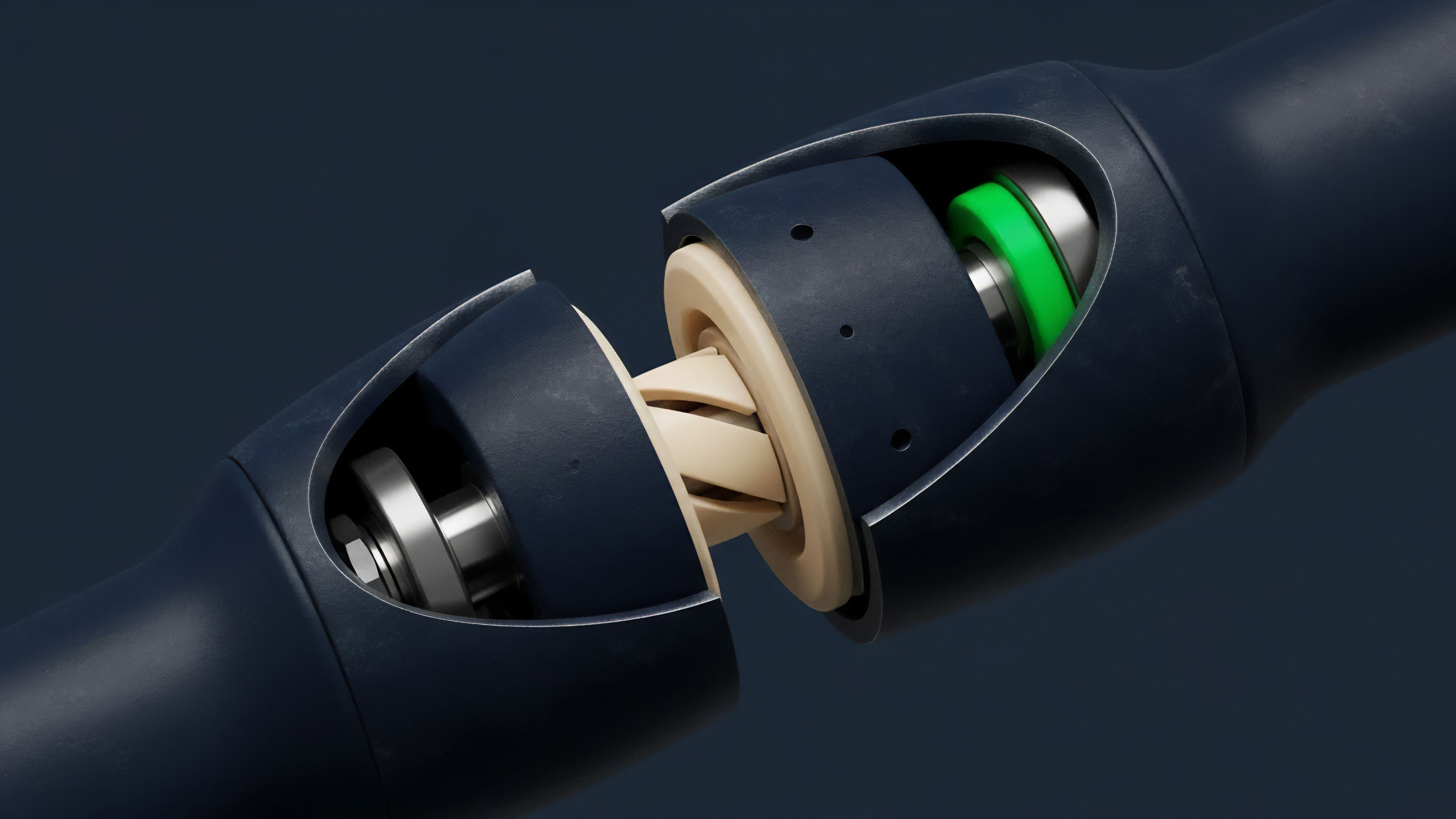

The TWAP methodology mitigates short-term price manipulation by averaging the price over a specified time window, smoothing out transient spikes such as those caused by flash loans or front-running. The data aggregation layer adds a second defense: multiple independent sources report prices, and the final value is their median. Because the median ignores outliers, an attacker must corrupt a majority of the sources simultaneously to control it, which makes corrupting the final feed economically infeasible when the sources are numerous and independent.
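To make the median defense concrete, here is a minimal Python sketch of multi-source aggregation. The source names, prices, and the `min_sources` quorum are hypothetical illustrations, not any oracle network's actual parameters.

```python
from statistics import median


def aggregate_median(reports: dict[str, float], min_sources: int = 3) -> float:
    """Combine independent price reports into a single median value.

    `reports` maps a (hypothetical) source name to its reported price.
    Non-positive prices are treated as invalid and dropped; too few valid
    reports is an error rather than a silently weak answer.
    """
    prices = [p for p in reports.values() if p > 0]
    if len(prices) < min_sources:
        raise ValueError(f"need {min_sources} valid reports, got {len(prices)}")
    return median(prices)


reports = {
    "exchange_a": 2000.0,
    "exchange_b": 2001.5,
    "exchange_c": 1999.0,
    "exchange_d": 2002.0,
    "thin_venue": 3500.0,  # a single manipulated, low-liquidity source
}
print(aggregate_median(reports))  # 2001.5
```

The manipulated venue leaves the median untouched, whereas a simple mean of the same five reports would be 2300.5. Dictating the median requires corrupting a majority of the sources.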

The core objective is to ensure that the cost of manipulating the oracle exceeds the potential profit from exploiting the derivative contract. This economic security model is essential for high-value options protocols. The following table illustrates the primary trade-offs between different data aggregation models:

| Model Type | Key Mechanism | Security Trade-off | Latency Trade-off |
|---|---|---|---|
| Single-Source Snapshot | Price from a single exchange at a specific moment. | Low security; high manipulation risk. | Low latency; immediate updates. |
| Multi-Source Median | Aggregates prices from multiple sources and takes the median. | High security; resists failure or manipulation of any single source. | Moderate latency; requires multiple reports. |
| Time-Weighted Average Price (TWAP) | Averages the price over a defined time window. | High security; resists transient spikes. | High latency; the value inherently lags the market. |
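The condition that manipulation cost must exceed exploit profit can be checked under a deliberately simplified model: assume each source has a known, independent manipulation cost, and that an attacker needs a strict majority of sources to dictate a median. The function names and dollar figures below are hypothetical.

```python
def min_attack_cost(manipulation_costs: list[float]) -> float:
    """Cheapest way to control a strict majority of n median sources.

    Simplified model: each entry is the (hypothetical) cost of corrupting one
    source, costs are independent, and controlling n // 2 + 1 sources lets
    the attacker dictate the median.
    """
    k = len(manipulation_costs) // 2 + 1
    return sum(sorted(manipulation_costs)[:k])


def is_economically_secure(manipulation_costs: list[float], max_exploit_profit: float) -> bool:
    """Under this model, the oracle is secure if attacking costs more than it pays."""
    return min_attack_cost(manipulation_costs) > max_exploit_profit


single_source = [50_000.0]
five_sources = [50_000.0, 2_000_000.0, 3_000_000.0, 5_000_000.0, 8_000_000.0]
print(is_economically_secure(single_source, 1_000_000.0))  # False
print(is_economically_secure(five_sources, 1_000_000.0))   # True: cheapest majority costs 5.05M
```

The same weak $50,000 venue that breaks the single-source design contributes only a small fraction of the attack cost once two more sources must also be corrupted to form a majority. The model ignores correlated sources, which is why source independence matters in practice.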

Current Approaches and Implementation

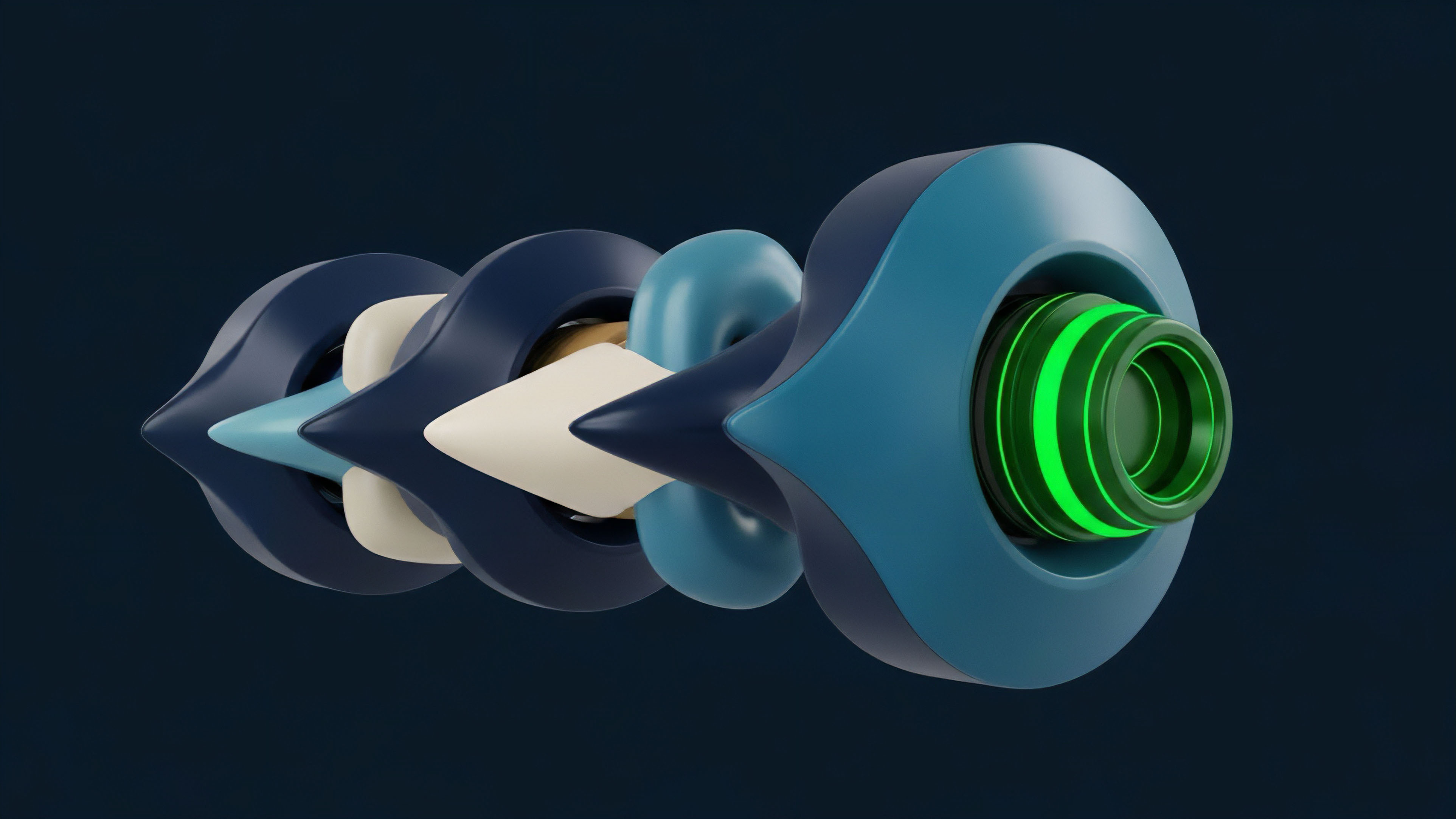

The implementation of data feed integrity in modern crypto options protocols varies depending on the specific risk tolerance and capital efficiency requirements of the protocol. We observe a clear divergence between two primary architectural approaches: external oracle networks and internal on-chain mechanisms.

External Oracle Networks

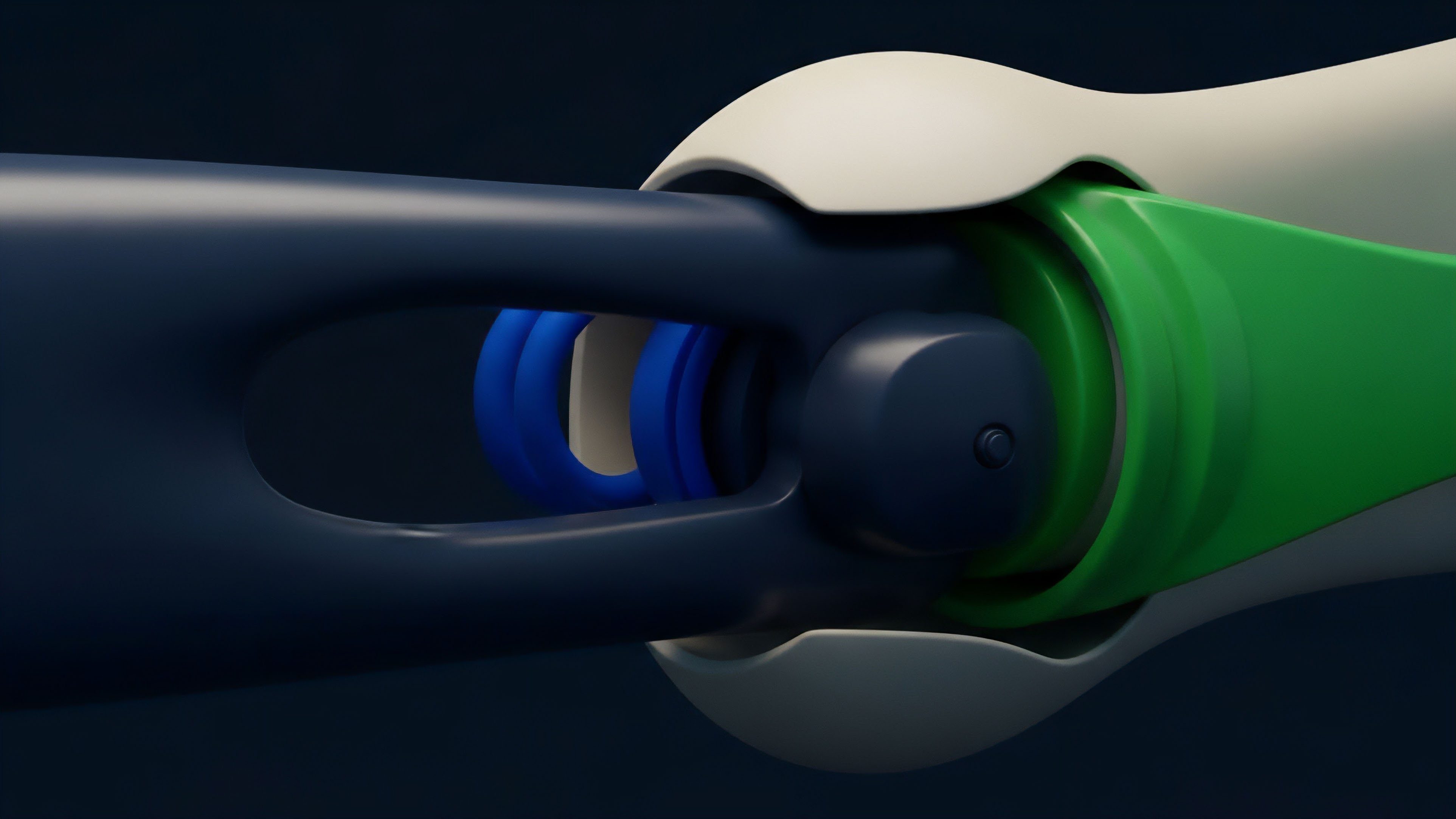

Oracle networks such as Chainlink use a decentralized set of independent node operators to gather data from multiple off-chain exchanges. The nodes' reports are aggregated into a median price that is published on-chain through an aggregator contract. Because the price reflects a broad market consensus, this approach offers high data quality and resists manipulation of any single exchange.

The trade-off is latency and gas cost. Every on-chain update costs gas, so updates are typically pushed only when the price moves beyond a deviation threshold or a fixed heartbeat interval elapses. The resulting delay between real-world price movements and the on-chain value creates opportunities for latency arbitrage: traders with faster access to off-chain data can trade against stale on-chain prices or front-run protocol liquidations.
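A minimal sketch of a deviation-threshold-plus-heartbeat update rule, paired with a consumer-side staleness check, follows. The 50 bps and one-hour defaults and all names are hypothetical; real feeds configure these per asset and network.

```python
from dataclasses import dataclass


@dataclass
class FeedState:
    price: float       # last value written on-chain
    updated_at: float  # unix timestamp (seconds) of that write


def should_push_update(state: FeedState, observed_price: float, now: float,
                       deviation_bps: float = 50.0, heartbeat_s: float = 3600.0) -> bool:
    """Oracle-side rule: write only on a large enough move or when the heartbeat expires."""
    deviation = abs(observed_price - state.price) / state.price * 10_000  # basis points
    return deviation >= deviation_bps or now - state.updated_at >= heartbeat_s


def is_stale(state: FeedState, now: float, max_age_s: float) -> bool:
    """Consumer-side rule: refuse to settle or liquidate on an old price."""
    return now - state.updated_at > max_age_s


state = FeedState(price=2000.0, updated_at=0.0)
print(should_push_update(state, 2005.0, now=600.0))   # False: 25 bps, heartbeat not due
print(should_push_update(state, 2012.0, now=600.0))   # True: 60 bps exceeds 50 bps
print(should_push_update(state, 2001.0, now=3600.0))  # True: heartbeat expired
```

Between triggers, the on-chain value can sit just under the deviation threshold away from the market for up to a full heartbeat; that window is what latency arbitrage exploits, and a consumer-side `is_stale` check bounds how long a protocol will act on it.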

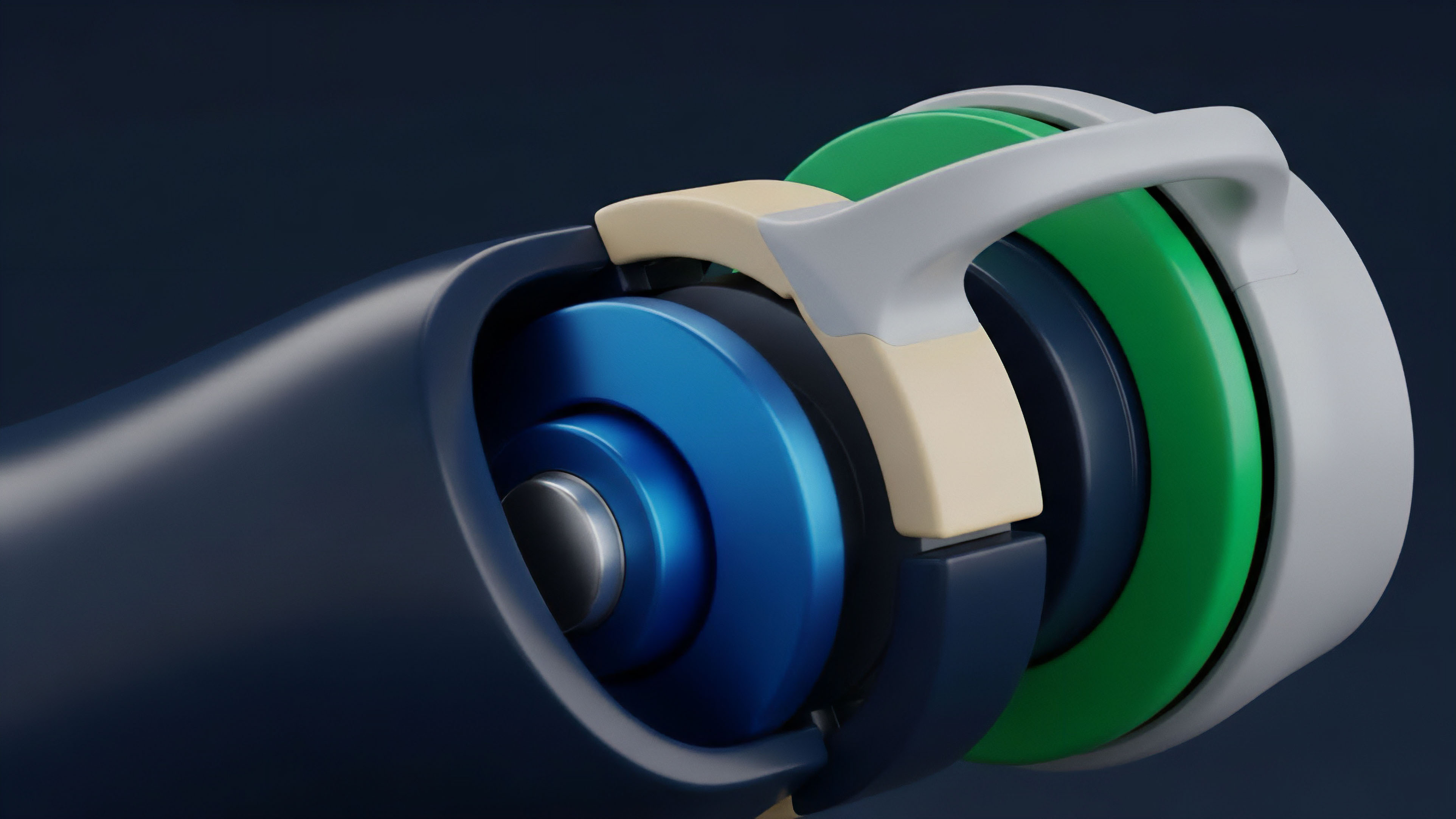

Internal On-Chain Mechanisms

Some protocols, particularly those built on automated market makers (AMMs), derive their price feeds directly from their own liquidity pools. The most prominent example is Uniswap V3’s TWAP oracle. This approach eliminates the need for external, off-chain data sources.

The price is derived from the pool's reserve ratio (its spot price), accumulated over time. While this eliminates external dependencies, its integrity depends heavily on the depth of the pool itself. A shallow pool can still be manipulated, although the TWAP mechanism raises the cost considerably: the attacker must hold the distorted price across many blocks while arbitrageurs trade against it.
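A toy model of an AMM TWAP oracle helps show why averaging raises the cost of manipulation. The sketch follows the cumulative-price idea used by Uniswap V2, where each interaction first accrues the previous price for the elapsed time; Uniswap V3 instead accumulates log-price ticks, which this sketch does not model. Class and function names are hypothetical.

```python
class CumulativePriceOracle:
    """Toy Uniswap V2-style price accumulator (hypothetical names; not V3's tick design)."""

    def __init__(self, price: float, t0: int):
        self.price = price     # current pool spot price
        self.last_t = t0       # timestamp of the last accrual
        self.cumulative = 0.0  # running sum of price * seconds

    def update(self, new_price: float, t: int) -> None:
        """Accrue the previous price for the elapsed time, then apply the new price."""
        self.cumulative += self.price * (t - self.last_t)
        self.price = new_price
        self.last_t = t

    def observe(self, t: int) -> float:
        """Cumulative value at time t >= last_t, without mutating state."""
        return self.cumulative + self.price * (t - self.last_t)


def twap(c_start: float, t_start: int, c_end: float, t_end: int) -> float:
    """Time-weighted average price between two accumulator observations."""
    return (c_end - c_start) / (t_end - t_start)


# Attacker pushes the price from 100 to 200 for one 12-second block.
oracle = CumulativePriceOracle(price=100.0, t0=0)
c_start = oracle.observe(0)
oracle.update(200.0, 590)  # manipulated trade
oracle.update(100.0, 602)  # arbitrage restores the price a block later
print(twap(c_start, 0, oracle.observe(900), 900))  # about 101.33

# Same-timestamp manipulation (e.g. inside one flash-loan transaction) accrues nothing.
atomic = CumulativePriceOracle(price=100.0, t0=0)
atomic.update(200.0, 300)
atomic.update(100.0, 300)
print(twap(0.0, 0, atomic.observe(900), 900))  # 100.0
```

A spike held for one block moves the 900-second TWAP by only about 1.3%, and a manipulation that starts and ends at the same timestamp contributes nothing. Holding the distorted price longer raises the TWAP proportionally, but exposes the attacker to arbitrage for the whole period.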

The selection of an oracle design involves a critical trade-off between update frequency, gas cost, and resistance to specific attack vectors.

Evolution of Integrity and Systemic Risk

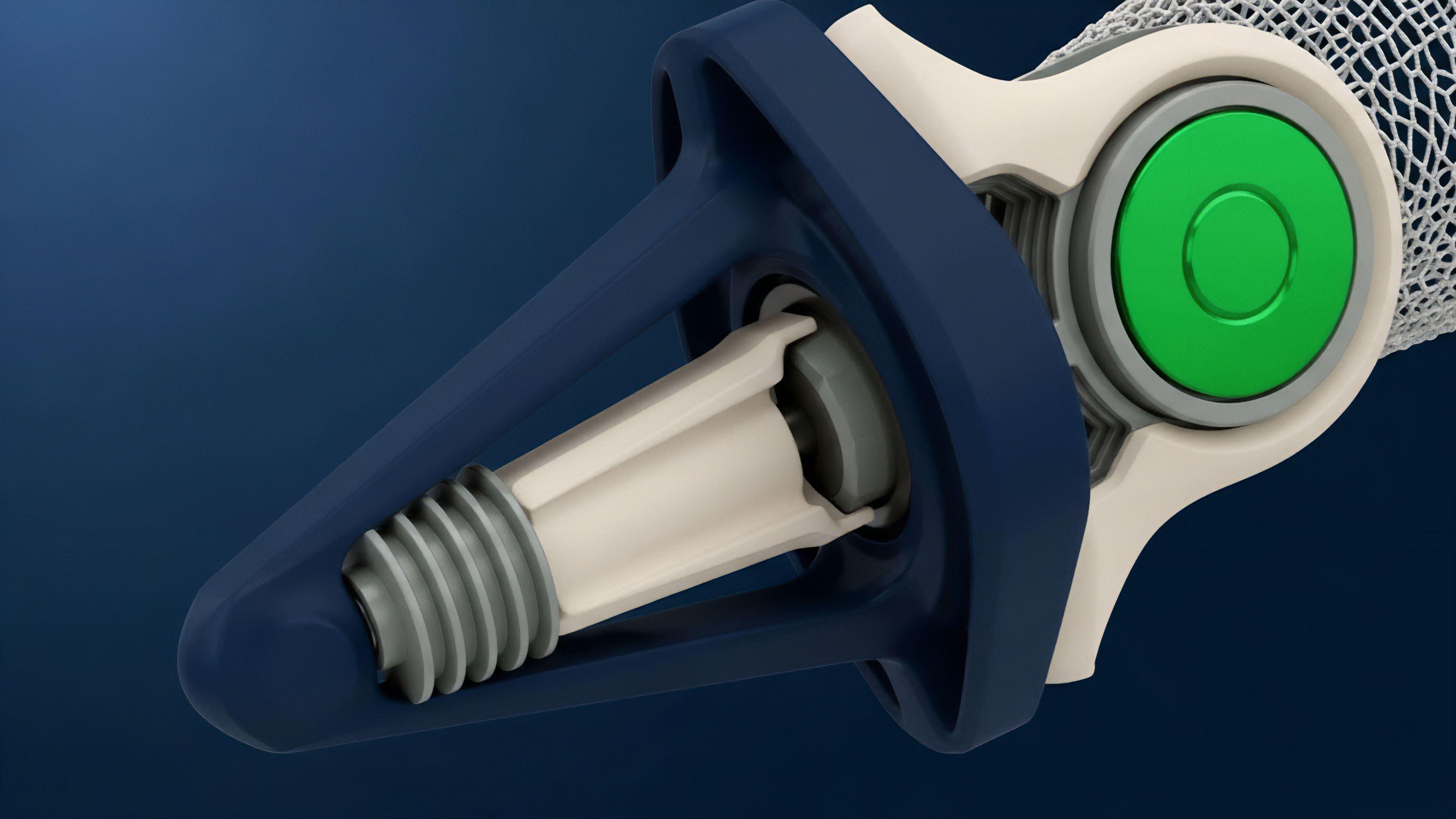

The evolution of data feed integrity has been a reactive process, driven by a cycle of attack and defense. Early protocols failed to account for the economic incentives of adversarial actors, assuming that high liquidity alone would be sufficient protection. The reality, as demonstrated by flash loan attacks, proved otherwise.

An attacker could borrow capital, manipulate the price, exploit the derivative contract, and repay the loan, all within a single atomic transaction and without any upfront capital of their own. This led to the widespread adoption of multi-source aggregation and TWAP as standard defensive measures. The shift fundamentally changed the risk parameters of derivatives protocols.

The system evolved from relying on a snapshot price to relying on a time-averaged price, which significantly increased the cost and complexity of a successful attack. A key development has been the implementation of data integrity checks within the oracle design itself. These checks monitor for sudden, large deviations in price from historical norms or from other sources.

If a price update deviates beyond a configured threshold, the system can automatically halt, acting as a circuit breaker that prevents liquidations based on suspect data. This deliberate friction prioritizes safety over liveness during extreme market stress.
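The deviation check described above can be sketched as a simple circuit breaker. The 10% per-update limit, the 5% cross-source tolerance, and the manual `reset` step are hypothetical choices for illustration; real systems tune such limits per asset and may route resets through governance.

```python
from typing import Optional


class DeviationCircuitBreaker:
    """Accept price updates only while they stay within sanity bounds (hypothetical)."""

    def __init__(self, initial_price: float, max_jump: float = 0.10,
                 max_divergence: float = 0.05):
        self.last_price = initial_price
        self.max_jump = max_jump              # max relative move vs. last accepted price
        self.max_divergence = max_divergence  # max relative gap vs. a reference source
        self.halted = False

    def submit(self, new_price: float, reference_price: Optional[float] = None) -> bool:
        """Return True if the update is accepted; halt the feed on a suspect value."""
        if self.halted:
            return False  # safety over liveness: ignore updates until reset
        jump = abs(new_price - self.last_price) / self.last_price
        diverges = (reference_price is not None and
                    abs(new_price - reference_price) / reference_price > self.max_divergence)
        if jump > self.max_jump or diverges:
            self.halted = True
            return False
        self.last_price = new_price
        return True

    def reset(self, trusted_price: float) -> None:
        """Resume operation from an externally verified price."""
        self.last_price = trusted_price
        self.halted = False


breaker = DeviationCircuitBreaker(2000.0)
print(breaker.submit(2050.0))  # True: 2.5% move
print(breaker.submit(1400.0))  # False: a ~32% drop trips the breaker
print(breaker.submit(2040.0))  # False: feed stays halted until reset
```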

- Single-Source Vulnerability: Early protocols used single-source feeds, creating a critical vulnerability for flash loan attacks.

- Multi-Source Aggregation: The response involved aggregating data from multiple exchanges and node operators to filter out single-point manipulations.

- Time-Weighted Averages: The adoption of TWAPs further hardened protocols by making transient price spikes ineffective for contract exploitation.

- Liveness vs. Safety Trade-off: The current state involves balancing update frequency with the need for security checks that may halt operations during high volatility.

Future Horizon for Data Integrity

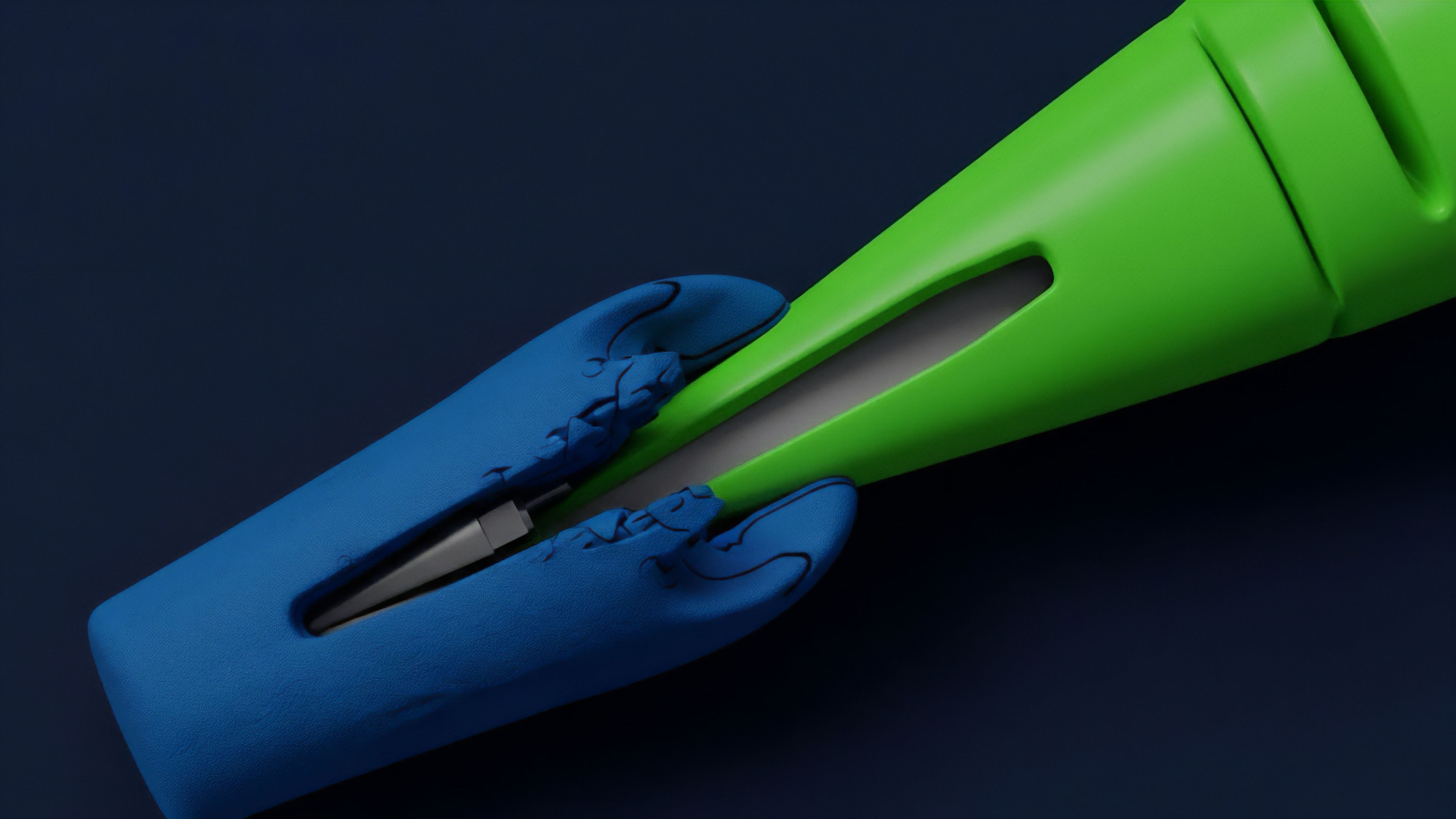

Looking ahead, the next generation of data feed integrity solutions will move beyond simple aggregation and toward more sophisticated cryptographic and game-theoretic models. The future of data integrity lies in a complete separation of data source from data validation.

Zero-Knowledge Proofs for Data Validity

One promising direction involves zero-knowledge proofs (ZKPs). A ZKP allows a data provider to prove that a price was computed correctly from a set of off-chain inputs without revealing those inputs. Paired with a way to authenticate the inputs themselves, such as data signed by its source, this could protect sensitive financial data while providing a high degree of verifiable integrity.

The oracle would not just report a price; it would provide cryptographic proof of its accuracy.

Prediction Markets as Oracles

A more radical approach involves using prediction markets as a data source. Instead of relying on external exchanges, a protocol could source its price from a market where participants bet on the future price. The market consensus, driven by economic incentives, becomes the oracle itself.

This creates a feedback loop where data integrity is maintained through economic incentives rather than purely technical safeguards.

The long-term goal for data integrity is to transition from a reliance on external data providers to an internal, cryptographically verifiable price discovery mechanism.
