Essence

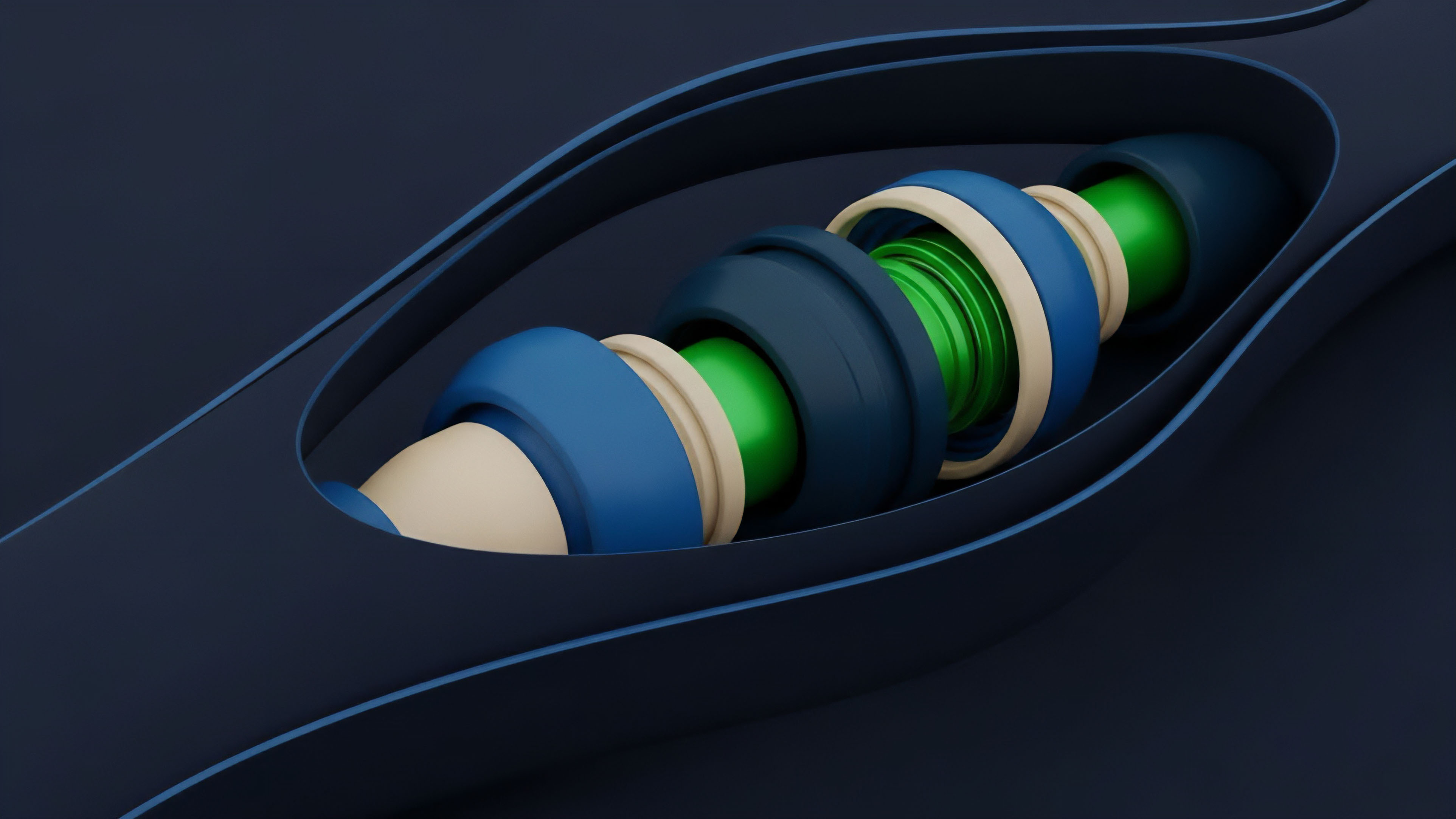

Data source integration for crypto options is the process of securely and reliably bringing off-chain market data onto a blockchain for use by smart contracts. The challenge stems from the fundamental architectural disconnect between the deterministic, isolated nature of blockchain ledgers and the continuous, high-frequency, and often chaotic data streams required for accurate options pricing and risk management. A smart contract, by design, cannot directly access data from the external internet; it requires an oracle to bridge this gap.

This integration is the core technical hurdle for decentralized options protocols, defining the difference between a functional, risk-managed system and a fragile one susceptible to manipulation. The specific data requirements for options extend far beyond simple spot prices. A robust options protocol requires a continuous feed of implied volatility, a dynamic measure derived from market expectations.

This volatility data is necessary to calculate the value of an option contract using models like Black-Scholes or its variants. The integrity of this data stream directly dictates the solvency of the protocol’s margin engine and the accuracy of its pricing for both liquidity providers and traders. A delay or corruption in this data feed can lead to significant systemic risk, enabling arbitrageurs to exploit stale prices and potentially bankrupt the protocol’s liquidity pools.
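The cost of a stale volatility input can be illustrated with a minimal sketch of Black-Scholes pricing for a European option on a non-dividend asset. The function names (`norm_cdf`, `bs_price`) and every numeric input below are illustrative assumptions, not values from any particular protocol.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_price(spot: float, strike: float, t_years: float,
             rate: float, vol: float, is_call: bool = True) -> float:
    """Black-Scholes price of a European option on a non-dividend asset."""
    if t_years <= 0.0 or vol <= 0.0:
        # Degenerate inputs: fall back to the undiscounted intrinsic value.
        intrinsic = spot - strike if is_call else strike - spot
        return max(intrinsic, 0.0)
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discounted_strike = strike * math.exp(-rate * t_years)
    if is_call:
        return spot * norm_cdf(d1) - discounted_strike * norm_cdf(d2)
    return discounted_strike * norm_cdf(-d2) - spot * norm_cdf(-d1)


# Hypothetical scenario: the feed still reports 60% implied volatility
# while the market has already repriced to 90%.
stale_quote = bs_price(spot=2000.0, strike=2200.0, t_years=30 / 365, rate=0.0, vol=0.60)
fresh_quote = bs_price(spot=2000.0, strike=2200.0, t_years=30 / 365, rate=0.0, vol=0.90)
mispricing = fresh_quote - stale_quote  # edge available to a buyer trading on the stale feed
```

Because a call's value rises with volatility, `mispricing` is positive here: anyone able to buy at the stale quote acquires the option below its current model value, and the liquidity pool absorbs the difference.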

Data source integration is the foundational engineering challenge of securely transmitting high-fidelity off-chain market information to a decentralized options protocol’s smart contract logic.

The challenge is further complicated by the need for a “volatility surface,” not just a single volatility value. A volatility surface plots implied volatility across different strike prices and expiration dates. Integrating this complex data structure on-chain is computationally intensive and expensive.

Therefore, protocols must carefully select a data integration strategy that balances cost, security, and data freshness. The selection of a reliable oracle solution, or the creation of a proprietary data aggregation mechanism, is the most critical design choice for any decentralized options platform seeking to achieve capital efficiency and maintain market integrity.

Origin

The origin of data source integration challenges in crypto options traces back to the earliest attempts at building decentralized derivatives platforms.

Early protocols in decentralized finance (DeFi) struggled with the fundamental “oracle problem,” where a lack of reliable external data prevented the creation of sophisticated financial products. The first iterations of options protocols often relied on simplistic price feeds or a single, centralized data source. This approach proved highly vulnerable to exploits, particularly during periods of high market volatility, when price feeds could be manipulated or delayed.

The initial solutions were often ad-hoc and protocol-specific. Some early protocols attempted to calculate implied volatility on-chain, which quickly proved prohibitively expensive due to high gas costs. Others relied on off-chain calculations submitted by trusted parties, which re-introduced the very counterparty risk that decentralization sought to eliminate.

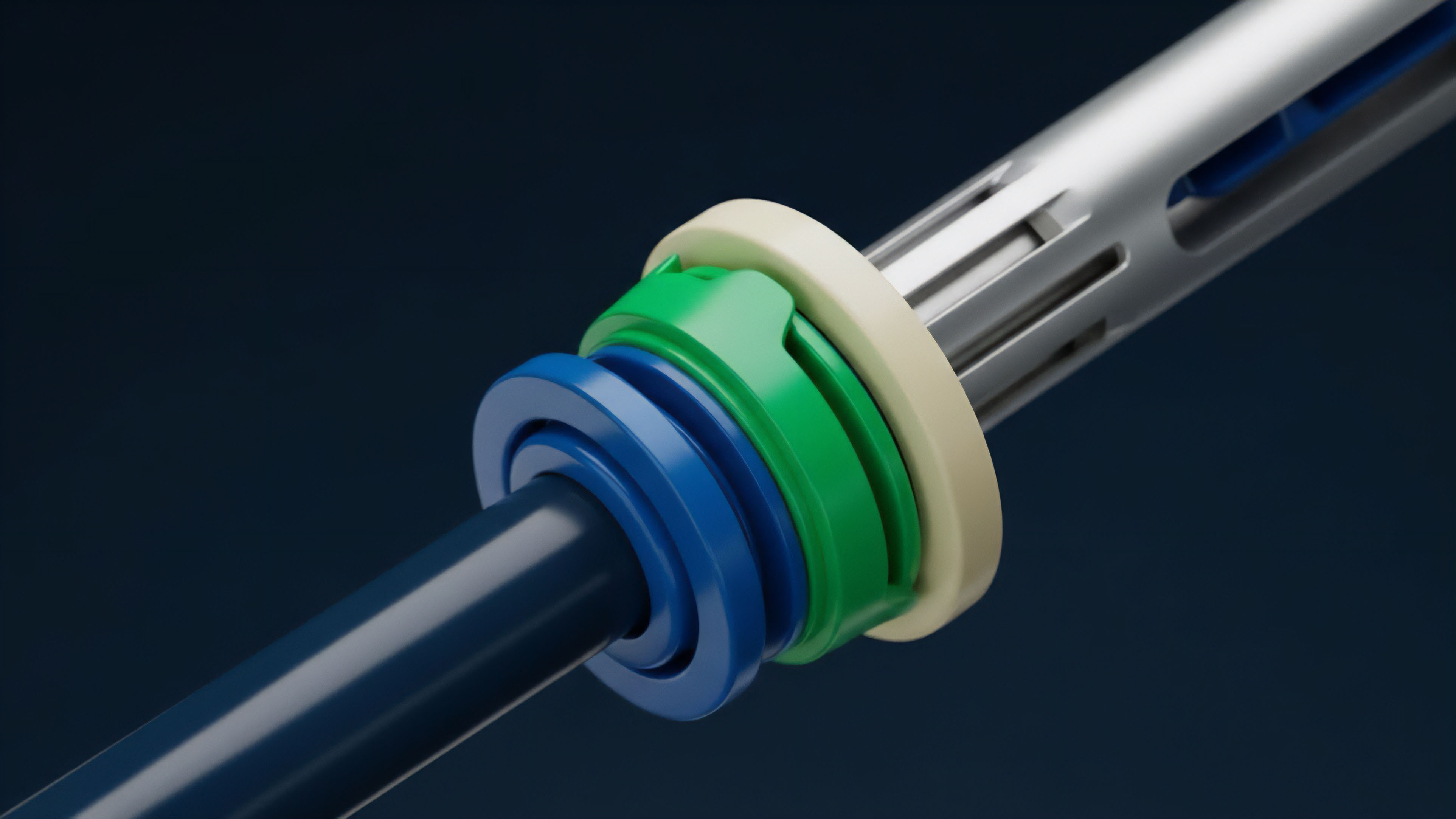

The evolution of this field was driven by the necessity of moving beyond simple spot price feeds to accommodate the complexity of options pricing. The first major architectural shift came with the development of decentralized oracle networks like Chainlink. These networks offered a more robust solution by aggregating data from multiple sources and using cryptographic verification to ensure data integrity before submission to the blockchain.

However, even these solutions were initially designed for spot prices, not the complex volatility surfaces required for options. This led to a new wave of innovation focused on specialized data products and low-latency data streams, specifically tailored for derivatives markets. The challenge was to move from a “data push” model, where data was only updated periodically, to a “data pull” model, where protocols could request real-time data for dynamic margin calculations.

Theory

The theoretical underpinnings of data source integration for options are rooted in quantitative finance, specifically the inputs required for options pricing models. The value of an option contract is determined not solely by the underlying asset’s price, but by a combination of factors including time to expiration, strike price, risk-free rate, and, most importantly, implied volatility. The data source integration challenge is therefore about reliably sourcing and verifying these specific inputs.

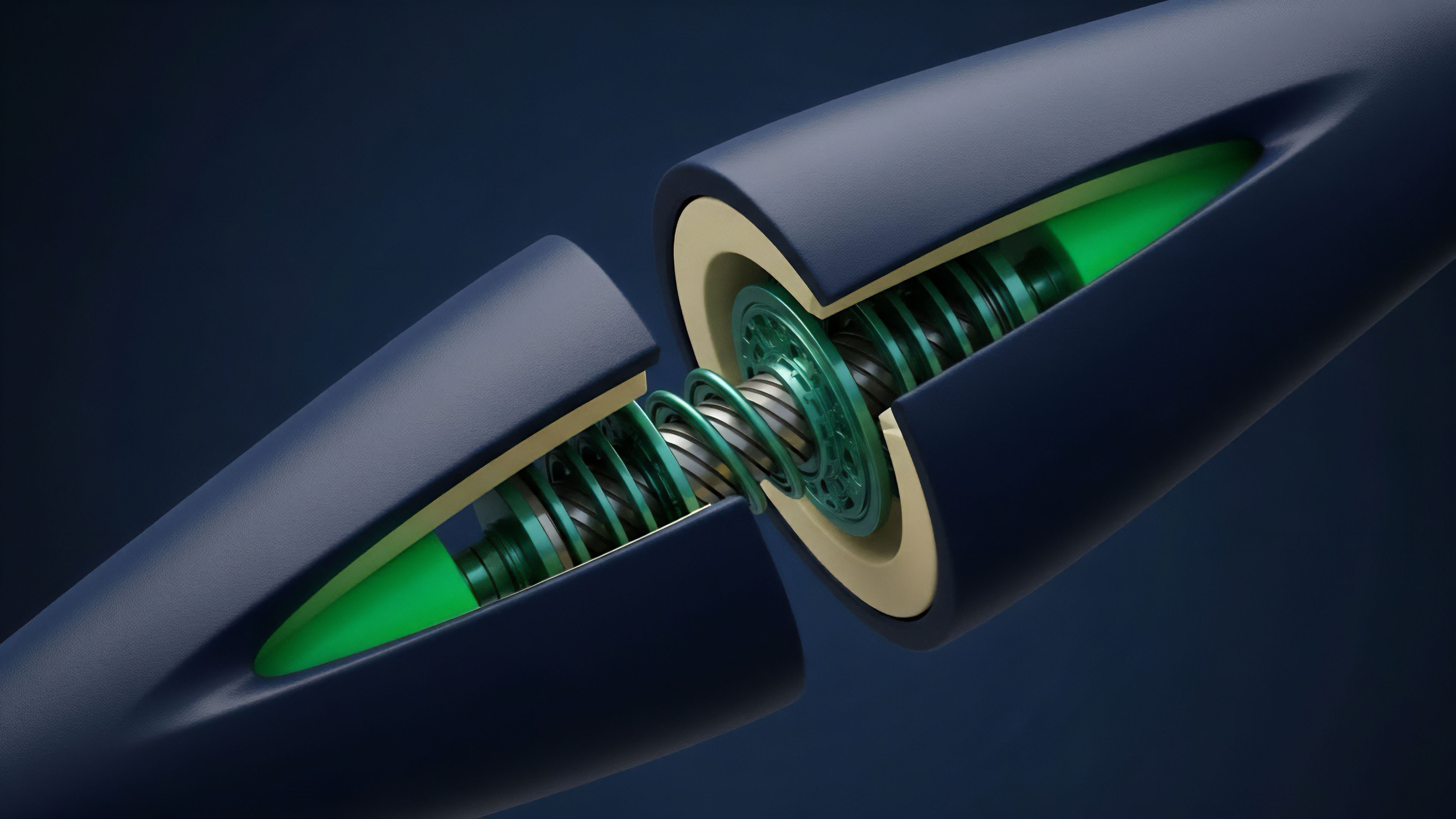

The core data requirement for options pricing is the implied volatility surface. This surface is a three-dimensional plot that represents the implied volatility for different combinations of strike prices and expiration dates. In traditional finance, this data is readily available from centralized exchanges and data providers.

In DeFi, protocols must either calculate this surface on-chain (impractical) or source it from a decentralized oracle network. The integrity of this surface is critical for managing risk. If the implied volatility data is stale or manipulated, the calculated risk metrics (Greeks) will be incorrect, leading to mispricing and potential liquidation cascades.
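One way to picture the surface as a data structure is a grid of implied volatilities indexed by expiry and strike, with interpolation between grid points. The sketch below assumes a rectangular grid, bilinear interpolation, and clamping at the edges; the class name `VolSurface` and the sample numbers are hypothetical, and production systems often use more careful smile parameterizations.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Sequence, Tuple


def _bracket(grid: Sequence[float], x: float) -> Tuple[int, int, float]:
    """Return (low index, high index, weight) for clamped linear interpolation."""
    if x <= grid[0]:
        return 0, 0, 0.0
    if x >= grid[-1]:
        last = len(grid) - 1
        return last, last, 0.0
    hi = bisect_right(grid, x)
    lo = hi - 1
    return lo, hi, (x - grid[lo]) / (grid[hi] - grid[lo])


@dataclass(frozen=True)
class VolSurface:
    strikes: Sequence[float]          # ascending strike prices
    expiries: Sequence[float]         # ascending times to expiry, in years
    vols: Sequence[Sequence[float]]   # vols[i][j] belongs to expiries[i], strikes[j]

    def iv(self, strike: float, expiry: float) -> float:
        """Bilinear interpolation of implied volatility, clamped at the grid edges."""
        i0, i1, w_t = _bracket(self.expiries, expiry)
        j0, j1, w_k = _bracket(self.strikes, strike)
        near = self.vols[i0][j0] * (1.0 - w_k) + self.vols[i0][j1] * w_k
        far = self.vols[i1][j0] * (1.0 - w_k) + self.vols[i1][j1] * w_k
        return near * (1.0 - w_t) + far * w_t


# Hypothetical 2 x 3 surface: two expiries, three strikes.
surface = VolSurface(
    strikes=(90.0, 100.0, 110.0),
    expiries=(0.25, 0.50),
    vols=((0.60, 0.50, 0.55),
          (0.58, 0.52, 0.54)),
)
mid_point_iv = surface.iv(strike=95.0, expiry=0.25)  # halfway between 0.60 and 0.50
```

Even this toy grid holds six values that all need refreshing together. A realistic surface with dozens of strikes and expiries multiplies the storage and update cost, which is why publishing it on-chain in full is expensive.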

- Implied Volatility (IV) Calculation: The IV is derived by inverting an options pricing model (like Black-Scholes) using current market prices of existing options contracts. This calculation is computationally intensive and requires continuous access to option market data.

- Greeks Calculation: The Greeks (Delta, Gamma, Vega, and Theta) are essential risk management metrics derived from the pricing model’s inputs. Data integration must be fast enough to allow for real-time calculation of these Greeks, enabling protocols to manage their delta hedging positions effectively.

- Data Latency and Time Decay: Options contracts are highly sensitive to time decay (Theta). Data feeds must be low-latency to accurately reflect changes in market conditions and time remaining until expiration. A delayed feed can cause significant mispricing as the option value decays rapidly.
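The first two items above can be sketched together: implied volatility is recovered by numerically inverting the pricing function, and the Greeks follow from the same inputs. The example below assumes a European call under Black-Scholes with no dividends and uses bisection for the inversion. The function names and search bounds are illustrative choices, and Theta is expressed per year.

```python
import math


def _cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _d1_d2(s: float, k: float, t: float, r: float, vol: float):
    d1 = (math.log(s / k) + (r + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    return d1, d1 - vol * math.sqrt(t)


def call_price(s: float, k: float, t: float, r: float, vol: float) -> float:
    d1, d2 = _d1_d2(s, k, t, r, vol)
    return s * _cdf(d1) - k * math.exp(-r * t) * _cdf(d2)


def implied_vol(price: float, s: float, k: float, t: float, r: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Invert call_price by bisection; valid because price increases with vol."""
    if not call_price(s, k, t, r, lo) <= price <= call_price(s, k, t, r, hi):
        raise ValueError("price is outside the range reachable within [lo, hi]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if call_price(s, k, t, r, mid) < price:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def call_greeks(s: float, k: float, t: float, r: float, vol: float) -> dict:
    """Closed-form Black-Scholes Greeks for a European call."""
    d1, d2 = _d1_d2(s, k, t, r, vol)
    sqrt_t = math.sqrt(t)
    return {
        "delta": _cdf(d1),
        "gamma": _pdf(d1) / (s * vol * sqrt_t),
        "vega": s * _pdf(d1) * sqrt_t,
        "theta": -s * _pdf(d1) * vol / (2.0 * sqrt_t) - r * k * math.exp(-r * t) * _cdf(d2),
    }


# Round trip with hypothetical inputs: price at 80% vol, then recover the vol.
observed = call_price(2000.0, 2100.0, 14 / 365, 0.0, 0.80)
recovered_vol = implied_vol(observed, 2000.0, 2100.0, 14 / 365, 0.0)
greeks = call_greeks(2000.0, 2100.0, 14 / 365, 0.0, recovered_vol)
```

Each inversion evaluates the pricing function dozens of times, and a full surface needs one inversion per strike and expiry. That repeated work is what makes the calculation a poor fit for on-chain execution.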

The integration architecture must also consider the adversarial nature of decentralized markets. The system must be designed to resist front-running and manipulation. A common attack vector involves manipulating the price feed on a spot exchange to trigger liquidations or favorable trades on the options protocol.

A robust data source integration strategy mitigates this by using aggregated feeds from multiple sources and implementing time-weighted average prices (TWAPs) or volume-weighted average prices (VWAPs) rather than single-point snapshots.
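The three smoothing techniques named above can be sketched in a few lines. The helper names, the sample venues, and the price series are hypothetical, and the TWAP assumes each sampled price holds until the next sample arrives.

```python
from statistics import median
from typing import Dict, List, Tuple


def median_price(quotes: Dict[str, float]) -> float:
    """Cross-source aggregate: one outlier source cannot move the median far."""
    return median(quotes.values())


def twap(samples: List[Tuple[float, float]], end_time: float) -> float:
    """Time-weighted average of (timestamp, price) samples sorted by time.

    Each price is weighted by how long it was in effect; the last sample is
    assumed to hold until end_time, and all timestamps must precede end_time.
    """
    if not samples or end_time <= samples[0][0]:
        raise ValueError("need at least one sample before end_time")
    weighted_sum = 0.0
    for i, (start, price) in enumerate(samples):
        stop = samples[i + 1][0] if i + 1 < len(samples) else end_time
        weighted_sum += price * (stop - start)
    return weighted_sum / (end_time - samples[0][0])


def vwap(trades: List[Tuple[float, float]]) -> float:
    """Volume-weighted average of (price, volume) trades."""
    total_volume = sum(volume for _, volume in trades)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in trades) / total_volume


# Hypothetical 30-second window with a one-second manipulated spike at the end.
window = [(0.0, 100.0), (10.0, 100.0), (20.0, 100.0), (29.0, 150.0)]
snapshot_price = window[-1][1]            # 150.0: what a single-point read would see
smoothed_price = twap(window, end_time=30.0)
cross_venue = median_price({"venue_a": 100.0, "venue_b": 101.0, "venue_c": 150.0})
```

In this example the spike moves the snapshot by 50% but shifts the TWAP by under 2%, and the median ignores the outlier venue entirely. The trade-off is responsiveness: a longer averaging window resists manipulation better but also reacts more slowly to genuine price moves.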

Approach

The current approach to data source integration for crypto options typically involves a hybrid architecture that balances security, cost, and latency. Protocols rarely attempt to source data exclusively on-chain due to the high computational cost.

Instead, they rely on specialized off-chain infrastructure to aggregate and verify data before submitting it to the blockchain. One common strategy involves utilizing decentralized oracle networks. These networks provide a robust data feed by aggregating information from multiple independent sources.

For options, this approach extends beyond simple spot prices to include more complex data products. The protocol’s smart contract requests data from the oracle network, which then performs the necessary calculations (such as implied volatility calculation) off-chain and submits the verified result to the blockchain.

| Integration Model | Description | Key Trade-offs |
|---|---|---|
| Decentralized Oracle Aggregation | Data aggregated from multiple sources by a decentralized network of nodes, verified cryptographically, and submitted on-chain. | High security, high cost, moderate latency. Suitable for settlement and margin updates. |
| Off-chain Data Stream | Data provided by a single, specialized provider (e.g., a market maker or exchange index feed) directly to the protocol’s front end or off-chain risk engine. | Low cost, low latency, centralized trust. Suitable for real-time pricing display and non-settlement functions. |
| On-chain Calculation | The smart contract calculates implied volatility using data from on-chain liquidity pools (e.g., AMMs). | High security, high cost, low liquidity. Prone to manipulation in low-volume markets. |

Another approach, particularly relevant for high-frequency trading, involves utilizing off-chain data streams for real-time risk calculations. While the final settlement may rely on a decentralized oracle, the dynamic margin requirements and real-time pricing calculations are often performed off-chain by a protocol’s risk engine. This allows for faster responses to market changes, which is critical for options trading where price decay is a constant factor.

The integrity of this off-chain data is paramount; protocols must ensure that data providers cannot be coerced or compromised.

The integration approach must balance the need for high-frequency data to accurately calculate risk with the security requirement of on-chain verification for settlement.

The data selection process itself requires careful consideration. A protocol must choose whether to source data from centralized exchanges (CEXs) or decentralized exchanges (DEXs). CEX data is generally considered more reliable due to higher volume, but it introduces centralization risk.

DEX data, while decentralized, can be vulnerable to manipulation in low-liquidity pools. A robust approach aggregates data from both sources to create a more resilient feed.
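A combined CEX and DEX feed of this kind might be sketched as follows: stale quotes are dropped, quotes far from the median are discarded as outliers, and the result is rejected unless enough sources from both venue types survive. The `Quote` type, the function name, and all thresholds are hypothetical and would need tuning for a real market.

```python
from dataclasses import dataclass
from statistics import median
from typing import List


@dataclass(frozen=True)
class Quote:
    source: str
    venue_type: str   # "cex" or "dex"
    price: float
    timestamp: float  # seconds


def resilient_price(quotes: List[Quote], now: float, max_age: float = 30.0,
                    max_deviation: float = 0.02, min_sources: int = 3) -> float:
    """Median of fresh, mutually consistent quotes drawn from both venue types."""
    fresh = [q for q in quotes if now - q.timestamp <= max_age]
    if len(fresh) < min_sources:
        raise ValueError("not enough fresh quotes")
    reference = median(q.price for q in fresh)
    # Drop quotes that sit too far from the cross-source median.
    kept = [q for q in fresh if abs(q.price - reference) / reference <= max_deviation]
    if len(kept) < min_sources:
        raise ValueError("not enough consistent quotes")
    if {q.venue_type for q in kept} != {"cex", "dex"}:
        raise ValueError("need at least one CEX and one DEX quote")
    return median(q.price for q in kept)


# Hypothetical snapshot at t = 100s: one stale CEX quote, one manipulated DEX pool.
quotes = [
    Quote("cex_a", "cex", 100.0, 95.0),
    Quote("cex_b", "cex", 100.2, 98.0),
    Quote("cex_c", "cex", 100.1, 10.0),    # stale: 90 seconds old
    Quote("dex_a", "dex", 99.9, 99.0),
    Quote("dex_b", "dex", 120.0, 99.0),    # thin pool pushed ~20% off market
]
price = resilient_price(quotes, now=100.0)
```

Raising an error instead of returning a best guess is a deliberate choice in this sketch: for margin and settlement logic, pausing on bad data is usually safer than acting on it.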

Evolution

Data source integration for crypto options has evolved significantly from the initial reliance on simple price feeds.

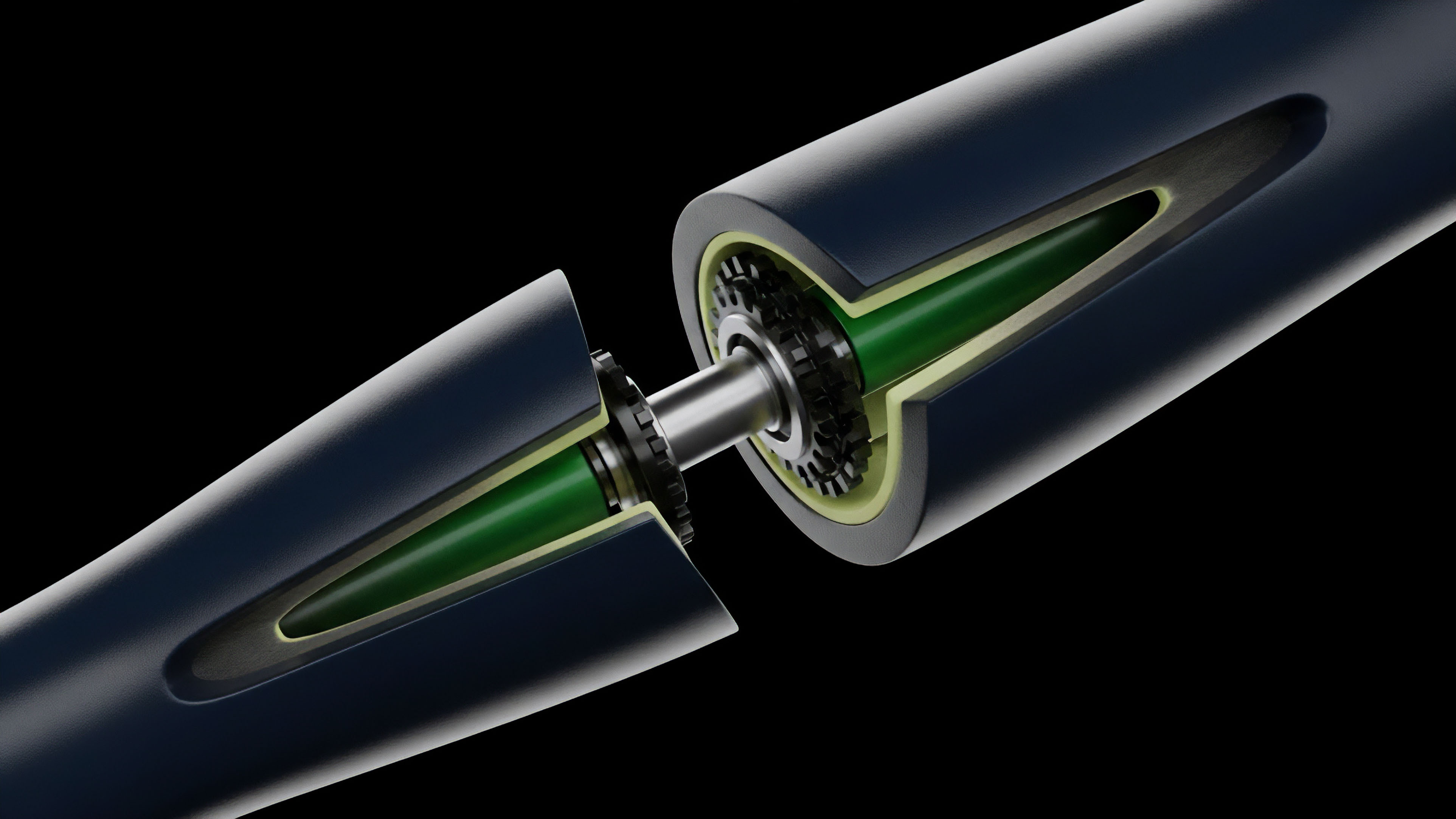

The initial protocols struggled with a fundamental mismatch: they were trying to apply complex financial models to an immature data infrastructure. The first generation of solutions focused on achieving basic functionality, often accepting high latency and a degree of centralization. The current evolution is marked by a transition from static, single-point data snapshots to dynamic, continuous data streams.

This shift is driven by the demand for capital efficiency and real-time risk management. Early protocols updated their data feeds every few minutes, making them vulnerable to rapid price changes. Modern protocols require near-real-time data to calculate dynamic margin requirements and prevent liquidations from occurring based on stale information.

- Specialized Data Products: The market has seen the emergence of specialized data products designed specifically for derivatives. These products provide pre-calculated implied volatility surfaces, rather than requiring protocols to perform complex calculations on-chain. This reduces gas costs and allows for more sophisticated risk management.

- Decentralized Low-Latency Feeds: The development of new oracle architectures, such as Pyth Network, focuses on delivering low-latency data streams directly from market makers and exchanges. This architecture significantly reduces the time lag between market events and on-chain updates, enabling more efficient pricing and liquidation processes.

- On-chain Risk Engines: Protocols are moving toward building more sophisticated on-chain risk engines that process integrated data to dynamically adjust margin requirements based on changing market conditions. This allows for greater capital efficiency, as collateral requirements can be lowered when volatility decreases and increased when risk rises.
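The volatility-sensitive margin logic described in the last item can be illustrated with a deliberately simple rule: scale annualized implied volatility down to the margin horizon, multiply by a stress factor, and clamp the result between a floor and a cap. The formula, the function name `margin_requirement`, and every parameter value are assumptions made for illustration, not the method of any specific protocol.

```python
import math


def margin_requirement(notional: float, implied_vol: float,
                       horizon_days: float = 1.0, stress_multiplier: float = 3.0,
                       floor_ratio: float = 0.05, cap_ratio: float = 1.0) -> float:
    """Collateral required for a position, scaled by current implied volatility."""
    if notional < 0.0 or implied_vol < 0.0:
        raise ValueError("notional and implied_vol must be non-negative")
    # Square-root-of-time rule: convert annualized vol to the margin horizon.
    horizon_vol = implied_vol * math.sqrt(horizon_days / 365.0)
    # Clamp so margin never falls below the floor or exceeds the full notional.
    ratio = min(max(stress_multiplier * horizon_vol, floor_ratio), cap_ratio)
    return notional * ratio


# Hypothetical 10,000-unit position under calm and stressed volatility feeds.
calm_margin = margin_requirement(10_000.0, implied_vol=0.20)      # floor applies
stressed_margin = margin_requirement(10_000.0, implied_vol=1.00)  # roughly 3x higher
```

A rule like this is only as good as its volatility input: if the feed lags a volatility spike, the engine keeps collateral at calm-market levels exactly when it should be raising them.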

The evolution of data integration is closely tied to the broader trend of institutional adoption. As larger financial institutions consider entering the crypto derivatives space, they demand data integrity and latency comparable to traditional finance. This has forced protocols to move beyond basic oracle solutions toward robust, enterprise-grade data infrastructure that can support high-volume, low-latency trading environments.

Horizon

Looking ahead, the horizon for data source integration in crypto options involves a complete re-architecture of data markets. The current model, where protocols pay for data from external sources, will likely evolve into a more integrated system where data itself becomes a core component of the protocol’s value proposition. The focus will shift from simply sourcing data to creating a decentralized standard for data quality and governance.

One critical development will be the creation of truly decentralized, standardized volatility surfaces. Today, many protocols still rely on proprietary methods or centralized sources for implied volatility data. The future requires a shared, verifiable, and transparent volatility surface that can be accessed by all protocols.

This will level the playing field and enable more accurate pricing across the entire ecosystem.

| Current Challenge | Future Horizon |
|---|---|
| Centralized volatility data feeds. | Decentralized, standardized volatility surface protocols. |
| High latency data updates. | Real-time data streams and on-chain risk engines. |
| Reliance on single oracle solutions. | Aggregated feeds from multiple oracle networks for redundancy. |

The regulatory landscape will also force significant changes in data integration. As regulators begin to focus on market integrity and price manipulation, protocols will need to demonstrate that their data sources are resilient and transparent. This will lead to a greater emphasis on verifiable data provenance and auditability. The integration of artificial intelligence and machine learning models for predictive pricing and risk management will further complicate the data requirements, demanding even higher quality and more granular data feeds. The ultimate goal is to move beyond replicating traditional finance to creating a data infrastructure that is inherently more transparent and resilient than its centralized predecessors.

Glossary

Data Aggregation

Real-World Asset Integration Challenges

Open Source Risk Logic

Cryptocurrency Market Data Integration

Decentralized Finance Integration

Cross-Protocol Risk Integration

Market Risk Source

Vertical Integration in Finance

Settlement Integration