Essence

Data verification in the context of crypto derivatives represents the fundamental challenge of aligning a protocol’s internal state with external market reality. The core function of a decentralized options protocol relies on precise, timely information regarding underlying asset prices, volatility, and collateral value. Without a robust verification mechanism, the protocol operates in a vacuum, susceptible to manipulation and economic instability.

The verification process establishes the necessary trust layer, ensuring that the logic of the smart contract executes based on validated inputs rather than arbitrary or malicious data. This process extends beyond simple spot price feeds, requiring specialized verification for complex parameters such as implied volatility surfaces and funding rates. The integrity of every derivative contract, from a simple call option to a complex structured product, hinges entirely on the fidelity of the data verification architecture.

The integrity of a decentralized options protocol depends entirely on its ability to verify external market data accurately and securely.

The challenge intensifies with options because an option's value is a non-linear function of the underlying asset price and volatility. A minor error in a price feed can therefore cause a disproportionately large miscalculation of an option's premium or collateral requirement, leading to improper liquidations or a breakdown of the protocol's risk engine. The verification process acts as the critical bridge between the off-chain world of market movements and the deterministic on-chain logic of the protocol, preventing malicious actors from exploiting information asymmetry.

The verification system must ensure data delivery is both timely, avoiding front-running opportunities, and accurate, resisting single-point-of-failure attacks.

Origin

The necessity of data verification in decentralized finance originates from the “oracle problem.” Early attempts at creating on-chain derivatives faced a critical dilemma: smart contracts cannot natively access information outside their blockchain. To settle a contract based on an external event, like the price of Bitcoin at expiration, a trusted third party was required to provide that information.

This reliance on a single, centralized data provider reintroduced the very trust assumptions that blockchain technology was designed to eliminate. The early days of options protocols were characterized by simple price feeds provided by single entities, creating a clear attack vector where a malicious or compromised oracle could drain protocol funds by reporting a false price. The evolution of data verification was driven by the need to mitigate this single point of failure.

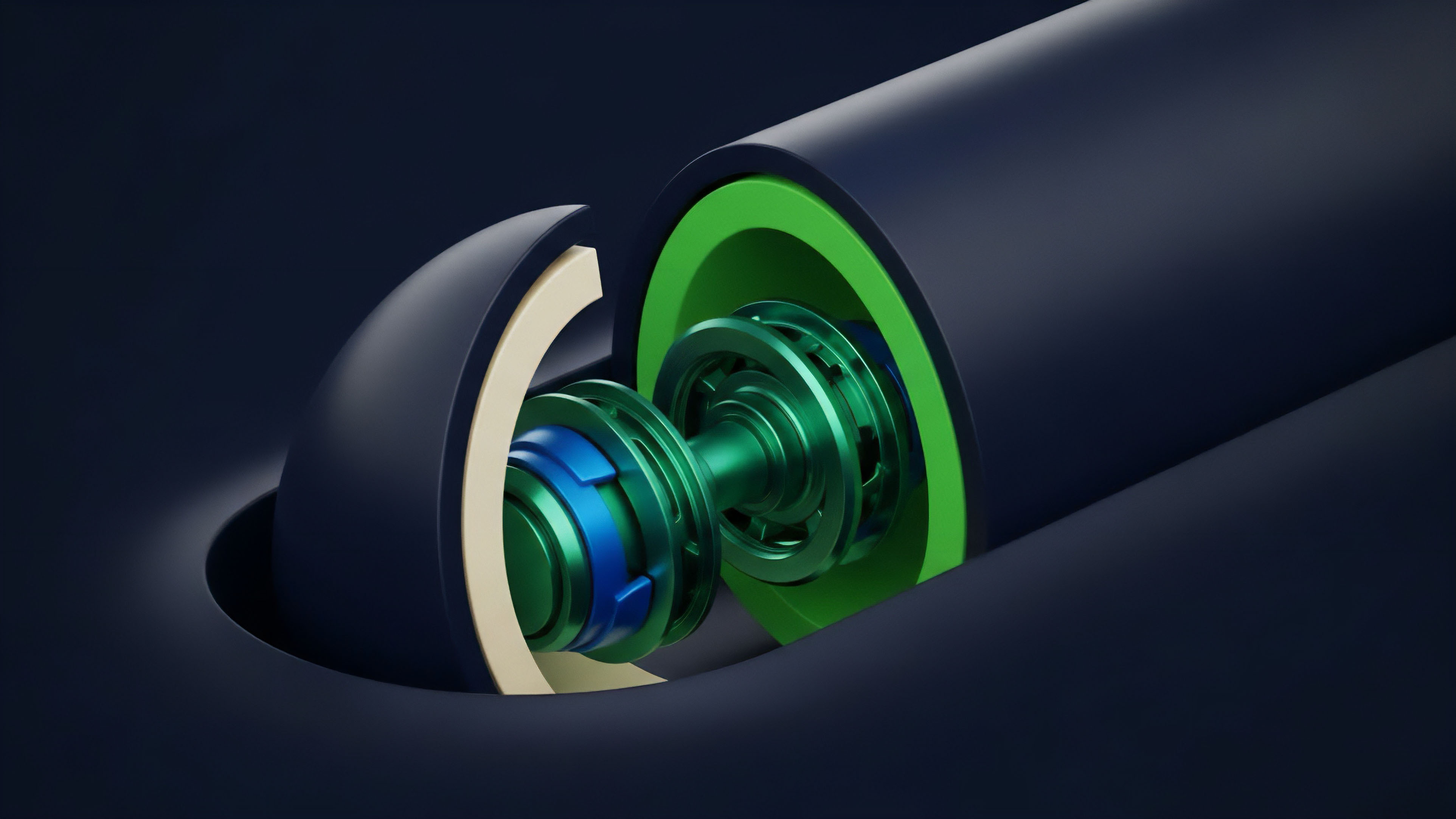

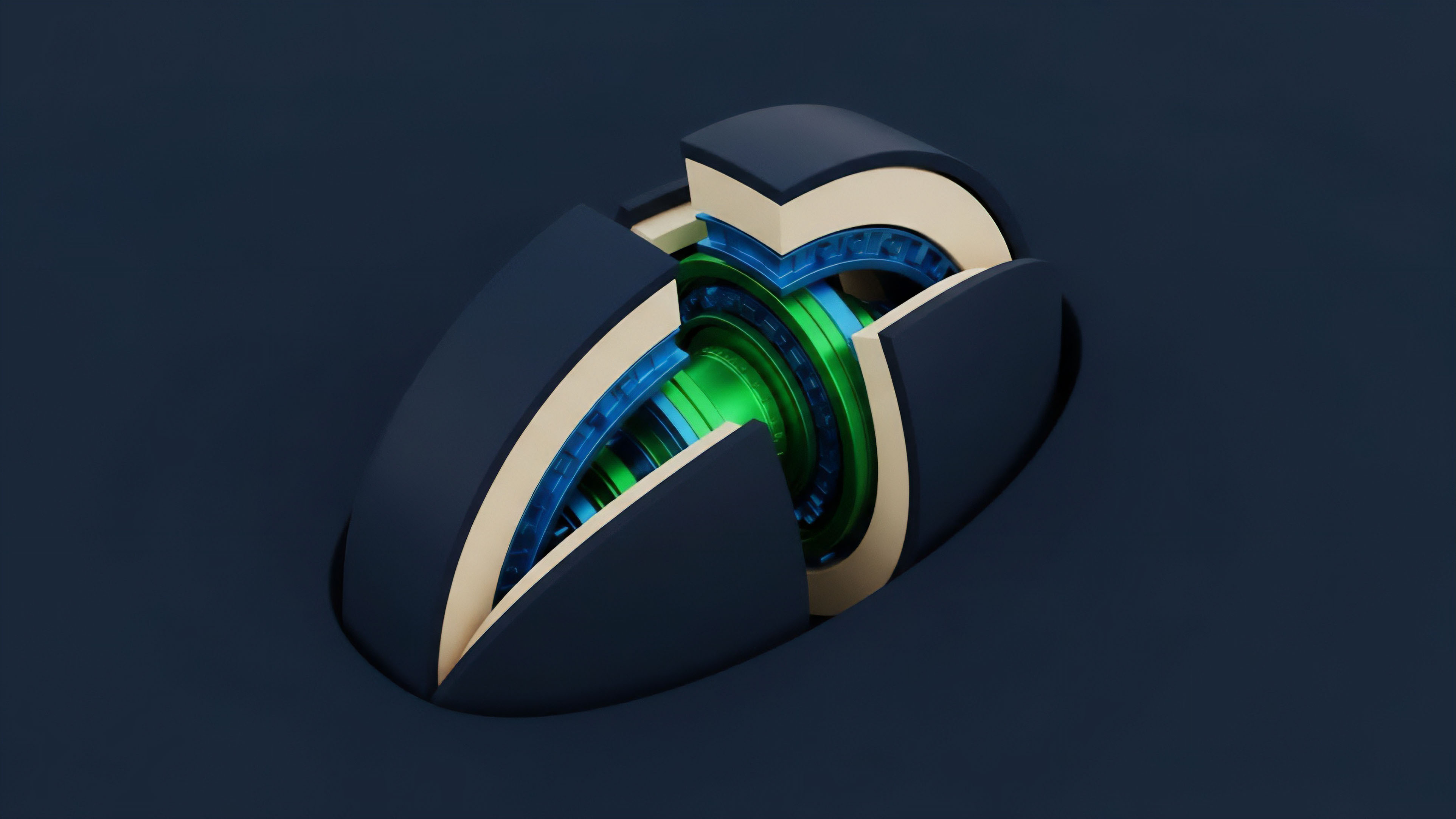

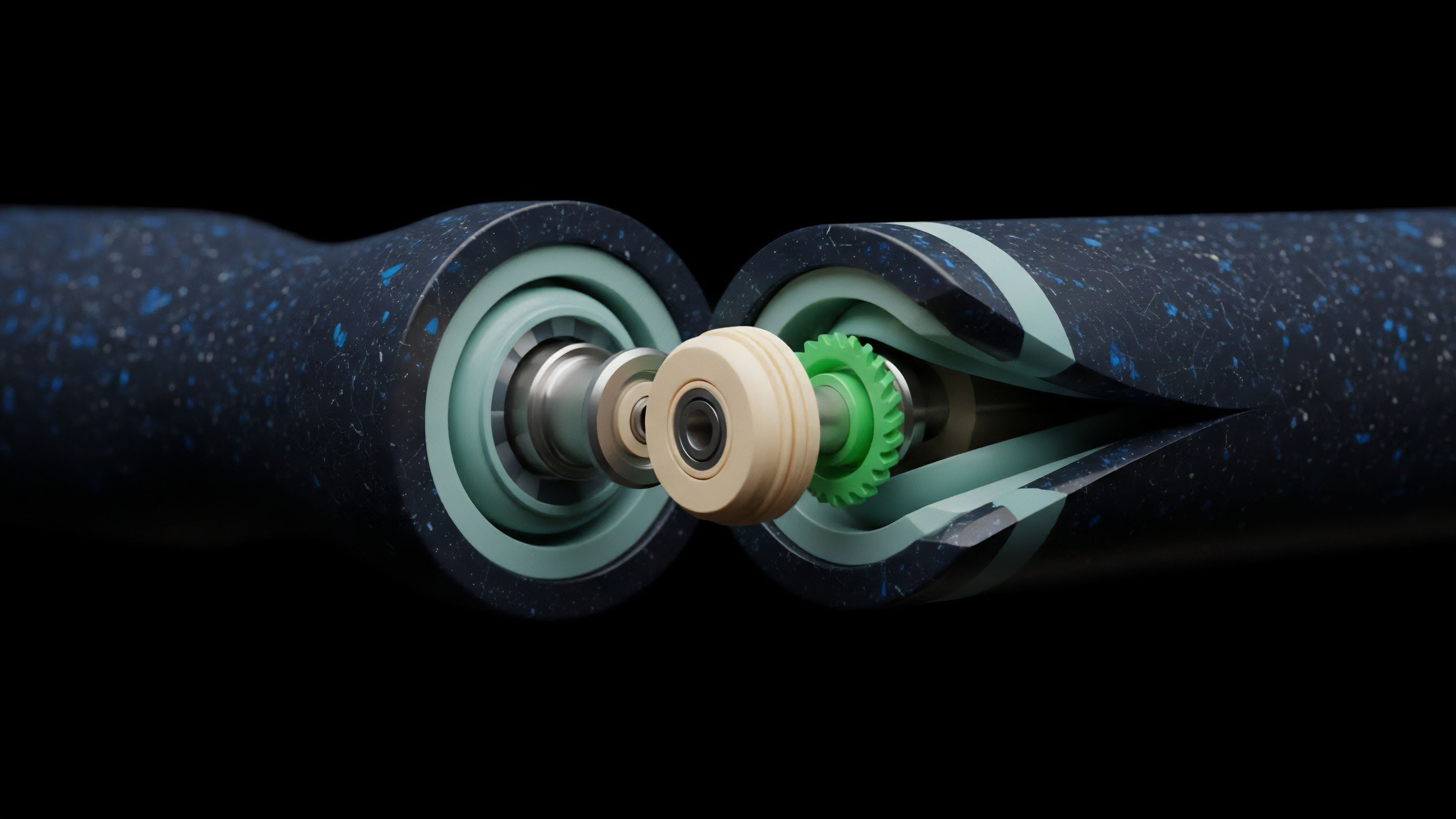

The initial solution involved moving from a single oracle to a network of decentralized oracles. This shift began with a focus on simple spot prices, primarily for lending protocols, but quickly expanded to derivatives as the complexity of contracts grew. The transition to decentralized oracle networks involved economic incentives and cryptographic security, ensuring that multiple independent data providers reached consensus before a value was recorded on-chain.

This development marked a significant architectural shift from a centralized point of trust to a distributed network where data integrity is maintained through economic incentives and redundancy. The challenge then became how to design these incentives to prevent collusion among data providers.

Theory

The theoretical foundation of data verification in derivatives rests on two core pillars: economic security and cryptographic assurance.

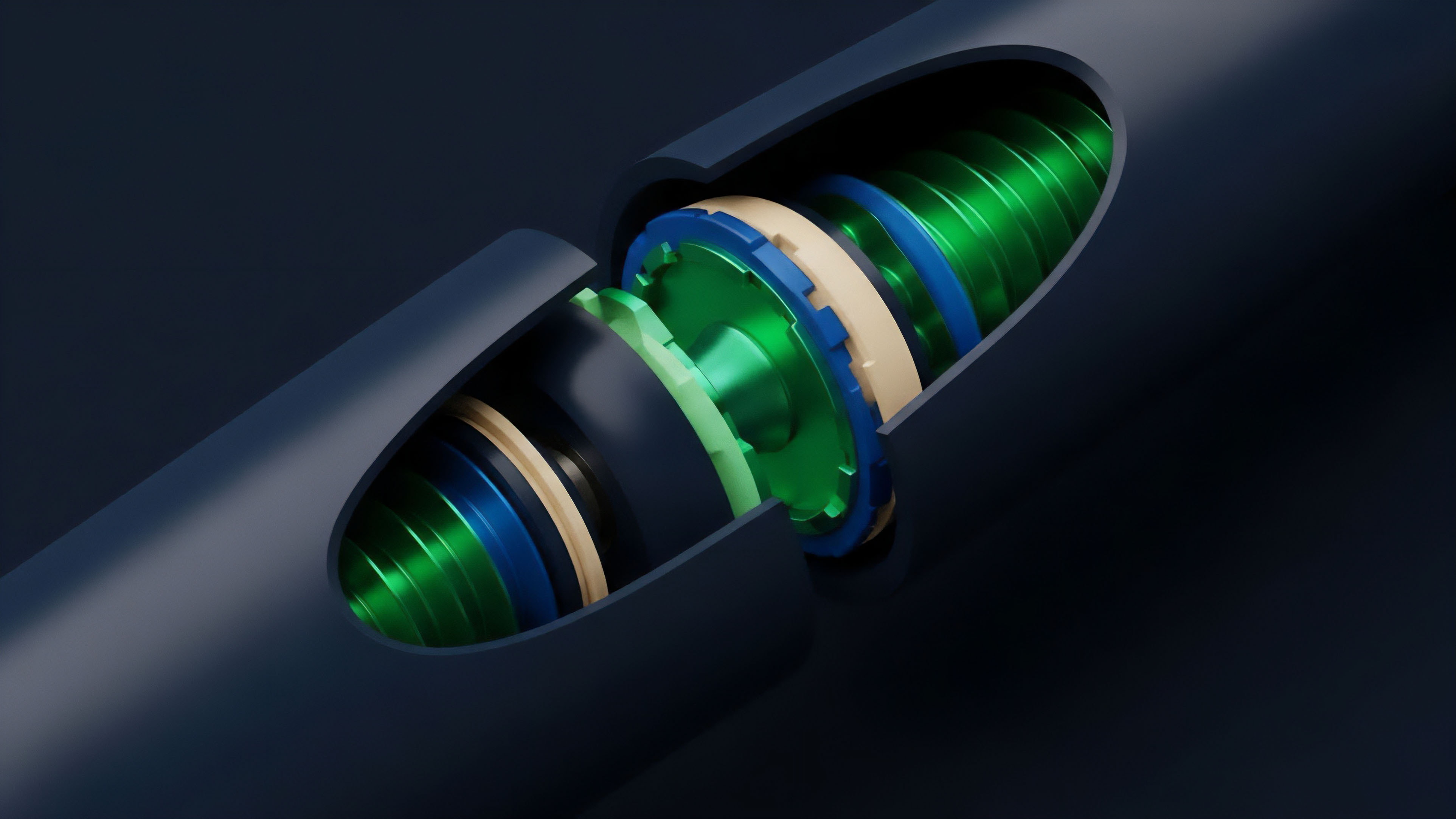

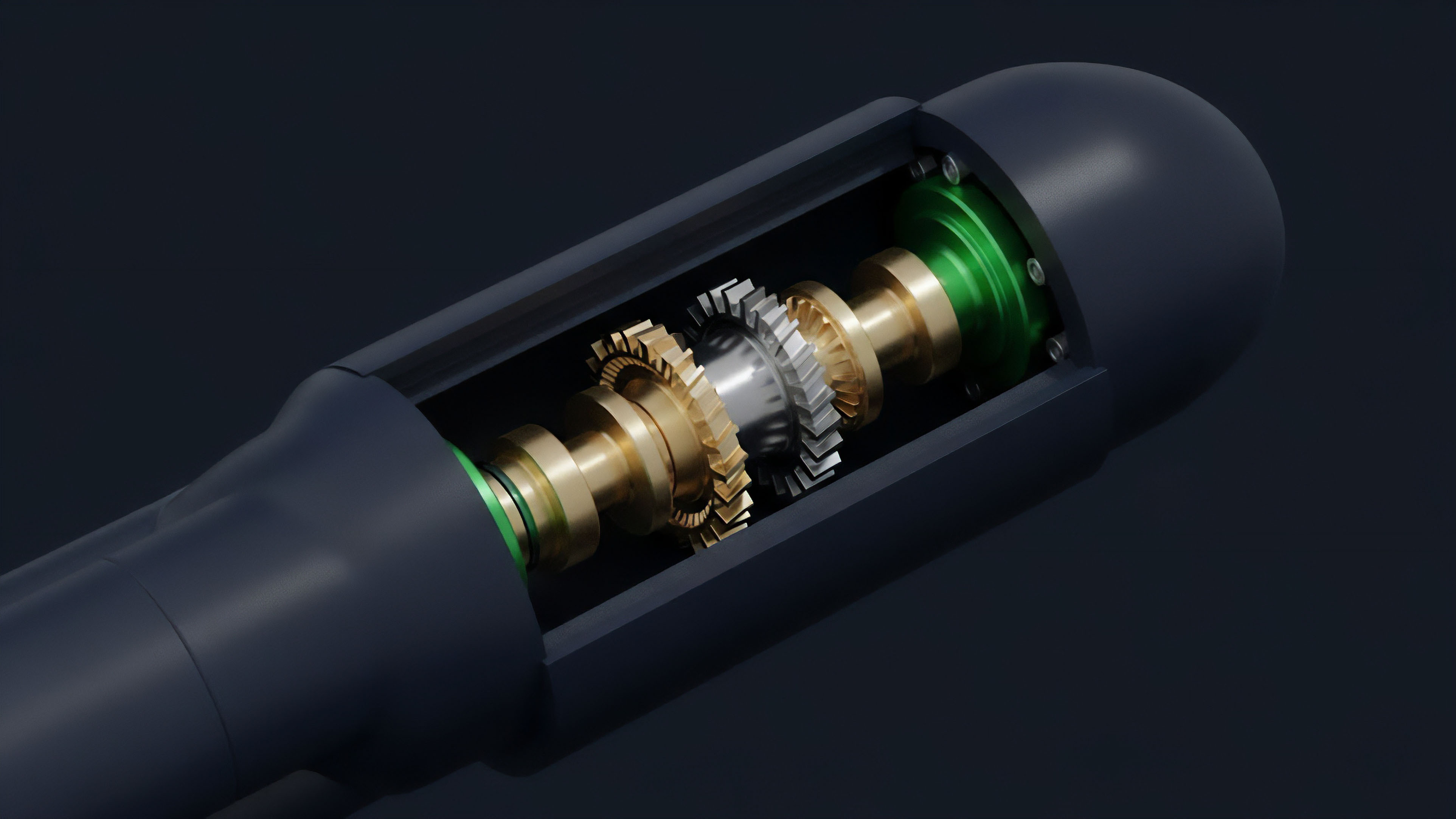

Economic security refers to the cost required to corrupt the data feed. A well-designed verification mechanism ensures that the financial gain from manipulating the data is significantly less than the cost incurred by the attacker to compromise the network of data providers. This is often achieved through a staking mechanism where data providers lock up collateral, which is slashed if they submit inaccurate data.
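The economic security condition can be illustrated with a short sketch. The names here (`OracleNetwork`, `quorum_size`, `safety_margin`) are hypothetical and chosen only for illustration. The model assumes that an attacker must control a quorum of nodes and forfeits their full stake if caught; real networks have more nuanced cost structures.

```python
from dataclasses import dataclass


@dataclass
class OracleNetwork:
    """Hypothetical staked oracle network; every field is illustrative."""
    stake_per_node: float  # collateral each node locks, in USD
    quorum_size: int       # nodes that must agree before a value is accepted


def cost_of_corruption(net: OracleNetwork) -> float:
    """Stake forfeited if a full quorum of nodes is corrupted and slashed."""
    return net.quorum_size * net.stake_per_node


def is_economically_secure(net: OracleNetwork,
                           profit_from_corruption: float,
                           safety_margin: float = 2.0) -> bool:
    """True when attacking costs at least `safety_margin` times the payoff.

    `safety_margin` is an assumed design parameter, not a standard value.
    """
    return cost_of_corruption(net) >= safety_margin * profit_from_corruption


net = OracleNetwork(stake_per_node=100_000.0, quorum_size=11)
print(cost_of_corruption(net))                  # total stake at risk for a quorum
print(is_economically_secure(net, 500_000.0))   # payoff small relative to stake
print(is_economically_secure(net, 600_000.0))   # payoff too large for this margin
```

In this toy model, the value a protocol can safely secure grows with the stake behind the quorum, which is why the extractable value of a derivatives protocol has to be considered alongside the size of the oracle's collateral.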

The Black-Scholes-Merton model, a cornerstone of options pricing, highlights the theoretical importance of volatility as a key input. In a decentralized environment, verifying volatility presents a challenge beyond verifying spot prices. Implied volatility (IV) is not a directly observable market price; it is derived from market prices of options.

This creates a feedback loop where the data verification mechanism must not only verify the underlying asset price but also accurately reflect the market's expectation of future price movement. The verification system must account for the volatility skew, the variation of implied volatility across strikes, which reflects market demand for out-of-the-money options. An effective verification system for options protocols must provide a reliable measure of this skew, or else the pricing of options across different strikes will be fundamentally flawed.
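To make the point about implied volatility concrete, the sketch below inverts the Black-Scholes call formula by bisection to recover IV from an observed option price, then repeats this per strike to obtain a skew. It is a minimal illustration, assuming European calls, no dividends, and a flat interest rate; the function names and search bounds are illustrative, and a production volatility oracle would also need to filter stale or illiquid quotes.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot: float, strike: float, rate: float,
                  sigma: float, t: float) -> float:
    """Black-Scholes price of a European call with no dividends."""
    vol_sqrt_t = sigma * math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma ** 2) * t) / vol_sqrt_t
    d2 = d1 - vol_sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price: float, spot: float, strike: float, rate: float,
                t: float, lo: float = 1e-4, hi: float = 5.0,
                tol: float = 1e-8) -> float:
    """Recover sigma by bisection; the call price is increasing in sigma."""
    if not (bs_call_price(spot, strike, rate, lo, t) <= price
            <= bs_call_price(spot, strike, rate, hi, t)):
        raise ValueError("price is outside the range reachable by the bounds")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, rate, mid, t) < price:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def skew(quotes: dict, spot: float, rate: float, t: float) -> dict:
    """Map each strike to its implied volatility, given {strike: call price}."""
    return {k: implied_vol(p, spot, k, rate, t) for k, p in quotes.items()}
```

Because IV is derived rather than observed, any error in the quoted option prices or in the spot price passes straight through this inversion, which is why the inputs need verification as much as the output does.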

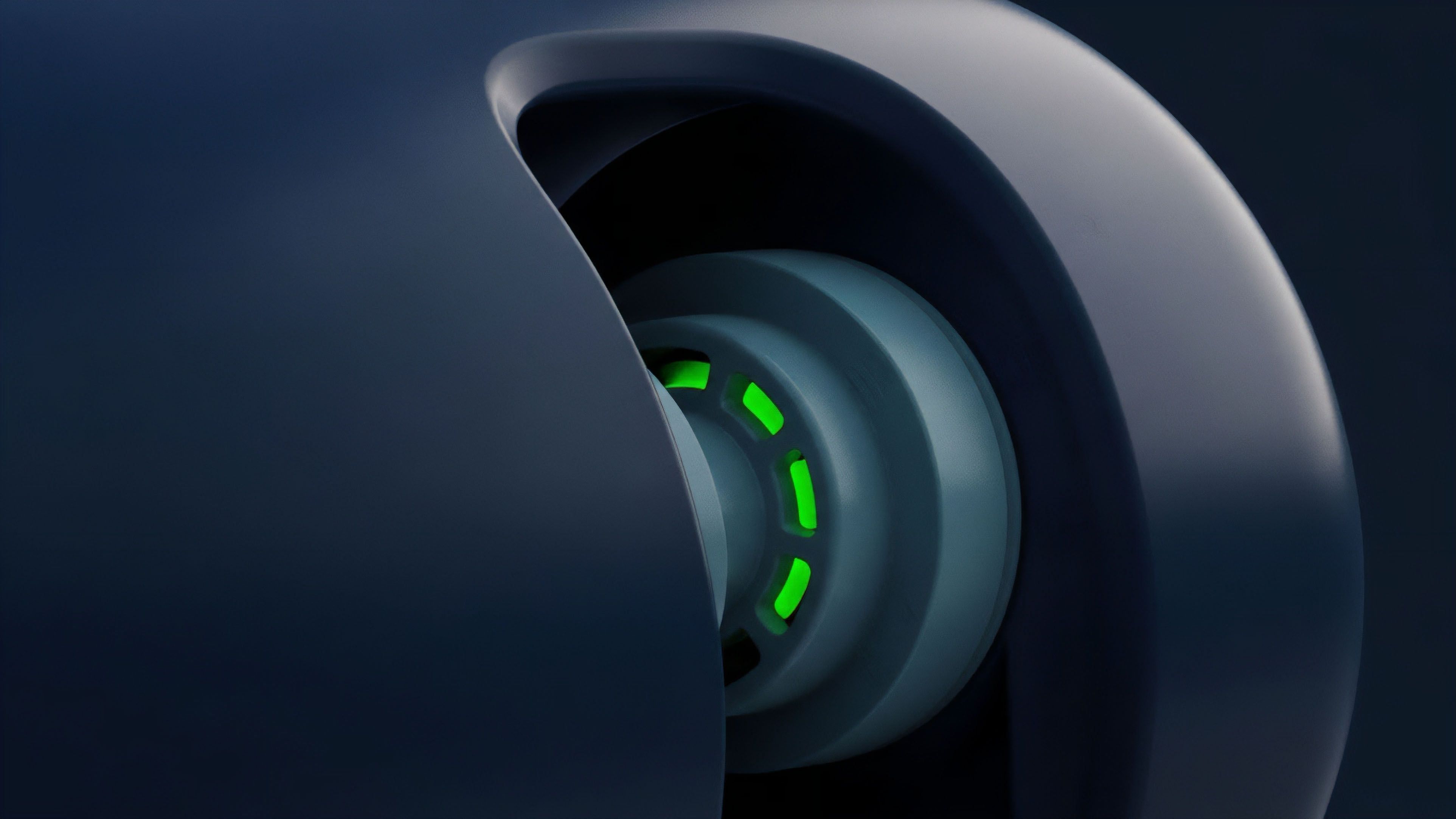

The core challenge in oracle design for derivatives is balancing liveness against freshness. Liveness ensures that data is always available when needed, preventing protocol halts. Freshness ensures that the data reflects the most current market price.

A verification system prioritizing liveness might accept slightly older data, which creates opportunities for front-running. Conversely, a system prioritizing freshness might be susceptible to manipulation if a single data point is accepted too quickly without sufficient consensus. A common compromise is a time-weighted average price (TWAP) calculated over a specific window, which smooths out short-term volatility and manipulation attempts at the cost of some latency.
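A TWAP over a window can be sketched as follows. This is a simplified off-chain illustration, assuming observations arrive as `(timestamp, price)` pairs sorted by time and that each price holds until the next observation; on-chain implementations typically store cumulative price accumulators instead.

```python
from typing import Sequence, Tuple


def twap(observations: Sequence[Tuple[float, float]],
         window_start: float, window_end: float) -> float:
    """Time-weighted average of piecewise-constant prices over a window.

    `observations` holds (timestamp, price) pairs sorted by timestamp.
    Each price is assumed to hold until the next observation.
    """
    if window_end <= window_start:
        raise ValueError("window_end must be after window_start")
    weighted_sum = 0.0
    covered = 0.0
    for i, (ts, price) in enumerate(observations):
        # The last observation is assumed to hold until the window closes.
        if i + 1 < len(observations):
            next_ts = observations[i + 1][0]
        else:
            next_ts = window_end
        start = max(ts, window_start)
        end = min(next_ts, window_end)
        if end > start:
            weighted_sum += price * (end - start)
            covered += end - start
    if covered == 0.0:
        raise ValueError("no observations cover the window")
    return weighted_sum / covered


# A 10-second spike to double the price inside a 600-second window.
obs = [(0.0, 100.0), (590.0, 200.0), (600.0, 100.0)]
print(twap(obs, 0.0, 600.0))
```

In this example the spike occupies 10 of 600 seconds, so it contributes only 1/60 of the weight. An attacker would have to sustain the distorted price for much of the window to move the average materially, which is the long-duration manipulation risk noted for TWAPs.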

Data Verification Framework Comparison

| Verification Method | Mechanism | Primary Application | Risk Profile |
|---|---|---|---|
| Time-Weighted Average Price (TWAP) | Calculates average price over a time interval. | Spot price verification, anti-manipulation. | Latency risk, susceptible to long-duration manipulation. |
| Decentralized Oracle Networks (DONs) | Aggregates data from multiple independent nodes. | Broad data feeds, complex parameters (IV). | Economic security risk, potential for collusion among nodes. |
| Internalized Verification | Uses on-chain data from a liquidity pool (e.g. Uniswap TWAP). | Spot price verification for specific assets. | Liquidity depth risk, manipulation potential in low liquidity pools. |
| Dispute Resolution Layer | Staking and challenge mechanisms for data accuracy. | Ensuring integrity of data inputs, post-submission checks. | Slashing risk, potential for long settlement times. |

Approach

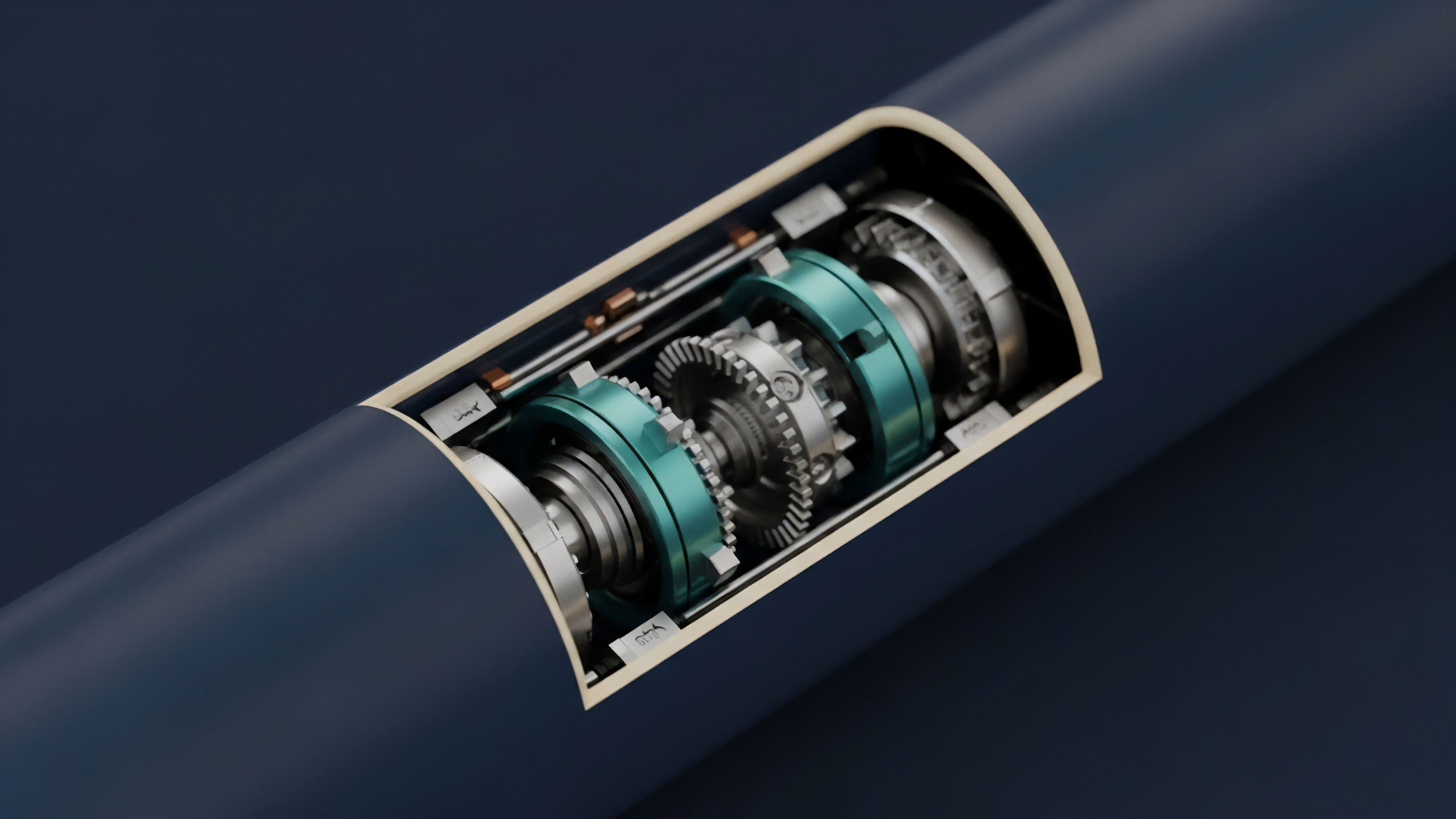

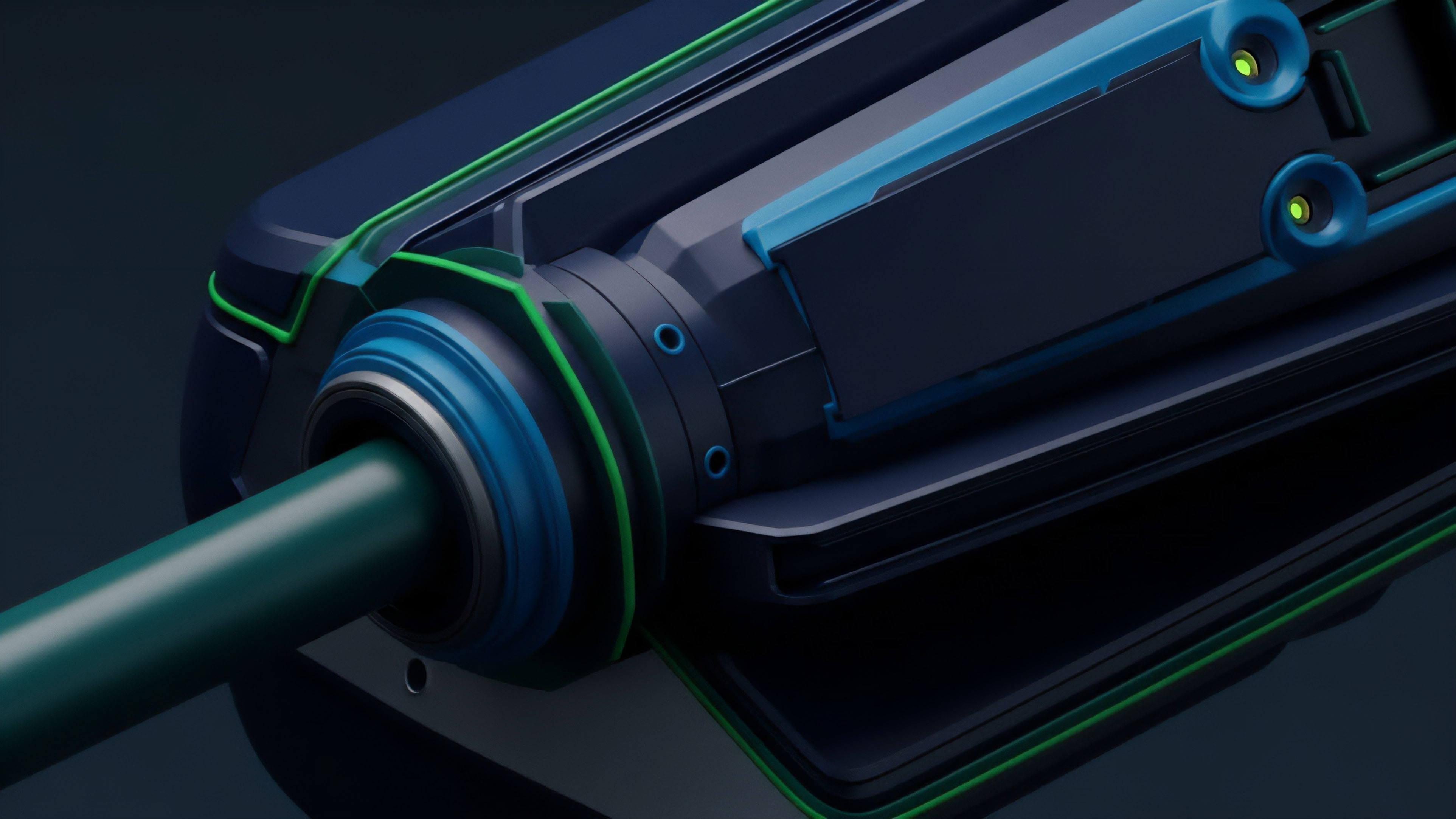

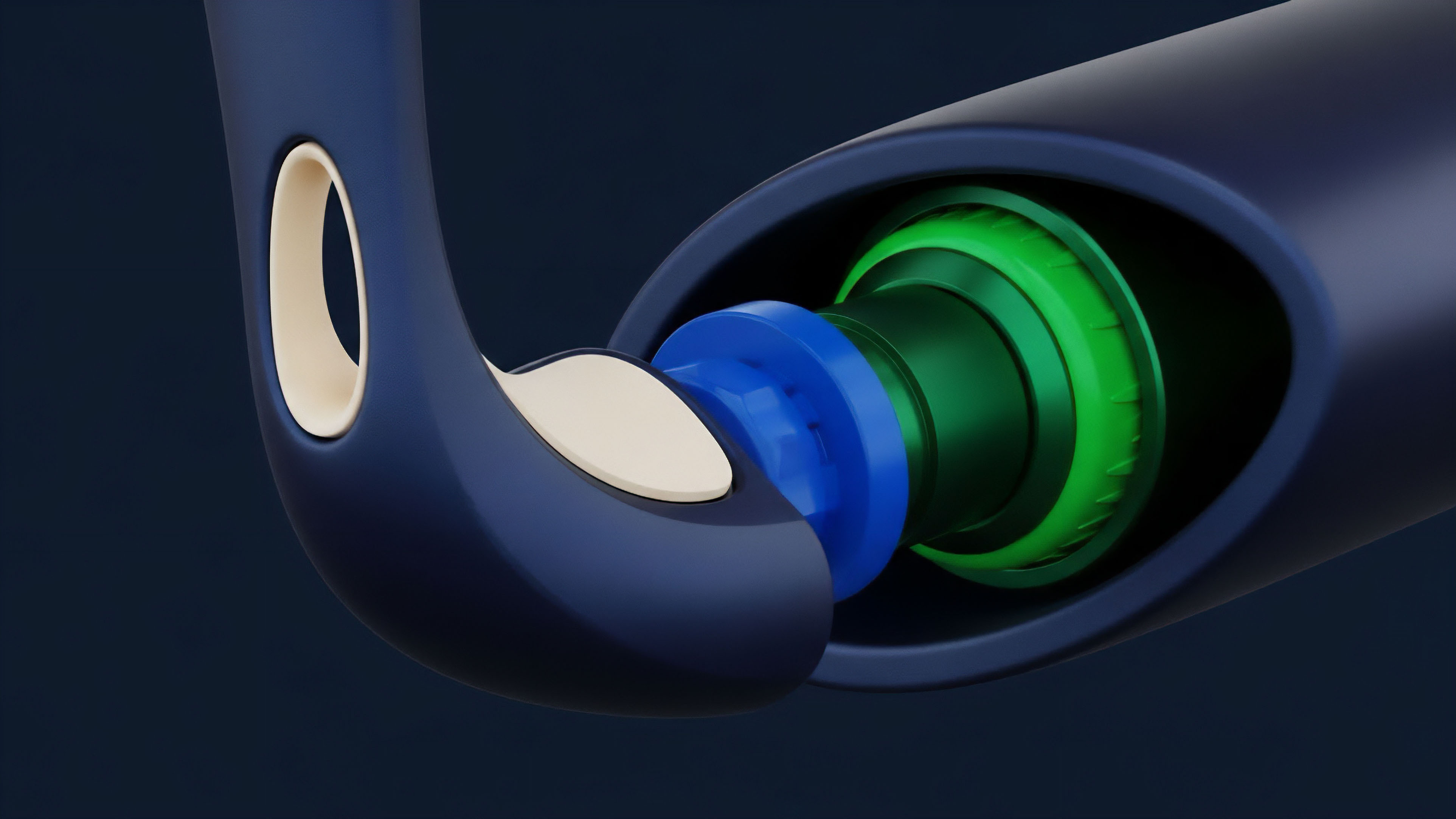

Current data verification approaches for options protocols focus on minimizing the time between a price change and its on-chain reflection, while simultaneously maximizing the cost of manipulation. The most robust systems employ a multi-layered approach that combines multiple verification techniques. A common approach involves a decentralized oracle network that provides data feeds from a variety of sources.

This network aggregates data from numerous exchanges, calculates a median or weighted average, and then submits this verified value on-chain. The verification process often involves a two-stage system: an initial, faster verification for immediate needs like liquidations, and a secondary, slower verification with a dispute mechanism for final settlement. This dual-layered approach balances speed with security.
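The aggregation step described above might look like the following sketch. The `Report` type and the thresholds (`max_age`, `min_sources`, `max_deviation`) are assumptions made for illustration; actual oracle networks choose their own filtering rules and parameters.

```python
import statistics
from dataclasses import dataclass
from typing import List


@dataclass
class Report:
    """Hypothetical price report from one exchange or oracle node."""
    source: str
    price: float
    timestamp: float


def aggregate_price(reports: List[Report], now: float,
                    max_age: float = 60.0, min_sources: int = 3,
                    max_deviation: float = 0.05) -> float:
    """Median of fresh reports after discarding outliers.

    Raises ValueError rather than returning a value backed by too few sources.
    """
    # Drop reports older than max_age seconds.
    fresh = [r for r in reports if now - r.timestamp <= max_age]
    if len(fresh) < min_sources:
        raise ValueError("not enough fresh reports")
    # Drop reports deviating too far from the preliminary median.
    mid = statistics.median(r.price for r in fresh)
    kept = [r.price for r in fresh if abs(r.price - mid) / mid <= max_deviation]
    if len(kept) < min_sources:
        raise ValueError("not enough agreeing reports")
    return statistics.median(kept)


reports = [
    Report("exchange_a", 100.0, 100.0),
    Report("exchange_b", 101.0, 100.0),
    Report("exchange_c", 99.0, 100.0),
    Report("exchange_d", 150.0, 100.0),  # outlier, filtered by deviation
    Report("exchange_e", 100.5, 0.0),    # stale, filtered by age
]
print(aggregate_price(reports, now=110.0))
```

A median is used here because a single corrupted source cannot move it arbitrarily, whereas a plain mean can be dragged by one extreme report. Refusing to answer when too few sources agree trades liveness for safety, which a fast liquidation path and a slower settlement path may weigh differently.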

The verification process for volatility, a critical input for options pricing, requires a specialized approach. Instead of simply providing a spot price, the verification system must calculate a measure of implied volatility based on real-time market data from options exchanges. This calculation must account for the full range of strikes and expirations to accurately reflect the market’s perception of risk.

A protocol might use a volatility oracle specifically designed to verify this complex parameter.

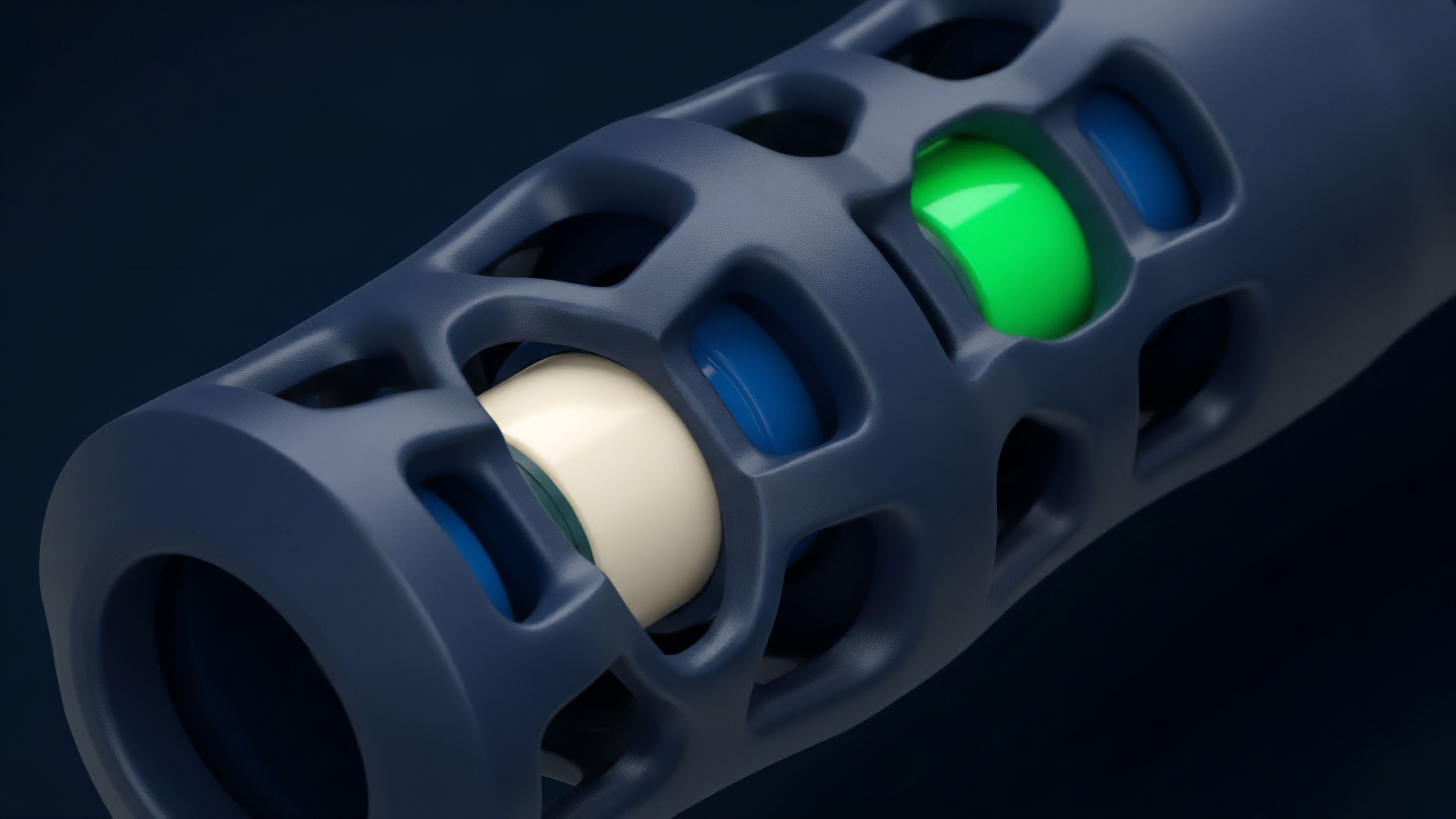

- TWAP Integration: Many protocols use a Time-Weighted Average Price (TWAP) as a primary defense against flash loan attacks. By averaging the price over a set period, the protocol ensures that a momentary price spike from a large, sudden trade does not trigger an incorrect liquidation or settlement.

- Decentralized Aggregation: Data from multiple sources is aggregated to prevent reliance on a single exchange or data provider. This redundancy makes it significantly more difficult and expensive for an attacker to manipulate all sources simultaneously.

- Staking and Slashing: Data providers are required to stake collateral. If a data point they provide is found to be inaccurate, their stake is slashed, creating a powerful economic incentive for honest behavior.

- Dispute Resolution Mechanisms: Some protocols incorporate a challenge period where users can dispute a data point submitted by an oracle. This allows for community oversight and adds another layer of security, albeit with potential delays in settlement.
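The staking, slashing, and dispute mechanisms in the list above can be combined in a small model. Everything in it is a hypothetical sketch: the class name, the parameters, and especially `reference_value`, which stands in for whatever arbitration process a real protocol uses to decide the correct answer.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    provider: str
    value: float
    submitted_at: float
    disputed: bool = False


class DisputableFeed:
    """Toy model of a staked feed with a challenge period (illustrative only)."""

    def __init__(self, challenge_period: float, slash_fraction: float,
                 tolerance: float) -> None:
        self.challenge_period = challenge_period  # seconds open to disputes
        self.slash_fraction = slash_fraction      # share of stake lost on fault
        self.tolerance = tolerance                # allowed relative error
        self.stakes: dict = {}

    def deposit(self, provider: str, amount: float) -> None:
        self.stakes[provider] = self.stakes.get(provider, 0.0) + amount

    def submit(self, provider: str, value: float, now: float) -> Submission:
        if self.stakes.get(provider, 0.0) <= 0.0:
            raise ValueError("provider has no stake")
        return Submission(provider, value, now)

    def dispute(self, sub: Submission, reference_value: float,
                now: float) -> float:
        """Return the amount slashed; 0.0 means the dispute failed."""
        if now >= sub.submitted_at + self.challenge_period:
            raise ValueError("challenge period has ended")
        error = abs(sub.value - reference_value) / reference_value
        if error <= self.tolerance:
            return 0.0
        penalty = self.stakes[sub.provider] * self.slash_fraction
        self.stakes[sub.provider] -= penalty
        sub.disputed = True
        return penalty

    def is_final(self, sub: Submission, now: float) -> bool:
        """A value settles once the window closes without a successful dispute."""
        return (not sub.disputed
                and now >= sub.submitted_at + self.challenge_period)
```

The model makes the settlement delay visible: a value cannot be treated as final until the challenge period has elapsed, so a longer window gives challengers more time but postpones settlement.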

Evolution

The evolution of data verification has been driven by the increasing complexity of derivatives and the rise of Layer 2 solutions. Early protocols relied on simple price feeds for basic options. The current generation of protocols, however, demands more sophisticated data, including implied volatility surfaces, correlation data between assets, and interest rate benchmarks.

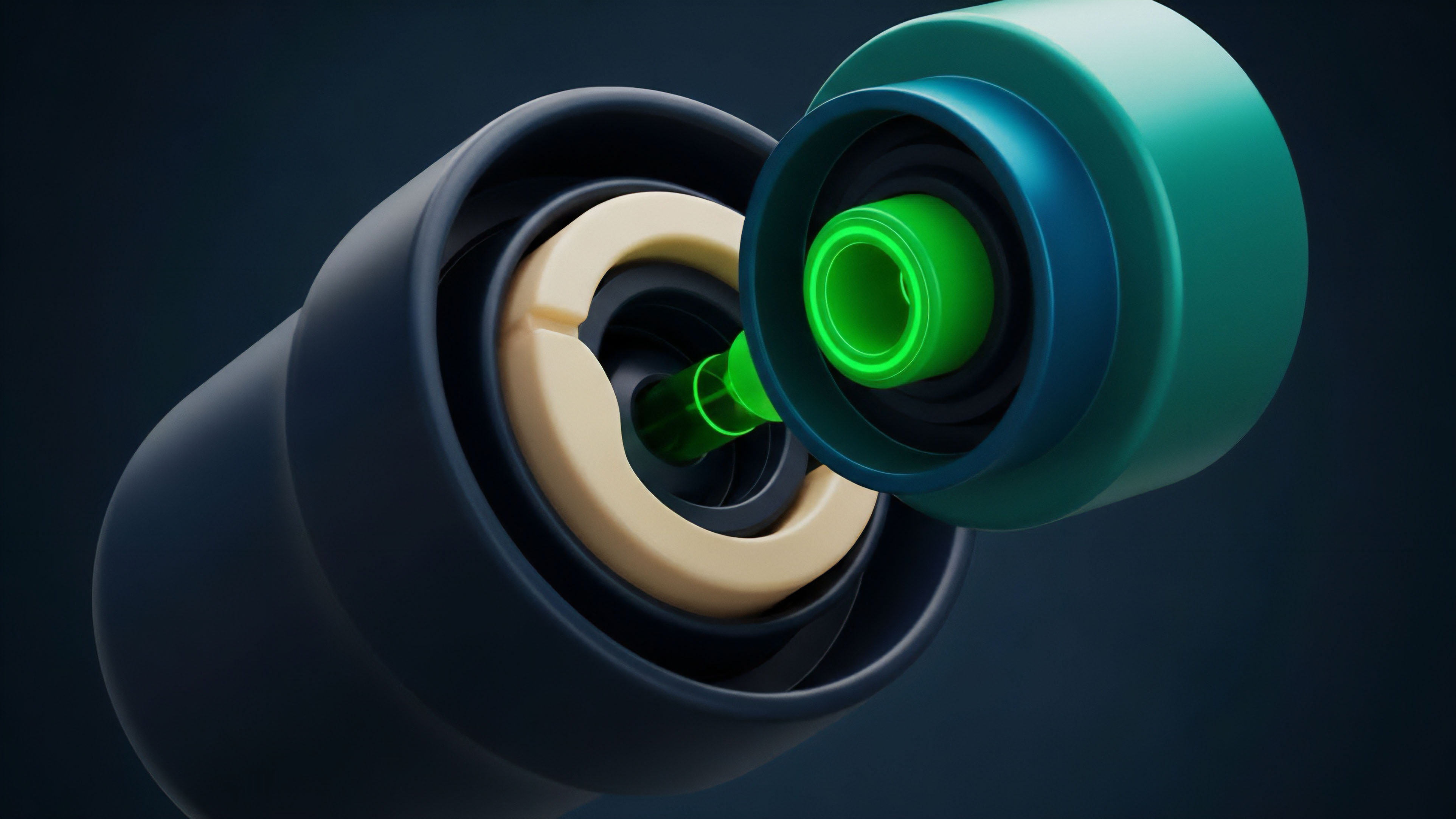

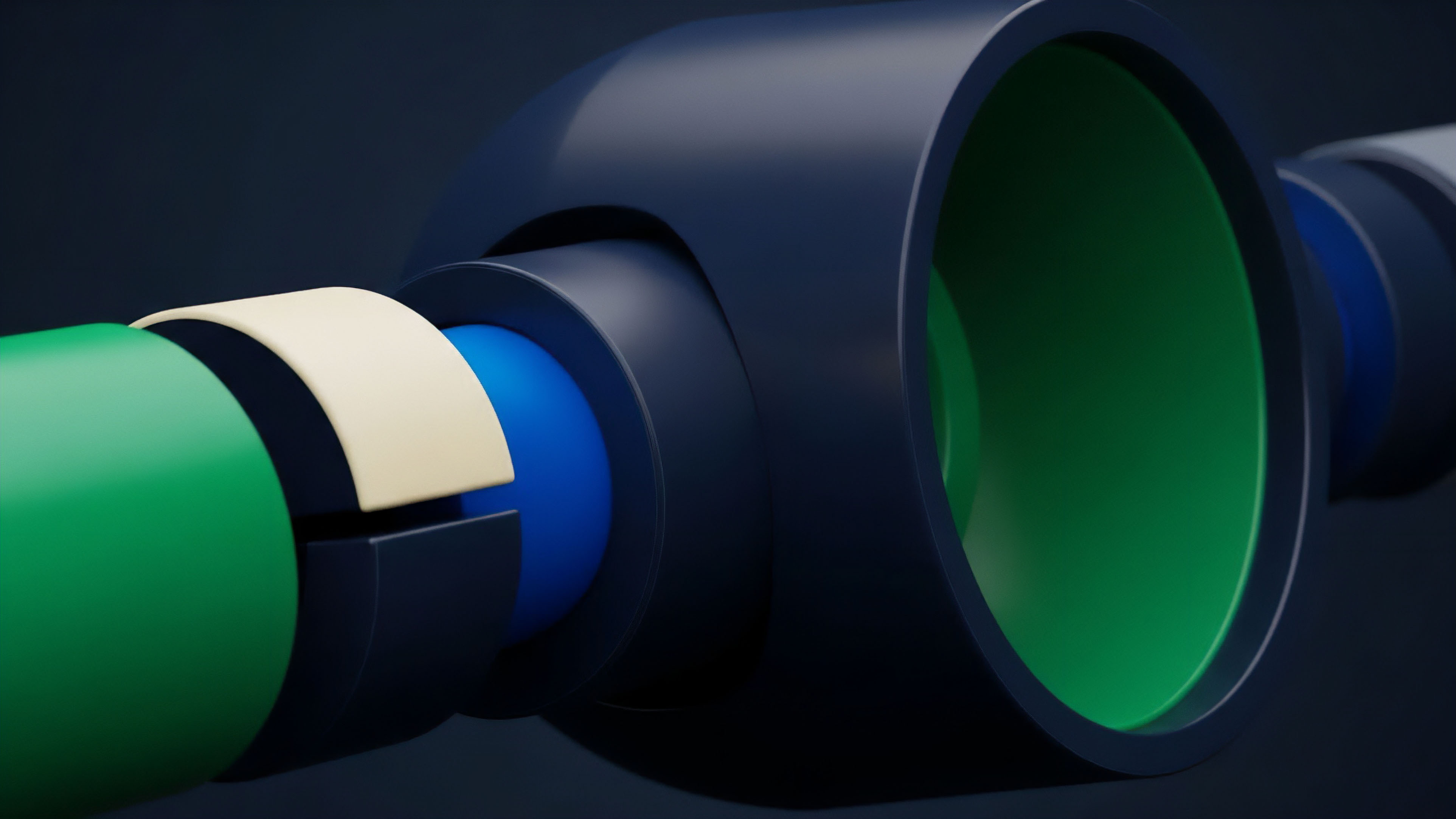

This shift required verification systems to evolve from simple price checks to complex, multi-variable calculations. The transition from single-source oracles to decentralized networks was a necessary step, but the next evolution involves optimizing these networks for speed and cost. The development of Layer 2 scaling solutions presents both an opportunity and a challenge for data verification.

While Layer 2s offer lower transaction costs and faster finality, they also introduce a new layer of data latency. Data verification must now consider the time lag between Layer 1 and Layer 2, ensuring that data feeds remain consistent across different execution environments. The future of data verification involves intent-based architectures, where verification is integrated directly into the transaction itself.

Instead of relying on a pre-defined oracle feed, a user’s intent to trade is matched with a verified price, potentially reducing reliance on external oracles for certain types of contracts.

The move towards Layer 2 solutions necessitates data verification architectures that can manage data consistency across different execution environments while maintaining speed and security.

Another significant evolution involves the integration of on-chain verification. Rather than relying solely on external data providers, some protocols are exploring methods to derive data from on-chain activity, such as liquidity pool prices. This approach reduces external dependencies but introduces new risks related to liquidity depth and manipulation within the protocol itself. The shift toward more complex products, like exotic options or structured products, requires verification systems that can handle a larger volume of data points and a wider range of calculations, moving beyond simple price feeds to encompass complex risk parameters.

Horizon

Looking ahead, the horizon for data verification in derivatives points toward a complete re-architecture of how protocols interact with external information. The current model of external oracle networks faces significant challenges regarding latency and cost, especially as protocols scale across multiple Layer 2s. The future of data verification will likely be characterized by a shift from reactive data feeds to proactive, predictive models.

One potential pathway involves ZK-powered verification. Zero-knowledge proofs could be used to prove the accuracy of a data feed without revealing the underlying data sources or the calculation methodology. This would enhance privacy and security simultaneously.

Another pathway involves integrated verification layers within the Layer 2 itself. Instead of relying on a separate oracle network, the Layer 2 infrastructure could natively provide verified data, reducing latency and cost significantly. This moves the verification from an external service to an intrinsic function of the execution environment.

The ultimate goal for data verification in derivatives is to achieve full on-chain verification without external dependencies. This would require protocols to derive all necessary parameters, including implied volatility, from on-chain trading activity. This approach eliminates the oracle problem entirely by making the protocol self-sufficient. This is a complex engineering challenge, requiring high liquidity and robust market making to ensure that the on-chain data accurately reflects global market conditions.

The future of data verification is not about finding better external sources, but about building systems where the data source and the verification mechanism are one and the same, ensuring a truly trust-minimized financial system. The primary challenge remains: how do we verify data for low-liquidity assets without introducing manipulation vectors?
