Essence

Cryptographic proof generation is a central practical constraint on the scalability of trustless digital ledgers. The Zero Knowledge Rollup Prover Cost refers to the total expenditure of computational resources, electricity, and time required to transform a batch of transactions into a succinct, verifiable mathematical proof. This expenditure functions as a gatekeeper for network throughput and determines the economic feasibility of high-frequency on-chain activity.

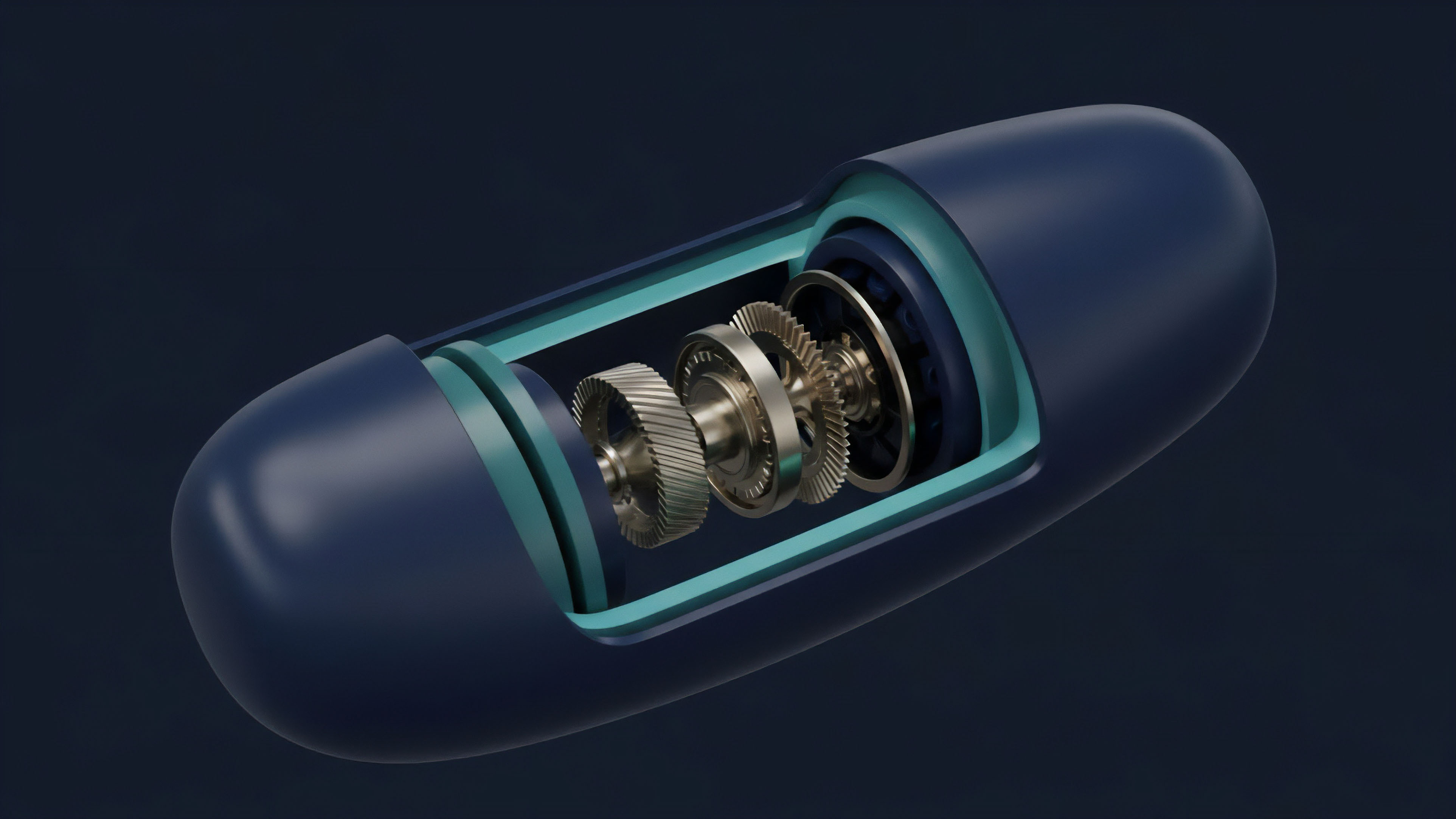

Unlike traditional database updates, these proofs require the construction of arithmetic circuits in which every transaction is encoded as a set of constraints that must be satisfied. The burden of the Zero Knowledge Rollup Prover Cost manifests as a combination of hardware depreciation and operational latency. Provers must execute massive computations involving large-field arithmetic, which places extreme stress on memory bandwidth and processing units.

This financial friction dictates the minimum fee a user must pay to ensure their transaction is included in a batch that can be economically proven and settled on the base layer.

The Zero Knowledge Rollup Prover Cost acts as the primary economic anchor for Layer 2 scaling, representing the literal price of computational integrity.

- Hardware Amortization: The capital expenditure for high-end GPUs or specialized ASICs required to handle the parallelized workloads of proof generation.

- Electricity Consumption: The variable cost of power needed to run intensive Multi-Scalar Multiplication and Fast Fourier Transform operations.

- Opportunity Cost of Latency: The financial loss incurred by market participants while waiting for the proof to be generated and finalized on the mainnet.

- Memory Overhead: The significant RAM requirements for storing the large witness data and intermediate polynomial commitments during the proving cycle.
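The cost components above can be combined into a rough per-transaction fee floor. The sketch below is a hypothetical cost model, not any rollup's actual pricing: the class name, field names, and example figures are all illustrative assumptions, and it leaves out the latency and memory terms, which are harder to price directly.

```python
from dataclasses import dataclass


@dataclass
class BatchCostModel:
    """Hypothetical per-batch cost inputs; names and values are illustrative."""
    hardware_cost_usd: float         # capital cost of the proving machine
    hardware_lifetime_hours: float   # hours over which that cost is amortized
    power_kw: float                  # average power draw while proving
    electricity_usd_per_kwh: float   # energy price
    proving_hours_per_batch: float   # wall-clock proving time for one batch
    l1_verification_usd: float       # cost to verify one proof on the base layer

    def batch_cost_usd(self) -> float:
        # Hardware amortization: the slice of capex consumed by this proving run.
        amortization = (self.hardware_cost_usd / self.hardware_lifetime_hours
                        * self.proving_hours_per_batch)
        # Electricity: energy consumed during the same run.
        energy = (self.power_kw * self.proving_hours_per_batch
                  * self.electricity_usd_per_kwh)
        return amortization + energy + self.l1_verification_usd

    def min_fee_per_tx_usd(self, batch_size: int) -> float:
        # Break-even fee: the whole batch cost split evenly across its transactions.
        if batch_size <= 0:
            raise ValueError("batch_size must be positive")
        return self.batch_cost_usd() / batch_size


if __name__ == "__main__":
    model = BatchCostModel(
        hardware_cost_usd=20_000, hardware_lifetime_hours=20_000,
        power_kw=2.0, electricity_usd_per_kwh=0.10,
        proving_hours_per_batch=0.5, l1_verification_usd=10.0,
    )
    print(f"{model.min_fee_per_tx_usd(1_000):.4f} USD per transaction")
```

Proving time is treated here as a fixed input, which overstates the benefit of larger batches; in practice proving work itself grows with batch size, as the Theory section explains.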

The survival of decentralized finance depends on our ability to reduce these expenditures before they centralize the network into a few massive server farms. If the cost of proving remains high, only a few entities will possess the capital to participate, leading to a new form of institutional gatekeeping. We are currently witnessing a race to optimize the mathematical primitives that underpin these systems to prevent such an outcome.

Origin

The Zero Knowledge Rollup Prover Cost arose from the fundamental limitations of the Ethereum Virtual Machine and its inability to process thousands of transactions per second without compromising decentralization.

Early scaling solutions relied on optimistic assumptions: transactions were assumed valid unless challenged, which created long withdrawal delays while the challenge window remained open. The shift toward validity proofs removed the trust requirement but introduced the massive computational debt of generating SNARKs or STARKs.

Initial implementations utilized general-purpose hardware, leading to exorbitant costs and slow batching cycles. As the demand for blockspace increased, the inefficiency of early proof systems became a systemic risk. Developers realized that the Zero Knowledge Rollup Prover Cost was not a static variable but a function of the proof system’s architecture and the underlying hardware’s efficiency.

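| Proof System | Primary Prover Cost Driver | Verification Cost | Hardware Requirement |
|---|---|---|---|
| Groth16 | MSM and FFT over a circuit-specific proving key (requires a per-circuit trusted setup) | Constant | Moderate |
| Plonk | Polynomial commitments (MSM and FFT; universal trusted setup when using KZG) | Constant (with KZG) | High |
| STARKs | Hash function iterations (Merkle commitments) and FFTs | Polylogarithmic | Very High |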

By loose analogy with the second law of thermodynamics, order requires energy; in our digital architecture, ZK proofs are the energy we spend to maintain the order of the state. This historical progression shows a clear trend: we are trading off prover-side complexity for verifier-side simplicity. The goal has always been to make the proof as cheap as possible for the base layer to check, even if that makes the Zero Knowledge Rollup Prover Cost significantly higher for the prover.

Theory

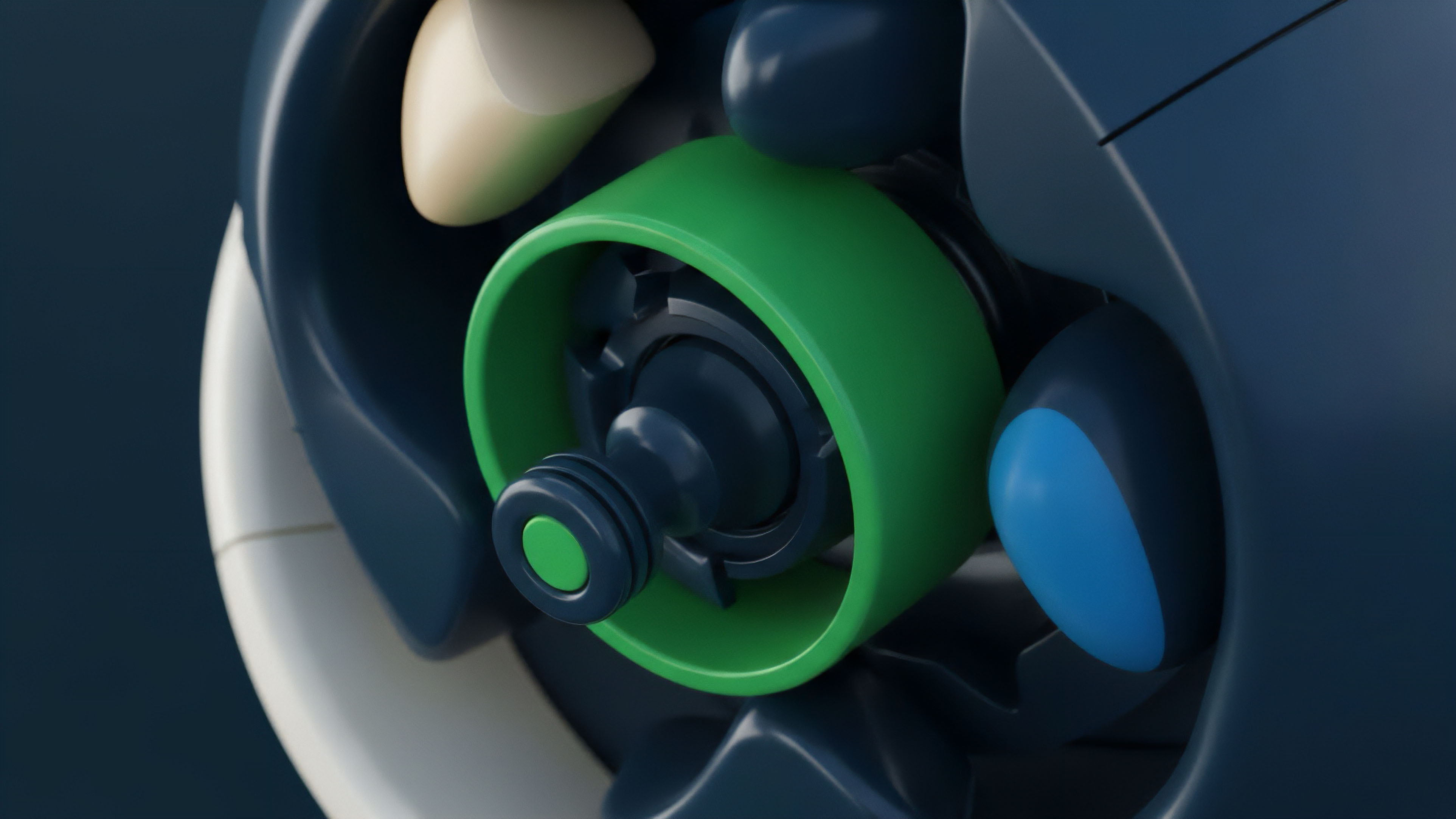

The mathematical foundations of the Zero Knowledge Rollup Prover Cost are rooted in the complexity of two specific operations: Multi-Scalar Multiplication (MSM) and Fast Fourier Transforms (FFT).

These operations dominate the proving time, often accounting for over 80% of the total computational workload. MSM computes a sum of elliptic curve points, each multiplied by a scalar. Its cost grows roughly linearly with the number of constraints (bucket methods such as Pippenger's cut the number of group additions by about a factor of log n), but it requires massive parallelization to remain performant. FFTs are used for polynomial interpolation and evaluation, which are necessary for the Reed-Solomon encoding used in many proof systems.
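To make MSM concrete: it computes R = s₁·P₁ + s₂·P₂ + … + sₙ·Pₙ. Production provers run Pippenger's bucket method over elliptic curve points; the sketch below implements the same bucket method over a stand-in group (integers modulo the prime 2^61 - 1 under addition), an assumption made so the example stays short and dependency-free. The algorithm only ever calls the group operation and the identity, so swapping in real curve point addition would not change its structure.

```python
import random

# Stand-in group: integers modulo a prime under addition (NOT an elliptic curve).
# Only the group operation `add` and the identity 0 are used by the bucket method.
GROUP_MOD = 2**61 - 1


def add(a: int, b: int) -> int:
    """Group operation of the stand-in group."""
    return (a + b) % GROUP_MOD


def msm_naive(scalars, points):
    """Reference result: sum of s_i * P_i, computed directly."""
    return sum(s * p for s, p in zip(scalars, points)) % GROUP_MOD


def msm_pippenger(scalars, points, scalar_bits: int, c: int) -> int:
    """Bucket-method MSM using only `add`.

    scalar_bits bounds the scalar size; c is the window width in bits.
    """
    num_windows = (scalar_bits + c - 1) // c
    window_sums = []
    for w in range(num_windows):
        buckets = [0] * (1 << c)  # bucket d collects points whose c-bit digit is d
        for s, p in zip(scalars, points):
            digit = (s >> (w * c)) & ((1 << c) - 1)
            if digit:
                buckets[digit] = add(buckets[digit], p)
        # Running-sum trick: sum_d d * buckets[d] using 2 * (2^c - 1) additions.
        running, total = 0, 0
        for d in range((1 << c) - 1, 0, -1):
            running = add(running, buckets[d])
            total = add(total, running)
        window_sums.append(total)
    # Horner-style combination: shift by c bits (c doublings), then add the window.
    result = 0
    for total in reversed(window_sums):
        for _ in range(c):
            result = add(result, result)
        result = add(result, total)
    return result


if __name__ == "__main__":
    rng = random.Random(0)
    n = 1_000
    points = [rng.randrange(GROUP_MOD) for _ in range(n)]
    scalars = [rng.randrange(2**64) for _ in range(n)]
    assert msm_pippenger(scalars, points, scalar_bits=64, c=8) == msm_naive(scalars, points)
    print("bucket MSM matches the direct sum")
```

The window width c is the main tuning knob: each window costs about n bucket additions plus 2·2^c running-sum additions, so values of c near log₂ n balance the two terms.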

The complexity of FFTs is O(n log n), which means that as the batch size increases, the FFT share of the Zero Knowledge Rollup Prover Cost grows slightly faster than the number of transactions: the work per transaction rises in proportion to log n. This creates diminishing returns on batch size unless it is managed through recursive proof techniques. The efficiency of these operations is heavily dependent on the memory architecture of the prover. During proof generation, the system may have to handle billions of field elements.

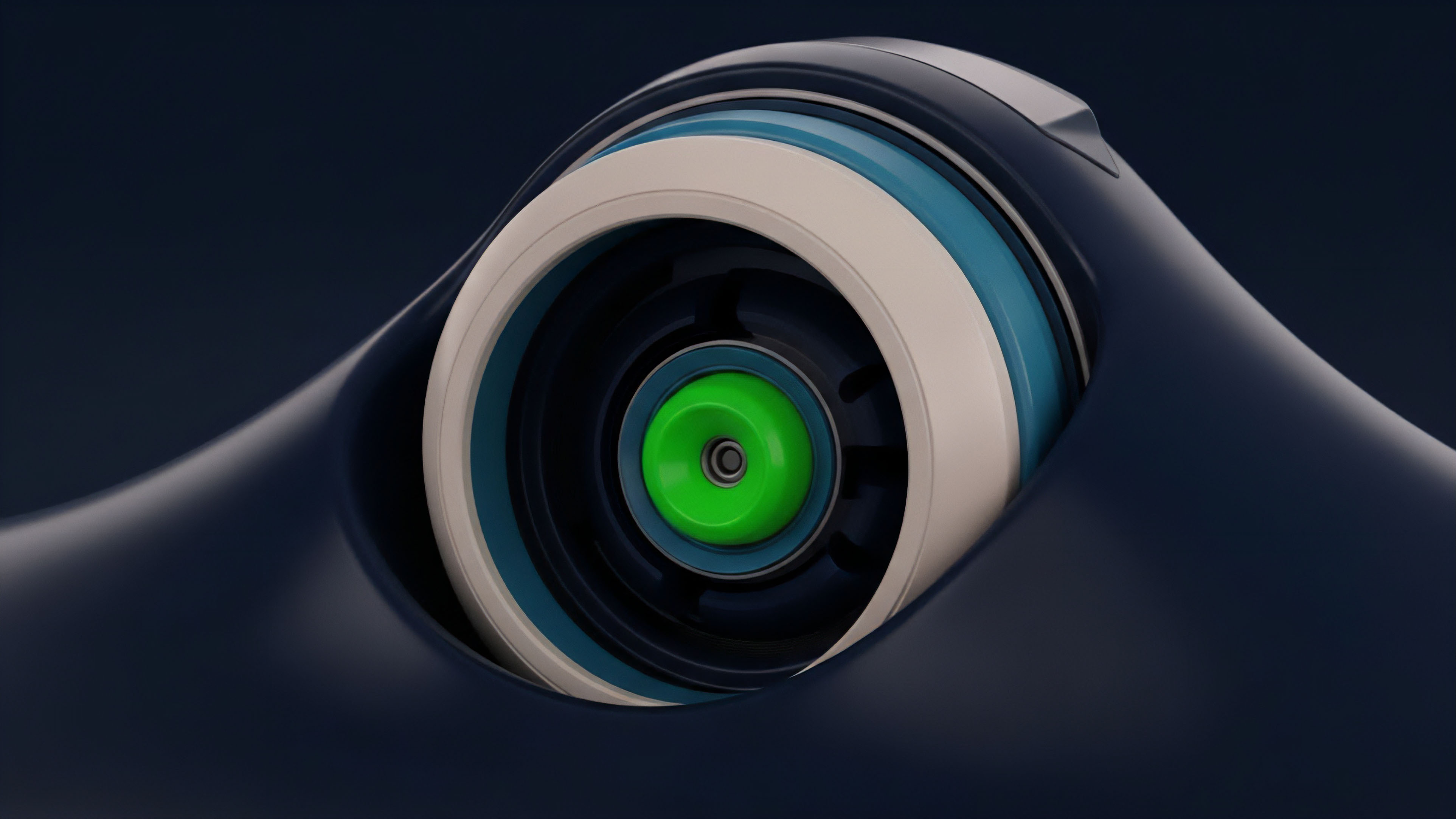

This leads to a “memory wall” where the processor spends more time waiting for data from the RAM than actually performing calculations. To mitigate this, theorists are looking into hardware-friendly proof systems that minimize the need for large FFTs or use smaller fields, such as the Goldilocks field (a 64-bit prime) or the BabyBear field (a 31-bit prime), whose elements fit in a single machine word on standard 64-bit CPU architectures.
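Part of the appeal of the Goldilocks prime p = 2^64 - 2^32 + 1 is that a 128-bit product can be reduced with shifts, additions, and subtractions instead of a general division, because 2^64 ≡ 2^32 - 1 and 2^96 ≡ -1 (mod p). The sketch below checks that identity-based reduction against Python's `%`; a production implementation would perform the same steps on 64-bit words with explicit carry handling, which Python's arbitrary-precision integers hide. The function names are illustrative.

```python
P = 2**64 - 2**32 + 1          # Goldilocks prime
MASK32 = (1 << 32) - 1
MASK64 = (1 << 64) - 1
EPSILON = (1 << 32) - 1         # 2^64 mod P


def reduce128(x: int) -> int:
    """Reduce 0 <= x < 2^128 modulo P using only shifts, adds, and subtracts."""
    assert 0 <= x < 1 << 128
    lo = x & MASK64              # bits 0..63, weight 1
    hi_lo = (x >> 64) & MASK32   # bits 64..95, weight 2^64 ≡ EPSILON
    hi_hi = x >> 96              # bits 96..127, weight 2^96 ≡ -1
    t = lo - hi_hi
    if t < 0:
        t += P                   # borrow: add P back, now 0 <= t < P
    t += hi_lo * EPSILON         # adds less than 2^64, so t < 2P afterwards
    if t >= P:                   # one conditional subtraction suffices
        t -= P
    return t


def mul(a: int, b: int) -> int:
    """Field multiplication: full 128-bit product, then the fast reduction."""
    return reduce128(a * b)
```

BabyBear (p = 2^31 - 2^27 + 1) plays the same role for 32-bit lanes, where even more elements fit in each vector register.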

Computational complexity in proof generation creates a non-linear relationship between batch size and the energy required for finality.
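Much of this non-linearity traces back to the FFT. In ZK provers the "FFT" is usually a number-theoretic transform (NTT): the same Cooley-Tukey recursion, but over a prime field with a root of unity in place of complex exponentials. The sketch below works over the Goldilocks prime p = 2^64 - 2^32 + 1 and relies on 7 being a quadratic non-residue modulo p, so that 7^((p-1)/n) has order exactly n for power-of-two n; the code asserts this rather than trusting it. It compares the O(n log n) transform with the O(n²) direct evaluation and checks that the inverse transform (interpolation) recovers the coefficients.

```python
# Goldilocks prime: p - 1 = 2^32 * (2^32 - 1), so power-of-two roots of unity exist.
P = 2**64 - 2**32 + 1
GENERATOR = 7  # a quadratic non-residue mod P; its powers give 2-power roots of unity


def root_of_unity(n: int) -> int:
    """Primitive n-th root of unity for a power of two n dividing P - 1."""
    if n < 1 or n & (n - 1) or (P - 1) % n:
        raise ValueError("n must be a power of two dividing P - 1")
    w = pow(GENERATOR, (P - 1) // n, P)
    assert n == 1 or pow(w, n // 2, P) == P - 1  # w^(n/2) = -1 confirms order n
    return w


def ntt(coeffs, w):
    """Recursive radix-2 Cooley-Tukey: evaluate the polynomial at w^0..w^(n-1)."""
    n = len(coeffs)
    if n == 1:
        return list(coeffs)
    even = ntt(coeffs[0::2], w * w % P)
    odd = ntt(coeffs[1::2], w * w % P)
    out = [0] * n
    x = 1
    for k in range(n // 2):
        t = x * odd[k] % P
        out[k] = (even[k] + t) % P
        out[k + n // 2] = (even[k] - t) % P  # uses w^(n/2) = -1
        x = x * w % P
    return out


def intt(evals, w):
    """Inverse NTT (interpolation): transform with w^-1, then scale by n^-1."""
    n = len(evals)
    n_inv = pow(n, P - 2, P)
    return [v * n_inv % P for v in ntt(evals, pow(w, P - 2, P))]


def naive_eval(coeffs, w):
    """O(n^2) reference: evaluate the polynomial directly at each power of w."""
    n = len(coeffs)
    return [sum(c * pow(w, j * k, P) for j, c in enumerate(coeffs)) % P
            for k in range(n)]
```

The recursion has log₂ n levels with n/2 butterflies each, which is where the n log n term comes from: going from 2^20 to 2^21 evaluation points doubles the rows but raises the per-row work only by a factor of 21/20.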

The Zero Knowledge Rollup Prover Cost is also influenced by the arithmetization process, which converts high-level logic into a system of equations. If the circuit is poorly optimized, it will contain unnecessary constraints, directly increasing the number of MSMs and FFTs required. This is why the design of the zkEVM is so difficult; it must map the complex and often inefficient opcodes of the Ethereum Virtual Machine into a streamlined set of mathematical constraints.
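A classic toy arithmetization shows how constraint count depends on the encoding. The sketch below expresses "I know x such that x³ + x + 5 = out" as a rank-1 constraint system (R1CS), where each constraint has the form ⟨A,z⟩·⟨B,z⟩ = ⟨C,z⟩ over a witness vector z whose first entry is the constant 1. R1CS is only one arithmetization among several (PLONKish and AIR are others), and the field modulus and variable names are illustrative assumptions. A naive flattening spends one constraint per operation; because additions are free inside the linear combinations, the same statement fits in two.

```python
P = 2**64 - 2**32 + 1  # illustrative prime field


def lc(combo, z):
    """Evaluate a sparse linear combination {var_index: coeff} against witness z."""
    return sum(coeff * z[i] for i, coeff in combo.items()) % P


def satisfied(constraints, z):
    """True iff <A,z> * <B,z> == <C,z> holds for every constraint (A, B, C)."""
    return all(lc(a, z) * lc(b, z) % P == lc(c, z) for a, b, c in constraints)


# Naive flattening, one constraint per operation. z = [1, x, out, sym1, y, sym2]
NAIVE = [
    ({1: 1}, {1: 1}, {3: 1}),          # sym1 = x * x
    ({3: 1}, {1: 1}, {4: 1}),          # y    = sym1 * x
    ({4: 1, 1: 1}, {0: 1}, {5: 1}),    # sym2 = (y + x) * 1
    ({5: 1, 0: 5}, {0: 1}, {2: 1}),    # out  = (sym2 + 5) * 1
]

# Additions folded into the C side. z = [1, x, out, sym1]
OPTIMIZED = [
    ({1: 1}, {1: 1}, {3: 1}),                 # sym1 = x * x
    ({3: 1}, {1: 1}, {2: 1, 1: -1, 0: -5}),   # sym1 * x = out - x - 5
]


def naive_witness(x):
    sym1 = x * x % P
    y = sym1 * x % P
    sym2 = (y + x) % P
    return [1, x, (sym2 + 5) % P, sym1, y, sym2]


def optimized_witness(x):
    sym1 = x * x % P
    return [1, x, (sym1 * x + x + 5) % P, sym1]
```

Halving the constraints also shrinks the witness from six entries to four, which in a real prover means fewer elements to commit to and transform.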

Every extra gate in the circuit adds to the Zero Knowledge Rollup Prover Cost, making the development of “circuit-friendly” logic a top priority for quantitative researchers. The interplay between the size of the cryptographic field and the security level of the proof also dictates the cost. Larger fields provide higher security but require more bits per operation, increasing the Zero Knowledge Rollup Prover Cost.

Conversely, smaller fields allow for faster vector instructions on modern hardware but require additional techniques, such as drawing random challenges from an extension field, to maintain the same level of cryptographic resistance against attacks. This balance is a constant source of debate among protocol architects who must choose between the speed of proof generation and the long-term robustness of the network’s security model. My concern is that if we fail to commoditize this cost, we recreate the very gatekeeping we sought to dismantle.

This is where the pricing model becomes truly elegant, and dangerous if ignored.

Approach

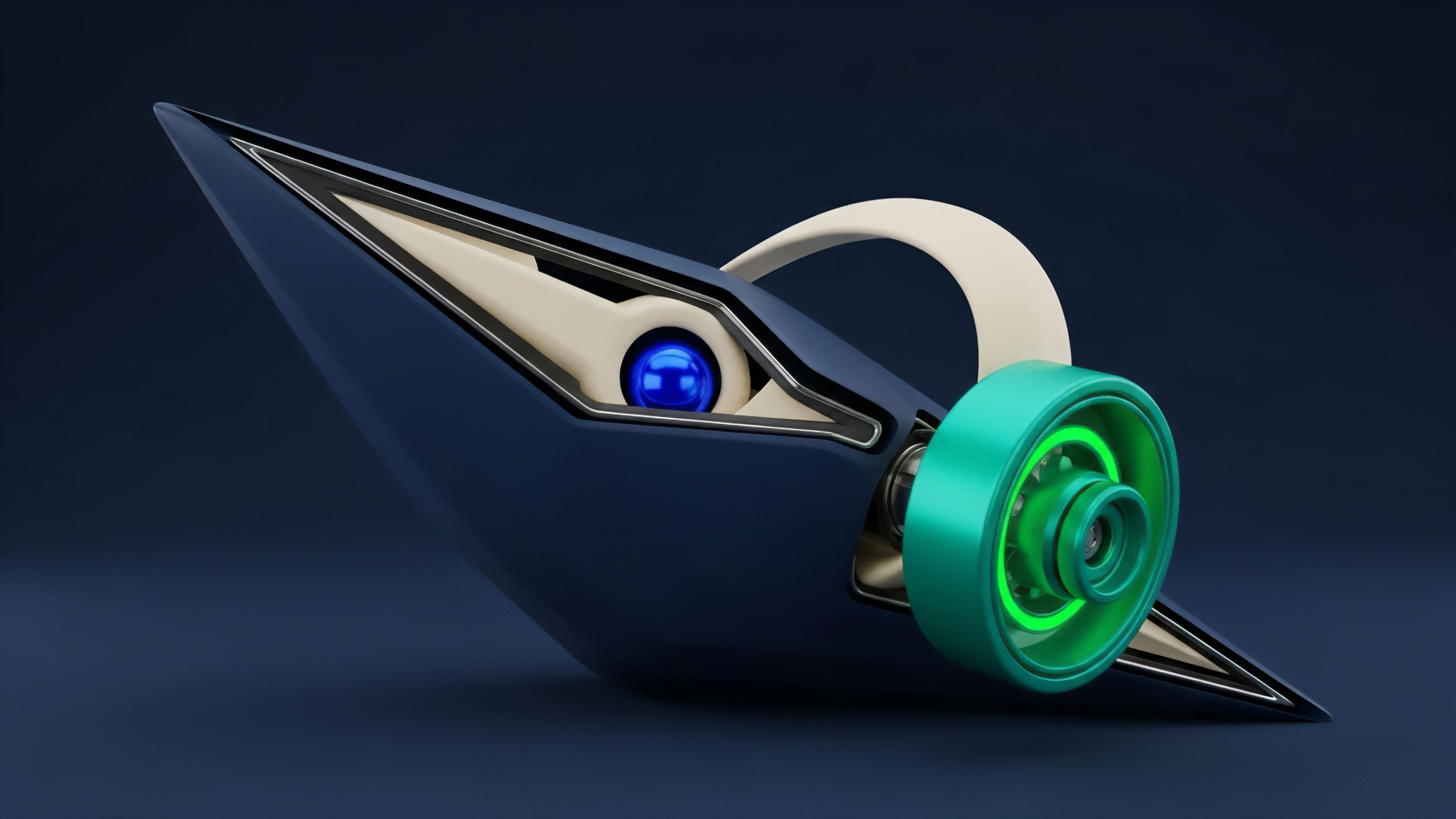

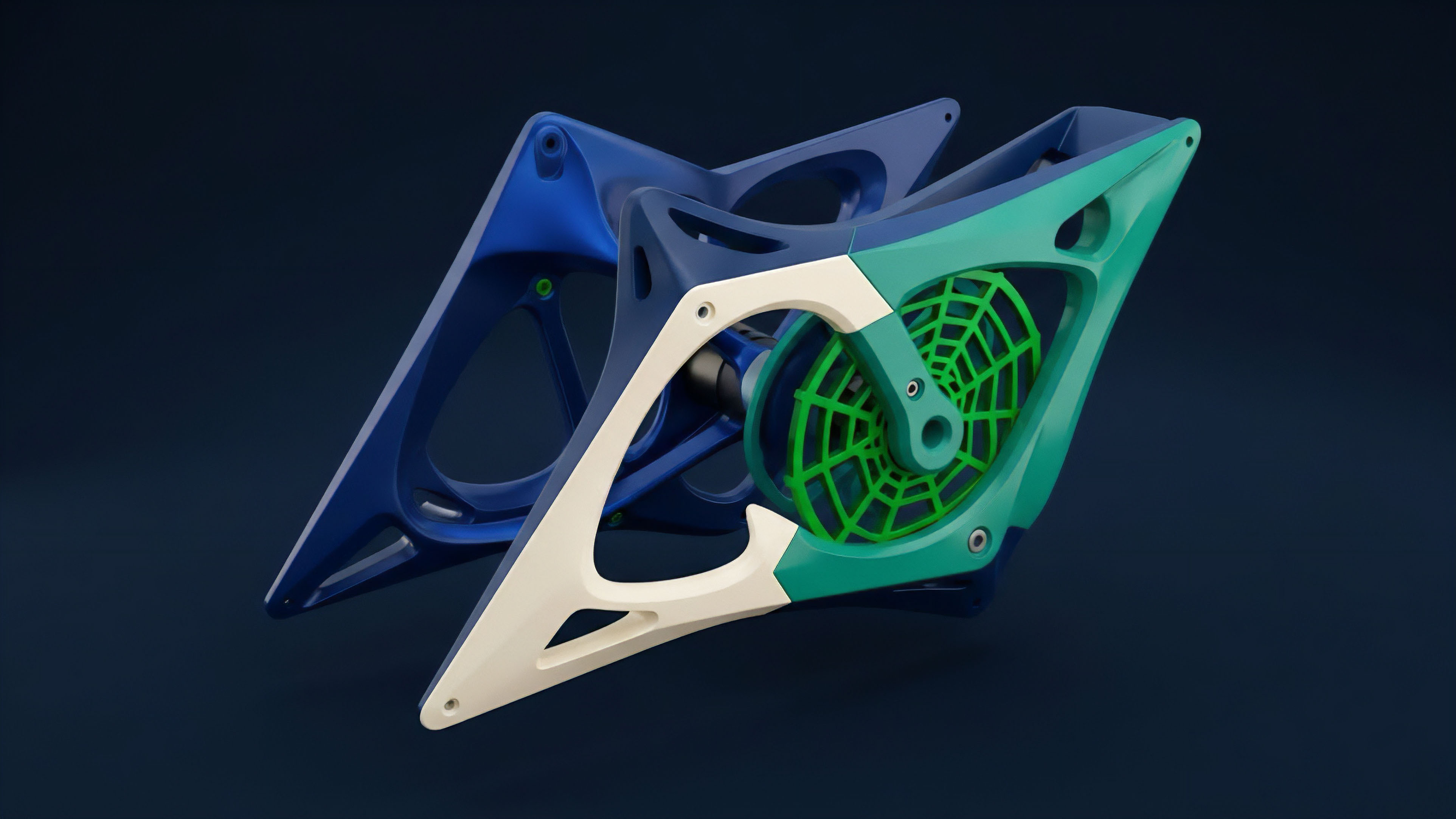

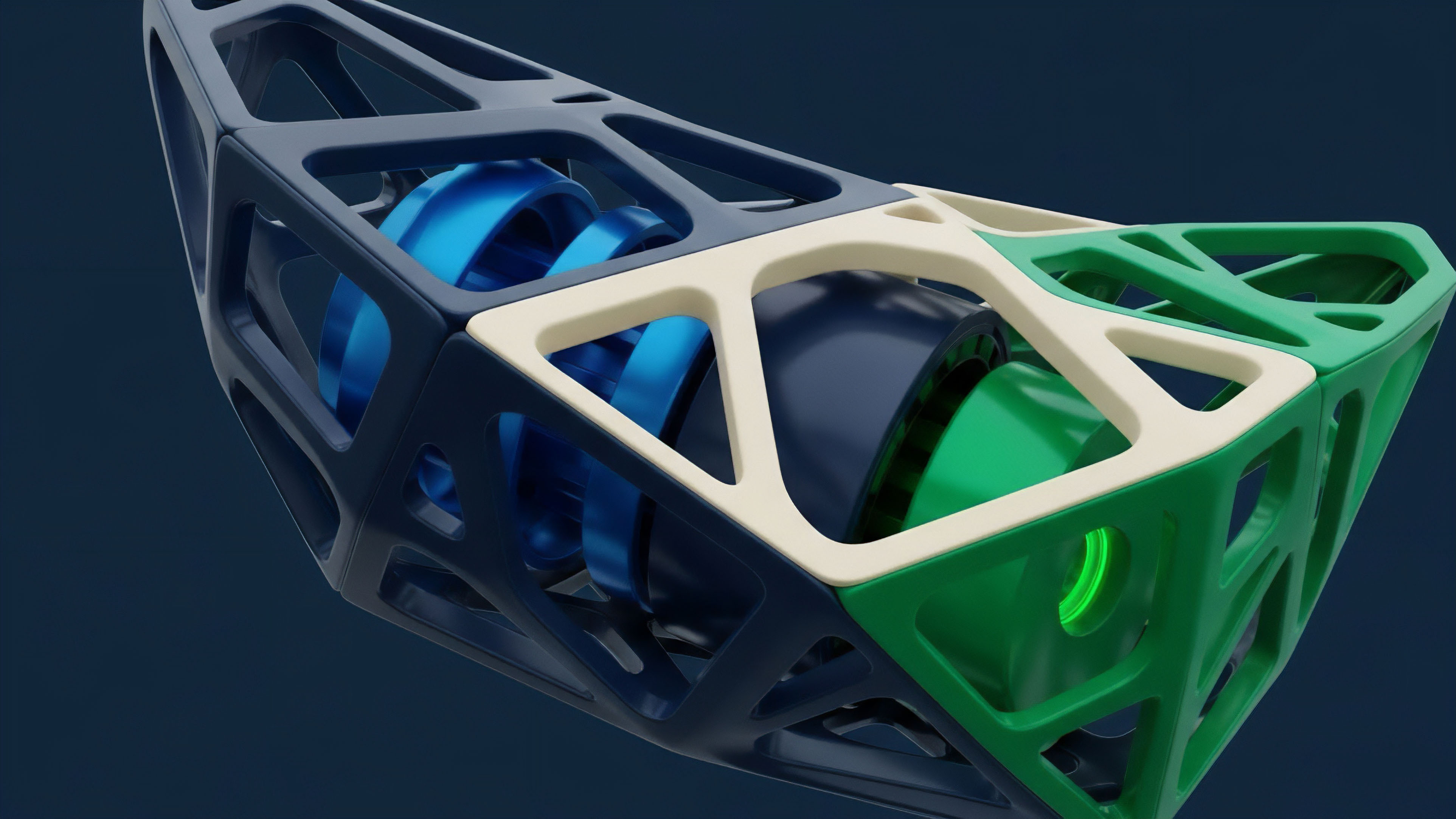

Current strategies to manage the Zero Knowledge Rollup Prover Cost focus on hardware acceleration and proof recursion. Recursion allows a prover to generate a proof that verifies multiple other proofs, effectively compressing the total amount of data that needs to be submitted to the mainnet. This reduces the verifier cost but increases the initial Zero Knowledge Rollup Prover Cost as multiple layers of proofs must be constructed.
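The recursion trade-off can be tallied with a simple counting model. The sketch below assumes a hypothetical aggregation tree in which each recursive proof verifies up to `arity` child proofs; it counts the extra recursive proofs the prover must generate, the number of sequential layers (a proxy for added latency), and the proofs the base layer must verify. It deliberately ignores the different sizes of leaf and recursive circuits, and all names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class AggregationPlan:
    leaf_proofs: int
    recursive_proofs: int       # extra proving work introduced by recursion
    depth: int                  # sequential recursion layers (adds latency)
    onchain_verifications: int  # proofs the base layer must check


def plan_aggregation(leaf_proofs: int, arity: int = 2) -> AggregationPlan:
    """Count proofs in a tree that repeatedly aggregates `arity` proofs into one."""
    if leaf_proofs < 1 or arity < 2:
        raise ValueError("need at least one leaf proof and arity >= 2")
    layer, recursive, depth = leaf_proofs, 0, 0
    while layer > 1:
        layer = -(-layer // arity)  # ceiling division: proofs in the next layer up
        recursive += layer
        depth += 1
    return AggregationPlan(leaf_proofs, recursive, depth, onchain_verifications=1)
```

Without aggregation, 1,024 leaf proofs would mean 1,024 on-chain verifications; with a binary tree the base layer checks one, at the price of 1,023 additional recursive proofs spread over 10 sequential layers.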

To handle this, many protocols are moving toward a decentralized prover network where individual participants can contribute their computational power in exchange for rewards.

| Acceleration Type | Target Operation | Efficiency Gain | Implementation Difficulty |
|---|---|---|---|
| GPU Acceleration | MSM / FFT | 10x – 50x | Moderate |
| FPGA Customization | Pipelined Logic | 20x – 100x | High |
| ASIC Development | Fixed Circuitry | 100x+ | Very High |

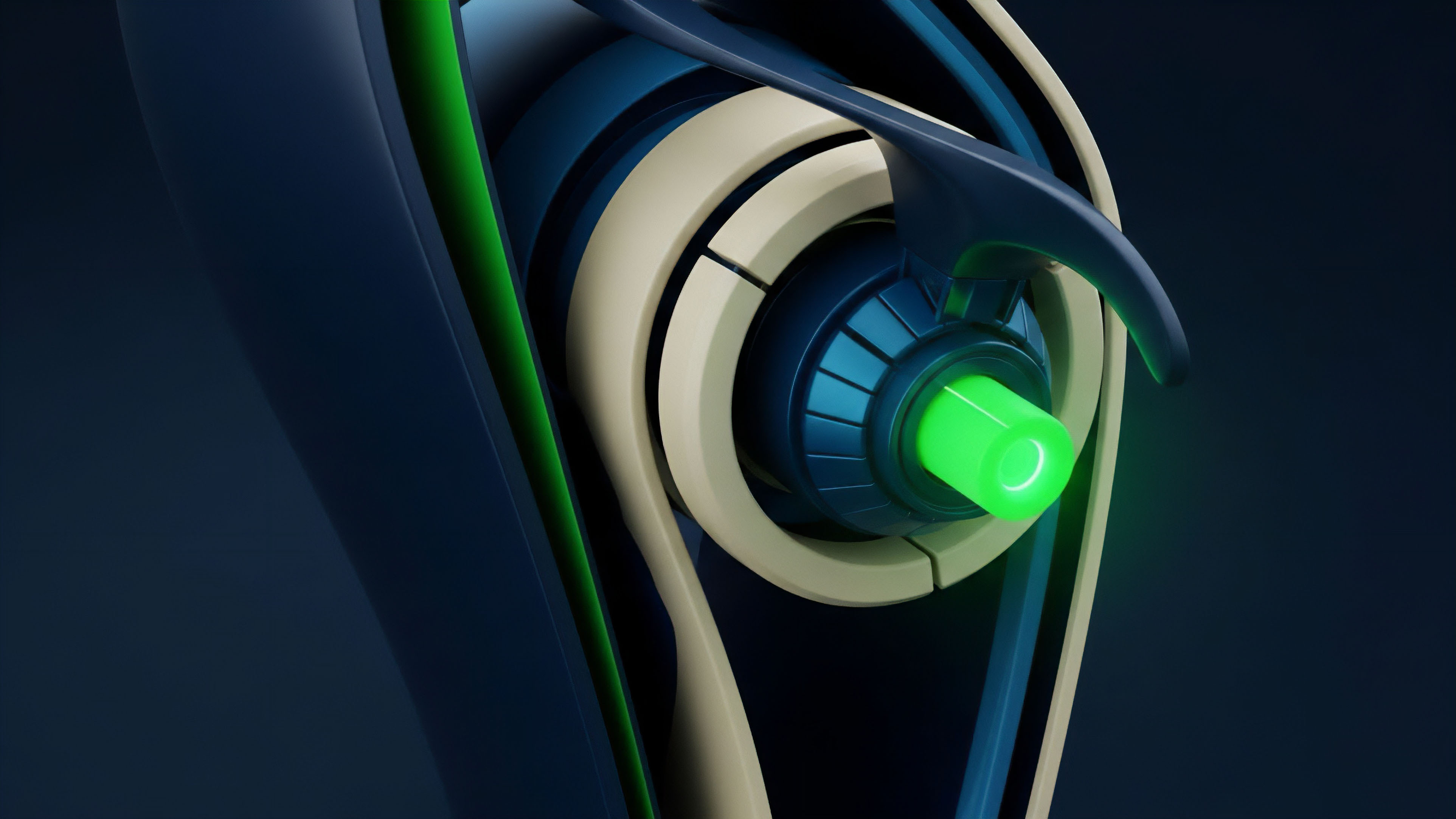

- Parallelized Witness Generation: Distributing the task of calculating intermediate values across multiple CPU cores to reduce the pre-proving latency.

- Recursive SNARK Aggregation: Combining thousands of small proofs into a single master proof to amortize the cost of on-chain verification.

- Custom Elliptic Curves: Utilizing curves like BLS12-381 or BN254 that are specifically designed for efficient pairing and proof generation.

- Zero-Knowledge Hardware (ZKH): Developing specialized chips that implement the arithmetic primitives of ZK proofs directly in silicon.

Another method involves the use of “folding schemes” like Nova or Sangria. These schemes allow for the accumulation of multiple instances of a circuit into a single instance without the heavy overhead of full recursive SNARKs. By using folding, the Zero Knowledge Rollup Prover Cost is spread out over time, allowing for a more continuous and less “bursty” computational load.

This is particularly useful for applications that require frequent state updates, such as decentralized exchanges or gaming platforms.
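The core algebra of a folding step can be checked in a few lines. The sketch below follows the relaxed R1CS folding rule introduced by Nova: a relaxed instance satisfies (AZ)∘(BZ) = u·(CZ) + E, and two instances are folded with a challenge r via Z = Z₁ + r·Z₂, u = u₁ + r·u₂, and E = E₁ + r·T + r²·E₂, where T is the cross term. It reuses a two-constraint circuit for x³ + x + 5 = out, stores u in the slot that normally holds the constant 1, and omits the commitments and Fiat-Shamir transcript a real scheme needs; it only illustrates why the folded instance remains satisfiable. Names and the field are illustrative assumptions.

```python
P = 2**64 - 2**32 + 1  # illustrative prime field

# Circuit for x^3 + x + 5 = out with Z = [u, x, out, sym1]; rows are dense vectors.
A = [[0, 1, 0, 0], [0, 0, 0, 1]]
B = [[0, 1, 0, 0], [0, 1, 0, 0]]
C = [[0, 0, 0, 1], [-5, -1, 1, 0]]  # x*x = u*sym1 ; sym1*x = u*(out - x - 5u)


def matvec(M, z):
    return [sum(m * v for m, v in zip(row, z)) % P for row in M]


def hadamard(a, b):
    return [x * y % P for x, y in zip(a, b)]


def is_relaxed_satisfied(z, E):
    """Check (AZ) o (BZ) == u*(CZ) + E, with u stored in z[0]."""
    u = z[0]
    lhs = hadamard(matvec(A, z), matvec(B, z))
    rhs = [(u * c + e) % P for c, e in zip(matvec(C, z), E)]
    return lhs == rhs


def fresh_instance(x):
    """A plain R1CS witness is a relaxed one with u = 1 and E = 0."""
    sym1 = x * x % P
    return [1, x, (sym1 * x + x + 5) % P, sym1], [0, 0]


def fold(z1, E1, z2, E2, r):
    """Nova-style fold of two relaxed instances with challenge r (no commitments)."""
    u1, u2 = z1[0], z2[0]
    az1, bz1, cz1 = matvec(A, z1), matvec(B, z1), matvec(C, z1)
    az2, bz2, cz2 = matvec(A, z2), matvec(B, z2), matvec(C, z2)
    # Cross term T = AZ1 o BZ2 + AZ2 o BZ1 - u1*CZ2 - u2*CZ1
    T = [(a1 * b2 + a2 * b1 - u1 * c2 - u2 * c1) % P
         for a1, b1, c1, a2, b2, c2 in zip(az1, bz1, cz1, az2, bz2, cz2)]
    z = [(a + r * b) % P for a, b in zip(z1, z2)]  # also folds u = u1 + r*u2
    E = [(e1 + r * t + r * r * e2) % P for e1, t, e2 in zip(E1, T, E2)]
    return z, E
```

Each fold costs a few vector operations instead of a full proof, and an accumulated instance can keep absorbing new ones; only the final accumulator needs a SNARK, which is what smooths out the computational load.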

Evolution

The Zero Knowledge Rollup Prover Cost has shifted from a theoretical research problem to a competitive market for specialized compute. In the early days, a single sequencer would run a monolithic prover, creating a single point of failure and a bottleneck for the entire network. Today, we see the rise of prover marketplaces where computational tasks are auctioned off to the lowest bidder.

This competition forces provers to constantly optimize their software and hardware stacks to remain profitable. The transition from software-based proving to hardware-centric proving is the most significant shift in the history of the Zero Knowledge Rollup Prover Cost. We are moving away from general-purpose CPUs toward a world where ZK-compute is a commodity, similar to Bitcoin mining.

This commoditization is necessary to bring the Zero Knowledge Rollup Prover Cost down to a level where it can compete with traditional centralized databases.

Market-driven prover competition incentivizes the transition from general-purpose hardware to specialized cryptographic silicon.

| Era | Dominant Hardware | Prover Model | Cost Structure |
|---|---|---|---|
| Research Era | Standard CPU | Monolithic / Centralized | Extremely High / Inefficient |
| Scaling Era | High-End GPU | Permissioned Prover Sets | Moderate / Variable |
| Commodity Era | ZK-ASIC / FPGA | Decentralized Prover Markets | Low / Fixed |

This progression has also seen the introduction of “proof-of-useful-work” models, where the energy spent on proof generation also serves to secure the network or provide some other utility. By integrating the proving process into the consensus mechanism itself, protocols can offset the financial burden on users, making the Layer 2 experience nearly as cheap as interacting with a centralized server while maintaining the security of the base layer.

Horizon

The future of the Zero Knowledge Rollup Prover Cost lies in its eventual invisibility. We are heading toward a state where proof generation is embedded into the hardware of every mobile device and laptop. This would allow for “client-side proving,” where users generate the proof of their own transaction’s validity before even sending it to the sequencer. This would distribute much of the Zero Knowledge Rollup Prover Cost across the entire user base, substantially reducing the burden on network operators.

As multi-chain architectures spread, the demand for cross-chain proofs will further drive the need for cheaper and faster proving. The Zero Knowledge Rollup Prover Cost will become a standard metric for evaluating the health and efficiency of a blockchain, much like gas prices are today. Protocols that fail to optimize this cost will find themselves priced out of the market as users migrate to platforms that offer faster finality at a lower price point.

The integration of artificial intelligence and machine learning into circuit optimization may also play a role: AI could help find more efficient ways to represent complex logic as mathematical constraints, further driving down the Zero Knowledge Rollup Prover Cost. We are only at the beginning of this journey, and the innovations of the next few years will determine whether the promise of a truly decentralized and scalable financial system can be realized.

The ultimate goal is a world where the cost of mathematical truth is so low that it is no longer a factor in the design of digital systems. Still, the risk of specialized hardware leading to new forms of centralization remains a threat that we must actively counter through open-source hardware designs and decentralized prover coordination protocols. This is the next great battle in the quest for digital sovereignty.
