Essence

Deterministic computation replaces the fragile reliance on institutional reputation within the derivatives lifecycle. The Verification-Based Model is a framework in which the validity of financial transactions is established through mathematical proofs rather than the assertions of a centralized intermediary. This shift re-engineers market microstructure, moving away from trust-based legacy finance toward a verify-then-execute paradigm.

Within this architecture, the clearinghouse is no longer a human-managed entity prone to discretionary errors or opaque risk management; it is an immutable set of cryptographic constraints.

The Verification-Based Model mandates that every state change in an option contract is accompanied by a cryptographic proof of validity.

Financial sovereignty in the crypto options space requires that settlement logic be decoupled from the platform operator. Under the Verification-Based Model, margin requirements, exercise conditions, and final payouts are governed by code that any participant can verify. This removes the counterparty risk of a centralized exchange whose internal ledger is a black box; what remains is the risk that the protocol code itself is flawed.

The model enforces a strict adherence to protocol physics, ensuring that every unit of risk is backed by verifiable collateral or mathematically sound hedging strategies.

Computational Integrity in Option Markets

The architecture relies on the premise that truth is derived from computation. In traditional systems, a trader relies on the exchange to correctly calculate the Greeks and enforce liquidations. In a Verification-Based Model, these calculations are performed on-chain or via off-chain provers that submit validity proofs to the base layer.

This makes the margin engine deterministic: the same inputs always produce the same margin requirement and liquidation threshold. Discretionary “fat-finger” errors are ruled out, and any attempt to manipulate a liquidation threshold fails verification.

Origin

The genesis of this model lies in the systemic opacity that characterized the 2008 financial crisis and the subsequent collapses of centralized digital asset exchanges. These events exposed the catastrophic risks inherent in opaque clearing processes and the discretionary management of collateral. Market participants demanded a system where the solvency of the counterparty and the integrity of the trade were transparent and mathematically guaranteed.

The Verification-Based Model emerged as the technical solution to the “trust gap” in complex financial instruments.

Failure of Institutional Reputation

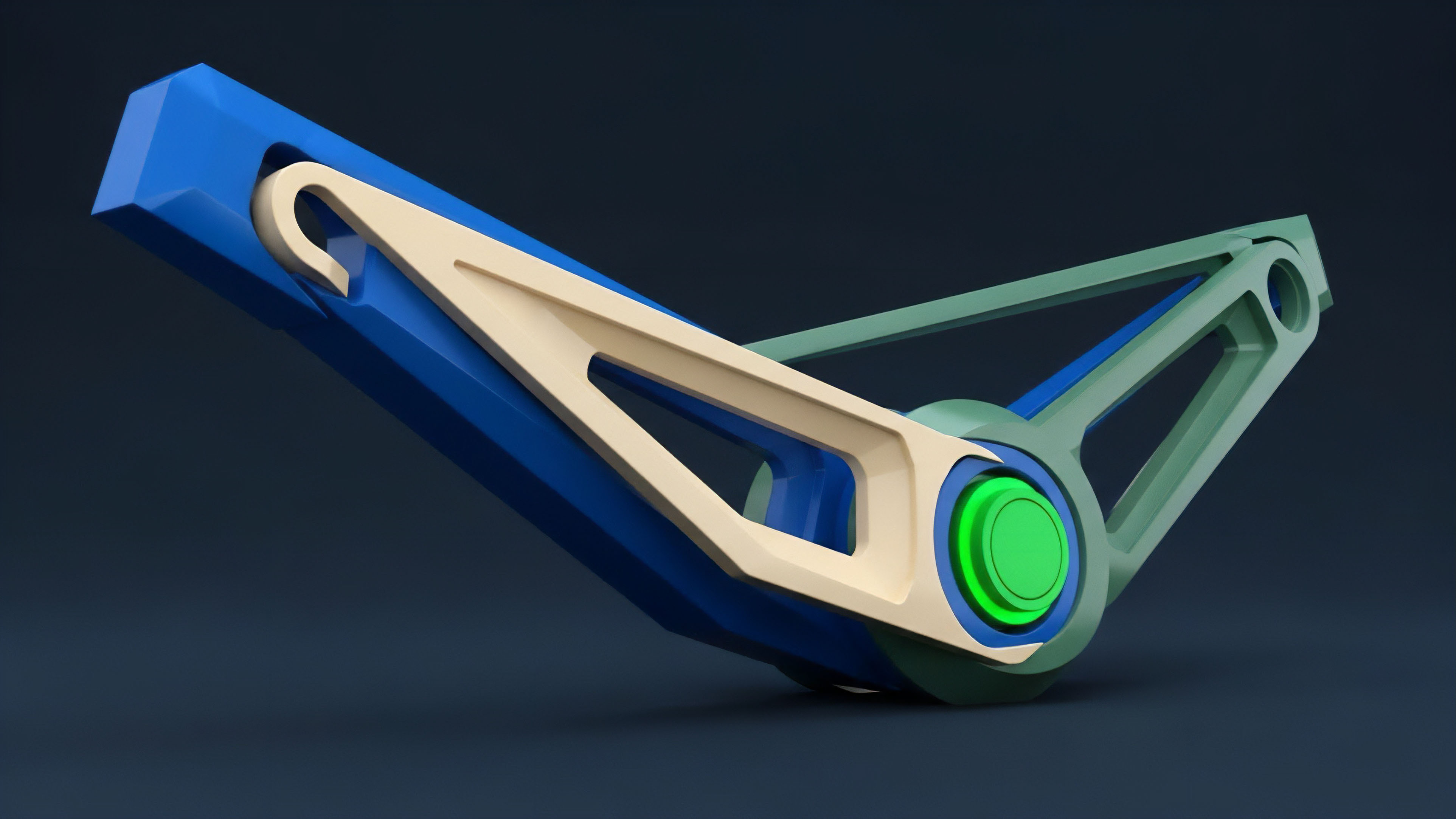

Historically, derivatives trading was restricted to a closed circle of institutions that trusted one another through credit lines and mutual oversight. The Verification-Based Model dismantles this gatekeeping by replacing creditworthiness with cryptographic proof. The shift began with simple atomic swaps but quickly progressed to handle the non-linear risk profiles of options.

The need for real-time, trustless auditability became the primary driver for integrating zero-knowledge proofs and optimistic verification mechanisms into derivative protocols.

Systemic risk is mitigated when the margin engine operates as a mathematical certainty rather than a discretionary process.

Technological Convergence

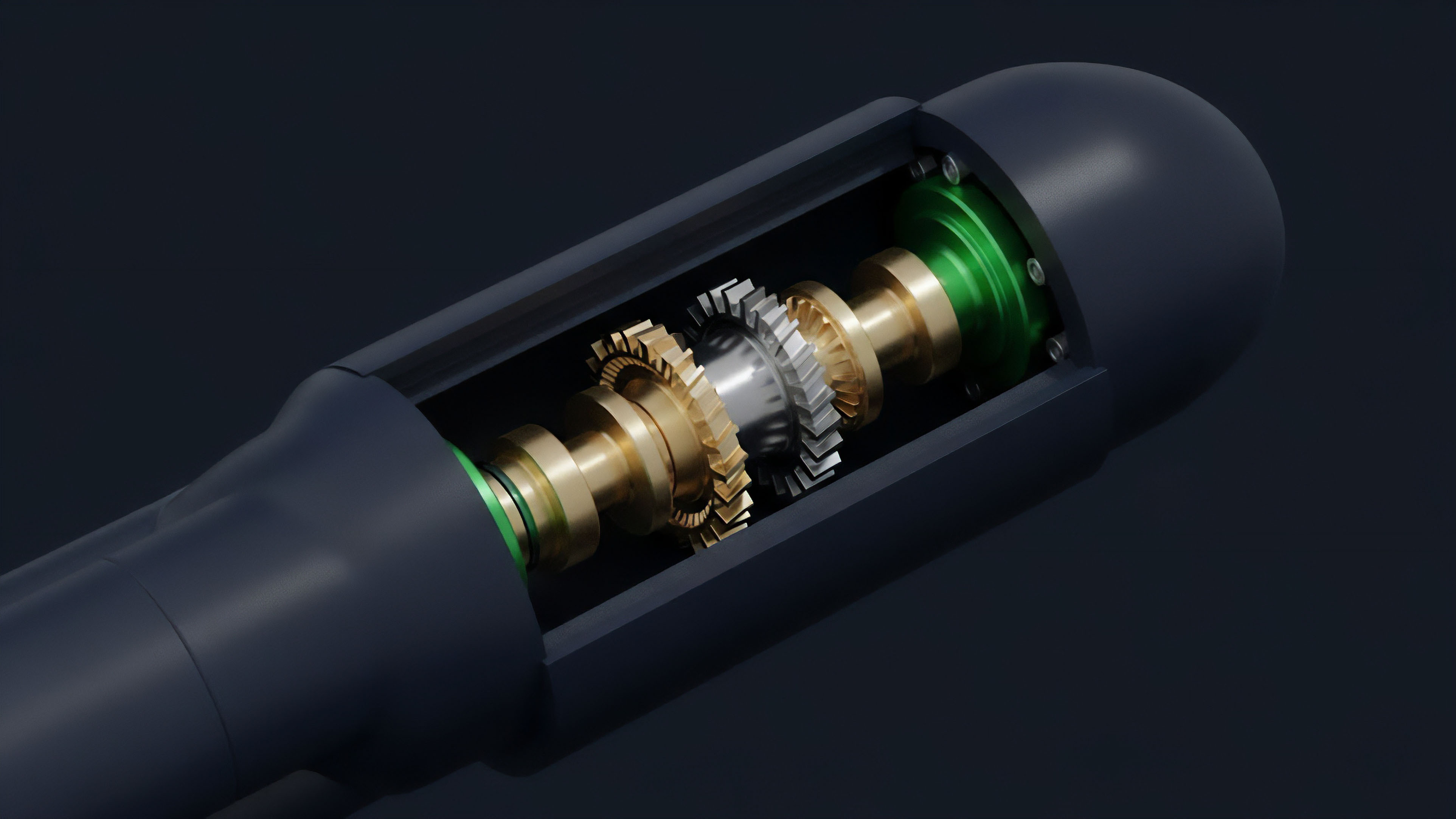

The development of Layer 2 scaling solutions provided the necessary throughput to make the Verification-Based Model viable for high-frequency options trading. Previously, the cost of verifying every state change on a base layer was prohibitive. With the advent of recursive SNARKs and validity rollups, complex option strategies, including multi-leg spreads and path-dependent barriers, can now be verified with minimal latency and cost.

This convergence of quantitative finance and advanced cryptography has enabled a new class of resilient financial infrastructure.

Theory

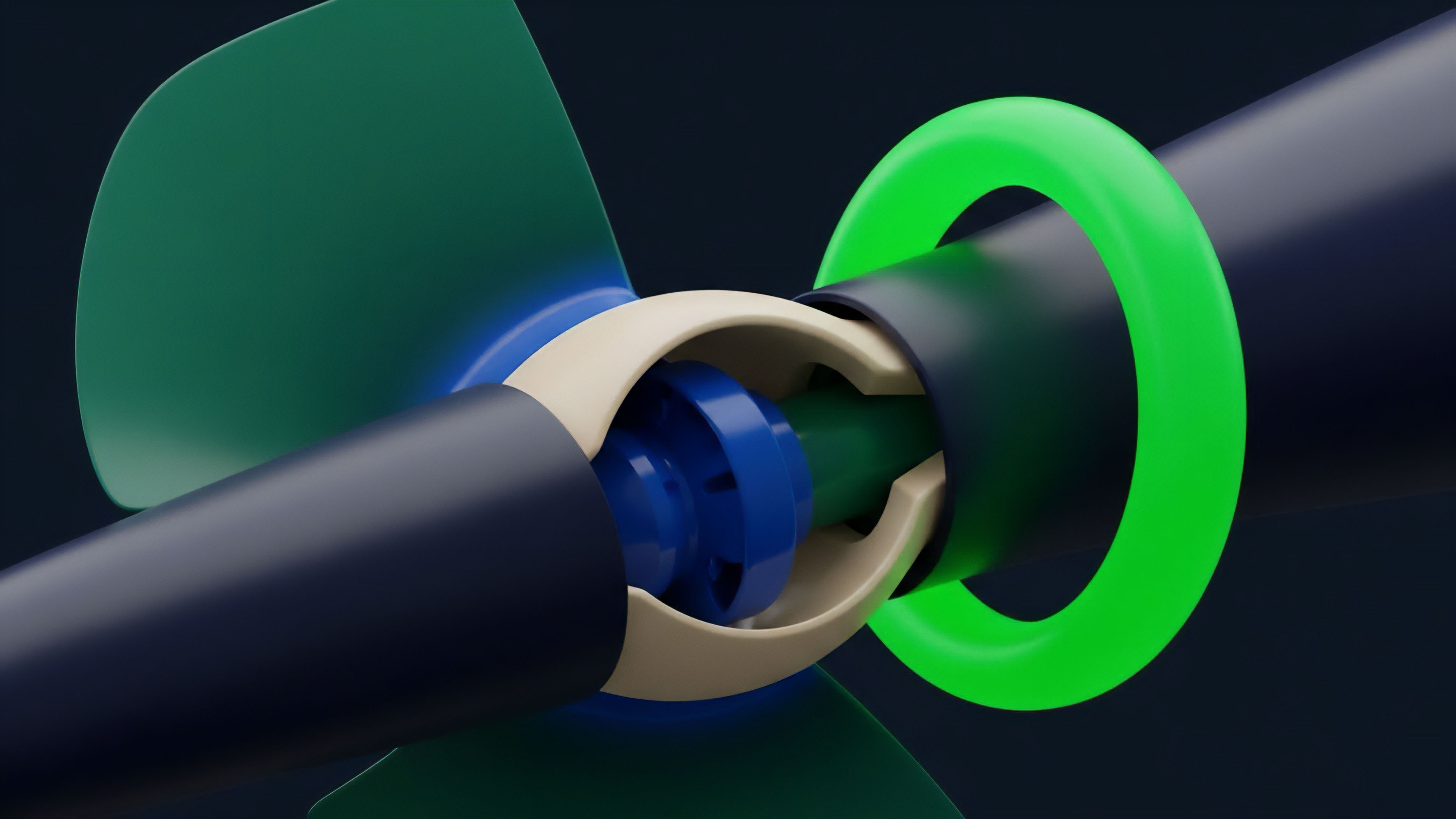

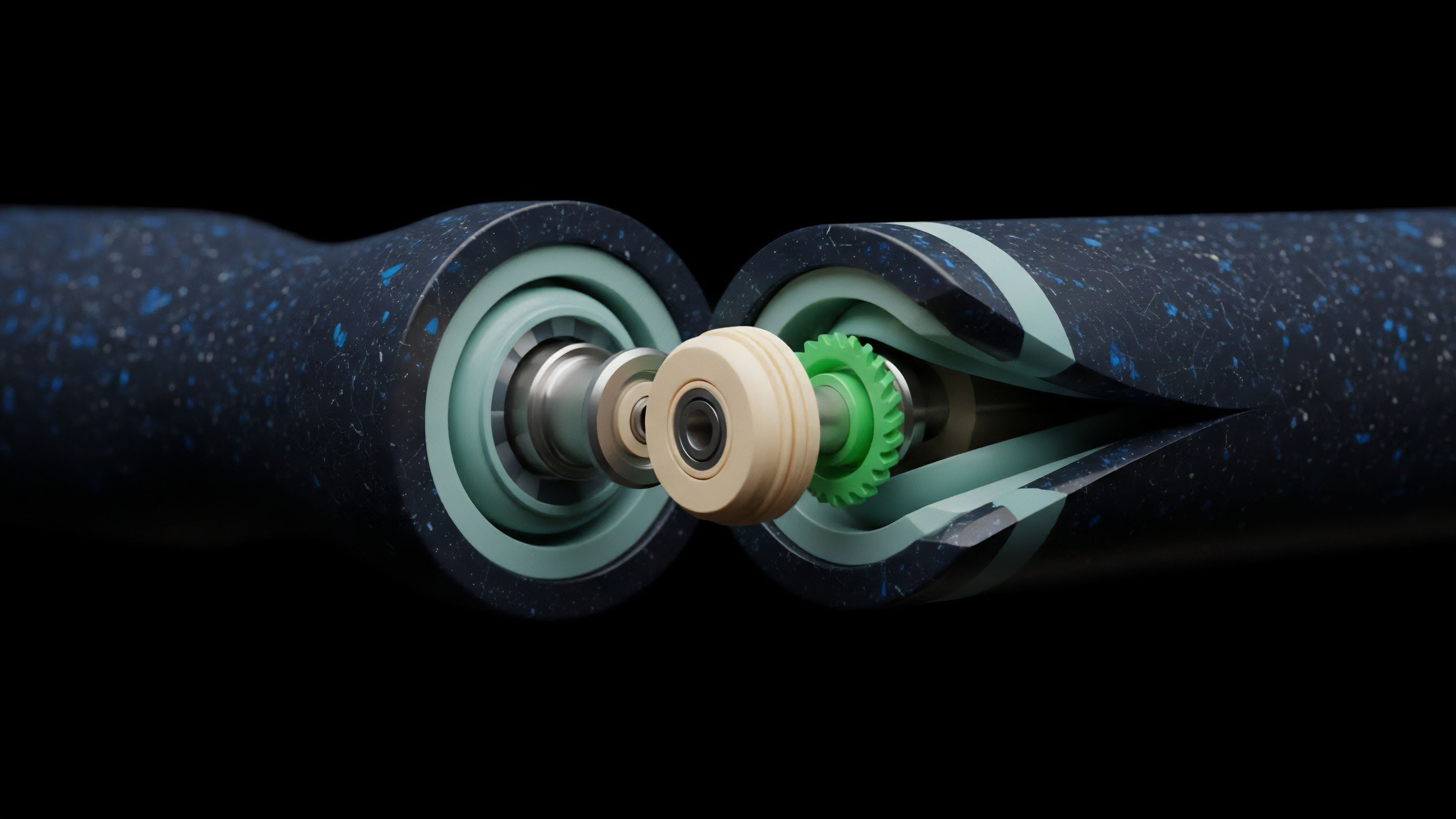

The mathematical structure of the Verification-Based Model treats an option contract as a series of state transitions within a verifiable state machine. Each transition, from deposit to trade execution to settlement, requires a proof that the new state adheres to the protocol’s predefined rules. This is grounded in the principle of state consistency, where the system ensures that the total value locked and the aggregate risk exposure always balance across the network.
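One way to picture the state-machine view is a toy ledger in which every transition returns a new state and a consistency invariant is re-checked afterwards. This is a minimal sketch under stated assumptions: `LedgerState`, `apply_deposit`, `apply_transfer`, and `is_consistent` are hypothetical names, amounts are plain integers, and the direct invariant check stands in for the cryptographic proof a real protocol would verify.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class LedgerState:
    balances: Dict[str, int]  # account -> collateral units (hypothetical layout)
    total_locked: int         # total value locked, as tracked by the protocol


def is_consistent(state: LedgerState) -> bool:
    """Invariant: no negative balances, and balances sum to the total locked."""
    no_negatives = all(v >= 0 for v in state.balances.values())
    return no_negatives and sum(state.balances.values()) == state.total_locked


def apply_deposit(state: LedgerState, account: str, amount: int) -> LedgerState:
    """Transition: add collateral to an account and to the locked total."""
    if amount <= 0:
        raise ValueError("deposit must be positive")
    balances = dict(state.balances)
    balances[account] = balances.get(account, 0) + amount
    return LedgerState(balances, state.total_locked + amount)


def apply_transfer(state: LedgerState, payer: str, payee: str, amount: int) -> LedgerState:
    """Transition: move collateral between accounts (e.g. a premium or payout)."""
    if amount <= 0 or state.balances.get(payer, 0) < amount:
        raise ValueError("transfer not covered by payer's collateral")
    balances = dict(state.balances)
    balances[payer] -= amount
    balances[payee] = balances.get(payee, 0) + amount
    return LedgerState(balances, state.total_locked)


state = LedgerState({}, 0)
state = apply_deposit(state, "alice", 100)
state = apply_deposit(state, "bob", 50)
state = apply_transfer(state, "bob", "alice", 10)  # bob pays a premium to alice
print(is_consistent(state), state.balances)
```

Transfers leave the locked total untouched, so the invariant holds after every step. A transition that would overdraw an account is rejected before any new state exists.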

Verification Logic Framework

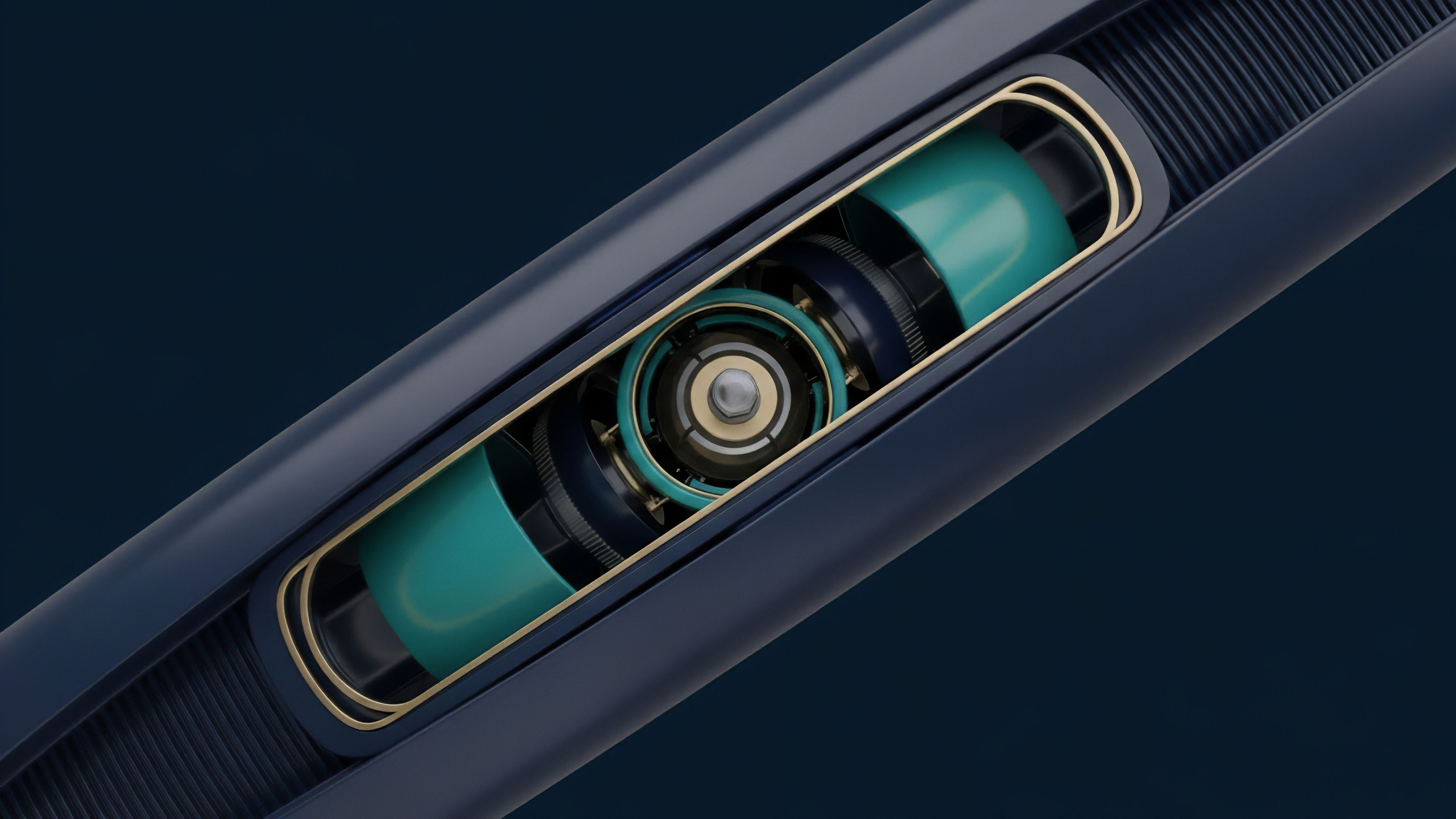

The model utilizes a hierarchy of proofs to maintain the integrity of the margin engine. At the base level, validity proofs ensure that every transaction is authorized and collateralized. At the higher level, risk proofs validate that the portfolio’s delta, gamma, and vega remain within the protocol’s safety parameters.

This creates a multi-layered defense against insolvency.
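The two layers can be sketched as plain predicates evaluated in order. The example is an assumption-laden illustration, not a proof system: `Portfolio`, `RiskLimits`, and `failed_checks` are hypothetical names, the `authorized` flag stands in for a signature check, and the limits are arbitrary numbers.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Portfolio:
    authorized: bool        # stand-in for a valid signature (assumption)
    collateral: float
    required_margin: float
    delta: float
    gamma: float
    vega: float


@dataclass(frozen=True)
class RiskLimits:
    max_abs_delta: float
    max_abs_gamma: float
    max_abs_vega: float


def failed_checks(p: Portfolio, limits: RiskLimits) -> List[str]:
    """Return the names of every check the portfolio fails (empty means valid)."""
    failures = []
    # Base layer: authorization and collateralization.
    if not p.authorized:
        failures.append("unauthorized")
    if p.collateral < p.required_margin:
        failures.append("undercollateralized")
    # Risk layer: Greeks must stay inside the protocol's safety parameters.
    if abs(p.delta) > limits.max_abs_delta:
        failures.append("delta")
    if abs(p.gamma) > limits.max_abs_gamma:
        failures.append("gamma")
    if abs(p.vega) > limits.max_abs_vega:
        failures.append("vega")
    return failures


limits = RiskLimits(max_abs_delta=50.0, max_abs_gamma=5.0, max_abs_vega=200.0)
ok = Portfolio(True, 1000.0, 800.0, delta=12.0, gamma=1.5, vega=90.0)
risky = Portfolio(True, 1000.0, 800.0, delta=-75.0, gamma=1.5, vega=90.0)
print(failed_checks(ok, limits), failed_checks(risky, limits))
```

Returning the list of failed checks, rather than a single boolean, makes it visible which layer rejected a portfolio.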

| Feature | Trust-Based Model | Verification-Based Model |
|---|---|---|
| Settlement Authority | Centralized Clearinghouse | Cryptographic Proof |
| Margin Enforcement | Discretionary / Opaque | Deterministic / Transparent |
| Counterparty Risk | Institutional Solvency | Protocol Code Integrity |
| Auditability | Periodic / Third-Party | Real-Time / On-Chain |

Recursive Risk Assessment

Quantitative models within the Verification-Based Model often employ recursive verification. This means that a proof of a trade’s validity also includes a proof that the previous state of the ledger was valid. This chain of proofs ensures that the entire history of the option market is verifiable from the genesis block.
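A hash chain gives a rough feel for how each entry can commit to everything before it. This is a deliberate simplification and an assumption of this example: a real recursive SNARK lets a verifier check only the latest proof, whereas the sketch below walks the full chain. The names `commit`, `append_entry`, and `verify_chain` are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder commitment for the empty ledger


def commit(prev_hash: str, payload: dict) -> str:
    """Hash the previous commitment together with the new payload."""
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(chain: list, payload: dict) -> None:
    """Append a payload whose commitment covers the whole prior history."""
    prev = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append({"prev": prev, "payload": payload, "hash": commit(prev, payload)})


def verify_chain(chain: list) -> bool:
    """Recompute every commitment from genesis and compare."""
    prev = GENESIS_HASH
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != commit(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True


chain = []
append_entry(chain, {"type": "deposit", "account": "alice", "amount": 100})
append_entry(chain, {"type": "trade", "account": "alice", "strike": 90})
print(verify_chain(chain))
```

Changing any earlier payload changes its hash, which breaks every later link, so tampering with history is detectable.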

For options, this involves verifying the Black-Scholes or Monte Carlo inputs used for pricing and margin, ensuring that no participant can manipulate the implied volatility surface to trigger unfair liquidations.
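As an illustration of what verifying pricing inputs could mean, the sketch below recomputes a Black-Scholes call price from published inputs and compares it with a claimed price. The formula is the standard one for a European call on a non-dividend-paying asset; the function names, the tolerance, and the idea of a "claimed" price are assumptions of this example.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Black-Scholes price of a European call (no dividends), t in years."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def price_matches(claimed: float, spot: float, strike: float, rate: float,
                  vol: float, t: float, tol: float = 1e-6) -> bool:
    """Check a claimed price against a recomputation from the stated inputs."""
    return abs(bs_call(spot, strike, rate, vol, t) - claimed) <= tol


honest = bs_call(100.0, 100.0, 0.05, 0.20, 1.0)
print(round(honest, 4))
# A price computed with an inflated volatility does not match the agreed inputs.
inflated = bs_call(100.0, 100.0, 0.05, 0.40, 1.0)
print(price_matches(inflated, 100.0, 100.0, 0.05, 0.20, 1.0))
```

Because the computation is deterministic, anyone holding the same inputs arrives at the same price, so a price derived from a tampered volatility is rejected.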

Approach

Implementation of the Verification-Based Model currently follows two primary paths: validity-based (ZK) and fraud-proof-based (Optimistic). Each has distinct trade-offs regarding capital efficiency and latency. In the ZK-centric Verification-Based Model, every state update is accompanied by a proof that is verified by a smart contract before the state is finalized.

This allows for near-instant settlement and high capital efficiency, as the collateral is only released once the proof is accepted.

Financial sovereignty in derivatives requires the elimination of third-party mediation through verifiable execution paths.

Operational Pipeline for Verifiable Options

The execution of a verifiable option trade follows a specific sequence to ensure the integrity of the margin engine:

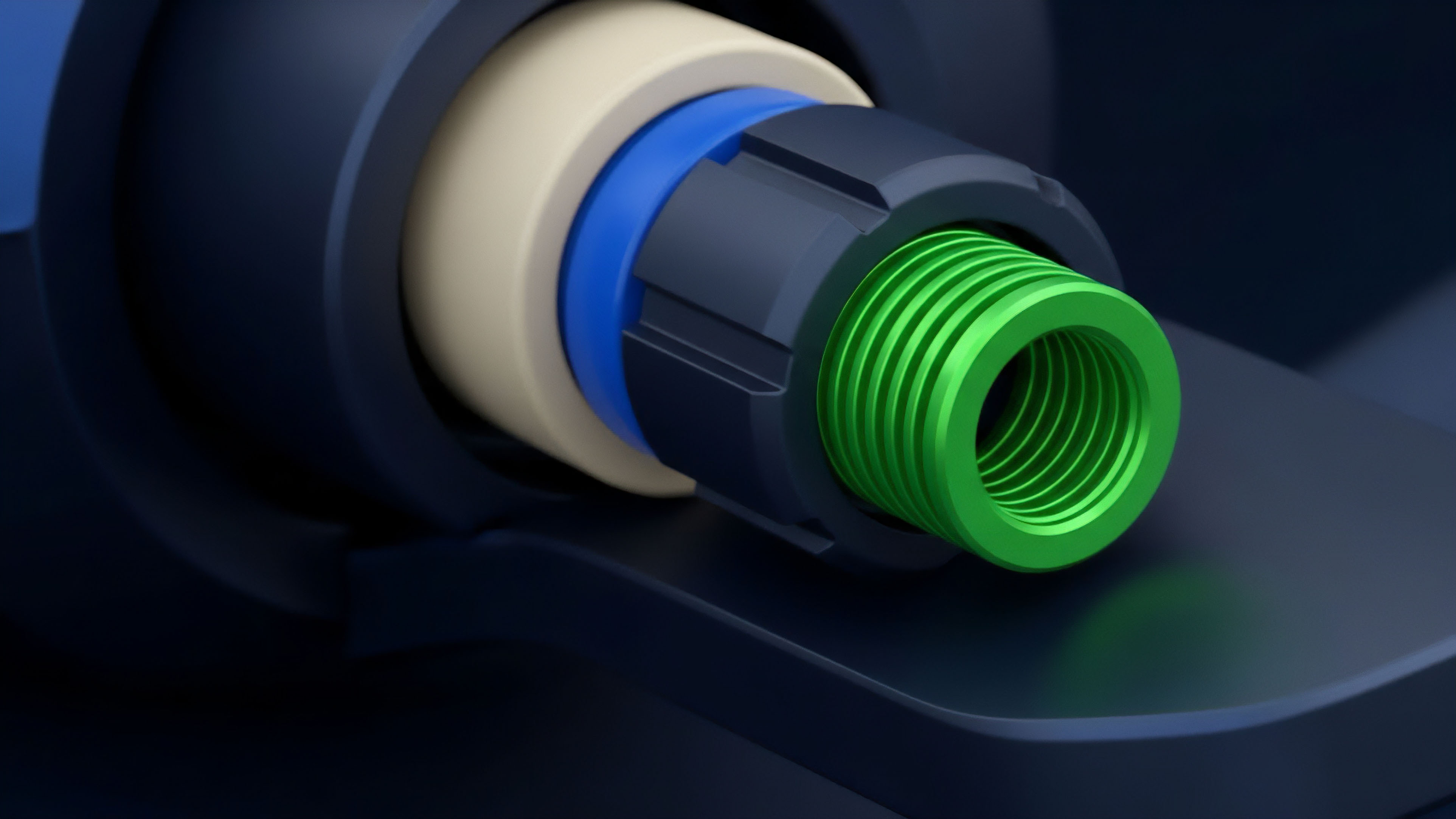

- Proof Generation: The trader’s client or a specialized prover generates a ZK-SNARK or ZK-STARK that demonstrates the trade is fully collateralized according to the current volatility surface.

- State Transition Submission: The proof and the proposed state change are submitted to the verification contract on the blockchain.

- On-Chain Verification: The smart contract executes the verification algorithm; if the proof is valid, the state is updated and the trade is locked.

- Continuous Margin Monitoring: Automated agents monitor the price feeds and trigger liquidation proofs if the collateral value falls below the verifiable threshold.
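The four steps above can be sketched as a small simulation. Everything here is hypothetical: `CollateralProof` is a transparent placeholder for a ZK-SNARK or ZK-STARK (a real proof would not expose the underlying values), and `VerificationContract` is an ordinary Python class, not a smart contract.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class CollateralProof:
    """Placeholder for a validity proof; fields are visible only for illustration."""
    trader: str
    collateral: float
    required_margin: float


def generate_proof(trader: str, collateral: float, required_margin: float) -> CollateralProof:
    # Step 1: the client or a prover builds the proof for the proposed trade.
    return CollateralProof(trader, collateral, required_margin)


def verify_proof(proof: CollateralProof) -> bool:
    # Core of step 3: accept only fully collateralized trades.
    return proof.collateral >= proof.required_margin


class VerificationContract:
    def __init__(self) -> None:
        self.positions: Dict[str, CollateralProof] = {}

    def submit(self, proof: CollateralProof) -> bool:
        # Steps 2 and 3: receive the proof, verify it, then update state.
        if not verify_proof(proof):
            return False
        self.positions[proof.trader] = proof
        return True

    def needs_liquidation(self, trader: str, collateral_value: float) -> bool:
        # Step 4: a monitoring agent re-checks collateral against the threshold.
        return collateral_value < self.positions[trader].required_margin


contract = VerificationContract()
accepted = contract.submit(generate_proof("alice", 120.0, 100.0))
rejected = contract.submit(generate_proof("bob", 80.0, 100.0))
print(accepted, rejected, contract.needs_liquidation("alice", 95.0))
```

State changes only after verification succeeds, so a rejected submission leaves no trace in `positions`.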

Comparative Implementation Strategies

The choice between verification methods dictates the user experience and the protocol’s risk profile.

| Metric | ZK-Verification | Optimistic Verification |
|---|---|---|
| Finality Time | Instant (after proof) | Delayed (fraud-proof window) |
| Computation Cost | High (off-chain) | Low (until challenged) |
| Privacy Potential | High (shielded positions) | Low (public state) |
| Capital Efficiency | Maximum | Reduced (due to exit delays) |
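The finality difference in the table can be expressed as two small predicates. This is a rough sketch: the function names are hypothetical, and the seven-day challenge window is an illustrative assumption, not a figure from any particular protocol.

```python
def zk_is_final(proof_accepted: bool) -> bool:
    """Validity-proof path: the state is final as soon as the proof verifies."""
    return proof_accepted


def optimistic_is_final(submitted_at: int, now: int,
                        challenge_window: int, challenged: bool) -> bool:
    """Fraud-proof path: final only if the window elapsed with no challenge."""
    return (not challenged) and (now - submitted_at >= challenge_window)


WINDOW = 7 * 24 * 3600  # illustrative challenge window in seconds (assumption)

print(zk_is_final(True))
print(optimistic_is_final(submitted_at=0, now=3600, challenge_window=WINDOW, challenged=False))
print(optimistic_is_final(submitted_at=0, now=WINDOW, challenge_window=WINDOW, challenged=False))
```

Collateral tied to an optimistic state stays locked until the second predicate turns true, which is the exit delay the table refers to.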

Evolution

The progression of the Verification-Based Model has moved from simple collateralized debt positions to sophisticated, non-custodial derivative ecosystems. Early decentralized options were limited by high gas costs and slow settlement, leading to “hybrid” models where only the final settlement was on-chain. The current state represents a shift toward “full-stack verification,” where the order book, matching engine, and risk management are all subject to cryptographic scrutiny.

Structural Shifts in Market Architecture

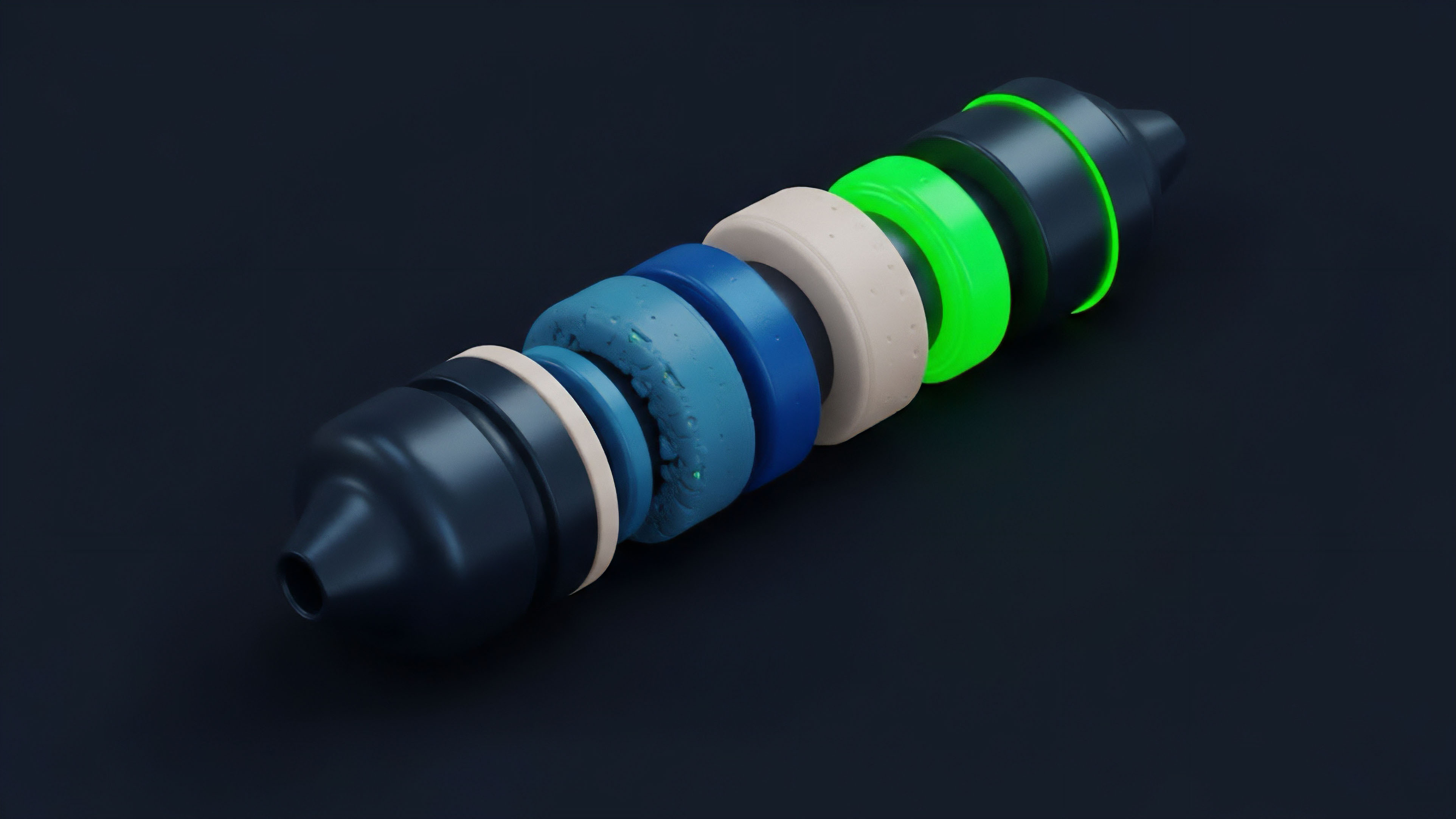

The evolution is marked by several key transitions in how risk is handled:

- Collateralization Phase: Initial models required 100% collateralization, eliminating the need for complex verification but severely limiting capital efficiency.

- Margin Engine Phase: The introduction of partial collateralization necessitated verifiable margin engines that could calculate risk in real-time without centralized intervention.

- Privacy Integration Phase: The current shift involves using Zero-Knowledge proofs not just for scaling, but for hiding trader positions from front-runners while still proving solvency to the protocol.
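The capital-efficiency gap between the first two phases can be shown with a short put. The sketch compares full collateralization with a simple scenario-based margin. It is a simplification under stated assumptions: only intrinsic value at each shocked price is considered, the premium received and time value are ignored, and the shock grid and function names are hypothetical.

```python
from typing import Iterable


def full_collateral_short_put(strike: int, quantity: int) -> int:
    """Collateralization phase: lock the maximum possible loss (price goes to zero)."""
    return strike * quantity


def scenario_margin_short_put(spot: int, strike: int, quantity: int,
                              shocks_pct: Iterable[int]) -> float:
    """Margin engine phase: lock the worst intrinsic loss across price shocks."""
    worst = 0.0
    for shock in shocks_pct:
        shocked_spot = spot * (100 + shock) / 100
        loss = max(strike - shocked_spot, 0.0) * quantity
        worst = max(worst, loss)
    return worst


shocks = (-20, -10, 0, 10, 20)  # percentage moves in the underlying (assumption)
full = full_collateral_short_put(strike=90, quantity=1)
partial = scenario_margin_short_put(spot=100, strike=90, quantity=1, shocks_pct=shocks)
print(full, partial)
```

Under these assumptions the scenario margin is a fraction of the fully collateralized amount. That is the efficiency gain, and it is also why the margin calculation has to be verifiable.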

Adversarial Resilience

The Verification-Based Model has matured through constant stress testing in the adversarial environment of decentralized finance. Exploits that targeted oracle manipulation or flash-loan-induced price swings forced architects to build more robust verification layers. These layers now include time-weighted average prices (TWAP) and multi-oracle consensus, all of which are verified within the cryptographic proof of the trade.
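Both defenses reduce to short computations. The sketch below shows a time-weighted average over price samples and a median across oracle reports. The sample layout, function names, and numbers are assumptions of this example, and a real protocol would verify these computations inside the proof rather than run them in the clear.

```python
from statistics import median
from typing import List, Tuple


def twap(samples: List[Tuple[int, float]], end_time: int) -> float:
    """Time-weighted average price.

    samples: (timestamp, price) pairs sorted by timestamp; each price is
    assumed to hold until the next sample (or end_time for the last one).
    """
    if not samples or end_time <= samples[0][0] or end_time < samples[-1][0]:
        raise ValueError("need samples and an end_time after the first sample")
    boundaries = [t for t, _ in samples[1:]] + [end_time]
    weighted = sum(price * (t_next - t)
                   for (t, price), t_next in zip(samples, boundaries))
    return weighted / (end_time - samples[0][0])


def consensus_price(oracle_prices: List[float]) -> float:
    """Median across oracles: a single outlier cannot move the result far."""
    return median(oracle_prices)


steady = twap([(0, 100.0), (10, 110.0), (20, 120.0)], end_time=30)
spiked = twap([(0, 100.0), (29, 500.0)], end_time=30)  # one-tick flash spike
print(steady, round(spiked, 2), consensus_price([100.0, 101.0, 500.0]))
```

A spike that lasts one tick out of thirty moves the time-weighted average only modestly, and a single manipulated oracle leaves the median close to the honest reports.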

Horizon

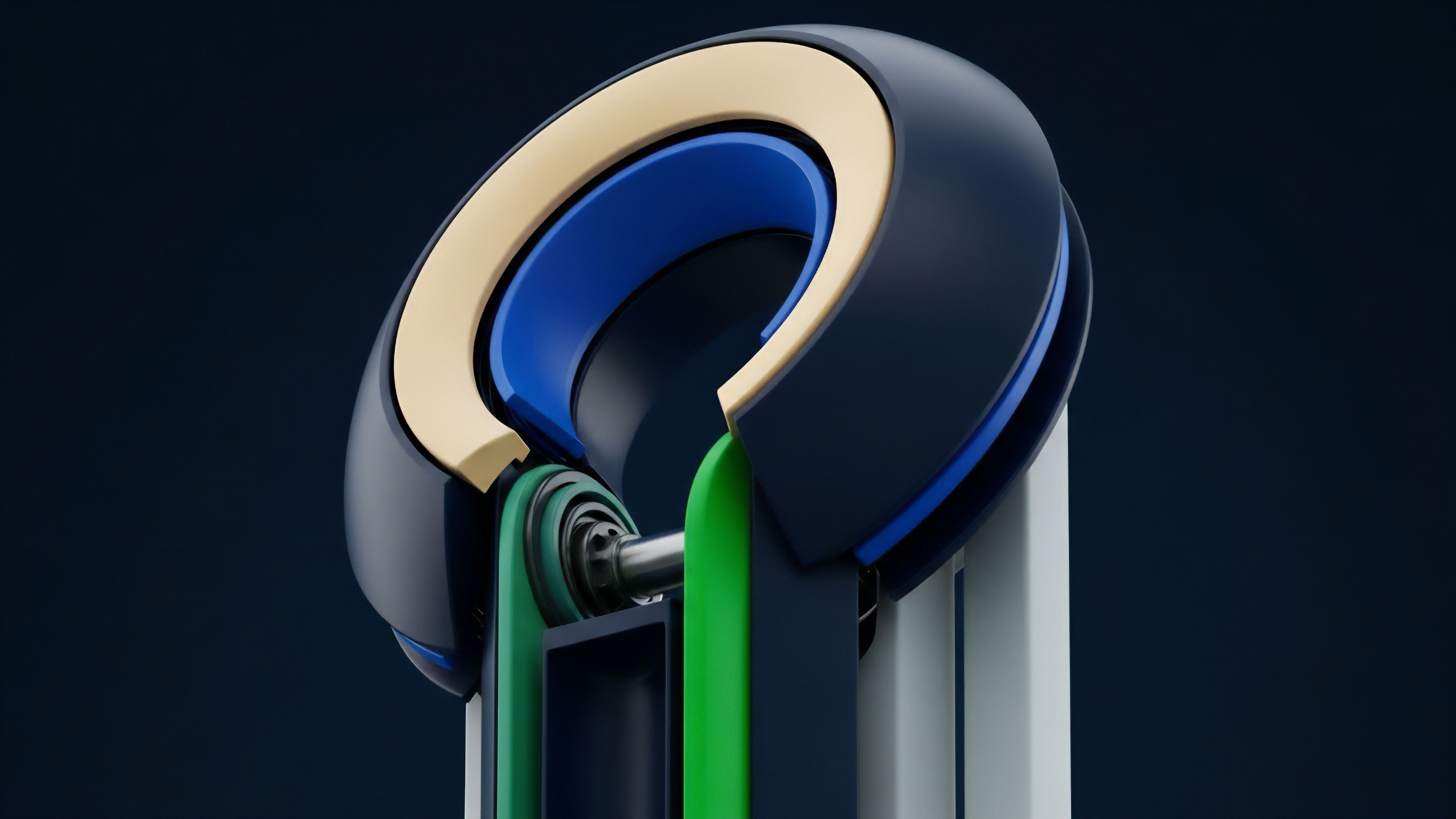

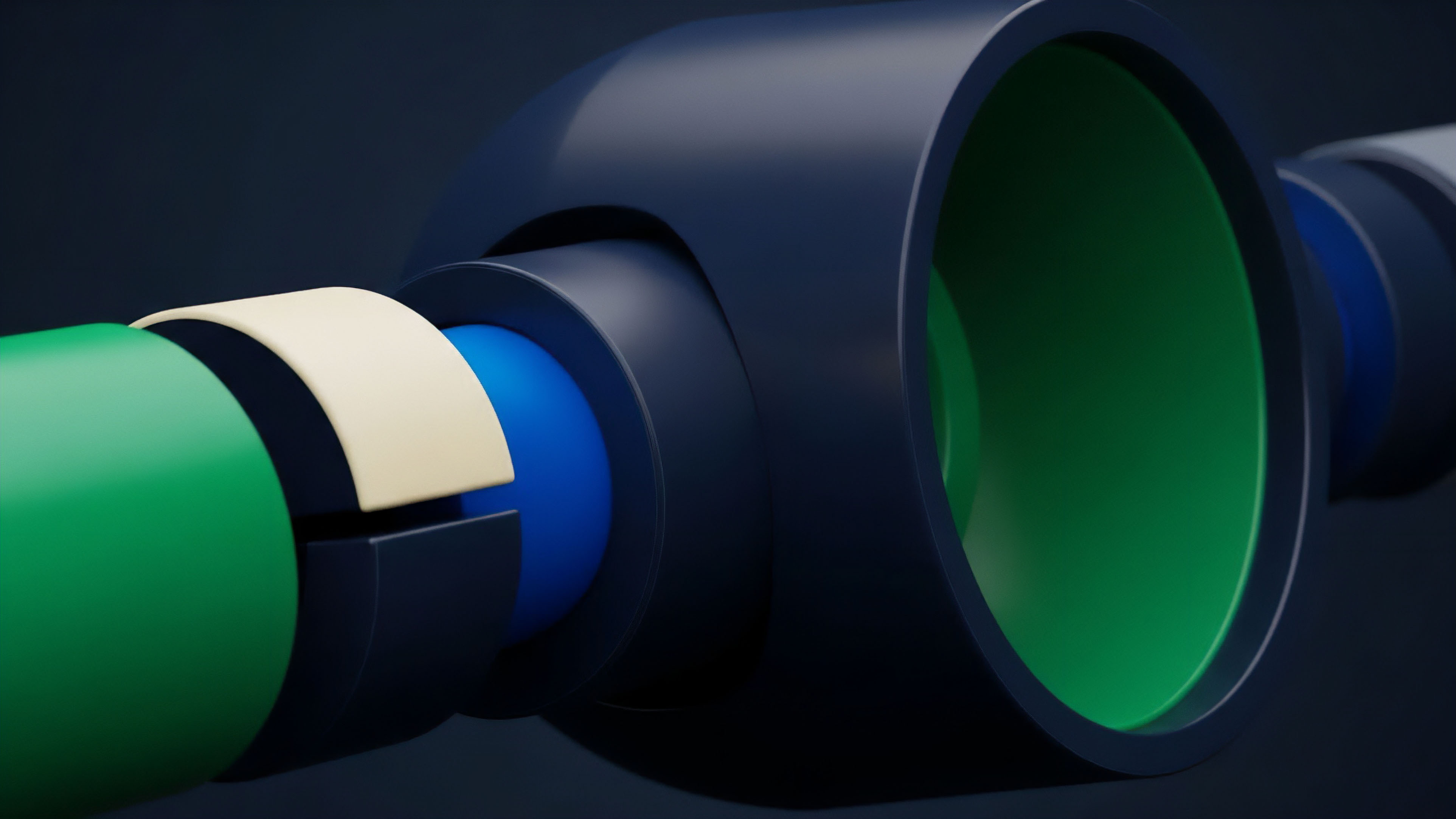

The future of the Verification-Based Model points toward a unified, cross-chain verification layer.

In this future, liquidity is not confined to a single network. Instead, a trader can open an option position on one chain using collateral verified on another, with the entire transaction secured by a single, aggregate proof. This “omnichain verification” will solve the current problem of liquidity fragmentation, allowing for deeper order books and tighter spreads.

Advanced Cryptographic Primitives

New technologies like Fully Homomorphic Encryption (FHE) will likely be integrated into the Verification-Based Model. This would allow the protocol to perform complex risk calculations on encrypted data, providing total privacy for institutional traders while maintaining the absolute certainty of the margin engine. The protocol will be able to verify that a portfolio is delta-neutral without the protocol itself knowing what the underlying assets are.

Systemic Implications

The widespread adoption of the Verification-Based Model will likely force a re-evaluation of regulatory frameworks. When the “clearinghouse” is a verifiable mathematical proof, the traditional definitions of financial intermediaries become obsolete. Regulators will shift their focus from auditing institutions to auditing the open-source code and the cryptographic proofs they generate. This leads to a more resilient global financial system where contagion is limited by the deterministic nature of the code, preventing the cascading failures that define trust-based financial history.
