Essence

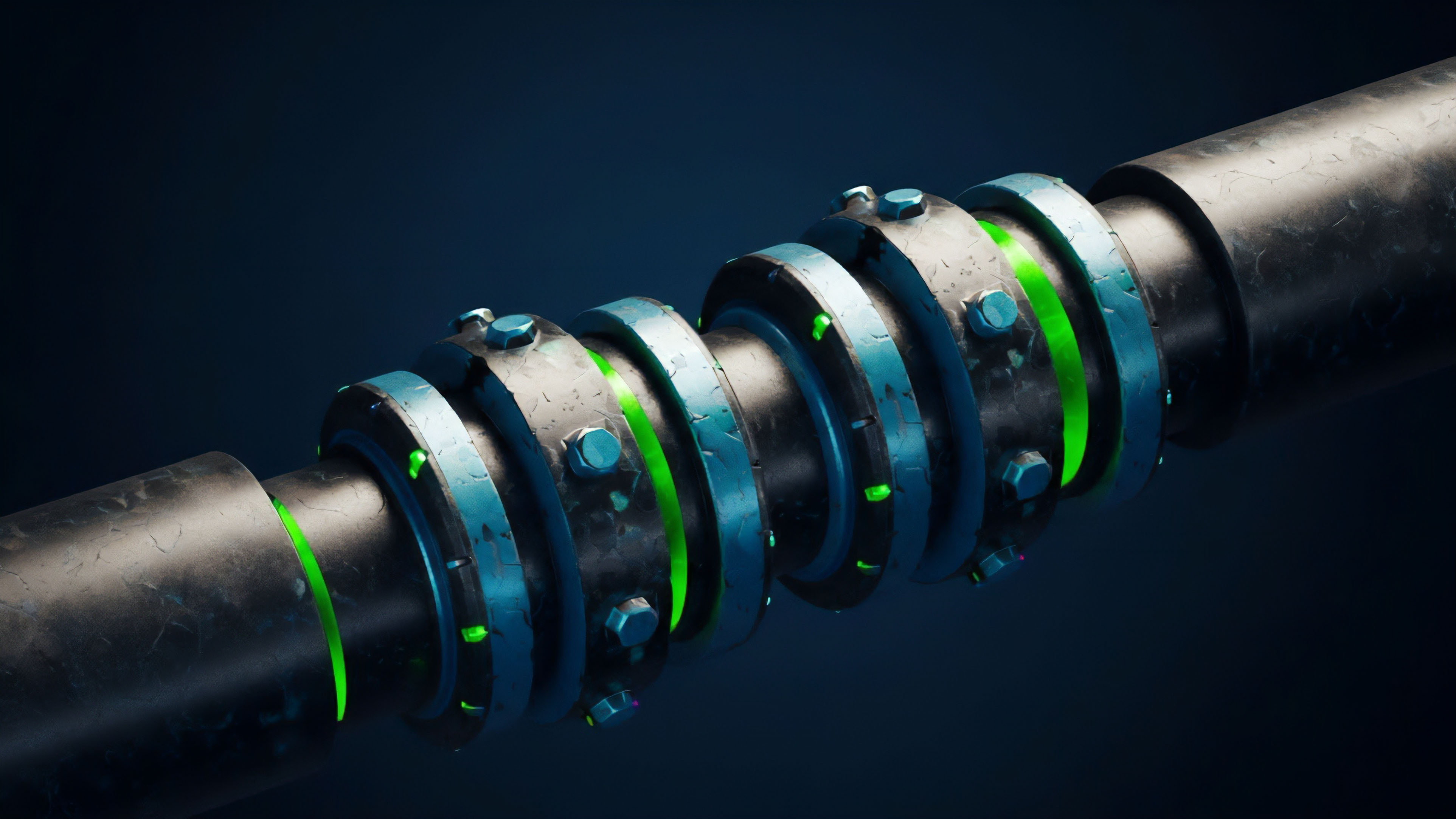

Push data feeds are a fundamental architectural choice for decentralized derivatives protocols. They define how market information (specifically asset prices, implied volatility, and funding rates) is delivered to the smart contract layer for calculation and settlement. Unlike traditional financial systems, where participants actively poll (pull) data from an exchange API, a push model requires an external actor, often called a keeper or relayer, to send data to the blockchain at predetermined intervals or when specific conditions are met.

This distinction is vital for understanding on-chain derivatives. The deterministic nature of smart contracts means they cannot inherently fetch external data; they must receive it. This design choice dictates the latency, cost, and ultimately, the security and efficiency of a derivatives platform.

The core function of a push feed in a crypto options protocol is to provide the settlement price at expiration and to enable real-time risk management. When an option contract expires, the smart contract needs an accurate, verified price to determine the payout. This price must be pushed on-chain by a trusted or decentralized oracle network.

Furthermore, for protocols supporting margined options or perpetuals, continuous risk assessment requires frequent data updates to calculate collateralization ratios and trigger liquidations. A push feed is designed so that the necessary data is already on-chain when these critical, high-stakes operations execute, rather than having to be requested at that moment.
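
The two uses described above, settlement at expiry and ongoing collateral checks, can be sketched in a few lines. This is a minimal off-chain illustration in Python, not contract code. The names `PushedPrice`, `call_payout`, `put_payout`, `collateral_ratio`, and `is_liquidatable` are hypothetical, and the collateral ratio is assumed here to be collateral divided by notional at the pushed price; real protocols define margin in their own ways.

```python
from dataclasses import dataclass


@dataclass
class PushedPrice:
    """A price as stored on-chain after a keeper pushes it (hypothetical shape)."""
    value: float     # price of the underlying in the quote currency
    timestamp: int   # unix seconds at which the update was written


def call_payout(strike: float, settlement: PushedPrice) -> float:
    """Cash payout of one European call at expiry, per unit of underlying."""
    return max(settlement.value - strike, 0.0)


def put_payout(strike: float, settlement: PushedPrice) -> float:
    """Cash payout of one European put at expiry, per unit of underlying."""
    return max(strike - settlement.value, 0.0)


def collateral_ratio(collateral: float, size: float, price: PushedPrice) -> float:
    """Assumed definition: posted collateral over notional at the pushed price."""
    return collateral / (size * price.value)


def is_liquidatable(collateral: float, size: float,
                    price: PushedPrice, min_ratio: float) -> bool:
    """True when the position has fallen below the required ratio."""
    return collateral_ratio(collateral, size, price) < min_ratio


# Illustrative values only.
settlement = PushedPrice(value=2100.0, timestamp=1_700_000_000)
payout = call_payout(strike=2000.0, settlement=settlement)
needs_liquidation = is_liquidatable(
    collateral=300.0, size=1.0, price=settlement, min_ratio=0.2
)
```

Both functions read only the last pushed value, which is why the freshness of that value matters so much for everything that follows.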

Push data feeds ensure that smart contracts receive market data deterministically, enabling automated liquidations and settlement for decentralized derivatives.

Origin

The origin of push feeds in DeFi is directly linked to the “oracle problem” and the limitations of early decentralized protocols. In the early days of DeFi, protocols like MakerDAO needed price feeds for collateral management. These early systems often relied on a “pull” model where users or keepers would request a price update when needed, paying the gas cost for the transaction.

This worked for slow-moving, long-term collateral management where price updates were infrequent. However, the introduction of options and perpetual futures required a significant increase in data frequency and reliability. Options protocols demand a level of precision and timeliness far beyond simple collateral management.

The value of an option changes rapidly, and liquidations for margined positions must occur almost instantaneously to prevent protocol insolvency. The pull model proved inefficient for this high-frequency requirement; it introduced too much latency and relied on individual users to bear the cost of data updates, creating a potential failure point during periods of high network congestion or volatility. The push model evolved as a necessary solution to this constraint.

The push model concentrates data-delivery responsibility in a specific network or keeper set, ensuring data availability for critical events like liquidations and settlement, even during peak network activity. This shift from reactive data retrieval to proactive data delivery was a direct response to the specific needs of derivatives trading.

Theory

The theoretical underpinnings of push feeds in derivatives relate directly to market microstructure and quantitative finance.

From a quantitative perspective, the discrete nature of push feeds introduces a fundamental deviation from continuous-time models like Black-Scholes. The Black-Scholes model assumes continuously observable prices and frictionless trading, and neither assumption holds in a push feed environment. The feed’s update frequency creates discrete time intervals during which the on-chain price may not reflect the off-chain market price.

This discrepancy creates a “latency arbitrage” opportunity for market participants who can observe the off-chain price and front-run the upcoming on-chain update. The impact on option pricing and Greeks calculation is profound. The calculation of Delta, Gamma, and Theta relies on a current and accurate underlying asset price.

If the push feed updates every 10 minutes, the protocol’s risk engine operates on stale data for most of that interval. This can lead to inaccurate margin calls and potential protocol insolvency if a rapid price movement occurs between updates. The protocol’s design must therefore account for this data lag, which surfaces as three related risks:

- Latency Arbitrage: The time delay between the off-chain market price and the on-chain pushed price creates a window where sophisticated traders can profit by executing trades on the on-chain derivative before the price update corrects the discrepancy.

- Liquidation Risk: If a push feed’s update frequency is too low, a margined position can fall below its collateralization threshold in the real market without triggering an on-chain liquidation. This can lead to bad debt for the protocol.

- Greeks Calculation: The accuracy of an option’s risk sensitivities (Greeks) depends heavily on the timeliness of the underlying price feed. A delayed feed results in inaccurate Delta calculations, potentially leading to under-hedged positions for market makers.
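
To make the Greeks point concrete, the sketch below compares a Black-Scholes call Delta computed from a stale on-chain price with one computed from the current off-chain price, and adds a simple staleness guard. The function names (`bs_call_delta`, `is_stale`) and all numeric inputs are illustrative assumptions, and the zero interest rate is chosen only for simplicity.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_delta(spot: float, strike: float, vol: float,
                  t_years: float, rate: float = 0.0) -> float:
    """Black-Scholes Delta of a European call (no dividends)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (
        vol * math.sqrt(t_years)
    )
    return norm_cdf(d1)


def is_stale(feed_timestamp: int, now: int, max_age_s: int) -> bool:
    """True when the last pushed value is older than the allowed age."""
    return now - feed_timestamp > max_age_s


# Hypothetical scenario: the market has moved 3% since the last push.
onchain_price = 2000.0    # last pushed value
offchain_price = 2060.0   # current market value
strike, vol, t_years = 2000.0, 0.8, 7 / 365

stale_delta = bs_call_delta(onchain_price, strike, vol, t_years)
live_delta = bs_call_delta(offchain_price, strike, vol, t_years)

# A market maker hedging off the on-chain price is short this much Delta.
hedge_gap = live_delta - stale_delta
```

A risk engine could refuse to open or resize positions while `is_stale` returns true, which trades availability for protection against the latency arbitrage described above.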

A key theoretical challenge is balancing data integrity with the economic cost of data delivery. A higher update frequency reduces latency risk but increases gas costs for the protocol. A lower update frequency saves costs but increases the risk of bad debt.

The optimal design requires a deep understanding of the specific volatility profile of the underlying asset and the protocol’s risk tolerance.
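
The frequency-versus-cost trade-off can be put in rough numbers. The sketch below assumes prices follow a random walk, so the typical move over an interval scales with the square root of time, and it assumes a flat cost per update. Both are simplifications, and every name and figure here is hypothetical.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600
SECONDS_PER_DAY = 86_400


def one_sigma_move(annual_vol: float, interval_s: float) -> float:
    """Typical fractional price move between two updates (sqrt-of-time scaling)."""
    return annual_vol * math.sqrt(interval_s / SECONDS_PER_YEAR)


def daily_update_cost(interval_s: float, cost_per_update: float) -> float:
    """Cost per day of pushing one update every `interval_s` seconds."""
    return (SECONDS_PER_DAY / interval_s) * cost_per_update


def longest_safe_interval(annual_vol, buffer, sigmas, candidates):
    """Longest candidate interval whose `sigmas`-sigma move fits inside `buffer`.

    `buffer` is the fractional price move the protocol's margin can absorb.
    Returns None when no candidate is safe.
    """
    safe = [s for s in candidates
            if sigmas * one_sigma_move(annual_vol, s) <= buffer]
    return max(safe) if safe else None


# Illustrative inputs: 80% annualised volatility, 2% margin buffer, 3-sigma test.
interval = longest_safe_interval(0.8, 0.02, 3, [60, 600, 3600])
cost = daily_update_cost(interval, cost_per_update=2.0) if interval else None
```

Under these assumptions a more volatile asset or a thinner margin buffer pushes the safe interval down and the daily cost up, which is the tension the paragraph above describes.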

Approach

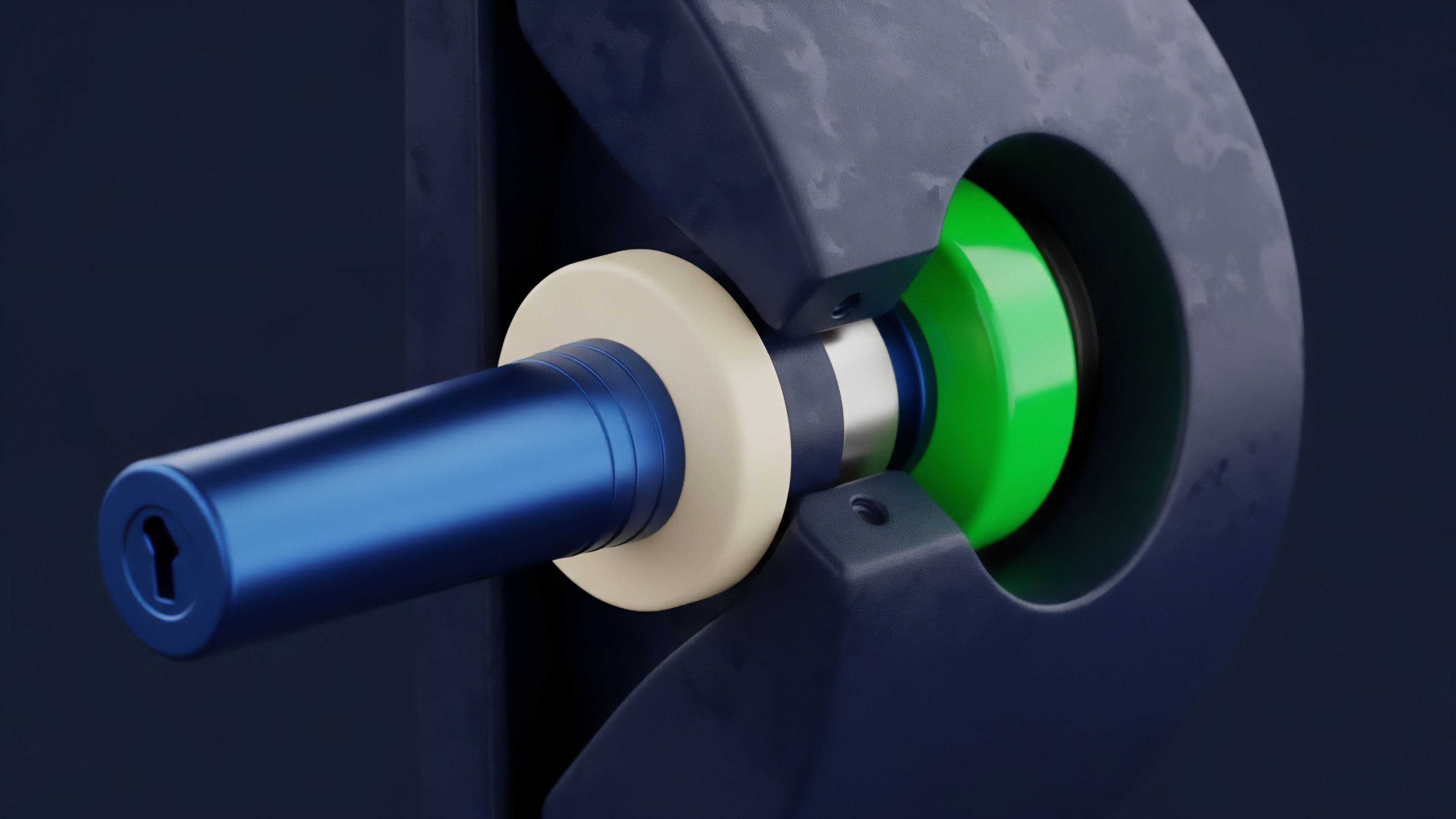

Current implementations of push data feeds in crypto options protocols generally fall into two categories: centralized and decentralized. Centralized approaches rely on a single, trusted entity to push data, offering low latency but high counterparty risk.

Decentralized approaches, which are more common in robust protocols, rely on a network of independent keepers. A common approach for decentralized push feeds involves a “keeper network” where multiple actors are incentivized to perform the data update function. These keepers monitor off-chain market data and compete to submit a price update to the smart contract when a specific condition (e.g. price deviation, time interval) is met.

The protocol validates each submission either through a consensus mechanism or by comparing it with data submitted by other keepers.
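
A keeper's decision rule and the peer comparison can be sketched as follows. The source names two trigger conditions, price deviation and elapsed time. The function names, the thresholds, and the choice of the peer median as the comparison point are assumptions made for illustration.

```python
from statistics import median


def should_push(last_price: float, last_ts: int,
                market_price: float, now: int,
                deviation_threshold: float, heartbeat_s: int) -> bool:
    """Push when the price has moved enough or the last update is too old."""
    deviation = abs(market_price - last_price) / last_price
    too_old = now - last_ts >= heartbeat_s
    return deviation >= deviation_threshold or too_old


def agrees_with_peers(submitted: float, peer_prices: list[float],
                      tolerance: float) -> bool:
    """Accept a submission only if it is close to the median of peer reports."""
    reference = median(peer_prices)
    return abs(submitted - reference) / reference <= tolerance


# Illustrative values: 0.5% deviation threshold, one-hour heartbeat.
push_now = should_push(
    last_price=2000.0, last_ts=0,
    market_price=2011.0, now=10,
    deviation_threshold=0.005, heartbeat_s=3600,
)
accepted = agrees_with_peers(2001.0, [2000.0, 2002.0, 1999.0], tolerance=0.01)
```

Combining the two triggers keeps costs low in quiet markets while still bounding how old the on-chain value can become.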

| Feature | Decentralized Push Feed | Centralized Push Feed |
|---|---|---|
| Latency | Higher, dependent on network congestion and keeper incentives. | Lower, controlled by a single entity. |
| Security Model | Economic security via staking and consensus. | Reputational security via a trusted entity. |
| Cost Model | Variable gas costs paid by protocol or keepers. | Fixed cost or API fee paid by protocol. |
| Risk Profile | Sybil attack and data manipulation risk. | Single point of failure and censorship risk. |

Another approach involves “data streaming” solutions where data is pushed to a Layer 2 or sidechain and then relayed to the main protocol via a verifiable bridge. This reduces gas costs significantly and allows for much higher update frequencies, making it more suitable for high-frequency trading applications. The core design challenge remains creating an incentive structure that ensures keepers act honestly and reliably without making the data delivery prohibitively expensive.

The implementation of a push feed requires a careful balancing act between the frequency of updates and the cost of on-chain transactions, a trade-off that defines the protocol’s risk profile and capital efficiency.

Evolution

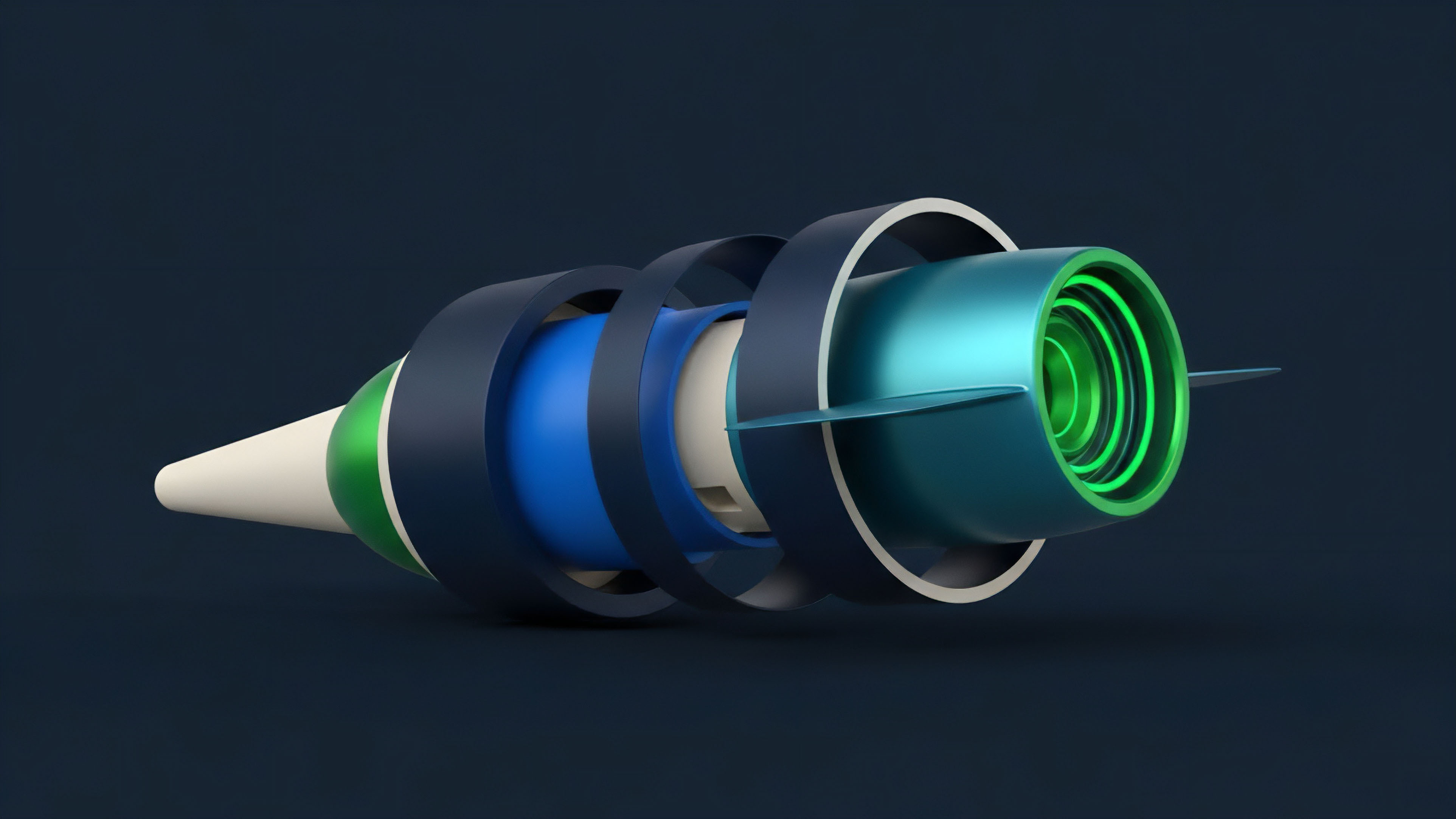

The evolution of push feeds in crypto derivatives has moved from simple, single-source oracles to complex, multi-layered data delivery systems. Early protocols often relied on a single data source, which was highly vulnerable to manipulation. If a bad actor could compromise that source, they could execute a profitable trade on the options protocol at the expense of other users.

The first major step in evolution was the shift to aggregated data feeds. Protocols began using decentralized oracle networks (DONs) that aggregate data from multiple off-chain sources. This approach increases the cost of manipulation, as an attacker must compromise a majority of the data sources simultaneously.
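
The majority requirement is easy to see when the aggregate is a median. The text does not say which aggregation function a given network uses, so the median here is an assumption, and the reported prices are made up for illustration.

```python
from statistics import median


def aggregate(reports: list[float]) -> float:
    """Aggregate independent source reports into one price (median assumed)."""
    return median(reports)


honest = [2000.0, 2001.0, 1999.5, 2000.5, 2002.0]

# Two of five sources compromised: the median stays among the honest values.
minority_attack = [2000.0, 2001.0, 1999.5, 1.0, 1.0]

# Three of five sources compromised: the attacker now controls the median.
majority_attack = [2000.0, 2001.0, 1.0, 1.0, 1.0]

honest_price = aggregate(honest)             # 2000.5
minority_price = aggregate(minority_attack)  # 1999.5
majority_price = aggregate(majority_attack)  # 1.0
```

A simple mean would not have this property, since a single extreme report would shift it.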

The next phase of evolution focused on reducing latency and improving data quality for high-frequency applications.

- Single-Source Feeds: The initial approach, highly vulnerable to manipulation and single points of failure.

- Aggregated Feeds: The current standard, using decentralized oracle networks to combine data from multiple sources to improve reliability.

- Layer 2 Data Streams: The emerging standard, where data is streamed to a Layer 2 solution for high-frequency updates before being settled on Layer 1, significantly reducing latency and cost.

- Proactive Oracles: The next generation of oracles that go beyond price reporting to include implied volatility surfaces and risk metrics, allowing for more sophisticated on-chain derivatives.
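
The richer payload described in the last item might look something like the structure below. This is a purely hypothetical shape for a volatility surface update, not the format of any existing oracle. The class name, field names, and nearest-grid-point lookup are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VolSurfaceUpdate:
    """One pushed snapshot of an implied volatility surface (hypothetical)."""
    timestamp: int                               # unix seconds of the snapshot
    spot: float                                  # underlying price at that time
    expiries: tuple[int, ...]                    # unix seconds, ascending
    strikes: tuple[float, ...]                   # ascending
    implied_vols: tuple[tuple[float, ...], ...]  # [expiry index][strike index]

    def __post_init__(self) -> None:
        # Reject payloads whose grid does not match the axes.
        if len(self.implied_vols) != len(self.expiries):
            raise ValueError("one row of vols is required per expiry")
        if any(len(row) != len(self.strikes) for row in self.implied_vols):
            raise ValueError("each row needs one vol per strike")

    def vol_at(self, expiry: int, strike: float) -> float:
        """Implied vol at the nearest grid point (no interpolation)."""
        i = min(range(len(self.expiries)),
                key=lambda k: abs(self.expiries[k] - expiry))
        j = min(range(len(self.strikes)),
                key=lambda k: abs(self.strikes[k] - strike))
        return self.implied_vols[i][j]


# Illustrative two-expiry, three-strike surface.
surface = VolSurfaceUpdate(
    timestamp=0,
    spot=2000.0,
    expiries=(100, 200),
    strikes=(1800.0, 2000.0, 2200.0),
    implied_vols=((0.90, 0.80, 0.85), (0.85, 0.75, 0.80)),
)
vol = surface.vol_at(expiry=190, strike=2050.0)
```

A payload like this is far larger than a single price, which is one reason such feeds are usually discussed alongside cheaper Layer 2 delivery.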

A significant challenge in this evolution has been data fragmentation. Different protocols use different push feed providers and update frequencies, leading to market fragmentation and potential arbitrage opportunities between platforms. The future direction points toward standardized data streams and shared data infrastructure that can serve multiple protocols simultaneously, reducing cost and improving market coherence.

Horizon

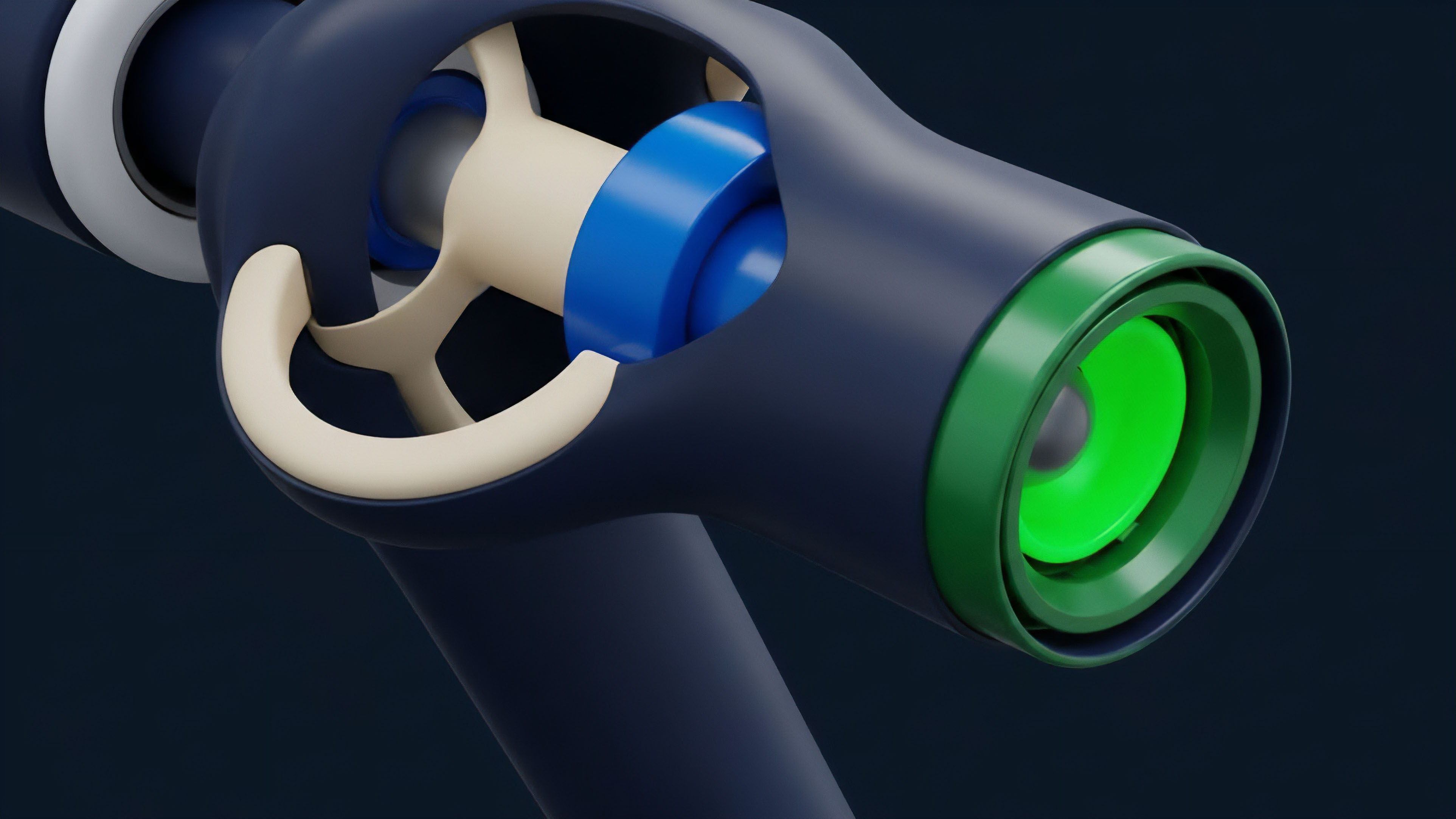

Looking ahead, the future of push data feeds in crypto derivatives is driven by the demand for institutional-grade performance and high-frequency trading. The current latency of Layer 1 push feeds, often measured in minutes, is inadequate for sophisticated options strategies. The horizon involves a shift to high-frequency data delivery via Layer 2 solutions.

These solutions can handle hundreds of updates per second, allowing protocols to offer derivatives that rival traditional finance in speed and precision. The next generation of push feeds will likely incorporate advanced data analytics directly into the oracle network. Instead of simply pushing a single price, future oracles will deliver complex data structures like volatility surfaces.

This will allow on-chain options protocols to offer more sophisticated products and pricing models. The integration of zero-knowledge proofs is also a key development. This technology allows data to be verified on-chain without revealing the source or the full data set, increasing privacy and security.

| Current Limitation | Future Solution | Impact on Derivatives |
|---|---|---|
| Layer 1 latency and high gas cost. | Layer 2 data streaming and verifiable computation. | Enables high-frequency options trading and dynamic risk management. |
| Simple price reporting. | Volatility surface delivery and predictive oracles. | Supports advanced options products and accurate pricing of exotic derivatives. |
| Reliance on trusted data sources. | Zero-knowledge proof verification. | Increases data privacy and security, reducing reliance on trusted third parties. |

The ultimate goal is to remove the “data lag” entirely, making on-chain derivatives as performant as off-chain ones. This requires a new architecture where data integrity can be proven without revealing underlying market maker strategies.

The future of push feeds involves a transition to high-frequency data streams on Layer 2 networks, enabling sophisticated risk management and complex derivatives products previously limited to traditional finance.
