Essence

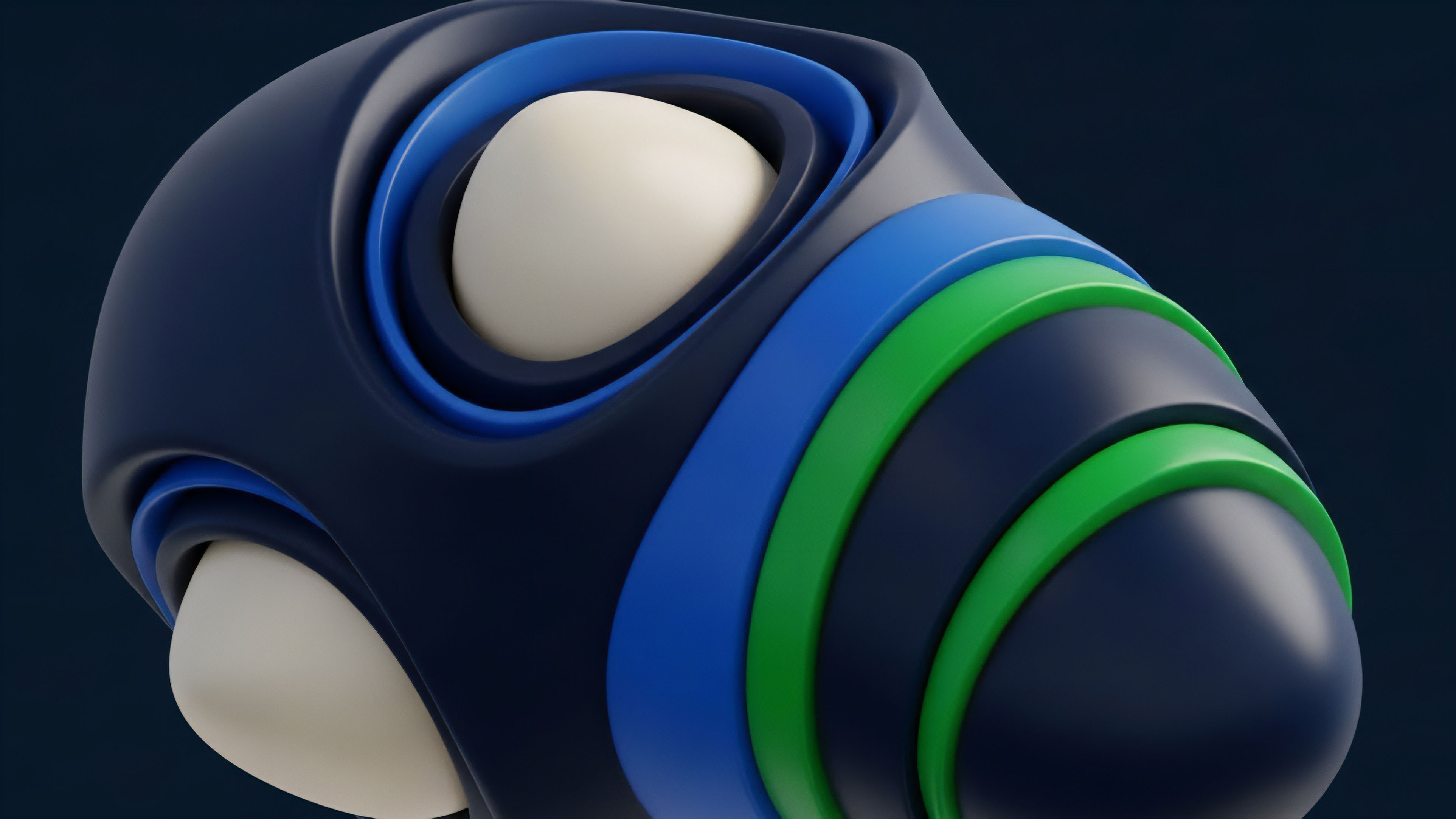

Data providers are the essential infrastructure for options markets, serving as the conduits that translate market reality into a format usable by financial models and, crucially, smart contracts. The complexity of options pricing, which relies on multiple variables beyond the simple spot price of an underlying asset, makes these data feeds significantly more sophisticated than those required for spot trading. The primary data product delivered is the implied volatility surface, a three-dimensional plot that represents the market’s expectation of future price movement across different strike prices and expiration dates.

This surface is not static; it constantly shifts in response to market sentiment and order flow.

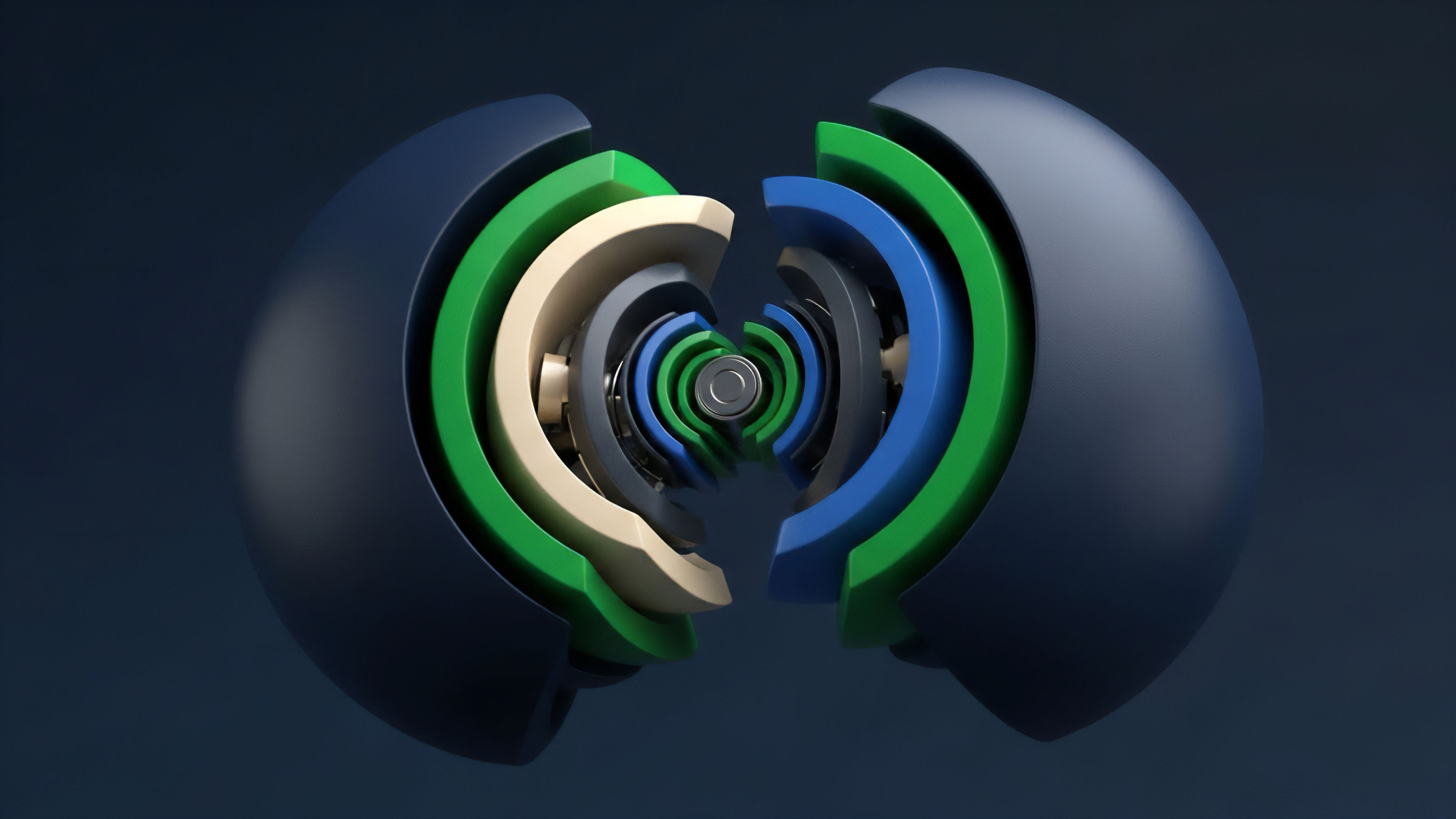

The core function of a data provider in this context is to synthesize raw order book data from multiple venues into a single, reliable, and consistent volatility surface. This synthesis is vital because options trading in crypto markets is highly fragmented, with liquidity spread across centralized exchanges (CEXs) and decentralized protocols (DEXs). A reliable data provider must aggregate this fragmented data, filter out noise and potential manipulation, and present a coherent view of market risk.

The accuracy of this synthesized data directly determines the precision of risk calculations, such as the Greeks, and the stability of automated market makers (AMMs) and liquidation engines.

Data providers are the critical infrastructure for options markets, translating fragmented market data into coherent volatility surfaces and risk metrics required for pricing and risk management.

Origin

The evolution of data provision for crypto derivatives began with a simple necessity: replicating the functionalities of traditional finance (TradFi) options markets in a new asset class. In TradFi, data providers like Bloomberg and Refinitiv (LSEG) have long provided consolidated feeds from exchanges like the CME and Cboe, offering high-fidelity data with strict standards for data integrity and low latency. Early crypto derivatives markets, particularly on centralized platforms like Deribit, largely operated in a similar fashion, with proprietary data feeds accessible via APIs for market makers and quantitative funds.

The shift to decentralized finance (DeFi) created a fundamental architectural challenge. While CEXs could manage data internally, DeFi protocols required external data to be delivered on-chain to smart contracts in a trust-minimized manner. This led to the emergence of specialized decentralized oracle networks.

Early oracle designs focused primarily on simple spot prices, but the demands of options protocols quickly outgrew these capabilities. The requirement for a comprehensive volatility surface, not just a single price point, forced a re-evaluation of oracle architecture. This new generation of data providers had to solve the problem of delivering complex, multi-dimensional data to the blockchain securely and efficiently, often in a single transaction.

Theory

The theoretical underpinnings of options data provision revolve around the Black-Scholes-Merton model and its practical application through the volatility surface. The model requires an input for volatility, which in practice is derived from observed market prices rather than being directly measurable. The core challenge for a data provider is to calculate this implied volatility (IV) accurately and consistently across different strike prices and expiration dates.

This process involves numerically inverting the Black-Scholes formula: a root-finding routine adjusts the volatility input until the model price matches the real-time option price observed on each exchange.
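The inversion can be illustrated with a minimal sketch. It assumes a European call with no dividends and a constant rate, and it uses plain bisection for clarity; a production feed would more likely use a faster solver. The function names (`bs_call_price`, `implied_vol`) and the search bounds are illustrative assumptions, not any provider's actual API.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call (no dividends, t in years)."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price: float, spot: float, strike: float, t: float, rate: float,
                lo: float = 1e-4, hi: float = 5.0,
                tol: float = 1e-8, max_iter: int = 200) -> float:
    """Find the vol in [lo, hi] whose model price matches `price`, by bisection.

    The call price is increasing in vol, so the bracket can be halved safely.
    The bounds lo and hi are assumptions chosen for illustration.
    """
    p_lo = bs_call_price(spot, strike, t, rate, lo)
    p_hi = bs_call_price(spot, strike, t, rate, hi)
    if not (p_lo <= price <= p_hi):
        raise ValueError("price is not attainable for any vol in [lo, hi]")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, t, rate, mid) < price:
            lo = mid  # model price too low: true vol is higher
        else:
            hi = mid  # model price too high (or equal): true vol is lower
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)
```

Repeating this inversion for every quoted strike and expiry yields the raw grid of points from which a surface is fitted.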

The resulting volatility surface exhibits a characteristic “smile,” where IV rises for strikes further from the money, or a “smirk,” where one wing is markedly higher than the other. A data provider’s ability to accurately capture this skew is vital for proper risk management. A flawed surface can lead to significant mispricing, creating arbitrage opportunities or, worse, causing systemic risk within a protocol.

The theoretical integrity of the data provider’s feed relies on its ability to create an arbitrage-free surface. If the prices implied by the surface allow a butterfly spread (across strikes), a calendar spread (across expirations), or a similar static strategy to lock in a risk-free profit, the underlying data model is compromised.
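One common static check, sketched below under simplifying assumptions, is that call prices at a single expiration must be convex in strike. If the middle strike of three is priced above the straight line joining its neighbours, a butterfly can be bought for a negative cost. The function name and tolerance are hypothetical, and the check covers only butterfly arbitrage, not calendar arbitrage.

```python
def butterfly_violations(strikes, call_prices, tol=1e-9):
    """Return the middle strikes at which call prices fail convexity.

    Assumes all strikes are distinct and all prices refer to the same
    expiration. Works for unevenly spaced strikes.
    """
    points = sorted(zip(strikes, call_prices))
    violations = []
    for (k1, c1), (k2, c2), (k3, c3) in zip(points, points[1:], points[2:]):
        # Weight that places k2 on the line between k1 and k3.
        w = (k3 - k2) / (k3 - k1)
        interpolated = w * c1 + (1.0 - w) * c3
        if c2 > interpolated + tol:
            violations.append(k2)
    return violations
```

For example, prices of 15, 8, and 4 at strikes 90, 100, and 110 pass the check, while 15, 11, and 4 flag strike 100.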

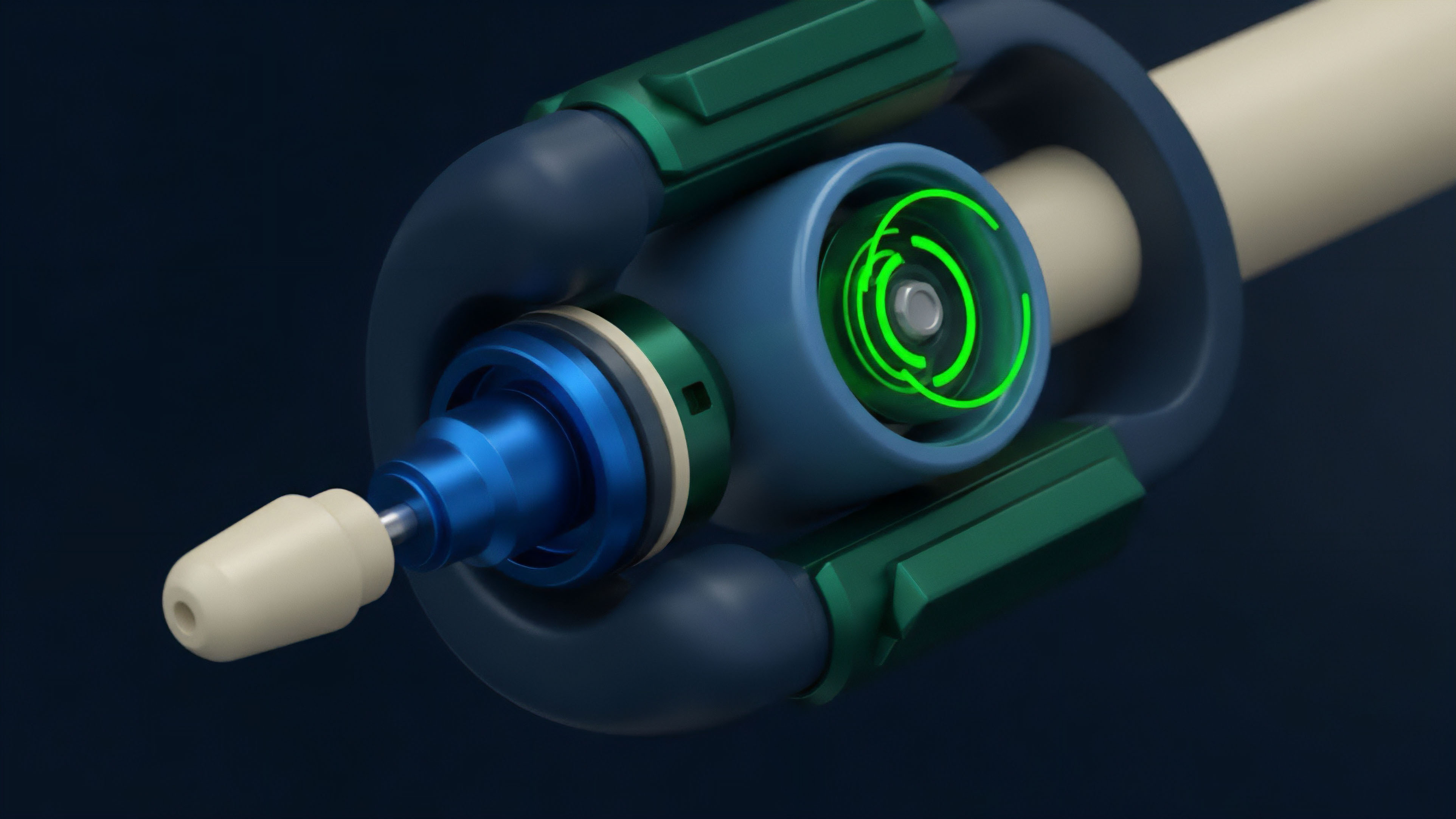

A secondary theoretical consideration is the calculation of the Greeks, the sensitivity metrics (Delta, Gamma, Vega, Theta, Rho) that measure an option’s risk exposure to changes in underlying price, volatility, time, and interest rates. The data provider often calculates these sensitivities in real time, feeding them directly to protocols for margin calculations and portfolio risk assessment. This requires a robust, low-latency computational engine to ensure that risk calculations are always based on the most current market state.
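For reference, the closed-form Black-Scholes sensitivities for a European call can be sketched as follows. This assumes no dividends and a constant rate, and the function names and dictionary layout are illustrative rather than taken from any particular feed. Vega and Rho are expressed per 1.00 change in volatility and rate, and Theta per year.

```python
import math


def _pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _d1_d2(spot, strike, t, rate, vol):
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    return d1, d1 - vol * sqrt_t


def call_price(spot, strike, t, rate, vol):
    """Black-Scholes price of a European call (t in years)."""
    d1, d2 = _d1_d2(spot, strike, t, rate, vol)
    return spot * _cdf(d1) - strike * math.exp(-rate * t) * _cdf(d2)


def call_greeks(spot, strike, t, rate, vol):
    """Closed-form Greeks of a European call under Black-Scholes."""
    d1, d2 = _d1_d2(spot, strike, t, rate, vol)
    sqrt_t = math.sqrt(t)
    disc = math.exp(-rate * t)
    return {
        "delta": _cdf(d1),                         # per unit move in spot
        "gamma": _pdf(d1) / (spot * vol * sqrt_t),  # change in delta per unit spot
        "vega": spot * _pdf(d1) * sqrt_t,           # per 1.00 change in vol
        "theta": (-spot * _pdf(d1) * vol / (2.0 * sqrt_t)
                  - rate * strike * disc * _cdf(d2)),  # per year of time decay
        "rho": strike * t * disc * _cdf(d2),        # per 1.00 change in rate
    }
```

A feed that publishes these values alongside the IV used to compute them lets a consuming protocol verify them independently, for instance against a finite-difference bump of `call_price`.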

Approach

The implementation approach for crypto options data provision bifurcates between centralized data aggregators and decentralized oracle networks. Each approach presents a distinct set of trade-offs regarding latency, security, and data integrity.

Centralized data aggregators, such as those used by proprietary trading firms, focus on maximizing speed and accuracy. They directly access CEX APIs (like Deribit’s or OKX’s) and process the data off-chain. The resulting volatility surfaces are often proprietary and highly optimized for specific trading strategies.

The data quality is high, but the system relies entirely on the trust and integrity of the centralized exchange and the data provider itself. This approach is unsuitable for permissionless DeFi protocols due to the single point of failure and lack of on-chain verifiability.

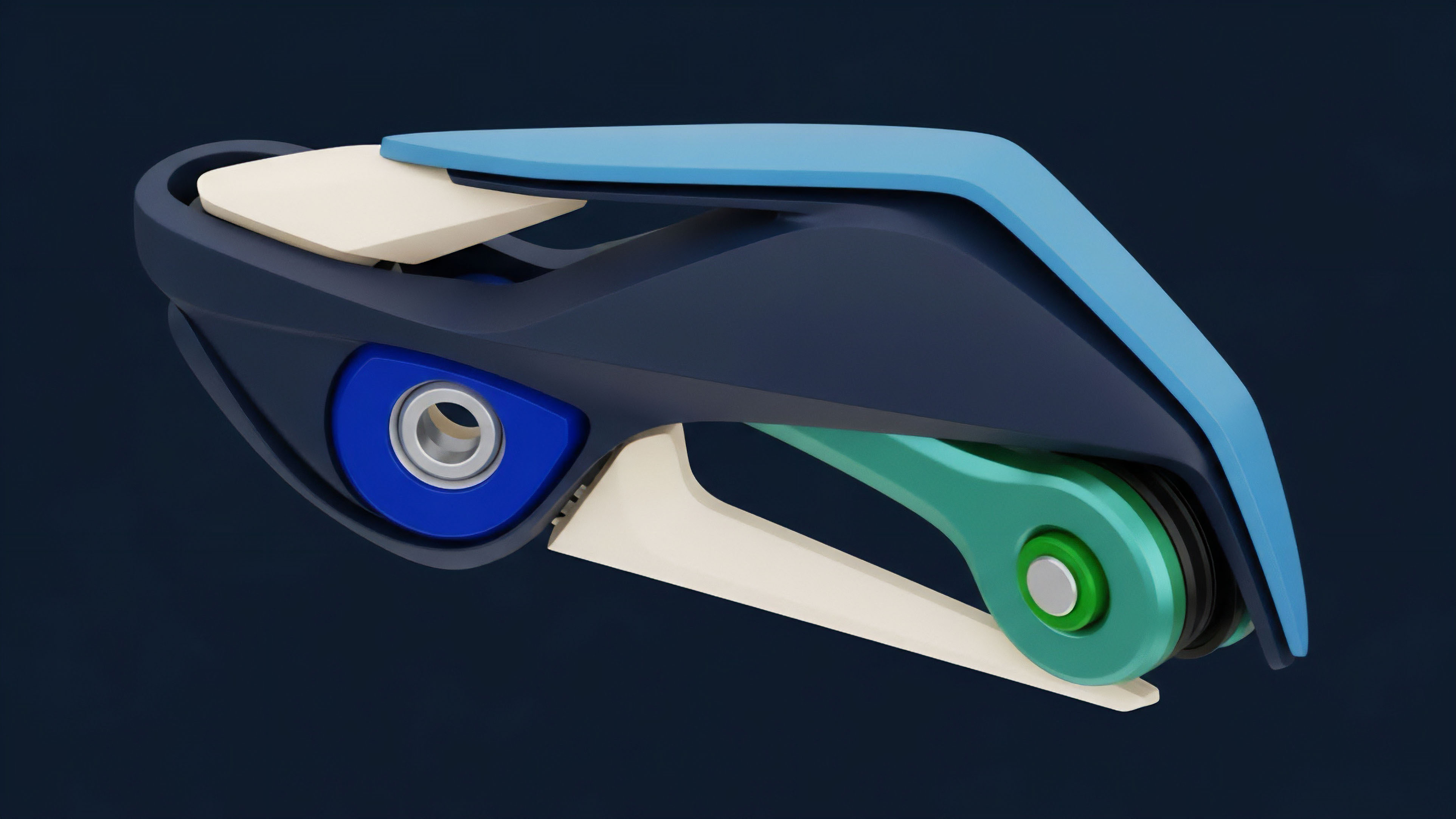

Decentralized oracle networks adopt a different approach. They rely on a network of independent data publishers to submit data points to a smart contract. The network then aggregates these submissions using a median calculation to create a single, tamper-resistant data point.

This process protects data integrity by making manipulation expensive: to move a median, an attacker must control roughly half of the publishers rather than a single source. The specific data aggregation methods vary significantly between providers. For example, some oracle networks use a “pull” model where protocols request data on demand, while others use a “push” model where updates are written on-chain automatically whenever the value moves past a pre-defined deviation threshold.
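The median aggregation and the deviation-threshold trigger can be sketched in a few lines. This is an off-chain illustration only: the function names, the minimum publisher count, and the 0.5% threshold are assumptions made for the example, not parameters of any specific oracle network.

```python
from statistics import median


def aggregate(submissions: dict[str, float], min_publishers: int = 3) -> float:
    """Combine publisher submissions into one value using the median.

    `submissions` maps a publisher id to its reported value (for example,
    an at-the-money IV). A single outlier cannot move the result far.
    """
    if len(submissions) < min_publishers:
        raise ValueError("not enough publishers to aggregate safely")
    return median(submissions.values())


def should_push(last_published: float, candidate: float,
                threshold: float = 0.005) -> bool:
    """Push-model trigger: update only if the relative move is large enough."""
    return abs(candidate - last_published) / last_published >= threshold
```

With submissions of 0.62, 0.60, and a manipulated 5.0, `aggregate` returns 0.62, and `should_push(0.60, 0.62)` returns `True` because the move of about 3.3% exceeds the assumed 0.5% threshold.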

The challenge with this approach is balancing decentralization with latency and cost. Aggregating data on-chain is computationally intensive and expensive, making high-frequency updates difficult.

The following table compares the architectural trade-offs of these two approaches:

| Feature | Centralized Data Aggregator (CEX API) | Decentralized Oracle Network (DeFi) |
|---|---|---|
| Latency | Low (milliseconds) | High (seconds to minutes, depending on blockchain finality) |
| Data Integrity | Trust-based (single point of failure) | Cryptographically verified (consensus-based) |
| Cost Model | Subscription fee | On-chain transaction fees |
| Data Scope | Proprietary, CEX-specific data | Aggregated, multi-venue data |

Evolution

The evolution of data providers in the crypto options space reflects a transition from simple price feeds to comprehensive risk management tools. Initially, data provision was focused on providing a single, reliable spot price. As options markets grew, the need for volatility data became paramount.

The next generation of data providers recognized that the volatility surface itself needed to be a dynamic, real-time product. This led to the development of specialized oracles that could handle complex data structures.

The current state of development involves a focus on mitigating oracle risk and liquidity fragmentation. Liquidity fragmentation occurs when the same options contract trades on multiple venues (e.g. Deribit, Cboe Digital, and a DeFi protocol like Lyra or Dopex).

This fragmentation makes it difficult to construct a single, accurate volatility surface, as the market’s true state is obscured. Data providers are evolving to address this by developing advanced aggregation algorithms that normalize data from different sources and adjust for differences in underlying asset prices and contract specifications.
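As a rough sketch of one possible normalization step, quotes from different venues can be placed on a common axis by converting each strike to log-moneyness against that venue's own forward price, then averaging IV within buckets using quoted size as the weight. The `Quote` fields, the bucket width, and the size weighting are all assumptions for illustration; real aggregation algorithms also handle contract specifications, stale quotes, and outlier filtering.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    venue: str
    strike: float
    forward: float  # the venue's own forward/index price for this expiration
    iv: float       # implied volatility reported or computed for the quote
    size: float     # quoted size, used here as the liquidity weight


def merge_by_moneyness(quotes, bucket_width=0.05):
    """Size-weighted average IV per log-moneyness bucket, across venues.

    Returns a dict mapping the bucket centre (log(strike / forward)) to IV.
    """
    sums = defaultdict(lambda: [0.0, 0.0])  # bucket -> [sum(iv * size), sum(size)]
    for q in quotes:
        log_moneyness = math.log(q.strike / q.forward)
        bucket = round(log_moneyness / bucket_width)
        sums[bucket][0] += q.iv * q.size
        sums[bucket][1] += q.size
    return {
        bucket * bucket_width: weighted_iv / total_size
        for bucket, (weighted_iv, total_size) in sorted(sums.items())
        if total_size > 0
    }
```

Using each venue's own forward absorbs small differences in underlying price between venues, so a strike that is at the money on one venue is compared with strikes that are at the money on the others.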

The primary challenge for data providers in a fragmented market is synthesizing a single, arbitrage-free volatility surface from disparate data sources.

A significant development is the move toward “risk-aware” data feeds. These feeds do not simply report prices; they provide data points specifically tailored for risk calculations, such as pre-calculated Greeks or volatility skew parameters. This reduces the computational burden on the consuming protocol, allowing for more efficient margin calculations and liquidation processes.

The evolution of data providers is moving them from passive information conduits to active participants in a protocol’s risk engine.

Horizon

Looking forward, the future of options data provision lies in achieving a new level of data standardization and on-chain computational efficiency. The current fragmentation of data, where different venues calculate implied volatility differently, hinders the development of a truly robust and interconnected options market. A future where data providers adhere to a common standard for volatility surface construction would unlock significant efficiencies for market makers and protocols.

The next major architectural shift will likely be the integration of data providers directly into on-chain risk engines. Instead of protocols merely consuming data, they will use data providers to execute complex risk calculations directly within the smart contract environment. This would allow for dynamic margin requirements and real-time risk adjustments, significantly improving capital efficiency.

This development requires data providers to move beyond simple data delivery and toward providing verifiable, on-chain computation services. This represents a paradigm shift where the data provider’s role expands to encompass the provision of a full risk framework, not just raw data.

This future also requires a solution to the latency problem inherent in decentralized oracle networks. As options trading moves toward high-frequency strategies, the delay between off-chain market events and on-chain data updates creates opportunities for front-running and manipulation. Future data solutions will need to utilize advanced techniques, such as zero-knowledge proofs, to prove the integrity of off-chain data calculations without sacrificing speed.

The goal is to create a system where the data feed is not only reliable but also near-instantaneous, enabling high-frequency trading strategies to operate safely on a decentralized foundation.
