Essence

Off-chain data streams represent the essential architectural bridge connecting the deterministic logic of a smart contract with the stochastic, external reality of financial markets. For crypto options and derivatives, this connection is not a luxury; it is a fundamental requirement for settlement and risk management. By design, a smart contract can only read data that already exists in its blockchain's state; it cannot fetch external data on its own.

This creates a data vacuum where a derivative contract, which depends on real-world variables like asset prices, interest rates, or volatility, simply cannot function without an external input mechanism. The core function of these data streams is to provide verifiable information to a contract at a specific point in time. In traditional finance, this data is sourced from centralized exchanges and processed by clearing houses.

In decentralized finance, this responsibility falls to specialized infrastructure known as oracles. The off-chain data stream provides the input for calculating a contract’s value at expiration, determining whether collateral should be liquidated, or adjusting the margin requirements based on current market conditions. The integrity of the entire derivative position rests on the integrity and timeliness of this data feed.

If the data feed is compromised, the smart contract’s logic will execute based on faulty information, leading to incorrect settlements and potential systemic failure of the protocol.

Off-chain data streams are the necessary link between the deterministic execution of smart contracts and the stochastic inputs required for derivative contracts to function accurately.

Origin

The genesis of off-chain data streams in DeFi stems directly from the “oracle problem” that emerged with the first generation of decentralized applications. Early protocols attempting to create financial products faced a severe limitation: they could only operate on data present within their own blockchain. This restricted functionality to basic swaps or lending based on hardcoded ratios.

A robust options market, however, requires real-time price feeds for a wide array of assets. The initial attempts at solving this involved simple on-chain price feeds where users or a single administrator would update a price variable. This approach proved highly vulnerable to manipulation, and keeping the price current was expensive in gas, especially during periods of high volatility when frequent updates were needed.

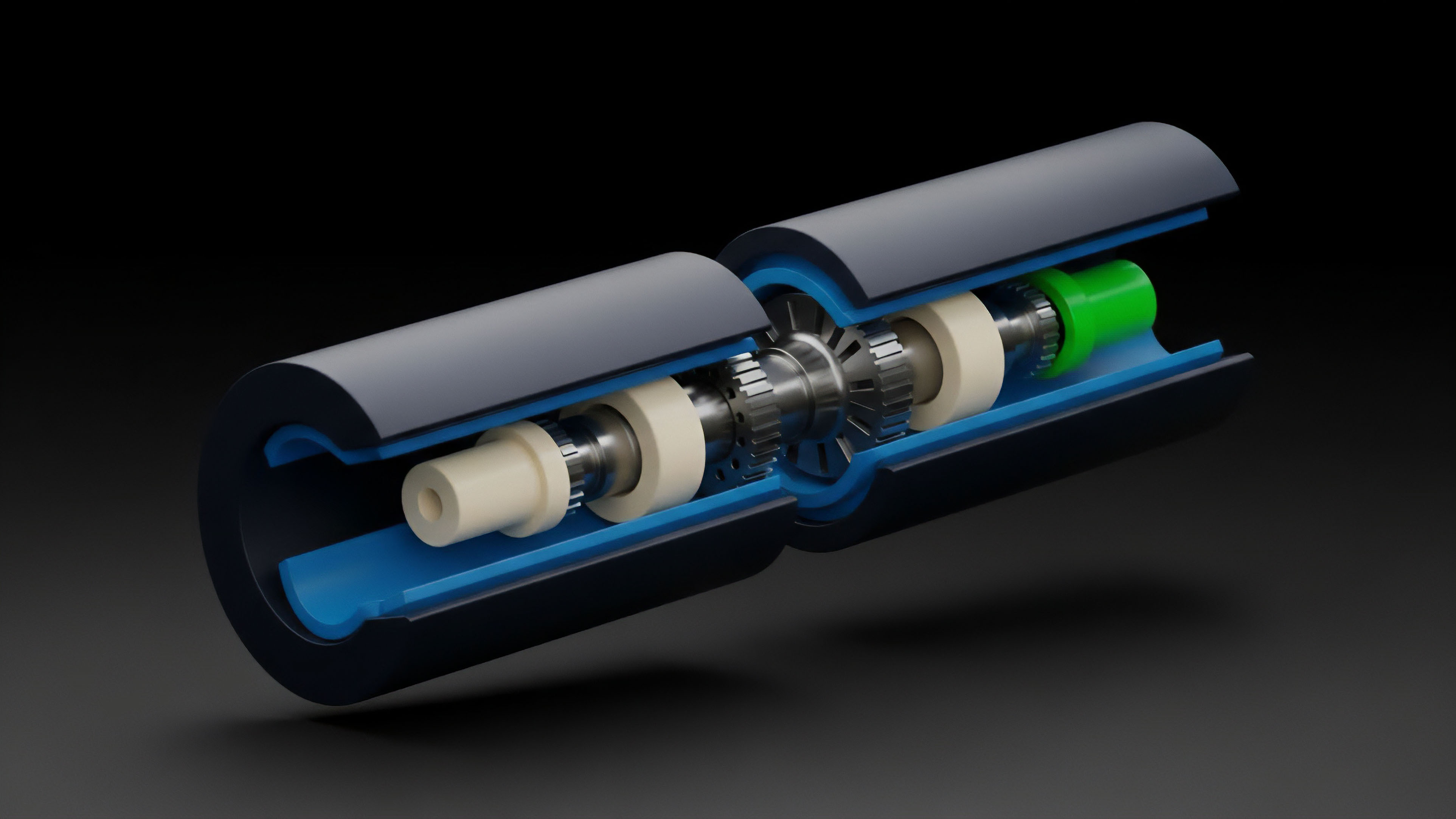

The breakthrough arrived with the development of decentralized oracle networks (DONs). These networks moved beyond a single source of truth, creating a system where multiple independent nodes, often incentivized by a native token, collectively aggregate data from various sources. This architectural shift from a single, centralized data input to a distributed consensus mechanism for data delivery was a necessary prerequisite for building complex options protocols.

The origin story of off-chain data streams is therefore one of necessity, where the limitations of early blockchain design forced the creation of a new, decentralized infrastructure layer specifically designed to provide reliable, tamper-resistant data to power advanced financial instruments.

Theory

The theoretical underpinnings of off-chain data streams are rooted in information economics and Byzantine fault tolerance. The core challenge is not simply acquiring data, but doing so in a way that minimizes the "last mile problem" (the trust gap between where data originates off-chain and where a contract consumes it on-chain) and ensures data integrity against malicious actors.

From a quantitative perspective, the reliability of the off-chain data stream directly impacts the calculation of risk parameters for options. An inaccurate or delayed price feed can lead to mispricing of volatility and incorrect margin calls. The design of an off-chain data stream involves a trade-off between speed, security, and cost.

The data aggregation model itself is critical. Most systems employ a form of medianization or time-weighted average price (TWAP) to smooth out short-term volatility spikes and to blunt flash loan attacks, whose borrowed capital must be repaid within a single transaction, so any price distortion it funds is short-lived. The theoretical risk of data manipulation is often modeled using game theory: for a feed to be secure, the cost to corrupt the data must exceed the potential profit from exploiting the derivative contract.
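The game-theoretic condition can be made concrete with a toy model. The sketch below assumes a median-aggregated feed that is corrupted only when an attacker controls a strict majority of the reporting nodes, and that each corrupted node forfeits its full stake. The function names, stake figures, and the `max_extractable_profit` bound are hypothetical illustrations, not parameters of any real oracle network.

```python
def cost_to_corrupt(num_nodes: int, stake_per_node: float) -> float:
    """Stake forfeited to control a strict majority of reporting nodes.

    Toy assumption: a median-aggregated feed is corrupted only when the
    attacker controls num_nodes // 2 + 1 nodes, each losing its full stake.
    """
    nodes_needed = num_nodes // 2 + 1
    return nodes_needed * stake_per_node


def is_economically_secure(num_nodes: int, stake_per_node: float,
                           max_extractable_profit: float) -> bool:
    """Secure in this model only if corruption costs more than it can earn."""
    return cost_to_corrupt(num_nodes, stake_per_node) > max_extractable_profit


# Hypothetical feed: 31 nodes with 50,000 staked each -> 16 * 50,000 = 800,000.
print(cost_to_corrupt(31, 50_000))                     # 800000
print(is_economically_secure(31, 50_000, 500_000))     # True
print(is_economically_secure(31, 50_000, 2_000_000))   # False
```

The same feed can be secure for a small protocol and insecure for a large one, because the profit side of the inequality grows with the value the feed protects. The model deliberately ignores factors such as correlated data sources, so it offers intuition rather than a security guarantee.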

Data Aggregation and Pricing Models

The primary mechanism for data integrity in off-chain streams is aggregation. Instead of relying on a single source, a decentralized oracle network queries multiple sources and calculates a single, canonical value. The choice of aggregation methodology significantly impacts the options pricing model.

- Medianization: This approach selects the middle value from a set of data points submitted by different nodes. It effectively filters out extreme outliers caused by single data source failures or malicious node submissions.

- Volume-Weighted Average Price (VWAP): A more sophisticated approach, VWAP weights each trade price by its traded volume, so prices at which more volume changed hands count for more. This tends to give a more representative picture of the market's consensus price and is often preferred for high-volume derivatives markets.

- Time-Weighted Average Price (TWAP): This method averages prices over a specific time interval, mitigating manipulation risk from flash loans. TWAP is particularly important for options settlement and liquidation logic, where a single, instantaneous price spike should neither set the settlement price nor trigger a premature liquidation.
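The three aggregation methods above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: node reports are plain floats, trades are `(price, volume)` tuples, and the TWAP treats the samples as a step function in which each price holds until the next sample. The function names are hypothetical, not any oracle network's API.

```python
from statistics import median
from typing import List, Tuple


def medianize(node_reports: List[float]) -> float:
    """Middle value of independent node reports; robust to a minority of outliers."""
    if not node_reports:
        raise ValueError("no node reports")
    return median(node_reports)


def vwap(trades: List[Tuple[float, float]]) -> float:
    """Volume-weighted average price over (price, volume) trades."""
    total_volume = sum(volume for _, volume in trades)
    if total_volume <= 0:
        raise ValueError("VWAP needs positive total volume")
    return sum(price * volume for price, volume in trades) / total_volume


def twap(samples: List[Tuple[float, float]], end_time: float) -> float:
    """Time-weighted average of (timestamp, price) samples sorted by time.

    Each price is assumed to hold until the next sample, and the last
    price holds until end_time.
    """
    if not samples or end_time <= samples[0][0]:
        raise ValueError("need samples and a positive averaging window")
    boundaries = [t for t, _ in samples[1:]] + [end_time]
    weighted = sum(
        price * (t_next - t) for (t, price), t_next in zip(samples, boundaries)
    )
    return weighted / (end_time - samples[0][0])


# One faulty node reporting 250 barely moves the median.
print(medianize([100.0, 101.0, 250.0]))                       # 101.0
# Two units traded at 100 and one at 102.
print(round(vwap([(100.0, 2.0), (102.0, 1.0)]), 4))           # 100.6667
# Price jumps to 200 for only the last 10 of 60 seconds.
print(round(twap([(0.0, 100.0), (50.0, 200.0)], 60.0), 4))    # 116.6667
```

In the TWAP case, the spot price at the end of the window is 200, but the averaged value stays near 117 because the spike held for only a sixth of the interval; that dampening is what makes TWAP attractive for settlement.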

Data Latency and Greeks

The speed at which off-chain data streams update directly impacts the accuracy of option pricing models. In a high-volatility environment, even a small delay (latency) in data delivery can lead to significant miscalculations of the Greeks, particularly Delta, Vega, and Theta.

| Greek | Impact of Data Latency | Consequence for Options Protocol |
|---|---|---|
| Vega | Underestimation or overestimation of volatility changes. | Inaccurate calculation of options value, leading to potential undercollateralization or overcharging of premiums. |
| Theta | Inaccurate decay calculation due to stale price data. | Poor risk management for market makers, potentially resulting in losses as time value erodes differently than expected. |
| Delta | Inaccurate hedging ratios, particularly in highly volatile markets. | Market makers may be unable to maintain a delta-neutral position, exposing them to significant directional risk. |
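To see why stale data distorts hedging ratios, the sketch below computes a Black-Scholes call delta at a fresh and at a stale spot price. It assumes a European call with no dividends, constant volatility, and hypothetical inputs (spot 2000 versus a stale 1900, strike 2000, 80% annualized volatility, 7 days to expiry). Black-Scholes is used purely for illustration, not as the pricing model of any particular protocol.

```python
from math import erf, log, sqrt


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call_delta(spot: float, strike: float, vol: float,
                  t_years: float, rate: float = 0.0) -> float:
    """Black-Scholes delta of a European call (no dividends)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt(t_years))
    return norm_cdf(d1)


# Hypothetical inputs: the feed lags a 5% move in the underlying.
strike, vol, t_years = 2000.0, 0.80, 7 / 365
delta_fresh = bs_call_delta(2000.0, strike, vol, t_years)
delta_stale = bs_call_delta(1900.0, strike, vol, t_years)

# Units of the underlying a market maker short 100 calls would
# under-hedge if it trusted the stale price.
hedge_gap = (delta_fresh - delta_stale) * 100
print(f"fresh delta={delta_fresh:.3f}, stale delta={delta_stale:.3f}, gap={hedge_gap:.1f}")
```

With these inputs the delta falls from roughly 0.52 to roughly 0.34, so a market maker hedging off the stale price would hold far less of the underlying than the current market implies.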

Approach

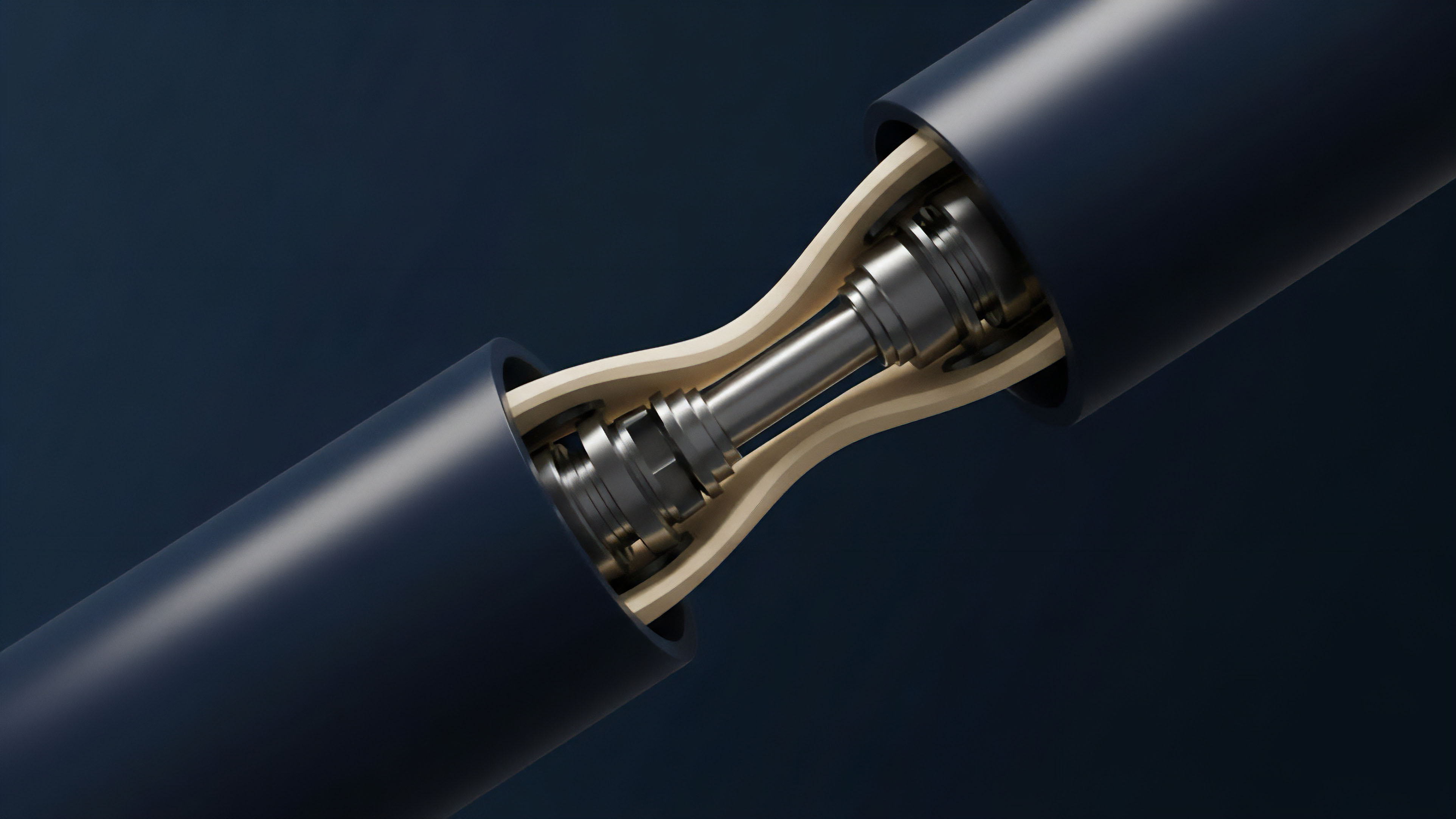

The implementation of off-chain data streams in options protocols varies significantly based on architectural choices, primarily centered on how data is delivered to the smart contract. Two dominant models define current approaches: the push model and the pull model. The choice between these two determines the trade-off between capital efficiency and security for the protocol.

Push Model Architecture

In a push model, the oracle network actively updates the on-chain data feed whenever the price changes by more than a predetermined percentage threshold. This approach keeps data fresh and latency low, which is essential for options protocols that require real-time margin calculations. The push model keeps a recent price available on-chain at all times for collateral checks and liquidations, although between updates that price can drift from the market by up to the threshold.

However, every update is an on-chain transaction, so frequent updates translate into high gas costs for the protocol. This cost structure can limit the range of assets supported or require a different economic model in which users pay for the data updates.
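The push trigger can be sketched as a simple decision rule run by each oracle node. Besides the percentage threshold, the sketch assumes a heartbeat: a maximum interval after which an update is pushed even if the price has not moved, which push-style feeds such as Chainlink Data Feeds commonly combine with a deviation threshold. The function name, the 0.5% threshold, and the one-hour heartbeat are hypothetical.

```python
def should_push_update(last_onchain_price: float, latest_price: float,
                       last_update_time: float, now: float,
                       deviation_threshold: float = 0.005,
                       heartbeat_seconds: float = 3600.0) -> bool:
    """Decide whether an oracle node should write a new on-chain price.

    Hypothetical defaults: push on a move of at least 0.5% from the last
    on-chain value, or when an hour has passed since the last update.
    """
    if now - last_update_time >= heartbeat_seconds:
        return True  # heartbeat: refresh even if the price is flat
    deviation = abs(latest_price - last_onchain_price) / last_onchain_price
    return deviation >= deviation_threshold


print(should_push_update(2000.0, 2005.0, 0.0, 60.0))    # False: 0.25% move
print(should_push_update(2000.0, 2011.0, 0.0, 60.0))    # True: 0.55% move
print(should_push_update(2000.0, 2000.0, 0.0, 3600.0))  # True: heartbeat elapsed
```

Tightening the threshold or shortening the heartbeat improves freshness at the cost of more on-chain transactions, which is exactly the gas trade-off the push model faces.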

Pull Model Architecture

In contrast, the pull model allows a smart contract to request data from the oracle network only when it needs it, typically during settlement or when a user exercises an option. The user pays the gas fee for the data request. This approach is highly gas efficient, as data updates only occur on demand.

The pull model is well-suited for options protocols where real-time liquidations are not necessary, or where the options are long-dated and less sensitive to minute-by-minute price changes. However, it introduces potential latency issues during periods of network congestion, as a user’s data request might be delayed, potentially affecting the final settlement price.
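One common way to implement pull-style delivery is for the user to fetch a signed price update off-chain and submit it with the transaction, with the contract verifying the signature and rejecting stale data. The sketch below models that check in Python. It uses an HMAC with a shared secret as a stand-in for the public-key or multi-party signatures a real oracle would use; the key, field names, helper functions, and 60-second maximum age are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret standing in for the oracle's signing keys.
ORACLE_KEY = b"demo-oracle-key"


def sign_update(price: float, publish_time: int, key: bytes = ORACLE_KEY) -> dict:
    """Off-chain side: the oracle serializes and authenticates a price."""
    payload = json.dumps({"price": price, "publish_time": publish_time},
                         sort_keys=True).encode()
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def consume_update(update: dict, now: int, max_age_seconds: int = 60,
                   key: bytes = ORACLE_KEY) -> float:
    """Contract side: verify authenticity and freshness before using a price."""
    expected = hmac.new(key, update["payload"], hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, update["tag"]):
        raise ValueError("update not authenticated by the oracle")
    data = json.loads(update["payload"])
    if now - data["publish_time"] > max_age_seconds:
        raise ValueError("price update is stale")
    return data["price"]


update = sign_update(2000.5, publish_time=1_000)
print(consume_update(update, now=1_030))  # 2000.5
```

The staleness check is the code-level counterpart of the latency concern above: if congestion delays the user's transaction past `max_age_seconds`, the update is rejected rather than settling on an outdated price.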

The selection between a push or pull data model directly influences a protocol’s risk profile and capital efficiency.

Evolution

The evolution of off-chain data streams for options markets has progressed from simple spot price feeds to complex data surfaces. Initially, protocols needed a single price point to settle a simple call or put option. As the options market matured, however, the need for more complex data inputs became apparent.
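Settling a simple option from a single price point reduces to computing its intrinsic value at expiry. The sketch assumes a cash-settled European option and a hypothetical `settle_european` helper; it ignores fees, collateral transfers, and how the settlement price itself is sourced.

```python
def settle_european(option_type: str, strike: float, settlement_price: float,
                    contracts: float = 1.0) -> float:
    """Cash payout at expiry for a European call or put (hypothetical helper)."""
    if option_type == "call":
        intrinsic = max(settlement_price - strike, 0.0)
    elif option_type == "put":
        intrinsic = max(strike - settlement_price, 0.0)
    else:
        raise ValueError("option_type must be 'call' or 'put'")
    return intrinsic * contracts


# One oracle-reported settlement price of 2150 against a 2000 strike.
print(settle_european("call", 2000.0, 2150.0))               # 150.0
print(settle_european("put", 2000.0, 2150.0))                # 0.0
print(settle_european("call", 2000.0, 2150.0, contracts=3))  # 450.0
```

Because the entire payout depends on one number, any error in the reported settlement price passes straight through to the amount paid.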

This includes data streams for implied volatility (IV) surfaces and interest rate benchmarks. The challenge in options pricing is that volatility is a critical input variable that, unlike the underlying asset's price, cannot be observed directly; it must be inferred from the prices of traded options. Off-chain data streams are evolving to provide accurate volatility data, which requires a more complex aggregation methodology than simple spot price feeds.
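The per-quote step of inferring implied volatility can be sketched by inverting a pricing model numerically. This minimal example assumes Black-Scholes pricing for a European call with no dividends and uses bisection (one of several root-finding methods, chosen here because the call price increases monotonically in volatility). A volatility stream would repeat this across an option chain before fitting a surface; the function names, search bracket, and tolerance are hypothetical.

```python
from math import erf, exp, log, sqrt


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call_price(spot, strike, vol, t_years, rate=0.0):
    """Black-Scholes price of a European call (no dividends)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt(t_years))
    d2 = d1 - vol * sqrt(t_years)
    return spot * norm_cdf(d1) - strike * exp(-rate * t_years) * norm_cdf(d2)


def implied_vol_call(price, spot, strike, t_years, rate=0.0,
                     lo=1e-4, hi=5.0, tol=1e-8, max_iter=200):
    """Bisection on volatility until the model price matches the quote."""
    if not (bs_call_price(spot, strike, lo, t_years, rate) <= price
            <= bs_call_price(spot, strike, hi, t_years, rate)):
        raise ValueError("quote outside the searched volatility range")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, mid, t_years, rate) < price:
            lo = mid  # model price too low: volatility must be higher
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Round trip on a hypothetical quote: price at 65% vol, then recover it.
quote = bs_call_price(2000.0, 2100.0, 0.65, 30 / 365)
print(round(implied_vol_call(quote, 2000.0, 2100.0, 30 / 365), 6))  # 0.65
```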

These streams must aggregate data from multiple exchanges, calculate the implied volatility from existing option chains, and then deliver a coherent IV surface to the smart contract. This development enables more sophisticated options products, such as exotic options or volatility swaps, that were previously impractical to implement on-chain.

The regulatory landscape is also influencing the design of these streams. As DeFi gains traction, there is increasing pressure for data sources to be auditable and verifiable. The future of off-chain data streams will likely involve a higher degree of transparency regarding the source data and aggregation methodology, supporting compliance with potential regulatory frameworks. This shift is driving the development of new data integrity standards that prioritize verifiability over raw speed.

Horizon

Looking ahead, the long-term goal for off-chain data streams in options markets is to eliminate the "off-chain" trust dependency. The current reliance on external oracles introduces an unavoidable trust assumption, even when that trust is distributed across multiple nodes. The ultimate architectural vision involves bringing data verification fully on-chain.

The horizon for data integrity includes the development of zero-knowledge (ZK) proofs for data verification. Instead of simply trusting an oracle network's consensus, a smart contract could verify a ZK proof attesting that the delivered value was correctly derived from specified sources, without revealing the source data itself. This would remove the need to trust the oracle's reporting: the options contract's logic would execute on data whose provenance and processing are cryptographically verified, although the proof cannot guarantee that the underlying sources were themselves accurate.

This architectural shift would transform the oracle from a data provider to a data prover, fundamentally changing the risk profile of options protocols.

The Data-as-a-Service Model

The future of off-chain data streams will also involve a transition from basic price feeds to specialized data services. Options protocols will require access to high-fidelity, low-latency data for real-time risk management. This includes data on funding rates, interest rate curves, and complex volatility surfaces.

The market for data services will likely become highly competitive, with protocols subscribing to multiple feeds to ensure redundancy and data accuracy. The evolution of this infrastructure will allow for a new generation of sophisticated options products, moving beyond simple European-style options to fully collateralized American options and other complex derivatives that require continuous, verifiable data inputs.
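Subscribing to multiple feeds only adds safety if the protocol checks them against each other. A minimal sketch, assuming each feed exposes its latest price (or `None` when unavailable) and using a hypothetical `redundant_price` helper with a 1% maximum spread and a two-source minimum: combine the live feeds with a median and refuse to return a price when too few feeds respond or when they disagree beyond the tolerance.

```python
from statistics import median
from typing import Dict, Optional


def redundant_price(feeds: Dict[str, Optional[float]], min_sources: int = 2,
                    max_spread: float = 0.01) -> float:
    """Median of live feeds, refusing to answer if feeds are missing or disagree."""
    live = [price for price in feeds.values() if price is not None]
    if len(live) < min_sources:
        raise RuntimeError("too few live feeds")
    mid = median(live)
    spread = (max(live) - min(live)) / mid
    if spread > max_spread:
        raise RuntimeError(f"feeds disagree by {spread:.2%}")
    return mid


# Hypothetical feed names; feed_c is temporarily unavailable.
print(redundant_price({"feed_a": 2000.0, "feed_b": 2004.0, "feed_c": None}))  # 2002.0
```

Refusing to produce a price (and pausing liquidations) on disagreement is one design choice; a protocol could instead fall back to a designated primary feed, which favors availability over safety.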

The future of data streams involves a shift toward zero-knowledge proofs for verification, transforming oracles from data providers to data provers.
