Essence

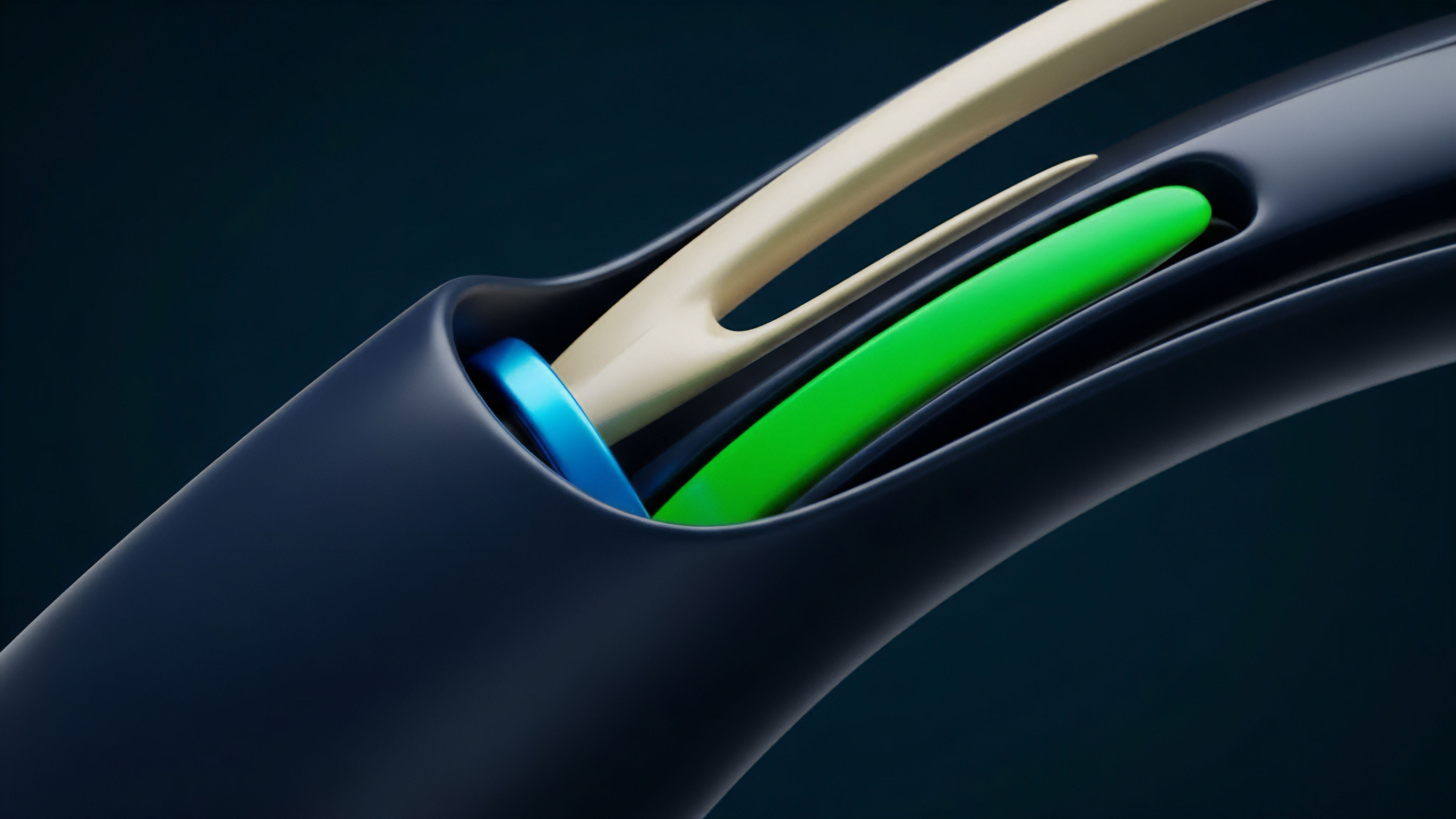

Real-time data feeds serve as the fundamental nervous system for any crypto options market, whether centralized or decentralized. The data feed’s core function is to provide the continuous stream of information required for accurate pricing, risk management, and automated settlement. In traditional finance, this data infrastructure is mature, standardized, and often taken for granted.

In the crypto space, however, data feeds face unique challenges related to market fragmentation, network latency, and the inherent trust assumptions of decentralized systems. A data feed for options must deliver information beyond a simple spot price. It must account for volatility surfaces, order book depth, and implied volatility (IV) calculations across various strikes and expirations.

The quality of this real-time data directly determines the efficiency and fairness of the market. Without reliable, low-latency data, options pricing models cannot function correctly, leading to significant arbitrage opportunities, inaccurate risk assessments, and potential cascading liquidations. The data feed is the bridge between the chaotic, high-frequency market environment and the structured, deterministic logic of a smart contract.

The Data Feed as a Systemic Nexus

The data feed acts as a critical nexus, connecting market microstructure to quantitative finance models. For a decentralized options protocol, the data feed is the oracle that feeds the settlement engine and the margin system. This places an immense burden on the data provider to ensure accuracy and timeliness.

A single point of failure or data manipulation at this level can lead to systemic risk across multiple protocols. The integrity of the options market hinges entirely on the integrity of its data input.


Origin

The concept of real-time data feeds in crypto markets originated from the need for price discovery on centralized exchanges (CEXs). Early CEXs provided simple APIs for spot prices, which were sufficient for basic trading. However, as crypto derivatives evolved, especially with the introduction of options, the demand for more sophisticated data became apparent.

The “oracle problem” emerged as a core challenge for decentralized finance (DeFi). A smart contract, by design, cannot access external data sources directly. It requires a trusted intermediary to feed it information.

The initial solutions for options pricing in DeFi were often rudimentary, relying on a small number of centralized oracles or simple time-weighted average prices (TWAPs) from a few exchanges. This created significant security vulnerabilities: a malicious actor could distort the price feed by moving the price on a single thinly traded exchange or by exploiting the oracle’s update mechanism. The development of more robust oracle networks, such as Chainlink, provided a more secure and decentralized solution by aggregating data from multiple sources. This aggregation model aims to mitigate single points of failure and increase resistance to manipulation.

From CEX API to Decentralized Oracle Network

The evolution of data feeds in crypto options traces a clear path from simple, centralized APIs to complex, decentralized oracle networks (DONs). Early CEX options platforms like Deribit set the standard for data availability, providing granular order book data and volatility surfaces. The challenge for DeFi was to replicate this level of data quality and integrity without relying on a central authority.

This led to the creation of oracle solutions specifically designed for derivatives, which aggregate data from a wide range of sources, including CEXs, DEXs, and proprietary data providers. The goal is to create a robust and censorship-resistant data layer that can support the sophisticated requirements of options contracts.

Theory

From a quantitative finance perspective, the data feed for options must provide more than just the current spot price of the underlying asset. It must deliver the inputs needed to calculate the “Greeks” and construct the volatility surface. The theoretical framework for options pricing, often rooted in models like Black-Scholes-Merton (BSM), requires specific data points that change dynamically. The real-time data feed supplies these inputs, making it possible to calculate risk sensitivities in real time.

The core data points required for options pricing extend beyond the underlying asset’s price. The model requires:

- Implied Volatility (IV): The market’s expectation of future price volatility. This is not directly observable and must be calculated from market data.

- Risk-Free Rate: A benchmark interest rate used in options pricing models. Traditional finance typically takes it from government bond yields; crypto has no single equivalent, so a proxy is often derived from lending protocol rates or stablecoin yields.

- Time to Expiration: The time remaining until the option contract expires, which must be precisely calculated to determine time decay.

- Strike Price: The price at which the option holder can buy or sell the underlying asset.
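As a rough illustration of how these inputs fit together, the sketch below prices a European call with the standard BSM formula and then backs out implied volatility from a quoted price by bisection. The function names, the sample inputs, and the choice of bisection are assumptions made for this example; a production feed would likely use a faster root finder and handle puts, dividends or funding, and bad quotes.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bsm_call_price(spot: float, strike: float, t_years: float,
                   rate: float, vol: float) -> float:
    """Black-Scholes-Merton price of a European call (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


def implied_vol(market_price: float, spot: float, strike: float,
                t_years: float, rate: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Back out IV by bisection; the call price is increasing in vol."""
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bsm_call_price(spot, strike, t_years, rate, mid) < market_price:
            lo = mid  # model price too low, so volatility must be higher
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Hypothetical inputs: spot 100, strike 100, one year to expiry, 5% rate.
quoted = bsm_call_price(100.0, 100.0, 1.0, 0.05, 0.20)
recovered = implied_vol(quoted, 100.0, 100.0, 1.0, 0.05)
print(round(quoted, 4), round(recovered, 4))
```

The round trip (price from a known volatility, then volatility from that price) is a convenient sanity check for any IV solver a feed relies on.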

Volatility Surface Construction

A critical component of real-time options data is the construction of the volatility surface. The volatility surface is a three-dimensional plot that represents implied volatility as a function of both strike price and time to expiration. The data feed must continuously update this surface to reflect changes in market sentiment.

The skew of this surface, which describes how IV varies across strike prices, is particularly important. A data feed that cannot capture this skew in real time will produce inaccurate prices and can lead to mis-hedging. The BSM model assumes constant volatility, a significant oversimplification that the existence of skew itself contradicts.

Modern options pricing relies on models that account for stochastic volatility, where volatility itself changes over time. A real-time data feed must provide the data necessary to feed these more advanced models, allowing for a more accurate reflection of market conditions.
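To make the idea of a surface concrete, here is a minimal sketch that stores IV points per expiry and interpolates linearly across strikes. The grid values, the `SURFACE` layout, and the helper names are hypothetical; real feeds usually fit a smooth parametric or spline surface rather than interpolating raw points.

```python
from bisect import bisect_left

# Hypothetical surface: expiry in days -> (strike, implied vol) points sorted by strike.
SURFACE = {
    7: [(90.0, 0.85), (100.0, 0.70), (110.0, 0.75)],
    30: [(90.0, 0.80), (100.0, 0.68), (110.0, 0.70)],
}


def iv_at(surface: dict, expiry_days: int, strike: float) -> float:
    """Linear interpolation in strike for one expiry, flat beyond the grid edges."""
    points = surface[expiry_days]
    strikes = [k for k, _ in points]
    if strike <= strikes[0]:
        return points[0][1]
    if strike >= strikes[-1]:
        return points[-1][1]
    i = bisect_left(strikes, strike)
    (k0, v0), (k1, v1) = points[i - 1], points[i]
    weight = (strike - k0) / (k1 - k0)
    return v0 + weight * (v1 - v0)


def skew(surface: dict, expiry_days: int, low_strike: float, high_strike: float) -> float:
    """Simple skew measure: IV at the low strike minus IV at the high strike."""
    return iv_at(surface, expiry_days, low_strike) - iv_at(surface, expiry_days, high_strike)


print(iv_at(SURFACE, 7, 95.0), skew(SURFACE, 7, 90.0, 110.0))
```

A positive skew value here means downside strikes carry higher IV than upside strikes, which is the kind of signal a constant-volatility model cannot represent.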

| Data Feed Component | Traditional Finance (TradFi) | Decentralized Finance (DeFi) |
|---|---|---|
| Underlying Price Source | Exchange feeds distributed through data vendors (e.g. Bloomberg, Refinitiv) | Decentralized oracle networks (DONs) aggregating CEX and DEX data |
| Volatility Surface Data | Proprietary data from market makers and exchanges | On-chain volatility oracles, aggregated CEX IV data, or proprietary calculation engines |
| Risk-Free Rate Input | Treasury yield curves (e.g. US Treasury rates) | On-chain lending protocol yields (e.g. Aave, Compound) |
| Latency Requirements | Sub-millisecond for high-frequency trading (HFT) | Block-time latency (seconds to minutes) or off-chain data feeds |

Approach

The implementation of real-time data feeds for crypto options requires careful consideration of the trade-off between speed and security. A data feed must be fast enough to prevent arbitrage opportunities while remaining secure against manipulation. Different approaches have emerged to balance these competing priorities.

Data Aggregation and Validation

Most robust data feeds employ an aggregation model. Instead of relying on a single source, data providers collect information from numerous exchanges and liquidity pools. This aggregated data is then processed through a validation layer to filter out outliers and potential manipulation attempts.

The process involves calculating a median or weighted average price, making it significantly more expensive for a single entity to corrupt the feed. This approach provides a higher degree of security for on-chain protocols, which rely on deterministic outcomes based on the data provided.
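The aggregation and validation step can be sketched as follows: take the median of all quotes, drop any quote that deviates too far from it, and report the median of what remains. The 2% threshold and the function name are assumptions for illustration, not a description of any particular oracle network.

```python
from statistics import median


def aggregate_price(quotes: list[float], max_deviation: float = 0.02) -> float:
    """Median of the quotes that lie within max_deviation of the raw median."""
    if not quotes:
        raise ValueError("no quotes supplied")
    raw = median(quotes)
    kept = [q for q in quotes if abs(q - raw) / raw <= max_deviation]
    if not kept:
        # Sources disagree so widely that no quote is near the median.
        raise ValueError("quotes too dispersed to aggregate")
    return median(kept)


# One venue reports a wildly different price; it is filtered out.
print(aggregate_price([100.0, 100.2, 99.9, 150.0]))
```

Because the median ignores extreme values, an attacker has to corrupt a majority of sources rather than a single one to move the result.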

Latency Management and Off-Chain Calculation

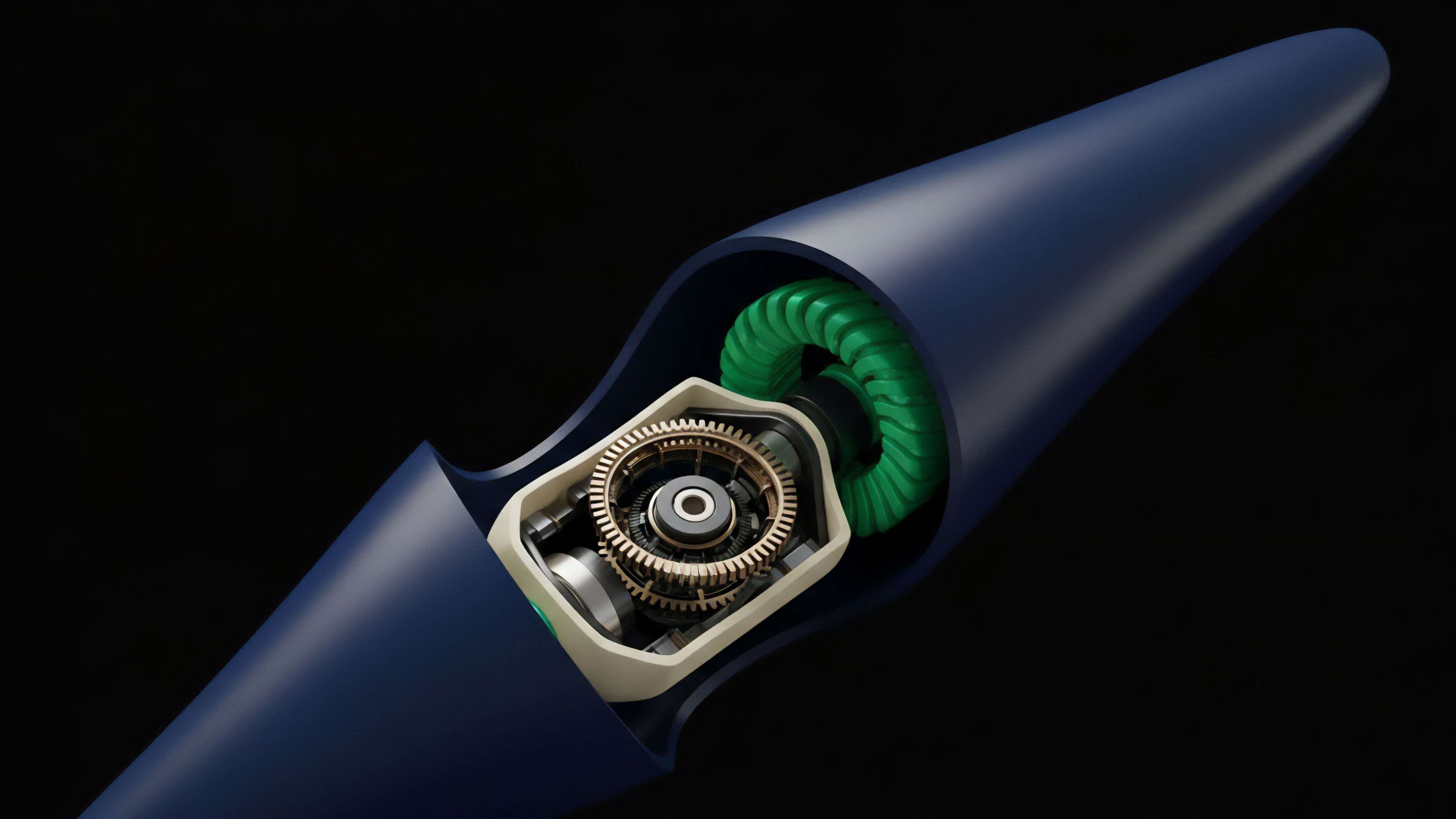

For high-frequency trading and sophisticated market making, latency is paramount. A delay of even a few seconds in a real-time feed can render pricing models obsolete in a volatile market. To address this, many protocols utilize off-chain computation.

Data feeds calculate complex metrics like implied volatility surfaces off-chain and then post a summary or a validated hash of this data on-chain. This reduces gas costs and allows for faster updates. The challenge here is ensuring the integrity of the off-chain calculation.

The data feed must provide cryptographic proofs or utilize a secure multi-party computation framework to ensure that the off-chain data has not been tampered with before it reaches the smart contract.
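A simplified version of the "post a hash on-chain" pattern is sketched below. It assumes the surface is serialized as canonical JSON and uses SHA-256 as a stand-in for whatever hash the target chain actually uses; the function names are hypothetical. A bare hash only lets a consumer detect that the data differs from what was committed. It does not prove the calculation was done correctly, which is where signatures or proofs would come in.

```python
import hashlib
import json


def commit(surface: dict) -> str:
    """Hash a canonical JSON encoding of the off-chain surface data."""
    payload = json.dumps(surface, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def verify(surface: dict, posted_digest: str) -> bool:
    """Check that received data matches the digest that was posted on-chain."""
    return commit(surface) == posted_digest


# Hypothetical surface keyed by expiry label, then strike.
surface = {"7d": {"90": 0.85, "100": 0.70}, "30d": {"90": 0.80, "100": 0.68}}
digest = commit(surface)

tampered = {"7d": {"90": 0.85, "100": 0.95}, "30d": {"90": 0.80, "100": 0.68}}
print(verify(surface, digest), verify(tampered, digest))
```

Sorting keys and fixing separators matters: without a canonical encoding, two parties holding identical data could compute different digests.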


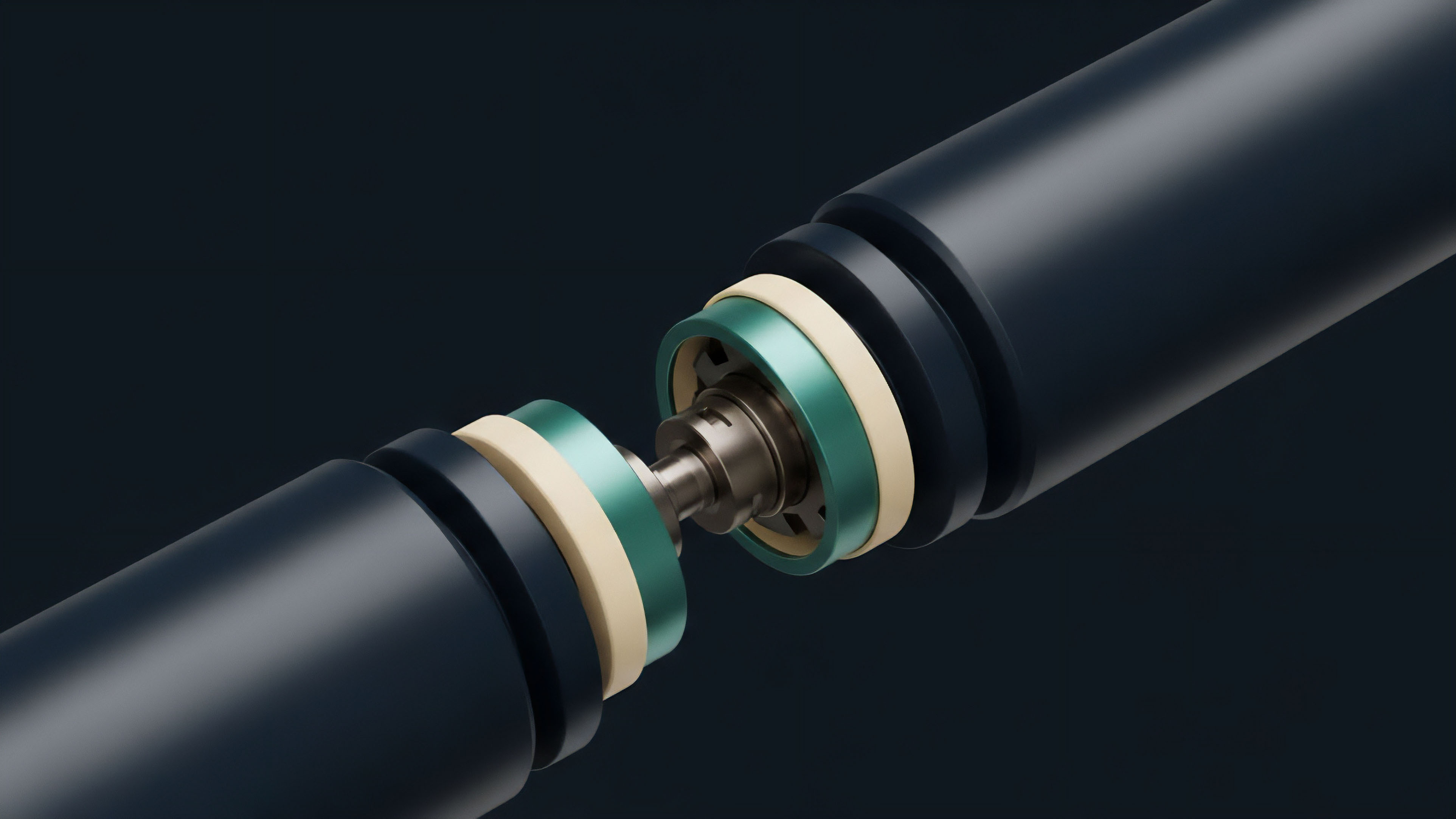

Data Feed Architecture Models

The data feed architecture can be broadly categorized into push and pull models. A push model continuously updates the data on-chain at regular intervals, while a pull model requires the smart contract to request data when needed. A push model is often more suitable for high-frequency options trading, as it provides continuous updates.

However, it incurs higher gas costs. A pull model is more gas-efficient but introduces potential latency issues during periods of high demand.

- Push Model: Data is continuously broadcast to the network. This ensures a constant flow of information but can be expensive to maintain on-chain.

- Pull Model: Smart contracts request data on demand. This is cost-efficient but can result in data staleness if not managed carefully.

- Hybrid Model: A combination of push and pull, where critical data (like spot price) is pushed, while less time-sensitive data (like volatility surfaces) is pulled.
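The staleness risk in the pull model is usually handled by the consumer checking the age of whatever it reads. The sketch below shows that check in isolation; the `PriceUpdate` type, the 60-second limit, and the function name are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceUpdate:
    price: float
    timestamp: float  # seconds since epoch, as reported by the feed


def read_fresh_price(update: PriceUpdate, now: float,
                     max_age_seconds: float = 60.0) -> float:
    """Return the price only if the update is recent enough to act on."""
    age = now - update.timestamp
    if age < 0:
        raise ValueError("update is timestamped in the future")
    if age > max_age_seconds:
        raise ValueError(f"price is stale: {age:.0f}s old")
    return update.price


update = PriceUpdate(price=100.0, timestamp=1_000.0)
print(read_fresh_price(update, now=1_030.0))
```

Refusing to act on stale data is generally safer for a margin or settlement engine than silently using the last known value.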

Evolution

The evolution of real-time data feeds for crypto options mirrors the increasing sophistication of the derivatives market itself. Early data feeds were simple and focused on price; current feeds are complex systems that attempt to model market dynamics. The shift from simple spot prices to full volatility surfaces represents a significant maturation. This change was driven by the realization that options pricing requires more than just a single data point; it requires a representation of market expectations across time and strikes.

The primary driver of data feed evolution has been the need to address specific systemic risks identified during market events. The flash crashes and cascading liquidations seen in early DeFi protocols demonstrated the fragility of simple data sources. This led to a focus on robust data aggregation and manipulation resistance.

The next stage of evolution involves integrating real-time data with advanced risk management systems. Data feeds now not only provide pricing inputs but also feed directly into margin calculation engines and liquidation protocols.

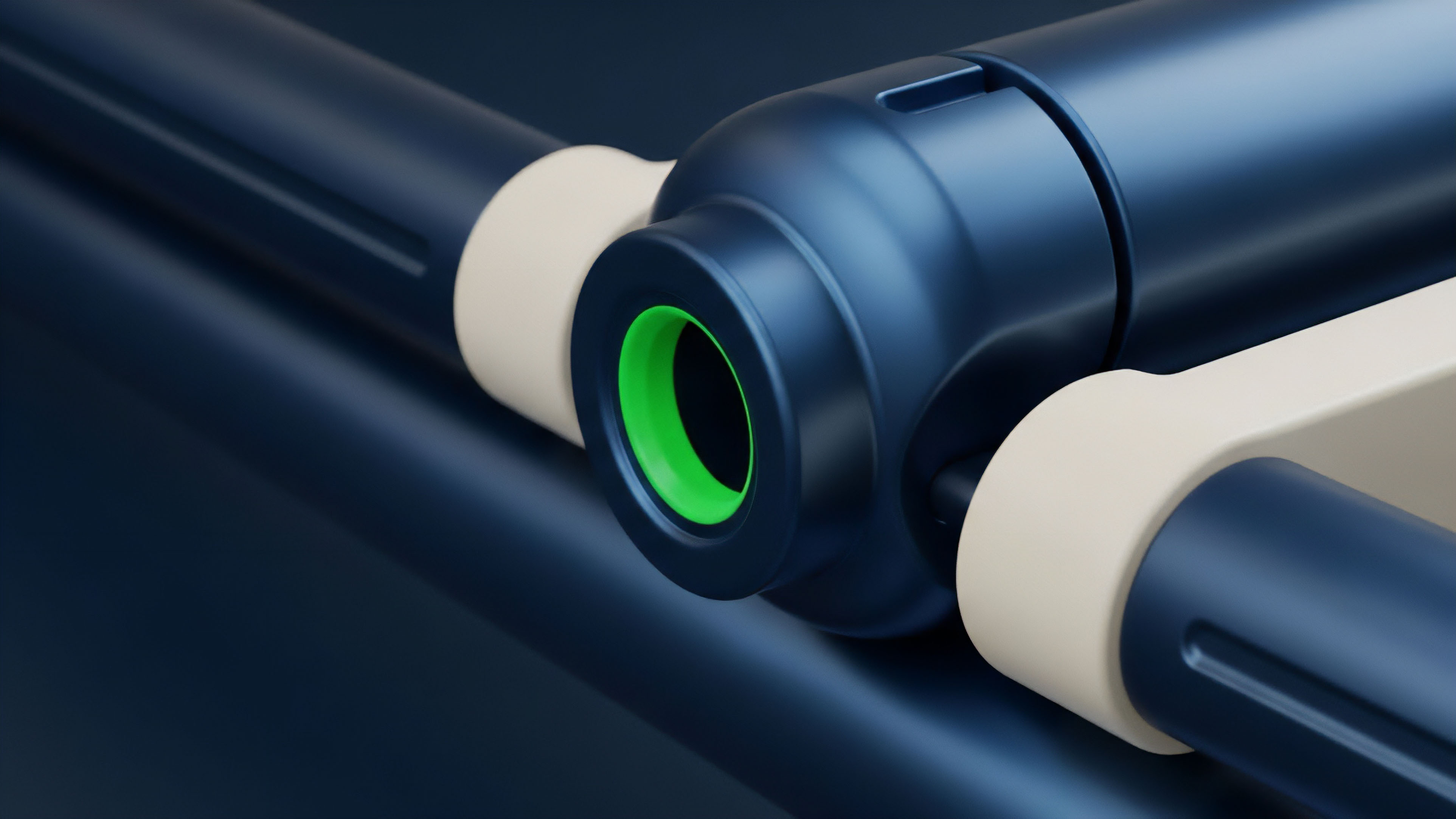

Addressing Liquidity Fragmentation

The fragmentation of liquidity across multiple CEXs and DEXs presents a significant challenge for data feeds. A single price feed from one venue may not reflect the true global market price. The data feed must evolve to aggregate liquidity across diverse platforms.

This requires sophisticated algorithms that can account for different trading volumes, slippage, and market depths on various exchanges. The goal is to provide a unified, representative price that reflects the aggregated market reality.
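One simple way to weight venues by their activity is a volume-weighted average, sketched below. The venue figures and function name are hypothetical, and this version ignores slippage and order book depth, which a fuller aggregation algorithm would also need to consider.

```python
def volume_weighted_price(venues: list[tuple[float, float]]) -> float:
    """Average price across venues, weighted by each venue's traded volume.

    venues: list of (price, volume) pairs, one per exchange or pool.
    """
    total_volume = sum(volume for _, volume in venues)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in venues) / total_volume


# A deep venue at 100.0 and a thin venue at 102.0 (illustrative numbers).
print(volume_weighted_price([(100.0, 3.0), (102.0, 1.0)]))
```

Weighting by volume means a thin venue with an unusual price pulls the result less than a deep one, though reported volume can itself be manipulated, so it is often combined with outlier filtering.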

| Data Feed Model | Primary Focus | Key Advantage | Key Disadvantage |
|---|---|---|---|
| Simple Price Feed | Underlying asset spot price | Low cost, high speed | Inadequate for options pricing, high manipulation risk |
| Volatility Surface Feed | Implied volatility across strikes and expirations | Accurate options pricing, robust risk management | High complexity, higher gas costs, data source requirements |
| Aggregated Feed (DON) | Consensus-based price from multiple sources | Manipulation resistance, decentralized trust | Potential for data staleness, latency during network congestion |

Horizon

The future of real-time data feeds for crypto options will be defined by the intersection of Layer 2 solutions, regulatory changes, and the demand for greater capital efficiency. As Layer 2 networks scale, the constraints on data throughput and gas costs will lessen, allowing for more frequent and granular updates. This could enable the development of truly high-frequency options trading on decentralized platforms, rivaling the performance of centralized exchanges.

On-Chain Volatility Oracles

A significant development on the horizon is the creation of on-chain volatility oracles. These oracles would calculate and publish implied volatility surfaces directly on-chain, eliminating the need for off-chain calculation and reducing trust assumptions. This requires a new generation of smart contracts capable of handling complex mathematical operations efficiently. The development of such oracles would fundamentally change how options are priced in DeFi, allowing for more accurate risk management and potentially unlocking new forms of structured products.

The increasing regulatory scrutiny of crypto markets will also impact data feeds. Regulators will likely demand greater transparency and auditability of the data sources used for options pricing. This could lead to a standardization of data feeds, similar to what exists in traditional finance, where specific data providers are designated as “approved” sources. The challenge for decentralized protocols will be to balance regulatory compliance with the core principles of decentralization and censorship resistance.

The Role of Behavioral Game Theory

From a behavioral game theory perspective, data feeds create an adversarial environment. The design of a data feed must anticipate and mitigate attempts by malicious actors to manipulate prices for profit. The next generation of data feeds will likely incorporate advanced mechanisms to detect and respond to coordinated attacks.

This includes implementing circuit breakers, dynamic fee adjustments, and reputation systems for data providers. The goal is to create a data feed that is not only technically sound but also economically secure, where the cost of manipulation significantly outweighs the potential profit.
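Of the mechanisms listed, a circuit breaker is the easiest to illustrate. The sketch below rejects any update that moves more than a set fraction from the last accepted price and then halts until reset. The class name, the 10% threshold, and the halt-until-reset policy are assumptions for this example; real designs vary widely.

```python
class CircuitBreaker:
    """Halts a feed when a single update moves the price too far."""

    def __init__(self, max_move: float = 0.10) -> None:
        self.max_move = max_move  # largest accepted fractional change per update
        self.last_price: float | None = None
        self.tripped = False

    def submit(self, price: float) -> bool:
        """Return True if the update is accepted, False if it is rejected."""
        if self.tripped:
            return False
        if self.last_price is not None:
            move = abs(price - self.last_price) / self.last_price
            if move > self.max_move:
                self.tripped = True  # stay halted until reset() is called
                return False
        self.last_price = price
        return True

    def reset(self) -> None:
        """Resume the feed, for example after manual or governance review."""
        self.tripped = False


breaker = CircuitBreaker(max_move=0.10)
print([breaker.submit(p) for p in (100.0, 105.0, 130.0, 106.0)])
```

The trade-off is that a breaker cannot distinguish manipulation from a genuine sharp move, so halting protects against bad data at the cost of availability during real volatility.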

- Latency Reduction: Layer 2 solutions could enable sub-second updates, facilitating high-frequency options trading on decentralized exchanges.

- Regulatory Standardization: Increased regulatory pressure may force data feeds to adopt standardized methodologies and data source verification processes.

- On-Chain Volatility Calculation: Future protocols will likely calculate and publish implied volatility surfaces directly on-chain, enhancing transparency and reducing trust assumptions.

- Data Integrity in Adversarial Environments: Advanced game theory models will be applied to data feed design to make manipulation economically infeasible.
