Essence

Off-chain data processing addresses the challenge of feeding external information into a deterministic, closed blockchain environment. For decentralized options protocols, this process provides the necessary inputs for calculating collateral value, determining strike prices, and executing settlement logic. The blockchain itself is isolated from real-world events, meaning any contract requiring information about external asset prices, such as the price of BTC/USD at expiration, must rely on an external mechanism to provide that data.

This external mechanism is commonly referred to as an oracle. Without reliable off-chain data, derivatives contracts cannot function in a trustless manner, as their very purpose is to create financial exposure based on real-world market movements. The options protocol can therefore be no more secure or reliable than the off-chain data processing layer that feeds it.

Off-chain data processing acts as the necessary bridge between the deterministic logic of a smart contract and the stochastic nature of external market reality.

The core function of this data processing is to transform raw market data into a standardized format that a smart contract can interpret and act upon. This involves more than simply fetching a price; it includes data aggregation from multiple sources, validation to prevent manipulation, and a mechanism for secure delivery to the blockchain. The challenge intensifies with options contracts because they often rely on time-sensitive, high-frequency data for collateral management and liquidation.

If the data feed is slow or inaccurate, the protocol’s risk engine cannot function correctly, leading to potential undercollateralization or unfair liquidations.

Origin

The necessity for robust off-chain data processing emerged with the advent of complex financial primitives in decentralized finance. Early blockchain applications focused on simple value transfer and state changes that did not require external information. The introduction of lending protocols and synthetic assets created the initial demand for reliable price feeds to calculate collateral ratios and liquidation thresholds.

Options protocols, which require more dynamic inputs than simple lending, quickly inherited and amplified this demand. The initial approach involved relying on single-source oracles, often operated by the protocol itself. This created a significant security vulnerability, as the protocol’s integrity was dependent on the honesty of a single entity providing the data.

The subsequent evolution toward decentralized oracle networks (DONs) was a direct response to this systemic weakness, recognizing that data integrity must be secured by cryptoeconomic incentives rather than simple trust assumptions.

The transition from simple price feeds to advanced data processing mirrors the maturation of traditional finance from basic forward contracts to complex derivatives. As decentralized derivatives protocols began to offer European and American-style options, the data inputs they needed became more sophisticated. Protocols had to move beyond basic spot prices to incorporate data on volatility, funding rates, and settlement mechanisms.

The challenge shifted from simply providing a single number to building a resilient, distributed network capable of processing and verifying complex data streams in real time. The architecture of off-chain data processing today is a direct result of the lessons learned from early DeFi liquidations, where data manipulation and oracle failure led to significant losses.

Theory

The theoretical foundation of off-chain data processing for options centers on the conflict between data latency and data manipulation risk. An options contract requires data at specific points in time, often at expiration or for collateral checks. The data must be accurate at that precise moment.

However, retrieving data from external sources and validating it takes time, introducing latency. This latency creates a window for manipulation, where a malicious actor can influence the price on a decentralized exchange (DEX) just before the oracle updates, profiting from the discrepancy before the oracle corrects itself. This is particularly relevant in the context of Maximal Extractable Value (MEV), where data feeds become targets for front-running strategies.

The design of the data feed itself is critical. For options, the price feed must reflect the true market consensus rather than a single exchange’s price. A common method to achieve this is through Time-Weighted Average Price (TWAP) or Volume-Weighted Average Price (VWAP).

A TWAP calculates the average price over a specified time interval, smoothing out short-term volatility and making manipulation more expensive for an attacker. A VWAP weights the average price by trading volume, providing a more accurate representation of the market’s consensus price by prioritizing larger trades. The choice between these two methods depends on the specific risk profile of the options protocol and its sensitivity to short-term price fluctuations.
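The difference between the two averages can be seen in a minimal sketch. The `Trade` record, the function names, and the sample numbers below are illustrative assumptions, not part of any specific oracle implementation. Here the TWAP treats each trade price as valid until the next trade arrives.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trade:
    timestamp: float  # seconds since the start of the window (assumed unit)
    price: float
    volume: float


def twap(trades, window_end):
    """Time-weighted average: each price is weighted by how long it stayed current."""
    ordered = sorted(trades, key=lambda t: t.timestamp)
    if not ordered:
        raise ValueError("no trades in window")
    weighted_sum = 0.0
    total_time = 0.0
    for current, following in zip(ordered, ordered[1:] + [None]):
        # A price stays current until the next trade, or until the window closes.
        end = following.timestamp if following is not None else window_end
        duration = end - current.timestamp
        weighted_sum += current.price * duration
        total_time += duration
    if total_time <= 0:
        raise ValueError("window has no duration")
    return weighted_sum / total_time


def vwap(trades):
    """Volume-weighted average: each price is weighted by its traded volume."""
    total_volume = sum(t.volume for t in trades)
    if total_volume <= 0:
        raise ValueError("no volume in window")
    return sum(t.price * t.volume for t in trades) / total_volume


# Hypothetical 60-second window with a brief, low-volume price spike at t=50.
sample = [
    Trade(0.0, 100.0, 1.0),
    Trade(30.0, 100.0, 1.0),
    Trade(50.0, 200.0, 0.1),
    Trade(55.0, 100.0, 1.0),
]
print(twap(sample, window_end=60.0), vwap(sample))
```

In this sample the spike lasts only five seconds and carries little volume, so both averages stay much closer to 100 than to 200. An attacker would need to sustain the distorted price for longer, or trade more volume at it, to move either average further.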

The reliability of a decentralized options protocol’s risk engine hinges entirely on its ability to mitigate data manipulation by balancing latency with robust aggregation methods.

The integrity of off-chain data processing is fundamentally a game-theoretic problem. Oracle designs must create incentives for data providers to act honestly and disincentives for them to collude or provide bad data. This often involves a staking mechanism where providers must lock up collateral that can be slashed if they submit inaccurate data.

The cost of providing bad data must exceed the potential profit from manipulating the options market. The theoretical ideal is a cryptoeconomic system where honest behavior is the dominant strategy for all participants.
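The condition that bad data must cost more than it earns can be written as a simple check. This sketch assumes a median-based feed, so that a strict majority of nodes must collude to move the reported value, and assumes every colluding node loses its full stake. Both are simplifying assumptions, and the function names are hypothetical.

```python
def colluders_needed(total_nodes):
    """Strict majority needed to control a median (assumed aggregation rule)."""
    return total_nodes // 2 + 1


def attack_is_unprofitable(stake_per_node, total_nodes, attack_profit):
    """True when the total slashed stake exceeds what the attacker can earn."""
    cost_of_corruption = stake_per_node * colluders_needed(total_nodes)
    return cost_of_corruption > attack_profit


def min_stake_per_node(attack_profit, total_nodes, safety_margin=1.5):
    """Stake per node that makes corruption cost safety_margin times the profit."""
    return attack_profit * safety_margin / colluders_needed(total_nodes)


# Hypothetical network of 21 nodes protecting against a 1,000,000 profit attack.
print(attack_is_unprofitable(100_000, 21, 1_000_000))
print(attack_is_unprofitable(50_000, 21, 1_000_000))
print(min_stake_per_node(1_000_000, 21))
```

The check also shows why the value secured by a feed matters: as the open interest in the options market grows, the potential attack profit grows with it, and the required stake must scale accordingly.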

Data Feed Types for Options

Options contracts require specific data types to calculate their value and manage risk. The complexity of the contract dictates the required inputs, moving beyond simple spot prices to include volatility and settlement mechanisms. The following table summarizes the main data feed types and how options protocols use them:

| Data Feed Type | Required Data Inputs | Application in Options Protocols |
|---|---|---|
| Spot Price Feed | Current asset price (e.g. BTC/USD) | Collateral valuation, strike price determination, final settlement price for European options. |
| Volatility Feed | Realized or implied volatility data (e.g. VIX equivalent) | Accurate Black-Scholes pricing, risk management for option writers, dynamic margin adjustments. |
| Settlement Data Feed | Time-weighted price at expiration, or specific index price | Final payout calculation, ensuring fair settlement based on a robust, manipulation-resistant average. |
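As an illustration of how a settlement feed is consumed, the sketch below computes the payout of a cash-settled European option from a single settlement price. The function name and parameters are hypothetical, and it assumes the settlement price has already been produced by a manipulation-resistant average.

```python
def settle_european(option_type, strike, settlement_price, contracts=1.0):
    """Cash payout at expiration for a European call or put."""
    if option_type == "call":
        intrinsic = max(settlement_price - strike, 0.0)
    elif option_type == "put":
        intrinsic = max(strike - settlement_price, 0.0)
    else:
        raise ValueError("option_type must be 'call' or 'put'")
    return intrinsic * contracts


# Hypothetical BTC/USD settlement price of 65,000.
print(settle_european("call", 60_000, 65_000, contracts=2))
print(settle_european("put", 60_000, 65_000))
print(settle_european("put", 70_000, 65_000))
```

Because the payout is a direct function of one number, any error in the settlement price transfers value between the option writer and the option holder one for one.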

Approach

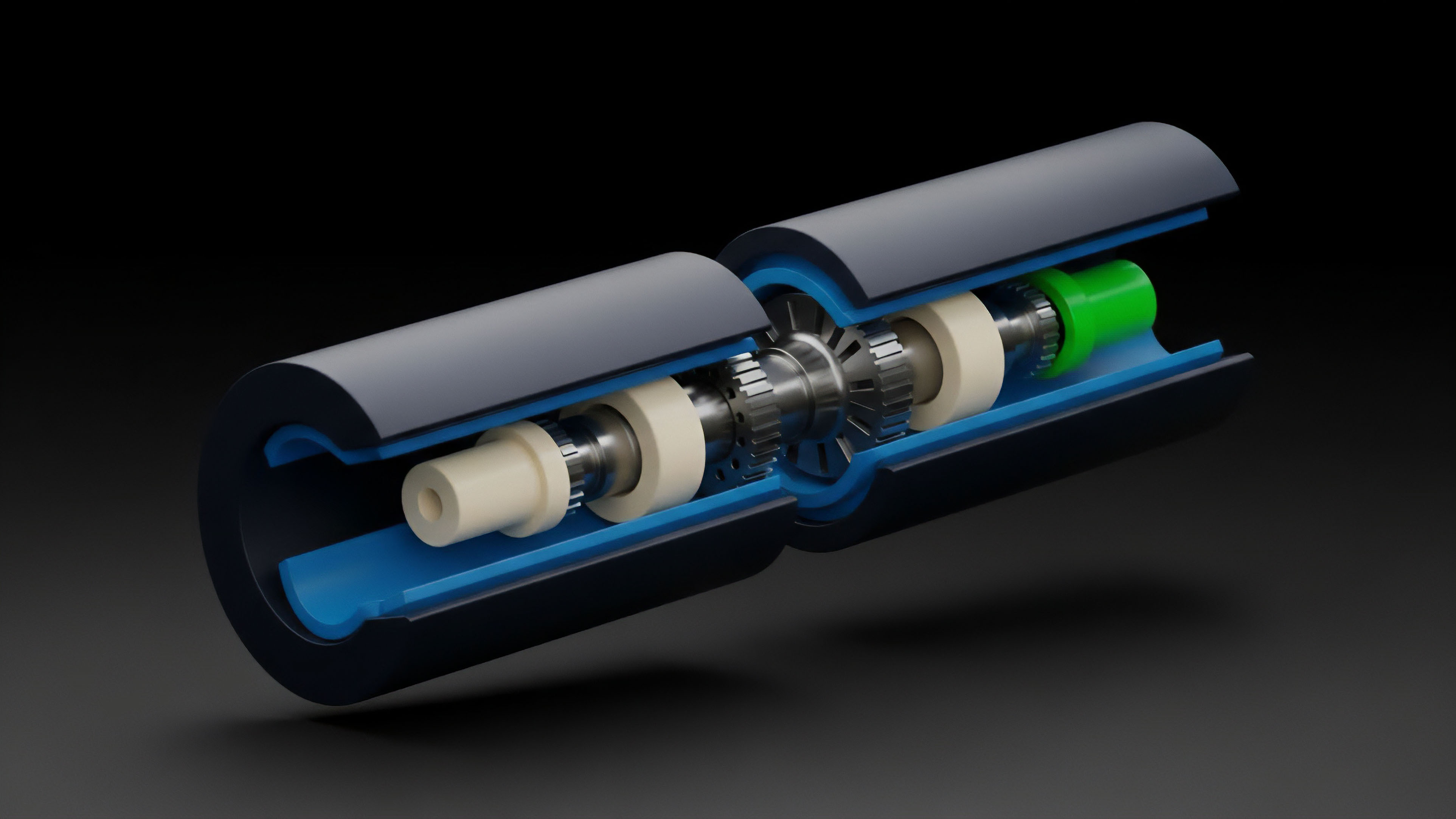

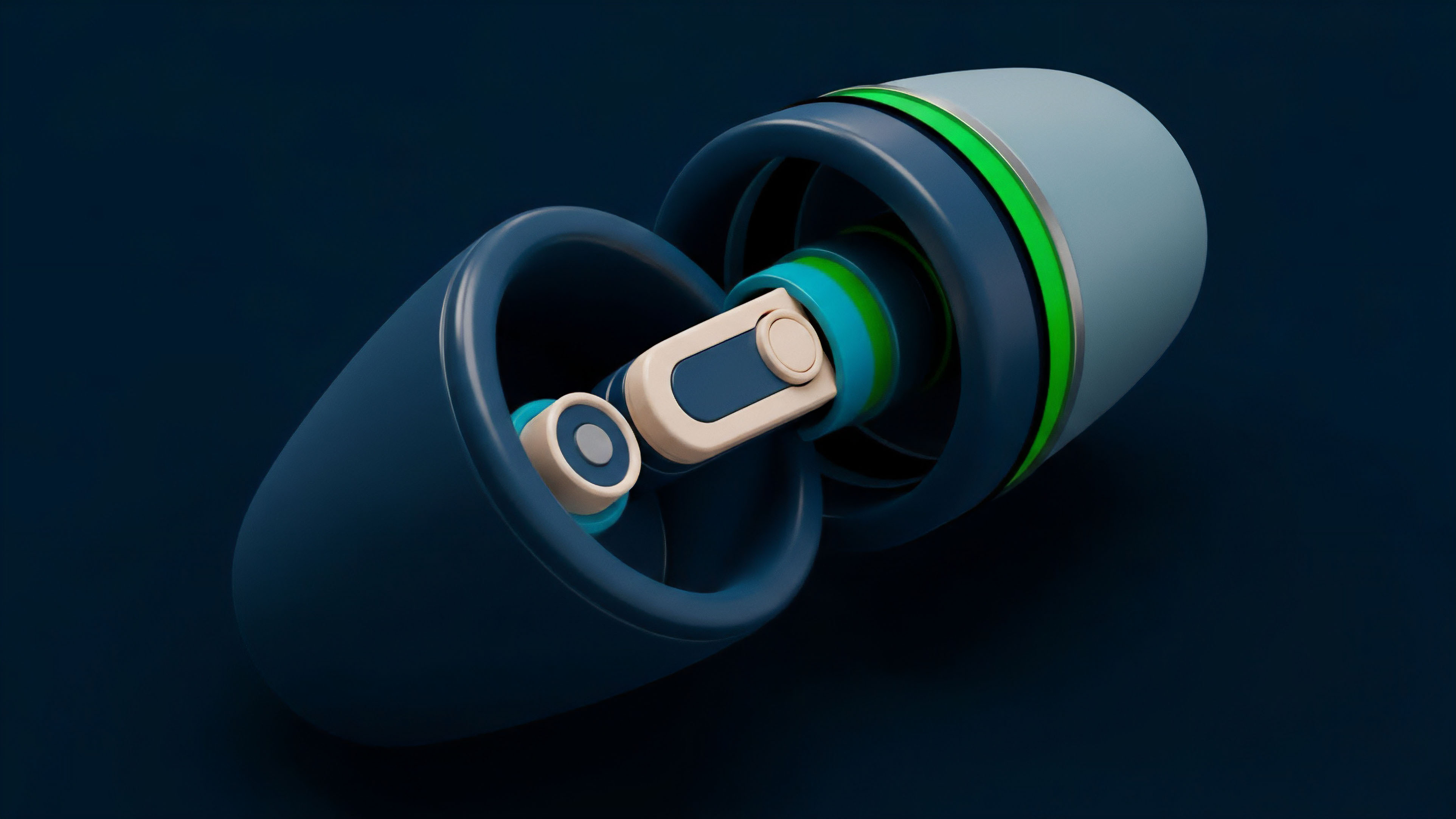

The current approach to off-chain data processing for decentralized options relies heavily on decentralized oracle networks (DONs). These networks distribute the responsibility of data provision across multiple independent nodes, eliminating the single point of failure inherent in centralized oracles. The process begins with data aggregation, where a network of nodes collects price information from various exchanges and data sources.

This raw data is then validated against a set of rules, often involving median calculations or outlier removal to ensure consensus and prevent single-source errors. Once validated, the data is aggregated into a single, signed payload that is delivered to the blockchain for smart contract consumption.
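The validation step can be sketched as a median with outlier removal. The thresholds and the function name are illustrative assumptions, and the signing of the final payload is left out.

```python
from statistics import median


def aggregate_prices(reports, max_deviation=0.02, min_reports=3):
    """Drop reports far from the median, then return the median of the rest.

    max_deviation is the allowed relative distance from the initial median
    (2% here, an assumed value); min_reports is the assumed quorum.
    """
    if len(reports) < min_reports:
        raise ValueError("not enough reports to reach quorum")
    reference = median(reports)
    kept = [p for p in reports if abs(p - reference) / reference <= max_deviation]
    if len(kept) < min_reports:
        raise ValueError("too many outliers, refusing to publish")
    return median(kept)


# Four nodes agree closely; one reports a price about 9% higher.
node_reports = [64_990, 65_010, 65_000, 65_020, 71_000]
print(aggregate_prices(node_reports))
```

Refusing to publish when too few reports survive is a deliberate design choice in this sketch: a missing update is usually easier for a protocol to handle than a wrong one.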

The specific implementation of data processing within an options protocol must account for the high sensitivity of derivatives pricing to small changes in inputs. For example, a minor fluctuation in the price feed can significantly alter the delta of an option, leading to rapid changes in collateral requirements. The protocol must also decide on the update frequency of its data feeds.

High-frequency updates reduce latency and improve pricing accuracy but increase transaction costs for the protocol. Low-frequency updates save costs but expose the protocol to greater risk during periods of high volatility.
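The sensitivity of delta to small price moves, mentioned above, can be made concrete with the Black-Scholes delta of a European call. This is a sketch under the standard Black-Scholes assumptions; the spot, strike, and volatility values are hypothetical.

```python
from math import erf, log, sqrt


def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def call_delta(spot, strike, vol, t_years, rate=0.0):
    """Black-Scholes delta of a European call (no dividends)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt(t_years))
    return norm_cdf(d1)


# At-the-money call, one day to expiration, 60% annualized volatility.
strike = 65_000.0
one_day = 1.0 / 365.0
delta_before = call_delta(65_000.0, strike, 0.60, one_day)
delta_after = call_delta(65_650.0, strike, 0.60, one_day)  # spot 1% higher
print(delta_before, delta_after)
```

Close to expiration, a 1% move in the reported spot price shifts the delta of this option by more than a tenth, which is why a stale or slightly wrong feed can leave a position materially mis-hedged or under-margined.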

A well-designed oracle network must balance data quality, update frequency, and cost efficiency to maintain a stable risk environment for derivative trading.

A significant challenge in implementing off-chain data processing is the cost of data updates on Layer 1 blockchains. The cost of fetching data and updating the state on-chain can make high-frequency derivatives trading economically unviable. This has led to the development of hybrid approaches where certain calculations or data updates are performed off-chain, with only the final, verified result submitted to the blockchain.

This optimizes for efficiency while retaining security guarantees. The following list outlines the core principles of designing a robust off-chain data feed for derivatives:

- Source Diversity: Data must be sourced from a variety of exchanges and data aggregators to prevent manipulation on any single platform.

- Cryptoeconomic Security: Data providers must stake collateral that can be slashed for submitting inaccurate data, ensuring financial alignment with honest behavior.

- Data Aggregation Method: Feeds should use TWAP, VWAP, or median calculations to smooth out short-term volatility and mitigate flash loan attacks.

- Latency Management: Update mechanisms should adjust their frequency dynamically based on market volatility, ensuring timely data during high-risk periods.
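One simple way to realize the latency management principle is to combine a deviation threshold with a maximum update interval, so that updates become more frequent exactly when prices move. The sketch below is an assumed policy with hypothetical default values, not a description of any particular network.

```python
def should_update(last_price, last_update_ts, new_price, now_ts,
                  deviation_threshold=0.005, heartbeat_seconds=3600):
    """Decide whether to push a new price on-chain.

    Update when the price has moved by at least deviation_threshold
    (0.5% here) or when heartbeat_seconds have passed since the last update.
    """
    deviation = abs(new_price - last_price) / last_price
    overdue = (now_ts - last_update_ts) >= heartbeat_seconds
    return deviation >= deviation_threshold or overdue


print(should_update(100.0, 0, 100.2, 60))    # small move, recent update
print(should_update(100.0, 0, 101.0, 60))    # 1% move
print(should_update(100.0, 0, 100.0, 3600))  # no move, but update is overdue
```

In calm markets this policy pays for roughly one transaction per heartbeat, while in volatile markets the deviation rule takes over and the feed tracks the price more closely at higher cost.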

Evolution

The evolution of off-chain data processing has moved beyond simple price feeds to encompass more complex inputs required for advanced derivatives modeling. The first major step was the introduction of volatility oracles. The Black-Scholes model takes volatility as a key input, and in practice pricing relies on the implied volatility observed in the options market.

Providing a reliable, decentralized feed for implied volatility, derived from option market prices rather than just spot prices, is essential for accurately pricing options and managing risk for liquidity providers. This requires a different type of data processing, often involving more complex calculations off-chain before submission to the smart contract.
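An example of such an off-chain calculation is backing out implied volatility from an observed option price. The sketch below inverts the Black-Scholes call formula by bisection; the search bounds, tolerance, and sample inputs are assumptions, and a production volatility oracle would also need to aggregate across strikes, expiries, and venues.

```python
from math import erf, exp, log, sqrt


def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call_price(spot, strike, vol, t_years, rate=0.0):
    """Black-Scholes price of a European call (no dividends)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt(t_years))
    d2 = d1 - vol * sqrt(t_years)
    return spot * norm_cdf(d1) - strike * exp(-rate * t_years) * norm_cdf(d2)


def implied_vol(market_price, spot, strike, t_years, rate=0.0,
                lo=1e-4, hi=5.0, tol=1e-8):
    """Find the volatility that reproduces market_price, by bisection.

    Works because the call price increases monotonically with volatility.
    """
    if not (bs_call_price(spot, strike, lo, t_years, rate)
            <= market_price
            <= bs_call_price(spot, strike, hi, t_years, rate)):
        raise ValueError("market price is outside the searchable range")
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, mid, t_years, rate) < market_price:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Round trip with hypothetical inputs: price an option at 80% vol, then recover it.
observed = bs_call_price(65_000.0, 70_000.0, 0.80, 30.0 / 365.0)
print(implied_vol(observed, 65_000.0, 70_000.0, 30.0 / 365.0))
```

Running an iterative search like this inside a smart contract would be costly, which is one reason the calculation is typically done off-chain and only the resulting volatility is submitted.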

Another significant shift is the migration of data processing to Layer 2 (L2) solutions. Layer 1 blockchains face limitations in throughput and cost that restrict the frequency and complexity of data updates. L2s, such as rollups, allow for cheaper and faster data processing.

This enables options protocols to perform more granular risk calculations off-chain and only submit a summary proof or final settlement data to the Layer 1 chain. This hybrid architecture optimizes capital efficiency and allows for a greater variety of derivatives products to be offered. The following table illustrates the trade-offs between L1 and L2 data processing architectures:

| Architectural Approach | Data Update Frequency | Security Model | Transaction Cost |
|---|---|---|---|
| Layer 1 On-Chain Processing | Low to Medium (limited by block space) | High (inherits L1 security) | High (expensive for frequent updates) |
| Layer 2 Off-Chain Processing | High (near real-time) | Hybrid (relies on L1 settlement and L2 validity or fraud proofs) | Low (cheaper transactions) |

This architectural evolution also highlights a subtle but important divergence in data security models. While early oracle networks focused on cryptoeconomic incentives, newer approaches use trusted execution environments (TEEs), which are secure hardware enclaves, to execute data processing in an isolated, tamper-resistant environment off-chain. The TEE is designed so that even the data provider cannot alter the computation that produces the data feed, providing a stronger integrity guarantee as long as the hardware itself is not compromised.

The development of these technologies is critical for enabling complex, high-frequency derivatives markets where data integrity cannot be compromised by human or systemic vulnerabilities.

Horizon

Looking ahead, the next generation of off-chain data processing will focus on enhanced privacy and verifiable computation. The integration of zero-knowledge proofs (ZKPs) represents a significant leap forward. ZKPs allow a data provider to prove that it has correctly processed data off-chain without revealing the data itself.

This could be applied to complex calculations like implied volatility or risk modeling, where the smart contract only needs to verify the correctness of the result, not the raw inputs. This enhances privacy for market makers and liquidity providers who may not want to expose their trading strategies to the public blockchain.

Another critical development will be the integration of data feeds with Decentralized Physical Infrastructure Networks (DePIN). DePIN provides a mechanism for collecting real-world data in a decentralized manner, extending the reach of oracles beyond financial markets to include real-world events, weather data, or logistical information. This will enable the creation of exotic options contracts that are contingent on non-financial outcomes.

The challenge here is to create a secure, verifiable data collection mechanism that can withstand adversarial attempts to manipulate physical sensors or data inputs. The future of off-chain data processing is therefore a convergence of cryptography, hardware security, and distributed systems engineering, all aimed at expanding the design space for decentralized derivatives beyond simple financial assets.

The future trajectory of off-chain data processing will see a convergence of zero-knowledge proofs and secure hardware, enabling complex, private calculations off-chain while maintaining full verifiability on-chain.

The final stage of this evolution involves the creation of fully decentralized risk management systems that process data off-chain and automatically adjust collateral requirements based on real-time volatility shifts. This moves away from static risk parameters toward dynamic, data-driven systems that can react to changing market conditions. This requires not just accurate data, but a sophisticated off-chain computation engine that can calculate risk metrics (Greeks) and automatically update protocol parameters.

This level of automation will significantly reduce counterparty risk and improve capital efficiency, allowing for a new class of derivatives products that can truly compete with traditional finance in terms of speed and complexity.
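A dynamic, data-driven risk parameter of the kind described above can be sketched as a margin ratio that scales with realized volatility. The linear rule, the parameter values, and the function names are all hypothetical. A real risk engine would work from a fuller set of risk metrics, including the Greeks.

```python
from math import log, sqrt


def realized_vol(prices, periods_per_year):
    """Annualized standard deviation of log returns from a price series."""
    returns = [log(b / a) for a, b in zip(prices, prices[1:])]
    if len(returns) < 2:
        raise ValueError("need at least three prices")
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return sqrt(variance * periods_per_year)


def margin_ratio(vol, base_ratio=0.10, vol_multiplier=0.25, cap=1.0):
    """Required collateral as a fraction of notional, rising with volatility."""
    return min(cap, base_ratio + vol_multiplier * vol)


# Two hypothetical series of daily closing prices.
calm = [100.0, 100.1, 100.0, 100.1, 100.0]
turbulent = [100.0, 105.0, 98.0, 106.0, 97.0]
for series in (calm, turbulent):
    vol = realized_vol(series, periods_per_year=365)
    print(round(vol, 3), round(margin_ratio(vol), 3))
```

With a static parameter, both markets would face the same collateral requirement. Here the turbulent series demands several times more margin than the calm one, and the cap keeps the requirement from exceeding full collateralization.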

Glossary

Off-Chain Identity

Off-Chain State Channels

Zero-Latency Data Processing

Cross-Chain Data Bridges

Off-Chain State Machine

Off-Chain Bidding

Oracle Problem

On-Chain Settlement

Market Consensus