Essence

Data aggregation methods in decentralized finance (DeFi) options protocols represent the architectural mechanism for synthesizing disparate, fragmented market information into a single, reliable price feed. This process is essential for the accurate pricing, collateralization, and liquidation of derivative positions. In traditional finance, a centralized exchange acts as the primary source of truth for price discovery.

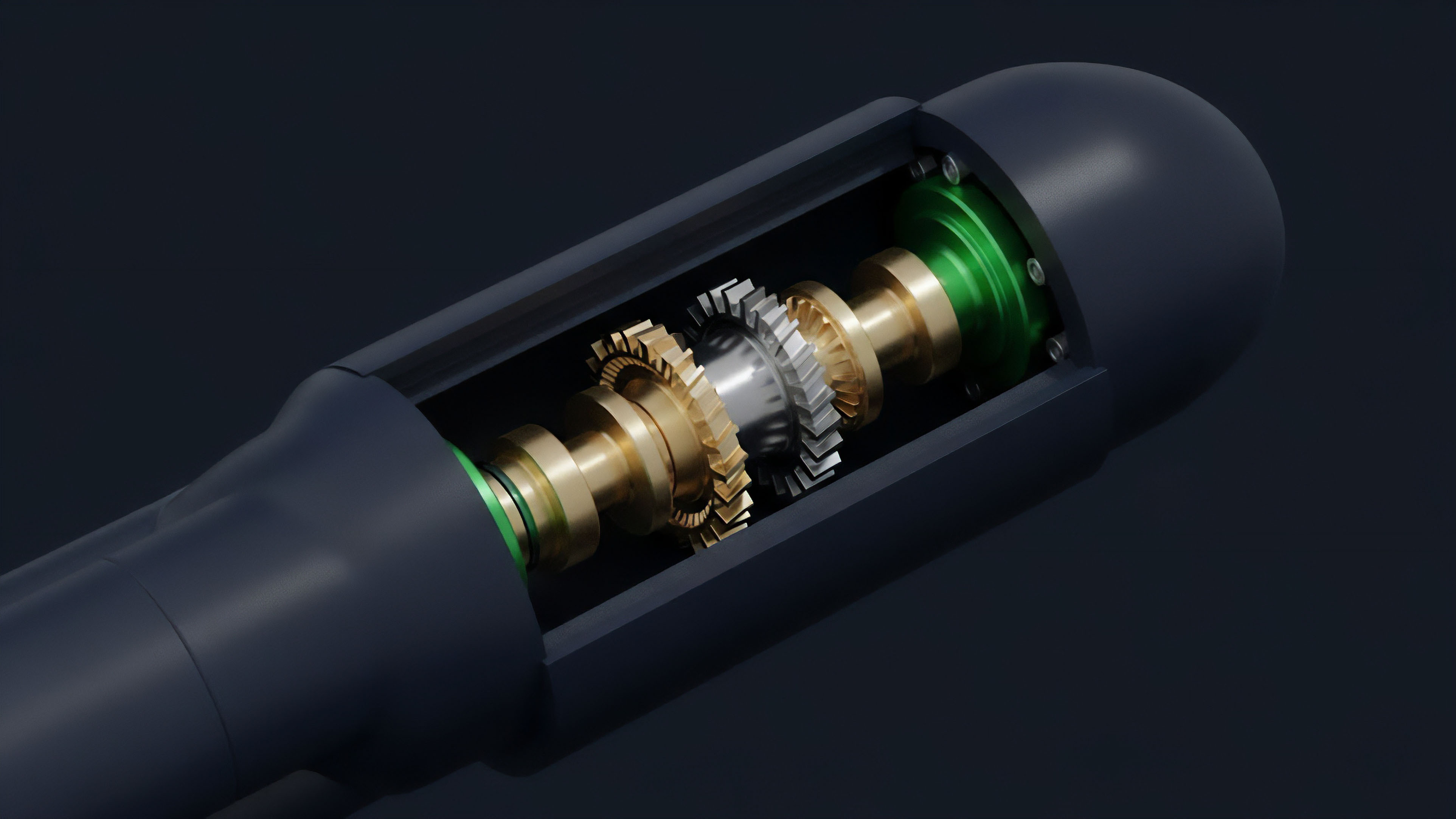

However, decentralized markets operate across numerous venues, both on-chain automated market makers (AMMs) and off-chain order books, creating a data landscape where no single source holds universal authority. The aggregation method determines how a protocol creates a “synthetic index price” from this chaotic data environment. The integrity of a protocol’s risk engine, particularly for perpetual options and exotic derivatives, hinges entirely on the robustness and manipulation resistance of its chosen aggregation methodology.

A failure in data aggregation is not simply an inaccuracy; it is a systemic vulnerability that can be exploited for profit by malicious actors, leading to cascading liquidations and protocol insolvency.

The core challenge lies in balancing latency and security. A price feed must be updated quickly enough to reflect real-time market movements, allowing for efficient trading and risk management. Yet, speed cannot compromise security.

Aggregation methods must incorporate mechanisms to filter out malicious data inputs, protect against flash loan attacks that temporarily distort spot prices on specific exchanges, and ensure that the final aggregated value accurately reflects the true market consensus rather than a temporary anomaly. This architectural choice is where protocol physics and quantitative finance converge.

Origin

The necessity for sophisticated data aggregation methods emerged from the limitations of early decentralized oracle designs.

The first generation of oracle solutions often relied on single-source data feeds or simple multi-source models without robust validation. These early designs proved susceptible to manipulation, particularly during periods of high market volatility or through specific exploits such as flash loan attacks. A single large trade on a specific on-chain AMM could temporarily skew its price, and if that AMM was a significant component of an oracle’s data source, the aggregated price would be corrupted.

This vulnerability was particularly pronounced in options protocols, where accurate spot prices are fundamental to setting strike ranges, calculating premiums, and determining collateral requirements. The inability to distinguish between genuine market movement and temporary price manipulation led to significant financial losses for protocols and users. This problem required a shift in perspective, moving from a simple data collection model to a data processing and validation model.

The evolution of aggregation methods in crypto options directly reflects the lessons learned from these early exploits. The industry recognized that simply having multiple data sources was insufficient; the method of combining those sources had to be resilient against adversarial behavior. The development of more advanced methods, such as volume-weighted averaging and median-based filtering, was a direct response to the game theory of manipulation.

Protocols realized they needed to design aggregation methods where the cost of corrupting the aggregated price exceeded the potential profit from doing so. This led to the creation of hybrid systems that combine on-chain data with off-chain, signed data from reputable centralized exchanges, leveraging the strengths of both systems while mitigating their individual weaknesses.

Theory

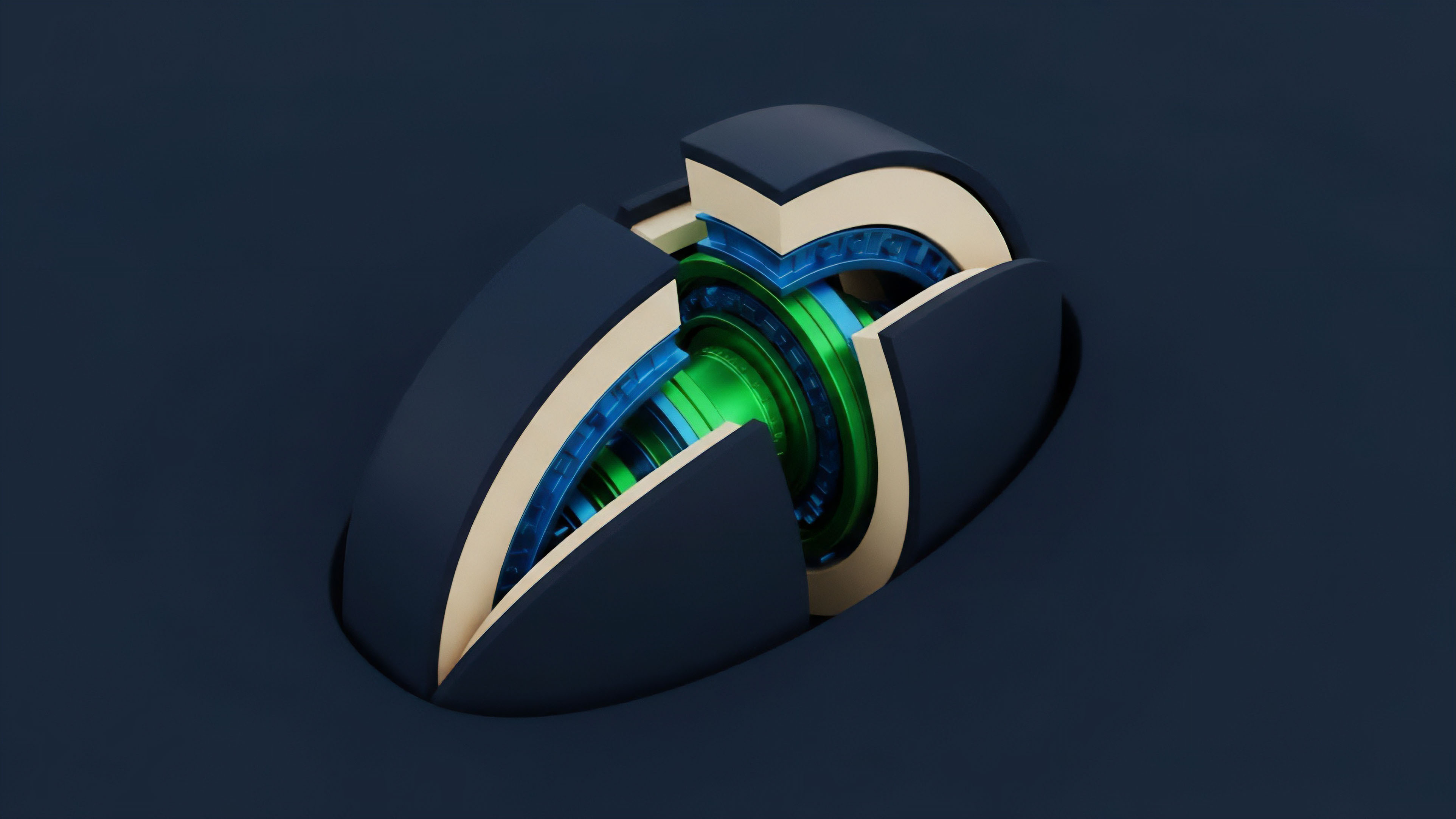

The theoretical foundation of data aggregation for derivatives protocols rests on robust statistical methods and game-theoretic incentive design.

The objective is to construct an index price that minimizes variance and maximizes manipulation resistance. The core methodologies typically fall into several categories, each with distinct trade-offs regarding latency, capital efficiency, and security.

Median Price Aggregation

One of the simplest and most common methods is median pricing: collect data from a set of diverse sources and take the middle value. The advantage of median aggregation is its inherent resistance to outliers.

If a single data source, or even a minority (fewer than half) of sources, reports a manipulated price, the median stays within the range of the honest reports, so the attacker cannot drag it arbitrarily far. The median calculation effectively filters out malicious data points without requiring complex statistical analysis or a consensus mechanism. However, because the median follows the majority, it can be slow to react to genuine market movements when many sources are reporting stale data, potentially hindering efficient risk management during high-velocity events.
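A minimal sketch of median aggregation in Python follows. It assumes each source delivers one price per round; the source names and prices are hypothetical. It shows that a single distorted report can only shift the median to a neighbouring honest value.

```python
from statistics import median


def median_price(reports: dict[str, float]) -> float:
    """Aggregate per-source price reports by taking their median."""
    if not reports:
        raise ValueError("no price reports to aggregate")
    return median(reports.values())


# Hypothetical reports from five sources (names are placeholders).
honest = {"cex_a": 2001.0, "cex_b": 1999.5, "dex_a": 2000.5, "dex_b": 2000.0, "cex_c": 1998.0}
# The same round after one source is pushed to a distorted price.
manipulated = dict(honest, dex_b=2600.0)

print(median_price(honest))       # 2000.0
print(median_price(manipulated))  # 2000.5, still inside the honest range
```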

Time-Weighted Average Price (TWAP)

For protocols where manipulation resistance over short time frames is paramount, a Time-Weighted Average Price (TWAP) calculation is often employed. A TWAP calculates the average price of an asset over a specified time window, weighting each observed price by the length of time it prevailed. This makes manipulation significantly more expensive. To move the average meaningfully, an attacker must hold the distorted price for a substantial fraction of the window, rather than for a single block or transaction.

While highly effective against flash loan attacks and short-term manipulation, a TWAP feed introduces latency, meaning the price used for liquidations may not reflect the current spot price. This latency can be problematic for options protocols where accurate real-time pricing is necessary for calculating volatility surfaces and option premiums.
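The time weighting can be sketched as follows. The sketch assumes prices arrive as timestamped observations and that each price holds until the next one, forming a step function. The `twap` function and the numbers in the example are illustrative, not any particular oracle's implementation.

```python
def twap(observations: list[tuple[float, float]], start: float, end: float) -> float:
    """Time-weighted average price over the window [start, end].

    Each (timestamp, price) observation is assumed to hold until the next
    observation. Observations must be sorted by timestamp, and the first
    one must be at or before `start`.
    """
    if end <= start:
        raise ValueError("window must have positive length")
    if not observations or observations[0][0] > start:
        raise ValueError("need an observation at or before the window start")
    weighted_sum = 0.0
    for i, (t, price) in enumerate(observations):
        # Interval during which this price prevailed, clipped to the window.
        next_t = observations[i + 1][0] if i + 1 < len(observations) else end
        seg_start = max(t, start)
        seg_end = min(next_t, end)
        if seg_end > seg_start:
            weighted_sum += price * (seg_end - seg_start)
    return weighted_sum / (end - start)


# A 30-minute window with a 60-second spike from 2000 to 2600.
obs = [(0, 2000.0), (900, 2600.0), (960, 2000.0)]
print(twap(obs, 0, 1800))  # 2020.0
```

In the example, the spike moves the spot price by 30% but the 30-minute TWAP by only 1%. That gap is both the protection against flash loan distortion and the source of the lag described above.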

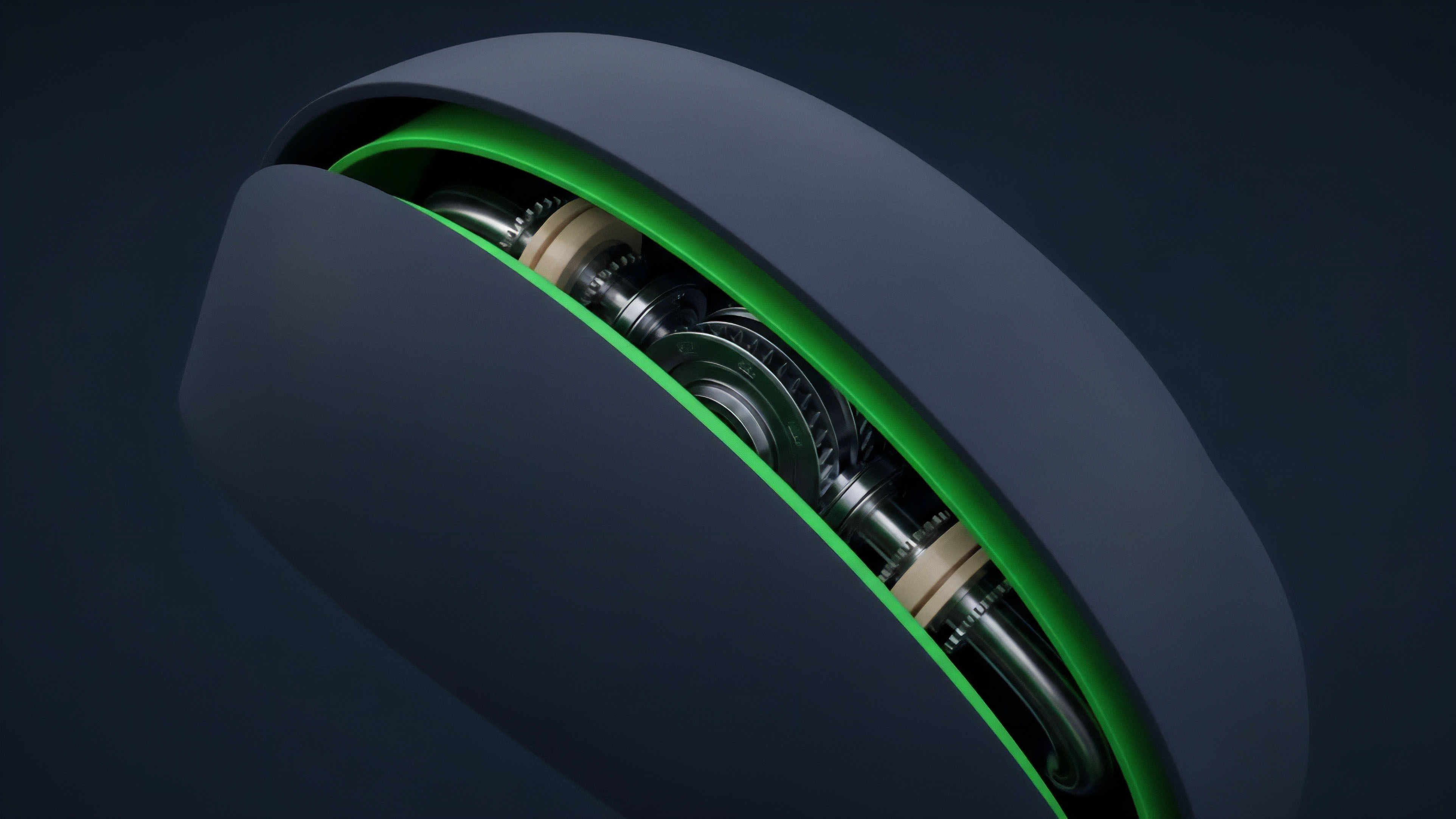

Volume-Weighted Average Price (VWAP)

A more sophisticated method is the Volume-Weighted Average Price (VWAP), which weights each data source based on its reported trading volume. The rationale here is that sources with higher liquidity and larger trading volumes are more difficult to manipulate and therefore represent a more accurate reflection of the true market price. VWAP aggregation places greater emphasis on data from major centralized exchanges and large on-chain AMMs.

The challenge with VWAP is the potential for data providers to falsify volume metrics, or for a protocol to rely too heavily on a single source that, while high volume, could still be compromised. The implementation of VWAP requires careful design to prevent sybil-style attacks in which an attacker creates artificial volume, for example through wash trading, across multiple sources.
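A sketch of volume weighting across sources follows. It assumes each source reports a price and a trading volume for the same interval; the figures are hypothetical. The second call illustrates the volume-spoofing weakness: a source that inflates its reported volume captures most of the weight.

```python
def volume_weighted_price(quotes: list[tuple[float, float]]) -> float:
    """Volume-weighted average of (price, volume) pairs, one pair per source."""
    total_volume = sum(volume for _, volume in quotes)
    if total_volume <= 0:
        raise ValueError("total reported volume must be positive")
    return sum(price * volume for price, volume in quotes) / total_volume


# Hypothetical (price, reported volume) pairs from three sources.
quotes = [(2000.0, 500.0), (2002.0, 300.0), (1990.0, 200.0)]
print(volume_weighted_price(quotes))  # 1998.6

# A fourth source reports a distorted price backed by wash-traded volume.
spoofed = quotes + [(2600.0, 4000.0)]
print(volume_weighted_price(spoofed))  # 2479.72
```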

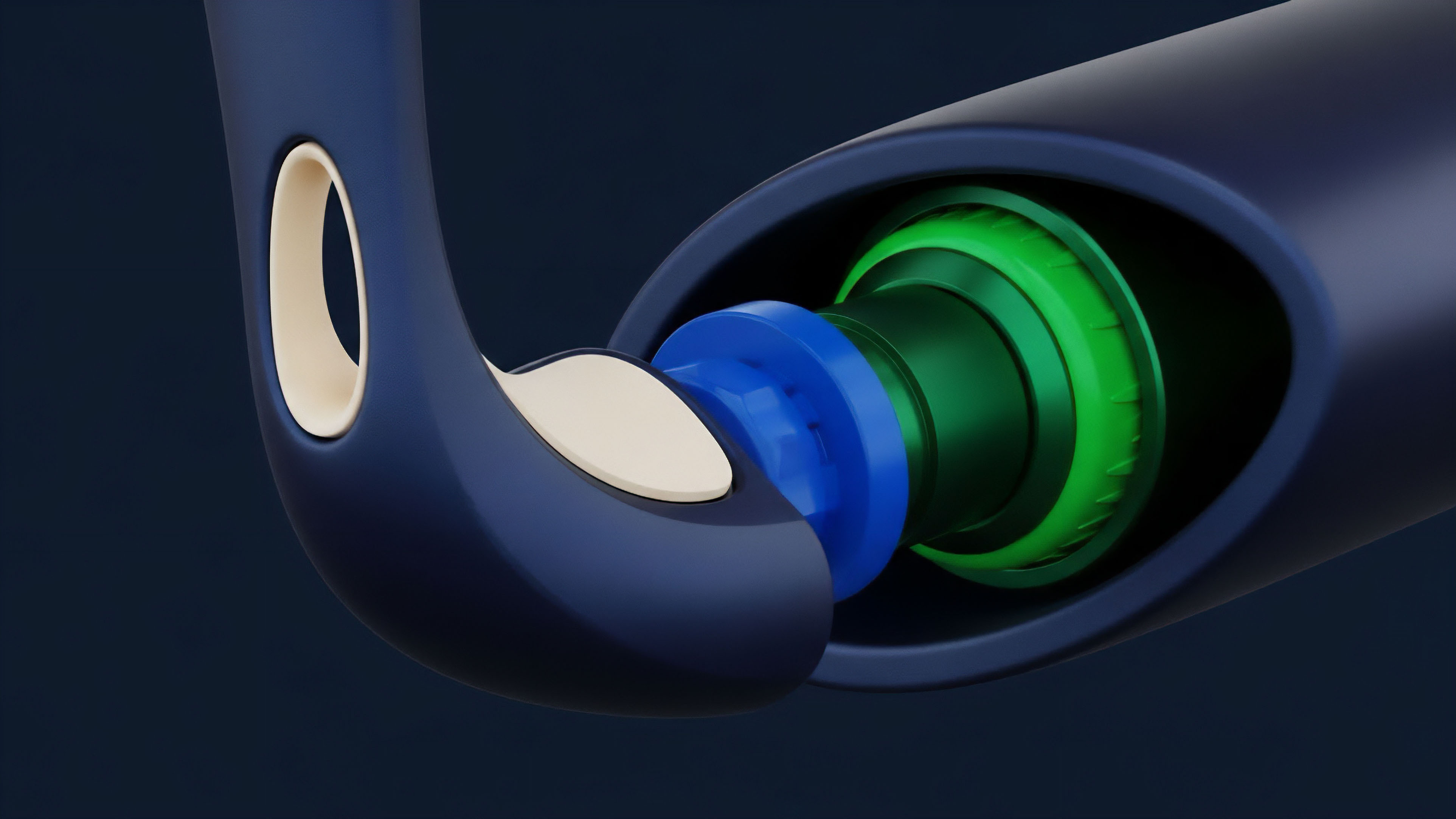

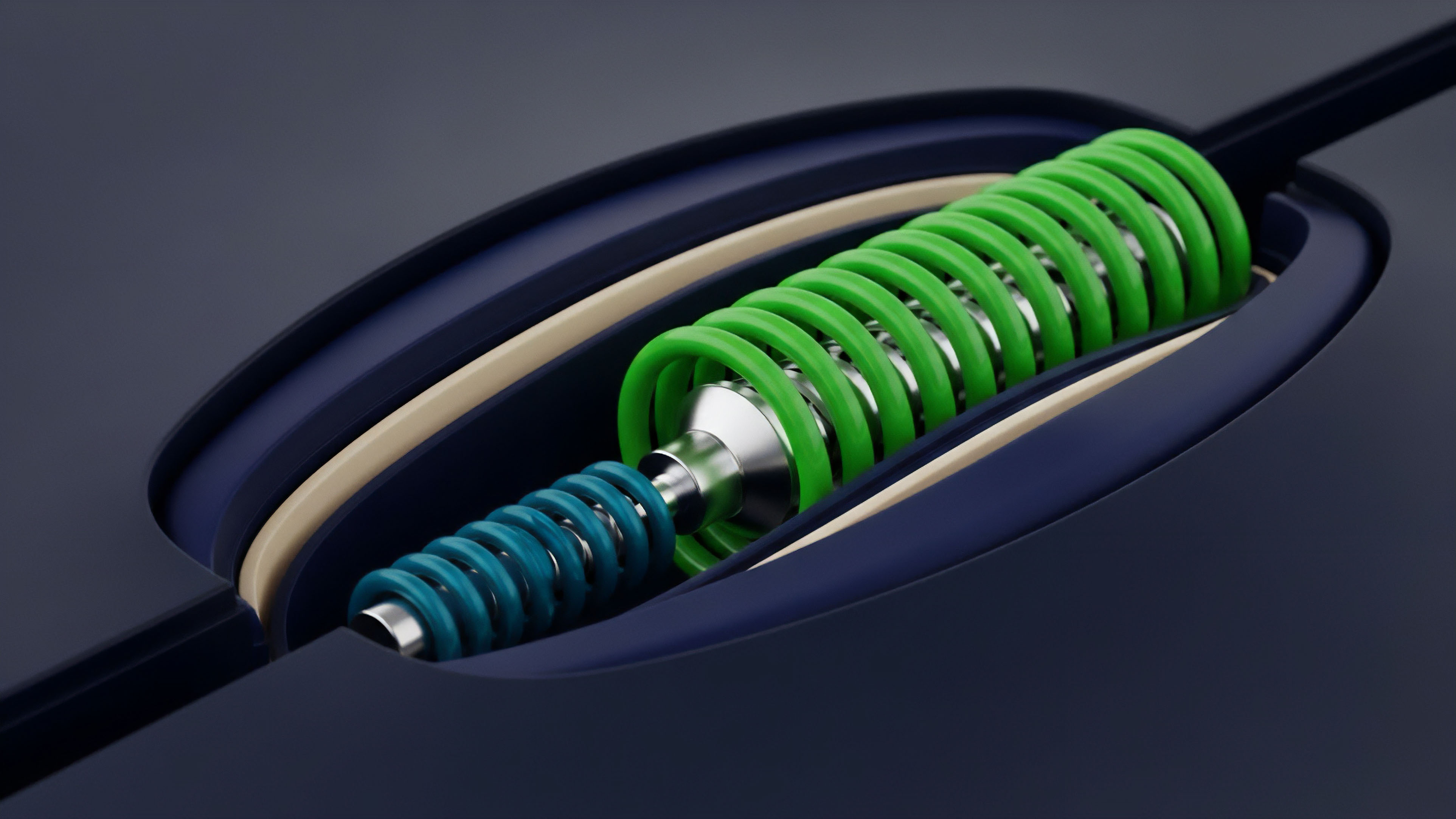

Hybrid Aggregation and Outlier Detection

Modern protocols often use a hybrid approach combining multiple methodologies. This typically involves a core aggregation method (like medianization) coupled with advanced outlier detection. The outlier detection mechanism identifies data points that lie more than a predetermined number of standard deviations from the aggregated price.

These outliers are then discarded, and a new calculation is performed. This approach balances the robustness of medianization with the responsiveness of a dynamic filtering system.
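A sketch of median aggregation with outlier filtering and recalculation follows. It makes one substitution: it does not use a plain standard deviation, because that measure is inflated by the very outliers it is meant to catch. Instead, it estimates the spread from the median absolute deviation (MAD) scaled by 1.4826, a common robust stand-in for the standard deviation under roughly normal data. The threshold `k` and the prices are assumptions for illustration.

```python
from statistics import median

# Scaling factor that makes the MAD estimate the standard deviation
# when the underlying data are normally distributed.
MAD_TO_SIGMA = 1.4826


def filtered_median(prices: list[float], k: float = 3.0) -> float:
    """Median of the prices within k robust standard deviations of the
    initial median; outliers are discarded and the median recomputed."""
    if not prices:
        raise ValueError("no prices to aggregate")
    center = median(prices)
    sigma = MAD_TO_SIGMA * median(abs(p - center) for p in prices)
    if sigma == 0:
        # At least half the reports sit exactly on the median; nothing to filter.
        return center
    kept = [p for p in prices if abs(p - center) <= k * sigma]
    return median(kept)


# Hypothetical round: five honest sources and two colluding manipulated ones.
prices = [2001.0, 1999.5, 2000.5, 2000.0, 1998.0, 2600.0, 2590.0]
print(median(prices))           # 2000.5 (plain median)
print(filtered_median(prices))  # 2000.0 (after discarding the two outliers)
```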

| Aggregation Method | Manipulation Resistance | Latency Trade-off | Typical Use Case |
|---|---|---|---|
| Median Price | High against single-source manipulation | Low to moderate; slow to react to genuine spikes | Low-frequency risk management, collateral checks |
| Time-Weighted Average Price (TWAP) | High against short-term manipulation | High; significant lag behind real-time price | Settlement, long-term collateral value calculation |
| Volume-Weighted Average Price (VWAP) | Moderate; susceptible to volume spoofing | Low; reflects real-time market activity | High-frequency trading, real-time pricing models |

Approach

The implementation of data aggregation methods in crypto options protocols requires careful consideration of both the on-chain and off-chain environments. The primary objective is to create a price feed that is resistant to manipulation while remaining responsive enough for the specific derivative product being offered. The practical approach involves a combination of data source selection, aggregation logic, and incentive design for data providers.

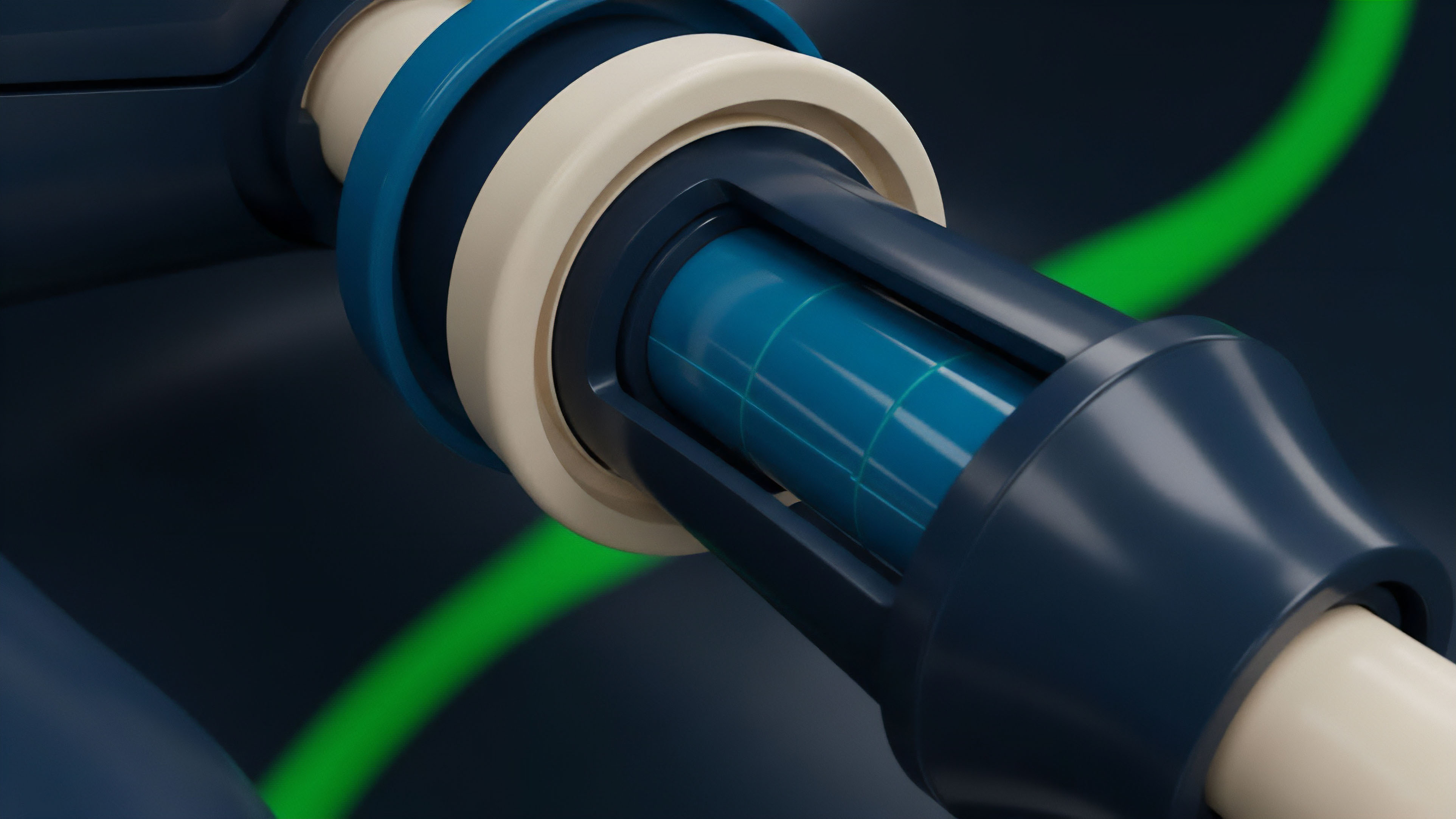

Source Selection and Data Normalization

The first step in building a robust aggregation method is carefully selecting a diverse set of data sources. A common strategy involves using a mix of centralized exchanges (CEXs) and decentralized exchanges (DEXs). CEXs offer deep liquidity and high trading volumes, making their data difficult to manipulate.

DEXs provide on-chain data that is transparent and verifiable. The challenge here is data normalization. Prices across exchanges often differ because of reporting latency, liquidity depth, and the quote asset used (for example, USD versus a stablecoin such as USDT). The aggregation method must account for these discrepancies to produce a meaningful index price.
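A sketch of a normalization step follows. It assumes each quote carries its quote asset and a timestamp. The `Quote` type, the conversion rates, and the 30-second staleness limit are hypothetical, and the stablecoin rates would themselves need a trusted source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    source: str
    price: float      # price of the base asset, in units of quote_asset
    quote_asset: str  # e.g. "USD", "USDT", "USDC"
    timestamp: float  # seconds since the epoch


def normalize(quotes: list[Quote], usd_rates: dict[str, float],
              now: float, max_age: float) -> dict[str, float]:
    """Convert fresh quotes to USD; drop stale quotes and unknown quote assets."""
    normalized: dict[str, float] = {}
    for q in quotes:
        if now - q.timestamp > max_age:
            continue  # too old to reflect the current market
        rate = usd_rates.get(q.quote_asset)
        if rate is None:
            continue  # no way to express this quote in USD
        normalized[q.source] = q.price * rate
    return normalized


# Hypothetical conversion rates and quotes.
USD_RATES = {"USD": 1.0, "USDT": 0.999, "USDC": 1.0}
quotes = [
    Quote("cex_a", 2000.0, "USD", timestamp=100.0),
    Quote("cex_b", 2002.0, "USDT", timestamp=98.0),
    Quote("dex_a", 2000.0, "USDC", timestamp=40.0),
]
print(normalize(quotes, USD_RATES, now=100.0, max_age=30.0))
# cex_a and cex_b survive (cex_b is converted at 0.999); dex_a is dropped as stale
```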

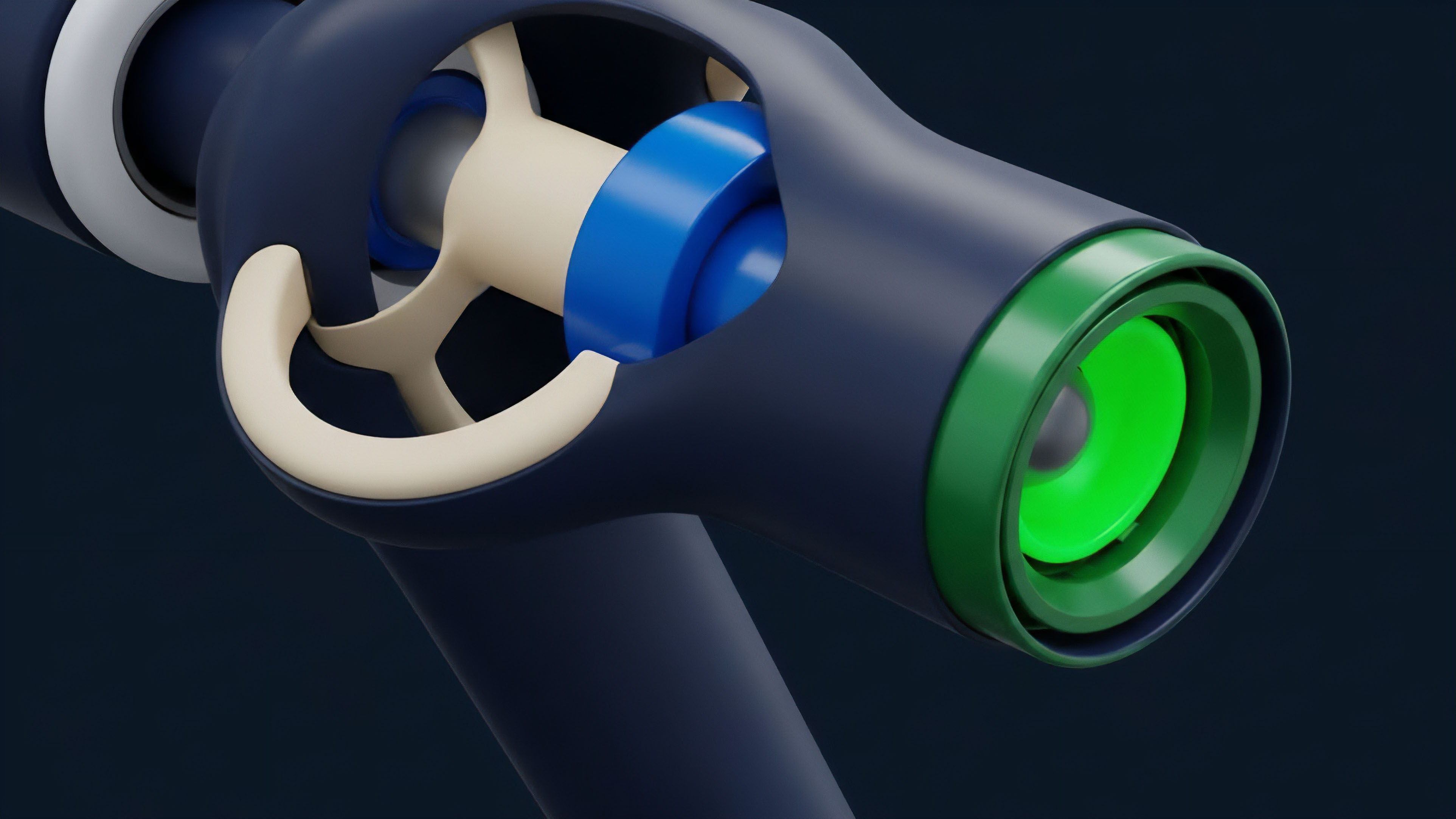

Risk-Based Aggregation Logic

Protocols often employ different aggregation methods for different risk parameters. For example, a protocol might use a high-latency, highly secure TWAP for calculating collateral value and liquidations, where a short-term price spike should not trigger an immediate cascade. Conversely, a lower-latency median price feed might be used for real-time options pricing and premium calculations, where responsiveness to market movements is more critical for efficient trading.
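The split described above can be sketched as a small routing table. The sketch assumes evenly spaced price samples, so that the TWAP reduces to an arithmetic mean, and a set of latest per-source reports. The feed names and figures are hypothetical.

```python
from statistics import fmean, median


def liquidation_feed(samples: list[float]) -> float:
    """Slow, manipulation-resistant feed: a TWAP over evenly spaced samples.

    With equal spacing, the time-weighted average is the plain mean.
    """
    return fmean(samples)


def premium_feed(latest_reports: list[float]) -> float:
    """Fast feed for option pricing: median of the latest per-source reports."""
    return median(latest_reports)


# Hypothetical routing of risk parameters to aggregation methods.
FEEDS = {"liquidation": liquidation_feed, "premium": premium_feed}

# Thirty one-minute samples; the price spikes only in the final minute.
history = [2000.0] * 29 + [2600.0]
latest = [2590.0, 2600.0, 2610.0]

print(FEEDS["liquidation"](history))  # 2020.0
print(FEEDS["premium"](latest))       # 2600.0
```

Here the one-minute spike moves the premium feed immediately, while the liquidation feed shifts by only 1%. A transient move therefore does not trip collateral checks.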

Incentive Structures and Provider Staking

The game theory of data aggregation relies on incentivizing data providers to submit honest data. Protocols achieve this by requiring data providers to stake collateral. If a provider submits data that deviates significantly from the aggregated median price (or beyond a specified standard-deviation threshold), their stake is slashed.

This mechanism is designed to ensure that the cost of submitting malicious data outweighs the potential profit from doing so.
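A sketch of deviation-based slashing follows. It assumes a 2% tolerance around the round's median and a 10% slash; the provider names, stakes, and both parameters are hypothetical. In this model, honest reporting pays only if the expected slash exceeds what a provider could gain by moving the feed.

```python
from statistics import median


def settle_round(reports: dict[str, float], stakes: dict[str, float],
                 max_deviation: float = 0.02,
                 slash_fraction: float = 0.10) -> tuple[float, dict[str, float]]:
    """Aggregate one reporting round and slash providers that stray too far.

    A provider is slashed when |report - median| / median > max_deviation.
    Returns the median price and a new stakes mapping.
    """
    agg = median(reports.values())
    if agg <= 0:
        raise ValueError("aggregated price must be positive")
    new_stakes = dict(stakes)
    for provider, price in reports.items():
        if abs(price - agg) / agg > max_deviation:
            new_stakes[provider] = stakes[provider] * (1 - slash_fraction)
    return agg, new_stakes


reports = {"p1": 2000.0, "p2": 2001.0, "p3": 1999.0, "p4": 2300.0}
stakes = {name: 1000.0 for name in reports}
price, new_stakes = settle_round(reports, stakes)
print(price)       # 2000.5
print(new_stakes)  # p4 is slashed to 900.0; the others keep 1000.0
```

A tolerance that is too tight can also penalize honest providers who report first during a fast genuine move, so the threshold is itself a risk parameter.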

- Source Selection: Choose a diverse set of data sources, balancing high-liquidity centralized exchanges with transparent on-chain DEXs.

- Data Normalization: Adjust for differences in pricing and liquidity across sources to ensure a fair comparison.

- Outlier Filtering: Implement statistical methods to identify and remove data points that deviate significantly from the consensus.

- Weighted Averaging: Calculate the final price using methods like medianization or VWAP, prioritizing sources based on volume or reliability.

- Incentive Layer: Implement staking and slashing mechanisms to ensure data providers are financially penalized for submitting bad data.
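The five steps above can be chained into one function. This is a sketch under stated assumptions, not a reference design:

- Source selection is taken as already done.
- The conversion rates and the 2% deviation band are hypothetical.
- Outliers are measured relative to the median before the survivors are volume-weighted.

```python
from statistics import median


def aggregate(reports: dict[str, tuple[float, str, float]],
              usd_rates: dict[str, float],
              max_deviation: float = 0.02) -> tuple[float, list[str]]:
    """Run normalization, outlier filtering, and volume weighting in sequence.

    `reports` maps a source (already chosen by source selection) to
    (price, quote_asset, volume). Returns the index price and the sources
    flagged as outliers, which the incentive layer could then penalize.
    Prices are assumed to be positive.
    """
    # Data normalization: express every price in USD; skip unknown quote assets.
    normalized = {
        src: (price * usd_rates[asset], volume)
        for src, (price, asset, volume) in reports.items()
        if asset in usd_rates
    }
    if not normalized:
        raise ValueError("no usable reports")

    # Outlier filtering: drop sources too far from the median.
    center = median(price for price, _ in normalized.values())
    kept = {src: pv for src, pv in normalized.items()
            if abs(pv[0] - center) / center <= max_deviation}
    flagged = sorted(set(normalized) - set(kept))

    # Weighted averaging: volume-weight the surviving sources.
    total_volume = sum(volume for _, volume in kept.values())
    if total_volume <= 0:
        raise ValueError("no volume left after filtering")
    index_price = sum(p * v for p, v in kept.values()) / total_volume
    return index_price, flagged


reports = {
    "cex_a": (2000.0, "USD", 400.0),
    "cex_b": (2002.0, "USDT", 300.0),
    "dex_a": (1998.0, "USDC", 300.0),
    "dex_b": (2500.0, "USDC", 50.0),
}
price, flagged = aggregate(reports, {"USD": 1.0, "USDT": 0.999, "USDC": 1.0})
print(round(price, 4), flagged)  # 1999.3994 ['dex_b']
```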

Evolution

The evolution of data aggregation methods for options protocols reflects a shift from simple, reactive mechanisms toward increasingly complex, adaptive systems. Early aggregation methods focused primarily on simple statistical calculations to protect against single-source manipulation. However, as the DeFi space matured, a more sophisticated understanding of market microstructure emerged, revealing new vulnerabilities that required architectural responses.

The first major evolution was the move from simple averaging to medianization and outlier detection. This change recognized that the goal was not simply to find an average price, but to find a robust price that could withstand adversarial attempts to poison the data feed. The second evolution involved the integration of decentralized autonomous organizations (DAOs) and reputation systems for data providers.

This created a social layer of security where data sources were not simply trusted based on their size, but based on their history of accurate reporting and their adherence to protocol rules. More recently, the focus has shifted to aggregating not just spot prices, but also implied volatility data. Options protocols require a volatility surface to accurately price contracts, and this data is inherently more complex and difficult to aggregate than simple spot prices.

The current frontier involves creating decentralized volatility oracles that synthesize implied volatility data from multiple on-chain and off-chain sources. This requires advanced aggregation methods that account for different strike prices and maturities, creating a dynamic surface rather than a single price point.
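A minimal sketch of per-point surface aggregation follows. It assumes every venue quotes implied volatility on a shared grid of (expiry, strike) points; the venue names, grid, and `min_sources` rule are hypothetical. It takes the median at each grid point and omits points quoted by too few venues. It does not enforce no-arbitrage consistency across strikes or maturities, which a production surface would also need.

```python
from collections import defaultdict
from statistics import median

# A grid point on the surface: (expiry label, strike).
Point = tuple[str, float]


def aggregate_surface(sources: dict[str, dict[Point, float]],
                      min_sources: int = 2) -> dict[Point, float]:
    """Median implied volatility per grid point across venues.

    Points quoted by fewer than `min_sources` venues are left out rather
    than taken from a single venue.
    """
    quotes_by_point: dict[Point, list[float]] = defaultdict(list)
    for surface in sources.values():
        for point, iv in surface.items():
            quotes_by_point[point].append(iv)
    return {point: median(ivs) for point, ivs in quotes_by_point.items()
            if len(ivs) >= min_sources}


sources = {
    "venue_a": {("30d", 3000.0): 0.62, ("30d", 3500.0): 0.58},
    "venue_b": {("30d", 3000.0): 0.64, ("30d", 3500.0): 0.57},
    "venue_c": {("30d", 3000.0): 0.90, ("30d", 4000.0): 0.70},
}
surface = aggregate_surface(sources)
print(surface[("30d", 3000.0)])    # 0.64; venue_c's 0.90 does not move the median
print(("30d", 4000.0) in surface)  # False: only one venue quotes it
```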

| Phase of Evolution | Primary Aggregation Method | Key Challenge Addressed | Derivative Product Focus |
|---|---|---|---|
| Phase 1: Early DeFi (2019-2020) | Simple Averaging and Single Source Oracles | Basic price discovery, initial data availability | Simple swaps, basic lending |
| Phase 2: Robust Aggregation (2021-2022) | Medianization, TWAP, Outlier Detection | Flash loan attacks, data manipulation resistance | Perpetual swaps, vanilla options |
| Phase 3: Volatility Aggregation (2023-Present) | Hybrid models, volatility surface construction | Accurate options pricing, volatility arbitrage | Exotic options, structured products |

Horizon

Looking ahead, the next generation of data aggregation methods for crypto options will move beyond simple price feeds and toward predictive, volatility-aware systems. The current model, where protocols react to price changes, will be superseded by systems that proactively model market risk and volatility skew. This involves creating decentralized oracles that aggregate implied volatility surfaces from multiple options markets.

This shift is essential for enabling sophisticated options strategies and structured products that require accurate, real-time volatility data. A key development on the horizon is the move toward “on-chain volatility surfaces” where data aggregation methods are designed to synthesize implied volatility data directly on the blockchain. This will allow for the creation of new derivative instruments that reference specific volatility indexes rather than just spot prices.

The challenge here is the computational cost of aggregating complex volatility data on-chain. We will see the rise of hybrid systems that perform intensive calculations off-chain and then submit a verifiable, aggregated result on-chain using zero-knowledge proofs. The regulatory environment will also play a role in shaping future aggregation methods.

As decentralized derivatives protocols attract institutional capital, the demand for transparent, auditable, and compliant data feeds will increase. This will likely lead to greater standardization of aggregation methods and increased scrutiny on the data sources used by protocols. The future of data aggregation is not simply about finding the right price; it is about building a robust, verifiable, and predictive infrastructure that can support a new generation of complex financial instruments.

The ultimate goal for a derivative systems architect is to build an aggregation method that is not just secure against today’s attacks, but also anticipates future attack vectors. This requires continuous iteration on the incentive structures for data providers, ensuring that the cost of manipulation remains high even as protocols scale. The challenge of data aggregation is a perpetual game of cat and mouse between protocol designers and adversarial market participants.
