Essence

Data source verification for crypto options is the mechanism that ensures the integrity and reliability of external market data used for contract settlement. The core challenge lies in resolving the oracle problem, which asks how a deterministic, trustless smart contract can access real-world information without introducing a single point of failure. In derivatives, this is not an abstract technicality; it is the fundamental vulnerability of the entire system.

An option contract’s value and its ultimate payout are contingent upon a precise, agreed-upon price at expiration. If the price feed for the underlying asset can be manipulated or compromised, the financial integrity of the derivative itself collapses. The verification process, therefore, extends beyond simple data delivery; it includes cryptographic proofs, economic incentive structures, and consensus mechanisms designed to make data corruption prohibitively expensive for an attacker.
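To make this dependency concrete, the following minimal sketch settles a cash-settled European call against a reported expiry price. The strike, the prices, the position size, and the function name `call_payoff` are illustrative assumptions and are not taken from any particular protocol.

```python
def call_payoff(settlement_price: float, strike: float, contracts: float = 1.0) -> float:
    """Cash payoff of a European call at expiry: max(S - K, 0) per contract."""
    return max(settlement_price - strike, 0.0) * contracts


strike = 2000.0          # hypothetical strike price
true_price = 1990.0      # hypothetical fair market price at expiry
reported_price = 2030.0  # hypothetical feed value after a roughly 2% manipulation

# With an honest feed the call expires out of the money.
honest_payout = call_payoff(true_price, strike, contracts=100)

# With the manipulated feed the same position is settled in the money.
manipulated_payout = call_payoff(reported_price, strike, contracts=100)

print(honest_payout, manipulated_payout)
```

In this example a distortion of about 2% in the settlement price turns a worthless position into a payout of 3,000 units, which is why the settlement feed is the natural target for an attacker.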

The design of this verification layer dictates the systemic risk profile of the options protocol, determining whether it can withstand sophisticated market manipulation or flash loan attacks.

Data source verification is the process of cryptographically and economically securing external price feeds to ensure the integrity of derivatives settlement in decentralized protocols.

Origin

The necessity for robust data source verification originates from the fundamental architectural constraints of decentralized finance. Traditional finance (TradFi) relies on centralized clearing houses and regulated exchanges, which act as trusted data providers. These entities have legal obligations and significant financial resources, making them reliable sources of truth for price discovery and settlement.

The advent of smart contracts introduced a system where trust is minimized and code executes automatically. However, smart contracts are inherently isolated from the outside world; they cannot natively query external databases or APIs. This created a chasm between the on-chain logic of a derivatives contract and the off-chain reality of market prices.

Early attempts at decentralized options protocols often relied on simple, single-source oracles, which quickly proved vulnerable to manipulation. The realization that the security of the derivative contract was only as strong as its weakest link, the data feed, forced the industry to prioritize the development of economically secure verification layers. This shift marked the transition from building simple financial instruments on-chain to building resilient financial systems that can securely interface with the outside world.

Theory

The theoretical foundation of data source verification for derivatives rests heavily on game theory and economic security modeling. The objective is to design a system where rational, self-interested participants are incentivized to report accurate data and penalized for reporting false data. This framework often utilizes a Schelling point mechanism, where participants converge on a common answer because they expect others to do the same.

The core mechanisms for achieving this theoretical ideal include:

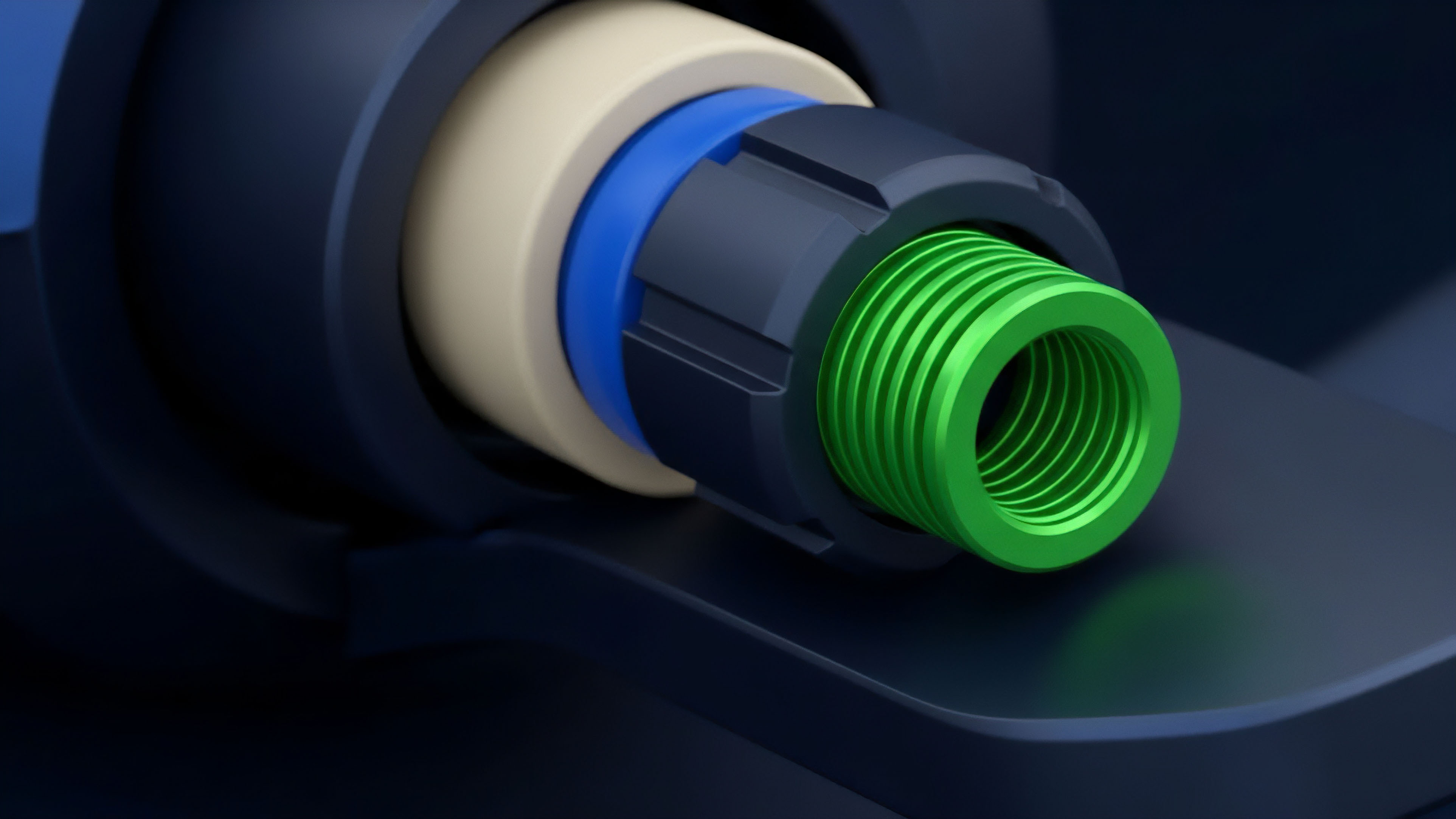

- Staking and Collateralization: Data providers are required to stake collateral, which can be slashed if they submit inaccurate data. The size of the collateral must be greater than the potential profit from manipulating the data feed, creating an economic disincentive for malicious behavior.

- Reputation Systems: Providers establish a track record of accurate reporting over time. This reputation is often used to weight their influence in the data aggregation process, rewarding reliable behavior and diminishing the impact of new or unproven actors.

- Decentralized Aggregation: Instead of relying on a single source, protocols aggregate data from multiple independent sources. The system then takes the median or a weighted average of these reports. With a median, an attacker must compromise a majority of the independent sources simultaneously to move the result, which significantly increases the cost of attack. A plain weighted average offers weaker protection, because a single extreme report can still shift it unless outliers are filtered first.
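The three mechanisms above can be sketched together in a few lines. This is a simplified illustration under stated assumptions, not the design of any specific oracle network: the `Report` type, the 1% slashing tolerance, and the stake amounts are all hypothetical, and the attack check assumes an attacker would corrupt the providers with the smallest stakes first.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Report:
    provider: str
    price: float
    stake: float  # collateral at risk, in the same unit as attacker profit


def aggregate(reports: list[Report]) -> float:
    """Median of the reported prices; a minority of outliers cannot move it far."""
    return median(r.price for r in reports)


def slashable(reports: list[Report], tolerance: float = 0.01) -> list[str]:
    """Providers whose report deviates from the median by more than `tolerance`."""
    ref = aggregate(reports)
    return [r.provider for r in reports if abs(r.price - ref) / ref > tolerance]


def attack_is_unprofitable(reports: list[Report], attacker_profit: float) -> bool:
    """Compare the stake an attacker would forfeit with the profit from a false price.

    Moving the median arbitrarily requires controlling (n + 1) // 2 reports,
    which is a strict majority when n is odd. The cheapest way to do that is
    assumed to be corrupting the providers with the smallest stakes.
    """
    needed = (len(reports) + 1) // 2
    cheapest_stakes = sorted(r.stake for r in reports)[:needed]
    return sum(cheapest_stakes) > attacker_profit


reports = [
    Report("a", 2000.0, 50_000),
    Report("b", 2001.0, 50_000),
    Report("c", 1999.5, 50_000),
    Report("d", 2000.5, 50_000),
    Report("e", 2600.0, 50_000),  # a single dishonest or faulty provider
]

print(aggregate(reports))                        # median ignores the outlier
print(slashable(reports))                        # only the outlier is penalized
print(attack_is_unprofitable(reports, 100_000))  # stake at risk exceeds profit
print(attack_is_unprofitable(reports, 200_000))  # profit exceeds stake at risk
```

The last two calls show why collateral has to be sized against the value the feed secures: the same provider set is safe when a manipulation would earn 100,000 but not when it would earn 200,000.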

The design of these incentive structures must account for the specific characteristics of derivatives. For options, a flash crash or a rapid spike in volatility can create opportunities for manipulation during the short settlement window. A well-designed verification system must ensure data freshness while maintaining security, a difficult trade-off that requires careful calibration of update frequencies and dispute resolution mechanisms.

Approach

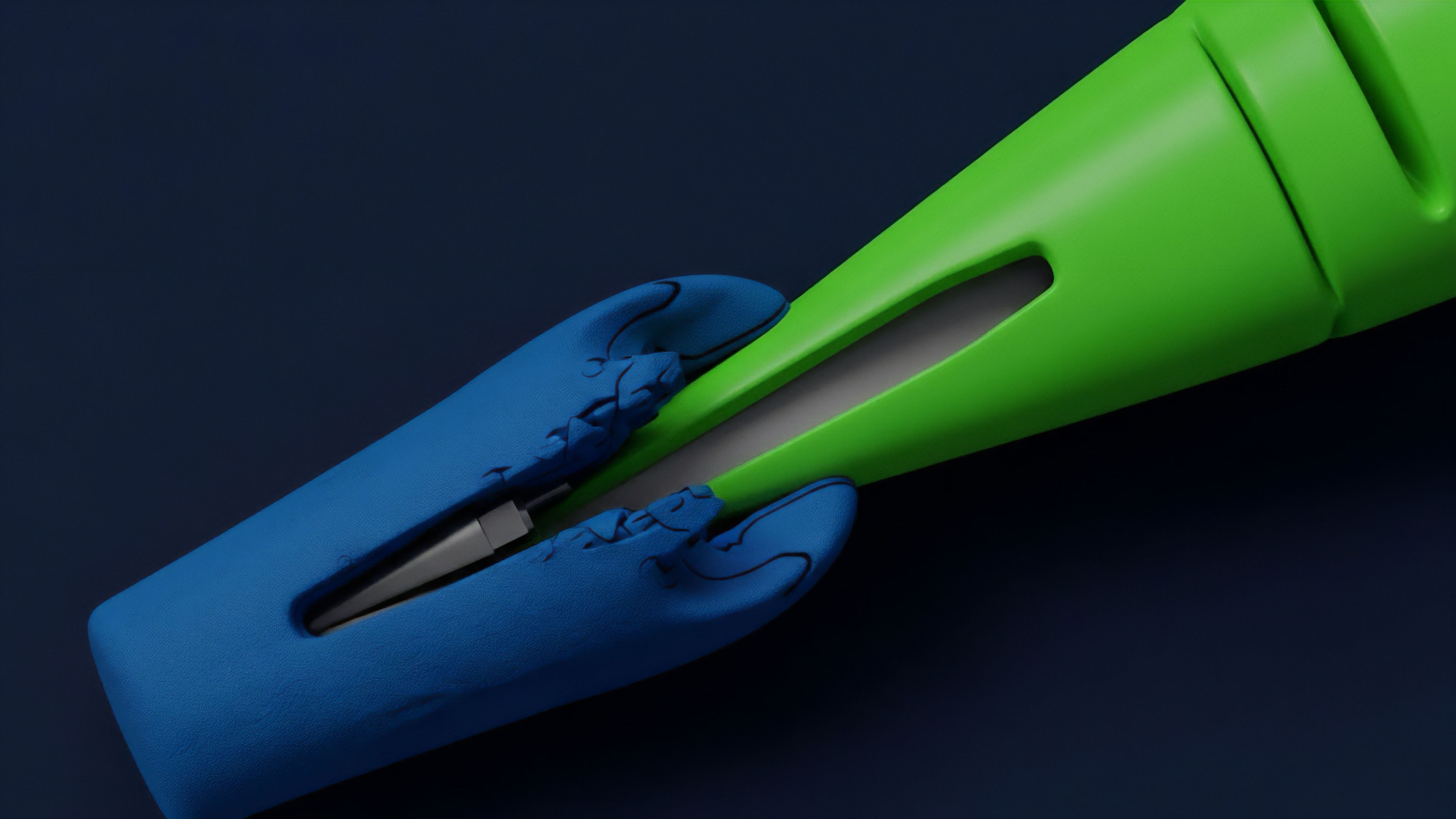

The practical implementation of data source verification in crypto options protocols typically involves a multi-layered approach to mitigate risk. The current standard relies on decentralized oracle networks that aggregate data from numerous sources. The process begins with data collection from off-chain exchanges, which is then passed through a verification layer before being broadcast on-chain.

The verification process often follows this general structure:

- Data Ingestion: Data providers (nodes) collect price information from various centralized and decentralized exchanges.

- Off-Chain Aggregation: The collected data is aggregated by the oracle network, often using a median or time-weighted average price (TWAP) calculation. This reduces the impact of single-exchange outliers.

- On-Chain Validation: The aggregated data is submitted to the smart contract, where a verification process checks for consistency, freshness, and adherence to predefined parameters.

- Dispute Resolution: If a submitted price falls outside a predefined range, a dispute mechanism is triggered, allowing other participants to challenge the data before it is finalized for settlement.
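The four stages above can be sketched as a small pipeline. The thresholds (`MAX_AGE_S`, `MAX_DEVIATION`, `MIN_SOURCES`, `DISPUTE_WINDOW_S`), the `Submission` type, and the status strings are assumptions chosen for illustration; real protocols set their own parameters and run the validation step inside a smart contract rather than in Python.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Submission:
    price: float
    timestamp: int    # unix seconds at which the quotes were observed
    num_sources: int


# Hypothetical parameters, for illustration only.
MAX_AGE_S = 60          # freshness bound
MAX_DEVIATION = 0.05    # allowed move relative to the last accepted price
MIN_SOURCES = 3         # minimum number of independent quotes
DISPUTE_WINDOW_S = 300  # time during which a flagged price can be challenged


def ingest_and_aggregate(quotes: dict[str, float], now: int) -> Submission:
    """Data ingestion plus off-chain aggregation: median of the venue quotes."""
    return Submission(price=median(quotes.values()), timestamp=now, num_sources=len(quotes))


def validate(sub: Submission, last_price: float, now: int) -> str:
    """Stand-in for on-chain validation: freshness, source count, deviation bound."""
    if now - sub.timestamp > MAX_AGE_S:
        return "rejected: stale"
    if sub.num_sources < MIN_SOURCES:
        return "rejected: too few sources"
    if abs(sub.price - last_price) / last_price > MAX_DEVIATION:
        return "disputed"  # out of range: open a challenge window
    return "accepted"


def finalizable(status: str, submitted_at: int, now: int, challenged: bool = False) -> bool:
    """A disputed price settles only if nobody challenges it within the window."""
    if status == "accepted":
        return True
    if status == "disputed":
        return not challenged and now - submitted_at >= DISPUTE_WINDOW_S
    return False


now = 1_700_000_000
sub = ingest_and_aggregate({"cex_a": 2001.0, "cex_b": 1999.0, "dex_c": 2000.0}, now)

print(validate(sub, last_price=1990.0, now=now + 10))   # small move, fresh data
print(validate(sub, last_price=1800.0, now=now + 10))   # large move triggers a dispute
print(validate(sub, last_price=1990.0, now=now + 120))  # too old to use
```

Keeping the deviation check separate from outright rejection reflects the dispute step in the list: a large move may be genuine, so it is held for challenge rather than discarded.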

A critical consideration for derivatives is the trade-off between data latency and security. High-frequency options trading requires low-latency data, but a feed that tracks every new price observation closely is more exposed to flash loan attacks, in which an attacker uses borrowed capital to distort the price at a single source for a brief period, often within one transaction, and executes a profitable trade before the price reverts. Protocols must carefully balance the need for timely data with the need for sufficient time to verify and finalize the price, especially during high-volatility events.
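A time-weighted average price illustrates both sides of this trade-off. The sketch below assumes one price observation per 12-second block over a 600-second window; the block time, window length, and prices are hypothetical values chosen to keep the arithmetic simple.

```python
def twap(observations: list[tuple[int, float]], window_end: int) -> float:
    """Time-weighted average price.

    `observations` is a list of (timestamp, price) pairs sorted by time.
    Each price is assumed to hold until the next observation, and the last
    one holds until `window_end`.
    """
    ends = [t for t, _ in observations[1:]] + [window_end]
    weighted = sum(p * (t_end - t) for (t, p), t_end in zip(observations, ends))
    return weighted / (window_end - observations[0][0])


BLOCK_S = 12  # assumed block time in seconds


def as_observations(prices: list[float]) -> list[tuple[int, float]]:
    return [(i * BLOCK_S, p) for i, p in enumerate(prices)]


# Case 1: a one-block manipulation, 2000 -> 3000 -> 2000.
spiked = [2000.0] * 50
spiked[25] = 3000.0
twap_under_attack = twap(as_observations(spiked), window_end=50 * BLOCK_S)

# Case 2: a genuine move to 3000 during the last 10 blocks of the window.
genuine = [2000.0] * 40 + [3000.0] * 10
twap_after_real_move = twap(as_observations(genuine), window_end=50 * BLOCK_S)

print(twap_under_attack)     # spot read 3000 during the attack; the TWAP barely moves
print(twap_after_real_move)  # the TWAP still lags far behind the new spot price
```

In the first case a 50% spike in the spot price shifts the averaged price by only 1%, which blunts a single-block attack. In the second case the same smoothing leaves the reported price at 2,200 while the market trades at 3,000, which is the latency cost a protocol accepts in exchange for that protection.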

Evolution

Data source verification has evolved significantly, moving from rudimentary single-source solutions to highly resilient, multi-layered systems. Early protocols often used a simple, single oracle or relied on a small, permissioned set of nodes. These systems were prone to manipulation, as demonstrated by early exploits where attackers manipulated a single exchange price to liquidate positions on a derivatives platform.

The progression has centered on increasing the cost of attack and reducing the surface area for manipulation. The current generation of oracles has shifted toward decentralized aggregation models where data is sourced from a diverse set of providers and aggregated before it is used for settlement. This evolution has introduced new challenges, specifically regarding the cost of data provision and the complexity of governance.

The next phase involves integrating more sophisticated data types beyond simple spot prices, such as implied volatility surfaces and interest rate curves, to support more complex derivative products. This requires verification mechanisms that can handle multivariate data inputs and calculate complex metrics on-chain.

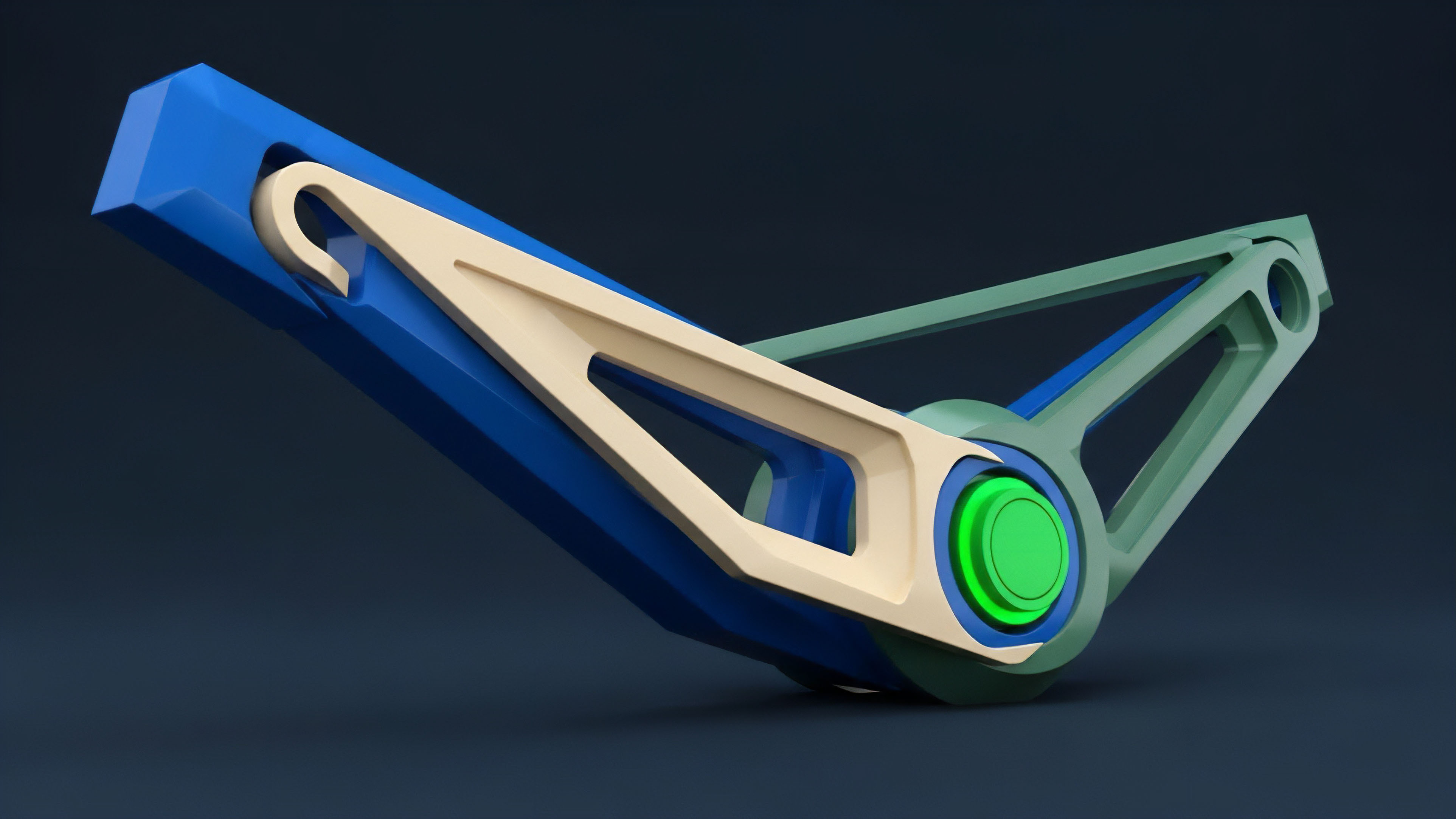

| Phase of Evolution | Primary Data Source | Security Model | Vulnerability Profile |
|---|---|---|---|
| Phase 1: Single-Source Oracles | Single centralized exchange API | Trust-based, permissioned nodes | High risk of manipulation; single point of failure |
| Phase 2: Decentralized Aggregation Networks | Multiple exchanges and data providers | Economic security (staking/slashing) | Latency risk; high cost of data updates |
| Phase 3: Cross-Chain and ZK-Oracles | Off-chain data with cryptographic proofs | Zero-knowledge proofs; trust minimization | Computational overhead; new attack vectors on proofs |

Horizon

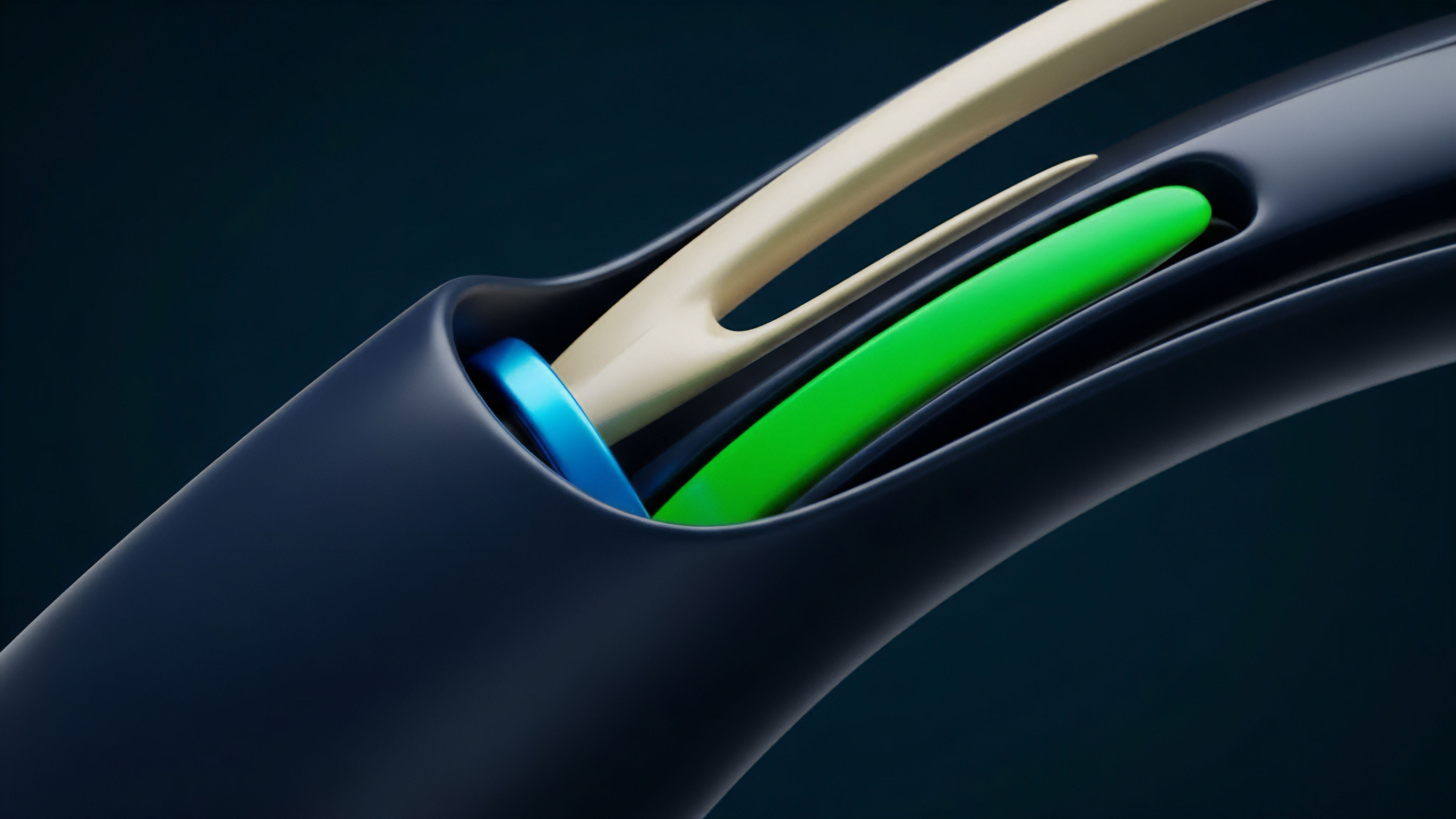

Looking ahead, the next generation of data source verification will focus on minimizing trust assumptions through advanced cryptography and on-chain mechanisms. One significant area of research is the development of zero-knowledge (ZK) oracles. These systems would allow data providers to submit cryptographic proofs of a data point’s accuracy without revealing the underlying data source or the specific value.

This preserves data privacy while still allowing for verifiable settlement, a crucial step for institutional adoption where data confidentiality is paramount. Another key development is the use of on-chain data sources, where the data required for settlement is derived directly from decentralized exchange (DEX) liquidity pools. While a simple TWAP from a DEX pool can be vulnerable to manipulation, new designs, such as virtual Automated Market Makers (vAMMs), offer a more robust price discovery mechanism that can serve as a trustless data source for derivatives.

This approach eliminates the need for external data providers entirely, creating a truly self-contained, trust-minimized financial system. The convergence of these technologies points toward a future where derivatives protocols are secured not by a single data feed, but by a network of interconnected, cryptographically verifiable data sources. The challenge shifts from simply obtaining accurate data to creating a dynamic, real-time feedback loop between data provision, market activity, and risk management.

This new architecture will allow for the creation of derivatives that are more resilient to market manipulation and capable of supporting complex, multi-asset financial products.

The future of data source verification involves moving beyond external data feeds toward cryptographically verifiable proofs and on-chain data sources to achieve complete trust minimization.
