Essence

Data source reliability is the foundation of structural integrity for any decentralized derivatives protocol. Without accurate and timely external data, the entire financial structure collapses into an adversarial game of manipulation. The core challenge in decentralized finance (DeFi) is the “oracle problem”: the difficulty of securely importing off-chain information onto the blockchain for smart contract execution.

For options contracts, this data requirement is particularly acute, extending beyond simple spot prices to include complex inputs like implied volatility surfaces, interest rate curves, and settlement prices at specific time intervals.

Data source reliability determines the solvency and fairness of derivatives protocols by ensuring accurate settlement and preventing oracle manipulation attacks.

The reliability of a data source is not just a technical measure of uptime; it represents an economic security guarantee. If a protocol uses a data source that can be manipulated at a cost lower than the value locked in its derivatives contracts, the system is fundamentally flawed. This gap between manipulation cost and value at risk is where a protocol’s capital efficiency and risk profile are most exposed.

The choice of data source defines the attack surface of the entire system, dictating the cost required for a bad actor to force an incorrect liquidation or settlement.

Origin

The necessity for reliable data sources arose directly from the shift in financial architecture from centralized exchanges (CEX) to decentralized protocols. On a CEX, price discovery and contract settlement are handled internally, creating a closed system where the exchange itself serves as the trusted data source.

This model relies on a single point of trust. When DeFi protocols began building derivatives markets on-chain, they were forced to source external price feeds to settle contracts. The early attempts often relied on simple, single-source oracles or a small set of aggregated data points, creating significant vulnerabilities.

Early exploits demonstrated that a simple flash loan attack could temporarily manipulate the price on a single, low-liquidity decentralized exchange (DEX) that an oracle was reading. This manipulation would then trigger incorrect liquidations on the derivatives protocol, allowing the attacker to profit from the system’s structural weakness. This era highlighted a critical insight: the data source cannot be a simple feed; it must be a mechanism with its own economic security model.
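To see why a single-pool oracle is so cheap to move, the sketch below models a toy constant-product pool (x * y = k, no fees) and a hypothetical naive oracle that reads the pool's instantaneous price. The pool sizes and the borrowed amount are illustrative assumptions, not figures from any real exploit.

```python
from dataclasses import dataclass


@dataclass
class ConstantProductPool:
    """Toy x * y = k pool (no fees) holding a base asset and a quote asset."""
    base_reserve: float
    quote_reserve: float

    def spot_price(self) -> float:
        # Quote units per base unit, read directly from current reserves.
        return self.quote_reserve / self.base_reserve

    def buy_base_with_quote(self, quote_in: float) -> float:
        """Swap quote for base while keeping base_reserve * quote_reserve constant."""
        k = self.base_reserve * self.quote_reserve
        new_quote = self.quote_reserve + quote_in
        new_base = k / new_quote
        base_out = self.base_reserve - new_base
        self.base_reserve, self.quote_reserve = new_base, new_quote
        return base_out


def naive_oracle(pool: ConstantProductPool) -> float:
    # Hypothetical naive oracle: trusts whatever the pool says right now.
    return pool.spot_price()


pool = ConstantProductPool(base_reserve=1_000.0, quote_reserve=2_000_000.0)
print(f"before: {naive_oracle(pool):,.0f}")  # prints "before: 2,000"

# Within one transaction, the attacker swaps flash-borrowed quote into the pool.
pool.buy_base_with_quote(2_000_000.0)
print(f"during: {naive_oracle(pool):,.0f}")  # prints "during: 8,000"
```

Doubling the quote reserve quadruples the reported price, so any derivatives protocol reading this value in the same transaction would value positions at four times the market price until the attacker swaps back and repays the loan.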

The evolution of oracles became a race to build a data layer that was more expensive to manipulate than the value it secured.

Theory

The theoretical underpinnings of data source reliability in derivatives protocols are rooted in game theory and system design. The core objective is to align economic incentives such that honest reporting is rewarded and malicious reporting is prohibitively expensive.

This concept moves beyond simple cryptographic verification to incorporate financial penalties and rewards.

Oracle Design Architectures

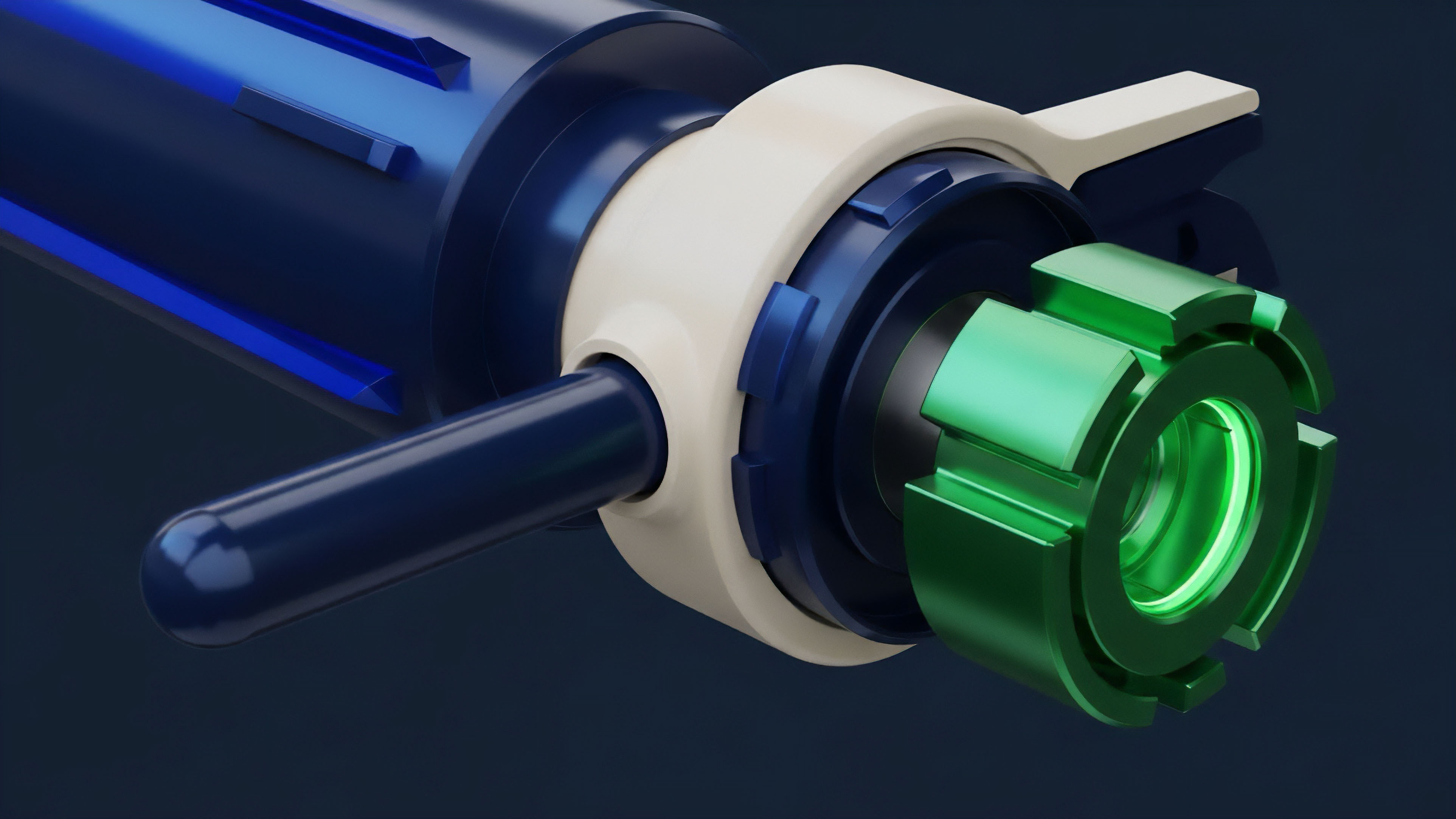

There are three primary theoretical approaches to data source design:

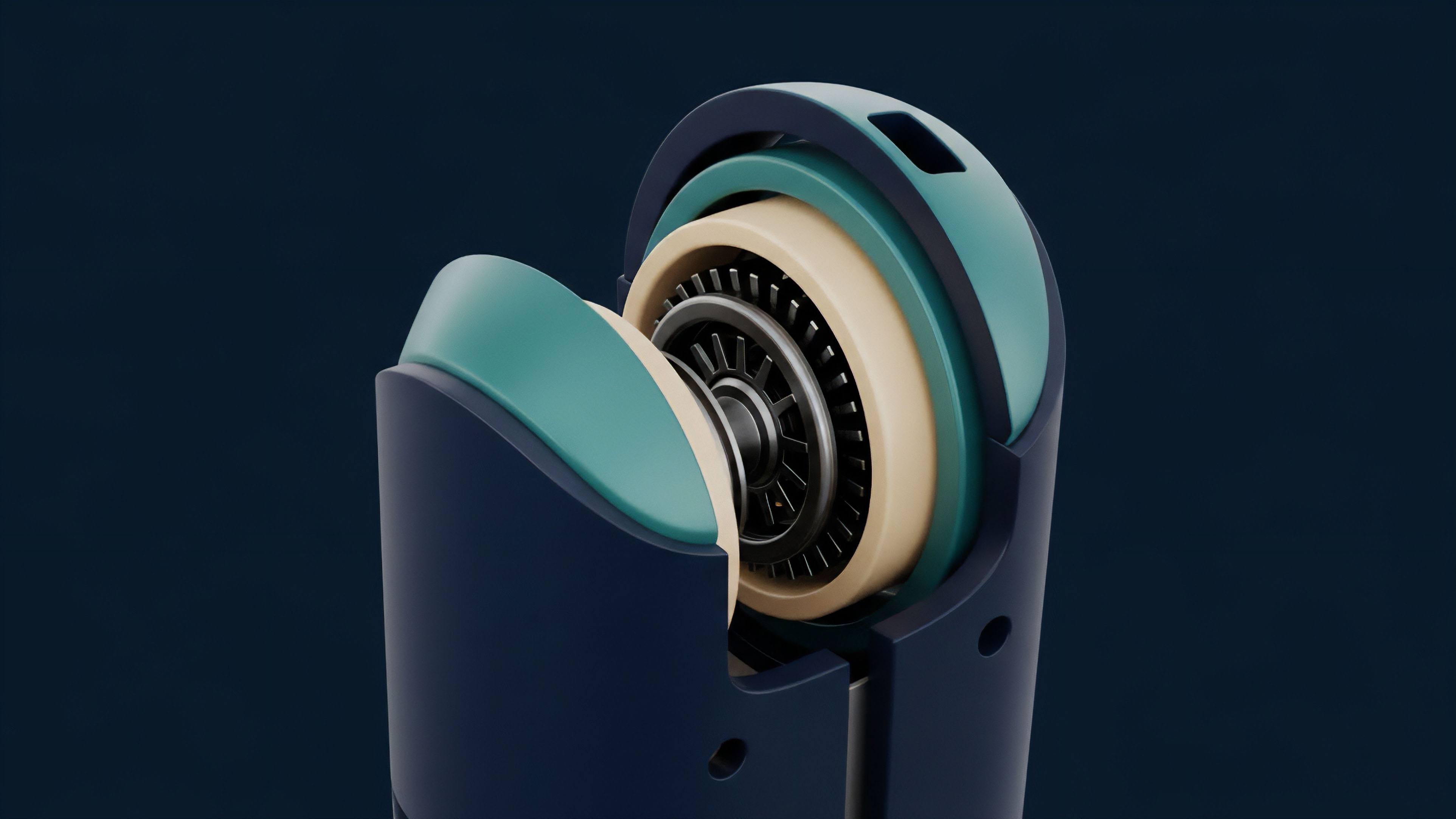

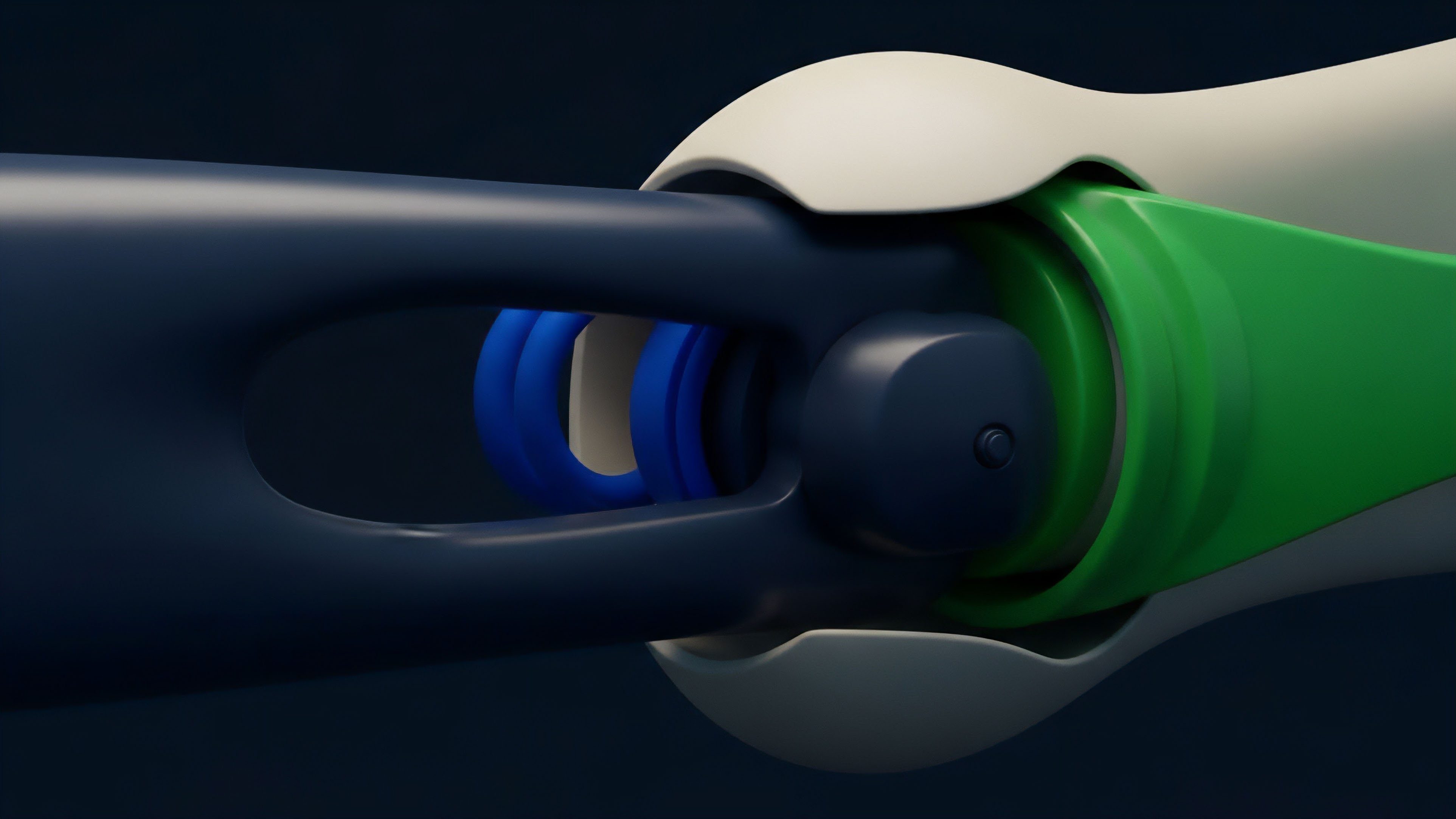

- Decentralized Oracle Networks (DONs): These networks aggregate data from multiple independent nodes and data sources. The security model relies on having enough independent participants that no single actor can easily control a majority of nodes. This approach prioritizes decentralization and robustness against single points of failure.

- Off-Chain Data Streams: These solutions focus on providing low-latency data directly to protocols without waiting for on-chain block confirmations. The data is often signed by multiple data providers, and protocols verify these signatures. This model prioritizes speed, which is essential for high-frequency trading and rapid liquidations in derivatives markets.

- Time-Weighted Average Price (TWAP) Oracles: This approach mitigates flash loan attacks by calculating the price based on a rolling average over a specific time window. While effective against instantaneous manipulation, it introduces latency and may not reflect rapid market shifts accurately, which can lead to inefficient liquidations.
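Two of these approaches reduce to simple aggregation rules. The sketch below assumes a DON combines individual node reports with a median (a common choice, not the only one) and computes a TWAP as the time-weighted mean of a step function of observed prices. Production TWAP oracles such as Uniswap's use on-chain cumulative price accumulators instead; the function names and parameters here are illustrative.

```python
from statistics import median


def aggregate_reports(reports: list[float], min_reports: int) -> float:
    """DON-style aggregation: median of independent node reports.

    The median stays within the range of honest reports as long as fewer
    than half of the reports are faulty or malicious.
    """
    if len(reports) < min_reports:
        raise ValueError("not enough reports to meet quorum")
    return median(reports)


def twap(observations: list[tuple[float, float]], now: float, window: float) -> float:
    """Time-weighted average price over [now - window, now].

    observations: (timestamp, price) pairs sorted by timestamp. Each price is
    treated as holding until the next observation (a step function).
    """
    start = now - window
    if not observations or observations[0][0] > start:
        raise ValueError("observations do not cover the whole window")
    weighted_sum = 0.0
    for i, (t, price) in enumerate(observations):
        t_next = observations[i + 1][0] if i + 1 < len(observations) else now
        # Clip each constant-price segment to the averaging window.
        seg_start, seg_end = max(t, start), min(t_next, now)
        if seg_end > seg_start:
            weighted_sum += price * (seg_end - seg_start)
    return weighted_sum / window


# One manipulated node out of five barely moves the median.
print(aggregate_reports([2000.0, 2001.0, 1999.0, 2002.0, 9000.0], min_reports=3))  # 2001.0

# A one-block (12 s) spike to 8000 inside a 600 s window moves the TWAP only to 2120.
print(twap([(0.0, 2000.0), (588.0, 8000.0), (600.0, 2000.0)], now=600.0, window=600.0))  # 2120.0
```

The same arithmetic shows the latency cost: if the market genuinely moved to 8000, the TWAP would still report 2120 and lag the true price for most of the window.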

Economic Security Vs. Data Accuracy

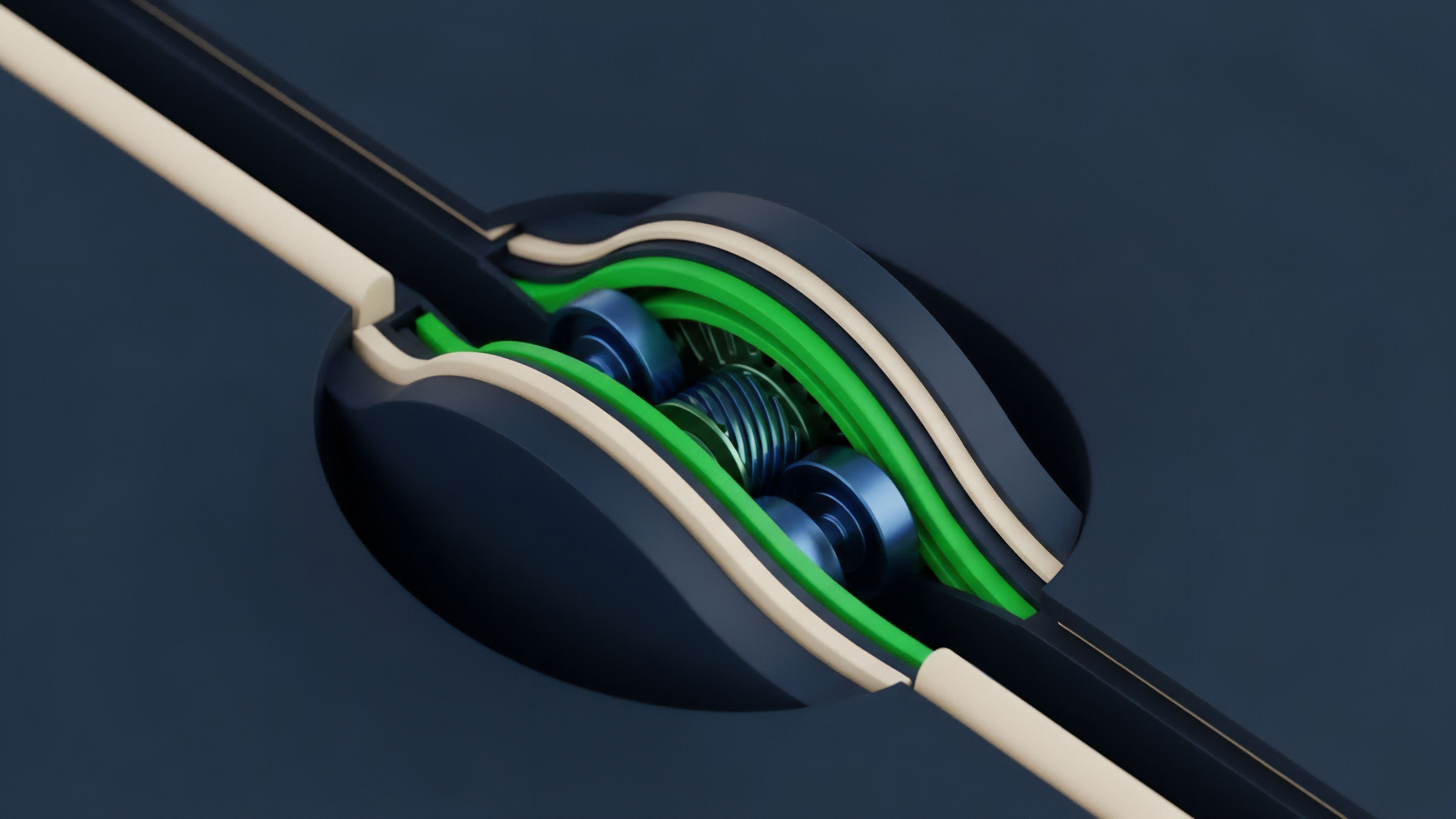

A central tension exists between data accuracy and economic security. A highly accurate data feed might be sourced from a single, high-liquidity exchange. However, this single point of failure makes the data source vulnerable to manipulation if the cost to manipulate that single exchange is low.

Conversely, a highly decentralized oracle network might prioritize security by averaging data from many sources, potentially sacrificing precision by including less relevant or lower-quality feeds. The optimal solution for derivatives protocols involves balancing these trade-offs, often through mechanisms that dynamically adjust based on market conditions and collateralization levels.

The cost to corrupt an oracle must exceed the profit potential from manipulating the protocol’s contracts; otherwise, the system is economically unstable.
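This condition can be checked numerically for a staked oracle network. The sketch below makes several loud assumptions: one node equals one vote, an attacker needs a strict majority of nodes to control a median, every misreporting node loses its entire stake, and `value_at_risk` bounds what the attacker can extract from the protocol. Bribery costs, reputation, and market costs of the attack itself are ignored, and `safety_margin` is a hypothetical parameter.

```python
def cheapest_majority_stake(stakes: list[float]) -> float:
    """Minimum total stake an attacker must forfeit to control a strict
    majority of nodes, corrupting the lowest-staked nodes first."""
    needed = len(stakes) // 2 + 1
    return sum(sorted(stakes)[:needed])


def is_economically_secure(stakes: list[float], value_at_risk: float,
                           safety_margin: float = 1.5) -> bool:
    """True if the slashable cost of corruption exceeds the value an attacker
    could extract, scaled by a hypothetical safety margin."""
    return cheapest_majority_stake(stakes) >= safety_margin * value_at_risk


stakes = [100_000.0] * 7  # seven nodes with equal stake
print(cheapest_majority_stake(stakes))            # 400000.0
print(is_economically_secure(stakes, 200_000.0))  # True
print(is_economically_secure(stakes, 300_000.0))  # False
```

The second call fails even though total stake (700,000) exceeds the value at risk, because only the cheapest majority needs to be corrupted; uneven stake distributions lower this cost further.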

Approach

Current implementations of data source reliability for crypto options utilize several distinct models, each with specific trade-offs regarding latency, cost, and security. A common approach involves a hybrid model where protocols use high-latency, highly secure oracles for critical settlements and low-latency, less secure feeds for internal risk management and UI displays.
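A minimal sketch of such a hybrid model, assuming the protocol holds one value from a slow, secure feed and one from a fast feed. The `Purpose` categories, the 2% deviation tolerance, and the fallback rule for risk checks are hypothetical policy choices, not a description of any specific protocol.

```python
from dataclasses import dataclass
from enum import Enum


class Purpose(Enum):
    SETTLEMENT = "settlement"
    RISK = "risk"
    DISPLAY = "display"


@dataclass
class PriceFeeds:
    secure_price: float           # slow, highly secure oracle value
    fast_price: float             # low-latency stream with weaker guarantees
    max_deviation: float = 0.02   # hypothetical 2% tolerance between feeds

    def price_for(self, purpose: Purpose) -> float:
        if purpose is Purpose.SETTLEMENT:
            # Final settlement only ever uses the secure feed.
            return self.secure_price
        deviation = abs(self.fast_price - self.secure_price) / self.secure_price
        if purpose is Purpose.RISK and deviation > self.max_deviation:
            # The feeds disagree too much: fall back to the secure value for
            # risk checks instead of acting on a possibly bad fast tick.
            return self.secure_price
        # UI display, and risk checks while the feeds agree, use the fast value.
        return self.fast_price


feeds = PriceFeeds(secure_price=2000.0, fast_price=2100.0)
print(feeds.price_for(Purpose.RISK))     # 2000.0 (5% apart, so fall back)
print(feeds.price_for(Purpose.DISPLAY))  # 2100.0
```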

On-Chain Vs. Off-Chain Aggregation

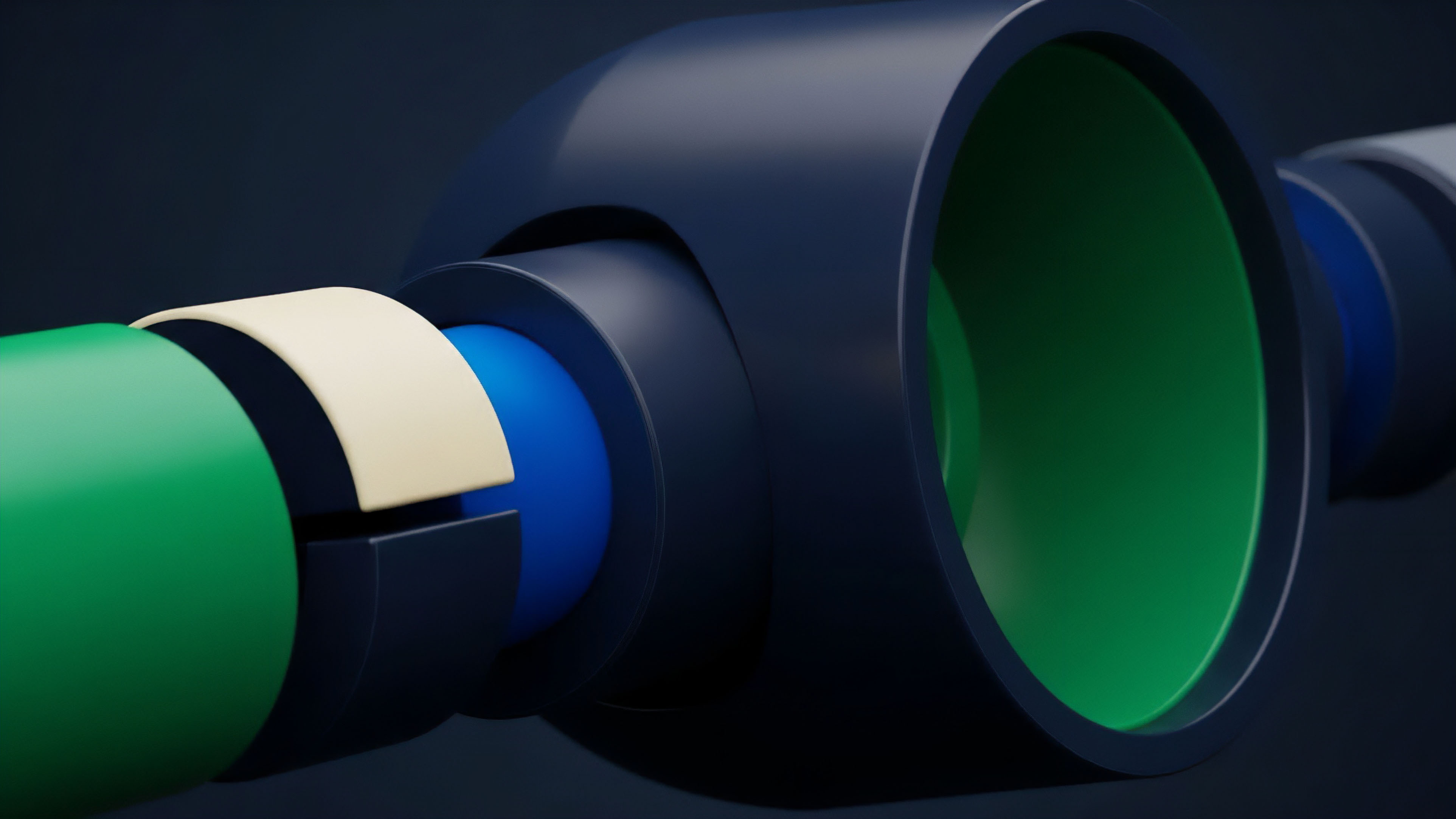

The choice between on-chain and off-chain data aggregation defines the system’s performance characteristics. On-chain aggregation requires every data point to be processed by the blockchain’s consensus mechanism, ensuring high integrity but introducing significant gas costs and latency. Off-chain aggregation, in contrast, uses a separate network to process and sign data, delivering it to the blockchain only when needed.

This approach reduces costs and improves speed but requires trust in the off-chain network’s integrity.
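The off-chain pattern can be sketched as two steps: providers sign observations off-chain, and a verifier (the on-chain contract, in a real deployment) accepts only reports with valid signatures from known providers and requires a quorum before aggregating. Real networks use public-key signatures such as ECDSA or Ed25519 so that verifiers never hold signing secrets; HMAC from the Python standard library is swapped in here only to keep the sketch dependency-free. Provider names and keys are hypothetical.

```python
import hashlib
import hmac
import json
from statistics import median

# Hypothetical provider keys. HMAC stands in for public-key signatures here.
PROVIDER_KEYS = {"prov-a": b"key-a", "prov-b": b"key-b", "prov-c": b"key-c"}


def _payload(price: float, timestamp: int) -> bytes:
    # Canonical encoding so signer and verifier hash identical bytes.
    return json.dumps({"price": price, "ts": timestamp}, sort_keys=True).encode()


def sign_report(provider: str, price: float, timestamp: int) -> dict:
    """Off-chain step: a provider signs its observation."""
    tag = hmac.new(PROVIDER_KEYS[provider], _payload(price, timestamp), hashlib.sha256)
    return {"provider": provider, "price": price, "ts": timestamp, "sig": tag.hexdigest()}


def verify_and_aggregate(reports: list[dict], quorum: int) -> float:
    """Verification step: keep reports with a valid signature from a known,
    distinct provider, then require a quorum before taking the median."""
    valid: dict[str, float] = {}
    for r in reports:
        key = PROVIDER_KEYS.get(r["provider"])
        if key is None:
            continue  # unknown provider
        expected = hmac.new(key, _payload(r["price"], r["ts"]), hashlib.sha256).hexdigest()
        if hmac.compare_digest(expected, r["sig"]):
            valid[r["provider"]] = r["price"]
    if len(valid) < quorum:
        raise ValueError("insufficient valid signatures")
    return median(list(valid.values()))


reports = [sign_report("prov-a", 2000.0, 1), sign_report("prov-b", 2002.0, 1),
           sign_report("prov-c", 2001.0, 1)]
reports[2]["price"] = 9000.0  # tampered in transit: signature no longer matches
print(verify_and_aggregate(reports, quorum=2))  # 2001.0
```

Only the verification step needs to run on-chain, which is why gas is paid for delivery and verification rather than for every update.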

| Characteristic | On-Chain Aggregation (e.g., TWAP) | Off-Chain Aggregation (e.g., Pyth Network) |
|---|---|---|
| Latency | High (requires block confirmation) | Low (near-real-time data streaming) |
| Gas Cost | High (for every data update) | Low (only for data delivery/verification) |
| Security Model | Cryptographic and consensus-based | Economic security via collateralization/attestation |
| Use Case | Final settlement, low-frequency events | Real-time liquidations, high-frequency pricing |

The Role of Market Microstructure

A derivative protocol must account for the specific microstructure of the underlying asset. For highly liquid assets like Bitcoin or Ethereum, reliable data feeds can be constructed by aggregating data from multiple high-volume CEXs and DEXs. However, for options on lower-liquidity assets, data source reliability becomes significantly more challenging.
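One way to build such an aggregated feed, assuming each venue reports a price and a trading volume over a common interval, is a volume-weighted median: thin venues carry little weight, so a spike on a low-liquidity DEX does not move the result. This is an illustrative choice, not the methodology of any particular provider; real feeds also filter stale or outlier venues and must contend with wash-traded volume.

```python
def volume_weighted_median(quotes: list[tuple[float, float]]) -> float:
    """Weighted median of (price, volume) quotes from several venues.

    Returns the lowest price at which cumulative volume reaches half of the
    total volume.
    """
    total = sum(volume for _, volume in quotes)
    if total <= 0:
        raise ValueError("no volume reported")
    cumulative = 0.0
    for price, volume in sorted(quotes):  # sorted by price
        cumulative += volume
        if cumulative >= total / 2:
            return price
    raise AssertionError("unreachable: cumulative volume always reaches total")


venues = [
    (2000.0, 500.0),  # large CEX
    (2001.0, 400.0),  # large CEX
    (1999.5, 300.0),  # liquid DEX
    (2600.0, 5.0),    # thin DEX being manipulated
]
print(volume_weighted_median(venues))  # 2000.0
```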

The data source must be resilient to price movements that occur during a data feed’s update interval. A delay in data delivery can lead to a “stale price” where the oracle reports a value that no longer reflects the true market price, potentially causing cascading liquidations or incorrect settlements.
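A common defense is to reject readings older than a maximum age before settling or liquidating. The sketch below assumes the oracle exposes a price together with the timestamp of its last update; `max_age_s` is a hypothetical, protocol-chosen limit that should be set relative to the feed's expected update interval.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class OracleReading:
    price: float
    updated_at: float  # Unix time (seconds) of the feed's last update


class StalePriceError(Exception):
    """Raised when a reading is too old to settle or liquidate against."""


def fresh_price(reading: OracleReading, max_age_s: float,
                now: Optional[float] = None) -> float:
    """Return the price only if it was updated within max_age_s seconds."""
    now = time.time() if now is None else now
    age = now - reading.updated_at
    if age > max_age_s:
        # Refuse to act on a value the market may have already moved away from.
        raise StalePriceError(f"price is {age:.0f}s old (limit {max_age_s:.0f}s)")
    return reading.price


reading = OracleReading(price=2000.0, updated_at=1_000.0)
print(fresh_price(reading, max_age_s=60.0, now=1_030.0))  # 2000.0
```

Calling the same function with `now=1_100.0` raises `StalePriceError`, so the protocol pauses the affected action instead of liquidating on a 100-second-old value.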

Evolution

Data source reliability has evolved significantly from simple, single-source price feeds to complex, economically secured networks.

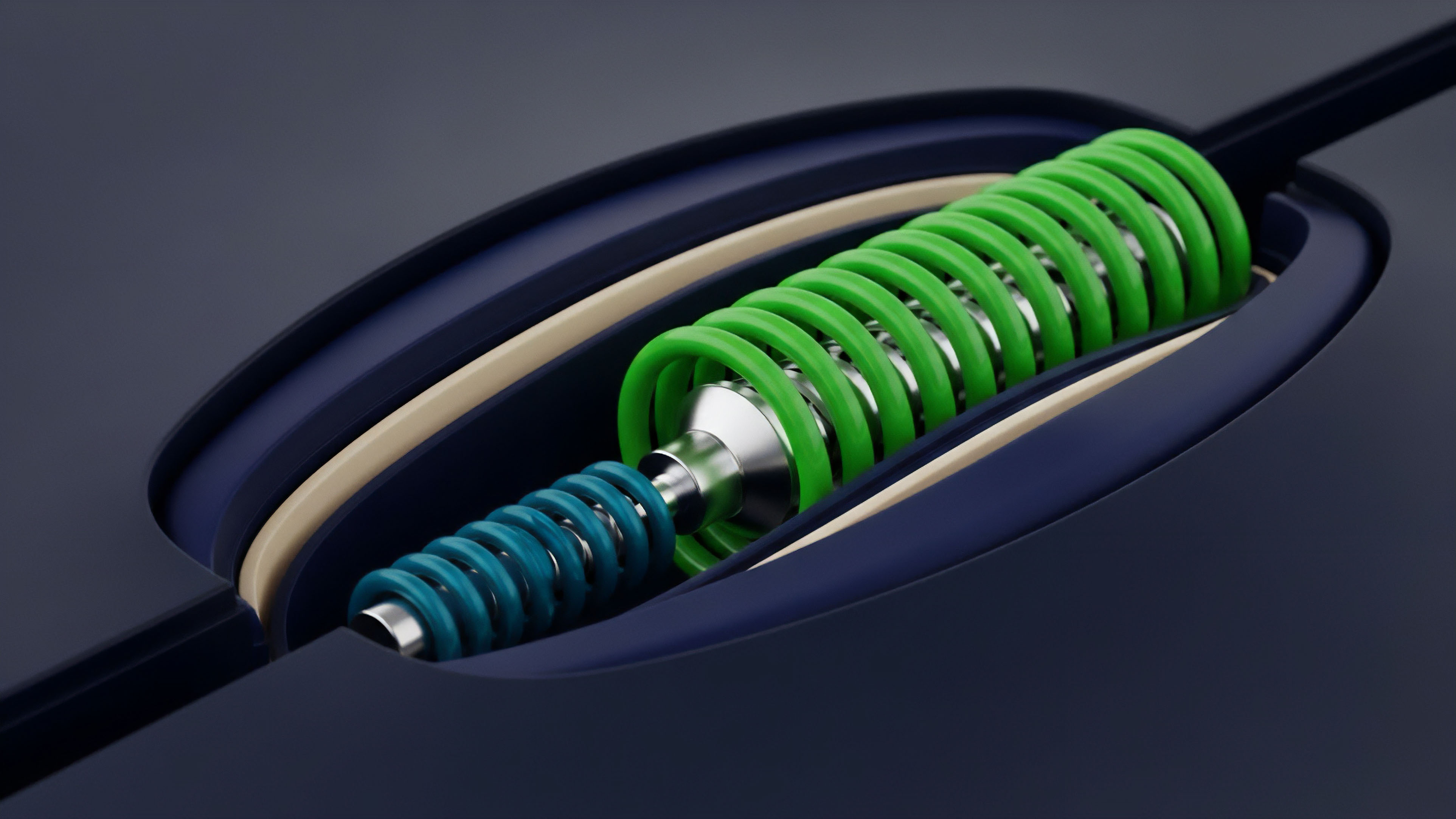

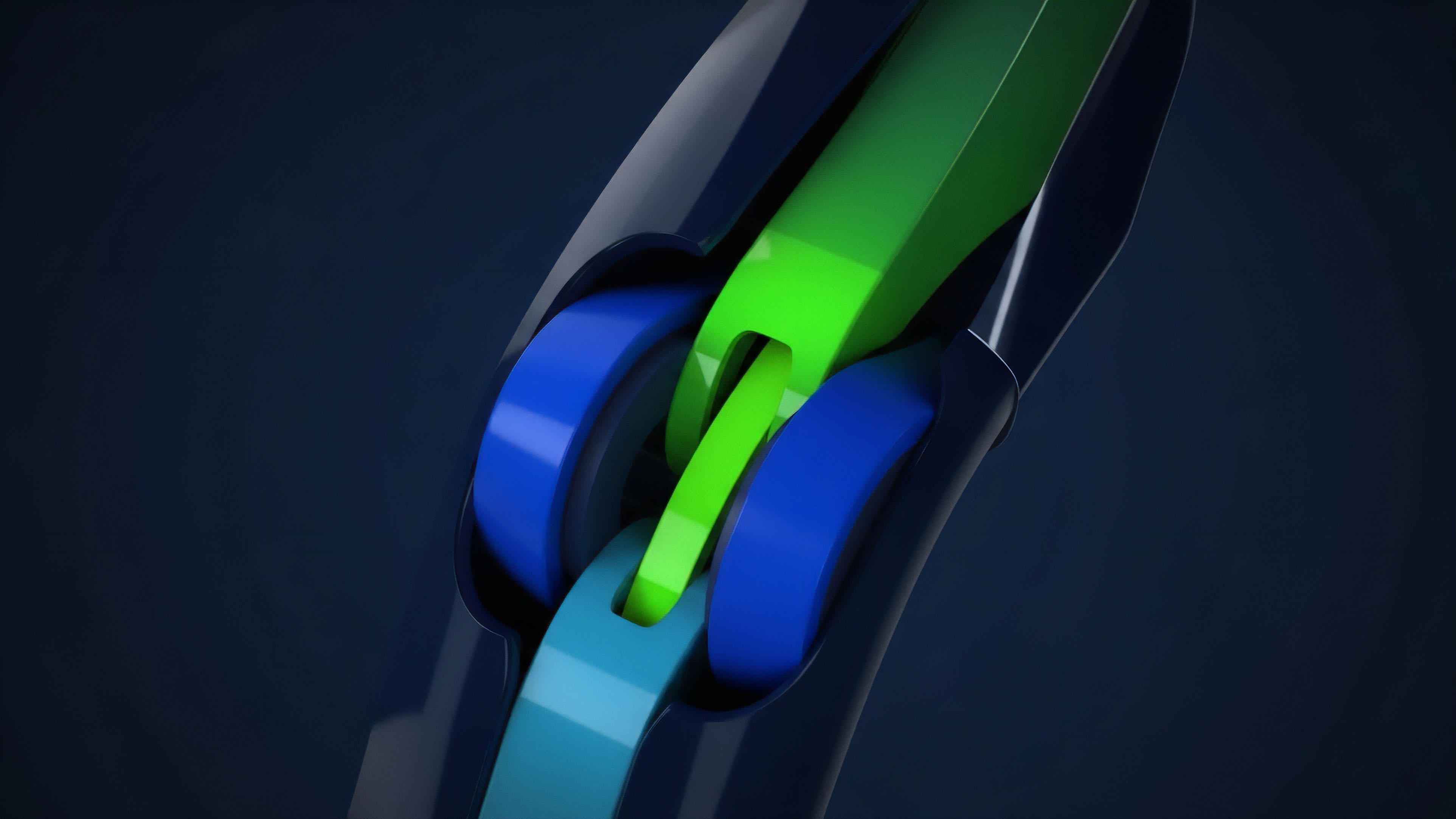

The initial model, where a protocol might simply query a single DEX for a price, proved insufficient in an adversarial environment. The next iteration involved decentralized oracle networks, which introduced a layer of economic security by requiring data providers to stake collateral. If a provider submits incorrect data, their stake is slashed, creating a disincentive for malicious behavior.
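The stake-and-slash mechanism can be sketched as a toy registry. It assumes a simple rule: a provider whose report deviates from the round's median by more than a tolerance loses a fraction of its stake. The 1% tolerance and 50% slash fraction are hypothetical; real networks typically route slashing through dispute or verification processes, partly because an honest provider can legitimately diverge in a fast market.

```python
from statistics import median


class StakedOracle:
    """Toy staking registry: providers bond collateral and are slashed when
    their report deviates too far from the round's median."""

    def __init__(self, tolerance: float = 0.01, slash_fraction: float = 0.5):
        self.stakes: dict[str, float] = {}
        self.tolerance = tolerance            # hypothetical 1% band around the median
        self.slash_fraction = slash_fraction  # hypothetical: lose half the stake

    def register(self, provider: str, stake: float) -> None:
        self.stakes[provider] = self.stakes.get(provider, 0.0) + stake

    def settle_round(self, reports: dict[str, float]) -> float:
        # Only providers with collateral at stake count toward the answer.
        staked = {p: v for p, v in reports.items() if self.stakes.get(p, 0.0) > 0}
        if not staked:
            raise ValueError("no staked reports")
        answer = median(list(staked.values()))
        for provider, value in staked.items():
            if abs(value - answer) / answer > self.tolerance:
                self.stakes[provider] *= 1 - self.slash_fraction
        return answer


oracle = StakedOracle()
for name in ("a", "b", "c"):
    oracle.register(name, 1000.0)
print(oracle.settle_round({"a": 2000.0, "b": 2001.0, "c": 2500.0}))  # 2001.0
print(oracle.stakes)  # {'a': 1000.0, 'b': 1000.0, 'c': 500.0}
```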

Verifiable Computation and ZK Proofs

The current trajectory involves integrating verifiable computation into data sourcing. Instead of simply trusting a data provider’s attestation, protocols are moving toward verifiable proofs. Zero-knowledge proofs (ZKPs) allow a data provider to prove that they correctly processed data from a specific source without revealing the source itself.

This provides a new layer of security, particularly for off-chain data processing. The future of data integrity for complex options will rely heavily on these techniques to ensure that pricing models and volatility calculations performed off-chain can be cryptographically verified on-chain.

The evolution of data source reliability reflects a shift from trusting external data providers to verifying their computations cryptographically.

Specialized Data Feeds for Options

A significant development is the move toward specialized data feeds specifically designed for derivatives. Options pricing models require more than just a spot price; they require volatility data, which itself is often calculated off-chain. The new generation of oracle networks is building dedicated feeds for implied volatility surfaces, enabling more sophisticated options protocols to function.

This specialization allows for more precise risk management and prevents protocols from relying on potentially manipulated or inaccurate proxies for volatility.
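Each point on an implied volatility surface comes from inverting a pricing model against an observed option price. The sketch below assumes the Black-Scholes model for a European call with no dividends (a standard choice, but an assumption here) and inverts it by bisection, which works because the call price increases with volatility. A surface feed would repeat this across strikes and expiries; the function names are illustrative.

```python
import math


def norm_cdf(x: float) -> float:
    # Standard normal CDF via the error function.
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, t: float, rate: float, sigma: float) -> float:
    """Black-Scholes price of a European call (no dividends), t in years."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma**2) * t) / (sigma * math.sqrt(t))
    d2 = d1 - sigma * math.sqrt(t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price: float, spot: float, strike: float, t: float, rate: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Find sigma such that bs_call(...) == price, by bisection on [lo, hi]."""
    if not (bs_call(spot, strike, t, rate, lo) <= price <= bs_call(spot, strike, t, rate, hi)):
        raise ValueError("price outside the model's range for [lo, hi]")
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, t, rate, mid) < price:
            lo = mid  # model price too low: volatility must be higher
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Round trip: price a 30-day call at 60% vol, then recover the vol from the price.
quote = bs_call(spot=2000.0, strike=2200.0, t=30 / 365, rate=0.0, sigma=0.6)
print(round(implied_vol(quote, 2000.0, 2200.0, 30 / 365, 0.0), 6))  # 0.6
```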

Horizon

Looking ahead, the horizon for data source reliability involves solving two key challenges: low-latency data for exotic options and cross-chain data integrity. As options protocols move toward more complex structures, such as options on interest rate swaps or options with highly specific trigger conditions, the data requirements become increasingly granular and difficult to secure.

Cross-Chain Data Integrity

The proliferation of layer 2 solutions and app chains creates a new challenge for data integrity. A derivative contract on one chain may need price data for an asset that primarily trades on another chain. This requires a robust, secure cross-chain messaging system that can transmit data reliably without introducing new trust assumptions or vulnerabilities.

The challenge is to maintain the integrity of the data as it traverses multiple, potentially disparate consensus environments.
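A minimal sketch of the checks a destination chain might apply to relayed price messages. It assumes the underlying messaging layer has already authenticated which chain and contract a message came from; that authentication is exactly where the new trust assumption lives. The message fields, chain and sender identifiers, and `max_age_s` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CrossChainPriceMessage:
    source_chain: str
    sender: str          # oracle contract on the source chain (hypothetical id)
    nonce: int
    price: float
    observed_at: float   # Unix time the price was observed on the source chain


class PriceReceiver:
    """Destination-side checks for relayed price messages (illustrative)."""

    def __init__(self, trusted: set[tuple[str, str]], max_age_s: float):
        self.trusted = trusted        # allowed (source_chain, sender) pairs
        self.max_age_s = max_age_s
        self.last_nonce: dict[tuple[str, str], int] = {}
        self.latest_price: Optional[float] = None

    def receive(self, msg: CrossChainPriceMessage, now: float) -> bool:
        origin = (msg.source_chain, msg.sender)
        if origin not in self.trusted:
            return False  # unknown origin
        if msg.nonce <= self.last_nonce.get(origin, -1):
            return False  # replayed or out-of-order message
        if now - msg.observed_at > self.max_age_s:
            return False  # too stale after relay latency
        self.last_nonce[origin] = msg.nonce
        self.latest_price = msg.price
        return True


rx = PriceReceiver({("chain-a", "0xoracle")}, max_age_s=30.0)
msg = CrossChainPriceMessage("chain-a", "0xoracle", nonce=1, price=2000.0, observed_at=100.0)
print(rx.receive(msg, now=110.0))  # True
print(rx.receive(msg, now=111.0))  # False (replay)
```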

The Need for On-Chain Verifiable Order Books

The ultimate goal for data source reliability is to move away from external data feeds entirely by bringing the order book on-chain. While computationally intensive, a truly decentralized exchange with a verifiable order book would eliminate the oracle problem for spot prices, allowing derivatives protocols to directly reference the on-chain price discovery mechanism. This architectural shift would create a fully self-contained financial ecosystem where all data required for settlement is native to the blockchain. This vision presents significant challenges regarding throughput and gas costs, but it represents the most robust long-term solution for systemic risk reduction.
