Essence

In crypto derivatives, trustless verification is the mechanism that lets a smart contract access external data, primarily the price of the underlying asset, accurately and securely without relying on a centralized authority. This process is critical throughout the lifecycle of an options contract, specifically for collateral valuation, margin calculations, and final settlement. In traditional finance, a centralized clearinghouse performs this function, acting as a trusted third party to verify market data and manage counterparty risk.

In decentralized finance (DeFi), cryptographic and economic mechanisms take over this role. The core challenge lies in bridging the gap between the on-chain execution logic of the smart contract and the off-chain reality of market prices. A failure in this verification process leads directly to systemic risk: a manipulated price feed allows an attacker to liquidate positions unfairly or to settle contracts at incorrect values, effectively draining protocol capital.

The verification mechanism must be robust against a specific set of attack vectors, primarily related to data integrity and availability. A successful system must ensure that the price data delivered to the smart contract is both accurate at the moment of execution and resistant to manipulation by individual data providers or malicious actors. This requires a shift from a trust-based model, where one trusts a single entity, to a trustless model, where trust is replaced by economic incentives and cryptographic verification.

The integrity of the options market hinges entirely on this mechanism’s ability to provide a single, verifiable source of truth for all participants.

Trustless verification for options contracts replaces centralized clearinghouse functions with decentralized mechanisms, ensuring accurate price data delivery to smart contracts for secure settlement and collateral management.

Origin

The need for trustless verification emerged directly from the earliest attempts to build decentralized derivatives protocols on platforms like Ethereum. Early protocols, often simple options vaults or basic perpetual futures exchanges, quickly encountered a critical vulnerability: the oracle problem. These systems initially relied on single, centralized data feeds or simple on-chain price mechanisms (like TWAPs from a single Automated Market Maker, or AMM) to determine the value of collateral and the settlement price of the underlying asset, which is compared against the strike at expiry.

This approach created an obvious attack vector. A malicious actor could manipulate the price feed by executing a large trade on the single AMM used for pricing or by compromising the centralized data provider.

The history of DeFi is punctuated by significant financial losses directly attributable to oracle manipulation. Flash loan attacks demonstrated the fragility of these early verification methods: within a single transaction, an attacker borrows a large amount of capital, manipulates the price on a DEX, executes a profitable trade against a vulnerable options protocol at the manipulated price, and repays the loan. The “Black Thursday” crash in March 2020 further highlighted the need for robust verification when network congestion delayed oracle updates, contributing to mass liquidations at stale or incorrect prices.

These systemic failures forced the development community to recognize that a decentralized financial instrument required a decentralized verification mechanism. The origin of trustless verification in options is therefore rooted in the necessary evolution away from single points of failure to create a resilient financial system.

Theory

The theoretical foundation of trustless verification rests on a blend of game theory, mechanism design, and information theory. The core objective is to design a system where the cost of attacking the verification mechanism exceeds the potential profit from manipulating the data. This economic security model is achieved through several layered approaches.

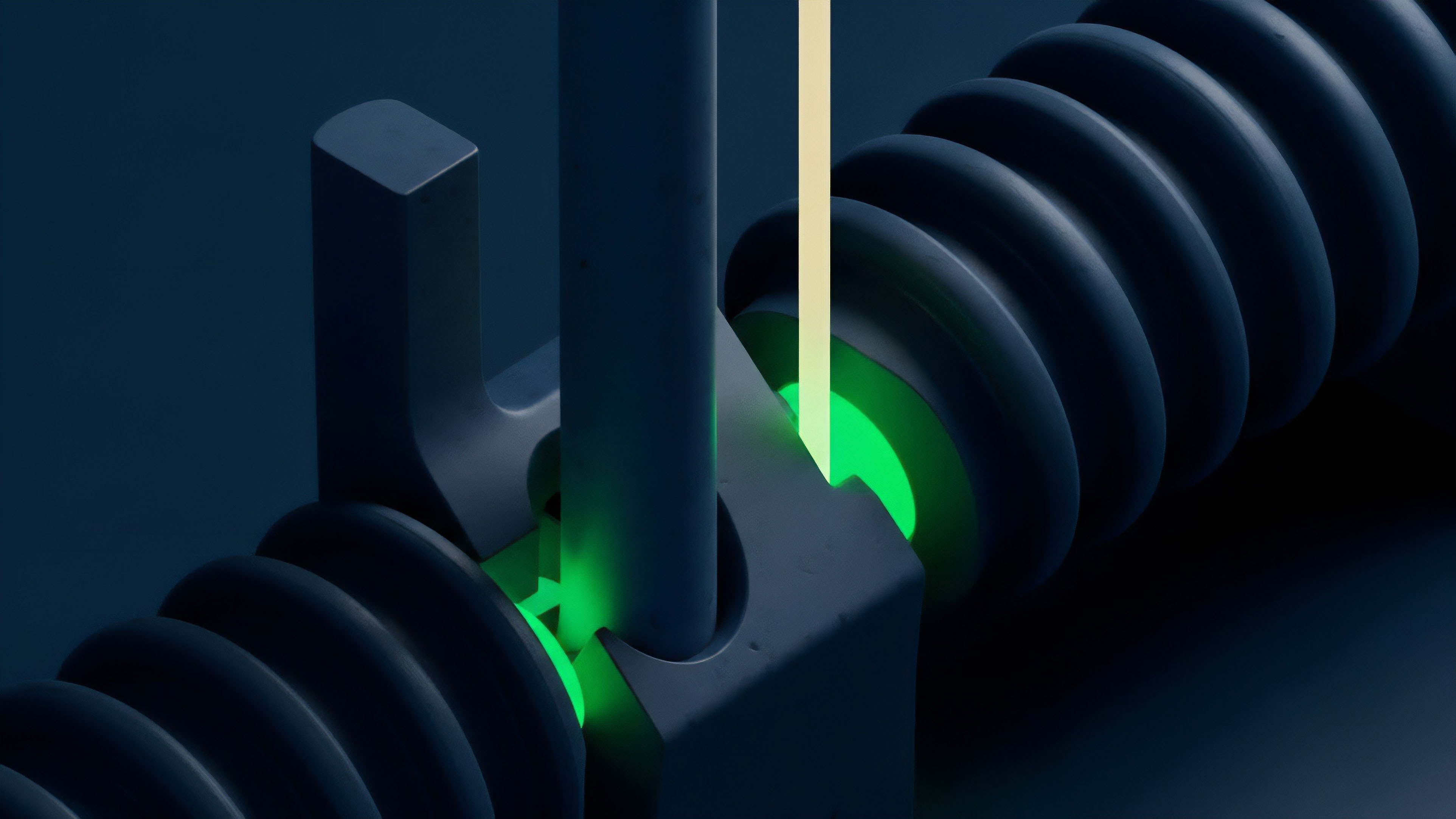

The first principle involves decentralized data aggregation. Instead of relying on a single data provider, the protocol aggregates price data from numerous independent sources. By calculating a median or a weighted average of these inputs, the system makes it prohibitively expensive for a single entity to corrupt the final price.

To move a median, the attacker must compromise more than half of the independent data providers simultaneously, which increases the attack cost significantly.
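The effect of median aggregation can be illustrated with a minimal sketch. The function name `aggregate_price` and all price values are hypothetical, chosen only to show that a minority of corrupted reports does not move the median while a colluding majority does.

```python
from statistics import median

def aggregate_price(reports):
    """Return the median of the reported prices."""
    if not reports:
        raise ValueError("no price reports")
    return median(reports)

# Hypothetical reports from five honest providers.
honest = [2000.0, 2001.5, 1999.0, 2000.5, 2002.0]

# Two of seven providers report a wildly inflated price.
minority_attack = honest + [10_000.0, 10_000.0]
# Four of seven providers collude, so the attackers form a majority.
majority_attack = honest[:3] + [10_000.0] * 4

print(aggregate_price(minority_attack))  # 2001.5, still an honest value
print(aggregate_price(majority_attack))  # 10000.0, the feed is corrupted
```

A plain average of the first set would already be pulled far from the honest range, which is one reason a median is often preferred over an unweighted mean.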

A second critical theoretical component is data provider incentives. Data providers (or oracle nodes) are often required to stake collateral, which can be slashed if they submit inaccurate data. This economic penalty aligns the incentives of the providers with the integrity of the system.
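One simplified way to state the resulting security condition, as a sketch under stated assumptions rather than a formula from any specific protocol: suppose $n$ providers each stake $s$, the feed is a median, every corrupted stake is fully slashed, and $V$ is the value an attacker could extract with a manipulated price. All four symbols are introduced here for illustration.

```latex
k = \left\lfloor \frac{n}{2} \right\rfloor + 1,
\qquad
k \cdot s > V
```

Here $k$ is the smallest number of providers that can move the median. With hypothetical numbers $n = 21$ and $V = 5.5$ million, $k = 11$, so each stake would need to exceed 0.5 million for the attack to be unprofitable under these assumptions.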

The mechanism design must account for “lazy” providers who simply copy data from others, ensuring that honest, independent work is rewarded more highly than parasitic behavior.

The theory also addresses latency and finality. Options contracts, particularly short-term ones, require low-latency price updates. However, increasing update frequency increases costs and the potential for front-running. The theoretical solution balances these trade-offs by adjusting update thresholds and fees based on market volatility, ensuring data integrity during high-stress periods while maintaining efficiency during stable periods.
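A volatility-sensitive update rule might look like the following sketch. It combines a relative deviation threshold with a maximum time between updates (a heartbeat), a pattern common in oracle networks. The function names, the default parameters, and the scaling rule are all assumptions made for illustration.

```python
def deviation_threshold(volatility, base=0.005, floor=0.001, reference_vol=0.5):
    """Tighten the relative deviation threshold as volatility rises.

    At or below reference_vol the threshold equals base; above it the
    threshold shrinks proportionally, but never below floor.
    """
    scaled = base * reference_vol / max(volatility, 1e-9)
    return max(floor, min(base, scaled))

def should_update(last_price, new_price, seconds_since_update,
                  volatility, heartbeat=3600):
    """Push an update if the heartbeat has elapsed or the price moved enough."""
    if seconds_since_update >= heartbeat:
        return True
    deviation = abs(new_price - last_price) / last_price
    return deviation >= deviation_threshold(volatility)

# The same 0.25% move is ignored in a calm market but published in a volatile one.
print(should_update(2000.0, 2005.0, 60, volatility=0.5))  # False
print(should_update(2000.0, 2005.0, 60, volatility=1.5))  # True
```

The design choice is that stable periods cost few on-chain writes, while stressed periods trade higher cost for fresher data.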

The verification theory relies on mechanism design where the cost of manipulating aggregated data from multiple providers exceeds the profit gained from a successful attack.

The quantitative analysis of trustless verification focuses on the impact of price feed quality on options pricing models. The standard Black-Scholes model assumes continuous trading in a frictionless market, with a price that can be observed at every instant. In reality, decentralized verification introduces discrete update intervals and potential latency.

This creates a divergence between the theoretical price and the price used for settlement, impacting risk management. The integrity of the price feed directly influences the calculation of Greeks (Delta, Gamma, Theta), which measure risk sensitivity. An inaccurate or delayed price feed leads to incorrect risk calculations, causing significant losses for liquidity providers and traders who rely on automated rebalancing strategies.
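To make the sensitivity concrete, the sketch below computes the Black-Scholes delta of a European call at a stale oracle price and at the true market price. The contract parameters and both prices are hypothetical, and the example assumes a zero interest rate and no dividends.

```python
from math import erf, log, sqrt

def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))

def call_delta(spot, strike, rate, vol, t_years):
    """Black-Scholes delta of a European call on a non-dividend asset."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt(t_years))
    return norm_cdf(d1)

# Hypothetical one-week at-the-money call with 80% annualized volatility.
strike, rate, vol, t = 2000.0, 0.0, 0.8, 7 / 365
true_spot = 1900.0   # market has already dropped 5%
stale_spot = 2000.0  # oracle has not yet reflected the move

delta_true = call_delta(true_spot, strike, rate, vol, t)
delta_stale = call_delta(stale_spot, strike, rate, vol, t)
print(f"delta at true spot:  {delta_true:.3f}")
print(f"delta at stale spot: {delta_stale:.3f}")
```

A hedging strategy that rebalances on the stale delta would hold more of the underlying than the true exposure warrants, which is the kind of error the paragraph above describes.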

Approach

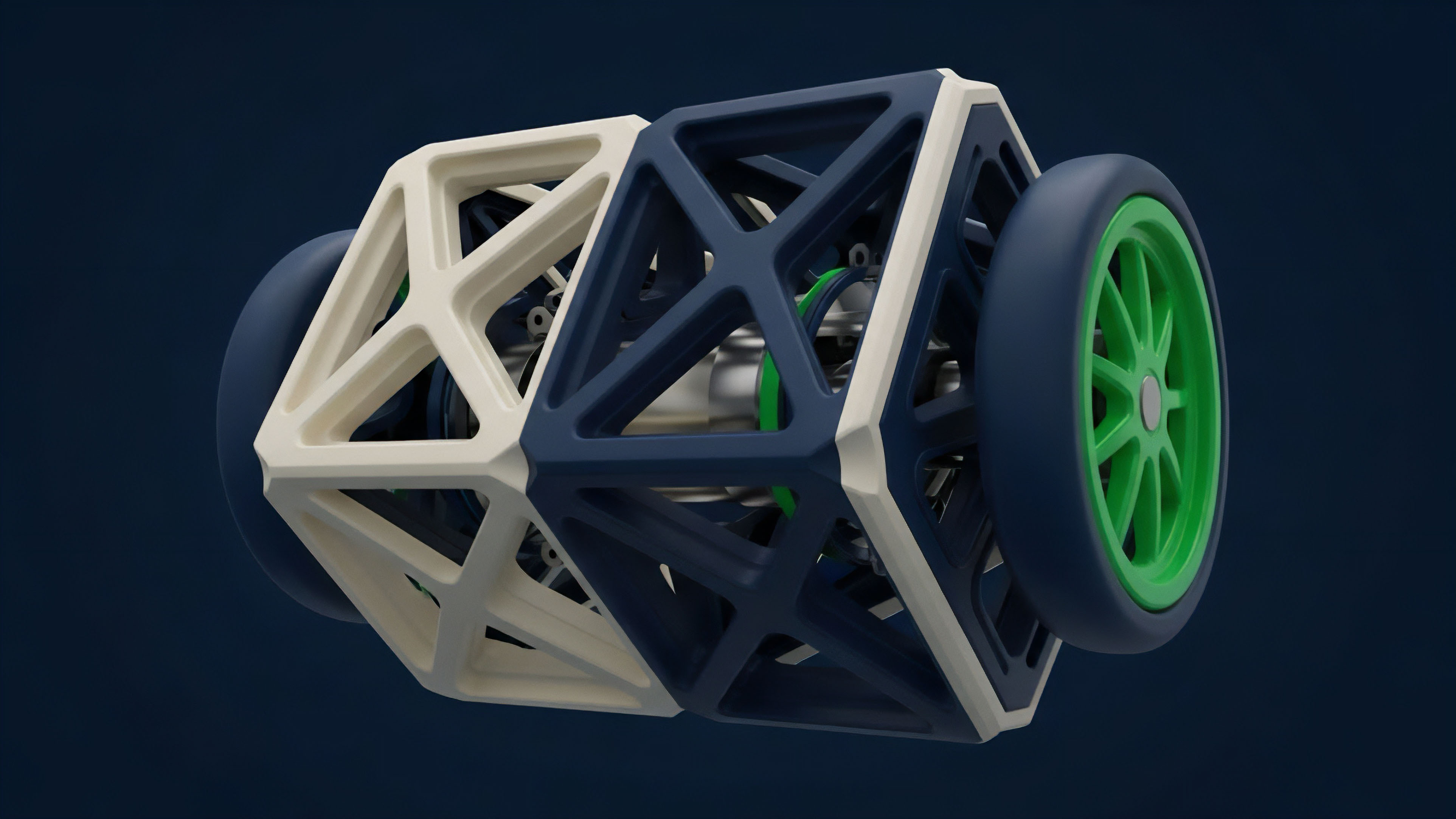

The practical implementation of trustless verification in crypto options protocols typically involves a multi-layered approach that combines external decentralized oracle networks with internal protocol mechanisms. The dominant approach utilizes decentralized oracle networks, which function as a bridge between off-chain market data and on-chain smart contracts.

A typical process for an options protocol’s collateral verification looks like this:

- Data Request Initiation: The options protocol smart contract needs to verify the value of a user’s collateral (e.g. ETH) to determine if a margin call or liquidation is necessary.

- Data Aggregation: The decentralized oracle network’s nodes independently fetch data from multiple high-volume centralized exchanges (CEXs) and decentralized exchanges (DEXs).

- Data Filtering and Validation: The network’s nodes validate the collected data against specific criteria to identify and filter out outliers or manipulated data points.

- Median Calculation: The verified data points are aggregated, typically using a median calculation, to produce a single, tamper-resistant price feed.

- On-chain Delivery: The aggregated price data is submitted to the options protocol’s smart contract, triggering necessary actions like liquidation or margin updates.
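The steps above can be sketched off-chain in a few lines. This is a simplified illustration, not the logic of any particular oracle network: the function names, the 2% outlier band, the minimum report count, and the 150% collateral ratio are all assumptions.

```python
from statistics import median

def filter_outliers(prices, max_deviation=0.02):
    """Drop reports further than max_deviation (relative) from the raw median."""
    mid = median(prices)
    return [p for p in prices if abs(p - mid) / mid <= max_deviation]

def aggregate(prices, min_reports=3):
    """Filter outliers, then take the median of the remaining reports."""
    kept = filter_outliers(prices)
    if len(kept) < min_reports:
        raise ValueError("not enough valid reports")
    return median(kept)

def is_liquidatable(collateral_amount, price, debt, min_ratio=1.5):
    """True if the collateral value is below min_ratio times the debt."""
    return collateral_amount * price < debt * min_ratio

# Hypothetical reports fetched by independent nodes; the last one is an outlier.
reports = [1998.0, 2001.0, 2000.0, 2003.0, 1500.0]
price = aggregate(reports)
print(price)                                   # 2000.5
print(is_liquidatable(10.0, price, 14_000.0))  # True: 20005 < 21000
```

In a real deployment the final check would run inside the smart contract after the aggregated price is delivered on-chain.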

A secondary approach involves using internal price mechanisms, such as those derived from an options AMM. In this model, the price of the option is determined by the ratio of assets in the liquidity pool. While this approach removes the reliance on external oracles, it introduces significant risks related to liquidity fragmentation and impermanent loss for liquidity providers.

Arbitrageurs are relied upon to keep the internal AMM price aligned with external market prices. However, during periods of extreme volatility, this arbitrage can break down, leaving the protocol vulnerable to pricing discrepancies. The most robust solutions combine both approaches, using external oracles as a primary source of truth while leveraging internal AMM pricing for continuous risk assessment.
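A combined design can be sketched as a divergence check between the pool-implied price and the oracle price. The example assumes a fee-free constant-product pool, which is only one possible AMM design, and a hypothetical 2% tolerance. All names and numbers are illustrative.

```python
def pool_price(reserve_base, reserve_quote):
    """Price of the base asset implied by the ratio of pool reserves."""
    return reserve_quote / reserve_base

def swap_quote_for_base(reserve_base, reserve_quote, quote_in):
    """Constant-product swap without fees; returns the new reserves."""
    k = reserve_base * reserve_quote
    new_quote = reserve_quote + quote_in
    new_base = k / new_quote
    return new_base, new_quote

def diverges(amm_price, oracle_price, tolerance=0.02):
    """True if the pool price is further than tolerance from the oracle price."""
    return abs(amm_price - oracle_price) / oracle_price > tolerance

oracle_price = 2000.0
base, quote = 1_000.0, 2_000_000.0
print(pool_price(base, quote), diverges(pool_price(base, quote), oracle_price))

# A single large buy moves the pool price well away from the oracle price.
base, quote = swap_quote_for_base(base, quote, 200_000.0)
print(round(pool_price(base, quote), 2), diverges(pool_price(base, quote), oracle_price))
```

A protocol could use such a flag to pause liquidations or fall back to the oracle price until arbitrage restores alignment.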

| Verification Method | Pros | Cons | Best Application |
|---|---|---|---|
| External Decentralized Oracles | High integrity, resistance to single-source manipulation, broad market coverage. | Latency risk, cost of data updates, reliance on off-chain data providers. | Collateral valuation, final options settlement. |
| Internal AMM Pricing | Low latency, on-chain price discovery, no external dependencies. | Liquidity fragmentation risk, potential for price divergence during high volatility, reliance on arbitrageurs. | Continuous risk monitoring, short-term options pricing. |

Evolution

The evolution of trustless verification has progressed from simple, single-source data feeds to highly complex, multi-layered data infrastructure. The initial focus was simply on securing the price feed against flash loan attacks. This led to the development of decentralized oracle networks, which increased security by decentralizing the data source.

However, this early model still faced limitations, particularly in terms of data latency and cost. High-frequency options trading requires updates that are too expensive to be constantly posted on-chain via a standard oracle network.

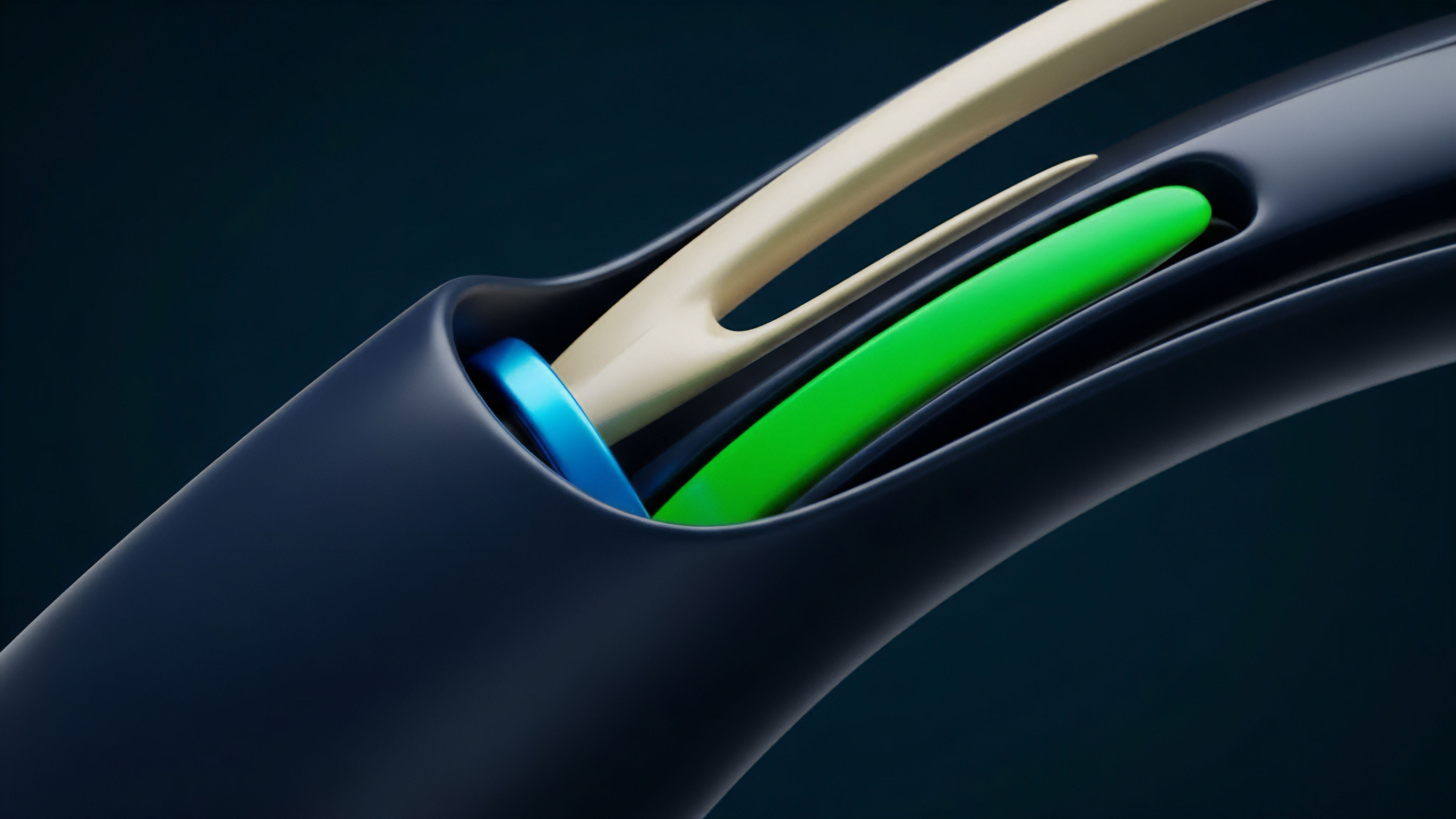

The next stage in the evolution involved the development of specialized data products for specific financial instruments. Options protocols require more than just a spot price; they require data on implied volatility (IV), interest rate curves, and other complex variables necessary for accurate pricing. This led to the creation of oracle-based volatility feeds, which calculate and verify volatility surfaces in a decentralized manner.

This allows options protocols to price contracts more accurately than simply relying on a spot price.

The shift in verification technology moves beyond simple spot price feeds toward complex data products like decentralized volatility surfaces, enabling more sophisticated options pricing models.

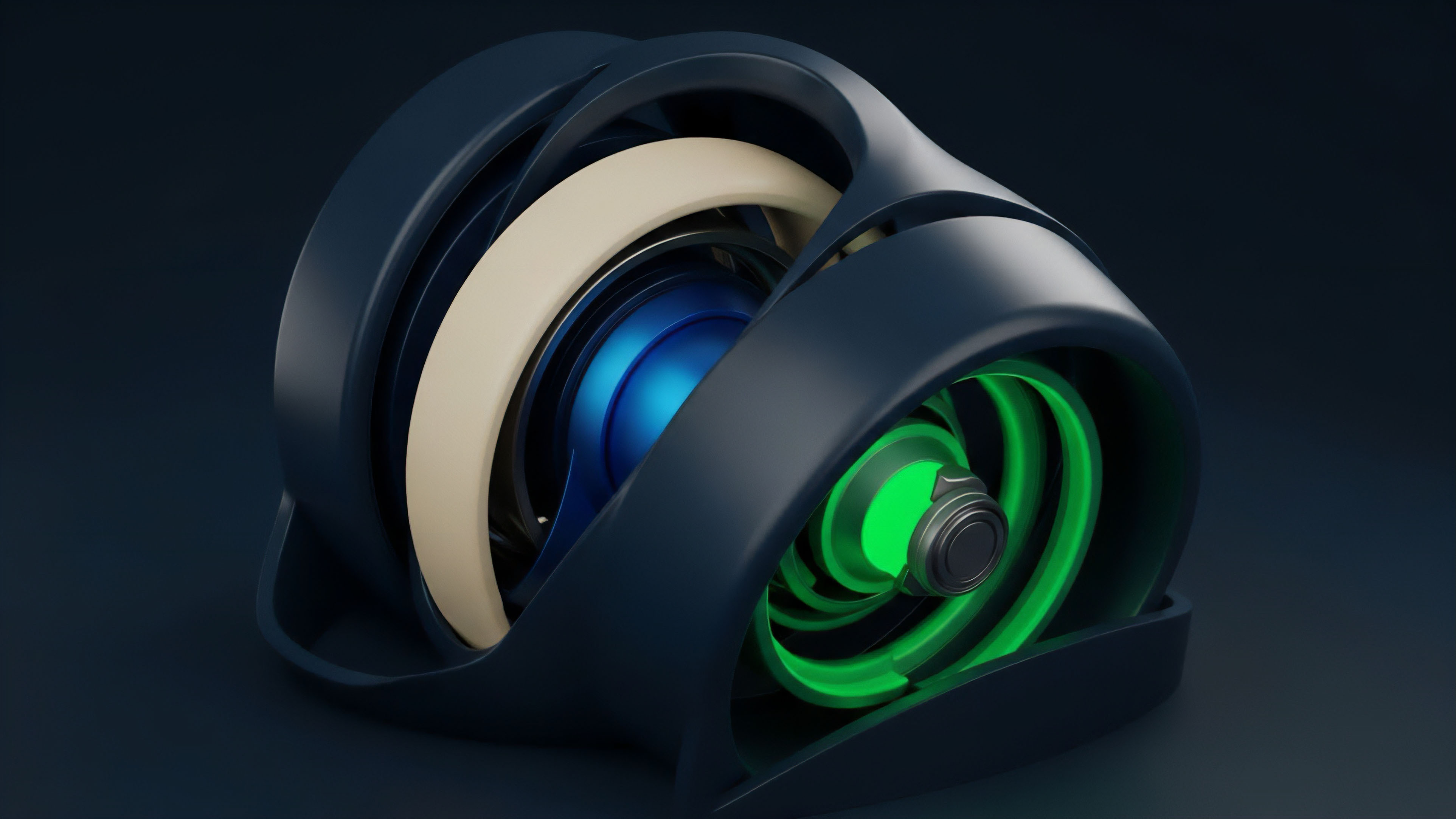

The current evolution involves a move toward off-chain computation and verification. Protocols are experimenting with techniques like zero-knowledge proofs (ZKPs) and secure multi-party computation (MPC) to perform complex calculations off-chain and then verify the results on-chain. This reduces the computational burden on the main blockchain and allows for more complex options pricing models (like Black-Scholes or Monte Carlo simulations) to be used in a trustless environment.

The goal is to verify the calculation itself, not just the raw inputs, leading to a higher degree of financial integrity. This evolution is driven by the demand for capital efficiency and greater financial product complexity.

Horizon

Looking ahead, the horizon for trustless verification in options involves a shift toward predictive and real-time risk management, moving beyond simple price verification at settlement. The next generation of verification mechanisms will focus on integrating complex, multi-dimensional data to prevent systemic risk before it materializes. This requires the development of decentralized volatility surfaces, where the verification mechanism provides not only a spot price but also a verified, forward-looking view of implied volatility across different strike prices and maturities.

This data allows for more accurate and proactive margin management, reducing the likelihood of cascading liquidations during market downturns.
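As a rough sketch of how a verified volatility surface could feed margin management, the example below scales a margin requirement with implied volatility. The surface values, the multiplier, the one-day horizon, and the 5% floor are hypothetical, and the square-root-of-time scaling is a common simplification rather than a prescribed method.

```python
from math import sqrt

# Hypothetical verified surface: (strike, days to expiry) -> implied volatility.
surface = {
    (1800.0, 7): 0.95,
    (2000.0, 7): 0.80,
    (2000.0, 30): 0.65,
}

def margin_requirement(notional, implied_vol, horizon_days=1.0,
                       multiplier=3.0, floor_rate=0.05):
    """Margin as a multiple of the expected move over the horizon, with a floor."""
    expected_move = implied_vol * sqrt(horizon_days / 365.0)
    return notional * max(floor_rate, multiplier * expected_move)

for (strike, days), iv in sorted(surface.items()):
    margin = margin_requirement(100_000.0, iv)
    print(f"strike={strike:.0f} days={days} iv={iv:.2f} margin={margin:.2f}")
```

Because the requirement rises as implied volatility rises, positions are topped up before a sharp move rather than liquidated after it.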

A second significant development involves interoperability and data fragmentation. As options protocols expand across multiple blockchains (L1s and L2s), the challenge becomes verifying data consistently across these disparate environments. The future will require cross-chain verification protocols that can securely transfer data from one chain to another without introducing new points of trust.

This ensures that collateral locked on one chain can be accurately valued and managed by a derivatives protocol operating on another chain. The integration of zero-knowledge proofs will allow protocols to verify complex financial logic without revealing sensitive data about positions or collateral. This enhances both privacy and efficiency, allowing for a new class of options products that can be settled without revealing underlying positions to the public.

| Current Challenge | Horizon Solution | Core Technology |
|---|---|---|
| Price feed latency and cost. | Real-time risk management and dynamic update thresholds. | Off-chain computation, ZKPs. |
| Data fragmentation across chains. | Cross-chain verification protocols. | Interoperability solutions, secure messaging layers. |
| Limited data complexity (spot price only). | Verified volatility surfaces and interest rate benchmarks. | Decentralized oracle networks with specialized data products. |

The ultimate goal is to move from a system that simply verifies data for settlement to one that provides a continuous, real-time risk profile of the entire protocol. This requires a new architecture where verification mechanisms are deeply integrated into the protocol’s margin engine, enabling automated adjustments to collateral requirements and risk parameters based on verified market conditions. This integration creates a more resilient and capital-efficient financial system.
