Essence

Data Availability Cost (DAC) represents the systemic expense required to ensure that all necessary information for a decentralized financial system, specifically a derivatives protocol, is accessible, verifiable, and timely. In traditional finance, information access is often a function of a counterparty relationship and centralized data feeds. In decentralized finance (DeFi), where trust must be minimized, this cost shifts from information access to information verification.

DAC is the price paid to guarantee that a protocol can execute its logic, such as liquidations or settlement, based on data that all participants agree upon, even if they cannot trust each other. This cost function is fundamentally different from a simple transaction fee; it is a critical component of a protocol’s overall risk profile.

The cost of data availability is not static. It scales with the complexity of the derivative instrument and the required frequency of updates. For simple spot exchanges, DAC is minimal, largely limited to transaction fees for price feed updates. For options and perpetual futures, however, DAC becomes a significant factor.

These instruments rely on continuous data streams for mark pricing, collateral health checks, and liquidation triggers. The cost of ensuring this data is available and resistant to manipulation directly impacts the capital efficiency and overall safety of the protocol.

Data Availability Cost is the price paid to guarantee data integrity and accessibility within a decentralized financial system, directly influencing protocol security and capital efficiency.

Origin

The concept of DAC emerged from the fundamental limitations of early Layer 1 (L1) blockchains. Initially, protocols like MakerDAO encountered the “oracle problem”: the challenge of bringing off-chain real-world data onto the blockchain in a secure and reliable manner. Early solutions involved a small set of trusted data providers, but this created a centralization vulnerability.

The evolution from simple price feeds to complex derivative protocols exposed a deeper issue: the cost of data availability itself. As DeFi protocols grew, the demand for high-frequency, verifiable data outpaced the capacity of L1 blockchains. The cost of publishing data on-chain became prohibitive, leading to high transaction fees and slow updates, which in turn caused inefficient liquidations and poor user experiences.

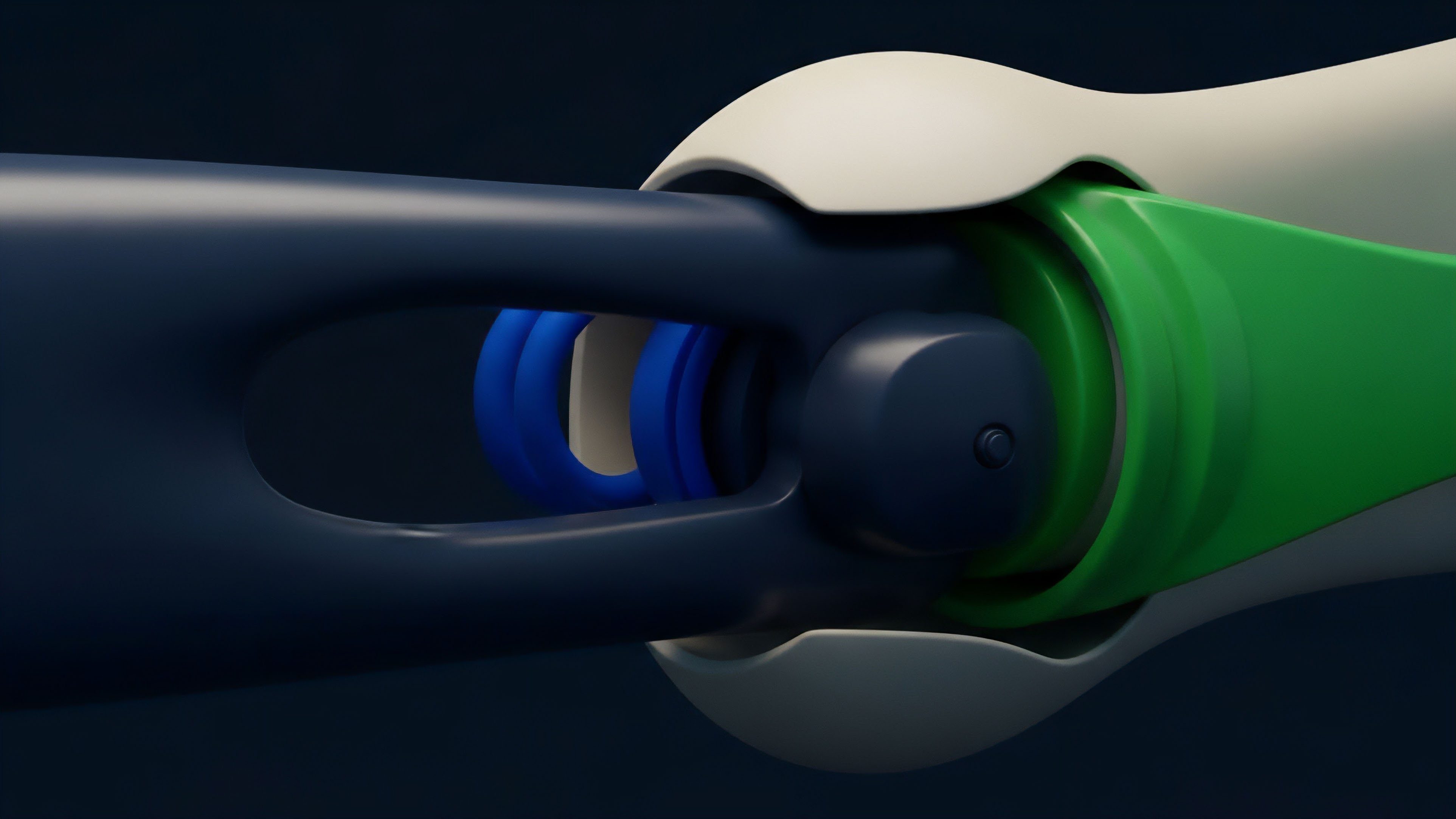

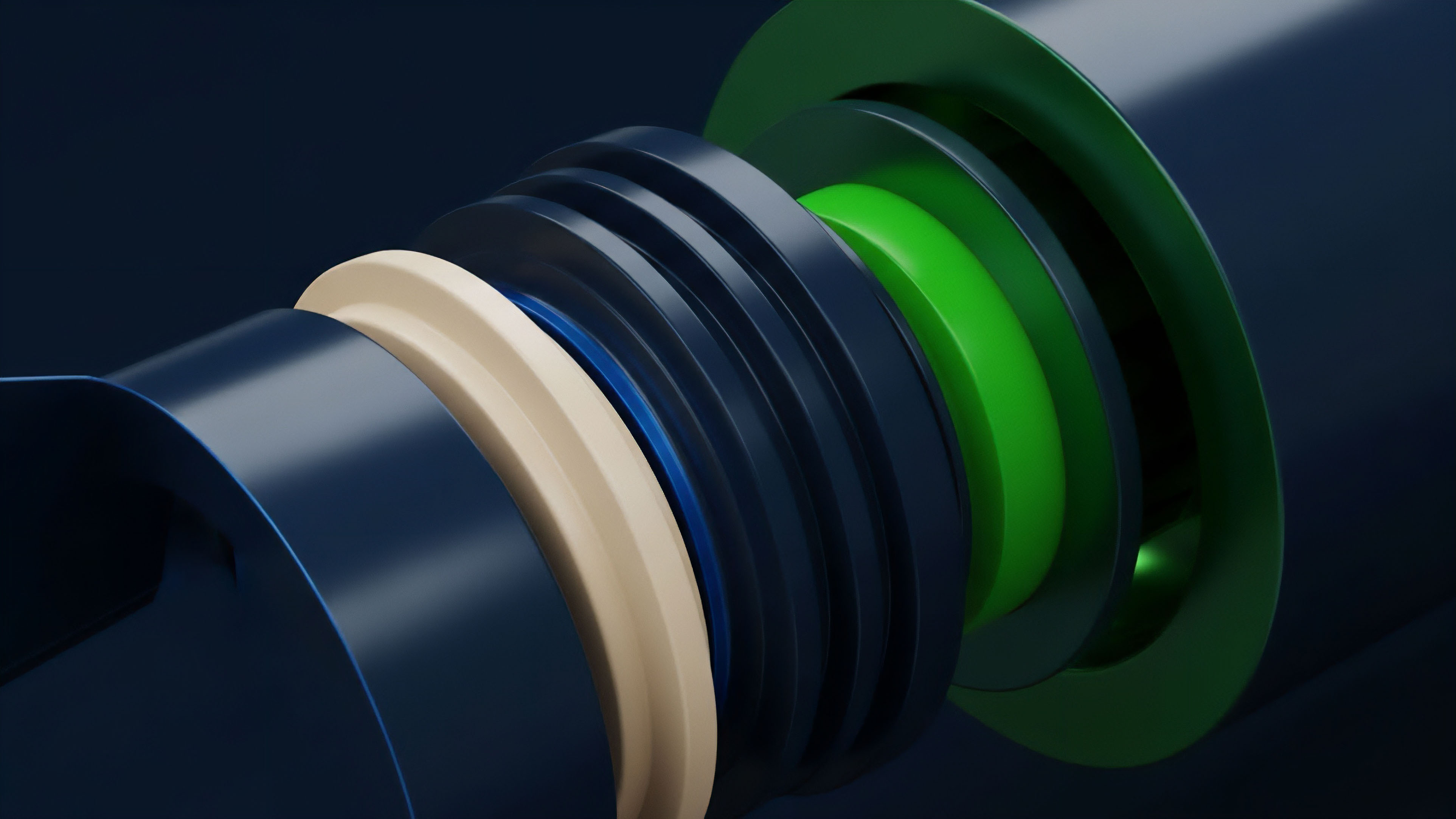

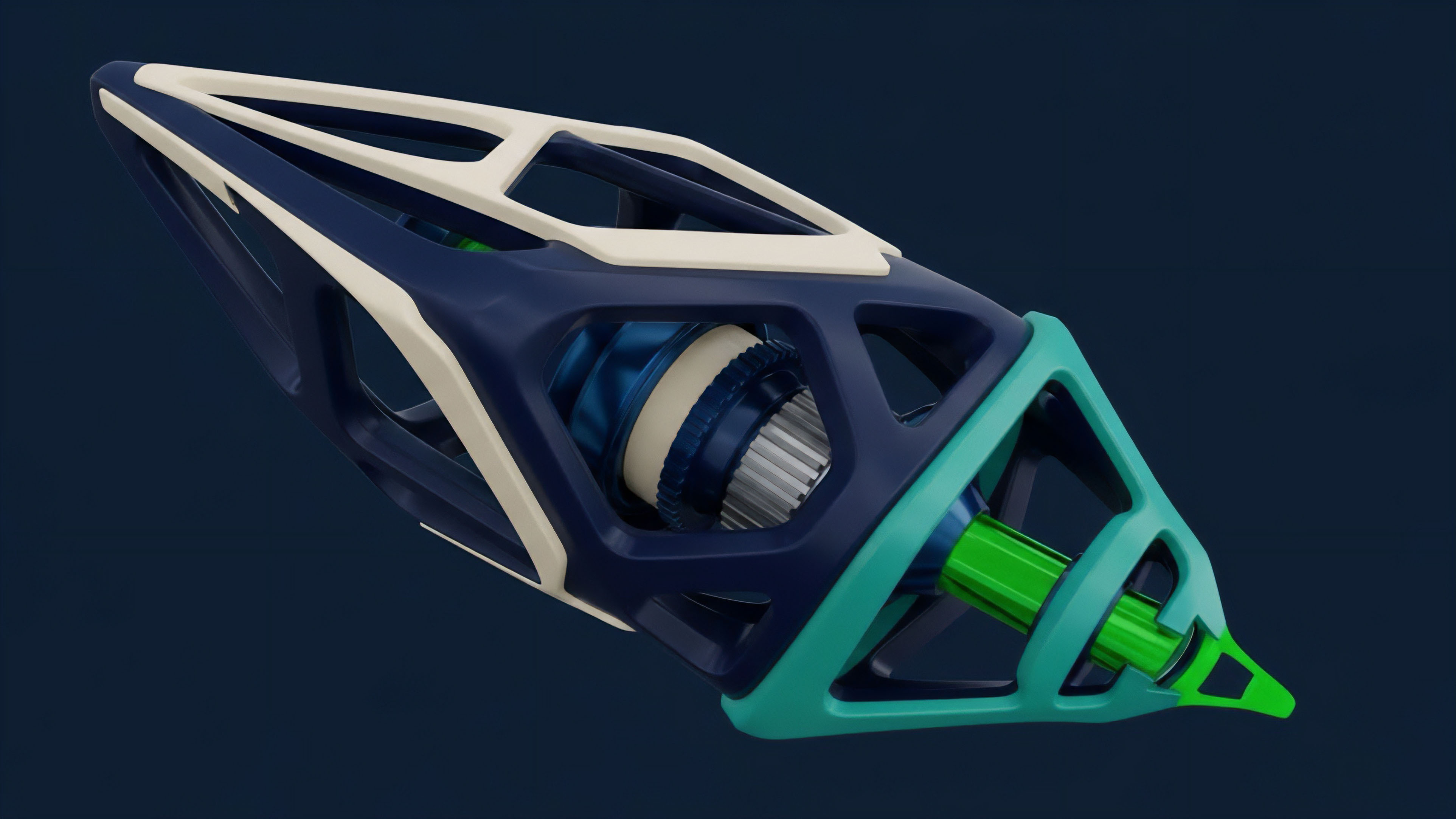

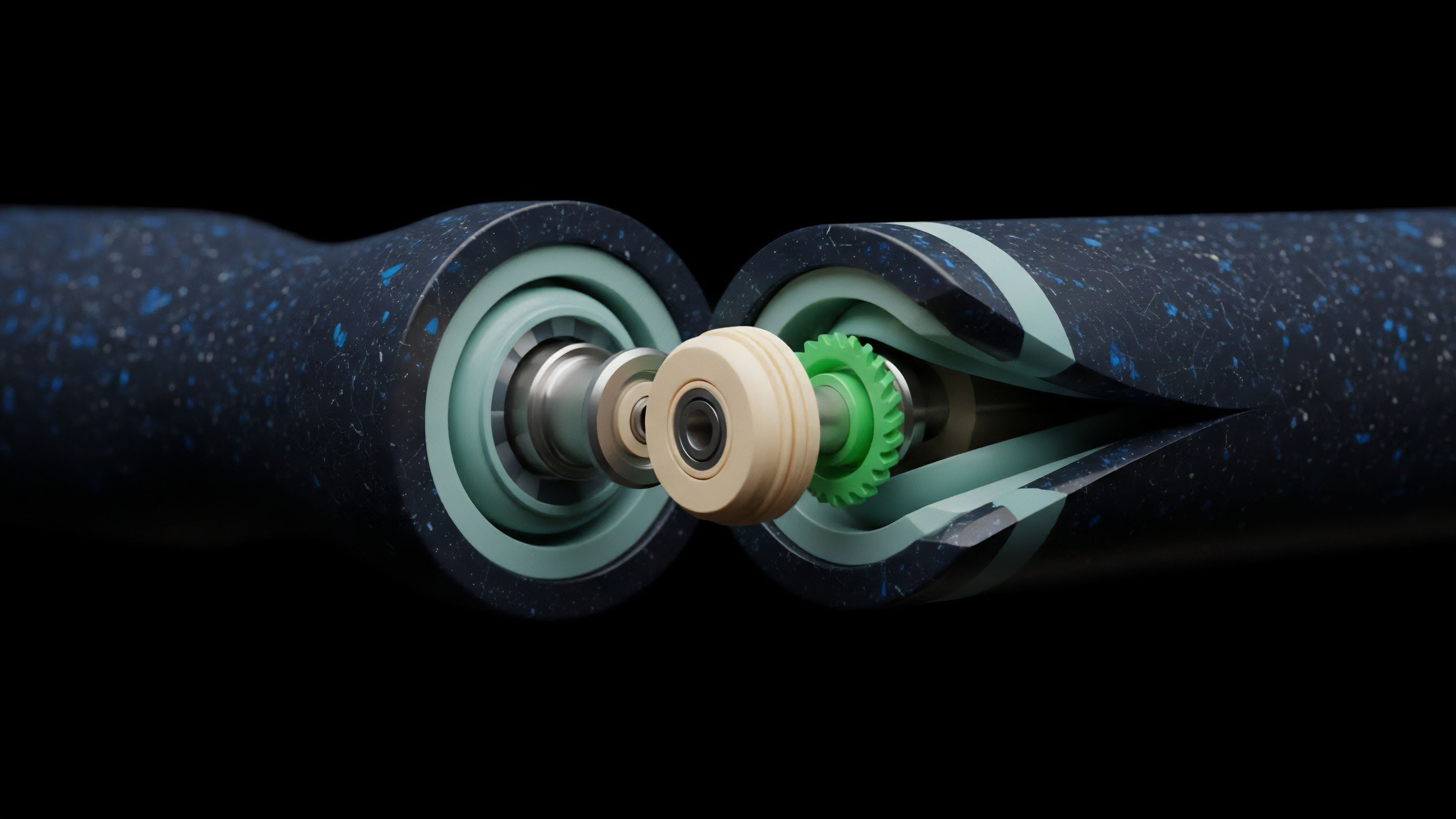

The transition to modular blockchain architectures fundamentally changed the DAC calculus. As protocols moved from monolithic L1s to Layer 2 (L2) rollups, the data availability problem shifted. Rollups require that the data necessary to reconstruct the state of the L2 chain be published on the underlying L1.

This ensures that in the event of a dispute or malicious activity, anyone can verify the L2 state. The cost of publishing this data to the L1, typically Ethereum, became the primary component of DAC for rollups. This led to the creation of dedicated data availability layers, which compete with L1s by offering a cheaper, more specialized service for storing and making data available to a network of validators.

Theory

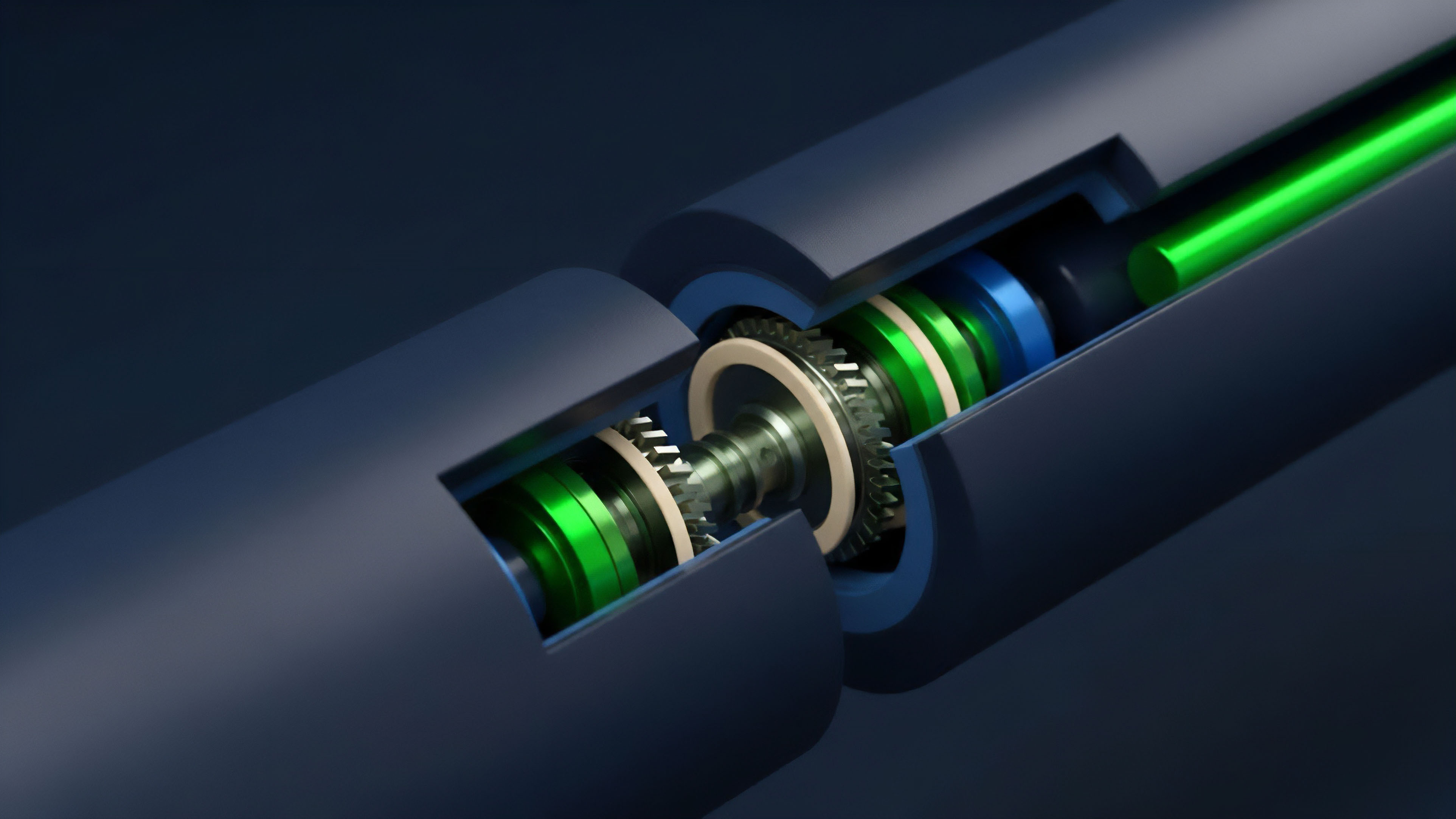

To understand DAC in derivatives, one must deconstruct its components. DAC is a composite risk premium, a function of latency, security, and capital expenditure. It represents the trade-off between the cost of publishing data and the risk of a market failure caused by data unavailability.

Components of Data Availability Cost

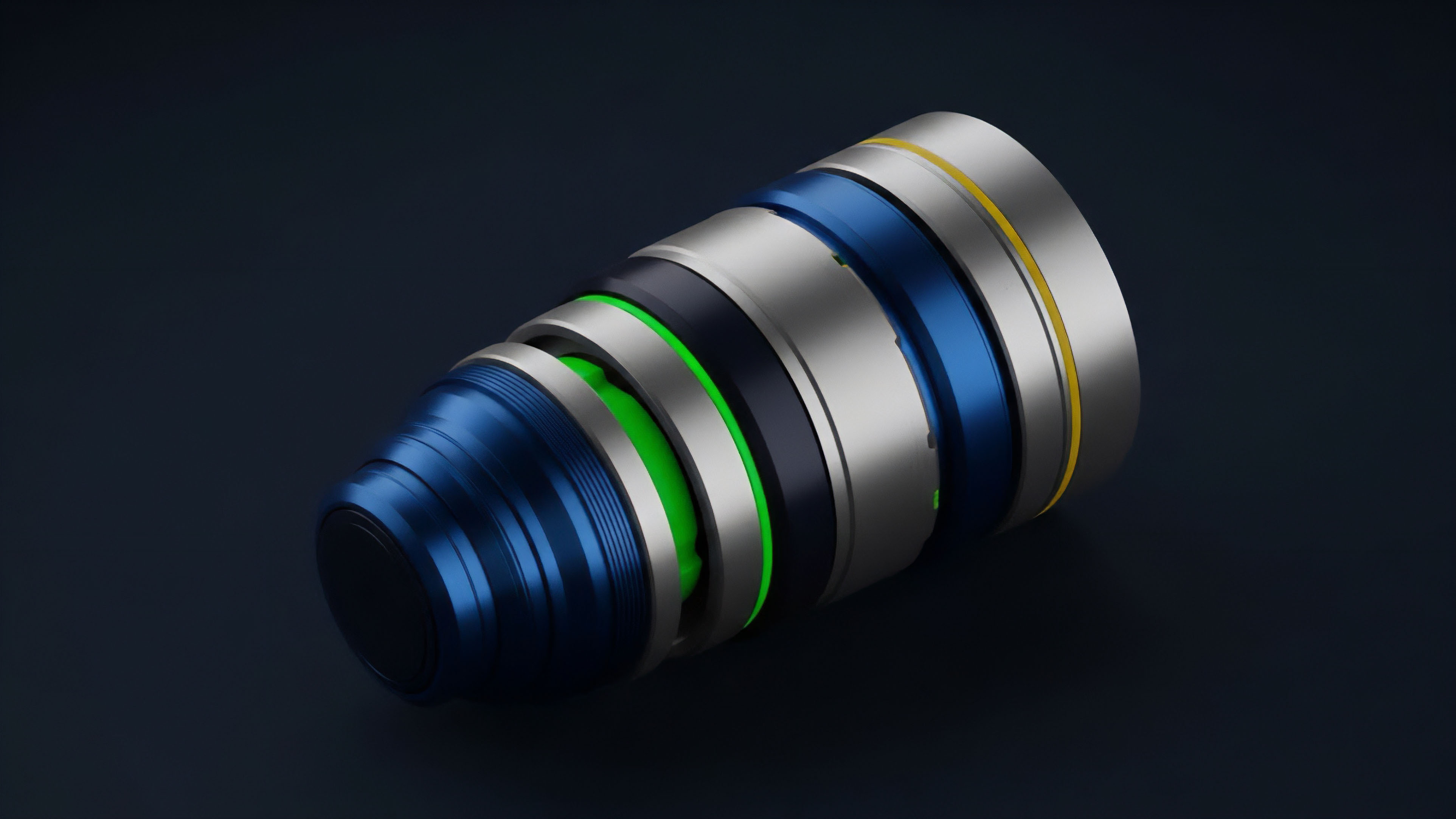

The total cost of data availability for a derivatives protocol can be broken down into three primary elements:

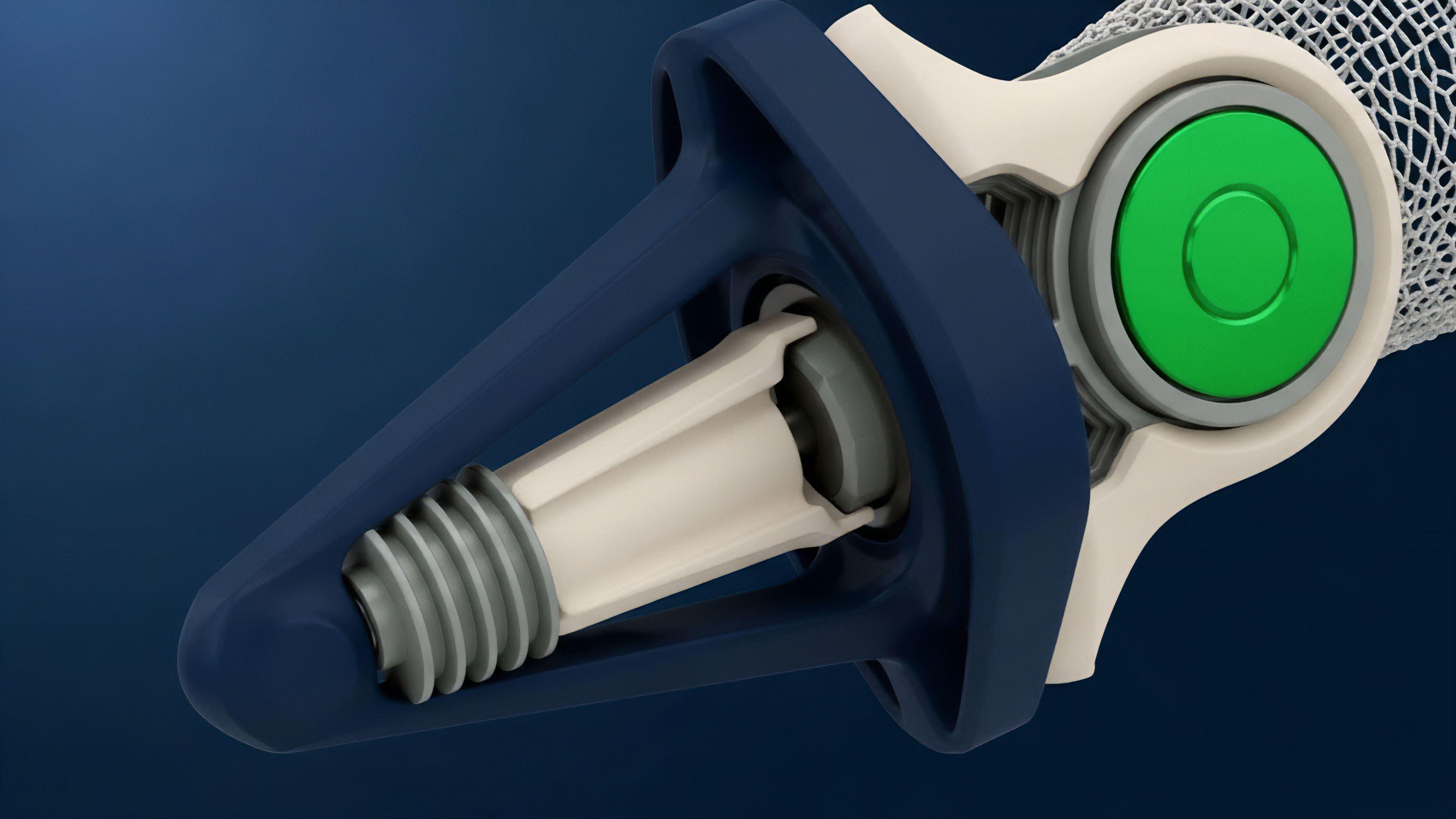

- On-Chain Transaction Costs: The gas fees required to post data to the underlying blockchain. This includes data necessary for state transitions on rollups or direct price feed updates on L1s. These costs fluctuate with network congestion and are often the largest component of DAC for high-throughput systems.

- Oracle Incentives and Security Deposits: The financial cost associated with incentivizing data providers to act honestly. Protocols must pay rewards to oracles and require them to stake collateral. This collateral acts as a bond, which can be slashed if the oracle submits false data. The size of this bond and the required rewards represent a direct cost to the protocol, effectively a premium paid for data integrity.

- Implicit Latency Risk Premium: The hidden cost associated with the delay between data generation and data availability. In derivatives trading, price data must be available in real time to prevent front-running and ensure fair liquidations. A higher DAC can lead to slower updates, creating a window for arbitrage or manipulation. This risk premium is often reflected in wider bid-ask spreads or higher collateral requirements.
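The three components above can be combined into a single estimate. The following is a minimal sketch, not a method taken from any particular protocol: the `DacEstimate` class, its field names, and the idea of charging a cost-of-capital rate on the oracle bond are illustrative assumptions. The calldata helper uses the per-byte gas prices introduced by EIP-2028 (4 gas per zero byte, 16 gas per non-zero byte) and ignores the base transaction cost and any later pricing changes.

```python
from dataclasses import dataclass

# Per-byte calldata gas prices introduced by EIP-2028.
GAS_PER_ZERO_BYTE = 4
GAS_PER_NONZERO_BYTE = 16


def calldata_gas(payload: bytes) -> int:
    """Gas charged for the payload bytes only (no base transaction cost)."""
    zero_bytes = payload.count(0)
    nonzero_bytes = len(payload) - zero_bytes
    return zero_bytes * GAS_PER_ZERO_BYTE + nonzero_bytes * GAS_PER_NONZERO_BYTE


def posting_cost_usd(payload: bytes, gas_price_gwei: float, eth_price_usd: float) -> float:
    """Convert calldata gas into USD at an assumed gas price and ETH price."""
    return calldata_gas(payload) * gas_price_gwei * 1e-9 * eth_price_usd


@dataclass
class DacEstimate:
    """Hypothetical per-period breakdown of Data Availability Cost, in USD."""
    onchain_cost: float        # fees paid to post data
    oracle_rewards: float      # rewards paid to data providers
    bond_capital_cost: float   # opportunity cost of staked oracle collateral
    latency_premium: float     # expected loss from acting on stale data

    @property
    def total(self) -> float:
        return (self.onchain_cost + self.oracle_rewards
                + self.bond_capital_cost + self.latency_premium)


if __name__ == "__main__":
    update = bytes([0, 0, 1, 2])  # toy 4-byte payload
    estimate = DacEstimate(
        onchain_cost=posting_cost_usd(update, gas_price_gwei=20, eth_price_usd=2000),
        oracle_rewards=5.0,
        bond_capital_cost=1_000_000 * 0.05 / 365,  # assumed 5% annual rate on a 1M bond, per day
        latency_premium=12.0,
    )
    print(f"calldata gas: {calldata_gas(update)}")
    print(f"total DAC estimate: {estimate.total:.4f} USD")
```

All numeric inputs in the usage section are placeholders; in practice the latency premium is the hardest term to measure, since it is not paid explicitly but shows up in spreads and collateral requirements.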

DAC and Systemic Risk

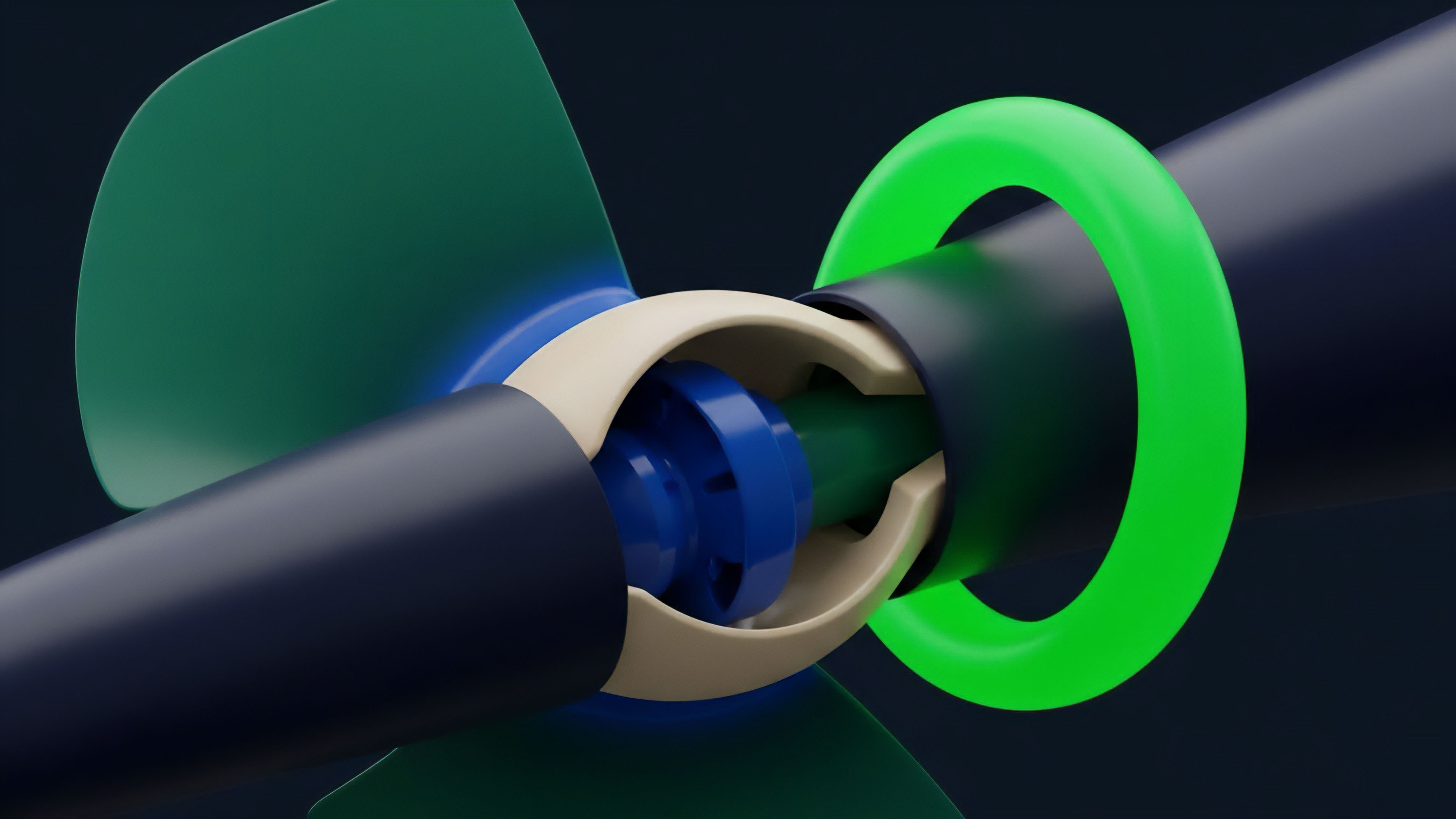

DAC directly influences systemic risk within a decentralized derivatives market. If data availability is expensive, protocols may choose to update price feeds less frequently to save on costs. This exposes options traders to stale-price risk.

The risk premium for holding a position increases as the time between data updates grows, because the collateralization ratio of a position can change dramatically between updates. This can lead to cascading liquidations when data finally becomes available, causing sudden market volatility. A derivatives protocol must balance the cost of data against the risk of data manipulation.

If data availability is too cheap, the protocol may be vulnerable to Sybil attacks or data manipulation. If it is too expensive, the protocol becomes capital inefficient and uncompetitive. The optimal DAC for a given protocol is a dynamic equilibrium point, determined by the market value of the assets being traded and the volatility of the underlying assets.
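The balance between posting cost and staleness risk can be illustrated with a toy model. This is a sketch under stated assumptions, not a calibrated formula: posting cost per second is taken as `cost_per_update / interval_s`, the staleness penalty per second is taken as `risk_weight * vol_per_sqrt_s * sqrt(interval_s)` (price moves grow with the square root of elapsed time), and `risk_weight` is a hypothetical coefficient that converts typical mispricing into expected loss. Under those assumptions the cost-minimizing update interval has a closed form.

```python
def cost_rate(interval_s: float, cost_per_update: float,
              risk_weight: float, vol_per_sqrt_s: float) -> float:
    """Toy total cost per second: posting cost plus staleness penalty."""
    posting = cost_per_update / interval_s
    staleness = risk_weight * vol_per_sqrt_s * interval_s ** 0.5
    return posting + staleness


def optimal_interval(cost_per_update: float, risk_weight: float,
                     vol_per_sqrt_s: float) -> float:
    """Interval that minimizes cost_rate.

    Setting d/dt [c/t + a*sqrt(t)] = 0 with a = risk_weight * vol
    gives t = (2c / a) ** (2/3).
    """
    return (2.0 * cost_per_update / (risk_weight * vol_per_sqrt_s)) ** (2.0 / 3.0)


if __name__ == "__main__":
    for vol in (0.001, 0.002, 0.004):
        best = optimal_interval(cost_per_update=0.5, risk_weight=100.0, vol_per_sqrt_s=vol)
        print(f"vol={vol}: update every {best:.2f}s, "
              f"cost rate {cost_rate(best, 0.5, 100.0, vol):.4f}")
```

In this model, higher volatility or larger exposure shortens the optimal interval, while higher posting costs lengthen it, which matches the qualitative trade-off described above.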

Approach

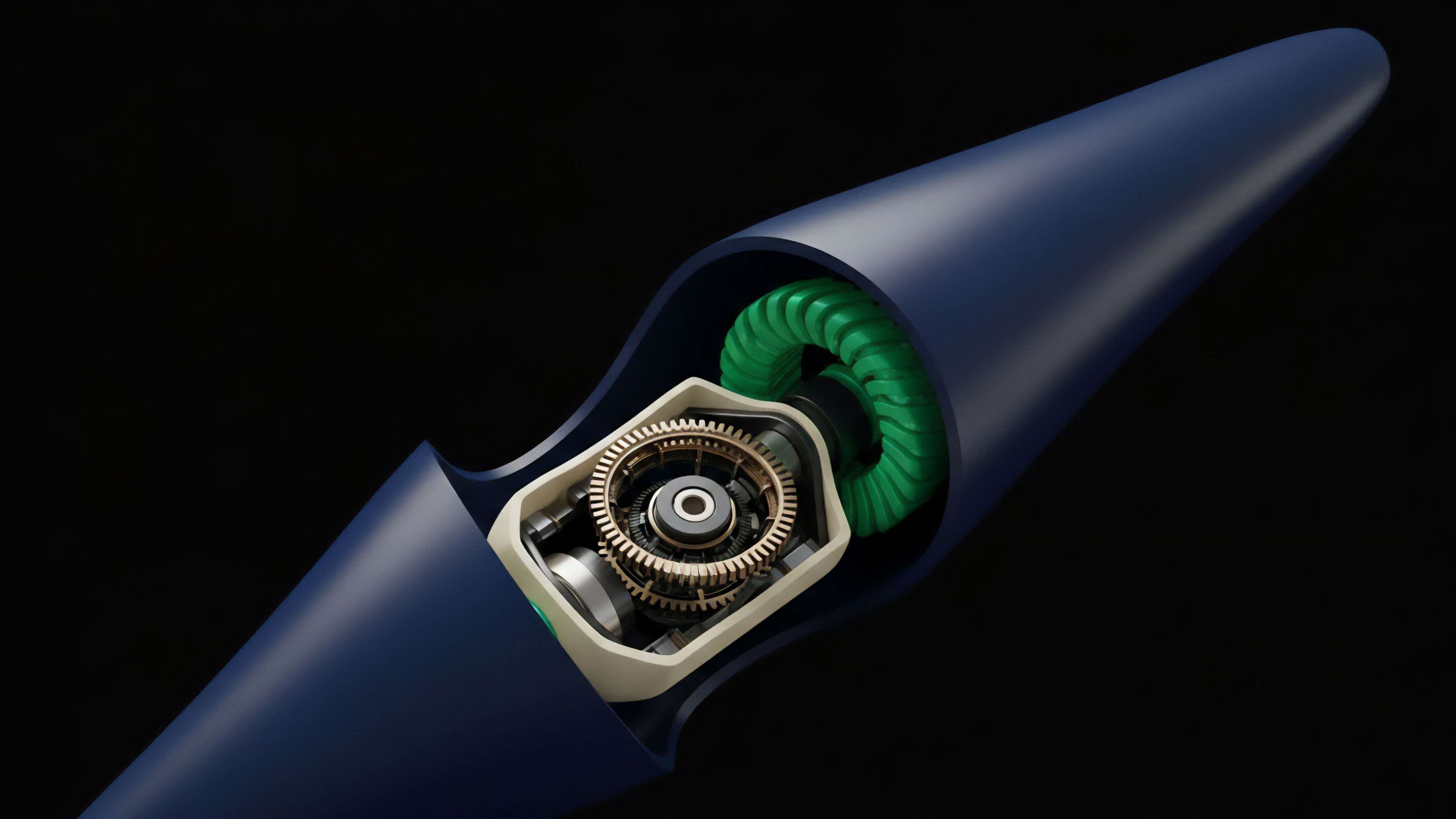

Derivatives protocols employ different approaches to manage DAC, driven by their specific requirements for latency and security. The choice of architecture determines the cost profile.

Layer 2 Data Strategies

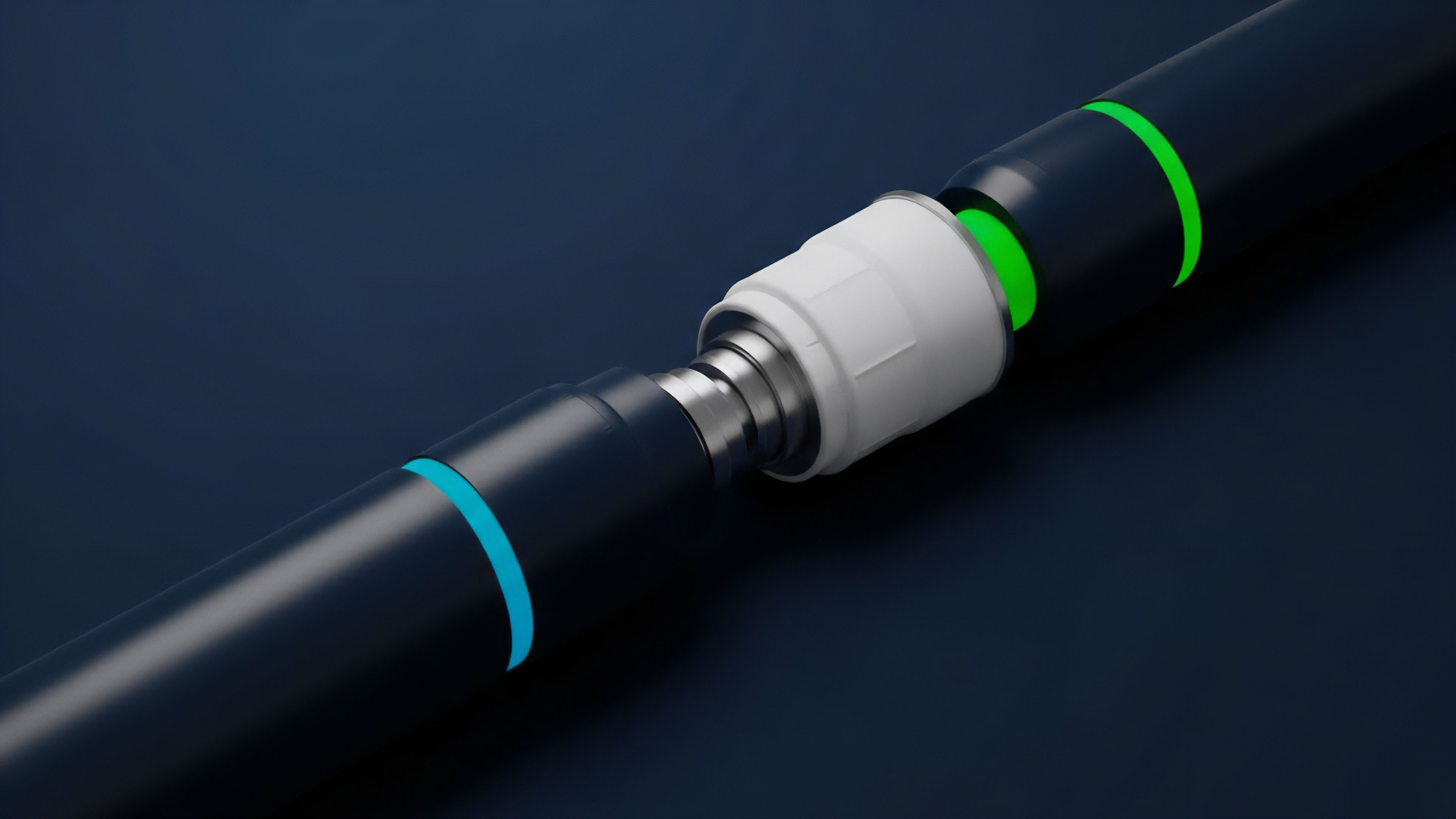

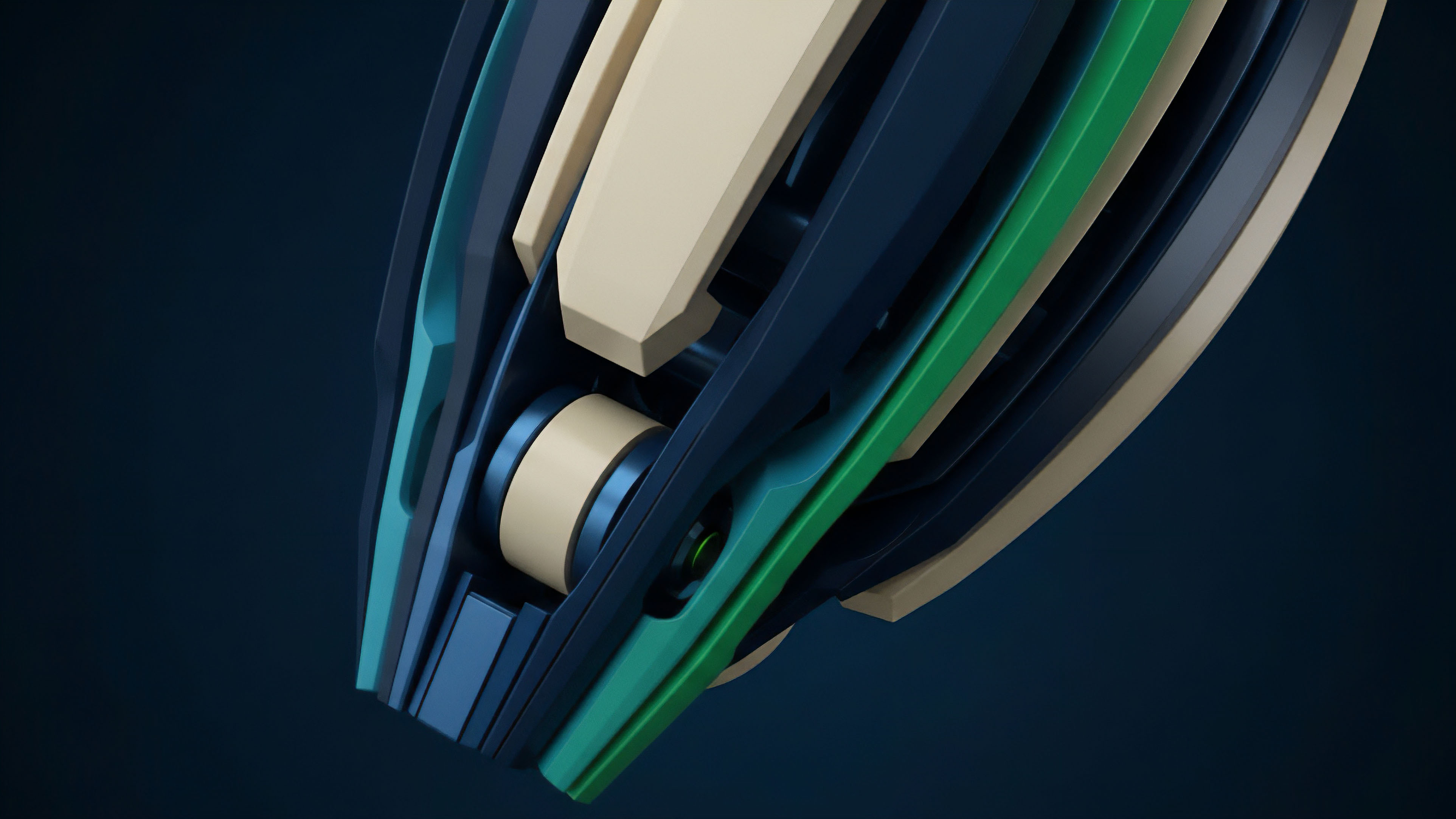

The most common approach today involves L2 rollups, which abstract away the high cost of L1 execution while relying on the L1 for data availability. The specific DAC depends on the rollup’s data posting strategy:

- L1 Data Posting (Standard Rollups): The rollup publishes its transaction data to Ethereum as calldata. This leverages the security of Ethereum, but the cost remains high, especially during periods of high L1 congestion. This approach offers maximum security for derivatives protocols where settlement integrity is paramount.

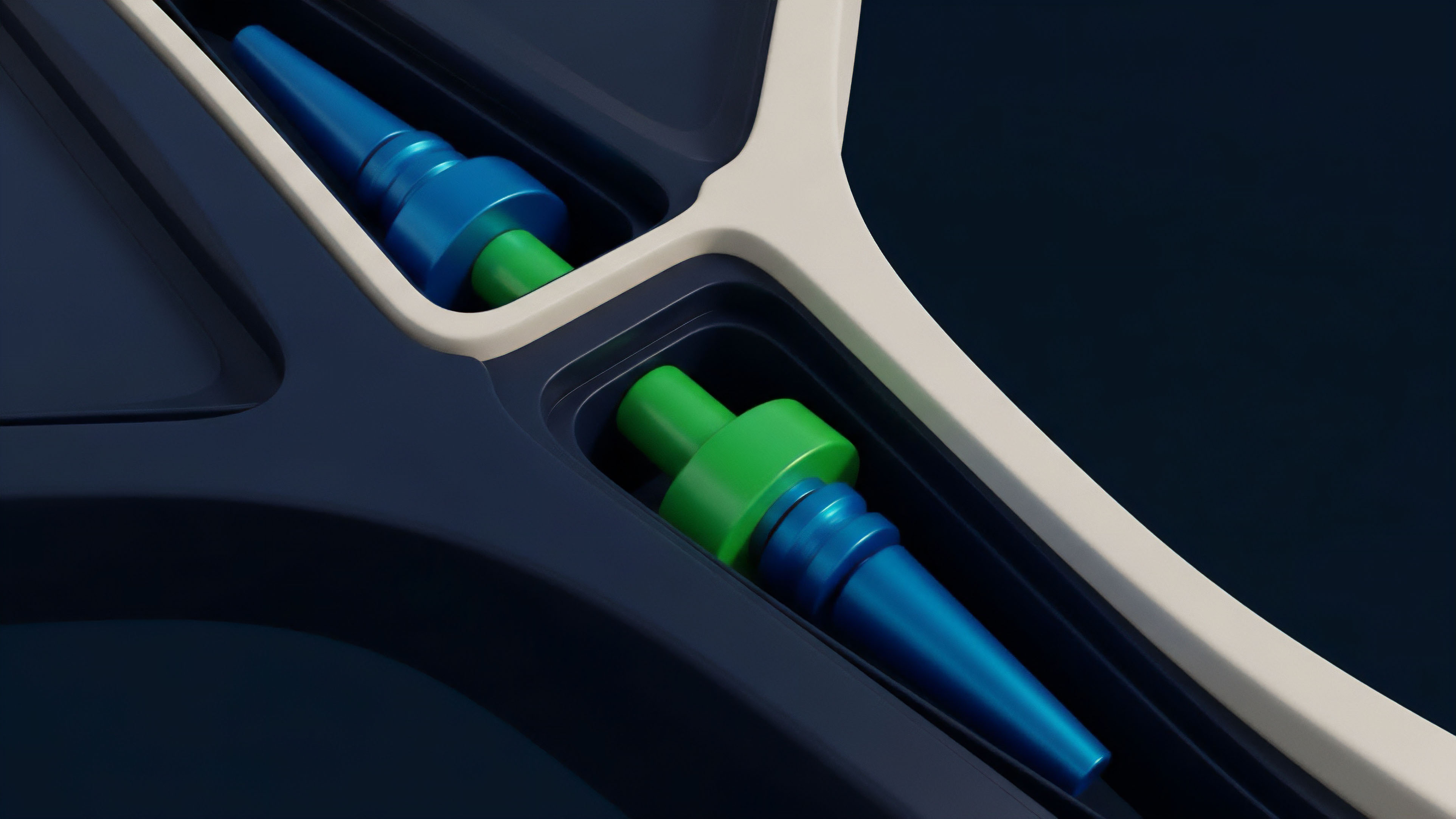

- Data Availability Committees: Some L2s utilize a committee of trusted third parties to sign off on data availability, rather than posting all data to L1. This drastically reduces DAC by offloading storage costs. However, it introduces a trust assumption: users must trust the committee not to collude and withhold data. This trade-off between cost reduction and trust assumption is critical for derivatives protocols, where a malicious committee could freeze liquidations.

- Data Availability Sampling (DAS): Advanced solutions, such as those used by modular data layers, allow light clients to verify data availability by sampling small portions of the data. This significantly reduces the cost of data verification for individual users, allowing protocols to scale without sacrificing security.
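The sampling idea in the last item can be made concrete with a simplified probability model. The sketch below assumes that erasure coding forces an attacker to withhold at least a fraction `withheld_fraction` of the chunks to make the data unrecoverable, and that a light client draws chunks uniformly at random with replacement. The default of 0.5 is an illustrative assumption; the real threshold depends on the encoding used by a given data layer.

```python
import math


def detection_confidence(samples: int, withheld_fraction: float = 0.5) -> float:
    """Probability that at least one of `samples` random draws hits a withheld chunk."""
    if samples < 0:
        raise ValueError("samples must be non-negative")
    if not 0.0 < withheld_fraction <= 1.0:
        raise ValueError("withheld_fraction must be in (0, 1]")
    return 1.0 - (1.0 - withheld_fraction) ** samples


def samples_needed(target_confidence: float, withheld_fraction: float = 0.5) -> int:
    """Smallest number of samples whose detection confidence reaches the target."""
    if not 0.0 < target_confidence < 1.0:
        raise ValueError("target_confidence must be in (0, 1)")
    if not 0.0 < withheld_fraction <= 1.0:
        raise ValueError("withheld_fraction must be in (0, 1]")
    if withheld_fraction == 1.0:
        return 1  # every sample hits a withheld chunk
    return math.ceil(math.log(1.0 - target_confidence) / math.log(1.0 - withheld_fraction))


if __name__ == "__main__":
    for k in (5, 10, 20, 30):
        print(f"{k} samples -> confidence {detection_confidence(k):.9f}")
```

Because confidence grows exponentially with the number of samples, each light client only needs to download a small, roughly constant amount of data regardless of block size, which is why sampling lowers the per-user verification cost.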

DAC and Options Pricing

For options pricing, DAC introduces a specific, non-linear cost. The cost of data availability for a protocol’s liquidation engine directly affects the implied volatility surface. If the cost of data is high, the risk of a “stale price” increases.

This stale price risk means that a position’s true value may diverge significantly from its on-chain value between updates. To compensate for this risk, market makers widen spreads, effectively increasing the implied volatility and the cost of the option.
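To see how a wider quote translates into a higher implied volatility and option cost, consider the following sketch. It uses the textbook Black-Scholes formula for a European call purely as an illustration; the article does not state which pricing model any protocol or market maker uses, and `vol_markup` is a hypothetical parameter standing in for the compensation a market maker demands for stale-price risk.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, t_years: float, vol: float, rate: float = 0.0) -> float:
    """Black-Scholes price of a European call option."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


def staleness_adjusted_quote(spot: float, strike: float, t_years: float,
                             base_vol: float, vol_markup: float, rate: float = 0.0):
    """Return (fair_value, ask) where the ask is priced at base_vol + vol_markup."""
    fair = bs_call(spot, strike, t_years, base_vol, rate)
    ask = bs_call(spot, strike, t_years, base_vol + vol_markup, rate)
    return fair, ask


if __name__ == "__main__":
    fair, ask = staleness_adjusted_quote(100.0, 100.0, 1.0, base_vol=0.20, vol_markup=0.05)
    print(f"fair value: {fair:.4f}, ask with staleness markup: {ask:.4f}")
```

The difference between the two prices is the extra premium a buyer pays when data updates are infrequent; how large the markup should be for a given update interval is left open here.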

| Data Availability Strategy | Primary DAC Component | Latency Profile | Security Model | Suitability for Derivatives |
|---|---|---|---|---|
| L1 Calldata Posting | L1 Gas Costs | High (L1 block time) | Trustless (L1 security) | High-value, low-frequency options |
| Data Availability Committees | Committee Fees | Low (Off-chain) | Trusted Committee | High-frequency, lower-value options |
| Data Availability Sampling | Storage Fees | Variable (DAS parameters) | Trust-minimized (L1 security) | General-purpose derivatives, scaling solutions |

Evolution

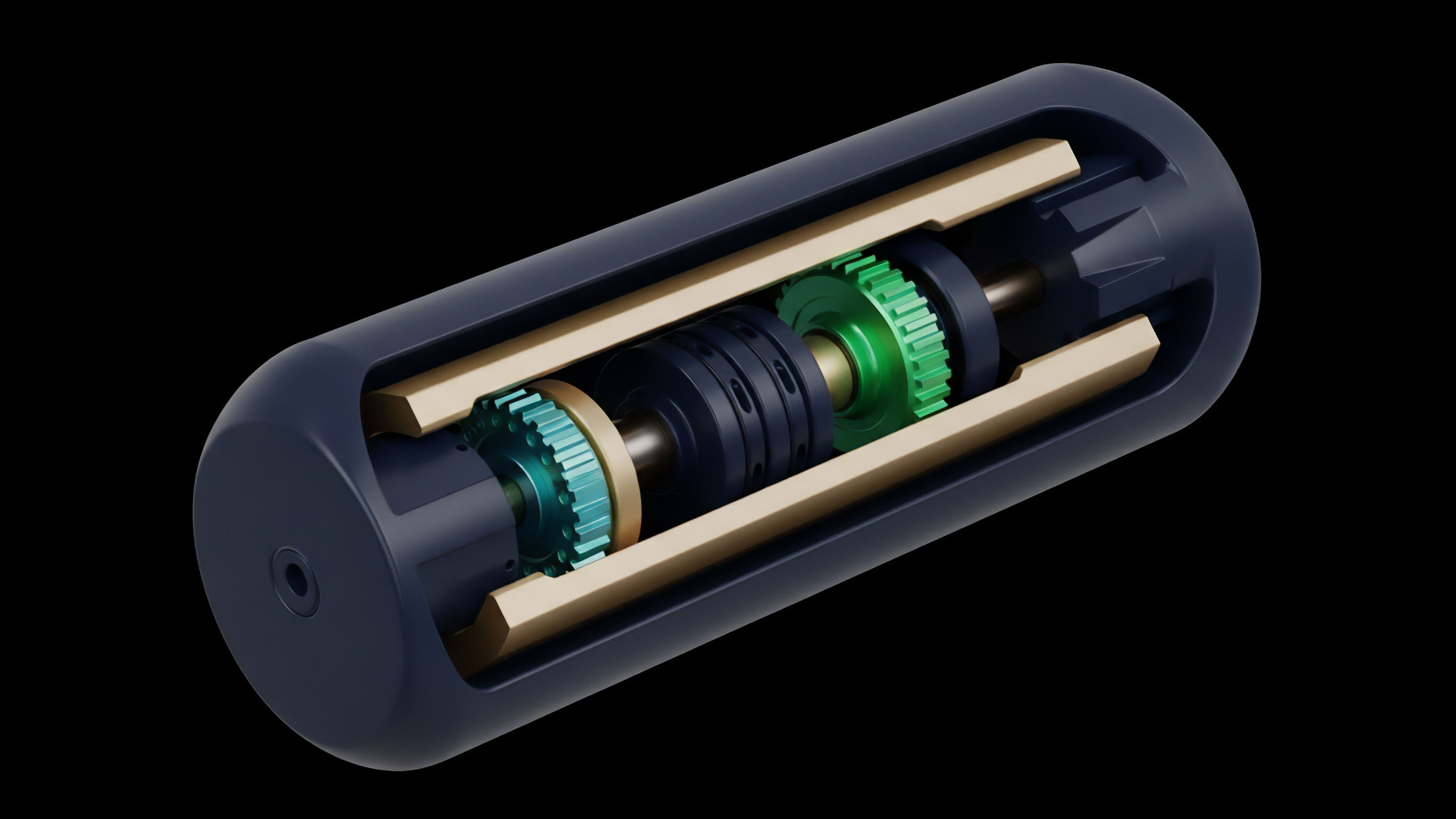

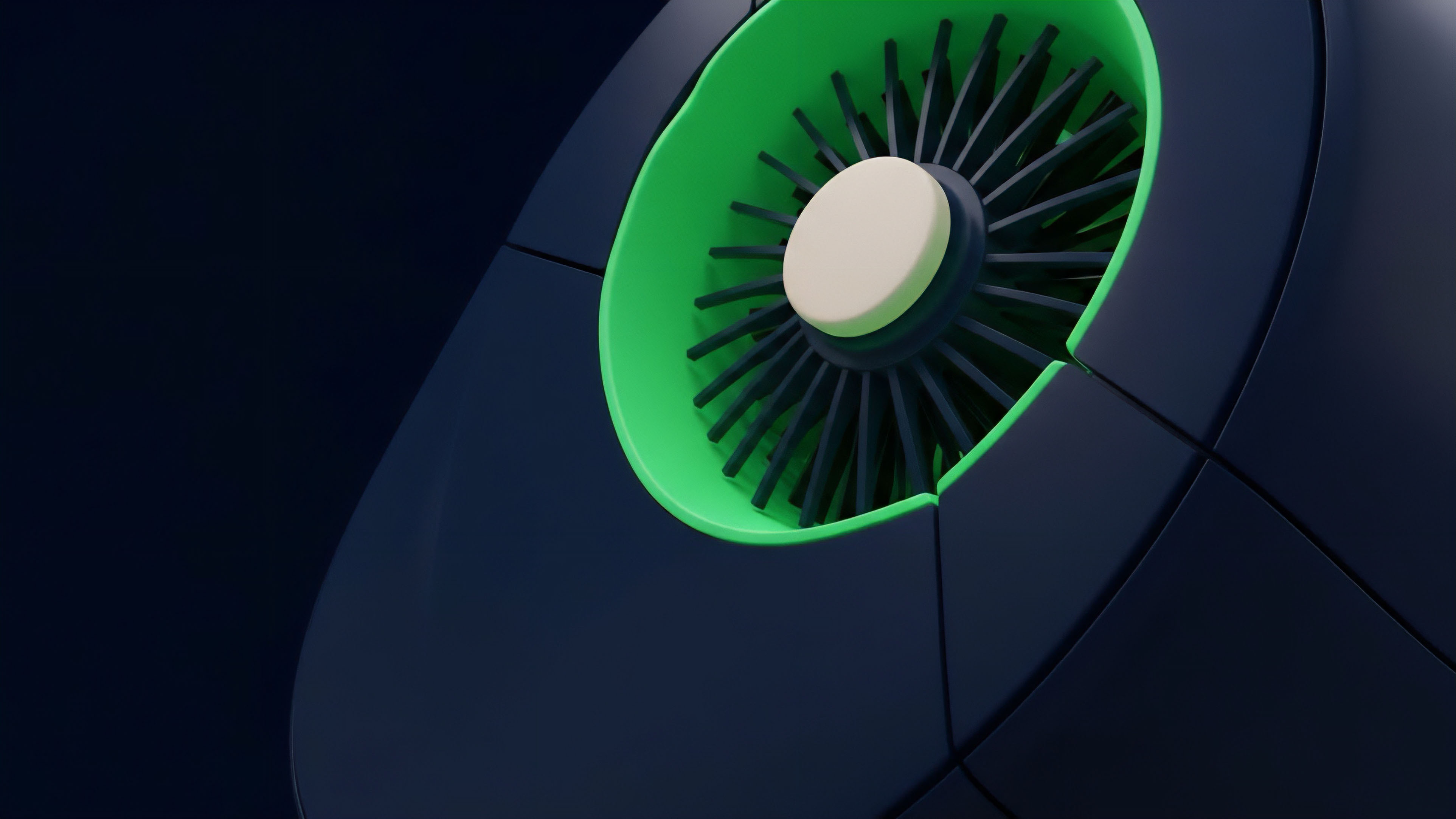

The evolution of DAC is directly tied to the shift from monolithic to modular blockchain architectures. Initially, DAC was a function of L1 throughput; protocols competed for block space, driving up costs for all applications. This model created a zero-sum game where derivatives protocols struggled to compete with high-value transactions like token swaps.

The introduction of dedicated data availability layers represents a fundamental change in market structure. These layers specialize in providing data availability at a lower cost than L1s, creating a new market for data services. This specialization allows rollups to separate execution from data availability, creating a new cost structure.

The cost of data availability is no longer a fixed overhead for all applications; it is now a variable cost that protocols can choose based on their risk tolerance.

Modular data availability layers are transforming DAC from a fixed overhead cost into a competitive variable, enabling more efficient and scalable derivatives protocols.

This evolution has created a new class of derivatives protocols. Protocols built on modular architectures can tailor their DAC strategy to their specific product offerings. For example, a protocol offering exotic options with high capital requirements might choose a high-security, high-cost DAC strategy, while a protocol offering high-frequency perpetual futures might prioritize lower latency and lower cost by utilizing a more optimized data layer.

Horizon

Looking ahead, DAC will likely become the primary determinant of a protocol’s capital efficiency and risk profile. As execution environments become highly optimized and commoditized, the cost of data availability will differentiate protocols. The future of decentralized derivatives depends on the ability to reduce DAC without compromising security.

The next phase of DAC optimization will likely focus on data compression techniques and more efficient proof systems. By minimizing the amount of data that needs to be posted on-chain, protocols can significantly reduce their operational costs. This will enable the creation of highly capital-efficient derivatives protocols that can compete directly with centralized exchanges.
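As a rough illustration of how compression shrinks the data a protocol must post, the sketch below serializes a batch of price updates and compresses it with Python's standard `zlib` module. The record layout (`asset_id`, `price`, `timestamp`) and the synthetic values are assumptions made for this example only; real rollups use their own encodings and compression schemes.

```python
import struct
import zlib


def encode_updates(updates) -> bytes:
    """Serialize (asset_id, price, timestamp) tuples as fixed-width big-endian records."""
    # H = 2-byte asset id, Q = 8-byte integer price, I = 4-byte unix timestamp
    return b"".join(struct.pack(">HQI", asset_id, price, ts) for asset_id, price, ts in updates)


def compress_batch(raw: bytes) -> bytes:
    """Compress a serialized batch at the highest zlib compression level."""
    return zlib.compress(raw, 9)


def sample_updates(n: int = 200):
    """Synthetic feed: four assets with slowly drifting prices and increasing timestamps."""
    return [(i % 4, 3_000_000_000 + 1_000 * i, 1_700_000_000 + i) for i in range(n)]


if __name__ == "__main__":
    raw = encode_updates(sample_updates())
    compressed = compress_batch(raw)
    print(f"raw bytes: {len(raw)}, compressed bytes: {len(compressed)}")
    assert zlib.decompress(compressed) == raw  # compression is lossless
```

Price feeds tend to compress well because consecutive updates share most of their bytes; the saving on a real feed depends on how noisy the data is and on the encoding chosen.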

The development of specialized data layers will create a competitive landscape where DAC is no longer a barrier to entry but a strategic choice.

Future Implications for Derivatives

The ability to reduce DAC will have profound implications for derivatives markets.

- Exotic Options: Lower DAC enables protocols to offer more complex and exotic options that require frequent, high-precision data updates for accurate pricing and risk management.

- Cross-Chain Derivatives: A reduction in DAC facilitates the creation of truly cross-chain derivatives where data from multiple chains can be aggregated efficiently and securely.

- Capital Efficiency: When frequent, verified data updates become cheaper, protocols need a smaller safety buffer against stale prices and can reduce collateral requirements, leading to higher leverage and greater capital efficiency for traders.

The future competitive advantage will go to the protocol that can achieve the lowest DAC while maintaining the highest level of data integrity. This requires a systems-level approach where data availability is considered a core part of the protocol’s risk engine, not simply a technical detail.

The future of decentralized derivatives hinges on achieving a competitive advantage through lower DAC, enabling greater capital efficiency and the introduction of more complex financial instruments.
