Essence

Data Feed Cost constitutes the aggregate economic friction required to maintain a high-fidelity synchronization between off-chain price discovery and on-chain state. Within the architecture of decentralized derivatives, this expenditure is the price a protocol pays to observe objective external reality. Every smart contract exists within a deterministic silo, lacking inherent visibility into the external world.

To execute a liquidation or settle an option, the protocol must ingest data from centralized exchanges or liquid venues, a process that incurs direct gas fees and indirect risk premiums.

Data Feed Cost is the quantitative measure of economic resources sacrificed to import external market veracity into a trustless execution environment.

This financial burden is a direct consequence of the “Oracle Problem,” where the security of the derivative depends on the integrity and freshness of the price stream. High-frequency options markets require sub-second updates to prevent toxic flow and arbitrage against the liquidity pool. Consequently, the Data Feed Cost scales non-linearly with the required precision and the volatility of the underlying asset: a tighter update threshold and larger price swings both trigger more frequent paid updates.

Market participants must account for these expenses within their margin engines to ensure that the cost of updating the price does not exceed the value of the trade being protected.
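A minimal sketch of that check follows. It assumes a hypothetical rule: a price update is only worth paying for when its cost stays below a fixed fraction of the notional it protects. The function name and the 0.1% default are illustrative and are not taken from any specific margin engine.

```python
def update_is_economical(position_notional: float, update_cost: float,
                         max_cost_fraction: float = 0.001) -> bool:
    """Return True when a fresh price costs at most `max_cost_fraction` of the notional.

    Hypothetical rule for illustration; both amounts must be in the same unit (e.g. USD).
    """
    if position_notional <= 0:
        return False  # nothing to protect, so any paid update is wasted
    return update_cost <= max_cost_fraction * position_notional


print(update_is_economical(50_000.0, 2.0))  # True: 2 <= 50
print(update_is_economical(500.0, 2.0))     # False: 2 > 0.5
```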

The Taxonomy of Information Friction

The total expenditure is categorized into three primary layers:

- On-chain transaction fees paid to miners or validators for state transitions.

- Operational overhead for node operators who manage the infrastructure and API connections.

- The opportunity cost of latency, where stale data leads to adverse selection for liquidity providers.

Protocols have historically socialized these expenses across all users, but modern architectures are shifting toward a user-pays model. In this environment, the Data Feed Cost becomes a variable expense that traders must optimize, similar to slippage or exchange fees in traditional finance. The efficiency of a derivative platform is often judged by its ability to minimize this cost without compromising the security or accuracy of the settlement price.

Origin

Data Feed Cost emerged as a distinct concern during the transition from simple token swaps to complex financial instruments on Ethereum.

Early decentralized exchanges relied on internal liquidity pools for price discovery, but this proved insufficient for options, which require external benchmarks such as the spot price and volatility inputs to the Black-Scholes model. As developers attempted to build robust margin systems, they realized that fetching a price from an external API and committing it to a blockchain was an expensive operation.

The origin of data feed expenditures lies in the structural isolation of blockchain environments and the resulting need for incentivized external actors.

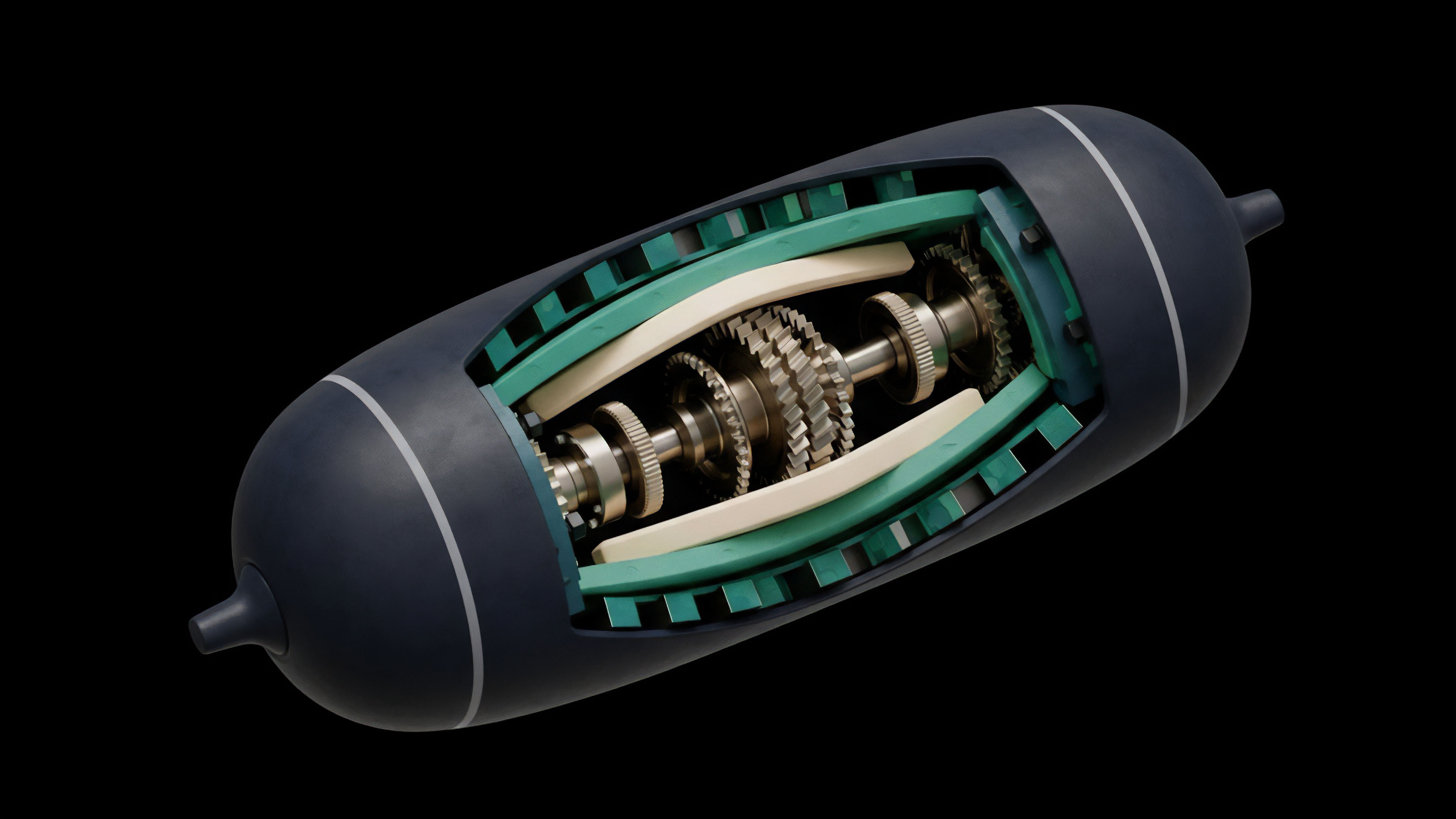

Early implementations utilized simple “push” oracles where a centralized entity periodically sent price updates. This model was fragile and expensive, as the entity had to pay gas fees regardless of whether a trade occurred. The rise of Decentralized Oracle Networks (DONs) distributed this responsibility but increased the Data Feed Cost due to the need for consensus among multiple nodes.

Each node requires a portion of the fee to justify the hardware and security risks involved in providing a signed price packet.

Evolution of the Fee Structure

| Era | Primary Mechanism | Cost Driver |
|---|---|---|
| First Generation | Centralized Push APIs | Single-entity gas subsidies |
| Second Generation | Decentralized Oracle Networks (Chainlink) | Node operator consensus and aggregation |
| Third Generation | On-demand Pull Oracles (Pyth) | User-triggered transaction inclusion |

As the DeFi sector matured, it became apparent that “free” data was a security risk. Low-cost feeds often relied on low-quality data or infrequent updates, leading to massive exploits during high-volatility events. The industry shifted toward a model where the Data Feed Cost is viewed as a security feature.

Paying for high-quality, low-latency data protects the protocol from price manipulation and ensures that liquidations occur at fair market values.

Theory

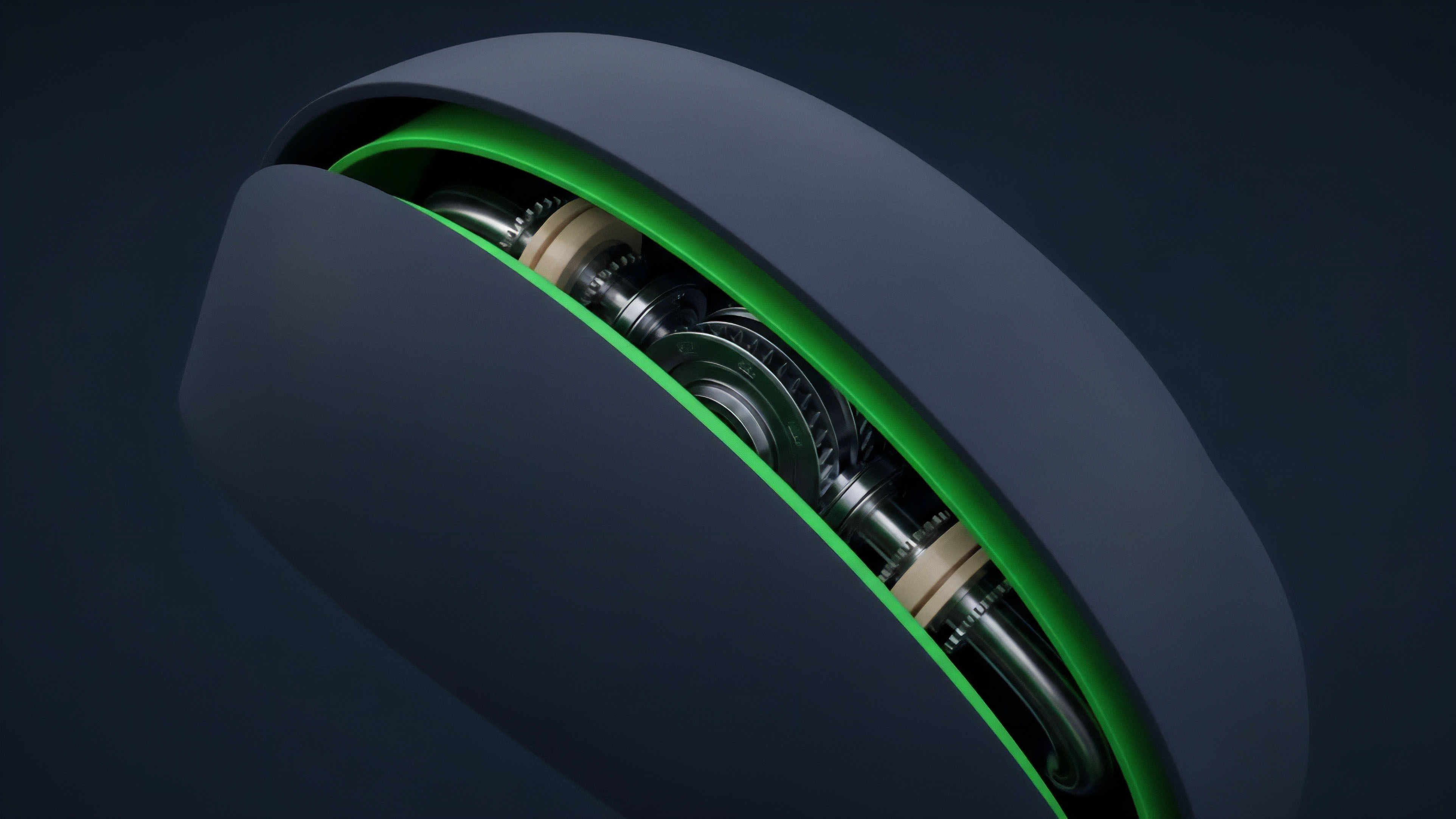

The mathematical modeling of Data Feed Cost involves a trade-off between the deviation threshold (δ) and the heartbeat interval (H). A push oracle updates the price if the market moves by more than δ percent or if H seconds have passed since the last update. The total cost C over a period T is the number of updates N triggered during T multiplied by the cost of each update G, that is, the gas each update consumes times the prevailing gas price: C = N × G.
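The deviation-or-heartbeat rule and the cost product can be sketched as follows. This is a minimal simulation over a hypothetical price series. δ is treated as a fraction (0.005 means 0.5%), and the 100,000 gas per update and 20 gwei gas price are placeholder assumptions, not figures from any particular oracle.

```python
from typing import Sequence


def count_push_updates(prices: Sequence[float], times: Sequence[float],
                       deviation: float, heartbeat_s: float) -> int:
    """Count paid updates under a deviation-or-heartbeat push rule.

    deviation: fractional threshold delta (0.005 means 0.5 percent).
    heartbeat_s: maximum seconds H between on-chain updates.
    The first observation is treated as the value already on-chain, so it is not counted.
    Prices are assumed to be positive.
    """
    last_price, last_time = prices[0], times[0]
    updates = 0
    for price, t in zip(prices[1:], times[1:]):
        moved = abs(price - last_price) / last_price > deviation
        stale = t - last_time >= heartbeat_s
        if moved or stale:
            updates += 1
            last_price, last_time = price, t
    return updates


def push_feed_cost(n_updates: int, gas_per_update: int, gas_price_gwei: float) -> float:
    """C = N x G, where G = gas per update x gas price; result in ETH."""
    return n_updates * gas_per_update * gas_price_gwei * 1e-9


prices = [100.0, 100.2, 101.0, 101.1, 101.1]
times = [0, 60, 120, 180, 3_780]
n = count_push_updates(prices, times, deviation=0.005, heartbeat_s=3_600)
print(n)                                 # 2: one deviation update, one heartbeat update
print(push_feed_cost(n, 100_000, 20.0))  # roughly 0.004 ETH
```

Tightening `deviation` or shortening `heartbeat_s` can only add updates, which is the trade-off the paragraph describes.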

Financial stability in derivatives is a function of the equilibrium between the cost of data ingestion and the potential loss from price staleness.

In a volatile market, the number of updates increases, causing the Data Feed Cost to spike exactly when the protocol is under the most stress. This creates a systemic risk: the gas needed for the required updates might exceed what a block can hold, or their cost might exceed the available liquidity in the protocol’s treasury. Quantifying this risk requires analyzing the historical volatility of the asset and its correlation with gas prices.

If gas prices and asset volatility are positively correlated, the protocol faces a “double-hit” scenario where maintaining price accuracy becomes prohibitively expensive.
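The “double-hit” can be made concrete with a worked form. This is a simplified model, not a derivation from the text. It assumes the log-price is a driftless Brownian motion with volatility σ per unit time, ignores the heartbeat, and treats δ as a fraction. Under those assumptions, the expected time for the price to leave the band ±δ around the last published value, and hence the approximate number of deviation-triggered updates over a period T, is:

$$\mathbb{E}[\tau_\delta] = \frac{\delta^2}{\sigma^2}, \qquad \mathbb{E}[N] \approx \frac{T}{\mathbb{E}[\tau_\delta]} = \frac{\sigma^2 T}{\delta^2}$$

Halving δ therefore roughly quadruples the expected number of updates, and so does doubling σ. If the cost of each update G is itself random, the expected total cost is:

$$\mathbb{E}[C] = \mathbb{E}[N\,G] = \mathbb{E}[N]\,\mathbb{E}[G] + \operatorname{Cov}(N, G)$$

A positive covariance between update count and per-update cost therefore adds to the bill. The total exceeds what average volatility and average gas prices alone would predict.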

Theoretical Cost Determinants

The structural components of the expenditure are defined by:

- Deviation Sensitivity: The percentage change in price that triggers a mandatory update to prevent arbitrage.

- Heartbeat Frequency: The maximum time allowed between updates to ensure the feed remains “live” during stagnant periods.

- Quorum Size: The number of independent signatures required to validate a single price point; a larger quorum increases both security and gas usage.
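The quorum trade-off can be sketched with a hypothetical linear gas model: a fixed overhead per update plus one signature verification per quorum member. Both constants are illustrative placeholders rather than measured values for any network.

```python
def update_gas(quorum: int, base_gas: int = 60_000, gas_per_signature: int = 6_000) -> int:
    """Hypothetical linear gas model for one price update.

    base_gas covers storage writes and call overhead; each quorum member adds one
    signature verification. Both constants are illustrative placeholders.
    """
    if quorum < 1:
        raise ValueError("quorum must be at least 1")
    return base_gas + quorum * gas_per_signature


for q in (1, 4, 16):
    print(q, update_gas(q))
# 1 66000
# 4 84000
# 16 156000
```

Multiplying this per-update gas by the number of updates that the deviation and heartbeat settings trigger gives the total gas term in C = N × G.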

Oracle Latency and the Greeks

For options traders, the Data Feed Cost indirectly affects the “Theta” and “Vega” of their positions. If the data feed is slow or expensive, the protocol may quote a wider bid-ask spread to compensate for uncertainty about the current reference price. This “latency spread” is a hidden component of the Data Feed Cost that reduces the capital efficiency of the market.
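One way to picture the latency spread is a hypothetical quoting rule. The rule widens a base half-spread by z standard deviations of the price move that could have happened since the last update. It assumes Brownian log-prices and an annualized volatility input, and the names and default z = 2 are illustrative; this is a heuristic, not any platform's actual pricing logic.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def latency_half_spread(base_half_spread: float, annual_vol: float,
                        staleness_s: float, z: float = 2.0) -> float:
    """Hypothetical heuristic: widen the quote by z standard deviations of the price
    move that could have happened since the last oracle update, assuming log-prices
    follow Brownian motion with annualized volatility `annual_vol`.
    All spreads are fractions of the reference price."""
    dt_years = staleness_s / SECONDS_PER_YEAR
    return base_half_spread + z * annual_vol * math.sqrt(dt_years)


for staleness in (0, 1, 60):
    print(staleness, round(latency_half_spread(0.0005, 0.80, staleness), 6))
```

Because the widening grows with the square root of staleness, a cheaper but slower feed buys its savings with a permanently wider quote.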

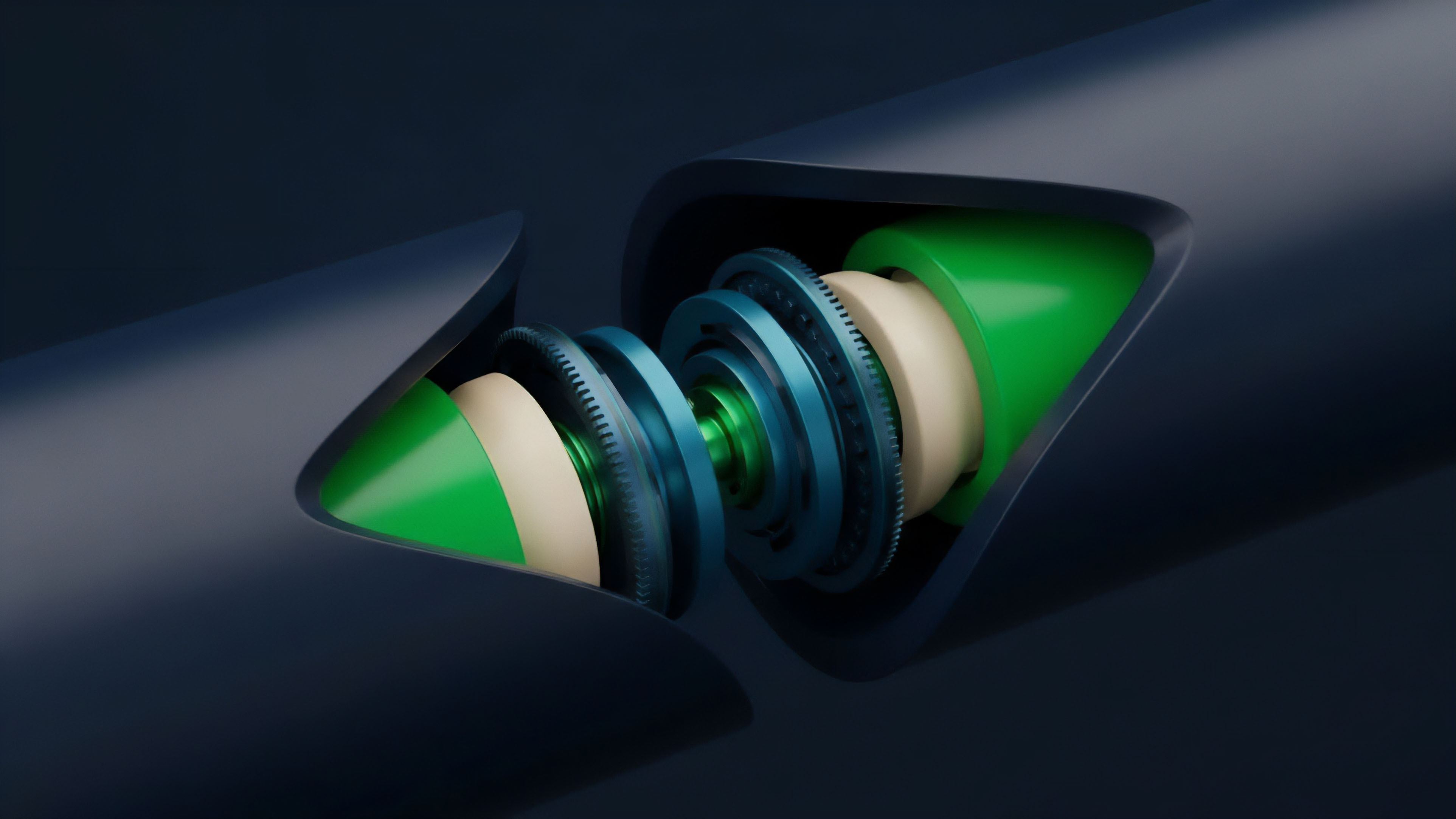

High-performance derivative systems aim to reduce this spread by utilizing off-chain aggregation with on-chain verification, effectively decoupling the cost of data generation from the cost of data settlement.
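The split between off-chain aggregation and on-chain verification can be sketched as follows. In this sketch, nodes agree on a median off-chain, sign only that single value, and a simulated contract counts valid signatures against a quorum. HMAC with per-node secret keys is a stand-in chosen to keep the sketch stdlib-only; real oracle networks use public-key signatures. All names and keys are hypothetical.

```python
import hashlib
import hmac
import statistics

# Stand-in for node signatures: HMAC with a per-node secret key. Real oracle
# networks use public-key signatures; HMAC keeps this sketch stdlib-only.


def _payload(round_id: int, price: float) -> bytes:
    return f"{round_id}:{price:.8f}".encode()


def node_sign(key: bytes, round_id: int, price: float) -> bytes:
    """Each node signs the agreed aggregate, not its own raw observation."""
    return hmac.new(key, _payload(round_id, price), hashlib.sha256).digest()


def aggregate_off_chain(observations: dict[str, float]) -> float:
    """Off-chain step: nodes agree on the median of their observations (no gas spent)."""
    return statistics.median(observations.values())


def verify_on_chain(round_id: int, price: float, signatures: dict[str, bytes],
                    node_keys: dict[str, bytes], quorum: int) -> bool:
    """Simulated on-chain step: accept one price if at least `quorum` signatures are valid."""
    payload = _payload(round_id, price)
    valid = 0
    for node, sig in signatures.items():
        key = node_keys.get(node)
        if key is not None:
            expected = hmac.new(key, payload, hashlib.sha256).digest()
            if hmac.compare_digest(sig, expected):
                valid += 1
    return valid >= quorum


node_keys = {"a": b"key-a", "b": b"key-b", "c": b"key-c"}
price = aggregate_off_chain({"a": 100.1, "b": 100.3, "c": 99.9})
sigs = {node: node_sign(key, 7, price) for node, key in node_keys.items()}
print(price)                                              # 100.1
print(verify_on_chain(7, price, sigs, node_keys, 2))      # True
print(verify_on_chain(7, price + 1, sigs, node_keys, 2))  # False
```

Only one price and its signatures reach the chain, so the on-chain cost depends on the quorum size rather than on how much data the nodes aggregated.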

Approach

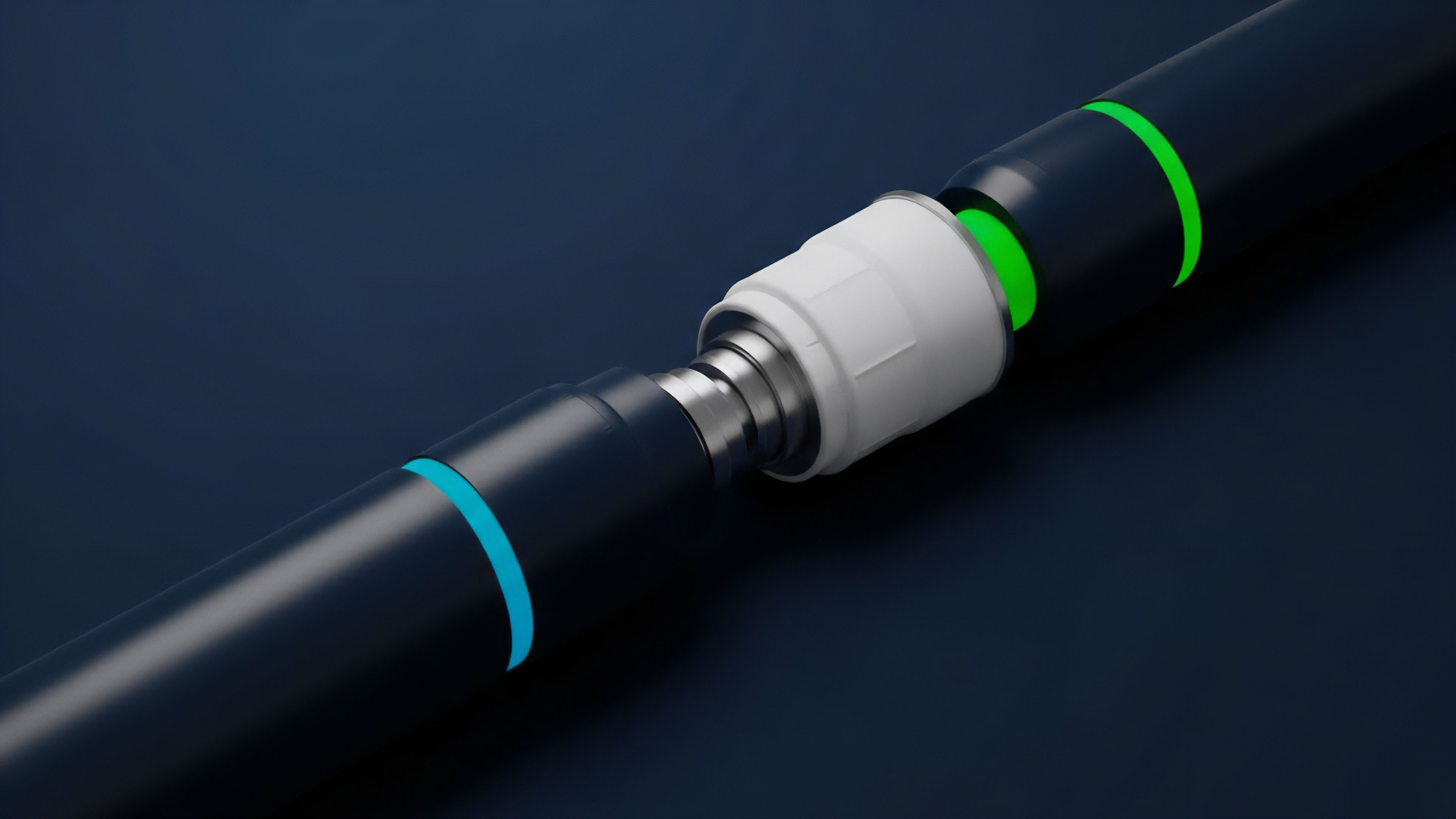

Current methodologies for managing Data Feed Cost focus on “Pull” architectures and Layer 2 scaling solutions. Instead of the oracle pushing data to the blockchain, the protocol or the user “pulls” the data when needed. This shift ensures that the Data Feed Cost is only incurred when a transaction actually requires a price update, such as a trade execution or a liquidation event.

This “just-in-time” data delivery significantly reduces wasted gas and allows for much higher update frequencies.

Comparative Analysis of Delivery Models

| Feature | Push Model | Pull Model |
|---|---|---|
| Cost Burden | Protocol/Oracle Network | End User/Liquidator |
| Efficiency | Low (pays for updates even during low activity) | High (updates only on demand) |
| Latency | Fixed by Heartbeat | Variable by Transaction Speed |
| Security | Continuous On-chain State | Cryptographic Proof Validation |
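The cost-burden and efficiency rows can be illustrated with simple arithmetic. The figures are hypothetical: one quiet day, an hourly heartbeat, 40 deviation updates, and 10 price-consuming transactions. The sketch also assumes the same cost per update in both models, although in practice a pull update also pays gas to verify the signed payload.

```python
def push_cost(period_s: float, heartbeat_s: float, deviation_updates: int,
              cost_per_update: float) -> float:
    """Push model: the feed pays for every heartbeat and every deviation update,
    whether or not anyone trades. Treating the two kinds of update as separate
    events gives a rough upper bound, since a deviation update also resets the heartbeat."""
    heartbeat_updates = int(period_s // heartbeat_s)
    return (heartbeat_updates + deviation_updates) * cost_per_update


def pull_cost(price_consuming_txs: int, cost_per_update: float) -> float:
    """Pull model: one update is bundled into each transaction that needs a price."""
    return price_consuming_txs * cost_per_update


# One quiet day: hourly heartbeat, 40 deviation updates, 10 trades/liquidations.
print(push_cost(86_400, 3_600, 40, 1.5))  # 96.0
print(pull_cost(10, 1.5))                 # 15.0
```

The pull model stays cheaper as long as the number of price-consuming transactions is below the number of updates the push feed would have published anyway.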

Modern derivative platforms like GMX or Synthetix utilize these pull models to offer “zero-slippage” trades. By requiring the user to include a signed price update from a provider like Pyth or Chainlink Data Streams within their transaction, the Data Feed Cost is internalized by the participant who benefits from the trade. This aligns incentives, as the trader is willing to pay for the data to ensure their order is filled at the most accurate price.
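The user-pays flow can be sketched as a simulated contract entry point. The trader passes a signed price along with the order. The contract checks the signature and freshness before filling, and records that the trader paid the data fee. HMAC again stands in for the provider's real signature scheme, and `provider_sign`, `execute_trade`, and the 5-second freshness bound are hypothetical names and values, not the Pyth or Chainlink Data Streams APIs.

```python
import hashlib
import hmac
from dataclasses import dataclass

MAX_AGE_S = 5  # hypothetical freshness bound, in seconds


@dataclass(frozen=True)
class SignedPrice:
    price: float
    publish_time: int  # unix seconds
    signature: bytes


def provider_sign(key: bytes, price: float, publish_time: int) -> bytes:
    """Off-chain provider signs (price, time). HMAC stands in for a real signature."""
    return hmac.new(key, f"{publish_time}:{price:.8f}".encode(), hashlib.sha256).digest()


def execute_trade(update: SignedPrice, size: float, now: int,
                  oracle_key: bytes, update_fee: float) -> dict:
    """Simulated contract entry point: the trader supplies the price and pays its fee."""
    expected = provider_sign(oracle_key, update.price, update.publish_time)
    if not hmac.compare_digest(update.signature, expected):
        raise ValueError("invalid price signature")
    if update.publish_time > now or now - update.publish_time > MAX_AGE_S:
        raise ValueError("price update is not fresh")
    return {
        "fill_price": update.price,
        "notional": size * update.price,
        "data_fee_paid_by_trader": update_fee,
    }


key = b"provider-key"
update = SignedPrice(2500.0, 1_000, provider_sign(key, 2500.0, 1_000))
print(execute_trade(update, size=2.0, now=1_003, oracle_key=key, update_fee=0.01))
```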

Optimistic and Zero-Knowledge Verification

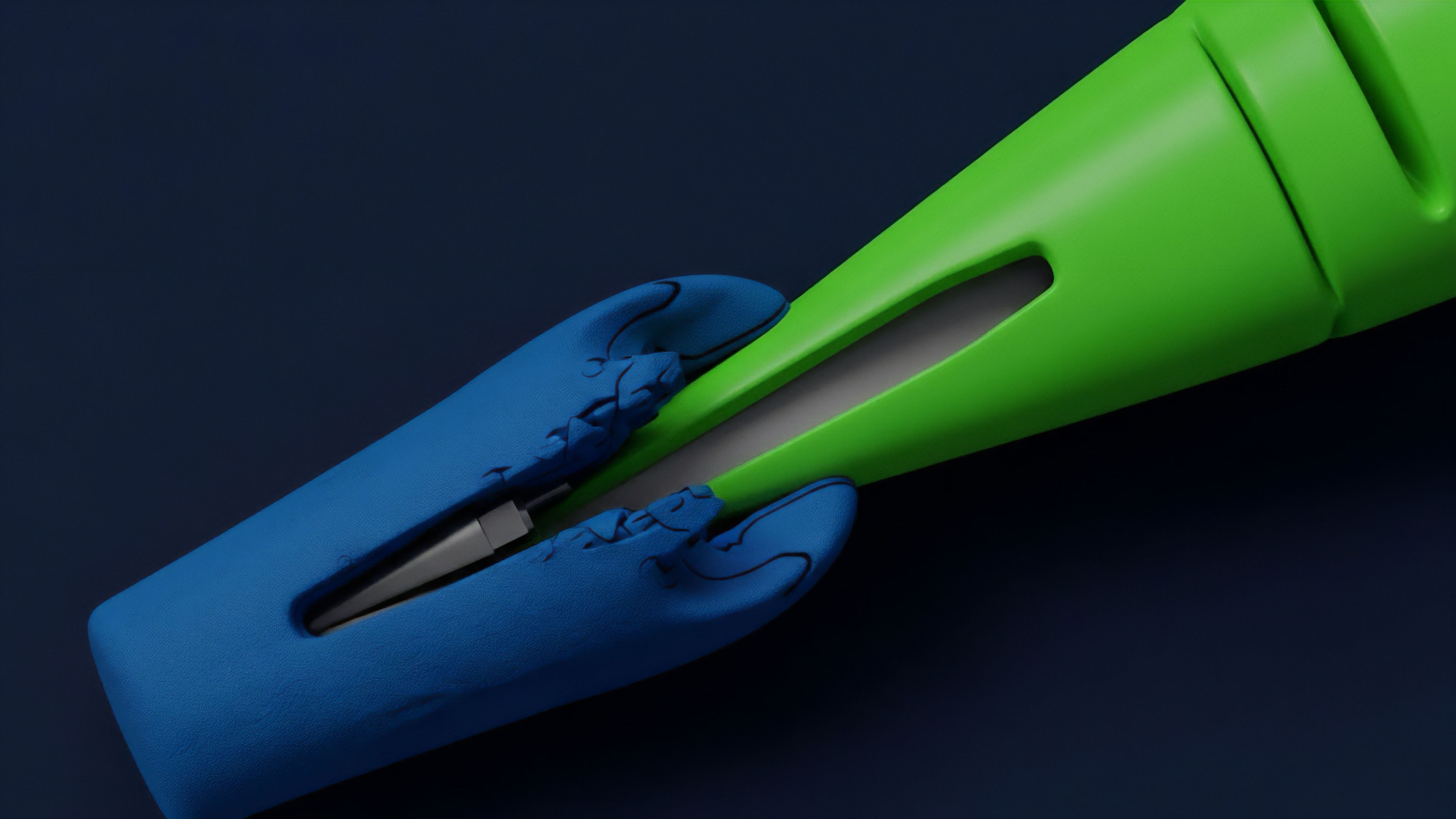

Another sophisticated method involves optimistic oracles. These systems assume the data is correct unless challenged, which drastically lowers the Data Feed Cost during normal operation. If a dispute occurs, a more expensive verification process is triggered.
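The optimistic pattern can be reduced to a minimal sketch. A proposal costs one write, becomes final after a liveness window, and a dispute inside the window blocks settlement, which is where the expensive verification would begin. The class name and the two-hour window are hypothetical. Bonds and the actual dispute-resolution process are deliberately omitted.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Proposal:
    price: float
    proposed_at: int
    disputed: bool = False


class OptimisticPriceOracle:
    """Hypothetical sketch: a proposed price is final after `liveness_s` seconds
    unless someone disputes it first. Bonds and the dispute-resolution process
    are omitted; a dispute here simply blocks settlement."""

    def __init__(self, liveness_s: int) -> None:
        self.liveness_s = liveness_s
        self.proposal: Optional[Proposal] = None

    def propose(self, price: float, now: int) -> None:
        self.proposal = Proposal(price, now)  # cheap path: one write, no quorum

    def dispute(self, now: int) -> None:
        p = self._current()
        if now >= p.proposed_at + self.liveness_s:
            raise ValueError("liveness window has closed")
        p.disputed = True  # would escalate to the expensive verification path

    def settled_price(self, now: int) -> Optional[float]:
        p = self._current()
        if p.disputed or now < p.proposed_at + self.liveness_s:
            return None
        return p.price

    def _current(self) -> Proposal:
        if self.proposal is None:
            raise ValueError("no price has been proposed")
        return self.proposal


oracle = OptimisticPriceOracle(liveness_s=7_200)
oracle.propose(1_850.0, now=0)
print(oracle.settled_price(now=3_600))  # None: still inside the window
print(oracle.settled_price(now=7_200))  # 1850.0
```

The design trades latency for cost: the cheap path is only available to consumers who can wait out the liveness window.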

Alternatively, zero-knowledge (ZK) proofs are being used to compress large amounts of off-chain data into a single, cheap-to-verify on-chain proof. This allows for the ingestion of complex data sets, such as entire volatility surfaces, without the massive Data Feed Cost associated with traditional methods.
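A full ZK system is beyond a short example, so the sketch below swaps in a simpler technique: a Merkle commitment. It captures only the compression half of the idea. A whole (hypothetical) volatility surface is committed as a single 32-byte root, and any one point can be checked against that root with about log2(n) hashes. Unlike a ZK proof, it does not prove that any computation over the data was performed correctly. The surface values and expiry date are made up for illustration.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf(expiry: str, strike: int, implied_vol: float) -> bytes:
    """Hash one point of a (hypothetical) volatility surface."""
    return _h(f"{expiry}|{strike}|{implied_vol:.6f}".encode())


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """All tree levels, leaves first; an odd last node is paired with itself."""
    levels = [leaves]
    while len(levels[-1]) > 1:
        level = levels[-1]
        if len(level) % 2:
            level = level + [level[-1]]
        levels.append([_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)])
    return levels


def prove(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root, each tagged with 'current node is the left child'."""
    path = []
    for level in levels[:-1]:
        if len(level) % 2:
            level = level + [level[-1]]
        path.append((level[index ^ 1], index % 2 == 0))
        index //= 2
    return path


def verify(root: bytes, leaf_hash: bytes, path: list[tuple[bytes, bool]]) -> bool:
    """What a contract would run: about log2(n) hashes, independent of surface size."""
    node = leaf_hash
    for sibling, is_left in path:
        node = _h(node + sibling) if is_left else _h(sibling + node)
    return node == root


surface = [("2025-06-27", k, iv) for k, iv in
           [(2000, 0.72), (2500, 0.65), (3000, 0.61), (3500, 0.63), (4000, 0.68)]]
leaves = [leaf(*point) for point in surface]
levels = build_levels(leaves)
root = levels[-1][0]  # the only value committed on-chain
print(verify(root, leaves[3], prove(levels, 3)))                       # True
print(verify(root, leaf("2025-06-27", 3500, 0.90), prove(levels, 3)))  # False
```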

Evolution

The trajectory of Data Feed Cost has moved from being a subsidized protocol expense to a primary factor in market microstructure. Initially, Ethereum L1 gas prices made high-frequency oracles nearly impossible for all but the most liquid assets.

The migration to optimistic and ZK-rollups changed the calculus, as the cost of committing data to these chains is an order of magnitude lower. This has enabled the creation of perpetual futures (perps) and options on “long-tail” assets that previously could not support the Data Feed Cost.

Systemic evolution has transformed the data feed from a static protocol overhead into a dynamic, user-driven market commodity.

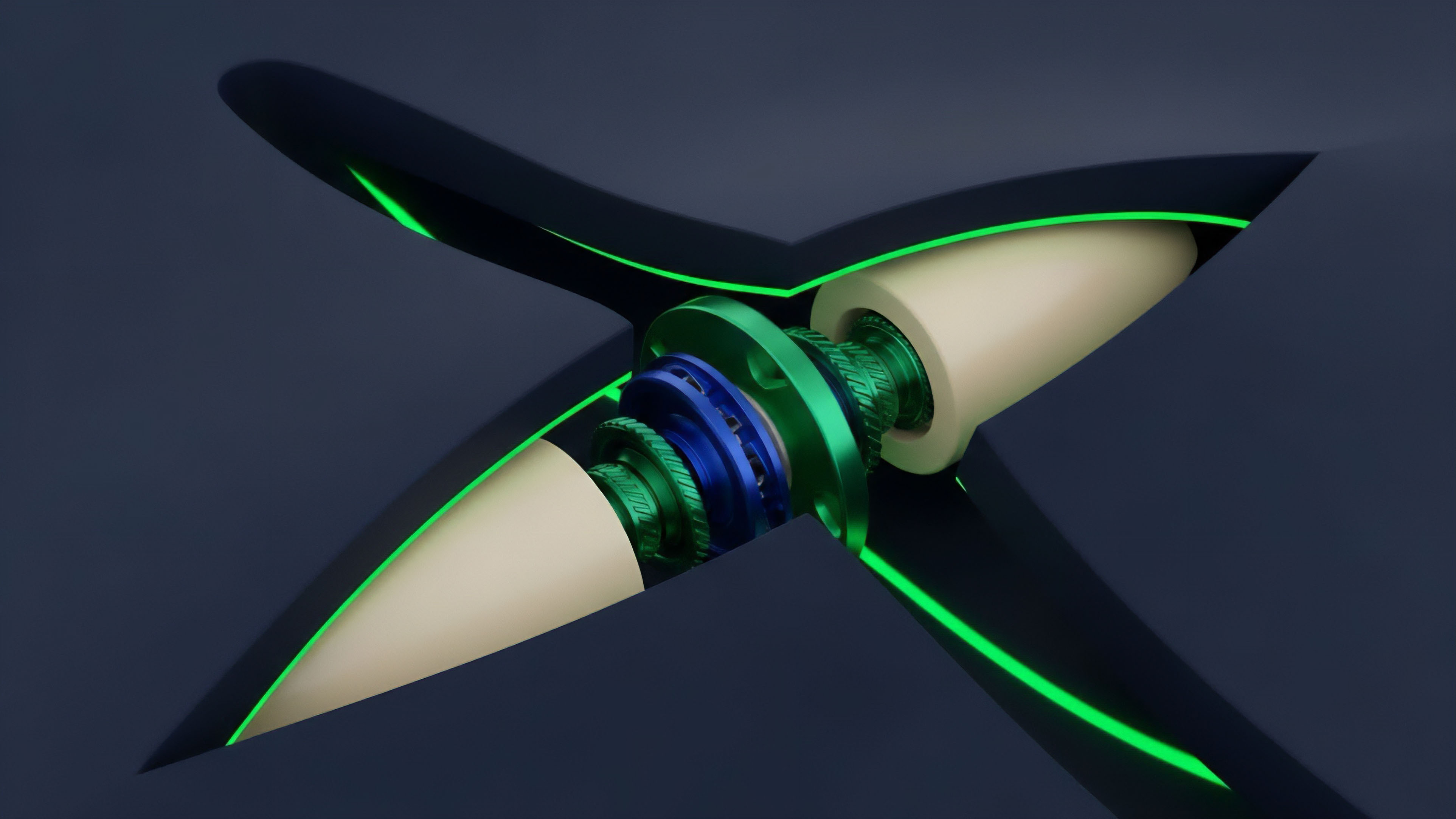

We have also seen the emergence of “Oracle Extractable Value” (OEV). This concept acknowledges that whoever updates the oracle often has a first-mover advantage for liquidations. Protocols are now beginning to capture this value by auctioning the right to update the feed.

The revenue from these auctions is used to offset the Data Feed Cost, effectively making the data feed a self-sustaining or even profitable component of the protocol architecture.
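The offset can be sketched with a hypothetical sealed-bid, first-price auction for the right to push the next update. Real OEV designs differ in auction format and in how revenue is split, so the function names, bidders, and amounts here are illustrative assumptions only.

```python
def run_oev_auction(bids: dict[str, float]) -> tuple[str, float]:
    """Sealed-bid, first-price auction for the right to push the next update.
    Ties go to the bidder listed first."""
    if not bids:
        raise ValueError("no bids")
    winner = max(bids, key=lambda bidder: bids[bidder])
    return winner, bids[winner]


def net_feed_cost(feed_cost: float, auction_revenue: float) -> float:
    """Positive: the protocol still pays. Negative: the feed is profitable."""
    return feed_cost - auction_revenue


winner, revenue = run_oev_auction({"searcher_a": 1.2, "searcher_b": 3.5, "searcher_c": 2.0})
print(winner, revenue)              # searcher_b 3.5
print(net_feed_cost(2.0, revenue))  # -1.5
```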

Milestones in Cost Optimization

- The Gas Token Era: Early attempts to use Chi or GST2 tokens to hedge against rising oracle update costs.

- The L2 Revolution: Arbitrum and Optimism providing the throughput necessary for 1-second price heartbeats.

- The OEV Paradigm: The transition toward protocols capturing the arbitrage value inherent in price updates to subsidize their own infrastructure.

This shift represents a maturation of the space. Developers no longer view the Data Feed Cost as a fixed overhead to be tolerated but as a variable to be engineered. By integrating the oracle directly into the liquidation and settlement logic, protocols are achieving a level of efficiency that rivals centralized counterparts while maintaining the transparency of on-chain execution.

Horizon

The future of Data Feed Cost lies in the total vertical integration of the oracle and the execution layer.

We are moving toward “App-chains” where the validators are also the data providers. In this model, the Data Feed Cost is eliminated at the application level and internalized into the consensus mechanism of the chain itself. This allows for sub-millisecond price updates with zero marginal gas cost for the end user, enabling high-frequency options trading that was previously restricted to Wall Street servers.

Future Cost Architectures

| Innovation | Impact on Data Feed Cost | Primary Benefit |
|---|---|---|
| Shared Sequencers | Atomic bundling of price and trade | Elimination of frontrunning risk |
| ZK-Oracle Proofs | Massive data compression | Support for complex multi-asset indices |
| Validator-Oracle Fusion | Zero gas price updates | CEX-like performance on-chain |

Furthermore, the rise of AI-driven oracles will introduce a new dimension to the Data Feed Cost. These systems will use machine learning to predict when an update is most valuable, optimizing the heartbeat to save money during periods of low volatility while increasing frequency during crashes. The “cost” will shift from being purely about gas to being about the computational power required to run these predictive models.

The ultimate destination is a market where Data Feed Cost is invisible. Through the combination of OEV capture, ZK-compression, and specialized blockchain architectures, the friction of importing truth will be socialized through the value the data creates. This will pave the way for a truly global, permissionless derivatives layer where the cost of information is no longer a barrier to entry, but a foundation for systemic resilience.
