Essence

Rollup Technology Analysis centers on the architectural evaluation of layer-two scaling solutions that shift transaction execution off the primary blockchain while maintaining verifiable security through state roots posted to the base layer. The functional significance lies in the capacity to compress high-frequency activity into compact batches backed by succinct state commitments and proofs, thereby expanding the throughput of decentralized financial environments.

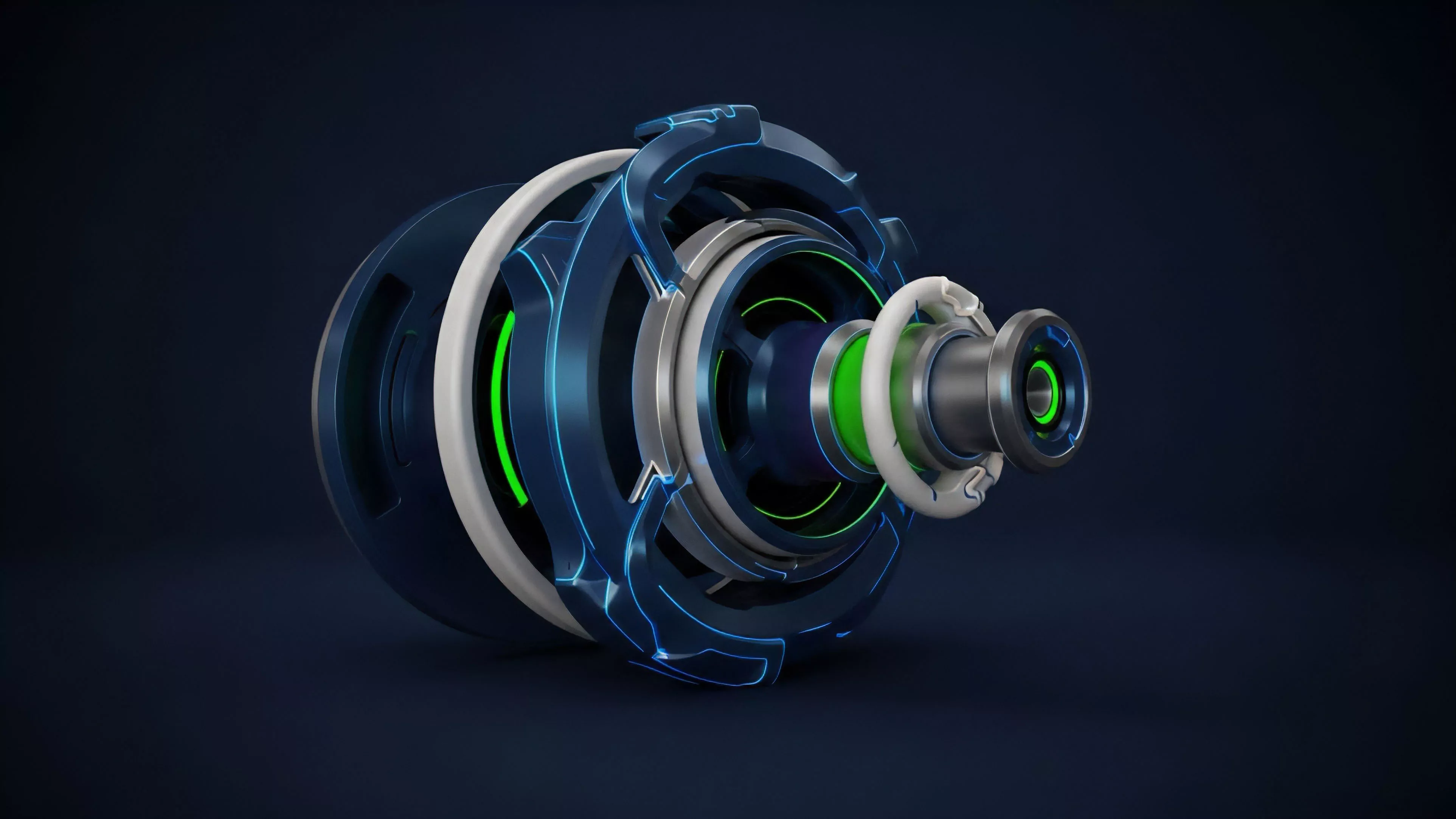

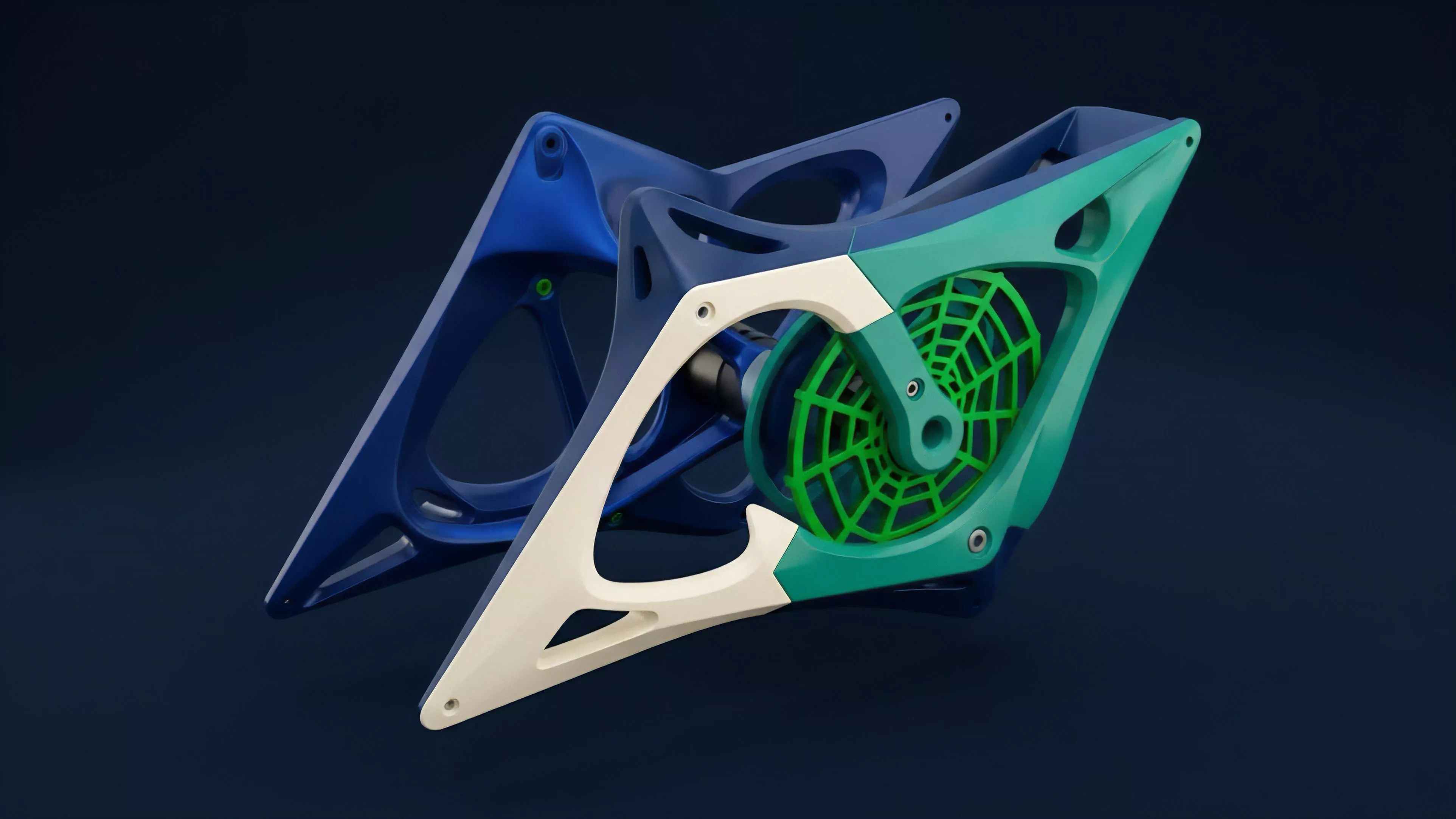

Rollup technology functions by aggregating transaction data off-chain and submitting condensed proofs to the primary settlement layer to ensure network integrity.

This domain demands an understanding of how data availability and execution proofs dictate the economic viability of decentralized venues. By decoupling execution from consensus, these systems change the traditional cost structure of decentralized markets, allowing for higher leverage, tighter spreads, and more frequent rebalancing without incurring prohibitive gas fees. The structural integrity of these systems relies on the soundness of the underlying proof mechanism, whether optimistic or zero-knowledge based.
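To make the idea of a compact commitment concrete, the sketch below folds a batch of transactions into a single 32-byte root using a binary Merkle tree. It is an illustration only: the transaction encoding, the use of SHA-256, and the helper names `h` and `merkle_root` are assumptions made for this example, and production rollups use their own tree structures and hash functions.

```python
import hashlib


def h(data: bytes) -> bytes:
    """Hash helper (SHA-256 is an assumption chosen for this sketch)."""
    return hashlib.sha256(data).digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    """Fold a batch of items into one 32-byte commitment."""
    if not leaves:
        return h(b"")
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        # Duplicate the last node when the level has an odd number of entries.
        if len(level) % 2 == 1:
            level.append(level[-1])
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


# Hypothetical batch of off-chain transactions (the encoding is illustrative).
batch = [b"alice->bob:5", b"bob->carol:2", b"carol->dave:1"]
root = merkle_root(batch)
print(f"{len(batch)} transactions -> {len(root)}-byte commitment: {root.hex()}")
```

However many transactions the batch holds, the value posted to the base layer stays the same size, and changing any single transaction changes the root.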

Origin

The genesis of Rollup Technology Analysis traces back to early research on sidechains and state channels, which struggled with the trade-offs between trustlessness and scalability.

The shift toward rollups emerged as a direct response to the limitations of monolithic blockchain architectures that forced every node to process every transaction.

- Optimistic Rollups: Introduced the assumption of valid state transitions by default, relying on fraud proofs to challenge and revert invalid actions.

- Zero-Knowledge Rollups: Leveraged complex cryptographic primitives to generate succinct non-interactive arguments of knowledge, providing mathematical certainty of state validity.

- Data Availability Layers: Developed to decouple the storage of transaction data from the execution environment, addressing the fundamental bottleneck of bandwidth.

This evolution reflects a transition from simplistic throughput enhancements to sophisticated cryptographic engineering. Financial architects began treating these layers as distinct venues for liquidity, where the security model of the rollup directly impacts the risk premium of the assets residing within the environment.

Theory

The theoretical framework governing Rollup Technology Analysis rests on the interaction between state compression, security proofs, and the latency of settlement. In a zero-knowledge system, the protocol relies on the zk-SNARK or zk-STARK generation process to guarantee that the state transition function was executed correctly.

This introduces a computational overhead that must be balanced against the speed of finality.

Security in rollup architectures depends on the robustness of the proof generation mechanism and the availability of data for independent verification.

Adversarial participants constantly test the limits of these systems. In an optimistic model, the challenge window acts as a latency buffer, where the economic cost of a potential dispute is balanced against the risk of censorship or delay.
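The role of the challenge window can be sketched as a small state machine. This is a minimal model under stated assumptions: the seven-day window, the `StateAssertion` type, and the string state roots are hypothetical choices for illustration, and a real fraud proof involves an on-chain dispute game rather than a simple root comparison.

```python
from dataclasses import dataclass

# Assumed value: seven days in seconds. Each deployment chooses its own window.
CHALLENGE_WINDOW_SECONDS = 7 * 24 * 60 * 60


@dataclass
class StateAssertion:
    claimed_root: str       # state root posted by the sequencer
    posted_at: int          # timestamp of the posting, in seconds
    reverted: bool = False  # set once a fraud proof succeeds


def challenge(assertion: StateAssertion, recomputed_root: str, now: int,
              window: int = CHALLENGE_WINDOW_SECONDS) -> bool:
    """Revert the assertion if a mismatch is shown before the window closes."""
    in_window = now - assertion.posted_at < window
    if in_window and not assertion.reverted and recomputed_root != assertion.claimed_root:
        assertion.reverted = True
        return True
    return False


def status(assertion: StateAssertion, now: int,
           window: int = CHALLENGE_WINDOW_SECONDS) -> str:
    """Valid by default: an unchallenged assertion finalizes when the window ends."""
    if assertion.reverted:
        return "reverted"
    if now - assertion.posted_at >= window:
        return "finalized"
    return "pending"


honest = StateAssertion(claimed_root="0xabc", posted_at=0)
fraudulent = StateAssertion(claimed_root="0xbad", posted_at=0)
challenge(fraudulent, recomputed_root="0xabc", now=3600)

print(status(honest, now=3600))
print(status(honest, now=CHALLENGE_WINDOW_SECONDS))
print(status(fraudulent, now=CHALLENGE_WINDOW_SECONDS))
```

The sketch shows why settlement is delayed in the optimistic model: even an honest assertion stays pending until the window has elapsed.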

| Mechanism | Security Foundation | Finality Characteristic |
| --- | --- | --- |
| Optimistic | Fraud Proofs | Delayed Settlement |
| Zero-Knowledge | Validity Proofs | Immediate Settlement |

The mathematical rigor applied to these systems mimics traditional high-frequency trading infrastructure, where the objective is to minimize execution risk while maximizing capital efficiency. The interaction between the sequencer, the prover, and the base layer creates a unique market microstructure where latency is no longer a function of global consensus but of local computational throughput.

Approach

Current Rollup Technology Analysis prioritizes the evaluation of sequencer decentralization and data availability throughput. Market participants now assess the risk of rollup failure by examining proof generation time and the liquidity fragmentation inherent in multi-rollup environments.

- Sequencer Economics: The study of how transaction sequencing incentives impact MEV extraction and order flow priority.

- Proof Verification Costs: Evaluating the gas consumption of submitting proofs to the base layer as a proxy for operational sustainability.

- Bridge Security: Assessing the trust assumptions required for cross-chain liquidity movement and asset wrapping.
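The proof verification cost item above lends itself to a simple amortization calculation: a fixed verification cost is spread across the batch, while data costs scale with each transaction. The function name and all gas figures below are hypothetical placeholders, not measurements from any network.

```python
def amortized_gas_per_tx(proof_verification_gas: int,
                         data_gas_per_tx: int,
                         batch_size: int) -> float:
    """Per-transaction base-layer gas: fixed proof cost shared across the batch,
    plus the per-transaction data cost."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return proof_verification_gas / batch_size + data_gas_per_tx


# Hypothetical figures chosen only to show the shape of the curve.
PROOF_GAS = 500_000
DATA_GAS_PER_TX = 300

for n in (10, 100, 1000):
    print(n, amortized_gas_per_tx(PROOF_GAS, DATA_GAS_PER_TX, n))
```

As the batch grows, the fixed proof cost fades and the per-transaction data cost becomes the floor, which is one reason data availability throughput features so prominently in this kind of analysis.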

Sophisticated traders view these layers not merely as scaling tools, but as distinct financial venues with varying risk profiles. The approach involves quantifying the probability of a failed state transition or a sequencer-induced delay, treating these as exogenous variables in a broader portfolio risk management model.
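One way to treat these probabilities as exogenous inputs is a plain expected-cost term added to a venue's risk budget. The sketch below is deliberately simplistic: it assumes the two events are independent and additive, and every probability and loss figure is a hypothetical input that an analyst would have to estimate separately.

```python
def expected_venue_cost(p_invalid_transition: float, loss_if_invalid: float,
                        p_sequencer_delay: float, cost_if_delayed: float) -> float:
    """Expected cost of holding a position on a rollup venue over one period.

    Assumes the two risk events are independent and their costs add linearly.
    """
    for p in (p_invalid_transition, p_sequencer_delay):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    return p_invalid_transition * loss_if_invalid + p_sequencer_delay * cost_if_delayed


# Hypothetical inputs: a rare but severe failure and a more frequent, cheaper delay.
cost = expected_venue_cost(
    p_invalid_transition=0.001, loss_if_invalid=1_000_000.0,
    p_sequencer_delay=0.02, cost_if_delayed=5_000.0,
)
print(f"expected cost per period: {cost:.2f}")
```

Comparing this figure across venues gives a rough way to rank rollups by risk profile before feeding them into a broader portfolio model.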

Evolution

The trajectory of Rollup Technology Analysis has shifted from theoretical viability to practical deployment within production-grade financial applications. Initially, the focus remained on raw transaction-per-second metrics.

Today, the discourse centers on interoperability protocols and shared sequencing, which aim to reduce the systemic risk of liquidity silos.

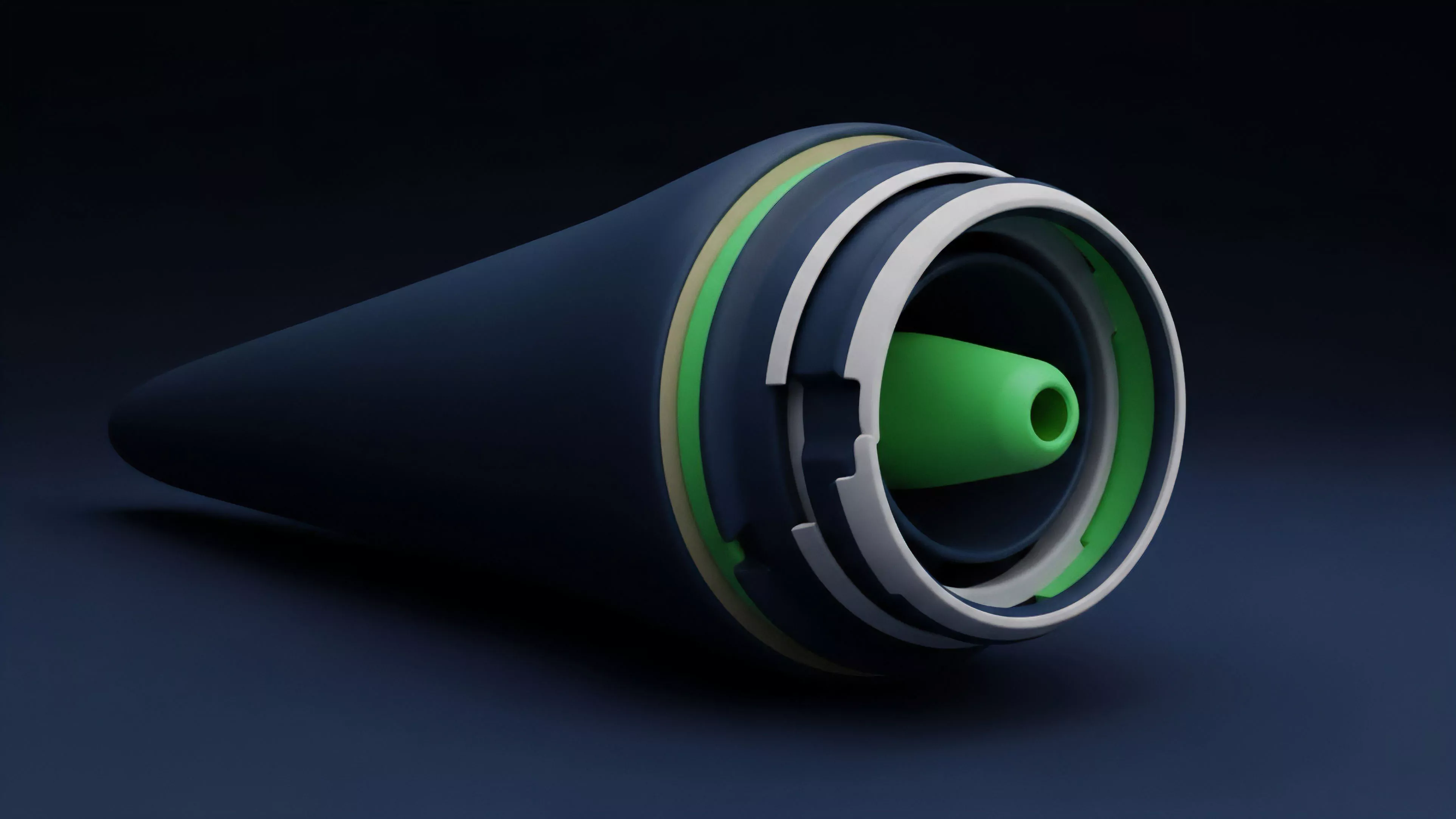

The transition toward shared sequencing architectures reduces cross-rollup latency and mitigates the risk of fragmented liquidity pools.

Technological advancements have moved toward modular stacks, where execution, settlement, consensus, and data availability are handled by specialized protocols. This architectural modularity allows for the creation of application-specific rollups, providing developers with granular control over the environment’s performance and security parameters.

| Era | Primary Focus | Key Risk |
| --- | --- | --- |
| Monolithic | Base Layer Throughput | Congestion |
| Modular | Execution Efficiency | Interoperability |
| Shared | Liquidity Unification | Systemic Contagion |

This evolution mirrors the history of traditional financial exchanges, which moved from fragmented, local venues to integrated, high-speed networks. The complexity of these systems introduces new failure modes, requiring a more rigorous approach to auditability and smart contract risk management.

Horizon

The future of Rollup Technology Analysis points toward the convergence of decentralized identity, privacy-preserving computation, and institutional-grade order matching engines. As these technologies mature, the barrier between centralized and decentralized venues will dissolve, with rollups serving as the primary infrastructure for global value transfer.

The next phase involves the development of recursive proofs, allowing for the aggregation of multiple rollup states into a single, compact proof. This advancement will fundamentally alter the economics of block space, potentially reducing settlement costs to near-zero. However, this progress introduces new systemic risks, as the concentration of proof generation power may create single points of failure.

The ultimate goal is a permissionless, high-throughput financial layer that retains the censorship resistance of the base blockchain while providing the performance required for global derivatives markets. What happens when the computational overhead of proof generation becomes the primary barrier to market entry, and how will the protocol design adapt to maintain decentralization?
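The recursive aggregation described in this section can be pictured as a tree: proofs are combined in groups until one remains. The sketch below only counts how many rounds of aggregation that takes. The function name and the `fan_in` parameter are assumptions for illustration, and no actual proving is performed.

```python
def aggregation_rounds(num_proofs: int, fan_in: int = 2) -> int:
    """Rounds of recursive aggregation needed to reduce num_proofs to one,
    when each aggregation step combines up to fan_in proofs."""
    if num_proofs < 1:
        raise ValueError("num_proofs must be at least 1")
    if fan_in < 2:
        raise ValueError("fan_in must be at least 2")
    rounds = 0
    remaining = num_proofs
    while remaining > 1:
        remaining = -(-remaining // fan_in)  # ceiling division
        rounds += 1
    return rounds


for n in (1, 8, 1000):
    print(n, "proofs ->", aggregation_rounds(n), "rounds at fan-in 2")
```

Because the number of rounds grows only logarithmically with the number of proofs, the base layer can verify a single proof on behalf of many rollups, though each round still adds prover work, which is where the concentration risk noted above arises.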