Essence

Data Storage Efficiency represents the optimization of state persistence within distributed ledger environments, specifically addressing the cost-to-performance ratio of maintaining historical transaction records and current account states. Within crypto derivatives, this concept dictates the viability of high-frequency settlement layers and the scalability of margin engine updates. The financial weight of this efficiency lies in reducing the gas-adjusted cost of maintaining collateralized positions, where excessive storage bloat directly erodes liquidity provider returns and complicates real-time risk assessment.

Data Storage Efficiency defines the ability of a protocol to minimize the computational and economic overhead of maintaining ledger state while preserving necessary transactional integrity.

The architectural challenge centers on balancing immediate data accessibility with long-term archival requirements. When derivatives protocols require sub-second state updates for liquidation monitoring, the underlying storage mechanism must provide low-latency read/write access. Failure to manage this efficiency results in increased latency for order execution, which, in adversarial market conditions, creates significant slippage and potential for predatory front-running by participants with superior infrastructure access.

Origin

The requirement for refined storage models emerged from the limitations of monolithic blockchain designs, where every full node stores the entire transaction history. Early protocols struggled with the steady, unbounded growth of state size, which made full nodes prohibitively expensive to run and lengthened synchronization times for new participants. This structural bottleneck necessitated a transition toward specialized data availability layers and state-pruning techniques, directly influencing the design of modern derivative exchanges.

- State Bloat: The phenomenon where the accumulation of historical data necessitates increasing hardware requirements for network participants, leading to centralization risks.

- Archival Nodes: Specialized infrastructure components tasked with maintaining the complete ledger history, incurring higher economic costs compared to light clients.

- Transaction Replay: A foundational requirement in derivative protocols, demanding efficient storage retrieval to verify historical margins and liquidation events during disputes.

The evolution from simple account-based models to complex, contract-heavy environments shifted the focus from mere transactional throughput to the efficiency of state representation. The industry recognized that the cost of storing a derivative contract’s state (often involving complex Greeks calculations and multi-asset collateral tracking) was a primary factor in the overall fee structure for market participants.

Theory

The quantitative framework for Data Storage Efficiency relies on optimizing the data structure’s memory footprint relative to the frequency of access. In derivative markets, this involves mapping state variables (such as current mark price, open interest, and individual user collateral ratios) into compact Merkle-Patricia tries or similar cryptographic structures, which allow a single value to be verified against a root hash without replicating the rest of the state.
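As an illustration of verifying one state variable against a compact commitment, the sketch below builds a plain binary Merkle tree over a few key/value pairs and checks an inclusion proof. It is a simplification, not a Merkle-Patricia trie, and the key names, values, and helper functions (`leaf_hash`, `build_levels`, `prove`, `verify`) are hypothetical choices made for this example.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf_hash(key: str, value: str) -> bytes:
    # Prefix 0x00 separates leaves from internal nodes (prefix 0x01).
    return h(b"\x00" + key.encode() + b"=" + value.encode())


def node_hash(left: bytes, right: bytes) -> bytes:
    return h(b"\x01" + left + right)


def build_levels(leaves):
    """Return every tree level, from the leaves up to the single root."""
    levels = [list(leaves)]
    while len(levels[-1]) > 1:
        cur = levels[-1]
        if len(cur) % 2 == 1:
            cur = cur + [cur[-1]]  # duplicate the last node on odd-sized levels
        levels.append([node_hash(cur[i], cur[i + 1]) for i in range(0, len(cur), 2)])
    return levels


def prove(levels, index):
    """Collect (sibling_hash, sibling_is_left) pairs from leaf to root."""
    proof = []
    for level in levels[:-1]:
        if len(level) % 2 == 1:
            level = level + [level[-1]]
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify(root, leaf, proof):
    acc = leaf
    for sibling, sibling_is_left in proof:
        acc = node_hash(sibling, acc) if sibling_is_left else node_hash(acc, sibling)
    return acc == root


# Hypothetical state variables for one market.
state = [
    ("mark_price", "64250.5"),
    ("open_interest", "1200"),
    ("collateral_ratio:alice", "1.35"),
]
leaves = [leaf_hash(k, v) for k, v in state]
levels = build_levels(leaves)
root = levels[-1][0]
proof = prove(levels, 2)
print(verify(root, leaves[2], proof))
```

A verifier holding only the 32-byte root and the proof can confirm the collateral ratio without storing the other entries; the proof length grows with the logarithm of the number of leaves.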

| Metric | Impact on Derivative Performance |
|---|---|
| State Access Latency | Determines speed of margin calls and liquidation triggers |
| Storage Cost Per Byte | Influences the base fee charged for contract creation |
| Proof Generation Time | Affects the latency of cross-layer settlement verification |

The mathematical optimization of state storage directly dictates the margin engine latency and the overall systemic resilience of decentralized derivatives.

Adversarial environments demand that storage structures remain resistant to state-bloat attacks, where malicious actors deliberately inflate the ledger size to force network congestion. By implementing strict state-rent mechanisms or localized data sharding, protocols enforce economic accountability for the storage resources consumed. This is not just a technical constraint; it is a fundamental governance decision that dictates which market strategies remain profitable under varying network load conditions.
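A state-rent mechanism of this kind can be sketched as a periodic charge proportional to the bytes an account occupies and the number of blocks elapsed. The example below is a minimal illustration under assumed parameters; `StorageAccount`, `charge_rent`, and the rate are hypothetical and do not describe any specific protocol.

```python
from dataclasses import dataclass


@dataclass
class StorageAccount:
    size_bytes: int          # on-chain bytes occupied by this account's state
    balance: int             # prepaid rent balance, in the smallest fee unit
    last_charged_block: int  # block height at which rent was last deducted


def charge_rent(acct: StorageAccount, current_block: int, rate_per_byte_block: int) -> bool:
    """Deduct rent for the elapsed blocks.

    Returns True if the account stays funded, False if its balance is
    exhausted and its state becomes eligible for eviction.
    """
    elapsed = current_block - acct.last_charged_block
    due = acct.size_bytes * elapsed * rate_per_byte_block
    acct.last_charged_block = current_block
    if due > acct.balance:
        acct.balance = 0
        return False
    acct.balance -= due
    return True


# Hypothetical position occupying 200 bytes, charged 2 units per byte per block.
position = StorageAccount(size_bytes=200, balance=10_000, last_charged_block=100)
still_funded = charge_rent(position, current_block=110, rate_per_byte_block=2)
print(still_funded, position.balance)
```

Because the charge scales with size, an attacker who inflates state pays in proportion to the bloat created, which is the economic accountability the paragraph above describes.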

Approach

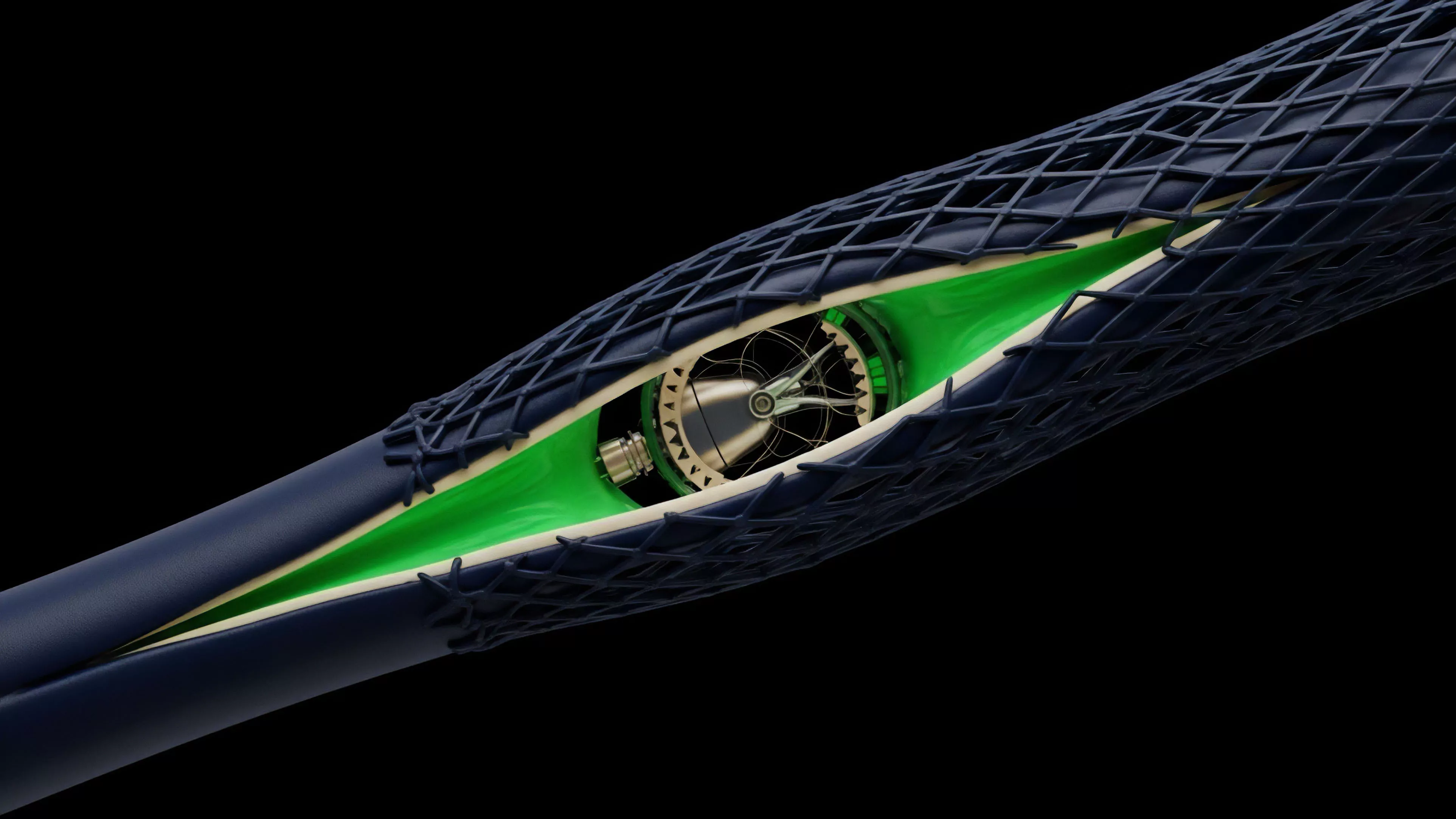

Current strategies for achieving Data Storage Efficiency prioritize off-chain computation and data availability solutions. By offloading non-critical historical data to decentralized storage networks, protocols maintain a lean on-chain state, reserving expensive block space for essential settlement and collateral updates. This approach requires sophisticated cryptographic proofs, such as validity proofs or fraud proofs, to ensure that off-chain data remains consistent with the on-chain settlement layer.
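The simplest building block behind this pattern is a commitment: the full historical data lives off-chain, and only a fixed-size digest of it is stored on-chain. The sketch below shows that step with a SHA-256 hash. It is a plain hash commitment, not a validity proof or fraud proof, and the record fields and function names are hypothetical.

```python
import hashlib
import json


def commit(records):
    """Serialize records deterministically and return (blob, hex digest)."""
    blob = json.dumps(records, sort_keys=True, separators=(",", ":")).encode()
    return blob, hashlib.sha256(blob).hexdigest()


def verify_blob(blob: bytes, onchain_commitment: str) -> bool:
    """Check that an off-chain blob matches the digest kept on-chain."""
    return hashlib.sha256(blob).hexdigest() == onchain_commitment


# Hypothetical settled trades that are archived off-chain.
history = [
    {"trade_id": 1, "market": "BTC-PERP", "price": "64250.5", "size": "0.4"},
    {"trade_id": 2, "market": "BTC-PERP", "price": "64190.0", "size": "-0.1"},
]
blob, commitment = commit(history)
print(len(blob), len(commitment) // 2)  # off-chain bytes vs. on-chain digest bytes
print(verify_blob(blob, commitment))
```

However large the archived history grows, the on-chain footprint stays at 32 bytes; any later change to the off-chain blob produces a different digest and fails the check.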

- State Pruning: Eliminating redundant historical data from active validator nodes while maintaining cryptographic proofs of past states.

- Data Sharding: Distributing the burden of storage across multiple network segments, allowing for horizontal scalability of derivative contract volumes.

- Compressed State Representation: Utilizing advanced serialization formats to minimize the byte-size of complex derivative position data.
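To make the compressed state representation point concrete, the sketch below packs a position into a fixed-width binary layout using Python's `struct` module and compares its size with a JSON encoding of the same fields. The field layout (`POSITION_FORMAT`) and the fixed-point price convention are assumptions made for illustration only.

```python
import json
import struct

# Hypothetical layout, little-endian with no padding:
#   market id       uint32
#   size            int64   (signed: positive = long, negative = short)
#   entry price     uint64  (fixed point, units of 1e-8)
#   collateral      uint64  (smallest token units)
POSITION_FORMAT = "<IqQQ"


def pack_position(market_id: int, size: int, entry_price_e8: int, collateral: int) -> bytes:
    return struct.pack(POSITION_FORMAT, market_id, size, entry_price_e8, collateral)


def unpack_position(blob: bytes):
    return struct.unpack(POSITION_FORMAT, blob)


fields = {
    "market_id": 7,
    "size": -250,
    "entry_price_e8": 6_425_050_000_000,
    "collateral": 1_500_000_000,
}
packed = pack_position(**fields)
as_json = json.dumps(fields).encode()
print(len(packed), len(as_json))
```

The binary form drops field names and decimal text, so every position costs the same fixed number of bytes; the trade-off is that readers must know the layout in advance.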

The shift toward these mechanisms acknowledges that the primary constraint in decentralized finance is no longer raw execution speed, but the throughput of state synchronization. The market now favors protocols that can prove the validity of a derivative settlement without requiring every node to store the entire history of every price movement, thus reducing the barriers to entry for decentralized market makers.

Evolution

The progression of storage management has moved from early, inefficient replication toward sophisticated, modular architectures. Initially, the focus remained on simply keeping the ledger synchronized. Today, the priority has shifted to granular control over state availability, allowing for the emergence of high-frequency derivative protocols that operate with performance profiles comparable to centralized exchanges.

This development reflects a deeper understanding of protocol physics, where the cost of storage is treated as a primary variable in the pricing of risk.

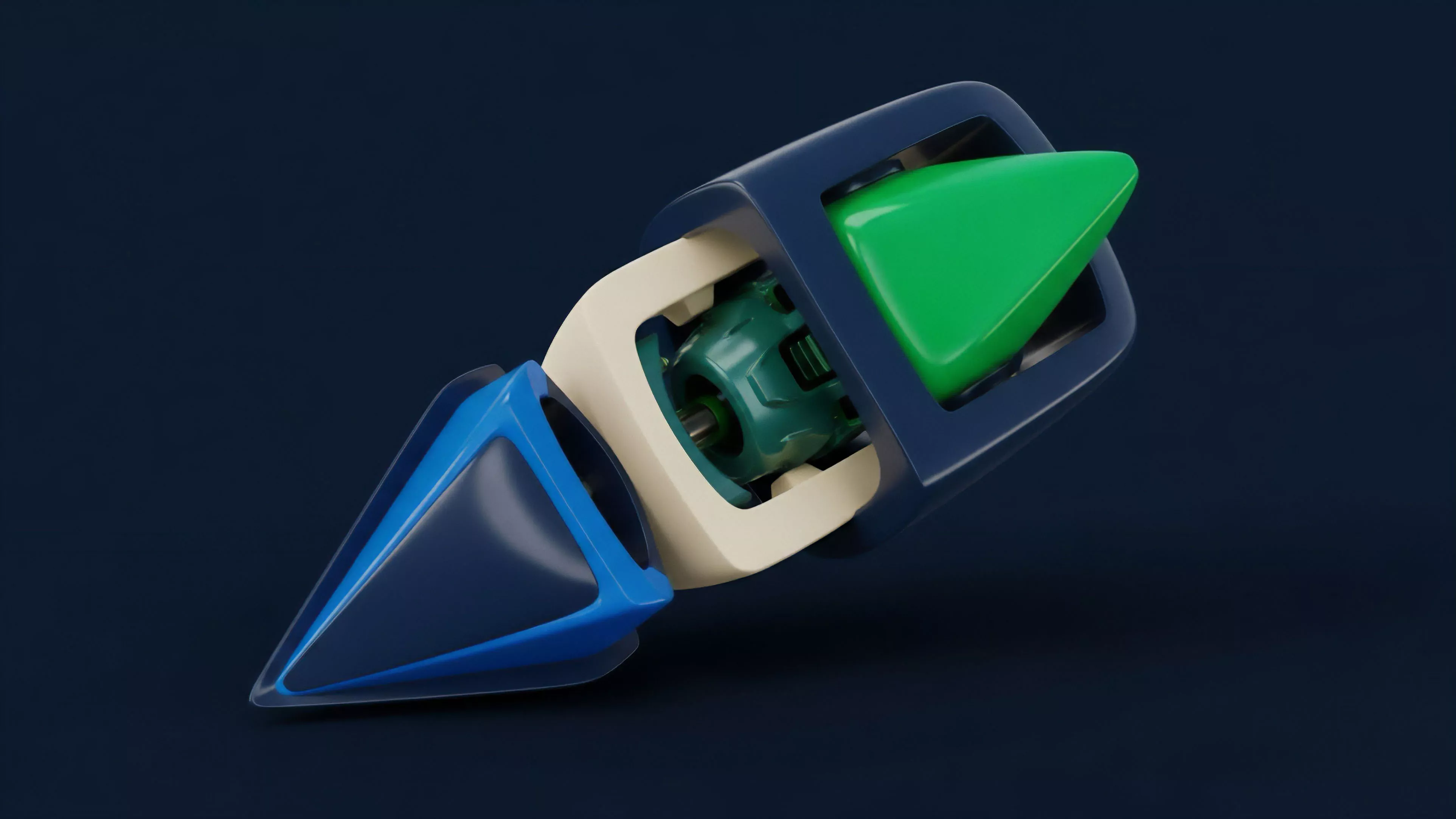

Modular storage architectures allow derivative protocols to scale by decoupling state execution from long-term historical data availability.

This evolution highlights a critical divergence between legacy blockchain designs and modern, purpose-built financial layers. As derivative markets demand higher leverage and faster settlement, the underlying storage infrastructure must adapt to handle the intense, localized data bursts that occur during market volatility. We observe a clear trend toward protocol designs that treat state as a dynamic, ephemeral resource rather than a static, immutable burden.

Horizon

Future developments in Data Storage Efficiency will likely center on zero-knowledge state compression, where the entire history of a derivative position can be represented by a single, succinct proof. This could substantially change the cost structure of decentralized derivatives, pushing storage fees toward zero and making feasible permissionless markets at a scale that existing constraints rule out. The ability to verify complex, multi-leg option strategies with minimal on-chain state could open the way to new classes of synthetic assets.

The integration of hardware-accelerated storage verification will further drive efficiency, as specialized chips reduce the latency of proof generation. This technical advancement will coincide with a broader shift in governance, where storage consumption becomes a priced commodity, subject to supply and demand dynamics that automatically stabilize the protocol’s state size. The ultimate objective remains the creation of a financial system where storage is essentially a background utility, invisible to the end user yet providing the robust foundation required for global-scale capital efficiency.