Essence

Real-time data integration represents the essential mechanism for delivering market information to decentralized applications, specifically options protocols. Without this capability, the entire apparatus of on-chain derivatives cannot function with financial precision. A derivative contract’s value is derived from its underlying asset, making the continuous, low-latency stream of price data a prerequisite for accurate pricing, collateralization, and risk management.

The challenge in decentralized finance is that a smart contract cannot natively access external data. This creates a fundamental need for robust data feeds that bridge the off-chain world of market movements with the on-chain execution logic of the protocol. This bridge must operate with both high frequency and verifiable integrity to prevent manipulation and ensure the solvency of the system.

The core function of data integration in options markets extends beyond a simple price quote. It requires a continuous feed of data that reflects market microstructure. For an options protocol, this data stream must provide a granular view of price changes, volatility, and order book depth to calculate the Greeks accurately and determine appropriate collateral requirements.

The system must process this data stream to update margin requirements dynamically, ensuring that positions remain adequately collateralized against sudden price shifts. This process creates a feedback loop where market data directly dictates the protocol’s risk engine, maintaining the financial health of the system against a constantly moving underlying asset.

Real-time data integration provides the necessary market context for options protocols to calculate risk, price derivatives, and manage collateral with precision.

Origin

The necessity for real-time data integration in decentralized finance emerged from the early exploits of protocols that relied on naive or poorly designed data sources. The first generation of DeFi protocols often used single-source oracles or relied on data updates that were too slow to react to market volatility. This created significant vulnerabilities, particularly for options and lending protocols.

Flash loan attacks became a common exploit vector: an attacker could manipulate the price feed of a single decentralized exchange (DEX) or oracle, execute a trade against the manipulated price, and then repay the loan within the same transaction. The primary challenge was not a lack of data, but a lack of secure, high-frequency data delivery. Early oracle designs focused on data verification through consensus mechanisms, but often sacrificed speed and update frequency to achieve security.

This trade-off proved costly for derivatives markets, where pricing models require near-instantaneous data to maintain accurate valuations. The high leverage and systemic risk inherent in options protocols demanded a solution that could deliver data with a frequency comparable to centralized exchanges. The evolution of real-time data integration was driven by a practical necessity: mitigating the financial risk posed by slow or easily manipulated price feeds. This transformed data integrity from a technical concern into a core economic requirement for protocol survival.

Theory

The theoretical foundation of real-time data integration for crypto options rests on the principles of stochastic calculus and risk management, where the accuracy of the model output depends directly on the quality and frequency of the input data. In traditional finance, options pricing models like Black-Scholes-Merton assume continuous trading and continuously observable prices in a frictionless market, but this assumption breaks down in a decentralized, block-based environment. The challenge lies in translating a continuous-time model to a discrete-time, high-latency environment.

This translation requires a data pipeline that minimizes the time between a market event (a price change) and the protocol’s reaction (a margin update or liquidation trigger). The latency in data updates creates “pricing lag,” which can lead to significant risk exposure for the protocol’s liquidity providers. A protocol’s ability to maintain solvency depends on its capacity to process real-time market data (including price, volatility, and volume) to calculate and update the Greeks (Delta, Gamma, Vega) of all outstanding positions.

If the data feed lags behind the market, the protocol’s hedging mechanisms fail to react quickly enough to price changes, resulting in undercollateralized positions and potential system failure. The core problem for a quantitative analyst designing a decentralized options protocol is determining the appropriate data update frequency to balance security against capital efficiency. A slower data update reduces transaction costs and potential oracle manipulation risks, but increases the risk of a “liquidation cascade” during periods of high volatility.

A faster data update, conversely, increases transaction costs and potential network congestion, but improves the accuracy of risk calculations. The theoretical solution involves a trade-off between the cost of data updates and the value at risk (VaR) of the protocol’s positions. The protocol must calculate the optimal update frequency by analyzing the historical volatility of the underlying asset and setting a threshold for acceptable slippage.

This process is a constant battle between the theoretical continuous nature of market dynamics and the discrete, costly nature of on-chain data delivery.
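One way to make this trade-off concrete is to ask how long a feed can go without an update before a plausible price move exceeds the tolerated deviation. The sketch below assumes price moves scale with the square root of time (a standard diffusion assumption, not something the protocol design above specifies). The function name, the 2.33 z-score (roughly a one-sided 99% move), and the sample inputs are illustrative choices.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def max_update_interval(annual_vol: float, tolerance: float, z: float = 2.33) -> float:
    """Longest gap between updates, in seconds, for which a z-sigma move
    of the underlying stays within `tolerance` (a fraction, e.g. 0.005).

    Assumes the move over a horizon t (in years) is about annual_vol * sqrt(t).
    """
    if annual_vol <= 0 or tolerance <= 0 or z <= 0:
        raise ValueError("annual_vol, tolerance and z must be positive")
    # Solve z * annual_vol * sqrt(t) = tolerance for t (in years).
    years = (tolerance / (z * annual_vol)) ** 2
    return years * SECONDS_PER_YEAR


if __name__ == "__main__":
    # Hypothetical inputs: 80% annualized volatility, 0.5% tolerated deviation.
    interval = max_update_interval(annual_vol=0.80, tolerance=0.005)
    print(f"update at least every {interval:.0f} seconds")
```

Because the interval depends on the square of the volatility, doubling the volatility cuts the tolerable gap to a quarter, which is why update costs rise sharply in turbulent markets.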

Latency and Model Accuracy

The speed of data integration directly impacts the accuracy of option pricing models. A high-frequency data feed allows for a more accurate calculation of implied volatility and the subsequent adjustment of option prices. In a decentralized environment, latency is not simply a matter of network speed; it is a matter of block finality and data propagation across multiple layers.

- Data Freshness: The time elapsed between a market trade and its inclusion in the data feed used by the options protocol. This is critical for preventing front-running and oracle manipulation.

- Greeks Calculation: Real-time data updates allow for continuous recalculation of Delta and Gamma, enabling protocols to hedge their risk more effectively and avoid large, sudden losses during market movements.

- Liquidation Thresholds: The data feed determines when a position falls below its minimum collateral requirement. If the data feed is slow, the protocol may liquidate a position too late, leaving the protocol to absorb the loss.
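The effect of a stale price on the Greeks can be illustrated with a small calculation. This is a minimal sketch using the textbook Black-Scholes formulas for a European call with no dividends. The function names and sample numbers are hypothetical, and a production risk engine would cover many more cases.

```python
import math


def _norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _norm_pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def call_delta_gamma(spot: float, strike: float, vol: float,
                     t_years: float, rate: float = 0.0) -> tuple[float, float]:
    """Black-Scholes Delta and Gamma of a European call (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    delta = _norm_cdf(d1)
    gamma = _norm_pdf(d1) / (spot * vol * sqrt_t)
    return delta, gamma


def delta_hedge_gap(stale_spot: float, fresh_spot: float, strike: float,
                    vol: float, t_years: float) -> float:
    """Difference between the Delta at the true price and the Delta the
    protocol computes from a lagging feed."""
    fresh_delta, _ = call_delta_gamma(fresh_spot, strike, vol, t_years)
    stale_delta, _ = call_delta_gamma(stale_spot, strike, vol, t_years)
    return fresh_delta - stale_delta


if __name__ == "__main__":
    # Hypothetical case: the feed still shows 100 while the market trades at 105.
    gap = delta_hedge_gap(stale_spot=100.0, fresh_spot=105.0, strike=100.0,
                          vol=0.80, t_years=30 / 365)
    print(f"unhedged delta per option: {gap:.4f}")
```

The gap is the exposure per option that the protocol's hedge misses until the next update arrives. It grows with Gamma, so short-dated, near-the-money options are the most sensitive to data freshness.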

Approach

Current implementations of real-time data integration in crypto derivatives protocols vary widely, driven by different trade-offs between cost, latency, and security. The core challenge remains how to efficiently deliver high-frequency, verifiable off-chain data to a low-frequency, high-cost on-chain environment. The dominant approach involves a hybrid architecture that leverages off-chain computation and data aggregation to minimize on-chain costs.

Off-Chain Data Aggregation

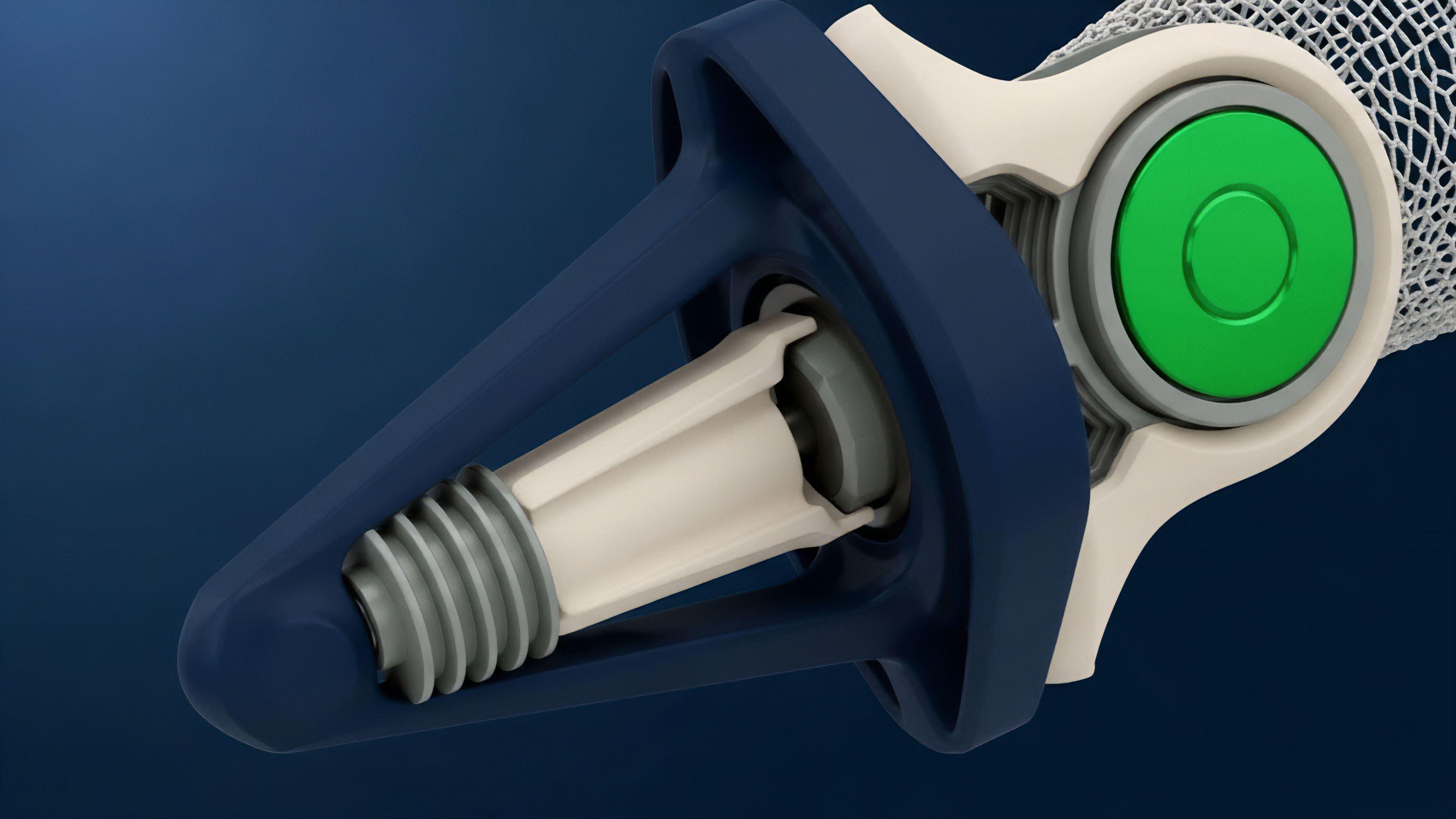

The most common method involves data aggregation networks like Chainlink or Pyth. These networks collect data from numerous sources (centralized exchanges, decentralized exchanges, market makers) off-chain. This data is then aggregated, verified, and signed by a network of nodes before being submitted to the blockchain.

This process ensures data integrity by requiring consensus among multiple independent sources.

- Data Collection: Data providers (market makers, exchanges) stream real-time pricing data to a network of aggregation nodes.

- Data Aggregation: The network processes the data, calculates a median or volume-weighted average price (VWAP), and filters out outliers.

- On-Chain Submission: The aggregated price is then submitted to the blockchain via a smart contract, which updates the price feed used by the options protocol.
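The aggregation step above can be sketched in a few lines. This is an illustrative simplification, not the algorithm of any particular oracle network: the 2% outlier band, the function name, and the sample quotes are assumptions made for the example.

```python
from statistics import median


def aggregate_price(quotes: list[tuple[float, float]],
                    max_deviation: float = 0.02) -> tuple[float, float]:
    """Return (median, VWAP) of the quotes after dropping outliers.

    `quotes` is a list of (price, volume) pairs from independent sources.
    A quote is treated as an outlier when its price differs from the
    median of all quotes by more than `max_deviation` (a fraction).
    """
    if not quotes:
        raise ValueError("no quotes supplied")
    reference = median(price for price, _ in quotes)
    kept = [(p, v) for p, v in quotes if abs(p - reference) / reference <= max_deviation]
    if not kept:
        raise ValueError("sources disagree too much to aggregate")
    total_volume = sum(v for _, v in kept)
    if total_volume <= 0:
        raise ValueError("no volume among accepted quotes")
    vwap = sum(p * v for p, v in kept) / total_volume
    return median(p for p, _ in kept), vwap


if __name__ == "__main__":
    # Three sources agree near 100; the fourth reports a manipulated 150.
    sample = [(100.0, 10.0), (101.0, 30.0), (99.0, 10.0), (150.0, 5.0)]
    print(aggregate_price(sample))
```

Using the median as the reference for outlier filtering means a single manipulated source cannot drag the reference toward itself, which is the property that makes multi-source aggregation harder to attack than a single feed.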

Latency Optimization Strategies

For options protocols that require high-frequency updates (e.g. perpetual options or short-term options), a simple on-demand update model is insufficient. Protocols employ specific strategies to manage latency:

- Layer-2 Data Feeds: Protocols often operate on Layer-2 solutions where data updates are cheaper and faster. The data feed is integrated directly into the Layer-2 environment, reducing the latency between data updates and trade execution.

- High-Frequency Oracles: Networks like Pyth push data updates at very high frequency (e.g. multiple times per second) off-chain, and then make those updates available on-chain via a “pull” mechanism. This allows protocols to access fresh data when needed without paying for every update.
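The consumer side of a pull model can be sketched as follows: the protocol receives a signed update alongside the user's transaction and accepts it only if the signature verifies and the price is fresh enough. Everything here is hypothetical and is not the API of Pyth or any other network. In particular, an HMAC with a shared key stands in for the public-key signatures real oracle networks use, purely to keep the example self-contained.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class SignedUpdate:
    price: float
    publish_time: float  # seconds since epoch, set by the publisher
    signature: str


def sign(price: float, publish_time: float, key: bytes) -> str:
    """Stand-in for the publisher's signature (HMAC, for illustration only)."""
    payload = json.dumps({"price": price, "publish_time": publish_time},
                         sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def consume_update(update: SignedUpdate, key: bytes, now: float,
                   max_age_s: float = 2.0) -> float:
    """Return the price if the update is authentic and not stale."""
    expected = sign(update.price, update.publish_time, key)
    if not hmac.compare_digest(expected, update.signature):
        raise ValueError("signature check failed")
    if now - update.publish_time > max_age_s:
        raise ValueError("price update is stale")
    return update.price


if __name__ == "__main__":
    key = b"demo-key"
    update = SignedUpdate(100.0, 1000.0, sign(100.0, 1000.0, key))
    print(consume_update(update, key, now=1001.0))
```

The staleness bound (`max_age_s`) is the parameter a protocol would tune: a tighter bound reduces pricing lag but causes more transactions to be rejected when fresh updates are not available.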

Data Architecture Comparison

A protocol’s choice of data feed architecture dictates its risk profile and operational cost.

| Architecture | Latency | Security Model | Cost Efficiency |
|---|---|---|---|
| Single-Source Oracle (Legacy) | High (Slow updates) | Low (Single point of failure) | High (Low update cost) |
| On-Chain Aggregation (Legacy) | High (Block time constraint) | High (On-chain verification) | Low (High gas cost per update) |
| Off-Chain Aggregation (Current) | Medium (Data network latency) | Medium-High (Multi-source verification) | Medium (Variable update cost) |
| Layer-2 Integration (Current) | Low (Layer-2 finality) | Medium-High (Layer-2 security model) | High (Low Layer-2 gas cost) |

Evolution

The evolution of real-time data integration has mirrored the growth of the crypto options landscape itself, moving from a static, single-point-of-failure model to a dynamic, multi-source system. Early data feeds were designed for simple lending protocols where a price update every few minutes was sufficient. The advent of high-frequency options trading and perpetual futures, however, demanded a complete re-architecture of data delivery.

The challenge shifted from simply verifying a price to verifying a high-frequency time series of prices, including volatility and funding rates. The first major evolution was the move from single-source oracles to aggregated oracles. This significantly increased security by making price manipulation far more expensive, since an attacker would need to compromise multiple data providers simultaneously.

The next evolution involved a shift from a “push” model, where data updates were pushed to the blockchain on a fixed schedule, to a “pull” model, where protocols could request data updates on demand. This greatly improved capital efficiency by allowing protocols to pay for data only when necessary, such as during a liquidation event.

The Need for Volatility Feeds

For options protocols, the real-time data integration challenge extends beyond the underlying asset’s price. The implied volatility of an option, a key input for pricing models, changes dynamically based on market sentiment and order flow. A protocol must integrate data feeds that provide accurate volatility surfaces in real time to price options accurately.

This requires sophisticated data processing that goes beyond simple price aggregation, necessitating the creation of dedicated volatility oracles that calculate and distribute this data.
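One building block of such a volatility oracle is inverting a pricing model to recover implied volatility from an observed option price. The sketch below does this for a single European call by bisection on the Black-Scholes price. It is an assumption-laden illustration (no dividends, hypothetical function names and search bounds). A real volatility surface would repeat this across strikes and expiries and then smooth the result.

```python
import math


def _norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot: float, strike: float, vol: float,
                  t_years: float, rate: float = 0.0) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * _norm_cdf(d1) - strike * math.exp(-rate * t_years) * _norm_cdf(d2)


def implied_vol(market_price: float, spot: float, strike: float, t_years: float,
                rate: float = 0.0, lo: float = 1e-4, hi: float = 5.0,
                tol: float = 1e-8) -> float:
    """Volatility at which the model price matches `market_price`.

    Bisection works because the call price increases with volatility.
    """
    low_price = bs_call_price(spot, strike, lo, t_years, rate)
    high_price = bs_call_price(spot, strike, hi, t_years, rate)
    if not low_price <= market_price <= high_price:
        raise ValueError("market price outside the searchable volatility range")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, mid, t_years, rate) < market_price:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    # Round trip: price an option at 60% volatility, then recover the volatility.
    quote = bs_call_price(spot=100.0, strike=110.0, vol=0.60, t_years=0.25)
    print(implied_vol(quote, spot=100.0, strike=110.0, t_years=0.25))
```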

The move from simple price feeds to high-frequency volatility surfaces represents a critical step in the maturation of decentralized options protocols.

Horizon

Looking ahead, the next phase of real-time data integration for crypto options will focus on data privacy and verifiable computation. The current model, while effective, still exposes data inputs to all participants. The next frontier involves zero-knowledge proofs (ZKPs) to verify data integrity without revealing the source or the raw data itself.

This allows for the creation of sophisticated, private derivatives markets where market makers can provide pricing data without exposing their proprietary models or order flow. The ultimate goal for decentralized data integration is data sovereignty: the ability for a protocol to control its own data inputs and verification process without reliance on external, centralized oracle networks. This could be achieved through decentralized identity solutions that verify data providers, or through fully on-chain computation where data is sourced and processed entirely within the protocol’s environment.

This future state allows for the creation of complex financial instruments that require data inputs that are both real-time and private, a capability that will unlock a new generation of sophisticated options products in decentralized finance.

Future data integration will move beyond simple price verification toward verifiable computation and data privacy using zero-knowledge proofs.
