Essence

Off-chain data integration for crypto options addresses the fundamental challenge of trust-minimized financial contracts operating on a deterministic ledger. A smart contract cannot inherently access real-world information, such as the spot price of an underlying asset, without external input. For an options contract, the final settlement value depends entirely on the asset’s price at expiration.

If this data feed is compromised, the entire contract fails to execute correctly, leading to significant financial losses for one party and potential systemic risk for the protocol. This external data feed, often referred to as an oracle, is the single point of failure that decentralization attempts to eliminate. The integration of this data is a complex engineering problem involving cryptographic proofs, economic incentives, and consensus mechanisms to ensure data integrity.

The system must verify that the data provided accurately reflects the real-world market price, ensuring that the contract’s logic is applied to a verifiable truth rather than a manipulated input.
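To make the dependence on the settlement price concrete, here is a minimal sketch of cash settlement for a vanilla option. The function name `settlement_value` and all numbers are illustrative assumptions, not taken from any particular protocol.

```python
def settlement_value(kind: str, strike: float, settlement_price: float) -> float:
    """Cash payoff of a vanilla option at expiration (illustrative helper)."""
    if kind == "call":
        return max(settlement_price - strike, 0.0)
    if kind == "put":
        return max(strike - settlement_price, 0.0)
    raise ValueError(f"unknown option kind: {kind}")

# Hypothetical numbers: the true market price leaves the call out of the money,
# while a manipulated oracle report makes the same contract pay out.
honest_payout = settlement_value("call", strike=2000.0, settlement_price=1990.0)
manipulated_payout = settlement_value("call", strike=2000.0, settlement_price=2100.0)
print(honest_payout, manipulated_payout)
```

The contract logic is identical in both cases; only the reported price differs, which is why the integrity of that single input determines who gets paid.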

Off-chain data integration is the critical bridge that connects the deterministic logic of a smart contract to the stochastic reality of external market events, determining the financial outcome of derivatives.

The challenge extends beyond simple price feeds. Options pricing requires data points that are themselves derivatives of market activity, such as implied volatility surfaces. A decentralized options protocol must not only receive a spot price but also calculate or receive a reliable volatility measure to accurately price and manage risk.

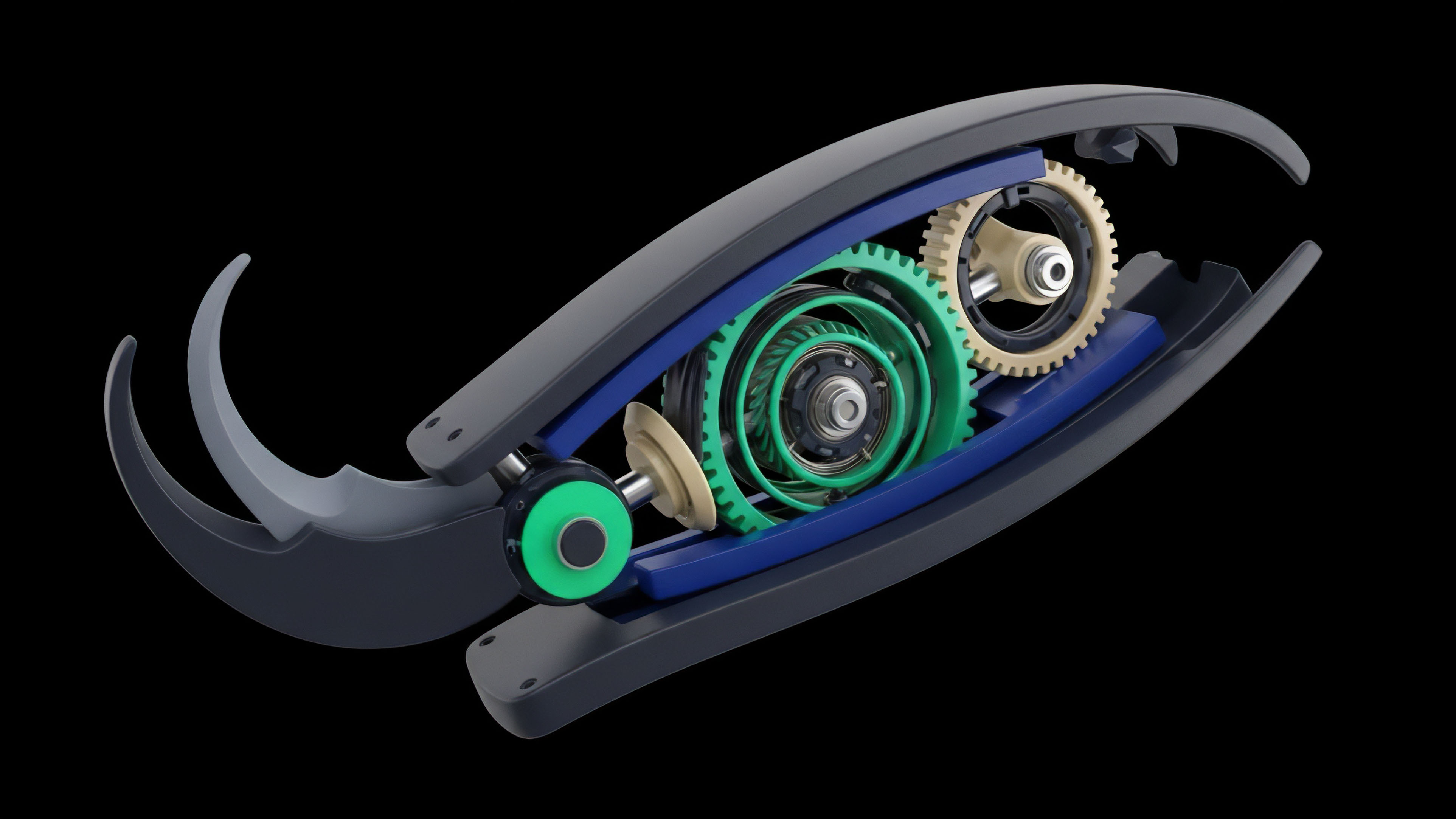

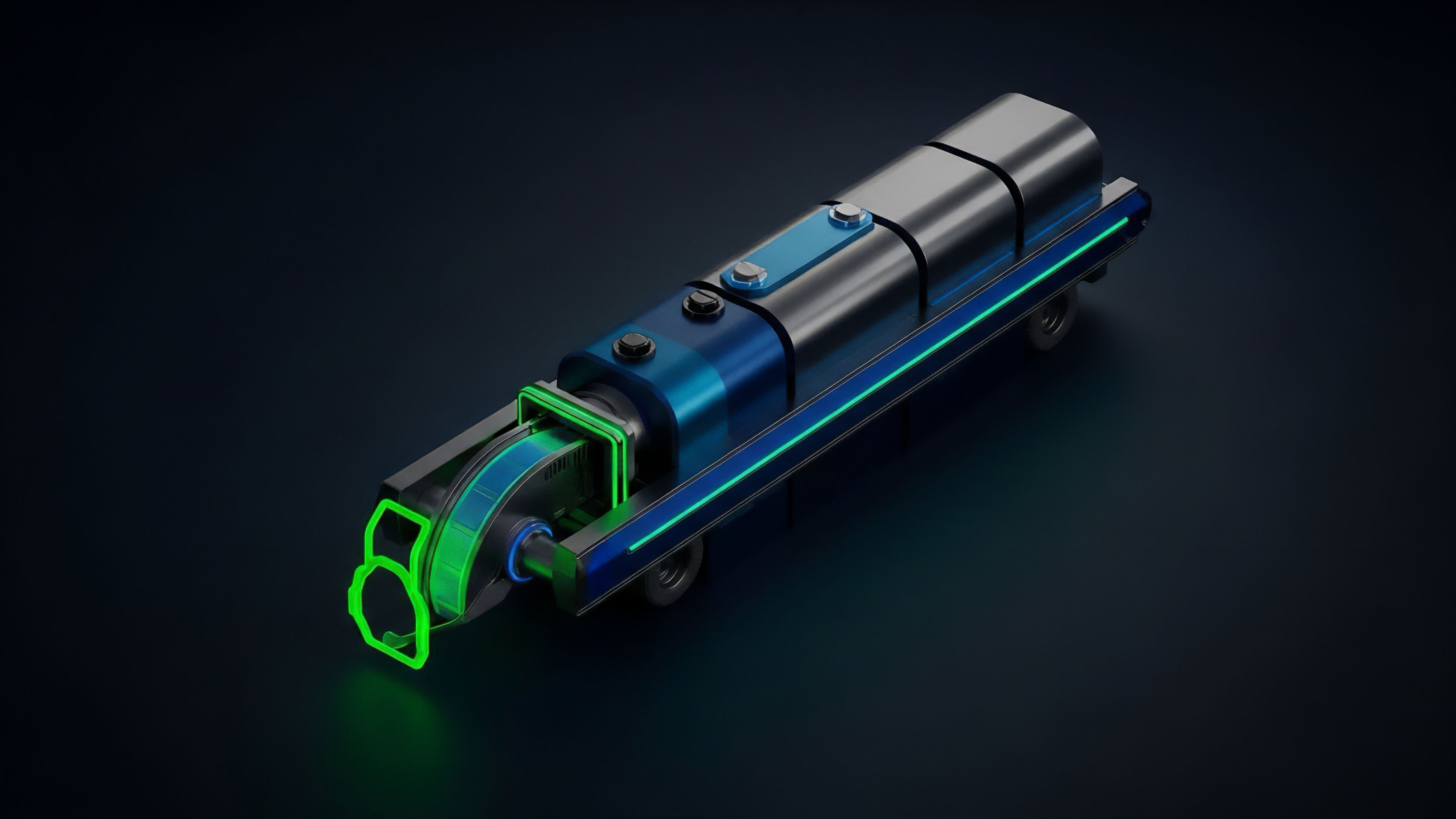

The architecture must reconcile the high-frequency nature of market data with the slow, secure, and costly nature of on-chain computation. This creates a trade-off between speed and security. The solution often involves a layered approach where data aggregation and validation occur off-chain before a single, signed value is committed to the blockchain, which keeps on-chain gas costs low without sacrificing data accuracy.
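The "single, signed value" step can be sketched as follows. This is only an illustration: production oracles typically use asymmetric signatures that a contract can verify on-chain, whereas this example substitutes an HMAC from the Python standard library as a stand-in. The key, field names, and function names are all hypothetical.

```python
import hashlib
import hmac
import json

SECRET = b"demo-oracle-key"  # hypothetical shared key, for illustration only

def sign_report(price: float, timestamp: int, key: bytes) -> dict:
    """Serialize one aggregated value and attach an authentication tag."""
    payload = json.dumps({"price": price, "timestamp": timestamp}, sort_keys=True).encode()
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}

def verify_report(report: dict, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, report["payload"], hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, report["tag"])

report = sign_report(2000.5, 1_700_000_000, SECRET)
tampered = dict(report, payload=report["payload"].replace(b"2000.5", b"2500.5"))
print(verify_report(report, SECRET), verify_report(tampered, SECRET))
```

Only one small payload needs to reach the chain, and any change to the price after signing invalidates the tag.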

Origin

The necessity for off-chain data integration emerged directly from the earliest attempts to build financial applications on top of programmable blockchains. Early decentralized applications (DApps) in the pre-DeFi era struggled with a fundamental design flaw: a contract’s inability to react to external conditions. The initial attempts at solutions were highly centralized, relying on a single party (often the project founder or a small consortium) to manually input data.

This created a trust-based system that negated the core value proposition of decentralization. The evolution of this field was driven by the need to secure the rapidly growing collateral locked in these early protocols. The first significant innovation was the introduction of decentralized oracle networks (DONs).

These networks, pioneered by projects like Chainlink, introduced a new design pattern where multiple independent data providers would submit data, and the protocol would aggregate these inputs, taking a median or average to reduce the impact of any single malicious actor. This shift from single-source data to multi-source consensus marked a turning point, allowing derivatives protocols to operate with a higher degree of confidence. The complexity increased further with the introduction of options, as these instruments required not just a single price point but a continuous stream of reliable data for marking collateral, managing liquidations, and calculating margin requirements.
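The benefit of median aggregation over a plain average can be seen in a small sketch. The function name `aggregate_reports` and the sample reports are illustrative assumptions, not the aggregation code of any specific network.

```python
from statistics import mean, median

def aggregate_reports(reports: list[float]) -> float:
    """Aggregate provider observations by taking the median (illustrative rule)."""
    if not reports:
        raise ValueError("no reports submitted")
    return median(reports)

# Four honest providers near 2000 and one malicious outlier (made-up numbers).
reports = [1999.5, 2000.0, 2000.5, 2001.0, 5000.0]
print(aggregate_reports(reports))  # stays with the honest cluster
print(mean(reports))               # is dragged toward the outlier
```

A single dishonest provider can move an average arbitrarily far, but it cannot move the median outside the range of honest reports unless it controls roughly half of the submissions.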

Theory

The theoretical underpinnings of off-chain data integration for options are rooted in two primary areas: financial engineering and systems theory. The financial aspect concerns the integrity of the data inputs required for pricing models. The Black-Scholes model, for instance, requires five inputs, with the underlying price and volatility being the most dynamic.
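For reference, the five inputs can be seen in a minimal sketch of the Black-Scholes price of a European call on a non-dividend-paying asset. Parameter names and sample values are illustrative; a real protocol would add input validation and, usually, fixed-point arithmetic.

```python
from math import erf, exp, log, sqrt

def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))

def black_scholes_call(spot: float, strike: float, rate: float,
                       vol: float, t_years: float) -> float:
    """European call price from the five inputs: S, K, r, sigma, T."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt(t_years))
    d2 = d1 - vol * sqrt(t_years)
    return spot * norm_cdf(d1) - strike * exp(-rate * t_years) * norm_cdf(d2)

base = black_scholes_call(spot=100.0, strike=100.0, rate=0.05, vol=0.2, t_years=1.0)
spot_up = black_scholes_call(spot=101.0, strike=100.0, rate=0.05, vol=0.2, t_years=1.0)
vol_up = black_scholes_call(spot=100.0, strike=100.0, rate=0.05, vol=0.25, t_years=1.0)
print(round(base, 4), round(spot_up, 4), round(vol_up, 4))
```

Strike and expiry are fixed by the contract and the rate changes slowly, so the spot price and volatility are the inputs an oracle has to keep fresh.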

The data integration system must deliver these inputs in near real time, accurately reflecting the current market state to prevent arbitrage. The challenge here is that options protocols operate in an adversarial environment where participants are constantly seeking to exploit data latency. If the on-chain price feed lags behind the real-world spot price, an attacker can trade against the stale price, a strategy often described as latency arbitrage or oracle front-running.

The attacker observes a price movement off-chain, purchases an option at the outdated on-chain price, and then sells it for a profit when the oracle updates. This attack vector necessitates a data delivery mechanism that minimizes latency while maximizing the cost of manipulation. The systems theory perspective views the oracle as a critical feedback loop in a complex adaptive system.

The oracle’s data input dictates the system’s response: liquidations, margin calls, or settlement. A failure in this feedback loop can trigger cascading failures throughout the protocol. The design must therefore prioritize robustness and fault tolerance.

This involves economic security, where the cost of corrupting the data feed exceeds the potential profit from the resulting attack. The core challenge is designing incentives for data providers to be truthful, often through staking mechanisms where providers post collateral that can be slashed if they submit inaccurate data. This design pattern transforms the oracle problem from a technical challenge into an economic game theory problem.

Data Latency and Systemic Risk

The most significant theoretical challenge in decentralized options is data latency. Options markets, particularly in high-frequency trading, operate on microsecond timescales. A decentralized protocol, however, must wait for a block confirmation, which can take seconds or minutes, before processing data.

This time gap creates a window for manipulation. The oracle design must mitigate this by either delivering data with a high frequency or by implementing mechanisms that smooth out short-term volatility. This smoothing, however, introduces basis risk, where the on-chain price diverges from the real-world price, creating opportunities for arbitrage.

The choice between these two approaches determines the fundamental risk profile of the protocol. A protocol prioritizing speed might be vulnerable to manipulation, while one prioritizing security might be less efficient for active traders.
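The basis risk introduced by smoothing can be illustrated with an exponential moving average, chosen here only as one simple smoothing rule. The `alpha` parameter and the price path are assumptions for the example.

```python
def ema(prices: list[float], alpha: float) -> list[float]:
    """Exponentially smoothed series; lower alpha means heavier smoothing."""
    smoothed = [prices[0]]
    for price in prices[1:]:
        smoothed.append(alpha * price + (1 - alpha) * smoothed[-1])
    return smoothed

# Hypothetical spot path with a sudden 10% jump at the third observation.
spot = [100.0, 100.0, 110.0, 110.0, 110.0]
on_chain = ema(spot, alpha=0.5)
basis = [s - o for s, o in zip(spot, on_chain)]
print(on_chain)  # [100.0, 100.0, 105.0, 107.5, 108.75]
print(basis)     # [0.0, 0.0, 5.0, 2.5, 1.25]
```

The smoothed feed dampens a one-block spike, but after a genuine move it trails the market for several updates, and that gap is exactly what an arbitrageur can trade against.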

Economic Security and Game Theory

The security of off-chain data integration relies heavily on game theory. Data providers are incentivized to provide accurate data by a combination of rewards and penalties. The system’s integrity depends on the assumption that a majority of data providers will act honestly.

This requires careful calibration of economic parameters: the reward for honest reporting, the penalty for malicious reporting, and the cost for an attacker to compromise enough providers to skew the result. The cost of attack must always exceed the potential profit from manipulating a derivative contract. This creates a security model where economic incentives, rather than trust in a central authority, enforce data integrity.
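The security condition can be written as a simple inequality check. This sketch makes strong simplifying assumptions: every provider posts the same stake, a bare majority is enough to skew the result, and corrupted providers lose their entire stake. All names and figures are hypothetical.

```python
def cost_of_corruption(stake_per_provider: float, providers: int) -> float:
    """Stake forfeited when a bare majority of providers is corrupted and fully slashed."""
    majority = providers // 2 + 1
    return majority * stake_per_provider

def is_economically_secure(stake_per_provider: float, providers: int,
                           extractable_value: float) -> bool:
    """True when attacking costs more than the profit available from manipulation."""
    return cost_of_corruption(stake_per_provider, providers) > extractable_value

# 21 providers staking 100,000 each: corrupting 11 of them costs 1,100,000.
print(cost_of_corruption(100_000.0, 21))
print(is_economically_secure(100_000.0, 21, extractable_value=500_000.0))
print(is_economically_secure(100_000.0, 21, extractable_value=2_000_000.0))
```

The second check fails because the value secured by the feed has outgrown the stake behind it, which is why these parameters need recalibrating as open interest grows.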

Approach

The implementation of off-chain data integration for options protocols currently follows several distinct architectural patterns, each representing a different trade-off between speed, cost, and security.

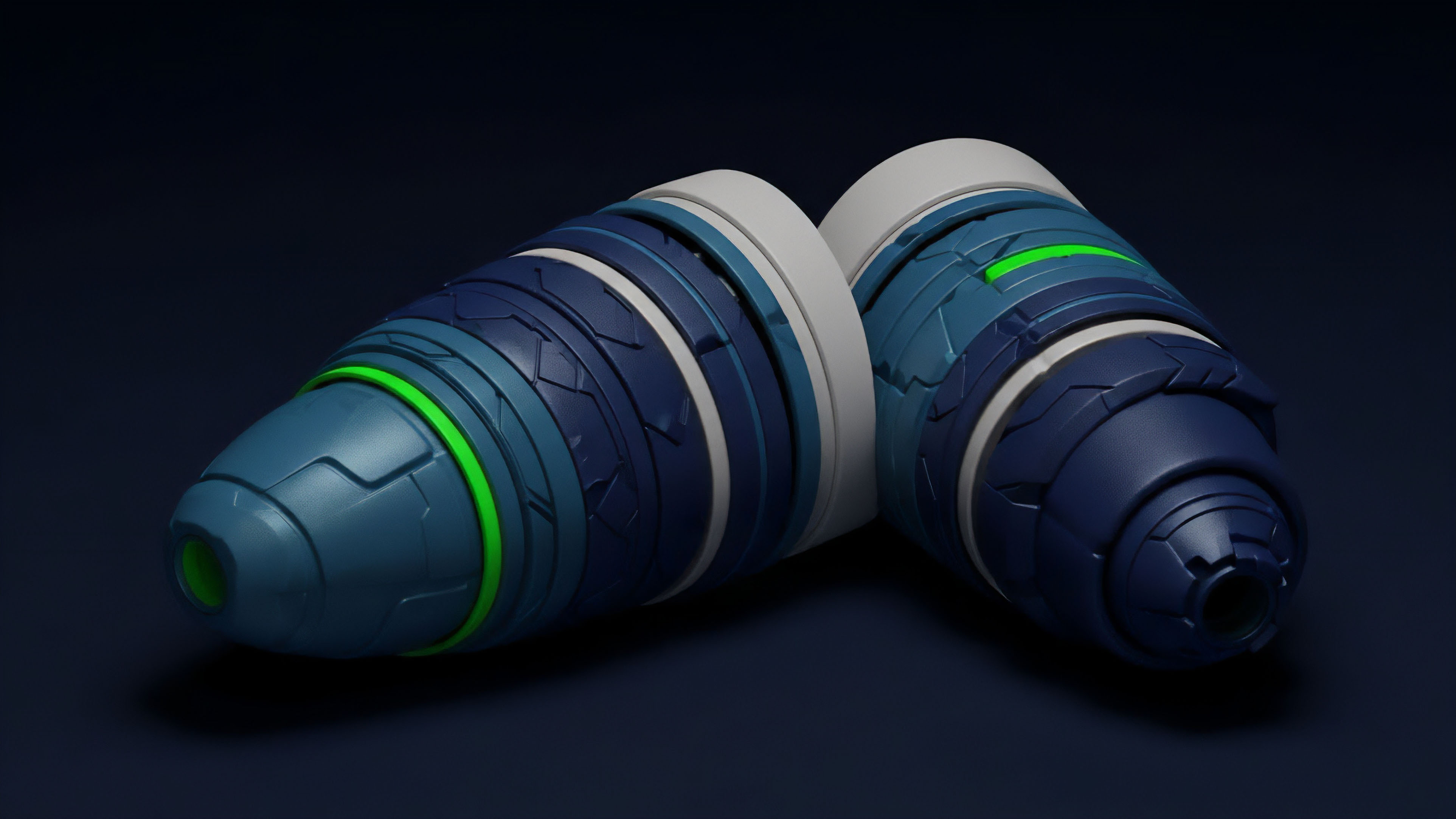

- Decentralized Oracle Networks (DONs): This approach uses a network of independent data providers. The protocol requests data, and multiple providers respond with their observations. The protocol then aggregates these responses, typically by taking the median value, to create a robust data point. This method minimizes the impact of a single malicious provider, making it highly secure. However, it can be expensive and slow, particularly in designs where each provider submits its observation in a separate on-chain transaction before aggregation.

- Centralized Feeds: Some protocols opt for a single, trusted entity to provide data. This approach offers high speed and low cost, as only one transaction is needed. However, it reintroduces a single point of failure, making the protocol vulnerable to censorship or compromise of the centralized feed provider. This model is often used in protocols that prioritize capital efficiency and speed over absolute decentralization.

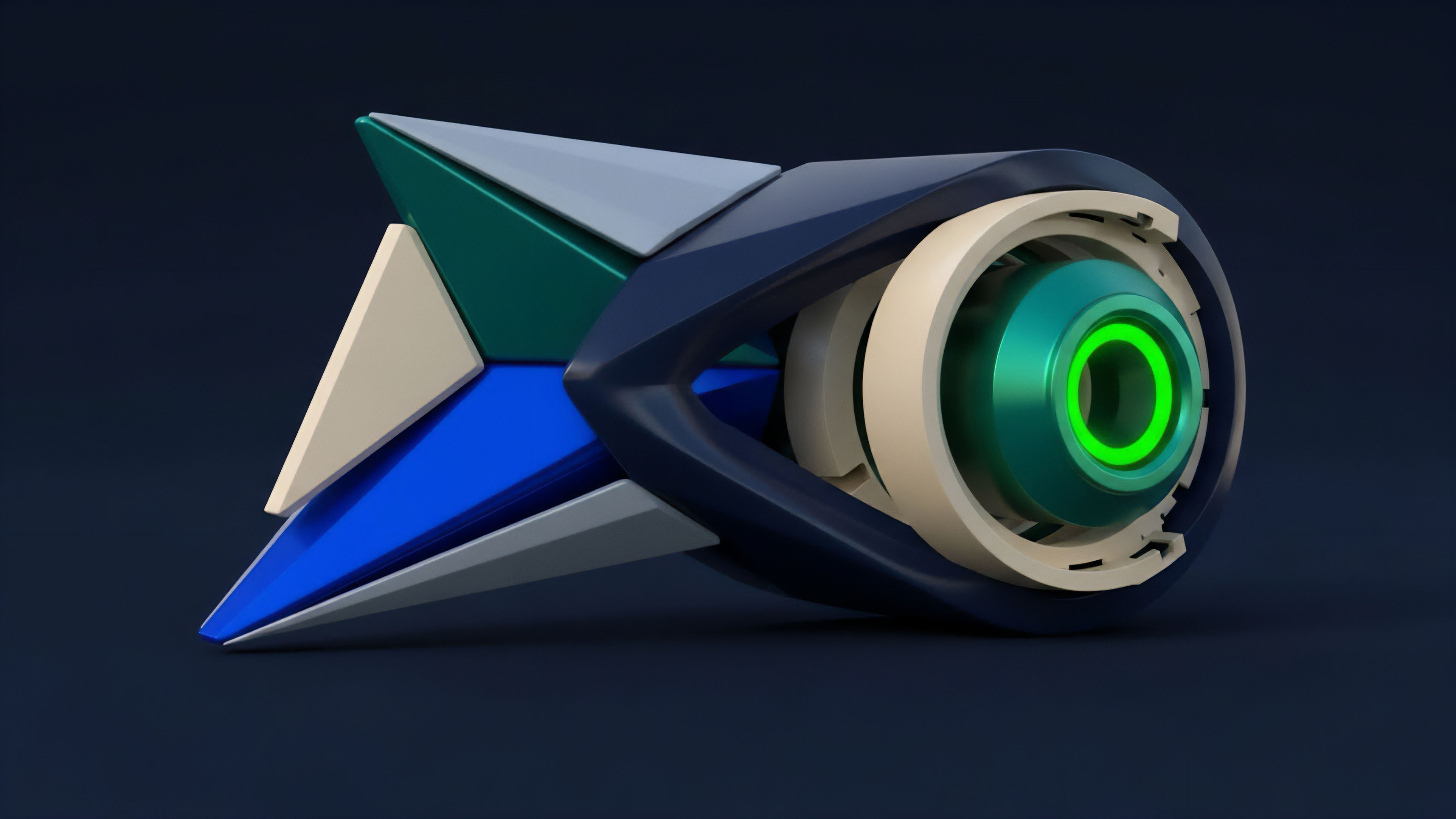

- Hybrid Models: These models combine elements of both approaches. A common design involves a high-frequency, centralized feed for fast updates, coupled with a slower, decentralized network that serves as a fallback or verification layer. If the centralized feed deviates significantly from the decentralized consensus, the protocol can pause or revert to the more secure but slower data source.
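The deviation check described for hybrid models can be sketched as follows. The 2% threshold, the function name `select_price`, and the returned labels are assumptions for illustration; a real protocol might pause trading instead of falling back.

```python
def select_price(fast_price: float, consensus_price: float,
                 max_deviation: float = 0.02) -> tuple[float, str]:
    """Use the fast feed unless it strays too far from the decentralized consensus."""
    if consensus_price <= 0:
        raise ValueError("consensus price must be positive")
    deviation = abs(fast_price - consensus_price) / consensus_price
    if deviation > max_deviation:
        return consensus_price, "fallback"
    return fast_price, "fast"

print(select_price(2004.0, 2000.0))  # within 2%: keep the fast feed
print(select_price(2100.0, 2000.0))  # 5% away: revert to consensus
```

The threshold encodes the trade-off directly: a tight band falls back often and loses the speed advantage, while a loose band tolerates more manipulation of the fast feed.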

The choice of data source is critical for different option types. For simple vanilla options, a reliable spot price feed at expiration is sufficient. However, for exotics like Asian options or volatility derivatives, the protocol requires a time-weighted average price (TWAP) or a calculation of implied volatility.

This necessitates more complex off-chain computation and data aggregation before the final value is submitted to the chain.
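A time-weighted average price can be computed from timestamped observations as in the sketch below. It assumes each observed price holds until the next observation; the function name and sample data are illustrative.

```python
def twap(observations: list[tuple[float, float]], end_time: float) -> float:
    """Time-weighted average of (timestamp, price) pairs sorted by timestamp."""
    if not observations:
        raise ValueError("no observations")
    duration = end_time - observations[0][0]
    if duration <= 0:
        raise ValueError("end_time must be after the first observation")
    weighted_sum = 0.0
    # Pair each observation with the start of the next interval (or end_time).
    next_times = [t for t, _ in observations[1:]] + [end_time]
    for (start, price), stop in zip(observations, next_times):
        weighted_sum += price * (stop - start)
    return weighted_sum / duration

# Price is 100 for 60s, 110 for 30s, then 105 for the final 30s.
obs = [(0.0, 100.0), (60.0, 110.0), (90.0, 105.0)]
print(twap(obs, end_time=120.0))  # 103.75
```

Because each price is weighted by how long it persisted, a brief spike near expiration moves the settlement value far less than it would move a single spot reading.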

| Integration Method | Security Model | Data Latency | Cost Efficiency |
|---|---|---|---|
| Decentralized Oracle Network (DON) | Economic incentives and consensus of multiple nodes | High latency (seconds to minutes) due to aggregation | High cost per update due to multiple submissions |
| Centralized Feed | Trust in a single entity; single point of failure | Low latency (near real-time) | Low cost per update |
| Hybrid Verification Layer | Layered security; fast primary feed with slow fallback | Variable; fast for normal operation, slow for verification | Moderate cost; combines high-speed updates with verification costs |

Evolution

The evolution of off-chain data integration for crypto options mirrors the increasing sophistication of the derivatives market itself. The initial phase focused on securing simple spot prices for collateral and liquidation. This was primarily a “price feed” problem.

The second phase, driven by the growth of options protocols, required more complex data. It became necessary to accurately calculate implied volatility, which cannot be observed directly and must instead be inferred from option prices. This led to the development of dedicated volatility oracles, which process a stream of market data and calculate a measure comparable to the VIX (Volatility Index) for crypto assets.
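Because implied volatility is not observable, it has to be backed out of an option price numerically. The sketch below inverts the Black-Scholes call formula by bisection for a single option. This is a simplification: a VIX-style index aggregates many strikes, and the bounds, tolerance, and function names here are assumptions.

```python
from math import erf, exp, log, sqrt

def bs_call(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Black-Scholes European call price (no dividends)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt(t))
    d2 = d1 - vol * sqrt(t)
    cdf = lambda x: 0.5 * (1.0 + erf(x / sqrt(2.0)))
    return spot * cdf(d1) - strike * exp(-rate * t) * cdf(d2)

def implied_vol(market_price: float, spot: float, strike: float, rate: float,
                t: float, lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Bisection on volatility; works because the call price rises with vol."""
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, rate, mid, t) > market_price:
            hi = mid
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

# Round trip: price an option at 60% vol, then recover the vol from the price.
quoted = bs_call(spot=100.0, strike=100.0, rate=0.05, vol=0.6, t=0.25)
recovered = implied_vol(quoted, spot=100.0, strike=100.0, rate=0.05, t=0.25)
print(round(recovered, 6))
```

The sketch assumes the quoted price lies within the range the model can produce for volatilities between `lo` and `hi`; a production oracle would need to detect and reject prices outside that range.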

The current phase is focused on moving beyond simple data feeds to integrating complex financial models off-chain. Instead of simply providing data inputs, future oracles will perform calculations, such as determining the mark-to-market value of an option portfolio or calculating margin requirements based on risk models like VaR (Value at Risk). This shift allows protocols to offer more complex, capital-efficient products.
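As one example of the kind of calculation that could move off-chain, here is a minimal historical-simulation VaR sketch. The profit-and-loss samples are made up, the confidence level is passed as an integer percentage to keep the rank calculation exact, and using VaR directly as the margin requirement is an assumption for illustration.

```python
def historical_var(pnl_samples: list[float], confidence_pct: int = 95) -> float:
    """Loss level not exceeded in confidence_pct percent of historical samples."""
    if not pnl_samples:
        raise ValueError("no samples")
    if not 0 < confidence_pct < 100:
        raise ValueError("confidence_pct must be between 1 and 99")
    losses = sorted(-pnl for pnl in pnl_samples)  # positive numbers are losses
    n = len(losses)
    rank = (confidence_pct * n + 99) // 100       # ceil(confidence_pct * n / 100)
    return max(losses[rank - 1], 0.0)

# Twenty hypothetical daily P&L observations for an option portfolio.
pnl = [-10.0, -8.0, -5.0, -3.0, -2.0, -1.0, 0.0, 1.0, 1.0, 2.0,
       2.0, 3.0, 3.0, 4.0, 4.0, 5.0, 5.0, 6.0, 7.0, 8.0]
margin_requirement = historical_var(pnl, confidence_pct=95)
print(margin_requirement)  # 8.0
```

Running this over a long price history for every account would be costly on-chain, which is the motivation for computing it off-chain and committing only the result.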

The progression from simple spot price feeds to complex, off-chain volatility calculations represents the shift from basic financial contracts to sophisticated risk management tools.

This evolution also involves a change in data provenance. Early oracles simply provided a single aggregated price. Modern approaches, however, are focused on verifiable data sources.

The data itself is sourced from multiple high-quality exchanges, weighted by volume and liquidity, and then cryptographically signed to ensure its integrity. The next generation of integration systems will likely move towards a more programmatic approach where the data itself is sourced directly from a decentralized exchange (DEX) liquidity pool, making the data on-chain by default, thereby eliminating the need for a separate off-chain feed.
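Volume weighting across venues can be sketched as below. The quotes are invented and the function name is hypothetical. Real feeds typically also filter stale or outlying venues before weighting, which this example omits.

```python
def volume_weighted_price(quotes: list[tuple[float, float]]) -> float:
    """Combine (price, volume) pairs from several exchanges into one price."""
    total_volume = sum(volume for _, volume in quotes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in quotes) / total_volume

# Three hypothetical exchanges; the most liquid venue dominates the result.
quotes = [(2000.0, 300.0), (2002.0, 100.0), (1998.0, 100.0)]
print(volume_weighted_price(quotes))  # 2000.0
```

Weighting by volume makes it more expensive to move the aggregate by trading on a thin venue, since a low-volume exchange contributes little to the final price.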

Horizon

Looking ahead, the future of off-chain data integration for options points toward two major developments: “oracle-less” protocols and advanced zero-knowledge data verification.

The oracle-less approach attempts to circumvent the oracle problem entirely by creating derivatives that are self-contained on-chain. This often involves synthetic assets or automated market makers (AMMs) where pricing is derived from the on-chain activity of the pool itself, rather than external feeds. For example, an AMM for options can derive implied volatility from the ratio of calls to puts within its liquidity pool.

This eliminates the need for external data feeds, removing the associated security risks and latency issues. However, these models often suffer from liquidity fragmentation and high slippage, making them less efficient for large traders.

Zero-Knowledge Data Verification

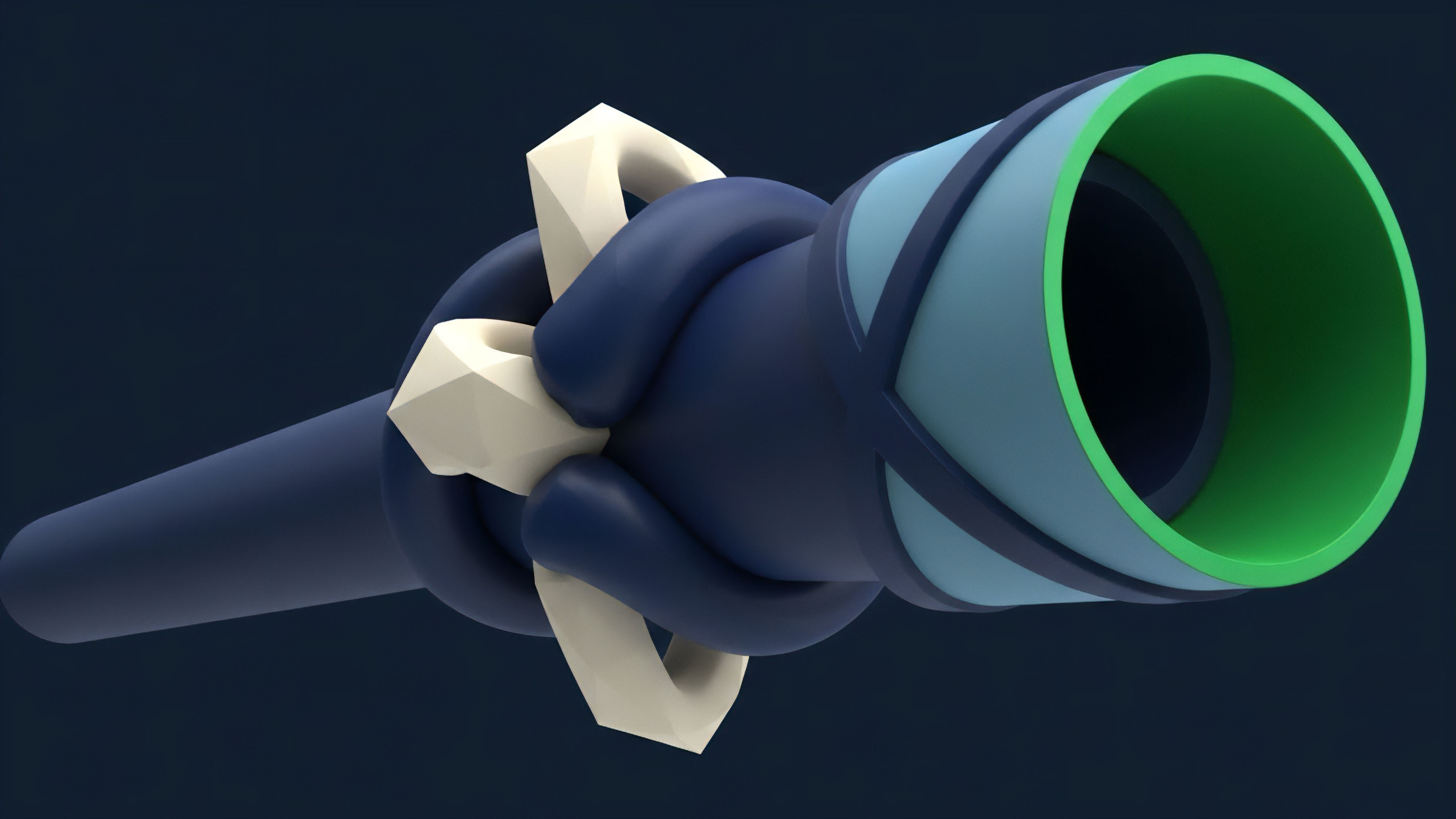

A more advanced approach involves using zero-knowledge proofs (ZKPs) to verify off-chain data. Instead of simply trusting a data provider, a ZKP system allows a provider to prove that they correctly processed data from a specific source without revealing the source data itself. This allows for complex calculations to occur off-chain, such as calculating the implied volatility surface across multiple exchanges, and then generating a proof that verifies the calculation’s accuracy.

The on-chain smart contract only needs to verify the proof, not re-run the calculation or trust the provider’s inputs. This significantly reduces on-chain costs while ensuring data integrity.

The Regulatory and Systemic View

The regulatory landscape will also heavily influence the future of data integration. As derivatives protocols become more widely adopted, regulators will demand transparency and auditability. Off-chain data integration must evolve to provide a clear audit trail of data provenance, demonstrating that pricing feeds are not manipulated and adhere to market integrity standards.

The systemic implications of this integration are vast. As more financial products rely on shared oracle infrastructure, the oracle itself becomes a critical point of systemic risk. A compromise of a major oracle network could trigger a cascade of liquidations across multiple derivatives protocols simultaneously.

The future architecture must therefore prioritize a robust and redundant system to prevent this kind of interconnected failure. The focus shifts from optimizing individual protocol efficiency to ensuring the resilience of the shared data infrastructure.
