Essence

Real-Time Market Data Verification addresses the fundamental challenge of ensuring data integrity for decentralized financial instruments. A derivative contract, particularly an option, relies on accurate and timely pricing information for its underlying asset. The contract’s value, collateral requirements, and liquidation thresholds are calculated based on this external data feed.

Without a robust verification mechanism, the smart contract operates in a vacuum, susceptible to manipulation or reliance on stale information. The core function of verification is to create a secure, cryptographically verifiable bridge between off-chain market prices and on-chain contract logic. This process ensures that the automated financial system reacts correctly to changes in the real-world value of assets.

The complexity increases with derivatives because they introduce non-linear risk profiles. An options contract’s value changes rapidly with minor shifts in the underlying asset price. If the data feed is delayed by even a few seconds during high volatility, the contract’s margin calculations become incorrect.

This creates opportunities for arbitrageurs to exploit the system by executing trades at prices that no longer reflect market reality. The verification system must account for the high-frequency nature of derivatives trading, providing a data stream that is both fast and resistant to manipulation.

Real-Time Market Data Verification is the process of ensuring a smart contract’s pricing calculations are based on accurate, timely, and manipulation-resistant external data.

The challenge for decentralized finance protocols is that they operate in a permissionless environment. Unlike traditional exchanges, which rely on internal data feeds and regulatory oversight to maintain integrity, DeFi protocols must establish trust in external data sources without a central authority. The verification process, therefore, must be designed to achieve consensus among multiple data providers, ensuring that a single point of failure cannot compromise the entire system.

This requirement for decentralization fundamentally changes the architecture of data verification compared to legacy financial systems.

Origin

The requirement for external data verification in financial markets predates crypto by decades. Traditional financial institutions established data integrity through tightly controlled, centralized mechanisms.

Exchanges like CME or ICE act as both the venue for trading and the source of truth for pricing. Data verification in this context relies on regulatory oversight and internal controls to prevent data manipulation. When derivatives began trading on these exchanges, the underlying data feeds were assumed to be accurate because the exchange itself guaranteed them.

In crypto, the “oracle problem” arose with the advent of smart contracts. A smart contract on a blockchain cannot natively access information outside its own network. To function as a financial instrument, it requires data about external events, such as asset prices, interest rates, or commodity prices.

Early solutions for decentralized options protocols often relied on simple, single-source data feeds, which proved highly vulnerable to manipulation. The infamous flash loan attacks demonstrated that a malicious actor could temporarily manipulate the price of an asset on a single decentralized exchange (DEX) and use that manipulated price to liquidate or exploit a derivative contract on another protocol. The origin of real-time verification in DeFi is a direct response to these vulnerabilities.

The industry recognized that a decentralized financial system required a decentralized data verification layer. This led to the development of decentralized oracle networks, which aggregate data from numerous sources and require cryptographic consensus among nodes before delivering a price feed to a smart contract. The evolution of this field was driven by the necessity to prevent systemic risk and ensure the viability of complex financial instruments like options and perpetual futures in a permissionless environment.

Theory

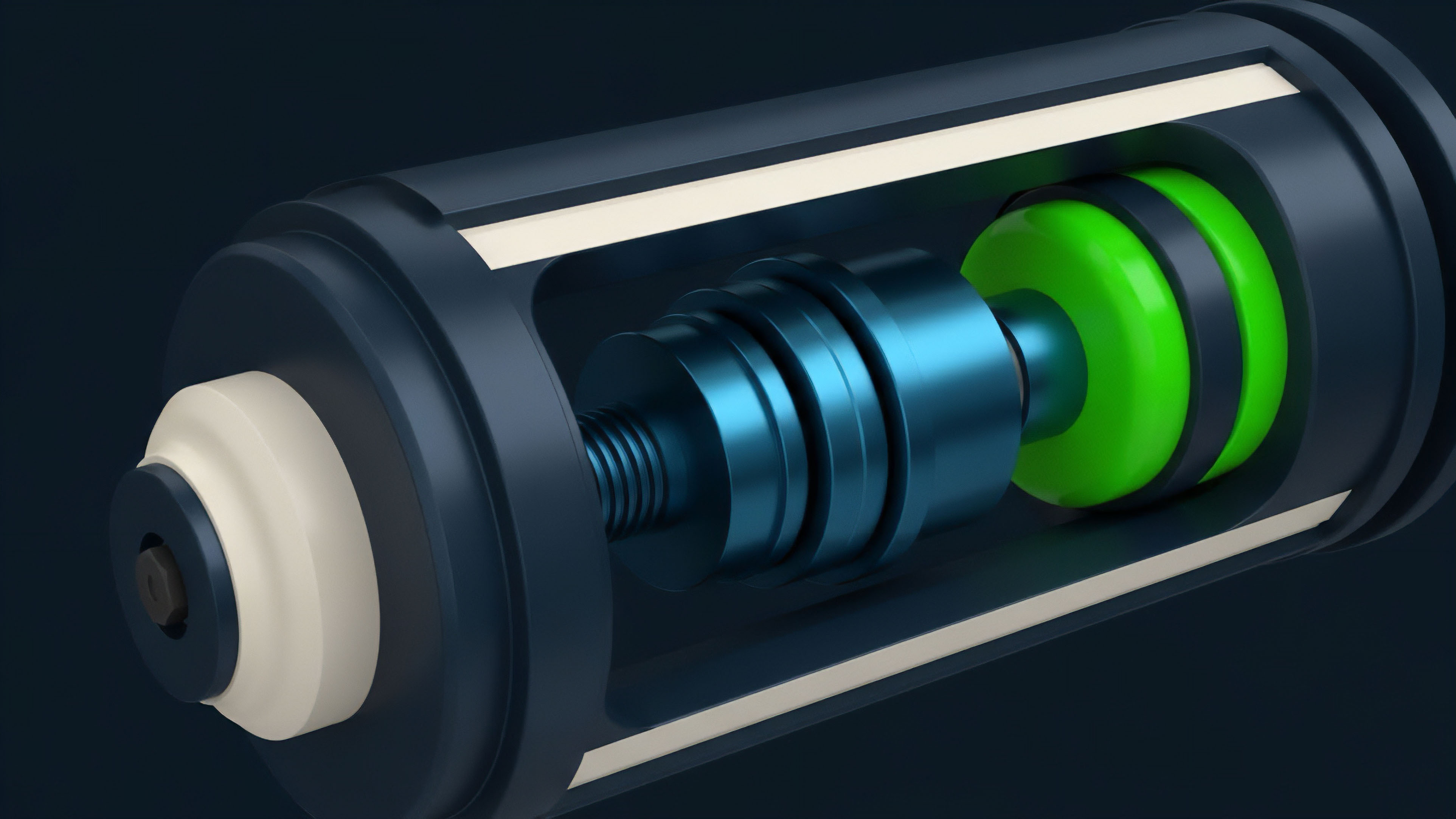

The theoretical foundation of market data verification in crypto derivatives rests on a blend of quantitative finance and distributed systems design. From a quantitative perspective, options pricing models, such as Black-Scholes, require a clean, continuous-time price for the underlying asset (S). Any distortion in S directly impacts the calculation of the option’s theoretical value and its risk sensitivities, known as the Greeks.

For example, a delayed data feed during a sharp price movement will cause the calculated delta (the option’s price sensitivity to the underlying asset) to be incorrect, leading to mis-hedging by market makers. From a systems design perspective, the verification mechanism must solve the Byzantine Generals’ Problem for data feeds. The goal is to ensure that a consensus on a single price point can be reached among potentially untrustworthy data providers.
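The effect of a stale price on delta can be illustrated with a minimal sketch. It assumes a European call on a non-dividend-paying asset under Black-Scholes. The strike, volatility, expiry, and both spot prices are hypothetical values chosen only for illustration, and `norm_cdf` and `call_delta` are helper names introduced here.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF, computed from the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_delta(spot: float, strike: float, rate: float, vol: float, t_years: float) -> float:
    """Black-Scholes delta of a European call (no dividends): N(d1)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (
        vol * math.sqrt(t_years)
    )
    return norm_cdf(d1)


# Hypothetical inputs: a one-week at-the-money call and a 5% drop in the
# underlying that the delayed feed has not yet reported.
strike, rate, vol, t_years = 2000.0, 0.0, 0.8, 7 / 365
stale_spot = 2000.0  # price the contract still sees
live_spot = 1900.0   # price the market has moved to

stale_delta = call_delta(stale_spot, strike, rate, vol, t_years)
live_delta = call_delta(live_spot, strike, rate, vol, t_years)
hedge_error = stale_delta - live_delta  # excess hedge per option, in units of underlying

print(f"delta from stale feed: {stale_delta:.3f}")
print(f"delta from live price: {live_delta:.3f}")
print(f"hedge error per option: {hedge_error:.3f}")
```

With these assumed inputs, a hedger relying on the stale price would hold noticeably more of the underlying than the live price justifies. The size of the gap depends entirely on the chosen parameters.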

The primary theoretical approaches to achieving this consensus involve:

- Data Aggregation and Medianization: Collecting price data from multiple independent sources (centralized exchanges, DEXs) and calculating a median value. The median price is used to mitigate the impact of outliers or manipulated prices from a single source.

- Cryptographic Proofs and Signatures: Requiring data providers to cryptographically sign their data submissions. This allows smart contracts to verify the source and integrity of the data, ensuring that the data has not been tampered with since its creation.

- Staking and Economic Incentives: Implementing a staking mechanism where data providers must stake collateral. If a provider submits incorrect data, their stake is slashed. This economic incentive aligns provider behavior with data accuracy.
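The aggregation and staking ideas above can be sketched together. This is an illustrative toy, not any particular oracle network's algorithm: the `Report` type, the `aggregate` function, the 2% tolerance, and the 10% slash fraction are all assumptions made for the example, and signature checking is omitted.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Report:
    provider: str  # hypothetical provider identifier
    price: float   # price submitted by that provider


def aggregate(
    reports: List[Report],
    stakes: Dict[str, float],
    max_deviation: float = 0.02,   # assumed tolerance around the median
    slash_fraction: float = 0.10,  # assumed penalty for out-of-tolerance reports
) -> Tuple[float, Dict[str, float]]:
    """Return the median price and a copy of the stakes after slashing.

    A provider whose report deviates from the median by more than
    max_deviation loses slash_fraction of its stake.
    """
    if not reports:
        raise ValueError("at least one report is required")
    agreed = median(r.price for r in reports)
    updated = dict(stakes)
    for r in reports:
        if abs(r.price - agreed) / agreed > max_deviation:
            updated[r.provider] = updated[r.provider] * (1.0 - slash_fraction)
    return agreed, updated


# Four honest reports and one manipulated outlier.
reports = [
    Report("a", 100.0),
    Report("b", 100.2),
    Report("c", 99.9),
    Report("d", 100.1),
    Report("e", 150.0),
]
stakes = {name: 1000.0 for name in "abcde"}

price, new_stakes = aggregate(reports, stakes)
print(price)       # the median ignores the outlier
print(new_stakes)  # only provider "e" is slashed
```

A mean over the same reports would be pulled well above 100 by the single outlier, which is why the median is the usual choice for this step.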

A critical theoretical consideration is the trade-off between data latency and data security. A verification system designed for high security needs more time to reach consensus among multiple nodes, which increases latency. A low-latency system, vital for high-frequency options trading, gives up some of that security by relying on fewer data points or faster consensus mechanisms.

This trade-off dictates the specific design choices for different types of derivative protocols.

| Verification Model Component | Risk Mitigation Objective | Impact on Options Protocol |
|---|---|---|
| Data Source Diversity | Mitigates single-source manipulation risk (flash loan attacks). | Ensures collateralization ratios are based on a fair market value. |
| Consensus Mechanism (e.g. Medianization) | Prevents outliers from distorting the price feed. | Maintains accurate calculation of options value and Greeks. |
| Latency Thresholds | Prevents front-running and exploitation during volatility. | Enables high-frequency options trading and tight bid-ask spreads. |

The design of these systems must also account for “volatility skew,” the phenomenon where implied volatility varies across different strike prices. If a data verification failure occurs during a high volatility event, the system’s perception of risk can be badly distorted, leading to incorrect margin calls or liquidations. The verification mechanism must provide not just a single price point, but a stable, verifiable input that reflects the underlying market’s true risk state.

Approach

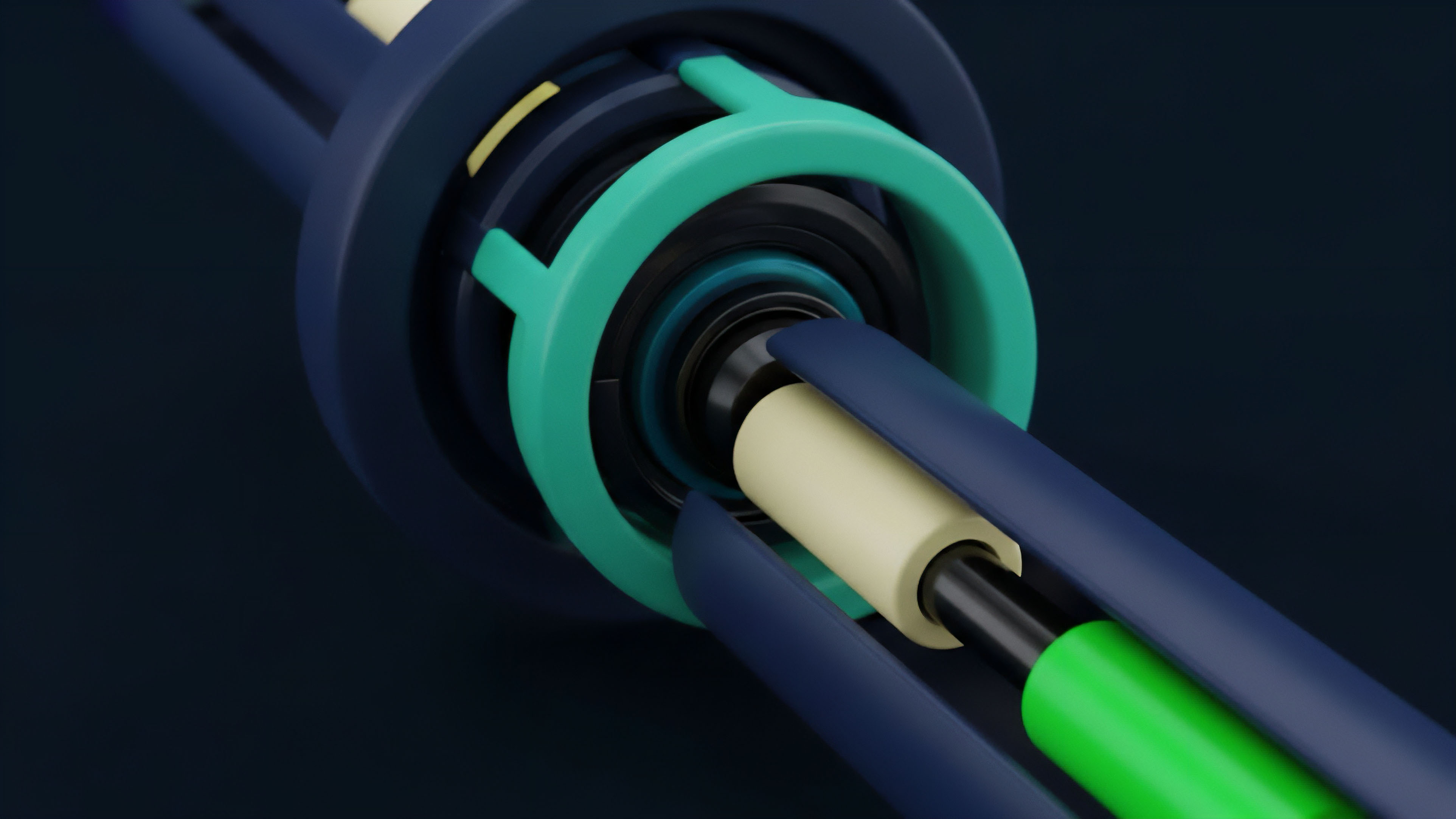

Current approaches to real-time market data verification are dominated by decentralized oracle networks, which provide a robust, external data layer for smart contracts. In the most common implementation, a decentralized network of nodes collects price data from various centralized and decentralized exchanges, aggregates it, applies a median calculation to filter out outliers, and submits the resulting price to a smart contract on the blockchain.

For an options protocol, the core task is selecting an oracle network that meets its specific criteria for latency and security. For perpetual futures and short-term options, low latency is paramount: the verification system must deliver data with minimal delay, ideally within milliseconds, to limit front-running by high-frequency traders. For longer-term options or collateral-based lending, data integrity and security take precedence over speed.

The implementation details vary significantly between different oracle designs:

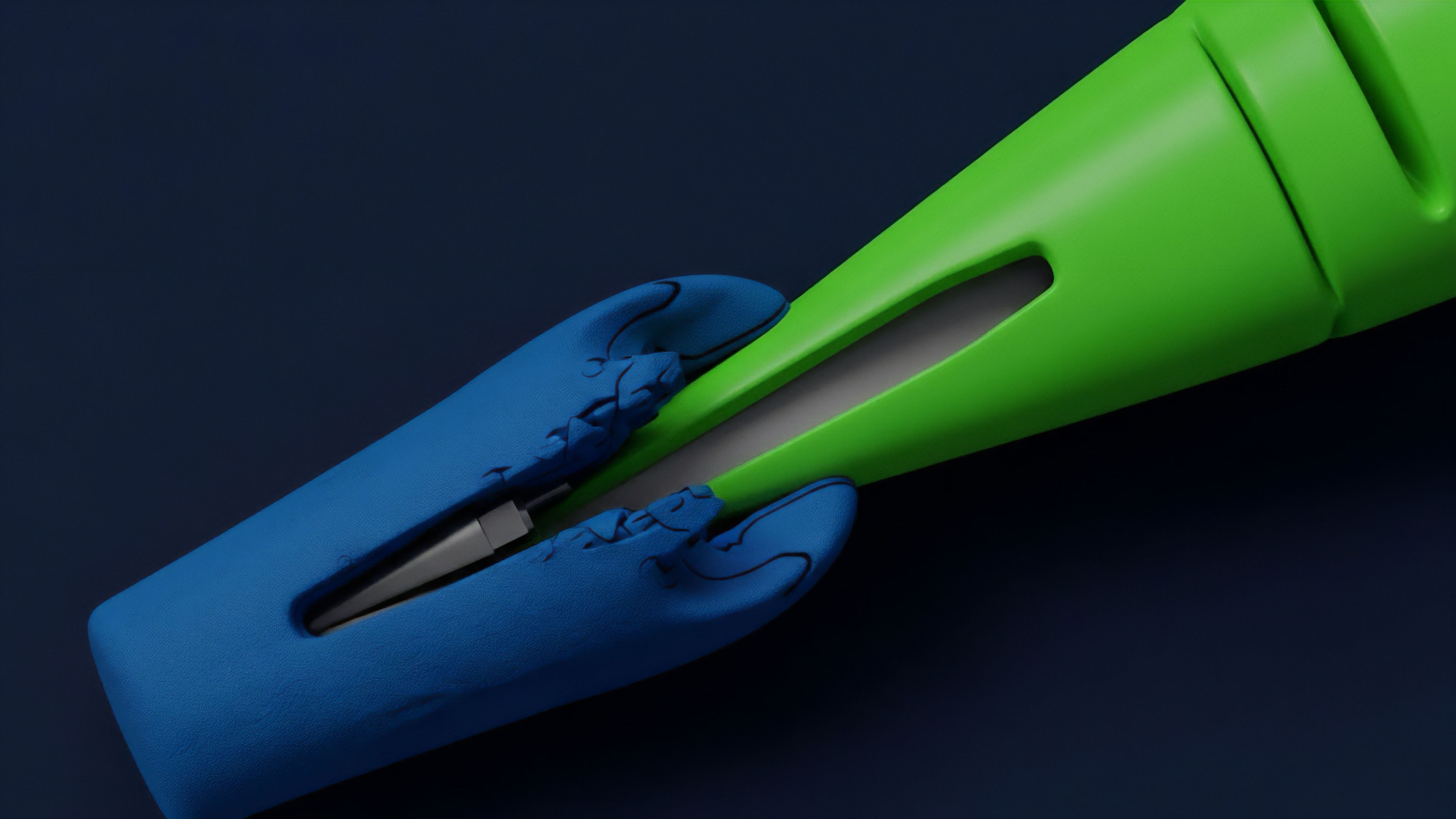

- Push Oracles: Data is pushed onto the blockchain at regular intervals or when a price deviation exceeds a specific threshold. This approach ensures data freshness but can be expensive due to transaction fees.

- Pull Oracles: Smart contracts request data from the oracle network when needed. This approach reduces on-chain costs, but each request adds a retrieval step before the price can be used, since the data must be fetched from the network.
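The two designs above can be contrasted with a small sketch. The push rule follows the description in the list (a regular interval or a deviation threshold). The freshness check on the pull side is an added assumption about how a consumer might guard against old reports. `PushPolicy`, `should_push`, `accept_pulled_price`, and all default values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PushPolicy:
    deviation_threshold: float = 0.005  # assumed: push if the price moves 0.5% or more
    heartbeat_seconds: float = 3600.0   # assumed: push at least once per hour


def should_push(policy: PushPolicy, last_price: float, last_push_ts: float,
                new_price: float, now_ts: float) -> bool:
    """Push rule: update on a heartbeat or on a large enough price move."""
    heartbeat_due = now_ts - last_push_ts >= policy.heartbeat_seconds
    moved = abs(new_price - last_price) / last_price >= policy.deviation_threshold
    return heartbeat_due or moved


def accept_pulled_price(report_ts: float, now_ts: float,
                        max_age_seconds: float = 5.0) -> bool:
    """Pull rule (assumed): the consumer rejects a fetched report that is too old."""
    return 0.0 <= now_ts - report_ts <= max_age_seconds


policy = PushPolicy()
print(should_push(policy, 100.0, 0.0, 100.2, 60.0))    # small move, heartbeat not due
print(should_push(policy, 100.0, 0.0, 101.0, 60.0))    # 1% move exceeds the threshold
print(should_push(policy, 100.0, 0.0, 100.0, 3600.0))  # heartbeat interval elapsed
print(accept_pulled_price(report_ts=98.0, now_ts=100.0))
```

Tightening `deviation_threshold` or `heartbeat_seconds` keeps a push feed fresher at the price of more on-chain transactions, which is the cost trade-off the list describes.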

A critical aspect of the approach is the verification of the verification mechanism itself. Protocols often implement a “safety mechanism” or circuit breaker that pauses trading or liquidations if the data feed from the oracle network fails or exhibits suspicious behavior. This prevents cascading failures during extreme market conditions.
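A circuit breaker of this kind might look like the following sketch. The three trip conditions (missing price, stale price, implausible jump) and their thresholds are assumptions chosen for illustration, and the `CircuitBreaker` class is hypothetical rather than taken from any specific protocol.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CircuitBreaker:
    max_age_seconds: float = 60.0  # assumed staleness limit
    max_jump: float = 0.20         # assumed largest credible move between updates
    last_good_price: Optional[float] = None
    paused: bool = False

    def check(self, price: Optional[float], price_ts: float, now_ts: float) -> bool:
        """Return True if trading and liquidations may proceed on this update."""
        if price is None or price <= 0:
            self.paused = True   # feed failed or returned nonsense
        elif now_ts - price_ts > self.max_age_seconds:
            self.paused = True   # feed is stale
        elif (self.last_good_price is not None
              and abs(price - self.last_good_price) / self.last_good_price > self.max_jump):
            self.paused = True   # suspicious jump relative to the last accepted price
        else:
            self.last_good_price = price
            self.paused = False
        return not self.paused


breaker = CircuitBreaker()
print(breaker.check(100.0, price_ts=0.0, now_ts=10.0))     # fresh, first price
print(breaker.check(101.0, price_ts=50.0, now_ts=200.0))   # too old
print(breaker.check(200.0, price_ts=300.0, now_ts=301.0))  # jump from 100 to 200
print(breaker.check(102.0, price_ts=400.0, now_ts=401.0))  # back within limits
```

This sketch resumes automatically on the next acceptable update. A production protocol might instead require a cooldown or a governance action before unpausing.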

The choice of oracle network and verification methodology is often a primary design decision for any new options protocol, as it dictates the protocol’s overall risk profile and capital efficiency.

Effective data verification requires a careful balance between data freshness (low latency) and data security (consensus among multiple sources) to maintain system integrity.

Evolution

The evolution of real-time market data verification in crypto derivatives has progressed from rudimentary, single-point feeds to sophisticated, decentralized consensus mechanisms. Early derivative protocols often relied on simple price feeds from a single exchange. This created a single point of failure: when that source was a DEX, a flash loan attack could distort its price and, through it, a separate protocol that read the feed.

The next stage involved the creation of decentralized oracle networks. These networks aggregated data from multiple sources, significantly reducing the risk of single-source manipulation. Their evolution focused on improving two key areas: latency and data source diversity. Early networks struggled with high latency due to the slow block times of underlying blockchains, which made them unsuitable for high-frequency options trading.

The most recent development in verification involves low-latency data feeds designed specifically for derivatives. These systems provide near-instantaneous price updates, often by utilizing specialized off-chain processing layers that only commit a verified price to the blockchain when a smart contract requires it. This “pull” model reduces on-chain transaction costs and update latency, making high-frequency options trading viable in a decentralized environment.

This progression reflects a deeper shift in market microstructure. The verification process has evolved from a simple data retrieval task to a critical component of market design. The verification system now defines the boundaries of capital efficiency and risk for decentralized derivatives. The move toward specialized, low-latency data feeds indicates that data verification is no longer a generic utility but a specialized infrastructure layer tailored to the unique requirements of options and futures markets.

Horizon

Looking ahead, the future of real-time market data verification will be defined by three converging forces: cross-chain interoperability, regulatory pressure, and the integration of advanced data models.

As derivatives markets fragment across different layer-1 and layer-2 blockchains, the verification challenge expands. A protocol on one chain may need verified data from an asset trading on another chain. This requires the development of cross-chain oracle networks capable of securely transmitting data across disparate ecosystems.

The second force is regulatory oversight. As decentralized finance grows, regulators will likely impose stricter requirements on data integrity and verification processes. This may lead to the standardization of verification methods, requiring protocols to use specific, audited data sources and consensus mechanisms. This shift will force a trade-off between decentralization and compliance, as protocols seek to satisfy regulatory requirements for market integrity.

The third force involves integrating advanced data models directly into the verification process. Current systems primarily verify the spot price of an asset. Future systems will need to verify more complex inputs, such as implied volatility surfaces or interest rate curves, to accurately price exotic options and structured products. This requires a new generation of oracles capable of aggregating and verifying complex financial models rather than just simple price points. The goal is to create a verification layer that supports the full spectrum of quantitative finance, allowing for the creation of sophisticated, risk-managed derivatives in a decentralized setting.

The long-term challenge is to build a verification system that is both sufficiently decentralized to resist manipulation and sufficiently fast to support high-frequency trading. The architecture of these future systems will determine whether decentralized derivatives can truly compete with traditional finance in terms of speed, capital efficiency, and systemic stability.

Glossary

Real-Time Risk Reporting

Real-Time Risk Assessment

Risk Parameter Verification

Consensus Mechanism Design

Operational Verification

Cryptographic Verification Cost

Post-Trade Verification

Real Time Options Quoting

Real-Time Market State Change