Essence

Proprietary data feeds are specialized, high-fidelity data streams that provide a critical advantage in the pricing and risk management of crypto derivatives. These feeds differ from standard public oracles by offering granular, real-time insights into market microstructure that are not accessible to general market participants. The value proposition of a proprietary data feed is built on information asymmetry: a select group of market makers and institutional traders gains access to data that enables better execution and more accurate calculation of risk parameters.

The core function of these feeds is to address the limitations of public price oracles in a derivatives context. Public oracles provide the spot price needed for collateral valuation and liquidation triggers, but a spot price alone is insufficient for pricing options. Options pricing requires a detailed understanding of the volatility surface: a three-dimensional plot of implied volatility across strikes and expirations.

Proprietary feeds aggregate real-time options quotes, bid-ask spreads, and order book depth, together with historical volatility metrics, to estimate this surface with high precision. This enables market makers to hedge risk more effectively and quote tighter spreads.

The true value of proprietary data feeds lies in their ability to translate raw order book dynamics into a real-time volatility surface, moving beyond simple spot price aggregation.

The data typically consists of aggregated, normalized, and cleaned records from multiple centralized and decentralized exchanges. The cleaning and normalization process is itself proprietary: it filters out noise, flags spoofing attempts, and corrects for market fragmentation. The speed and integrity of the stream are paramount, because in a high-frequency trading environment even a millisecond of processing delay can leave a pricing model working from stale data.

Origin

The concept of proprietary data feeds originates in traditional finance, where high-frequency trading firms and large banks built their competitive advantage on direct data feeds from exchanges. In crypto, this necessity emerged with the growth of decentralized derivatives protocols. Early decentralized finance (DeFi) protocols relied on simple price oracles, often sourced from a single, aggregated index.

This model worked adequately for spot lending and basic swaps, but it proved fundamentally flawed for options protocols. The first generation of decentralized options protocols struggled with accurate pricing, often relying on simplified models that failed during periods of high volatility. The underlying problem was a lack of high-quality, real-time volatility data.

The transition to proprietary feeds was driven by the recognition that a derivatives market requires a data infrastructure that can account for the dynamic nature of implied volatility. This evolution was accelerated by market events where oracles lagged or failed, leading to incorrect liquidations and significant losses. As decentralized options markets matured, market makers required data parity with their centralized counterparts to effectively manage risk.

This demand led to the development of specialized data providers and protocols that focus on delivering high-frequency, granular data streams specifically tailored for derivatives pricing models. The architecture evolved from a simple “price feed” to a complex “data oracle network” capable of delivering multiple data points (e.g. implied volatility, open interest, order book depth) simultaneously.

Theory

The theoretical foundation of proprietary data feeds rests on the principle of information advantage within market microstructure.

In an options market, pricing models such as Black-Scholes require a volatility input, which in practice is the implied volatility backed out from observed option prices. The Black-Scholes model assumes volatility is constant across strikes and expirations, but real-world markets exhibit volatility skew (implied volatility varying by strike) and term structure (implied volatility varying by expiration). A proprietary feed’s primary function is to calculate and deliver these real-time volatility adjustments accurately.
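To make the volatility input concrete, here is a minimal Python sketch of the Black-Scholes price of a European call (no dividends) and an implied-volatility solver that inverts it by bisection. The function names and the default search bounds `lo` and `hi` are illustrative assumptions. Production systems usually use faster root-finders, but bisection is safe here because the call price increases monotonically with volatility.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF, computed from the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call with no dividends.

    t is time to expiry in years; rate and vol are annualized.
    """
    if t <= 0.0 or vol <= 0.0:
        # Zero-time / zero-vol limit: discounted intrinsic value.
        return max(spot - strike * math.exp(-rate * max(t, 0.0)), 0.0)
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price: float, spot: float, strike: float, t: float, rate: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Find vol such that bs_call_price(..., vol) == price, by bisection.

    Bisection is valid because the call price is strictly increasing in vol.
    """
    if not bs_call_price(spot, strike, t, rate, lo) <= price <= bs_call_price(spot, strike, t, rate, hi):
        raise ValueError("price is outside the range spanned by [lo, hi]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, t, rate, mid) < price:
            lo = mid  # model price too low, so more volatility is needed
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Round trip: price an at-the-money call at 80% vol, then recover that vol from the price.
quote = bs_call_price(spot=100.0, strike=100.0, t=0.5, rate=0.0, vol=0.8)
print(round(implied_vol(quote, 100.0, 100.0, 0.5, 0.0), 6))
```

A feed would run this inversion on each observed option quote. The resulting implied volatilities, not the raw prices, are what populate the surface.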

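The process involves quantitative analysis of order book data. The feed aggregates bids and asks across venues to create a composite view of market depth. Implied volatility for each strike and expiration is then obtained by inverting a pricing model against observed option prices (for example, mid-quotes). Order book depth and trade volume are typically used to weight these quotes or filter out unreliable ones. Repeating this calculation across all listed strikes and expirations yields the implied volatility surface.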
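A composite view of depth can be sketched as a merge of per-venue books for the same instrument. This minimal Python example assumes each venue’s book has already been normalized to the same instrument, quote currency, and tick grid, so that equal prices compare exactly. `composite_book` and `depth_within` are hypothetical helpers, not any exchange’s API.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Level = Tuple[float, float]  # (price, size)


def composite_book(books: Dict[str, Dict[str, List[Level]]]) -> Tuple[List[Level], List[Level]]:
    """Merge per-venue books into one composite book, summing size at each price.

    Returns (bids, asks), each sorted best level first.
    """
    bids: Dict[float, float] = defaultdict(float)
    asks: Dict[float, float] = defaultdict(float)
    for book in books.values():
        for price, size in book["bids"]:
            bids[price] += size
        for price, size in book["asks"]:
            asks[price] += size
    return (sorted(bids.items(), key=lambda lv: -lv[0]),
            sorted(asks.items(), key=lambda lv: lv[0]))


def depth_within(levels: List[Level], mid: float, band: float) -> float:
    """Total size resting within +/- band (as a fraction of mid) around mid."""
    return sum(size for price, size in levels if abs(price - mid) <= band * mid)


# Illustrative books for one instrument on two hypothetical venues.
books = {
    "venue_a": {"bids": [(99.8, 0.5), (99.5, 2.0), (99.0, 5.0)], "asks": [(100.5, 1.0), (101.0, 4.0)]},
    "venue_b": {"bids": [(99.5, 1.0)], "asks": [(100.2, 2.0)]},
}
bids, asks = composite_book(books)
best_bid, best_ask = bids[0][0], asks[0][0]
mid = 0.5 * (best_bid + best_ask)
print(round(best_ask - best_bid, 6), depth_within(bids, mid, 0.006))
```

In the example, the best bid (99.8) comes from `venue_a` and the best ask (100.2) comes from `venue_b`. The composite spread of 0.4 is therefore tighter than either venue’s own spread of 0.7.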

This surface represents the market’s collective expectation of future volatility for specific strikes and expirations.
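Once implied volatilities have been solved on a grid of expirations and strikes, the surface can be queried between grid points. The sketch below uses plain bilinear interpolation with flat extrapolation beyond the grid edges. This is an illustrative assumption: production surfaces are often interpolated in total variance or fitted with a parametric form such as SVI, to avoid introducing arbitrage between grid points. The grid values are made up.

```python
from bisect import bisect_left
from typing import List, Tuple


def _bracket(grid: List[float], x: float) -> Tuple[int, int]:
    """Indices of the grid points bracketing x; both equal the end index outside the grid."""
    if x <= grid[0]:
        return 0, 0
    if x >= grid[-1]:
        return len(grid) - 1, len(grid) - 1
    j = bisect_left(grid, x)
    return j - 1, j


def _weight(grid: List[float], i: int, j: int, x: float) -> float:
    """Linear interpolation weight of grid[j] relative to grid[i]."""
    return 0.0 if i == j else (x - grid[i]) / (grid[j] - grid[i])


def surface_vol(expiries: List[float], strikes: List[float],
                vols: List[List[float]], t: float, k: float) -> float:
    """Bilinear interpolation of implied vol at expiry t and strike k (flat beyond the grid).

    vols[e][s] is the implied vol for expiries[e] and strikes[s]; both grids ascending.
    """
    e0, e1 = _bracket(expiries, t)
    s0, s1 = _bracket(strikes, k)
    wt, wk = _weight(expiries, e0, e1, t), _weight(strikes, s0, s1, k)
    near = vols[e0][s0] * (1.0 - wk) + vols[e0][s1] * wk  # across strike at the nearer expiry
    far = vols[e1][s0] * (1.0 - wk) + vols[e1][s1] * wk   # across strike at the farther expiry
    return near * (1.0 - wt) + far * wt                    # then across expiry


# Illustrative smile, steeper on the low-strike side, flattening slightly with maturity.
expiries = [0.25, 0.5]
strikes = [90.0, 100.0, 110.0]
vols = [[0.90, 0.80, 0.85],
        [0.85, 0.75, 0.80]]
print(surface_vol(expiries, strikes, vols, t=0.375, k=95.0))
```

The query sits halfway between grid points in both dimensions, so it returns 0.825 (up to floating-point rounding), the average of the four surrounding cells.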

| Data Type | Source Aggregation | Financial Implication |
|---|---|---|
| Order Book Depth | Multiple CEX/DEX APIs | Determines bid-ask spread and liquidity at specific strikes. |
| Implied Volatility Surface | Options protocol order books | Calculates real-time volatility skew for accurate option pricing. |
| Trade History & Volume | Real-time transaction data | Analyzes short-term price pressure and market sentiment. |

The theoretical value of this precision is directly linked to the calculation of the Greeks (Delta, Gamma, Vega, Theta), which are essential for risk management. A proprietary feed allows market makers to measure their portfolio’s sensitivity to four things more accurately than public oracles allow: price movements (Delta), the rate at which Delta itself changes (Gamma), changes in volatility (Vega), and time decay (Theta).

This precision reduces the risk of mispricing options and supports a more stable hedging strategy.
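The Greeks listed above have closed-form expressions under Black-Scholes. This Python sketch computes them for a European call (no dividends) and sums them across positions. It assumes the Black-Scholes model and a single implied volatility per position, whereas a feed-driven desk would look up each position’s volatility on the surface. Units follow the usual textbook convention: Vega per 1.00 change in volatility, and Theta per year. The position data in the example is hypothetical.

```python
import math
from typing import Dict, Iterable, Tuple


def norm_pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot: float, strike: float, t: float, rate: float, vol: float) -> Dict[str, float]:
    """Black-Scholes Greeks of one long European call (no dividends).

    Vega is per 1.00 (100 vol points) change in vol; theta is per year.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    pdf_d1 = norm_pdf(d1)
    return {
        "delta": norm_cdf(d1),                     # dPrice / dSpot
        "gamma": pdf_d1 / (spot * vol * sqrt_t),   # dDelta / dSpot
        "vega": spot * pdf_d1 * sqrt_t,            # dPrice / dVol
        "theta": (-spot * pdf_d1 * vol / (2.0 * sqrt_t)
                  - rate * strike * math.exp(-rate * t) * norm_cdf(d2)),  # dPrice / dTime
    }


def portfolio_greeks(positions: Iterable[Tuple[float, float, float, float, float, float]]) -> Dict[str, float]:
    """Sum quantity-weighted Greeks over (qty, spot, strike, t, rate, vol) call positions."""
    total = {"delta": 0.0, "gamma": 0.0, "vega": 0.0, "theta": 0.0}
    for qty, spot, strike, t, rate, vol in positions:
        for name, value in call_greeks(spot, strike, t, rate, vol).items():
            total[name] += qty * value
    return total


# Hypothetical book: long 10 at-the-money calls, short 10 calls struck 10% higher.
book = [(10, 100.0, 100.0, 0.5, 0.0, 0.80), (-10, 100.0, 110.0, 0.5, 0.0, 0.75)]
print(portfolio_greeks(book))
```

Because every Greek depends on `vol`, an error in the volatility input propagates directly into the hedge ratios. This is the practical link between surface precision and hedging stability.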

Approach

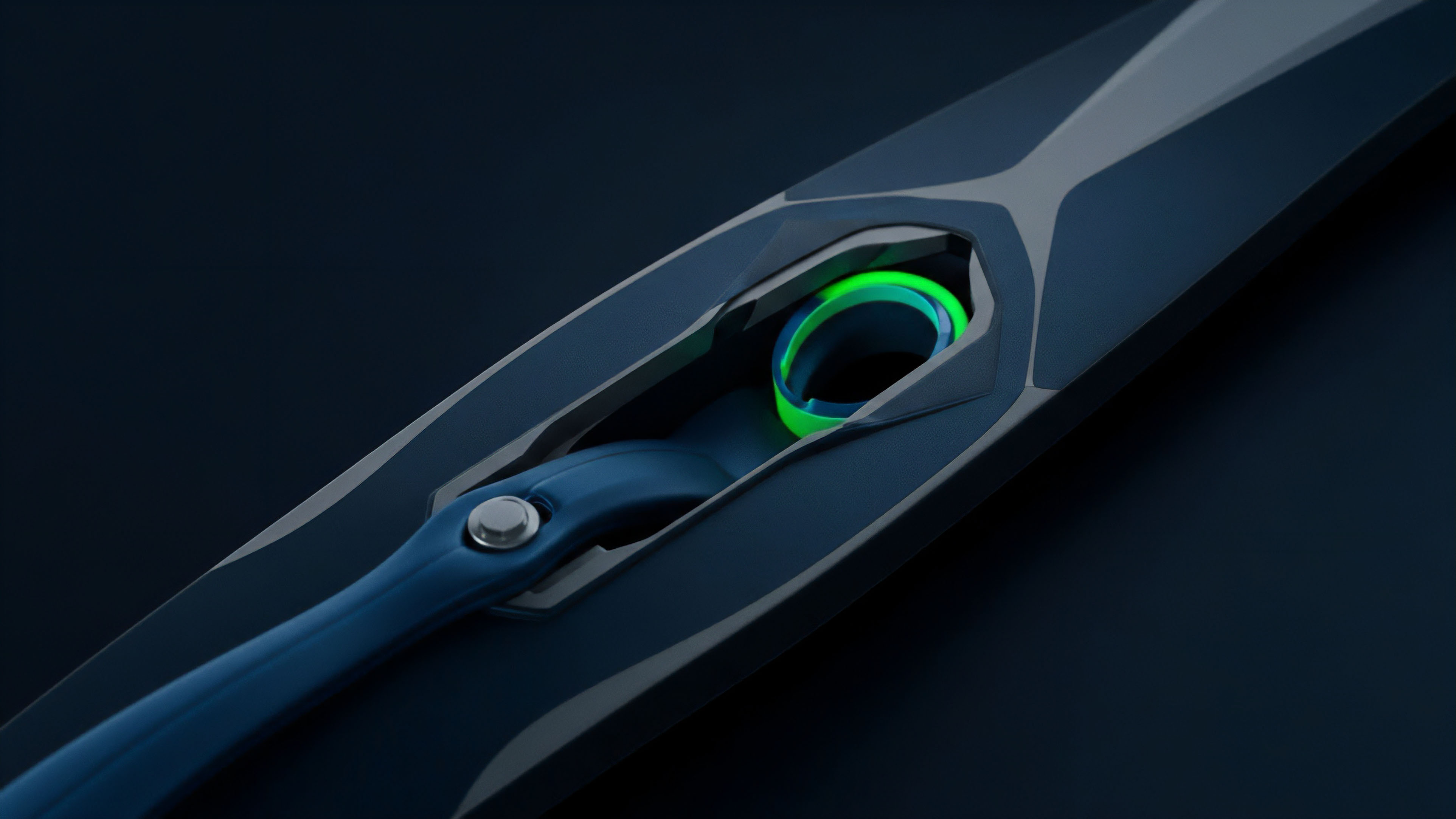

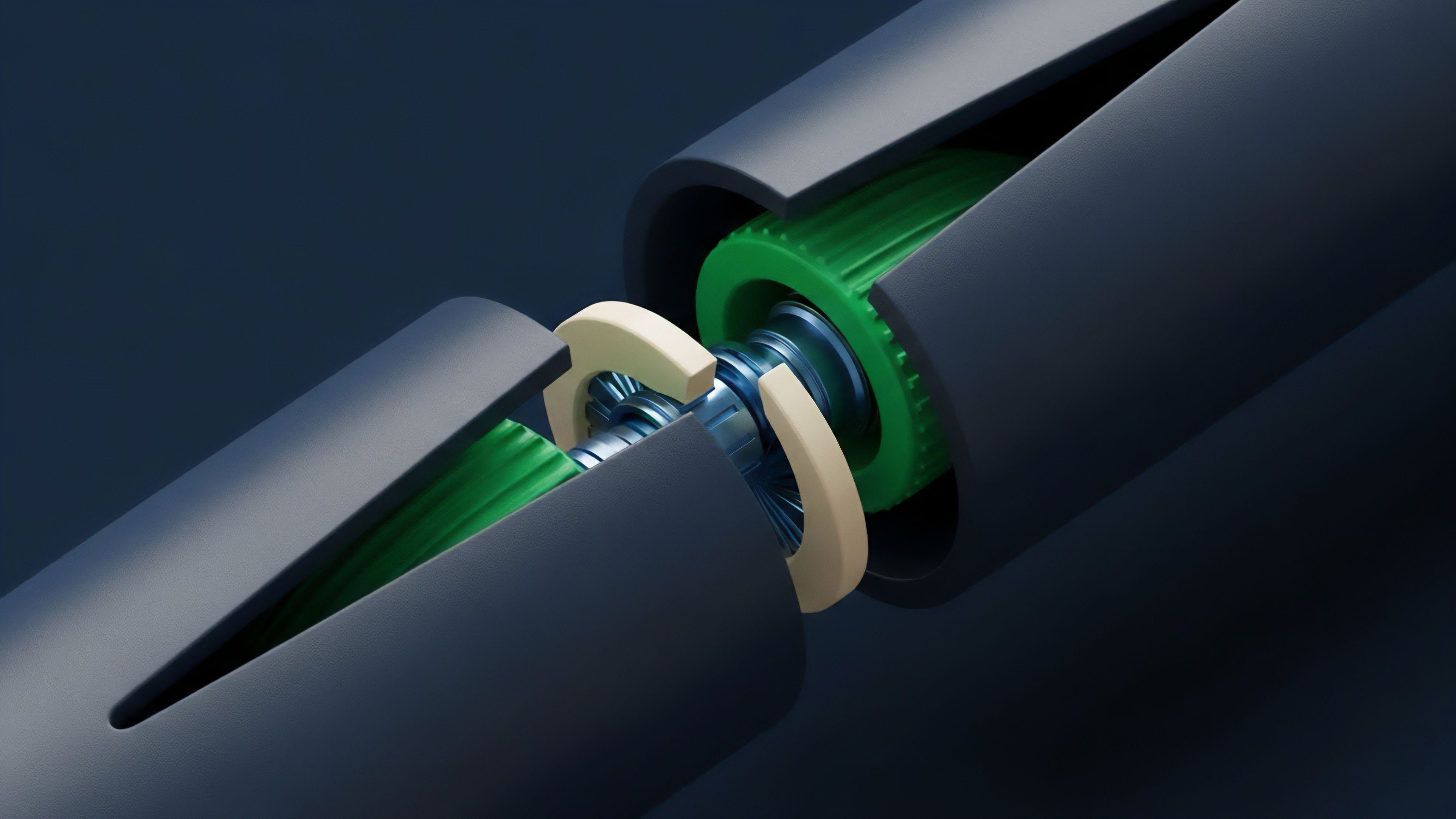

Implementing a proprietary data feed for crypto options requires an architecture that balances speed, accuracy, and security. A typical design has three distinct components.

First, high-speed data collection nodes ingest raw data from centralized exchanges (CEX) via direct APIs and from decentralized exchanges (DEX) via smart contract event monitoring. This raw data includes order book snapshots, trade logs, and open interest statistics.

Second, a normalization engine processes the raw data. It filters out anomalies, flags potential wash trading, and aggregates data across fragmented markets into a unified view. This “data cleaning” process is where much of the proprietary intellectual property resides. The engine then calculates the implied volatility surface, often using models that account for the fat-tailed, non-normal return distributions typical of crypto assets.
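The filtering and aggregation step can be illustrated with a deliberately simple robust statistic. This Python sketch is an assumption, not any provider’s method. It drops venue prices more than `max_dev` median absolute deviations (MAD) from the cross-venue median, then takes a volume-weighted average of the prices that survive. It does not detect wash trading or spoofing, which require trade-level and order-level analysis. The venues and quotes are made up.

```python
import statistics
from typing import List, Tuple

Quote = Tuple[str, float, float]  # (venue, price, volume)


def filter_outliers(quotes: List[Quote], max_dev: float = 5.0, rel_floor: float = 1e-4) -> List[Quote]:
    """Keep quotes whose price lies within max_dev robust deviations of the cross-venue median.

    The robust scale is the median absolute deviation (MAD), floored at rel_floor * |median|
    so that a cluster of identical prices does not cause every other quote to be rejected.
    """
    prices = [price for _, price, _ in quotes]
    med = statistics.median(prices)
    mad = statistics.median([abs(p - med) for p in prices])
    scale = max(mad, rel_floor * abs(med))
    return [q for q in quotes if abs(q[1] - med) <= max_dev * scale]


def unified_price(quotes: List[Quote], max_dev: float = 5.0) -> float:
    """Volume-weighted average price over the quotes that survive outlier filtering."""
    kept = filter_outliers(quotes, max_dev)
    total_volume = sum(volume for _, _, volume in kept)
    return sum(price * volume for _, price, volume in kept) / total_volume


quotes = [("a", 100.0, 10.0), ("b", 100.2, 5.0), ("c", 99.9, 5.0), ("d", 140.0, 50.0)]
print(unified_price(quotes))
```

With these sample quotes, venue `d` is rejected and the unified price is 100.025. A plain volume-weighted average that included `d` would be about 128.6, because its large reported volume dominates. Filtering before weighting is what keeps a single venue from setting the price.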

Third, a secure distribution layer delivers the processed data to clients. For decentralized protocols, this distribution often utilizes a dedicated oracle network where validators attest to the data’s integrity. For institutional clients, a private API endpoint provides direct access to the feed.

A high-quality proprietary feed acts as a “second brain” for market makers, translating chaotic market signals into structured risk parameters in real time.

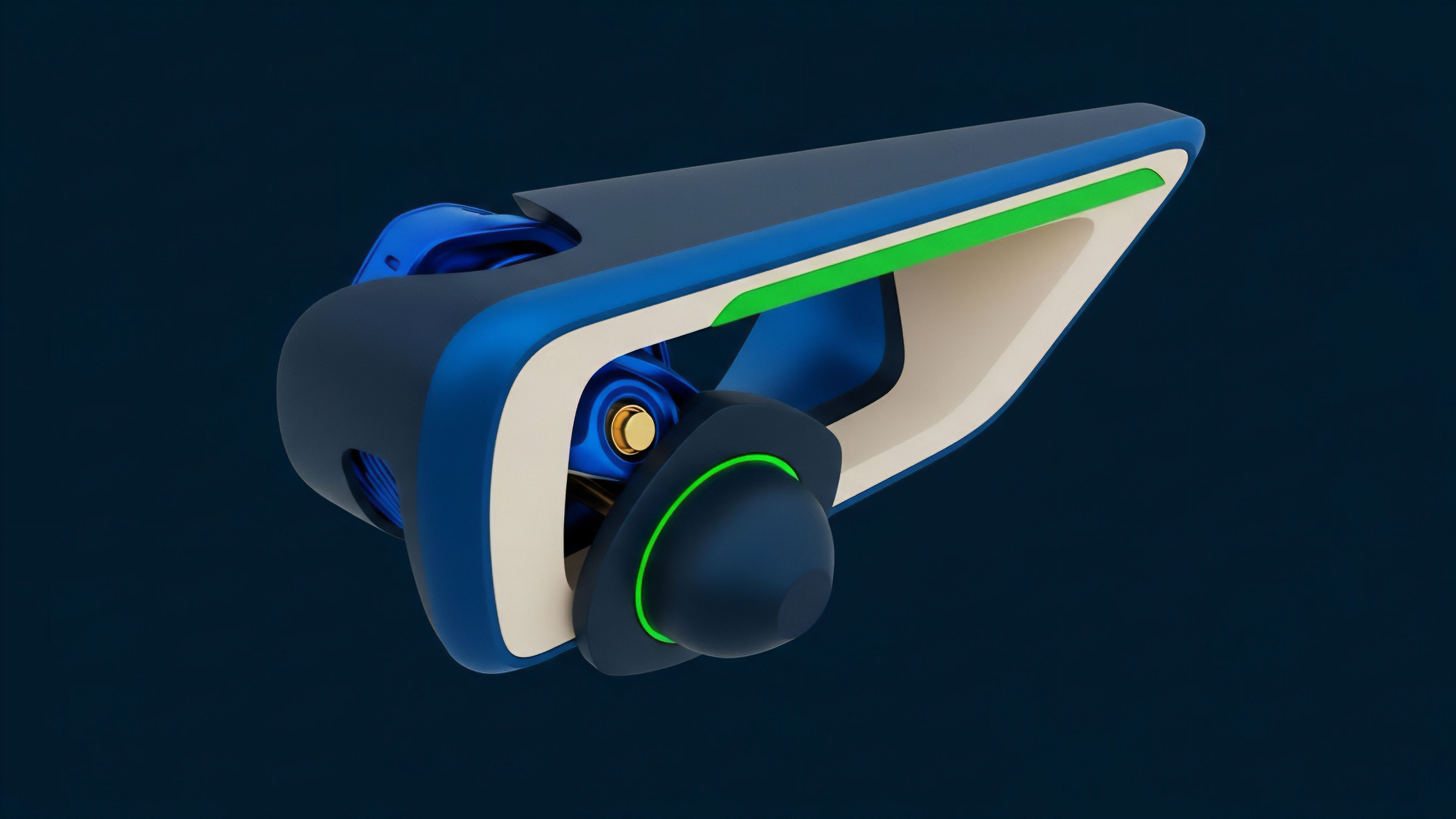

The data delivered is typically not a single price, but a structured data object containing:

- Implied Volatility Surface: A matrix of implied volatilities for various strikes and maturities.

- Greeks Calculations: Pre-calculated Delta, Gamma, and Vega values based on the feed’s internal pricing model.

- Order Book Depth: A snapshot of liquidity at specific price levels, providing insight into potential price movements.

- Settlement Price Data: Verified prices for options settlement, often aggregated from multiple sources to prevent manipulation.
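As an illustration only, such a payload could be modeled as a typed record. The class and field names below are hypothetical rather than any provider’s schema. The constructor checks a single invariant: that the surface matrix matches the expiry and strike grids.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class FeedSnapshot:
    """Hypothetical schema for one structured feed update (field names are illustrative)."""
    underlying: str
    timestamp_ms: int
    expiries: List[float]           # years to expiry, ascending
    strikes: List[float]            # ascending
    iv_surface: List[List[float]]   # iv_surface[e][s] for expiries[e], strikes[s]
    # (expiry, strike) -> {"delta": ..., "gamma": ..., "vega": ...}
    greeks: Dict[Tuple[float, float], Dict[str, float]] = field(default_factory=dict)
    # "bids" / "asks" -> [(price, size), ...]
    book_depth: Dict[str, List[Tuple[float, float]]] = field(default_factory=dict)
    settlement_price: Optional[float] = None

    def __post_init__(self) -> None:
        # Reject surfaces whose shape does not match the expiry x strike grid.
        if len(self.iv_surface) != len(self.expiries) or any(
            len(row) != len(self.strikes) for row in self.iv_surface
        ):
            raise ValueError("iv_surface must have shape len(expiries) x len(strikes)")


snap = FeedSnapshot(
    underlying="ASSET",
    timestamp_ms=1_700_000_000_000,
    expiries=[0.25, 0.5],
    strikes=[90.0, 100.0, 110.0],
    iv_surface=[[0.90, 0.80, 0.85], [0.85, 0.75, 0.80]],
    settlement_price=100.0,
)
print(snap.underlying, len(snap.iv_surface), len(snap.strikes))
```

Shipping the surface together with the grids it was computed on makes each update self-describing. Consumers can then validate its shape before using it for pricing.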

Evolution

The evolution of proprietary data feeds in crypto options mirrors the maturation of the market itself. Initially, proprietary data was simply faster access to public information. As market complexity increased, the feeds evolved from basic price aggregators to sophisticated volatility surface calculators.

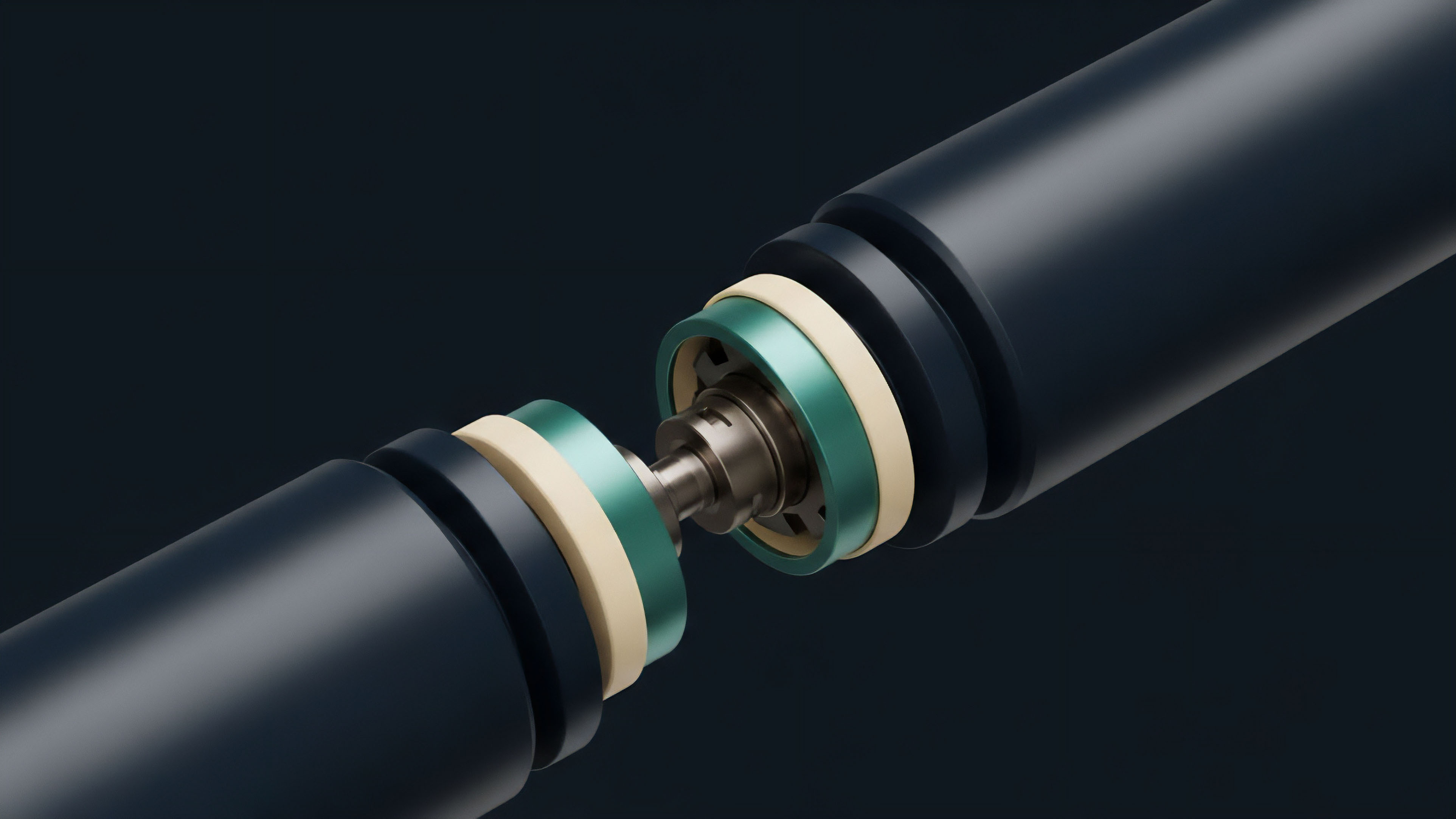

The shift from centralized to decentralized finance created a new challenge: how to provide proprietary data to a trustless environment without compromising security. The first major evolution involved the transition from private APIs to decentralized oracle networks. This change allowed proprietary data to be integrated directly into smart contracts, enabling decentralized options protocols to access high-quality data without relying on a single, centralized entity.

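This introduced a trade-off between speed and verifiability. A subsequent development is the emergence of verifiable data feeds, in which each data point is cryptographically signed by multiple validators before being transmitted on-chain. Signatures make the data tamper-evident and attributable to the validators who attested to it. Combined with aggregation across many independent validators, this makes it much harder for inaccurate or manipulated values to reach pricing and liquidation logic.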
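The attest-then-aggregate pattern can be sketched with the Python standard library. This is a simplified assumption. HMAC with per-validator shared secrets stands in for the public-key signatures (such as ECDSA or Ed25519) that real oracle networks use so that anyone can verify reports. `sign`, `aggregate`, and the quorum rule are hypothetical names chosen for illustration.

```python
import hashlib
import hmac
import json
import statistics
from typing import Dict, List, Tuple

Report = Tuple[str, dict, str]  # (validator_id, payload, signature_hex)


def sign(key: bytes, payload: dict) -> str:
    """HMAC-SHA256 over a canonical JSON encoding (stand-in for a public-key signature)."""
    message = json.dumps(payload, sort_keys=True).encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def aggregate(reports: List[Report], keys: Dict[str, bytes], quorum: int) -> float:
    """Median 'price' over reports carrying valid signatures from distinct known validators."""
    valid: Dict[str, float] = {}
    for validator_id, payload, signature in reports:
        key = keys.get(validator_id)
        if key is not None and hmac.compare_digest(sign(key, payload), signature):
            valid[validator_id] = payload["price"]  # at most one vote per validator
    if len(valid) < quorum:
        raise ValueError(f"{len(valid)} valid attestations, quorum is {quorum}")
    return statistics.median(valid.values())


keys = {"v1": b"secret-1", "v2": b"secret-2", "v3": b"secret-3"}
honest = [(vid, {"asset": "X", "price": p}, sign(keys[vid], {"asset": "X", "price": p}))
          for vid, p in [("v1", 100.0), ("v2", 101.0), ("v3", 100.5)]]
print(aggregate(honest, keys, quorum=3))
```

The two layers guard against different failures. If a payload is altered after signing, its signature no longer verifies and the report is discarded. If one validator signs a wildly wrong value, such as 10,000, the signature is valid, but the median of the three reports stays at 101.0. This limits the damage a single bad data point can do.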

This evolution addresses the “oracle manipulation risk” inherent in decentralized markets, where a single bad data point can lead to catastrophic liquidations. The current trend is toward the commoditization of data. As more protocols require high-quality data, the proprietary feeds are becoming less of a secret weapon and more of a standard infrastructure component.

The competitive edge is shifting from data access to the speed and sophistication of the models used to process that data. The next phase of development involves integrating these feeds with artificial intelligence and machine learning models to predict volatility and manage risk dynamically.

Horizon

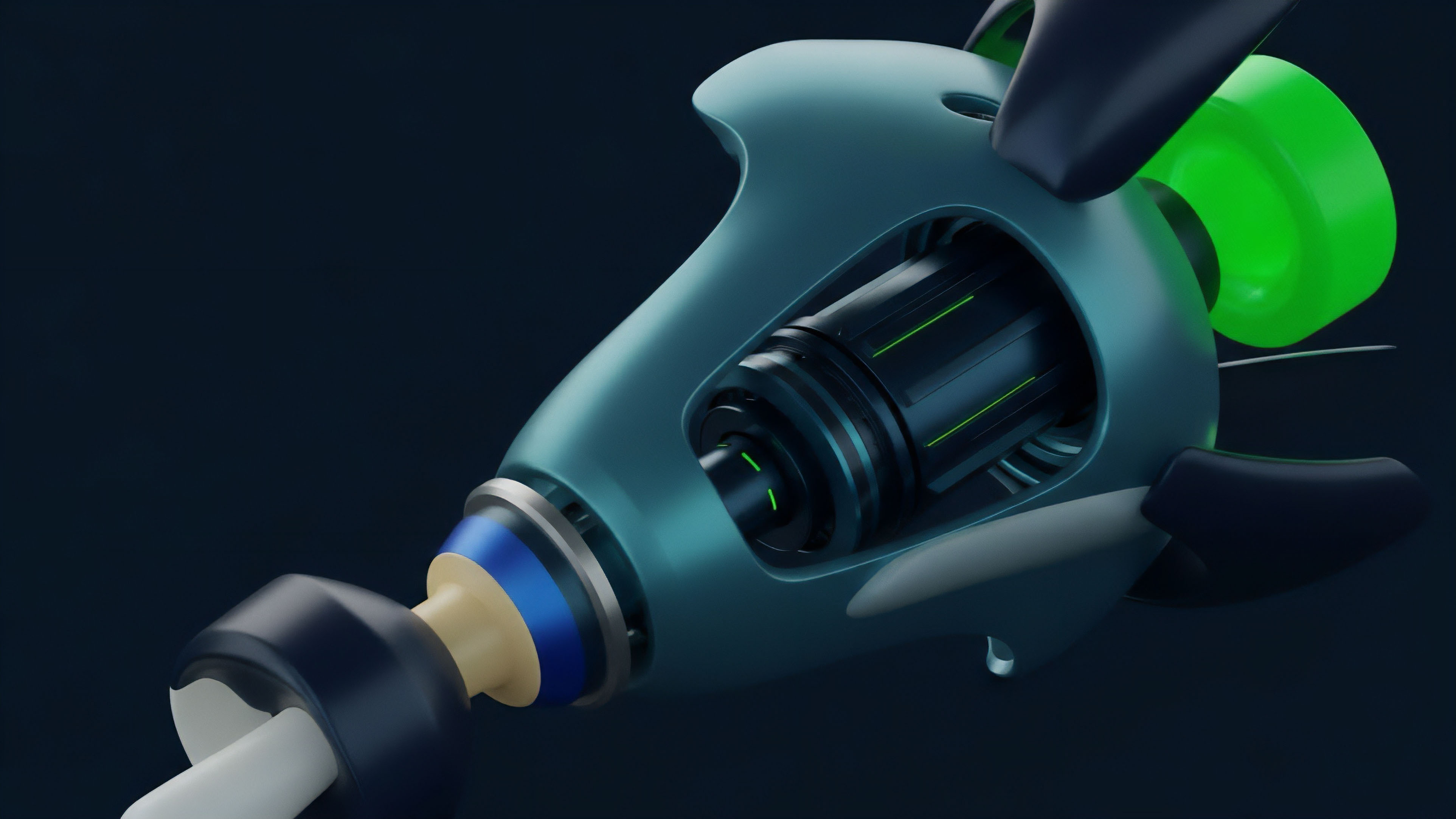

Looking ahead, the future of proprietary data feeds involves a shift from simply providing data to becoming predictive risk engines.

The market is moving toward a state where the data itself is commoditized, and the real value lies in the algorithms that interpret the data in real time. This future will be defined by two key areas: enhanced data verification and predictive modeling. On the technical front, we will see the rise of more sophisticated decentralized oracle networks that utilize zero-knowledge proofs to verify data integrity without revealing the underlying proprietary data source.

This will allow decentralized protocols to leverage proprietary insights while maintaining privacy and security. The challenge here is to achieve consensus on complex data points like volatility surfaces, rather than simple price points.
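One simple way to reach agreement on a matrix-valued data point is to have each validator submit a full surface on an agreed grid, then take the median of each cell. This is a hypothetical sketch, not a description of any existing oracle network, and it leaves out the zero-knowledge layer entirely. One caveat applies: an element-wise median can combine cells from different submissions, so the result is not guaranteed to satisfy the no-arbitrage constraints that each individual surface satisfied.

```python
import statistics
from typing import List

Surface = List[List[float]]  # surface[e][s]: implied vol for expiry e, strike s


def consensus_surface(submissions: List[Surface], quorum: int) -> Surface:
    """Element-wise median of validators' surfaces submitted on a shared grid."""
    if len(submissions) < quorum:
        raise ValueError(f"{len(submissions)} submissions, quorum is {quorum}")
    rows, cols = len(submissions[0]), len(submissions[0][0])
    for surface in submissions:
        if len(surface) != rows or any(len(row) != cols for row in surface):
            raise ValueError("all submissions must use the same expiry x strike grid")
    return [
        [statistics.median([surface[e][s] for surface in submissions]) for s in range(cols)]
        for e in range(rows)
    ]


# Three hypothetical validators; the third reports a corrupted second cell.
print(consensus_surface([[[0.50, 0.60]], [[0.52, 0.61]], [[0.90, 0.10]]], quorum=3))
```

Requiring a shared grid is what makes the cell-by-cell comparison meaningful. Agreeing on that grid is part of the consensus problem.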

| Current State | Future State |
|---|---|
| Static volatility surface calculation. | Dynamic, real-time predictive volatility modeling. |
| Private API access for institutions. | Decentralized, verifiable data feeds for all protocols. |
| Information asymmetry for market makers. | Data commoditization; edge shifts to model execution speed. |

On the strategic front, the data feeds will likely integrate with AI models to generate real-time risk assessments and automated hedging strategies. This convergence of data and AI will allow protocols to manage risk dynamically, automatically adjusting parameters like margin requirements based on predicted volatility changes. The ultimate goal is to create a fully autonomous risk management system where data feeds not only report on current conditions but also predict future market states, ensuring the stability of decentralized derivatives protocols during periods of extreme stress.
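Volatility-driven margin adjustment can be sketched with a classical forecaster standing in for the AI models described above. The example is an assumption for illustration. It uses an exponentially weighted moving average (EWMA) of squared returns, with λ = 0.94 as popularized by RiskMetrics for daily data. Margin is set at `z` forecast standard deviations of the notional, never below a floor. The value `z = 2.33` roughly matches a one-sided 99% normal quantile. All parameter values and return series are hypothetical.

```python
import math
from typing import Sequence


def ewma_vol(returns: Sequence[float], lam: float = 0.94) -> float:
    """EWMA volatility forecast: var_t = lam * var_{t-1} + (1 - lam) * r_t**2.

    Seeded with the first squared return; the result is in the same period units as returns.
    """
    var = returns[0] ** 2
    for r in returns[1:]:
        var = lam * var + (1.0 - lam) * r * r
    return math.sqrt(var)


def margin_rate(returns: Sequence[float], z: float = 2.33, horizon: int = 1,
                floor: float = 0.05, lam: float = 0.94) -> float:
    """Margin as a fraction of notional: z forecast std devs over the horizon, never below floor."""
    return max(floor, z * ewma_vol(returns, lam) * math.sqrt(horizon))


calm = [0.01] * 30               # a month of 1% daily moves
stressed = [0.01] * 29 + [0.10]  # the same month ending in a 10% move
print(margin_rate(calm), margin_rate(stressed))
```

A single 10% move after a calm month lifts the forecast volatility from 1% to about 2.6% per period. That pushes the margin rate from the 5% floor to roughly 6.1%. A learned model would replace `ewma_vol`, but the surrounding rule that maps forecast volatility to margin could stay the same.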

The future data feed will not simply report the current state of volatility; it will predict the future state of volatility, transforming passive data provision into active risk management.

The regulatory environment will also play a role, as data feeds become central to market integrity. Regulators will likely focus on data transparency and verifiability to prevent manipulation, potentially requiring standardized reporting mechanisms for proprietary feeds used in regulated financial products. This will create a tension between the proprietary nature of the data and the need for public verification.