Essence

Data Integrity Verification is the fundamental requirement for any decentralized options protocol to function as a financial primitive. A derivatives contract, by its nature, is a bet on the future value of an underlying asset. The contract requires an indisputable source of truth, a final settlement price, at expiration to determine a winner and loser.

Without a robust mechanism for data integrity verification, the entire financial structure collapses into a trust-based system, rendering the decentralization aspect meaningless. The core challenge in decentralized finance is that smart contracts cannot natively access external information about asset prices, volatility, or interest rates. The system must bridge the gap between off-chain reality and on-chain computation.

The problem is particularly acute for options, which are highly sensitive to price changes, time decay, and volatility. A small, temporary fluctuation in a price feed, if not verified and smoothed, can lead to incorrect liquidations or unfair settlement prices. The verification process must ensure that the data input is not only accurate at the time of settlement but also tamper-resistant throughout its entire lifecycle.

This involves a set of cryptographic and economic mechanisms designed to make data manipulation prohibitively expensive.

Data integrity verification ensures that a decentralized options protocol’s settlement logic operates on an accurate, tamper-resistant source of truth, removing the single point of failure inherent in traditional systems.

Origin

The necessity of robust data verification in decentralized finance stems from early exploits that exposed the fragility of naive oracle designs. The initial wave of DeFi protocols often relied on simple, centralized price feeds, or in some cases, used a single, privileged administrator to manually input data. These single points of failure were quickly exploited, leading to significant capital losses in various protocols.

As derivatives protocols began to emerge, the risk amplified dramatically. The high leverage inherent in options trading means that a minor data manipulation can result in catastrophic liquidations. The evolution of data verification has been a reactive process, driven by the need to secure progressively more complex financial instruments.

Early solutions focused on time-weighted average prices (TWAPs) to blunt flash loan attacks, where an attacker could manipulate a price on a decentralized exchange (DEX) for a single block and profit from an incorrect oracle feed. As options protocols advanced, the demand grew beyond simple price data to include volatility feeds and implied volatility surfaces, requiring more sophisticated and secure data aggregation methods. This led to the development of decentralized oracle networks (DONs), which distribute the responsibility for data collection across multiple independent nodes, so that compromising a single node or source is no longer enough to move the reported price.
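The smoothing effect of a TWAP can be illustrated with a minimal sketch. The function name `twap`, the observation format, and the sample numbers are all hypothetical; real on-chain TWAPs are usually computed from cumulative price accumulators rather than from a list like this.

```python
from typing import List, Tuple

def twap(observations: List[Tuple[float, float]], window_end: float) -> float:
    """Time-weighted average price from the first observation to window_end.

    observations: (timestamp_seconds, price) pairs sorted by timestamp.
    Assumption: each price holds until the next observation arrives.
    """
    if not observations:
        raise ValueError("need at least one observation")
    start = observations[0][0]
    if window_end <= start:
        raise ValueError("window_end must be after the first observation")
    weighted_sum = 0.0
    for i, (ts, price) in enumerate(observations):
        # The price is weighted by how long it remained in effect.
        next_ts = observations[i + 1][0] if i + 1 < len(observations) else window_end
        weighted_sum += price * (next_ts - ts)
    return weighted_sum / (window_end - start)

# Hypothetical 10-minute window in which the price is pushed from 100 to 200
# for a single 12-second block and then returns to 100.
obs = [(0.0, 100.0), (300.0, 200.0), (312.0, 100.0)]
spot_during_attack = 200.0
smoothed = twap(obs, window_end=600.0)
print(spot_during_attack, smoothed)
```

In this toy case the spot price doubles for one block while the TWAP moves by only 2%, which is why a single-block manipulation is much less useful against a time-weighted feed. The cost is that the TWAP also lags genuine price moves.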

Theory

The theoretical foundation of data integrity verification in options protocols rests on the trade-off between security, speed, and cost. The ideal system provides immediate, accurate data without excessive transaction fees or trust assumptions. In practice, protocols must compromise on one or more of these variables.

The primary challenge for options is that pricing models, particularly those based on Black-Scholes, require high-frequency data feeds to accurately calculate volatility and mark-to-market positions. A slow data feed creates significant risk for market makers, while a fast but insecure feed creates risk for the protocol’s entire user base. The core mechanism for achieving integrity is often rooted in game theory.

By requiring data providers to stake collateral, protocols create an economic disincentive for malicious reporting. If a node reports bad data, its stake can be slashed, so manipulation is irrational whenever the capital at risk exceeds the potential profit from the attack. The theoretical security of the system, therefore, scales with the value of the collateral staked by the data providers.

This creates a fascinating dynamic where the financial security of the protocol is directly tied to the economic incentives of its participants.
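The incentive argument above reduces to a simple comparison, sketched here under stated assumptions. The function names and the `slash_fraction` and `detection_probability` parameters are hypothetical simplifications; real networks have more elaborate slashing rules.

```python
def attack_is_profitable(expected_profit: float,
                         stake_at_risk: float,
                         slash_fraction: float = 1.0,
                         detection_probability: float = 1.0) -> bool:
    """Return True if a rational attacker expects to gain from misreporting.

    Assumption: the attacker loses slash_fraction of the stake with
    probability detection_probability, and nothing otherwise.
    """
    expected_loss = stake_at_risk * slash_fraction * detection_probability
    return expected_profit > expected_loss

def min_stake_to_deter(expected_profit: float,
                       slash_fraction: float = 1.0,
                       detection_probability: float = 1.0) -> float:
    """Smallest stake at which the expected loss matches the expected profit."""
    return expected_profit / (slash_fraction * detection_probability)

# Hypothetical numbers: an exploit worth 1,000,000 against 500,000 of stake.
print(attack_is_profitable(1_000_000, 500_000))
print(min_stake_to_deter(1_000_000, slash_fraction=0.5, detection_probability=0.8))
```

The sketch makes the dependency explicit: the stake needed to deter an attack grows with the value the oracle secures, and it grows further if slashing is partial or detection is uncertain.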

Data Latency and Security Tradeoffs

The primary tension in data integrity verification for derivatives is the latency-security trade-off. A protocol can prioritize security by implementing long time delays and requiring multiple confirmations before data is accepted. This approach, however, results in high data latency, making it difficult for market makers to accurately price options and manage risk in fast-moving markets.

Conversely, prioritizing low latency requires accepting data more quickly, potentially exposing the protocol to flash attacks or data manipulation.
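To see why latency matters so much to market makers, the following sketch prices the same short-dated call with a stale and a fresh spot price using the standard Black-Scholes formula for a European call. The parameter values (80% volatility, one day to expiry, a 1% move in spot, zero interest rate) are hypothetical and chosen only for illustration.

```python
import math

def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def bs_call(spot: float, strike: float, vol: float,
            t_years: float, rate: float = 0.0) -> float:
    """Black-Scholes price of a European call with no dividends."""
    sigma_sqrt_t = vol * math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / sigma_sqrt_t
    d2 = d1 - sigma_sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)

# Hypothetical case: the oracle still reports 100 while the market is at 101.
stale = bs_call(spot=100.0, strike=100.0, vol=0.8, t_years=1 / 365)
fresh = bs_call(spot=101.0, strike=100.0, vol=0.8, t_years=1 / 365)
mispricing_pct = (fresh - stale) / stale * 100
print(f"stale={stale:.4f} fresh={fresh:.4f} mispricing={mispricing_pct:.1f}%")
```

For an at-the-money option close to expiry, the option price moves far more than proportionally to the underlying, so even a small lag in the feed can leave quotes materially mispriced.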

Verification Models Comparison

The choice of verification model dictates the protocol’s risk profile and capital efficiency. The following table compares three primary approaches used in decentralized options protocols:

| Model Type | Security Mechanism | Latency Characteristics | Best Use Case |
|---|---|---|---|
| Centralized Oracle | Trust-based, single entity input. | Low latency (near real-time). | Low-risk assets, high-speed applications. |
| Decentralized Oracle Network (DON) | Economic incentives, data aggregation from multiple nodes. | Medium latency (time delays for consensus). | General-purpose derivatives, standard assets. |
| Optimistic Oracle | Challenge period, game theory, data accepted unless challenged. | High latency (time delay for challenge period). | Long-term contracts, low-frequency data updates. |

Approach

The implementation of data integrity verification in current decentralized options protocols typically involves a multi-layered approach that combines on-chain and off-chain elements. The objective is to ensure that a malicious actor cannot manipulate the price feed without incurring a cost greater than the potential profit from the exploit.

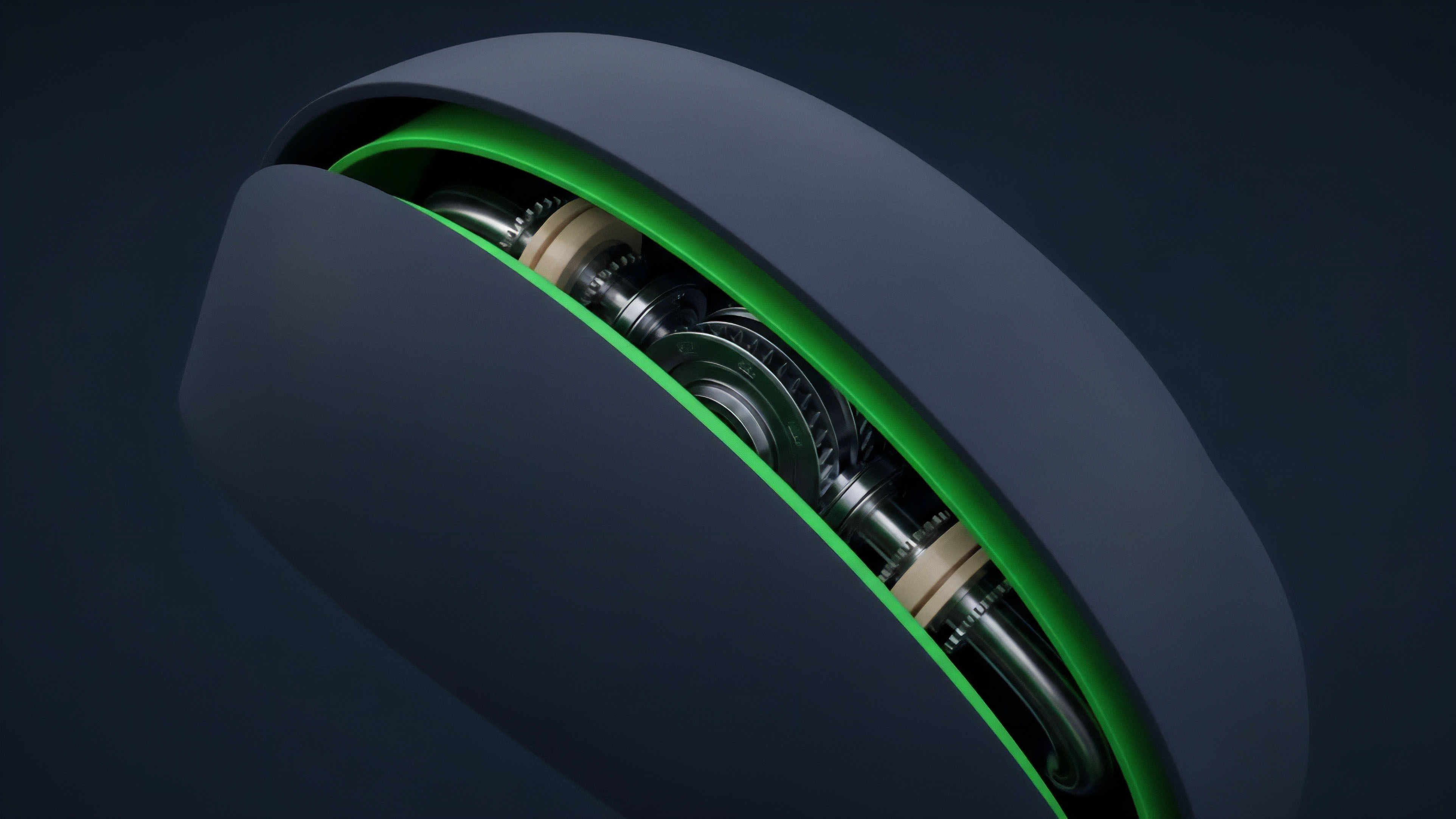

Data Aggregation and Filtering

A key approach involves aggregating data from multiple independent sources to generate a single, reliable price feed. This aggregation often uses a median function to filter out outliers, preventing a single compromised source from skewing the final price. Protocols often combine data from major centralized exchanges (CEXs) and decentralized exchanges (DEXs) to create a robust and representative price.

The aggregation logic itself must be transparent and verifiable on-chain.
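A minimal sketch of median aggregation follows. The source names, the `min_sources` threshold, and the quotes are hypothetical; a production aggregator would also need staleness checks and source weighting, which are omitted here.

```python
from statistics import mean, median
from typing import Dict

def aggregate_price(quotes: Dict[str, float], min_sources: int = 3) -> float:
    """Aggregate per-source quotes into one price using the median.

    Assumption: non-positive quotes are treated as invalid and dropped.
    """
    valid = [price for price in quotes.values() if price > 0]
    if len(valid) < min_sources:
        raise ValueError("not enough valid sources to aggregate")
    return median(valid)

# Hypothetical quotes: three honest sources and one compromised DEX feed.
quotes = {"cex_a": 100.1, "cex_b": 99.9, "dex_a": 100.0, "dex_b": 250.0}
aggregated = aggregate_price(quotes)
naive_average = mean(quotes.values())
print(aggregated, naive_average)
```

With one outlier among four sources, the median stays next to the honest quotes while a simple average is pulled far off. The median only fails once roughly half of the sources are compromised, which is the reason for drawing on independent venues.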

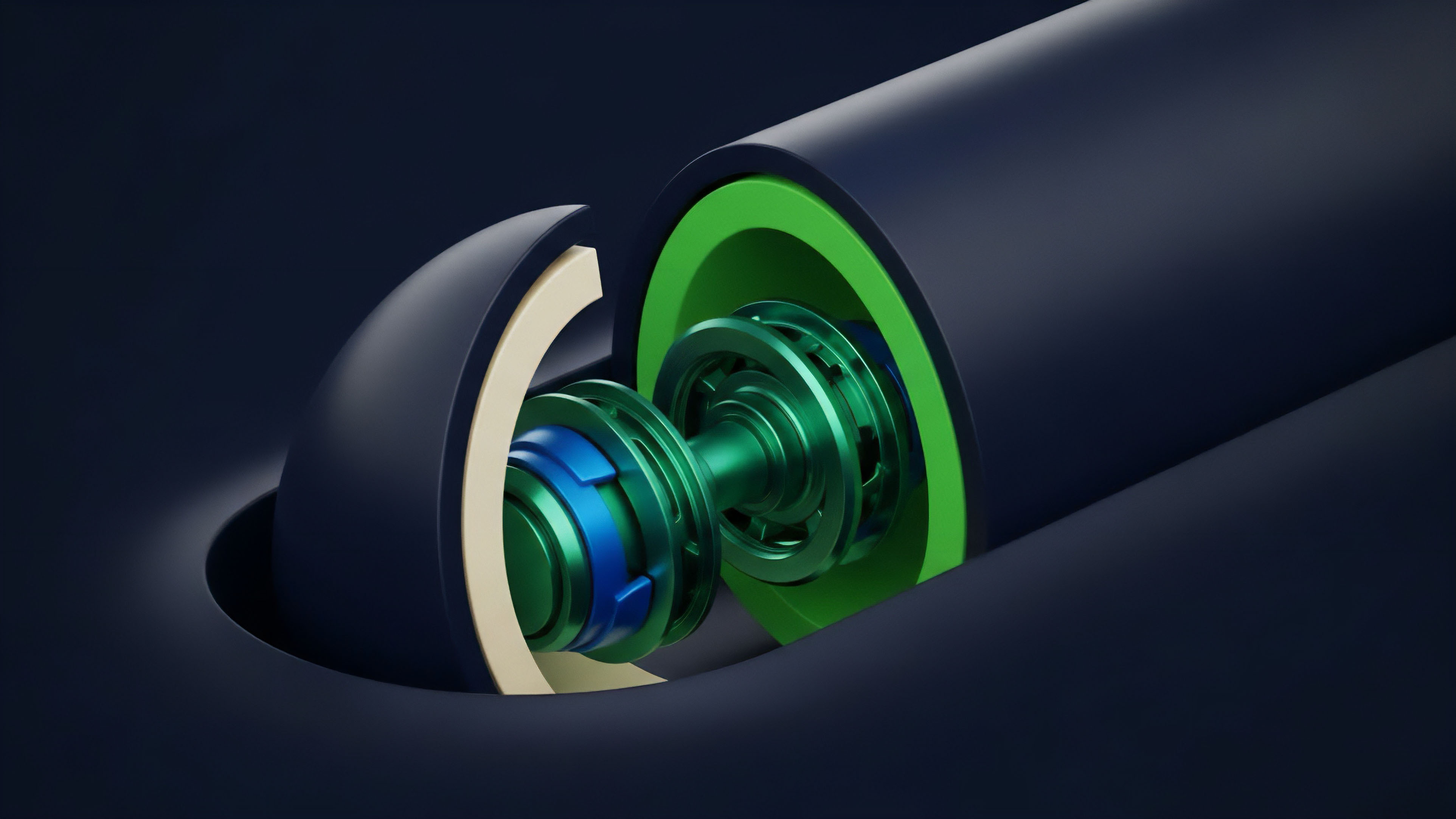

Economic Security through Staking

Data providers in many decentralized oracle networks must stake a significant amount of capital. This economic stake serves as a bond that aligns incentives. If a provider submits incorrect data, their stake can be slashed, making the attack economically irrational.

The security of the system scales with the total value staked in the network: the more capital an attacker stands to lose, the larger the exploit must be to justify the attempt. This approach shifts the security model from cryptographic certainty to economic deterrence.
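One way such a bond might be enforced is to slash any provider whose report deviates too far from the round's median. The sketch below is an assumption-laden illustration: the function `settle_round`, the 1% tolerance, and the 50% slash fraction are hypothetical and do not describe any particular network.

```python
from statistics import median
from typing import Dict, Tuple

def settle_round(reports: Dict[str, float],
                 stakes: Dict[str, float],
                 tolerance: float = 0.01,
                 slash_fraction: float = 0.5) -> Tuple[float, Dict[str, float]]:
    """Return the reference price and the stakes after slashing.

    A node is slashed when its report deviates from the median of all
    reports by more than `tolerance` (expressed as a fraction).
    """
    reference = median(reports.values())
    new_stakes = dict(stakes)  # copy so the caller's balances are untouched
    for node, price in reports.items():
        if abs(price - reference) / reference > tolerance:
            new_stakes[node] = stakes[node] * (1.0 - slash_fraction)
    return reference, new_stakes

# Hypothetical round: node "c" reports a price about 30% away from the others.
reports = {"a": 100.0, "b": 100.2, "c": 130.0}
stakes = {"a": 1000.0, "b": 1000.0, "c": 1000.0}
reference, stakes_after = settle_round(reports, stakes)
print(reference, stakes_after)
```

Using the median as the reference means honest nodes are only at risk if a majority colludes, but the tolerance has to be wide enough that normal disagreement between venues does not trigger slashing.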

Decentralized oracle networks use economic incentives and data aggregation to secure derivatives protocols against data manipulation, ensuring the cost of an attack exceeds the potential profit.

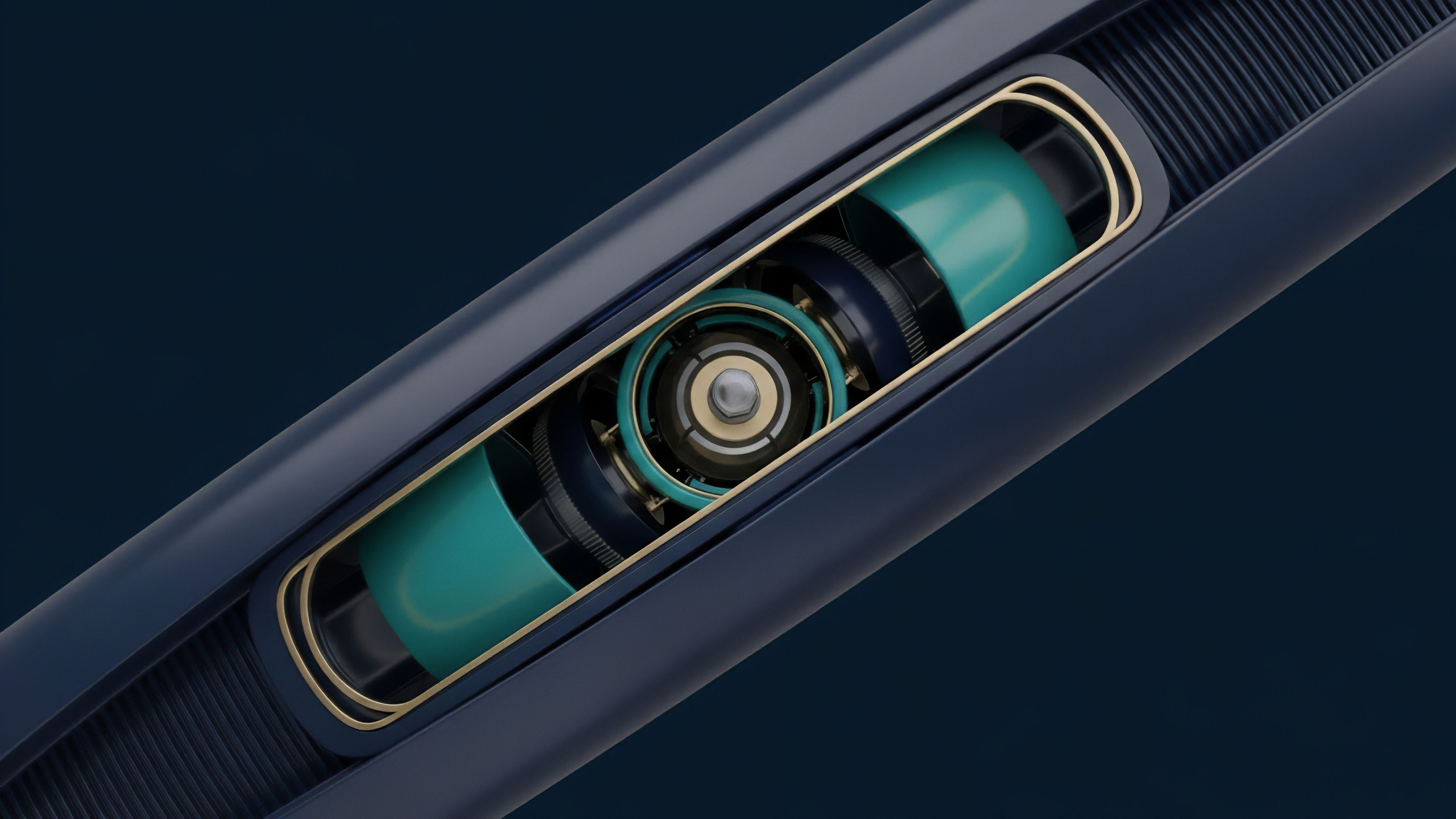

Optimistic Settlement Models

Another approach uses optimistic settlement models, where data is assumed to be correct unless challenged by another participant. This approach, often used in Layer 2 solutions, introduces a “challenge period” during which any participant can submit a proof that the data is incorrect. This lowers the cost of verification considerably, because nothing needs to be checked on-chain unless a dispute is raised, but it delays settlement by at least the length of the challenge period, which is an important constraint for short-term options contracts.
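The challenge-period logic can be sketched as a small state machine. The class `Proposal`, its fields, and the two-hour period are hypothetical, and dispute resolution itself (who decides, what is paid out) is deliberately not modeled.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Proposal:
    """A proposed settlement price that finalizes unless it is disputed."""
    price: float
    proposed_at: float       # timestamp in seconds
    challenge_period: float  # seconds during which disputes are accepted
    disputed: bool = False

    def dispute(self, now: float) -> None:
        """Flag the proposal as disputed if the window is still open."""
        if now >= self.proposed_at + self.challenge_period:
            raise RuntimeError("challenge period has ended")
        self.disputed = True

    def settled_price(self, now: float) -> Optional[float]:
        """Return the final price, or None if it is not final yet."""
        if self.disputed:
            return None  # would be escalated to dispute resolution (not modeled)
        if now < self.proposed_at + self.challenge_period:
            return None  # still inside the challenge window
        return self.price

# Hypothetical proposal with a two-hour challenge period.
proposal = Proposal(price=100.0, proposed_at=0.0, challenge_period=7200.0)
print(proposal.settled_price(now=3600.0))  # not final yet
print(proposal.settled_price(now=7200.0))  # final if nobody disputed
```

The settlement delay is visible directly in the code: no price is usable until the window closes, so the challenge period sets a lower bound on how short-dated an option settled this way can be.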

Evolution

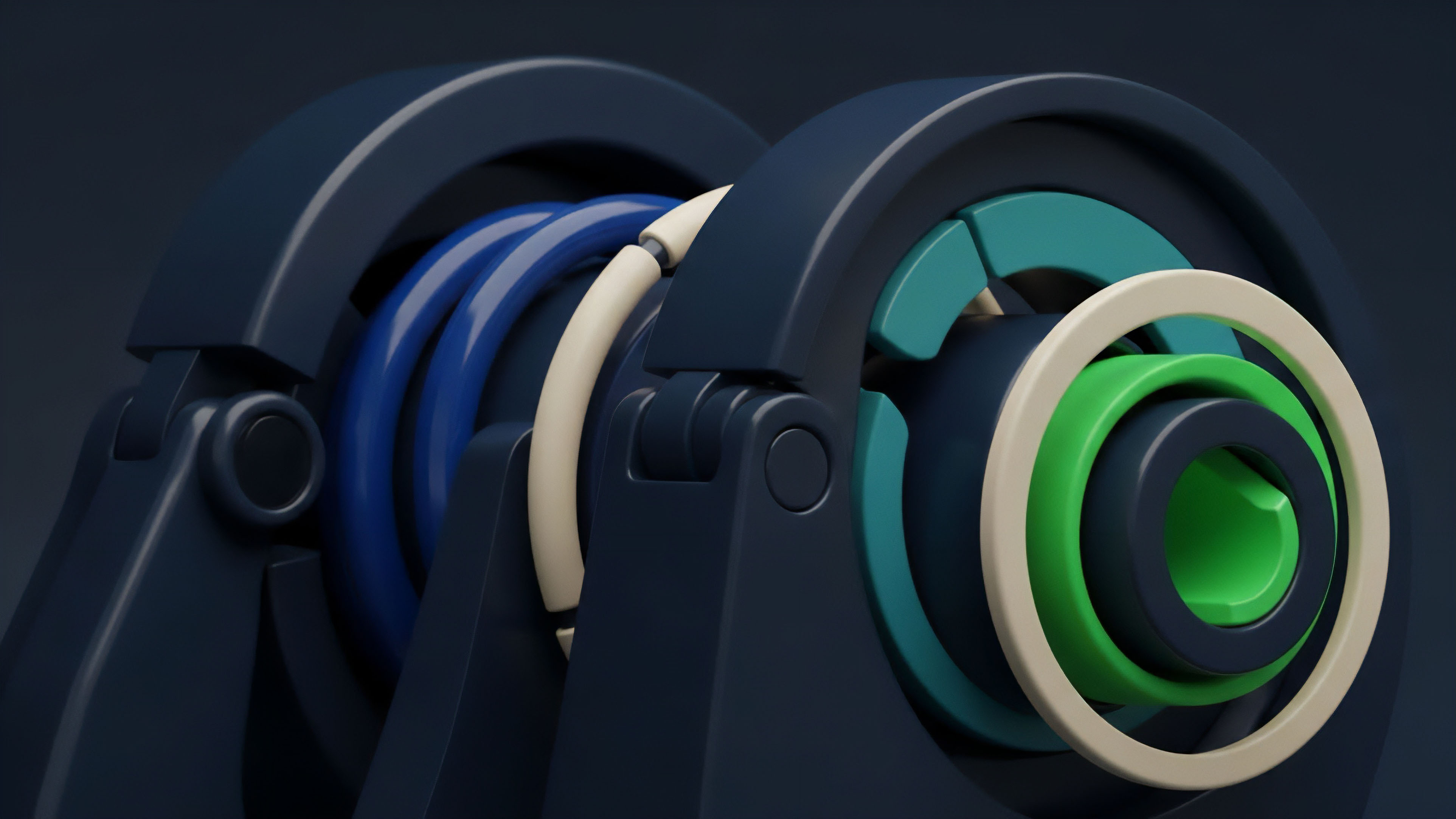

The evolution of data integrity verification is moving beyond simple economic incentives toward cryptographic certainty. The next generation of verification mechanisms leverages zero-knowledge proofs (ZK-proofs) to verify data authenticity without revealing the underlying data itself. This allows protocols to confirm that data originates from a legitimate source without having to trust the party that relays it.

Zero-Knowledge Oracles

Zero-knowledge proofs allow a data provider to prove that they have access to specific data from a reliable source without actually publishing the data on-chain. This enhances privacy and efficiency, as only the proof needs to be verified on the blockchain. This shift changes the security model from “economic deterrence” (staking) to “cryptographic certainty.” This approach is particularly relevant for options protocols dealing with real-world assets (RWAs) where data privacy is paramount.

Cross-Chain Verification

The fragmentation of liquidity across multiple blockchains requires data integrity verification to evolve beyond single-chain solutions. Cross-chain verification protocols allow a protocol on one chain to securely verify data from another chain. This enables options protocols to access liquidity and data from diverse sources without compromising security.

This also facilitates the creation of multi-asset derivatives that span different blockchain ecosystems.

The next generation of data integrity verification leverages zero-knowledge proofs to move beyond economic incentives toward cryptographic certainty, ensuring data authenticity without sacrificing privacy.

Horizon

The future of data integrity verification in decentralized options protocols points toward a fully abstracted, universal data layer where verification is a seamless, automated process. This data layer will not only provide price feeds but also complex financial parameters required for sophisticated derivatives, such as implied volatility surfaces and risk metrics. The long-term vision involves eliminating the concept of a “data feed” entirely by creating a self-verifying system where data is inherent to the protocol’s state transitions.

The primary challenge remaining is the integration of real-world assets (RWAs) into decentralized options. Verifying data for traditional financial instruments, such as real estate indices or commodity prices, introduces new complexities that current oracle designs are not equipped to handle. Handling them calls for a new class of verification mechanisms that can bridge the gap between the off-chain legal system and the on-chain cryptographic system.

It also demands a fundamental re-architecture of how we think about data ownership and authenticity. The ultimate goal is to create a financial operating system where data integrity is not a feature but a fundamental property of the network.

The Data Integrity Paradox

As data integrity mechanisms become more sophisticated, they risk becoming overly complex, creating new attack vectors or increasing costs. The paradox is that the more layers of verification we add to achieve certainty, the more fragile the system becomes due to increased complexity. The future lies in simplifying the verification process while maintaining security, potentially through a new consensus mechanism where data verification is inherent to block validation rather than a separate oracle layer.

Glossary

Solvency Verification

Data Stream Verification

Protocol Invariants Verification

Data Integrity Management

Solvency Verification Mechanisms

Margin Data Verification

Quantitative Model Verification

On-Chain Data Feed Integrity

Price Oracle Integrity