Essence

The integrity of a financial derivative is an illusion if the ancestry of its input data remains unverified. Proof of Data Provenance establishes a cryptographic audit trail that links every data point back to its original source, so that the information used for pricing, settlement, and risk management can be shown not to have been tampered with or misreported in transit. In decentralized marketplaces, where trust is replaced by verification, the ability to demonstrate the pedigree of a price feed or a trade execution becomes a baseline requirement for institutional participation.

The reliability of a derivative contract depends entirely on the verifiable accuracy of its underlying price feed.

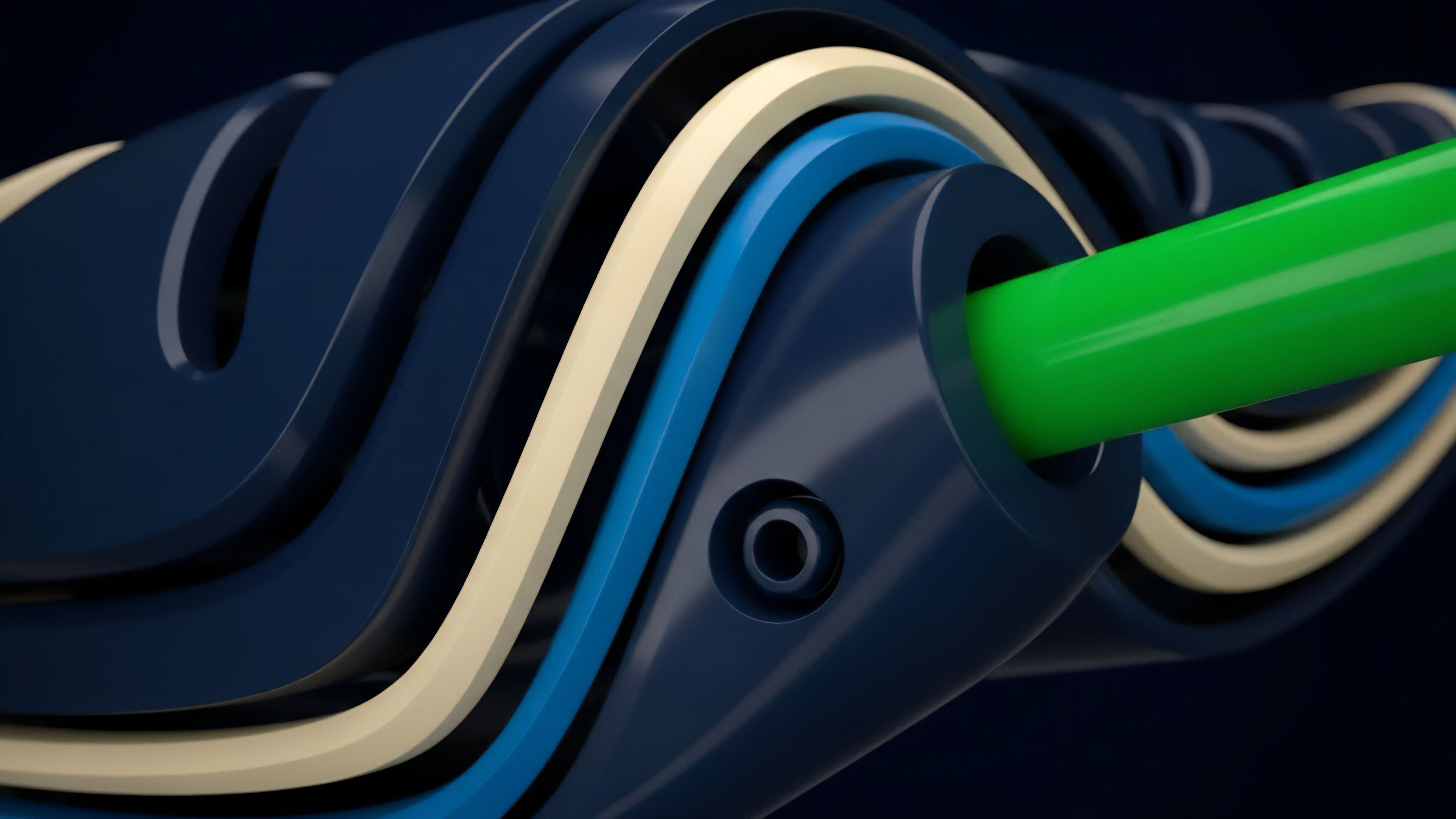

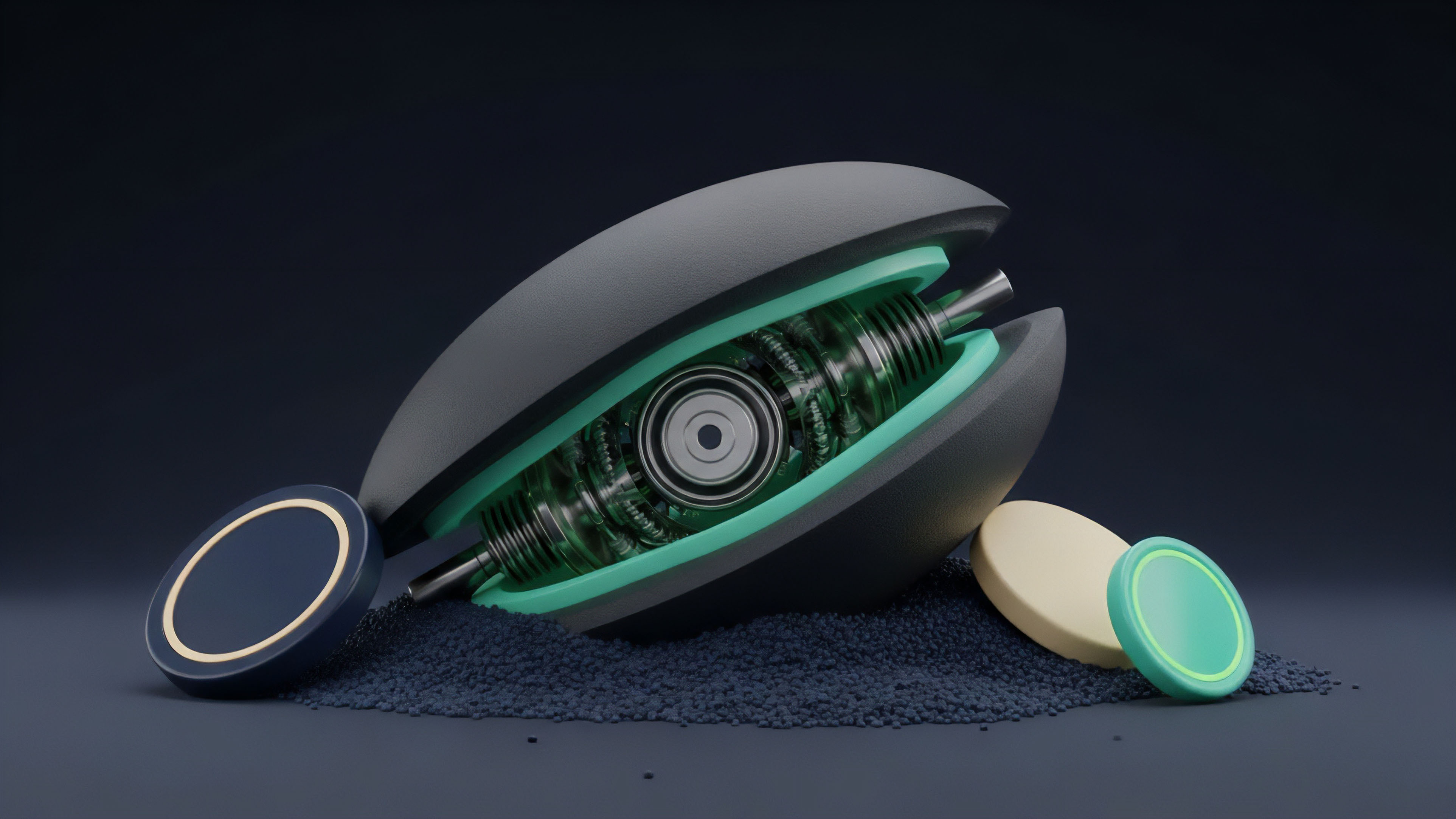

Within the architecture of automated market makers and options vaults, Proof of Data Provenance functions as the immune system of the protocol. It guards against the injection of malicious data, a common vector in oracle manipulation attacks, by requiring every update to carry a valid cryptographic signature and a history of its transformations. This process ensures that the Greeks, which measure the risk sensitivity of a portfolio, are calculated from authenticated, traceable inputs rather than distorted or stale information.

Systemic Integrity and Non-Repudiation

The essence of this mechanism lies in its ability to enforce non-repudiation across the entire lifecycle of a digital asset. When a trade is executed on a decentralized options exchange, the Proof of Data Provenance records the state of the order book and the external market conditions at the moment of execution. This level of granularity allows for a forensic reconstruction of market events, which is vital for resolving disputes and maintaining the stability of the financial system during periods of extreme volatility.

Verification over Trust

Unlike traditional finance, where auditors verify data months after the fact, Proof of Data Provenance operates in near real time. It uses hash functions and digital signatures to create a chain of custody that is visible to all participants. This transparency reduces the need for intermediaries to vouch for the data, because any participant can rerun the cryptographic checks independently.

The systemic result is a marketplace where the risk of data corruption is mitigated by the very structure of the information itself.

Origin

The requirement for verifiable data history arose from the early failures of electronic data interchange and the subsequent need for secure time-stamping in distributed systems. Proof of Data Provenance traces its lineage to the work of Leslie Lamport on logical clocks and the development of Merkle trees in the late 1970s. Logical clocks gave distributed systems a consistent ordering of events, and Merkle trees provided an efficient way to verify membership in large data sets without storing the entire history at every node.

Cryptographic ancestry transforms data from a liability into a verifiable financial asset.

As high-frequency trading and algorithmic execution became dominant in global markets, the cost of “dirty” data (information that is inaccurate, delayed, or manipulated) began to pose a systemic threat. The emergence of Bitcoin and later Ethereum provided the first practical environment where Proof of Data Provenance could be implemented at scale. By anchoring data to a decentralized ledger, developers could create financial instruments that were far less exposed to the localized failures of centralized data providers.

From Timestamps to Pedigree

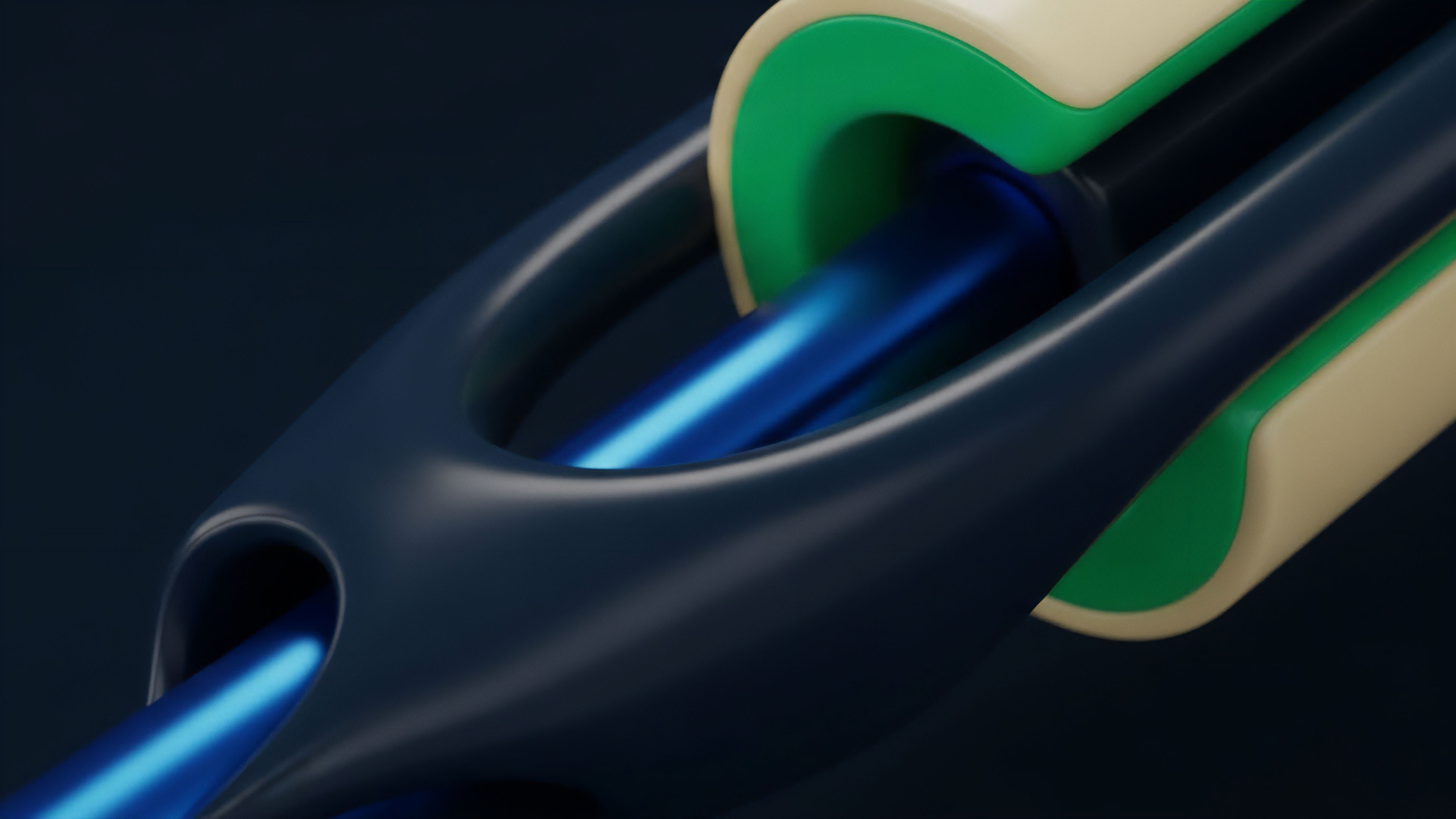

The transition from simple timestamping to complex provenance tracking was driven by the increasing complexity of derivative products. Early blockchain applications focused on simple transfers of value, but the rise of decentralized finance necessitated more sophisticated inputs. Proof of Data Provenance evolved to meet this demand, incorporating multi-signature requirements and decentralized oracle networks to ensure that no single point of failure could compromise the integrity of the data stream.

The Oracle Problem and the Search for Truth

The “Oracle Problem,” the challenge of bringing external data onto a blockchain securely, served as the primary driver for the refinement of provenance techniques. Market participants realized that a secure smart contract is useless if the data it consumes is flawed. This led to the development of protocols that prioritize the Proof of Data Provenance, using economic incentives and cryptographic penalties to ensure that data providers remain honest.

Theory

The mathematical foundation of Proof of Data Provenance rests on the properties of one-way hashing functions and the efficiency of inclusion proofs.

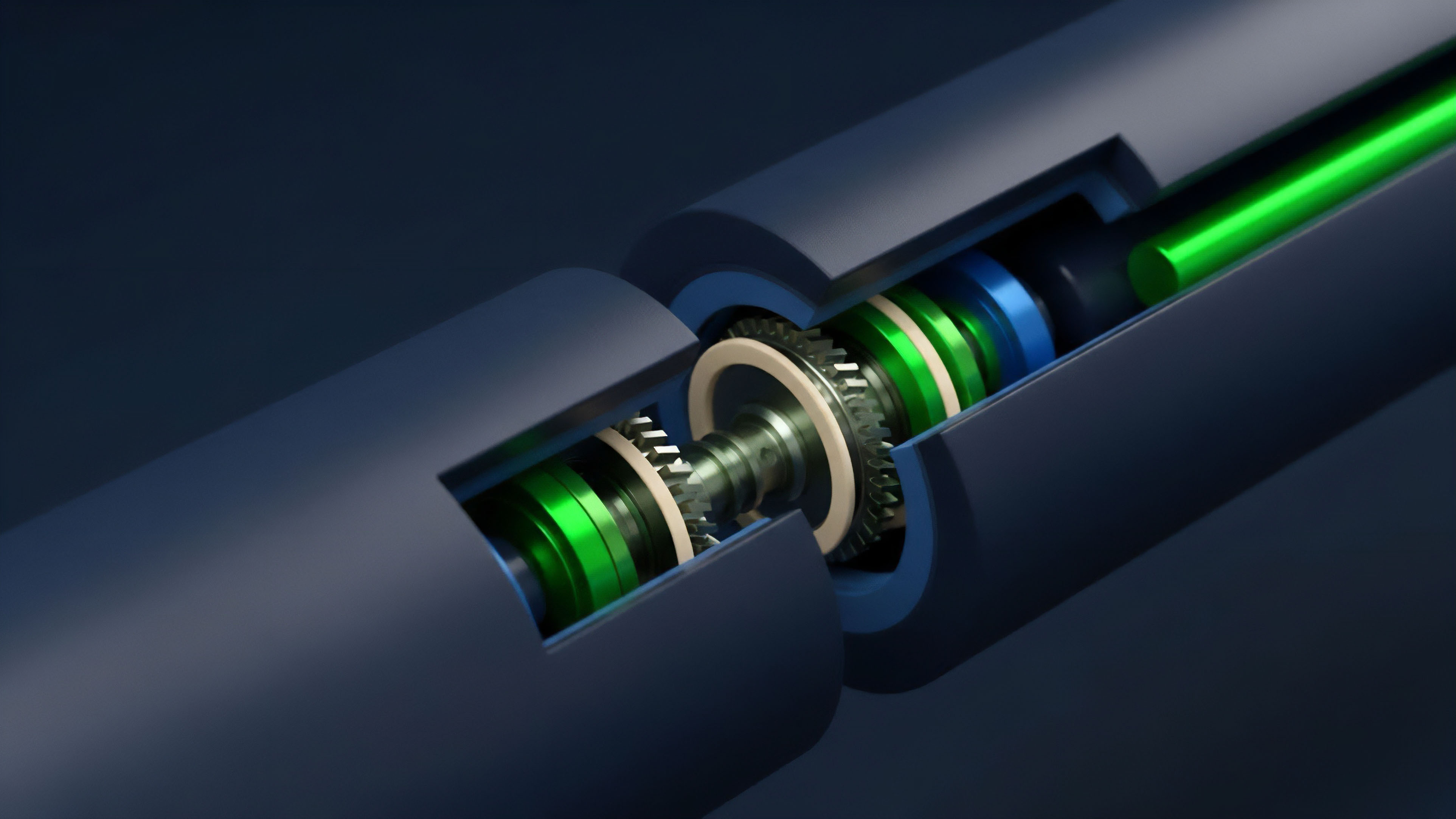

Every piece of data is hashed, meaning it is mapped to a fixed-length digest that is, for practical purposes, unique to that input, and then combined with other hashes to form a Merkle tree. This structure allows a participant to verify that a specific data point belongs to a larger set by examining only the hashes along one path from leaf to root. For a set of n data points the proof contains O(log n) hashes, and this efficiency is what enables real-time verification in high-throughput environments.
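The inclusion check described above can be sketched in a few lines of Python. This is a minimal illustration, not any particular protocol's tree format: the function names, the `\x00` and `\x01` domain prefixes, and the choice to duplicate the last node on odd-sized levels are all assumptions made for the example.

```python
import hashlib


def h(data: bytes) -> bytes:
    """SHA-256 digest of the input bytes."""
    return hashlib.sha256(data).digest()


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Return every (padded) level of the tree, from hashed leaves to the root."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [h(b"\x00" + leaf) for leaf in leaves]  # hypothetical leaf prefix
    levels = []
    while True:
        if len(level) > 1 and len(level) % 2 == 1:
            level = level + [level[-1]]  # simplification: duplicate the last node
        levels.append(level)
        if len(level) == 1:
            return levels
        level = [h(b"\x01" + level[i] + level[i + 1])
                 for i in range(0, len(level), 2)]


def prove(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Collect one sibling hash per level: (hash, sibling_is_left)."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the path from the leaf to the root using only the proof."""
    node = h(b"\x00" + leaf)
    for sibling, sibling_is_left in proof:
        pair = sibling + node if sibling_is_left else node + sibling
        node = h(b"\x01" + pair)
    return node == root


# Usage: five price ticks, prove and check the third one.
ticks = [f"ETH-USD|{1900 + i}".encode() for i in range(5)]
levels = build_levels(ticks)
root = levels[-1][0]
assert verify(ticks[2], prove(levels, 2), root)
assert not verify(b"ETH-USD|9999", prove(levels, 2), root)
```

The verifier only needs the leaf, the root, and one sibling hash per level, which is where the logarithmic proof size comes from.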

Market efficiency is a direct function of the transparency and immutability of information origins.

In the context of quantitative finance, Proof of Data Provenance ensures the stability of the pricing engine. If the delta or gamma of an option is calculated using a manipulated spot price, the resulting hedge will be ineffective, leading to potential liquidation. By integrating provenance into the pricing model, the system can automatically reject data that does not meet the required threshold of cryptographic validity.
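As a rough sketch of what "integrating provenance into the pricing model" might look like, the example below refuses to compute a Black-Scholes call delta unless the spot price arrives with enough verified signatures and is recent enough. The `PriceUpdate` fields, the `RejectedUpdate` exception, and the threshold defaults are hypothetical; signature verification itself is assumed to have happened upstream.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceUpdate:
    spot: float
    valid_signatures: int  # assumption: counted by an upstream verifier
    age_seconds: float     # time since the update was signed


class RejectedUpdate(Exception):
    """Raised when an update fails the provenance threshold."""


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_delta(spot: float, strike: float, vol: float,
               t_years: float, rate: float = 0.0) -> float:
    """Black-Scholes delta of a European call with no dividends."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (
        vol * math.sqrt(t_years))
    return norm_cdf(d1)


def guarded_call_delta(update: PriceUpdate, strike: float, vol: float,
                       t_years: float, min_signatures: int = 3,
                       max_age_seconds: float = 5.0) -> float:
    """Compute delta only if the spot price meets the provenance threshold."""
    if update.valid_signatures < min_signatures:
        raise RejectedUpdate("too few verified signatures")
    if update.age_seconds > max_age_seconds:
        raise RejectedUpdate("price update is stale")
    return call_delta(update.spot, strike, vol, t_years)
```

Raising an exception rather than falling back to the last known price is a deliberate choice in this sketch: a hedge sized from an unverified spot is the failure the paragraph above warns about.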

This is loosely analogous to thermodynamics in physical systems, where entropy must be managed to prevent the collapse of a structure.

Cryptographic Primitives and Inclusion

The use of Zero-Knowledge Proofs (ZKP) represents the current peak of provenance theory. ZKPs allow a data provider to prove the validity and origin of a piece of information without revealing the underlying data itself. This is vital for privacy-preserving derivatives trading, where a firm may want to prove they have the necessary collateral or that their trade was executed at a fair market price without exposing their proprietary strategy.

| Verification Method | Computational Cost | Security Level | Primary Use Case |
|---|---|---|---|
| Merkle Inclusion Proofs | Low | High | Oracle price feeds |
| Zero-Knowledge Proofs | High | Extreme | Privacy-preserving trades |
| Digital Signatures | Medium | Medium | Individual trade execution |
| Hashing Chains | Very Low | High | Historical audit logs |

Entropy and Information Stability

Information in a decentralized system tends toward disorder if not actively anchored. Proof of Data Provenance acts as a stabilizing force, ensuring that the state of the market remains consistent across all nodes. This theoretical framework treats data as a physical object with a specific history: because each record commits to the hash of the one before it, retroactively altering or duplicating an entry breaks every later link in the chain and is therefore detectable.
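A hash chain of this kind can be sketched as follows. The record layout, the all-zero `GENESIS` value, and the function names are assumptions chosen for illustration rather than a specific ledger format.

```python
import hashlib
import json

GENESIS = "0" * 64  # hypothetical placeholder for "no previous entry"


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical payload encoding."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


def append(log: list[dict], payload: dict) -> None:
    """Append a record that commits to the current tip of the chain."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev, "payload": payload,
                "hash": entry_hash(prev, payload)})


def first_broken_link(log: list[dict]):
    """Return the index of the first inconsistent entry, or None if intact."""
    prev = GENESIS
    for i, entry in enumerate(log):
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["payload"]):
            return i
        prev = entry["hash"]
    return None


# Usage: tampering with an old record is detected at that record.
log: list[dict] = []
for price in (1900, 1901, 1902):
    append(log, {"feed": "ETH-USD", "price": price})
assert first_broken_link(log) is None
log[1]["payload"]["price"] = 1
assert first_broken_link(log) == 1
```

Even if an attacker also recomputes the hash of the altered entry, the next entry's `prev` field no longer matches, so the break simply moves one position later.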

Approach

Current implementations of Proof of Data Provenance utilize a combination of decentralized oracle networks and on-chain verification contracts.

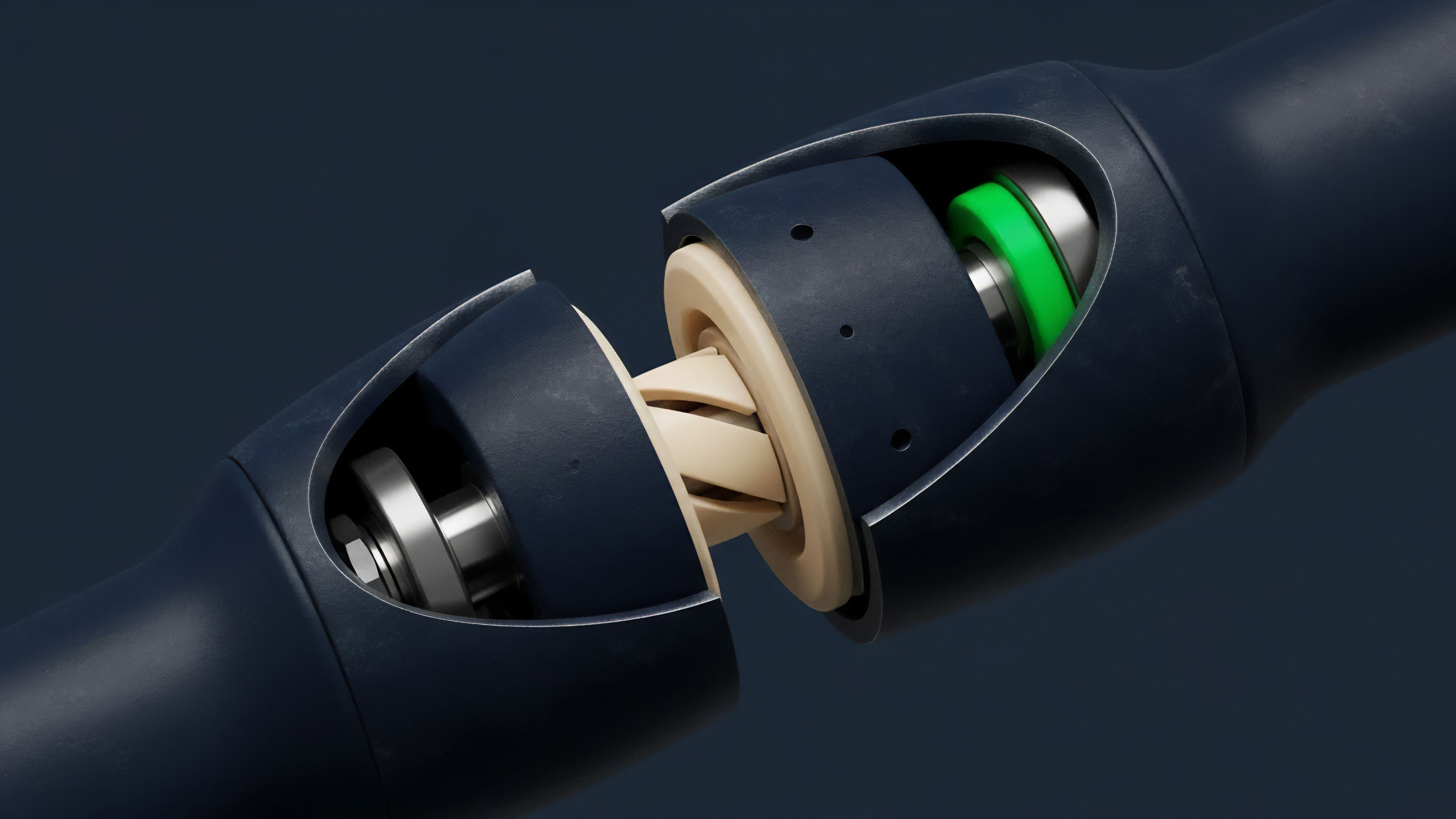

Oracle networks such as Chainlink and Pyth aggregate reports from many independent nodes or publishers, and designs in this space may use “commit-reveal” schemes or reputation-based aggregation models to ensure that the data delivered to a smart contract is accurate. The Proof of Data Provenance is generated by multiple independent nodes, each providing a signed attestation of the data it has retrieved from various sources.

- Attestation Generation: Data providers retrieve information from multiple APIs and sign the result with a private key.

- Aggregation and Consensus: A decentralized network of nodes compares the signed data, discards outliers, and computes an aggregate such as a median or weighted average.

- On-Chain Verification: The aggregated data, along with the Proof of Data Provenance, is submitted to a smart contract that verifies the signatures before updating the price feed.

- Dispute Resolution: If the data is later found to be incorrect, the provenance trail allows the system to identify and penalize the malicious providers.
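The four steps above can be sketched end to end in Python. Two simplifications are assumed and should be read as such: HMAC-SHA256 from the standard library stands in for the asymmetric signatures (for example ECDSA or Ed25519) that a real network would use so that verifiers never hold signing keys, and the aggregate is an equal-weighted mean of the values that survive a median-based outlier filter. All names, the quorum, and the deviation threshold are hypothetical.

```python
import hashlib
import hmac
import statistics


def _message(feed: str, price: int, ts: int) -> bytes:
    return f"{feed}|{price}|{ts}".encode()


def attest(provider: str, key: bytes, feed: str, price: int, ts: int) -> dict:
    """Step 1: a provider tags the value it retrieved (HMAC stands in for a signature)."""
    tag = hmac.new(key, _message(feed, price, ts), hashlib.sha256).hexdigest()
    return {"provider": provider, "feed": feed, "price": price, "ts": ts, "tag": tag}


def is_authentic(att: dict, keys: dict[str, bytes]) -> bool:
    """Step 3 check: recompute the tag with the provider's registered key."""
    key = keys.get(att["provider"])
    if key is None:
        return False
    expected = hmac.new(key, _message(att["feed"], att["price"], att["ts"]),
                        hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, att["tag"])


def aggregate(attestations: list[dict], keys: dict[str, bytes],
              quorum: int = 3, max_deviation: float = 0.02) -> dict:
    """Steps 2 to 4: filter, aggregate, and report who signed and who was flagged."""
    # Keep at most one authentic attestation per provider.
    valid = {a["provider"]: a for a in attestations if is_authentic(a, keys)}
    if len(valid) < quorum:
        raise ValueError("quorum of authentic attestations not met")
    mid = statistics.median(a["price"] for a in valid.values())
    kept = {p: a for p, a in valid.items()
            if abs(a["price"] - mid) <= max_deviation * mid}
    return {
        "price": statistics.fmean(a["price"] for a in kept.values()),
        "signers": sorted(kept),                     # provenance of the result
        "flagged": sorted(set(valid) - set(kept)),   # candidates for dispute
    }
```

The `signers` and `flagged` lists are the part that makes dispute resolution possible: the aggregate price carries a record of which providers stood behind it and which submitted authentic but outlying values.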

Push vs. Pull Models

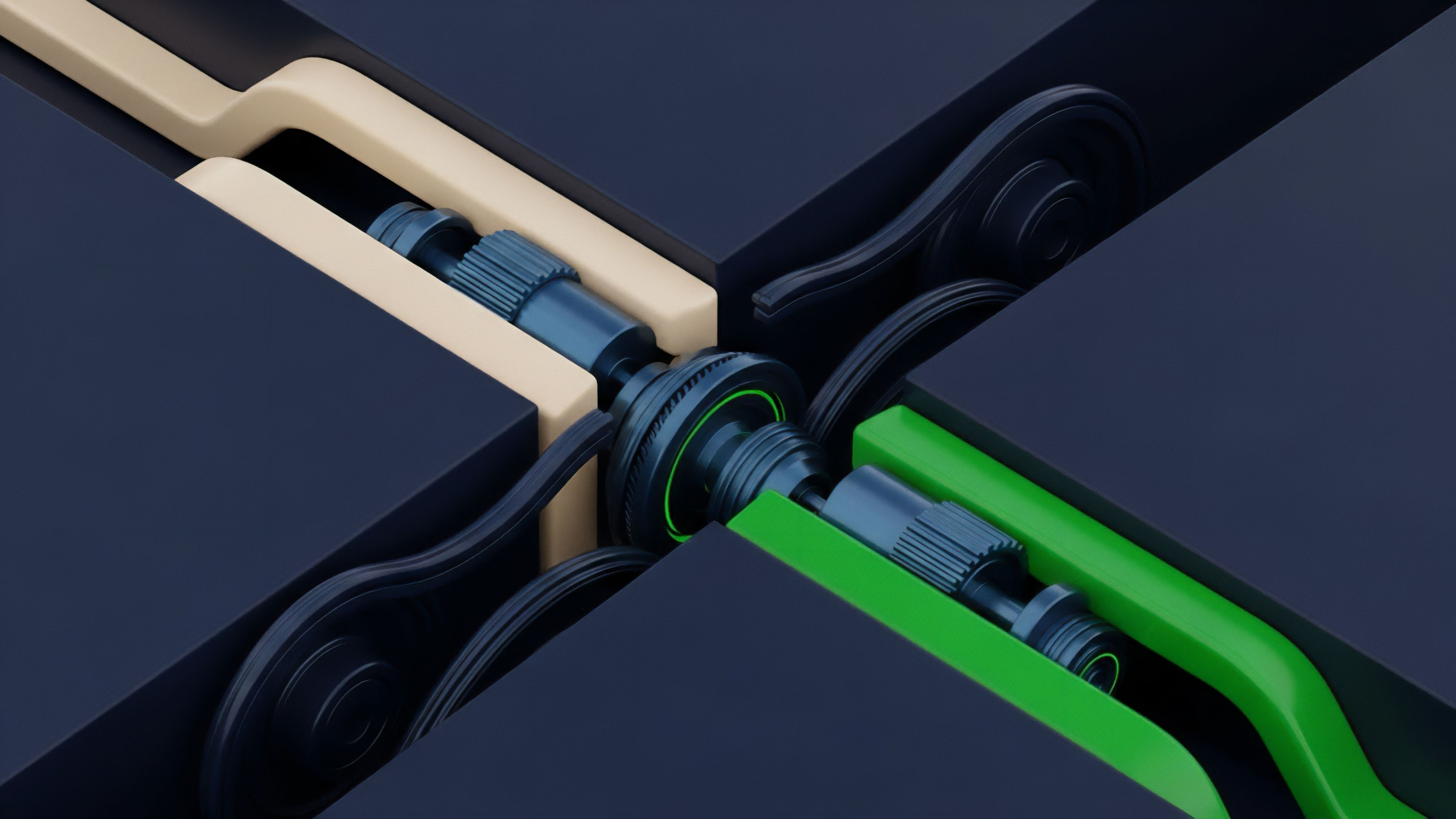

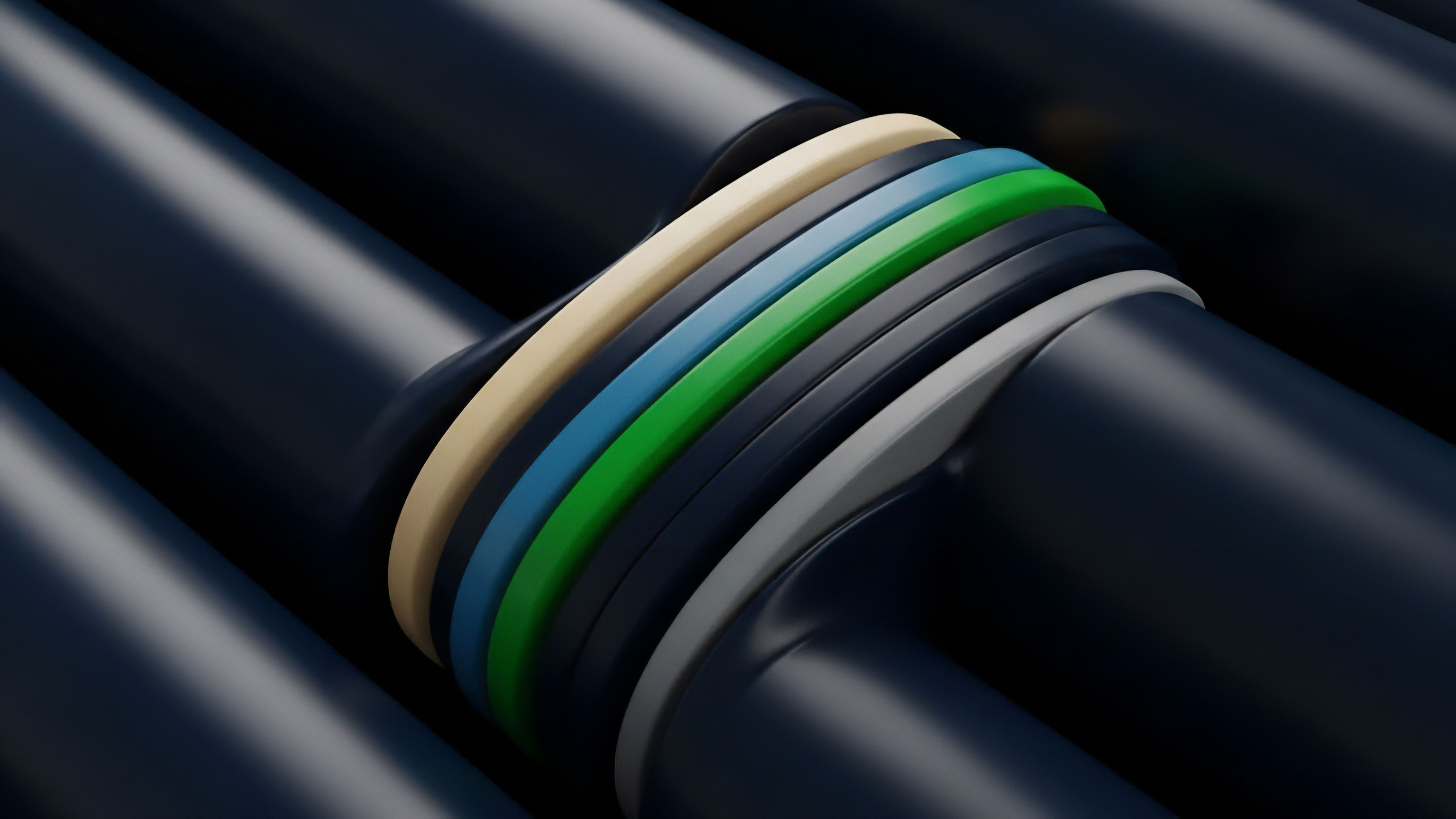

The methodology for delivering provenance data varies based on the needs of the derivative protocol. “Push” models broadcast data at regular intervals, which is suitable for assets with consistent liquidity. “Pull” models, however, require the user to request a price update and provide the Proof of Data Provenance at the time of execution.

This latter method is increasingly preferred for options trading, as it reduces latency and ensures that the price used for settlement is as close to the execution time as possible.

| Model Type | Latency Profile | Cost Efficiency | Provenance Strength |
|---|---|---|---|
| Push Model | Higher | Lower (shared cost) | High (periodic) |
| Pull Model | Lower | Higher (user-paid) | Very High (on-demand) |
| Hybrid Model | Medium | Medium | Adaptive |
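A pull-style settlement can be sketched as below: the caller supplies the price update and its proof at execution time, and the settlement logic checks both the proof and the age of the update before using the price. The `SignedUpdate` fields, the `verify` callback, and the five-second window are illustrative assumptions, not the interface of any specific oracle.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class SignedUpdate:
    feed: str
    price: int         # price in minor units, e.g. cents
    publish_time: int  # Unix seconds when the update was signed
    proof: bytes       # opaque provenance proof, checked by `verify`


class StaleOrInvalidUpdate(Exception):
    """Raised when the supplied update cannot be used for settlement."""


def settle_call(update: SignedUpdate, strike: int, now: int,
                verify: Callable[[SignedUpdate], bool],
                max_age_seconds: int = 5) -> int:
    """Settle a call option against a user-supplied, freshly verified price."""
    if not verify(update):
        raise StaleOrInvalidUpdate("proof failed verification")
    age = now - update.publish_time
    if age < 0 or age > max_age_seconds:
        raise StaleOrInvalidUpdate(f"update age {age}s is outside the allowed window")
    return max(update.price - strike, 0)  # intrinsic value at settlement
```

Injecting `verify` keeps the freshness rule independent of the proof scheme, so the same check works whether the proof is a set of signatures, a Merkle path, or something else.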

Risk Mitigation in Data Transmission

A primary risk in any provenance system is the man-in-the-middle attack, where data is intercepted and altered before it reaches the blockchain. To counter this, some Proof of Data Provenance systems run data retrieval and signing inside Trusted Execution Environments (TEEs) such as Intel SGX. These hardware-backed enclaves are designed to prevent even the node operator from tampering with the information before it is signed and sent to the network, although enclaves have their own attack surface, such as side channels, and are best treated as one layer of defense.

Evolution

The transition from centralized data silos to decentralized provenance has been marked by a shift in the power dynamics of financial information.

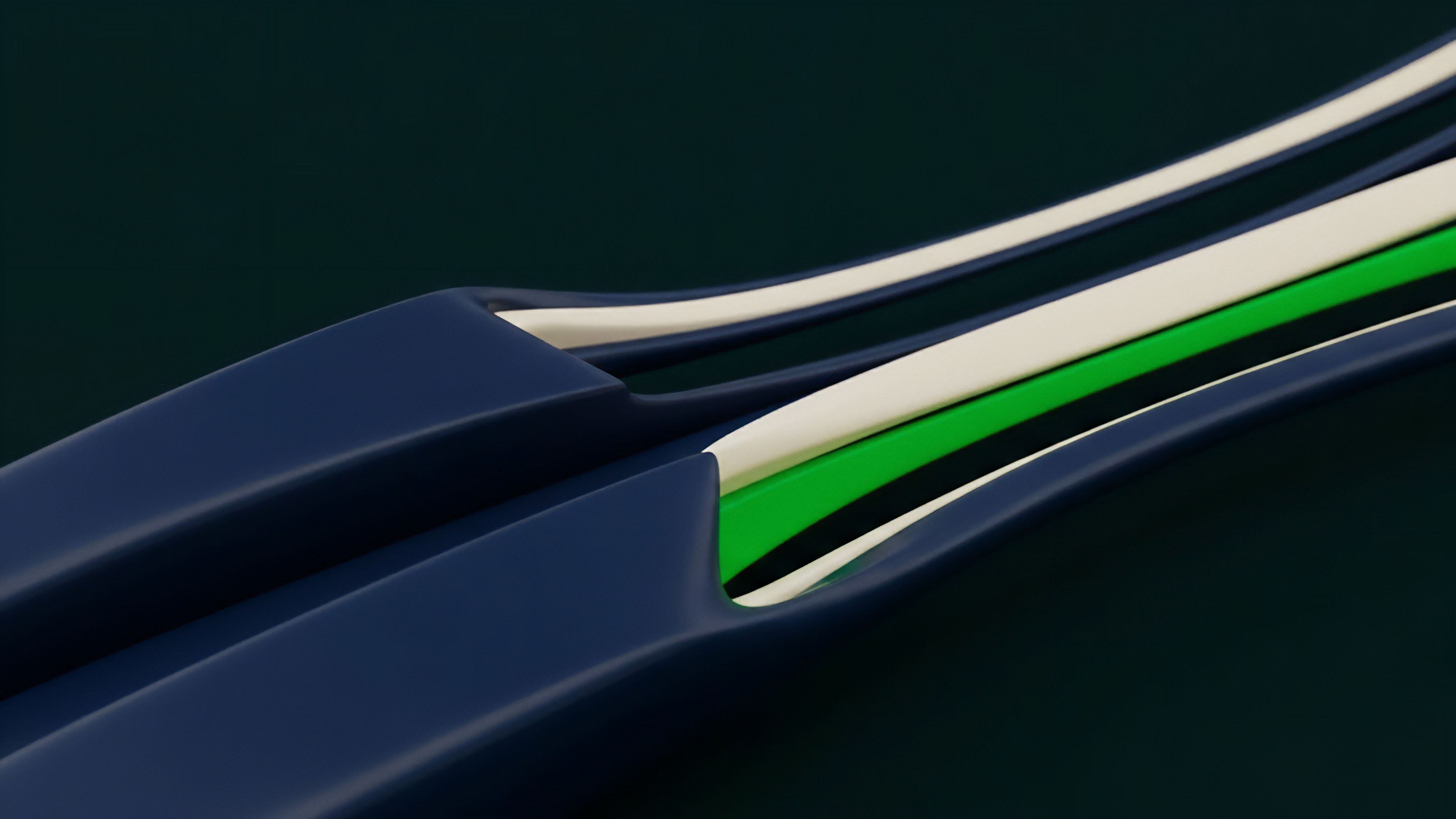

In the early days of crypto, traders relied on a single exchange’s API for pricing, which created significant risks of flash crashes and manipulation. The introduction of Proof of Data Provenance changed this by distributing the responsibility for data integrity across a global network of participants, making the system more resilient to localized failures. Alongside this structural change, the technical sophistication of provenance tracking has increased.

Early systems only recorded the final price, but modern protocols record the entire path the data took, from the original exchange through the aggregation layer and finally to the smart contract. This “deep provenance” allows for a much higher level of scrutiny and enables the creation of more complex derivatives that depend on the historical volatility or the volume-weighted average price of an asset.

The Shift to Zero-Knowledge

The most significant change in the recent history of Proof of Data Provenance is the adoption of zero-knowledge technology. By using zk-STARKs or zk-SNARKs, protocols can provide a Proof of Data Provenance that is succinct and cheap to verify compared with re-checking every underlying signature or hash. This can reduce the gas costs associated with on-chain verification, making it more practical to track the provenance of thousands of data points per second.

This efficiency is vital for the scaling of decentralized options platforms that require frequent updates to maintain accurate pricing.

Institutional Compliance and Transparency

As regulatory scrutiny of the digital asset space increases, Proof of Data Provenance has taken on a new role as a tool for compliance. Institutional investors require a clear audit trail for every trade to satisfy internal risk management and external regulatory requirements. The ability to provide a cryptographic Proof of Data Provenance for every transaction allows decentralized protocols to meet these standards without sacrificing the privacy or autonomy of their users.

Horizon

The future of Proof of Data Provenance lies at the intersection of artificial intelligence and decentralized infrastructure.

As AI-generated data becomes more prevalent in financial markets, the need to distinguish between human-verified information and machine-generated synthetic data will become imperative. Proof of Data Provenance will evolve to include “Proof of Computation,” ensuring that the models used to generate financial forecasts or risk assessments are themselves verifiable and have not been tampered with.

Furthermore, the expansion of cross-chain interoperability will require a new form of “Universal Provenance.” In a future where assets move seamlessly between different blockchains, the Proof of Data Provenance must be able to traverse these boundaries without losing its integrity. This will likely involve the use of recursive ZK-proofs, where a proof of a proof is used to maintain the chain of custody across multiple layers and networks.

Dark Pools and Private Provenance

The development of decentralized dark pools will rely heavily on advanced Proof of Data Provenance. These venues require participants to prove they have the assets and the authority to trade without revealing their identity or the size of their position. By using Proof of Data Provenance in conjunction with multi-party computation, these platforms aim to offer integrity guarantees comparable to those of public exchanges while maintaining the strong privacy required by large institutional players.

The End of Information Asymmetry

Ultimately, the widespread adoption of Proof of Data Provenance should lead to a significant reduction in information asymmetry. When every participant has access to the same verifiable history of an asset, the advantage held by centralized intermediaries and insiders is diminished. This promotes a more level playing field, where the success of a trading strategy depends on the quality of the analysis rather than the exclusivity of the data. The financial system of the future will be built on a base layer of cryptographic truth, where Proof of Data Provenance serves as the ultimate arbiter of value.
