Essence

Computational sovereignty requires the ability to prove the validity of operations executed outside the restricted environment of a blockchain virtual machine. Off-Chain Computation Verification represents the cryptographic guarantee that external data processing adheres to a specific, pre-defined logic without requiring every node to re-execute the task. This architectural shift addresses the bottleneck of synchronous execution, allowing decentralized protocols to handle the high-frequency calculations required for sophisticated financial instruments.

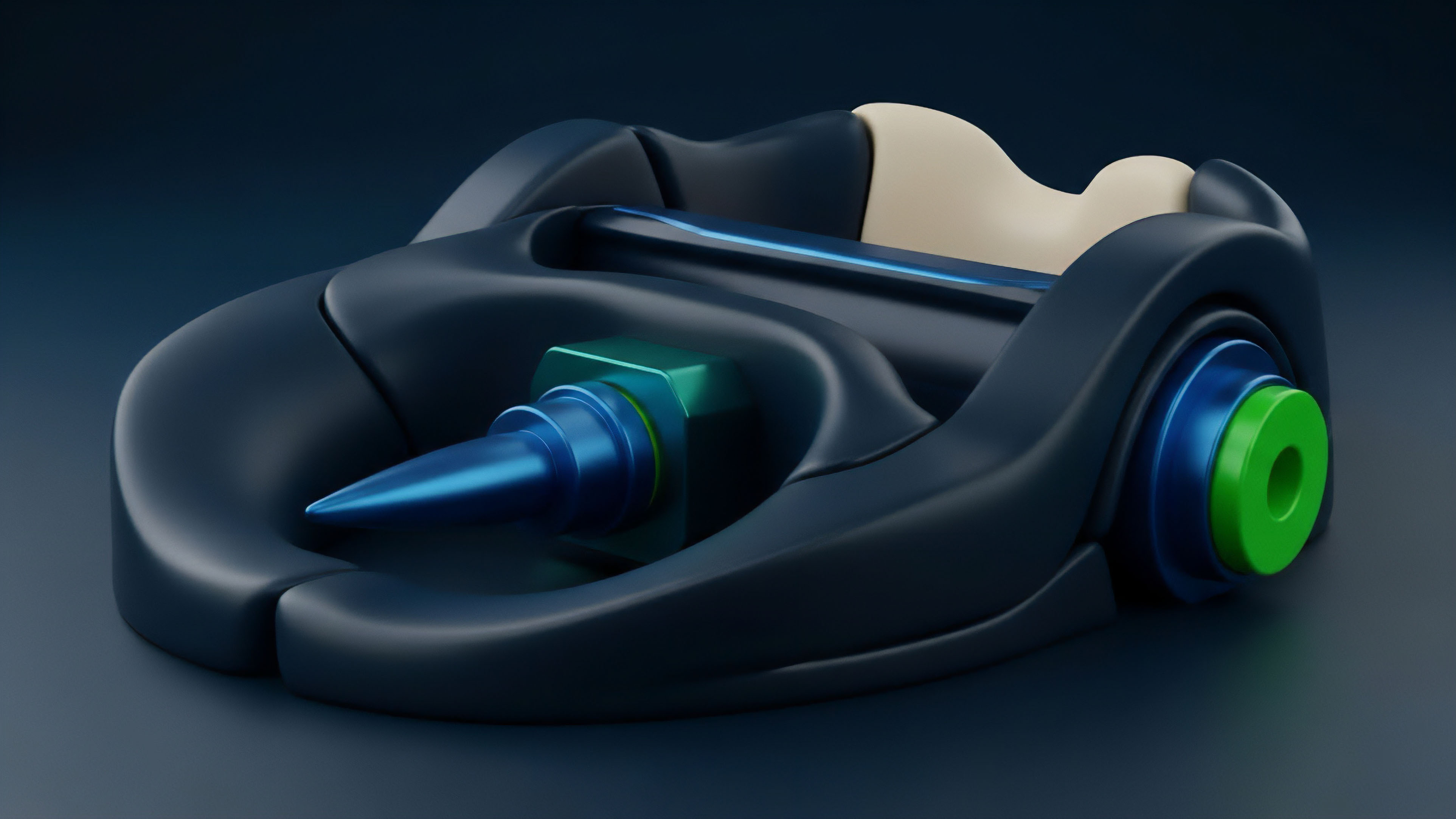

Off-Chain Computation Verification establishes a mathematical link between external processing and on-chain settlement.

Trustless systems face a choice between extreme latency and centralized reliance. By utilizing Off-Chain Computation Verification, developers move heavy lifting, such as Black-Scholes pricing or complex margin calculations, to specialized environments while maintaining the security properties of the base layer. This creates a modular stack where execution is separated from settlement, so the integrity of the ledger remains intact even as the complexity of the financial products increases.
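To give a sense of the kind of calculation being moved off-chain, the following is a minimal Python sketch of the standard Black-Scholes formula for a European option. The function and parameter names are illustrative assumptions, not taken from any particular protocol. The logarithm, exponential, and normal CDF it relies on are the operations that tend to be costly to evaluate inside a blockchain virtual machine.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes_price(spot: float, strike: float, rate: float,
                        vol: float, expiry: float, is_call: bool = True) -> float:
    """Black-Scholes price of a European option with no dividends.

    spot, strike: prices in the same currency unit
    rate: continuously compounded risk-free rate (annualized)
    vol: annualized volatility
    expiry: time to expiry in years
    """
    sqrt_t = math.sqrt(expiry)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * expiry) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discounted_strike = strike * math.exp(-rate * expiry)
    if is_call:
        return spot * norm_cdf(d1) - discounted_strike * norm_cdf(d2)
    return discounted_strike * norm_cdf(-d2) - spot * norm_cdf(-d1)
```

For an at-the-money call with `spot=100`, `strike=100`, `rate=0.05`, `vol=0.2`, `expiry=1.0`, this returns roughly 10.45.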

Origin

The demand for sophisticated risk engines in decentralized finance exposed the limitations of early blockchain designs.

Initial decentralized exchanges functioned as simple automated market makers, but the transition to professional-grade options and derivatives necessitated a higher computational ceiling. The high gas costs and block time constraints of Layer 1 networks made real-time volatility surface adjustments and complex liquidation auctions unfeasible.

Early protocol limitations necessitated the development of verifiable external execution environments.

Architects looked toward cryptographic research to solve this impasse. The move toward Off-Chain Computation Verification was accelerated by the realization that scaling via simple throughput increases would lead to centralization. Instead, the industry shifted toward validity proofs and optimistic execution models.

These methods allow the protocol to verify a proof of computation rather than the computation itself, effectively decoupling the cost of verification from the complexity of the task.
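The decoupling can be made concrete with a rough cost comparison. The symbols here are illustrative assumptions: $N$ is the number of verifying nodes, $T$ the number of steps in the computation, $P(T)$ the one-time cost of generating a proof, and $V(T)$ the cost of checking it.

$$
\text{Cost}_{\text{re-execution}} \approx N \cdot T, \qquad
\text{Cost}_{\text{proof}} \approx P(T) + N \cdot V(T), \qquad V(T) \ll T
$$

Proof generation is typically more expensive than running the computation once, so $P(T) \ge T$, but that cost is paid by a single prover. Every other node pays only $V(T)$, which grows far more slowly than $T$.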

Theory

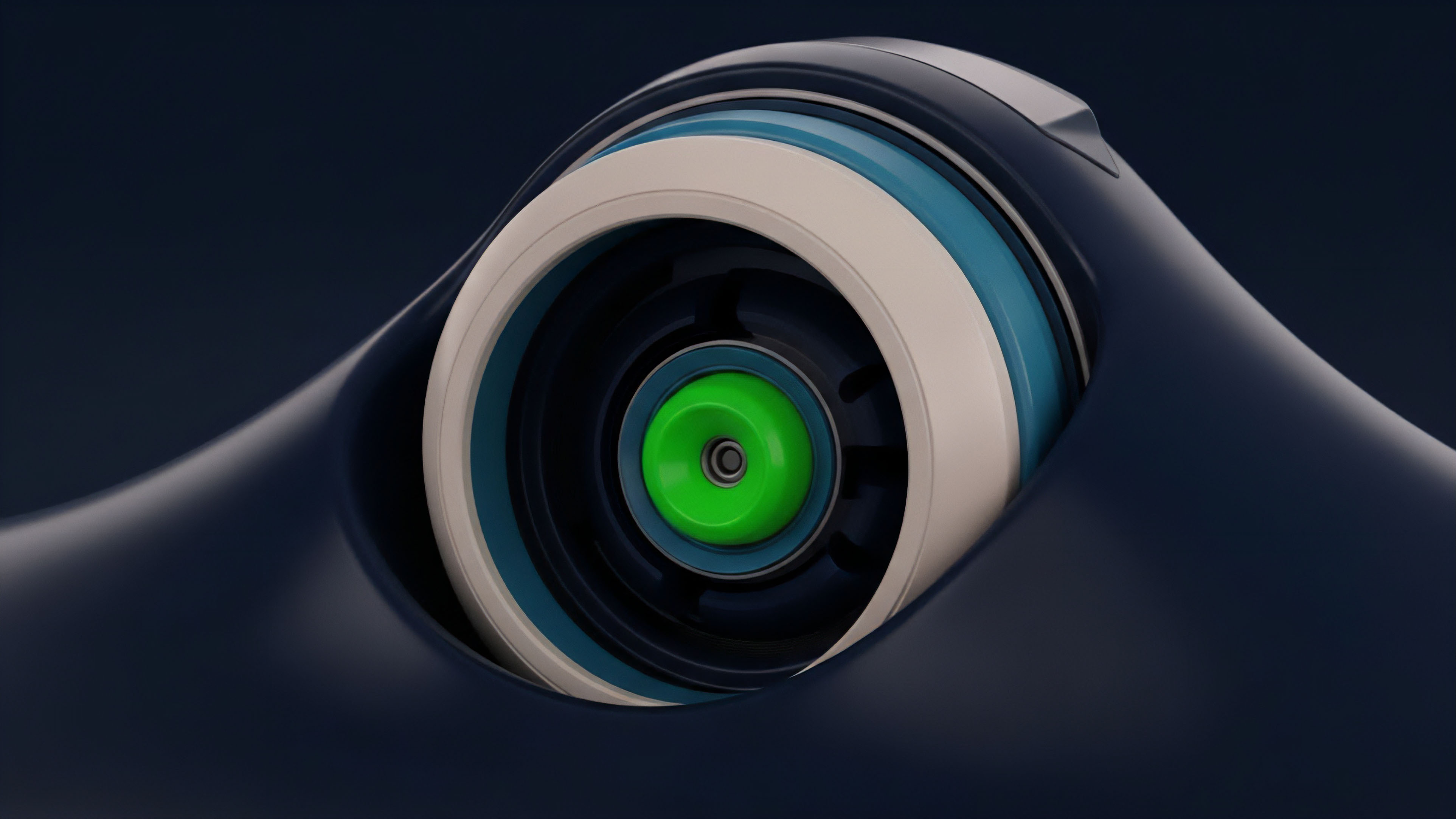

The mathematical foundation of Off-Chain Computation Verification rests on the ability to represent a program as a set of algebraic constraints. Through a process of arithmetization, logic is converted into polynomials over a finite field. A prover generates a succinct proof demonstrating that they know a witness that satisfies these constraints.

The verifier, located on-chain, can then confirm this proof in time that is constant or polylogarithmic in the size of the original computation, depending on the proof system.

- Polynomial Commitments: Cryptographic schemes that allow a prover to commit to a polynomial and later prove its evaluation at specific points.

- Arithmetization: The transformation of computer code into a system of equations that can be verified cryptographically.

- Proof Aggregation: The technique of combining multiple proofs into a single proof to reduce on-chain verification costs.
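As a small illustration of arithmetization, the sketch below encodes the statement "I know x such that x³ + x + 5 = 35" as a rank-1 constraint system (R1CS), one common constraint format. The field modulus, the variable ordering, and all names are assumptions chosen for the example. The constraints are checked in the clear here; a real proof system would instead produce a succinct proof that a satisfying witness exists.

```python
# Small prime field for illustration only; production systems use far larger fields.
P = 2**31 - 1

# Witness layout (an assumption of this sketch): [1, x, out, sym1, y, sym2]
#   sym1 = x * x,  y = sym1 * x,  sym2 = y + x,  out = sym2 + 5
A = [
    [0, 1, 0, 0, 0, 0],  # x
    [0, 0, 0, 1, 0, 0],  # sym1
    [0, 1, 0, 0, 1, 0],  # y + x
    [5, 0, 0, 0, 0, 1],  # sym2 + 5
]
B = [
    [0, 1, 0, 0, 0, 0],  # x
    [0, 1, 0, 0, 0, 0],  # x
    [1, 0, 0, 0, 0, 0],  # 1
    [1, 0, 0, 0, 0, 0],  # 1
]
C = [
    [0, 0, 0, 1, 0, 0],  # sym1
    [0, 0, 0, 0, 1, 0],  # y
    [0, 0, 0, 0, 0, 1],  # sym2
    [0, 0, 1, 0, 0, 0],  # out
]


def dot(row, witness):
    """Inner product of a constraint row and the witness, reduced mod P."""
    return sum(a * w for a, w in zip(row, witness)) % P


def satisfies(A, B, C, witness):
    """True if (A_i . w) * (B_i . w) == (C_i . w) mod P for every constraint i."""
    return all(
        dot(a, witness) * dot(b, witness) % P == dot(c, witness)
        for a, b, c in zip(A, B, C)
    )


def build_witness(x):
    """Execute the program x**3 + x + 5 and record every intermediate value."""
    sym1 = x * x % P
    y = sym1 * x % P
    sym2 = (y + x) % P
    out = (sym2 + 5) % P
    return [1, x, out, sym1, y, sym2]
```

With `x = 3` the witness is `[1, 3, 35, 9, 27, 30]` and all four constraints hold. Changing the claimed output to 36 while leaving the intermediates untouched violates the last constraint, which is the sense in which a false result cannot satisfy the system.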

The efficiency of these systems is measured by the trade-off between prover time and verifier cost. In the context of options, the Off-Chain Computation Verification system must process thousands of delta and gamma calculations per second. The security of the margin engine depends on the soundness of the proof system, ensuring that no participant can submit a false state transition to avoid liquidation or inflate their collateral value.
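The per-position workload mentioned above can be sketched as follows. This is a minimal Python example assuming Black-Scholes dynamics and European calls; the function names and the `(quantity, spot, strike, rate, vol, expiry)` position tuple are hypothetical conventions for this example, not a protocol's actual data model.

```python
import math


def norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_delta_gamma(spot, strike, rate, vol, expiry):
    """Black-Scholes delta and gamma of a European call (no dividends)."""
    sqrt_t = math.sqrt(expiry)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * expiry) / (vol * sqrt_t)
    delta = norm_cdf(d1)
    gamma = norm_pdf(d1) / (spot * vol * sqrt_t)
    return delta, gamma


def portfolio_greeks(positions):
    """Sum quantity-weighted delta and gamma over a list of call positions.

    Each position is a tuple: (quantity, spot, strike, rate, vol, expiry).
    """
    net_delta = 0.0
    net_gamma = 0.0
    for quantity, spot, strike, rate, vol, expiry in positions:
        delta, gamma = call_delta_gamma(spot, strike, rate, vol, expiry)
        net_delta += quantity * delta
        net_gamma += quantity * gamma
    return net_delta, net_gamma
```

A margin engine would run a loop like `portfolio_greeks` across every account on each price update, which is the volume of arithmetic that makes on-chain evaluation impractical.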

As a loose analogy, this abstraction recalls the role of measurement in quantum mechanics: the protocol treats a state as real only once the verifier has checked it.

| Verification Type | Security Model | Verification Latency |
|---|---|---|
| Validity Proofs | Cryptographic Soundness | Low |
| Fraud Proofs | Economic Incentives | High |
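The latency difference in the table follows from when each model treats an update as final. The sketch below is a simplified illustration: the `Update` fields, the function names, and the challenge window of 50,400 blocks (about seven days at 12-second blocks) are all assumptions made for this example.

```python
from dataclasses import dataclass

# Hypothetical challenge window: roughly 7 days of 12-second blocks.
CHALLENGE_WINDOW_BLOCKS = 50_400


@dataclass
class Update:
    posted_at_block: int
    proof_verified: bool = False  # validity-proof model: proof checked on-chain
    fraud_proven: bool = False    # fraud-proof model: a challenge succeeded


def final_under_validity_proof(update: Update) -> bool:
    """Final as soon as the on-chain verifier accepts the proof."""
    return update.proof_verified


def final_under_fraud_proof(update: Update, current_block: int) -> bool:
    """Final only after the challenge window passes with no successful challenge."""
    window_elapsed = current_block - update.posted_at_block >= CHALLENGE_WINDOW_BLOCKS
    return window_elapsed and not update.fraud_proven
```

Under these assumptions, an update posted at block 1,000 with a verified proof is final immediately in the validity model, while the same update in the fraud-proof model is not final until block 51,400.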

Approach

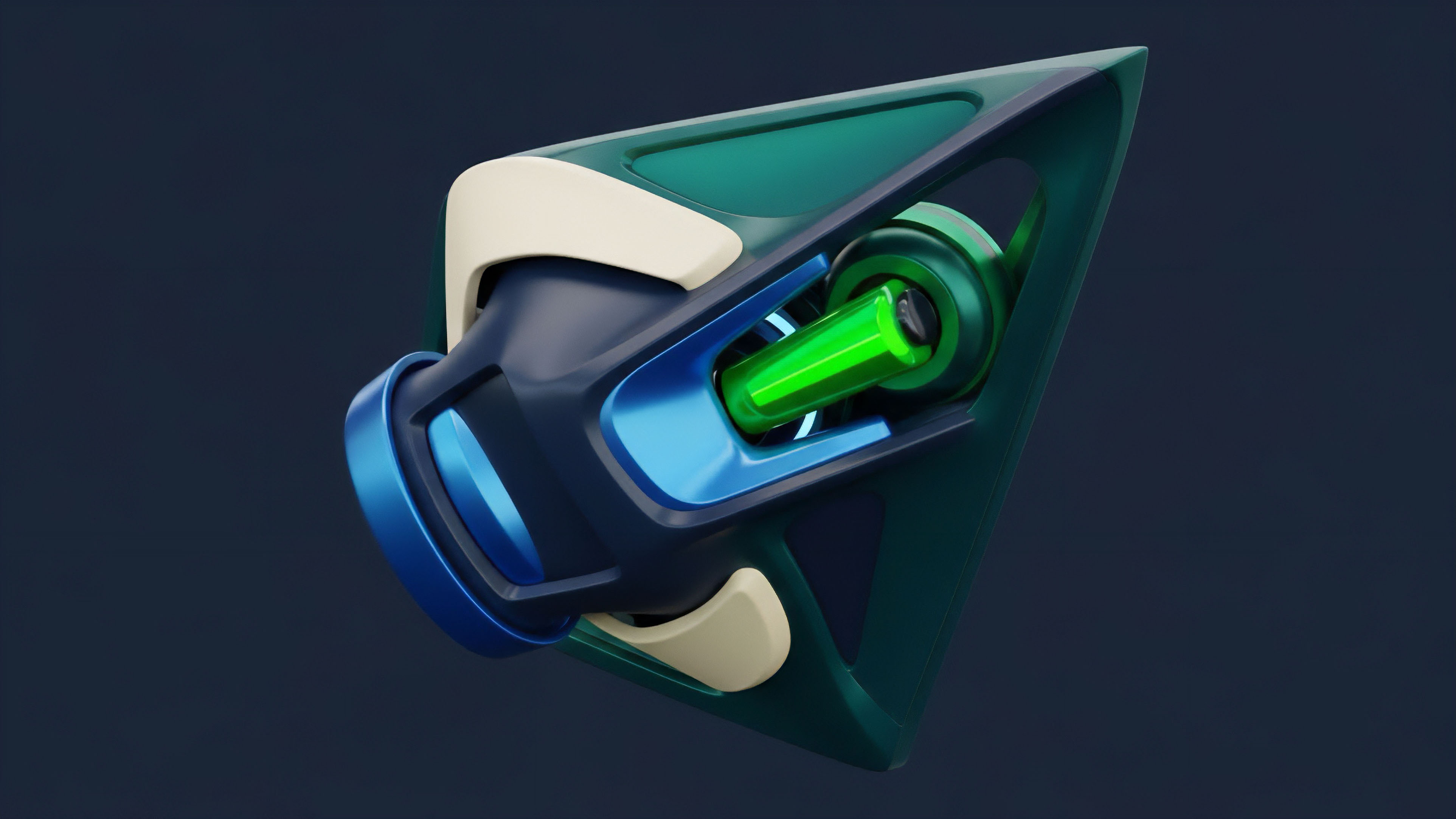

Current implementations of Off-Chain Computation Verification utilize specialized coprocessors and zero-knowledge virtual machines. These systems allow developers to write logic in standard languages like Rust or C++, which are then compiled into a verifiable circuit. This removes the need for manual circuit construction, reducing the risk of bugs in the cryptographic implementation.

Modern verification systems utilize zero-knowledge virtual machines to execute standard programming logic with cryptographic certainty.

Risk management engines now leverage these coprocessors to calculate real-time portfolio health. Instead of simplified on-chain checks, the Off-Chain Computation Verification layer processes the entire volatility surface and cross-marginal requirements. The resulting state update is sent to the smart contract along with a validity proof, ensuring that the ledger only updates if the calculations were performed correctly.
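The settlement rule described above, where the ledger updates only when the accompanying proof checks out, can be sketched as a gate in front of the stored state. Everything in this Python example is hypothetical: `StateUpdate`, `SettlementContract`, and especially `placeholder_verify`, which stands in for a real on-chain verifier and performs no cryptography.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class StateUpdate:
    prev_root: str  # state root the off-chain computation started from
    new_root: str   # state root it claims to have produced
    proof: bytes    # opaque proof bytes from the prover


# Signature of a verifier: (prev_root, new_root, proof) -> accepted?
Verifier = Callable[[str, str, bytes], bool]


class SettlementContract:
    """Toy model of a contract that stores one state root."""

    def __init__(self, genesis_root: str, verify: Verifier):
        self.state_root = genesis_root
        self._verify = verify

    def submit(self, update: StateUpdate) -> bool:
        # Reject updates built on a stale or unknown state.
        if update.prev_root != self.state_root:
            return False
        # Reject updates whose proof does not verify.
        if not self._verify(update.prev_root, update.new_root, update.proof):
            return False
        self.state_root = update.new_root
        return True


def placeholder_verify(prev_root: str, new_root: str, proof: bytes) -> bool:
    """NOT a real verifier: accepts a fixed byte string so the sketch can run."""
    return proof == b"valid"
```

In this model, a submission with a rejected proof or a stale `prev_root` leaves `state_root` unchanged, so the ledger never reflects an unverified computation.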

| Component | Function | Primary Risk |
|---|---|---|
| Prover | Generates the cryptographic proof of computation | Liveness and computational overhead |
| Verifier | Confirms the proof on the blockchain | Gas cost and implementation vulnerabilities |
| Sequencer | Orders transactions before off-chain processing | Centralization and censorship |

Evolution

The shift from reputation-based systems to cryptographic verification marks a turning point in the maturity of digital asset markets. Historically, off-chain logic was handled by centralized oracles or multisig committees. These models introduced significant counterparty risk, as the integrity of the financial system depended on the honesty of a few actors.

The introduction of Off-Chain Computation Verification removes the need to trust these actors for the correctness of a computation, replacing human discretion with mathematical proof. In a highly adversarial environment, the survival of a protocol depends on this shift.

- Manual Governance: Early protocols relied on human intervention and multisig wallets to manage complex parameters.

- Optimistic Execution: Systems assumed honesty but allowed for challenges, introducing a trade-off between security and speed.

- Cryptographic Finality: Current systems provide certainty as soon as a validity proof is verified on-chain, with no challenge window, enabling high-performance trading.

This transition has direct implications for capital efficiency. When the margin engine is verified via Off-Chain Computation Verification, the protocol can safely offer higher leverage and tighter spreads. The reduction in uncertainty allows market makers to commit more liquidity, knowing that the liquidation logic is immutable and verifiable. The industry has moved away from the “move fast and break things” ethos toward a “verify everything” standard.

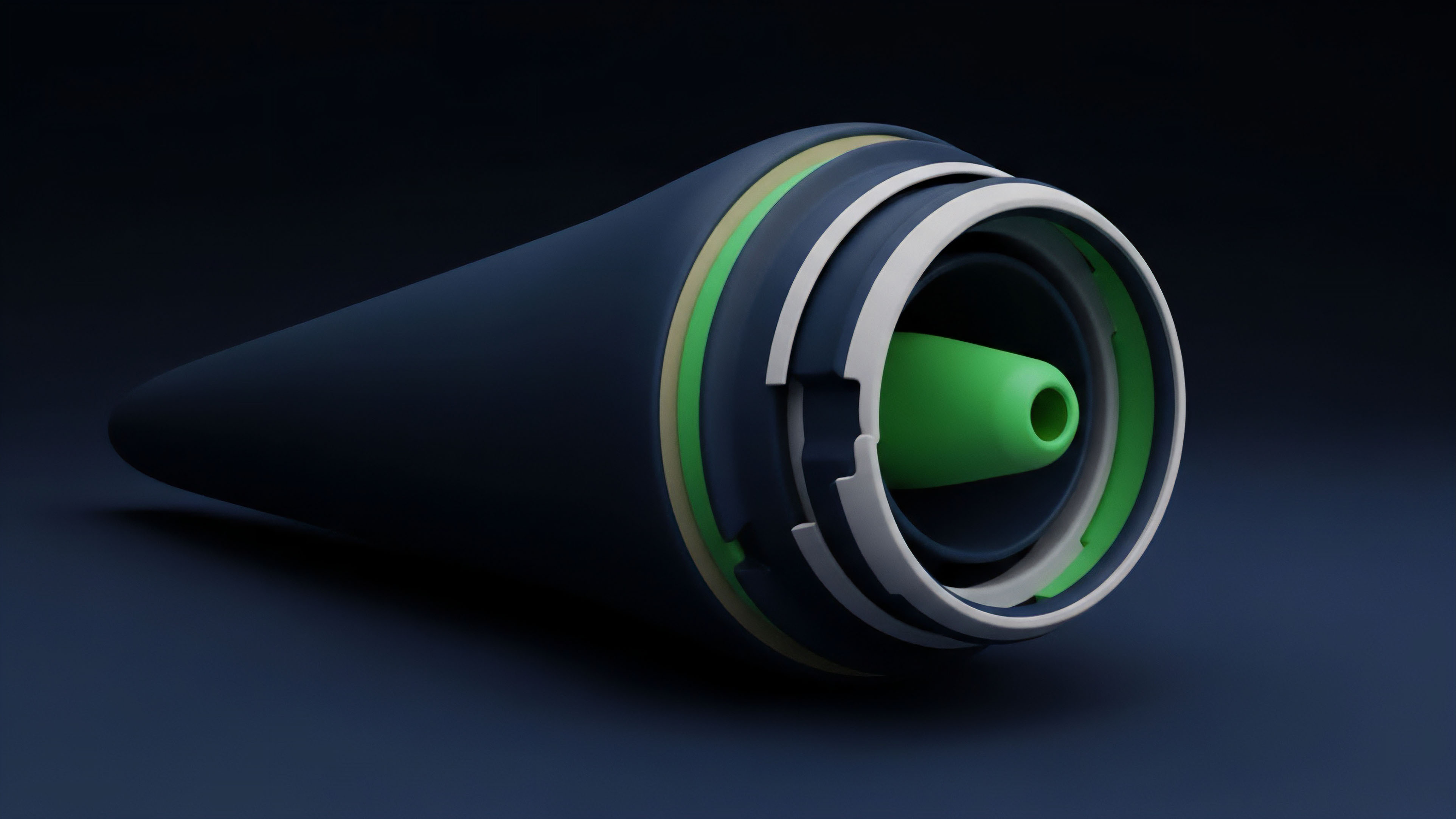

Horizon

The next phase of development involves the integration of recursive proofs and hardware acceleration. Recursive Off-Chain Computation Verification allows a proof to verify other proofs, enabling the compression of an entire day’s worth of trading activity into a single on-chain transaction. This will permit the creation of decentralized options clearinghouses that rival the performance of traditional finance venues while maintaining full transparency.

The convergence of zero-knowledge technology and decentralized physical infrastructure will likely decentralize the prover role. This ensures that the Off-Chain Computation Verification process remains censorship-resistant and highly available. As specialized hardware reduces the cost of proof generation, we will see the rise of hyper-liquid, trustless derivative markets that operate with the speed of centralized exchanges but the security of a global blockchain.

How does the transition to verifiable off-chain logic impact the systemic risk profile of cross-protocol liquidity when the underlying proofs share a common cryptographic library vulnerability?

Glossary

Option Exercise Verification

Synthetic Asset Verification

Verification Cost Optimization

Data Verification Layer

Auditable Risk Computation

Oracle Integrity

Off-Chain Market Dynamics

Off-Chain Computation Nodes

External Data