Essence

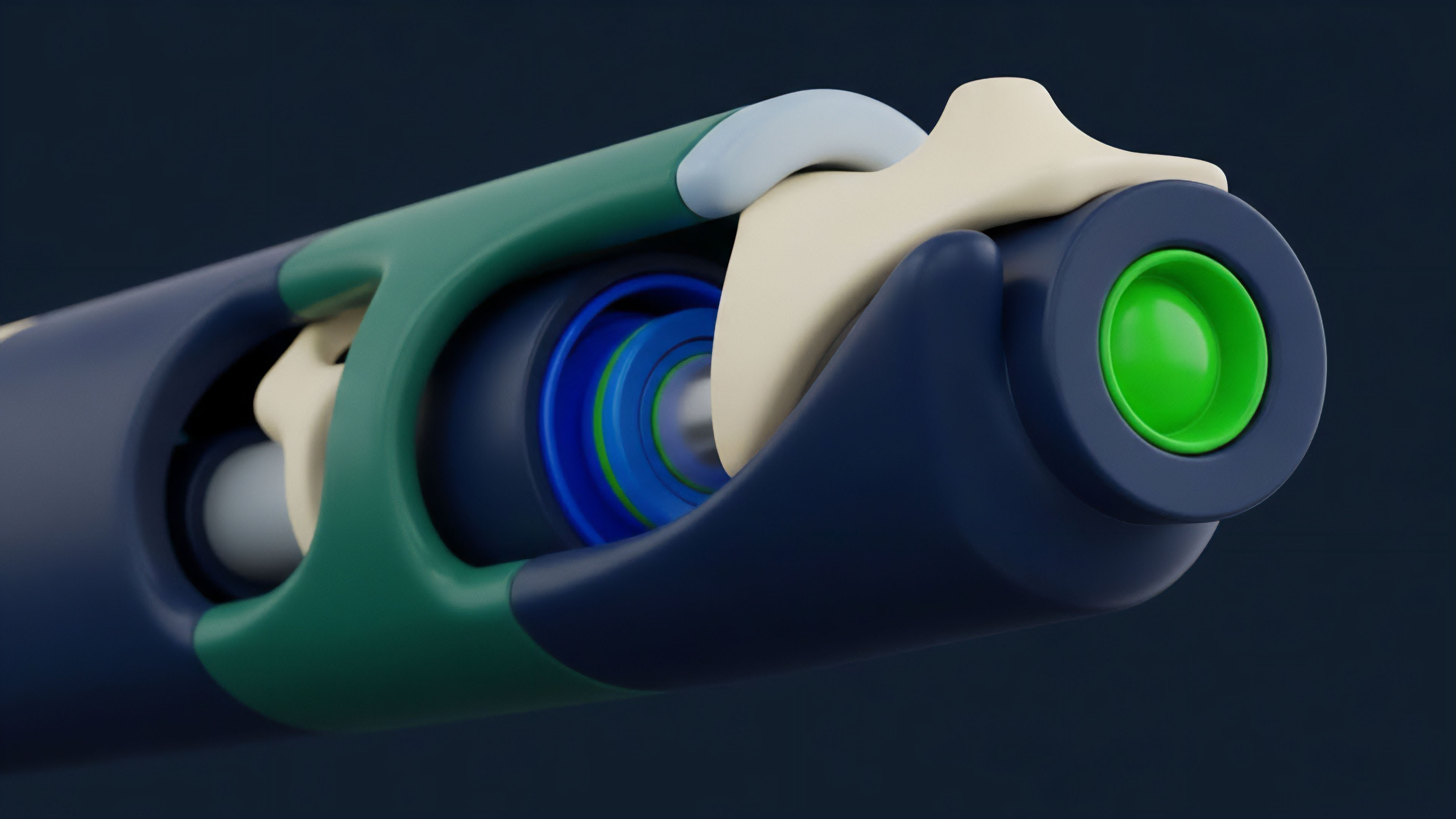

Optimistic assumptions describe a core design philosophy in decentralized systems: state transitions are treated as valid by default, rather than each one requiring a cryptographic proof of validity. This approach, used most prominently in optimistic rollups and other Layer 2 scaling solutions, fundamentally alters the trade-off between speed, cost, and security. The core premise is that a transaction or state change is considered legitimate unless a participant explicitly challenges it within a specified time window.

This challenge mechanism relies on fraud proofs, in which a verifier demonstrates that a proposed state transition was invalid.

The financial significance of this architecture, particularly for derivatives, lies in its impact on settlement finality. By deferring computationally expensive validity checks, optimistic systems cut transaction costs and raise throughput, which supports the high-frequency, low-latency activity that options and perpetual futures markets require. This efficiency comes at the cost of immediate finality: a withdrawal or settlement cannot be considered fully finalized until the challenge period has elapsed. The resulting temporal gap in risk management is one that protocols must actively mitigate, especially for highly leveraged positions where rapid liquidation is necessary to prevent cascading failures.

The assumption of honesty, backed by a robust economic incentive structure where malicious actors lose a security bond, forms the foundation of this scaling model.
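The lifecycle described in this section can be illustrated with a toy model. The following is a minimal sketch, not any rollup's actual contract logic: the names `Commitment`, `challenge`, and `finalize`, the integer "state", and the seven-day `CHALLENGE_WINDOW` are all assumptions made for illustration, and a real fraud proof is typically an interactive protocol rather than a single re-execution.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable

CHALLENGE_WINDOW = 7 * 24 * 3600  # assumed window length: seven days, in seconds


class Status(Enum):
    PENDING = "pending"
    FINAL = "final"
    REJECTED = "rejected"


@dataclass
class Commitment:
    prev_state: int      # toy stand-in for the previous state root
    claimed_state: int   # state the sequencer claims after the transition
    bond: int            # stake the sequencer loses if fraud is proven
    posted_at: int       # timestamp (seconds) when the claim was posted
    status: Status = Status.PENDING


def challenge(c: Commitment, transition: Callable[[int], int], now: int) -> int:
    """Re-execute the transition and return the bond slashed (0 if the challenge fails)."""
    if c.status is not Status.PENDING or now >= c.posted_at + CHALLENGE_WINDOW:
        return 0  # already resolved, or the window has closed
    if transition(c.prev_state) != c.claimed_state:
        c.status = Status.REJECTED
        slashed, c.bond = c.bond, 0
        return slashed  # in this sketch the whole bond goes to the challenger
    return 0  # the claim was correct, so it stays pending


def finalize(c: Commitment, now: int) -> bool:
    """Mark the claim final once the window has elapsed without a successful challenge."""
    if c.status is Status.PENDING and now >= c.posted_at + CHALLENGE_WINDOW:
        c.status = Status.FINAL
    return c.status is Status.FINAL
```

Note what the sketch makes explicit: a fraudulent claim that nobody challenges in time is finalized like any other, which is why the model depends on active verifiers and a bond large enough to deter the attempt.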

Optimistic assumptions create a new risk-reward profile for decentralized finance by prioritizing high throughput and low cost, while deferring settlement finality to a challenge period.

Origin

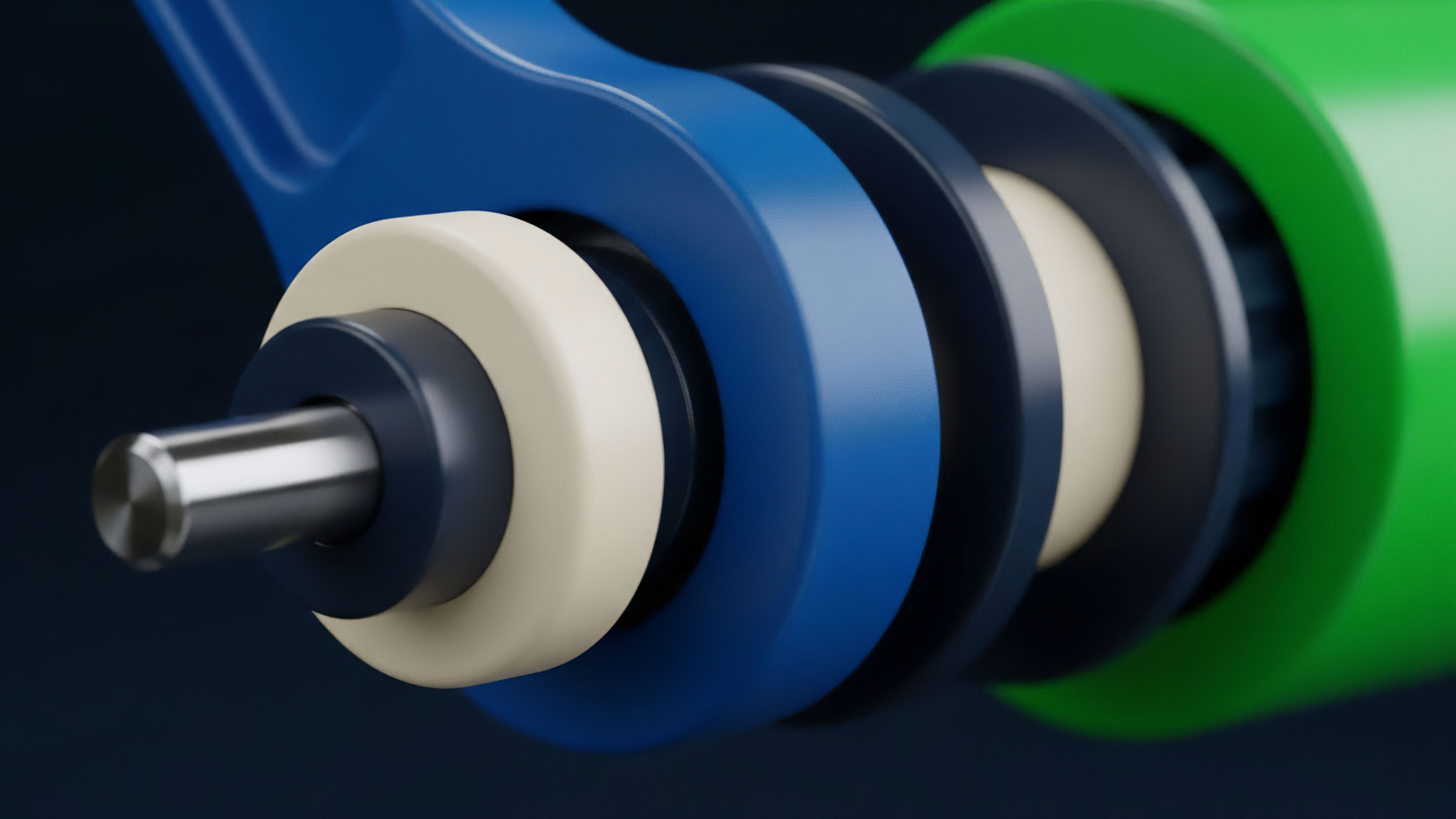

The concept of optimistic execution in decentralized systems traces its roots to early research into off-chain scaling solutions, particularly in response to the scalability limitations of first-generation blockchains. Early attempts at scaling, such as sidechains and Plasma, sought to move computation off the main chain but struggled with complex data availability challenges. Plasma, for example, required users to constantly monitor all transactions to ensure their funds were safe, creating significant practical hurdles for mass adoption.

The Optimistic Rollup architecture emerged as a refinement of these ideas, simplifying the data availability problem by posting all transaction data back to the Layer 1 chain. The core breakthrough was shifting from “validity proofs” (which require every transaction to be cryptographically proven correct before acceptance, as seen in ZK-Rollups) to “fraud proofs” (which assume correctness and only require proof of incorrectness if a dispute arises). This design choice was heavily influenced by game theory, specifically the idea of creating an adversarial environment where honest participants are incentivized to challenge fraud, while dishonest participants face a high cost for attempting an attack.

This mechanism significantly reduces computational overhead for the network as a whole, because the Layer 1 chain only needs to re-execute a transaction when it is actually disputed during the challenge period. The challenge period itself is an economic and technical parameter that directly shapes the risk profile of the system, and it is a crucial input to the design of derivative protocols built on top of this infrastructure.
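The incentive argument can be stated as a simple inequality. As an illustrative assumption (the symbols are not taken from any specific protocol), let $B$ be the sequencer's bond, $G$ the gain from a fraudulent state update that goes unchallenged, and $p$ the probability that some verifier detects and proves the fraud within the challenge period. A rational attacker's expected payoff is then

$$
\mathbb{E}[\text{payoff}] = (1 - p)\,G - p\,B ,
$$

so, for $p > 0$, the attack is unprofitable whenever

$$
p\,B > (1 - p)\,G \quad\Longleftrightarrow\quad B > \frac{1 - p}{p}\,G .
$$

When detection is nearly certain, $p$ approaches 1 and even a modest bond deters fraud; as $p$ falls, the bond required grows sharply.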

Theory

The theoretical underpinnings of optimistic assumptions are rooted in a combination of computer science principles and behavioral game theory.

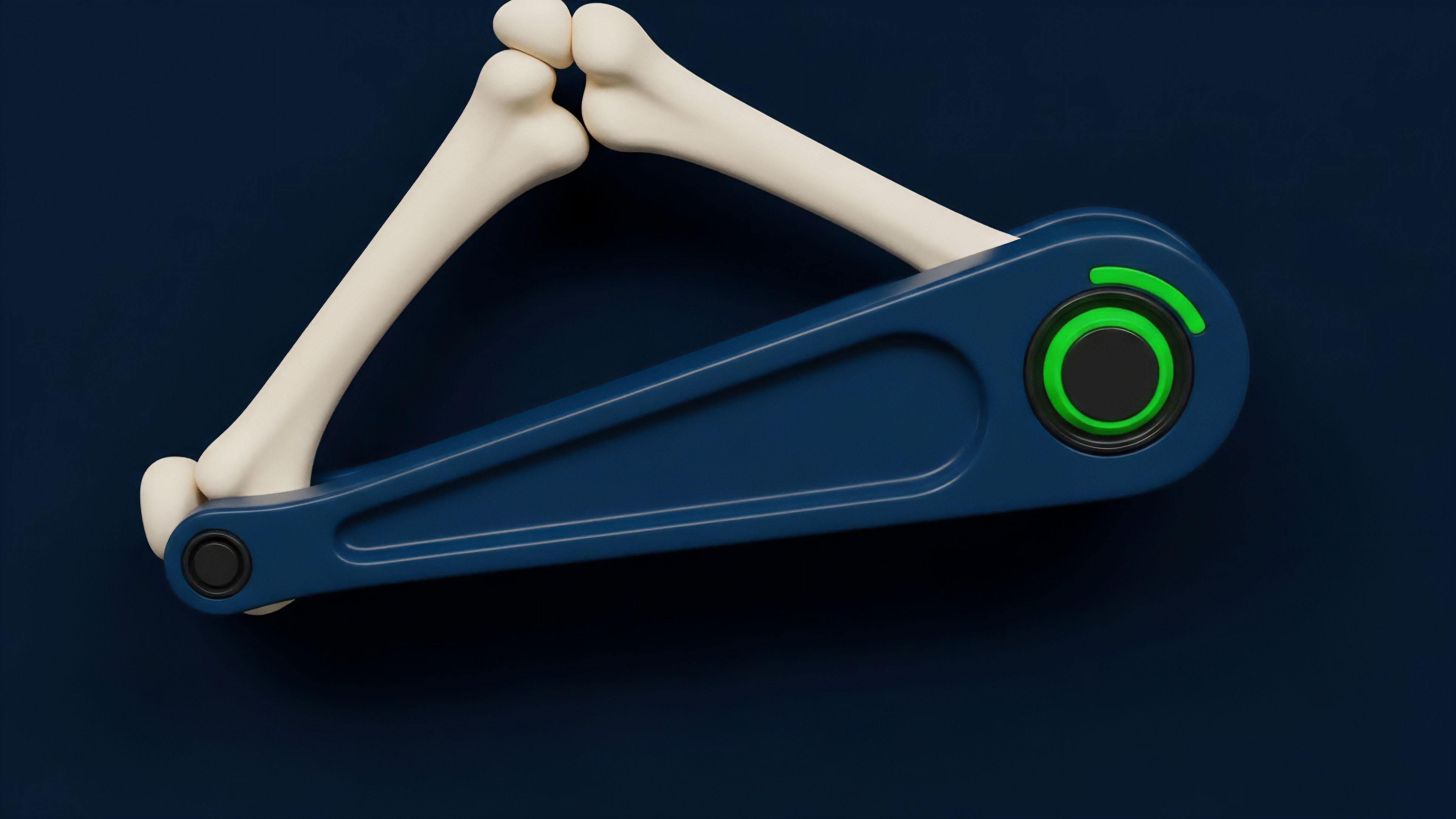

The system’s integrity relies on a security bond and on the economic incentives of a “dispute game” between the sequencer and a network of verifiers. When a sequencer proposes a state update, it stakes a bond. If an honest verifier detects fraud, it submits a fraud proof and initiates the dispute process. A successful challenger is rewarded, while the malicious sequencer loses its bond. The entire system operates under the assumption that at least one honest verifier exists and is actively monitoring the chain.

From a quantitative finance perspective, the challenge period introduces a specific type of settlement risk. A derivative contract’s value depends heavily on the certainty and timing of cash flows. In an optimistic system, the final settlement of a derivative position, particularly one involving a withdrawal from the L2 to the L1, is subject to the duration of this challenge period. This temporal uncertainty affects pricing models and risk management strategies.

For example, a market maker on an optimistic L2 must account for the fact that capital locked in a derivative position cannot be immediately moved to another chain or L1, creating an opportunity cost and increasing liquidity risk. The duration of the challenge period is a key variable in determining the required margin for positions and the liquidation thresholds.

Risk Implications for Derivatives

- Liquidation Latency: The challenge period creates a time delay between when a position falls below the margin threshold and when the liquidation can be fully executed and settled on L1. This can lead to under-collateralization and potential bad debt for the protocol if the underlying asset’s price moves significantly during the challenge window.

- Bridging Risk: Capital withdrawn from the optimistic L2 to the L1 through the standard bridge is subject to the challenge period. Capital efficiency is therefore reduced, as funds are locked for several days during a standard withdrawal, limiting market makers’ ability to quickly rebalance portfolios across different chains.

- Adversarial Behavior: The system assumes rational actors. However, in times of high market volatility, a malicious actor could attempt to exploit the challenge period by submitting fraudulent transactions, forcing verifiers to spend resources on disputes and potentially creating a temporary denial-of-service condition for settlement.
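The liquidation latency risk in the list above can be made concrete with a small calculation. This is a simplified sketch under stated assumptions: a long position, a single adverse price move over the delay, and no fees or funding. The function name `bad_debt` and its parameters are hypothetical.

```python
def bad_debt(notional: float, collateral: float, adverse_move: float) -> float:
    """Loss the protocol absorbs if the price drops before a long position is closed out.

    adverse_move is the fractional price drop during the delay (0.15 means 15%).
    The trader's collateral absorbs losses first; anything beyond it is bad debt.
    """
    loss = notional * adverse_move
    return max(0.0, loss - collateral)


# A 10x long: 100,000 notional backed by 10,000 of collateral.
small_move = bad_debt(100_000, 10_000, 0.05)  # loss stays within the collateral
large_move = bad_debt(100_000, 10_000, 0.15)  # loss exceeds the collateral
```

With these inputs, the 5% move leaves no shortfall, while the 15% move leaves the protocol with a shortfall of roughly half the original collateral. The longer the delay, the more likely a move of the second kind becomes.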

Approach

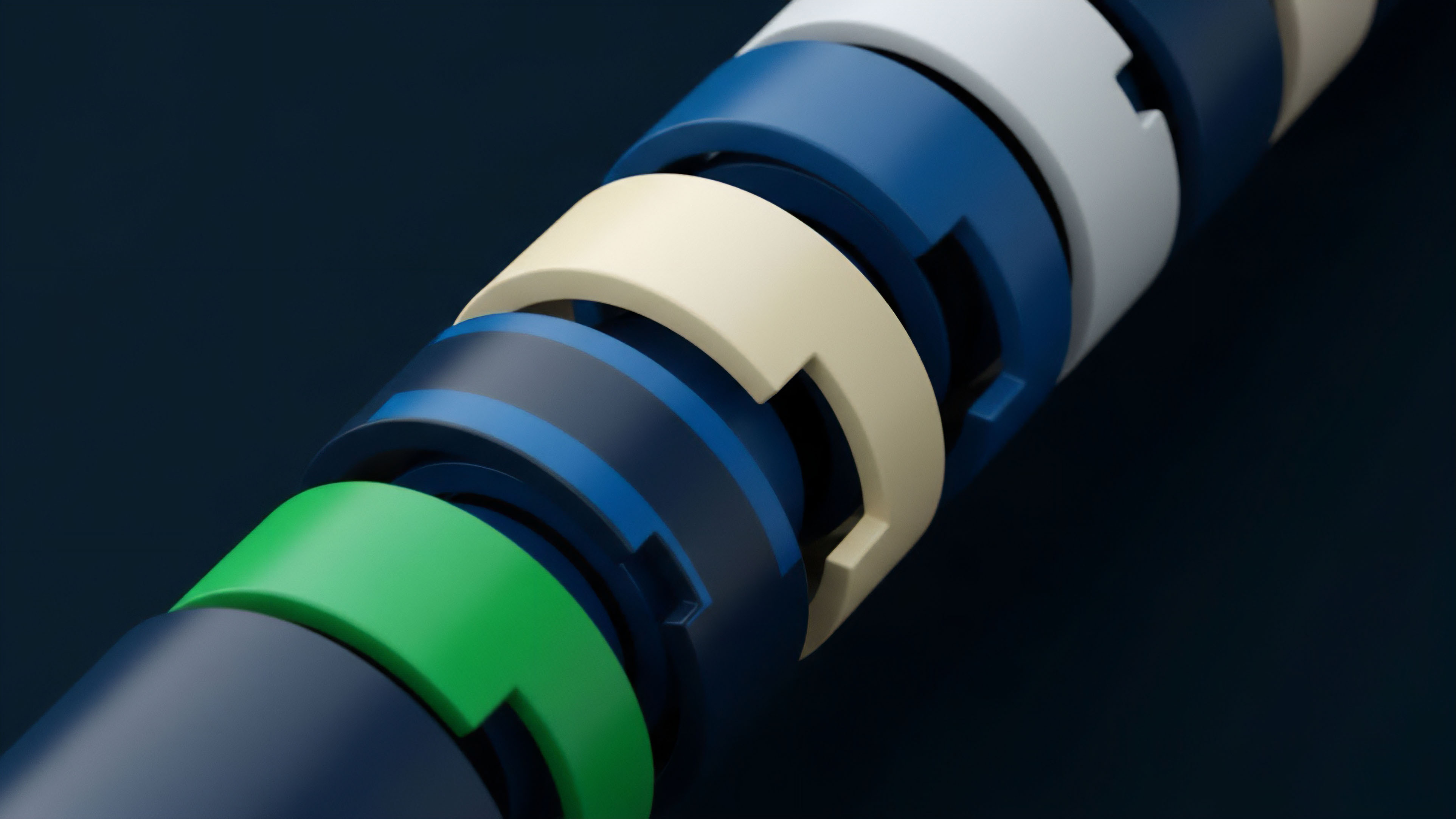

In practice, derivative protocols built on optimistic L2s adopt specific strategies to mitigate the risks inherent in optimistic assumptions. The primary mitigation technique involves adjusting risk parameters to account for the challenge period’s latency. Protocols often set higher initial and maintenance margin requirements on L2s than on L1s, effectively over-collateralizing positions to absorb potential price movements during the settlement delay.

Another critical approach involves the use of “fast withdrawals” or “liquidity providers” that bridge the time gap. A liquidity provider (LP) offers immediate L1 liquidity to users wishing to exit the L2. The user pays a small fee, and the LP takes on the risk of waiting out the challenge period to reclaim their capital.

This creates a secondary market for finality, allowing market makers and traders to effectively bypass the optimistic assumption’s latency for a price.
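How a liquidity provider might price this service can be sketched as follows. The model is an assumption for illustration, not a description of any particular bridge: the fee is the cost of funding the advanced capital for the challenge period plus a flat risk premium. The function `fast_withdrawal_quote` and all of its parameters are hypothetical.

```python
def fast_withdrawal_quote(
    amount: float,
    annual_funding_rate: float,
    challenge_days: float,
    risk_premium_bps: float,
) -> tuple[float, float]:
    """Return (fee, payout) for an LP that advances `amount` on L1 immediately.

    carry:   cost of having capital tied up until the challenge period ends
    premium: flat charge, in basis points, for the risk the LP takes on
    """
    carry = amount * annual_funding_rate * challenge_days / 365
    premium = amount * risk_premium_bps / 10_000
    fee = carry + premium
    return fee, amount - fee


# Assumed inputs: 100,000 withdrawn, 3.65% annual funding cost,
# a seven-day challenge period, and a 5 bps risk premium.
fee, payout = fast_withdrawal_quote(100_000, 0.0365, 7, 5)
```

Under these assumed inputs the carry is about 70 and the premium 50, so the user receives roughly 99,880 immediately instead of the full amount after the challenge period. The quote scales linearly with the length of the challenge period, which is one way the "price of finality" becomes visible.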

Risk Management Strategies for L2 Derivatives

- Margin Requirement Adjustments: Protocols increase margin requirements based on the L2’s challenge period duration. A longer challenge period necessitates higher collateral to protect against price volatility during the delay.

- Fast Withdrawal Services: Market makers provide fast withdrawal services by offering immediate liquidity to users exiting the L2. This allows for rapid capital reallocation but introduces a new counterparty risk between the user and the liquidity provider.

- Oracle Design: Optimistic L2s must rely on robust oracle designs to feed price data to derivative contracts. The oracle itself must be designed to account for the challenge period, ensuring that price feeds are consistent and resistant to manipulation during the dispute window.
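The margin adjustment in the first item above can be sketched with the square-root-of-time rule. This is one common simplification, assumed here for illustration rather than taken from any protocol: returns are treated as independent and normally distributed, so volatility over a delay of `delay_days` scales with the square root of that delay. The function `margin_ratio` and the default `z_score` of 2.33 (roughly a one-sided 99% level) are hypothetical choices.

```python
import math


def margin_ratio(daily_vol: float, delay_days: float, z_score: float = 2.33) -> float:
    """Collateral, as a fraction of notional, covering a z-score adverse move over the delay.

    Assumes i.i.d. normal returns, so volatility over the delay is
    daily_vol * sqrt(delay_days).
    """
    return z_score * daily_vol * math.sqrt(delay_days)


# Assumed 4% daily volatility: compare a one-day delay with a seven-day delay.
one_day = margin_ratio(0.04, 1)
seven_days = margin_ratio(0.04, 7)
```

Under this rule the required margin grows with the square root of the delay rather than linearly, so a seven-day window calls for roughly 2.6 times the one-day margin, not seven times. Real price processes have fat tails, so a production risk engine would likely need a more conservative model.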

| Parameter | L1 Settlement | Optimistic L2 Settlement |
|---|---|---|
| Finality Speed | Immediate (after block confirmation) | Delayed (after challenge period) |
| Capital Efficiency | High (minimal latency risk) | Lower (capital locked during challenge period) |
| Risk Mitigation | Block validation | Fraud proofs and economic bonds |

Evolution

The evolution of optimistic assumptions has centered on addressing the practical challenges introduced by the initial design. The most significant challenge has been the centralization of the sequencer role. The sequencer is responsible for ordering transactions and proposing state updates to the L1.

In most current optimistic rollups, this sequencer is a single entity. While this provides high efficiency, it introduces a single point of failure and potential for censorship. A malicious sequencer could withhold transactions, censor specific users, or engage in front-running to extract value.

To counteract this, the focus has shifted to decentralizing the sequencer role. Pre-confirmations, meanwhile, let the sequencer give users an early commitment that their transaction will be included in the next block, reducing latency risk for derivatives traders before the transaction is even posted to L1. The ultimate goal, however, is a shared sequencing layer where multiple sequencers compete to process transactions, eliminating single points of failure and creating a more robust, censorship-resistant environment.

This shared sequencing model also promises to solve liquidity fragmentation across different L2s, as capital can be moved between protocols on different rollups without needing to return to L1 first.

The transition from centralized sequencers to shared sequencing layers is critical for enhancing the security and liquidity of derivatives protocols operating under optimistic assumptions.

Horizon

Looking ahead, the optimistic assumption model is converging with other scaling solutions to create hybrid architectures. The primary competition comes from ZK-Rollups, which use validity proofs to reach finality as soon as a proof is verified on L1, eliminating the need for a challenge period. While ZK-Rollups have historically faced greater computational complexity for general-purpose smart contracts, recent advances are closing this gap.

The future landscape for derivatives protocols will likely feature a segmentation of products based on risk tolerance and finality requirements. High-frequency trading and high-leverage perpetual futures may migrate toward ZK-Rollups due to their superior finality. Conversely, more complex options and structured products may continue to operate on optimistic L2s where the economic trade-offs are acceptable.

The long-term trajectory involves a modular blockchain design where different layers specialize in specific functions. The optimistic assumption will likely persist as a core mechanism for certain types of high-throughput applications, but its implementation will become more sophisticated, integrating with decentralized sequencers and shared liquidity layers to minimize the risks associated with the challenge period. The challenge for systems architects is to design protocols that can seamlessly bridge these different finality models, allowing for capital to flow freely while respecting the inherent security assumptions of each layer.

| Feature | Optimistic Rollup | ZK-Rollup |
|---|---|---|
| Finality Mechanism | Fraud Proofs (Assumed valid) | Validity Proofs (Proven valid) |
| Withdrawal Delay | Challenge period (Days) | Near-instant (Minutes) |
| Computational Cost | Lower for execution, higher for disputes | Higher for proof generation, lower for verification |

Glossary

Optimistic Oracle Design

Optimistic Rollup

Trust Assumptions in Bridging

Optimistic Security Assumptions

Cryptographic Assumptions

Optimistic Rollup Costs

Cryptographic Assumptions Analysis

Optimistic Finality Window

Optimistic Bridge Costs