Nature of Optimistic Systems

The Optimistic Verification Model is a trust-minimized architecture in which state transitions are accepted by default. This design reverses the traditional consensus requirement for immediate, synchronous validation of every transaction. By deferring verification to a designated challenge window, the system achieves significant throughput gains, particularly for high-frequency financial instruments like crypto options. The underlying assumption is that submitted state is usually valid and that at least one honest participant is watching for fraud, while the security of the network is maintained by the threat of economic penalties.
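The default-accept rule can be illustrated with a minimal sketch. The `Commitment` type, the `invalidated` flag, and the 168-hour constant are illustrative assumptions rather than any specific protocol's API; the point is only that a state root becomes final when the window elapses without a successful fraud proof.

```python
from dataclasses import dataclass

# Assumed window length: 7 days, expressed in seconds.
CHALLENGE_WINDOW_SECONDS = 7 * 24 * 60 * 60


@dataclass
class Commitment:
    """Hypothetical record of a state root posted to the base layer."""
    state_root: str
    submitted_at: int          # Unix timestamp of submission
    invalidated: bool = False  # set when a fraud proof succeeds


def is_final(commitment: Commitment, now: int) -> bool:
    """Accepted by default: final once the window passes with no successful fraud proof."""
    if commitment.invalidated:
        return False
    return now - commitment.submitted_at >= CHALLENGE_WINDOW_SECONDS


pending = Commitment(state_root="0xabc", submitted_at=0)
print(is_final(pending, now=3600))                      # still inside the window
print(is_final(pending, now=CHALLENGE_WINDOW_SECONDS))  # window has elapsed
```

Nothing is verified on submission in this sketch; all of the work is deferred to whoever chooses to challenge.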

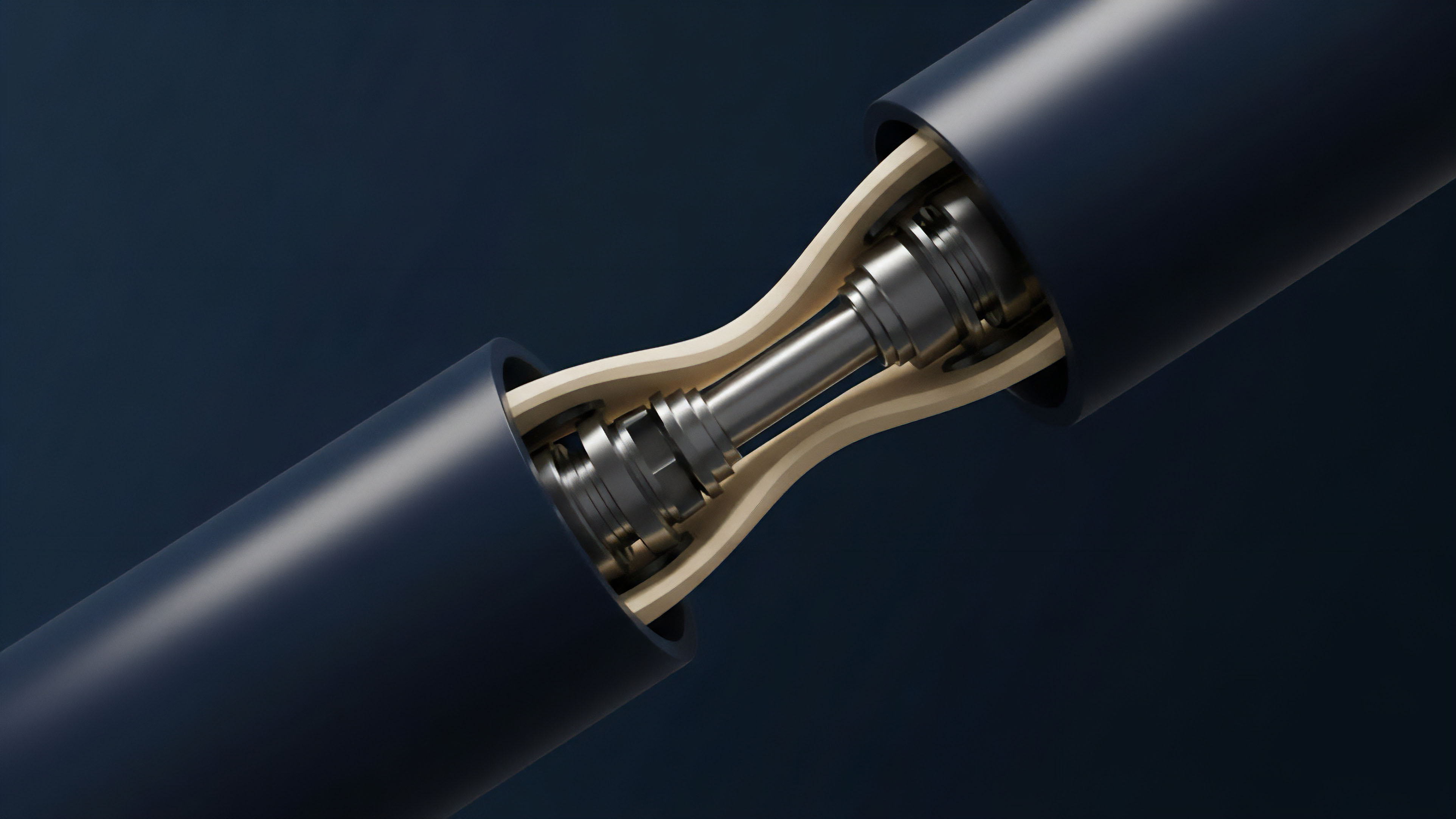

The Optimistic Verification Model uses a presumption of validity to minimize on-chain computation costs while relying on a security bond for dispute resolution.

Within the context of decentralized derivatives, the Optimistic Verification Model enables complex margin engines and order matching systems to operate with low latency. This is achieved by moving the heavy lifting of computation off-chain while anchoring the finality to a secure base layer. The system relies on the availability of data to ensure that any observer can reconstruct the state and challenge a fraudulent submission. This data availability is the lifeline of the model, as without it, the ability to generate a fraud proof is compromised.

Historical Context of Scalability

The development of the Optimistic Verification Model stems from the limitations of early Layer 2 research and the prohibitive costs of on-chain computation. Research into Plasma identified that verifying every step of a computation on the main layer was unscalable for global finance. Developers sought a middle ground that offered the security of a base layer with the performance of a centralized exchange. This led to the formalization of rollups, where transaction data is bundled and posted to the parent chain.

The transition from simple payment channels to generalized computation required a more robust dispute mechanism. The Optimistic Verification Model emerged as the solution to this requirement, providing a way to execute arbitrary smart contract code off-chain. This shift was driven by the need for capital efficiency in decentralized finance, where traders require instant feedback and high liquidity without the friction of high gas fees. The architecture was refined through multiple iterations of interactive dispute games, which reduced the amount of data needed to prove fraud on-chain.

The challenge period creates a delayed, economically backed finality, which holds as long as the cost of attacking the system exceeds the potential gains from fraudulent state updates.

Game Theory and Incentives

The structural integrity of the Optimistic Verification Model relies on a Nash Equilibrium established between sequencers and challengers. The sequencer commits a state root to the L1, while a bond acts as collateral against malicious behavior. If a challenger identifies an invalid state transition, they initiate a dispute. The resolution process involves a bisection game, where the specific instruction causing the discrepancy is isolated and executed on the L1 to determine the truth.
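The bisection step can be sketched as a binary search over two execution traces. This is a toy model under stated assumptions: states are plain integers, both parties publish a full list of intermediate states, and `step` stands in for the single instruction that the L1 would re-execute. The function names are hypothetical.

```python
from typing import Callable, List


def find_disputed_step(claimed: List[int], observed: List[int]) -> int:
    """Return index i where the traces agree at i - 1 but disagree at i."""
    if len(claimed) != len(observed) or len(claimed) < 2:
        raise ValueError("traces must have equal length of at least 2")
    if claimed[0] != observed[0] or claimed[-1] == observed[-1]:
        raise ValueError("traces must agree at the start and disagree at the end")
    lo, hi = 0, len(claimed) - 1  # invariant: agree at lo, disagree at hi
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if claimed[mid] == observed[mid]:
            lo = mid
        else:
            hi = mid
    return hi


def sequencer_is_fraudulent(claimed: List[int], step: Callable[[int], int], i: int) -> bool:
    """Re-execute only the disputed step, as the base layer would."""
    return step(claimed[i - 1]) != claimed[i]


def increment(state: int) -> int:
    """Toy state transition used for the example."""
    return state + 1


challenger_trace = [0, 1, 2, 3, 4, 5, 6, 7, 8]
sequencer_trace = [0, 1, 2, 3, 4, 6, 7, 8, 9]  # diverges at step 5

disputed = find_disputed_step(sequencer_trace, challenger_trace)
print(disputed)                                                       # 5
print(sequencer_is_fraudulent(sequencer_trace, increment, disputed))  # True
```

The search needs a number of rounds logarithmic in the trace length, and only one step is ever executed on-chain. That is why interactive games reduce the data required to prove fraud.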

Systemic Roles

- Sequencer: Aggregates trades and submits state roots to the parent chain.

- Challenger: Monitors the chain for invalid state transitions and submits fraud proofs.

- Validator: Ensures that the data posted by the sequencer is available and correct.

This model rests on a trust assumption known as the 1-of-N security model. As long as at least one honest challenger is monitoring the network and can get a fraud proof included within the challenge window, the Optimistic Verification Model remains secure. This differs from traditional consensus models that require a majority of honest participants. The economic incentives are structured such that the challenger is rewarded from the sequencer’s slashed bond, creating a self-sustaining security loop.
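The incentive loop can be made concrete with some back-of-the-envelope arithmetic. The sketch below is illustrative only: the reward fraction, the dispute cost, and the detection probability are hypothetical parameters, not values taken from any deployed protocol.

```python
def challenger_profit(bond: float, reward_fraction: float, dispute_cost: float) -> float:
    """Net gain for a challenger who wins a dispute and receives part of the slashed bond."""
    return bond * reward_fraction - dispute_cost


def attack_expected_value(fraud_gain: float, bond: float, detection_probability: float) -> float:
    """Expected value of submitting a fraudulent state root.

    With probability p the fraud is caught and the bond is slashed;
    otherwise the attacker keeps the gain.
    """
    p = detection_probability
    return (1.0 - p) * fraud_gain - p * bond


# Under the 1-of-N assumption an honest challenger always catches fraud (p = 1),
# so the attacker's expected value is simply the loss of the bond.
print(attack_expected_value(fraud_gain=1000.0, bond=100.0, detection_probability=1.0))
print(challenger_profit(bond=100.0, reward_fraction=0.5, dispute_cost=2.0))
```

If detection were unreliable, for example `detection_probability=0.5` in the first call, the expected value would turn positive. The bond alone does not secure the system without a watching challenger.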

Comparison of Verification Architectures

| Feature | Optimistic Verification | Zero Knowledge Verification |
|---|---|---|
| Validation Method | Fraud Proofs | Validity Proofs |
| Finality Time | 7 Days | Minutes |
| Computational Cost | Low | High |
| Data Efficiency | Moderate | High |

Implementation in Derivative Markets

Options protocols use the Optimistic Verification Model to handle elaborate margin calculations off-chain. In a decentralized options exchange, the risk engine must constantly evaluate the collateralization levels of every position. Performing these calculations on a base layer would be economically infeasible. By using an optimistic approach, the protocol can update prices and liquidations in real time, settling the final state to the L1 only periodically.
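As a rough illustration of the kind of check a risk engine repeats off-chain, the sketch below flags positions whose collateral falls under a maintenance requirement. The `Position` fields and the flat 10% maintenance rate are assumptions made for the example; real options margin models are considerably more involved.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical maintenance requirement: 10% of notional, in basis points.
MAINTENANCE_RATE_BPS = 1_000


@dataclass
class Position:
    trader: str
    collateral: int  # smallest currency unit, to avoid float rounding
    notional: int


def under_margined(positions: List[Position], rate_bps: int = MAINTENANCE_RATE_BPS) -> List[str]:
    """Return traders whose collateral is below the maintenance requirement."""
    return [
        p.trader
        for p in positions
        # Cross-multiplied form of: collateral < notional * rate_bps / 10_000
        if p.collateral * 10_000 < p.notional * rate_bps
    ]


book = [
    Position("alice", collateral=50, notional=1_000),   # needs 100, has 50
    Position("bob", collateral=150, notional=1_000),    # needs 100, has 150
]
print(under_margined(book))  # ['alice']
```

Running this loop on every price tick is cheap off-chain. Only the resulting state commitment needs to reach the L1.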

Scalability in decentralized derivatives depends on the ability to compress transaction data and defer validation without compromising the censorship resistance of the underlying ledger.

Meeting the liquidity requirements of crypto options requires a system that supports high-frequency updates. The Optimistic Verification Model facilitates this by allowing for rapid state transitions that are only finalized after the challenge period. This introduces a trade-off between execution speed and withdrawal latency. Traders can open and close positions instantly, but moving funds back to the base layer requires waiting for the dispute window to close. This delay is often mitigated by liquidity providers who offer fast exits in exchange for a small fee.
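The fast-exit trade can be sketched in a few lines. The fee is quoted in basis points here purely as an assumption; the function name and fee level are hypothetical.

```python
from typing import Tuple


def fast_exit(amount: int, fee_bps: int) -> Tuple[int, int]:
    """Split a withdrawal into (paid to trader now, fee kept by the liquidity provider).

    The provider fronts funds on the base layer immediately and is repaid
    when the withdrawal clears the challenge window.
    """
    if amount < 0 or not 0 <= fee_bps <= 10_000:
        raise ValueError("amount must be non-negative and fee_bps within 0..10000")
    fee = amount * fee_bps // 10_000
    return amount - fee, fee


received, fee = fast_exit(amount=1_000_000, fee_bps=30)
print(received, fee)  # 997000 3000
```

The fee is effectively the price of the provider's capital being locked for the length of the dispute window.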

Operational Parameters

| Parameter | Standard Value | Impact on Options |
|---|---|---|
| Challenge Window | 168 Hours | Withdrawal Latency |
| Sequencer Bond | Variable ETH | Economic Security |
| Gas Limit | High | Order Book Depth |

Technical Transitions and Upgrades

Initial implementations of the Optimistic Verification Model required specialized virtual machines, creating friction for developers. The transition toward EVM equivalence represents a significant leap in protocol maturity. This shift allows developers to use the same tools and languages they use on the base layer, accelerating the migration of derivative protocols to Layer 2. The removal of custom transpilers has reduced the surface area for bugs and improved the reliability of fraud proofs.

The move from early designs such as OVM 1.0 to later stacks like Optimism's Bedrock or Arbitrum's Nitro has optimized the way data is stored and processed. These upgrades have focused on reducing the footprint of transaction data on the L1, which is the primary cost driver for rollups. By utilizing more efficient compression algorithms and batching techniques, the Optimistic Verification Model has become more competitive with centralized alternatives. The introduction of multi-client support has also increased the resilience of the network against software vulnerabilities.
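The effect of batching and compression on the L1 footprint can be shown with a small experiment. This sketch uses JSON encoding and `zlib` from the Python standard library as stand-ins; production rollups use their own binary encodings and compression schemes, and the transaction fields below are invented for the example.

```python
import json
import zlib
from typing import Dict, List


def encode_batch(txs: List[Dict]) -> bytes:
    """Serialize a batch deterministically (compact separators, sorted keys)."""
    return json.dumps(txs, separators=(",", ":"), sort_keys=True).encode("utf-8")


def compress_batch(txs: List[Dict]) -> bytes:
    """Compress the whole batch at once so repeated fields are shared."""
    return zlib.compress(encode_batch(txs), level=9)


def decompress_batch(blob: bytes) -> List[Dict]:
    """Recover the original transactions from posted data."""
    return json.loads(zlib.decompress(blob).decode("utf-8"))


# Hypothetical options orders that share most of their structure.
batch = [
    {"trader": f"0x{i:040x}", "side": "buy", "strike": 2000, "size": 1}
    for i in range(200)
]
raw = encode_batch(batch)
blob = compress_batch(batch)
print(len(raw), len(blob))
assert decompress_batch(blob) == batch
```

Compressing the batch as a unit matters because similar transactions share structure that a per-transaction encoding cannot exploit.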

Future State of Verification

The trajectory of the Optimistic Verification Model points toward hybrid verification systems. These systems aim to combine the speed of optimistic execution with the instant finality of zero-knowledge proofs. In such a setup, the system operates optimistically by default, but provides a validity proof upon request or for specific high-value transactions. This would eliminate the challenge period for many users while maintaining the low computational overhead of the optimistic model.

Shared sequencing and atomic composability are the next frontiers for the Optimistic Verification Model. By coordinating sequencers across different rollups, the ecosystem can achieve synchronous interactions between disparate protocols. This is vital for the crypto options market, where traders often need to hedge positions across multiple venues. The integration of decentralized sequencer sets will also address concerns regarding the centralization of transaction ordering, further aligning the model with the principles of decentralization.

Glossary

Verification Cost Optimization

Cost ⎊ Verification Cost Optimization, within the context of cryptocurrency derivatives, options trading, and financial derivatives, fundamentally addresses the minimization of expenses associated with validating transaction integrity and order execution.

Optimistic Attestation

Context ⎊ Optimistic attestation, within cryptocurrency, options trading, and financial derivatives, signifies a proactive validation process predicated on the assumption of eventual success or fulfillment of a contractual obligation.

Optimistic Rollup Comparison

Evaluation ⎊ Optimistic Rollup Comparison involves assessing the performance characteristics of optimistic scaling solutions against alternatives like ZK-Rollups or sidechains, focusing on trade-offs in latency, security assumptions, and capital efficiency.

Public Verification Layer

Layer ⎊ This designates the specific segment of a multi-tiered system, typically the base blockchain or a dedicated verification chain, responsible for the final, immutable confirmation of off-chain events or cryptographic proofs.

Optimistic Fraud Proof Window

Algorithm ⎊ An Optimistic Fraud Proof Window represents a defined period following a state root submission on an Optimistic Rollup, during which challenges to that root can be submitted.

Merkle Tree Root Verification

Verification ⎊ The cryptographic process of confirming that a specific set of data, representing transactions or contract states, correctly aggregates up to a single, published root hash within a Merkle tree structure.
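A minimal sketch of this check follows, assuming SHA-256 and a simple binary tree that duplicates the last node on odd-sized levels. Both choices are assumptions for illustration; deployed chains use their own hash functions and tree layouts.

```python
import hashlib
from typing import List, Tuple


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _next_level(level: List[bytes]) -> List[bytes]:
    """Hash adjacent pairs; the caller guarantees an even number of nodes."""
    return [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: List[bytes]) -> bytes:
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate last node on odd levels
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: List[bytes], index: int) -> List[Tuple[bytes, bool]]:
    """Return (sibling_hash, sibling_is_left) pairs from leaf to root."""
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return proof


def verify(leaf: bytes, proof: List[Tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the path to the root and compare with the published hash."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


txs = [b"tx-a", b"tx-b", b"tx-c"]
root = merkle_root(txs)
print(verify(b"tx-c", merkle_proof(txs, 2), root))    # True
print(verify(b"tx-bad", merkle_proof(txs, 2), root))  # False
```

The verifier only needs the leaf, the root, and one sibling hash per tree level, so the proof size grows logarithmically with the number of leaves.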

Microkernel Verification

Algorithm ⎊ Microkernel verification, within complex financial systems, represents a rigorous process of formally proving the correctness of a microkernel’s implementation (the foundational core of an operating system) upon which critical financial applications, including those handling cryptocurrency transactions and derivatives pricing, are built.

Signature Verification

Process ⎊ Signature verification is the cryptographic process of validating a digital signature to confirm that a transaction or message originated from the claimed sender.

Optimistic Oracle Design

Design ⎊ Optimistic oracle design operates on the principle that data submitted to the oracle is assumed to be correct unless challenged by a network participant within a specified dispute period.

Clearinghouse Verification

Verification ⎊ In the context of cryptocurrency derivatives, options trading, and financial derivatives, Clearinghouse Verification represents a multi-layered process ensuring the authenticity and integrity of participant identities and transaction data submitted to a central clearing counterparty.