Essence

The integrity of a derivative contract hinges entirely on the veracity of its underlying data inputs. For decentralized options protocols, this necessity translates into the complex challenge of on-chain data verification, a mechanism that ensures the pricing, settlement, and liquidation processes operate on objective, immutable information rather than on data exposed to off-chain manipulation or single-point-of-failure feeds. The core function of data verification is to bridge the gap between the external market state (such as the price of Bitcoin, a specific volatility index, or a lending rate) and the internal logic of a smart contract.

Without a robust verification system, a decentralized options contract lacks the necessary connection to external reality required for fair execution, rendering it vulnerable to manipulation and systemic risk. The primary requirement for an options protocol’s verification system is a high degree of data freshness combined with strong resistance to manipulation. Unlike lending protocols, which can tolerate data latency measured in minutes, options pricing and liquidation engines require near-instantaneous updates to accurately calculate the intrinsic value and manage risk in highly volatile markets.

A delayed or manipulated price feed can lead to significant front-running opportunities, where malicious actors execute trades based on information that has not yet reached the on-chain settlement layer. This creates a fundamental trade-off between speed and security that every protocol must manage.

On-chain data verification is the process of establishing an objective, trustless source of external market data for smart contracts, ensuring the fair execution of decentralized options and derivatives.

The data verification architecture must account for the adversarial nature of the environment. Market participants, particularly high-frequency traders and liquidators, have strong incentives to exploit any weakness in the data feed for profit. This requires a shift from a simple data pull to a complex system of economic incentives, data aggregation, and cryptographic proofs to guarantee that the information presented to the options contract reflects the actual market conditions at the moment of execution.

Origin

The necessity for on-chain data verification emerged directly from the “oracle problem,” a challenge first identified in early smart contract development. While a blockchain provides a secure environment for executing code and managing state transitions, it operates in a vacuum, isolated from external data sources. The earliest decentralized applications, such as prediction markets and simple lending protocols, required external information to function.

These initial attempts at data provision often relied on centralized feeds or single-source inputs, creating obvious security vulnerabilities. The development of decentralized finance, specifically the introduction of complex derivatives like options, significantly amplified the requirements for data verification. Early options protocols, built on rudimentary price feeds, faced immediate challenges related to volatility and liquidation risk.

The market for options requires not just a price feed, but a reliable, low-latency source of volatility data and interest rate information to accurately calculate option premiums. The inability to reliably verify these inputs in real time led to a series of high-profile exploits where protocols were drained of funds by manipulating data feeds or exploiting price discrepancies between centralized exchanges and on-chain sources. The evolution of verification methods can be traced through several phases.

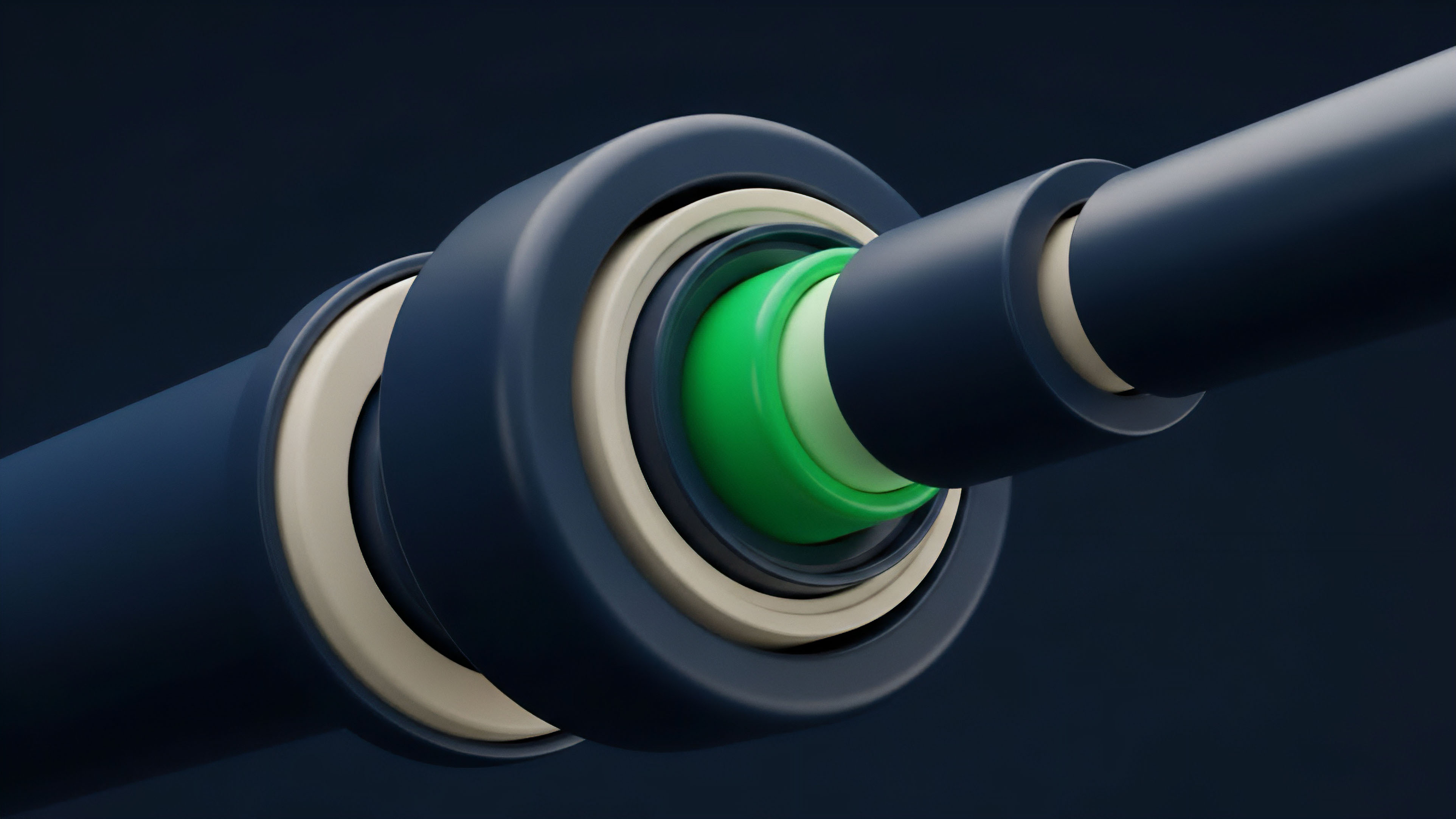

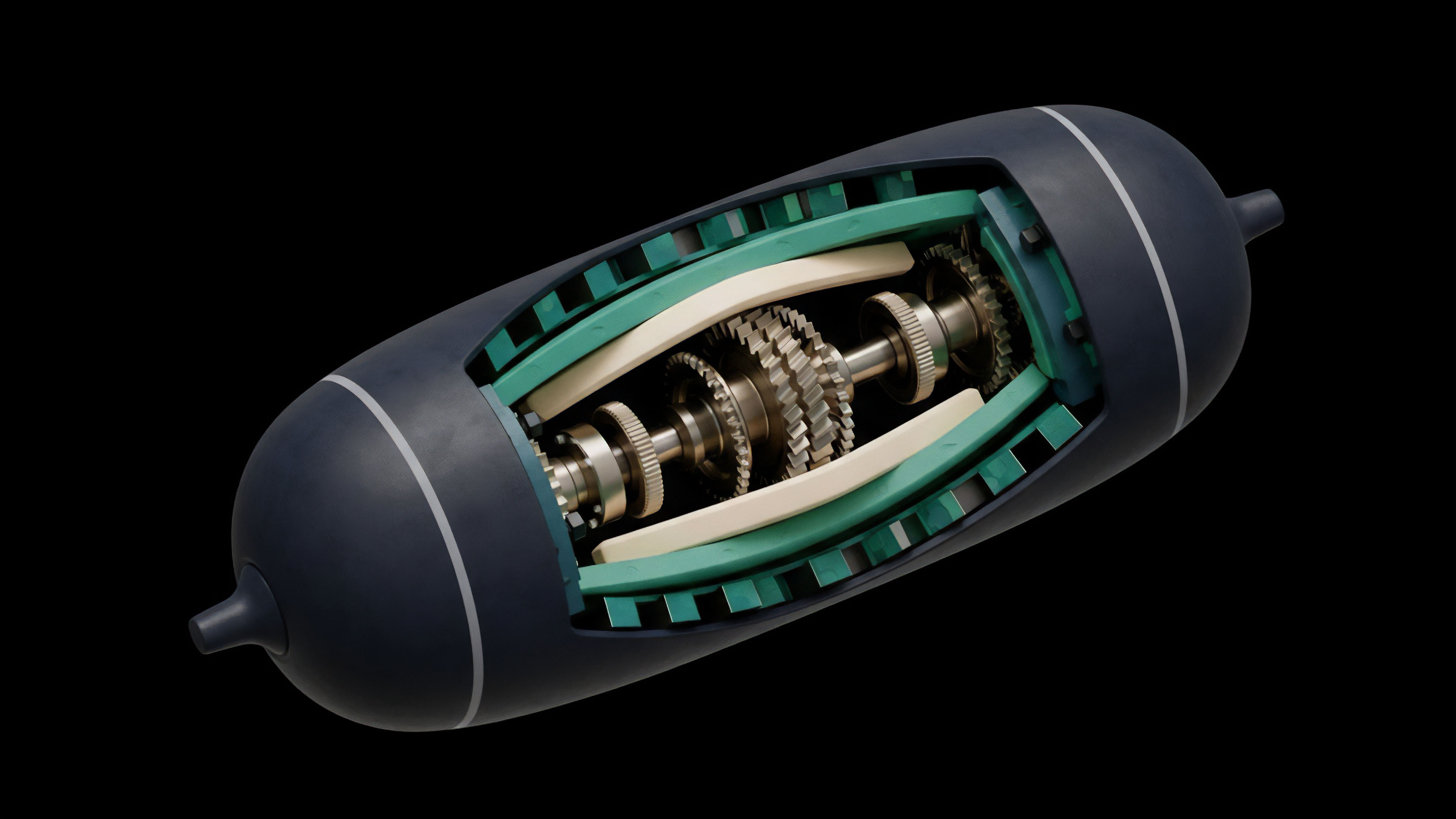

Initially, protocols used simple time-weighted average prices (TWAPs) to smooth out short-term volatility and manipulation attempts. However, TWAPs introduce significant latency, making them unsuitable for real-time risk management in options trading. The next phase involved the rise of decentralized oracle networks (DONs) like Chainlink, which introduced a new standard for data aggregation.

These networks addressed the problem by incentivizing multiple independent nodes to report data, then aggregating those reports to create a robust, verifiable price feed.

Theory

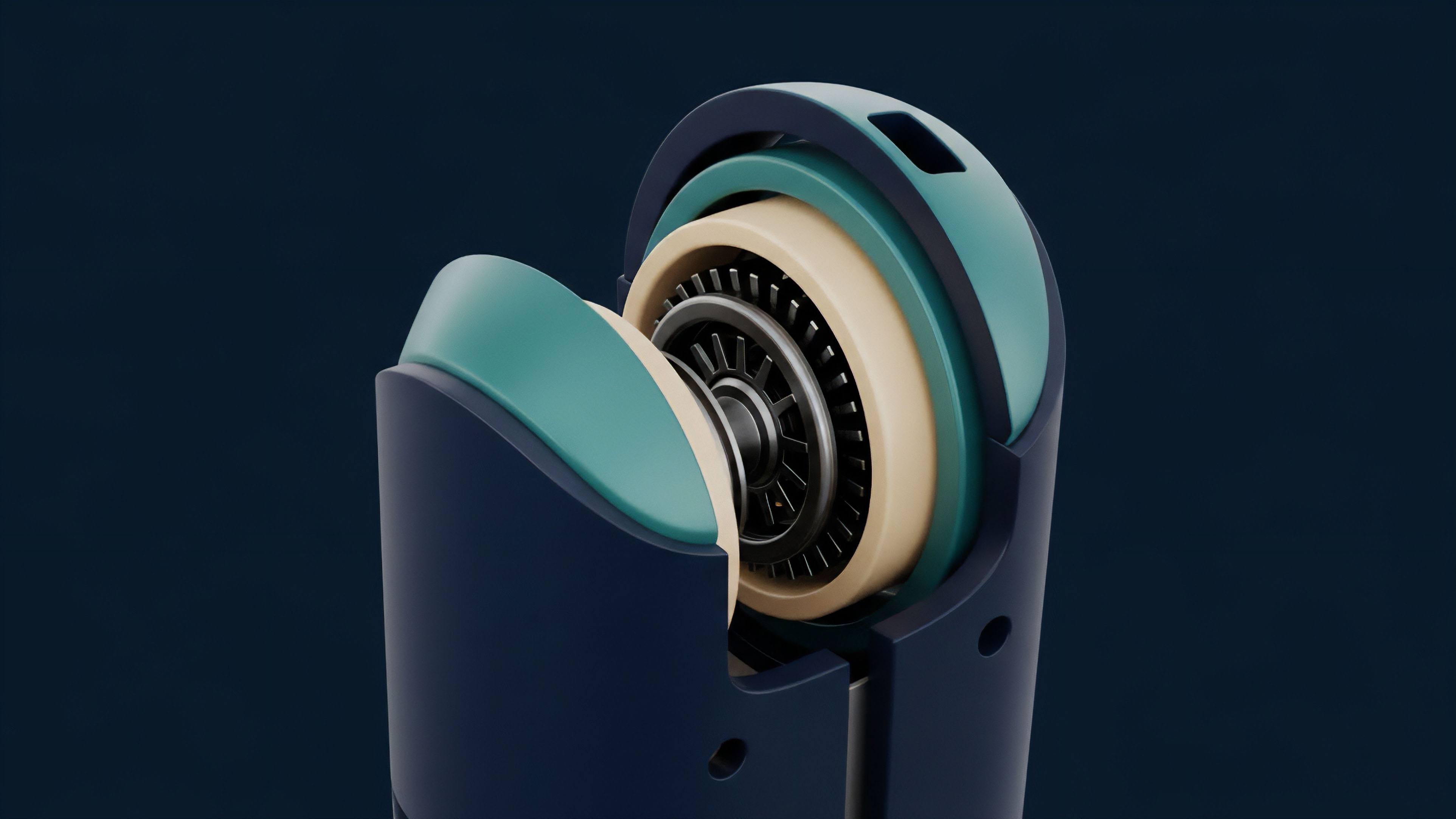

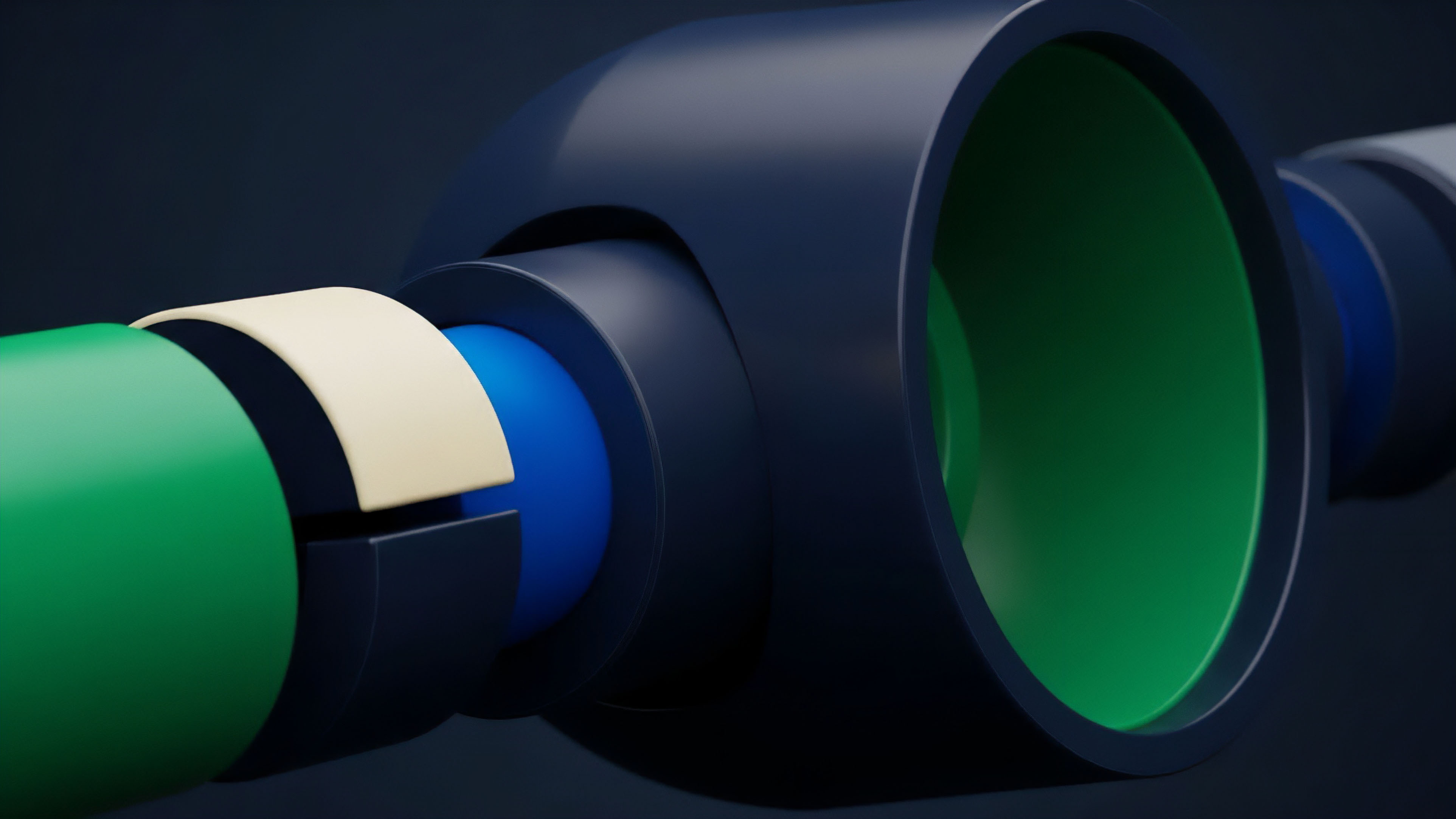

From a quantitative finance perspective, the theory of on-chain data verification for options protocols centers on minimizing basis risk and ensuring the accuracy of the underlying variables in pricing models like Black-Scholes. The Black-Scholes model, for instance, requires five inputs: the current price of the underlying asset (S), the strike price (K), time to expiration (T), the risk-free interest rate (r), and volatility (σ).
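To make the role of each input concrete, the following is a minimal Python sketch of the standard Black-Scholes price for a European call. The function names (`norm_cdf`, `black_scholes_call`) and the sample numbers are illustrative assumptions, not taken from any particular protocol.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF, expressed through the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes_call(S: float, K: float, T: float, r: float, sigma: float) -> float:
    """Black-Scholes price of a European call.

    S: spot price, K: strike, T: time to expiration in years,
    r: continuously compounded risk-free rate, sigma: annualized volatility.
    """
    d1 = (math.log(S / K) + (r + 0.5 * sigma ** 2) * T) / (sigma * math.sqrt(T))
    d2 = d1 - sigma * math.sqrt(T)
    return S * norm_cdf(d1) - K * math.exp(-r * T) * norm_cdf(d2)


# Illustrative inputs only: an at-the-money call, one year out.
fair = black_scholes_call(S=100.0, K=100.0, T=1.0, r=0.05, sigma=0.2)
# The same contract priced off a feed that overstates the spot by 1%.
skewed = black_scholes_call(S=101.0, K=100.0, T=1.0, r=0.05, sigma=0.2)
print(f"fair premium:   {fair:.4f}")   # about 10.45
print(f"skewed premium: {skewed:.4f}")
```

Repricing with a slightly wrong S shows how an error in one verified input flows straight into the premium, which is why the quality of the feed matters as much as the model.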

On-chain data verification primarily focuses on reliably providing S, σ, and r, since K and T follow from the contract terms rather than from an external feed. The systemic risk in decentralized options protocols arises when the verified value of S deviates significantly from the true market price, leading to mispricing or incorrect liquidation calculations. The theoretical foundation of verification relies heavily on game theory and economic incentives.

A robust verification system must make the cost of data manipulation higher than the potential profit from that manipulation. This principle guides the design of decentralized oracle networks, where nodes are staked with collateral that can be slashed if they report inaccurate data. The challenge is in defining “inaccurate data” in a dynamic market environment.
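The cost-versus-profit condition can be sketched in a few lines of Python. This is a simplified model under two stated assumptions that do not come from the text: reports are aggregated by median, so an attacker must control a strict majority of nodes, and a corrupted node forfeits its entire stake. All names are hypothetical.

```python
def min_nodes_to_control_median(n_nodes: int) -> int:
    """Smallest number of nodes that forms a strict majority of n_nodes."""
    return n_nodes // 2 + 1


def attack_cost(n_nodes: int, stake_per_node: float) -> float:
    """Stake put at risk of slashing when corrupting a majority (assumes full slashing)."""
    return min_nodes_to_control_median(n_nodes) * stake_per_node


def is_economically_secure(n_nodes: int, stake_per_node: float, extractable_profit: float) -> bool:
    """True when manipulating the feed costs more than it could earn."""
    return attack_cost(n_nodes, stake_per_node) > extractable_profit


# Illustrative numbers: 21 nodes, each staking 100,000 units.
print(attack_cost(21, 100_000))                        # 1100000
print(is_economically_secure(21, 100_000, 1_000_000))  # True
print(is_economically_secure(21, 100_000, 2_000_000))  # False
```

The sketch also shows why the condition is fragile: the extractable profit grows with open interest in the options protocol, while the stake securing the feed may not.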

The system must differentiate between a legitimate price change and a malicious manipulation attempt. The verification process for options involves specific technical considerations that differ from other DeFi applications.

- Time-Weighted Average Prices (TWAPs): TWAPs calculate the average price over a specific time window, smoothing out short-term fluctuations and making manipulation more expensive for an attacker. The trade-off is latency, which can cause significant issues for short-term options pricing.

- Volatility Oracles: For accurate options pricing, protocols require a verifiable source for implied volatility. This data is complex to generate on-chain, often requiring a separate oracle network that aggregates data from multiple sources to create a synthetic volatility index.

- Optimistic Verification: This model assumes data is correct unless challenged by a third party within a specified time window. It offers a faster, cheaper verification method by minimizing on-chain computation, but introduces a latency period during which challenges can occur.

| Verification Method | Primary Benefit | Key Challenge for Options |
|---|---|---|
| Time-Weighted Average Price (TWAP) | Manipulation resistance (high cost to attack) | Data latency; unsuitable for real-time risk management of short-term options. |
| Decentralized Oracle Network (DON) Aggregation | Robustness through redundancy and incentive alignment | High cost of on-chain updates; potential for data staleness during extreme volatility. |
| Optimistic Verification | Low cost and high speed (assuming no challenges) | Latency during challenge periods; reliance on external actors for validation. |
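The TWAP trade-off between manipulation resistance and latency can be made concrete with a small Python sketch. It assumes, for illustration only, that observations arrive as `(timestamp, price)` pairs sorted by time and that each price holds until the next observation; the function name `twap` is hypothetical.

```python
from typing import List, Tuple


def twap(observations: List[Tuple[int, float]], window_start: int, window_end: int) -> float:
    """Time-weighted average price over [window_start, window_end).

    Each observed price is assumed to stay in force until the next observation.
    """
    if window_end <= window_start:
        raise ValueError("window_end must be after window_start")
    weighted_sum = 0.0
    covered = 0
    for i, (ts, price) in enumerate(observations):
        next_ts = observations[i + 1][0] if i + 1 < len(observations) else window_end
        start = max(ts, window_start)
        end = min(next_ts, window_end)
        if end > start:
            weighted_sum += price * (end - start)
            covered += end - start
    if covered == 0:
        raise ValueError("no observations overlap the window")
    return weighted_sum / covered


# A 50% price spike that lasts 10 seconds inside a 180-second window.
samples = [(0, 100.0), (60, 100.0), (120, 150.0), (130, 100.0)]
print(round(twap(samples, 0, 180), 2))  # 102.78
```

The brief spike moves the average by under 3%, which is the manipulation resistance. The same averaging means a genuine move to 150 would take the full window to be reflected, which is the latency.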

Approach

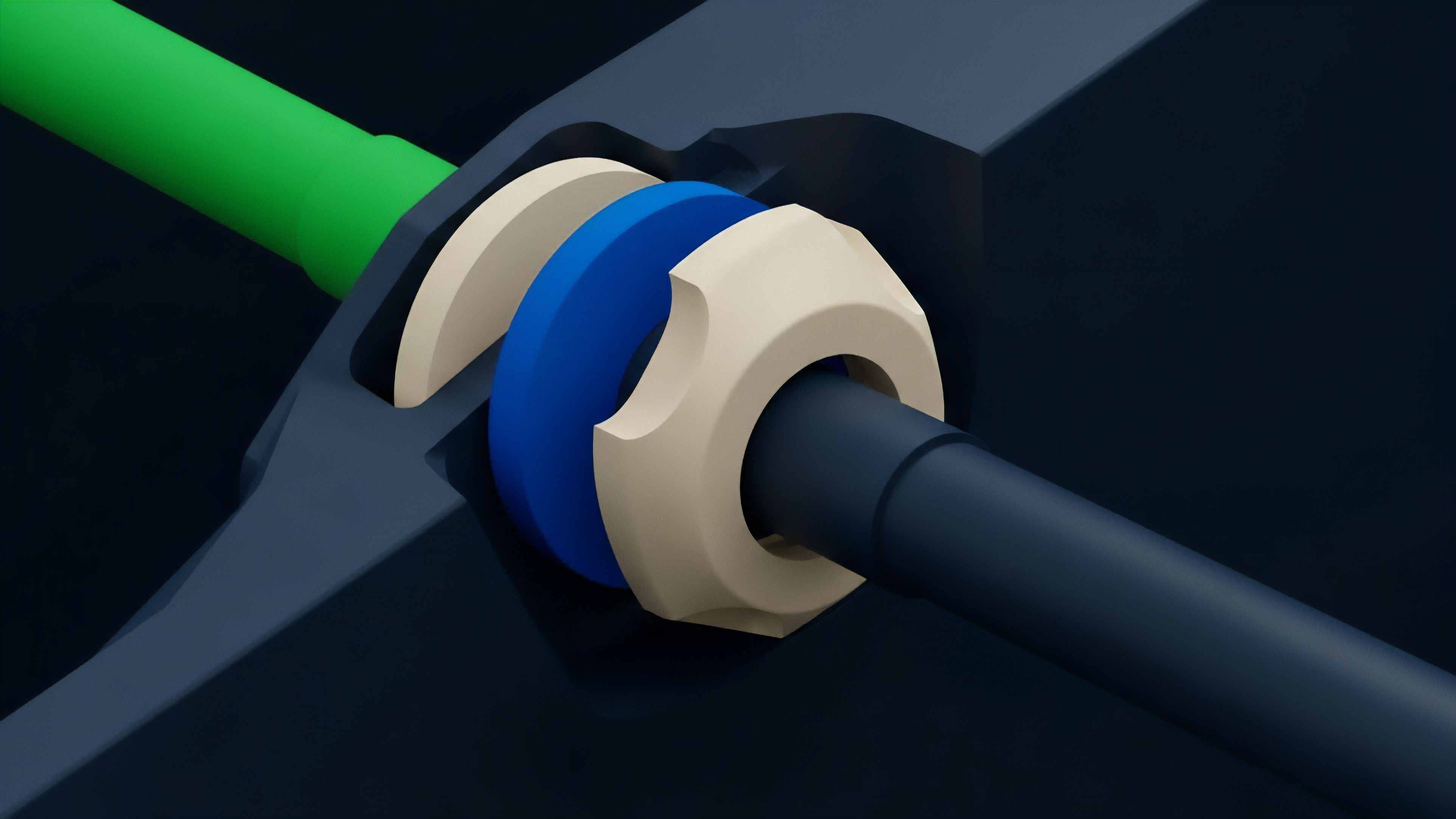

The practical approach to on-chain data verification in modern options protocols involves a multi-layered architecture designed to mitigate specific risks. The current standard utilizes decentralized oracle networks to source price data from multiple centralized exchanges and data providers. This aggregated data is then passed through a verification layer, which may use mechanisms like TWAPs, median calculations, or optimistic challenges.
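As a minimal sketch of the median step in such a verification layer, the following Python function aggregates independent reports and refuses to answer without a minimum number of them. The quorum parameter `min_reports` and the function name are assumptions made for illustration.

```python
from statistics import median
from typing import Sequence


def aggregate_reports(reports: Sequence[float], min_reports: int = 3) -> float:
    """Return the median of independent price reports.

    Raises if fewer than min_reports values are available, so a thin
    set of reporters cannot define the price on its own.
    """
    if len(reports) < min_reports:
        raise ValueError("not enough reports to aggregate")
    return median(reports)


# Four honest reports and one wildly wrong one.
print(aggregate_reports([100.1, 99.9, 100.0, 100.2, 500.0]))  # 100.1
```

A median is used here rather than a mean because a single outlier cannot drag it far; an attacker has to control a majority of the reports to move the result.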

A key challenge in implementing this approach is the cost of data updates. Every time an oracle updates the price feed on a blockchain, it incurs gas fees. For options protocols with many open positions, frequent updates are necessary for accurate risk management, but these updates can become prohibitively expensive, especially during periods of high network congestion.

This economic constraint often forces protocols to compromise on data freshness, leading to potential mispricing and liquidation risk. To address this, protocols often implement specific data verification strategies for different types of transactions.

- Liquidation Triggers: For liquidations, protocols prioritize security over speed. They often rely on TWAPs or a multi-signature verification process to ensure that a liquidation event is based on a sustained price drop rather than a momentary spike or manipulation attempt.

- Option Pricing: For calculating option premiums at the time of purchase or sale, protocols require a more real-time price feed. This often involves a hybrid approach where a high-frequency, low-latency off-chain data feed is used for pricing calculations, while a more robust, on-chain verification mechanism is used for settlement and collateral checks.

This hybrid approach acknowledges that a purely on-chain solution for real-time options data verification is often economically infeasible. The protocol’s design must define a precise data update schedule, ensuring that the verified price is updated frequently enough to manage risk without making the protocol too expensive to use.
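One common way to express such an update schedule is to combine a deviation threshold with a maximum interval between updates, often called a heartbeat. The Python sketch below illustrates that idea; the class name, field names, and sample values are assumptions, not parameters of any specific protocol.

```python
from dataclasses import dataclass


@dataclass
class UpdatePolicy:
    deviation_threshold: float  # relative move that forces an update, e.g. 0.005 for 0.5%
    heartbeat_seconds: int      # maximum age of the on-chain price


def should_update(policy: UpdatePolicy, last_price: float, last_update_ts: int,
                  new_price: float, now_ts: int) -> bool:
    """Decide whether paying gas for an on-chain update is justified."""
    # Always refresh once the stored price is older than the heartbeat.
    if now_ts - last_update_ts >= policy.heartbeat_seconds:
        return True
    # Otherwise update only when the price has moved far enough.
    deviation = abs(new_price - last_price) / last_price
    return deviation >= policy.deviation_threshold


policy = UpdatePolicy(deviation_threshold=0.005, heartbeat_seconds=3600)
print(should_update(policy, 100.0, 0, 100.2, 60))    # False: 0.2% move, price still fresh
print(should_update(policy, 100.0, 0, 101.0, 60))    # True: 1% move
print(should_update(policy, 100.0, 0, 100.0, 3600))  # True: heartbeat elapsed
```

Tightening the threshold or shortening the heartbeat buys freshness at the price of more gas, which is the cost trade-off described above.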

Evolution

The evolution of on-chain data verification has shifted from simple, reactive measures to proactive, systemic risk management.

The early days of options protocols saw a focus on simple price feeds for settlement. However, as the complexity of decentralized options increased, the requirements expanded to include verifiable volatility data and interest rate information. This required the development of specialized oracle networks capable of generating synthetic data points that are themselves verifiable.

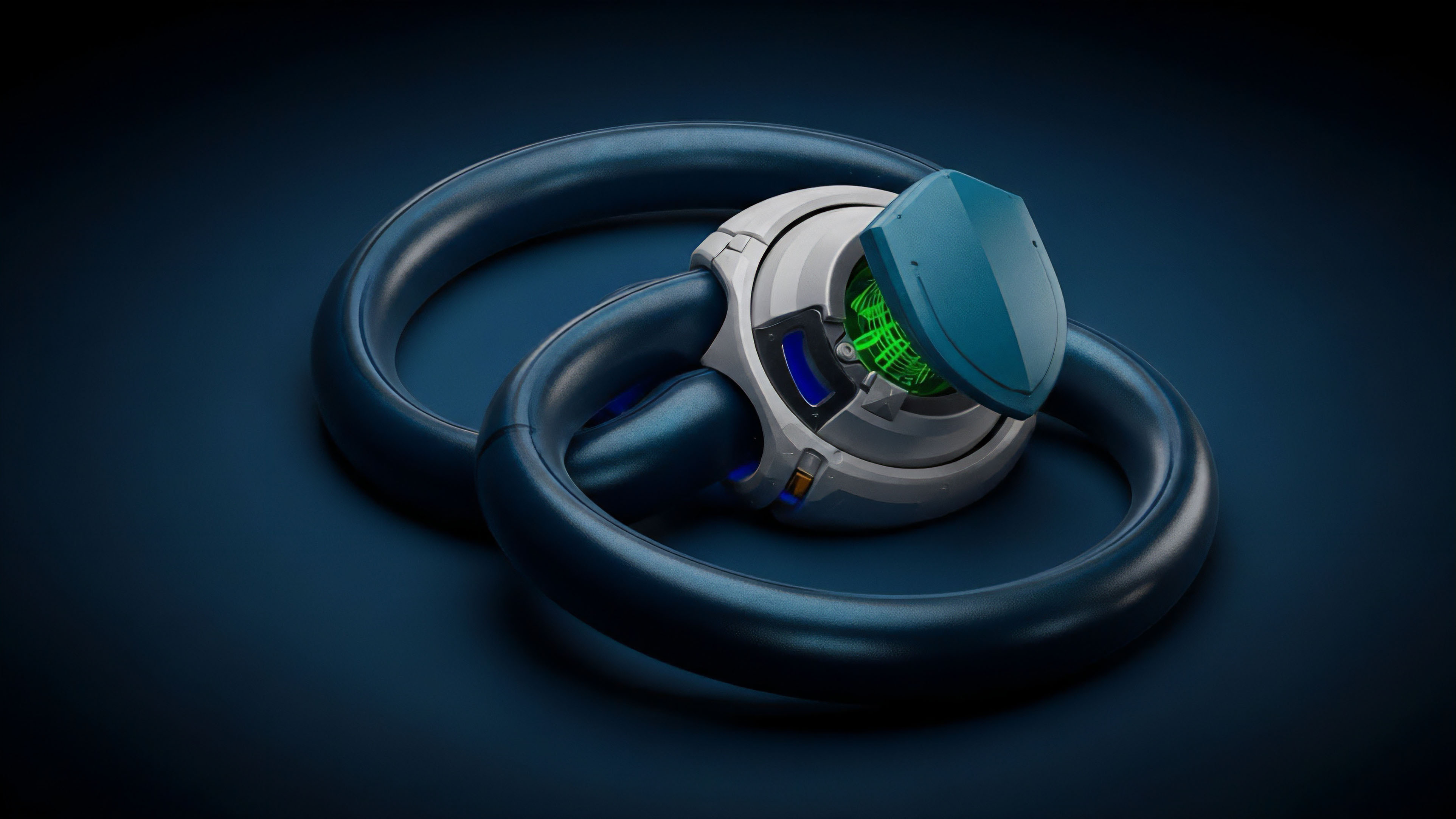

The current generation of options protocols utilizes sophisticated oracle designs that are highly customized for derivatives. The most significant development in this area is the rise of optimistic oracles. These systems allow for faster data updates by assuming data integrity and only initiating a costly on-chain verification process when a discrepancy is challenged.

This design significantly reduces the cost and latency of data verification, making it possible to support more complex derivatives with higher frequency data requirements. The challenge in this evolution, however, remains the inherent latency of optimistic systems. The challenge window, typically measured in hours, creates a time delay during which a potentially manipulated price could be used for a trade.

The protocol must therefore be designed so that the potential profit from manipulating a price during this window does not exceed the penalty the manipulator incurs when a challenge succeeds.
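The lifecycle of an optimistic price can be sketched as a small state machine in Python. This is a toy model under stated assumptions: a single outstanding proposal, a bond posted by the proposer, and time passed in explicitly as seconds. The class and method names are hypothetical and do not mirror any real oracle's interface.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Proposal:
    price: float
    proposed_at: int  # seconds
    bond: float       # hypothetical stake the proposer risks if a dispute succeeds
    disputed: bool = False


class OptimisticOracle:
    def __init__(self, challenge_window: int) -> None:
        self.challenge_window = challenge_window
        self.proposal: Optional[Proposal] = None

    def propose(self, price: float, bond: float, now: int) -> None:
        """Record a price that is presumed correct unless disputed in time."""
        self.proposal = Proposal(price=price, proposed_at=now, bond=bond)

    def _window_open(self, now: int) -> bool:
        return now < self.proposal.proposed_at + self.challenge_window

    def dispute(self, now: int) -> bool:
        """Flag the proposal; returns False if there is nothing to dispute or the window closed."""
        if self.proposal is None or not self._window_open(now):
            return False
        self.proposal.disputed = True
        return True

    def finalized_price(self, now: int) -> Optional[float]:
        """Return the price only after an undisputed challenge window has elapsed."""
        if self.proposal is None or self.proposal.disputed:
            return None
        if self._window_open(now):
            return None
        return self.proposal.price


oracle = OptimisticOracle(challenge_window=2 * 3600)
oracle.propose(price=100.0, bond=1_000.0, now=0)
print(oracle.finalized_price(now=3600))  # None: still inside the window
print(oracle.finalized_price(now=7200))  # 100.0
```

In this model the price is unusable for the whole window, so a protocol that settles on it inherits the window as latency. How a dispute is resolved is left out of the sketch.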

Optimistic oracles, by assuming data integrity unless challenged, offer a significant improvement in efficiency and cost for options protocols, though they introduce a specific challenge latency window that must be carefully managed.

Another significant evolution is the integration of layer-2 solutions and specialized oracle networks for specific asset classes. Layer-2s allow for cheaper, faster on-chain computation, making it economically feasible to perform more complex verification calculations. Furthermore, specialized oracles are being developed to verify data points like implied volatility, which cannot be easily derived from simple spot price feeds.

Horizon

Looking ahead, the future of on-chain data verification for options protocols lies in the development of fully autonomous, self-verifying systems that minimize reliance on external oracle networks. The goal is to move beyond simply verifying off-chain data and toward a model where all necessary data for options pricing and settlement is generated and verified within the protocol’s own ecosystem. This involves creating new methods for generating verifiable volatility indices and interest rates from on-chain activity.

One promising area of development is the use of automated market makers (AMMs) to generate implied volatility data. By observing the pricing of options within the AMM pool, a protocol can derive a verifiable, on-chain volatility index that is resistant to manipulation because it reflects the actual activity within the protocol itself. This approach significantly reduces the reliance on external data feeds, thereby eliminating the oracle problem for certain inputs.

The horizon for on-chain verification also includes the integration of zero-knowledge proofs (ZKPs) to verify data inputs without revealing the underlying information. This would allow protocols to process data from external sources while maintaining privacy and security. For example, a ZKP could verify that a price feed is within a certain range without revealing the exact price, reducing the incentive for manipulation.

Ultimately, the goal is to create a closed-loop system where data verification is inherent to the protocol’s design. The next generation of options protocols will likely incorporate these mechanisms to create truly autonomous financial systems where the integrity of the data is guaranteed by the protocol’s internal logic, not by external, potentially fallible, data providers. This will unlock the creation of more exotic derivatives and complex financial instruments that require a level of data integrity currently unattainable in decentralized markets.
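Deriving implied volatility from option prices observed in an AMM pool amounts to inverting a pricing model. The Python sketch below does this for a European call by bisection on the Black-Scholes formula. It assumes the pool quotes a call price consistent with Black-Scholes, and every name and number in it is illustrative.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF, expressed through the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(S: float, K: float, T: float, r: float, sigma: float) -> float:
    """Black-Scholes price of a European call."""
    d1 = (math.log(S / K) + (r + 0.5 * sigma ** 2) * T) / (sigma * math.sqrt(T))
    d2 = d1 - sigma * math.sqrt(T)
    return S * norm_cdf(d1) - K * math.exp(-r * T) * norm_cdf(d2)


def implied_vol(price: float, S: float, K: float, T: float, r: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Find sigma such that bs_call(...) matches the observed price.

    Bisection works because the call price increases monotonically with sigma.
    """
    if not bs_call(S, K, T, r, lo) <= price <= bs_call(S, K, T, r, hi):
        raise ValueError("observed price is outside the range the model can produce")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bs_call(S, K, T, r, mid) < price:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Round trip: price a call at 60% volatility, then recover that volatility.
observed = bs_call(S=100.0, K=110.0, T=0.25, r=0.03, sigma=0.6)
print(round(implied_vol(observed, S=100.0, K=110.0, T=0.25, r=0.03), 4))  # 0.6
```

An index built this way is only as trustworthy as the pool's prices, so thin liquidity in the AMM would reintroduce the manipulation problem in a different form.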
