Essence

On-Chain Data Validation is the process of cryptographically verifying external data inputs for smart contracts, ensuring the integrity and reliability of information used for automated financial execution. This mechanism is foundational for decentralized derivatives, particularly crypto options, where a contract’s value and settlement depend on accurate, real-time pricing data. Without a robust validation layer, a smart contract is vulnerable to manipulation, leading to incorrect liquidations, unfair pricing, and systemic risk.

The core challenge lies in bridging the gap between the external world of market prices and the deterministic environment of a blockchain, which cannot fetch off-chain data on its own and where code must execute without external trust assumptions. The integrity of on-chain data validation determines the capital efficiency and overall safety of a derivatives protocol. When a protocol calculates margin requirements or determines whether an option is in-the-money at expiration, it relies entirely on validated data feeds.

A failure in this process, whether through latency, data staleness, or deliberate manipulation, creates an arbitrage opportunity for malicious actors. This risk is particularly acute for options, which have specific expiration times and exercise conditions that demand precise data at specific moments.

On-chain data validation provides the cryptographic assurance required for a decentralized financial system to function without relying on central authorities.

The data validation mechanism is a critical component of a protocol’s risk engine. It dictates how liquidations are triggered and how collateral is valued. In a high-leverage environment, even small inaccuracies in data feeds can cascade into widespread liquidations, threatening the solvency of the entire system.

Therefore, the design of the validation process must prioritize resilience against manipulation over speed, particularly for settlement purposes where finality is paramount.

Origin

The necessity for on-chain data validation emerged from the earliest attempts to build decentralized financial primitives, specifically lending protocols and prediction markets. The first iterations of these systems often relied on simple, centralized data feeds.

The earliest significant failures highlighted the fragility of this model. Flash loan attacks demonstrated how a single-source price feed, often the spot price of one on-chain liquidity pool, could be manipulated within a single transaction: an attacker borrows a large amount of capital without collateral, trades against the pool to push its price to an artificial level, exploits a protocol that reads that price, and repays the loan before the transaction completes. This led to the realization that data integrity was not a technical implementation detail but a core economic problem requiring a decentralized solution.
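To make the single-transaction manipulation concrete, the sketch below assumes the victim protocol reads the spot price of a fee-free constant-product pool (x * y = k). The pool sizes, token pair, and trade are hypothetical, and the fees that real pools and flash-loan providers charge are ignored.

```python
def swap(reserve_in: float, reserve_out: float, amount_in: float) -> tuple[float, float, float]:
    """Fee-free swap against a constant-product pool (reserve_in * reserve_out = k).

    Returns (new_reserve_in, new_reserve_out, amount_out).
    """
    k = reserve_in * reserve_out
    new_in = reserve_in + amount_in
    new_out = k / new_in
    return new_in, new_out, reserve_out - new_out


def spot_price(reserve_base: float, reserve_quote: float) -> float:
    """Price of one base token in quote tokens, as a naive spot-price oracle reads it."""
    return reserve_quote / reserve_base


# Hypothetical pool: 1,000 ETH against 2,000,000 USDC, i.e. 2,000 USDC per ETH.
eth, usdc = 1_000.0, 2_000_000.0
price_before = spot_price(eth, usdc)

# Inside one transaction, the attacker spends 2,000,000 flash-borrowed USDC on ETH.
usdc, eth, eth_bought = swap(usdc, eth, 2_000_000.0)
price_after = spot_price(eth, usdc)  # a protocol reading this now sees 4x the prior price

# After exploiting the inflated price, selling the ETH back restores the pool;
# without fees the attacker recovers the full loan.
eth, usdc, usdc_back = swap(eth, usdc, eth_bought)

print(f"before: {price_before:.2f}, during attack: {price_after:.2f}, recovered: {usdc_back:.2f}")
```

Because the round trip returns the borrowed capital essentially intact, the manipulation itself costs little beyond fees, which is why the spot price of a single pool makes a weak oracle.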

Early options protocols, such as those built on Ethereum, faced a fundamental dilemma: how to settle contracts at expiration without relying on a trusted third party to provide the final price. The solutions evolved from simple time-weighted average prices (TWAPs) to decentralized oracle networks (DONs). The shift toward DONs marked a significant change in architectural philosophy.

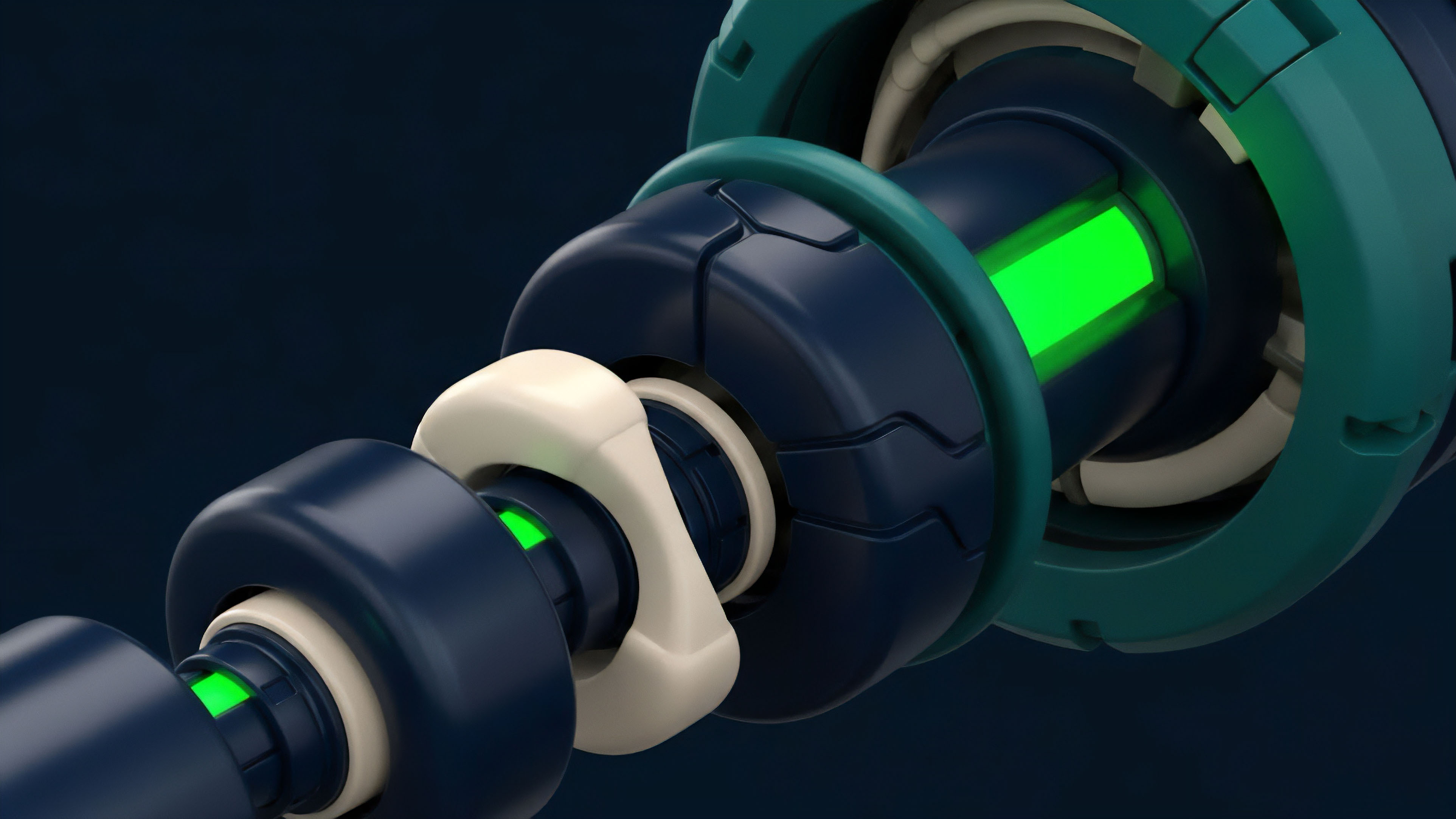

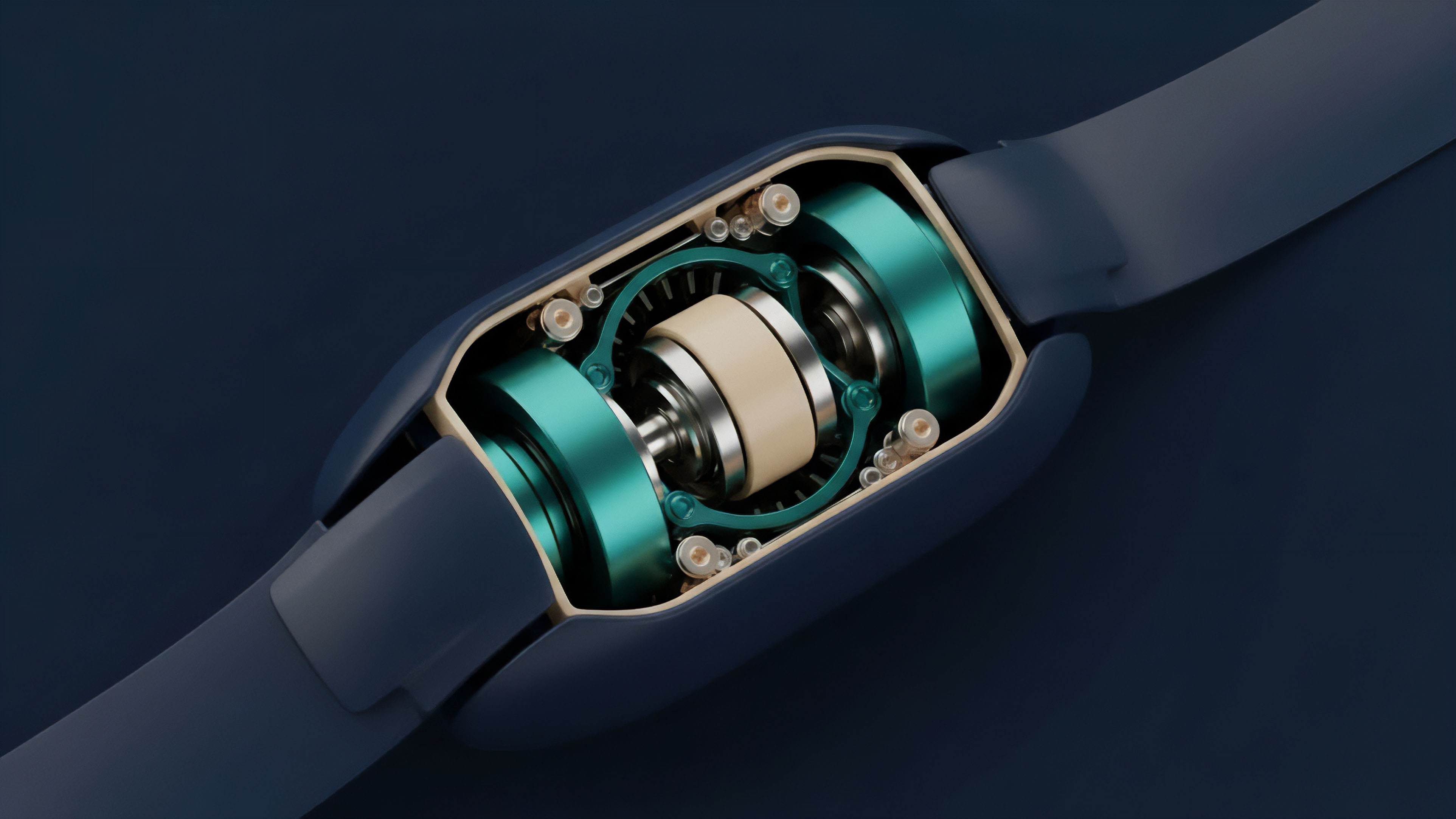

Instead of trusting a single entity, the validation process became distributed among multiple independent nodes. These nodes would source data from different exchanges, aggregate it, and submit the validated result to the smart contract. This design reduced the attack surface, increasing the cost of manipulation significantly.
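A minimal sketch of the aggregation step, assuming the nodes' reports are combined with a median (one common robust choice; the text above does not specify the aggregation function). The node names, prices, and the `min_reports` parameter are hypothetical.

```python
from statistics import mean, median


def aggregate_reports(reports: dict[str, float], min_reports: int = 3) -> float:
    """Combine independent node reports into one value using the median.

    As long as fewer than half of the reports are faulty, the median stays
    within the range spanned by the honest reports.
    """
    if len(reports) < min_reports:
        raise ValueError(f"need at least {min_reports} reports, got {len(reports)}")
    return median(reports.values())


# Hypothetical ETH/USD reports; node-e is faulty or malicious.
reports = {
    "node-a": 2001.5,
    "node-b": 1999.8,
    "node-c": 2000.2,
    "node-d": 2000.9,
    "node-e": 20000.0,
}
print(aggregate_reports(reports))  # median of the five reports
print(mean(reports.values()))      # a naive mean, dragged far off by one report
```

With one node reporting ten times the market price, the median stays at 2,000.9 while a simple mean exceeds 5,600. A robust statistic is what raises the cost of manipulation: an attacker has to corrupt a majority of reports rather than a single one.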

The transition was driven by the recognition that the security of a derivative protocol’s collateral pool is bounded by the cost required to manipulate its underlying price feed: if manipulating the feed costs less than the value an attacker can extract, the collateral is at risk.

Theory

The theoretical foundation of on-chain data validation for options rests on a blend of game theory, information theory, and financial modeling. The objective is to design an incentive structure where honest data reporting is economically rational, while malicious reporting results in financial loss.

Incentive Structures and Game Theory

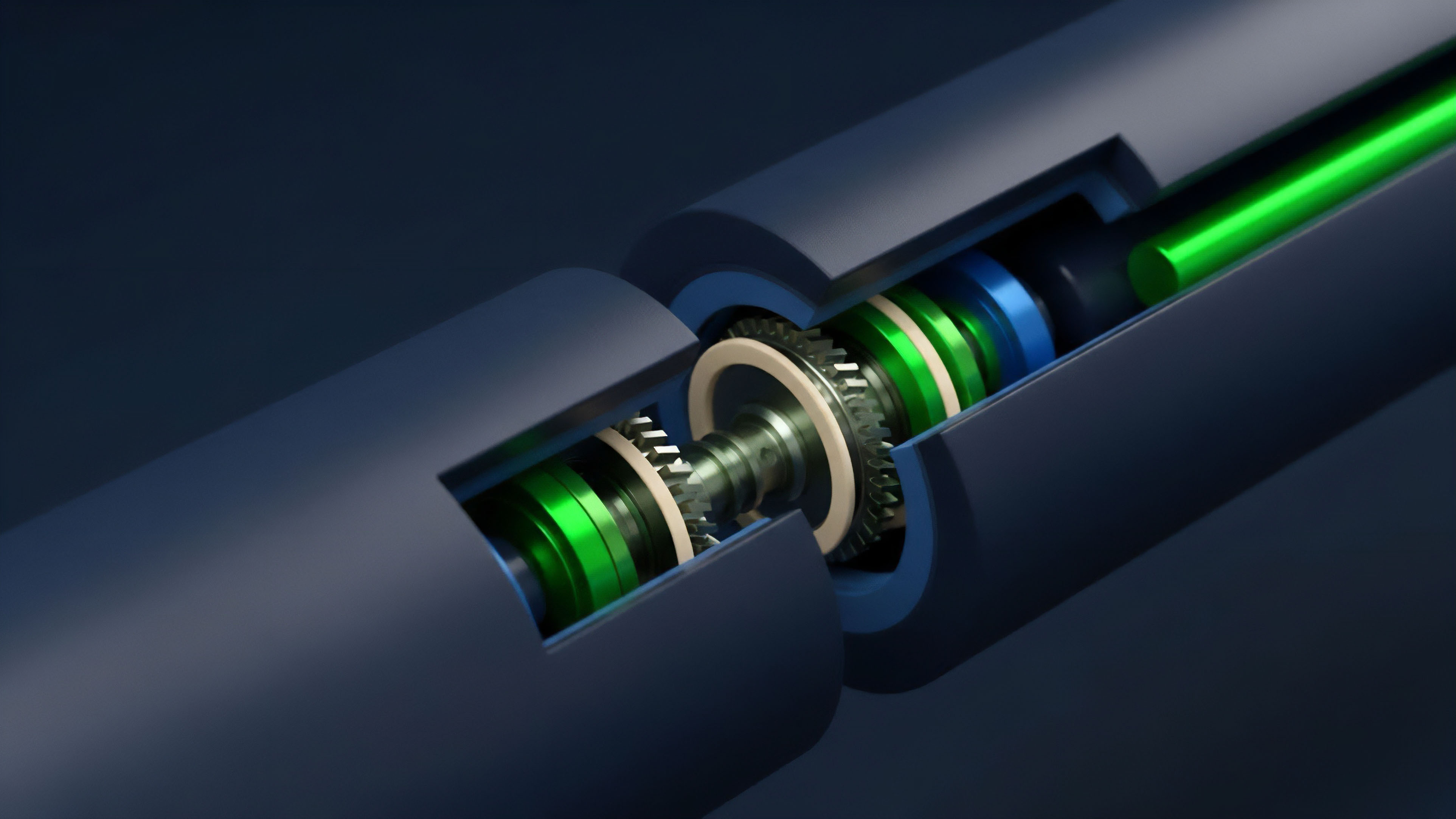

The validation process relies on a system of staking and slashing. Validators stake capital to participate in the network. If they report accurate data consistent with a consensus of other validators, they earn rewards.

If they report malicious data, they risk losing their staked capital. This creates a disincentive for collusion and manipulation. The security of the network is determined by the total value staked and the economic cost required to overcome the consensus mechanism.
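A minimal sketch of one reporting round, assuming the consensus value is the median of reports and that rewards and slashing are simple fractions of stake. The tolerance, reward rate, and slash fraction are hypothetical parameters, not values taken from any specific network.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Validator:
    name: str
    stake: float


def settle_round(
    validators: list[Validator],
    reports: dict[str, float],
    tolerance: float = 0.01,       # hypothetical: max relative deviation from consensus
    reward_rate: float = 0.001,    # hypothetical: reward as a fraction of stake
    slash_fraction: float = 0.10,  # hypothetical: fraction of stake slashed for an outlier
) -> float:
    """Reward reports close to the consensus median and slash outliers.

    Updates each validator's stake in place and returns the consensus value.
    """
    consensus = median(reports[v.name] for v in validators)
    for v in validators:
        deviation = abs(reports[v.name] - consensus) / consensus
        if deviation <= tolerance:
            v.stake += v.stake * reward_rate
        else:
            v.stake -= v.stake * slash_fraction
    return consensus


validators = [Validator("alice", 100.0), Validator("bob", 100.0), Validator("carol", 100.0)]
consensus = settle_round(validators, {"alice": 2000.0, "bob": 2001.0, "carol": 2500.0})
for v in validators:
    print(v.name, round(v.stake, 4))
```

In this structure, moving the consensus requires controlling a majority of the reports (and therefore of the stake at risk), while any report that strays from the consensus forfeits part of its stake, so the cost of attack grows with the total value staked.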

The core design problem for options is managing data latency and staleness. Options protocols require precise pricing data at specific moments for liquidation and settlement. However, a high-frequency update cycle for data feeds is expensive and increases network congestion, while a low-frequency update cycle creates opportunities for front-running and manipulation during periods of high volatility. A practical design balances the two, often by using time-weighted average prices (TWAPs) for liquidations, which smooth out price fluctuations over time and make short-term manipulation less effective.
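The sketch below computes a TWAP under one common convention: each observed price holds until the next observation (a step function). The timestamps and prices are hypothetical.

```python
def twap(observations: list[tuple[int, float]], window_start: int, window_end: int) -> float:
    """Time-weighted average price over [window_start, window_end).

    `observations` holds (timestamp, price) pairs sorted by timestamp. Each price
    is treated as valid from its timestamp until the next observation.
    """
    if window_end <= window_start:
        raise ValueError("window_end must be after window_start")
    if not observations or observations[0][0] > window_start:
        raise ValueError("need an observation at or before window_start")
    weighted_sum = 0.0
    for i, (ts, price) in enumerate(observations):
        next_ts = observations[i + 1][0] if i + 1 < len(observations) else window_end
        # Clip the interval during which this price was valid to the window.
        start, end = max(ts, window_start), min(next_ts, window_end)
        if end > start:
            weighted_sum += price * (end - start)
    return weighted_sum / (window_end - window_start)


# Hypothetical feed: a one-block (12 s) spike to 4x the price inside a 15-minute window.
observations = [(0, 2000.0), (600, 8000.0), (612, 2000.0)]
print(twap(observations, 0, 900))
```

Here a fourfold spot spike moves the 15-minute TWAP by only 4% (to 2,080), which is why a TWAP blunts single-block manipulation; the same smoothing is what makes it lag genuine, sustained moves.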

Volatility Validation Challenges

Options pricing models, such as Black-Scholes, require inputs beyond just the spot price, most notably implied volatility. Validating implied volatility on-chain presents a complex challenge. Implied volatility is not a single, observable market price; it is derived from the current price of options contracts across different strike prices and expirations.

This calculation requires processing off-chain order book data from multiple exchanges.

- Data Source Aggregation: Collecting real-time order book data from a diverse set of centralized and decentralized exchanges.

- Volatility Surface Calculation: Computing the implied volatility surface by numerically inverting the Black-Scholes formula for volatility, given each option’s market price, across various strikes and expirations.

- On-Chain Validation: Submitting a consensus of this calculated volatility surface to the smart contract.
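The calculation step can be sketched as follows, assuming European calls, a flat risk-free rate, no dividends, and bisection as the root finder (production systems often use Newton's method or other solvers). The function names and quotes are hypothetical; the quotes are generated from known volatilities so the round trip can be checked.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call (no dividends, flat rate)."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price: float, spot: float, strike: float, t: float, rate: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Invert Black-Scholes for volatility by bisection.

    The call price increases monotonically with volatility, so bisection
    converges once the market price is bracketed by [lo, hi].
    """
    if not bs_call_price(spot, strike, t, rate, lo) <= price <= bs_call_price(spot, strike, t, rate, hi):
        raise ValueError("price is not bracketed by the [lo, hi] volatility range")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, t, rate, mid) < price:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def vol_surface(quotes: dict[tuple[float, float], float], spot: float,
                rate: float) -> dict[tuple[float, float], float]:
    """Map each (strike, years_to_expiry) call quote to its implied volatility."""
    return {(k, t): implied_vol(p, spot, k, t, rate) for (k, t), p in quotes.items()}


# Hypothetical aggregated mid prices for one 30-day expiry.
spot, rate, t = 2000.0, 0.05, 30 / 365
quotes = {
    (1800.0, t): bs_call_price(spot, 1800.0, t, rate, 0.70),
    (2200.0, t): bs_call_price(spot, 2200.0, t, rate, 0.65),
}
surface = vol_surface(quotes, spot, rate)
skew = surface[(1800.0, t)] - surface[(2200.0, t)]  # IV difference across strikes
print(surface, round(skew, 4))
```

Each surface point requires an iterative solve over model inputs, which is part of why the text describes this work as happening off-chain, with only the resulting surface submitted for on-chain consensus.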

The current approach to on-chain volatility validation often simplifies this process by relying on a single, aggregated volatility index or by using a protocol’s internal order book data rather than external feeds. This reduces complexity but introduces a potential source of error if the protocol’s internal market deviates significantly from the broader market.

Approach

Current implementations of on-chain data validation for options protocols utilize several distinct architectural approaches, each with its own trade-offs between cost, latency, and security.

The choice of approach dictates the protocol’s risk profile and capital efficiency.

Validation Architectures

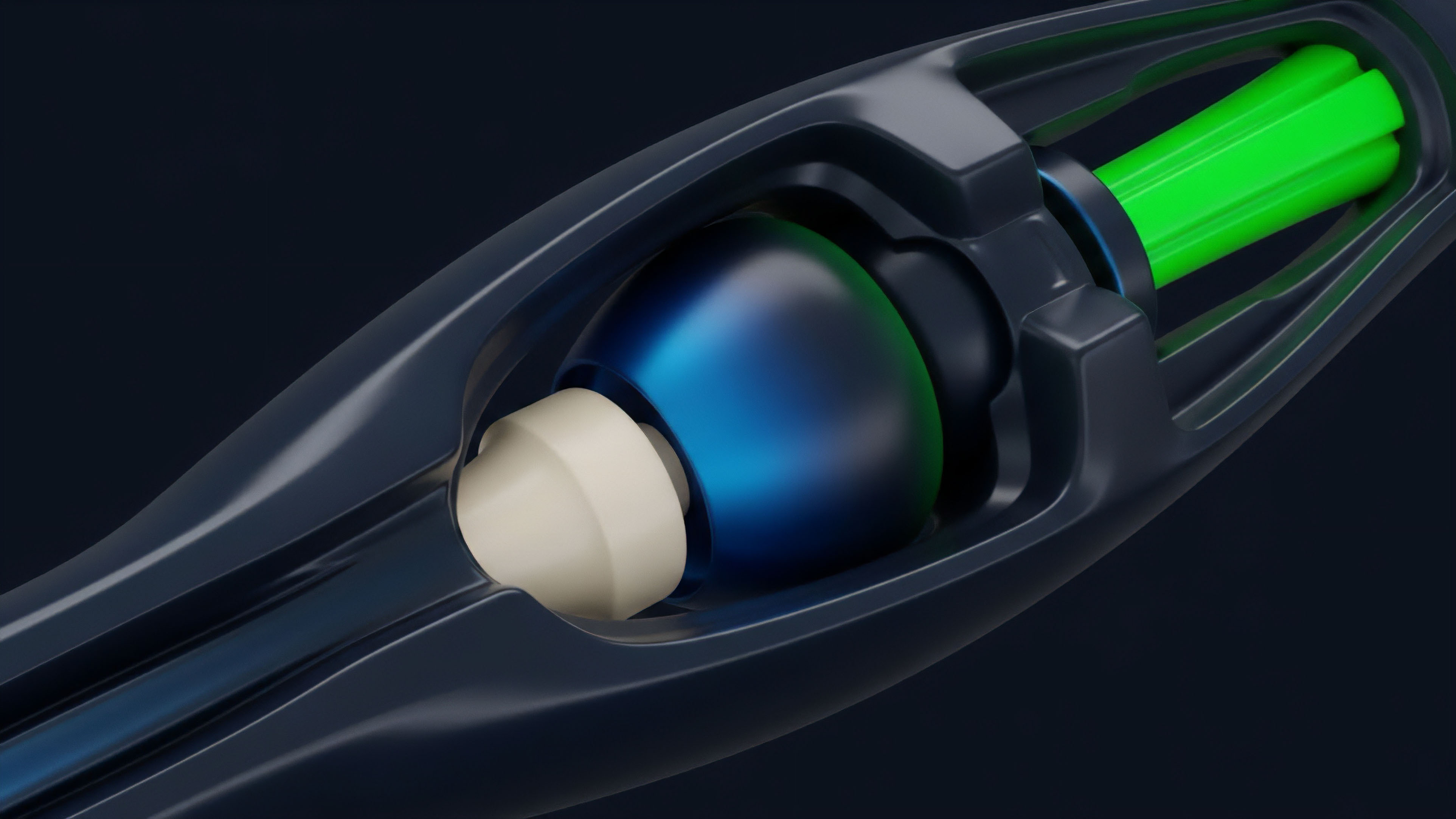

Protocols primarily choose between a “push” model and a “pull” model for data delivery. In the push model, validators actively submit new data to the smart contract at predetermined intervals or when price changes exceed a specific threshold. This model ensures data freshness but incurs higher gas costs for frequent updates.
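A push feed that uses both triggers described above (a maximum interval, or "heartbeat," and a deviation threshold) can be sketched as a single decision function. The 0.5% threshold, one-hour heartbeat, and readings are hypothetical values.

```python
def should_push(last_value: float, last_push_time: int, new_value: float, now: int,
                deviation_threshold: float = 0.005, heartbeat: int = 3600) -> bool:
    """Decide whether a push-model oracle submits a new on-chain update.

    Pushes when the value moved by at least `deviation_threshold` (relative)
    since the last push, or when `heartbeat` seconds have passed.
    """
    if now - last_push_time >= heartbeat:
        return True
    return abs(new_value - last_value) / last_value >= deviation_threshold


# Hypothetical off-chain readings as (unix_seconds, price); the last push was 2000.0 at t=0.
readings = [(60, 2003.0), (120, 2012.0), (180, 2013.0), (3900, 2014.0)]
last_value, last_time, pushes = 2000.0, 0, []
for now, value in readings:
    if should_push(last_value, last_time, value, now):
        pushes.append((now, value))  # each push is one on-chain transaction (gas cost)
        last_value, last_time = value, now
print(pushes)
```

Tightening the threshold or shortening the heartbeat improves freshness at the cost of more transactions, which is the gas trade-off the push model carries.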

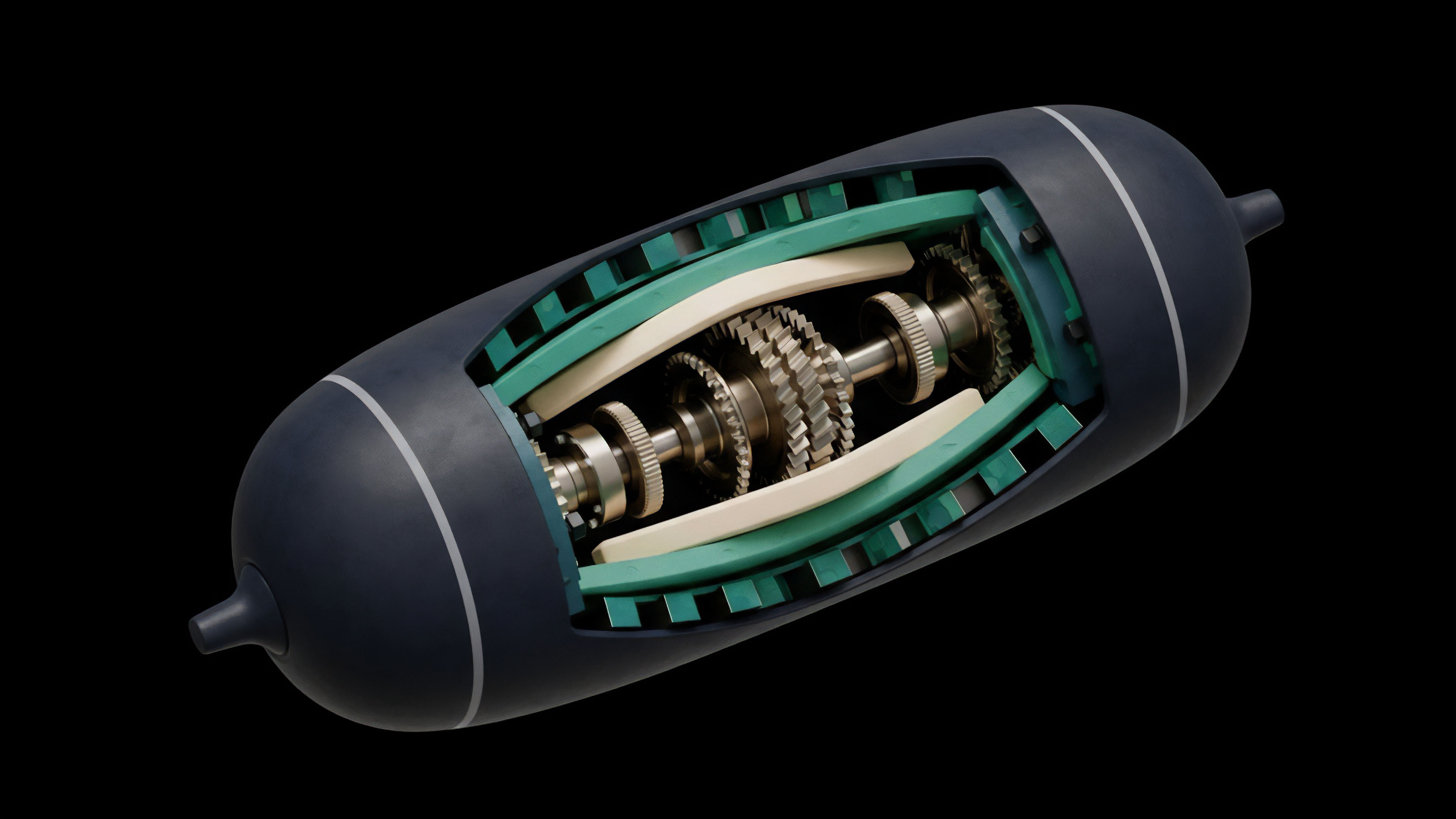

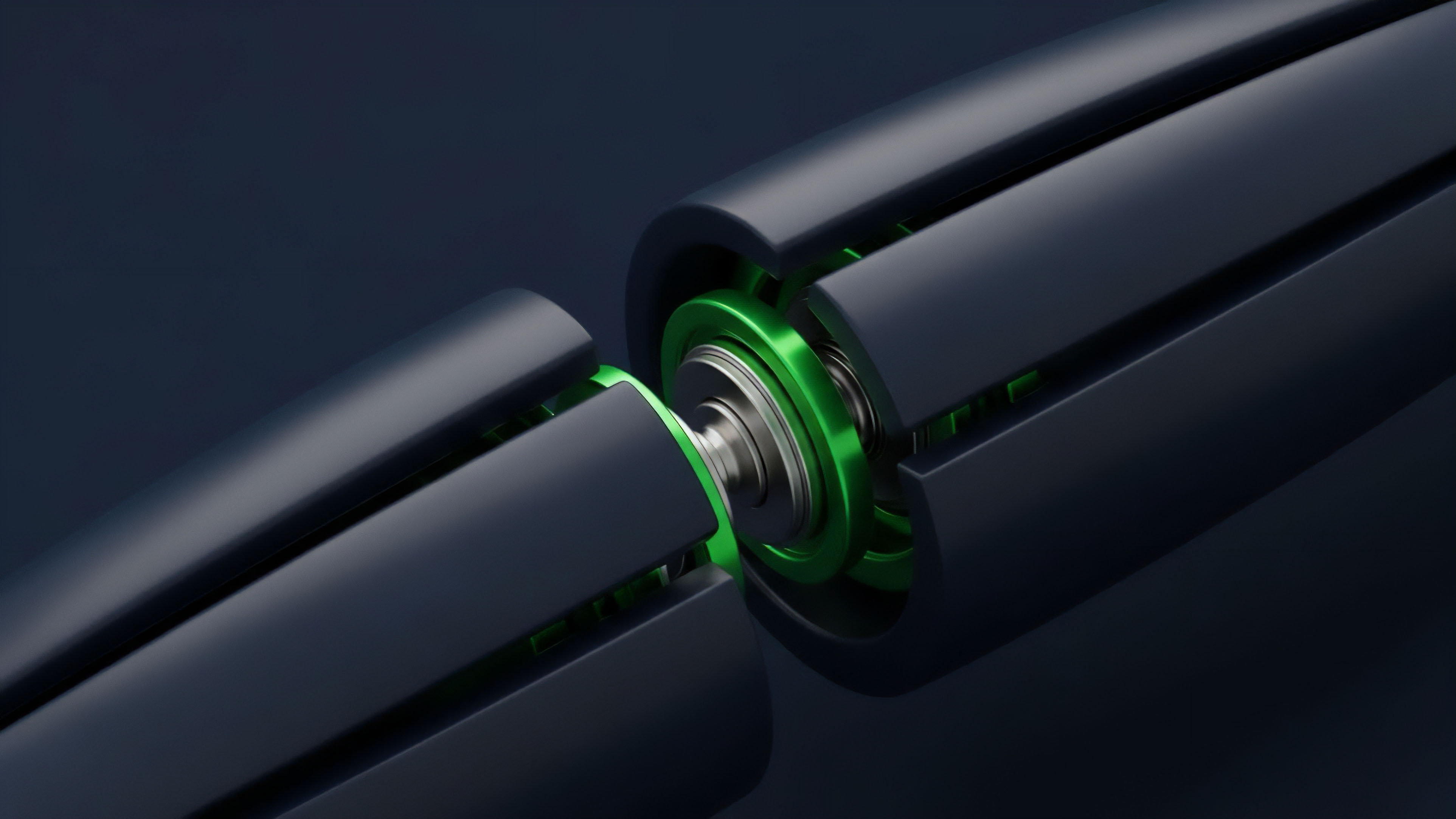

The pull model allows the smart contract to request data when needed, which reduces gas costs but introduces potential latency issues during periods of high demand. A third, more advanced approach involves a decentralized oracle network where data aggregation is performed off-chain, and only the final, validated result is submitted on-chain. This minimizes the computational burden on the main blockchain.
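For the third approach, the on-chain side mainly has to confirm that the submitted result was actually agreed off-chain. A minimal sketch of that consumer check, assuming a report is accepted only if it is recent and carries valid signatures from a quorum of known nodes. Real networks use public-key signatures; HMAC with shared secrets is a stand-in here, and the node keys, quorum, and age limit are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical node keys. Real oracle networks use public-key signatures
# (e.g. ECDSA) that anyone can verify; HMAC with shared secrets is only a
# stand-in here to keep the sketch within the standard library.
NODE_KEYS = {"node-a": b"key-a", "node-b": b"key-b", "node-c": b"key-c", "node-d": b"key-d"}


def encode_report(value: float, timestamp: int) -> bytes:
    """Canonical byte encoding of an aggregated report."""
    return json.dumps({"timestamp": timestamp, "value": value}, sort_keys=True).encode()


def sign(node: str, payload: bytes) -> str:
    """Stand-in for a node's signature over the report payload."""
    return hmac.new(NODE_KEYS[node], payload, hashlib.sha256).hexdigest()


def verify_report(value: float, timestamp: int, signatures: dict[str, str], now: int,
                  quorum: int = 3, max_age: int = 60) -> bool:
    """Accept a report only if it is fresh and signed by `quorum` distinct known nodes."""
    if now - timestamp > max_age:
        return False
    payload = encode_report(value, timestamp)
    valid = sum(
        1
        for node, sig in signatures.items()  # dict keys make each node count once
        if node in NODE_KEYS and hmac.compare_digest(sig, sign(node, payload))
    )
    return valid >= quorum


# Off-chain: three nodes sign the same aggregated value; on-chain: one cheap check.
payload = encode_report(2000.5, 1_000)
sigs = {node: sign(node, payload) for node in ("node-a", "node-b", "node-c")}
print(verify_report(2000.5, 1_000, sigs, now=1_030))
```

The aggregation work stays off-chain; the chain only checks freshness and a signature quorum, which is what keeps the on-chain computational burden small.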

| Validation Method | Description | Risk Profile | Use Case for Options |
|---|---|---|---|
| Time-Weighted Average Price (TWAP) | A data feed that averages prices over a specific time window. | Low risk of flash loan attacks; high risk of stale data during rapid market shifts. | Liquidations and collateral valuation for long-term options. |
| Decentralized Oracle Networks (DONs) | Multiple independent nodes source and aggregate data from various exchanges. | High cost to manipulate due to distributed sources; higher latency than single-source feeds. | Settlement and pricing for most derivatives. |
| Protocol-Native Validation | Data derived directly from the protocol’s internal order book or liquidity pools. | Low reliance on external feeds; high risk of internal manipulation or market divergence. | Margin calculation and internal risk management. |

Risk Mitigation Techniques

For options, data validation must address specific risks associated with time-sensitive contracts.

- Stale Data Circuit Breakers: Protocols implement mechanisms that halt liquidations or settlements if the data feed has not updated within a predefined time window. This prevents incorrect actions based on outdated prices during network congestion.

- Volatility Skew Validation: More sophisticated protocols attempt to validate the volatility skew (the difference in implied volatility between options of different strike prices). This requires a complex validation process to ensure accurate pricing across the entire options surface.

- Settlement Delay Mechanisms: By introducing a small delay between the data update and the settlement execution, protocols give arbitrageurs time to correct any temporary price discrepancies, thereby increasing the integrity of the final settlement price.
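The first and third techniques above can be sketched together. This assumes feed age is measured from when an update lands on-chain, and that settlement requires an update at or after expiry that has been visible for a minimum delay; the class names, thresholds, and timestamps are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PriceUpdate:
    price: float
    updated_at: int  # unix seconds when the update landed on-chain


class StaleFeedError(RuntimeError):
    """Raised by the circuit breaker when the feed is too old to act on."""


class SettlementNotReady(RuntimeError):
    """Raised while the post-update settlement delay has not yet elapsed."""


def checked_price(update: PriceUpdate, now: int, max_staleness: int = 300) -> float:
    """Stale-data circuit breaker: refuse prices older than `max_staleness` seconds."""
    age = now - update.updated_at
    if age > max_staleness:
        raise StaleFeedError(f"feed is {age}s old (limit {max_staleness}s)")
    return update.price


def settlement_price(update: PriceUpdate, expiry: int, now: int,
                     delay: int = 120, max_staleness: int = 900) -> float:
    """Settlement price with a delay between the data update and settlement.

    The update must land at or after expiry and must have been on-chain for
    at least `delay` seconds, leaving time for arbitrageurs to correct
    temporary discrepancies, while still passing the circuit breaker.
    """
    if update.updated_at < expiry:
        raise StaleFeedError("no price update at or after expiry yet")
    if now - update.updated_at < delay:
        raise SettlementNotReady("settlement delay has not elapsed")
    return checked_price(update, now, max_staleness)


# Hypothetical expiry at t=1000 with a post-expiry update at t=1005.
update = PriceUpdate(price=2010.0, updated_at=1005)
print(settlement_price(update, expiry=1000, now=1200))
```

Raising an error rather than returning a fallback price means a halted liquidation or settlement simply waits for a fresh update, instead of acting on an outdated value.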

Evolution

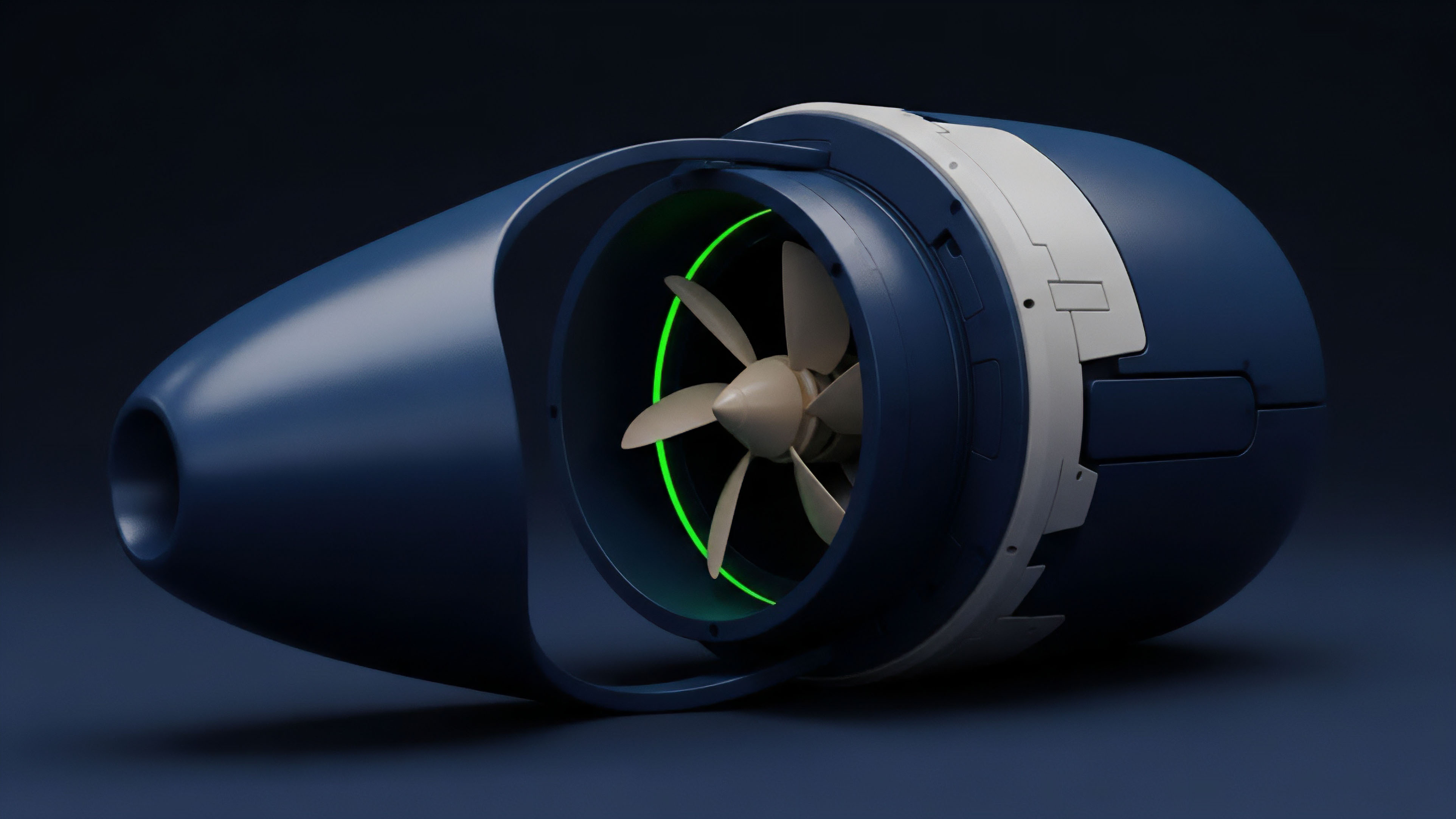

The evolution of on-chain data validation for options reflects a broader shift in decentralized finance toward greater capital efficiency and reduced reliance on external inputs. The progression moves from trusting external feeds to generating internal validation mechanisms.

Initially, validation focused on simply providing a spot price feed. This proved insufficient for derivatives, leading to the development of dedicated volatility oracles. These oracles attempt to provide a validated implied volatility feed by aggregating data from various sources.

The next major step involves a transition toward “zk-oracles,” where data validation leverages zero-knowledge proofs. Instead of revealing the raw data, a validator submits a proof that a specific data point was calculated correctly from a specific source. This enhances privacy and efficiency while maintaining cryptographic assurance.

A more profound architectural evolution involves protocols generating their own data feeds internally. By utilizing a protocol’s internal order book or liquidity pool data, validation becomes native to the protocol itself. This approach reduces external dependencies and minimizes the risk of data manipulation from outside sources. For example, some options protocols derive implied volatility directly from the price of their internal liquidity pools, creating a self-referential validation loop.

The future of on-chain data validation for derivatives is moving toward self-contained systems where data integrity is derived from internal market dynamics rather than external, potentially manipulated feeds.

This evolution suggests a future where data validation for derivatives becomes less about external data sourcing and more about the internal integrity of the protocol’s market microstructure. The validation mechanism effectively merges with the protocol’s risk engine, creating a more resilient and self-sufficient financial primitive.

Horizon

The next phase of on-chain data validation for options must address the fundamental disconnect between data integrity and latency for high-frequency trading.

The current model, where data must be sourced off-chain and then validated on-chain, creates an inherent latency that makes truly high-speed, decentralized options trading difficult. The current state of validation is sufficient for long-term options but struggles with intraday contracts. The novel conjecture here is that true on-chain data validation for high-frequency derivatives will require a shift from validating external data to validating internal calculations.

This means protocols will need to move beyond simple price feeds and develop mechanisms to validate the parameters used in complex financial models, such as volatility surfaces and interest rate curves.

The Shift to On-Chain Parameter Validation

The focus will move from validating a single price point to validating the integrity of a complex calculation. This requires new infrastructure that allows for verifiable computation.

- Decentralized Calculation Engines: Protocols will use decentralized computation networks to run complex calculations, such as implied volatility surface generation, and then submit the result along with a proof of correct execution.

- Cross-Chain Data Integration: As options markets fragment across different blockchains, validation will require robust cross-chain mechanisms. This involves securely transferring validated data from one chain to another, often through secure message-passing protocols.

Architecting a Resilient Data Layer

A key area for development is creating a resilient data layer that can withstand extreme market conditions. This involves designing protocols that can automatically adjust their risk parameters based on the reliability of the data feed. If a data feed becomes stale during high volatility, the protocol should automatically increase margin requirements or temporarily halt liquidations to prevent systemic failure.
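One way to sketch this, assuming feed age (seconds since the last update) is the reliability signal: margins scale up linearly as the feed ages, and liquidations pause beyond a hard limit. The function names, thresholds, and linear ramp are hypothetical choices, not a prescribed design.

```python
def risk_mode(feed_age: int, fresh_limit: int = 60, halt_limit: int = 600,
              max_multiplier: float = 2.0) -> tuple[float, bool]:
    """Map feed staleness to (margin_multiplier, liquidations_enabled).

    Fresh feeds use normal margins; as the feed ages, margins scale linearly
    up to `max_multiplier`; past `halt_limit`, liquidations are paused.
    """
    if feed_age <= fresh_limit:
        return 1.0, True
    if feed_age >= halt_limit:
        return max_multiplier, False
    frac = (feed_age - fresh_limit) / (halt_limit - fresh_limit)
    return 1.0 + frac * (max_multiplier - 1.0), True


def required_margin(base_margin: float, feed_age: int) -> float:
    """Margin required for a position, scaled by feed reliability."""
    multiplier, _ = risk_mode(feed_age)
    return base_margin * multiplier


for age in (30, 330, 900):
    print(age, risk_mode(age), required_margin(1000.0, age))
```

A gradual ramp avoids a cliff where every position's requirements jump at once, while the hard limit prevents liquidations from firing on a price that may no longer reflect the market.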

| Validation Challenge | Proposed Horizon Solution |
|---|---|
| Latency and Front-running Risk | Zero-knowledge proofs for data submission; on-chain calculation validation. |
| Volatility Surface Complexity | Decentralized computation networks for complex calculations; internal data generation. |
| Cross-Chain Fragmentation | Secure cross-chain message passing protocols for data transfer. |

The ultimate goal for on-chain data validation is to create a system where the validation mechanism itself is fully decentralized and integrated with the protocol’s risk engine, making it economically infeasible to manipulate. The future of decentralized options relies entirely on solving this challenge, moving from a system that reacts to external data to one that autonomously manages its own risk parameters.
