Essence

The core challenge for decentralized options markets lies in the inherent friction between the speed of market price discovery and the latency of blockchain settlement. Unlike spot positions, options are highly sensitive to changes in the underlying price, to time decay, and to volatility. The value of an option is not static; it requires continuous, real-time calculation based on underlying asset prices, interest rates, and implied volatility surfaces.

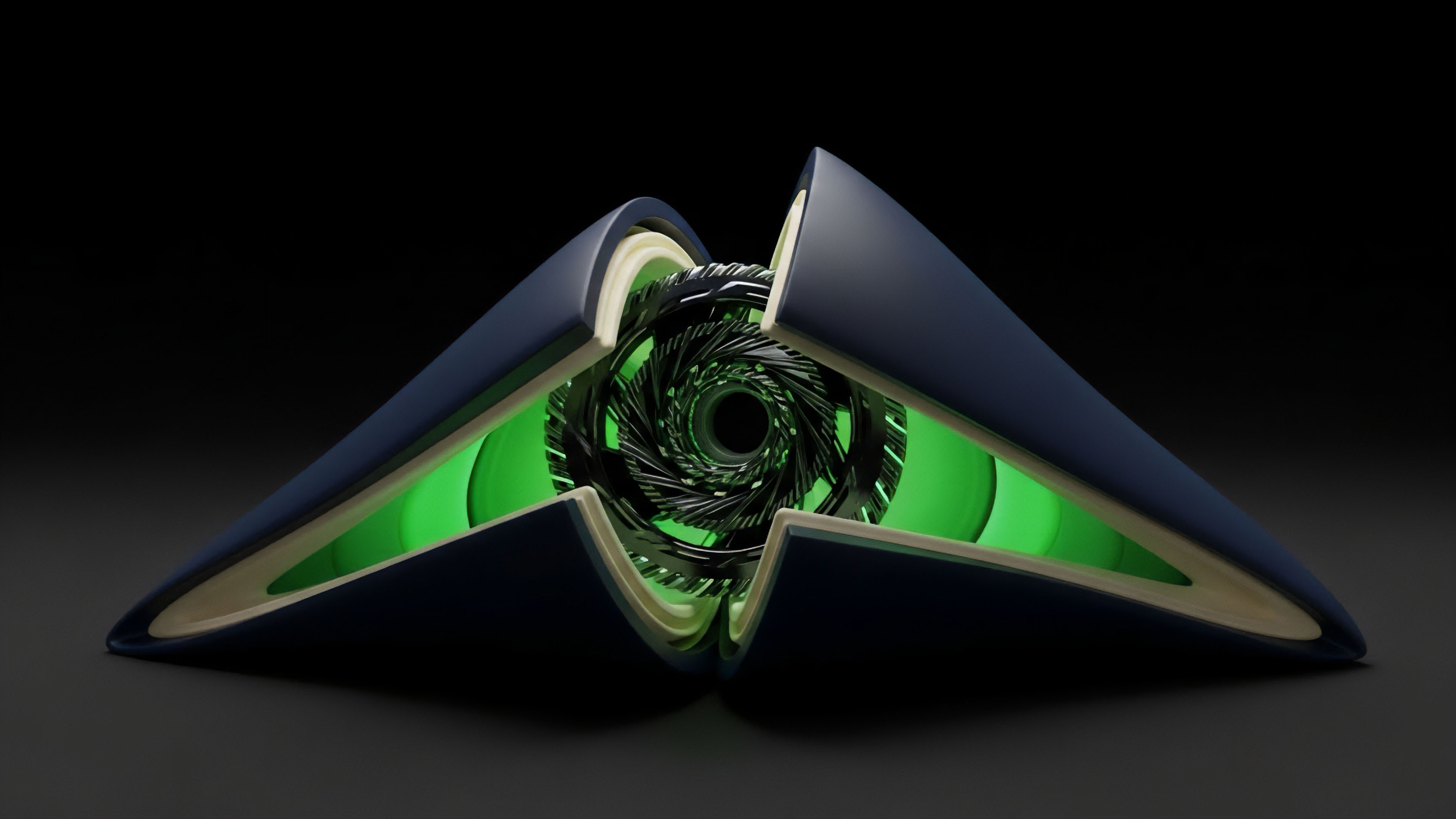

The Off-Chain Data Relay is the architectural solution to this problem, a mechanism that bridges the gap between high-frequency off-chain market data and the slow, expensive on-chain execution environment.

Without an efficient data relay, a decentralized options protocol cannot function safely. A smart contract cannot autonomously determine if a position is undercollateralized or if a liquidation threshold has been breached if it relies on stale or manipulated data. The relay system must be designed to deliver a single, reliable price point (or, more accurately, a complex data structure) at precisely the right moment for a specific financial operation.

This mechanism determines the protocol’s systemic integrity, defining the difference between a robust financial primitive and a high-risk liability. The design of this data feed is a first-principles challenge, a question of how to translate a fluid, chaotic market into a deterministic, verifiable input for a rigid, programmatic system.

Off-Chain Data Relay systems are the critical infrastructure for decentralized derivatives, providing the real-time price and volatility data required for accurate options pricing and risk management.

Origin

The origin of the off-chain data relay concept is directly tied to the limitations of early decentralized finance (DeFi) architecture. Early protocols, primarily focused on simple spot trading and lending, could often rely on time-weighted average prices (TWAPs) derived directly from on-chain transactions within their own liquidity pools. However, this model quickly broke down with the introduction of complex derivatives.
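For readers who have not seen one, an on-chain TWAP is usually built from a running accumulator rather than by storing every trade. The minimal sketch below follows the cumulative-price idea used by Uniswap v2; the class and method names (`CumulativePriceOracle`, `record_trade`, `observe`) are hypothetical and do not reproduce any contract's actual interface.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    timestamp: int           # seconds
    price_cumulative: float  # running sum of price * seconds the price was in force


class CumulativePriceOracle:
    """Hypothetical pool-level accumulator in the style of Uniswap v2."""

    def __init__(self, start_time: int, start_price: float) -> None:
        self.last_time = start_time
        self.last_price = start_price
        self.cumulative = 0.0

    def record_trade(self, timestamp: int, new_price: float) -> None:
        # Credit the previous price for the seconds it was in force,
        # then switch to the price left behind by this trade.
        self.cumulative += self.last_price * (timestamp - self.last_time)
        self.last_time = timestamp
        self.last_price = new_price

    def observe(self, timestamp: int) -> Observation:
        # Read the accumulator as of `timestamp` without mutating state.
        elapsed = timestamp - self.last_time
        return Observation(timestamp, self.cumulative + self.last_price * elapsed)


def twap(earlier: Observation, later: Observation) -> float:
    """Time-weighted average price between two observations."""
    elapsed = later.timestamp - earlier.timestamp
    return (later.price_cumulative - earlier.price_cumulative) / elapsed


oracle = CumulativePriceOracle(start_time=0, start_price=100.0)
start = oracle.observe(0)
oracle.record_trade(60, 110.0)             # price moves to 110 at t = 60
print(twap(start, oracle.observe(120)))    # 105.0
```

A price spike contributes only in proportion to how long it lasts: a 12-second spike inside a 30-minute window carries 1/150 of the weight. That is why TWAPs resist momentary manipulation, and also why they lag the market.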

Options require more than a simple average price; they demand an understanding of market volatility, a statistical measure of how widely an asset's returns fluctuate, which cannot be read off any single trade. The Black-Scholes model, for instance, requires a volatility input, which is not a simple on-chain metric.
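To make the dependence concrete, here is a minimal Black-Scholes price for a European call on a non-dividend-paying asset. It is an illustrative sketch, not any protocol's pricing code. `sigma` is the volatility a relay would have to supply; the function names and argument order are our own choices.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, rate: float, sigma: float, t: float) -> float:
    """Black-Scholes price of a European call, no dividends.

    sigma: annualized volatility (the input the relay must provide)
    rate:  continuously compounded risk-free rate
    t:     time to expiry in years
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma**2) * t) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


# Same spot, strike, rate and expiry; only the volatility input differs.
print(round(bs_call(100, 100, 0.05, 0.20, 1.0), 2))  # 10.45
print(round(bs_call(100, 100, 0.05, 0.30, 1.0), 2))
```

Nothing in a pool's trade history hands the contract `sigma` directly, which is the gap the relay fills.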

The need for a robust relay became urgent with the rise of flash loan attacks and oracle manipulation exploits. Attackers realized that if they could temporarily manipulate the price of an asset in a low-liquidity on-chain pool, they could force a lending protocol to liquidate positions at an incorrect price. The solution was to move data aggregation off-chain, where multiple sources could be queried and reconciled.
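The manipulation described above is easy to quantify for a constant-product pool (`x * y = k`). The sketch below assumes a hypothetical thin pool and ignores fees. It shows how a single large, flash-loan-funded buy moves the spot price that a naive protocol might read.

```python
from __future__ import annotations


def spot_price(reserve_x: float, reserve_y: float) -> float:
    """Price of token X quoted in token Y for a constant-product pool."""
    return reserve_y / reserve_x


def swap_y_for_x(reserve_x: float, reserve_y: float, amount_y_in: float) -> tuple[float, float]:
    """Return new (reserve_x, reserve_y) after buying X with Y; fees ignored."""
    k = reserve_x * reserve_y          # invariant x * y = k
    new_y = reserve_y + amount_y_in
    new_x = k / new_y
    return new_x, new_y


x, y = 1_000.0, 1_000.0                  # thin pool: price of X is 1.0
print(spot_price(x, y))                  # 1.0
x2, y2 = swap_y_for_x(x, y, 1_000.0)     # e.g. a flash-loan-funded buy
print(spot_price(x2, y2))                # 4.0
```

Doubling the quote-side reserve quadruples the quoted price. The attacker can unwind the swap in the same transaction and repay the loan, so the distortion exists only for the instant a vulnerable protocol reads it.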

This shift in design philosophy led to the development of dedicated oracle networks. These networks began to focus not on simply providing a price, but on providing a robust, aggregated, and economically secure data feed that was resistant to single-source manipulation. This transition from simple on-chain price feeds to complex, off-chain data aggregation was a necessary evolution for the entire DeFi space to support advanced financial products like options.

Theory

From a quantitative finance perspective, the Off-Chain Data Relay is a critical component in calculating the Greeks, particularly Vega and Theta, which are essential for managing options risk. The data relay’s core function is to provide the inputs for the pricing model, which then determines the fair value of the option. The accuracy of this input data directly impacts the calculation of risk parameters.

Vega, the sensitivity of an option’s price to changes in implied volatility, determines how strongly a faulty relay distorts prices: an error in the relayed volatility moves the computed option price in proportion to Vega. If the relay provides a stale or incorrect volatility reading, the entire risk calculation for a portfolio becomes compromised.
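A short sketch shows the link between Vega and relay quality. To first order, an implied-volatility error of Δσ shifts the computed price by about Vega × Δσ. The code uses standard Black-Scholes Greeks for a European call with no dividends. Function names are illustrative, and the 2-vol-point error is a hypothetical figure.

```python
import math


def _pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _d1_d2(spot, strike, rate, sigma, t):
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma**2) * t) / (sigma * sqrt_t)
    return d1, d1 - sigma * sqrt_t


def bs_call(spot, strike, rate, sigma, t):
    d1, d2 = _d1_d2(spot, strike, rate, sigma, t)
    return spot * _cdf(d1) - strike * math.exp(-rate * t) * _cdf(d2)


def call_vega(spot, strike, rate, sigma, t):
    """dPrice/dSigma, per 1.00 (i.e. 100 vol points) of volatility."""
    d1, _ = _d1_d2(spot, strike, rate, sigma, t)
    return spot * _pdf(d1) * math.sqrt(t)


def call_theta(spot, strike, rate, sigma, t):
    """Change in price per year as calendar time passes (negative for a long call)."""
    d1, d2 = _d1_d2(spot, strike, rate, sigma, t)
    decay = -spot * _pdf(d1) * sigma / (2.0 * math.sqrt(t))
    carry = -rate * strike * math.exp(-rate * t) * _cdf(d2)
    return decay + carry


args = (100.0, 100.0, 0.05, 0.20, 1.0)
vega = call_vega(*args)
# First-order pricing error if the relayed IV is 2 vol points too high.
print(round(vega * 0.02, 3))  # 0.75
```

For this at-the-money, one-year call, a 2-point volatility error misprices each contract by roughly 0.75 on a price of about 10.45, around 7%.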

The theoretical challenge of the relay is balancing data latency with security. A low-latency feed, which updates very frequently, is necessary for options trading to prevent arbitrage and accurately calculate risk. However, frequent updates increase gas costs on-chain and can increase the attack surface if the update mechanism is not robust.

Conversely, a high-latency feed, while cheaper and potentially more secure against flash loan attacks, renders the options protocol unusable for professional market makers who require real-time data to hedge their positions. The optimal design of the data relay is therefore a trade-off between speed, cost, and security, a trilemma that protocols attempt to solve through various aggregation and incentive mechanisms.
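One common way push-style oracles manage this trade-off is a two-part update rule. They write a new value when the price has moved by more than a deviation threshold, and in any case once a heartbeat interval has elapsed. Chainlink Data Feeds, for example, document both parameters. The sketch below is a generic illustration; the 0.5% and one-hour defaults are hypothetical.

```python
def should_push_update(
    last_price: float,
    last_update_time: int,
    candidate_price: float,
    now: int,
    deviation_threshold: float = 0.005,   # hypothetical: 0.5%
    heartbeat_seconds: int = 3600,        # hypothetical: 1 hour
) -> bool:
    """Decide whether a push oracle should pay gas to write a new value."""
    # Heartbeat: bound staleness even when the market is quiet.
    if now - last_update_time >= heartbeat_seconds:
        return True
    # Deviation: write early only when the price has moved enough to matter.
    deviation = abs(candidate_price - last_price) / last_price
    return deviation >= deviation_threshold


print(should_push_update(100.0, 0, 100.3, 60))    # False: 0.3% move, 60 s old
print(should_push_update(100.0, 0, 100.6, 60))    # True: 0.6% move
print(should_push_update(100.0, 0, 100.0, 3600))  # True: heartbeat reached
```

Tightening either parameter lowers latency and raises gas spend. Loosening them does the reverse, which is the trilemma above expressed as two numbers.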

The relay architecture must account for the specific data requirements of options pricing models. This involves more than just a single spot price. The protocol requires a full volatility surface, which plots implied volatility across different strike prices and expiration dates.
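A volatility surface can be represented as a grid of implied volatilities indexed by expiry and strike, with interpolation for points between quotes. The sketch below assumes such a grid and uses plain bilinear interpolation for clarity. The `VolSurface` layout is hypothetical. More careful schemes interpolate total variance or fit a parametric model instead.

```python
from __future__ import annotations

from bisect import bisect_right
from dataclasses import dataclass


@dataclass
class VolSurface:
    """Hypothetical layout: implied volatility on a strike x expiry grid."""
    strikes: list[float]        # ascending
    expiries: list[float]       # ascending, in years
    vols: list[list[float]]     # vols[i][j] = IV at expiries[i], strikes[j]

    @staticmethod
    def _bracket(grid: list[float], x: float) -> int:
        # Index i such that grid[i] <= x <= grid[i + 1]; needs >= 2 grid points.
        if not grid[0] <= x <= grid[-1]:
            raise ValueError(f"{x} is outside the quoted grid")
        return min(bisect_right(grid, x) - 1, len(grid) - 2)

    def implied_vol(self, strike: float, expiry: float) -> float:
        """Bilinear interpolation in (strike, expiry)."""
        i = self._bracket(self.expiries, expiry)
        j = self._bracket(self.strikes, strike)
        t0, t1 = self.expiries[i], self.expiries[i + 1]
        k0, k1 = self.strikes[j], self.strikes[j + 1]
        wt = (expiry - t0) / (t1 - t0)
        wk = (strike - k0) / (k1 - k0)
        near = (1 - wk) * self.vols[i][j] + wk * self.vols[i][j + 1]
        far = (1 - wk) * self.vols[i + 1][j] + wk * self.vols[i + 1][j + 1]
        return (1 - wt) * near + wt * far


surface = VolSurface(
    strikes=[90.0, 100.0, 110.0],
    expiries=[0.25, 0.50],
    vols=[[0.30, 0.25, 0.28],
          [0.28, 0.24, 0.26]],
)
print(surface.implied_vol(95.0, 0.375))
```

Even this toy surface holds six numbers where a spot feed holds one. That gap is what makes relaying volatility data more expensive than relaying prices.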

This data is complex and changes rapidly. A robust relay must aggregate it from multiple off-chain sources, apply a median or time-weighted average calculation, and then transmit the resulting values to the smart contract. The specific aggregation methodology directly impacts the reliability of the pricing model.
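The aggregation step can be sketched as a per-point median over fresh reports. The version below assumes each data point arrives as independent timestamped reports from several providers. It drops stale reports, requires a minimum number of sources, and takes the median of the rest. `Report`, `max_age`, and `min_sources` are hypothetical names.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import median


@dataclass
class Report:
    source: str
    value: float       # e.g. one implied-volatility point
    timestamp: int     # seconds


def aggregate_point(reports: list[Report], now: int, max_age: int, min_sources: int) -> float:
    """Median of fresh reports for a single data point."""
    fresh = [r.value for r in reports if now - r.timestamp <= max_age]
    if len(fresh) < min_sources:
        raise ValueError(f"only {len(fresh)} fresh reports, need {min_sources}")
    return median(fresh)


def aggregate_surface(
    reports_by_point: dict[tuple[float, float], list[Report]],
    now: int, max_age: int, min_sources: int,
) -> dict[tuple[float, float], float]:
    """Aggregate each (strike, expiry) grid point independently."""
    return {
        point: aggregate_point(reps, now, max_age, min_sources)
        for point, reps in reports_by_point.items()
    }


reports = {
    (100.0, 0.25): [
        Report("a", 0.25, 95), Report("b", 0.26, 98),
        Report("c", 0.24, 99), Report("d", 5.00, 97),   # outlier
        Report("e", 9.00, 10),                           # stale, dropped
    ],
}
print(aggregate_surface(reports, now=100, max_age=30, min_sources=3))
```

The outlier at 5.00 barely moves the result, and the stale report never enters it. A mean would have been dragged above 1.0 by the single bad source.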

| Data Relay Architecture | Description | Impact on Options Protocol |
|---|---|---|
| Single Source Oracle | A single data provider or API feeds data directly to the smart contract. | High risk of manipulation; low cost; suitable only for low-value, non-critical data. |
| Aggregated Multi-Source Oracle | Data from multiple providers is aggregated off-chain and a median value is sent on-chain. | Reduced manipulation risk; higher cost; standard for options and derivatives. |
| Time-Weighted Average Price (TWAP) | Price calculated as a moving average over a period of time from on-chain transactions. | High latency; unsuitable for real-time options pricing; useful for long-term collateral checks. |

Approach

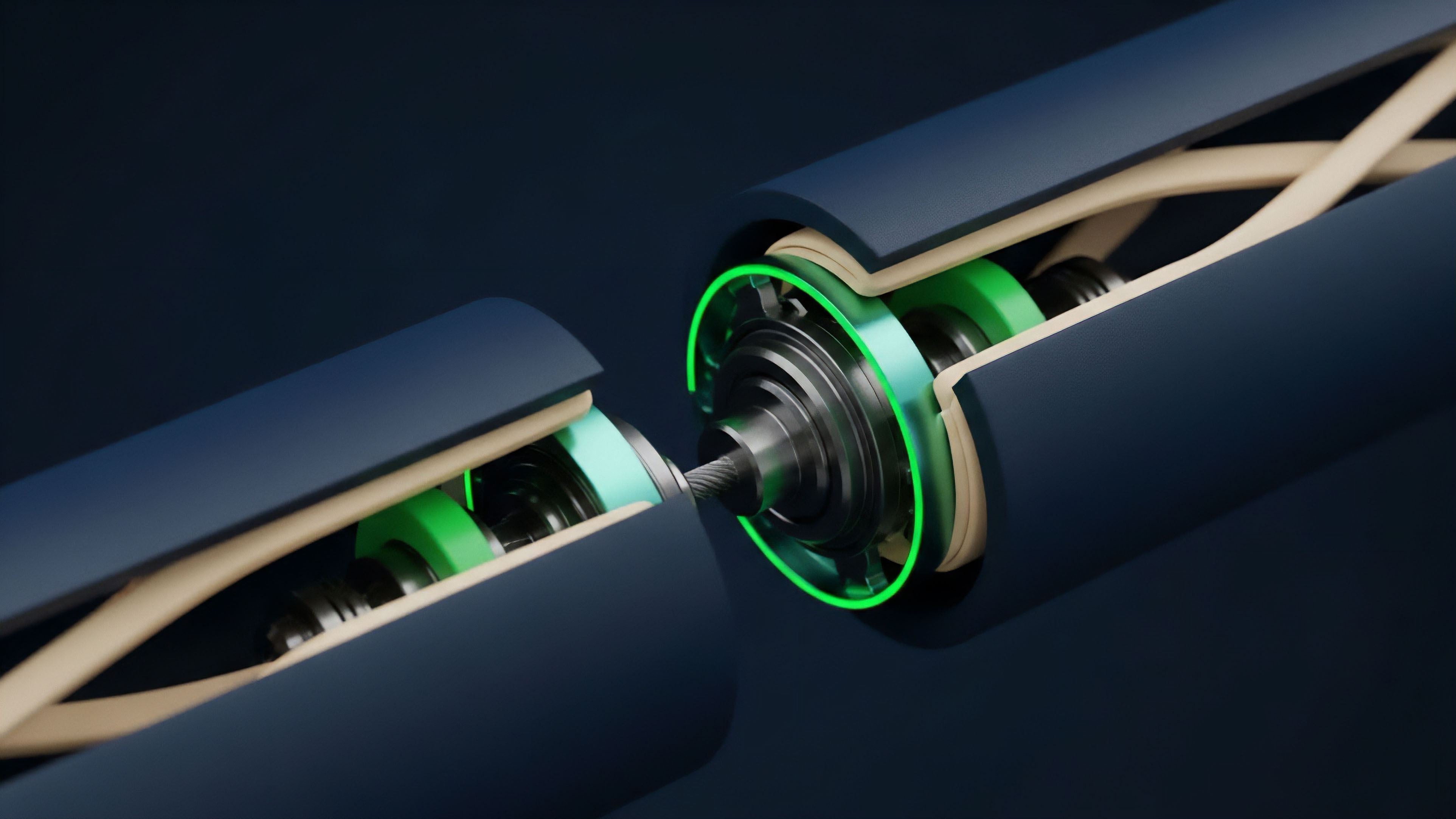

The practical implementation of an off-chain data relay for options protocols centers on a few key design choices. The most common approach utilizes a decentralized oracle network. These networks function by incentivizing independent data providers to submit data to a common aggregation layer.

The smart contract then queries this aggregation layer, receiving a consensus-based price rather than a single-source input. This approach mitigates the risk of a single point of failure and makes data manipulation economically infeasible, because an attacker would need to compromise a majority of the independent data providers.

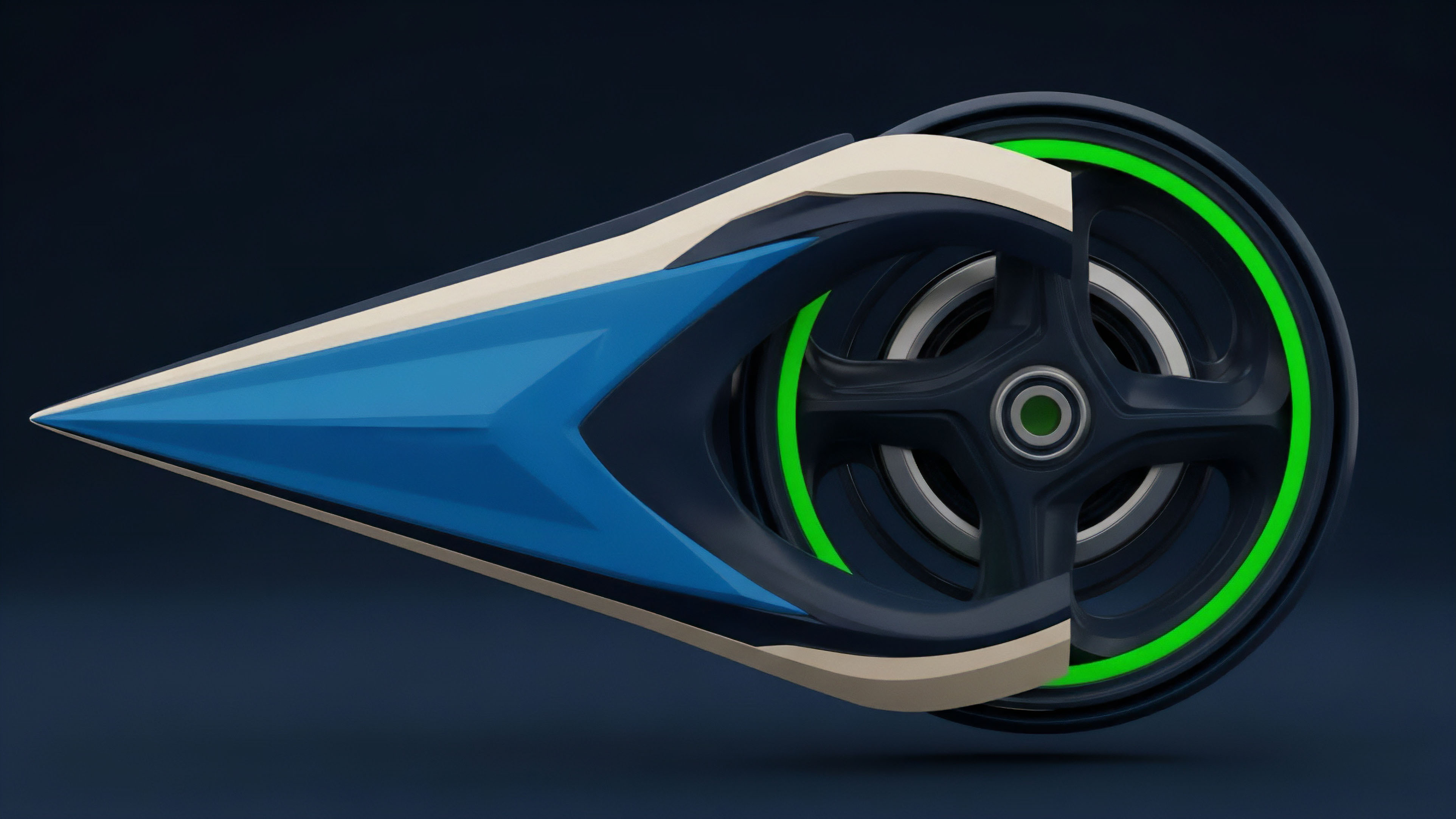

The second major approach involves specific data structures for options pricing. A standard spot price feed is insufficient. A truly sophisticated options protocol requires a continuous feed of implied volatility.

This is where the relay must deliver more than just a number; it must deliver a calculated surface. This involves aggregating data from various sources, calculating the implied volatility from a set of options prices, and then relaying that complex data structure on-chain. The process requires significant off-chain computation before anything is committed to the blockchain.
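The step "calculating the implied volatility from a set of options prices" means inverting the pricing model for each quote. The sketch below uses bisection on Black-Scholes. This is a deliberately simple root-finder; production code often prefers Newton's method or specialized solvers. The quotes and bracket bounds are illustrative assumptions.

```python
import math


def _cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, strike, rate, sigma, t):
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma**2) * t) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    return spot * _cdf(d1) - strike * math.exp(-rate * t) * _cdf(d2)


def implied_vol(price, spot, strike, rate, t, lo=1e-4, hi=5.0, tol=1e-10, max_iter=200):
    """Invert Black-Scholes for sigma by bisection.

    Valid because a call's price is strictly increasing in sigma.
    """
    if not bs_call(spot, strike, rate, lo, t) <= price <= bs_call(spot, strike, rate, hi, t):
        raise ValueError("price is outside the volatility bracket")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, rate, mid, t) < price:
            lo = mid      # model price too low: volatility must be higher
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# (strike, expiry) -> observed call price; values are illustrative.
spot, rate = 100.0, 0.05
quotes = {(100.0, 1.0): 10.4506, (110.0, 0.5): bs_call(spot, 110.0, rate, 0.35, 0.5)}
surface = {key: implied_vol(px, spot, key[0], rate, key[1]) for key, px in quotes.items()}
print(surface)
```

Running the solver over every quoted strike and expiry yields the raw points of a surface. Those points are what the relay would then aggregate and publish.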

The challenge lies in ensuring the integrity of this off-chain calculation. Zero-knowledge proofs or similar verification mechanisms can be used to show that the data has not been tampered with before it reaches the smart contract.
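Zero-knowledge proofs are beyond a short example. The simpler property, that a payload was not altered after its producer authenticated it, can be sketched with an HMAC from the Python standard library. This is a swapped-in technique. HMAC uses a shared secret, whereas on-chain verification would normally rely on public-key signatures. Neither HMAC nor a signature proves that the surface was computed correctly; only a validity proof does that. The key and payload fields are hypothetical.

```python
import hashlib
import hmac
import json


def canonical_bytes(payload: dict) -> bytes:
    # Deterministic serialization so producer and verifier hash identical bytes.
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()


def tag_report(payload: dict, key: bytes) -> str:
    return hmac.new(key, canonical_bytes(payload), hashlib.sha256).hexdigest()


def verify_report(payload: dict, tag: str, key: bytes) -> bool:
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(tag_report(payload, key), tag)


key = b"hypothetical-shared-secret"
report = {"strike": 100.0, "expiry": 0.25, "iv": 0.25, "timestamp": 1_700_000_000}
tag = tag_report(report, key)
print(verify_report(report, tag, key))                    # True
print(verify_report({**report, "iv": 0.30}, tag, key))    # False
```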

Effective off-chain data relay requires balancing economic incentives for data providers against the security requirements of the underlying options smart contracts.

A third approach, less common but gaining traction, uses a “request-response” model. Instead of a continuous data feed, the smart contract requests a price only when a specific action, such as a liquidation or settlement, is required. This model significantly reduces gas costs but introduces latency risk: the price may be delayed or already stale by the time the action executes.

The protocol must ensure that the price received is current and accurate at the time of execution, a problem often solved by using a time-locked data feed or by ensuring the data request is bundled with a time-sensitive transaction.
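The bundling idea can be sketched as a store that accepts a provider-attested price inside the same call that consumes it. The store rejects updates that are not strictly newer, which prevents replays, and refuses to act on a price older than a maximum age. `OnDemandPriceStore` and its methods are hypothetical; signature checks on the update are omitted.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class PricePoint:
    value: float
    timestamp: int  # seconds, as attested by the data provider


class OnDemandPriceStore:
    """Hypothetical request-response store: update and use in one call."""

    def __init__(self, max_age: int) -> None:
        self.max_age = max_age
        self.latest: PricePoint | None = None

    def submit(self, update: PricePoint) -> None:
        # Reject anything not strictly newer, so an old update cannot be replayed.
        if self.latest is not None and update.timestamp <= self.latest.timestamp:
            raise ValueError("update is not newer than the stored price")
        self.latest = update

    def price_for_execution(self, now: int) -> float:
        if self.latest is None:
            raise LookupError("no price has been submitted")
        if now - self.latest.timestamp > self.max_age:
            raise ValueError("stored price is too old for this action")
        return self.latest.value

    def submit_and_execute(self, update: PricePoint, now: int) -> float:
        # Bundling: the fresh update travels inside the transaction that needs it.
        self.submit(update)
        return self.price_for_execution(now)


store = OnDemandPriceStore(max_age=10)
print(store.submit_and_execute(PricePoint(100.0, 1_000), now=1_005))  # 100.0
```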

Evolution

The evolution of the Off-Chain Data Relay has mirrored the increasing complexity of DeFi itself. Early systems relied on a simple single-source feed, which proved disastrous for options protocols. The shift to decentralized oracle networks was the first major step.

However, these networks faced new challenges, specifically data latency and cost. A data feed that updates every 30 minutes, while secure, is useless for high-frequency options trading. This led to the development of “push” versus “pull” models.

In a push model, data providers continuously update the feed, regardless of whether a contract needs it. In a pull model, contracts request data on demand, paying for the update only when necessary.

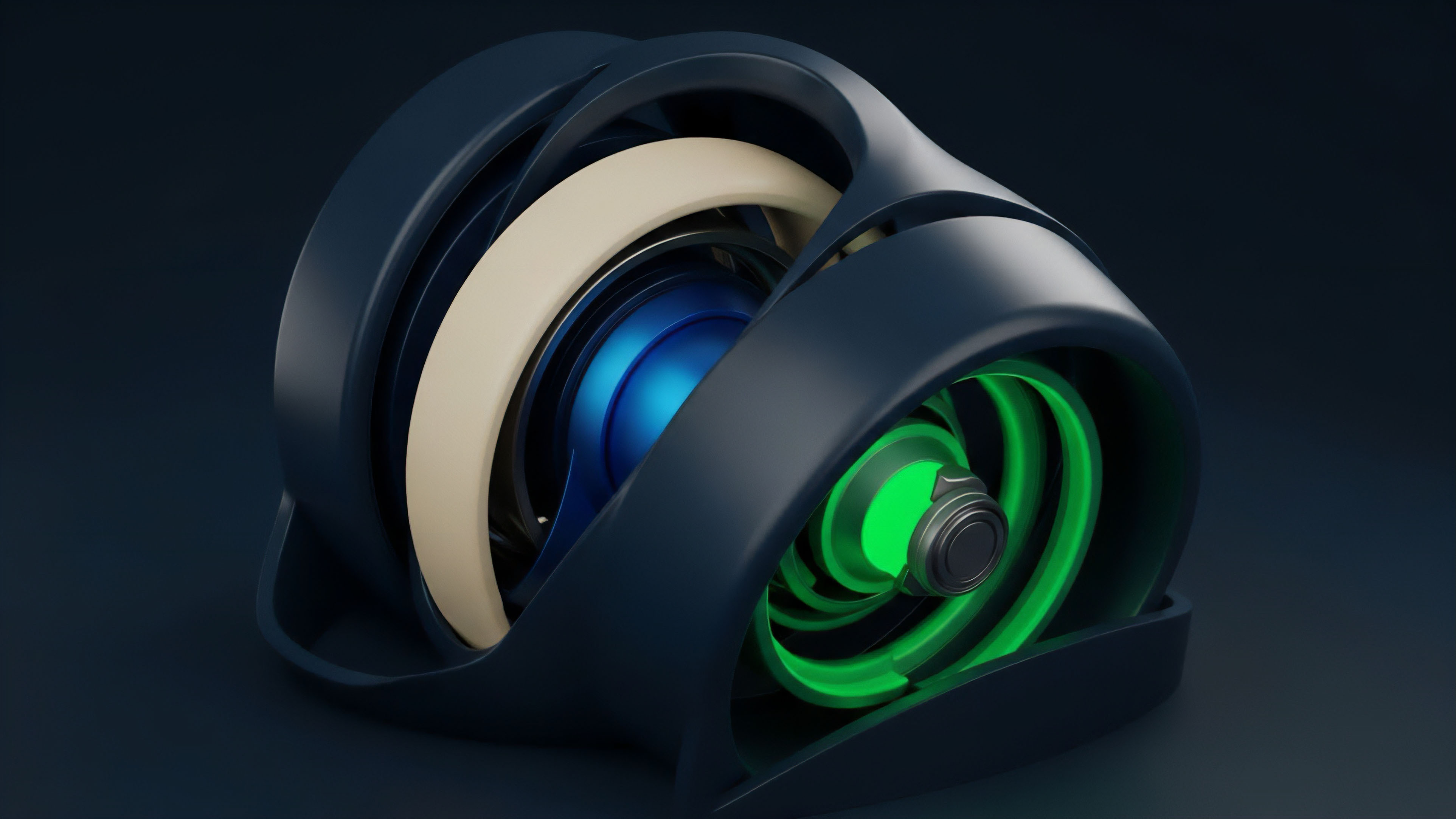

The current state of the art involves a blend of these approaches, often utilizing a multi-layered architecture. The data relay now functions as a network of networks, where different types of data are handled by specialized sub-systems. For instance, a protocol might use a high-frequency, low-cost off-chain data relay for pre-trade risk checks and a separate, more robust on-chain relay for final settlement.
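One way to wire two such layers together is sketched below. The fast feed serves pre-trade checks, and the robust feed serves settlement. Both paths halt when the feeds disagree by more than a tolerance. The divergence guard and the 2% band are hypothetical design choices, not features attributed to any specific protocol.

```python
from enum import Enum


class Operation(Enum):
    PRE_TRADE_CHECK = "pre_trade_check"
    SETTLEMENT = "settlement"


class FeedDivergenceError(RuntimeError):
    """Raised when the fast and robust feeds disagree too much to trust either."""


def select_price(op: Operation, fast_price: float, robust_price: float,
                 max_divergence: float = 0.02) -> float:  # hypothetical 2% band
    divergence = abs(fast_price - robust_price) / robust_price
    if divergence > max_divergence:
        # Halt rather than act on a possibly manipulated or stale value.
        raise FeedDivergenceError(f"feeds diverge by {divergence:.2%}")
    # Cheap, frequent feed for screening; slower, more robust feed for final value.
    return fast_price if op is Operation.PRE_TRADE_CHECK else robust_price


print(select_price(Operation.PRE_TRADE_CHECK, 101.0, 100.0))  # 101.0
print(select_price(Operation.SETTLEMENT, 101.0, 100.0))       # 100.0
```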

The most significant development in this space is the shift from simple price feeds to a more comprehensive data structure. The relay now provides not only the spot price but also the implied volatility surface, a critical input for options pricing models. This evolution is driven by the demand for more sophisticated financial products and the need for greater capital efficiency.

The development of off-chain data relays has shifted from simple price feeds to complex, multi-layered systems that deliver aggregated volatility surfaces for accurate options pricing.

The challenge remains in mitigating specific attack vectors. A primary risk is the “front-running” of oracle updates. An attacker observes a data update in the mempool and executes a trade before the new price is finalized on-chain, profiting from the stale data.

To counteract this, protocols have implemented various techniques, including using time-locked data updates and ensuring that a specific data update cannot be used for a trade within a certain block window. The systemic risk of contagion from a single oracle failure is also a significant concern. If a major oracle network fails, it could trigger cascading liquidations across multiple options protocols, creating a systemic failure event.
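A block-window rule can be read in several ways. One reading is sketched below as a commit-then-settle scheme, offered as a hypothetical illustration. A trade committed at block `b` settles at the first oracle price recorded at or after block `b + delay_blocks`. An attacker who sees a pending update therefore cannot lock in the older price.

```python
from __future__ import annotations

from bisect import bisect_left


class DelayedSettlementFeed:
    """Hypothetical commit-then-settle feed.

    A trade committed at block b settles at the first oracle price recorded
    at block >= b + delay_blocks, so a pending update visible in the mempool
    at commit time cannot be traded against at the older price.
    """

    def __init__(self, delay_blocks: int) -> None:
        self.delay_blocks = delay_blocks
        self._blocks: list[int] = []
        self._prices: list[float] = []

    def record(self, block: int, price: float) -> None:
        if self._blocks and block <= self._blocks[-1]:
            raise ValueError("updates must arrive in increasing block order")
        self._blocks.append(block)
        self._prices.append(price)

    def settlement_price(self, commit_block: int) -> float:
        earliest = commit_block + self.delay_blocks
        idx = bisect_left(self._blocks, earliest)
        if idx == len(self._blocks):
            raise LookupError("no eligible price yet; settle in a later block")
        return self._prices[idx]


feed = DelayedSettlementFeed(delay_blocks=1)
feed.record(100, 50.0)   # current on-chain price
# An attacker sees an update to 55.0 pending and commits a trade at block 100.
commit_block = 100
feed.record(101, 55.0)   # the update lands
print(feed.settlement_price(commit_block))  # 55.0: no stale-price edge
```

The cost of this design is execution uncertainty for honest traders, who also do not know their fill price when they commit.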

- Latency Exploitation: Attackers can front-run oracle updates, exploiting the time delay between when data is available off-chain and when it is finalized on-chain.

- Data Source Manipulation: An attacker can compromise one or more data providers in an aggregated feed, skewing the median price and causing incorrect liquidations.

- Economic Incentive Misalignment: If data providers are not adequately incentivized or if the cost of manipulation is less than the potential profit, the oracle system becomes vulnerable to attack.
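The last two risks can be combined into a rough security check for a median-based feed: compare what it costs to control the median with what an attacker could gain. The sketch assumes that corrupting a reporter costs exactly the stake it would lose to slashing, and it ignores bribery and reputation. The stake figures are hypothetical.

```python
from __future__ import annotations


def reporters_needed_to_control_median(n: int) -> int:
    # Odd n: a strict majority (n // 2 + 1) decides the middle value.
    # Even n: statistics.median averages the two middle values, so
    # n // 2 colluders can already drag the result arbitrarily far.
    return (n + 1) // 2


def cost_to_control_median(stakes: list[float]) -> float:
    """Cheapest total stake an attacker must forfeit to set the median.

    Assumes the cost of corrupting a reporter equals the stake it would
    lose to slashing; bribery costs and reputation are ignored.
    """
    needed = reporters_needed_to_control_median(len(stakes))
    return sum(sorted(stakes)[:needed])


def is_economically_secure(stakes: list[float], attack_profit: float) -> bool:
    return cost_to_control_median(stakes) > attack_profit


stakes = [10.0, 20.0, 30.0, 40.0, 50.0]   # hypothetical units of stake
print(cost_to_control_median(stakes))           # 60.0
print(is_economically_secure(stakes, 75.0))     # False
```

The attacker targets the cheapest reporters, so security depends on the smallest stakes rather than the total. A feed whose options markets can yield more than 60 units from a bad print is, under these assumptions, underwritten too thinly.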

Horizon

Looking forward, the future of the Off-Chain Data Relay is moving toward greater decentralization and verifiability. The current reliance on a few major oracle networks introduces a single point of failure, even if the data itself is aggregated from multiple sources. The next generation of relays will likely involve a fully decentralized network where data provision is open and permissionless, secured by economic incentives and cryptographic verification.

A significant area of development is the integration of zero-knowledge proofs (ZKPs) for data verification. A ZKP allows a data provider to prove that they have correctly calculated the implied volatility surface from off-chain data without revealing the raw data itself. This increases both privacy and security, as the smart contract can verify the integrity of the data without needing to trust the data provider completely.

This approach reduces the reliance on economic incentives alone and moves toward a trustless verification model.

Another area of focus is the development of specific data feeds for complex financial instruments. The current general-purpose data feeds are insufficient for sophisticated options protocols. We will see the rise of specialized relays that provide specific data sets, such as real-time interest rate curves, volatility surfaces, and correlation matrices.

This specialization will allow options protocols to move beyond simple, single-asset options to more complex products like exotic options and volatility derivatives. The challenge remains in standardizing these complex data formats and ensuring interoperability between different protocols.

| Current Model (Push/Pull) | Future Model (ZK-Verification) |
|---|---|
| Data provided by a set of permissioned nodes. | Data provided by a permissionless network of verifiers. |
| Security based on economic incentives (slashing/staking). | Security based on cryptographic proofs (ZKPs). |
| Data latency dependent on block time and gas cost. | Data latency reduced through off-chain computation and on-chain verification. |

The regulatory horizon also impacts the future of data relays. As regulators focus on market manipulation and data integrity in decentralized finance, the need for auditable and verifiable data sources will become paramount. Protocols that can prove the integrity of their data feeds through cryptographic means will have a significant advantage in navigating future regulatory frameworks.

The Off-Chain Data Relay is evolving from a technical necessity into a core component of market integrity and regulatory compliance for decentralized financial systems.
