Essence

Network Validation Mechanisms function as the foundational integrity protocols of distributed ledgers. They establish the truth of state transitions without reliance on centralized intermediaries. By enforcing consensus through cryptographic and economic constraints, these systems ensure that only valid transactions update the shared ledger.

Network validation mechanisms define the mathematical and economic rules that permit participants to reach agreement on the state of a decentralized ledger.

These systems convert computational energy or capital commitment into a verifiable record of activity. Their primary purpose is to maintain safety (honest nodes never accept conflicting versions of the ledger) and liveness (the ledger keeps accepting valid transactions) in environments where participants have divergent incentives. Proof of Stake and Proof of Work are the two dominant architectures, each imposing different trade-offs among security, finality, and decentralization.

Origin

The genesis of Network Validation Mechanisms traces back to the Byzantine Generals Problem, a classic challenge in distributed computing regarding how to achieve consensus in the presence of malicious actors.

Early iterations used Proof of Work to attach a cost to Sybil attacks: creating many fake identities confers no advantage when influence depends on computation performed, so the expenditure of electricity served as a proxy for trust.

- Proof of Work requires participants to solve complex cryptographic puzzles, tethering security to physical hardware and energy consumption.

- Proof of Stake shifts the security burden to capital, where validator selection correlates with the amount of native assets locked within the protocol.

- Delegated Proof of Stake introduces representative governance to increase transaction throughput at the expense of absolute decentralization.
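The first two mechanisms in the list above can be sketched in a few lines. This is a minimal illustration, not any production protocol: the function names (`mine`, `verify`, `select_validator`), the 8-byte nonce encoding, and the use of a hashed seed as the source of randomness are all assumptions made for the example.

```python
import hashlib


def _digest_value(block_data: bytes, nonce: int) -> int:
    """Interpret SHA-256(block_data || nonce) as a 256-bit integer."""
    digest = hashlib.sha256(block_data + nonce.to_bytes(8, "big")).digest()
    return int.from_bytes(digest, "big")


def mine(block_data: bytes, difficulty_bits: int) -> int:
    """Proof of Work: search for the first nonce whose hash is below the target.

    Each extra difficulty bit doubles the expected number of attempts.
    """
    target = 1 << (256 - difficulty_bits)
    nonce = 0
    while _digest_value(block_data, nonce) >= target:
        nonce += 1
    return nonce


def verify(block_data: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Checking a solution costs a single hash, however long the search took."""
    return _digest_value(block_data, nonce) < (1 << (256 - difficulty_bits))


def select_validator(stakes: dict[str, int], seed: bytes) -> str:
    """Proof of Stake: pick a validator with probability proportional to stake.

    The seed stands in for whatever shared randomness a protocol provides.
    """
    total = sum(stakes.values())
    if total <= 0:
        raise ValueError("total stake must be positive")
    ticket = int.from_bytes(hashlib.sha256(seed).digest(), "big") % total
    cumulative = 0
    for name in sorted(stakes):  # fixed order so every node picks the same winner
        cumulative += stakes[name]
        if ticket < cumulative:
            return name
    raise AssertionError("unreachable: ticket is always below total stake")
```

The asymmetry between `mine` and `verify` is the point of the puzzle: producing a solution is expensive, while checking one is cheap. In `select_validator`, a participant with zero stake can never be chosen, which is how capital replaces energy as the scarce resource.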

These designs evolved as the limitations of early validation became apparent, specifically regarding energy efficiency and throughput. The shift toward stake-based models reflects a maturing understanding of how to align participant incentives with protocol longevity through slashing conditions and rewards.

Theory

The mechanics of validation rely on game-theoretic frameworks in which rational actors maximize their utility within defined constraints. A Validator must adhere to protocol rules to receive rewards, while provable violations, such as signing two conflicting blocks, cost the validator part or all of its staked assets, a process known as Slashing.
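The reward-and-penalty logic described above can be sketched as follows. The names (`Validator`, `settle_epoch`, `deviation_pays`) and the default rates are hypothetical values chosen for illustration; real protocols define their own penalty schedules.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    stake: float
    slashed: bool = False


def settle_epoch(v: Validator, followed_rules: bool,
                 reward_rate: float = 0.01,
                 slash_fraction: float = 0.5) -> Validator:
    """Pay a reward for honest behaviour, or burn part of the stake otherwise.

    reward_rate and slash_fraction are illustrative assumptions.
    """
    if v.slashed:
        return v  # an already slashed validator earns nothing further
    if followed_rules:
        v.stake += v.stake * reward_rate
    else:
        v.stake -= v.stake * slash_fraction
        v.slashed = True
    return v


def deviation_pays(gain: float, stake: float,
                   slash_fraction: float, detection_prob: float) -> bool:
    """A rational actor deviates only if the gain exceeds the expected penalty."""
    expected_penalty = stake * slash_fraction * detection_prob
    return gain > expected_penalty
```

Under these assumptions, a protocol designer wants `deviation_pays` to return `False` for every realistic attack, which means keeping the expected penalty above the largest gain a misbehaving validator could capture.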

| Mechanism | Primary Constraint | Security Source |
| --- | --- | --- |
| Proof of Work | Hashrate | Energy |
| Proof of Stake | Capital | Economic |
| Proof of Authority | Reputation | Identity |

The security of these systems rests on the assumption that the cost of an attack exceeds the potential gain. Finality serves as the critical metric, indicating the point at which a transaction becomes immutable.

Finality in decentralized networks is the mathematical threshold where a transaction becomes irreversible according to the consensus rules.
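Two common notions of finality can be sketched side by side. The first assumes a BFT-style stake-based protocol in which a block is final once more than two thirds of total stake has attested to it; that threshold is typical of such designs but is an assumption here, not something the text above specifies. The second uses the gambler's-ruin result from the Bitcoin whitepaper for Proof of Work, where finality is probabilistic. The function names are hypothetical.

```python
def is_final(attesting_stake: int, total_stake: int) -> bool:
    """Deterministic finality: strictly more than 2/3 of stake has attested.

    Integer arithmetic avoids rounding errors right at the threshold.
    """
    return 3 * attesting_stake > 2 * total_stake


def catch_up_probability(attacker_share: float, blocks_behind: int) -> float:
    """Probabilistic finality: chance an attacker ever catches up from behind.

    With attacker share q and honest share p = 1 - q, the probability is
    (q / p) ** z when q < p, and 1 otherwise.
    """
    if not 0.0 <= attacker_share <= 1.0:
        raise ValueError("attacker_share must be between 0 and 1")
    honest_share = 1.0 - attacker_share
    if attacker_share >= honest_share:
        return 1.0
    return (attacker_share / honest_share) ** blocks_behind
```

The contrast is the design choice worth noticing: the first function gives a yes-or-no answer at a fixed threshold, while the second only shrinks toward zero as more blocks accumulate and never reaches it.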

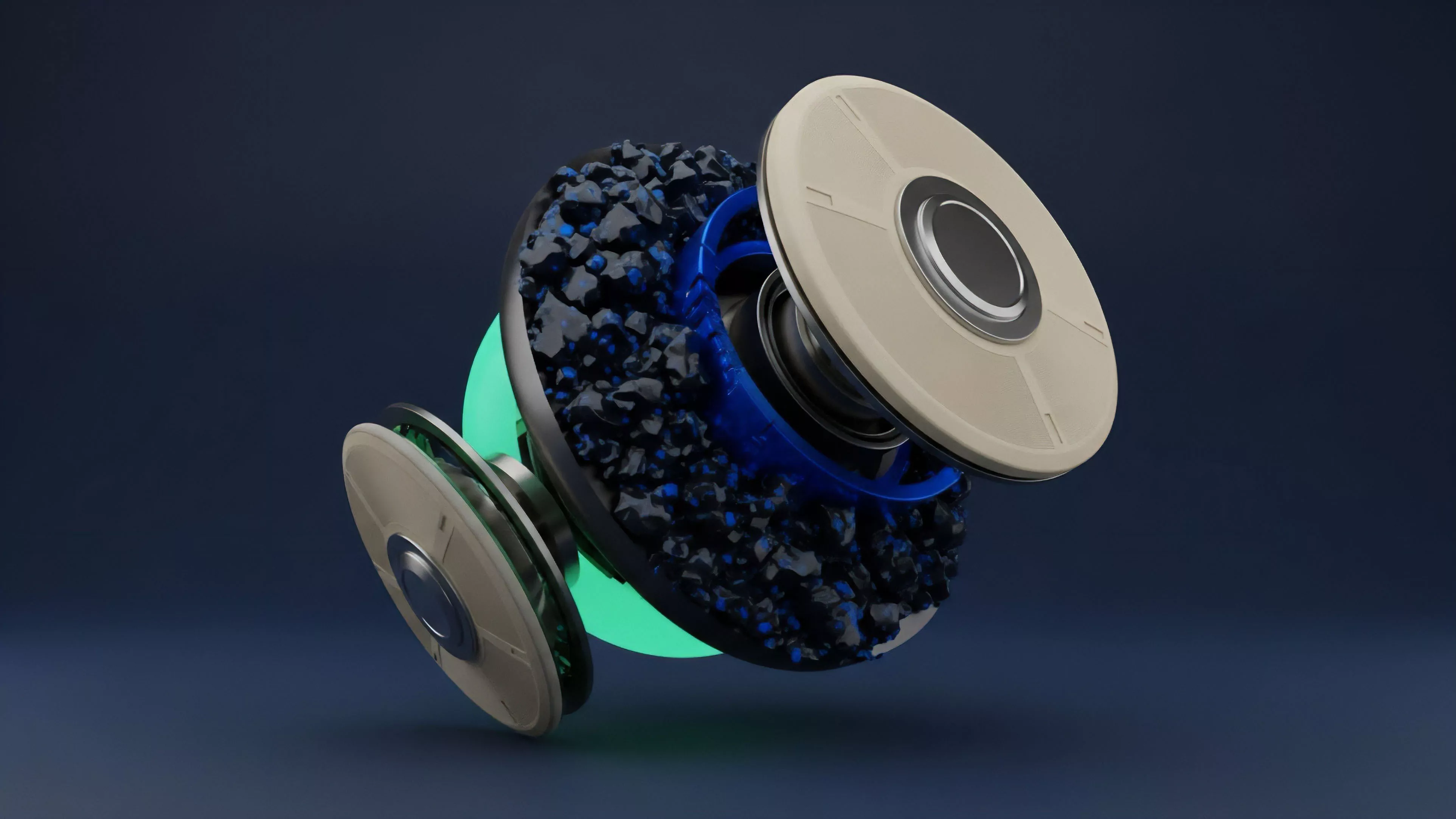

This is where the model becomes elegant, and dangerous if ignored. Protocol security and the market value of the staked asset are interdependent, which creates a feedback loop: if the asset price drops significantly, the cost of acquiring enough stake to execute a 51% attack falls with it, potentially compromising the entire system.
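The price dependence can be made concrete with a rough calculation. This sketch assumes the attacker buys a fixed share of the existing stake at the current market price and ignores slippage; `attack_cost`, `is_economically_secure`, and the default `control_fraction` are illustrative names and values, and the relevant threshold differs between protocols.

```python
def attack_cost(total_staked_tokens: float, token_price: float,
                control_fraction: float = 0.51) -> float:
    """Approximate cost of acquiring a controlling share of the stake.

    Assumes tokens can be bought at the quoted price with no slippage.
    """
    return total_staked_tokens * control_fraction * token_price


def is_economically_secure(cost: float, potential_gain: float) -> bool:
    """The security assumption holds only while attacking costs more than it pays."""
    return cost > potential_gain
```

Because the cost is linear in `token_price`, a 50% price drop halves the cost of the attack while the value an attacker could extract may not fall at the same rate.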

Approach

Current validation strategies emphasize modularity and scalability.

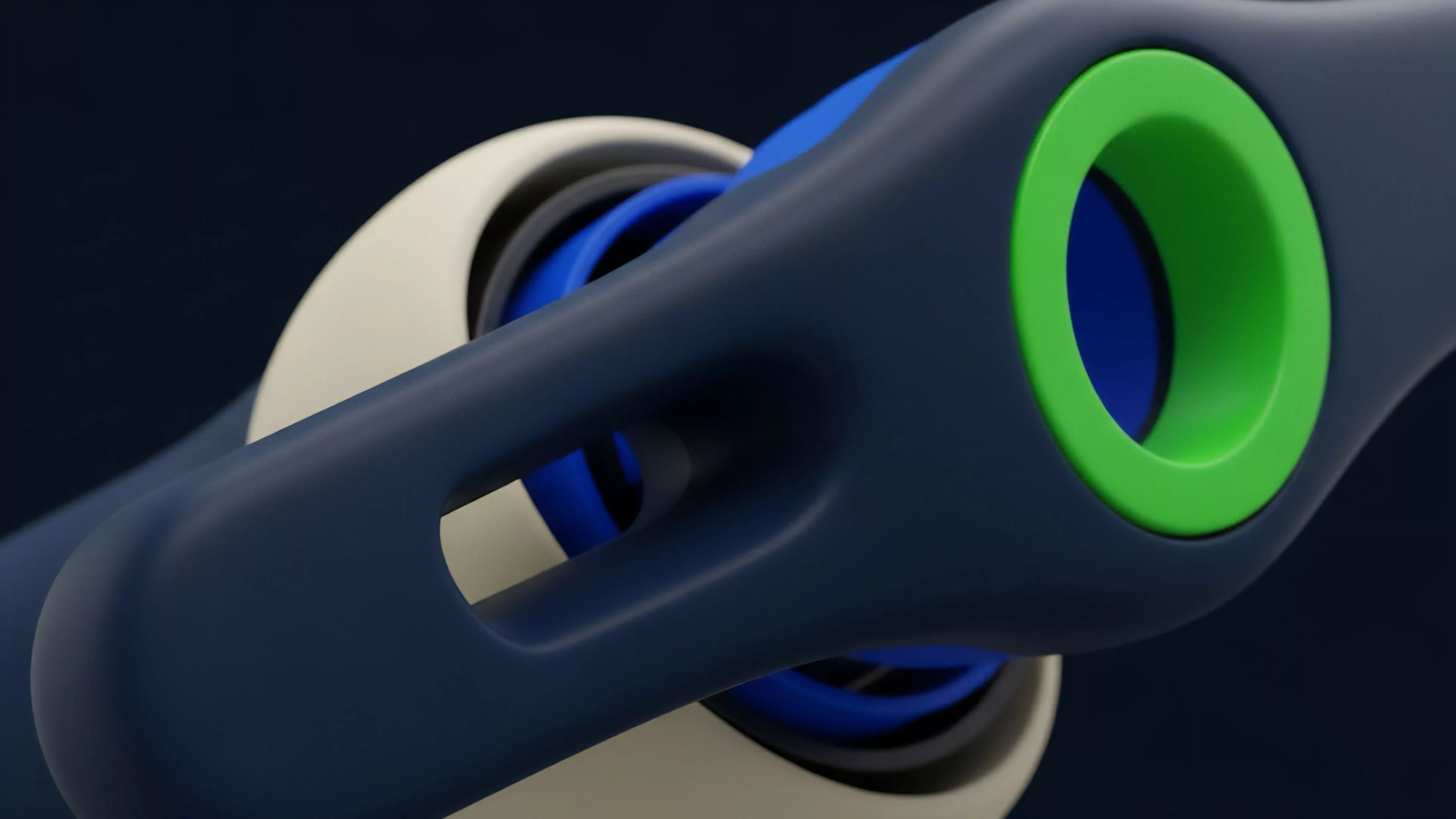

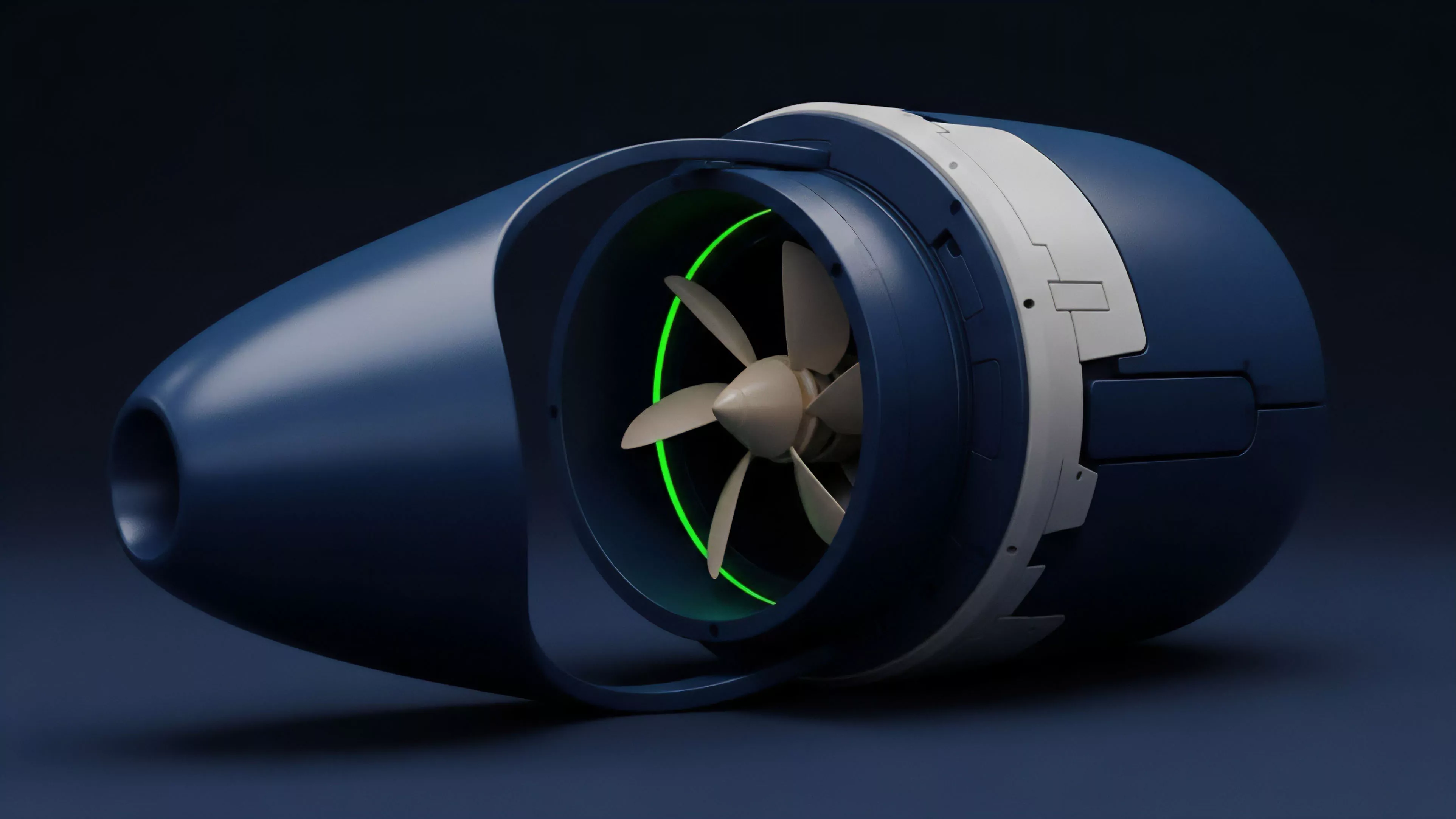

Modern protocols decouple execution from consensus, allowing specialized Sequencers to order transactions while Validators verify the state. This architecture minimizes the latency between transaction submission and final settlement.
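The separation of roles can be sketched with a toy balance ledger. Everything here is a simplified assumption: the `(sender, receiver, amount)` transaction shape, ordering by arrival time, and a SHA-256 hash of the sorted balances standing in for a real state commitment.

```python
import hashlib
import json

Tx = tuple[str, str, int]  # (sender, receiver, amount), a hypothetical format


def state_root(balances: dict[str, int]) -> str:
    """Commit to the full state with a single hash (stand-in for a Merkle root)."""
    encoded = json.dumps(balances, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def execute(balances: dict[str, int], batch: list[Tx]) -> dict[str, int]:
    """Apply an ordered batch, rejecting overdrafts and non-positive amounts."""
    new_state = dict(balances)
    for sender, receiver, amount in batch:
        if amount <= 0 or new_state.get(sender, 0) < amount:
            raise ValueError(f"invalid transfer from {sender}")
        new_state[sender] -= amount
        new_state[receiver] = new_state.get(receiver, 0) + amount
    return new_state


def sequence(pending: list[tuple[int, Tx]]) -> list[Tx]:
    """Sequencer role: decide the order only (here, by arrival timestamp)."""
    return [tx for _, tx in sorted(pending, key=lambda item: item[0])]


def validate(prev_state: dict[str, int], batch: list[Tx], claimed_root: str) -> bool:
    """Validator role: re-execute the batch and compare state commitments."""
    try:
        return state_root(execute(prev_state, batch)) == claimed_root
    except ValueError:
        return False
```

The sequencer never needs to be trusted for correctness in this sketch: any validator holding the previous state can recompute the root and reject a batch whose claimed result does not match.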

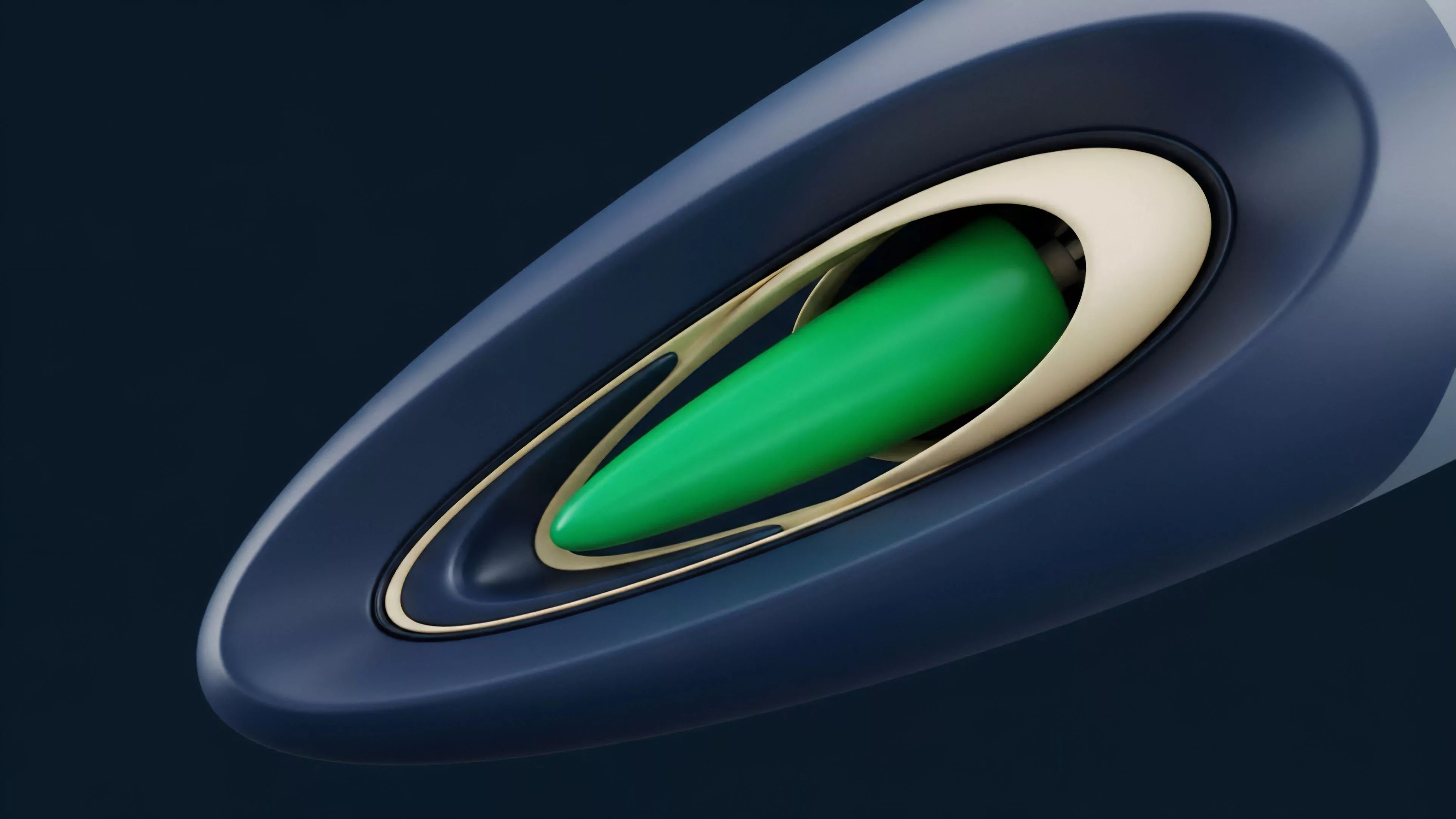

- Liquid Staking protocols enable capital efficiency by issuing derivative tokens against locked assets, allowing users to participate in validation while maintaining liquidity.

- Restaking frameworks permit validators to leverage their existing security commitments to protect secondary protocols, increasing capital utility.

- Zero Knowledge Proofs allow for succinct validation of large batches of transactions, reducing the computational load on individual nodes.
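The liquid staking idea from the list above can be illustrated with share-based accounting, one common way to issue a derivative token against pooled stake. The class and method names are hypothetical, and the sketch ignores fees, withdrawal queues, and validator operations.

```python
class LiquidStakingPool:
    """Toy pool: depositors receive shares that track a growing pool of stake."""

    def __init__(self) -> None:
        self.total_staked = 0.0   # underlying tokens locked for validation
        self.total_shares = 0.0   # derivative tokens in circulation

    def deposit(self, amount: float) -> float:
        """Lock tokens and mint shares at the current exchange rate."""
        if amount <= 0:
            raise ValueError("deposit must be positive")
        if self.total_shares == 0:
            minted = amount  # first depositor sets a 1:1 rate
        else:
            minted = amount * self.total_shares / self.total_staked
        self.total_staked += amount
        self.total_shares += minted
        return minted

    def accrue_rewards(self, amount: float) -> None:
        """Validation rewards raise the value of every existing share."""
        self.total_staked += amount

    def apply_slash(self, amount: float) -> None:
        """Slashing losses are shared by all holders in the same way."""
        self.total_staked = max(0.0, self.total_staked - amount)

    def redeem_value(self, shares: float) -> float:
        """Underlying tokens that a given number of shares currently represents."""
        if self.total_shares == 0:
            return 0.0
        return shares * self.total_staked / self.total_shares
```

Holders can trade or post the shares as collateral while the underlying tokens remain locked, which is the capital efficiency the list describes. It is also why a slash or a price drop in the derivative reaches every protocol that accepts it.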

This transition toward modularity represents a departure from monolithic designs. The complexity of managing these interconnected systems introduces systemic risk, as vulnerabilities in a single smart contract can propagate across multiple protocols.

Evolution

The progression of Network Validation Mechanisms reflects a movement from hardware-centric security to complex economic modeling. Early designs focused on resisting censorship through raw computational power.

Contemporary models prioritize throughput and interoperability, often sacrificing simplicity for performance.

The evolution of validation mechanisms tracks the transition from energy-intensive physical security to sophisticated capital-based economic incentives.

This shift has created new attack vectors. In highly leveraged environments, the intersection of liquid staking derivatives and decentralized finance creates reflexive liquidation cascades. The validator is no longer just a technical operator but a critical participant in a complex financial engine.
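A toy simulation shows how such a cascade can feed on itself. The model is deliberately crude and every parameter is an assumption: positions hold a staking derivative as collateral against debt, a position is liquidated when its collateral value falls below `min_ratio` times its debt, and each unit of collateral sold pushes the price down by a fixed proportion (`impact_per_unit`).

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral: float  # units of the staking derivative
    debt: float        # borrowed amount, in the reference currency


def simulate_cascade(positions: list[Position], price: float,
                     min_ratio: float = 1.5,
                     impact_per_unit: float = 0.002) -> tuple[float, int]:
    """Return (final_price, positions_liquidated) after repeated liquidation rounds."""
    open_positions = list(positions)
    liquidated = 0
    while True:
        unsafe = [p for p in open_positions
                  if p.collateral * price < min_ratio * p.debt]
        if not unsafe:
            return price, liquidated
        open_positions = [p for p in open_positions
                          if p.collateral * price >= min_ratio * p.debt]
        # Selling the seized collateral depresses the price for everyone else.
        sold = sum(p.collateral for p in unsafe)
        price *= max(0.0, 1.0 - impact_per_unit * sold)
        liquidated += len(unsafe)
```

In this model an initial shock that endangers only one position can still liquidate others, because each forced sale lowers the price that the remaining positions are measured against. That is the reflexive loop the paragraph above describes.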

Sometimes I wonder if we have replaced the fallibility of human intermediaries with the rigidity of flawed code, yet the efficiency gains remain undeniable.

Horizon

Future validation architectures will likely prioritize Proposer-Builder Separation to mitigate the centralization risks inherent in block construction. The focus is shifting toward verifiable off-chain computation, where validation occurs on high-performance layers while settlement remains on the base layer.
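The core idea of Proposer-Builder Separation can be sketched as a sealed auction: builders assemble blocks and commit to them by hash, and the proposer picks the highest bid without inspecting the contents. This is a simplified illustration under stated assumptions, not any deployed design; `Bid`, `make_bid`, `choose_bid`, and `reveal_matches` are hypothetical names.

```python
import hashlib
from dataclasses import dataclass


def payload_hash(transactions: list[str]) -> str:
    """Commitment to a block body (newline-joined transactions, for illustration)."""
    return hashlib.sha256("\n".join(transactions).encode()).hexdigest()


@dataclass(frozen=True)
class Bid:
    builder: str
    commitment: str  # hash of the block body; the contents stay hidden
    value: int       # payment offered to the proposer


def make_bid(builder: str, transactions: list[str], value: int) -> Bid:
    """Builder role: construct a block and bid for the right to have it proposed."""
    return Bid(builder, payload_hash(transactions), value)


def choose_bid(bids: list[Bid]) -> Bid:
    """Proposer role: select purely on bid value, never on block contents."""
    if not bids:
        raise ValueError("no bids submitted")
    return max(bids, key=lambda bid: bid.value)


def reveal_matches(bid: Bid, transactions: list[str]) -> bool:
    """After selection, the revealed block must match the earlier commitment."""
    return payload_hash(transactions) == bid.commitment
```

Because the proposer chooses only on `value`, the specialized work of ordering transactions is pushed to competing builders, and the proposer's role stays simple enough for small operators to perform.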

| Future Trend | Implication |
| --- | --- |
| Homomorphic Encryption | Privacy-preserving validation |
| Shared Sequencing | Cross-chain atomic composability |
| Dynamic Slashing | Risk-adjusted validator penalties |

We are moving toward a state where validation becomes a background utility, abstracted away from the end user. The ultimate challenge remains the alignment of human incentives with cryptographic truth as protocols scale to support global financial volumes.