Essence

Data verification mechanisms (DVMs) are the objective sources of truth that bridge the gap between off-chain market reality and on-chain smart contract execution. For crypto options, these mechanisms are particularly critical because derivatives derive their value directly from the price movement of an underlying asset. A derivative contract, especially one that settles financially, requires an indisputable price at specific points in time, for example at expiration or during a margin call.

The DVM provides this external data point, acting as the final arbiter of value for all participants. Without a reliable DVM, a decentralized options protocol cannot guarantee fair settlement, making the contracts unviable for serious financial strategies. The DVM’s functional role extends beyond simple price discovery; it defines the risk profile of the entire protocol.

The accuracy, latency, and manipulation resistance of the DVM directly determine the collateral requirements, liquidation thresholds, and overall capital efficiency of the system. A DVM that is easily manipulated or slow to update creates systemic risk for all users, as collateral can be incorrectly valued, leading to cascading liquidations or protocol insolvency.

Data verification mechanisms serve as the critical on-chain arbiters of off-chain asset prices, enabling fair settlement and risk management for decentralized options contracts.

Origin

The necessity for robust DVMs emerged directly from the “oracle problem” that plagued early decentralized finance protocols. In the initial iterations of DeFi, protocols often relied on simplistic, single-source price feeds or internal market data. These methods were vulnerable to a class of attacks known as flash loan manipulations.

An attacker could borrow a large amount of capital, manipulate the price of an asset on a decentralized exchange (DEX) to create a temporary spike, and then execute a profitable trade against a lending protocol or options vault before repaying the loan, all within a single transaction. The initial response to these vulnerabilities was the creation of decentralized oracle networks. These networks, such as Chainlink, sought to replace single-source feeds with a consensus-based approach.

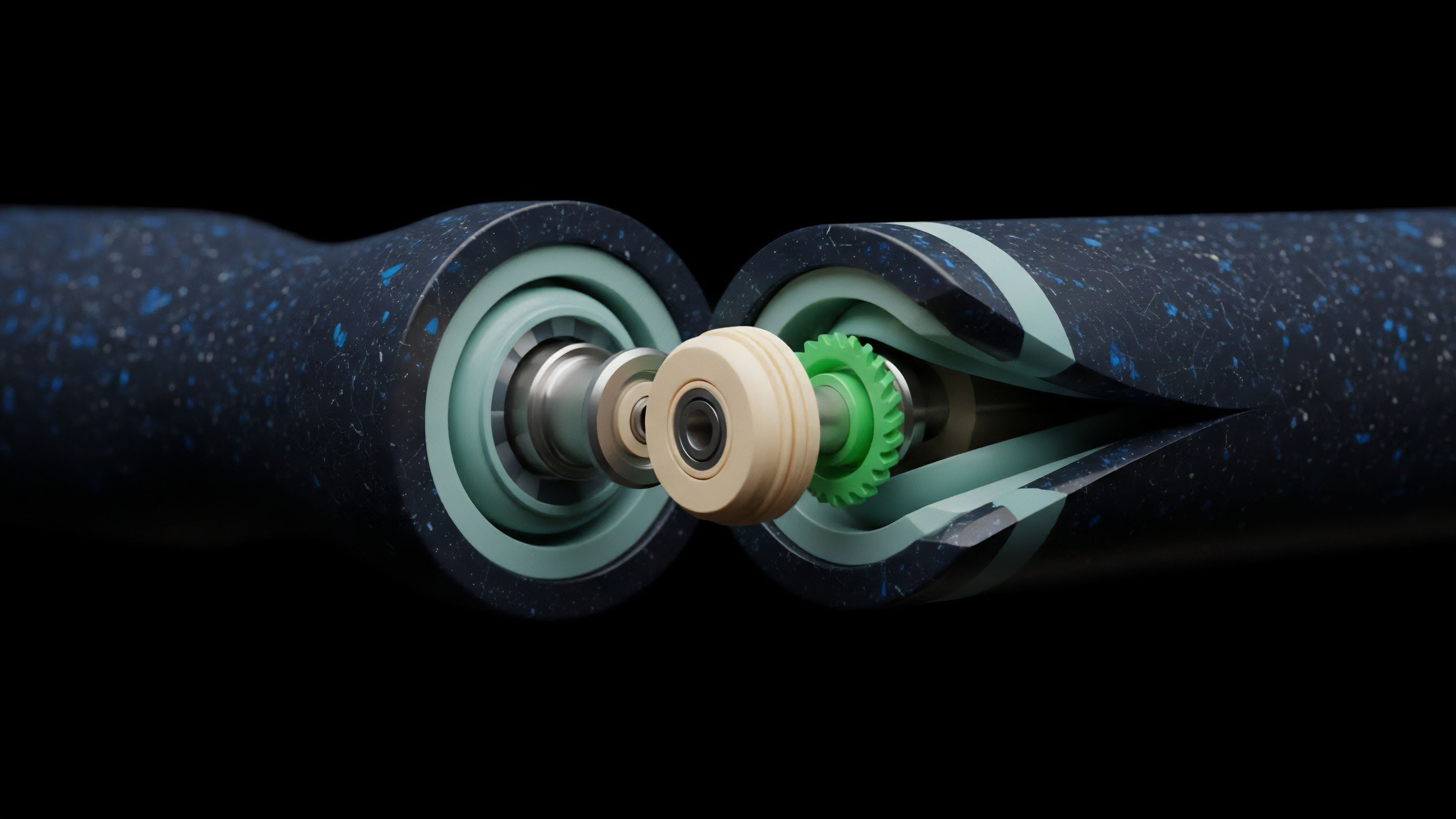

Multiple independent nodes would source data from various off-chain exchanges, aggregate the results, and submit a single, validated price to the blockchain. This shift in architecture moved the data source from a single point of failure to a distributed network, significantly raising the cost and complexity required for a successful manipulation attack. The origin story of DVMs is one of adversarial engineering, where each new protocol design or data aggregation method was a direct response to a previous exploit.
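The attack and the oracle-network defense can be made concrete with a minimal sketch. It assumes a fee-less constant-product pool (x * y = k) as the manipulated venue and a plain median as the aggregation rule; the pool reserves, venue prices, and function names are hypothetical, and real oracle networks apply their own, more elaborate aggregation and reporting rules.

```python
from statistics import median


def spot_price(reserve_base: float, reserve_quote: float) -> float:
    """Spot price of the base asset in a constant-product (x * y = k) pool."""
    return reserve_quote / reserve_base


def buy_base(reserve_base: float, reserve_quote: float, quote_in: float) -> tuple[float, float]:
    """Swap quote_in of the quote asset into the pool (no fees); return new reserves."""
    k = reserve_base * reserve_quote
    new_quote = reserve_quote + quote_in
    return k / new_quote, new_quote


# Hypothetical pool: 1,000 ETH against 2,000,000 USDC, so the spot price is 2,000.
base, quote = 1_000.0, 2_000_000.0
honest_price = spot_price(base, quote)

# Within one transaction, an attacker swaps 2,000,000 USDC of flash-loaned capital in.
base_after, quote_after = buy_base(base, quote, 2_000_000.0)
manipulated_price = spot_price(base_after, quote_after)

# A single-source oracle reading this pool would report the manipulated price.
single_source_report = manipulated_price

# A median over several independent (hypothetical) venues, one of them the
# manipulated pool, stays close to the honest market price.
venue_prices = [2_001.0, 1_999.5, 2_000.2, 1_998.8, manipulated_price]
aggregated_report = median(venue_prices)

print(honest_price, single_source_report, aggregated_report)  # 2000.0 8000.0 2000.2
```

Shifting the median requires corrupting a majority of the sources at the same time, which is where the higher cost of attack comes from.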

Theory

The theoretical foundation of DVMs in options pricing rests on the principle of minimizing information asymmetry and maximizing data integrity. A primary theoretical consideration for options protocols is whether to use Time-Weighted Average Price (TWAP) or Volume-Weighted Average Price (VWAP) for data aggregation. These two methods represent distinct trade-offs between manipulation resistance and market representation.

- Time-Weighted Average Price (TWAP): This method calculates the average price of an asset over a specific time interval, typically measured in blocks. The TWAP approach is highly effective against flash loan attacks: a flash loan must be repaid within a single transaction, so a distortion confined to one block contributes only that block's share of the average, and sustaining it across many blocks would expose the attacker's capital to arbitrage for the entire window. The trade-off is latency; the price feed reflects a historical average rather than the current market price. This lag can cause issues during periods of high volatility, leading to settlement prices that differ significantly from real-time market values.

- Volume-Weighted Average Price (VWAP): This method calculates the average price of an asset over a time interval, weighted by the volume traded at each price point. VWAP provides a more accurate representation of the actual cost of executing large orders, making it superior for determining the fair market value of an asset in a high-liquidity environment. However, VWAP can be more susceptible to manipulation if an attacker can execute large-volume trades at manipulated prices within the calculation window, especially in less liquid markets.
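The contrast between the two weightings is easiest to see when one block in the window is manipulated. The sketch below is a minimal illustration under stated assumptions: one observation per block, each price holding until the next observation, and hypothetical prices and volumes. The `Observation` type and both functions are illustrative, not taken from any particular protocol.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    block: int     # block number at which this price took effect
    price: float   # observed price
    volume: float  # volume traded at this price (used only by VWAP)


def twap(obs: list[Observation], window_end: int) -> float:
    """Weight each price by the number of blocks it stayed in effect."""
    weighted_sum, total_blocks = 0.0, 0
    for i, o in enumerate(obs):
        next_block = obs[i + 1].block if i + 1 < len(obs) else window_end
        duration = next_block - o.block
        weighted_sum += o.price * duration
        total_blocks += duration
    return weighted_sum / total_blocks


def vwap(obs: list[Observation]) -> float:
    """Weight each price by the volume traded at it."""
    total_volume = sum(o.volume for o in obs)
    return sum(o.price * o.volume for o in obs) / total_volume


# Hypothetical window: ten blocks at 100 with modest volume,
# except block 5, where an attacker prints 200 on heavy volume.
window = [Observation(b, 100.0, 50.0) for b in range(10)]
window[5] = Observation(5, 200.0, 2_000.0)

print(twap(window, window_end=10))  # 110.0: the one-block spike moves TWAP by 10%
print(vwap(window))                 # ~181.6: heavy volume drags VWAP toward the spike
```

A single-block spike moves the TWAP only by its one-block share of the window, whereas heavy volume printed at the manipulated price drags the VWAP most of the way toward it; in a deep, liquid market that volume would be far more expensive to fake.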

The choice between TWAP and VWAP is a fundamental architectural decision that dictates the specific risk profile of the options protocol. A protocol focused on long-term, low-volatility strategies might prioritize TWAP for security, while a protocol targeting high-frequency traders might prefer VWAP for accuracy, accepting a different set of risks.

| Data Aggregation Method | Primary Benefit | Primary Risk/Limitation | Best Use Case for Options |
|---|---|---|---|
| Time-Weighted Average Price (TWAP) | Flash loan resistance; price stability | Latency; price lag during high volatility | Long-term options; collateral valuation |
| Volume-Weighted Average Price (VWAP) | Market-representative pricing; accurate cost basis | Manipulation risk via large-volume trades | Short-term options; high-frequency trading |

Approach

The implementation of DVMs in modern options protocols involves a multi-layered approach to risk mitigation. A key element is the Data Attestation Mechanism, where the DVM not only provides a price but also attests to its source and freshness. This allows the protocol to verify the integrity of the data before using it for sensitive operations like liquidation or settlement.
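A data attestation check of this kind can be sketched as an authenticity check followed by a freshness check. This is a minimal sketch, assuming a reporter that shares a secret key with the verifier: HMAC-SHA256 from Python's standard library stands in for the public-key signatures a real oracle would use, and the report layout, the `max_age` window, and all function names are hypothetical.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class PriceReport:
    source: str     # hypothetical identifier of the reporting feed
    price: float
    timestamp: int  # unix seconds at which the price was observed
    tag: str        # attestation over (source, price, timestamp)


def _payload(source: str, price: float, timestamp: int) -> bytes:
    # Canonical encoding so the signer and the verifier MAC identical bytes.
    return json.dumps([source, price, timestamp]).encode()


def attest(key: bytes, source: str, price: float, timestamp: int) -> PriceReport:
    # HMAC-SHA256 stands in for a real public-key signature in this sketch.
    tag = hmac.new(key, _payload(source, price, timestamp), hashlib.sha256).hexdigest()
    return PriceReport(source, price, timestamp, tag)


def verify_report(report: PriceReport, key: bytes, now: int, max_age: int) -> bool:
    expected = hmac.new(
        key, _payload(report.source, report.price, report.timestamp), hashlib.sha256
    ).hexdigest()
    if not hmac.compare_digest(expected, report.tag):
        return False  # altered in transit or not produced by the trusted reporter
    # Freshness: reject data that is too old or claims to come from the future.
    return 0 <= now - report.timestamp <= max_age


key = b"illustrative-shared-key"
report = attest(key, "feed-A", 2_000.0, 1_700_000_000)

print(verify_report(report, key, now=1_700_000_030, max_age=60))                          # True
print(verify_report(report, key, now=1_700_000_120, max_age=60))                          # False: stale
print(verify_report(replace(report, price=2_500.0), key, now=1_700_000_030, max_age=60))  # False: tampered
```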

The operational approach for DVMs in options protocols can be broken down into specific functional requirements:

- Latency Management: The DVM must provide price updates at a frequency that matches the required risk tolerance of the options contract. For short-dated options, a high-frequency update is necessary, while longer-dated contracts can tolerate more latency.

- Collateral Verification: The DVM continuously feeds prices to the protocol's margin engine, which calculates the collateralization ratio of each options position. If the ratio falls below a predetermined threshold, the margin engine initiates liquidation. The accuracy of this price feed is paramount: a stale price can leave positions undercollateralized, while an erroneous or overly sensitive feed can trigger unnecessary liquidations.

- Settlement Logic: At expiration, the DVM provides the final settlement price for the option. This price determines the final payoff to the option holder. The DVM must be configured to use a reliable data source that is resistant to manipulation at the exact moment of settlement.

- Circuit Breakers: Advanced DVMs incorporate built-in safety mechanisms. These mechanisms automatically halt liquidations or settlements if the price feed deviates significantly from historical averages or other reference prices, preventing cascading failures during extreme market volatility or data source malfunctions.
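The latency, collateral, and circuit-breaker requirements above can be combined into a single margin check. The following is a minimal sketch under several assumptions: the option liability is taken as already marked to market, the reference price comes from an independent source such as a slower TWAP, and the thresholds (`max_age`, `min_ratio`, `max_deviation`) and all names are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    HOLD = "hold"
    LIQUIDATE = "liquidate"
    HALT = "halt"  # circuit breaker tripped: defer to a fallback or manual process


@dataclass
class Position:
    collateral_units: float  # units of the collateral asset posted
    liability_value: float   # assumed precomputed mark-to-market value of the short option


def check_position(
    pos: Position,
    price: float,            # latest DVM price of the collateral asset
    reference_price: float,  # independent reference, e.g. a slower TWAP
    price_age: int,          # seconds since the DVM price was observed
    max_age: int = 60,
    min_ratio: float = 1.2,
    max_deviation: float = 0.10,
) -> Action:
    # Latency management: never liquidate on a stale price.
    if price_age > max_age:
        return Action.HALT
    # Circuit breaker: a large gap to the reference suggests manipulation or a faulty source.
    if abs(price - reference_price) / reference_price > max_deviation:
        return Action.HALT
    if pos.liability_value <= 0:
        return Action.HOLD
    ratio = pos.collateral_units * price / pos.liability_value
    return Action.LIQUIDATE if ratio < min_ratio else Action.HOLD


pos = Position(collateral_units=10.0, liability_value=15_000.0)
print(check_position(pos, price=2_000.0, reference_price=2_010.0, price_age=5))    # Action.HOLD
print(check_position(pos, price=1_700.0, reference_price=1_690.0, price_age=5))    # Action.LIQUIDATE
print(check_position(pos, price=1_000.0, reference_price=2_000.0, price_age=5))    # Action.HALT
print(check_position(pos, price=2_000.0, reference_price=2_010.0, price_age=300))  # Action.HALT
```

Ordering matters in this design: the staleness and deviation checks run before the collateral ratio is computed, so a suspect price can pause liquidations but can never trigger one.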

A critical aspect of the approach is the Settlement Delay Mechanism. To prevent front-running, many options protocols do not execute settlement immediately upon receiving the DVM price. Instead, they introduce a time delay, allowing participants to review the DVM price and ensuring that any potential manipulation attempt has time to be detected and resolved before a final action is taken.

This delay introduces a necessary friction to ensure system integrity.
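A settlement delay amounts to a small state machine: a DVM price is proposed, a review window runs, and only an undisputed price can settle the contract. The sketch below assumes a cash-settled European call and a fixed window measured in seconds; the `DelayedSettlement` class and its methods are hypothetical, and how a disputed price is subsequently resolved is deliberately left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Proposal:
    price: float
    proposed_at: int       # unix seconds
    disputed: bool = False


class DelayedSettlement:
    """Hypothetical cash-settled call that pays out only after a review window."""

    def __init__(self, strike: float, delay: int) -> None:
        self.strike = strike
        self.delay = delay  # length of the review window in seconds
        self.proposal: Optional[Proposal] = None

    def propose(self, price: float, now: int) -> None:
        # The DVM price is recorded but not acted on yet.
        self.proposal = Proposal(price, now)

    def dispute(self, now: int) -> None:
        p = self.proposal
        if p is None or now >= p.proposed_at + self.delay:
            raise RuntimeError("no open proposal to dispute")
        p.disputed = True  # resolution (e.g. a vote or re-query) is out of scope here

    def finalize(self, now: int) -> float:
        p = self.proposal
        if p is None or p.disputed:
            raise RuntimeError("no undisputed proposal to settle")
        if now < p.proposed_at + self.delay:
            raise RuntimeError("review window still open")
        return max(p.price - self.strike, 0.0)  # call payoff per unit of underlying


option = DelayedSettlement(strike=1_800.0, delay=3_600)
option.propose(2_000.0, now=0)
try:
    option.finalize(now=100)
except RuntimeError as err:
    print(err)                     # review window still open
print(option.finalize(now=3_600))  # 200.0
```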

Evolution

DVMs have evolved from simple on-chain price feeds to complex, cross-chain data integrity layers. The initial challenge was simply getting data onto a single blockchain securely.

The current challenge is providing data integrity across multiple, interconnected chains. As options protocols expand from a single Layer 1 network to multiple Layer 2s and sidechains, the DVM must be able to verify data from different execution environments and consolidate it into a single, reliable source of truth. The next stage of DVM evolution involves moving beyond price feeds to deliver more sophisticated financial data.

For options, the true value of a contract depends heavily on implied volatility and the volatility surface, not just the underlying asset price. The current generation of DVMs is beginning to address this by providing feeds for volatility data. This data is significantly more complex to verify than a simple price, as it requires aggregating information from multiple sources and calculating a derived value.
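One example of such a derived value is implied volatility, which has to be backed out of observed option prices rather than read directly. The sketch below inverts the Black-Scholes formula for a European call on a non-dividend-paying asset by bisection, then takes the median across venues in the same way a price feed would. The venue quotes are synthetic (generated from known volatilities), and the function names and bisection bounds are illustrative assumptions.

```python
from math import erf, exp, log, sqrt
from statistics import median


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call on a non-dividend-paying asset."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt(t))
    d2 = d1 - vol * sqrt(t)
    return spot * norm_cdf(d1) - strike * exp(-rate * t) * norm_cdf(d2)


def implied_vol(price, spot, strike, t, rate, lo=1e-4, hi=5.0, tol=1e-10) -> float:
    """Invert bs_call by bisection; the call price increases monotonically in vol."""
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, t, rate, mid) < price:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Hypothetical mid-prices for the same 2,200-strike, 3-month call quoted on three venues.
spot, strike, t, rate = 2_000.0, 2_200.0, 0.25, 0.0
quotes = [bs_call(spot, strike, t, rate, v) for v in (0.78, 0.80, 0.83)]

# Derive a volatility per venue, then aggregate as one would aggregate prices.
ivs = [implied_vol(q, spot, strike, t, rate) for q in quotes]
published_iv = median(ivs)
print(round(published_iv, 4))  # 0.8
```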

The transition from simple price feeds to providing complex volatility surfaces represents a significant leap in DVM capabilities, allowing for more accurate on-chain options pricing models.

The challenge of data integrity in a multi-chain environment introduces new complexities. How does a protocol on Layer 2 verify that a DVM price feed originating from Layer 1 has not been manipulated during the cross-chain bridge process? This requires a new layer of verification mechanisms, often involving Merkle proofs or other cryptographic attestations, to ensure data integrity as it moves between different execution environments.

The integrity of the options market hinges on the ability to trust data that traverses these boundaries.
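The verification step on the receiving chain can be sketched with a binary Merkle tree. This minimal sketch assumes that only the Merkle root reaches Layer 2 through a trusted channel (for example, a state commitment the Layer 2 already verifies), while individual price updates and their proofs arrive from an untrusted relayer. SHA-256 hashing, the leaf encoding, and all function names are illustrative choices.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _next_level(level: list[bytes]) -> list[bytes]:
    if len(level) % 2:
        level = level + [level[-1]]  # duplicate the last node on odd-sized levels
    return [_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    level = [_h(leaf) for leaf in leaves]
    while len(level) > 1:
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling sits on the right."""
    level = [_h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        padded = level + [level[-1]] if len(level) % 2 else level
        sibling = index ^ 1
        proof.append((padded[sibling], sibling > index))
        level = _next_level(level)
        index //= 2
    return proof


def verify_inclusion(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    node = _h(leaf)
    for sibling, sibling_on_right in proof:
        node = _h(node + sibling) if sibling_on_right else _h(sibling + node)
    return node == root


# Hypothetical batch of price updates committed on Layer 1; the leaf encoding is illustrative.
updates = [b"ETH/USD|2000.00|1700000000", b"BTC/USD|35000.00|1700000000", b"SOL/USD|40.00|1700000000"]
root = merkle_root(updates)       # assumed to reach Layer 2 through a trusted commitment
proof = merkle_proof(updates, 0)  # supplied by an untrusted relayer alongside the update

print(verify_inclusion(updates[0], proof, root))                     # True
print(verify_inclusion(b"ETH/USD|2500.00|1700000000", proof, root))  # False
```

This sketch does not domain-separate leaf hashes from interior-node hashes; production schemes usually do, to rule out second-preimage tricks.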

Horizon

The future of DVMs in crypto options is not simply about faster data; it is about enabling entirely new forms of derivatives. The next generation of DVMs will move from being passive data providers to active components in risk management.

This includes the on-chain implementation of volatility surfaces. Currently, most decentralized options protocols rely on simplistic Black-Scholes models, which assume constant volatility. A DVM capable of delivering a dynamic volatility surface, which gives implied volatility as a function of both strike price and time to expiration, would allow for the creation of far more sophisticated options products.
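Where a constant-volatility model uses one number for every contract, a surface lets the pricer look up a volatility specific to each strike and expiry. The sketch below stores a hypothetical published grid and interpolates bilinearly inside it; the `VolSurface` class, the grid values, and the choice of linear interpolation in volatility are all assumptions for illustration.

```python
from bisect import bisect_right


def _bracket(grid: list[float], x: float) -> tuple[int, int, float]:
    """Indices of the grid points around x and x's fractional position between them."""
    if not grid[0] <= x <= grid[-1]:
        raise ValueError("point lies outside the published grid")
    hi = min(bisect_right(grid, x), len(grid) - 1)
    lo = hi - 1
    return lo, hi, (x - grid[lo]) / (grid[hi] - grid[lo])


class VolSurface:
    """Implied volatility on an (expiry, strike) grid with bilinear interpolation."""

    def __init__(self, expiries: list[float], strikes: list[float], vols: list[list[float]]):
        self.expiries = expiries  # ascending, in years (at least two points)
        self.strikes = strikes    # ascending (at least two points)
        self.vols = vols          # vols[i][j] is the implied vol at expiries[i], strikes[j]

    def vol(self, expiry: float, strike: float) -> float:
        i0, i1, wt = _bracket(self.expiries, expiry)
        j0, j1, wk = _bracket(self.strikes, strike)
        near = (1 - wk) * self.vols[i0][j0] + wk * self.vols[i0][j1]
        far = (1 - wk) * self.vols[i1][j0] + wk * self.vols[i1][j1]
        return (1 - wt) * near + wt * far


# Hypothetical grid published by a DVM: two expiries, three strikes.
surface = VolSurface(
    expiries=[0.1, 0.5],
    strikes=[1_800.0, 2_000.0, 2_200.0],
    vols=[[0.90, 0.80, 0.85],
          [0.80, 0.72, 0.75]],
)
print(surface.vol(0.1, 2_000.0))            # 0.8: exactly on a grid point
print(round(surface.vol(0.3, 2_100.0), 4))  # 0.78: halfway in both dimensions
```

Production surfaces are commonly interpolated in total implied variance rather than in volatility and checked against no-arbitrage constraints; linear interpolation in volatility keeps the sketch short.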

The ability to accurately model volatility on-chain will open up opportunities for exotic derivatives, such as volatility swaps or options based on specific market events rather than price alone. This requires DVMs to ingest and verify data from sources beyond standard exchanges, including news feeds and event data, to trigger smart contract logic.

| Current DVM Data Type | Future DVM Data Type | Impact on Options Protocol |
|---|---|---|
| Asset Price (TWAP/VWAP) | Implied Volatility Surface | Enables advanced pricing models and exotic options |
| Collateral Value | Liquidation Thresholds (Dynamic) | Optimizes capital efficiency based on real-time risk |
| Settlement Price | Event-Triggered Data | Allows for binary options based on external events |

The ultimate goal for DVMs is to achieve data composability. This means that a DVM’s output can be seamlessly integrated into multiple protocols simultaneously, creating a shared data layer for the entire decentralized financial system. The integrity of this shared layer will become the single most important factor in preventing systemic risk propagation across different DeFi applications.

The DVM will effectively function as the core data backbone for a truly resilient and interconnected financial ecosystem.

The evolution of DVMs toward providing dynamic volatility surfaces and event-triggered data will unlock a new generation of sophisticated options products on-chain.
