Essence

The foundation of a reliable options market, whether traditional or decentralized, rests on the integrity of its inputs. Data Provenance in this context refers to the verifiable, auditable history of every data point used in the calculation, pricing, and settlement of a financial contract. This goes beyond a simple price feed; it encompasses the entire data supply chain from source origination to on-chain consumption.

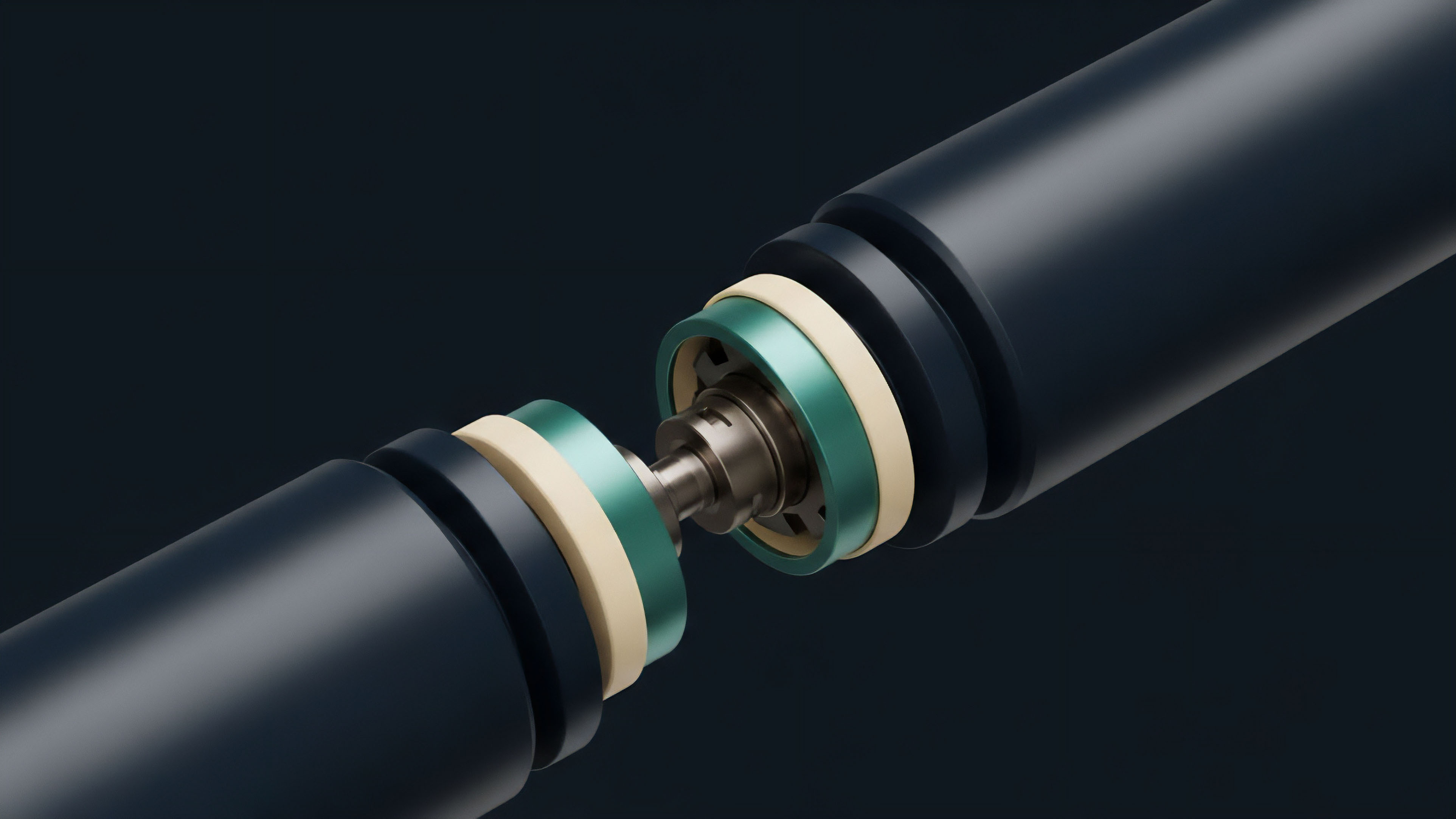

For options, this chain includes spot price data for underlying assets, implied volatility surfaces, and risk-free rates. Without transparent provenance, the system operates on faith in the data provider, creating a single point of failure and introducing systemic risk. The core challenge in decentralized finance is not executing the contract logic trustlessly, but rather ensuring the data inputs that feed that logic are equally trustless and resistant to manipulation.

Data Provenance establishes a chain of custody for financial data, transforming opaque inputs into verifiable facts required for trustless settlement.

The ability to verify the origin and transformation of data inputs is paramount for derivative markets because options pricing models are highly sensitive to small changes in inputs. A slight deviation in the underlying asset’s price feed, even for a brief moment, can trigger incorrect margin calls or liquidations. Data provenance provides the necessary audit trail to trace such failures back to their source, allowing for a post-mortem analysis of system integrity.

This mechanism is a critical architectural requirement for building resilient decentralized derivatives.

Origin

The concept of data provenance in finance gained prominence following the 2008 financial crisis, where the opacity of underlying asset data for complex derivatives like collateralized debt obligations (CDOs) concealed systemic risk. The inability to trace the quality and history of the underlying loans made accurate risk assessment impossible.

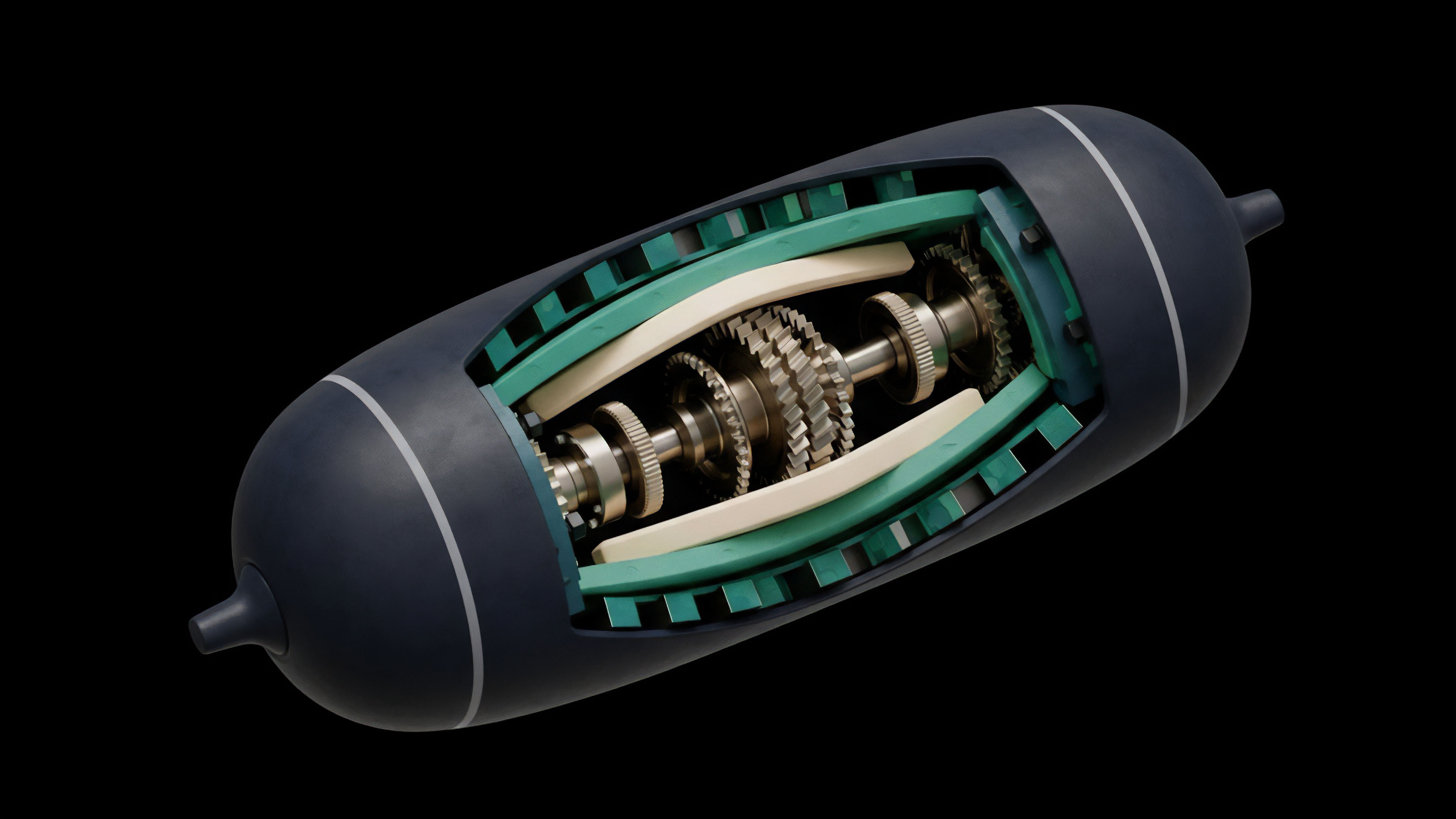

In decentralized finance, the need for data provenance became acutely apparent during early oracle attacks. These attacks exploited smart contracts that relied on a single-source oracle for price data. Using a flash loan, an attacker could move the price on a decentralized exchange within a single transaction, causing an options protocol that read that price to miscalculate collateral value or execute liquidations at the manipulated level.

The lessons from these exploits highlighted a fundamental flaw in early DeFi design: a decentralized contract operating on centralized data inputs creates a paradox of trust. The solution required extending the principles of decentralization and immutability from the contract code itself to the data supply chain. The initial response involved moving from single-source oracles to multi-source aggregation models.

This transition represented the first step toward building verifiable data provenance into the architecture of decentralized derivatives. The evolution of oracle design, from simple price feeds to complex data validation networks, directly addresses the need for a transparent and secure history of data inputs.

Theory

From a quantitative finance perspective, data provenance directly impacts the accuracy and integrity of the Greeks, which measure an option’s sensitivity to various market factors.

The Black-Scholes model and its variations require accurate inputs for spot price, time to expiration, volatility, and risk-free rate. If the data source for any of these inputs lacks provenance, the resulting Greek values are unreliable.
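To make the sensitivity concrete, here is a minimal sketch of the standard Black-Scholes formulas for a European call on a non-dividend-paying asset. The function name `bs_call` and the sample parameters are illustrative assumptions, not taken from any particular protocol; the point is only to show how a 1% error in the spot input propagates into the price and the Greeks.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def bs_call(spot: float, strike: float, t: float, vol: float, rate: float) -> dict:
    """Price and Greeks of a European call (no dividends), t in years."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return {
        "price": spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2),
        "delta": norm_cdf(d1),
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_t),
        "vega": spot * norm_pdf(d1) * sqrt_t,  # per 1.00 change in volatility
    }


# Illustrative inputs: a one-week at-the-money option with 60% volatility.
clean = bs_call(spot=100.0, strike=100.0, t=7 / 365, vol=0.60, rate=0.03)
# The same option priced from a spot feed that is off by 1%.
skewed = bs_call(spot=101.0, strike=100.0, t=7 / 365, vol=0.60, rate=0.03)

for name in ("price", "delta", "gamma", "vega"):
    print(f"{name:>5}: clean={clean[name]:.4f} skewed={skewed[name]:.4f}")
```

For a short-dated option near the money, the relative change in the option price is much larger than the 1% change in the spot input, which is why an unverifiable spot feed undermines every downstream risk figure.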

Data Integrity and Pricing Models

The primary inputs for options pricing are often sourced from multiple venues and aggregated. The specific methodology for this aggregation is where provenance becomes critical. For example, using a volume-weighted average price (VWAP) requires not just the price data from exchanges, but also the volume data, which must be verifiable.

If the provenance of the volume data is compromised, the resulting VWAP calculation will be skewed, leading to mispricing of the option. The Vega of an option, which measures sensitivity to volatility, is particularly susceptible to data provenance issues. Volatility surfaces are complex datasets derived from market activity.

If the data used to construct this surface is not verifiable, the resulting volatility input for the pricing model introduces unquantifiable risk.
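The VWAP point above can be illustrated with a small sketch. The `VenueReport` type and the venue names and figures are hypothetical; the example assumes each venue reports one price and one traded volume for the period, and shows how a single venue that overstates its volume drags the aggregate toward its own price.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VenueReport:
    venue: str
    price: float
    volume: float


def vwap(reports: list[VenueReport]) -> float:
    """Volume-weighted average price across venue reports."""
    total_volume = sum(r.volume for r in reports)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(r.price * r.volume for r in reports) / total_volume


honest = [
    VenueReport("venue_a", 100.0, 500.0),
    VenueReport("venue_b", 100.2, 300.0),
    VenueReport("venue_c", 104.0, 10.0),  # thin venue with an off-market price
]

# Same prices, but venue_c reports an unverifiable, inflated volume.
tampered = honest[:2] + [VenueReport("venue_c", 104.0, 5000.0)]

print(f"honest VWAP:   {vwap(honest):.4f}")
print(f"tampered VWAP: {vwap(tampered):.4f}")
```

No price was changed between the two runs. Only the volume figure differs, which is why the provenance requirement has to cover volume as well as price.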

Systemic Risk and Liquidation Mechanisms

Data provenance bears directly on systemic risk in a leveraged environment. Decentralized options protocols rely on accurate price feeds to determine collateral ratios and execute liquidations. Without provenance, a manipulated data point cannot be distinguished from a legitimate one, so a single data manipulation attack can trigger cascading liquidations.

The data supply chain must be designed to resist such attacks by ensuring that data inputs are not only accurate but also delivered in a timely manner. The latency and staleness of data feeds are critical factors in data provenance. If a data point is delivered late, a protocol might liquidate a position based on outdated information, leading to unfair losses for the user.

The system’s resilience depends on the ability to prove that the data used for settlement was both correct and timely at the exact moment of execution.
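One way to express the timeliness requirement is a staleness guard that refuses to act on a price older than some bound. The sketch below is an assumption-laden illustration: `PricePoint`, `MAX_AGE_SECONDS`, the 60-second bound, and the 1.5 minimum collateral ratio are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass

MAX_AGE_SECONDS = 60  # hypothetical freshness bound


class StalePriceError(Exception):
    """Raised when a price point is too old (or post-dated) to act on."""


@dataclass(frozen=True)
class PricePoint:
    price: float
    observed_at: int  # unix timestamp, in seconds, attached by the data source


def checked_price(point: PricePoint, now: int, max_age: int = MAX_AGE_SECONDS) -> float:
    """Return the price only if its timestamp is within the allowed age."""
    age = now - point.observed_at
    if age < 0:
        raise StalePriceError("price timestamp is in the future")
    if age > max_age:
        raise StalePriceError(f"price is {age}s old, limit is {max_age}s")
    return point.price


def is_liquidatable(collateral_units: float, debt: float, point: PricePoint,
                    now: int, min_ratio: float = 1.5) -> bool:
    """Decide liquidation from a price that has passed the freshness check."""
    price = checked_price(point, now)
    return (collateral_units * price) / debt < min_ratio


NOW = 1_700_000_000
fresh = PricePoint(price=100.0, observed_at=NOW - 5)
stale = PricePoint(price=100.0, observed_at=NOW - 600)

print(is_liquidatable(1.0, 80.0, fresh, NOW))  # ratio 1.25 is below 1.5
try:
    is_liquidatable(1.0, 80.0, stale, NOW)
except StalePriceError as exc:
    print("refused:", exc)
```

The guard only works if the timestamp itself has provenance. A timestamp supplied by an unverified party can simply be set to look fresh.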

| Options Input Data | Risk Parameter Impacted | Provenance Requirement |
|---|---|---|
| Underlying Spot Price | Delta, Gamma, Collateral Value | Verifiable trade data from multiple sources, timestamped. |
| Implied Volatility Surface | Vega, Theta | Transparent aggregation methodology, source validation. |
| Risk-Free Rate | Pricing, Carry Cost | Verifiable on-chain rate or reliable off-chain source. |
| Liquidation Thresholds | Systemic Risk, Solvency | Real-time data feeds with verifiable aggregation logic. |

Approach

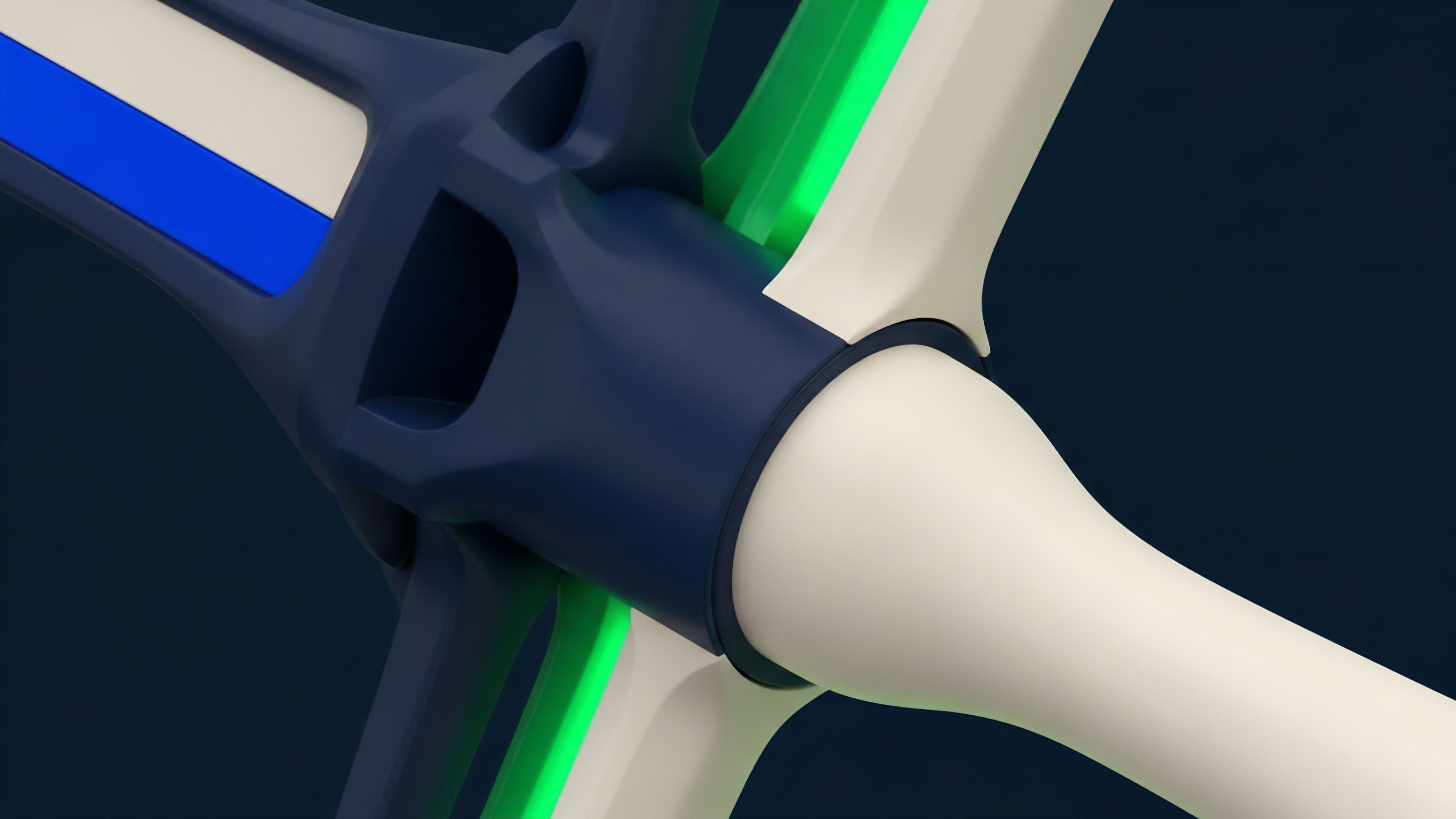

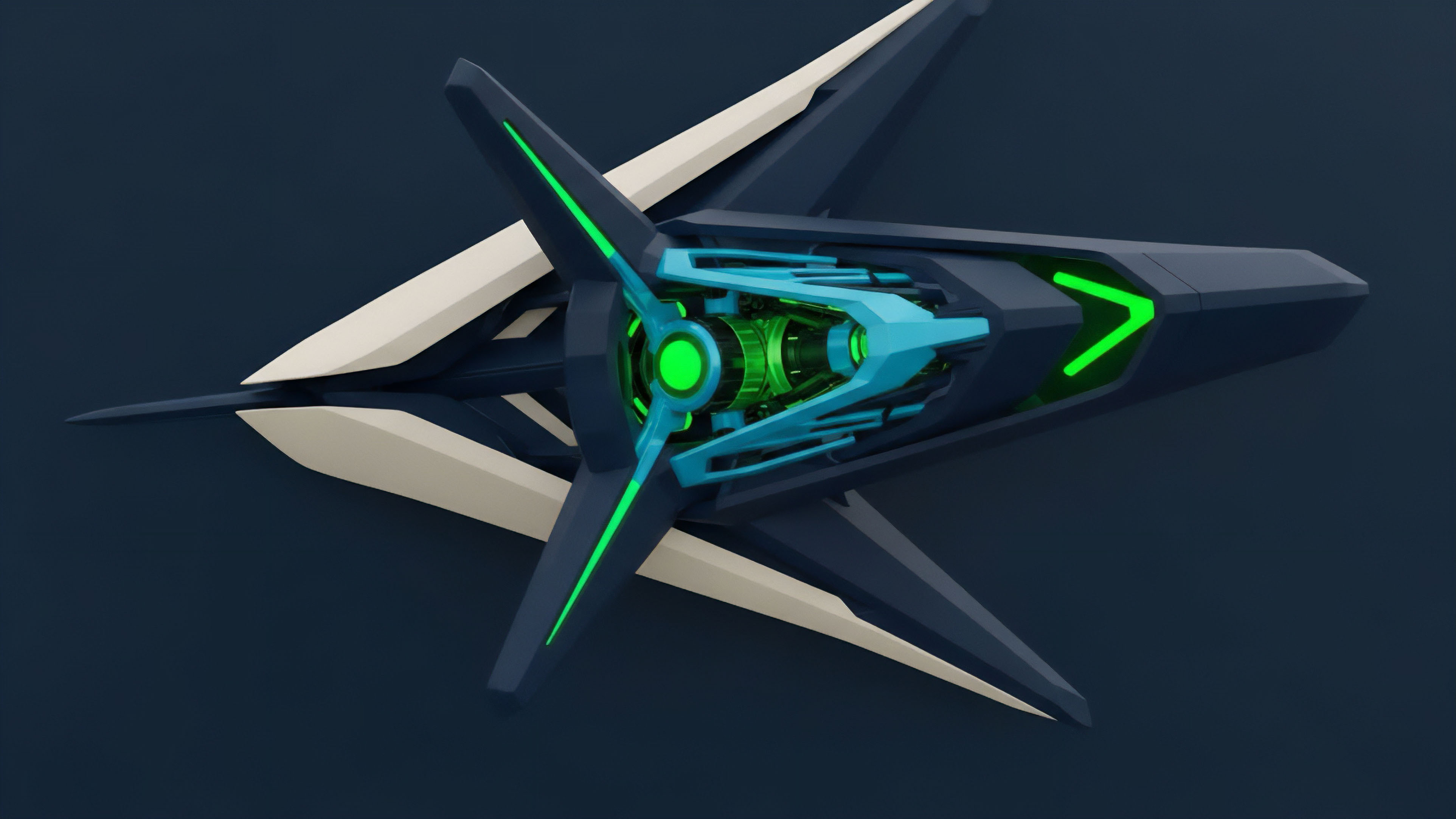

Current implementations of data provenance in decentralized options protocols focus on two primary mechanisms: robust oracle networks and transparent data aggregation logic. The objective is to create a data supply chain where data points are difficult to manipulate and easy to verify.

Oracle Network Architecture

Oracle networks, such as Chainlink or Pyth, serve as the data backbone for decentralized derivatives. They collect data from multiple sources (exchanges, data providers) and aggregate it before feeding it to the smart contract. The provenance in this approach is established by:

- Source Validation: The oracle network verifies that data providers are legitimate and correctly incentivized.

- Aggregation Methodology: The specific logic used to combine multiple data points into a single output (e.g. median, volume-weighted average) is transparent and auditable on-chain.

- Data Attestation: Data providers cryptographically sign their data submissions, providing an on-chain record of where the data originated.

The choice of aggregation method significantly affects the resilience of the system. A median tolerates outliers from a minority of malicious or faulty providers, while a VWAP better reflects true market price discovery but requires verifiable volume data in addition to prices.
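The attestation and aggregation steps listed above can be sketched together. This is a simplified illustration, not how any named oracle network implements it: the provider names and keys are hypothetical, and HMAC with a shared key stands in for the public-key signatures a real network would use, purely so the example runs on the Python standard library.

```python
import hashlib
import hmac
import statistics

# Hypothetical per-provider keys. HMAC is a stand-in for real
# public-key signatures, used here only to keep the sketch stdlib-only.
PROVIDER_KEYS = {
    "provider_a": b"secret-a",
    "provider_b": b"secret-b",
    "provider_c": b"secret-c",
}


def _message(provider: str, price: float, timestamp: int) -> bytes:
    """Canonical byte encoding of the fields covered by the attestation."""
    return f"{provider}|{price!r}|{timestamp}".encode()


def sign_report(provider: str, price: float, timestamp: int) -> dict:
    """Build a report carrying an attestation over provider, price, and time."""
    tag = hmac.new(PROVIDER_KEYS[provider], _message(provider, price, timestamp),
                   hashlib.sha256).hexdigest()
    return {"provider": provider, "price": price,
            "timestamp": timestamp, "signature": tag}


def verify_report(report: dict) -> bool:
    """Check that the attestation matches the report's contents."""
    key = PROVIDER_KEYS.get(report["provider"])
    if key is None:
        return False
    expected = hmac.new(
        key,
        _message(report["provider"], report["price"], report["timestamp"]),
        hashlib.sha256,
    ).hexdigest()
    return hmac.compare_digest(expected, report["signature"])


def aggregate_median(reports: list[dict], min_valid: int = 3) -> float:
    """Median of the prices whose attestations verify."""
    valid = [r["price"] for r in reports if verify_report(r)]
    if len(valid) < min_valid:
        raise ValueError("not enough attested reports")
    return statistics.median(valid)


TS = 1_700_000_000
reports = [
    sign_report("provider_a", 100.0, TS),
    sign_report("provider_b", 100.4, TS),
    sign_report("provider_c", 99.8, TS),
]
# A tampered copy: the price was changed after signing, so verification fails.
forged = dict(reports[0], price=500.0)

print(aggregate_median(reports + [forged]))
```

The forged report is dropped before aggregation because its attestation no longer matches its contents, and the median is then taken over the three verified prices.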

The integrity of a decentralized options protocol relies on the data supply chain being as robust and transparent as the smart contract code itself.

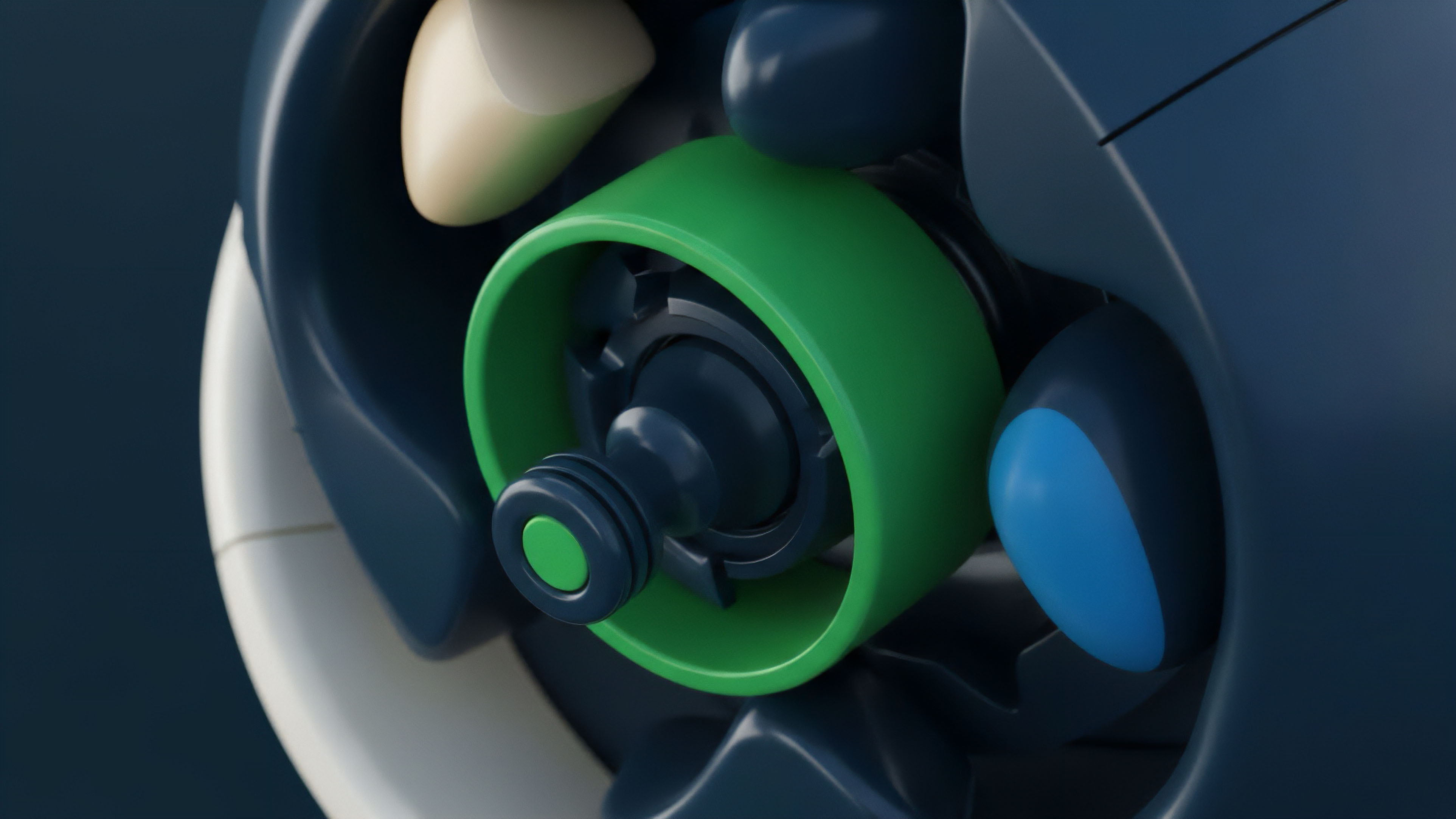

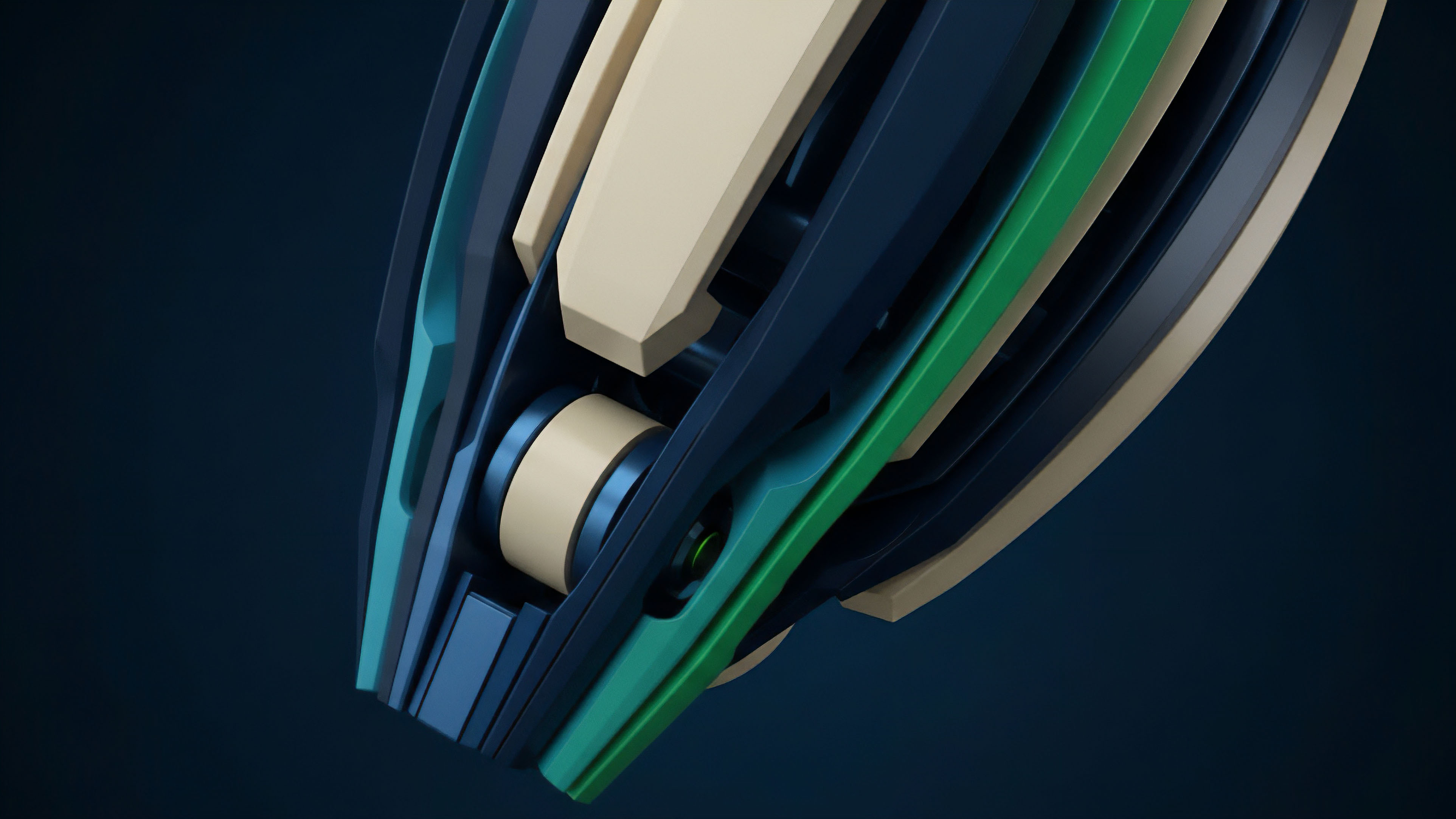

Data Supply Chain Optimization

For high-frequency options trading, data latency is as important as data integrity. The data supply chain must balance these two requirements. Some protocols employ a pull model, where the contract requests data when needed, while others use a push model, where data is continuously updated on-chain.

The push model keeps a recent value available on-chain at all times but increases transaction costs, since every update is paid for whether or not a contract consumes it. The trade-off between cost and latency is a critical design choice for options protocols, as it affects the accuracy of pricing and the risk of liquidations. The development of specialized oracle networks for derivatives, such as those that provide volatility surfaces rather than simple spot prices, represents an architectural shift toward higher-fidelity data provenance.

Evolution

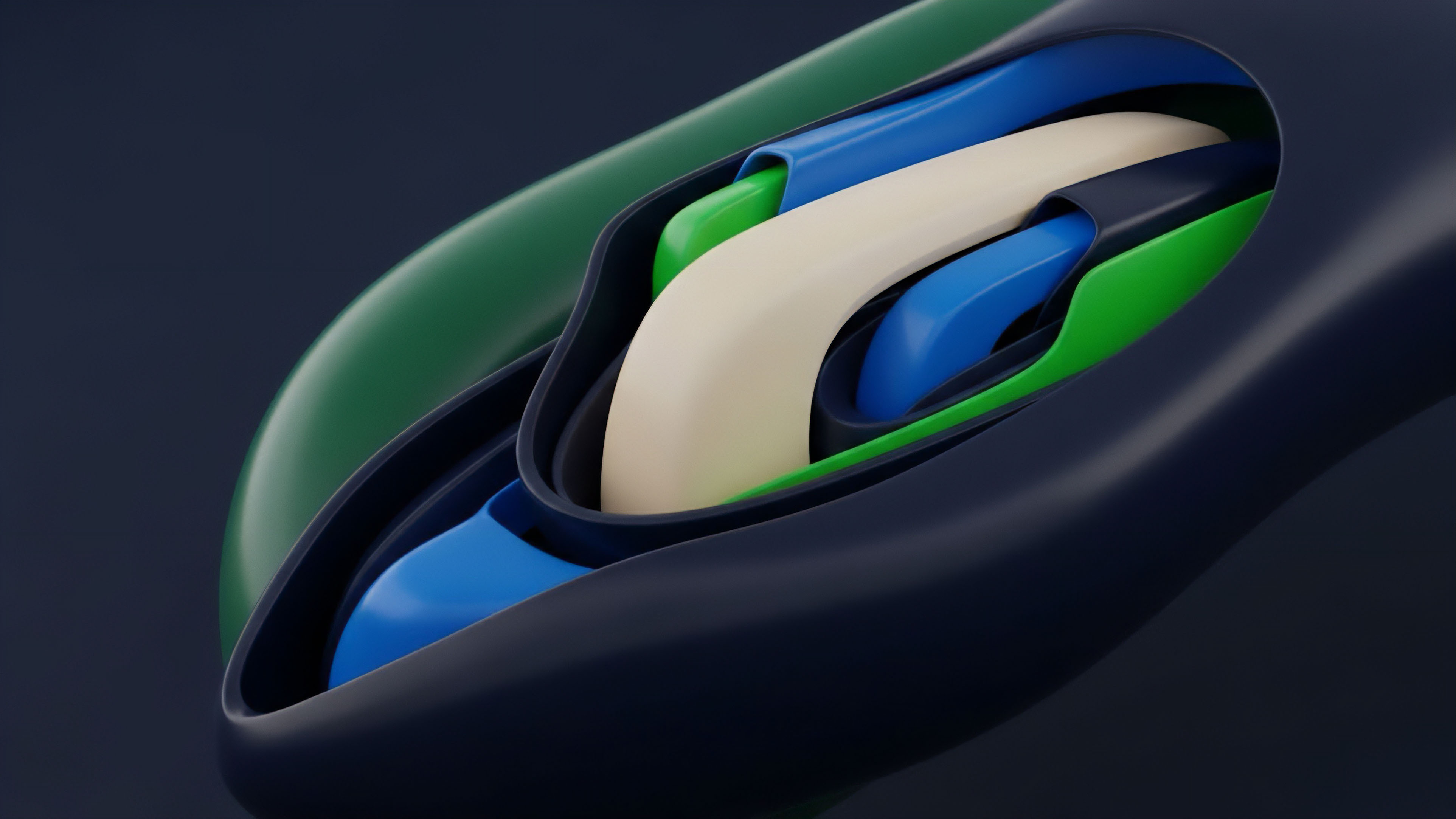

The evolution of data provenance in decentralized options has moved from basic, single-point price feeds to sophisticated, multi-dimensional data validation frameworks. Early protocols relied on simple time-weighted average prices (TWAPs) from a single venue for settlement. With a short averaging window or thin liquidity, these could be skewed by attacks, including flash-loan-funded trades, that artificially inflated or deflated prices during the window. The response to these vulnerabilities was the adoption of multi-source aggregation models.
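A time-weighted average price can be sketched in a few lines to show why the averaging window matters. The `twap` helper and the sample observations are hypothetical; the sketch assumes each observed price holds until the next observation and that observations are sorted by timestamp.

```python
def twap(observations: list[tuple[int, float]], window_start: int, window_end: int) -> float:
    """Time-weighted average price over [window_start, window_end).

    observations: (timestamp, price) pairs sorted by timestamp; each price
    is assumed to hold until the next observation (or the window end).
    """
    if window_end <= window_start:
        raise ValueError("window must have positive length")
    weighted_sum = 0.0
    covered = 0
    for i, (ts, price) in enumerate(observations):
        next_ts = observations[i + 1][0] if i + 1 < len(observations) else window_end
        start = max(ts, window_start)
        end = min(next_ts, window_end)
        if end > start:
            weighted_sum += price * (end - start)
            covered += end - start
    if covered == 0:
        raise ValueError("no observations cover the window")
    return weighted_sum / covered


# Price is pushed to 130 for one 60-second interval, then returns to 100.
manipulated = [(0, 100.0), (60, 130.0), (120, 100.0)]

print(twap(manipulated, 0, 180))   # long window dilutes the spike
print(twap(manipulated, 60, 120))  # short window is fully captured by it
```

A longer window dilutes a brief distortion but does not remove it, and every observation still comes from one venue, so the single-source weakness remains.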

From TWAP to Multi-Source Aggregation

The shift from TWAPs to multi-source aggregation addressed the “single point of failure” problem. Protocols now utilize a network of independent data providers. This decentralization of the data source increases the cost of attack significantly.

An attacker must manipulate multiple, disparate sources simultaneously to affect the aggregated price. This design choice represents a hardening of the data supply chain.
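The cost-of-attack argument can be checked with a toy model. The function below is a hypothetical illustration that assumes a median aggregator over an odd number of sources, where every honest source reports the same price and every corrupted source reports the attacker's price.

```python
import statistics


def aggregated_price(honest_price: float, attack_price: float,
                     n_sources: int, n_corrupted: int) -> float:
    """Median output when n_corrupted of n_sources report attack_price."""
    if not 0 <= n_corrupted <= n_sources:
        raise ValueError("n_corrupted must be between 0 and n_sources")
    prices = [attack_price] * n_corrupted + [honest_price] * (n_sources - n_corrupted)
    return statistics.median(prices)


# With 7 sources (odd, so the median is a single reported value),
# the output only moves once a majority of sources is corrupted.
for corrupted in range(0, 8):
    print(corrupted, aggregated_price(100.0, 200.0, n_sources=7, n_corrupted=corrupted))
```

In this model the aggregate stays at the honest price until four of the seven sources are controlled, which is the sense in which adding independent sources raises the cost of an attack. With an even number of sources the median averages the two middle values, so the threshold behaves slightly differently.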

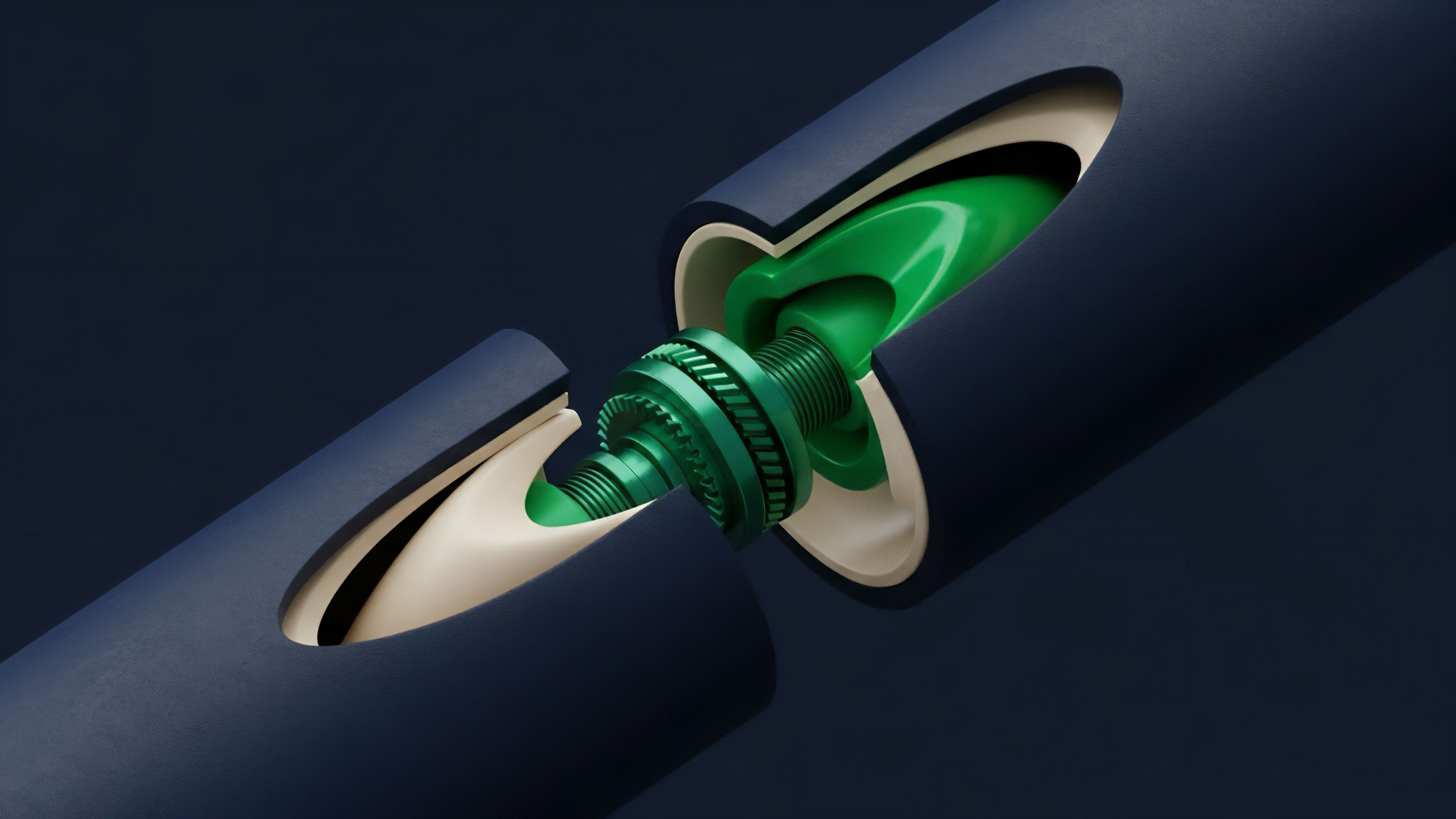

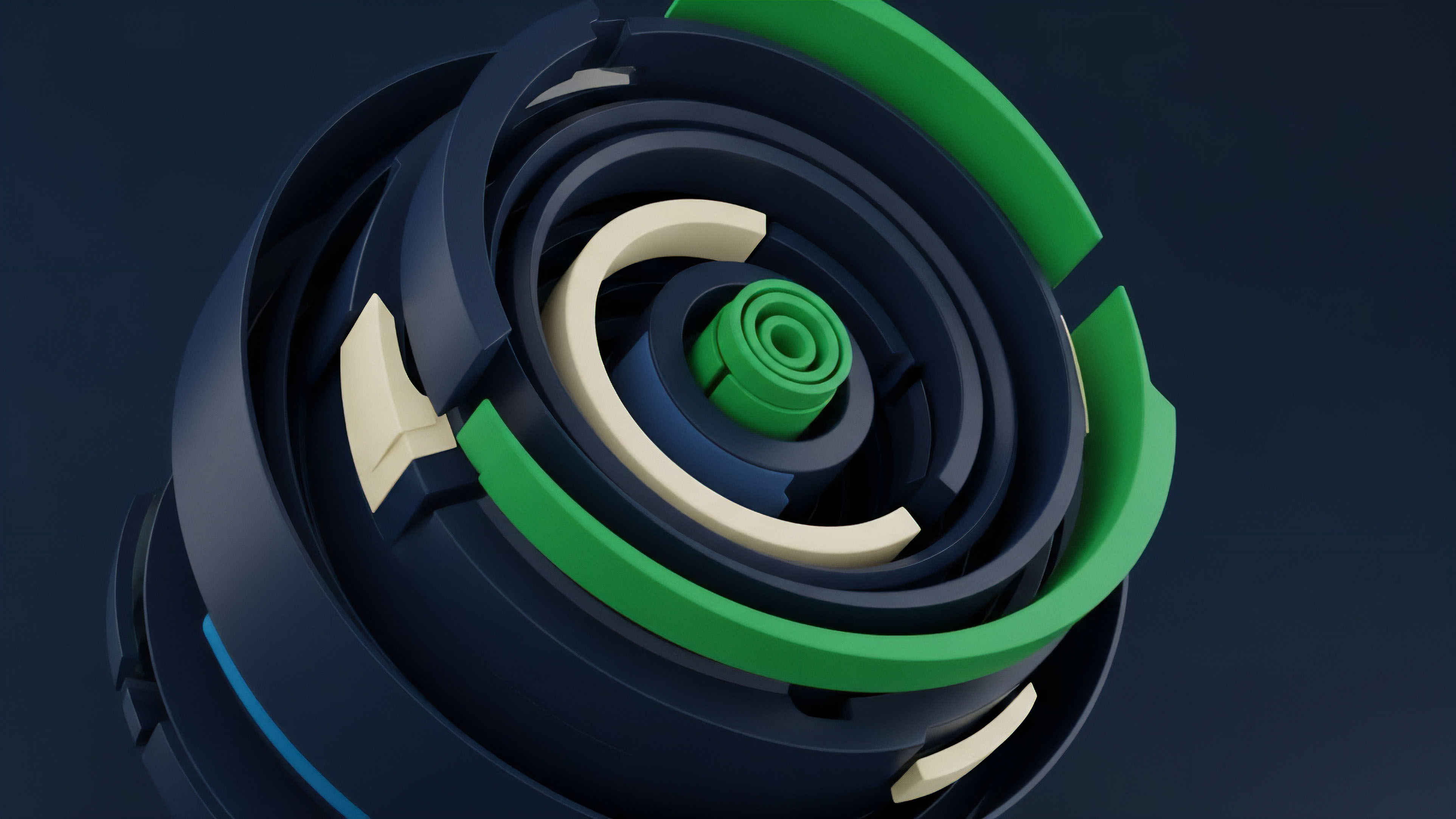

On-Chain Volatility Oracles

A significant recent development is the move toward on-chain volatility oracles. Instead of relying on off-chain data feeds for implied volatility, these oracles derive volatility directly from on-chain trading activity. This approach eliminates external data dependencies for a critical options pricing input.

The data provenance for these on-chain oracles is inherent to the blockchain itself, as every data point (trade) is recorded immutably. This design choice represents a full realization of the trustless data principle for derivatives.
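As a rough illustration of deriving a volatility figure from recorded trades, the sketch below computes annualized realized volatility from a series of evenly spaced prices. This is one possible reading of "deriving volatility from on-chain activity" and is an assumption on our part: realized volatility is not the same quantity as implied volatility, and the function name and sampling interval are hypothetical.

```python
import math
import statistics

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def realized_volatility(prices: list[float], interval_seconds: int) -> float:
    """Annualized standard deviation of log returns.

    prices: trade or pool prices sampled at a fixed interval, oldest first.
    """
    if len(prices) < 3:
        raise ValueError("need at least three prices for a sample standard deviation")
    log_returns = [math.log(later / earlier) for earlier, later in zip(prices, prices[1:])]
    per_interval_std = statistics.stdev(log_returns)
    # Scale by the square root of the number of intervals in a year.
    return per_interval_std * math.sqrt(SECONDS_PER_YEAR / interval_seconds)


# Hypothetical hourly prices read from recorded on-chain trades.
hourly_prices = [100.0, 101.0, 100.0, 101.0, 100.0]
print(f"{realized_volatility(hourly_prices, interval_seconds=3600):.4f}")
```

Because every input price corresponds to a recorded trade, anyone can recompute the figure from chain history, which is the provenance property described above.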

| Data Provenance Model | Characteristics | Primary Risk Mitigation |
|---|---|---|
| Single-Source TWAP | Low cost, high latency, simple aggregation. | None; high risk of flash loan attacks. |
| Multi-Source Median | Decentralized sources, robust against single-source failure. | Data source manipulation. |
| On-Chain Volatility Oracle | Derives data from on-chain trades, eliminates external dependencies. | Oracle manipulation risk, data source integrity. |

Horizon

The next generation of data provenance will likely be defined by a shift from reactive security measures to proactive, cryptographically verifiable data integrity. This involves the integration of advanced cryptographic techniques and new incentive structures to ensure data accuracy before it ever reaches the options protocol.

Zero-Knowledge Proofs for Data Integrity

The application of zero-knowledge proofs (ZKPs) to data provenance represents a significant advancement. ZKPs allow a data provider to prove that a data point originated from a specific source (e.g., a high-volume exchange) and adheres to specific rules without revealing the underlying raw data behind it. This provides a mechanism for verifying data integrity while preserving the privacy of the underlying trade information.

For options protocols, this means receiving cryptographically guaranteed data inputs without needing to trust the data provider.

The future of data provenance involves moving beyond simple data aggregation to cryptographically verifiable data streams, ensuring data integrity without sacrificing privacy.

Data Incentivization and Attestation Markets

The future architecture of data provenance will also include more sophisticated incentive mechanisms. Data providers will be rewarded for submitting accurate data and penalized for inaccuracies. This creates a market for data integrity where data quality is economically enforced.

The development of specialized data attestation markets will allow options protocols to source highly specific, verifiable data feeds, such as specific volatility surfaces for exotic options, rather than relying on general-purpose price feeds. This specialization will enable the creation of more complex derivative products that require a higher level of data integrity and provenance. The goal is to create a data supply chain where data quality is not assumed, but proven mathematically.
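A reward-and-penalty rule of the kind described can be sketched as follows. Everything here is a hypothetical design for illustration: the text does not say how accuracy would be judged, so the sketch assumes the median of the round's submissions is the reference, with a fixed reward inside a tolerance band and a proportional stake slash outside it.

```python
import statistics


def settle_round(stakes: dict[str, float], submissions: dict[str, float],
                 tolerance: float = 0.01, reward: float = 1.0,
                 slash_fraction: float = 0.10) -> tuple[float, dict[str, float]]:
    """Reward providers near the round median and slash those far from it.

    Returns the reference price and a new stake mapping (input is not mutated).
    """
    if not submissions:
        raise ValueError("no submissions in this round")
    reference = statistics.median(submissions.values())
    updated = dict(stakes)
    for provider, price in submissions.items():
        deviation = abs(price - reference) / reference
        if deviation <= tolerance:
            updated[provider] += reward
        else:
            updated[provider] -= updated[provider] * slash_fraction
    return reference, updated


stakes = {"provider_a": 100.0, "provider_b": 100.0, "provider_c": 100.0}
submissions = {"provider_a": 100.0, "provider_b": 100.5, "provider_c": 120.0}

reference, new_stakes = settle_round(stakes, submissions)
print(reference, new_stakes)
```

Using the median as the reference means a colluding majority could reward itself, so a rule like this complements independent sourcing rather than replacing it.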
