Essence

Data validation in crypto derivatives represents the fundamental bridge between the deterministic logic of a smart contract and the volatile, often chaotic reality of external market conditions. It is the process of ensuring that the data used for pricing, collateral calculations, and liquidation triggers is accurate, timely, and resistant to manipulation. Without robust data validation, a decentralized options protocol operates on assumptions, making it structurally unsound and vulnerable to exploitation.

The integrity of this data determines the solvency of the entire system, as financial derivatives are highly sensitive to price changes. A failure in validation can lead to incorrect margin calls, premature liquidations, or underpriced options, creating systemic risk for all participants. The core function of data validation is to transform external market information into a verifiable input that a smart contract can trust, thereby enabling the execution of complex financial logic in a trustless environment.


Origin

The necessity for advanced data validation arose from the “Oracle Problem,” a foundational challenge in decentralized finance. Early decentralized applications (dApps) struggled to obtain reliable off-chain data. Initial solutions relied on price feeds drawn from a single centralized exchange (CEX) or a small group of nodes.

This architecture created a single point of failure and introduced significant counterparty risk. The fragility of these early systems was starkly revealed during periods of extreme market volatility and in specific, targeted attacks. Flash loan attacks, in particular, demonstrated how an attacker could temporarily manipulate the price on a decentralized exchange (DEX): borrow a large amount of capital, execute a trade that artificially inflates or deflates the asset price, use that manipulated price to exploit an options protocol, and repay the loan, all within the same transaction.
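To make the manipulation step concrete, the following is a minimal sketch that assumes the DEX is a fee-less constant-product pool (reserves satisfying x · y = k). The pool sizes, the trade size, and the function names are hypothetical and chosen only for illustration.

```python
def spot_price(reserve_base: float, reserve_quote: float) -> float:
    """Spot price of the base asset, in quote units, for an x*y=k pool."""
    return reserve_quote / reserve_base


def swap_quote_for_base(reserve_base: float, reserve_quote: float, quote_in: float):
    """Sell `quote_in` into the pool (fees ignored) and return the new reserves."""
    k = reserve_base * reserve_quote
    new_quote = reserve_quote + quote_in
    new_base = k / new_quote  # the invariant x*y=k fixes the remaining base reserve
    return new_base, new_quote


# Hypothetical pool: 1,000 ETH against 2,000,000 USDC, so spot is 2,000.
base, quote = 1_000.0, 2_000_000.0
before = spot_price(base, quote)

# The attacker swaps 2,000,000 borrowed USDC into the pool.
base_after, quote_after = swap_quote_for_base(base, quote, 2_000_000.0)
after = spot_price(base_after, quote_after)

print(f"spot before: {before:.2f}, spot after: {after:.2f}")
```

In this toy pool a single trade quadruples the quoted spot price, which is why a protocol that reads the spot price inside the same transaction can be exploited.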

This vulnerability highlighted that data validation could not rely on simple spot prices and needed a more sophisticated approach to resist short-term price manipulation. The evolution of options protocols demanded a shift from simple data reporting to a complex system of data aggregation and verification to ensure systemic resilience.

Theory

The theoretical foundation of data validation for options protocols rests on the principle of minimizing the surface area for price manipulation by increasing the cost of attack.

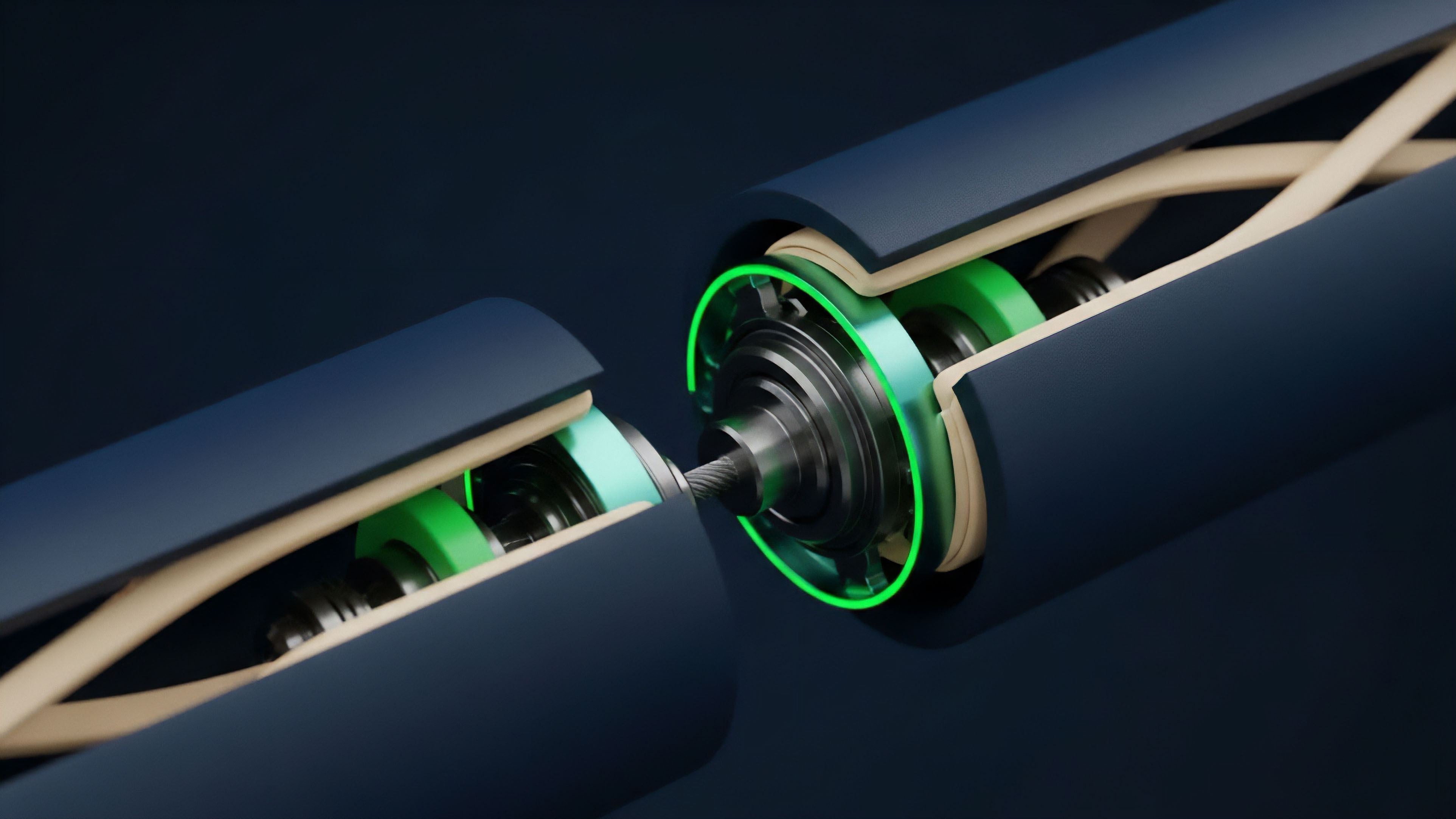

This is achieved through a multi-layered approach to data aggregation. The primary objective is to move beyond a single spot price and instead calculate a price that reflects true market depth and liquidity across multiple venues. This process involves several key mechanisms:

- Time-Weighted Average Price (TWAP): This method calculates the average price of an asset over a specific time interval. By averaging prices across time, TWAP makes it significantly harder for an attacker to manipulate the price for a brief period. A flash loan attack, which must complete within a single transaction, can distort only a small slice of a TWAP window that spans many blocks, so its effect on the average is diluted.

- Volume-Weighted Average Price (VWAP): VWAP calculations account for the volume traded at different prices. This method provides a more accurate representation of the asset’s price in a liquid market, as it gives greater weight to prices where larger amounts of capital changed hands. This helps filter out small, low-liquidity trades that might not reflect true market value.

- Medianization and Outlier Rejection: Oracle networks collect data from numerous independent data sources. A medianization process selects the middle value from all reported prices, effectively rejecting extreme outliers. This prevents a single malicious or faulty data source from corrupting the entire feed; more generally, the median stays within the range of honest reports as long as fewer than half of the sources are compromised. The system’s robustness therefore depends on having a sufficiently large and diverse set of honest data providers.
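The first two mechanisms above can be sketched in a few lines. This is an illustrative sketch, not a production oracle: the function names, the `(timestamp, price)` and `(price, quantity)` input shapes, and the sample numbers (including the 12-second spacing) are assumptions.

```python
from typing import Sequence, Tuple


def twap(observations: Sequence[Tuple[float, float]], end_time: float) -> float:
    """Time-weighted average of (timestamp, price) observations.

    Each price is assumed to hold from its timestamp until the next
    observation, or until `end_time` for the last one.
    """
    if not observations:
        raise ValueError("need at least one observation")
    weighted, total = 0.0, 0.0
    for i, (t, price) in enumerate(observations):
        t_next = observations[i + 1][0] if i + 1 < len(observations) else end_time
        dt = t_next - t
        if dt < 0:
            raise ValueError("observations must be sorted by time")
        weighted += price * dt
        total += dt
    if total == 0:
        raise ValueError("window has zero duration")
    return weighted / total


def vwap(trades: Sequence[Tuple[float, float]]) -> float:
    """Volume-weighted average of (price, quantity) trades."""
    volume = sum(qty for _, qty in trades)
    if volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * qty for price, qty in trades) / volume


# A 240-second window in which the price sits at 8,000 for 12 seconds only.
window = [(0.0, 2000.0), (108.0, 8000.0), (120.0, 2000.0)]
twap_price = twap(window, end_time=240.0)

# Two large trades near 2,000 and one tiny trade at 2,500.
trades = [(2000.0, 50.0), (2010.0, 30.0), (2500.0, 0.1)]
vwap_price = vwap(trades)

print(f"TWAP: {twap_price:.2f}, VWAP: {vwap_price:.2f}")
```

In the sample window the spot price quadruples briefly, yet the TWAP moves from 2,000 to only 2,300; likewise the tiny trade at 2,500 barely shifts the VWAP away from the prices at which real volume traded.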

The design challenge lies in balancing the need for a high-frequency price feed for accurate options pricing with the need for a slower, more secure TWAP/VWAP calculation. The latency inherent in secure aggregation methods creates a trade-off: a slower feed reduces manipulation risk but increases the risk of inaccurate pricing during high volatility.
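The trade-off can be illustrated with a small simulation. The sketch below assumes one price observation per block and an equal-weight rolling average; the window lengths and price paths are hypothetical.

```python
from typing import List, Sequence


def rolling_twap(prices: Sequence[float], window: int) -> List[float]:
    """Equal-weight average of the last `window` per-block prices."""
    out = []
    for i in range(len(prices)):
        recent = prices[max(0, i - window + 1): i + 1]
        out.append(sum(recent) / len(recent))
    return out


# Scenario A: a one-block manipulation spike from 100 to 400 at block 30.
spike = [100.0] * 40
spike[30] = 400.0

# Scenario B: a genuine market move from 100 down to 80 starting at block 30.
step = [100.0] * 30 + [80.0] * 10

for window in (5, 20):
    worst_spike = max(rolling_twap(spike, window))
    lagged = rolling_twap(step, window)[34]  # five blocks after the real move
    print(f"window={window:2d}  peak during spike={worst_spike:.1f}  "
          f"reported 5 blocks after drop={lagged:.1f}")
```

Under these assumptions the 20-block window limits the spike to 115 where the 5-block window lets it reach 160, but five blocks after the genuine drop to 80 the long window still reports 95 while the short one has fully caught up.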

Approach

The implementation of data validation in decentralized options protocols follows a structured framework designed to mitigate various forms of market manipulation.

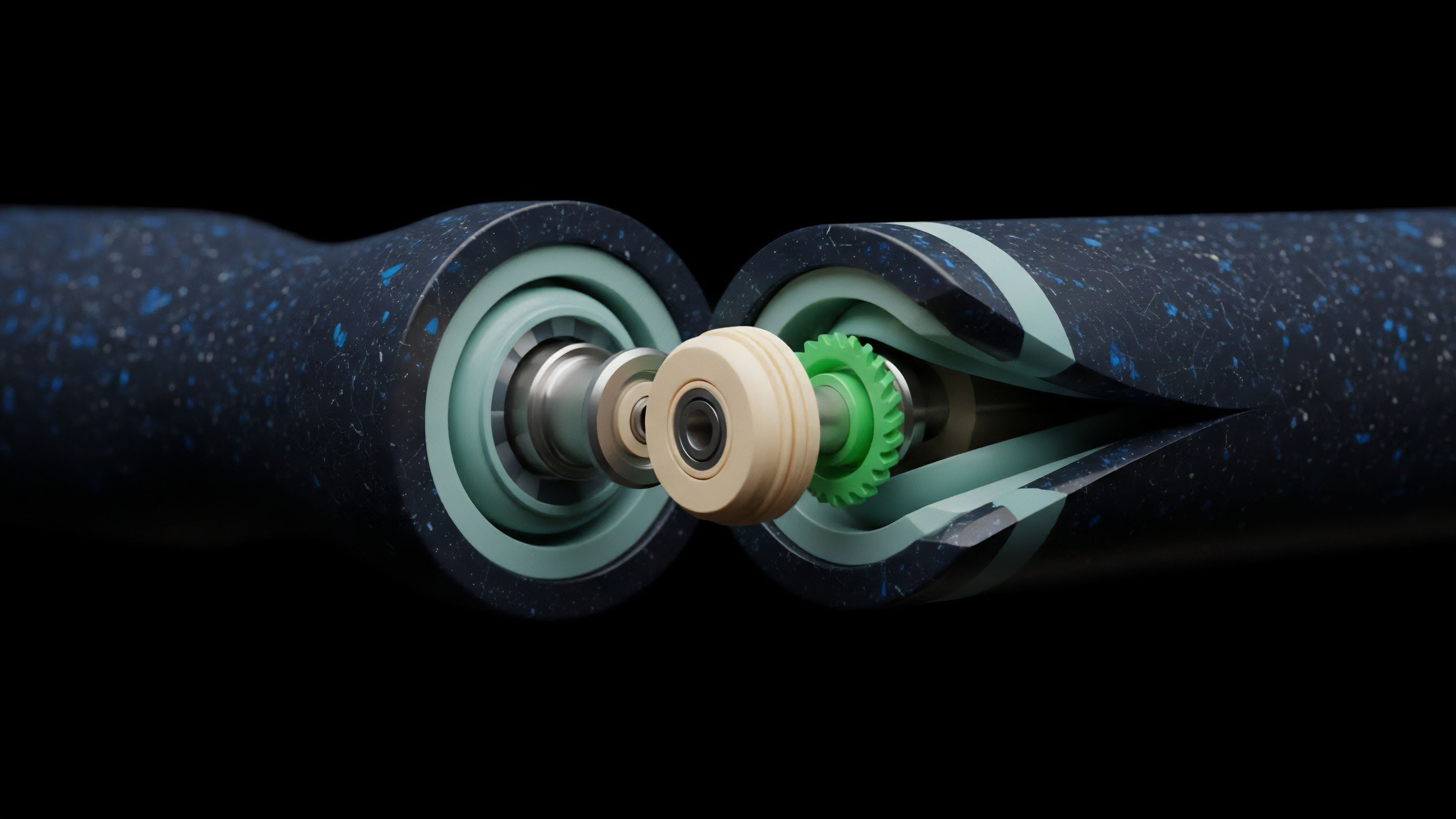

The architecture typically relies on decentralized oracle networks (DONs) to provide external data feeds. The validation process begins with data sourcing, where a network of independent data providers collects price data from multiple centralized and decentralized exchanges. This raw data is then processed through an aggregation layer, where the validation logic applies a combination of TWAP/VWAP calculations and medianization to derive a single, canonical price.
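As a rough sketch of the aggregation layer, the function below takes one price report per provider and returns their median, refusing to answer when too few valid reports arrive. The provider names, the `min_reports` quorum, and the sample values are hypothetical; a real oracle network would also verify signatures and timestamps.

```python
from statistics import median
from typing import Mapping


def aggregate_reports(reports: Mapping[str, float], min_reports: int = 3) -> float:
    """Return the median of provider reports, requiring a minimum quorum."""
    # Drop non-positive values; NaN also fails the `> 0` comparison.
    valid = [price for price in reports.values() if price > 0]
    if len(valid) < min_reports:
        raise ValueError(f"only {len(valid)} valid reports, need {min_reports}")
    return median(valid)


reports = {
    "provider_a": 2001.0,
    "provider_b": 1999.5,
    "provider_c": 2000.0,
    "provider_d": 2002.0,
    "provider_e": 9000.0,  # faulty or malicious outlier
}
canonical_price = aggregate_reports(reports)
print(canonical_price)
```

The outlier from `provider_e` does not move the result, because the median depends only on the ordering of the reports and not on how extreme the largest one is.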

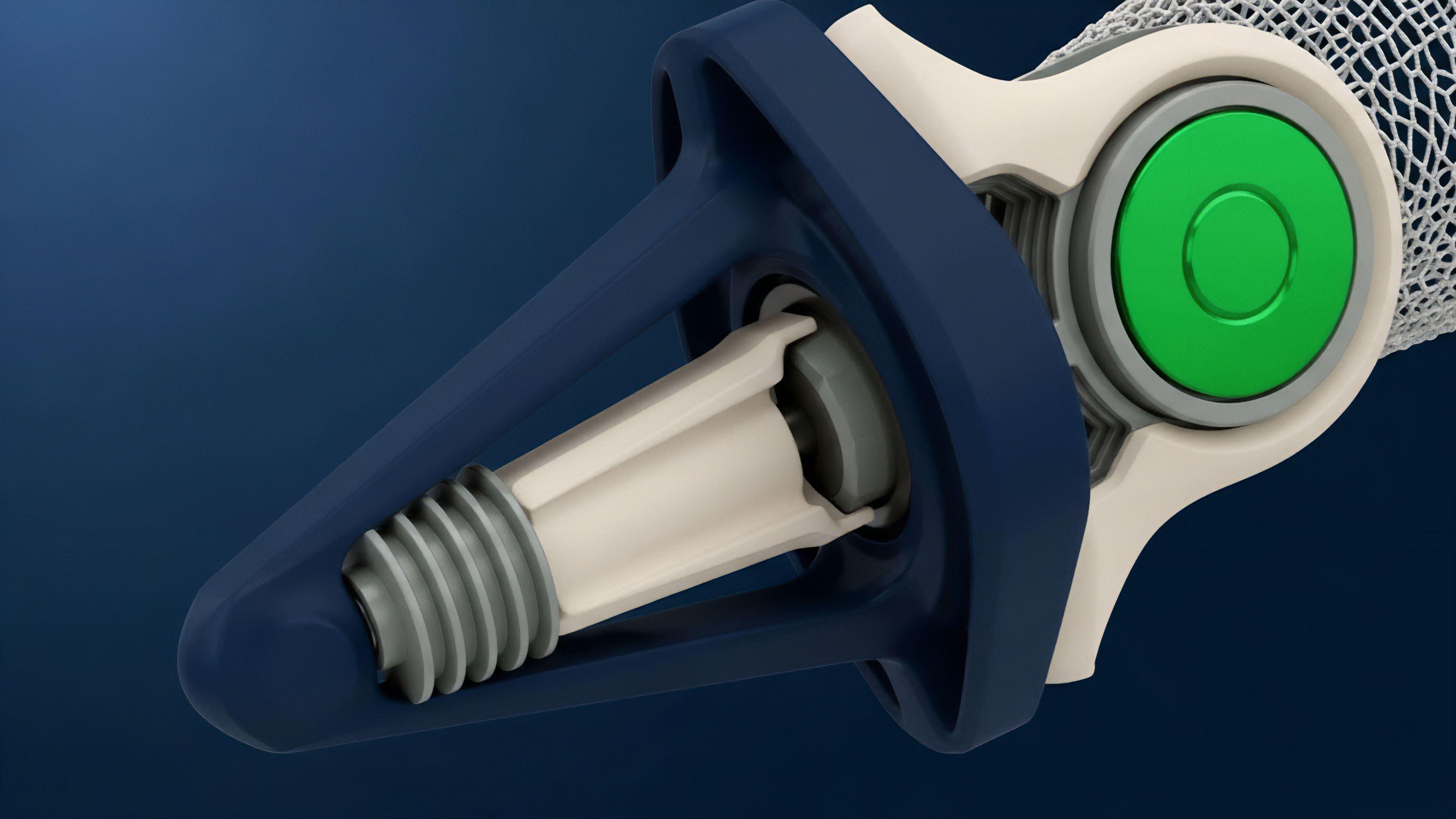

A critical component of this approach is the concept of a “Data Validation Framework.” This framework defines specific parameters for the options protocol’s collateral and liquidation engines.

| Validation Parameter | Purpose in Options Protocol | Risk Mitigation |
|---|---|---|
| Price Feed Type | Determines the strike price calculation and collateral value. | Prevents flash loan attacks by using TWAP/VWAP instead of spot prices. |
| Deviation Threshold | Sets the maximum acceptable difference between oracle updates. | Prevents rapid price changes from triggering liquidations based on stale data. |
| Liquidity Threshold | Checks for sufficient market depth before using a price feed. | Prevents price manipulation in low-liquidity markets. |
| Oracle Stake Requirement | Incentivizes data providers to submit honest data through economic penalties. | Aligns economic incentives with data integrity. |

This approach creates a robust data pipeline where a smart contract receives a pre-validated price. The challenge remains in ensuring the data sources themselves are diverse and in preventing “data provider collusion,” where a majority of providers work together to manipulate the price.
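Two of the parameters in the framework, the deviation threshold and the liquidity threshold, lend themselves to a short sketch. The version below reads the deviation threshold as a cap on the relative change from the last accepted price, which is one possible interpretation; the class, the function, and the numeric limits are all hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ValidationParams:
    max_deviation: float  # max relative change vs. last accepted price (0.10 = 10%)
    min_depth_usd: float  # minimum market depth required to trust the feed


def validate_update(
    last_price: float,
    new_price: float,
    depth_usd: float,
    params: ValidationParams,
) -> Tuple[bool, str]:
    """Return (accepted, reason) for a proposed oracle price update."""
    if last_price <= 0 or new_price <= 0:
        return False, "non-positive price"
    if depth_usd < params.min_depth_usd:
        return False, "insufficient market depth"
    deviation = abs(new_price - last_price) / last_price
    if deviation > params.max_deviation:
        return False, "deviation threshold exceeded"
    return True, "ok"


params = ValidationParams(max_deviation=0.10, min_depth_usd=1_000_000.0)

print(validate_update(2000.0, 2050.0, 5_000_000.0, params))  # small move, deep market
print(validate_update(2000.0, 2500.0, 5_000_000.0, params))  # 25% jump
print(validate_update(2000.0, 2050.0, 10_000.0, params))     # thin market
```

A rejected update would typically need a fallback, such as keeping the last accepted price or pausing liquidations; which fallback is appropriate is a protocol design decision that this sketch leaves open.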

Evolution

Data validation has undergone significant evolution, driven primarily by lessons learned from systemic failures.

The first generation of options protocols relied heavily on a simple medianizer, which aggregated data from a small, centralized set of trusted sources; compromising or losing a few of those sources was enough to corrupt the feed. The next generation introduced the concept of time-weighted averages to combat flash loan exploits.

However, a new set of vulnerabilities emerged when protocols began to rely on price feeds for assets with low liquidity. An attacker could still manipulate the price by targeting the low-liquidity venue used by the oracle network. The current evolution focuses on validating not just price, but also volatility and liquidity.

This involves integrating more sophisticated validation checks that monitor market depth across different exchanges. The most advanced systems are moving toward “oracle-less” solutions for certain types of derivatives, where settlement is determined by a decentralized, in-protocol mechanism rather than an external price feed. For example, a protocol might use a synthetic asset that tracks the underlying price rather than directly querying an external oracle.
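One way a protocol might validate volatility alongside price is to compare a reported volatility figure with the volatility realized in recent price observations. The sketch below is a hypothetical sanity check only: the function names and the tolerance are assumptions, and implied volatility can legitimately differ from realized volatility.

```python
import math
from typing import Sequence


def realized_volatility(prices: Sequence[float]) -> float:
    """Sample standard deviation of log returns, per observation interval."""
    if len(prices) < 3:
        raise ValueError("need at least three prices")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance)


def volatility_in_band(prices: Sequence[float], reported: float, tolerance: float = 0.5) -> bool:
    """Check that `reported` is within a relative `tolerance` of realized volatility."""
    realized = realized_volatility(prices)
    return abs(reported - realized) <= tolerance * realized


recent_prices = [100.0, 101.0, 99.0, 102.0, 98.0]
realized = realized_volatility(recent_prices)

print(volatility_in_band(recent_prices, reported=realized))      # consistent report
print(volatility_in_band(recent_prices, reported=3 * realized))  # suspiciously high
```

The result is expressed per observation interval; annualizing it would require multiplying by the square root of the number of intervals per year, which depends on the sampling frequency the protocol chooses.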

The evolution of data validation in DeFi reflects a transition from relying on simple external price feeds toward more self-contained systems, where integrity is maintained through in-protocol mechanisms that validate price, liquidity, and volatility.

Horizon

Looking ahead, the next phase of data validation for decentralized derivatives will be defined by two key areas: enhanced data integrity and economic resilience. We will see a shift toward zero-knowledge proofs (ZKPs) for oracle data. ZKPs allow a data provider to prove that a price feed was generated correctly according to specific rules without revealing the raw data sources or calculation methodology. This significantly increases privacy and security, as the validation process itself is verifiable on-chain.

Another critical development will be the integration of machine learning models to predict and preempt data manipulation. These models will analyze historical market data and real-time order flow to identify anomalous price movements that suggest manipulation attempts. The future state of data validation will not be passive; it will actively monitor for threats and adjust validation parameters dynamically.

The ultimate goal is to create a validation layer that is so robust and decentralized that the cost of manipulating it outweighs any potential profit from exploiting a derivatives position. This will enable a new class of complex, high-leverage options that are currently impossible to offer securely on-chain.
