Essence

Data Source Centralization in crypto options refers to the reliance on a single or small set of external data feeds to establish the underlying asset price for derivatives contracts. This price feed underpins a protocol’s core functions, specifically calculating margin requirements, determining collateral value, and executing liquidations. While decentralized finance seeks to eliminate single points of failure, many options protocols still face a fundamental architectural trade-off.

The on-chain execution of a derivative contract, which requires precise pricing data, often necessitates pulling information from off-chain sources. When this external data feed is controlled by a single entity, or aggregated from a small, non-diverse set of sources, the entire protocol’s integrity becomes dependent on that entity’s honesty and operational stability. This centralization introduces systemic risk, where a compromise of the data source can cascade through the entire protocol, leading to incorrect liquidations or market manipulation.

The choice of data source determines the protocol’s susceptibility to various attack vectors, including flash loan attacks that exploit temporary price discrepancies between the on-chain oracle and the broader market. A truly robust derivatives market requires a data feed that accurately reflects global market sentiment and price discovery across multiple exchanges, without relying on a centralized authority.

Data Source Centralization represents the inherent tension between a protocol’s need for real-time price accuracy and its core philosophical commitment to decentralization.

Origin

The challenge of Data Source Centralization emerged early in DeFi’s history. Early decentralized exchanges (DEXs) were primarily spot markets and relied on Automated Market Makers (AMMs) where the price was determined algorithmically based on the ratio of assets within the liquidity pool. However, options and perpetual futures require a reliable, real-time index price that reflects the global market, not just the isolated price within a single AMM pool.

The first iterations of derivatives protocols, seeking to launch quickly and maintain high performance, often defaulted to using a single, trusted API from a major centralized exchange (CEX) as their price oracle. This design choice created an immediate contradiction. The protocol claimed to be decentralized, yet its core financial mechanism, the liquidation engine, was entirely dependent on a centralized third party.

The historical context of this choice is rooted in a pragmatic trade-off. Building a decentralized oracle network was technically complex and resource-intensive, while a CEX API offered speed, reliability, and low latency, which are critical for preventing arbitrage and ensuring capital efficiency in a high-leverage environment. The early adoption of this centralized model established a precedent that later protocols had to actively work to dismantle, creating the ongoing tension in derivatives architecture.

Theory

From a quantitative finance perspective, Data Source Centralization introduces significant theoretical risks to options pricing models. The Black-Scholes-Merton model, while a simplification, relies on assumptions of continuous trading and efficient markets where price information is readily available. A centralized oracle violates these assumptions by introducing “oracle latency risk.” This latency refers to the time delay between the real market price changing and the on-chain oracle updating its value.

The impact on option Greeks is substantial. Delta and gamma are computed from the underlying price, so a stale or manipulated feed misstates hedge ratios as well as option values. The volatility surface, which maps implied volatility across different strikes and maturities, is likewise distorted if the underlying price feed is not truly reflective of market consensus. If a centralized oracle is compromised, the protocol’s margin engine may incorrectly calculate the collateral value, leading to a cascade of liquidations based on a false price.

This creates a systemic risk where a single point of failure can trigger a solvency crisis for the entire protocol. The theoretical solution requires a robust data aggregation mechanism that minimizes the probability of manipulation.
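To make oracle latency risk concrete, the following is a minimal sketch that prices the same call option twice with the Black-Scholes-Merton formula: once at the current market price and once at a stale oracle price. All inputs (spot levels, strike, volatility, expiry) are illustrative assumptions, not figures from any protocol.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, rate: float, vol: float, t: float):
    """Black-Scholes-Merton price and delta of a European call (no dividends)."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    price = spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)
    return price, norm_cdf(d1)


# Assumed scenario: the market has moved to 2000, the oracle still reports 1960.
market_price, market_delta = bs_call(spot=2000.0, strike=2000.0, rate=0.0, vol=0.8, t=7 / 365)
stale_price, stale_delta = bs_call(spot=1960.0, strike=2000.0, rate=0.0, vol=0.8, t=7 / 365)

print(f"call value: market={market_price:.2f} oracle={stale_price:.2f}")
print(f"delta:      market={market_delta:.3f} oracle={stale_delta:.3f}")
```

A protocol that marks positions at the stale spot undervalues the call and understates its delta, so both margin and hedging decisions are made against the wrong numbers until the oracle catches up.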

Risk to Liquidation Mechanisms

A centralized data source’s primary threat is to the protocol’s liquidation logic. The system’s ability to automatically liquidate under-collateralized positions depends on an accurate and timely price feed. If a centralized oracle reports an artificially low price, healthy positions may be liquidated prematurely, causing significant losses for users.

Conversely, if the oracle reports an artificially high price, under-collateralized positions may remain open, transferring risk to the protocol’s insurance fund or liquidity providers.
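Both failure modes can be illustrated with a simplified maintenance-margin check. This is a sketch under assumed rules: the `Position` type, the `is_liquidatable` function, and the 1.25 maintenance ratio are hypothetical and not taken from any specific protocol.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral_units: float  # collateral held, in units of the underlying asset
    debt_usd: float          # obligation owed to the protocol, in USD


def is_liquidatable(pos: Position, oracle_price: float, maintenance_ratio: float = 1.25) -> bool:
    """A position is liquidatable when collateral value falls below debt * ratio."""
    collateral_value = pos.collateral_units * oracle_price
    return collateral_value < pos.debt_usd * maintenance_ratio


true_price = 2000.0

# Healthy at the true price, but liquidated if the oracle reports too low.
healthy = Position(collateral_units=1.0, debt_usd=1500.0)
wrongly_liquidated = is_liquidatable(healthy, oracle_price=1800.0)

# Under-collateralized at the true price, but left open if the oracle reports too high.
unhealthy = Position(collateral_units=1.0, debt_usd=1700.0)
wrongly_kept_open = not is_liquidatable(unhealthy, oracle_price=2200.0)

print(wrongly_liquidated, wrongly_kept_open)
```

In the first case the user bears the loss. In the second, the shortfall stays on the protocol’s books until the price feed corrects.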

Arbitrage and Market Efficiency

Market makers rely on accurate pricing to manage their risk and profit from discrepancies. A centralized oracle, particularly one with high latency, creates predictable arbitrage opportunities for automated bots. These bots can observe a price change on a CEX before the oracle updates on-chain, allowing them to execute trades at the outdated on-chain price for a near risk-free profit once fees are covered.

While this activity can help to align on-chain and off-chain prices, it also places significant stress on the protocol and can be exploited maliciously.
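The size of that opportunity can be estimated with simple arithmetic. The sketch below assumes a bot buys at the stale on-chain price and hedges on the CEX, paying a proportional fee on both legs. The function name and the fee model are illustrative assumptions.

```python
def stale_price_edge(cex_price: float, oracle_price: float, size: float, fee_rate: float) -> float:
    """Net profit from buying `size` units at the stale oracle price and
    selling them at the CEX price, with a proportional fee on each leg."""
    gross = (cex_price - oracle_price) * size
    fees = fee_rate * size * (cex_price + oracle_price)
    return gross - fees


# A 0.5% lag between the CEX (2010) and the oracle (2000).
profitable = stale_price_edge(cex_price=2010.0, oracle_price=2000.0, size=1.0, fee_rate=0.001)
unprofitable = stale_price_edge(cex_price=2010.0, oracle_price=2000.0, size=1.0, fee_rate=0.003)

print(profitable > 0, unprofitable > 0)
```

Under these assumptions the trade only pays when the price lag exceeds round-trip fees, which is one reason protocols tune fees and oracle update thresholds together.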

| Data Source Type | Latency Risk | Manipulation Risk | Capital Efficiency |
|---|---|---|---|
| Centralized API (Single Source) | High (SPOF) | High (SPOF) | High (Fast updates) |
| Decentralized Oracle Network (DON) | Low (Aggregated) | Low (Distributed) | Medium (Latency/Cost) |
| On-Chain AMM Price Feed | Lowest (Instant) | High (Flash Loan Attack) | Low (Liquidity Depth) |

Approach

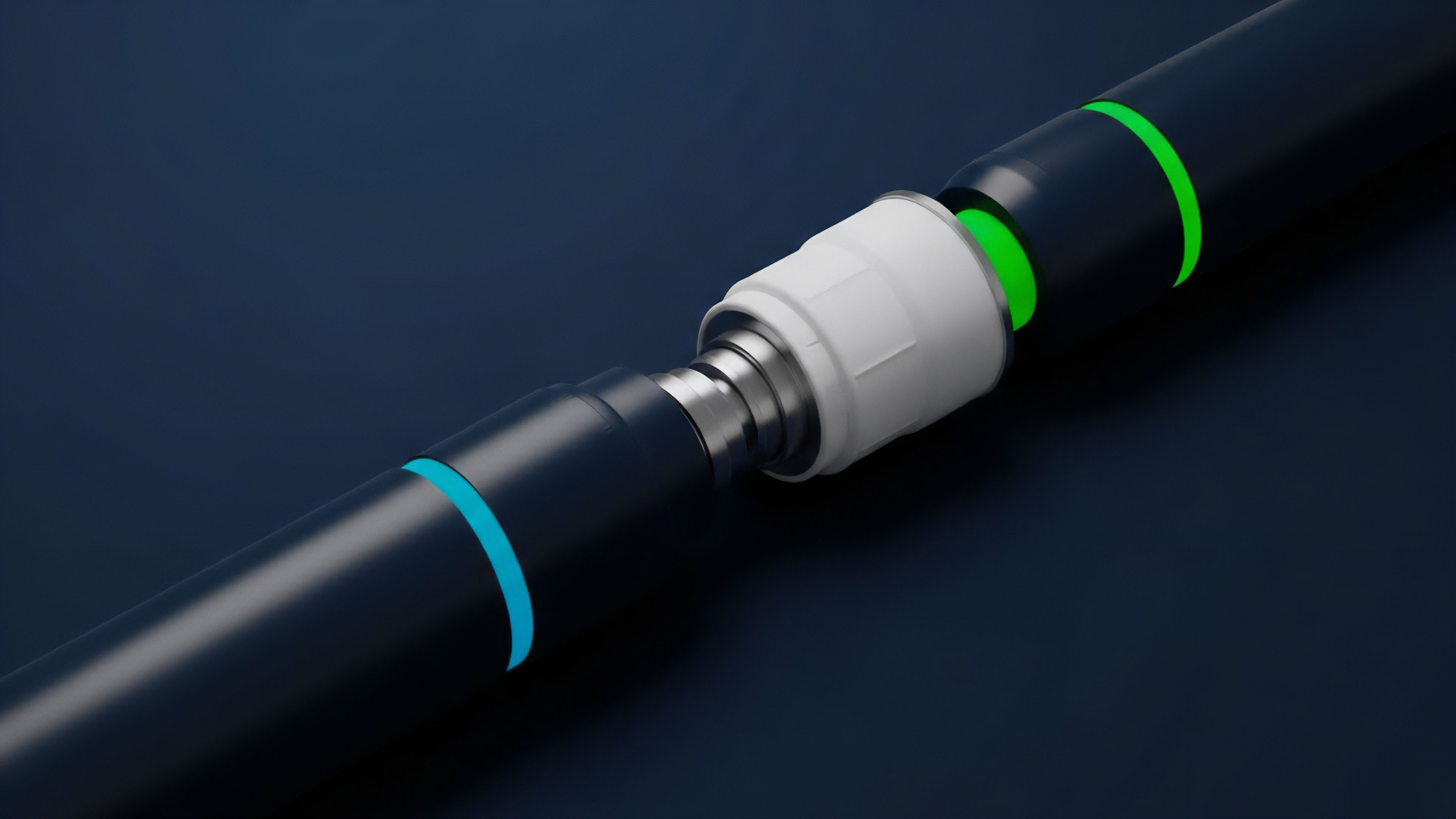

The pragmatic approach to mitigating Data Source Centralization involves a layered strategy of data aggregation and verification. Protocols have moved away from relying on a single source and instead employ sophisticated data aggregation methods. This approach typically involves collecting price data from multiple independent sources, including major centralized exchanges and decentralized AMMs, and then processing this data through a series of filters.

A key technique is calculating the median price rather than a simple average. The median is robust against outliers: as long as fewer than half of the sources report a manipulated price, the extreme values do not move the result, preserving the integrity of the feed. Another approach is volume-weighted average price (VWAP), which assigns greater importance to prices from exchanges with higher trading volume.
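The difference between these aggregation methods shows up clearly on a small example. The sketch below uses made-up quotes from five hypothetical venues, one of which reports a manipulated price on thin volume.

```python
from statistics import mean, median

# (price, traded volume) per venue; the last quote is an assumed manipulated outlier.
quotes = [
    (2000.0, 500.0),
    (2001.0, 300.0),
    (1999.0, 400.0),
    (2002.0, 200.0),
    (1500.0, 5.0),
]


def vwap(quotes):
    """Volume-weighted average price across all venues."""
    total_volume = sum(volume for _, volume in quotes)
    return sum(price * volume for price, volume in quotes) / total_volume


prices = [price for price, _ in quotes]
mean_price = mean(prices)      # dragged down by the outlier
median_price = median(prices)  # unaffected by a single bad source
vwap_price = vwap(quotes)      # outlier carries little weight due to low volume

print(mean_price, median_price, round(vwap_price, 2))
```

Here the simple average is pulled roughly 100 below the honest quotes, while the median and VWAP stay close to them. VWAP’s protection depends on the reported volumes being genuine, so it is often combined with other filters rather than used alone.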

The Role of Decentralized Oracle Networks

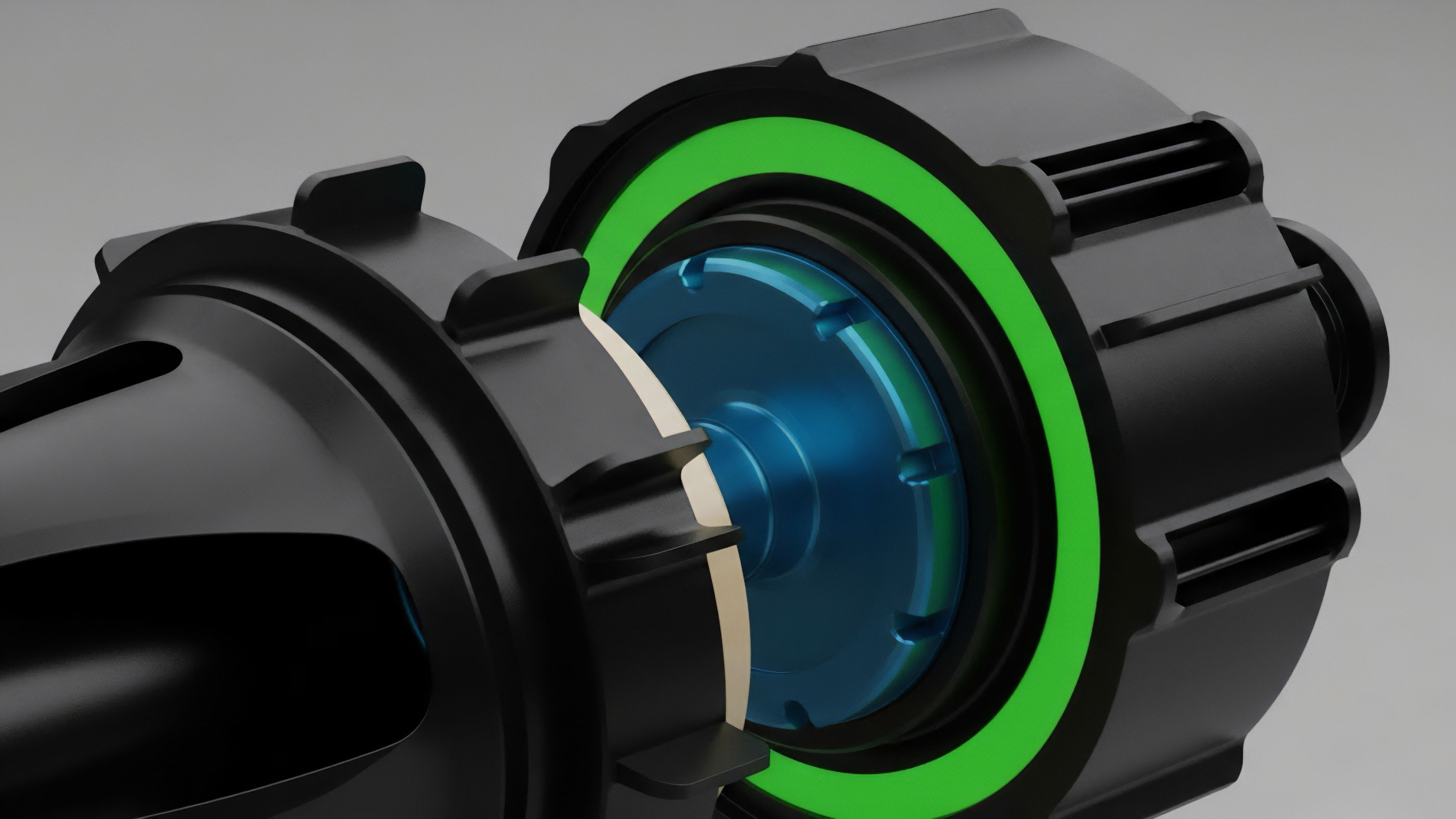

Modern protocols largely rely on Decentralized Oracle Networks (DONs) to provide price feeds. A DON aggregates data from a diverse set of independent node operators. The network incentivizes these operators to report accurately and punishes them for providing incorrect data.

This mechanism shifts the trust from a single entity to a distributed network, significantly reducing the risk of a single point of failure.
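One way to picture a single reporting round is sketched below: node reports are aggregated by median, and any node whose report deviates too far from the answer is flagged for a penalty. The node names, the 1% threshold, and the flagging rule are assumptions for illustration. Real networks define their own aggregation and penalty mechanisms.

```python
from statistics import median
from typing import Dict, List, Tuple


def aggregate_round(reports: Dict[str, float], max_deviation: float = 0.01) -> Tuple[float, List[str]]:
    """Return the median answer and the nodes deviating more than max_deviation from it."""
    answer = median(reports.values())
    flagged = sorted(
        node for node, price in reports.items()
        if abs(price - answer) / answer > max_deviation
    )
    return answer, flagged


reports = {
    "node-a": 2000.5,
    "node-b": 1999.8,
    "node-c": 2000.1,
    "node-d": 2400.0,  # faulty or dishonest report
    "node-e": 2000.0,
}

answer, flagged = aggregate_round(reports)
print(answer, flagged)
```

The faulty report neither moves the published answer nor goes unnoticed, which is the property that lets trust shift from one operator to the network as a whole.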

Optimistic Oracles and Challenge Periods

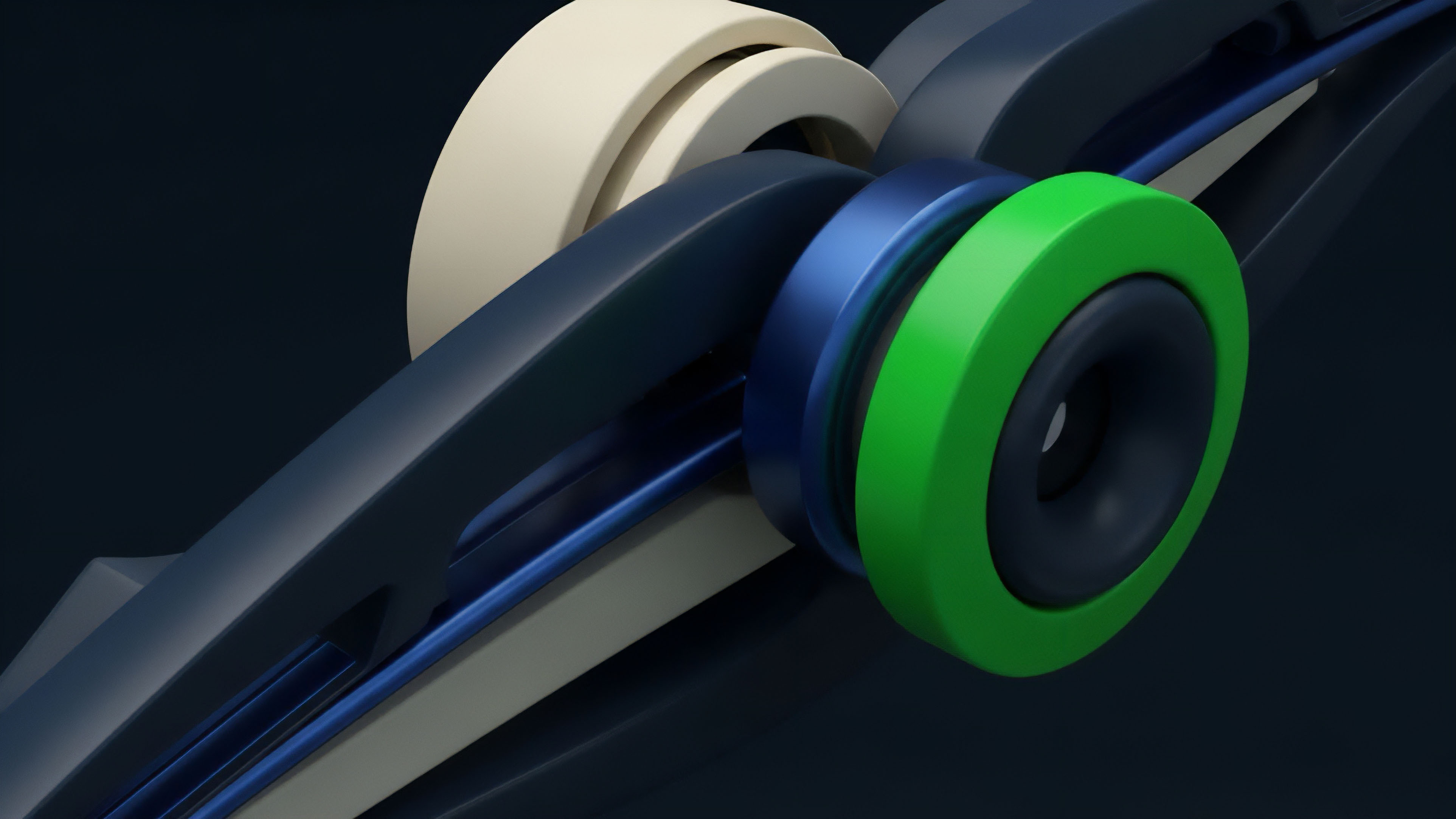

A more advanced approach involves optimistic oracles. These systems operate on the assumption that data submitted by a single source is correct, but they incorporate a challenge period. During this period, other participants can dispute the submitted data by providing evidence that it is inaccurate.

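If the dispute is successful, the challenger is rewarded, and the original data submitter is penalized. This model allows for cheap, fast submissions in the common case where no one disputes, at the cost of waiting out the challenge period before a value is final, while maintaining a high level of security through game theory and economic incentives.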
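The propose, challenge, and settle flow can be sketched as a small state machine. This is a simplified model under stated assumptions: the class and method names are hypothetical, time is passed in explicitly, and the decision on whether a dispute is upheld is supplied from outside (in practice it would come from a separate arbitration or voting process).

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Proposal:
    price: float
    proposer: str
    proposed_at: int  # timestamp in seconds
    bond: float       # stake the proposer forfeits if proven wrong
    disputed_by: Optional[str] = None


class OptimisticOracle:
    def __init__(self, challenge_period: int):
        self.challenge_period = challenge_period
        self.proposal: Optional[Proposal] = None
        self.rewards: Dict[str, float] = {}

    def propose(self, proposer: str, price: float, bond: float, now: int) -> None:
        self.proposal = Proposal(price, proposer, now, bond)

    def dispute(self, challenger: str, now: int) -> None:
        p = self.proposal
        if p is None or now >= p.proposed_at + self.challenge_period:
            raise ValueError("no proposal inside its challenge window")
        p.disputed_by = challenger

    def resolve(self, dispute_upheld: bool) -> None:
        p = self.proposal
        if p is None or p.disputed_by is None:
            raise ValueError("no open dispute")
        if dispute_upheld:
            # The proposer's bond goes to the challenger and the price is discarded.
            self.rewards[p.disputed_by] = self.rewards.get(p.disputed_by, 0.0) + p.bond
            self.proposal = None
        else:
            p.disputed_by = None

    def settled_price(self, now: int) -> Optional[float]:
        """The price is usable only after an undisputed challenge period."""
        p = self.proposal
        if p is None or p.disputed_by is not None:
            return None
        if now < p.proposed_at + self.challenge_period:
            return None
        return p.price


oracle = OptimisticOracle(challenge_period=3600)
oracle.propose("alice", price=2000.0, bond=100.0, now=0)
early = oracle.settled_price(now=1800)  # still inside the challenge window
final = oracle.settled_price(now=3600)  # window elapsed with no dispute

oracle.propose("mallory", price=2500.0, bond=100.0, now=4000)
oracle.dispute("bob", now=4100)
oracle.resolve(dispute_upheld=True)
print(early, final, oracle.rewards)
```

The sketch makes the latency cost explicit: a value cannot be consumed until the challenge period has passed, so the length of that window is a direct trade-off between speed and the time available to catch bad data.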

Evolution

The evolution of data sourcing in crypto derivatives reflects a constant struggle to balance efficiency with security.

The initial, centralized approach (Phase 1) prioritized speed and low cost, often at the expense of decentralization. Reliance on a single price source, whether one CEX API or one thinly traded on-chain pool, led to several high-profile exploits, including cases where flash loans manipulated on-chain prices and triggered incorrect liquidations. The market quickly realized that a centralized price feed was a fundamental design flaw for any protocol handling significant capital.

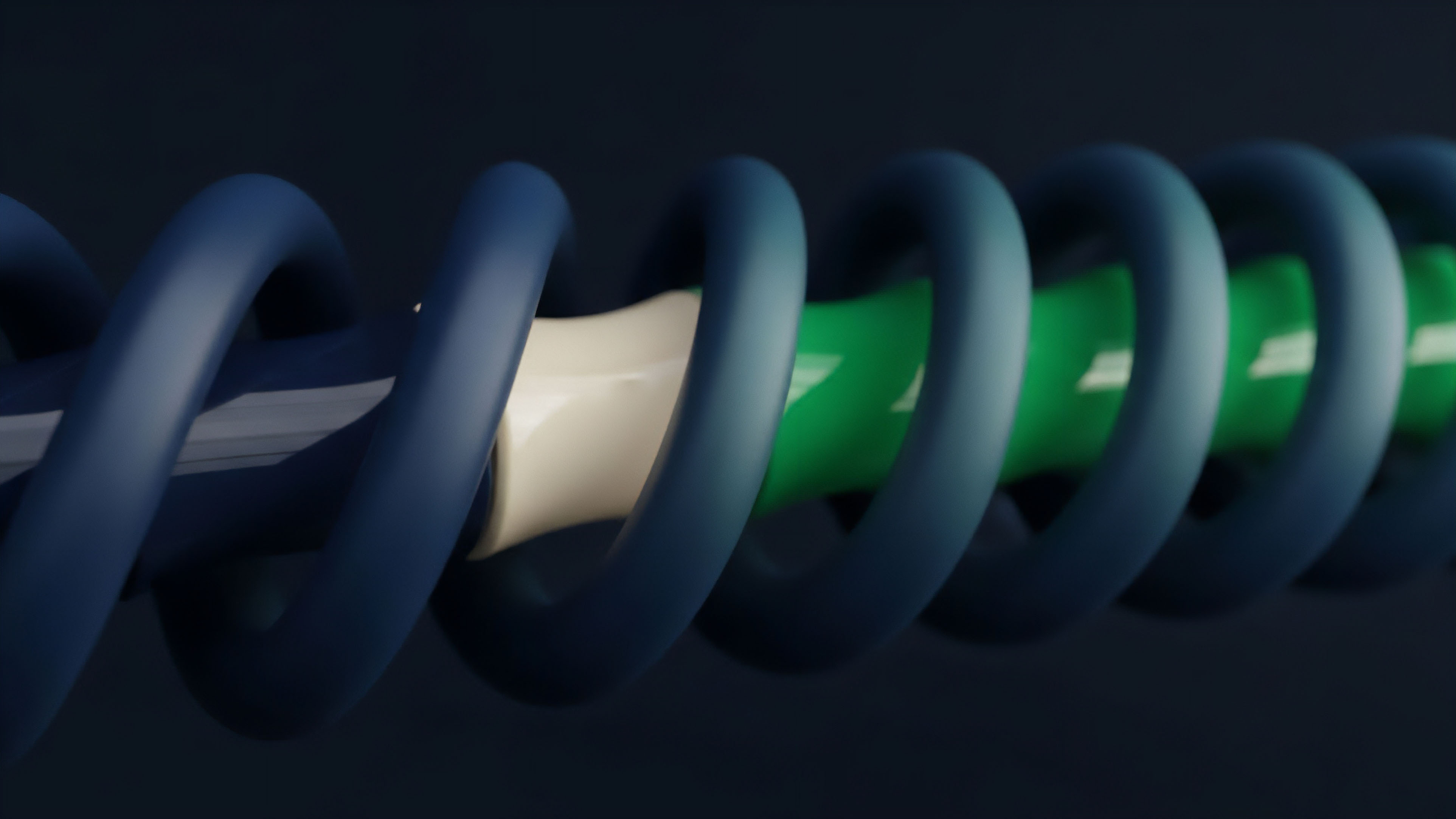

The transition to Phase 2 saw the rise of Decentralized Oracle Networks (DONs). These networks solved the single point of failure problem by distributing data collection and verification across multiple independent nodes. The challenge here shifted from data integrity to data latency and cost.

Aggregating data from numerous sources and securing it through economic incentives adds complexity and transaction costs. Phase 3 introduces more sophisticated mechanisms, such as optimistic oracles and the integration of on-chain data from AMMs. The goal now is to move towards “protocol-native” price feeds where the data is derived directly from the protocol’s own liquidity pools or from other decentralized sources, rather than relying on external, off-chain data.

This minimizes the attack surface and aligns the data source more closely with the protocol’s internal state.

- Phase 1 Centralization: Initial reliance on single CEX APIs for speed and simplicity, leading to high-risk exploits.

- Phase 2 Decentralization: Adoption of DONs to aggregate data from multiple sources and secure feeds through economic incentives.

- Phase 3 Protocol-Native Solutions: Development of optimistic oracles and on-chain price feeds derived from AMMs, reducing external dependencies.

Horizon

The future of data sourcing for crypto options moves toward a truly permissionless and trust-minimized architecture. The current reliance on external oracles, even decentralized ones, introduces a necessary but imperfect layer of trust. The ultimate horizon involves the verification of data integrity using cryptographic proofs, specifically zero-knowledge proofs (ZKPs).

In this advanced architecture, data providers would submit a ZKP verifying the integrity of their data without revealing the raw data itself. This allows a protocol to verify that a price feed accurately reflects a specific set of inputs without needing to trust the provider. This approach could be used to create truly on-chain, verifiable price feeds that eliminate the need for external data sources entirely.

Zero-Knowledge Data Verification

The application of ZKPs to oracle data represents a significant shift. Instead of trusting a network of nodes to report honestly, the protocol trusts a mathematical proof that the data has been processed correctly according to a predefined algorithm. This reduces reliance on the economic incentive layer and replaces it with cryptographic certainty about the computation, although the proof by itself does not establish that the original inputs were truthful.

This model allows for greater scalability and security, particularly for high-frequency options trading where latency is critical.

The future of data integrity in derivatives will likely move beyond economic incentives and toward cryptographic guarantees, creating a truly trustless financial system.

Data Source Convergence

We will see a convergence of data sources where protocols use a hybrid model. This model will likely involve a combination of high-frequency on-chain AMM data for rapid liquidations and a slower, more robust decentralized oracle network for final settlement and verification. This multi-layered approach provides both speed and security, minimizing the risks associated with a single, centralized data source. The goal is to create a self-contained ecosystem where data integrity is inherent to the protocol itself, rather than relying on external inputs.
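A minimal sketch of such a hybrid feed follows. It assumes a simple rule: use the fast AMM price while it stays within a tolerance of the slower oracle price, and fall back to the oracle otherwise. The function name and the 2% tolerance are illustrative assumptions.

```python
from typing import Tuple


def select_price(amm_price: float, oracle_price: float, max_divergence: float = 0.02) -> Tuple[float, str]:
    """Prefer the fast AMM price unless it diverges too far from the oracle."""
    divergence = abs(amm_price - oracle_price) / oracle_price
    if divergence <= max_divergence:
        return amm_price, "amm"
    return oracle_price, "oracle"


normal = select_price(amm_price=2005.0, oracle_price=2000.0)       # small gap: trust the AMM
manipulated = select_price(amm_price=1700.0, oracle_price=2000.0)  # large gap: fall back

print(normal, manipulated)
```

The tolerance bounds how far a manipulated pool can move the price used for liquidations, while normal market moves still benefit from the faster on-chain signal.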
