Essence

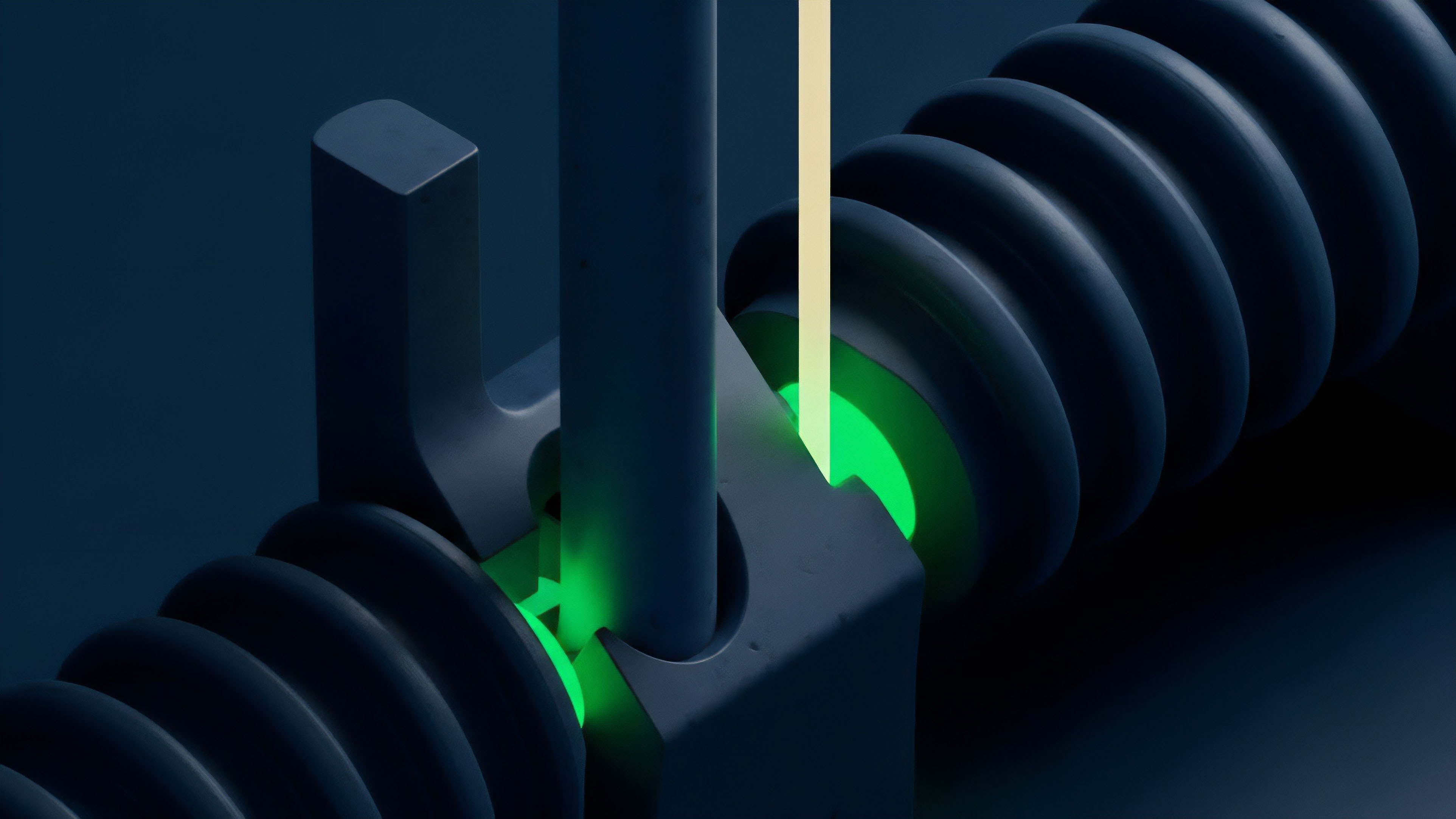

Data Source Weighting is the algorithmic process used by decentralized derivatives protocols to construct a reliable reference price from multiple, potentially adversarial data feeds. It addresses the fundamental challenge of price discovery in a trustless environment, where a single oracle feed presents an unacceptable attack surface. In options and perpetual futures, the integrity of the reference price is paramount, determining everything from strike price accuracy to liquidation triggers.

A robust weighting scheme ensures that a contract’s value reflects true market conditions, rather than a price manipulated on a single, low-liquidity exchange. This mechanism is the core of a protocol’s resilience against manipulation, acting as the final arbiter of value for margin and settlement calculations.

Data Source Weighting is the process of synthesizing multiple external data feeds into a single, reliable reference price for decentralized financial contracts.

The weighting algorithm determines the contribution of each individual data source to the final aggregate price. Without this mechanism, a protocol would be forced to rely on a single source, creating a vulnerability where a malicious actor could manipulate the reference price through a targeted attack. The design of the weighting function must account for various factors: the liquidity of the underlying exchange, the latency of the data feed, and the historical reliability of the source.

The resulting price feed is a synthetic construct, a calculated truth derived from a diverse set of inputs, designed to withstand a range of market manipulations and technical failures.
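As an illustration of how the factors above (liquidity, latency, historical reliability) might combine into a single weight, here is a minimal sketch. The `Feed` type, the `raw_weight` scoring rule, and the `latency_scale_s` parameter are all hypothetical choices made for this example, not a scheme taken from any particular protocol.

```python
from dataclasses import dataclass


@dataclass
class Feed:
    price: float        # latest reported price
    liquidity: float    # e.g. order-book depth in quote currency
    latency_s: float    # age of the quote in seconds
    reliability: float  # historical reliability score in [0, 1]


def raw_weight(feed: Feed, latency_scale_s: float = 5.0) -> float:
    """Hypothetical rule: liquidity and reliability raise the weight,
    while latency discounts it hyperbolically."""
    latency_discount = 1.0 / (1.0 + feed.latency_s / latency_scale_s)
    return feed.liquidity * feed.reliability * latency_discount


def reference_price(feeds: list[Feed]) -> float:
    """Weighted average of feed prices using the normalized raw weights."""
    weights = [raw_weight(f) for f in feeds]
    total = sum(weights)
    if total <= 0:
        raise ValueError("no feed has positive weight")
    return sum(w * f.price for w, f in zip(weights, feeds)) / total
```

With two otherwise identical feeds, one fresh and one five seconds old, the stale feed receives half the weight of the fresh one under these assumed parameters.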

Origin

The concept of data weighting emerged directly from the earliest failures of decentralized oracle systems. In the initial phases of DeFi, many protocols relied on simple median calculations or a small set of pre-approved data providers. This simplicity proved to be a critical flaw.

Early incidents, particularly during periods of extreme market volatility, exposed how easily a single oracle feed could be manipulated, leading to incorrect liquidations and significant capital losses for users. These failures highlighted the need for a more sophisticated approach to data aggregation.

The “oracle problem” for derivatives protocols is particularly acute because of the high stakes involved in liquidations. A flash-loan-funded trade against a low-liquidity on-chain venue can temporarily skew that venue’s price; if a derivatives platform uses the venue as a data source, the distorted quote can trigger a cascade of liquidations. The solution required moving beyond simple averaging.

The initial response involved incorporating a Time-Weighted Average Price (TWAP) or Volume-Weighted Average Price (VWAP) calculation to smooth out short-term spikes. However, even these methods were found to be insufficient against determined attackers with sufficient capital. The evolution of Data Source Weighting began as a direct response to these adversarial market conditions, seeking to create a reference price that was resistant to manipulation by incorporating a diversity of sources and dynamically adjusting their influence based on market conditions.
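To make the smoothing effect of a TWAP concrete, the sketch below computes a time-weighted average over a window. It assumes each sampled price holds until the next sample arrives; the function name `twap` and the `(timestamp, price)` sample layout are illustrative assumptions.

```python
def twap(samples: list[tuple[float, float]], end_time: float) -> float:
    """Time-weighted average price.

    samples: (timestamp, price) pairs sorted by timestamp. Each price is
    assumed to hold until the next sample, and the last one until end_time.
    """
    if not samples:
        raise ValueError("need at least one sample")
    total = 0.0
    for i, (t, price) in enumerate(samples):
        t_next = samples[i + 1][0] if i + 1 < len(samples) else end_time
        total += price * (t_next - t)
    duration = end_time - samples[0][0]
    if duration <= 0:
        raise ValueError("window must have positive length")
    return total / duration


# A 3-second spike to 150 inside a 60-second window moves the TWAP only slightly.
print(twap([(0.0, 100.0), (57.0, 150.0)], end_time=60.0))  # 102.5
```

The same arithmetic shows the limitation noted above: an attacker who can hold the distorted price for most of the window moves the TWAP almost as far as the spot price.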

Theory

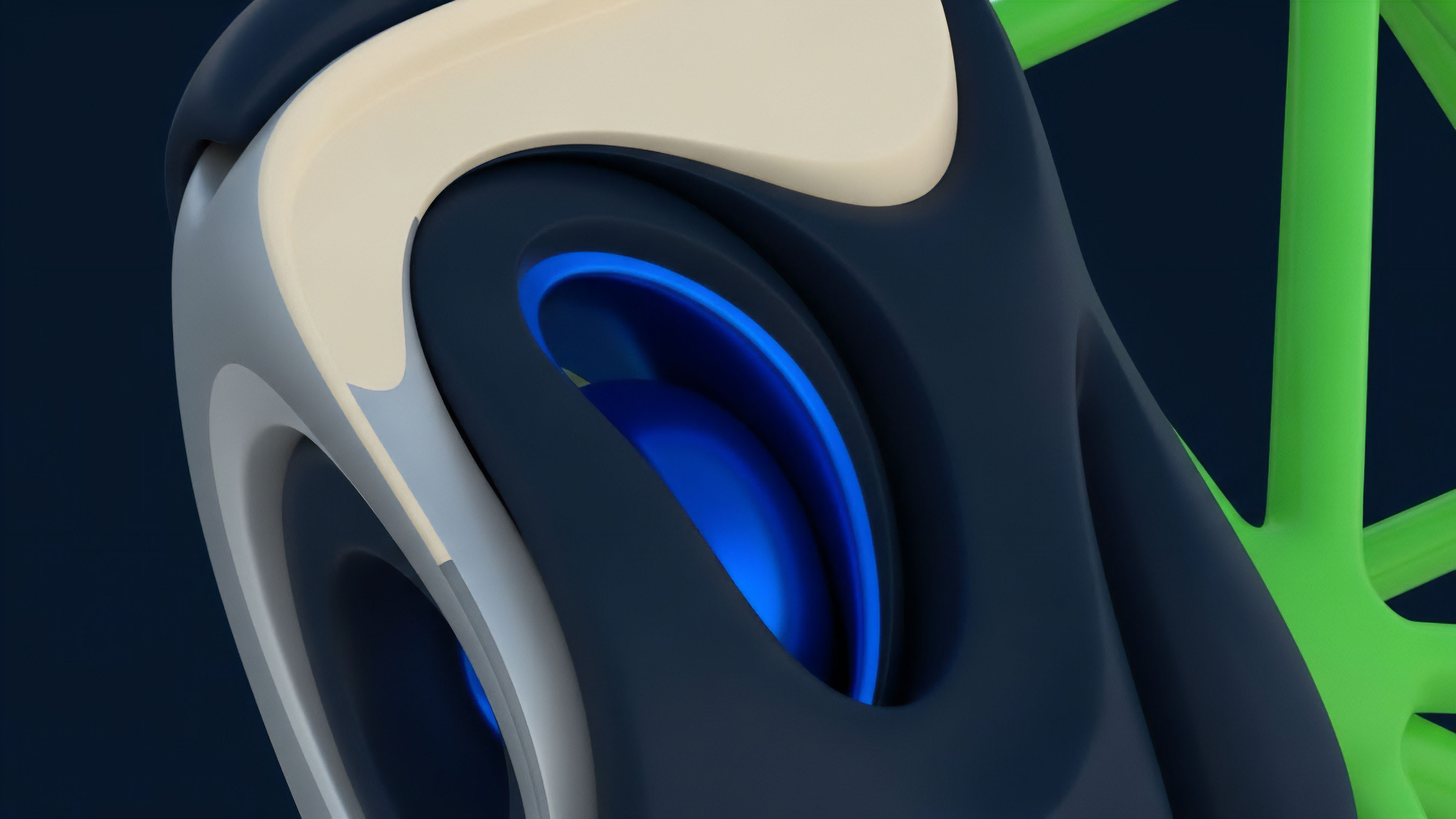

The theoretical foundation of Data Source Weighting draws heavily from information theory and robust statistics. The primary objective is to maximize manipulation resistance while maintaining accuracy. This requires balancing two competing priorities: responsiveness to real market movements and resistance to noise or manipulation.

The design of the weighting function is where a protocol defines its risk tolerance and its model of market reality. The choice of weighting methodology determines how the protocol behaves during high-stress events. A static weighting scheme assigns fixed percentages to sources, which simplifies implementation but fails during periods where a specific source experiences an anomaly or attack.

Dynamic weighting schemes adjust the influence of sources based on real-time data, but introduce complexity and potential for new attack vectors if the adjustment logic is flawed.

The core principle of robust statistics is to limit the influence of outliers. A simple median achieves this by depending only on the middle of the sorted values, so an extreme report cannot move it far unless close to half of the sources are compromised. A more sophisticated weighting scheme assigns influence based on a source’s deviation from the median.

Sources that deviate significantly are given less weight, effectively neutralizing their impact on the aggregate price. This approach assumes that the majority of sources are honest and that price manipulation will only affect a subset of the data feeds. The challenge lies in accurately identifying true outliers versus sources that are simply reflecting a genuine price divergence or market fragmentation.

The selection of sources for inclusion in the weighting algorithm is also a critical decision, as it defines the set of data points from which the “truth” is derived.
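The deviation-based weighting described above can be sketched as follows. This is one possible formulation, assuming weights fall off linearly with distance from the median, measured in units of the median absolute deviation (MAD); the cutoff `k` and the function name are hypothetical.

```python
from statistics import median


def deviation_adjusted_price(prices: list[float], k: float = 3.0) -> float:
    """Weight each price by its closeness to the median.

    A price at the median gets weight 1; the weight falls linearly to 0
    at k MADs from the median and stays 0 beyond that. Assumes k > 1.
    """
    if not prices:
        raise ValueError("need at least one price")
    med = median(prices)
    mad = median(abs(p - med) for p in prices)
    if mad == 0:
        # More than half of the sources agree exactly; use that value.
        return med
    weights = [max(0.0, 1.0 - abs(p - med) / (k * mad)) for p in prices]
    return sum(w * p for w, p in zip(weights, prices)) / sum(weights)
```

For the inputs `[100, 101, 99, 100.5, 150]`, the plain mean is 110.1, while this sketch returns a value near 100.5 because the 150 report receives zero weight. Note that the 99 report is also zeroed out here, which illustrates the difficulty of separating true outliers from genuine divergence.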

Weighting Methodologies Comparison

Different weighting methodologies trade manipulation resistance against responsiveness. The following table compares common approaches used in derivatives protocols:

| Methodology | Description | Manipulation Resistance | Responsiveness to Market Shift |
|---|---|---|---|
| Simple Average | Equal weight assigned to all data sources. | Low. Vulnerable to manipulation by adding new, cheap data feeds. | High. Reacts quickly to changes in any source. |
| Volume-Weighted Average Price (VWAP) | Weight based on trading volume of each source exchange. | Medium. Requires significant capital to manipulate high-volume exchanges. | Medium. Price reflects where most trading activity occurs. |
| Deviation-Adjusted Weighting | Weight decreases as a source deviates from the aggregate median. | High. Outliers are effectively neutralized. | Medium. Slower to react to genuine market fragmentation. |

A further complexity arises from latency, which varies between exchanges. If a weighting algorithm simply takes the latest price from each source, a fast but illiquid exchange could be overweighted, leading to a temporary price distortion. To counter this, many protocols employ time-based weighting, sampling each price over a period rather than at a single instant.

The choice between a VWAP and a deviation-adjusted model depends largely on the protocol’s risk appetite. A VWAP model favors liquidity, assuming that high volume makes manipulation prohibitively expensive. A deviation-adjusted model favors statistical robustness, assuming that outliers are more likely to be malicious than genuine price discovery.
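The difference between equal weighting and volume weighting can be seen in a small numeric sketch. The quotes below are invented for illustration: three liquid venues agree near 100, and one thin venue reports a manipulated 130.

```python
def simple_average(quotes: list[tuple[float, float]]) -> float:
    """Equal-weight mean of (price, volume) quotes; volume is ignored."""
    return sum(price for price, _ in quotes) / len(quotes)


def volume_weighted(quotes: list[tuple[float, float]]) -> float:
    """Mean of prices weighted by each venue's reported volume."""
    total_volume = sum(volume for _, volume in quotes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in quotes) / total_volume


# Hypothetical (price, volume) quotes; the last venue is thin and manipulated.
quotes = [(100.0, 5000.0), (100.2, 3000.0), (99.9, 2000.0), (130.0, 50.0)]

print(simple_average(quotes))   # pulled up to roughly 107.5 by the thin venue
print(volume_weighted(quotes))  # stays close to 100.2
```

The volume-weighted figure barely moves because the manipulated venue carries under 1% of total volume. The protection holds only as long as reported volume is itself trustworthy, which is an assumption of this sketch.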

Approach

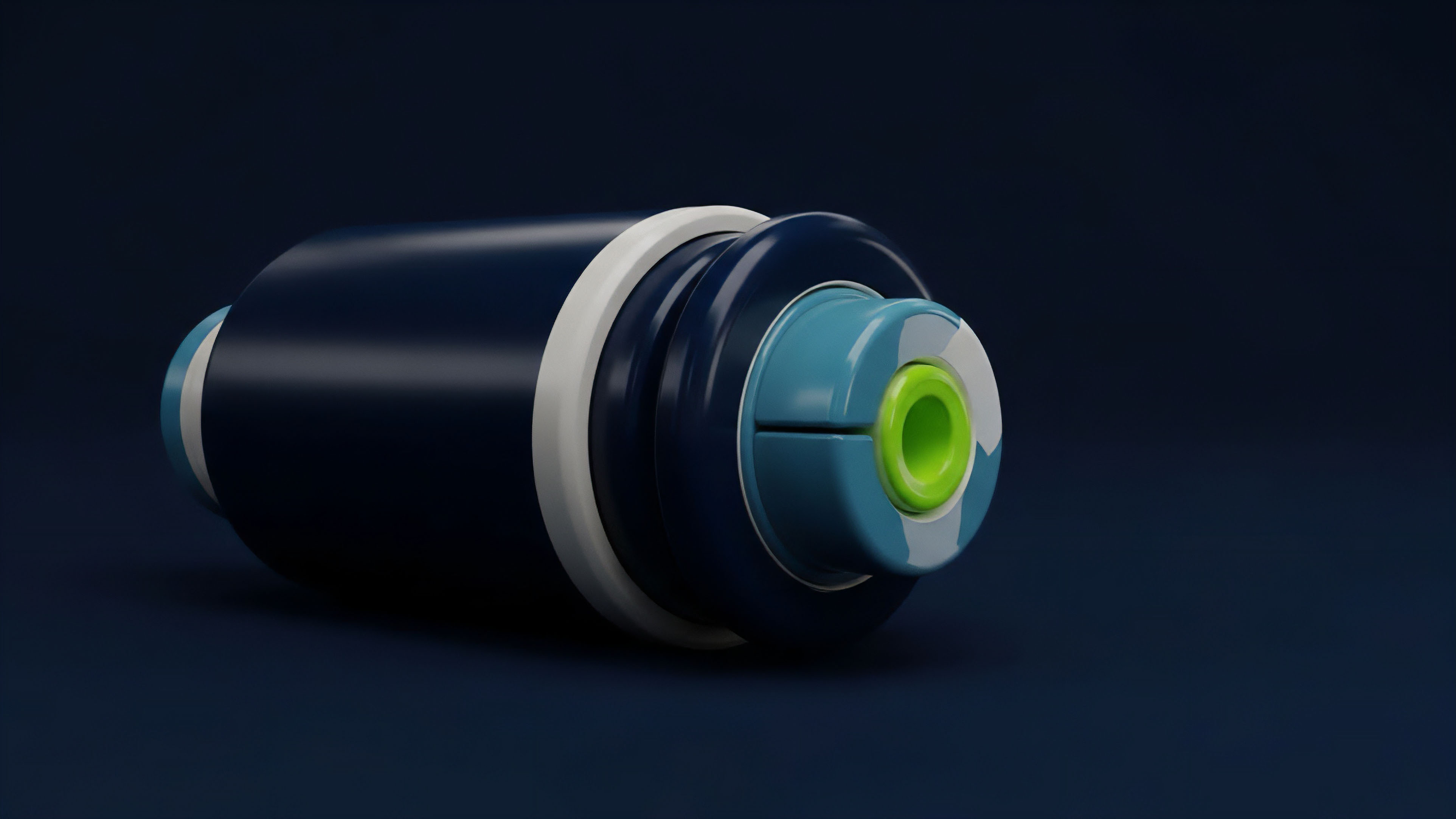

The practical implementation of Data Source Weighting requires careful consideration of the specific market microstructure of the underlying asset. For highly liquid assets like Bitcoin or Ethereum, a weighting scheme can rely on a large pool of data sources, allowing for robust statistical methods. For less liquid assets, the source pool is smaller, increasing the potential impact of manipulation.

The design process for a weighting algorithm involves a set of specific choices regarding source selection and aggregation logic. The goal is to create a price feed that accurately reflects the price on the most liquid exchanges while filtering out noise from less reliable sources.

A critical challenge in implementing a robust weighting scheme is the management of source redundancy and data quality. Protocols must constantly monitor the performance of each data feed, checking for anomalies such as stale data, high latency, or sudden, unexplainable price movements. The aggregation logic often includes a “failover” mechanism where a source that exhibits anomalous behavior is temporarily excluded from the calculation.

This requires a sophisticated monitoring infrastructure that can detect and react to failures in real-time. The protocol must also account for the cost of data acquisition and the latency associated with retrieving data from a large number of sources. The trade-off between security and efficiency is always present.

A more secure system with more sources may have higher latency, which can negatively impact the performance of high-frequency trading strategies on the platform.
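A failover check of the kind described above might look like the following sketch. The staleness limit, the deviation band, and the minimum source count are hypothetical parameters, and the `Quote` type is introduced only for this example.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Quote:
    source: str
    price: float
    timestamp: float  # Unix seconds when the quote was observed


def healthy_quotes(quotes, now, max_age_s=30.0, max_deviation=0.05):
    """Drop stale quotes, then drop quotes too far from the median of the rest."""
    fresh = [q for q in quotes if now - q.timestamp <= max_age_s]
    if not fresh:
        return []
    mid = median(q.price for q in fresh)
    return [q for q in fresh if abs(q.price - mid) <= max_deviation * mid]


def aggregate(quotes, now, min_sources=3):
    """Median of healthy quotes; refuse to publish if too few sources remain."""
    usable = healthy_quotes(quotes, now)
    if len(usable) < min_sources:
        raise RuntimeError("too few healthy sources; refusing to publish a price")
    return median(q.price for q in usable)
```

Raising an error when too few sources remain is a deliberate choice in this sketch: publishing no price is treated as safer than publishing one derived from a nearly empty source set.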

Oracle Source Selection Criteria

- Liquidity Depth: Prioritize sources with deep order books and high trading volume to ensure the price reflects genuine market activity.

- Geographic Diversity: Select sources located in different jurisdictions to mitigate single-point-of-failure regulatory risks or regional market anomalies.

- Protocol Diversity: Use data feeds from both centralized exchanges (CEX) and decentralized exchanges (DEX) to capture different market dynamics and prevent a single attack vector from compromising all sources.

- Historical Performance: Evaluate the past reliability and uptime of data sources, excluding those with frequent outages or inconsistent reporting.

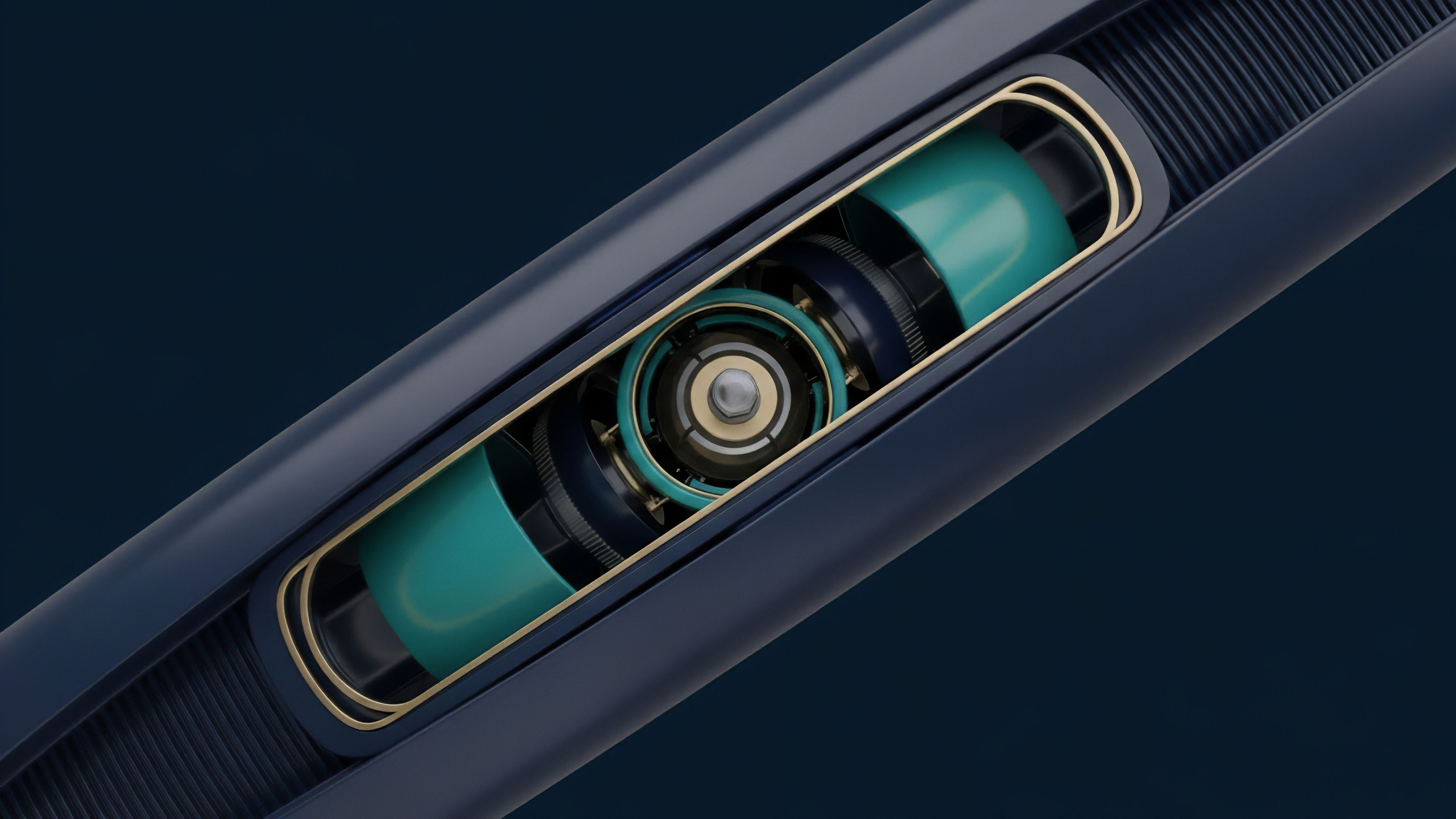

The implementation of Data Source Weighting directly influences the protocol’s liquidation engine. The reference price determines when a user’s collateral falls below the required maintenance margin. A poorly weighted reference price can lead to either premature liquidations (where a user is liquidated based on a manipulated price) or delayed liquidations (where a user’s position should be liquidated but the reference price fails to reflect the true market decline).

Both outcomes represent a significant risk to the protocol’s solvency and user trust. The weighting algorithm is therefore not a secondary feature, but a core component of the protocol’s risk management infrastructure.
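The sensitivity of liquidations to the reference price can be shown with a simplified margin check. This sketch assumes a linear perpetual position with a 5% maintenance margin and ignores fees and funding; the function names and parameters are illustrative.

```python
def margin_ratio(collateral: float, position_size: float,
                 entry_price: float, reference_price: float) -> float:
    """Equity divided by notional, both valued at the reference price.

    position_size is positive for a long and negative for a short.
    """
    pnl = position_size * (reference_price - entry_price)
    equity = collateral + pnl
    notional = abs(position_size) * reference_price
    return equity / notional


def is_liquidatable(collateral: float, position_size: float,
                    entry_price: float, reference_price: float,
                    maintenance_margin: float = 0.05) -> bool:
    """True when the margin ratio falls below the maintenance requirement."""
    ratio = margin_ratio(collateral, position_size, entry_price, reference_price)
    return ratio < maintenance_margin


# Long 1 unit from 10,000 with 1,000 collateral.
print(is_liquidatable(1000.0, 1.0, 10_000.0, 9_500.0))  # False
print(is_liquidatable(1000.0, 1.0, 10_000.0, 9_400.0))  # True
```

In this example a reference price that is pushed only about 1% too low (9,400 instead of 9,500) is enough to flip the position from safe to liquidatable.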

Evolution

The evolution of Data Source Weighting has progressed from static, pre-configured systems to dynamic, incentive-based frameworks. Early protocols relied on fixed weights assigned by governance. This approach, however, proved inflexible in rapidly changing market conditions.

The next iteration involved a shift to dynamic weighting, where the influence of each source is adjusted based on real-time metrics. This dynamic approach allows the system to automatically reduce the weight of a source that exhibits high variance or deviates significantly from the median price, effectively quarantining potentially manipulated data feeds.
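One way to picture this quarantining behavior is a per-round weight update in which a deviating source loses weight quickly and regains it slowly. The `tolerance`, `decay`, and `recovery` parameters below are hypothetical values chosen for the example.

```python
from statistics import median


def update_weights(weights: dict[str, float], prices: dict[str, float],
                   tolerance: float = 0.01, decay: float = 0.5,
                   recovery: float = 0.1) -> dict[str, float]:
    """Return new source weights after one round of price reports.

    A source more than `tolerance` (as a fraction) away from the median has
    its weight multiplied by `decay`; otherwise its weight rises by
    `recovery`, capped at 1.0. Unknown sources start at weight 1.0.
    """
    mid = median(prices.values())
    updated = {}
    for source, price in prices.items():
        w = weights.get(source, 1.0)
        if abs(price - mid) > tolerance * mid:
            w *= decay
        else:
            w = min(1.0, w + recovery)
        updated[source] = w
    return updated
```

The asymmetry between fast decay and slow recovery is a design choice in this sketch: it makes repeated manipulation attempts costly in influence while still letting a source that suffered a transient glitch return to full weight.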

The most advanced forms of Data Source Weighting are now incorporating elements of game theory and economic incentives. Instead of simply aggregating data, protocols are building reputation systems where oracles are rewarded for providing accurate data and penalized for providing inaccurate data. This approach creates a system where data providers have a financial incentive to be truthful.

The design of these systems is complex, requiring a careful balance between rewards and penalties to ensure a high level of data integrity. This move towards incentive-based weighting represents a significant shift from purely technical solutions to a hybrid approach that incorporates economic principles. The goal is to create a self-regulating system where the cost of providing false data outweighs the potential profit from manipulation.

Key Principles of Advanced Weighting

- Incentive Alignment: Oracles are financially rewarded for providing accurate data and penalized for submitting manipulated prices.

- Adaptive Filtering: The weighting algorithm dynamically adjusts source influence based on historical accuracy and deviation from a statistical norm.

- Hybrid Models: The system combines on-chain data from decentralized exchanges with off-chain data from centralized exchanges to create a comprehensive price feed.
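The incentive alignment principle listed above can be sketched as a per-round settlement of oracle stakes. The flat reward, the slash fraction, and the accuracy tolerance are assumed values, and the function name is hypothetical; real systems differ in how they define and verify accuracy.

```python
def settle_round(stakes: dict[str, float], reports: dict[str, float],
                 reference_price: float, tolerance: float = 0.005,
                 reward: float = 1.0,
                 slash_fraction: float = 0.1) -> dict[str, float]:
    """Return updated stakes after comparing each report to the reference.

    Oracles within `tolerance` (relative error) earn a flat `reward`;
    all others lose `slash_fraction` of their stake.
    """
    updated = {}
    for oracle, stake in stakes.items():
        error = abs(reports[oracle] - reference_price) / reference_price
        if error <= tolerance:
            updated[oracle] = stake + reward
        else:
            updated[oracle] = stake * (1.0 - slash_fraction)
    return updated
```

For manipulation to be unprofitable under this model, the stake at risk of slashing has to exceed the profit an oracle could extract from a false report, which is why the sizing of stakes and penalties matters as much as the aggregation logic.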

The evolution of Data Source Weighting is closely tied to the broader development of oracle networks. As oracle networks become more robust and decentralized, the weighting mechanisms they provide become more sophisticated. The shift from simple averaging to advanced statistical modeling reflects a growing understanding of market microstructure and the adversarial nature of decentralized systems.

The goal is to build a system where the “truth” of the market price emerges from a consensus of diverse and incentivized data sources, rather than relying on a single authority.

Horizon

Looking forward, Data Source Weighting is likely to move beyond simple statistical methods and incorporate machine learning and AI-driven anomaly detection. These advanced systems will be capable of identifying subtle patterns of manipulation that current algorithms miss. The next generation of weighting algorithms will not just filter out outliers; they will predict potential manipulation attempts based on historical data and real-time order flow analysis.

This will significantly increase the cost and complexity of attacking decentralized derivatives protocols, making them more resilient to manipulation.

Another area of development is the integration of Data Source Weighting with governance systems. Future protocols may allow users to stake collateral on specific data sources, creating a decentralized reputation system where the community actively participates in determining the trustworthiness of data feeds. This would shift the responsibility of source selection from a centralized team to a decentralized network of stakeholders.

The challenge here is to design a system that prevents collusion among stakeholders while still maintaining accuracy. The future of Data Source Weighting lies in creating systems that are not just reactive to manipulation, but proactive in anticipating and preventing it.

The future of Data Source Weighting involves integrating machine learning models for anomaly detection and creating dynamic, incentive-based reputation systems for data sources.

The ultimate goal is to create a “price feed” that is not a static number but a dynamic, probabilistic distribution. This distribution would represent the market’s collective belief about the asset’s price, with a corresponding confidence level based on the quality and diversity of the underlying data sources. This shift from a single price point to a probability distribution would significantly change how derivatives contracts are settled and how risk is calculated.

It would allow protocols to price options based on a more accurate representation of market risk, moving closer to the goal of creating a truly resilient and decentralized financial system.
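As a rough sketch of what a distribution-style feed could expose, the function below reports a weighted mean together with a weighted standard deviation as a crude dispersion measure. Treating source weights as the basis of the distribution is an assumption of this example, not a description of an existing design.

```python
import math


def price_distribution(prices: list[float],
                       weights: list[float]) -> tuple[float, float]:
    """Return (weighted mean, weighted standard deviation) of source prices."""
    if len(prices) != len(weights) or not prices:
        raise ValueError("prices and weights must be non-empty and equal length")
    total = sum(weights)
    if total <= 0:
        raise ValueError("total weight must be positive")
    mean = sum(w * p for w, p in zip(weights, prices)) / total
    variance = sum(w * (p - mean) ** 2 for w, p in zip(weights, prices)) / total
    return mean, math.sqrt(variance)
```

A consumer could then widen margin requirements or pause settlement when the reported dispersion is large relative to the mean, instead of trusting a point estimate unconditionally.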

Glossary

Off-Chain Data Source

Collateralization Ratio Adjustment

Data Feeds

Single-Source Price Feeds

Source Compromise Failure

Open-Source Risk Circuits

Weighting Function

Data Integrity Verification

Source Diversity Mechanisms