Essence

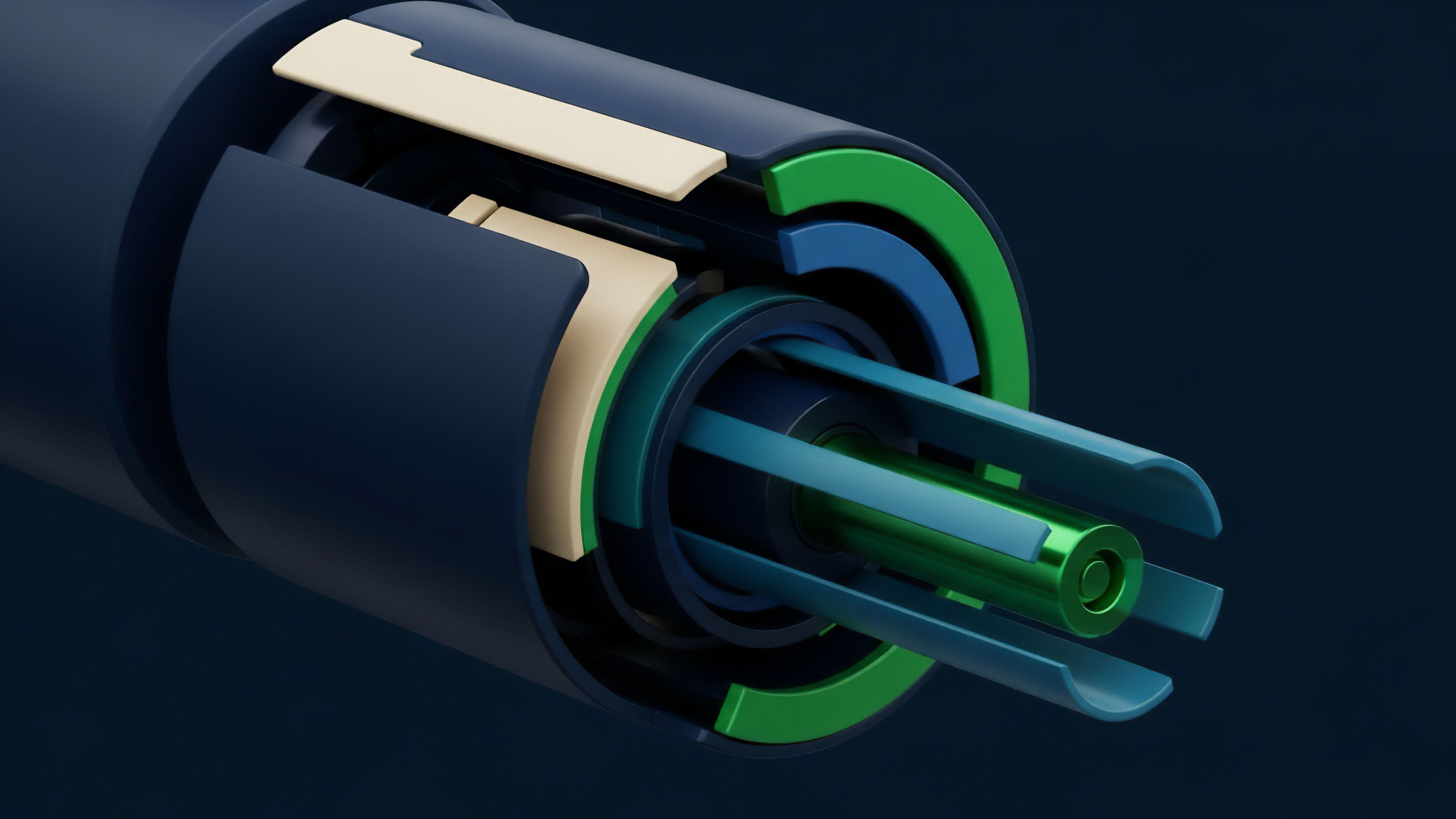

The core challenge in decentralized finance, particularly for options protocols, is the secure and reliable transfer of real-world information into the deterministic environment of a smart contract. This data integrity function is performed by an oracle feed. For options, a price feed must provide the underlying asset’s current value to enable accurate pricing, margin calculations, and settlement.

The reliability of this feed is not a secondary feature; it is the primary point of failure for the entire derivatives system. If the feed delivers manipulated or stale data, the contract logic, however robustly coded, will execute faithfully on false inputs and produce incorrect outcomes. This vulnerability creates systemic risk: a single oracle attack can trigger widespread liquidations and protocol insolvency.

The system’s integrity hinges on the assumption that the data input reflects market reality, a challenge that requires both cryptographic security and game-theoretic incentive design.

The concept of reliability in this context extends beyond simple availability. A feed that updates infrequently might be technically available but financially unreliable for high-frequency trading or dynamic margin calls. A feed that sources data from a single, low-liquidity exchange might be available but easily manipulated.

True reliability for derivatives requires a multi-layered approach to data aggregation, where a single data point is not trusted, but rather a consensus derived from a broad sample of market sources. The architecture must anticipate adversarial behavior, ensuring that the cost of providing false data outweighs the potential profit derived from exploiting the resulting market mispricing.

Origin

The need for reliable oracle feeds emerged from early DeFi exploits in which price feeds were easily manipulated. Initial designs often read the spot price from a single decentralized exchange (DEX) or relied on time-weighted average prices (TWAPs) from low-liquidity pools. Single-DEX spot prices proved defenseless against flash loan attacks, and TWAPs over thin pools, while harder to move within one block, remained cheap to distort over a sustained window.

An attacker could borrow a large amount of capital, manipulate the price on a single DEX, execute a transaction against a derivatives protocol using the manipulated price, and then repay the loan, all within a single block. This demonstrated that a derivatives protocol could only be as secure as its weakest data source.
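The price impact behind such an attack can be illustrated with a minimal sketch. It assumes a constant-product pool (reserves satisfying x * y = k) with fees ignored; the pool model, reserve sizes, and function names are illustrative assumptions, not details taken from any specific exploit.

```python
def spot_price(reserve_base: float, reserve_quote: float) -> float:
    """Spot price of the base asset, in quote units, for an x * y = k pool."""
    return reserve_quote / reserve_base


def swap_quote_for_base(reserve_base: float, reserve_quote: float, quote_in: float):
    """Return the pool reserves after selling `quote_in` into the pool (fees ignored)."""
    k = reserve_base * reserve_quote
    new_quote = reserve_quote + quote_in
    new_base = k / new_quote  # the invariant k is preserved
    return new_base, new_quote


# Hypothetical low-liquidity pool: 100 base tokens against 200,000 quote tokens.
base, quote = 100.0, 200_000.0
before = spot_price(base, quote)  # 2000.0

# The attacker swaps in 200,000 borrowed quote tokens within one transaction.
base, quote = swap_quote_for_base(base, quote, 200_000.0)
after = spot_price(base, quote)  # 8000.0, a 4x move in the reported spot price
```

Any protocol reading `spot_price` from this pool inside the same transaction would see the inflated value, which is why a single-pool spot read is unsafe as a settlement or margin input.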

The first generation of oracle solutions attempted to solve this by creating dedicated oracle networks. These networks began to aggregate data from multiple off-chain sources, primarily centralized exchanges (CEXs), to mitigate the risk of single-source manipulation. This shift introduced a new set of problems related to data latency and cost.

While more secure than single-DEX feeds, these solutions often provided data at a slower rate than traditional financial markets require for high-speed options trading. The core tension in the origin story of oracle reliability is the conflict between the need for decentralized trust and the requirement for real-time data accuracy.

Theory

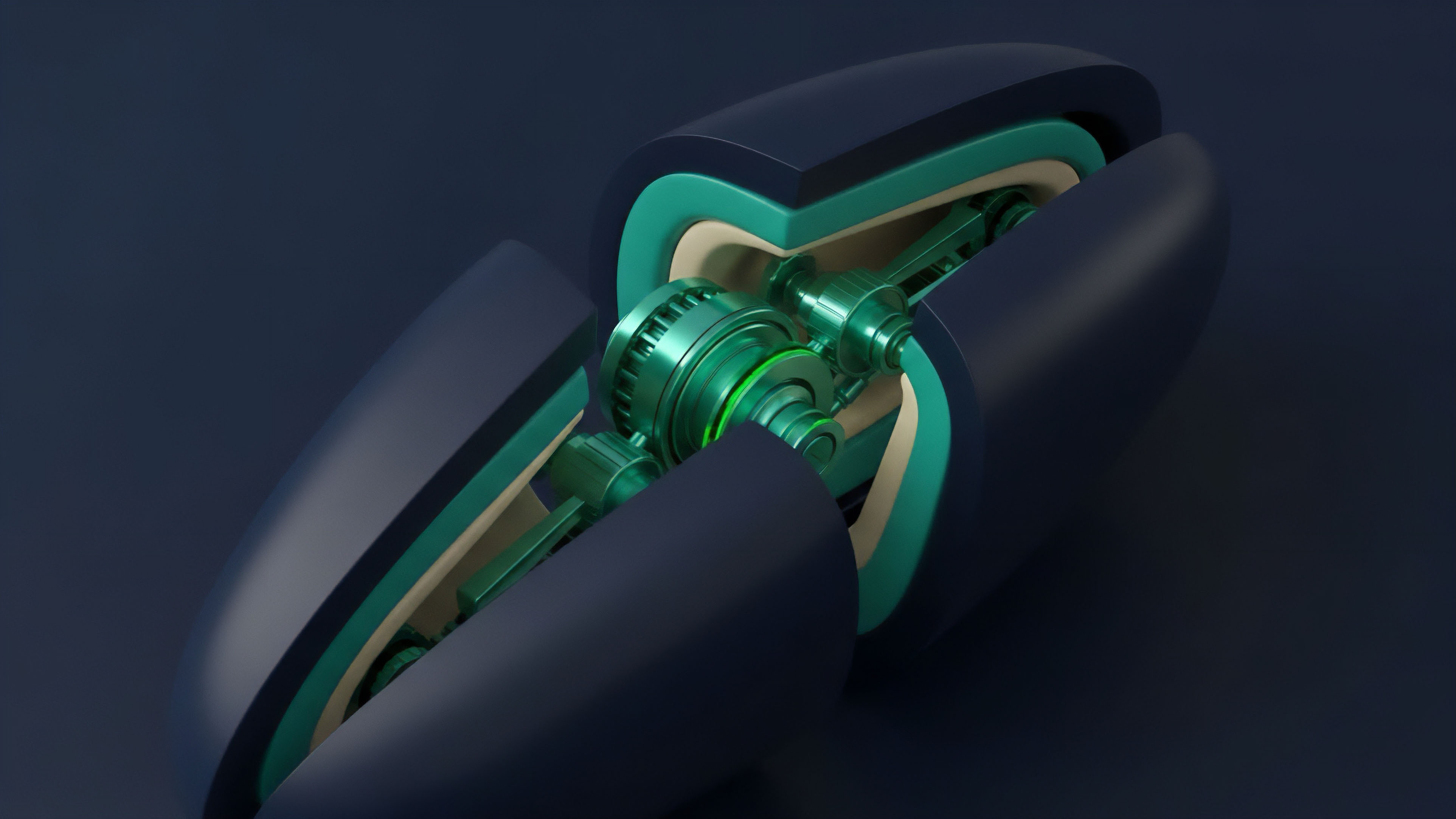

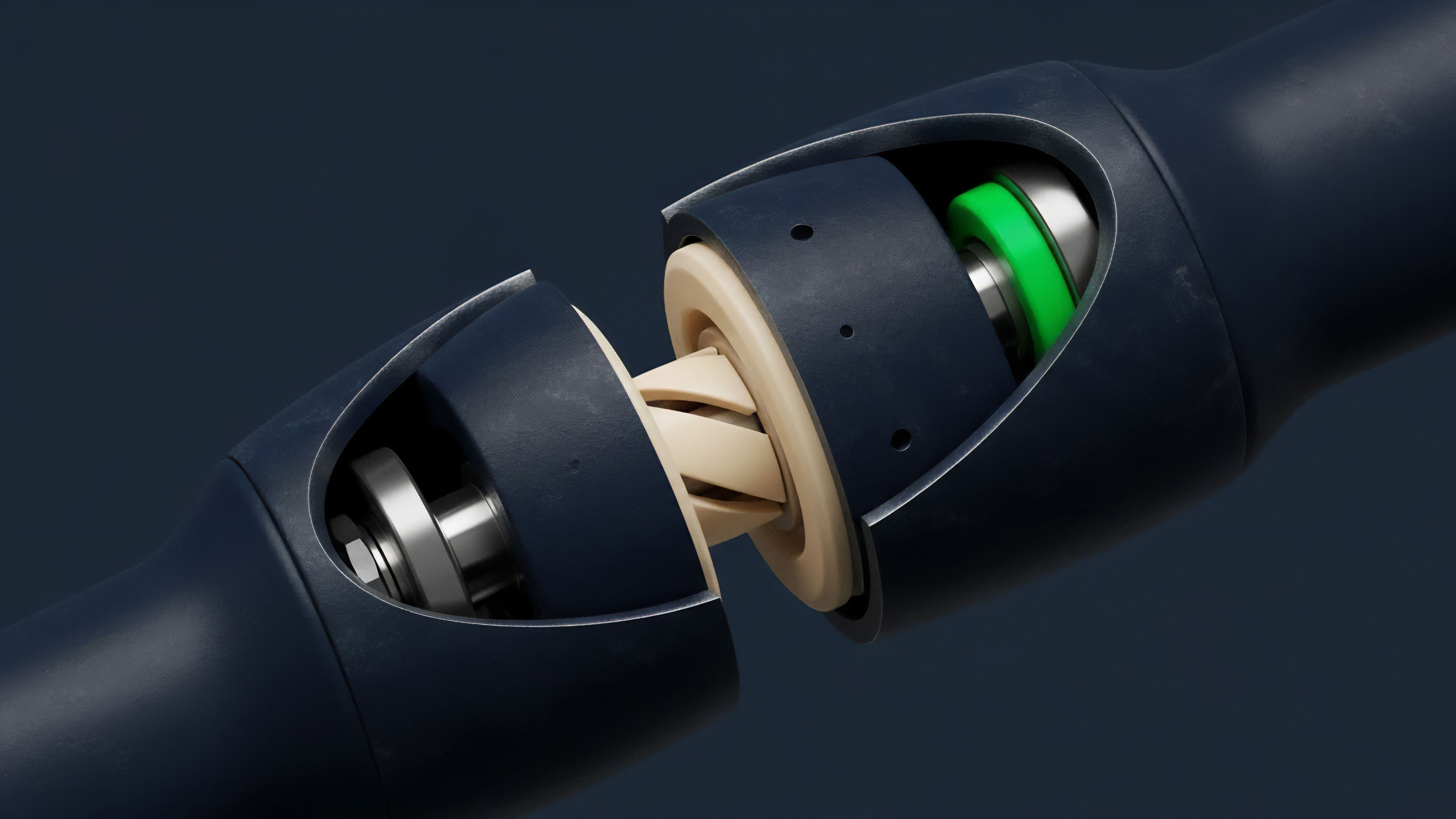

The theoretical foundation of oracle reliability rests on two pillars: economic security and statistical robustness. Economic security ensures that the incentives for data providers align with the protocol’s objectives. Statistical robustness addresses the aggregation methods used to derive a single, accurate price from multiple sources.

For options pricing, this involves more than just a simple average; it requires understanding how to filter outliers and weight sources based on perceived liquidity and historical accuracy.

The statistical approach to aggregation involves several key methodologies, each with distinct trade-offs in terms of latency and security. The choice of aggregation method directly impacts the accuracy of on-chain option pricing models, particularly when calculating greeks like delta and gamma.

- Median Aggregation: This method takes data from a set of providers and selects the middle value. It is highly resistant to outlier manipulation: as long as fewer than half of the providers are compromised, an extremely high or low report cannot push the median outside the range of honest values. However, it can be slow to react to genuine, rapid market shifts, because the median moves only once at least half of the providers have reported the new price.

- Volume-Weighted Average Price (VWAP): This method weights each data source based on its reported trading volume. The theory suggests that data from higher-liquidity venues is more representative of the true market price. While accurate, this method introduces a dependency on a potentially centralized volume source and can be vulnerable if a large-volume exchange is compromised or provides manipulated data.

- Outlier Filtering: A common practice is to calculate the mean and standard deviation across all reported prices and discard data points that lie more than a set number of standard deviations from the mean. This technique balances accuracy with security, removing malicious or erroneous data while preserving genuine market consensus.
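The three methods above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library; the function names, the two-sigma threshold, and the sample prices are assumptions chosen for the example, not parameters of any particular oracle network.

```python
from statistics import mean, median, pstdev


def median_price(prices):
    """Middle value of the reported prices."""
    return median(prices)


def vwap(quotes):
    """Volume-weighted average of (price, volume) pairs."""
    total_volume = sum(volume for _, volume in quotes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in quotes) / total_volume


def filter_outliers(prices, max_sigmas=2.0):
    """Drop prices more than `max_sigmas` standard deviations from the mean."""
    mu = mean(prices)
    sigma = pstdev(prices)
    if sigma == 0:
        return list(prices)
    return [p for p in prices if abs(p - mu) <= max_sigmas * sigma]


# Hypothetical reports from six providers; the last one is far off.
prices = [2000.0, 2001.0, 1999.0, 2002.0, 1998.0, 5000.0]

print(median_price(prices))                   # 2000.5
print(filter_outliers(prices))                # the 5000.0 report is dropped
print(vwap([(2000.0, 3.0), (2010.0, 1.0)]))   # 2002.5
```

One design caveat: with n sources, a single report can never lie more than sqrt(n - 1) population standard deviations from the mean, so the threshold has to be chosen with the number of providers in mind. With six providers, a three-sigma filter would never remove anything.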

The challenge for options protocols is that the data requirements extend beyond simple spot prices. Options pricing models require inputs like implied volatility (IV), which is itself a derived value. Providing a reliable IV feed requires aggregating data from options order books, which are inherently more fragmented and less liquid than spot markets, and this compounds the statistical challenge of aggregation.

Oracle feed reliability is the foundation upon which the solvency of decentralized derivatives protocols is built, translating real-world market data into on-chain executable logic.

Approach

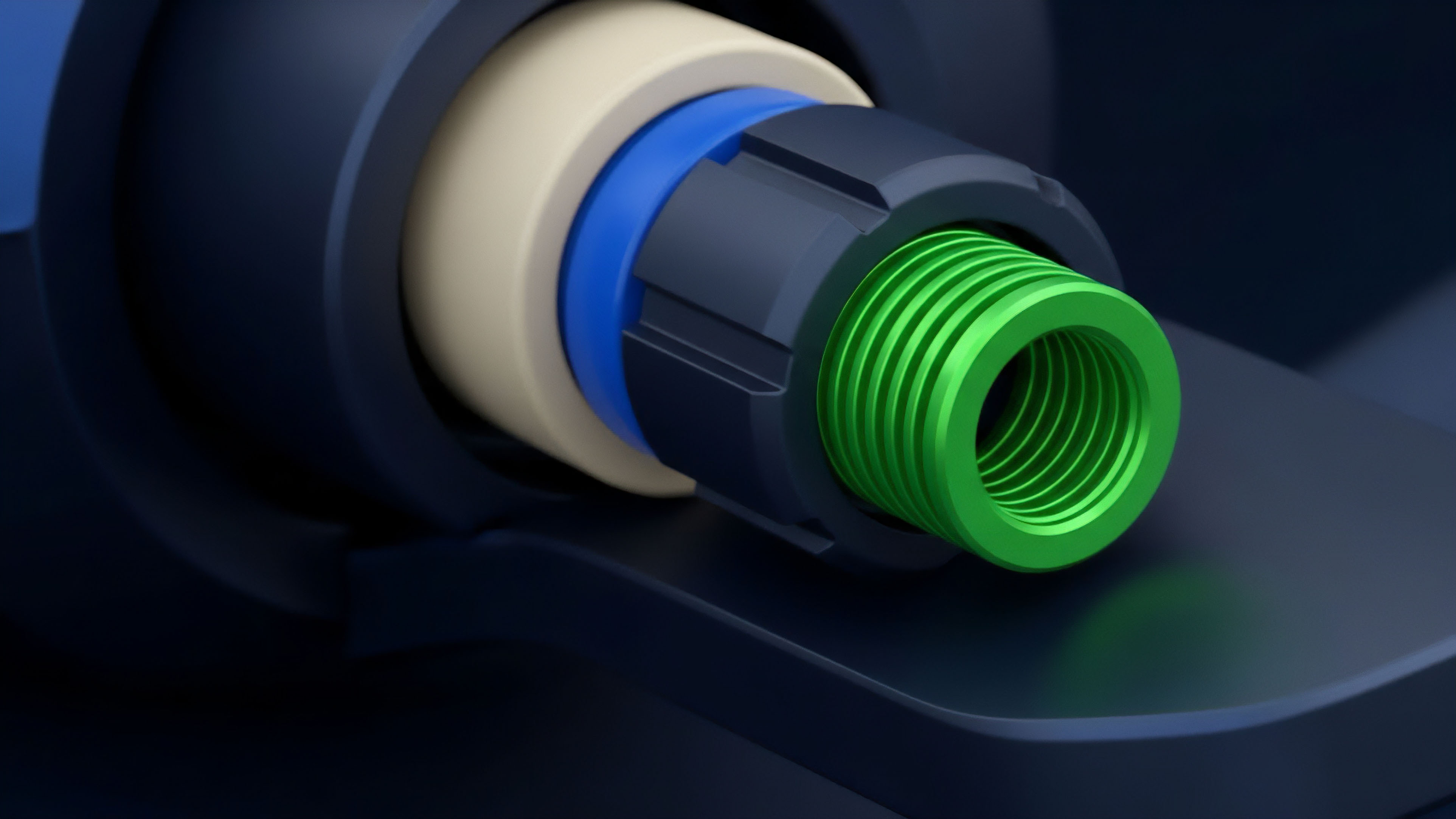

Current approaches to ensuring oracle reliability involve a multi-layered architecture designed to mitigate risk at different points in the data flow. This typically includes a combination of incentive mechanisms for data providers, cryptographic proofs for data verification, and robust aggregation logic. The system must address two core risks: data manipulation (a malicious actor submitting false data) and data liveness (a data provider failing to submit data in a timely manner).

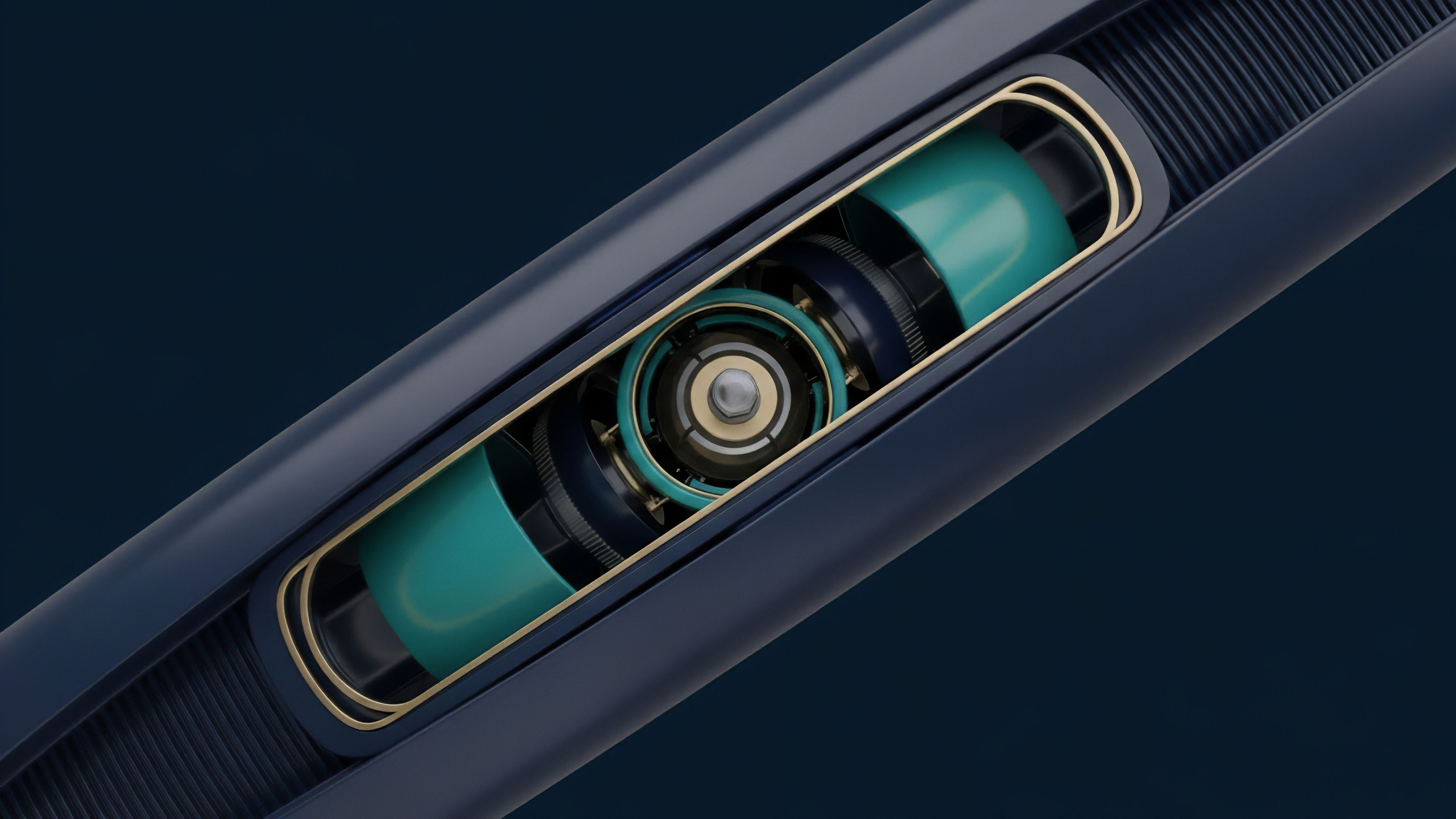

The implementation of a reliable oracle feed for options protocols often requires a continuous “push” model rather than a reactive “pull” model. In a pull model, the smart contract requests data only when needed, which can result in significant latency during volatile market conditions. In a push model, data providers continuously update the price feed on a predetermined schedule, ensuring that the options protocol always has fresh data for real-time pricing and risk calculations.

This constant updating, however, increases transaction costs and requires a robust economic model to incentivize data providers.
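Whichever delivery model is used, the consuming contract still has to decide whether the latest value is fresh enough to act on. The sketch below shows one way to express that check and the schedule test a push-model provider might run; the `PriceUpdate` type, the `StalePriceError` exception, and the 60-second and 30-second thresholds are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


class StalePriceError(Exception):
    """Raised when the latest update is older than the allowed age."""


@dataclass(frozen=True)
class PriceUpdate:
    price: float
    timestamp: int  # Unix time in seconds at which the update was published


def read_fresh_price(update: PriceUpdate, now: int, max_age_seconds: int) -> float:
    """Return the price only if it is recent enough to be used for pricing."""
    age = now - update.timestamp
    if age < 0:
        raise ValueError("update timestamp is in the future")
    if age > max_age_seconds:
        raise StalePriceError(f"price is {age}s old, limit is {max_age_seconds}s")
    return update.price


def should_push(last_push: int, now: int, interval_seconds: int) -> bool:
    """Push-model schedule: publish again once the interval has elapsed."""
    return now - last_push >= interval_seconds


update = PriceUpdate(price=2000.0, timestamp=1_000)
print(read_fresh_price(update, now=1_030, max_age_seconds=60))  # 2000.0
print(should_push(last_push=1_000, now=1_030, interval_seconds=30))  # True
```

A shorter push interval lowers the chance of hitting `StalePriceError` during volatile periods, at the price of more on-chain transactions.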

To deter data manipulation, protocols implement a staking mechanism. Data providers stake collateral, and if they submit data that deviates significantly from the consensus, their stake is slashed. This economic deterrent makes providing false data unprofitable as long as the stake at risk exceeds the profit an attacker could extract from the resulting mispricing.
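A minimal sketch of such a slashing round follows. It assumes the consensus is the median of all reports, that deviation is measured relative to that median, and that a fixed fraction of stake is slashed; the 2% tolerance, the 50% slash fraction, and all names are hypothetical.

```python
from statistics import median


def settle_round(reports, stakes, max_deviation=0.02, slash_fraction=0.5):
    """Compute the consensus price and slash providers that deviate too far.

    reports: provider -> reported price
    stakes:  provider -> staked collateral
    Returns (consensus_price, updated_stakes).
    """
    consensus = median(reports.values())
    updated = dict(stakes)
    for provider, price in reports.items():
        if abs(price - consensus) / consensus > max_deviation:
            updated[provider] = stakes[provider] * (1 - slash_fraction)
    return consensus, updated


def attack_is_unprofitable(stake_at_risk: float, expected_profit: float) -> bool:
    """The deterrent holds only while the slashable stake exceeds the payoff."""
    return stake_at_risk > expected_profit


reports = {"a": 2000.0, "b": 2002.0, "c": 2600.0}
stakes = {"a": 100.0, "b": 100.0, "c": 100.0}

consensus, new_stakes = settle_round(reports, stakes)
print(consensus)        # 2002.0
print(new_stakes["c"])  # 50.0, provider "c" deviated by roughly 30%
```

The second function makes the underlying condition explicit: if the profit available from a mispriced option exceeds the collateral that can be slashed, the mechanism no longer deters the attack.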

The following table illustrates the trade-offs between two common data delivery models used in options protocols.

| Data Delivery Model | Description | Latency Characteristics | Cost Implications |
|---|---|---|---|
| Push Model | Data providers continuously broadcast updates to the smart contract at set intervals. | Low latency; near real-time updates for high-frequency trading. | High gas costs due to frequent on-chain transactions. |
| Pull Model | Smart contract requests data only when a specific function (e.g. liquidation) is called. | High latency during volatile periods; data can be stale. | Low gas costs; transactions only occur on demand. |

The most effective approach to oracle reliability combines economic incentives for data providers with statistical aggregation methods to filter out malicious or erroneous inputs.

Evolution

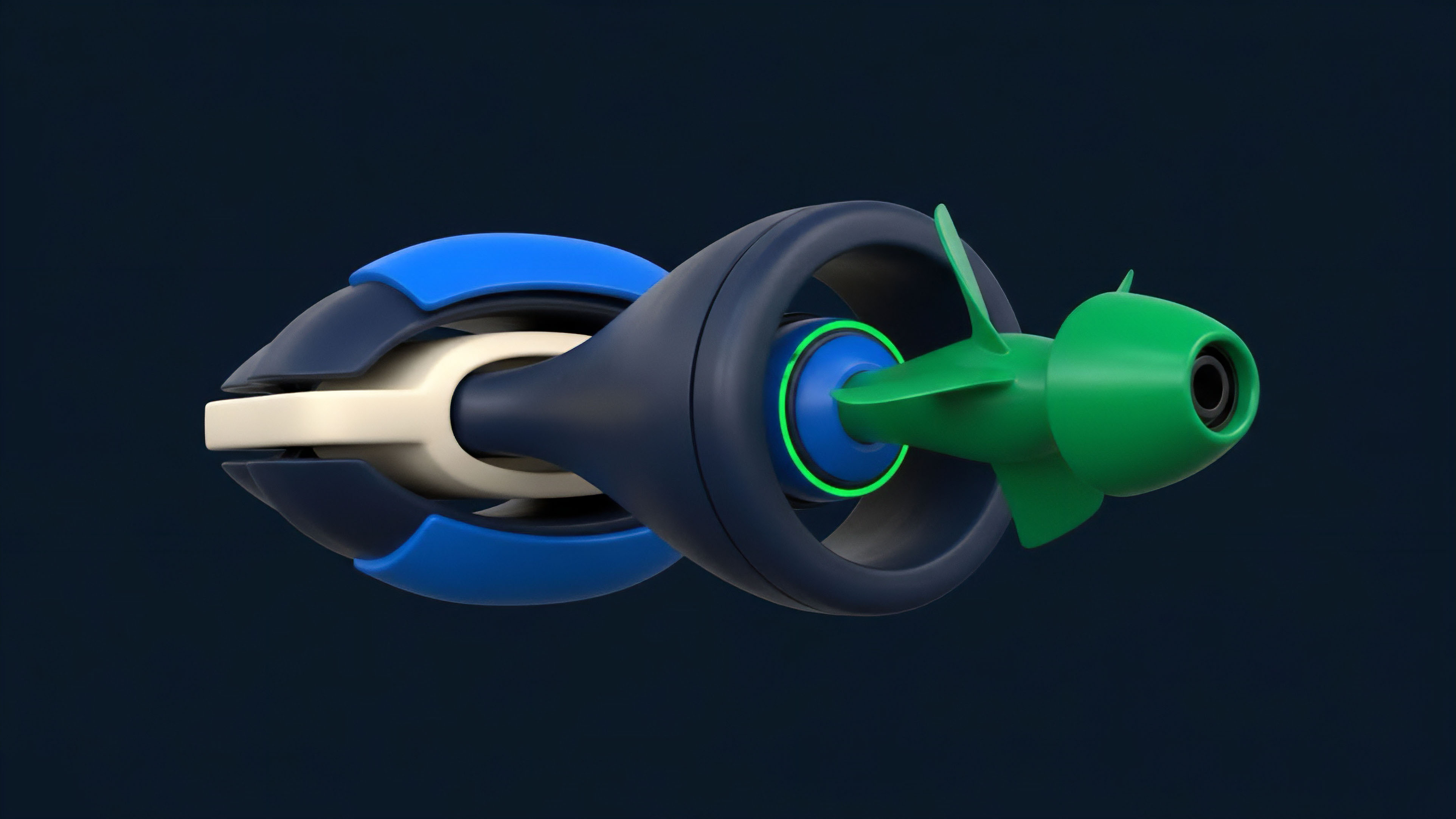

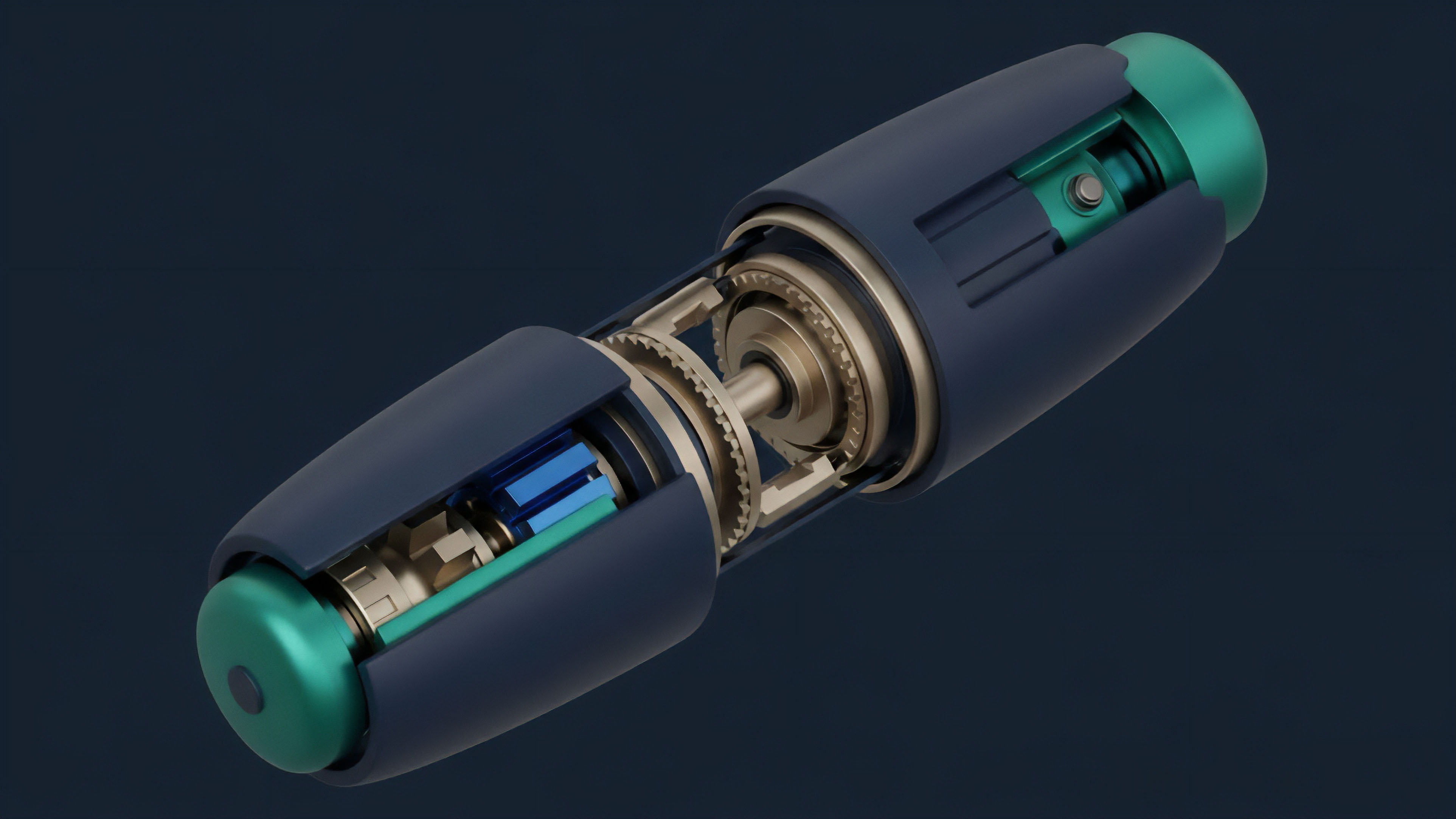

The evolution of oracle reliability has moved from simple price feeds for spot markets to sophisticated data delivery for complex derivatives. The initial focus was on securing basic lending protocols, where a simple spot price was sufficient to calculate collateralization ratios. As options protocols emerged, the requirements changed dramatically.

Options pricing models (like Black-Scholes) require more than just a single spot price; they require inputs like implied volatility (IV), which itself is a derived value representing market expectations of future volatility.
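To make "derived value" concrete, the sketch below backs an implied volatility out of a European call price by inverting the Black-Scholes formula with bisection. The parameter values, the search bounds, and the function names are assumptions for illustration; production systems typically use faster root finders and handle puts, dividends, and edge cases.

```python
from math import erf, exp, log, sqrt


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call(spot: float, strike: float, t: float, r: float, sigma: float) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    d1 = (log(spot / strike) + (r + 0.5 * sigma * sigma) * t) / (sigma * sqrt(t))
    d2 = d1 - sigma * sqrt(t)
    return spot * norm_cdf(d1) - strike * exp(-r * t) * norm_cdf(d2)


def implied_vol(price, spot, strike, t, r, lo=1e-4, hi=5.0, tol=1e-8):
    """Find sigma such that bs_call(...) matches `price`, using bisection."""
    if not bs_call(spot, strike, t, r, lo) <= price <= bs_call(spot, strike, t, r, hi):
        raise ValueError("price is outside the range reachable within [lo, hi]")
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        # The call price increases with sigma, so bisection converges.
        if bs_call(spot, strike, t, r, mid) < price:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Round trip with hypothetical inputs: price an option at 80% volatility,
# then recover that volatility from the price alone.
price = bs_call(spot=2000.0, strike=2100.0, t=0.25, r=0.03, sigma=0.8)
iv = implied_vol(price, spot=2000.0, strike=2100.0, t=0.25, r=0.03)
```

Because IV is computed from an option price, the spot price, and the contract terms, any error or manipulation in those inputs flows directly into the volatility an oracle would report.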

The current generation of oracle solutions is grappling with how to reliably source and deliver IV data. IV is not a universally agreed-upon price; it varies depending on the strike price and expiration date, forming an “IV surface.” Sourcing this surface data from fragmented on-chain options exchanges and off-chain order books presents a significant challenge. The data aggregation methods developed for spot prices are insufficient for IV data.
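The structural difference from a spot feed can be sketched as follows. Here a surface is represented as a mapping from (strike, expiry) nodes to IV values, and each node is aggregated separately with a minimum number of quotes required. The representation, the per-node median, the quorum of two, and the sample values are all illustrative assumptions rather than an established method.

```python
from statistics import median
from typing import Dict, List, Tuple

Node = Tuple[float, str]     # (strike, expiry date as an ISO string)
Surface = Dict[Node, float]  # node -> implied volatility


def aggregate_surface(surfaces: List[Surface], min_quotes: int = 2) -> Surface:
    """Take the per-node median, keeping only nodes with enough quotes."""
    quotes: Dict[Node, List[float]] = {}
    for surface in surfaces:
        for node, iv in surface.items():
            quotes.setdefault(node, []).append(iv)
    return {
        node: median(ivs)
        for node, ivs in quotes.items()
        if len(ivs) >= min_quotes
    }


# Hypothetical surfaces from three venues; only one quotes the far strike.
venue_a = {(2000.0, "2025-06-27"): 0.60, (2500.0, "2025-06-27"): 0.75}
venue_b = {(2000.0, "2025-06-27"): 0.62}
venue_c = {(2000.0, "2025-06-27"): 0.90}

combined = aggregate_surface([venue_a, venue_b, venue_c])
print(combined)  # {(2000.0, '2025-06-27'): 0.62}
```

The thinly quoted node drops out entirely, which illustrates the fragmentation problem: a spot feed always yields one number, whereas a surface feed can have gaps that must be interpolated or left undefined.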

A new class of oracle solution is required, one that can process and standardize complex data structures rather than simple numerical values.

The next major evolution in oracle reliability is the development of on-chain data sources. While current oracles bridge off-chain data to on-chain protocols, a truly decentralized system would generate its own data. This could be achieved through automated market makers (AMMs) for options, where the IV is derived directly from the protocol’s internal mechanisms and liquidity pools, rather than from external sources.

This would remove the dependency on external oracles, yielding a self-contained system whose price integrity rests on its own liquidity.

Horizon

The horizon for oracle reliability is defined by the pursuit of complete on-chain autonomy and the mitigation of systemic risks introduced by external dependencies. The current state, where decentralized protocols rely heavily on data feeds from centralized exchanges, represents a significant vulnerability. A regulatory action against a single data source or a technical failure in a centralized exchange could cascade through the entire DeFi ecosystem, leading to mass liquidations across multiple options protocols simultaneously.

This creates a hidden centralization risk within a supposedly decentralized system.

The next frontier involves oracle composability, where protocols can build on each other’s data streams. Imagine a scenario where a protocol that calculates implied volatility can feed that data directly into another protocol that prices options, creating a more efficient and interconnected data layer. This composability, however, increases the risk of contagion, where a failure in one protocol’s data source can quickly propagate to others.

The challenge is to create a robust data layer that allows for this interconnectedness without creating new systemic single points of failure.

The long-term goal for oracle reliability is to move away from external data entirely. The ideal state involves a fully self-contained ecosystem where prices are determined by on-chain liquidity and market mechanisms. The challenge here is the bootstrapping problem: how to create deep, liquid markets on-chain that can compete with centralized exchanges without first relying on their price feeds.

The solution likely lies in novel incentive structures that attract liquidity providers to on-chain options AMMs, creating a new, truly decentralized price discovery mechanism. The future of oracle reliability is not just about data feeds; it is about building a new financial operating system that generates its own reality.

Future developments in oracle reliability must focus on mitigating systemic risk through on-chain data generation and addressing regulatory vulnerabilities associated with external data dependencies.
