Essence

Data quality in crypto options is the foundational integrity of all inputs required for the accurate pricing, risk management, and settlement of derivative contracts. This extends far beyond a simple price feed; it encompasses the accuracy, timeliness, and resistance to manipulation of the entire dataset that feeds a protocol’s core logic. A derivative smart contract requires a reliable source for both the underlying asset price and, crucially, the implied volatility surface to correctly calculate the value of an option and manage collateral requirements.

Without high-quality data, the entire system operates on flawed premises, leading to systemic vulnerabilities. The core challenge in decentralized finance (DeFi) is that data quality directly impacts the margin engine, which determines when liquidations occur. A bad data input can trigger incorrect liquidations, creating a cascade effect that destabilizes the entire protocol.

Data quality in crypto options represents the integrity of the information feeding the smart contract logic, which dictates accurate pricing and risk management.

The data quality problem is essentially a trust problem. In traditional finance, market participants rely on regulated, centralized data providers to deliver high-fidelity information. DeFi, by design, rejects this central point of trust, instead relying on decentralized oracle networks to aggregate and verify data.

The data itself must be verifiable on-chain, yet complex calculations, like implied volatility, often require off-chain computation. This creates a fundamental tension between the need for high-frequency, low-latency data and the constraints of blockchain consensus mechanisms. The systemic health of a derivatives protocol depends entirely on its ability to resolve this tension without compromising on either speed or decentralization.

Origin

The data quality problem in crypto options originated from the architectural choices made during the early iterations of decentralized finance. When protocols first began offering derivatives, they needed a mechanism to determine the value of collateral and the price of the underlying asset. The simplest solution was to use a single data source, often a single centralized exchange or a simple price feed.

This approach proved immediately vulnerable to manipulation. The most notable early failures involved flash loan attacks, where an attacker could temporarily manipulate the price on a single exchange or in a small liquidity pool, causing the protocol’s oracle to report a false price. This false price would then be used to liquidate positions or drain funds before the price returned to normal.
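
The sketch below makes the manipulation mechanism concrete. It models a constant-product (x·y = k) pool of the kind early protocols sometimes read as a spot oracle. It is a minimal illustration under stated assumptions. There is no swap fee. The reserves are hypothetical (1,000 ETH and 2,000,000 USDC). The flash loan appears only as 1,000,000 USDC of borrowed capital pushed through the pool within one transaction. The helper names are hypothetical.

```python
from typing import Tuple


def spot_price(reserve_base: float, reserve_quote: float) -> float:
    """Instantaneous price of the base asset, in quote units, for an x*y=k pool."""
    return reserve_quote / reserve_base


def swap_quote_for_base(
    reserve_base: float, reserve_quote: float, quote_in: float
) -> Tuple[float, float, float]:
    """Swap quote_in into a fee-free constant-product pool.

    Returns (new_reserve_base, new_reserve_quote, base_out).
    """
    k = reserve_base * reserve_quote  # invariant preserved by the swap
    new_quote = reserve_quote + quote_in
    new_base = k / new_quote
    return new_base, new_quote, reserve_base - new_base


# Hypothetical pool: 1,000 ETH and 2,000,000 USDC (spot price 2,000).
base, quote = 1_000.0, 2_000_000.0
print(f"before: {spot_price(base, quote):.2f}")

# Flash-loaned capital pushed through the pool inside one transaction.
base_after, quote_after, eth_out = swap_quote_for_base(base, quote, 1_000_000.0)
print(f"after:  {spot_price(base_after, quote_after):.2f}")  # a naive oracle would read this
```

With these numbers, the pool's spot price moves from 2,000 to roughly 4,500 USDC per ETH. An oracle that reads the instantaneous price in the same transaction would report the distorted value. In this fee-free model, swapping the received ETH back restores the original reserves, and the attacker can then repay the loan.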

The data integrity crisis forced a rapid evolution in oracle design. The first generation of solutions focused on simple aggregation: instead of relying on one source, protocols began averaging data from multiple sources. This mitigated single-point-of-failure risk but introduced new challenges related to data latency and cost.
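
Simple aggregation can be sketched as follows. The text describes averaging. This sketch contrasts a plain mean with a median, which is a common alternative rather than something the original specifies. The quotes are hypothetical: four honest sources and one manipulated source.

```python
from statistics import mean, median
from typing import Sequence


def aggregate_mean(prices: Sequence[float]) -> float:
    """Plain average of all reported prices."""
    return mean(prices)


def aggregate_median(prices: Sequence[float]) -> float:
    """Middle value of the reported prices; robust to a minority of outliers."""
    return median(prices)


# Hypothetical quotes: four honest sources plus one manipulated source.
honest = [2000.0, 2001.0, 1999.5, 2000.5]
reported = honest + [4500.0]

print(aggregate_mean(reported))    # pulled far from the honest prices
print(aggregate_median(reported))  # stays with the honest majority
```

Here a single source reporting 4,500 drags the mean to about 2,500. The median stays at 2,000.5, because an attacker would have to corrupt most of the sources to move it.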

The data quality requirements for options are particularly stringent compared to simple spot markets or lending protocols. An options contract’s value is non-linear and highly sensitive to volatility changes, meaning a simple, delayed price feed is insufficient for accurate pricing and risk management. The industry recognized that a data quality solution for derivatives must address not only price accuracy but also the second-order effects of market dynamics, such as volatility skew and liquidity depth.

Theory

The theoretical framework for data quality in crypto options centers on two primary components: the data feed itself and the resulting impact on quantitative models. The data feed must satisfy several key dimensions to be considered high quality.

- Timeliness: Data must be delivered to the smart contract with minimal latency. For high-frequency options trading, a delay of even a few seconds can result in significant mispricing or missed liquidation opportunities, creating systemic risk.

- Accuracy and Granularity: The data must reflect the true market price, but also possess sufficient granularity to accurately calculate the implied volatility surface. This requires not just the spot price, but also a reliable stream of bids and asks for various strike prices and maturities.

- Resistance to Manipulation: The data source must be resilient to attacks, particularly flash loans or large-scale market manipulation. This is typically achieved through aggregation and weighted averages.

- Availability: The data feed must be continuously available, even during periods of extreme network congestion or high volatility, which are precisely when derivatives protocols are under maximum stress.
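
Several of these dimensions translate directly into checks a consumer can run before trusting a reading. The following is a minimal sketch that assumes each reading carries the time of its last update. The 30-second maximum age and the 20% maximum jump are hypothetical parameters, not recommended values. Failing closed (refusing to act on a stale or implausible reading) is one way to handle availability gaps, at the cost of pausing the protocol.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PriceReading:
    price: float
    updated_at: float  # Unix time (seconds) of the oracle's last update


def reading_problem(
    reading: PriceReading,
    now: float,
    max_age_s: float,
    last_good_price: Optional[float] = None,
    max_rel_jump: float = 0.2,
) -> Optional[str]:
    """Return a reason to reject the reading, or None if it passes all checks."""
    if reading.price <= 0:
        return "non-positive price"
    if now - reading.updated_at > max_age_s:  # timeliness
        return "stale"
    if last_good_price is not None:  # crude manipulation guard
        jump = abs(reading.price - last_good_price) / last_good_price
        if jump > max_rel_jump:
            return "suspicious jump"
    return None


# Hypothetical usage: reject instead of acting on bad data (fail closed).
print(reading_problem(PriceReading(2000.0, 100.0), now=110.0, max_age_s=30.0))
print(reading_problem(PriceReading(2000.0, 100.0), now=200.0, max_age_s=30.0))
print(reading_problem(PriceReading(4500.0, 100.0), now=110.0, max_age_s=30.0,
                      last_good_price=2000.0))
```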

The primary consequence of poor data quality is the corruption of the Greeks, the quantitative risk parameters used to manage options portfolios. A delayed or inaccurate price feed immediately skews the calculation of Delta (the rate of change of the option price relative to the underlying asset price), which leads to incorrect hedging strategies. A flawed implied volatility surface feeds directly into both the option price and Vega (the sensitivity of the option price to changes in volatility). The result is mispriced contracts and potentially large losses for market makers and liquidity providers.
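
The Black-Scholes formulas for a European call illustrate how a volatility error flows into price and Vega. The model is an assumption made here for illustration, since the text does not name a pricing model. The zero interest rate, the absence of dividends, and the at-the-money 30-day contract in the usage lines are likewise hypothetical.

```python
import math
from typing import Tuple


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def bs_call(spot: float, strike: float, t: float, vol: float,
            rate: float = 0.0) -> Tuple[float, float, float]:
    """Black-Scholes European call (no dividends): returns (price, delta, vega).

    t is in years; vega is per 1.00 change in volatility (100 vol points).
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    price = spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)
    delta = norm_cdf(d1)
    vega = spot * norm_pdf(d1) * sqrt_t
    return price, delta, vega


# Hypothetical at-the-money 30-day call on an asset trading at 2,000.
t = 30 / 365
p_true, delta, vega = bs_call(2000.0, 2000.0, t, vol=0.60)
p_stale, _, _ = bs_call(2000.0, 2000.0, t, vol=0.50)  # stale volatility input
print(f"delta={delta:.3f}  mispricing={p_true - p_stale:.2f}  vega*0.10={vega * 0.10:.2f}")
```

For this contract, pricing with a stale volatility of 50% instead of 60% understates the premium by roughly Vega × 0.10. A corrupted volatility surface introduces this kind of error across every strike and maturity at once.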

The systemic risk here is that a protocol’s risk engine, operating on bad data, will miscalculate its total value locked (TVL) and collateralization ratio, potentially leading to insolvency during a black swan event.
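
A simple collateralization check shows how a bad price leads to an incorrect liquidation. The 150% minimum ratio, the position sizes, and the names below are hypothetical. The debt value is held fixed for simplicity, although for a short option position it would itself be repriced from oracle data.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral_units: float  # e.g. ETH posted as margin
    debt_value: float        # liability in quote currency (held fixed here)


def collateral_ratio(pos: Position, oracle_price: float) -> float:
    return pos.collateral_units * oracle_price / pos.debt_value


def is_liquidatable(pos: Position, oracle_price: float, min_ratio: float = 1.5) -> bool:
    """Flag the position when collateral value falls below min_ratio x debt."""
    return collateral_ratio(pos, oracle_price) < min_ratio


pos = Position(collateral_units=10.0, debt_value=12_000.0)
print(is_liquidatable(pos, oracle_price=2000.0))  # correct price
print(is_liquidatable(pos, oracle_price=1700.0))  # single erroneous print
```

With 10 ETH backing a 12,000 USDC liability, a correct price of 2,000 gives a ratio of about 1.67 and the position is safe. A single erroneous print of 1,700 drops the ratio to about 1.42 and marks the same position for liquidation.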

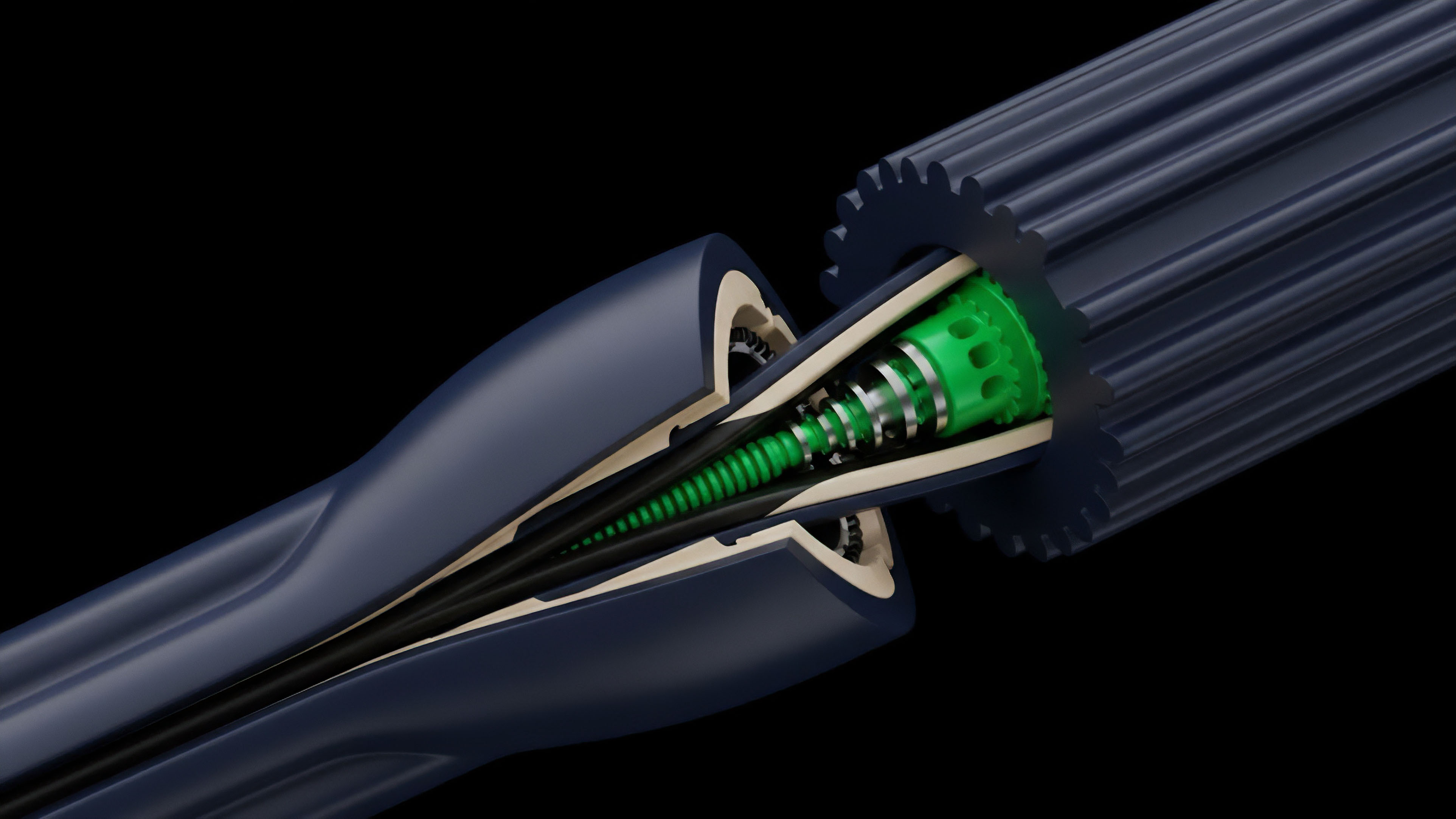

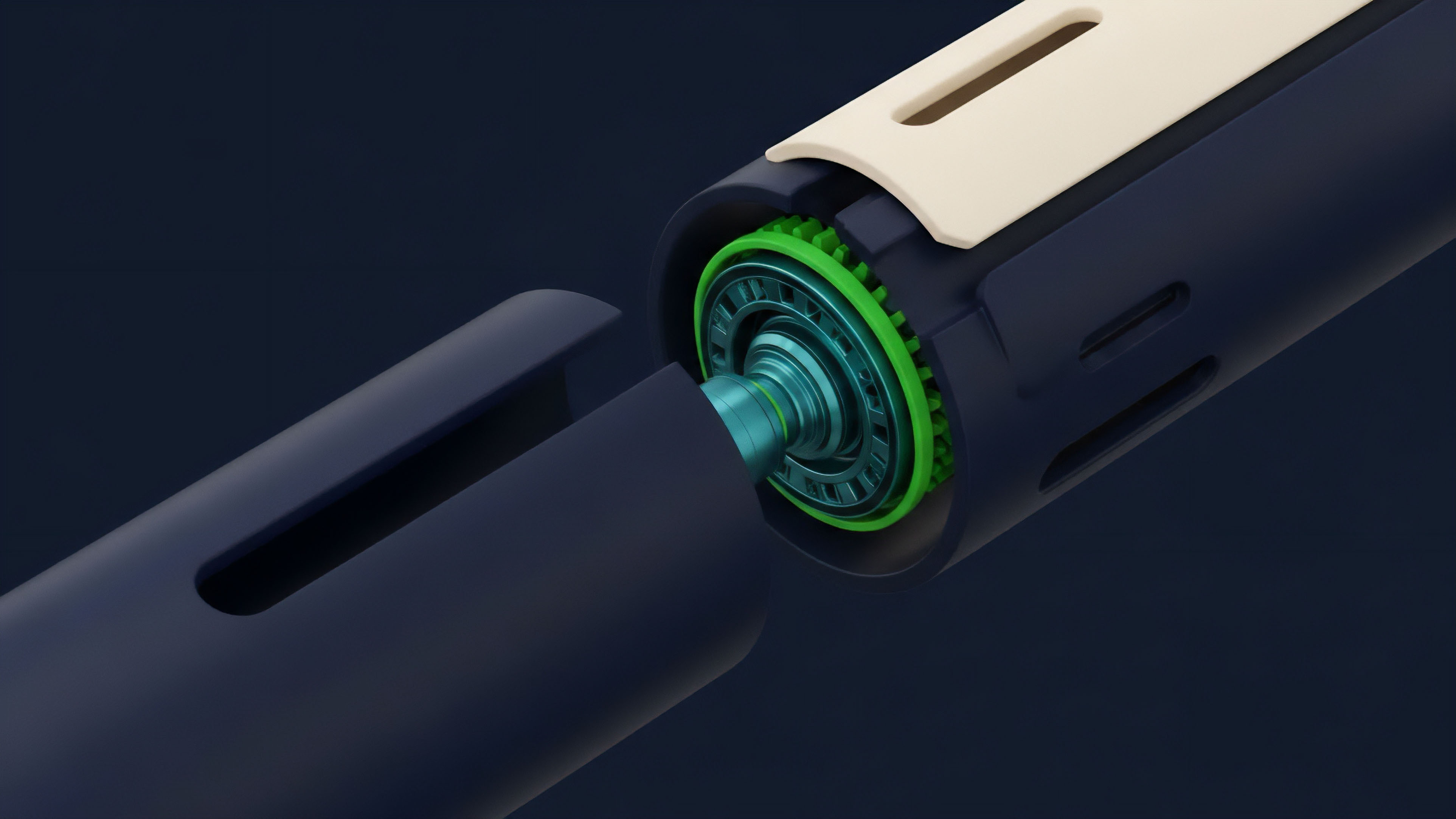

Oracle Design and Data Integrity

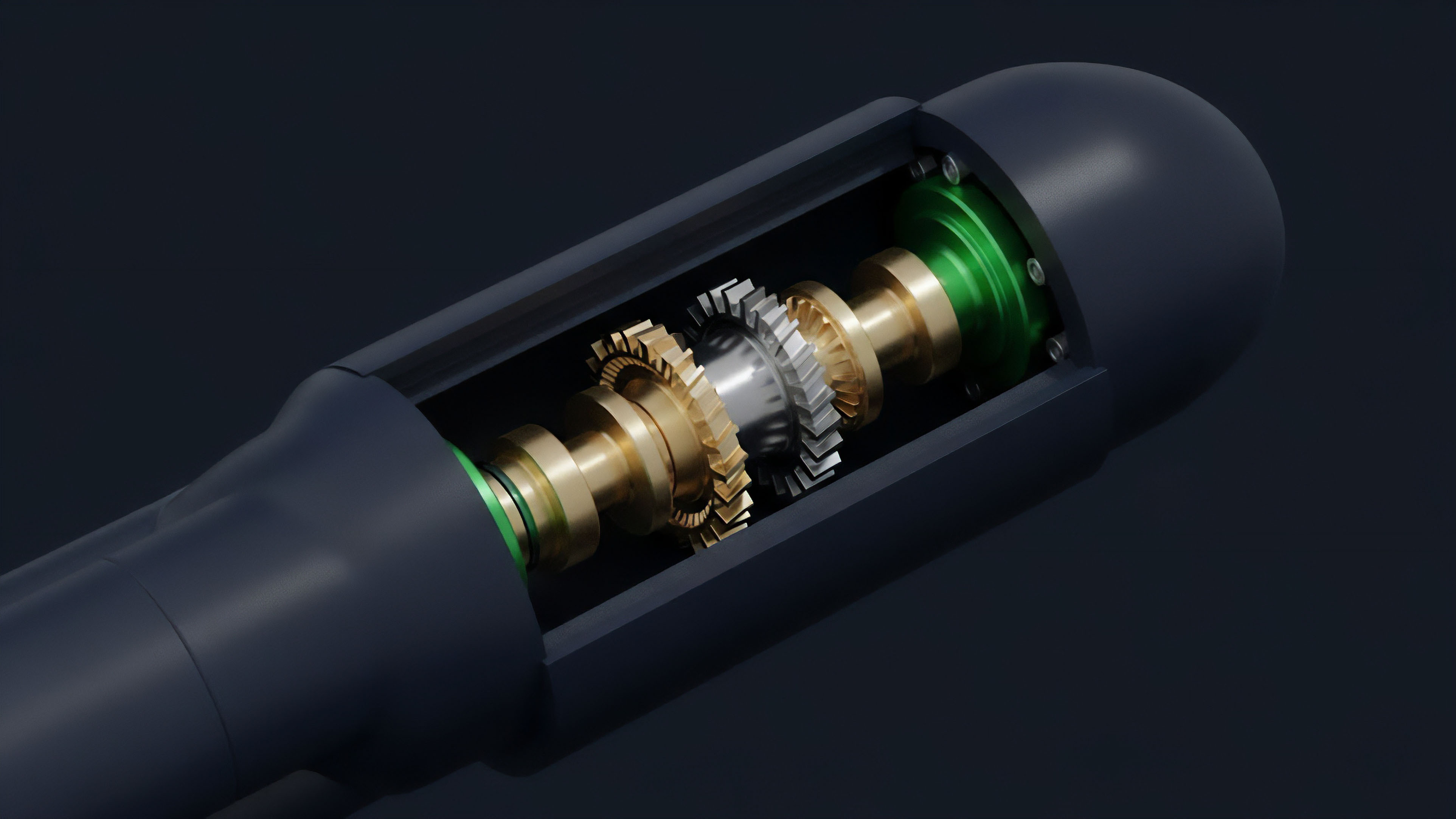

The choice of oracle design dictates the trade-offs in data quality. A simple, low-cost oracle might provide high timeliness but low resistance to manipulation. A complex, decentralized oracle network might offer high resistance to manipulation but suffer from higher latency and cost.

| Data Feed Type | Latency (Time to Update) | Resistance to Manipulation | Cost Model |
|---|---|---|---|
| Single Exchange Price Feed | Low (near real-time) | Low (vulnerable to flash loans) | Low (simple API call) |
| Simple Multi-Source Aggregation | Medium (aggregation delay) | Medium (requires larger attack capital) | Medium (multiple API calls) |
| Volume-Weighted Average Price (VWAP) | Medium/High (requires time window) | High (hard to manipulate across volume) | High (complex calculation) |
| Decentralized Oracle Network (DON) | Medium/High (consensus delay) | High (decentralized source verification) | High (incentivized nodes) |
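
The VWAP row of the table can be illustrated with a short function over the (price, volume) trades observed in one window. The trades are hypothetical. The point is that a manipulated print moves the average only in proportion to the volume traded at that price, so a large distortion requires large, costly trades.

```python
from typing import Sequence, Tuple


def vwap(trades: Sequence[Tuple[float, float]]) -> float:
    """Volume-weighted average price of (price, volume) trades in one window."""
    total_volume = sum(volume for _, volume in trades)
    if total_volume <= 0:
        raise ValueError("no volume in window")
    return sum(price * volume for price, volume in trades) / total_volume


# Hypothetical trades in one window: (price, volume).
honest = [(2000.0, 50.0), (2002.0, 40.0), (1998.0, 60.0)]
spiked = honest + [(4500.0, 1.0)]  # one small manipulated print

print(round(vwap(honest), 2), round(vwap(spiked), 2))
```

Adding one 1-unit trade at 4,500 to 150 units traded near 2,000 moves the VWAP from about 1,999.7 to about 2,016.3.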

Approach

Current approaches to ensuring data quality for crypto options focus on mitigating specific vulnerabilities and enhancing data robustness. The most common solution is the implementation of Time-Weighted Average Price (TWAP) and Volume-Weighted Average Price (VWAP) oracles. These mechanisms average prices over a window, weighting each observation either by how long it persisted (TWAP) or by the volume traded at it (VWAP). This smooths out temporary price spikes and makes manipulation significantly more expensive for attackers.

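A protocol relying on a TWAP oracle uses the average price over a period (e.g., 10 minutes) rather than the single, instantaneous price at the moment of execution. A flash loan must be borrowed and repaid within a single transaction, so any distortion it causes is visible only briefly and carries little weight in the average. This prevents flash loans from instantly manipulating the oracle feed.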
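
A time-weighted average can be sketched from a list of (timestamp, price) observations, treating each price as holding until the next observation. This is a minimal sketch, not any particular oracle's implementation. The 12-second block time and the single manipulated block are assumptions for illustration.

```python
from typing import Sequence, Tuple


def twap(observations: Sequence[Tuple[float, float]],
         window_start: float, window_end: float) -> float:
    """Time-weighted average price over [window_start, window_end].

    observations are (timestamp, price) pairs sorted by timestamp; each price
    is assumed to hold until the next observation (or the end of the window).
    """
    weighted_sum = 0.0
    covered = 0.0
    for i, (t, price) in enumerate(observations):
        t_next = observations[i + 1][0] if i + 1 < len(observations) else window_end
        seg_start = max(t, window_start)
        seg_end = min(t_next, window_end)
        if seg_end > seg_start:
            weighted_sum += price * (seg_end - seg_start)
            covered += seg_end - seg_start
    if covered == 0:
        raise ValueError("no observations inside the window")
    return weighted_sum / covered


# Hypothetical 10-minute (600 s) window with 12-second blocks: the price is
# pushed to 4,500 for one block at t=300 s and restored at t=312 s.
obs = [(0.0, 2000.0), (300.0, 4500.0), (312.0, 2000.0)]
print(twap(obs, 0.0, 600.0))
```

In this window, a price pushed from 2,000 to 4,500 for one 12-second block moves the TWAP only to 2,050. That is about a 2.5% change rather than 125%. An attacker who wants a larger effect must hold the distorted price across many blocks, which a single-transaction flash loan cannot do.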

For options protocols, the challenge extends to securing the volatility surface data. Since options pricing is highly sensitive to implied volatility, protocols cannot rely on simple spot price feeds. They must either calculate the implied volatility on-chain, which is computationally expensive, or use a dedicated volatility oracle.
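
Writing out the computation shows why on-chain implied volatility is expensive. There is no closed form, so the pricing formula has to be inverted numerically. The sketch below uses bisection for simplicity; Newton's method is a common faster alternative. Python floats stand in for the fixed-point arithmetic a smart contract would need. The Black-Scholes model and the contract parameters are assumptions carried over for illustration.

```python
import math
from typing import Tuple


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot: float, strike: float, t: float, vol: float, rate: float = 0.0) -> float:
    """Black-Scholes European call price (no dividends), t in years."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price: float, spot: float, strike: float, t: float, rate: float = 0.0,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8,
                max_iter: int = 200) -> Tuple[float, int]:
    """Invert the call price by bisection; returns (vol, iterations used).

    Works because the call price is strictly increasing in volatility.
    """
    if not (bs_call_price(spot, strike, t, lo, rate) <= price
            <= bs_call_price(spot, strike, t, hi, rate)):
        raise ValueError("price is outside the range spanned by [lo, hi]")
    iterations = 0
    while hi - lo > tol and iterations < max_iter:
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, t, mid, rate) < price:
            lo = mid  # model price too low -> volatility must be higher
        else:
            hi = mid
        iterations += 1
    return 0.5 * (lo + hi), iterations


# Hypothetical 30-day call, strike 2,200, spot 2,000, true volatility 65%.
t = 30 / 365
quote = bs_call_price(2000.0, 2200.0, t, 0.65)
vol, n = implied_vol(quote, 2000.0, 2200.0, t)
print(f"recovered vol={vol:.6f} after {n} iterations")
```

Recovering a 65% volatility to a tolerance of 1e-8 takes about 29 iterations. Each iteration evaluates a logarithm, exponentials, and the normal CDF, and the whole inversion has to be repeated for every strike and maturity on the surface.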

The current approach involves decentralized oracle networks that aggregate implied volatility data from multiple sources, including over-the-counter (OTC) desks and centralized exchanges. The protocol then uses this aggregated data to create a robust, verifiable volatility surface. This approach requires careful selection of data sources and a transparent methodology for weighting them.
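
One possible weighting methodology for a single point on the surface is a weighted median of the implied volatilities reported by each source. The weighted median is a choice made here, not the method the text prescribes. The source names, quotes, and weights are hypothetical; in practice, weights might come from liquidity, track record, or a governance vote.

```python
from typing import Sequence, Tuple


def weighted_median(quotes: Sequence[Tuple[float, float]]) -> float:
    """Lower weighted median of (implied_vol, weight) quotes; weights must be positive."""
    items = sorted(q for q in quotes if q[1] > 0)
    if not items:
        raise ValueError("no positively weighted quotes")
    total = sum(weight for _, weight in items)
    cumulative = 0.0
    for vol, weight in items:
        cumulative += weight
        if cumulative >= total / 2:
            return vol
    return items[-1][0]  # defensive fallback; not reached in practice


# Hypothetical quotes for one strike/maturity: (implied vol, source weight).
quotes = [
    (0.62, 0.4),  # exchange A
    (0.63, 0.3),  # exchange B
    (0.61, 0.2),  # OTC desk
    (1.50, 0.1),  # faulty or manipulated source
]
print(weighted_median(quotes))
```

A rogue source quoting 150% volatility with 10% of the weight leaves the result at 62%. A weighted mean of the same quotes would move to about 71%.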

- Multi-Source Aggregation: Collecting price data from numerous exchanges to prevent single-source manipulation.

- Temporal Smoothing: Using TWAP or VWAP to filter out short-term volatility and manipulation attempts.

- Off-Chain Computation Verification: Performing complex calculations, such as implied volatility, off-chain and then submitting a cryptographic proof to the chain for verification.

- Decentralized Governance: Allowing protocol participants to vote on which data sources are included in the oracle feed and how they are weighted.

Robust data quality requires a shift from instantaneous price snapshots to time-weighted or volume-weighted averages. This makes data manipulation far more expensive and, in many cases, economically unattractive for attackers.

Evolution

The evolution of data quality in crypto options reflects a move from simple price feeds to sophisticated, multi-dimensional data models. Early protocols prioritized speed and low cost, often at the expense of data integrity. The focus now is on building resilience against a more complex threat landscape.

The primary challenge in the current environment is the data-liquidation feedback loop. When market conditions become volatile, liquidity often dries up, making the price feeds less reliable. This unreliable data then triggers liquidations based on flawed prices, which further exacerbates the liquidity crisis.
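
The feedback loop can be illustrated with a deliberately crude model. Liquidated collateral is sold into the market, and each unit sold pushes the price down linearly in proportion to an assumed market depth. Everything here is hypothetical: the liquidation levels, the position sizes, the linear impact rule, and both depth values. The sketch only shows how the same starting price produces very different outcomes when liquidity is thin.

```python
from typing import List, Tuple


def cascade(price: float, positions: List[Tuple[float, float]], depth: float,
            max_rounds: int = 100) -> Tuple[float, int]:
    """Toy liquidation cascade.

    positions: (liquidation_price, collateral_units_sold_on_liquidation).
    Each round, every position whose liquidation price is at or above the
    current price is liquidated; the units sold move the price down by
    sold / depth (linear impact). Returns (final_price, positions_liquidated).
    """
    remaining = list(positions)
    liquidated = 0
    for _ in range(max_rounds):
        triggered = [(lp, size) for lp, size in remaining if price <= lp]
        if not triggered:
            break
        remaining = [(lp, size) for lp, size in remaining if price > lp]
        sold = sum(size for _, size in triggered)
        liquidated += len(triggered)
        price *= max(0.0, 1.0 - sold / depth)
    return price, liquidated


# Hypothetical book: five positions of 10 units each, liquidating between 1,990 and 1,800.
book = [(1990.0, 10.0), (1950.0, 10.0), (1900.0, 10.0), (1850.0, 10.0), (1800.0, 10.0)]
print(cascade(1980.0, book, depth=10_000.0))  # deep market
print(cascade(1980.0, book, depth=200.0))     # thin market
```

Starting from a price of 1,980, the deep market (depth 10,000) liquidates one position and settles near 1,978. The thin market (depth 200) liquidates all five positions and ends near 1,524.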

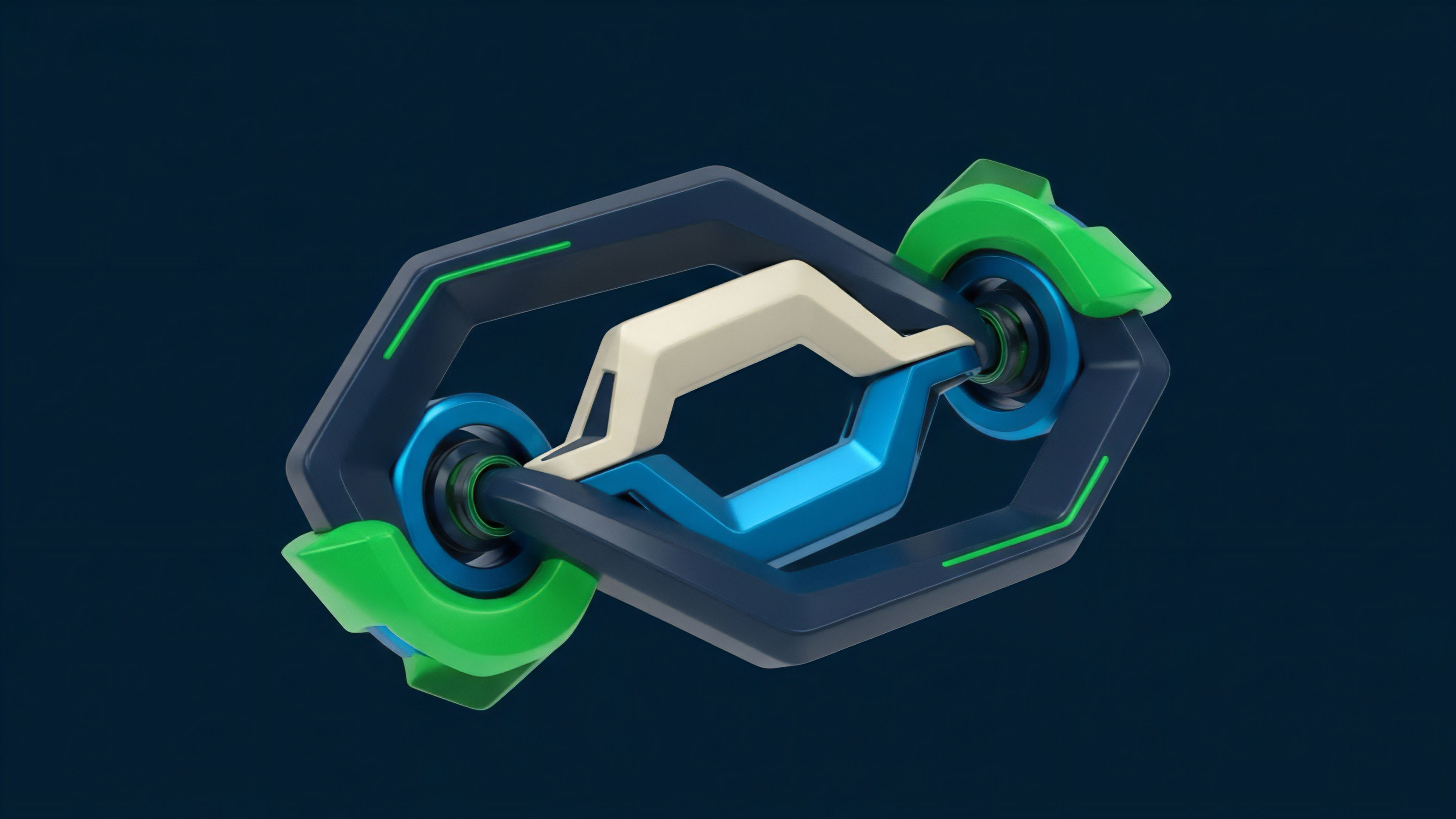

The evolution of options protocols is about breaking this loop by building in data resilience. The next phase of evolution involves addressing the fragmentation of data across different layers and blockchains. As options protocols expand from Layer 1 to Layer 2 solutions and other chains, the data integrity challenge becomes considerably more complex, because each additional chain adds its own update latency and delivery path.

A single oracle network must now reliably aggregate data from multiple chains, ensuring consistency and preventing cross-chain arbitrage based on data latency. This requires a new architecture for data distribution, often involving dedicated messaging protocols and cross-chain communication layers. The most advanced protocols are moving toward off-chain data computation.
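
A basic cross-chain consistency check compares the latest report for the same asset on each chain. It flags both price divergence and timestamp skew. The chain labels, the tolerances (0.5% price spread, 30 seconds of skew), and the report values are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class ChainReport:
    chain: str
    price: float
    timestamp: float  # Unix seconds when the value was reported on that chain


def consistency_problems(reports: Sequence[ChainReport],
                         max_rel_spread: float = 0.005,
                         max_skew_s: float = 30.0) -> List[str]:
    """Return the checks that fail across chains; an empty list means consistent."""
    prices = [r.price for r in reports]
    times = [r.timestamp for r in reports]
    problems = []
    if (max(prices) - min(prices)) / min(prices) > max_rel_spread:
        problems.append("price spread")
    if max(times) - min(times) > max_skew_s:
        problems.append("timestamp skew")
    return problems


fresh = [ChainReport("chain-a", 2000.0, 100.0),
         ChainReport("chain-b", 2003.0, 110.0),
         ChainReport("chain-c", 2001.0, 105.0)]
lagging = fresh[:2] + [ChainReport("chain-c", 1950.0, 40.0)]
print(consistency_problems(fresh))
print(consistency_problems(lagging))
```

A report of 1,950 that is 70 seconds behind the newest report trips both checks. A protocol could treat this as a signal to pause cross-chain settlement rather than let traders arbitrage the stale value.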

Rather than calculating complex metrics like implied volatility on-chain, which is expensive and slow, they perform these calculations off-chain using dedicated data providers. The resulting data point is then submitted to the blockchain together with a cryptographic proof or signatures that attest to its provenance and integrity. This establishes who produced the value and that it was not altered, although it cannot by itself guarantee that the underlying market inputs were accurate. The approach lets protocols access a high-fidelity volatility surface without incurring high gas costs or compromising on decentralization.

The evolution is moving toward a separation of data processing from data verification.
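
The separation of processing from verification can be sketched in two steps. Off-chain nodes compute a value and attach an authentication tag. The consumer then only checks the tags against a quorum. HMAC-SHA256 serves here purely as a standard-library stand-in. A real on-chain verifier cannot hold shared secrets and would instead check public-key signatures or another cryptographic proof. The node names, keys, report fields, and quorum are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Dict


def encode_report(report: dict) -> bytes:
    """Canonical byte encoding so every node signs exactly the same message."""
    return json.dumps(report, sort_keys=True, separators=(",", ":")).encode()


def sign_report(report: dict, key: bytes) -> str:
    # Stand-in for a node's signature: HMAC-SHA256 over the canonical encoding.
    return hmac.new(key, encode_report(report), hashlib.sha256).hexdigest()


def valid_signers(report: dict, tags: Dict[str, str], keys: Dict[str, bytes]) -> int:
    """Count nodes whose tag matches the report (constant-time comparison)."""
    return sum(
        1 for node, tag in tags.items()
        if node in keys and hmac.compare_digest(sign_report(report, keys[node]), tag)
    )


def accept(report: dict, tags: Dict[str, str], keys: Dict[str, bytes], quorum: int) -> bool:
    return valid_signers(report, tags, keys) >= quorum


# Off-chain processing: hypothetical nodes compute the value and sign it.
keys = {"node-a": b"key-a", "node-b": b"key-b", "node-c": b"key-c"}
report = {"asset": "ETH", "strike": 2200, "expiry_days": 30, "iv": 0.65}
tags = {node: sign_report(report, key) for node, key in keys.items()}

# Verification: the consumer checks tags only, without recomputing the volatility.
print(accept(report, tags, keys, quorum=2))
tampered = dict(report, iv=0.90)
print(accept(tampered, tags, keys, quorum=2))
```

Any change to the report after signing, such as editing the volatility field, invalidates every tag. The consumer can therefore reject it without redoing the volatility calculation itself.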

| Data Quality Challenge | Early Solution (2020-2021) | Current Solution (2022-Present) |
|---|---|---|
| Price Manipulation Risk | Simple multi-source aggregation | TWAP/VWAP oracles and decentralized oracle networks |
| Volatility Data Integrity | Static or off-chain data feeds | On-chain volatility surfaces derived from aggregated data |
| Liquidity Fragmentation | Single chain data sourcing | Cross-chain data aggregation and messaging protocols |
| MEV Exploitation | Largely unaddressed (front-running opportunities) | TWAP/VWAP integration and MEV-resistant oracle design |

Horizon

The future of data quality for crypto options will be defined by the integration of real-time volatility surfaces into smart contracts. The current reliance on TWAP/VWAP oracles, while effective against flash loan attacks, still provides a lagging indicator of market conditions. The next generation of options protocols requires a data feed that can accurately reflect the dynamic changes in implied volatility, particularly during periods of high market stress.

This will require the development of decentralized volatility oracles that source data from multiple options markets, calculate a robust implied volatility surface off-chain, and deliver a verifiable result on-chain with low latency. The challenge of data quality will also merge with the challenge of Maximal Extractable Value (MEV). Data latency creates opportunities for front-running, where a sophisticated actor can observe a data update in the mempool and execute a trade based on that information before the protocol processes it.

Future data quality solutions must be designed to mitigate MEV by ensuring data updates are bundled securely and processed fairly, potentially through private transaction relays or other MEV-resistant architectures.

The next generation of options protocols will require data solutions capable of feeding complex, real-time volatility surfaces directly into smart contracts, moving beyond simple price feeds.

Ultimately, the goal is to create a data layer for options that is as robust and reliable as that of traditional finance, but without sacrificing decentralization. This requires a holistic approach to data quality that considers not just the price of the underlying asset, but also liquidity depth, volatility skew, and cross-chain consistency. The future data architecture must be able to support exotic derivatives and complex risk management strategies in a truly permissionless environment. The data layer will determine whether decentralized options protocols can scale to compete with centralized exchanges on a global level.
