Essence

Financial data integrity represents the core challenge in constructing robust decentralized financial systems. For crypto options, it refers to the accuracy, consistency, and reliability of the data inputs that govern every aspect of the derivative lifecycle. This includes the underlying asset price, implied volatility, interest rates, and other variables required for accurate pricing and risk management.

Without verifiable data integrity, the entire system collapses into a form of trust-based, centralized finance, undermining the core ethos of decentralization. The integrity of the data stream determines the validity of all subsequent financial calculations. A faulty price feed for the underlying asset, for instance, does not break the Black-Scholes model itself; it supplies the model with a wrong spot input, so every valuation derived from that input is wrong as well. The result can be cascading liquidations or protocol insolvency.

The fundamental issue in decentralized finance is the “oracle problem,” which necessitates a mechanism to securely bridge off-chain data into the on-chain environment.

Financial data integrity in crypto options defines the reliability of pricing inputs, determining whether a derivative contract can be settled correctly and without manipulation.

The system’s integrity must withstand adversarial attacks, where participants attempt to manipulate data feeds to trigger profitable liquidations or execute arbitrage strategies at the expense of other users or the protocol’s insurance fund. This requirement for trustless data validation forces protocols to move beyond simple data aggregation and into sophisticated, game-theoretic designs. The data itself is not inherently trustworthy; its integrity must be enforced by economic incentives and cryptographic verification.

This creates a complex relationship between the data source, the oracle mechanism, and the financial product itself, where the weakest link determines the entire system’s security profile.

Origin

The concept of data integrity in financial markets originates in traditional finance, where regulated exchanges and centralized data providers like Bloomberg or Reuters serve as authoritative sources. In this model, integrity is enforced through legal frameworks, regulatory oversight, and physical security.

The transition to decentralized finance introduced a fundamental conflict: how to maintain data integrity when removing the central authority. Early decentralized applications (dApps) relied on single, centralized data feeds. These feeds quickly proved to be single points of failure, vulnerable to manipulation during periods of low on-chain liquidity.

The first generation of decentralized derivatives protocols faced significant challenges related to price feed manipulation. The initial solutions were often simplistic, pulling data directly from a single venue, such as one exchange’s API or one on-chain liquidity pool. This led to incidents in which attackers used flash loans to move the spot price on that single venue and trigger liquidations on a derivatives protocol at an incorrect price.

The response to these attacks established the need for a robust, decentralized oracle solution. The solution required a system where data was not simply provided by one source, but rather aggregated and validated by multiple independent entities. This shift marked the transition from data integrity as a regulatory requirement to data integrity as a core cryptographic and economic design challenge.

The core innovation came with the introduction of decentralized oracle networks. These networks created an economic incentive layer for data providers to report accurate information. The design philosophy behind these networks recognized that data integrity cannot be assumed in an adversarial environment; it must be actively enforced through mechanisms that penalize bad actors and reward honest participation. This move away from centralized trust to distributed, economically incentivized verification represents the core origin story of data integrity in crypto derivatives.

Theory

The theoretical foundation of financial data integrity in crypto options centers on two primary challenges: the limitations of traditional pricing models in a decentralized context and the game theory of oracle design. Traditional models like Black-Scholes assume continuous trading and a continuously observable price for the underlying asset. In decentralized finance, price feeds update at discrete intervals and are subject to latency. This creates a fundamental disconnect between the model’s assumptions and the reality of the on-chain environment.

Data Latency and Model Invalidation

The core issue with data latency is that the time between a price update on a decentralized exchange and its propagation through an oracle network can be exploited. This time window creates a “stale data” problem. An option pricing model using stale data will misprice the derivative and miscalculate its Greeks, the sensitivities that drive hedging decisions.

For example, a significant price movement in the underlying asset might not be immediately reflected in the oracle feed. A trader with access to real-time market data could use this latency to trade against the protocol at an incorrect price. This vulnerability is particularly acute for options with high gamma exposure, where small changes in the underlying price lead to large changes in the option’s delta.

The protocol’s risk engine, operating on stale data, fails to hedge correctly, leading to potential insolvency.
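The effect of a stale feed on a high-gamma position can be illustrated with a minimal sketch. It assumes a European call priced with Black-Scholes and no dividends; the strike, volatility, expiry, and both spot prices are hypothetical values chosen only to make the gap visible.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF computed from the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_delta(spot: float, strike: float, vol: float,
                  rate: float, t_years: float) -> float:
    """Black-Scholes delta of a European call on a non-dividend asset."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (
        vol * math.sqrt(t_years)
    )
    return norm_cdf(d1)


# Hypothetical inputs: an at-the-money call with one day to expiry,
# which is where gamma is highest.
strike = 2000.0
vol = 0.80
rate = 0.0
t_years = 1.0 / 365.0

stale_spot = 2000.0  # last price the oracle reported (assumed)
live_spot = 2060.0   # current market price, 3% higher (assumed)

stale_delta = bs_call_delta(stale_spot, strike, vol, rate, t_years)
live_delta = bs_call_delta(live_spot, strike, vol, rate, t_years)

# The risk engine hedges to stale_delta while the true exposure is live_delta.
hedge_error = live_delta - stale_delta
print(f"stale delta={stale_delta:.3f} live delta={live_delta:.3f} "
      f"unhedged delta={hedge_error:.3f}")
```

With these assumed inputs a 3% move that the oracle has not yet reported shifts delta from about 0.51 to about 0.77, so roughly a quarter of the position’s exposure is left unhedged until the feed catches up.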

Oracle Game Theory and Economic Security

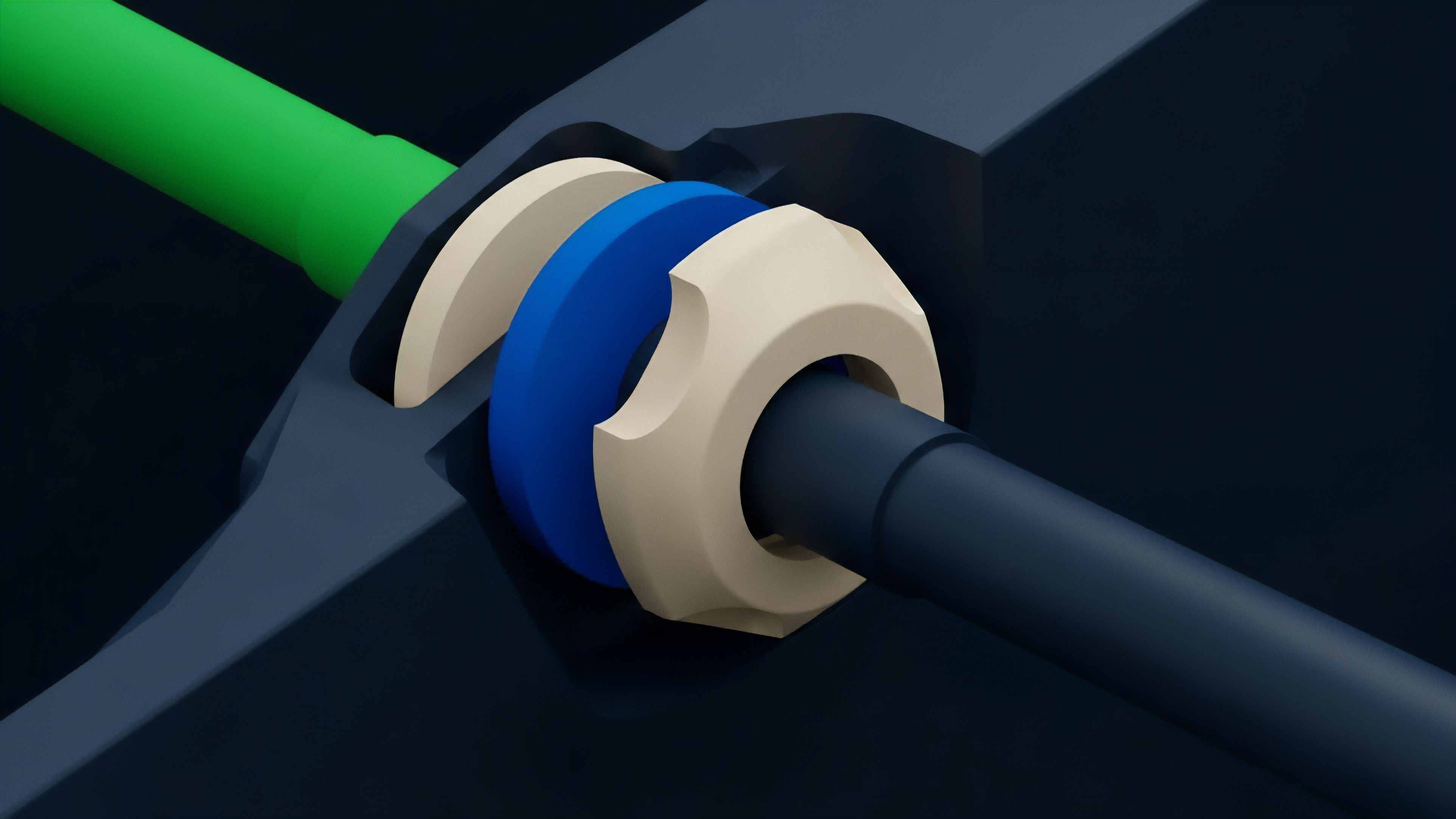

The integrity of the data stream is secured through a game-theoretic design that makes manipulation economically prohibitive. A robust oracle system must aggregate data from multiple independent sources. The system must also implement a mechanism for dispute resolution.

If a data provider submits a malicious or inaccurate price, other participants must have the ability to challenge this data. The economic security of the oracle network is derived from the cost required to successfully corrupt the data feed. This cost must be higher than the potential profit derived from manipulating the derivatives protocol that relies on the feed.
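The cost-versus-profit condition can be sketched as a simple check. This is a simplification under stated assumptions: the feed is a median over equally staked providers, an attacker must control a strict majority of them, and the only cost of corruption is the stake put at risk. The function names and all figures are hypothetical.

```python
def majority_corruption_cost(stake_per_provider: float, providers: int) -> float:
    """Stake an attacker must put at risk to control a strict majority.

    Assumes a median-based feed with equal stakes, so moving the median
    requires more than half of the reporters.
    """
    needed = providers // 2 + 1
    return needed * stake_per_provider


def is_economically_secure(corruption_cost: float, extractable_value: float) -> bool:
    """The feed is considered secure only if corrupting it costs more than it pays."""
    return corruption_cost > extractable_value


# Hypothetical network: 21 providers, each staking 50,000 units.
cost = majority_corruption_cost(stake_per_provider=50_000, providers=21)

# Hypothetical profit an attacker could extract from the derivatives protocol.
print(is_economically_secure(cost, extractable_value=400_000))
print(is_economically_secure(cost, extractable_value=2_000_000))
```

The second call shows why the check has to be re-evaluated as a protocol grows: the same oracle that is safe for a small options market can become underpriced security once the value that depends on it exceeds the stake behind it.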

| Oracle Design Principle | Traditional Finance Analogy | DeFi Implementation Challenge |
|---|---|---|
| Source Diversity | Multiple exchanges, brokers | Aggregating data from on-chain and off-chain sources without a central authority |
| Dispute Resolution | Regulatory bodies, arbitration | Creating a decentralized, economically-incentivized mechanism for challenging data accuracy |
| Liveness & Latency | Real-time streaming data feeds | Balancing update frequency with gas costs and network congestion |
| Collateralization | Insurance funds, counterparty risk | Ensuring sufficient collateral to cover potential losses from oracle failure |

Approach

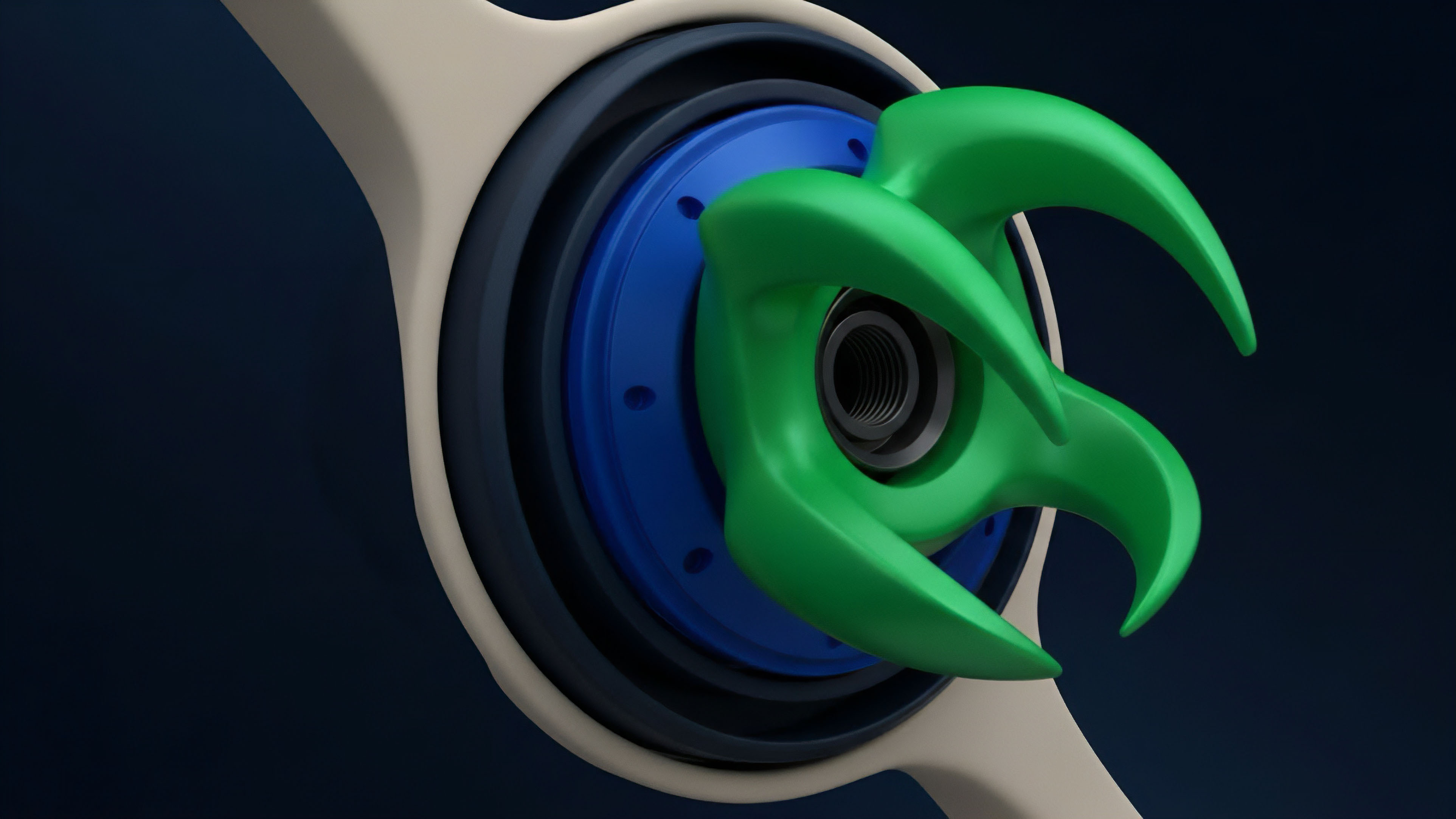

Current approaches to financial data integrity prioritize two key strategies: source aggregation and economic incentives. The objective is to ensure that no single data provider can unilaterally influence the outcome of a derivative contract settlement. This requires a shift from relying on a single price feed to a consensus mechanism across multiple feeds.

Decentralized Data Aggregation

The standard approach involves using decentralized oracle networks to aggregate price data from various sources. These sources typically include major centralized exchanges (CEXs) and high-liquidity decentralized exchanges (DEXs). The oracle network then calculates a median or volume-weighted average price (VWAP) from these sources.

This method creates a “cost of attack” by forcing a malicious actor to manipulate prices across multiple exchanges simultaneously, which raises the capital required and makes the attack far more expensive than manipulating a single venue. The protocol design must also account for liquidity fragmentation, where the same asset trades at different prices across different venues. The aggregation mechanism must filter out outliers and sources with insufficient liquidity to prevent manipulation.
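A minimal sketch of this kind of aggregation is shown below. It assumes each source reports a price together with a liquidity figure, drops sources below a liquidity floor, discards quotes that sit too far from the preliminary median, and returns the median of what remains. The function name, thresholds, and quotes are hypothetical and not taken from any particular oracle network.

```python
from statistics import median


def aggregate_price(quotes: list[tuple[float, float]],
                    min_liquidity: float,
                    max_deviation: float) -> float:
    """Median of quotes after a liquidity filter and an outlier filter.

    quotes: (price, liquidity) pairs, one per source.
    max_deviation: largest allowed relative distance from the preliminary median.
    """
    liquid = [price for price, liquidity in quotes if liquidity >= min_liquidity]
    if not liquid:
        raise ValueError("no source meets the liquidity floor")

    preliminary = median(liquid)
    kept = [p for p in liquid if abs(p - preliminary) / preliminary <= max_deviation]
    if not kept:
        raise ValueError("sources disagree too widely to form a price")
    return median(kept)


# Hypothetical quotes: three consistent venues, one manipulated venue,
# and one thin pool that should never influence the result.
quotes = [
    (2001.0, 5_000_000),
    (1999.5, 8_000_000),
    (2000.5, 3_000_000),
    (2600.0, 4_000_000),  # manipulated price on a liquid venue
    (1500.0, 20_000),     # illiquid pool
]
price = aggregate_price(quotes, min_liquidity=1_000_000, max_deviation=0.02)
print(price)
```

Here the illiquid pool is removed by the liquidity floor and the manipulated venue by the deviation filter, so the result is 2000.5. Raising an error when the surviving set is empty is a deliberate choice in this sketch: refusing to publish is safer than publishing a price no source agrees on.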

Robust data aggregation in decentralized finance ensures that a price feed reflects global market consensus, not isolated liquidity pools.

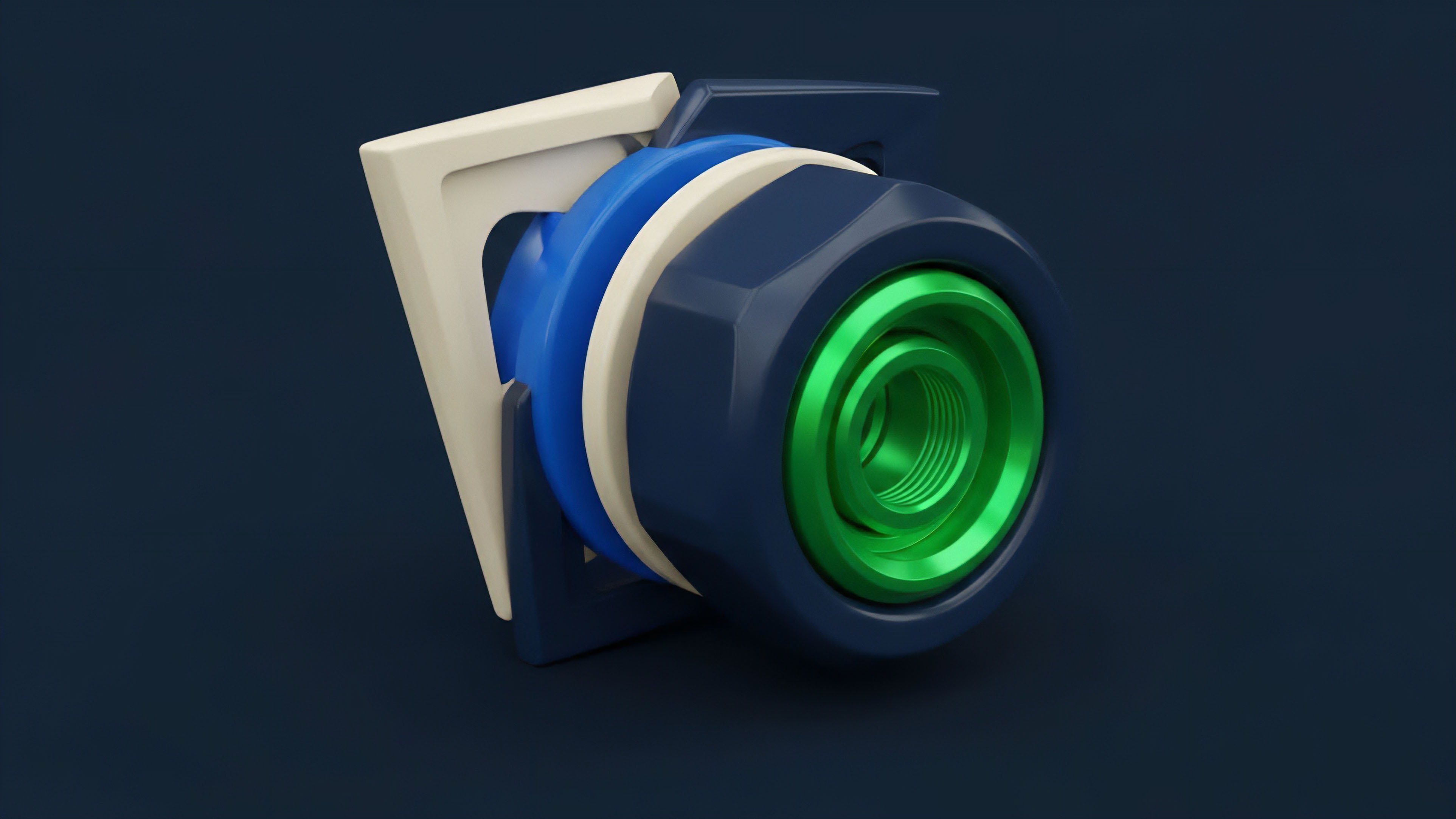

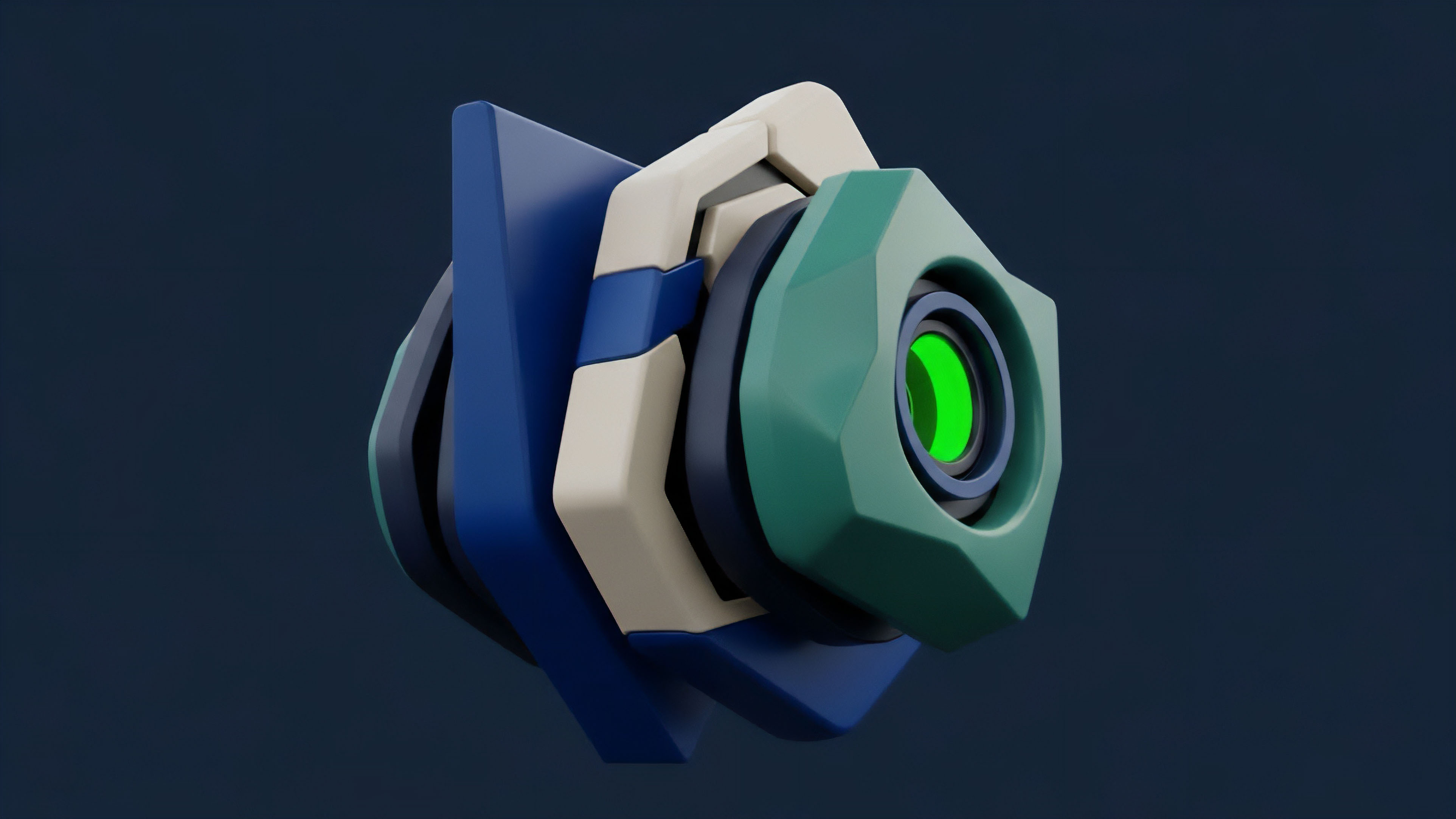

Incentive Mechanisms and Slashing

The integrity of the data is further secured by economic incentives. Data providers stake collateral to participate in the oracle network. If a provider submits inaccurate data, they face slashing: the loss of their staked collateral.

This creates a powerful financial disincentive against malicious behavior. Conversely, honest data providers receive rewards for accurately reporting prices. This system of rewards and penalties ensures that honest behavior is economically rational, even in an adversarial environment.
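The reward-and-penalty loop can be sketched in a few lines. This is an illustrative model only: it assumes a reference price is already agreed for the round (for example, the aggregated median), and the tolerance, slash fraction, and reward are hypothetical parameters.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    name: str
    stake: float


def settle_round(providers: list[Provider],
                 reports: dict[str, float],
                 reference_price: float,
                 tolerance: float,
                 slash_fraction: float,
                 reward: float) -> None:
    """Slash providers whose report deviates beyond tolerance; reward the rest."""
    for provider in providers:
        deviation = abs(reports[provider.name] - reference_price) / reference_price
        if deviation > tolerance:
            provider.stake -= provider.stake * slash_fraction
        else:
            provider.stake += reward


# Hypothetical round: alice reports honestly, bob reports 15% too high.
providers = [Provider("alice", 1000.0), Provider("bob", 1000.0)]
reports = {"alice": 2000.0, "bob": 2300.0}

settle_round(providers, reports, reference_price=2000.0,
             tolerance=0.01, slash_fraction=0.5, reward=5.0)

for provider in providers:
    print(provider.name, provider.stake)
```

After the round alice holds 1005.0 and bob holds 500.0. The asymmetry is the point: a single dishonest report costs far more than many honest rounds earn.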

The protocol’s risk engine must be designed to pause operations or increase margin requirements if the oracle data deviates significantly from expected values, providing a circuit breaker against potential manipulation.
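A circuit breaker of this kind might look like the sketch below. It assumes the protocol keeps some expected value to compare against, such as a time-weighted average of recent oracle prices, and uses two hypothetical thresholds: one that raises margin requirements and a larger one that pauses operations.

```python
from enum import Enum


class Action(Enum):
    NORMAL = "normal"
    RAISE_MARGIN = "raise_margin"
    PAUSE = "pause"


def circuit_breaker(oracle_price: float,
                    expected_price: float,
                    warn_threshold: float,
                    halt_threshold: float) -> Action:
    """Map the relative deviation of the oracle price to a protective action."""
    deviation = abs(oracle_price - expected_price) / expected_price
    if deviation >= halt_threshold:
        return Action.PAUSE
    if deviation >= warn_threshold:
        return Action.RAISE_MARGIN
    return Action.NORMAL


# Hypothetical thresholds: 2% raises margin, 10% pauses the protocol.
for observed in (2005.0, 2100.0, 2400.0):
    action = circuit_breaker(observed, expected_price=2000.0,
                             warn_threshold=0.02, halt_threshold=0.10)
    print(observed, action.value)
```

The graduated response reflects a trade-off: pausing on every small deviation would halt trading during ordinary volatility, while never pausing leaves the protocol exposed to a corrupted feed.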

The implementation of a decentralized oracle system requires careful consideration of the trade-off between update frequency and network costs. High-frequency updates provide greater accuracy for short-term options but incur higher gas costs on the blockchain. Low-frequency updates reduce costs but increase the risk of stale data being exploited.

The optimal approach depends on the specific derivatives product being offered, with perpetual futures often requiring higher frequency updates than long-dated options.
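One common way to manage this trade-off, not named in the text above and shown here only as an assumption, is to publish an on-chain update when the price has moved by more than a deviation threshold or when a maximum interval (a heartbeat) has elapsed. The parameter values below are hypothetical.

```python
def should_push_update(last_price: float,
                       new_price: float,
                       seconds_since_update: float,
                       deviation_threshold: float,
                       heartbeat_seconds: float) -> bool:
    """Decide whether paying gas for a new on-chain price is justified."""
    moved_enough = abs(new_price - last_price) / last_price >= deviation_threshold
    too_old = seconds_since_update >= heartbeat_seconds
    return moved_enough or too_old


# Hypothetical policy: update on a 0.5% move or at least once per hour.
print(should_push_update(2000.0, 2004.0, 30, 0.005, 3600))    # small move, fresh
print(should_push_update(2000.0, 2020.0, 30, 0.005, 3600))    # 1% move
print(should_push_update(2000.0, 2001.0, 3600, 0.005, 3600))  # heartbeat expired
```

Under this policy a product that is sensitive to stale prices would use a tighter deviation threshold and a shorter heartbeat, accepting higher gas costs, while a long-dated option could tolerate looser settings.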

Evolution

The evolution of financial data integrity in crypto options has been a continuous response to adversarial market conditions and the limitations of initial designs. Early protocols focused on a single price feed for the underlying asset, which proved insufficient for complex derivatives. The shift has been toward a holistic approach that incorporates multiple data points and a deeper understanding of market microstructure.

Beyond Simple Price Feeds

Initial data integrity efforts centered on the spot price of the underlying asset. However, options pricing requires more than just the spot price; it requires a reliable measure of implied volatility. The next generation of protocols recognized this need and began integrating data feeds for volatility surfaces.

This involved creating oracles that could accurately calculate and verify the implied volatility for different strike prices and maturities. This advanced data requirement introduced new complexities, as implied volatility itself is derived from market prices and can be manipulated. The solutions evolved to include mechanisms that cross-validate implied volatility data against historical volatility and other market metrics.
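A cross-validation step of this kind can be sketched as a plausibility check of reported implied volatility against realized volatility. The sketch assumes daily closing prices, annualization over 365 days, and an acceptance band for the ratio of implied to realized volatility; the band, the price series, and the function names are all hypothetical.

```python
import math
from statistics import stdev


def realized_vol(prices: list[float], periods_per_year: int) -> float:
    """Annualized standard deviation of log returns."""
    log_returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return stdev(log_returns) * math.sqrt(periods_per_year)


def iv_is_plausible(implied_vol: float,
                    prices: list[float],
                    periods_per_year: int,
                    min_ratio: float,
                    max_ratio: float) -> bool:
    """Accept an implied vol only if it stays within a band around realized vol."""
    rv = realized_vol(prices, periods_per_year)
    if rv == 0.0:
        return False  # no price movement to compare against
    return min_ratio <= implied_vol / rv <= max_ratio


# Hypothetical daily closes for the underlying asset.
closes = [2000.0, 2040.0, 1990.0, 2030.0, 2010.0, 2060.0, 2020.0]
rv = realized_vol(closes, periods_per_year=365)
print(f"realized vol: {rv:.2f}")

print(iv_is_plausible(0.50, closes, 365, min_ratio=0.5, max_ratio=2.0))
print(iv_is_plausible(2.50, closes, 365, min_ratio=0.5, max_ratio=2.0))
```

Implied volatility legitimately differs from realized volatility, so the band has to be wide; the check is meant to catch values that are implausible, not to replace the market’s own estimate.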

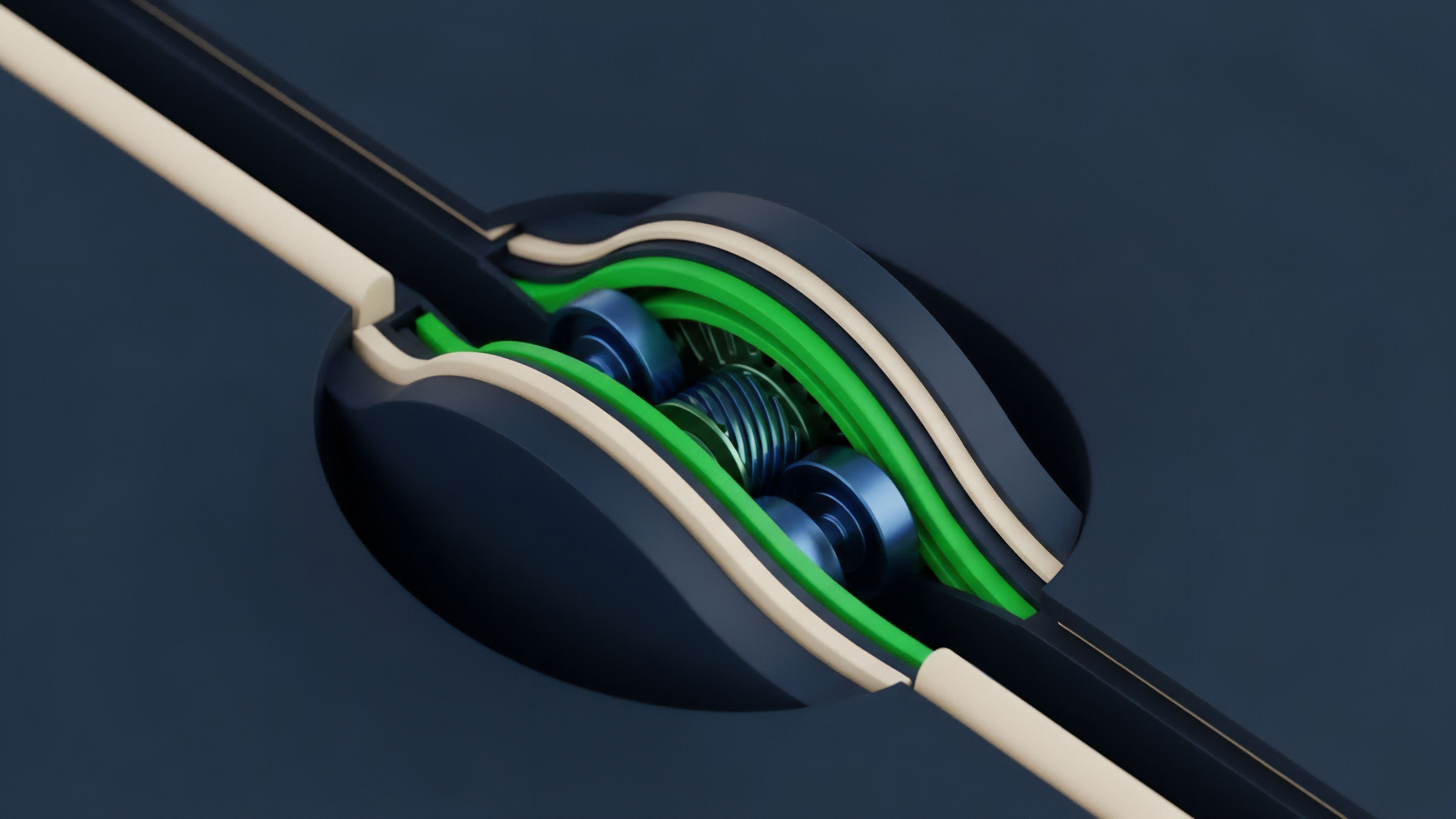

The Role of Market Microstructure

The integrity of financial data is not only about the price itself but also about the context in which that price is generated. The evolution of data integrity has led to a focus on market microstructure: the study of order flow, liquidity depth, and trading mechanisms. Protocols now recognize that a price from a low-liquidity exchange is less reliable than a price from a high-liquidity venue.

Data integrity solutions have adapted by incorporating liquidity filters and volume-weighted averages, effectively prioritizing data sources based on their depth. A price manipulation attack then requires significant capital to execute, which is intended to make it unprofitable.
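The combination of a liquidity filter and a volume-weighted average can be sketched as follows. It assumes each venue reports a traded price and its executed volume over the same window; the minimum volume and the sample figures are hypothetical.

```python
def filtered_vwap(venues: list[tuple[float, float]], min_volume: float) -> float:
    """Volume-weighted average price over venues that pass a volume floor.

    venues: (price, executed_volume) pairs for the same time window.
    """
    eligible = [(price, volume) for price, volume in venues if volume >= min_volume]
    total_volume = sum(volume for _, volume in eligible)
    if total_volume == 0:
        raise ValueError("no venue meets the volume floor")
    return sum(price * volume for price, volume in eligible) / total_volume


# Hypothetical window: two deep venues and one thin venue printing a distorted price.
venues = [
    (2000.0, 600.0),
    (2002.0, 400.0),
    (2500.0, 5.0),  # thin venue, cheap to manipulate
]
vwap = filtered_vwap(venues, min_volume=100.0)
print(round(vwap, 2))
```

With the thin venue excluded the result is about 2000.8. Even without the filter, volume weighting would limit that venue’s influence, but the floor removes it entirely, so an attacker has to trade real size on a deep venue to move the feed.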

The challenge of data integrity in options protocols has evolved from preventing simple price manipulation to preventing sophisticated volatility manipulation. Attackers can attempt to manipulate implied volatility by placing large, unexecuted orders on a specific strike price, artificially inflating the volatility surface. The most advanced oracle designs now account for this by filtering out illiquid order book data and focusing on executed trade prices from high-volume venues.

Horizon

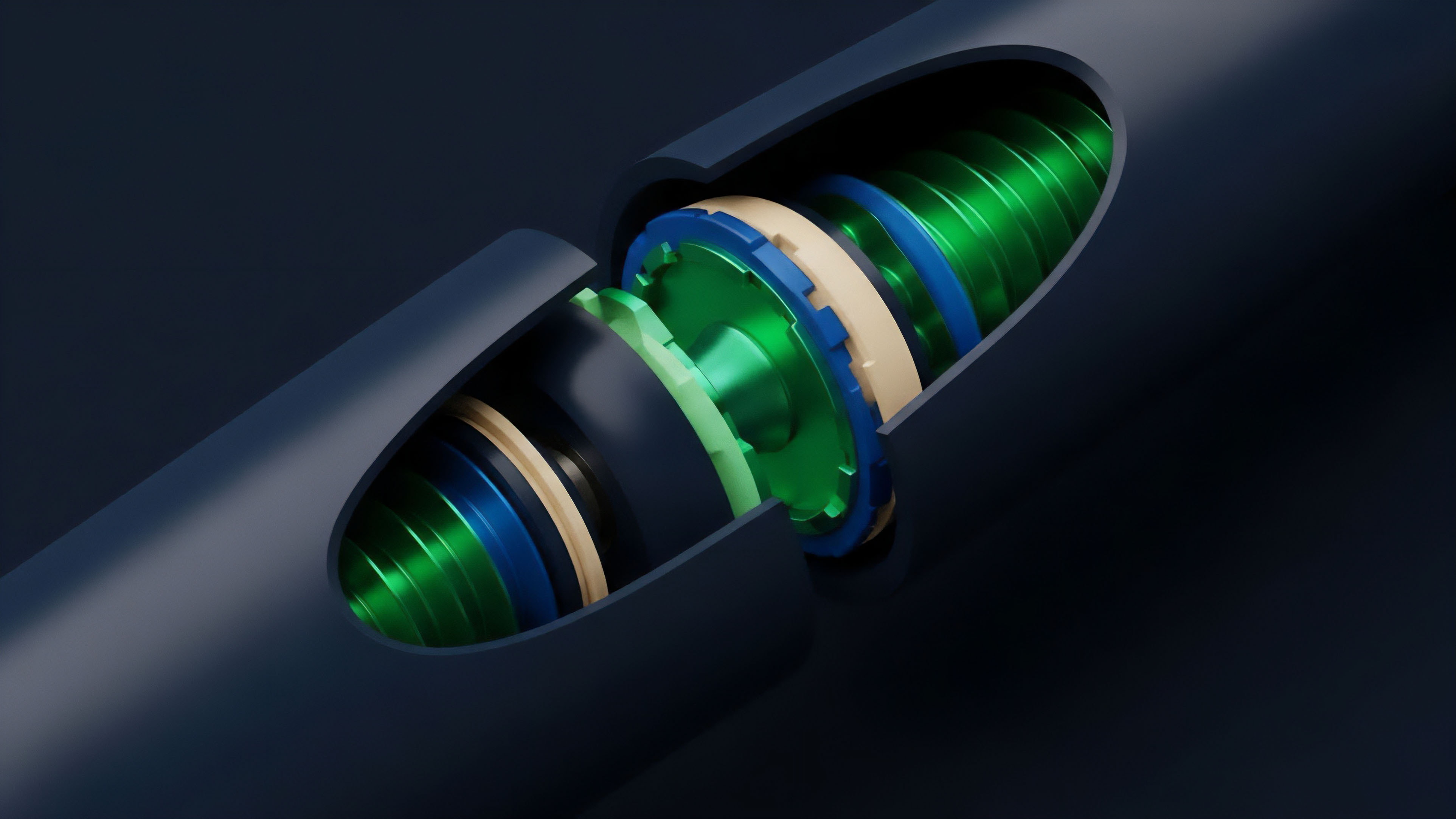

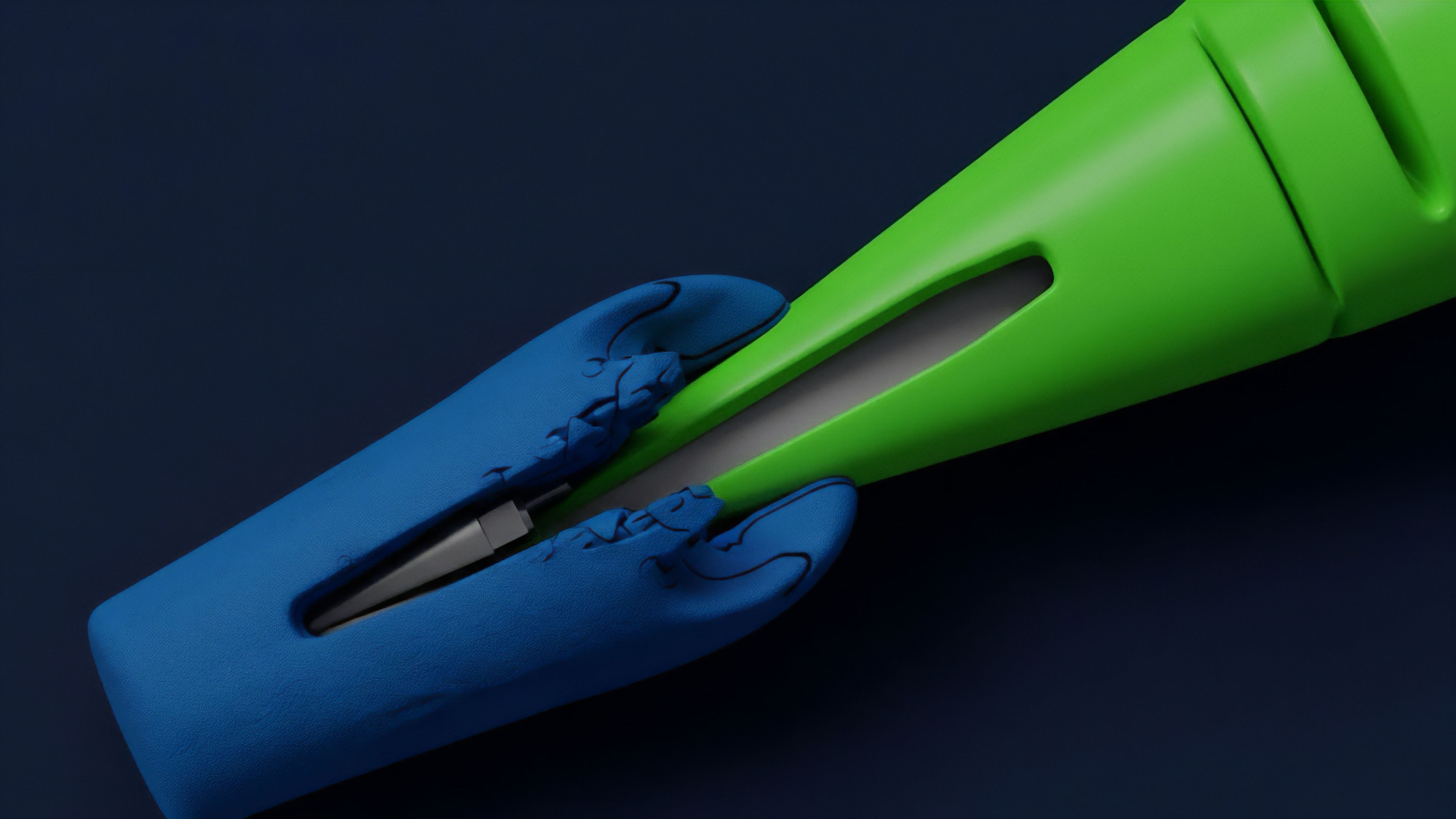

The future of financial data integrity in crypto options will likely center on two critical developments: the use of zero-knowledge proofs for data verification and the expansion of data sources beyond traditional financial venues. The current system relies on a consensus of data providers; the next step is to cryptographically prove data accuracy without revealing the underlying data itself.

Zero-Knowledge Proofs for Data Verification

Zero-knowledge proofs (ZKPs) offer a pathway to verify the integrity of data feeds without relying on a network of external validators. A ZKP allows a data provider to prove that a specific price feed (e.g., a VWAP calculation) was derived correctly from a set of off-chain data sources, without revealing the specific data points or sources. This shifts the burden of trust from the data providers themselves to a mathematical proof. The proof attests to the correctness of the computation, so the inputs themselves still need to be authenticated at the source, for example through signatures from the originating venues.

The protocol can then verify the integrity of the data stream on-chain with high confidence, reducing reliance on economic incentives alone. This approach enhances privacy and reduces the attack surface by making it difficult for malicious actors to reverse-engineer the oracle’s logic.

Decentralized Physical Infrastructure Networks (DePIN)

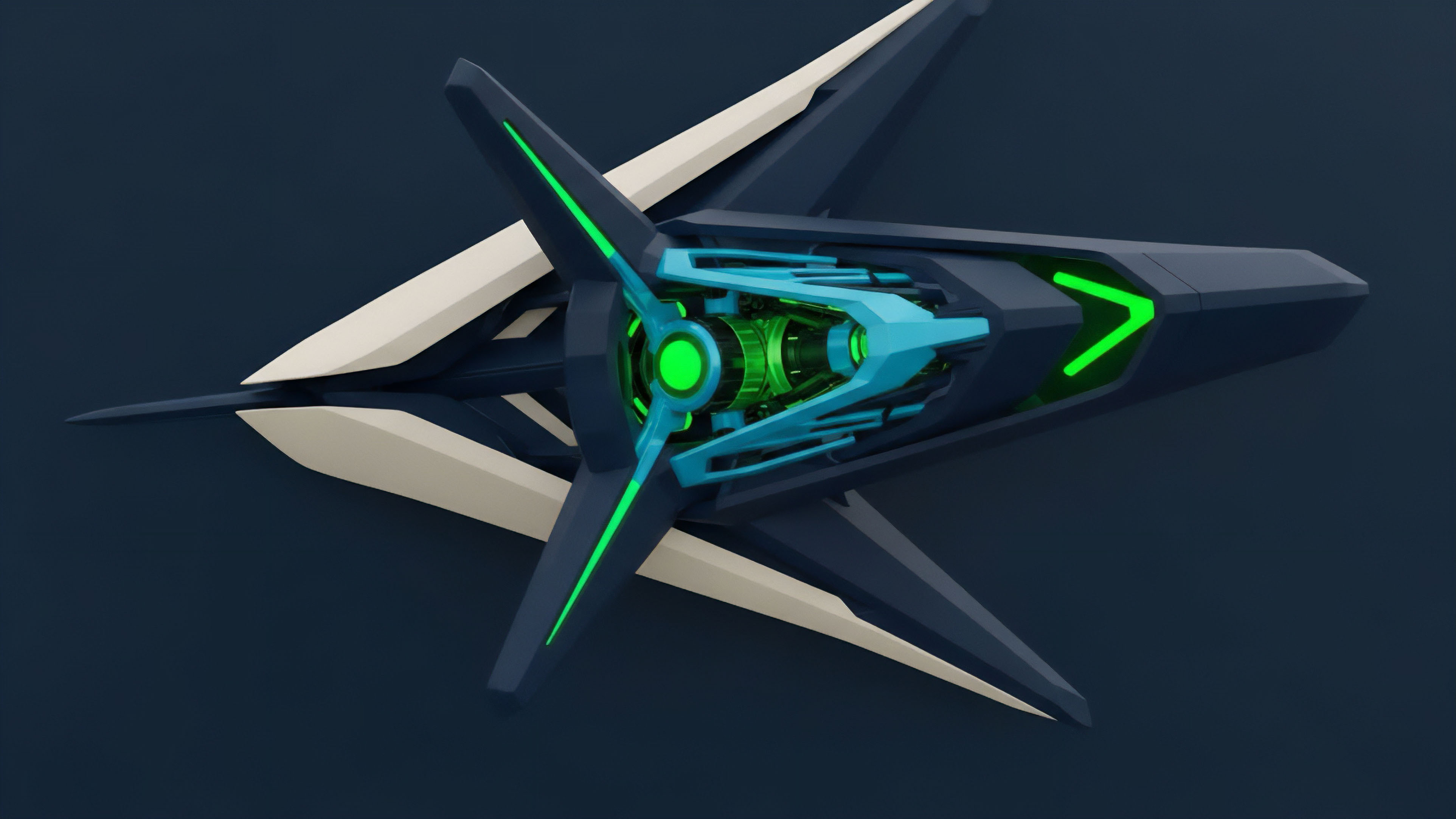

The expansion of data integrity beyond traditional exchanges will be driven by DePIN projects. These networks aim to create decentralized, verifiable data sources from physical infrastructure. While early DePIN projects focus on environmental data or location services, the next iteration will likely extend to financial data.

This could involve decentralized networks of hardware devices that verify market data in real-time, providing a censorship-resistant and tamper-proof source of information. The combination of ZKPs and DePIN could create a truly autonomous data layer, removing the need for external data providers entirely.

The ultimate goal is to create a data integrity layer that is not only robust against manipulation but also completely permissionless and verifiable. The current model of relying on a select group of staked data providers, while effective, still introduces a layer of centralization. The future architecture aims to remove this layer by using cryptographic proofs and decentralized hardware to ensure data accuracy at the source.
