Essence

Data Provider Staking represents the foundational economic mechanism that secures the integrity of price feeds for decentralized derivatives protocols. The core challenge in building trustless financial instruments, particularly options, lies in sourcing reliable, real-time data from outside the blockchain’s state. This is the “oracle problem.” Data Provider Staking solves this by aligning economic incentives: providers lock up collateral, typically in the protocol’s native token or a stablecoin, as a guarantee of data accuracy.

If a data provider submits erroneous or malicious data that causes harm to the protocol or its users (for instance, by triggering incorrect liquidations or enabling price manipulation), that provider’s staked collateral is subject to slashing.

The system transforms the trust assumption from reliance on a centralized entity’s reputation to a verifiable financial commitment. For options markets, this data integrity is non-negotiable. An option’s value is derived directly from the underlying asset’s price, and its settlement depends on a precise price at expiration.

Without a robust, economically secured data feed, the entire options pricing model collapses, leaving participants vulnerable to manipulation and front-running.

Data Provider Staking secures decentralized derivatives by transforming trust in data feeds from reputation-based to collateral-based.

The design of the staking mechanism must account for the specific vulnerabilities of options trading. Unlike spot markets, derivatives protocols carry embedded leverage, so even a small data manipulation can trigger large, cascading liquidations.

Therefore, the collateral required for staking must be large enough to deter profitable manipulation, a calculation often tied to the potential value at risk within the protocol’s liquidity pools.

Origin

The concept of Data Provider Staking emerged directly from the failures and vulnerabilities observed in early decentralized finance (DeFi) protocols. Early attempts at building decentralized options relied on simplistic, uncollateralized oracles, often drawing data from single exchanges or small sets of unverified nodes. This design created a significant attack vector known as the “oracle attack.” An attacker could manipulate the price on a small-volume exchange, feed that manipulated price to the options protocol’s oracle, and then profit from incorrect liquidations or settlements.

The protocol had no recourse against the data provider, as there was no economic disincentive for malicious behavior.

The transition to collateralized data provision was a necessary architectural evolution. The initial iterations of staking were rudimentary, often simply requiring a large token stake without sophisticated slashing logic. Over time, protocols added explicit slashing conditions and aggregation across multiple staked providers.

The key shift was recognizing that data provision for derivatives requires a different level of security than for simple spot swaps. The high-leverage nature of options necessitates a corresponding increase in the cost of attack, which staking directly addresses. This evolution was heavily influenced by game theory principles, specifically focusing on creating a system where the cost of attacking the oracle exceeds the potential profit from the attack.

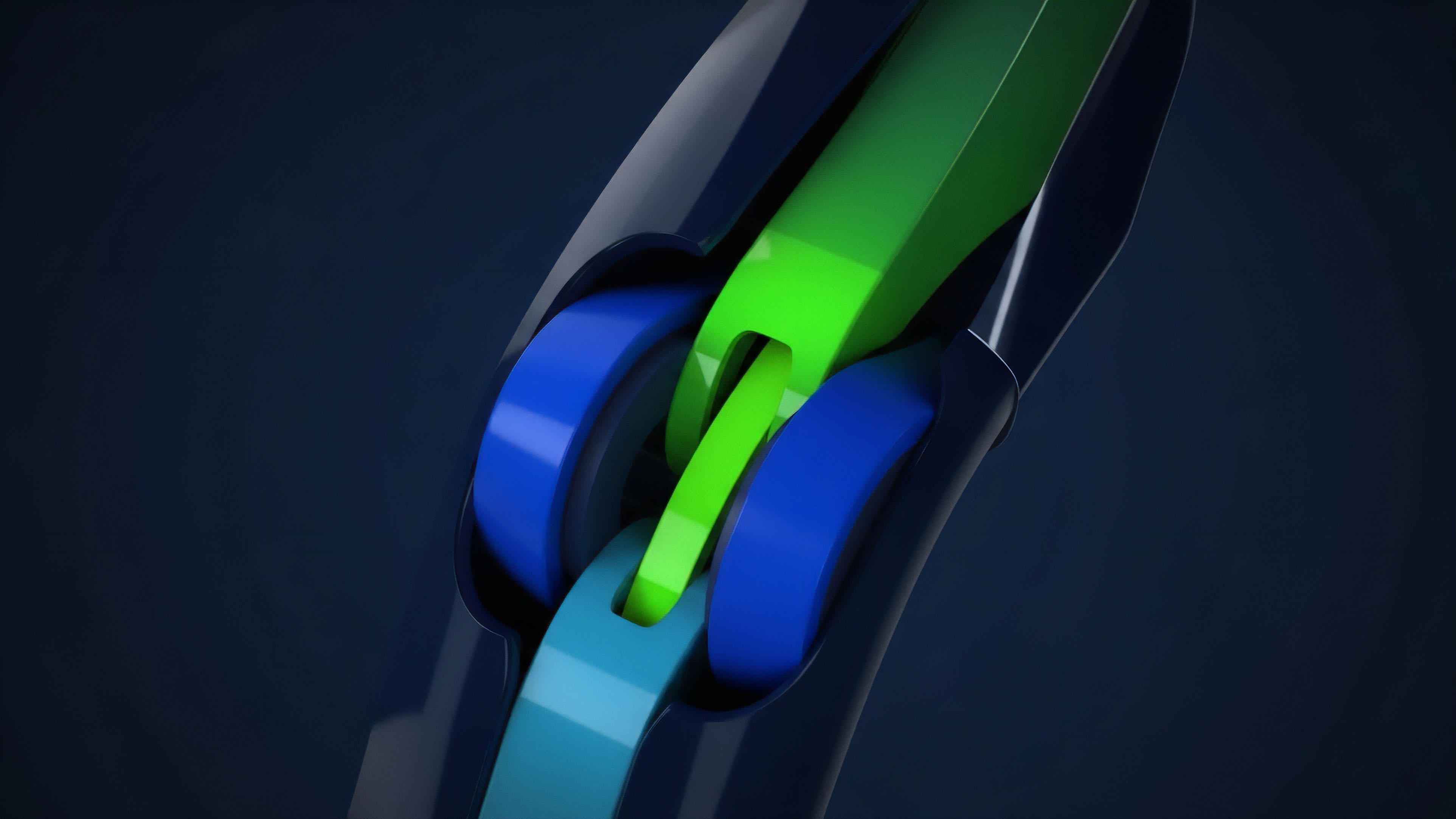

The development of staking also involved a transition from single-source oracles to aggregated oracles. The initial models were fragile, relying on one source of truth. The new generation of data staking involves multiple providers, each staking collateral, and an aggregation mechanism that filters out outliers and malicious inputs.

This redundancy increases the system’s resilience and distributes the risk across multiple independent actors.

Theory

The theoretical foundation of Data Provider Staking rests on a specific application of game theory, where participants are assumed to be rational economic actors seeking to maximize profit. The system’s security relies on ensuring that the expected cost of providing incorrect data (the slashing penalty) significantly outweighs the expected profit from doing so.
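The deterrence condition can be written down as a small calculation. The sketch below is illustrative only: the function names, the single `detection_probability` parameter, and the `safety_margin` default are assumptions introduced here, and it treats the attacker as risk-neutral with a one-shot payoff.

```python
def expected_attack_payoff(attack_profit: float, slashable_stake: float,
                           detection_probability: float) -> float:
    """Expected payoff of misreporting for a risk-neutral provider.

    Assumption: the attacker keeps the profit either way and loses the
    slashable stake only if the misreport is detected.
    """
    if not 0.0 <= detection_probability <= 1.0:
        raise ValueError("detection_probability must be in [0, 1]")
    return attack_profit - detection_probability * slashable_stake


def minimum_deterrent_stake(attack_profit: float, detection_probability: float,
                            safety_margin: float = 1.5) -> float:
    """Smallest stake that makes the expected payoff negative, with a margin.

    safety_margin > 1 is a hypothetical buffer so that the penalty
    "significantly outweighs" the profit rather than merely matching it.
    """
    if not 0.0 < detection_probability <= 1.0:
        raise ValueError("detection_probability must be in (0, 1]")
    return safety_margin * attack_profit / detection_probability
```

Under these assumptions, a stake below `attack_profit / detection_probability` leaves the attack profitable in expectation, which is why imperfect detection pushes the required collateral up.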

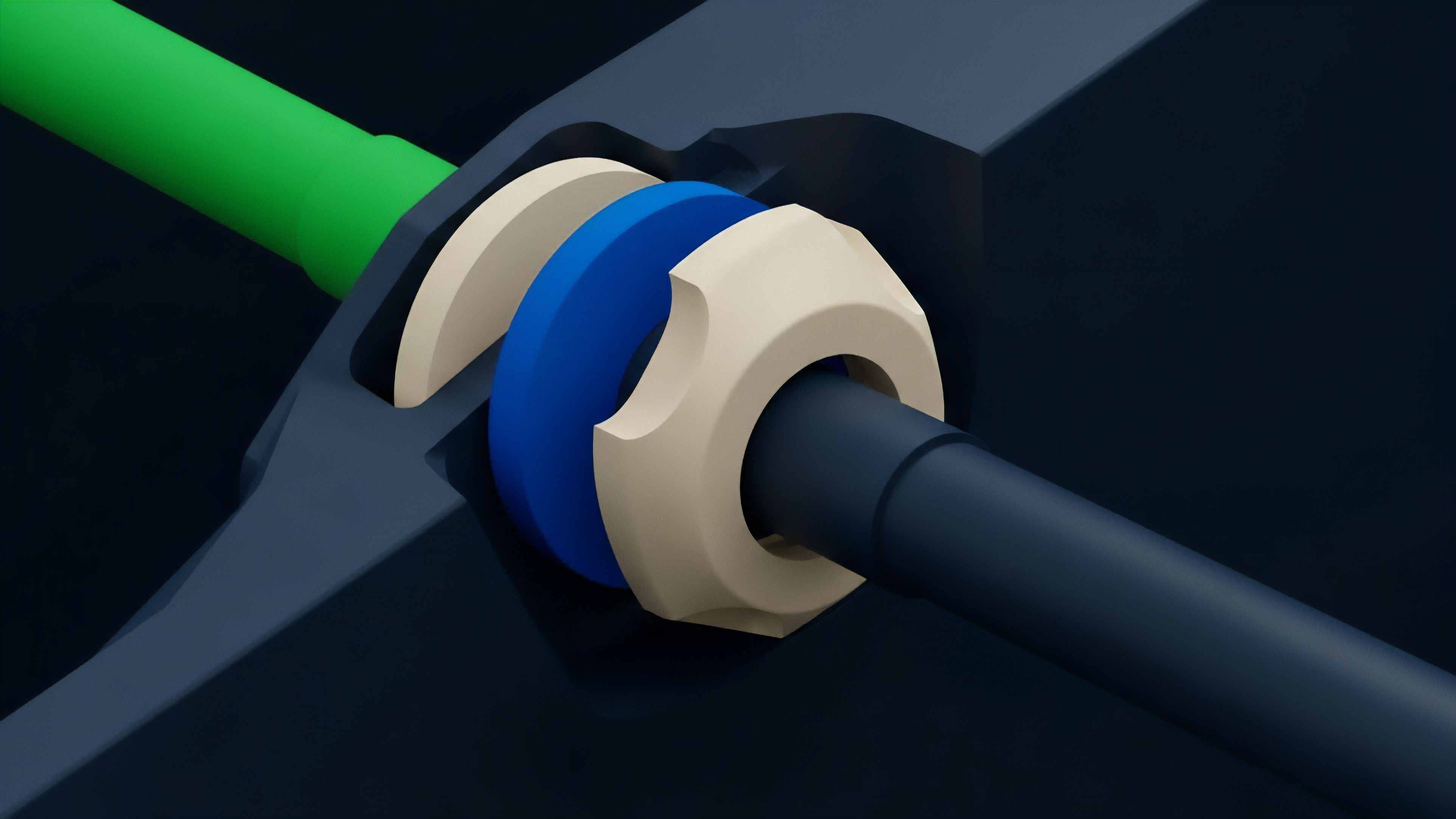

Slashing Mechanics and Collateralization Ratio

The core mechanism is slashing. If a data provider submits data that deviates significantly from the aggregated median or a predetermined threshold, a portion of their staked collateral is removed. The protocol’s design must determine the optimal collateralization ratio.

This ratio is a function of several variables:

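- Value at Risk (VaR) in Protocol: Used here to mean the maximum potential profit an attacker could gain from manipulating the data feed, rather than the statistical loss quantile the term denotes in traditional risk management.

- Collateral Size: The total amount staked by data providers. The collateral that can actually be slashed from the providers an attacker would need to corrupt must be greater than the VaR to deter a large-scale attack.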

- Slashing Threshold: The specific deviation percentage that triggers a slashing event. A tighter threshold increases security but also risks penalizing providers for natural market volatility or latency differences.

This creates a complex feedback loop. As the value locked in the derivatives protocol increases, the required collateral for data providers must also increase to maintain the same level of security. If the collateral requirements are too low, the system becomes vulnerable to large-scale manipulation.

If they are too high, it creates a barrier to entry for new data providers, potentially leading to centralization.
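The deviation-based slashing rule described in this section can be sketched as follows. This is a minimal illustration, not any specific protocol’s logic: the function name, the 2% `threshold`, and the 10% `slash_fraction` are hypothetical values chosen for the example.

```python
from statistics import median


def slash_outliers(submissions: dict[str, float], stakes: dict[str, float],
                   threshold: float = 0.02, slash_fraction: float = 0.10):
    """Return the median reference price and the penalty owed by each outlier.

    A provider is penalized when its relative deviation from the median
    exceeds `threshold`; the penalty is `slash_fraction` of its stake.
    Both parameters are illustrative assumptions.
    """
    if not submissions:
        raise ValueError("at least one submission is required")
    reference = median(submissions.values())
    if reference <= 0:
        raise ValueError("reference price must be positive")

    penalties = {}
    for provider, price in submissions.items():
        deviation = abs(price - reference) / reference
        if deviation > threshold:
            penalties[provider] = stakes[provider] * slash_fraction
    return reference, penalties
```

Lowering `threshold` catches smaller manipulations but, as noted above, also starts to penalize honest providers whose quotes differ because of latency or ordinary volatility.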

Volatility Oracles and Pricing Models

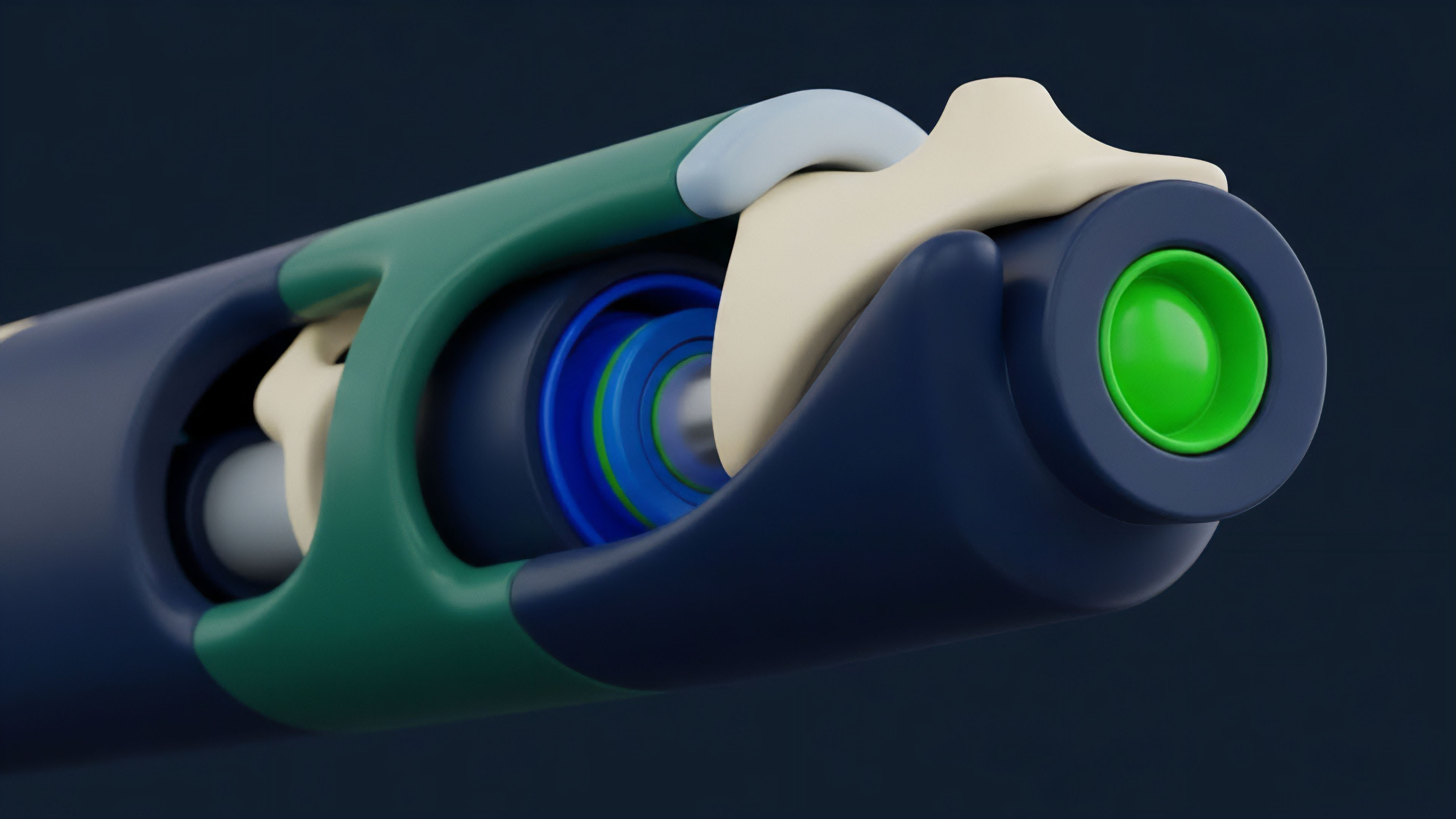

For options, the relevant data extends beyond a simple spot price. The pricing of options relies heavily on implied volatility (IV), which is often represented as a volatility surface across different strike prices and expirations. Traditional oracles only provide spot prices.

Data Provider Staking for options protocols must extend to providing accurate implied volatility data. This presents a unique challenge, as implied volatility is not a directly observable price but a quantity backed out of option market prices through a pricing model (e.g., Black-Scholes or extensions such as jump-diffusion models).

The core challenge in options data staking is providing a verifiable volatility surface, not just a spot price, requiring complex consensus mechanisms to validate calculations rather than simple data points.

The game theory changes when validating volatility. A provider cannot simply check a single exchange price. Instead, they must run a complex calculation on market data, and the consensus mechanism must validate the calculation itself, not just the result.

This requires a higher level of technical sophistication and greater trust assumptions regarding the pricing model used by the oracle network.
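To make the difference from a spot feed concrete, the sketch below backs an implied volatility out of a quoted option price. It assumes a European call with no dividends, a flat interest rate, and the Black-Scholes model; the function names and bisection bounds are choices made for this example, and a real oracle network may use a different model entirely.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot: float, strike: float, t: float,
                  rate: float, vol: float) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price: float, spot: float, strike: float, t: float, rate: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Invert bs_call_price for volatility by bisection.

    Works because the call price increases monotonically with volatility.
    """
    if not bs_call_price(spot, strike, t, rate, lo) <= price <= bs_call_price(spot, strike, t, rate, hi):
        raise ValueError("price is outside the range reachable on [lo, hi]")
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, t, rate, mid) > price:
            hi = mid  # model price too high, so volatility is lower
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)
```

Two providers who observe the same option price but assume a different `rate` or time to expiry will report different implied volatilities, which is why consensus has to cover the model inputs and not only the final number.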

| Oracle Type | Data Provided | Risk Profile | Slashing Complexity |
|---|---|---|---|
| Spot Price Oracle | Single asset price (e.g. ETH/USD) | Lower, less susceptible to model risk | Simple deviation check against median |
| Volatility Oracle | Implied Volatility Surface | Higher, susceptible to model assumptions | Complex validation of pricing model inputs |

Approach

The implementation of Data Provider Staking in modern decentralized options protocols requires a multi-layered approach to ensure data integrity and capital efficiency. The design must balance the need for high-frequency updates with the cost of on-chain computation and data submission.

Staking and Reward Structure

Data providers stake collateral and receive rewards for correctly submitting data. The reward structure is designed to incentivize participation and compensate for the risk of slashing. Rewards are typically generated from protocol fees or a specific inflation schedule.

The penalty for incorrect data submission, or a failure to submit data (liveness failure), is the slashing of staked collateral. This creates a continuous feedback loop where providers are economically incentivized to maintain high-quality data feeds.
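The reward and penalty rules for a single update round can be sketched as below. The `Provider` class, the `settle_round` function, and every numeric default are hypothetical; in particular, penalizing a liveness failure more lightly than an inaccurate submission is an assumption of this example, not something the mechanism requires.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Provider:
    stake: float
    rewards: float = 0.0


def settle_round(provider: Provider, submitted: Optional[float], reference: float,
                 reward: float = 10.0, tolerance: float = 0.01,
                 accuracy_slash: float = 0.10, liveness_slash: float = 0.01) -> str:
    """Apply one round's outcome to a provider and return what happened."""
    if submitted is None:
        # Liveness failure: nothing was submitted for this round.
        provider.stake -= provider.stake * liveness_slash
        return "liveness_slash"
    if abs(submitted - reference) / reference > tolerance:
        # Submission deviates too far from the agreed reference value.
        provider.stake -= provider.stake * accuracy_slash
        return "accuracy_slash"
    provider.rewards += reward
    return "rewarded"
```

Keeping rewards separate from the stake, as done here, means a provider’s slashable collateral only ever shrinks through penalties; whether rewards compound into the stake is a design choice left open.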

Data Aggregation and Validation

Most sophisticated options protocols do not rely on a single data provider. Instead, they use a network of providers, often with a rotating selection or a weighted average system. This aggregation process is critical for filtering out bad data.

The protocol design must define the specific aggregation logic:

- Median Aggregation: The most common method, where the protocol takes the median value of all submitted data points. This filters out outliers and malicious inputs without requiring complex calculations, provided that fewer than half of the submissions are corrupted.

- Weighted Average: Data providers with larger stakes or higher historical accuracy may have their data weighted more heavily in the final calculation. This rewards consistent, high-quality performance.

- Time-Weighted Average Price (TWAP): For high-frequency options, a TWAP calculation may be used to smooth out sudden, short-term price spikes that could be caused by manipulation, at the cost of lagging behind genuine price moves.
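The three aggregation rules listed above can be compared side by side. This is a minimal sketch with hypothetical function names; the TWAP variant assumes each observed price holds until the next timestamp, which is one of several possible conventions.

```python
from statistics import median


def median_price(prices: list[float]) -> float:
    """Median of all submitted prices."""
    return median(prices)


def stake_weighted_average(prices: list[float], stakes: list[float]) -> float:
    """Average of prices weighted by each provider's stake."""
    total = sum(stakes)
    if len(prices) != len(stakes) or total <= 0:
        raise ValueError("need one positive-total stake per price")
    return sum(p * s for p, s in zip(prices, stakes)) / total


def twap(observations: list[tuple[float, float]]) -> float:
    """Time-weighted average over (timestamp, price) pairs sorted by time.

    Each price is weighted by how long it stayed in effect, so the last
    observation only marks the end of the window.
    """
    if len(observations) < 2:
        raise ValueError("need at least two observations")
    weighted = 0.0
    for (t0, p0), (t1, _) in zip(observations, observations[1:]):
        weighted += p0 * (t1 - t0)
    return weighted / (observations[-1][0] - observations[0][0])
```

With submissions of 1998, 2000, 2001, 2002 and 2500, the median stays at 2001 while any average is pulled toward the outlier, which illustrates why the median is the usual default.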

Data Provider Selection and Governance

The selection process for data providers can vary significantly. Some protocols allow permissionless staking, where anyone can become a data provider by locking collateral. Others use a permissioned model, where a governance vote selects specific providers based on reputation or technical capability.

The permissioned model reduces the risk of malicious actors entering the network but increases centralization risk. The permissionless model is more resilient against collusion but requires higher collateralization ratios to deter large-scale attacks.

Evolution

The evolution of Data Provider Staking for options protocols reflects a shift from simple price feeds to sophisticated risk parameter inputs. Early protocols primarily focused on securing the spot price of the underlying asset for settlement purposes. The next generation of protocols recognized that implied volatility (IV) is a more critical input for accurate options pricing and risk management.

Volatility Surface Oracles

The primary evolution in data provision for derivatives is the emergence of volatility surface oracles. These oracles do not just report a single price; they provide a matrix of implied volatilities across various strike prices and expiration dates. This data is essential for calculating Greeks (Delta, Gamma, Vega, Theta), which are critical for market makers to manage their risk exposures.

Staking for these oracles is significantly more complex, as it requires consensus on a calculation rather than a simple price point.
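One simple way to reach agreement on a surface is to aggregate it cell by cell. The sketch below is an assumption-laden illustration rather than a description of any deployed oracle: the `Surface` layout, keyed by (strike, days to expiry), and both function names are hypothetical, and it only compares reported results without validating the calculation behind them.

```python
from statistics import median

# (strike, days_to_expiry) -> implied volatility
Surface = dict[tuple[float, int], float]


def aggregate_surface(surfaces: dict[str, Surface]) -> Surface:
    """Per-cell median across providers, over cells that every provider reported."""
    if not surfaces:
        raise ValueError("at least one surface is required")
    common_cells = set.intersection(*(set(s) for s in surfaces.values()))
    return {
        cell: median(s[cell] for s in surfaces.values())
        for cell in sorted(common_cells)
    }


def max_deviation(surface: Surface, reference: Surface) -> float:
    """Largest absolute gap between a provider's surface and the reference."""
    return max(abs(surface[cell] - vol) for cell, vol in reference.items())
```

A slashing rule could then compare `max_deviation` against a tolerance, though a single tolerance for every strike and expiry is a simplification, since illiquid corners of the surface are naturally noisier.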

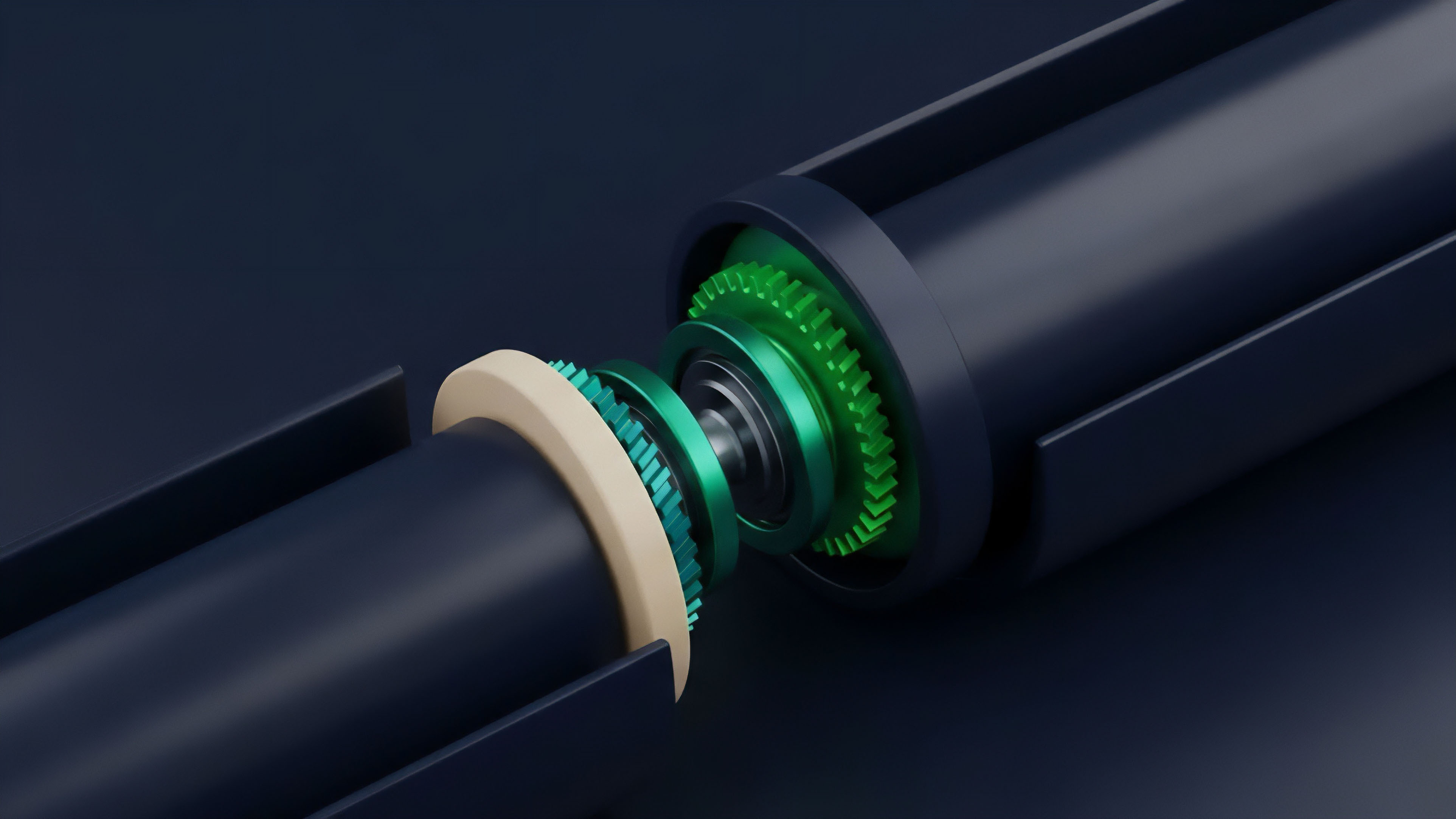

Data Provider Collateralization and Liquidity Provision

A further evolution involves the integration of data provision with liquidity provision. Some protocols are experimenting with models where liquidity providers are also required to stake collateral, or where the data provider’s collateral is directly linked to the liquidity pool. This creates a stronger alignment between the data provider and the protocol’s overall health.

It also allows for more dynamic adjustments to collateral requirements based on market conditions. For instance, during periods of high volatility, the collateral required for data providers may increase to reflect the higher risk of manipulation.
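A volatility-linked collateral requirement could look like the following. The linear scaling rule, the `sensitivity` parameter, and the `max_multiplier` cap are all assumptions made for this sketch; the text above does not prescribe a particular formula.

```python
def required_collateral(base_collateral: float, current_vol: float,
                        baseline_vol: float, sensitivity: float = 1.0,
                        max_multiplier: float = 3.0) -> float:
    """Scale the collateral requirement up when volatility exceeds a baseline.

    The requirement never drops below base_collateral and is capped at
    max_multiplier times the base, so it cannot grow without bound.
    """
    if baseline_vol <= 0:
        raise ValueError("baseline_vol must be positive")
    excess = max(0.0, current_vol / baseline_vol - 1.0)
    multiplier = min(max_multiplier, 1.0 + sensitivity * excess)
    return base_collateral * multiplier
```

The cap is a deliberate design choice in this sketch: without it, a volatility spike could raise requirements faster than providers can top up, forcing them out exactly when the feed is most needed.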

| Evolutionary Stage | Data Focus | Security Mechanism | Risk Mitigation |
|---|---|---|---|
| Stage 1 (Early DeFi) | Spot Price | Single source/uncollateralized | Low, susceptible to manipulation |
| Stage 2 (Current) | Spot Price & IV | Collateralized staking & aggregation | Moderate, relies on high collateralization |
| Stage 3 (Future) | Dynamic Risk Parameters | Dynamic collateral & integrated liquidity | High, adaptive to market conditions |

Horizon

Looking ahead, Data Provider Staking will likely evolve beyond simple price data and become an integral component of a fully automated risk management system. The next iteration of decentralized derivatives protocols will demand more sophisticated data feeds that include not only implied volatility but also inputs for advanced models, such as jump diffusion or stochastic volatility models.

The Challenge of Data Sovereignty

A significant challenge on the horizon is the issue of data sovereignty and intellectual property. As protocols begin to rely on complex, proprietary data feeds (such as those providing institutional-grade volatility surfaces), the data providers gain significant leverage. The question arises: who owns the data, and how is its integrity maintained if the data source itself is proprietary?

Staking mechanisms will need to evolve to account for this. We may see a future where data providers stake collateral not just on data accuracy, but on the intellectual property rights and availability of their data feeds, creating a new layer of financial and legal risk for protocols.

Dynamic Staking and Risk Contagion

The future of Data Provider Staking will likely involve dynamic collateral requirements that adjust automatically based on real-time market conditions. A sudden increase in implied volatility, for example, could automatically increase the required collateral for data providers to ensure security against potential manipulation. This dynamic adjustment creates a more resilient system, but it also introduces new risks.

If a large number of protocols rely on the same data providers, a slashing event in one protocol could trigger a cascade effect, causing data providers to become undercollateralized across multiple systems. This creates a systemic risk of contagion that must be carefully managed in future designs.
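The contagion path can be illustrated with a toy calculation. It assumes, hypothetically, that one provider backs several protocols with a single shared stake and that each protocol enforces its own minimum; the function and protocol names are invented for the example.

```python
def undercollateralized_after_slash(shared_stake: float, slash_amount: float,
                                    requirements: dict[str, float]) -> list[str]:
    """Protocols whose minimum is no longer met after one slashing event.

    Assumption: the same stake counts toward every protocol's requirement,
    so a slash triggered by one protocol reduces coverage for all of them.
    """
    remaining = max(0.0, shared_stake - slash_amount)
    return sorted(
        name for name, required in requirements.items() if remaining < required
    )
```

For a shared stake of 1,000,000 and a slash of 300,000, every protocol requiring more than 700,000 is left short at once, even though only one of them observed bad data.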

The ultimate goal is to move beyond simply securing a price feed to securing the entire risk calculation. This means data providers may eventually stake on the output of complex calculations, such as the Value at Risk (VaR) of a protocol’s liquidity pool, rather than just raw price inputs. This shifts the burden of risk calculation from the protocol to the data provider, which could simplify the protocol itself but makes the correctness of the staked calculation the property that slashing must be able to verify.
