Essence

The functional integrity of a decentralized options protocol rests entirely on the quality and reliability of its data inputs. Data Integrity Standards are the protocols and mechanisms that ensure a smart contract receives accurate, timely, and manipulation-resistant information. In traditional finance, data integrity is underpinned by centralized exchanges and regulatory bodies that enforce specific reporting requirements.

The transition to a decentralized architecture necessitates a fundamental shift in trust, moving from institutional oversight to cryptographic verification and economic incentives. The core challenge is bridging the gap between the off-chain reality of market prices and the on-chain, deterministic environment of a smart contract. Without robust standards for data integrity, a derivatives market cannot function reliably; it becomes susceptible to manipulation, leading to incorrect option pricing, inaccurate collateral valuation, and potentially catastrophic liquidations.

The specific data requirements for options are far more complex than for spot markets or simple lending protocols. An options contract requires not only the spot price of the underlying asset for collateral and settlement calculations but also a reliable measure of its expected future volatility. This data is essential for accurate pricing models and risk management.

The integrity of these inputs determines whether a protocol can accurately calculate the Greeks, the risk sensitivities that describe how an option’s value responds to changes in its inputs. A failure in data integrity creates systemic risk, as automated liquidations and settlement calculations will be based on faulty premises, leading to a cascade of failures across interconnected protocols.

Data integrity in decentralized options is the foundation upon which accurate pricing, reliable risk management, and systemic stability are built.

Origin

The necessity for stringent data integrity standards emerged from the earliest systemic failures in decentralized finance. The initial wave of DeFi protocols often relied on simplistic oracle designs, frequently pulling price data from a single, centralized exchange or an on-chain automated market maker (AMM). This created a critical vulnerability: the single-point-of-failure oracle problem.

A market participant could exploit this weakness by executing a large, manipulative trade on the source exchange, causing a temporary price spike that the oracle would report to the smart contract. This led to flash loan attacks in which attackers borrowed large sums, manipulated the oracle price, exploited the protocol at the distorted price, and repaid the loan within the same transaction, often resulting in massive losses for the protocol and its users. The development of options protocols introduced a new layer of complexity to this challenge.

While lending protocols primarily needed a reliable spot price for collateral valuation, options required a measure of implied volatility (IV). Early protocols struggled with how to source and calculate this volatility data on-chain. The Black-Scholes model, the bedrock of options pricing, requires five inputs: strike price, time to expiration, risk-free rate, underlying price, and volatility.
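The five inputs listed above can be made concrete with a minimal sketch of the Black-Scholes formula for European options. The function names and the example values are illustrative assumptions, not taken from any particular protocol, and the sketch ignores dividends and funding costs.

```python
import math

def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def black_scholes_price(spot, strike, t_years, rate, vol, is_call=True):
    """Black-Scholes price of a European option (no dividends).

    spot, strike: prices in the same currency
    t_years: time to expiration in years
    rate: continuously compounded risk-free rate
    vol: annualized volatility (0.8 means 80%)
    """
    if t_years <= 0 or vol <= 0:
        # Degenerate case: fall back to intrinsic value.
        intrinsic = spot - strike if is_call else strike - spot
        return max(intrinsic, 0.0)
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discount = math.exp(-rate * t_years)
    if is_call:
        return spot * norm_cdf(d1) - strike * discount * norm_cdf(d2)
    return strike * discount * norm_cdf(-d2) - spot * norm_cdf(-d1)

# Same contract priced with two different volatility inputs (hypothetical values).
print(black_scholes_price(2000.0, 2200.0, 30 / 365, 0.03, 0.60))
print(black_scholes_price(2000.0, 2200.0, 30 / 365, 0.03, 0.90))
```

Four of the five inputs are either contract terms or comparatively easy to source. Volatility is the only one that must be inferred from market data, which is why a bad volatility feed translates directly into a bad premium.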

Sourcing a reliable volatility input, which itself is derived from market data, proved to be a significant hurdle. The lack of a robust, decentralized volatility oracle meant early options protocols either had to rely on highly centralized inputs or create simplified, less accurate models, severely limiting their capital efficiency and market depth. The market’s reaction to these early exploits demonstrated that data integrity was not an abstract goal, but an existential requirement for decentralized derivatives.

Theory

The theoretical framework for data integrity in crypto options rests on a blend of quantitative finance principles and protocol physics. From a financial perspective, the primary requirement is the accurate calculation of the Greeks (specifically Delta, Gamma, Theta, and Vega), which are fundamental to risk management. These sensitivities are highly dependent on accurate, real-time data inputs, particularly the underlying asset price and the implied volatility surface.

The integrity challenge is twofold: ensuring the accuracy of the underlying price and accurately modeling the volatility dynamics.
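To illustrate how the Greeks depend on the price and volatility inputs, here is a minimal sketch using the closed-form Black-Scholes sensitivities for a European call. The function name `call_greeks` and the sample numbers are assumptions made for illustration. Theta is expressed per year and Vega per unit of volatility (1.0 = 100 volatility points).

```python
import math

def _pdf(x: float) -> float:
    """Standard normal probability density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def _cdf(x: float) -> float:
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def call_greeks(spot, strike, t_years, rate, vol):
    """Closed-form Black-Scholes Greeks for a European call (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return {
        "delta": _cdf(d1),                                   # sensitivity to spot
        "gamma": _pdf(d1) / (spot * vol * sqrt_t),           # sensitivity of delta to spot
        "theta": (-spot * _pdf(d1) * vol / (2.0 * sqrt_t)    # time decay, per year
                  - rate * strike * math.exp(-rate * t_years) * _cdf(d2)),
        "vega": spot * _pdf(d1) * sqrt_t,                    # sensitivity to volatility
    }

# Compare the Greeks under a correct and a distorted volatility input (hypothetical).
for vol in (0.60, 0.90):
    print(vol, call_greeks(2000.0, 2200.0, 30 / 365, 0.03, vol))
```

Every entry in the returned dictionary is a function of both `spot` and `vol`, so an error in either feed propagates into all four sensitivities at once.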

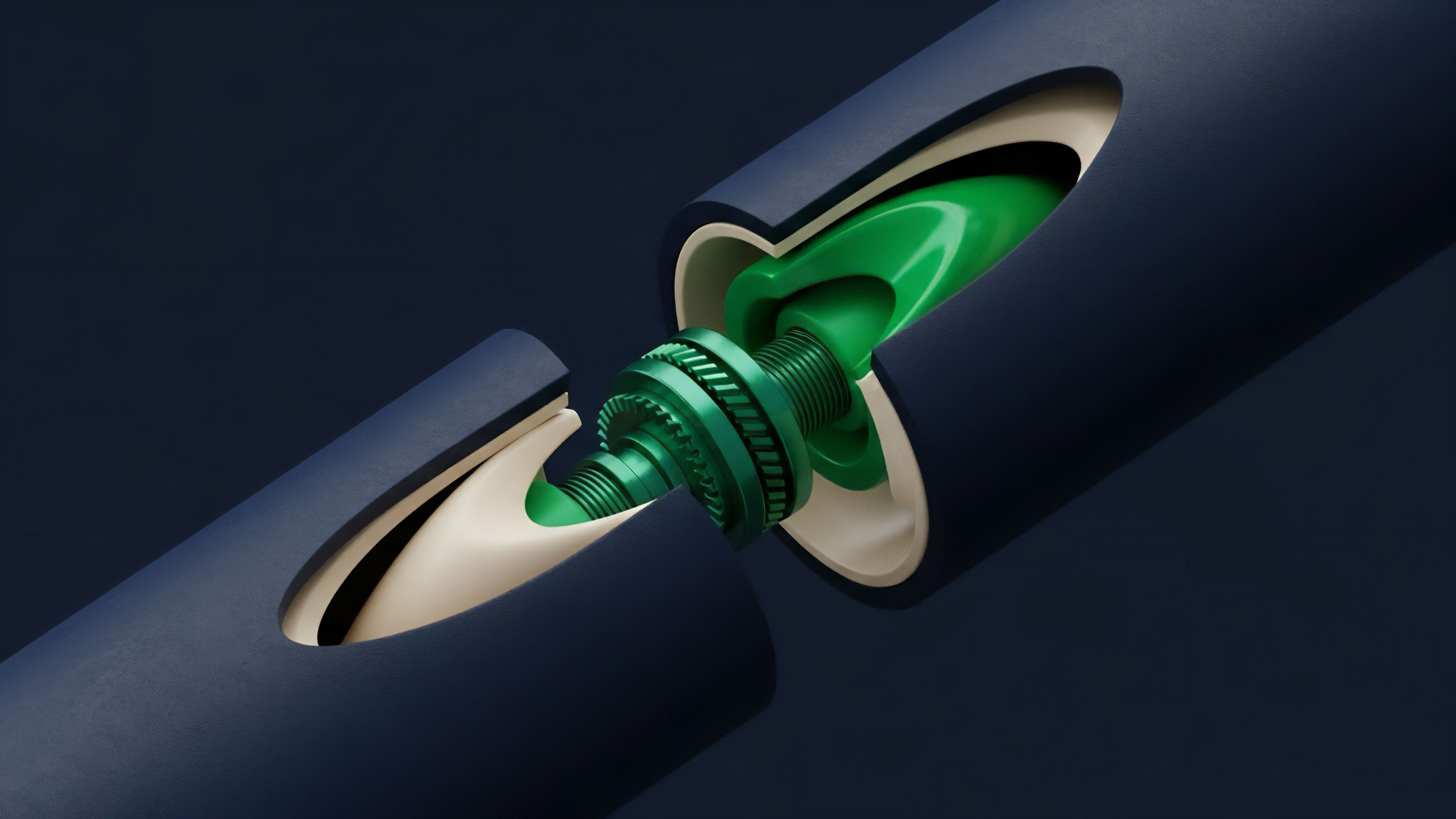

Oracle Aggregation and Manipulation Resistance

The most common solution for price data integrity is the use of decentralized oracle networks (DONs). These networks aggregate data from multiple independent sources to prevent manipulation of a single data feed. The theoretical strength of this approach lies in the cost of attack: to manipulate the aggregated price, an attacker must successfully manipulate a majority of the data sources simultaneously.

For liquid assets quoted on many venues, this raises the cost of an attack substantially, often beyond its expected profit. Current approaches to data aggregation include:

- Time-Weighted Average Price (TWAP): This method calculates the average price over a specific time window, smoothing out instantaneous spikes caused by flash loan attacks or temporary market anomalies. TWAP provides stability but introduces latency, which can be problematic for high-frequency trading and rapid liquidations.

- Volume-Weighted Average Price (VWAP): VWAP calculates the average price weighted by trading volume. Because small trades carry little weight, an attacker must trade substantial volume at distorted prices to move the average, which makes VWAP a closer reflection of the price at which liquidity actually changed hands.

- Median Aggregation: Taking the median price from multiple sources ensures that outliers, whether from manipulation or technical errors, have a minimal impact on the final reported price.
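The three aggregation methods above can be sketched in a few lines. This is an off-chain illustration under simplifying assumptions, not the implementation of any specific oracle network. The function names and the sample data are hypothetical.

```python
from statistics import median

def twap(samples, end_time):
    """Time-weighted average price.

    samples: list of (timestamp, price), sorted by timestamp. Each price is
    assumed to hold until the next sample (or until end_time for the last one).
    """
    total = 0.0
    next_times = [t for t, _ in samples[1:]] + [end_time]
    for (t0, price), t1 in zip(samples, next_times):
        total += price * (t1 - t0)
    return total / (end_time - samples[0][0])

def vwap(trades):
    """Volume-weighted average price over a list of (price, volume) trades."""
    total_volume = sum(volume for _, volume in trades)
    return sum(price * volume for price, volume in trades) / total_volume

def median_price(source_prices):
    """Median of the prices reported by independent sources."""
    return median(source_prices)

# A 60-second window in which the price spikes to 150 for the final 2 seconds.
print(twap([(0, 100.0), (58, 150.0)], end_time=60))

# The manipulated trade accounts for only 10% of the volume.
print(vwap([(100.0, 90.0), (150.0, 10.0)]))

# One of five sources reports a manipulated price.
print(median_price([100.1, 99.9, 100.0, 150.0, 100.2]))
```

In each case the distorted 150 print moves the aggregate far less than it would move a single last-trade feed. The median ignores it entirely as long as fewer than half of the sources are compromised.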

Volatility Surface Modeling

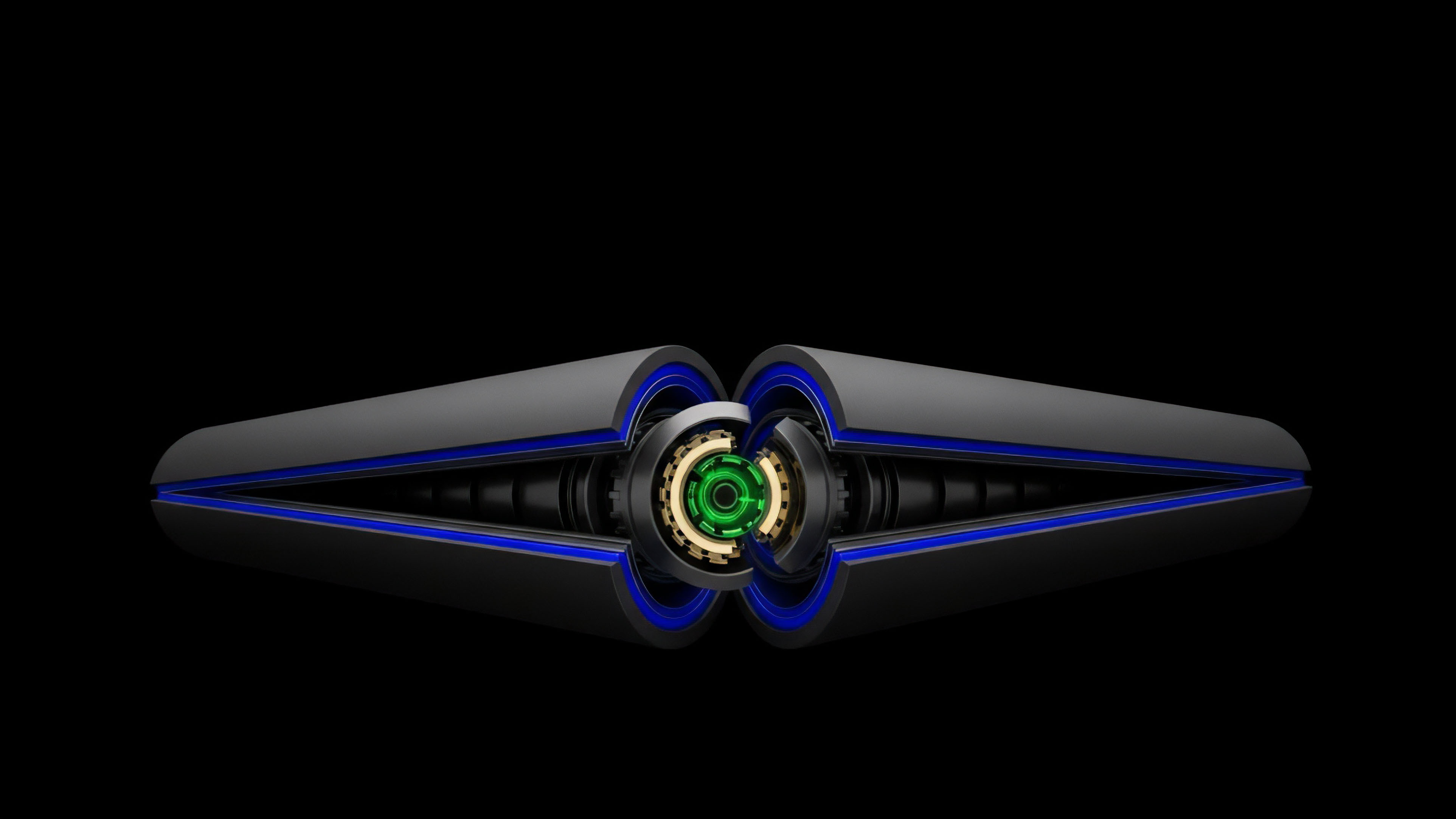

A more advanced challenge is creating a data integrity standard for implied volatility. The volatility surface is a three-dimensional plot that represents the implied volatility of options across different strike prices and maturities. In traditional markets, this surface is derived from liquid options exchanges.

In decentralized markets, creating this surface requires either a high degree of on-chain liquidity or a complex oracle solution that pulls data from centralized sources. A protocol’s ability to accurately price options and manage risk depends directly on the integrity of its volatility surface data. A mispriced volatility input can lead to options being sold at incorrect premiums, creating arbitrage opportunities that drain protocol liquidity.
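As a rough illustration of what "a surface across strikes and maturities" means in data terms, the sketch below stores implied volatilities on a small grid and interpolates between grid points. The grid values are invented for the example, and bilinear interpolation is chosen only for brevity. Production systems typically use parameterizations designed to avoid arbitrage between neighboring points.

```python
from bisect import bisect_right

def _bracket(grid, x):
    """Return (i, w) such that x lies between grid[i] and grid[i + 1].

    w is the linear weight of grid[i + 1]. x is clamped to the grid range,
    so the surface is held flat outside the quoted strikes and maturities.
    """
    x = min(max(x, grid[0]), grid[-1])
    i = min(bisect_right(grid, x) - 1, len(grid) - 2)
    w = (x - grid[i]) / (grid[i + 1] - grid[i])
    return i, w

def interpolate_vol(strikes, maturities, vols, strike, maturity):
    """Bilinear interpolation on a volatility grid.

    vols[j][i] is the implied volatility for maturities[j] and strikes[i].
    Both axes must be sorted ascending and contain at least two points.
    """
    i, wk = _bracket(strikes, strike)
    j, wt = _bracket(maturities, maturity)
    near = (1 - wk) * vols[j][i] + wk * vols[j][i + 1]
    far = (1 - wk) * vols[j + 1][i] + wk * vols[j + 1][i + 1]
    return (1 - wt) * near + wt * far

# Hypothetical surface: three strikes, two maturities (in years).
strikes = [90.0, 100.0, 110.0]
maturities = [0.25, 0.5]
vols = [
    [0.70, 0.60, 0.65],  # 3-month implied vols by strike
    [0.66, 0.58, 0.62],  # 6-month implied vols by strike
]
print(interpolate_vol(strikes, maturities, vols, strike=95.0, maturity=0.375))
```

A single corrupted grid point affects every option priced from the neighboring cells, which is why the integrity of the whole surface matters and not just that of individual quotes.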

Approach

The implementation of data integrity standards varies significantly across different decentralized options protocols. The choice of architecture represents a trade-off between security, capital efficiency, and decentralization.

Off-Chain Computation with On-Chain Verification

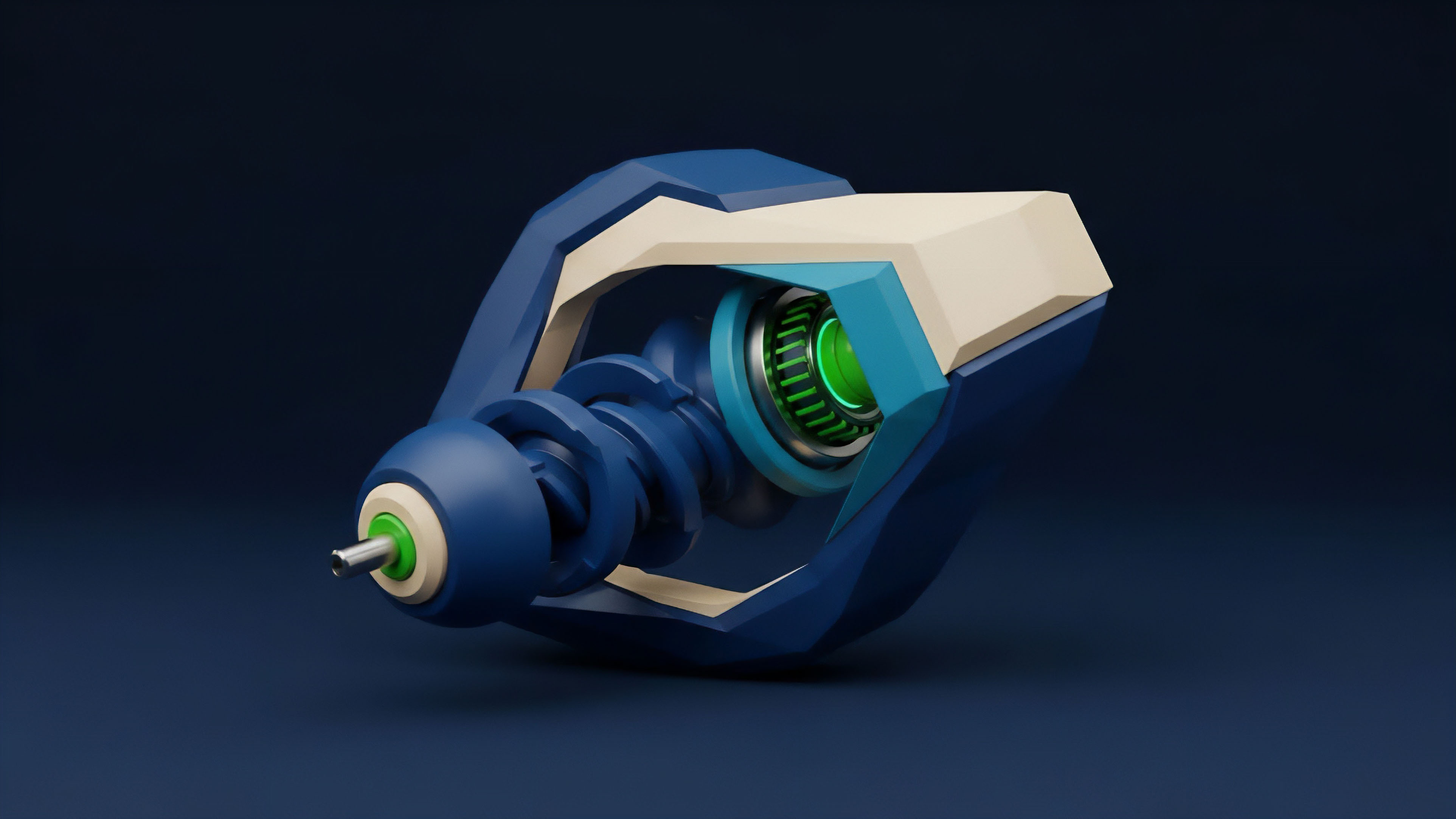

Some protocols adopt a hybrid approach where complex calculations, such as options pricing and volatility surface generation, are performed off-chain by specialized services. The resulting data is then submitted to the smart contract for verification. This method reduces on-chain gas costs and latency.

However, it requires a robust verification mechanism to ensure the integrity of the off-chain calculation. The data integrity standard here relies on cryptographic proofs and economic incentives for verifiers. If the off-chain data is incorrect, the parties that attested to it are penalized, and honest participants are rewarded.
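One way to picture this incentive scheme is a simple challenge game, simulated below in plain Python. Everything here is a hypothetical simplification: the names (`Report`, `settle_challenge`), the stake accounting, and the use of a SHA-256 hash as the commitment to the inputs are assumptions for illustration, and the "heavy" off-chain computation is replaced by a plain average.

```python
import hashlib
import json
from dataclasses import dataclass

def commitment(inputs: dict) -> str:
    """Hash of the input data, standing in for an on-chain commitment."""
    encoded = json.dumps(inputs, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()

def compute_offchain(inputs: dict) -> float:
    """Stand-in for an expensive off-chain calculation (here: a mean price)."""
    prices = inputs["prices"]
    return sum(prices) / len(prices)

@dataclass
class Report:
    reporter: str      # party that posted the value
    input_hash: str    # commitment to the inputs the value was computed from
    value: float       # result submitted to the contract

def settle_challenge(report, inputs, stakes, challenger, tolerance=1e-9, penalty=10.0):
    """Recompute the result from the committed inputs and adjust stakes.

    Returns True if the report stands. If it does not, part of the
    reporter's stake is transferred to the challenger.
    """
    if commitment(inputs) != report.input_hash:
        raise ValueError("inputs do not match the committed hash")
    honest = abs(compute_offchain(inputs) - report.value) <= tolerance
    if not honest:
        slashed = min(penalty, stakes[report.reporter])
        stakes[report.reporter] -= slashed
        stakes[challenger] = stakes.get(challenger, 0.0) + slashed
    return honest

inputs = {"prices": [100.0, 102.0]}
stakes = {"reporter": 50.0}
bad_report = Report("reporter", commitment(inputs), value=120.0)
print(settle_challenge(bad_report, inputs, stakes, challenger="watcher"))
print(stakes)
```

The design choice worth noting is that the contract never needs to run the expensive calculation itself unless a challenge is raised. Honest behavior is enforced by making a false report cost more than it can earn.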

Decentralized Volatility Oracles

A significant development in options data integrity is the creation of decentralized volatility oracles. These systems are designed to calculate implied volatility from on-chain market data, often from automated market makers (AMMs) specifically designed for options. By deriving volatility from the actual trading activity within the protocol, these systems remove the dependency on external, centralized sources.

This approach creates a closed feedback loop where the protocol’s data integrity is self-contained.
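Deriving volatility from trading activity amounts to inverting a pricing model: given the premium at which an option actually traded, find the volatility that reproduces it. The sketch below does this for a European call with Black-Scholes and bisection. It is an off-chain illustration with hypothetical names and values. An on-chain implementation would need fixed-point arithmetic and tighter iteration limits.

```python
import math

def call_price(spot, strike, t_years, rate, vol):
    """Black-Scholes price of a European call (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    cdf = lambda x: 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))
    return spot * cdf(d1) - strike * math.exp(-rate * t_years) * cdf(d2)

def implied_vol(market_price, spot, strike, t_years, rate,
                lo=1e-4, hi=5.0, tol=1e-8, max_iter=200):
    """Solve call_price(vol) == market_price for vol by bisection.

    Relies on the call price being increasing in volatility. Raises
    ValueError if the observed price is outside the range that the
    volatility bounds [lo, hi] can produce.
    """
    price_lo = call_price(spot, strike, t_years, rate, lo)
    price_hi = call_price(spot, strike, t_years, rate, hi)
    if not price_lo <= market_price <= price_hi:
        raise ValueError("market price is not attainable within the volatility bounds")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if call_price(spot, strike, t_years, rate, mid) < market_price:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

# Round trip: price an option at 80% volatility, then recover that volatility.
premium = call_price(2000.0, 2200.0, 30 / 365, 0.03, 0.80)
print(implied_vol(premium, 2000.0, 2200.0, 30 / 365, 0.03))
```

Because the recovered volatility is only as trustworthy as the premium it is derived from, thinly traded pools can still be pushed around by a single large trade. The manipulation-resistance techniques used for spot prices apply to the premium input as well.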

| Data Integrity Component | Traditional Finance Approach | Decentralized Finance Approach |
|---|---|---|
| Spot Price Source | Centralized exchanges (CEXs), regulated price feeds (e.g. Bloomberg) | Decentralized oracle networks (DONs) aggregating multiple CEX and DEX data points |
| Volatility Data Source | Implied volatility surface from CBOE or other major options exchanges | On-chain calculation from options AMM liquidity or external data feeds from DONs |
| Data Verification | Regulatory audits, counterparty risk checks | Cryptographic proofs, economic incentives for honest reporting, consensus mechanisms |

Settlement Mechanisms

The final point of data integrity for an options contract is settlement. The settlement price must be accurate and resistant to manipulation. For American-style options, which can be exercised at any time up to expiration, the integrity of the real-time price feed is critical.

For European-style options, which settle at expiration, a robust Time-Weighted Average Price (TWAP) mechanism over a short window before expiration is often used. This prevents last-second manipulation of the settlement price.
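The effect of a pre-expiration averaging window on settlement can be shown with a small sketch. It assumes evenly spaced price samples, so an equal-weighted mean over the window approximates a TWAP. The 30-minute window, the sample spacing, and all prices are hypothetical.

```python
def settlement_price(samples, expiry, window):
    """Average of the price samples inside [expiry - window, expiry].

    samples: list of (timestamp, price). With evenly spaced samples, the
    equal-weighted mean approximates a time-weighted average price.
    """
    in_window = [price for t, price in samples if expiry - window <= t <= expiry]
    if not in_window:
        raise ValueError("no price samples inside the settlement window")
    return sum(in_window) / len(in_window)

def call_payoff(settle, strike):
    """Payoff of a European call at expiration."""
    return max(settle - strike, 0.0)

# One sample per minute for 30 minutes at 2000, then a manipulated
# final print of 2300 exactly at expiry (t = 1800 seconds).
samples = [(t, 2000.0) for t in range(0, 1800, 60)] + [(1800, 2300.0)]
strike = 2100.0

last_tick = samples[-1][1]
averaged = settlement_price(samples, expiry=1800, window=1800)

print(call_payoff(last_tick, strike))  # settling on the last print alone
print(call_payoff(averaged, strike))   # settling on the window average
```

With last-tick settlement the manipulated print pushes the call in the money. With the averaged price, the single outlier barely moves the settlement value, so the attacker would have to sustain the distorted price for most of the window.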

Evolution

The evolution of data integrity standards for crypto options reflects a continuous effort to improve robustness and reduce latency.

Early solutions were rudimentary, relying on simple price feeds that were vulnerable to manipulation. The first major step forward involved the transition to decentralized oracle networks that aggregate data from multiple sources. This significantly raised the cost of attack.

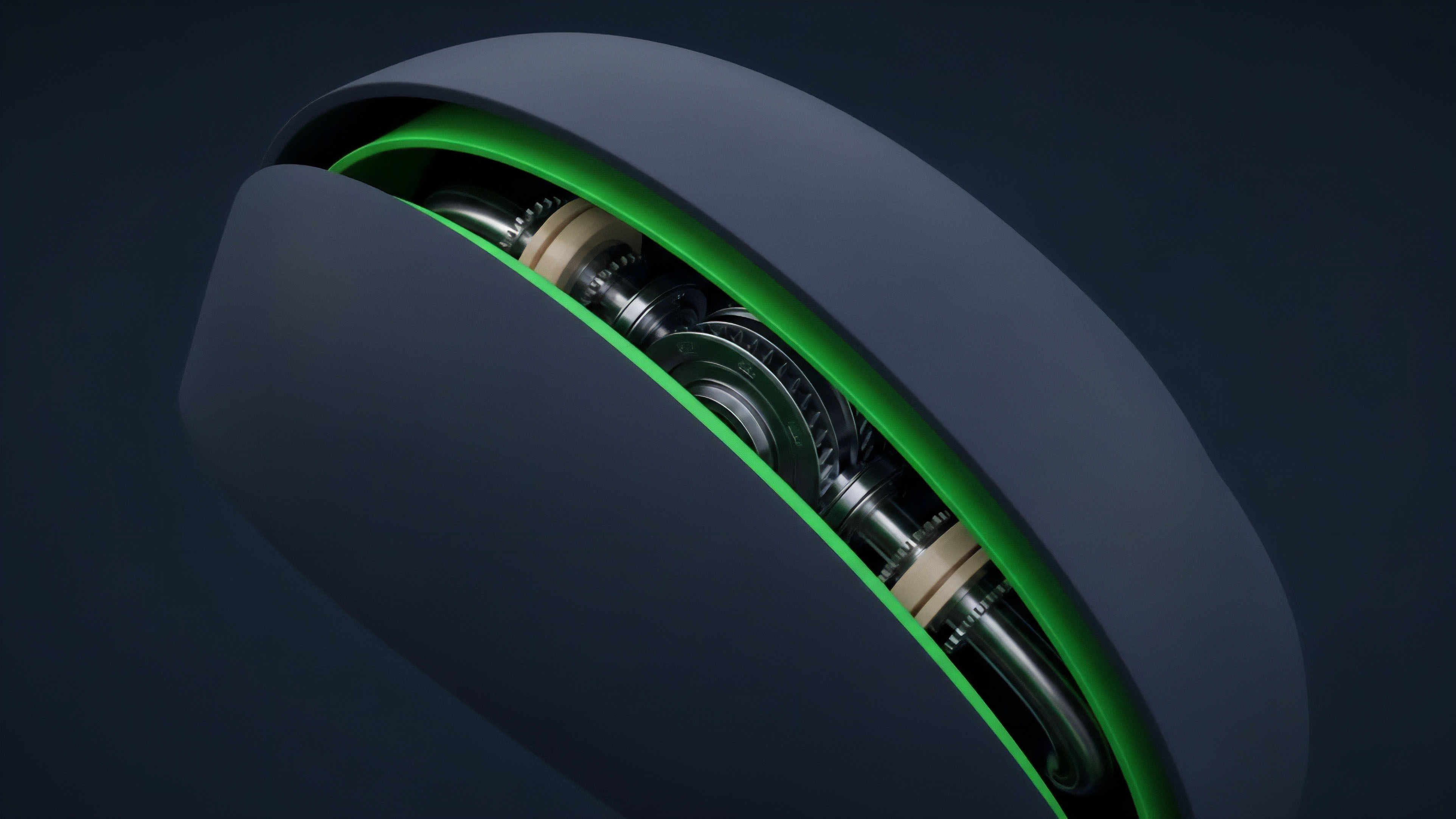

The current generation of protocols is focused on solving the more complex problem of volatility data integrity. We have moved from a simple reliance on a single, static volatility input to dynamic models that attempt to replicate the volatility skew. The volatility skew represents the difference in implied volatility between options with different strike prices.

Accurately modeling this skew is essential for protocols that offer a range of options products. The current trajectory is toward fully on-chain data integrity. Protocols are moving away from external data dependencies where possible, building options AMMs that derive volatility from their own liquidity pools.

This creates a more robust system where the protocol’s internal data reflects its own market dynamics. The shift also involves the use of layer-2 scaling solutions to process data faster and more cost-effectively. This reduces the latency between off-chain events and on-chain contract execution, minimizing the window for data manipulation.

The progression of data integrity standards demonstrates a shift from simply protecting against price manipulation to accurately modeling complex market dynamics like volatility skew.

Horizon

Looking ahead, the next phase of data integrity standards will be defined by two key areas: zero-knowledge (ZK) proofs and regulatory compliance. ZK proofs offer a pathway to verify the integrity of data without revealing the data itself. A ZK oracle could verify that a price feed was generated correctly according to a specific algorithm and source data, without ever exposing the raw inputs on-chain. This provides a new level of data privacy and integrity.

Furthermore, regulatory bodies are beginning to scrutinize decentralized derivatives markets. The demand for auditable and verifiable data sources will increase significantly. Future data integrity standards will need to account for this regulatory pressure by providing clear data provenance and verifiable audit trails. The challenge will be to reconcile the need for decentralization with the requirement for regulatory oversight.

The development of decentralized volatility surfaces (DVS) represents a critical horizon. A DVS would provide a robust, on-chain representation of market-implied volatility, removing the need for external data sources entirely. This would complete the shift toward fully autonomous, self-contained options protocols. The successful implementation of a DVS requires a deep, liquid options AMM and sophisticated mathematical models to interpolate volatility across different strikes and maturities. The integrity of the options market hinges on the ability to achieve this level of data self-sufficiency.
