Essence

Cryptographic Proof Efficiency defines the computational economy of validating state transitions without re-executing the underlying logic. It represents the mathematical ratio between the resources consumed by a prover to construct a validity statement and the resources required by a verifier to confirm its accuracy. Within decentralized finance, this efficiency dictates the throughput limits of zero-knowledge circuits and the cost structure of on-chain settlement.

Verification cost determines the economic viability of trustless state transitions.

High Cryptographic Proof Efficiency allows many transactions to be batched into a single succinct proof. The cost of verifying that proof on the base layer is largely fixed, so batching amortizes it across every transaction in the batch. This is a primary driver of lower per-transaction settlement costs. Without high efficiency, the gas fees required to verify proofs on a base layer could exceed the value of the transactions themselves, rendering decentralized derivatives infeasible for retail participants.
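The amortization argument can be made concrete with a minimal sketch. It assumes that verification cost is fixed per proof and that each transaction still pays its own marginal data cost. The gas figures are hypothetical placeholders, not measurements of any network.

```python
def per_tx_settlement_gas(verify_gas: float, per_tx_data_gas: float, batch_size: int) -> float:
    """Amortized base-layer gas per transaction when one proof covers a whole batch.

    verify_gas:      fixed cost of verifying one proof on the base layer (hypothetical)
    per_tx_data_gas: marginal cost each transaction still pays, e.g. for its posted data (hypothetical)
    batch_size:      number of transactions covered by the proof
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return verify_gas / batch_size + per_tx_data_gas


# Illustrative numbers only, not measurements of any real network.
for n in (1, 100, 10_000):
    print(f"batch of {n:>6}: {per_tx_settlement_gas(300_000, 200, n):>10.1f} gas per tx")
```

As the batch grows, the fixed verification term shrinks and the per-transaction cost approaches the marginal data cost.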

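- Succinctness: The property that proof size and verification time grow at most polylogarithmically in the size of the computation. Both therefore stay small even for very large circuits.

- Zero Knowledge: The ability to prove validity without revealing anything about the underlying transaction data beyond the truth of the statement.

- Soundness: The guarantee that a malicious prover cannot convince the verifier of a false statement, except with negligible probability.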
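Many proof systems reduce soundness to a polynomial identity check. By the (univariate) Schwartz-Zippel lemma, two distinct polynomials of degree at most d over a field of size p agree on at most d points. Comparing them at one uniformly random point therefore wrongly accepts with probability at most d/p.

The sketch below assumes the Mersenne prime 2^61 - 1 as an illustrative field modulus. The helper names are hypothetical.

```python
import random

P = 2**61 - 1  # illustrative prime field modulus (assumption)


def evaluate(coeffs: list[int], x: int, p: int = P) -> int:
    """Evaluate a polynomial with low-to-high coefficients at x, mod p (Horner's rule)."""
    acc = 0
    for c in reversed(coeffs):
        acc = (acc * x + c) % p
    return acc


def probably_equal(f: list[int], g: list[int], rng: random.Random, p: int = P) -> bool:
    """Accept iff f and g agree at one uniformly random field point."""
    r = rng.randrange(p)
    return evaluate(f, r, p) == evaluate(g, r, p)


def soundness_error_bound(degree: int, p: int = P) -> float:
    """Distinct polynomials of degree <= d agree on at most d of the p field points."""
    return degree / p


rng = random.Random(0)
f = [1, 0, 1]  # x^2 + 1
g = [2, 0, 1]  # x^2 + 2, differs from f by a nonzero constant
print(probably_equal(f, f, rng), probably_equal(f, g, rng))  # True False
print(soundness_error_bound(2**20))
```

Repeating the check with independent points multiplies the error bounds, which is one way systems drive the soundness error down to negligible levels.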

Origin

The requirement for Cryptographic Proof Efficiency surfaced during the early development of privacy-preserving protocols and layer-two scaling solutions. Initial implementations of non-interactive proofs required circuit-specific trusted setups and significant proving time, limiting their utility to simple transfers. As the demand for complex smart contract execution grew, the industry shifted toward more efficient proving systems that could handle thousands of constraints per second.

Computational overhead in proof generation creates a latency floor for high-frequency settlement.

Interactive protocols were converted into non-interactive versions, commonly via the Fiat-Shamir heuristic, which replaces the verifier's random challenges with hashes of the proof transcript. This transition enabled asynchronous verification. Asynchrony is a requirement for blockchain consensus, because any node must be able to check a proof without interacting with the prover.

Early research into Probabilistically Checkable Proofs provided the theoretical basis for current efficiency gains. These early models demonstrated that a verifier only needs to examine a small portion of a proof to achieve high confidence in its validity. That principle remains central to modern efficiency optimizations.
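The spot-checking principle can be illustrated with a simplified model. Suppose a fraction ε of a false proof's positions would fail a local consistency check. Each uniformly random query then lands on a failing position with probability ε, and k independent queries all miss with probability (1 - ε)^k.

This is a sketch under that assumption; real PCP and IOP verifiers rely on more refined analyses. The function names are hypothetical.

```python
def escape_probability(corrupted_fraction: float, queries: int) -> float:
    """Chance that `queries` independent uniform spot checks all miss the failing positions."""
    return (1.0 - corrupted_fraction) ** queries


def queries_needed(corrupted_fraction: float, target: float) -> int:
    """Smallest number of queries that drives the escape probability to <= target."""
    if not 0.0 < corrupted_fraction <= 1.0:
        raise ValueError("corrupted_fraction must be in (0, 1]")
    if target <= 0.0:
        raise ValueError("target must be positive")
    k, p = 0, 1.0
    while p > target:
        p *= 1.0 - corrupted_fraction
        k += 1
    return k


# If 10% of positions are inconsistent, a few hundred queries give 2^-40 confidence.
print(queries_needed(0.1, 2**-40))
```

The number of queries grows with log(1/target) and is independent of the total proof length. That independence is what lets verification stay cheap as proofs grow.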

Theory

The mathematical architecture of Cryptographic Proof Efficiency relies on the asymptotic behavior of prover and verifier functions.

We define efficiency through the lens of computational complexity, specifically targeting sub-linear verification time. Let n be the number of gates (constraints) in a circuit. An efficient system seeks verification time that is either constant, O(1), or polylogarithmic in n, such as O(log n) or O(log² n).

| Proof System | Prover Complexity | Verifier Complexity | Proof Size |
|---|---|---|---|
| Groth16 | O(n log n) | O(1) | Constant |
| STARKs | O(n log n) | O(log² n) | Polylogarithmic, O(log² n) |
| Bulletproofs | O(n) | O(n) | Logarithmic |
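To see what the verifier column means at scale, the sketch below evaluates each leading term for circuits of n = 2^10 and n = 2^20 gates. It sets every hidden constant to 1, which is an assumption. A pairing check, a hash, and a group operation have very different real costs, so the numbers indicate growth rates rather than timings.

```python
import math


def verifier_work(n: int) -> dict[str, float]:
    """Leading-order verifier terms from the table, with every constant factor set to 1."""
    log_n = math.log2(n)
    return {
        "Groth16": 1.0,            # O(1)
        "STARKs": log_n ** 2,      # O(log^2 n)
        "Bulletproofs": float(n),  # O(n)
    }


for n in (2**10, 2**20):
    print(n, verifier_work(n))
```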

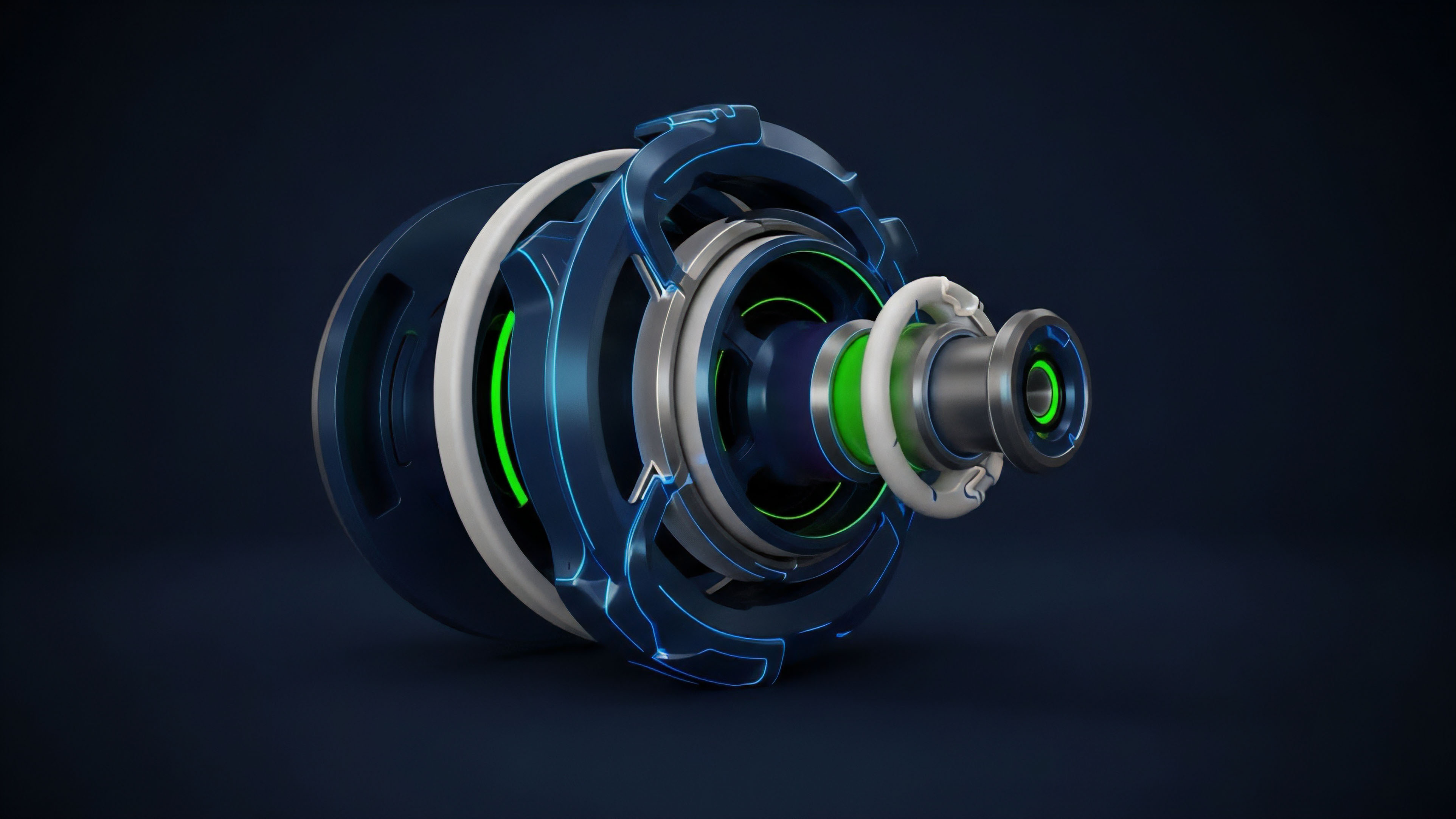

Polynomial commitment schemes serve as the engine for this efficiency. Provers represent state transitions as high-degree polynomials and then commit to them compactly. They do so either with Reed-Solomon codes plus Merkle hashing, as in STARKs, or with elliptic curve pairings, as in KZG commitments.

At the physical extreme, the Landauer principle sets a lower bound of kT ln 2 of energy per irreversibly erased bit. Proof generation, like any irreversible computation, therefore carries an irreducible thermodynamic cost, although practical provers operate far above this bound.

This connection between information theory and physical reality highlights the boundary of what can be computed within a single block time.
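The Reed-Solomon route mentioned above, Reed-Solomon encoding followed by a Merkle commitment, can be sketched in a few lines. The key property is distance. Two distinct messages of length k become polynomials of degree below k, which agree on at most k - 1 points, so their length-n codewords differ in at least n - k + 1 positions.

This is a toy under stated assumptions. The field is the small prime 97, and the evaluation points are simply the first n integers. Real systems evaluate over a multiplicative subgroup of a much larger field and add a low-degree test such as FRI, which is not shown. The function names are hypothetical.

```python
import hashlib

P = 97  # toy prime field (assumption); real systems use far larger fields


def rs_encode(message: list[int], blowup: int = 4, p: int = P) -> list[int]:
    """Reed-Solomon encode: read `message` as polynomial coefficients (low to high)
    and evaluate it at the points 0, 1, ..., blowup * len(message) - 1 modulo p."""
    n = blowup * len(message)
    if n > p:
        raise ValueError("evaluation domain must fit inside the field")
    codeword = []
    for x in range(n):
        acc = 0
        for c in reversed(message):  # Horner's rule
            acc = (acc * x + c) % p
        codeword.append(acc)
    return codeword


def merkle_root(leaves: list[int]) -> bytes:
    """Commit to a codeword with a SHA-256 Merkle tree (odd layers duplicate the last node)."""
    if not leaves:
        raise ValueError("cannot commit to an empty codeword")
    layer = [hashlib.sha256(v.to_bytes(8, "big")).digest() for v in leaves]
    while len(layer) > 1:
        if len(layer) % 2:
            layer.append(layer[-1])
        layer = [hashlib.sha256(layer[i] + layer[i + 1]).digest()
                 for i in range(0, len(layer), 2)]
    return layer[0]


a = rs_encode([3, 1, 4, 1])
b = rs_encode([3, 1, 4, 2])  # message differs from a's in one coefficient
distance = sum(x != y for x, y in zip(a, b))
print(len(a), distance)  # 16 codeword symbols; distance is at least 16 - 4 + 1 = 13
print(merkle_root(a).hex())
```

The 32-byte root is the compact commitment. A verifier later asks for a few opened positions with Merkle paths instead of reading the whole codeword.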

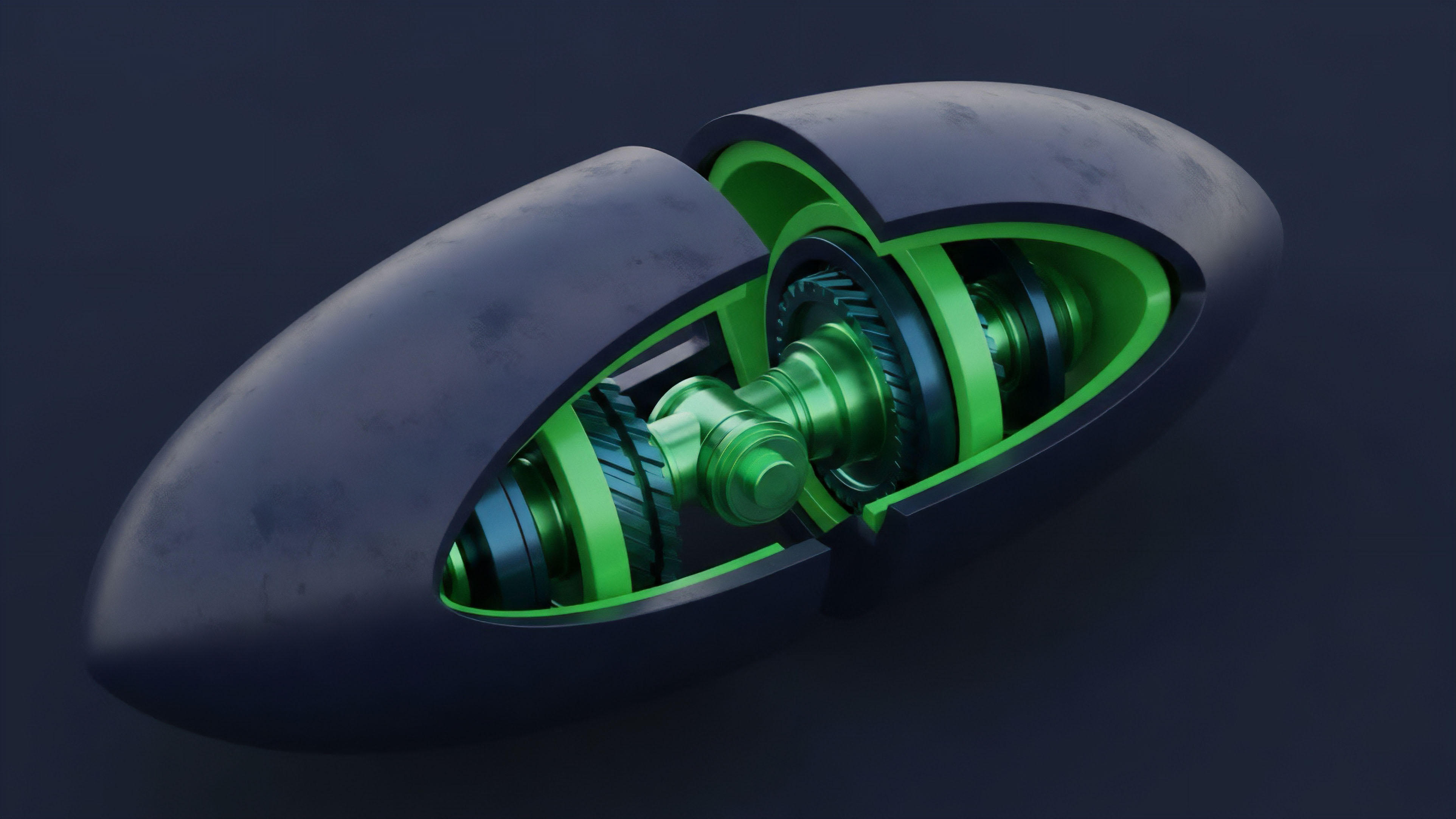

Recursive proof structures allow an arbitrarily long verification history to be compressed into a single constant-time check.

Approach

Current implementation strategies for Cryptographic Proof Efficiency focus on hardware-software co-design. Developers use Plonkish arithmetization to build flexible circuits that support custom gates beyond simple addition and multiplication. This flexibility reduces the total constraint count, directly improving the speed of proof generation.

| Optimization Layer | Primary Technique | Benefit |
|---|---|---|
| Arithmetic Logic | Custom Gates | Reduced Constraint Count |
| Hardware Layer | MSM Acceleration | Faster Prover Time |
| Commitment Layer | KZG Commitments | Smaller Proof Size |
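The custom-gate row of the table can be illustrated with the standard Plonk gate equation qL·a + qR·b + qO·c + qM·a·b + qC = 0. Computing x^5, for example an S-box in an arithmetization-friendly hash, takes three multiplication rows with standard gates. A dedicated degree-5 gate does it in one row.

This sketch checks only the per-row gate equations. The copy constraints that wire rows together are omitted, as is the commitment machinery. The custom gate is a hypothetical example.

```python
from dataclasses import dataclass

P = 2**61 - 1  # illustrative prime modulus (assumption)


@dataclass(frozen=True)
class StandardGate:
    """Selector values for one row of the vanilla Plonk gate:
    qL*a + qR*b + qO*c + qM*a*b + qC = 0 (mod P)."""
    qL: int = 0
    qR: int = 0
    qO: int = 0
    qM: int = 0
    qC: int = 0

    def holds(self, a: int, b: int, c: int) -> bool:
        return (self.qL * a + self.qR * b + self.qO * c + self.qM * a * b + self.qC) % P == 0


MUL = StandardGate(qO=-1, qM=1)  # enforces a*b - c = 0


def pow5_standard_rows(x: int) -> list[tuple[StandardGate, int, int, int]]:
    """x^5 with standard gates: three multiplication rows (x^2, x^4, x^5)."""
    x2 = x * x % P
    x4 = x2 * x2 % P
    x5 = x4 * x % P
    return [(MUL, x, x, x2), (MUL, x2, x2, x4), (MUL, x4, x, x5)]


def pow5_custom_row_holds(a: int, c: int) -> bool:
    """Hypothetical degree-5 custom gate a^5 - c = 0, checked in a single row."""
    return (pow(a, 5, P) - c) % P == 0


rows = pow5_standard_rows(3)
print(len(rows), all(g.holds(a, b, c) for g, a, b, c in rows))  # 3 True
print(pow5_custom_row_holds(3, 243))                            # True
```

The trade-off is that a higher-degree gate raises the degree of the quotient polynomial the prover must compute. Custom gates therefore pay off when they remove many rows.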

Prover networks increasingly employ specialized hardware to handle the heavy lifting of Multi-Scalar Multiplication (MSM) and Fast Fourier Transforms (FFTs). These operations are the primary bottlenecks in the proving pipeline. By offloading them to GPUs, FPGAs, or ASICs, protocols aim to reach the low latency required for real-time derivative settlement and margin calls.
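Over finite fields, the FFT in this pipeline takes the form of a number-theoretic transform (NTT). An NTT evaluates a polynomial at all n-th roots of unity in O(n log n) field operations.

A minimal recursive radix-2 sketch follows. It assumes the prime 998244353 (= 119 · 2^23 + 1, with primitive root 3) purely for illustration. Production provers use their own fields and iterative, hardware-tuned kernels. MSM is not shown.

```python
P = 998244353  # NTT-friendly prime 119 * 2^23 + 1 (illustrative assumption)
G = 3          # a primitive root modulo P


def ntt(a: list[int], invert: bool = False) -> list[int]:
    """Recursive radix-2 NTT: out[k] = sum_j a[j] * w^(j*k) mod P, w a primitive n-th root."""
    n = len(a)
    if n == 0 or n & (n - 1) or (P - 1) % n:
        raise ValueError("length must be a power of two dividing P - 1")
    if n == 1:
        return a[:]
    root = pow(G, (P - 1) // n, P)  # primitive n-th root of unity
    if invert:
        root = pow(root, P - 2, P)  # use w^-1 for the inverse transform
    even = ntt(a[0::2], invert)
    odd = ntt(a[1::2], invert)
    out = [0] * n
    w = 1
    for k in range(n // 2):
        t = w * odd[k] % P
        out[k] = (even[k] + t) % P           # A(w^k)       = E(w^2k) + w^k O(w^2k)
        out[k + n // 2] = (even[k] - t) % P  # A(w^(k+n/2)) = E(w^2k) - w^k O(w^2k)
        w = w * root % P
    return out


def intt(a: list[int]) -> list[int]:
    """Inverse NTT: transform with w^-1, then divide by n."""
    n_inv = pow(len(a), P - 2, P)
    return [x * n_inv % P for x in ntt(a, invert=True)]


a = [1, 2, 3, 4, 0, 0, 0, 0]
print(intt(ntt(a)) == a)  # True
```

Multiplying two polynomials becomes a pointwise product between a forward and an inverse NTT. This is why the transform dominates prover time for large circuits.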

Evolution

The shift from monolithic proof generation to modular and recursive structures marks the current stage of development.

Initially, a separate proof was generated and verified for each block, so on-chain verification cost grew linearly with the number of blocks. Modern systems use recursion, where a proof can verify the validity of multiple previous proofs. This architectural change allows thousands of blocks to be aggregated into a single verification step.

- Monolithic Proving: Single proof per transaction set.

- Batch Proving: Multiple transactions aggregated into one proof.

- Recursive Proving: Proofs of proofs. Each recursion level can combine several underlying proofs, so coverage grows exponentially with recursion depth.
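The shape of recursive aggregation can be sketched with a binary tree in which each internal node stands for a proof that verifies its two children. SHA-256 here is a hypothetical stand-in for a recursive SNARK, so the code demonstrates only the bookkeeping. n block proofs collapse into one root proof in ⌈log2 n⌉ levels, and the base layer verifies only the root.

```python
import hashlib
import math


def leaf_proof(block_id: int) -> bytes:
    """Stand-in for a proof of one block (hypothetical: a hash, no real cryptography)."""
    return hashlib.sha256(f"block-{block_id}".encode()).digest()


def aggregate(left: bytes, right: bytes) -> bytes:
    """Stand-in for a recursive proof that verifies two child proofs."""
    return hashlib.sha256(left + right).digest()


def recursive_aggregate(proofs: list[bytes]) -> tuple[bytes, int]:
    """Combine proofs pairwise, level by level; return (root proof, recursion depth)."""
    if not proofs:
        raise ValueError("need at least one proof")
    layer, depth = proofs, 0
    while len(layer) > 1:
        nxt = [aggregate(layer[i], layer[i + 1]) for i in range(0, len(layer) - 1, 2)]
        if len(layer) % 2:
            nxt.append(layer[-1])  # an unpaired proof is carried up unchanged
        layer, depth = nxt, depth + 1
    return layer[0], depth


blocks = [leaf_proof(i) for i in range(1000)]
root, depth = recursive_aggregate(blocks)
print(f"{len(blocks)} block proofs -> 1 root proof at depth {depth} "
      f"(ceil(log2 n) = {math.ceil(math.log2(len(blocks)))})")
```

In a real system, each internal node is itself a proof whose circuit contains a verifier. The cost of that embedded verifier is what efficient recursion and folding schemes try to minimize.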

Market participants now treat Cryptographic Proof Efficiency as a competitive advantage. Protocols with faster proving times offer lower slippage and faster withdrawal times from rollups to the mainnet. This efficiency directly impacts the capital efficiency of market makers who must manage liquidity across multiple isolated layers.

Horizon

The next phase of Cryptographic Proof Efficiency involves the total commoditization of proving power. We are moving toward a future where proof generation is a background utility, similar to internet bandwidth. Prover marketplaces will allow protocols to outsource computation to the most efficient global providers, driving down costs through market competition.

Institutional adoption of decentralized options depends on the ability to prove complex risk models in milliseconds. Future developments in lookup tables and folding schemes promise to reduce the overhead of repetitive computations. These advancements will enable verifiable computation of option Greeks and sophisticated risk management algorithms that were previously too computationally expensive for decentralized environments.
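Folding can be illustrated with the relaxed R1CS relation used by Nova, (Az) ∘ (Bz) = u·(Cz) + E. Two instances (z1, u1, E1) and (z2, u2, E2) are combined with a challenge r into:

- z = z1 + r·z2
- u = u1 + r·u2
- E = E1 + r·T + r²·E2

The cross term is T = Az1 ∘ Bz2 + Az2 ∘ Bz1 - u1·Cz2 - u2·Cz1. A repeated computation can be folded step by step this way, deferring all checking to one final proof.

This toy makes three simplifications. It omits the commitments to witnesses and error vectors that the real protocol folds. It fixes r instead of deriving it by Fiat-Shamir. It uses a hypothetical one-constraint circuit x·x = y.

```python
P = 2**61 - 1  # illustrative prime field (assumption)

Vec = list[int]
Mat = list[list[int]]


def matvec(M: Mat, z: Vec) -> Vec:
    return [sum(m * x for m, x in zip(row, z)) % P for row in M]


def hadamard(a: Vec, b: Vec) -> Vec:
    return [x * y % P for x, y in zip(a, b)]


def satisfies(A: Mat, B: Mat, C: Mat, z: Vec, u: int, E: Vec) -> bool:
    """Relaxed R1CS check: (A z) o (B z) == u * (C z) + E, elementwise mod P."""
    lhs = hadamard(matvec(A, z), matvec(B, z))
    rhs = [(u * c + e) % P for c, e in zip(matvec(C, z), E)]
    return lhs == rhs


def fold(A: Mat, B: Mat, C: Mat, inst1, inst2, r: int):
    """Fold two relaxed instances (z, u, E) into one using challenge r."""
    z1, u1, E1 = inst1
    z2, u2, E2 = inst2
    Az1, Bz1, Cz1 = matvec(A, z1), matvec(B, z1), matvec(C, z1)
    Az2, Bz2, Cz2 = matvec(A, z2), matvec(B, z2), matvec(C, z2)
    # Cross term: everything multiplying r when the folded product is expanded.
    T = [(a1 * b2 + a2 * b1 - u1 * c2 - u2 * c1) % P
         for a1, b1, c1, a2, b2, c2 in zip(Az1, Bz1, Cz1, Az2, Bz2, Cz2)]
    z = [(x1 + r * x2) % P for x1, x2 in zip(z1, z2)]
    u = (u1 + r * u2) % P
    E = [(e1 + r * t + r * r * e2) % P for e1, t, e2 in zip(E1, T, E2)]
    return z, u, E


# Hypothetical one-constraint circuit x * x = y over witness vector z = (1, x, y).
A = [[0, 1, 0]]
B = [[0, 1, 0]]
C = [[0, 0, 1]]

step1 = ([1, 3, 9], 1, [0])   # strict instance: u = 1, E = 0
step2 = ([1, 5, 25], 1, [0])
folded = fold(A, B, C, step1, step2, r=7)  # r fixed here; Nova derives it via Fiat-Shamir
print(satisfies(A, B, C, *folded))  # True
```

Each step costs one fold rather than one full verification. Only the final folded instance needs a succinct proof, which is where the savings on repetitive computations come from.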

Glossary

Hardware Acceleration

Commitment Schemes

Distributed Proving

Plonkish Arithmetization

Nova Protocol

Lookup Tables

Information Theory

Validity Rollups

Halo2 Proof System