Zero-Coupon Bond Model

Meaning ⎊ The Tokenized Future Yield Model uses the Zero-Coupon Bond principle to establish a fixed-rate term structure in DeFi, providing the essential synthetic risk-free rate for options pricing.
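
The zero-coupon principle in this entry works like this: a token that redeems for a fixed amount at maturity has a discounted price today, and that price implies a fixed rate. The sketch below is a minimal illustration. It assumes a face value normalized to 1 and continuous compounding, and it ignores the credit and smart-contract risk of the token itself. The function names are hypothetical.

```python
import math

def zero_coupon_price(rate: float, years: float) -> float:
    """Price today of a claim paying 1 unit at maturity (continuous compounding)."""
    return math.exp(-rate * years)

def implied_fixed_rate(price: float, years: float) -> float:
    """Continuously compounded fixed rate implied by a zero-coupon price (face value 1)."""
    if price <= 0 or years <= 0:
        raise ValueError("price and years to maturity must be positive")
    return -math.log(price) / years
```

For example, a principal token redeemable for 1 unit in six months and trading at 0.975 implies a fixed rate of about 5.06% per year, continuously compounded. Repeating this across maturities yields a term structure.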

Black-Scholes Model Verification

Meaning ⎊ Black-Scholes Model Verification is the critical financial engineering process that quantifies pricing model error and assesses systemic risk in crypto options protocols.

Black-Scholes Model On-Chain

Meaning ⎊ The Black-Scholes Model On-Chain translates the core option pricing equation into a gas-efficient, verifiable smart contract primitive to enable trustless derivatives markets.
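
Platforms such as the EVM have no floating-point math and no built-in error function, so an on-chain version must approximate the normal CDF N(x) with cheap arithmetic. The sketch below uses one common choice, the Abramowitz and Stegun 7.1.26 polynomial for erf (absolute error at most 1.5e-7). It is written in floating-point Python for readability and is not a contract. A real contract would also need fixed-point integer arithmetic and its own exp and ln routines. The function names are hypothetical.

```python
import math

# Abramowitz & Stegun 7.1.26 coefficients for erf(x), x >= 0 (|error| <= 1.5e-7).
_P = 0.3275911
_A = (0.254829592, -0.284496736, 1.421413741, -1.453152027, 1.061405429)

def erf_approx(x: float) -> float:
    """Polynomial approximation of erf; odd symmetry handles negative x."""
    sign = 1.0 if x >= 0 else -1.0
    x = abs(x)
    t = 1.0 / (1.0 + _P * x)
    # Horner evaluation of a1*t + a2*t^2 + a3*t^3 + a4*t^4 + a5*t^5.
    poly = 0.0
    for a in reversed(_A):
        poly = (poly + a) * t
    return sign * (1.0 - poly * math.exp(-x * x))

def norm_cdf_approx(x: float) -> float:
    """Standard normal CDF via N(x) = (1 + erf(x / sqrt(2))) / 2."""
    return 0.5 * (1.0 + erf_approx(x / math.sqrt(2.0)))

def bs_call_approx(s: float, k: float, t: float, r: float, sigma: float) -> float:
    """Black-Scholes call price using the approximate CDF."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(s / k) + (r + 0.5 * sigma * sigma) * t) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    return s * norm_cdf_approx(d1) - k * math.exp(-r * t) * norm_cdf_approx(d2)
```

Because the erf error is halved when mapped to N(x), the CDF stays within about 7.5e-8 of the exact value. That is usually far below the rounding that fixed-point token amounts introduce anyway.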

Black-Scholes Model Inadequacy

Meaning ⎊ The Volatility Skew Anomaly is the measurable way market prices reject the constant-volatility assumption of Black-Scholes: implied volatility varies with strike, exposing high-kurtosis tail risk in crypto options.
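
"High kurtosis" can be checked directly on a return series. Black-Scholes assumes normally distributed log returns, which have excess kurtosis 0. Positive values mean fatter tails than the model prices in. The sketch below uses the simple population-moment estimator without small-sample bias correction. The function name is hypothetical.

```python
def excess_kurtosis(returns: list[float]) -> float:
    """Population excess kurtosis m4 / m2^2 - 3 (0 for a normal distribution)."""
    n = len(returns)
    if n < 2:
        raise ValueError("need at least two observations")
    mean = sum(returns) / n
    m2 = sum((x - mean) ** 2 for x in returns) / n  # second central moment
    m4 = sum((x - mean) ** 4 for x in returns) / n  # fourth central moment
    if m2 == 0:
        raise ValueError("zero variance")
    return m4 / (m2 * m2) - 3.0
```

A series that is mostly quiet with two large moves, such as eight zeros followed by +10 and -10, scores 2.0. An evenly alternating series like [1, -1, 1, -1] scores -2.0.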

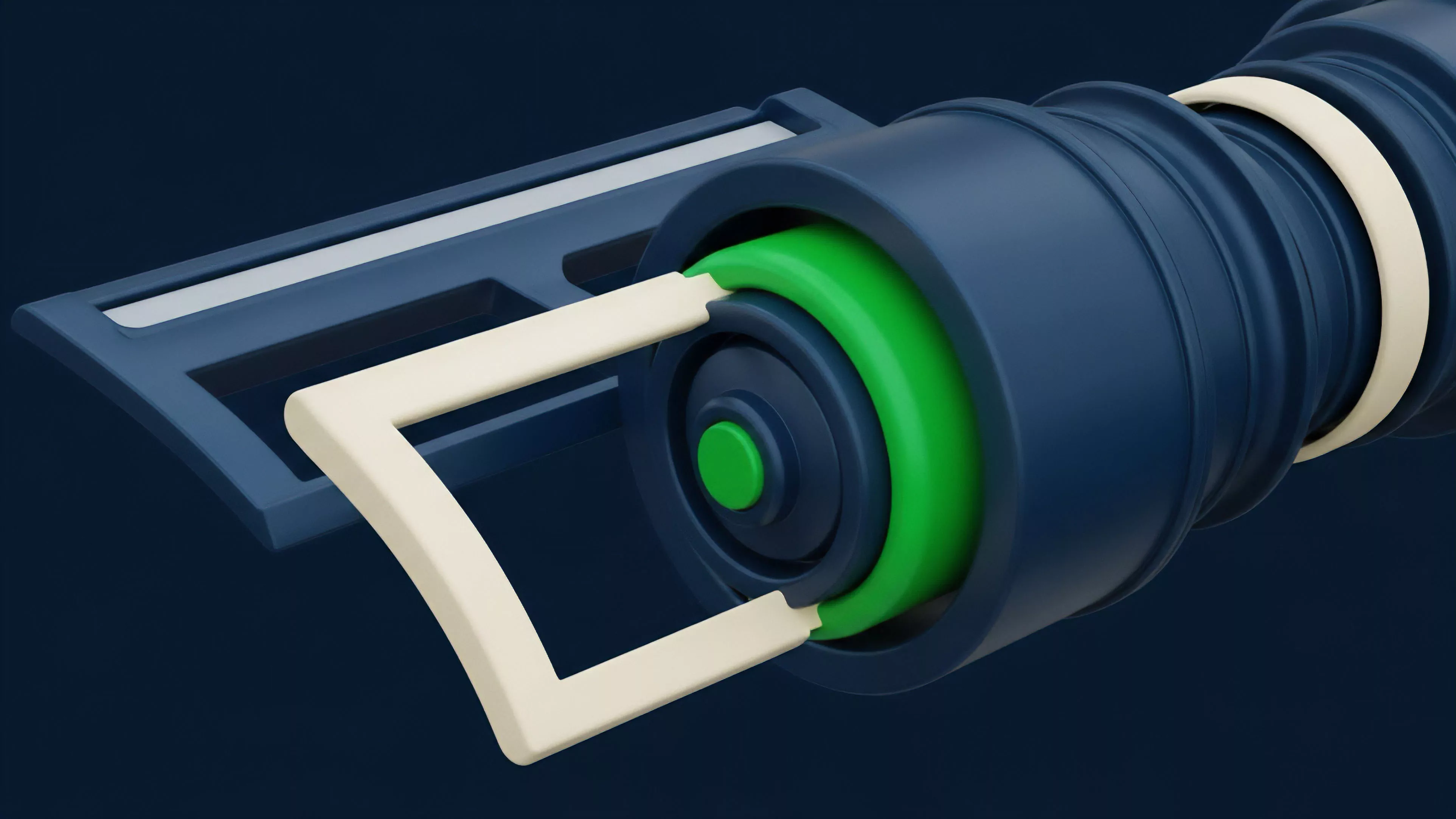

Hybrid Order Book Model

Meaning ⎊ The Hybrid CLOB-AMM Architecture blends CEX-grade speed with AMM-guaranteed liquidity, offering a capital-efficient foundation for sophisticated crypto options and derivatives trading.
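
One way the "AMM-guaranteed liquidity" half of a hybrid design can work is to fill a market order from the order book first and send any residual to a constant-product pool (x * y = k), which can always quote until its reserve is exhausted. The sketch below is a deliberately simplified routing policy. It assumes no fees, does not compare book prices against the pool's marginal price, and uses hypothetical function names.

```python
def amm_buy_cost(qty: float, quote_reserve: float, base_reserve: float) -> float:
    """Quote tokens paid to receive qty base tokens from an x*y=k pool (no fee).

    Solves (x + dx)(y - qty) = x*y for dx, giving dx = x*qty / (y - qty).
    """
    if not 0 <= qty < base_reserve:
        raise ValueError("qty must be non-negative and below the base reserve")
    return quote_reserve * qty / (base_reserve - qty)

def hybrid_buy_cost(qty: float, asks: list[tuple[float, float]],
                    quote_reserve: float, base_reserve: float) -> float:
    """Fill a market buy from (price, size) asks, best price first, then the AMM."""
    remaining, cost = qty, 0.0
    for price, size in sorted(asks):
        if remaining <= 0:
            break
        fill = min(size, remaining)
        cost += fill * price
        remaining -= fill
    if remaining > 0:
        # Residual goes to the pool, which quotes any size below its reserve.
        cost += amm_buy_cost(remaining, quote_reserve, base_reserve)
    return cost
```

A production router would interleave the two venues by marginal price and account for fees and slippage limits. The point here is only that the pool bounds the worst case when the book runs dry.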

Risk-Free Rate Challenge

Meaning ⎊ The Risk-Free Rate Challenge refers to the difficulty of identifying a stable benchmark rate for options pricing in decentralized finance due to the inherent credit and smart contract risks present in all crypto assets.

Black-Scholes Model Manipulation

Meaning ⎊ Black-Scholes Model Manipulation exploits the model's failure to account for crypto's non-Gaussian volatility and jump risk, creating arbitrage opportunities through mispriced options.

Machine Learning Volatility Forecasting

Meaning ⎊ Machine learning volatility forecasting adapts predictive models to the non-linear dynamics of crypto markets to improve volatility inputs for options pricing and risk management.

Black-Scholes Model Integration

Meaning ⎊ Black-Scholes Integration in crypto options provides the reference model for implied volatility calculation, even though high-volatility, discontinuous (jump-prone) decentralized markets frequently violate its assumptions.
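
Using Black-Scholes "as a reference for implied volatility" means inverting the formula: given a market price, find the sigma that reproduces it. The call price increases monotonically in sigma, so bisection is a simple and robust choice. The sketch below assumes a European call on a non-dividend-paying asset, and its bracket [1e-6, 5.0] is an arbitrary choice. The function names are hypothetical.

```python
import math

def _norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def bs_call(s: float, k: float, t: float, r: float, sigma: float) -> float:
    sqrt_t = math.sqrt(t)
    d1 = (math.log(s / k) + (r + 0.5 * sigma * sigma) * t) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    return s * _norm_cdf(d1) - k * math.exp(-r * t) * _norm_cdf(d2)

def implied_vol(price: float, s: float, k: float, t: float, r: float,
                lo: float = 1e-6, hi: float = 5.0,
                tol: float = 1e-10, max_iter: int = 200) -> float:
    """Solve bs_call(sigma) = price by bisection on [lo, hi]."""
    if not bs_call(s, k, t, r, lo) <= price <= bs_call(s, k, t, r, hi):
        raise ValueError("price outside the bracketed range")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if bs_call(s, k, t, r, mid) < price:
            lo = mid  # model price too low: volatility must be higher
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)
```

Running this across strikes on a real crypto option chain is what exposes the violated assumptions: the recovered sigma is not a single number but a curve.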

Stochastic Volatility Jump-Diffusion Model

Meaning ⎊ The Stochastic Volatility Jump-Diffusion Model is a quantitative framework essential for accurately pricing crypto options by accounting for volatility clustering and sudden price jumps.
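
The two effects named here, volatility clustering and sudden jumps, can be seen in a simulation. The sketch below picks one concrete SVJ specification, a Bates-style model: Heston square-root variance plus lognormal (Merton-type) jumps in the log price. It prices a European call by Monte Carlo rather than a closed form. It also makes several simplifying assumptions: an Euler scheme with full truncation of negative variance, and at most one jump per time step (a Bernoulli approximation to the Poisson count). All names and parameter values are hypothetical.

```python
import math
import random

def svj_terminal_price(s0, v0, r, kappa, theta, xi, rho, lam, mu_j, sigma_j, t, steps, rng):
    """Simulate one Euler path of a Bates-style SVJ model and return S_T.

    Variance v follows a Heston square-root process (full truncation at zero).
    Log-price jumps arrive at intensity lam with sizes ~ Normal(mu_j, sigma_j).
    """
    dt = t / steps
    # Jump compensator E[e^J] - 1 keeps the risk-neutral drift at r.
    jump_comp = math.exp(mu_j + 0.5 * sigma_j ** 2) - 1.0
    log_s, v = math.log(s0), v0
    for _ in range(steps):
        v_pos = max(v, 0.0)
        z1 = rng.gauss(0.0, 1.0)
        # Correlate the variance shock with the price shock using rho.
        z2 = rho * z1 + math.sqrt(1.0 - rho ** 2) * rng.gauss(0.0, 1.0)
        # At most one jump per step: a Bernoulli approximation to the Poisson count.
        jump = rng.gauss(mu_j, sigma_j) if rng.random() < lam * dt else 0.0
        log_s += (r - lam * jump_comp - 0.5 * v_pos) * dt + math.sqrt(v_pos * dt) * z1 + jump
        v += kappa * (theta - v_pos) * dt + xi * math.sqrt(v_pos * dt) * z2
    return math.exp(log_s)

def svj_call_mc(s0, strike, t, r, n_paths=20_000, steps=50, seed=7, **model):
    """Monte Carlo price of a European call under the SVJ dynamics above."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_paths):
        s_t = svj_terminal_price(s0=s0, r=r, t=t, steps=steps, rng=rng, **model)
        total += max(s_t - strike, 0.0)
    return math.exp(-r * t) * total / n_paths
```

The `model` keywords are `v0`, `kappa`, `theta`, `xi`, `rho`, `lam`, `mu_j` and `sigma_j`. Setting `xi` and `lam` to zero collapses the dynamics toward constant-volatility Black-Scholes, which is a useful sanity check.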

Security Model

Meaning ⎊ The Decentralized Liquidity Risk Framework ensures options protocol solvency by dynamically managing collateral and liquidation processes against high market volatility and systemic risk.
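
A minimal way to express "dynamically managing collateral and liquidation" is a health factor. It compares risk-adjusted collateral against the current cost of closing the position and flags liquidation below 1.0. The sketch below borrows this convention from DeFi lending as an assumption. The field names and the 0.8 threshold are hypothetical.

```python
import math
from dataclasses import dataclass

@dataclass
class Position:
    collateral_value: float       # collateral marked to market, in quote units
    liability_value: float        # current cost to close the short options, in quote units
    liquidation_threshold: float  # share of collateral value credited, e.g. 0.8 (hypothetical)

    def health_factor(self) -> float:
        """Risk-adjusted collateral divided by liability; below 1.0 is undercollateralized."""
        if self.liability_value <= 0:
            return math.inf
        return self.collateral_value * self.liquidation_threshold / self.liability_value

    def is_liquidatable(self) -> bool:
        return self.health_factor() < 1.0
```

Because a short option's liability is re-marked as implied volatility rises, a position can cross below 1.0 even when the collateral price itself has not moved.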

Risk Model Calibration

Meaning ⎊ Risk Model Calibration adjusts financial model parameters to align with current market conditions, ensuring accurate options pricing and systemic resilience against tail risk in volatile crypto markets.
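
Calibration in its simplest form means re-fitting a model's parameters to the latest market quotes. The sketch below assumes a deliberately minimal smile model, sigma(k) = a + b * k in log-moneyness k = ln(K / F), and fits it by ordinary least squares. Richer parameterizations follow the same loop with more parameters. The function name is hypothetical.

```python
def calibrate_linear_skew(log_moneyness: list[float], implied_vols: list[float]) -> tuple[float, float]:
    """Least-squares fit of sigma(k) = a + b * k to observed implied vols.

    a is the at-the-money (k = 0) volatility and b the skew slope.
    """
    n = len(log_moneyness)
    if n != len(implied_vols) or n < 2:
        raise ValueError("need matching lists with at least two points")
    k_mean = sum(log_moneyness) / n
    v_mean = sum(implied_vols) / n
    sxx = sum((k - k_mean) ** 2 for k in log_moneyness)
    if sxx == 0:
        raise ValueError("need at least two distinct strikes")
    sxy = sum((k - k_mean) * (v - v_mean) for k, v in zip(log_moneyness, implied_vols))
    b = sxy / sxx
    a = v_mean - b * k_mean
    return a, b
```

Re-running the fit on each new snapshot of quotes, and alerting when the parameters jump, is one way to track changing market conditions such as a steepening downside skew.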

Black-Scholes Model Vulnerabilities

Meaning ⎊ The Black-Scholes model's core vulnerability in crypto stems from its failure to account for stochastic volatility and fat tails, leading to systemic mispricing in decentralized markets.

Black-Scholes Model Vulnerability

Meaning ⎊ The Black-Scholes model vulnerability in crypto is its systemic failure to price tail risk due to high-kurtosis price distributions, leading to undercapitalized derivatives protocols.

Interest Rate Model

Meaning ⎊ The Interest Rate Model in crypto options addresses the challenge of pricing derivatives where the cost of carry is a highly stochastic, endogenous variable determined by decentralized lending and staking protocols rather than a stable, external risk-free rate.
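
To see why the cost of carry is endogenous, consider how many lending protocols set borrow rates: as a piecewise-linear ("kinked") function of utilization, the borrowed share of supplied funds. The sketch below assumes that design. The default parameters are hypothetical, not taken from any specific protocol.

```python
def borrow_rate(utilization: float, base: float = 0.0, slope1: float = 0.04,
                slope2: float = 0.75, kink: float = 0.8) -> float:
    """Annual borrow rate as a kinked linear function of pool utilization."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be in [0, 1]")
    if utilization <= kink:
        # Gentle slope while liquidity is plentiful.
        return base + slope1 * utilization / kink
    # Steep slope above the kink to pull utilization back down.
    return base + slope1 + slope2 * (utilization - kink) / (1.0 - kink)
```

With these numbers, the rate moves from 2.5% at 50% utilization to 41.5% at 90%. Any option priced off this rate inherits that jump, unlike a stable external risk-free rate.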

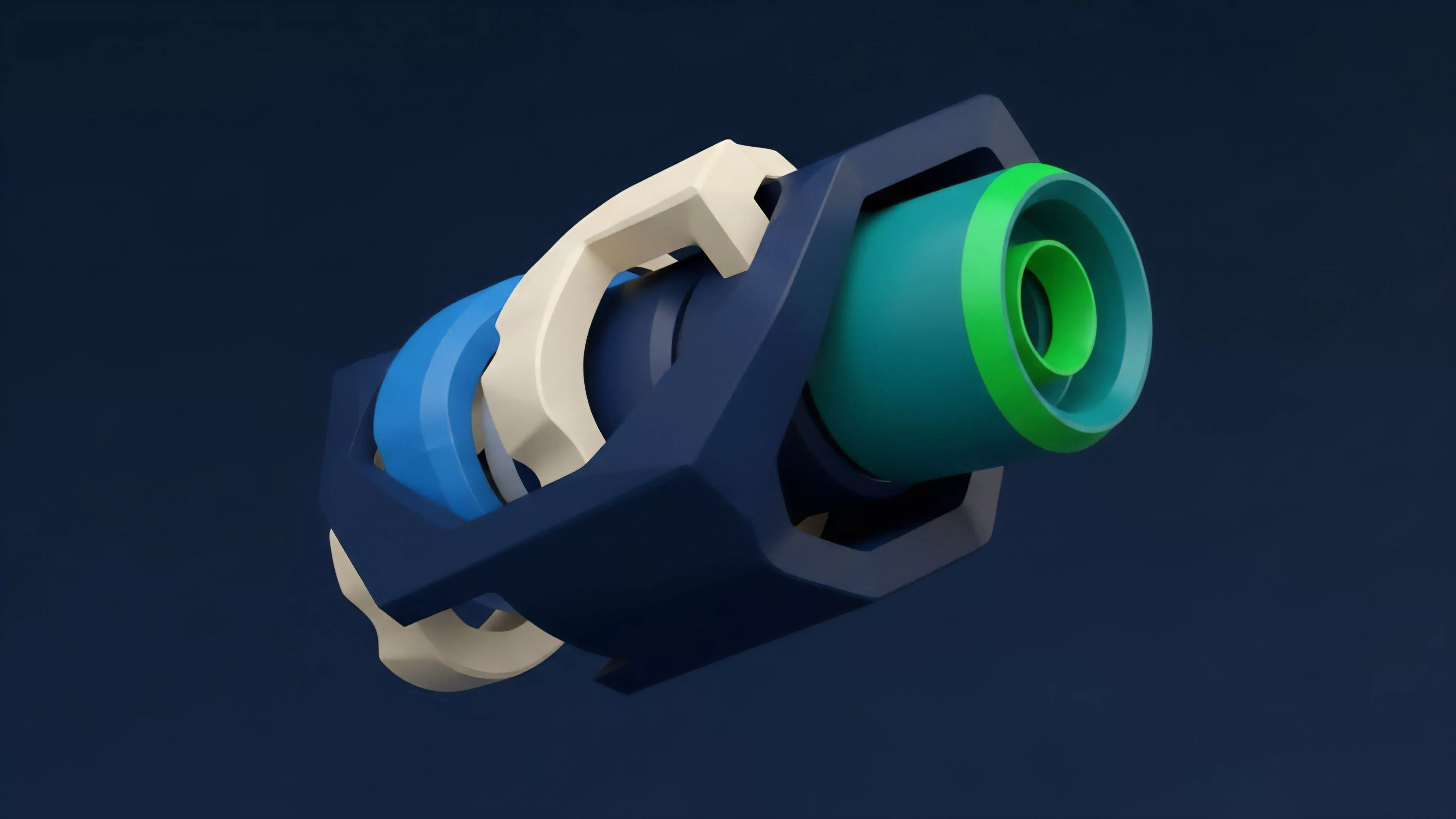

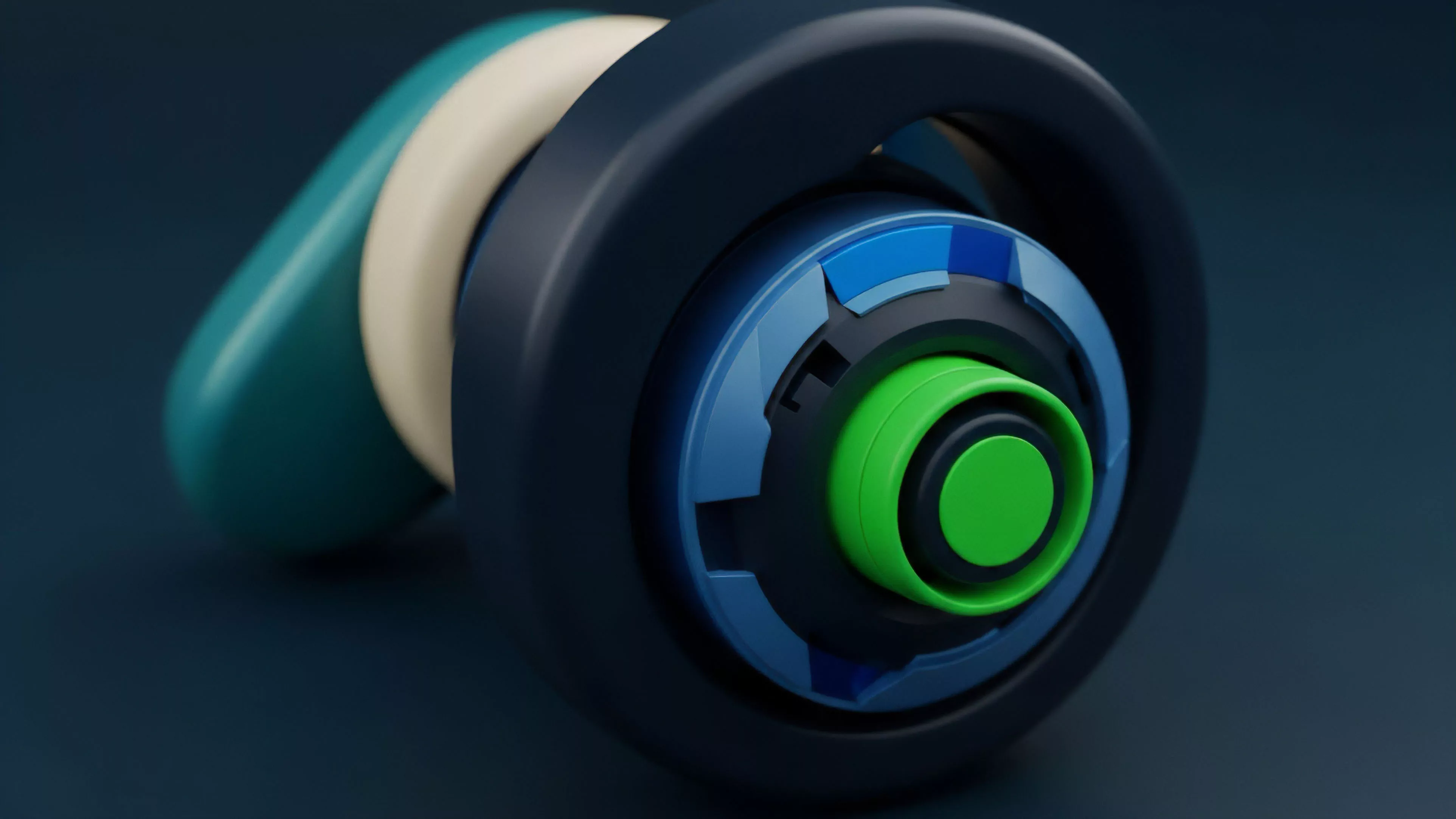

Prover Verifier Model

Meaning ⎊ The Prover Verifier Model uses cryptographic proofs to verify financial transactions and collateral without revealing private data, enabling privacy-preserving derivatives.
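
The prover and verifier roles are easiest to see in a classic proof of knowledge: the Schnorr protocol, made non-interactive with the Fiat-Shamir hash trick. The prover convinces the verifier that it knows a secret x behind a public value y = g^x mod p without revealing x. This is a swapped-in illustration, not a collateral proof. Privacy-preserving derivatives would rely on general zero-knowledge proof systems over circuits that encode collateral rules. The tiny group parameters are toy values and are NOT secure.

```python
import hashlib
import secrets

# Toy group: P = 2Q + 1 with Q prime; G = 4 generates the subgroup of order Q.
# Illustration only; real deployments use groups hundreds of bits in size.
P, Q, G = 23, 11, 4

def _challenge(y: int, t: int) -> int:
    """Fiat-Shamir: derive the verifier's challenge from a hash of the transcript."""
    digest = hashlib.sha256(f"{G}|{y}|{t}".encode()).digest()
    return int.from_bytes(digest, "big") % Q

def prove(secret_x: int) -> tuple[int, int, int]:
    """Prover: output (y, t, s) showing knowledge of x with y = G^x mod P."""
    y = pow(G, secret_x, P)
    r = secrets.randbelow(Q)      # fresh one-time nonce
    t = pow(G, r, P)              # commitment
    c = _challenge(y, t)          # challenge
    s = (r + c * secret_x) % Q    # response; reveals nothing about x on its own
    return y, t, s

def verify(y: int, t: int, s: int) -> bool:
    """Verifier: accept iff G^s == t * y^c (mod P)."""
    c = _challenge(y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P
```

The verifier only ever sees y, t and s, yet the check passes only for a prover that could compute s from the secret.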

Black-Scholes Pricing Model

Meaning ⎊ The Black-Scholes model is the foundational framework for pricing options, but its assumptions require significant adaptation to accurately reflect the unique volatility dynamics of crypto assets.
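
For reference, the closed-form Black-Scholes prices for a European call and put on a non-dividend-paying asset are sketched below, along with a put-call parity check. The constant `sigma` and the fixed rate `r` in the signature are exactly the assumptions the entries above say need adapting for crypto. The function names are hypothetical.

```python
import math

def _norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def black_scholes(s: float, k: float, t: float, r: float, sigma: float) -> tuple[float, float]:
    """Return (call, put) prices for a European option on a non-dividend asset."""
    if s <= 0 or k <= 0 or t <= 0 or sigma <= 0:
        raise ValueError("s, k, t and sigma must be positive")
    sqrt_t = math.sqrt(t)
    d1 = (math.log(s / k) + (r + 0.5 * sigma ** 2) * t) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    disc_k = k * math.exp(-r * t)  # strike discounted at the constant rate r
    call = s * _norm_cdf(d1) - disc_k * _norm_cdf(d2)
    put = disc_k * _norm_cdf(-d2) - s * _norm_cdf(-d1)
    return call, put

def parity_gap(s: float, k: float, t: float, r: float, sigma: float) -> float:
    """Put-call parity residual C - P - (S - K e^{-rT}); zero for consistent prices."""
    call, put = black_scholes(s, k, t, r, sigma)
    return call - put - (s - k * math.exp(-r * t))
```

With S = K = 100, T = 1, r = 0.05 and sigma = 0.2, the call is worth about 10.4506 and the put about 5.5735.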