Essence

Zero-Knowledge Proof Order Books represent the architectural intersection of cryptographic privacy and high-frequency financial matching. These systems use non-interactive zero-knowledge proofs to validate the state transitions of a centralized or decentralized limit order book. They do so without exposing the underlying order data, participant identities, or proprietary trading strategies on the public ledger. The matching engine still processes trades according to strict price-time priority, but individual order details are never published; the ledger sees only commitments and the proofs that attest to them.

Zero-Knowledge Proof Order Books enable verifiable trade execution while keeping order flow and participant identities confidential.

The systemic relevance of this construction lies in its capacity to mitigate predatory front-running and toxic information leakage, both common on transparent decentralized exchanges. By decoupling trade verification from data exposure, these protocols lay a foundation for institutional-grade market making in permissionless environments. Participants submit encrypted orders accompanied by proofs that each order is well-formed and backed by sufficient collateral. This lets the settlement layer track an accurate order book state without ever observing the plaintext values.
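The proof a participant attaches can be read as a claim about a relation between public inputs and a private witness. The sketch below writes out one plausible version of that relation. It rests on several assumptions: a salted SHA-256 hash commitment, hypothetical order fields (`side`, `price`, `qty`, `salt`), and a simple collateral rule. For simplicity it treats collateral as a public input, although a real design would likely keep the balance committed as well. The function checks the relation in the clear; in an actual system the order is never revealed, and this check is what a zk-SNARK or zk-STARK circuit would enforce.

```python
import hashlib
import secrets
from dataclasses import dataclass

@dataclass(frozen=True)
class Order:
    side: str    # "buy" or "sell" (hypothetical encoding)
    price: int   # limit price in integer ticks
    qty: int     # quantity in integer lots
    salt: bytes  # random blinding value so identical orders get different commitments

def commit(order: Order) -> str:
    """Salted SHA-256 commitment (illustrative; circuits typically use a SNARK-friendly hash)."""
    payload = f"{order.side}|{order.price}|{order.qty}|".encode() + order.salt
    return hashlib.sha256(payload).hexdigest()

def order_relation(commitment: str, collateral: int, order: Order) -> bool:
    """Relation R(public, witness) that a ZK proof would attest to.

    Public inputs: the order commitment and the account's collateral balance.
    Private witness: the order itself (never revealed in a real system).
    """
    # 1. The commitment opens to exactly this order.
    if commit(order) != commitment:
        return False
    # 2. The order is well-formed.
    if order.side not in ("buy", "sell") or order.price <= 0 or order.qty <= 0:
        return False
    # 3. The order is fully collateralized. Assumption: a buy must be covered by
    #    price * qty of quote balance and a sell by qty of base balance; `collateral`
    #    is the balance of whichever asset the order's side requires.
    required = order.price * order.qty if order.side == "buy" else order.qty
    return collateral >= required

o = Order("buy", price=100, qty=5, salt=secrets.token_bytes(16))
c = commit(o)
print(order_relation(c, collateral=600, order=o))  # True: 500 required
print(order_relation(c, collateral=400, order=o))  # False
```

The salt matters. Without it, an observer could brute-force the small space of plausible prices and sizes and recover an order from its commitment.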

Origin

Zero-Knowledge Proof Order Books grew out of the convergence of SNARK-based scalability research and the inherent limitations of automated market makers.

Early decentralized finance architectures relied on public order books that broadcast every intent, giving adversaries a map of market depth and liquidity distribution. This exposure drove demand for privacy-preserving computational models capable of handling the complexity of limit order management.

- Cryptographic foundations established the theoretical feasibility of verifying complex state changes without revealing input data.

- Scalability constraints drove the adoption of rollup-based architectures, necessitating efficient proof generation for high-throughput order matching.

- Market microstructure research identified the high cost of information leakage, incentivizing the development of dark pool-inspired decentralized mechanisms.

These systems emerged from the recognition that financial privacy is a prerequisite for professional liquidity provision. When liquidity providers risk exploitation by bots monitoring the mempool, they withdraw or widen spreads, degrading overall market quality. Proof-based matching attempts to restore the informational advantage that market makers traditionally hold in legacy venues, while operating on a decentralized, trust-minimized substrate.

Theory

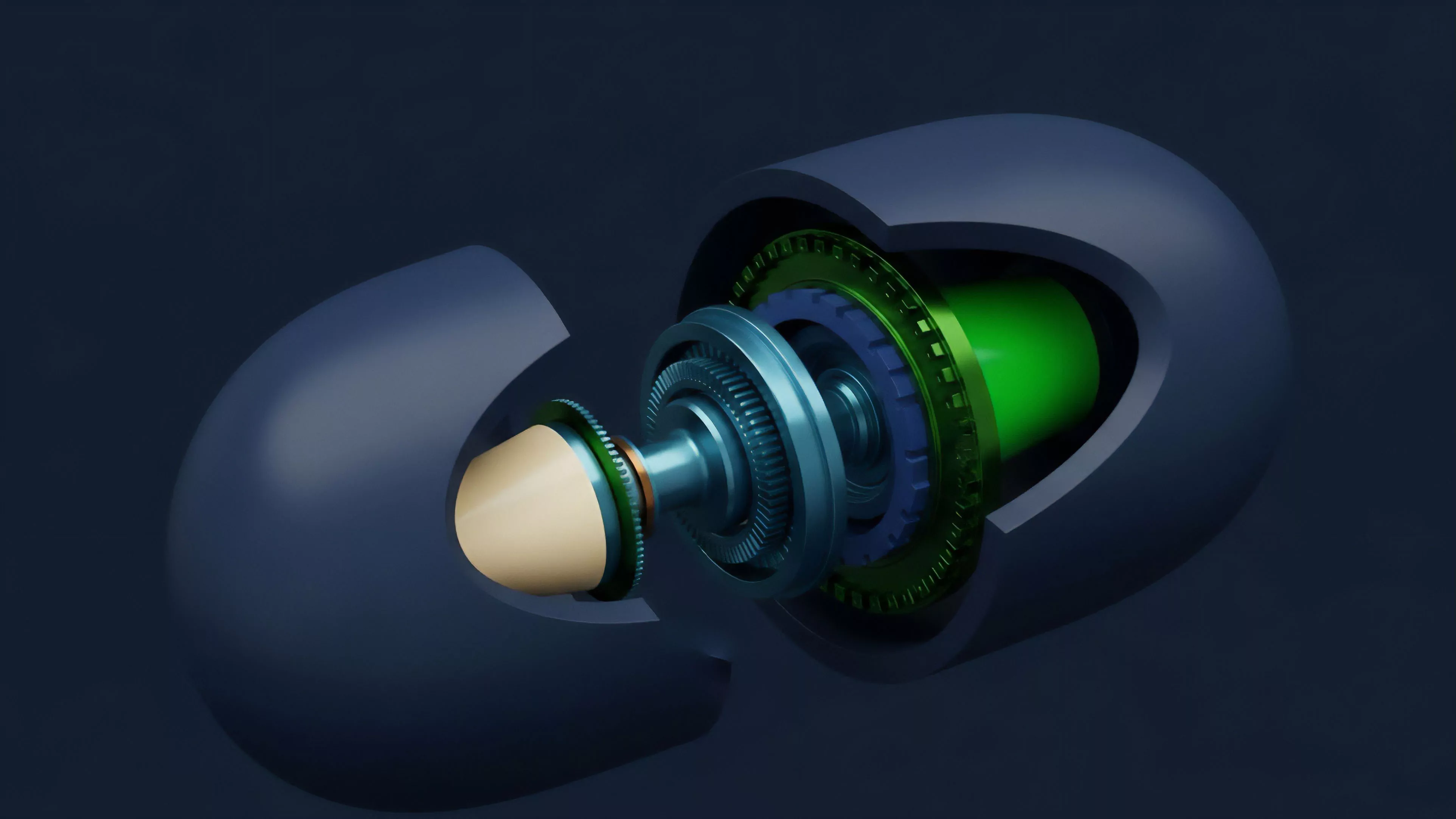

A Zero-Knowledge Proof Order Book relies on cryptographic primitives to keep the private order state consistent with the public blockchain settlement layer.

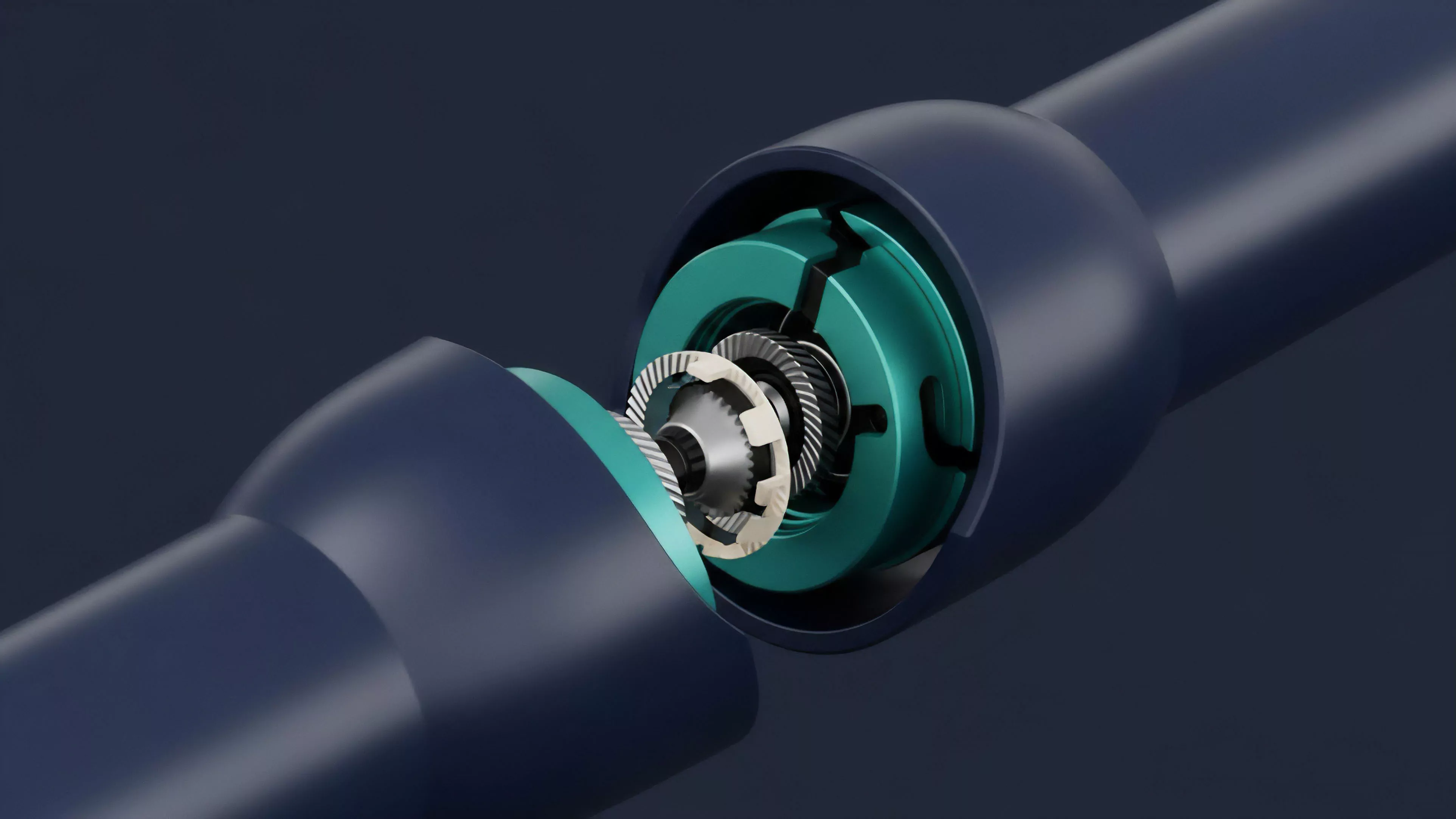

The matching engine runs in a protected setting, often a Trusted Execution Environment or an off-chain prover for a ZK-rollup circuit. There it receives encrypted orders, matches them, and generates a succinct proof that the matching followed the protocol rules.
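The matching step can be proved because it is deterministic: given the same ordered inputs, every honest party computes the same fills, so a circuit can check them. The sketch below shows a minimal price-time priority matcher over plaintext orders. The order representation and the rule that trades execute at the resting order's price are assumptions for illustration. In a deployed system this logic would run inside the TEE or be re-expressed as circuit constraints rather than executed as Python.

```python
import heapq
from dataclasses import dataclass, field

@dataclass
class Order:
    order_id: int
    side: str   # "buy" or "sell"
    price: int  # limit price in integer ticks
    qty: int    # remaining quantity in integer lots

@dataclass
class Book:
    # Heap entries are (priority_key, arrival_seq, order): best price first and,
    # within a price level, earliest arrival first (time priority).
    bids: list = field(default_factory=list)  # key = -price, so the highest bid is on top
    asks: list = field(default_factory=list)  # key = price, so the lowest ask is on top
    seq: int = 0

    @staticmethod
    def _crosses(taker: Order, maker: Order) -> bool:
        return taker.price >= maker.price if taker.side == "buy" else taker.price <= maker.price

    def submit(self, order: Order) -> list:
        """Match `order` against the opposite side; return fills as (taker_id, maker_id, price, qty)."""
        fills = []
        opposite = self.asks if order.side == "buy" else self.bids
        while order.qty > 0 and opposite and self._crosses(order, opposite[0][2]):
            maker = opposite[0][2]
            traded = min(order.qty, maker.qty)
            # Assumption: trades execute at the resting (maker) order's price.
            fills.append((order.order_id, maker.order_id, maker.price, traded))
            order.qty -= traded
            maker.qty -= traded
            if maker.qty == 0:
                heapq.heappop(opposite)
        if order.qty > 0:
            # Rest any unfilled remainder; the sequence number fixes its time priority.
            self.seq += 1
            if order.side == "buy":
                heapq.heappush(self.bids, (-order.price, self.seq, order))
            else:
                heapq.heappush(self.asks, (order.price, self.seq, order))
        return fills

book = Book()
for o in (Order(1, "sell", 101, 5), Order(2, "sell", 100, 3), Order(3, "sell", 100, 4)):
    book.submit(o)
print(book.submit(Order(4, "buy", 101, 10)))
# [(4, 2, 100, 3), (4, 3, 100, 4), (4, 1, 101, 3)]
```

Orders 2 and 3 share the best price, so order 2 fills first because it arrived first. Only then does the buyer reach the 101 level. The unique sequence number also keeps heap comparisons from ever falling through to the `Order` objects themselves.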

| Component | Mechanism |
|---|---|
| State Commitment | Merkle trees or Polynomial Commitments |
| Validity Proof | zk-SNARKs or zk-STARKs |
| Matching Logic | Deterministic Price-Time Priority |
| Settlement | Atomic cross-chain or layer-two clearing |
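The Merkle-tree state commitment in the table above can be illustrated with a minimal binary Merkle tree over order commitments. The settlement contract stores only the root, and anyone holding a leaf and its authentication path can check that the leaf is included. The sketch makes three illustrative choices rather than following a specific protocol's format: SHA-256, one-byte domain-separation prefixes, and pairing the last node with itself on odd-sized levels.

```python
import hashlib

def leaf_hash(leaf: bytes) -> bytes:
    # 0x00 prefix domain-separates leaves from internal nodes.
    return hashlib.sha256(b"\x00" + leaf).digest()

def node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()

def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Return every tree level, from hashed leaves up to the single root."""
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [leaf_hash(x) for x in leaves]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2:
            level = level + [level[-1]]  # odd level: pair the last node with itself
        level = [node_hash(level[i], level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels

def prove_inclusion(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Authentication path: (sibling hash, True if our node is the left child) per level."""
    path = []
    for level in levels[:-1]:
        sib = index ^ 1
        sibling = level[sib] if sib < len(level) else level[index]  # mirrors the pairing rule
        path.append((sibling, index % 2 == 0))
        index //= 2
    return path

def verify_inclusion(root: bytes, leaf: bytes, path: list[tuple[bytes, bool]]) -> bool:
    acc = leaf_hash(leaf)
    for sibling, is_left in path:
        acc = node_hash(acc, sibling) if is_left else node_hash(sibling, acc)
    return acc == root

commitments = [b"order-commitment-1", b"order-commitment-2", b"order-commitment-3"]
levels = build_levels(commitments)
root = levels[-1][0]  # the only value the settlement contract would need to store
path = prove_inclusion(levels, 2)
print(verify_inclusion(root, b"order-commitment-3", path))  # True
print(verify_inclusion(root, b"order-commitment-X", path))  # False
```

An inclusion path has one hash per level, so its size grows logarithmically with the number of orders. That is why a tree root is a practical on-chain commitment to a large private book.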

The integrity of the matching process rests on the soundness of the proof system: under standard cryptographic assumptions, producing a valid proof for an invalid state transition is computationally infeasible.
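The simplest non-interactive zero-knowledge proof shows concretely what it means for a proof to be valid and unforgeable. That proof is a Schnorr proof of knowledge of a discrete logarithm, made non-interactive with the Fiat-Shamir heuristic. It is far simpler than the circuits an order book proves. The group parameters below (safe prime p = 2039, subgroup order q = 1019, generator g = 4) are toy values chosen for readability and are completely insecure. The sketch only illustrates two properties: a verifier accepts an honest proof without learning the secret, and it rejects a tampered one.

```python
import hashlib
import secrets

# Toy parameters: p = 2q + 1 is a safe prime and g = 4 generates the subgroup of order q.
# INSECURE: real deployments use groups with roughly 256-bit order (e.g. elliptic curves).
P, Q, G = 2039, 1019, 4

def challenge(*values: int) -> int:
    """Fiat-Shamir: derive the verifier's challenge by hashing the public transcript."""
    data = b"|".join(str(v).encode() for v in values)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

def prove(secret: int) -> tuple[int, int, int]:
    """Prove knowledge of `secret` behind the public key y = g^secret mod p."""
    if not 0 < secret < Q:
        raise ValueError("secret must be in [1, q-1]")
    y = pow(G, secret, P)
    k = secrets.randbelow(Q - 1) + 1   # fresh random nonce; reusing it would leak the secret
    t = pow(G, k, P)                   # prover's commitment
    c = challenge(G, y, t)
    s = (k + c * secret) % Q           # response
    return y, t, s

def verify(y: int, t: int, s: int) -> bool:
    """Accept iff g^s == t * y^c (mod p), which holds when s = k + c * secret (mod q)."""
    c = challenge(G, y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P

y, t, s = prove(secret=777)
print(verify(y, t, s))            # True
print(verify(y, t, (s + 1) % Q))  # False: tampered response
```

The transcript `(y, t, s)` reveals nothing usable about `777` beyond the public key. Altering any part of it breaks the verification equation. Production order books rely on the same two properties, at much larger scale and for far richer statements.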

From a quantitative perspective, the system must balance proof generation latency against market responsiveness. Proving work grows with order volume, so generating a separate proof for every matching event quickly becomes a bottleneck. Advanced protocols therefore batch many trades into a single proof, amortizing the fixed verification cost across the batch and significantly reducing the per-trade cost. The price is latency, since early trades in a batch wait for it to fill and be proved.
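A back-of-the-envelope model makes this trade-off concrete. With a fixed verification cost C_verify per proof and a marginal cost c_trade per trade, the cost per trade for a batch of n trades is C_verify / n + c_trade. If trades arrive at rate λ and proving time is assumed to scale linearly at t_prove per trade, then the first trade in a batch waits roughly (n - 1) / λ + n · t_prove. The linear-proving assumption is a simplification, since many provers scale quasi-linearly. All parameter names and numbers below are hypothetical placeholders, not measurements of any protocol.

```python
from dataclasses import dataclass

@dataclass
class BatchCostModel:
    # Hypothetical parameters; substitute measured values for a real system.
    verify_cost: float              # fixed on-chain cost to verify one proof (e.g. gas)
    per_trade_cost: float           # marginal on-chain cost per trade (e.g. calldata)
    prove_seconds_per_trade: float  # prover time per trade, assumed linear in batch size
    arrival_rate: float             # trades arriving per second

    def cost_per_trade(self, batch_size: int) -> float:
        """The fixed verification cost is amortized across every trade in the batch."""
        return self.verify_cost / batch_size + self.per_trade_cost

    def worst_case_latency(self, batch_size: int) -> float:
        """The first trade waits for the batch to fill, then for the proof to be generated."""
        fill_time = (batch_size - 1) / self.arrival_rate
        return fill_time + self.prove_seconds_per_trade * batch_size

model = BatchCostModel(verify_cost=300_000, per_trade_cost=2_000,
                       prove_seconds_per_trade=0.01, arrival_rate=100.0)
for n in (1, 10, 100, 1000):
    print(n, model.cost_per_trade(n), round(model.worst_case_latency(n), 2))
```

Cost per trade falls toward `per_trade_cost` as batches grow, while worst-case latency grows linearly. In this framing, the batch size is the knob a protocol tunes between cheap settlement and responsive matching.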

This architecture mirrors the function of traditional dark pools, where institutional participants execute large blocks of volume away from the public gaze, yet it replaces the trusted intermediary with a verifiable, immutable circuit.

Approach

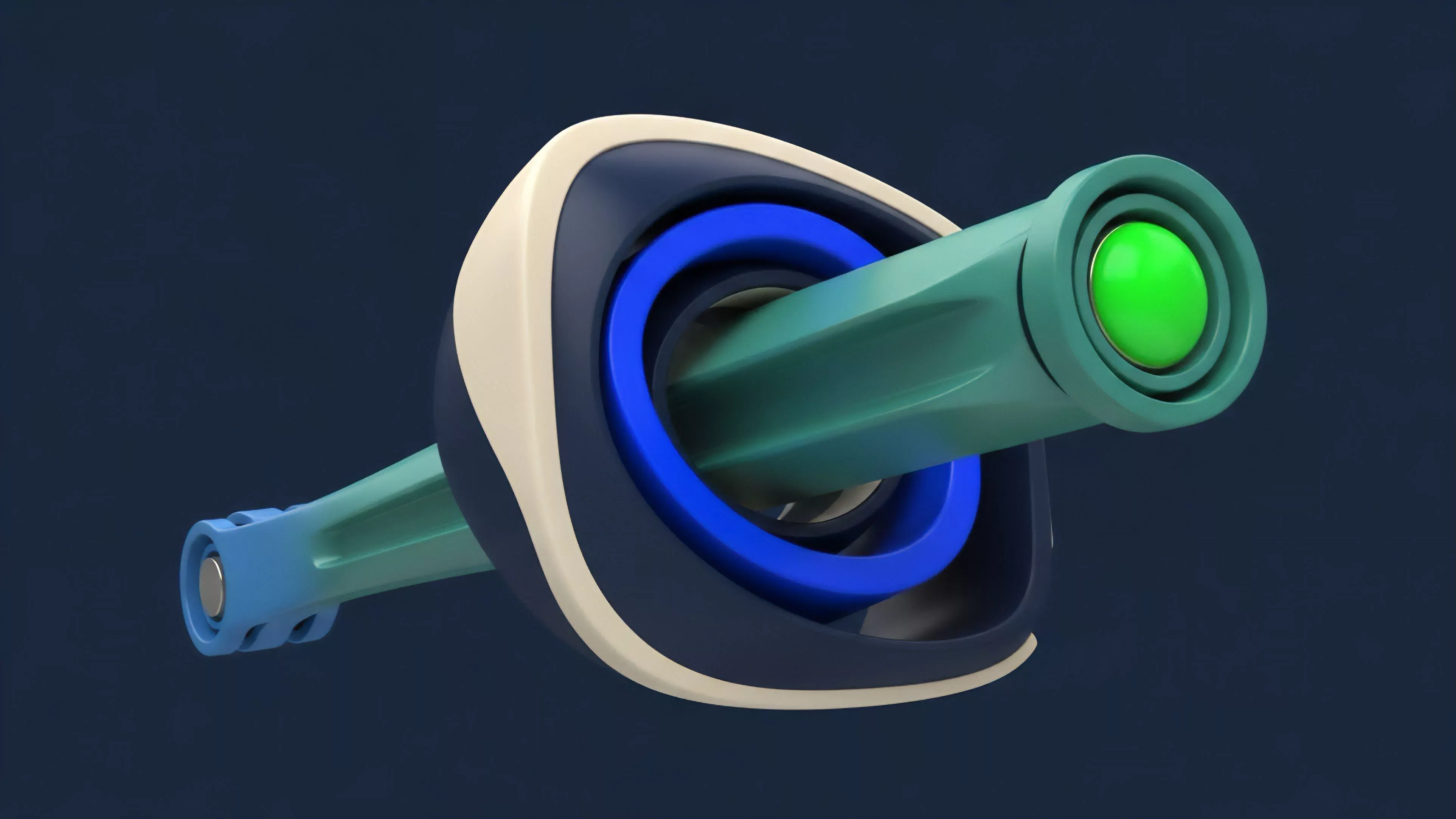

Current implementations must resolve the tension between decentralized custody and the speed that efficient price discovery requires. Developers deploy specialized rollups as dedicated order book engines: the sequencer runs the matching logic, and the ZK circuit provides the verification needed for settlement. Participants submit encrypted messages, so their intent remains opaque until the trade is finalized.

- Sequencer decentralization remains the primary challenge, because a single sequencer is a single point of failure for order matching.

- Recursive proof composition allows protocols to aggregate multiple matching events into a single, compact state update.

- Collateral management requires tight integration between the order book and the underlying margin engine to ensure solvency.
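The collateral point can be sketched as a pre-trade margin check. Before an order reaches the book, the engine reserves initial margin against the account's free collateral and rejects the order if the reservation would not fit. In a zero-knowledge order book, a check like this would be part of the proven state transition. The class names, the basis-point margin rate, and the reserve-on-submit policy below are hypothetical illustrations, not a specific protocol's margin rules.

```python
from dataclasses import dataclass, field

@dataclass
class Account:
    collateral: int                                         # deposited quote units
    reserved: dict[int, int] = field(default_factory=dict)  # order_id -> margin held

    @property
    def free_collateral(self) -> int:
        return self.collateral - sum(self.reserved.values())

class MarginEngine:
    """Hypothetical pre-trade check that reserves initial margin for each open order."""

    def __init__(self, initial_margin_bps: int):
        self.initial_margin_bps = initial_margin_bps  # e.g. 1000 bps = 10% (assumed)

    def required_margin(self, price: int, qty: int) -> int:
        notional = price * qty
        # Ceiling division, so margin is never rounded down in the trader's favor.
        return -(-notional * self.initial_margin_bps // 10_000)

    def try_reserve(self, account: Account, order_id: int, price: int, qty: int) -> bool:
        need = self.required_margin(price, qty)
        if need > account.free_collateral:
            return False  # reject: accepting would leave the account undercollateralized
        account.reserved[order_id] = need
        return True

    def release(self, account: Account, order_id: int) -> None:
        """Free the reservation when an order is cancelled."""
        account.reserved.pop(order_id, None)

acct = Account(collateral=1_000)
engine = MarginEngine(initial_margin_bps=1_000)         # 10% initial margin
print(engine.try_reserve(acct, 1, price=100, qty=50))  # True: needs 500
print(engine.try_reserve(acct, 2, price=100, qty=60))  # False: needs 600, only 500 free
engine.release(acct, 1)
print(engine.try_reserve(acct, 2, price=100, qty=60))  # True
```

Reserving margin at submission, rather than at fill time, keeps the book from ever holding more resting size than the account can honor. That is the solvency property the bullet above refers to.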

The pragmatic market strategist views these systems as one of the most viable paths to on-chain institutional participation. By neutralizing the threat of mempool observation, these order books allow sophisticated delta-neutral strategies that would otherwise be impractical on transparent automated market makers. Security remains a persistent challenge. The very circuits designed to provide privacy introduce new exploit vectors, so the underlying cryptographic code requires rigorous formal verification.

Evolution

The trajectory of these systems has moved from experimental, low-throughput prototypes to robust, production-ready engines.

Initial iterations struggled with the computational overhead of zero-knowledge circuits, leading to sluggish update frequencies that made high-frequency trading impossible. The integration of specialized hardware accelerators and more efficient proof systems has drastically reduced latency, bringing performance closer to centralized exchange standards.

The evolution of these protocols is defined by the transition from proof-of-concept cryptographic puzzles to scalable, high-performance matching engines.

This progress is not linear; it is a cycle of refinement where each generation of the protocol addresses a specific failure point, such as liquidity fragmentation or excessive proof generation costs. As the underlying cryptography matures, the industry has shifted focus toward interoperability, attempting to connect these private order books with broader liquidity networks. The adoption of shared sequencing layers represents a significant milestone, enabling multiple protocols to leverage the same decentralized infrastructure for secure, private trade execution.

Horizon

The future of Zero-Knowledge Proof Order Books may lie in the maturation of fully homomorphic encryption (FHE) and its integration with zero-knowledge proofs. Together these could enable matching engines that compute on encrypted orders without ever decrypting them.

This advancement would remove the remaining need to trust the sequencer with plaintext order data, pushing the architecture toward a fully trustless, permissionless state.

| Future Development | Impact on Market |
|---|---|
| Fully Homomorphic Matching | Elimination of sequencer trust assumptions |
| Cross-Chain Liquidity Bridges | Unified global liquidity across protocols |
| Institutional Regulatory Hooks | Selective disclosure for compliance requirements |
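The selective-disclosure row in the table can be sketched with per-field salted commitments. An order is committed field by field and bound under a single digest. A participant can later open just one field, such as an account identifier, to an auditor, while every other field stays hidden behind its commitment. The hashing layout, helper names, and field names below are assumptions for illustration. A production system would more likely prove a compliance predicate in zero knowledge than open raw fields.

```python
import hashlib
import secrets

def field_commitment(name: str, value: str, salt: bytes) -> bytes:
    """Length-prefixed, salted hash of one field so different encodings cannot collide."""
    h = hashlib.sha256()
    for part in (name.encode(), value.encode(), salt):
        h.update(len(part).to_bytes(4, "big"))
        h.update(part)
    return h.digest()

def bind(leaves: dict[str, bytes]) -> bytes:
    """Order-level digest over all field commitments, in a canonical (sorted) order."""
    return hashlib.sha256(b"".join(leaves[k] for k in sorted(leaves))).digest()

def commit_order(fields: dict[str, str]):
    salts = {k: secrets.token_bytes(16) for k in fields}
    leaves = {k: field_commitment(k, v, salts[k]) for k, v in fields.items()}
    return bind(leaves), leaves, salts

def disclose(name: str, fields: dict[str, str], salts: dict[str, bytes], leaves: dict[str, bytes]):
    """Open one field; the others are shared only as opaque commitments."""
    others = {k: c for k, c in leaves.items() if k != name}
    return name, fields[name], salts[name], others

def verify_disclosure(root: bytes, name: str, value: str, salt: bytes,
                      others: dict[str, bytes]) -> bool:
    leaves = dict(others)
    leaves[name] = field_commitment(name, value, salt)
    return bind(leaves) == root

# Hypothetical order; "acct-42" is an illustrative identifier.
order = {"account": "acct-42", "side": "buy", "price": "100", "qty": "5"}
root, leaves, salts = commit_order(order)
opening = disclose("account", order, salts, leaves)
print(verify_disclosure(root, *opening))  # True: the auditor learns only the account field
name, _, salt, others = opening
print(verify_disclosure(root, name, "acct-99", salt, others))  # False: value does not match
```

The random per-field salts stop an auditor from guessing the hidden price or size by hashing candidate values against the revealed commitments.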

The strategic trajectory suggests that these protocols could eventually become primary venues for derivatives trading, where privacy around position sizes and entry points is paramount for risk management. The ultimate objective is a global financial fabric that combines the efficiency of centralized order books with the censorship resistance and privacy of cryptographic protocols. The success of this transition depends on developers managing the systemic risk inherent in such high-leverage, high-velocity environments. The infrastructure must remain resilient against both technical failures and adversarial market behavior.